Design & Co-design of Embedded Systems: HW/SW Partitioning Algorithms

Design & Co-design of Embedded Systems: HW/SW Partitioning Algorithms + Process Scheduling. Maziar Goudarzi, Fall 2005, Design & Co-design of Embedded Systems.

Today's Program
- Introduction
- Preliminaries
- Hardware/Software Partitioning
- Distributed System Co-Synthesis (next session)

Reference: Wayne Wolf, “Hardware/Software Co-Synthesis Algorithms,” Chapter 2, Hardware/Software Co-Design: Principles and Practice, Eds: J. Staunstrup, W. Wolf, Kluwer Academic Publishers, 1997.

Topics
- Introduction
- A Classification
- Examples
  - Vulcan
  - Cosyma

Introduction to HW/SW Partitioning
- The first variety of co-synthesis applications
- Definition
  - A HW/SW partitioning algorithm implements a specification on some sort of multiprocessor architecture
- Usually
  - Multiprocessor architecture = one CPU + some ASICs on the CPU bus

Introduction to HW/SW Partitioning (cont’d)
- Terminology
  - Allocation
    - Synthesis methods that design the multiprocessor topology along with the PEs and the SW architecture
  - Scheduling
    - The process of assigning PE (CPU and/or ASIC) time to processes so that they get executed

Introduction to HW/SW Partitioning (cont’d)
- In most partitioning algorithms
  - The type of CPU is fixed and given
  - ASICs must be synthesized
    - What function to implement on each ASIC?
    - What characteristics should the implementation have?
  - The problems solved are single-rate synthesis problems
    - A CDFG is the starting model

HW/SW Partitioning (cont’d)
- Normal use of architectural components
  - CPU performs less computationally intensive functions
  - ASICs are used to accelerate core functions
- Where to use?
  - High-performance applications
    - No CPU is fast enough for the operations
  - Low-cost applications
    - ASIC accelerators allow use of a much smaller, cheaper CPU

A Classification
- Criterion: Optimization Strategy
  - Trade-off between performance and cost
  - Primal Approach
    - Performance is the primary goal
    - First, all functionality in ASICs. Progressively move more to the CPU to reduce cost.
  - Dual Approach
    - Cost is the primary goal
    - First, all functions in the CPU. Move operations to the ASIC to meet the performance goal.

A Classification (cont’d)
- Classification by optimization strategy (cont’d)
  - Example co-synthesis systems
    - Vulcan (Stanford): Primal strategy
    - Cosyma (Braunschweig, Germany): Dual strategy

Co-Synthesis Algorithms: HW/SW Partitioning. Examples: Vulcan

Partitioning Examples: Vulcan
- Gupta, De Micheli, Stanford University
- Primal approach
  1. All-HW initial implementation.
  2. Iteratively move functionality to the CPU to reduce cost.
- System specification language
  - HardwareC
    - Is compiled into a flow graph

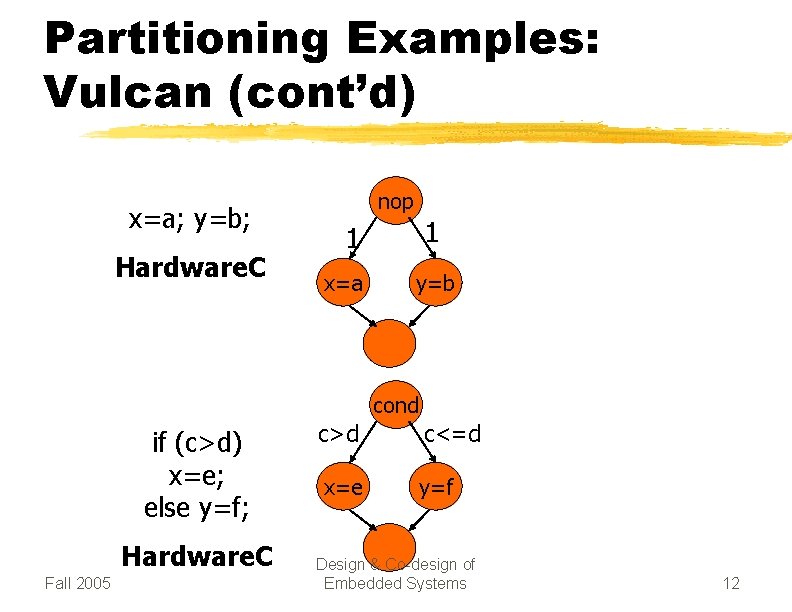

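Partitioning Examples: Vulcan (cont’d)
- HardwareC fragment: x=a; y=b;
  - Flow graph: a nop node with two edges (each with condition 1) leading to the nodes x=a and y=b
- HardwareC fragment: if (c>d) x=e; else y=f;
  - Flow graph: a cond node with an edge labeled c>d leading to x=e and an edge labeled c<=d leading to y=f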

Partitioning Examples: Vulcan (cont’d)
- Flow Graph Definition
  - A variation of a (single-rate) task graph
  - Nodes
    - Represent operations
    - Typically low-level operations: mult, add
  - Edges
    - Represent data dependencies
    - Each contains a Boolean condition under which the edge is traversed

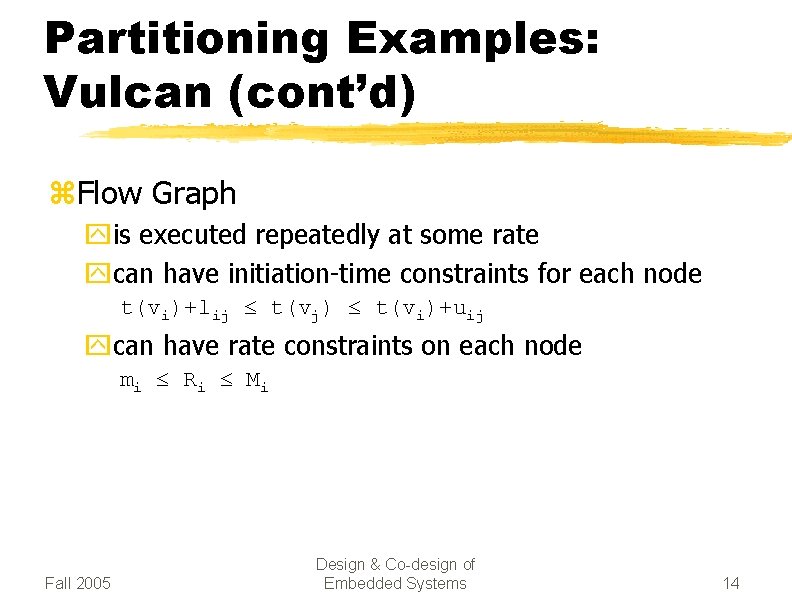

Partitioning Examples: Vulcan (cont’d)
- Flow Graph
  - is executed repeatedly at some rate
  - can have initiation-time constraints for each node: $t(v_i) + l_{ij} \le t(v_j) \le t(v_i) + u_{ij}$
  - can have rate constraints on each node: $m_i \le R_i \le M_i$
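The two kinds of constraints on this slide can be checked mechanically. The following is only a minimal sketch, not Vulcan's implementation: the function names, the dictionary layout, and the sample numbers are all assumptions made for illustration.

```python
def min_max_ok(t, constraints):
    """Check t[i] + l_ij <= t[j] <= t[i] + u_ij for each (i, j, l_ij, u_ij)."""
    return all(t[i] + l <= t[j] <= t[i] + u for i, j, l, u in constraints)


def rate_ok(rates, bounds):
    """Check m_i <= R_i <= M_i, where bounds[i] is the pair (m_i, M_i)."""
    return all(bounds[i][0] <= r <= bounds[i][1] for i, r in rates.items())


# Hypothetical initiation times and constraints for two nodes.
start = {"v1": 0, "v2": 3}
print(min_max_ok(start, [("v1", "v2", 2, 5)]))       # True: 2 <= 3 <= 5
print(rate_ok({"v1": 10.0}, {"v1": (5.0, 20.0)}))    # True: 5 <= 10 <= 20
```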

Partitioning Examples: Vulcan (cont’d)
- Vulcan Co-synthesis Algorithm
  - Partitioning quantum is a thread
    - The algorithm divides the flow graph into threads and allocates them
    - A thread boundary is determined by
      1. (always) a non-deterministic delay element, such as a wait for an external variable
      2. (on choice) other points of the flow graph
  - Target architecture
    - CPU + co-processor (multiple ASICs)

Partitioning Examples: Vulcan (cont’d)
- Vulcan Co-synthesis algorithm (cont’d)
  - Allocation
    - Primal approach
  - Scheduling
    - is done by a scheduler on the target CPU, which
      - is generated as part of the synthesis process
      - schedules all threads (both HW and SW threads)
    - cannot be static, because some threads have non-deterministic initiation times

Partitioning Examples: Vulcan (cont’d)
- Vulcan Co-synthesis algorithm (cont’d)
  - Cost estimation
    - SW implementation
      - Code size: relatively straightforward
      - Data size: the biggest challenge. Vulcan puts some effort into finding bounds for each thread
    - HW implementation
      - ?

Partitioning Examples: Vulcan (cont’d)
- Vulcan Co-synthesis algorithm (cont’d)
  - Performance estimation
    - Both SW and HW implementation
      - From the flow graph and basic execution times for the operators

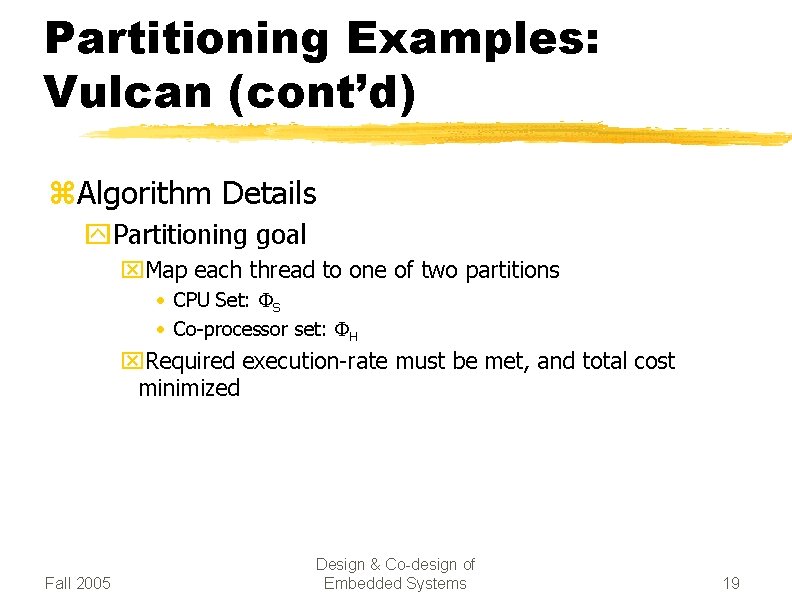

Partitioning Examples: Vulcan (cont’d)
- Algorithm Details
  - Partitioning goal
    - Map each thread to one of two partitions
      - CPU set: $F_S$
      - Co-processor set: $F_H$
    - The required execution rate must be met, and total cost minimized

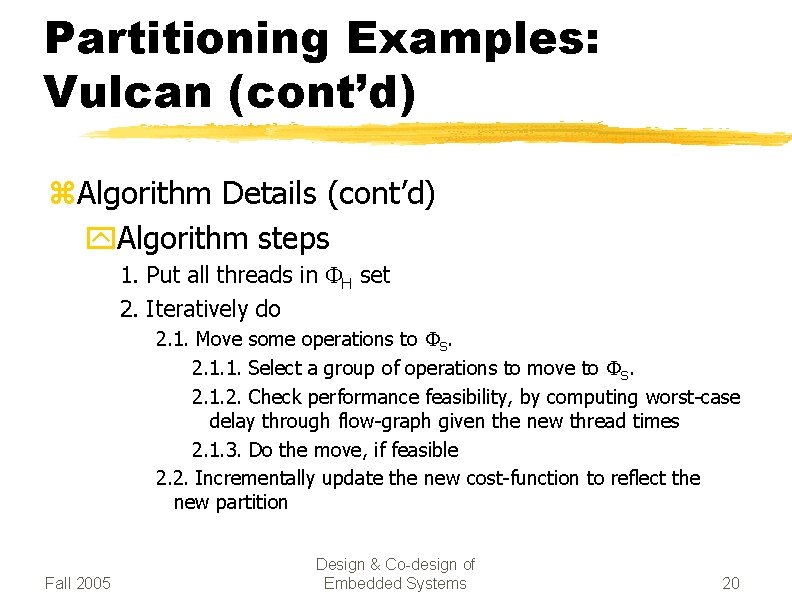

Partitioning Examples: Vulcan (cont’d)
- Algorithm Details (cont’d)
  - Algorithm steps
    - 1. Put all threads in the FH set
    - 2. Iteratively do
      - 2.1. Move some operations to FS
        - 2.1.1. Select a group of operations to move to FS
        - 2.1.2. Check performance feasibility by computing the worst-case delay through the flow graph given the new thread times
        - 2.1.3. Do the move, if feasible
      - 2.2. Incrementally update the cost function to reflect the new partition
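The steps on this slide can be sketched as a greedy loop. This is a simplified illustration under loud assumptions, not Vulcan's actual code: threads are assumed to run back to back so the worst-case delay is a plain sum, candidates are tried in order of hardware cost, and every name and number is hypothetical.

```python
def primal_partition(threads, hw_time, sw_time, hw_cost, deadline):
    """Start with every thread in hardware, then move threads to software."""
    in_hw = set(threads)   # the FH set (step 1: everything starts in HW)
    in_sw = set()          # the FS set

    def delay(sw_set):
        # Assumption: serial execution, so the worst-case delay is a sum.
        return sum(sw_time[t] if t in sw_set else hw_time[t] for t in threads)

    # Assumed ordering: try the most expensive hardware threads first.
    for t in sorted(threads, key=lambda name: hw_cost[name], reverse=True):
        candidate = in_sw | {t}              # step 2.1.1: select a move
        if delay(candidate) <= deadline:     # step 2.1.2: feasibility check
            in_sw = candidate                # step 2.1.3: commit, no back-track
            in_hw.discard(t)
    return in_hw, in_sw


threads = ["a", "b", "c"]
hw, sw = primal_partition(
    threads,
    hw_time={"a": 1, "b": 2, "c": 1},
    sw_time={"a": 4, "b": 10, "c": 3},
    hw_cost={"a": 5, "b": 9, "c": 2},
    deadline=9,
)
print(sorted(hw), sorted(sw))  # ['b'] ['a', 'c']
```

Thread b stays in hardware because moving it alone would already push the delay to 12, past the deadline of 9.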

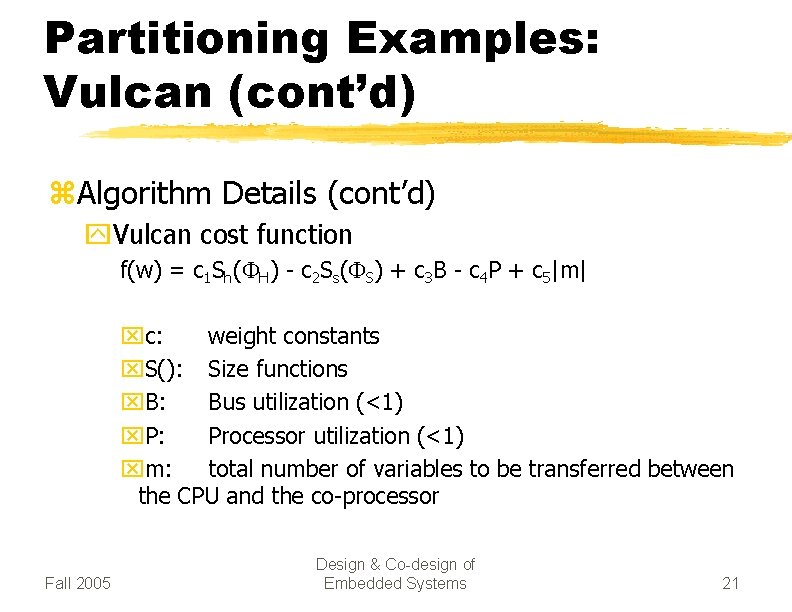

Partitioning Examples: Vulcan (cont’d)
- Algorithm Details (cont’d)
  - Vulcan cost function: $f(w) = c_1 S_h(F_H) - c_2 S_s(F_S) + c_3 B - c_4 P + c_5 |m|$
    - $c_i$: weight constants
    - $S_h()$, $S_s()$: size functions
    - $B$: bus utilization (<1)
    - $P$: processor utilization (<1)
    - $m$: total number of variables to be transferred between the CPU and the co-processor
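As a worked illustration, the cost function on this slide can be evaluated directly. The function name, the argument names, the unit weights, and the sample values below are assumptions chosen only to make the arithmetic easy to follow.

```python
def vulcan_cost(hw_size, sw_size, bus_util, cpu_util, n_vars,
                c=(1.0, 1.0, 1.0, 1.0, 1.0)):
    """f = c1*Sh(FH) - c2*Ss(FS) + c3*B - c4*P + c5*|m|."""
    c1, c2, c3, c4, c5 = c
    return (c1 * hw_size - c2 * sw_size
            + c3 * bus_util - c4 * cpu_util + c5 * n_vars)


# Hypothetical partition: HW size 100, SW size 20, B = 0.5, P = 0.25, |m| = 4.
print(vulcan_cost(100, 20, 0.5, 0.25, 4))  # 84.25
```

With these signs, a smaller hardware set, higher processor utilization, and fewer transferred variables all lower the cost.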

Partitioning Examples: Vulcan (cont’d)
- Algorithm Details (cont’d)
  - Complementary notes
    - A heuristic to minimize communication
      - Once a thread is moved to FS, its immediate successors are placed in the list for evaluation in the next iteration.
    - No back-tracking
      - Once a thread is assigned to FS, it remains there
    - Experimental results
      - The resulting implementations are considerably faster than all-SW designs and much cheaper than all-HW designs

Co-Synthesis Algorithms: HW/SW Partitioning. Examples: Cosyma

Partitioning Examples: Cosyma
- Rolf Ernst, et al.: Technical University of Braunschweig, Germany
- Dual approach
  1. All-SW initial implementation.
  2. Iteratively move basic blocks to the ASIC accelerator to meet the performance objective.
- System specification language
  - C^x
    - Is compiled into an ESG (Extended Syntax Graph)
    - The ESG is much like a CDFG

Partitioning Examples: Cosyma (cont’d)
- Cosyma Co-synthesis Algorithm
  - Partitioning quantum is a basic block
    - A basic block is a branch-free block of program
  - Target Architecture
    - CPU + accelerator ASIC(s)
  - Scheduling
  - Allocation
  - Cost Estimation
  - Performance Estimation
  - Algorithm Details

Partitioning Examples: Cosyma (cont’d)
- Cosyma Co-synthesis Algorithm (cont’d)
  - Performance Estimation
    - SW implementation
      - Done by examining the object code for the basic block generated by a compiler
    - HW implementation
      - Assumes one operator per clock cycle.
      - Creates a list schedule for the DFG of the basic block.
      - Depth of the list gives the number of clock cycles required.
    - Communication
      - Done by data-flow analysis of the adjacent basic blocks.
      - In shared memory: proportional to the number of variables to be accessed
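The hardware estimate above can be illustrated with a small sketch. It assumes every operator takes exactly one clock cycle and that operators are not resource-limited, so the schedule depth equals the longest dependency chain in the DFG. That resource assumption, the `hw_cycles` name, and the sample DFG are mine, not Cosyma's.

```python
from functools import lru_cache


def hw_cycles(preds):
    """Estimate clock cycles for a basic block.

    preds maps each operation to a tuple of the operations it depends on.
    """
    @lru_cache(maxsize=None)
    def level(op):
        # An operation is scheduled one cycle after its latest predecessor.
        return 1 + max((level(p) for p in preds[op]), default=0)

    return max((level(op) for op in preds), default=0)


# Hypothetical DFG for (a*b + c*d) - e: two multiplies, an add, a subtract.
dfg = {"m1": (), "m2": (), "add": ("m1", "m2"), "sub": ("add",)}
print(hw_cycles(dfg))  # 3
```

The two multiplies share the first cycle, so four operators need only three cycles.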

Partitioning Examples: Cosyma (cont’d)
- Algorithm Steps
  - Change in execution time caused by moving basic block b from the CPU to the ASIC: $\Delta c(b) = w \left( t_{HW}(b) - t_{SW}(b) + t_{com}(Z) - t_{com}(Z \cup b) \right) \times It(b)$
  - $w$: constant weight
  - $t_{HW}(b)$, $t_{SW}(b)$: execution time of basic block b in hardware and in software
  - $t_{com}(Z)$: estimated communication time between the CPU and the accelerator ASIC, given a set Z of basic blocks implemented on the ASIC
  - $It(b)$: total number of times that b is executed
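A direct evaluation of the formula on this slide, written term by term as given there. The function and parameter names and the sample timings are illustrative assumptions, not values from Cosyma.

```python
def delta_c(t_hw, t_sw, t_com_z, t_com_z_with_b, iterations, w=1.0):
    """Change in execution time when basic block b moves from CPU to ASIC.

    Follows the slide: w * (tHW(b) - tSW(b) + tcom(Z) - tcom(Z u b)) * It(b).
    """
    return w * (t_hw - t_sw + t_com_z - t_com_z_with_b) * iterations


# Hypothetical block: 2 cycles in HW, 10 in SW, executed 100 times;
# communication estimate goes from 3 (Z alone) to 4 (Z with b).
print(delta_c(t_hw=2, t_sw=10, t_com_z=3, t_com_z_with_b=4, iterations=100))
# -900.0
```

A negative value means the estimated total execution time drops when the block moves to the ASIC.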

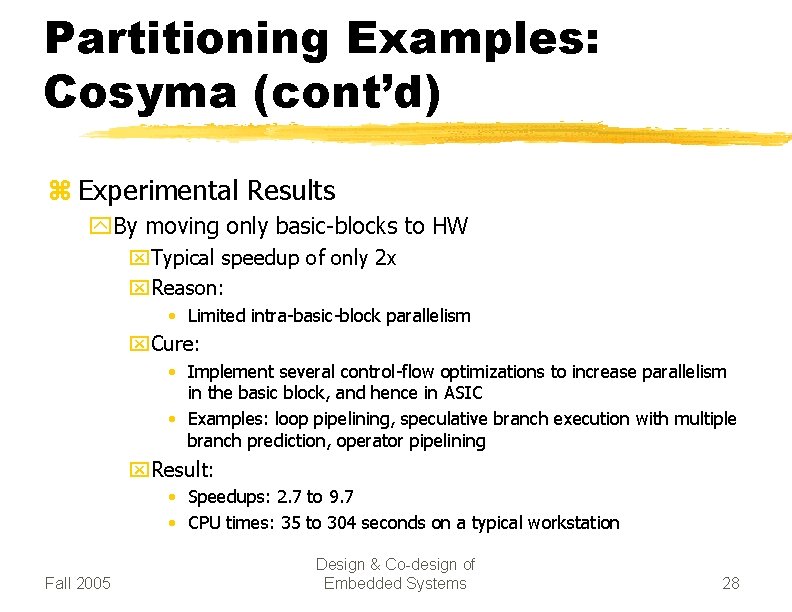

Partitioning Examples: Cosyma (cont’d)
- Experimental Results
  - By moving only basic blocks to HW
    - Typical speedup of only 2x
    - Reason:
      - Limited intra-basic-block parallelism
    - Cure:
      - Implement several control-flow optimizations to increase parallelism in the basic block, and hence in the ASIC
      - Examples: loop pipelining, speculative branch execution with multiple branch prediction, operator pipelining
    - Result:
      - Speedups: 2.7 to 9.7
      - CPU times: 35 to 304 seconds on a typical workstation

Summary
- What co-synthesis is
- Various keywords used in the classification of co-synthesis algorithms
- HW/SW partitioning: one broad category of co-synthesis algorithms
- Criteria by which a co-synthesis algorithm is categorized

Processes and operating systems
- Scheduling policies:
  - RMS;
  - EDF.
- Scheduling modeling assumptions.
- Interprocess communication.
- Power management.

Reference (and slides): Wayne Wolf, “Computers as Components: Principles of Embedded Computing System Design,” Chapter 6 (Processes and Operating Systems), MKP, 2001. © 2000 Morgan Kaufman, Overheads for Computers as Components.

Metrics
- How do we evaluate a scheduling policy?
  - Ability to satisfy all deadlines.
  - CPU utilization: percentage of time devoted to useful work.
  - Scheduling overhead: time required to make a scheduling decision.

Rate monotonic scheduling
- RMS (Liu and Layland): widely used, analyzable scheduling policy.
- Analysis is known as Rate Monotonic Analysis (RMA).

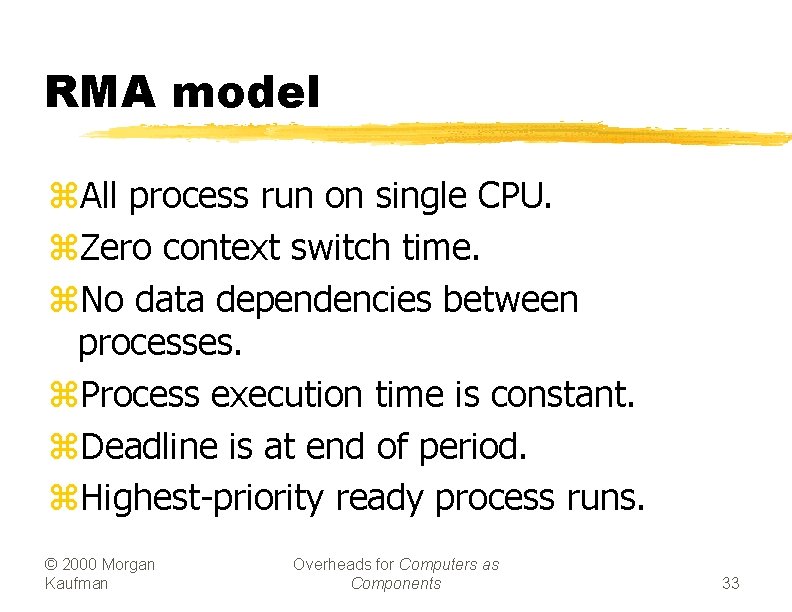

RMA model
- All processes run on a single CPU.
- Zero context switch time.
- No data dependencies between processes.
- Process execution time is constant.
- Deadline is at end of period.
- Highest-priority ready process runs.

Process parameters
- $T_i$ is the computation time of process i; $\tau_i$ is the period of process i.
- Figure: timeline of process $P_i$ showing its period $\tau_i$ and computation time $T_i$.

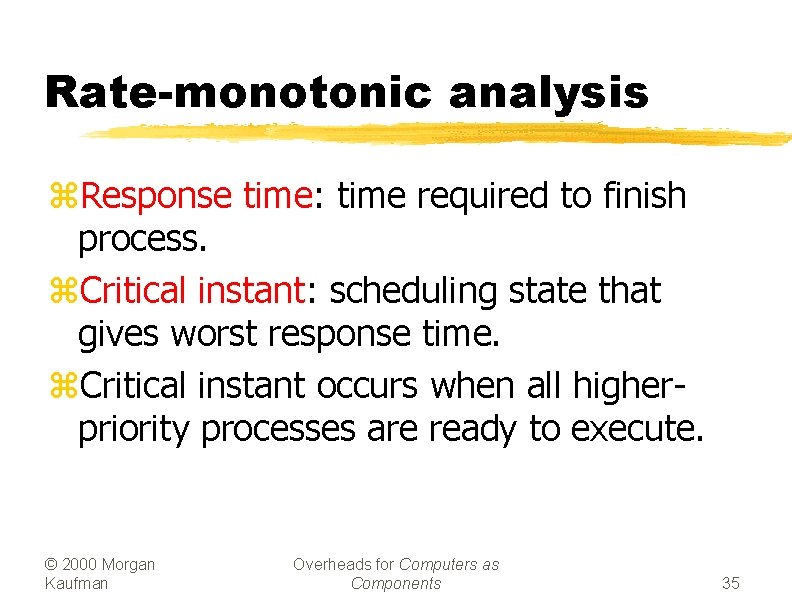

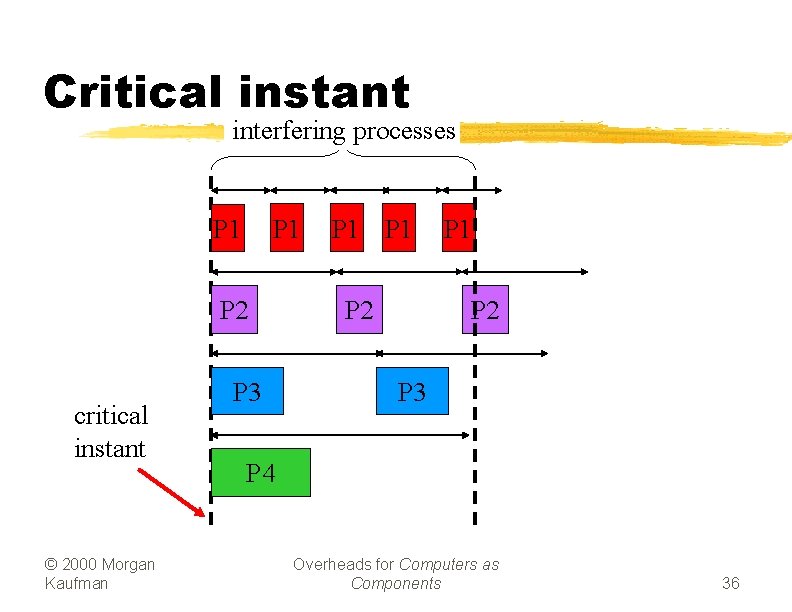

Rate-monotonic analysis
- Response time: time required to finish a process.
- Critical instant: scheduling state that gives the worst response time.
- The critical instant occurs when all higher-priority processes are ready to execute.

Critical instant
- Figure: timelines of processes P1 through P4, with the interfering processes and the critical instant marked.

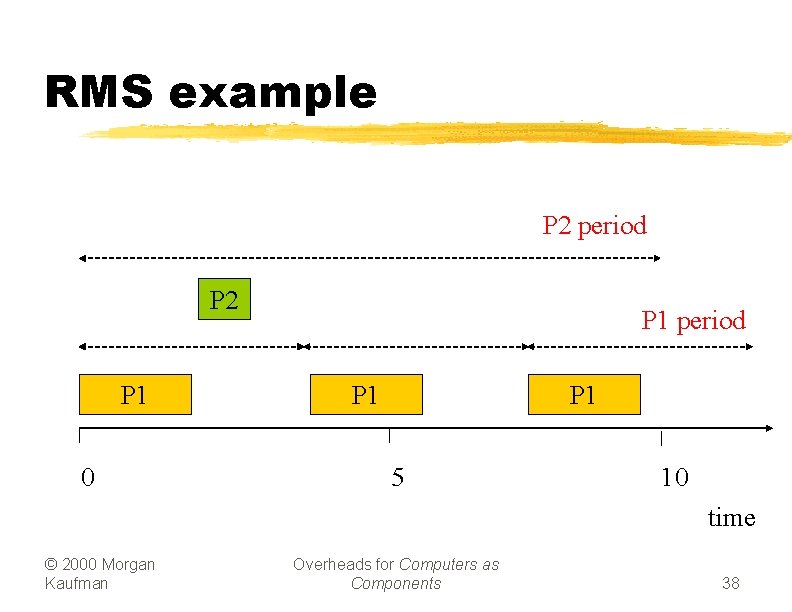

RMS priorities
- Optimal (fixed) priority assignment:
  - shortest-period process gets highest priority;
  - priority inversely proportional to period;
  - break ties arbitrarily.
- No fixed-priority scheme does better.

RMS example
- Figure: schedule of P1 and P2 on a time axis from 0 to 10, with the P1 period and the P2 period marked.

RMS CPU utilization
- Utilization for n processes is $\sum_i T_i / \tau_i$
- As the number of tasks approaches infinity, maximum utilization approaches 69% ($\ln 2 \approx 0.69$).
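A short worked example of the utilization sum, together with the Liu and Layland bound $n(2^{1/n} - 1)$, whose limit as n grows is the 69% figure on this slide. The function names and the three-task set are hypothetical.

```python
import math


def utilization(tasks):
    """Sum of T_i / tau_i over (computation_time, period) pairs."""
    return sum(comp / period for comp, period in tasks)


def rms_bound(n):
    """Liu and Layland utilization bound for n processes under RMS."""
    return n * (2 ** (1.0 / n) - 1)


tasks = [(1, 4), (2, 6), (1, 12)]   # hypothetical (T_i, tau_i) pairs
u = utilization(tasks)
print(round(u, 4), round(rms_bound(len(tasks)), 4))   # 0.6667 0.7798
print(u <= rms_bound(len(tasks)))                     # True
print(round(math.log(2), 4))                          # 0.6931
```

The bound is a sufficient test only: a task set whose utilization exceeds it may still be schedulable, but one that stays within it is guaranteed to meet its deadlines under the RMA model.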

RMS CPU utilization, cont’d.
- RMS cannot guarantee use of 100% of the CPU, even with zero context switch overhead.
- Must keep idle cycles available to handle the worst-case scenario.
- However, RMS guarantees that all processes will always meet their deadlines when utilization stays within the bound.

RMS implementation
- Efficient implementation:
  - scan processes;
  - choose highest-priority active process.
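The scan described on this slide, combined with rate-monotonic priority assignment, might look like the sketch below. The data layout (dictionaries keyed by process name, with 0 as the highest priority) and both function names are assumptions for illustration.

```python
def assign_rms_priorities(periods):
    """Return {process: priority}; 0 is highest, shortest period first."""
    ordered = sorted(periods, key=periods.get)   # stable sort breaks ties
    return {proc: rank for rank, proc in enumerate(ordered)}


def pick_next(priorities, ready):
    """Scan the ready processes and return the highest-priority one."""
    return min(ready, key=priorities.get, default=None)


periods = {"P1": 4, "P2": 6, "P3": 12}           # hypothetical periods
prio = assign_rms_priorities(periods)
print(prio)                                      # {'P1': 0, 'P2': 1, 'P3': 2}
print(pick_next(prio, ["P3", "P2"]))             # P2
print(pick_next(prio, []))                       # None
```

Because the priorities are fixed, they are computed once; only the scan runs at each scheduling decision.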

Earliest-deadline-first scheduling
- EDF: dynamic priority scheduling scheme.
- Process closest to its deadline has highest priority.
- Requires recalculating process priorities at every timer interrupt.

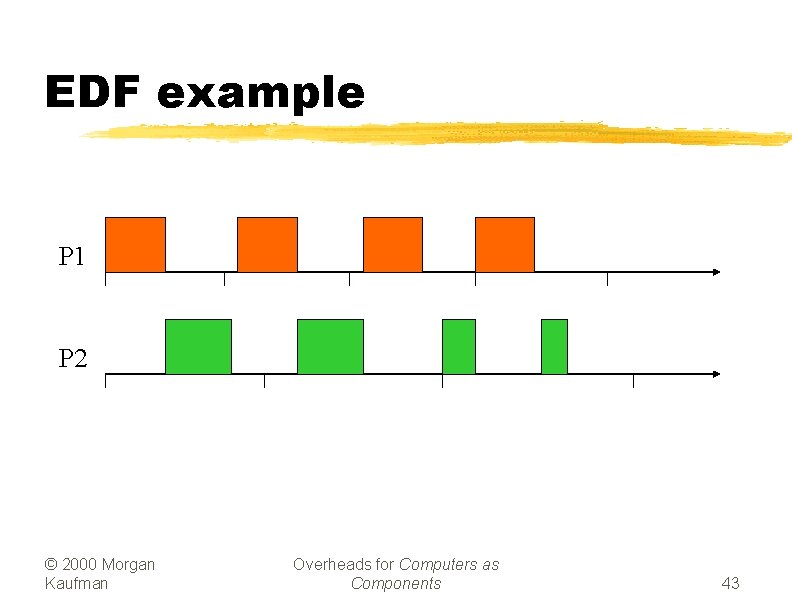

EDF example
- Figure: timelines of processes P1 and P2.

EDF analysis
- EDF can use 100% of the CPU.
- But EDF may miss a deadline.

EDF implementation
- On each timer interrupt:
  - compute time to deadline;
  - choose process closest to deadline.
- Generally considered too expensive to use in practice.
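The per-interrupt work listed on this slide can be sketched in a few lines. The `edf_pick` name and the use of absolute deadlines stored in a dictionary are assumptions for illustration.

```python
def edf_pick(now, deadlines):
    """Pick the ready process closest to its deadline.

    deadlines maps each ready process to its absolute deadline.
    """
    time_left = {proc: d - now for proc, d in deadlines.items()}
    return min(time_left, key=time_left.get, default=None)


# Hypothetical state at time 10: P1 is due at 18, P2 at 14.
print(edf_pick(10, {"P1": 18, "P2": 14}))  # P2
```

Unlike the fixed-priority scan used for RMS, this recomputation happens on every timer interrupt, which is the cost the slide refers to.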

Fixing scheduling problems
- What if your set of processes is unschedulable?
  - Change deadlines in requirements.
  - Reduce execution times of processes.
  - Get a faster CPU.

Priority inversion
- Priority inversion: a low-priority process keeps a high-priority process from running.
- Improper use of system resources can cause scheduling problems:
  - Low-priority process grabs I/O device.
  - High-priority process needs the I/O device, but can’t get it until the low-priority process is done.
- Can cause deadlock.

Solving priority inversion
- Give priorities to system resources.
- Have a process inherit the priority of a resource that it requests.
  - Low-priority process inherits the priority of the device if higher.
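The inheritance rule on this slide can be sketched as follows. This is a deliberately minimal illustration: it assumes larger numbers mean higher priority, that a process holds at most one resource at a time, and the class and function names are hypothetical.

```python
class Process:
    def __init__(self, name, priority):
        self.name = name
        self.base_priority = priority   # assumed: larger number = higher
        self.priority = priority        # current (possibly inherited) value


def acquire(process, resource_priority):
    """Raise the process to the resource's priority if that is higher."""
    process.priority = max(process.priority, resource_priority)


def release(process):
    """Drop back to the base priority (single-resource assumption)."""
    process.priority = process.base_priority


logger = Process("logger", priority=1)
acquire(logger, resource_priority=5)    # hypothetical I/O device priority
print(logger.priority)                  # 5
release(logger)
print(logger.priority)                  # 1
```

While it holds the device, the low-priority process runs at the device's priority, so a medium-priority process cannot preempt it and prolong the wait of a high-priority process that needs the same device.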

Data dependencies
- Data dependencies allow us to improve utilization.
  - They restrict the combinations of processes that can run simultaneously.
- Example: with a data dependency between P1 and P2, P1 and P2 can’t run simultaneously.

Context-switching time
- Non-zero context switch time can push the limits of a tight schedule.
- Hard to calculate effects: they depend on the order of context switches.
- In practice, OS context switch overhead is small.

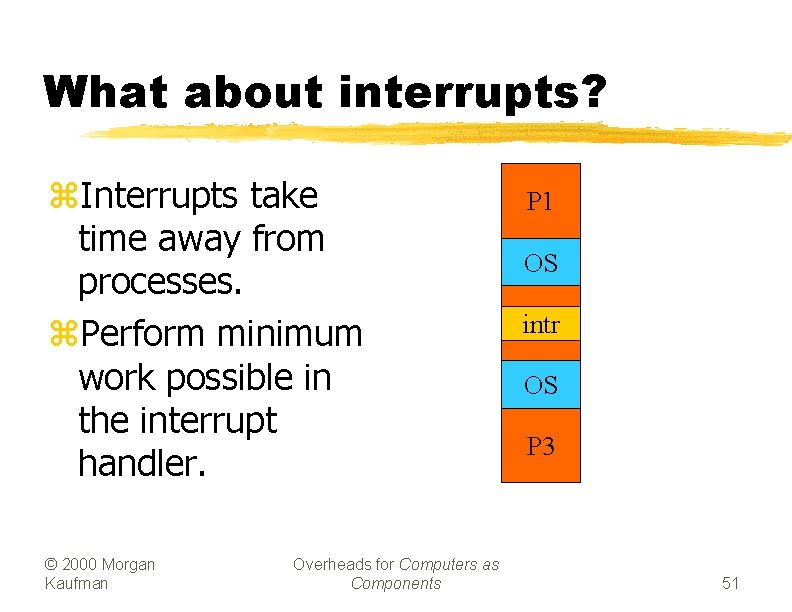

What about interrupts?
- Interrupts take time away from processes.
- Perform the minimum work possible in the interrupt handler.
- Figure: timeline showing P1, OS, intr, P2, OS, P3.

Device processing structure
- Interrupt service routine (ISR) performs minimal I/O.
  - Get register values, put register values.
- Interrupt service process/thread performs most of the device function.

Summary
- Two major scheduling policies
  - RMS
  - EDF
- Other parameters affecting scheduling
  - Priority inversion
  - Data dependencies
  - Context switching time
  - Interrupts

Assignment
- Questions from Chapter 6 of the textbook
  - From 6.17 to 6.26
- Due date:
  - Two weeks: Sunday, Azar 27th
