Design and Analysis of Experiments Lecture 4.2


- Slides: 50

Design and Analysis of Experiments Lecture 4.2

Part 1: Components of Variation
- identifying sources of variation
- hierarchical design for variance component estimation
- hierarchical ANOVA

Part 2: Measurement System Analysis
- Accuracy and Precision
- Repeatability and Reproducibility
- Components of measurement variation
- Analysis of Variance
- Case study: the MicroMeter

Diploma in Statistics, Design and Analysis of Experiments

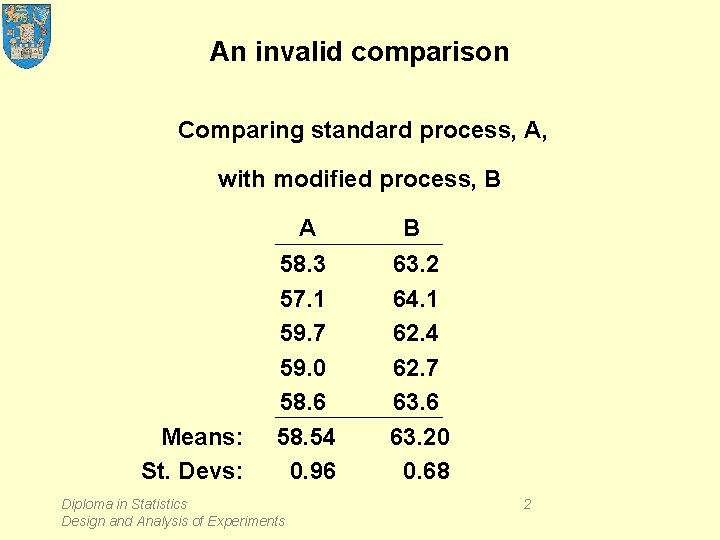

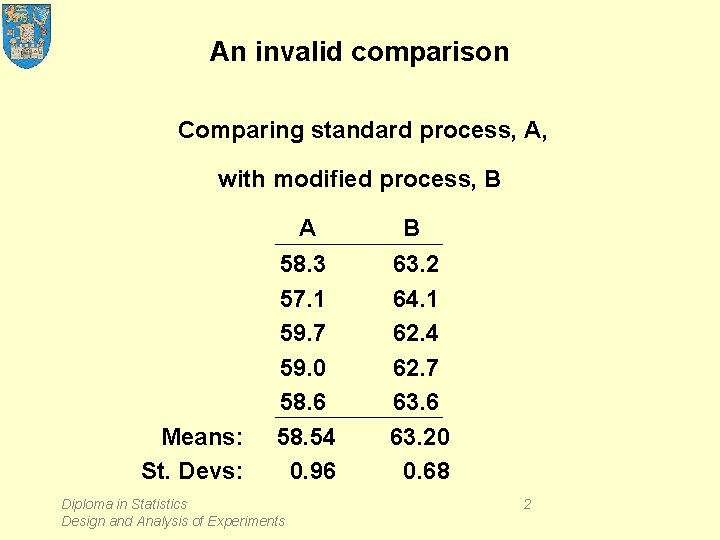

An invalid comparison

Comparing standard process, A, with modified process, B

| Process | Measurements | Mean | St. Dev. |
|---|---|---|---|
| A | 58.3, 57.1, 59.7, 59.0, 58.6 | 58.54 | 0.96 |
| B | 63.2, 64.1, 62.4, 62.7, 63.6 | 63.20 | 0.68 |
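The summary statistics on this slide can be reproduced with a few lines of Python. This is a minimal sketch using only the standard library; the variable and function names are illustrative and not part of the lecture.

```python
from statistics import mean, stdev

# Moisture measurements from the slide (A = standard process, B = modified).
process_a = [58.3, 57.1, 59.7, 59.0, 58.6]
process_b = [63.2, 64.1, 62.4, 62.7, 63.6]


def summarise(values):
    """Return (mean, sample standard deviation), each rounded to 2 decimals."""
    return round(mean(values), 2), round(stdev(values), 2)


summary_a = summarise(process_a)
summary_b = summarise(process_b)
print("A: mean, SD =", summary_a)
print("B: mean, SD =", summary_b)
```

Note that `stdev` uses the n - 1 divisor, which matches the standard deviations quoted on the slide.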

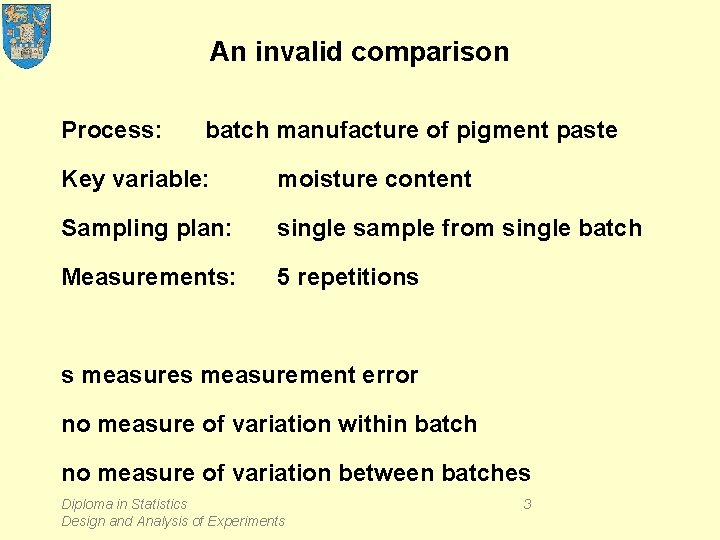

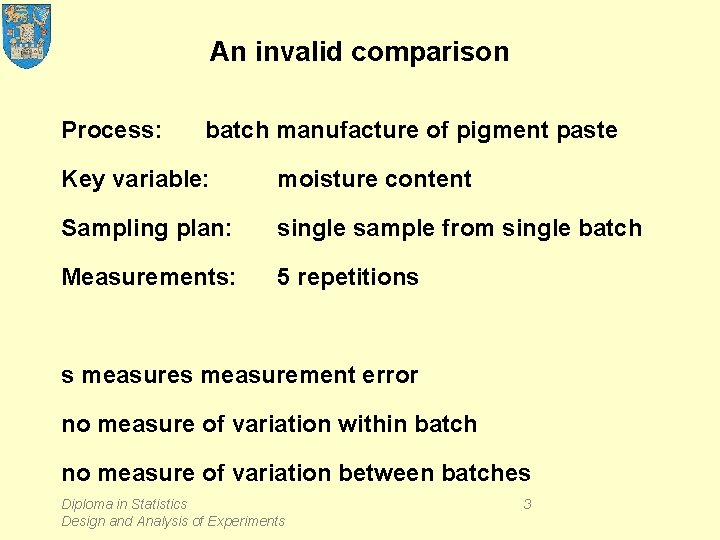

An invalid comparison

- Process: batch manufacture of pigment paste
- Key variable: moisture content
- Sampling plan: single sample from single batch
- Measurements: 5 repetitions

The standard deviation s reflects measurement error only:
- no measure of variation within batch
- no measure of variation between batches

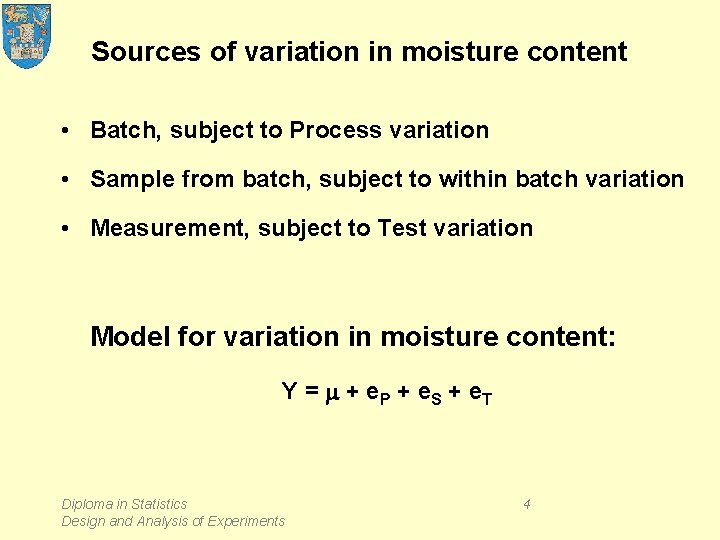

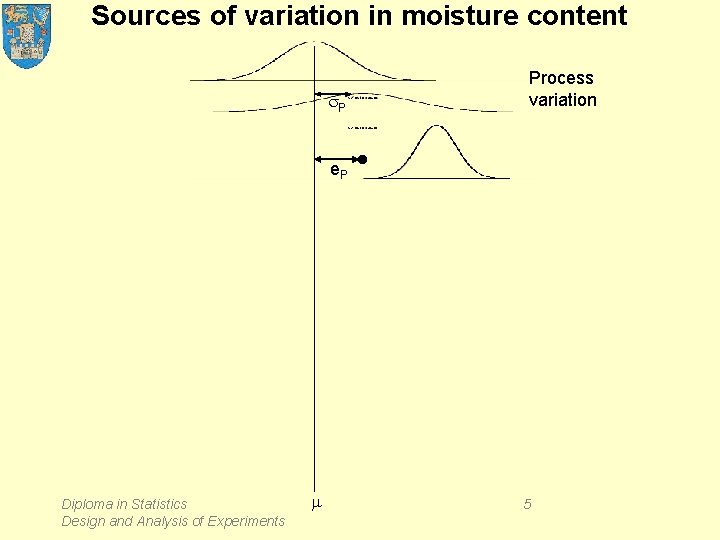

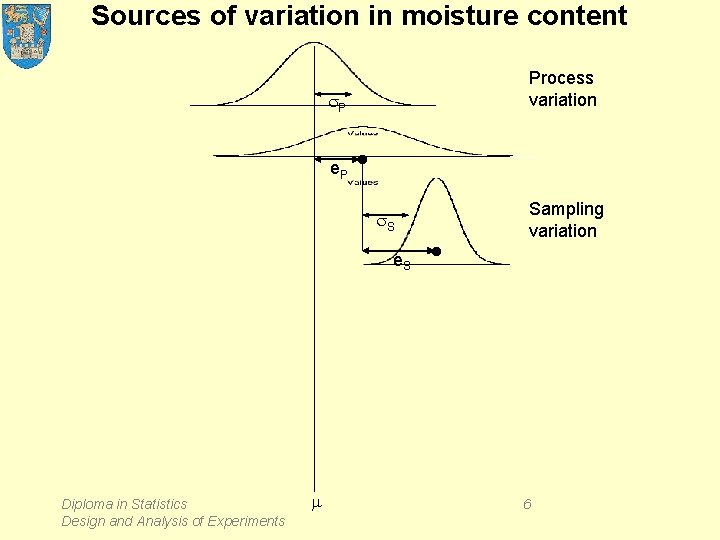

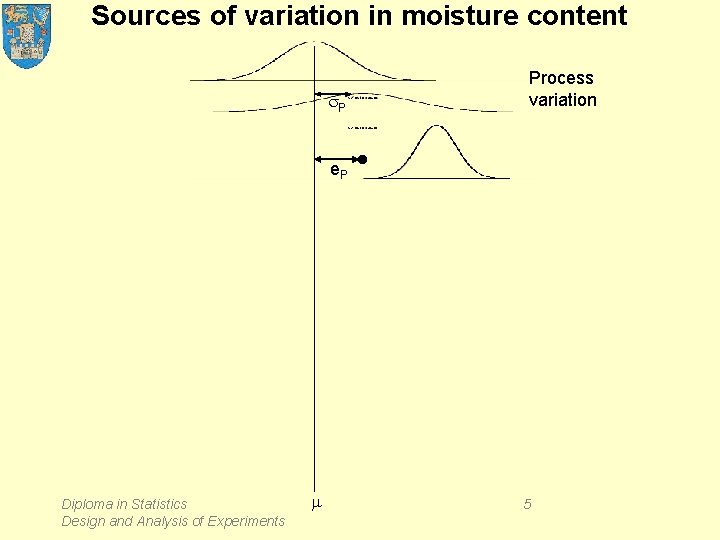

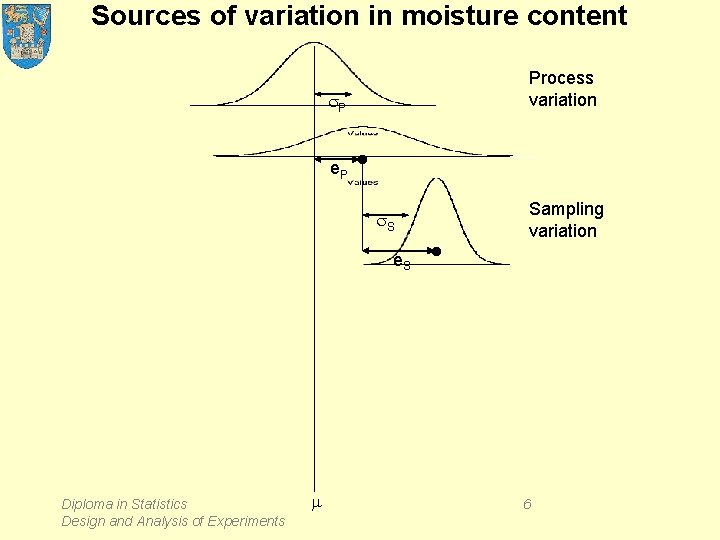

Sources of variation in moisture content

- Batch, subject to Process variation
- Sample from batch, subject to within-batch variation
- Measurement, subject to Test variation

Model for variation in moisture content:

$$Y = \mu + e_P + e_S + e_T$$

Sources of variation in moisture content

[Figure, built up over three slides: starting from the process mean $\mu$, the process deviation $e_P$ (spread $\sigma_P$), the sampling deviation $e_S$ (spread $\sigma_S$) and the testing deviation $e_T$ (spread $\sigma_T$) accumulate to give the observed value $y$.]

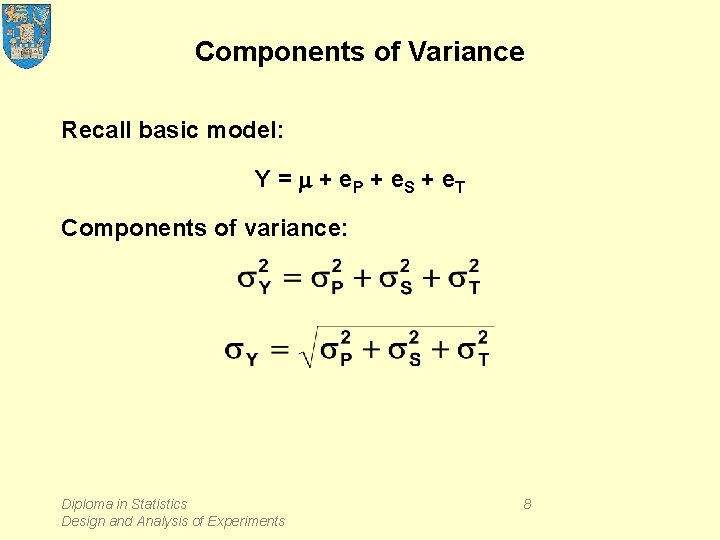

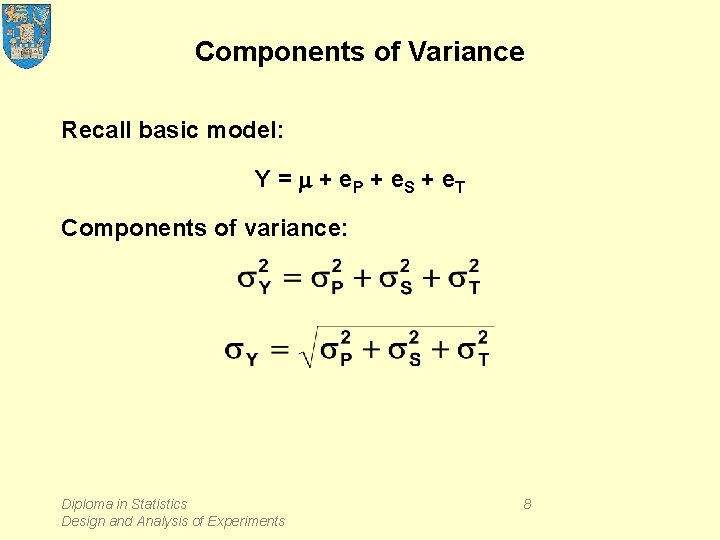

Components of Variance

Recall basic model: $Y = \mu + e_P + e_S + e_T$

Components of variance: $\sigma_Y^2 = \sigma_P^2 + \sigma_S^2 + \sigma_T^2$

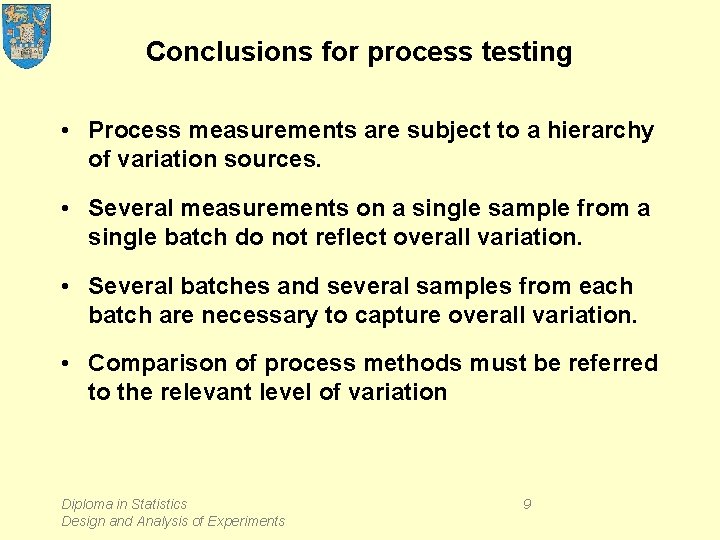

Conclusions for process testing

- Process measurements are subject to a hierarchy of variation sources.
- Several measurements on a single sample from a single batch do not reflect overall variation.
- Several batches and several samples from each batch are necessary to capture overall variation.
- Comparison of process methods must be referred to the relevant level of variation.
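A small simulation can make these conclusions concrete. The sketch below assumes the additive model Y = mu + e_P + e_S + e_T with independent normal errors; the mean and the three standard deviations are hypothetical values chosen only for illustration, not estimates from the lecture data.

```python
import random
from math import sqrt
from statistics import stdev

random.seed(42)

# Hypothetical process mean and standard deviations (illustration only).
MU, SD_P, SD_S, SD_T = 60.0, 2.5, 5.0, 1.0


def observe(e_p, e_s):
    """One moisture reading: mu + batch effect + sample effect + test error."""
    return MU + e_p + e_s + random.gauss(0, SD_T)


# Plan 1: repeated tests on ONE sample from ONE batch (e_P and e_S held fixed).
e_p, e_s = random.gauss(0, SD_P), random.gauss(0, SD_S)
one_sample = [observe(e_p, e_s) for _ in range(5000)]

# Plan 2: one test on one sample from each of many batches.
many_batches = [
    observe(random.gauss(0, SD_P), random.gauss(0, SD_S)) for _ in range(5000)
]

sd_one_sample = stdev(one_sample)
sd_many_batches = stdev(many_batches)
sd_overall = sqrt(SD_P**2 + SD_S**2 + SD_T**2)

print(f"SD, repeats on one sample: {sd_one_sample:.2f}")
print(f"SD, across batches:        {sd_many_batches:.2f}")
print(f"Theoretical overall SD:    {sd_overall:.2f}")
```

Under these assumptions the first plan recovers only the testing standard deviation, while the second approaches the overall standard deviation.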

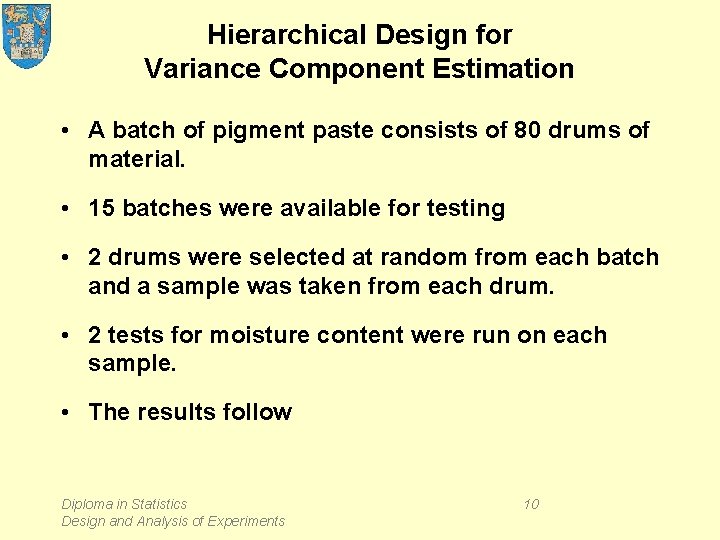

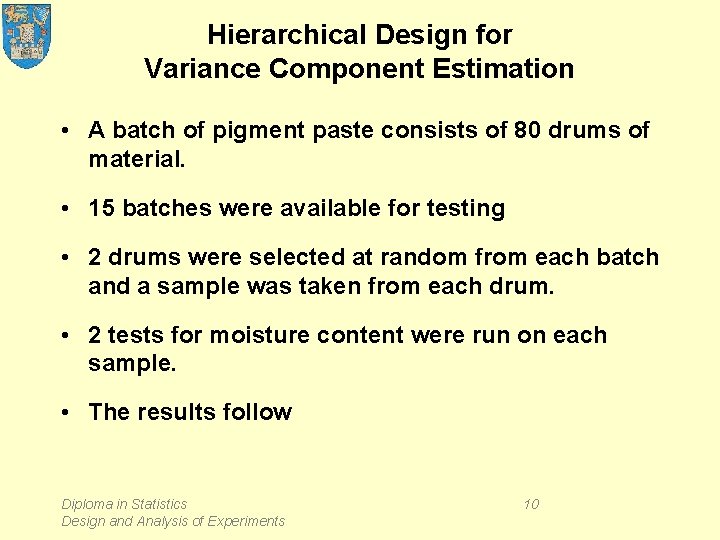

Hierarchical Design for Variance Component Estimation

- A batch of pigment paste consists of 80 drums of material.
- 15 batches were available for testing.
- 2 drums were selected at random from each batch and a sample was taken from each drum.
- 2 tests for moisture content were run on each sample.
- The results follow.

Hierarchical Design for Variance Component Estimation

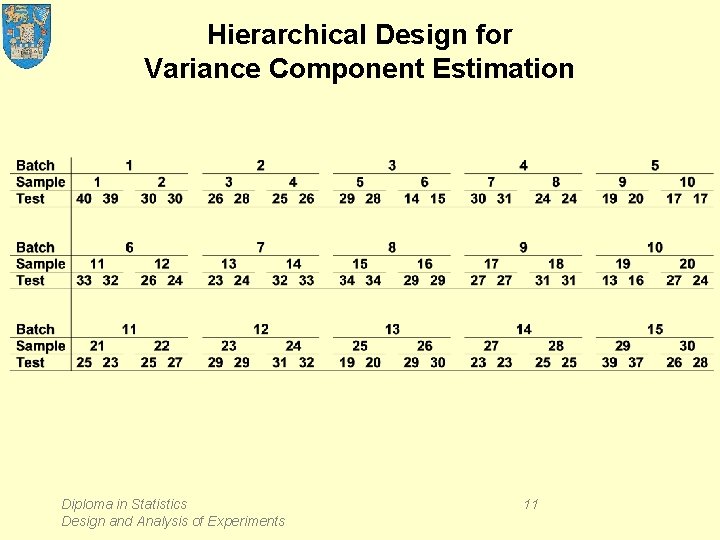

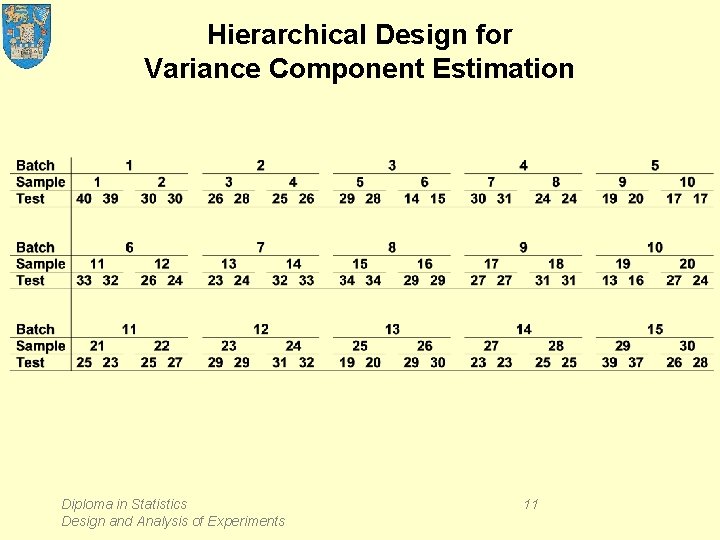

Nested ANOVA: Test versus Batch, Sample

Analysis of Variance for Test

| Source | DF | SS | MS | F | P |
|---|---|---|---|---|---|
| Batch | 14 | 1216.2333 | 86.8738 | 1.495 | 0.224 |
| Sample | 15 | 871.5000 | 58.1000 | 64.556 | 0.000 |
| Error | 30 | 27.0000 | 0.9000 | | |
| Total | 59 | 2114.7333 | | | |

Variance Components

| Source | Var Comp. | % of Total | St. Dev |
|---|---|---|---|
| Batch | 7.193 | 19.60 | 2.682 |
| Sample | 28.600 | 77.94 | 5.348 |
| Error | 0.900 | 2.45 | 0.949 |
| Total | 36.693 | | 6.058 |

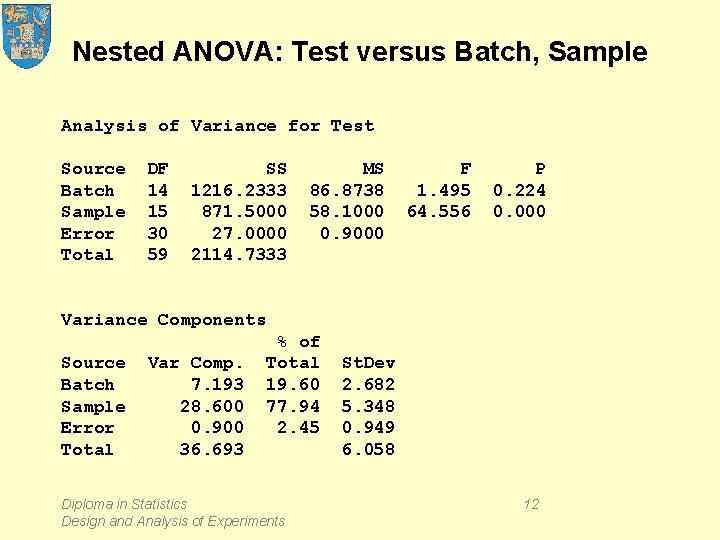

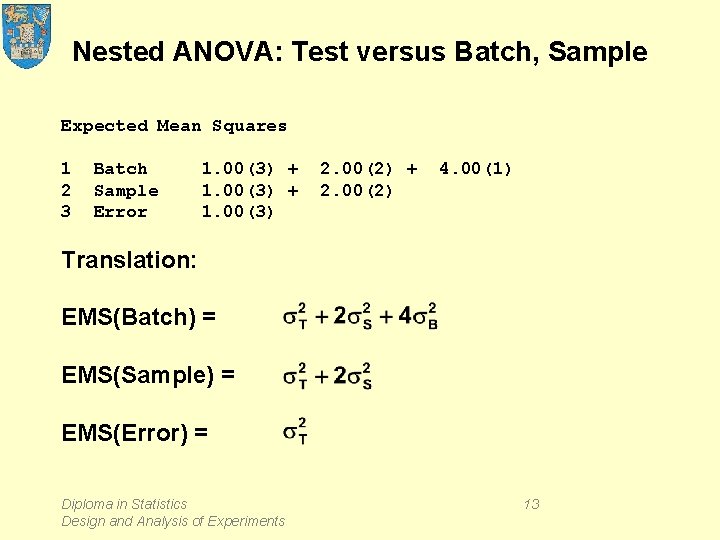

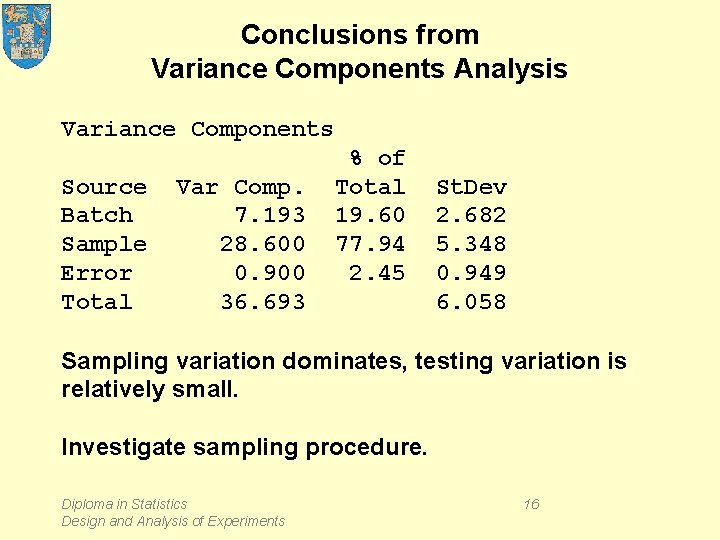

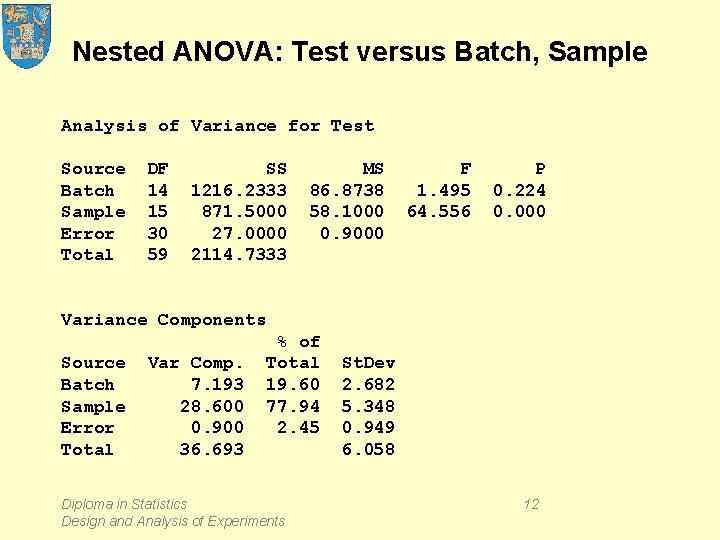

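Nested ANOVA: Test versus Batch, Sample

Expected Mean Squares

| | Source | Expected Mean Square |
|---|---|---|
| 1 | Batch | 1.00(3) + 2.00(2) + 4.00(1) |
| 2 | Sample | 1.00(3) + 2.00(2) |
| 3 | Error | 1.00(3) |

Translation:

$$\mathrm{EMS}(\text{Batch}) = \sigma_T^2 + 2\sigma_S^2 + 4\sigma_P^2$$

$$\mathrm{EMS}(\text{Sample}) = \sigma_T^2 + 2\sigma_S^2$$

$$\mathrm{EMS}(\text{Error}) = \sigma_T^2$$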

![Calculation of variance components from expected mean squares](https://slidetodoc.com/presentation_image/3aedf8b35ffaa95c7592c724b1819929/image-14.jpg)

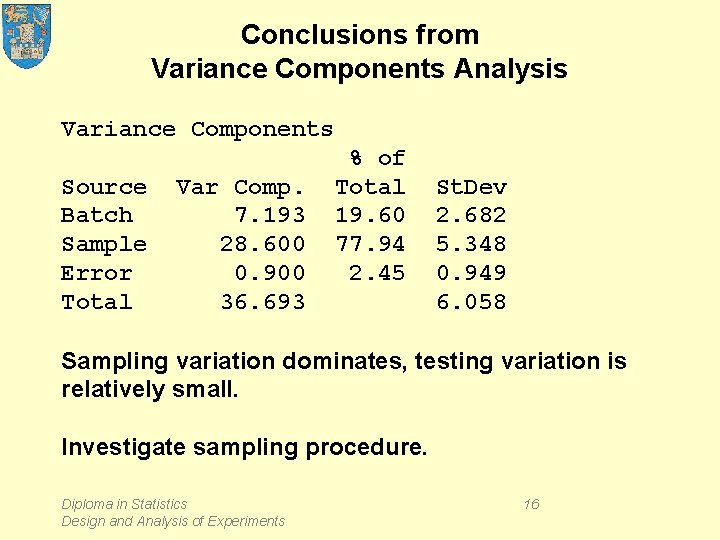

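Calculation

$$\sigma_T^2 = \mathrm{EMS}(\text{Error})$$

$$\sigma_S^2 = \tfrac{1}{2}\left[\mathrm{EMS}(\text{Sample}) - \mathrm{EMS}(\text{Error})\right]$$

$$\sigma_P^2 = \tfrac{1}{4}\left[\mathrm{EMS}(\text{Batch}) - \mathrm{EMS}(\text{Sample})\right]$$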
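The calculation can be checked against the nested ANOVA output by substituting the observed mean squares for the expected mean squares. The following is a minimal sketch; the mean squares are those reported in the ANOVA table, and the variable names are illustrative.

```python
from math import sqrt

# Mean squares from the nested ANOVA table (Test versus Batch, Sample).
ms = {"Batch": 86.8738, "Sample": 58.1000, "Error": 0.9000}

TESTS_PER_SAMPLE = 2    # 2 tests on each sample
SAMPLES_PER_BATCH = 2   # 2 drums (samples) from each batch

# Method-of-moments estimates: equate each mean square to its expectation.
var_test = ms["Error"]
var_sample = (ms["Sample"] - ms["Error"]) / TESTS_PER_SAMPLE
var_batch = (ms["Batch"] - ms["Sample"]) / (TESTS_PER_SAMPLE * SAMPLES_PER_BATCH)

components = {"Batch": var_batch, "Sample": var_sample, "Error": var_test}
total = sum(components.values())

for source, var in components.items():
    print(f"{source:6s} {var:7.3f} {100 * var / total:6.2f}% {sqrt(var):6.3f}")
print(f"{'Total':6s} {total:7.3f} {'':7s} {sqrt(total):6.3f}")
```

The divisors 2 and 4 are the number of tests contributing to each sample mean and to each batch mean, respectively.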

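Theory

Model:

$$Y_{ijk} = \mu + a_i + b_{i(j)} + e_{ijk}$$

$$\bar{Y}_{ij\cdot} = \mu + a_i + b_{i(j)} + \bar{e}_{ij\cdot}$$

$$\bar{Y}_{i\cdot\cdot} = \mu + a_i + \bar{b}_{i(\cdot)} + \bar{e}_{i\cdot\cdot}$$

Decomposition:

$$(Y_{ijk} - \bar{Y}_{\cdot\cdot\cdot}) = (\bar{Y}_{i\cdot\cdot} - \bar{Y}_{\cdot\cdot\cdot}) + (\bar{Y}_{ij\cdot} - \bar{Y}_{i\cdot\cdot}) + (Y_{ijk} - \bar{Y}_{ij\cdot})$$

$$(\bar{Y}_{ij\cdot} - \bar{Y}_{i\cdot\cdot}) = (b_{i(j)} - \bar{b}_{i(\cdot)}) + (\bar{e}_{ij\cdot} - \bar{e}_{i\cdot\cdot})$$

so EMS(Sample) involves $\sigma_S^2$ and $\sigma_T^2$.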
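To see where the coefficients in the expected mean squares come from, the Sample line can be worked through explicitly. The sketch below introduces its own notation as an assumption: $I$ batches, $J$ samples per batch, $K$ tests per sample, with $\mathrm{Var}(b_{i(j)}) = \sigma_S^2$, $\mathrm{Var}(e_{ijk}) = \sigma_T^2$ and all effects independent.

```latex
\begin{align*}
\mathrm{Var}\!\left(b_{i(j)} + \bar{e}_{ij\cdot}\right)
  &= \sigma_S^2 + \frac{\sigma_T^2}{K} \\[4pt]
E\!\left[\sum_{j=1}^{J} \left(\bar{Y}_{ij\cdot} - \bar{Y}_{i\cdot\cdot}\right)^2\right]
  &= (J - 1)\left(\sigma_S^2 + \frac{\sigma_T^2}{K}\right) \\[4pt]
\mathrm{MS}(\text{Sample})
  &= \frac{K \sum_{i=1}^{I} \sum_{j=1}^{J}
     \left(\bar{Y}_{ij\cdot} - \bar{Y}_{i\cdot\cdot}\right)^2}{I (J - 1)} \\[4pt]
E\!\left[\mathrm{MS}(\text{Sample})\right]
  &= K\left(\sigma_S^2 + \frac{\sigma_T^2}{K}\right)
   = \sigma_T^2 + K \sigma_S^2
\end{align*}
```

With $K = 2$ tests per sample this gives $\sigma_T^2 + 2\sigma_S^2$, in agreement with the expected mean square for Sample in the nested ANOVA output.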

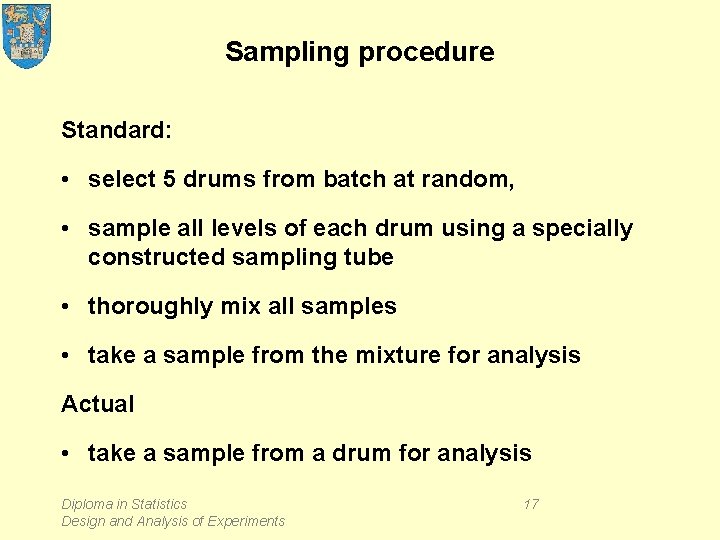

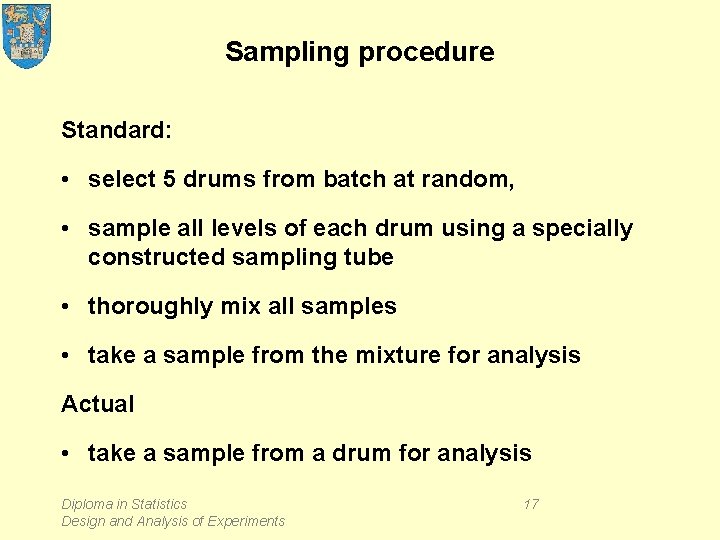

Conclusions from Variance Components Analysis

Variance Components

| Source | Var Comp. | % of Total | St. Dev |
|---|---|---|---|
| Batch | 7.193 | 19.60 | 2.682 |
| Sample | 28.600 | 77.94 | 5.348 |
| Error | 0.900 | 2.45 | 0.949 |
| Total | 36.693 | | 6.058 |

Sampling variation dominates; testing variation is relatively small.

Investigate sampling procedure.

Sampling procedure

Standard:
- select 5 drums from batch at random
- sample all levels of each drum using a specially constructed sampling tube
- thoroughly mix all samples
- take a sample from the mixture for analysis

Actual:
- take a sample from a drum for analysis

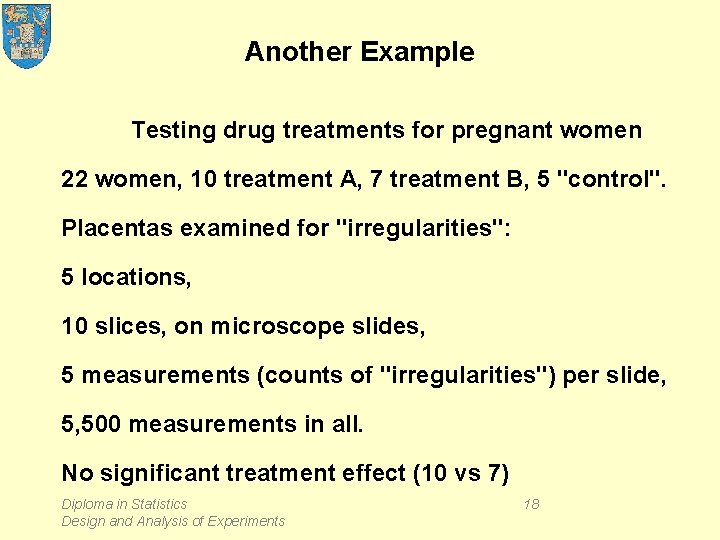

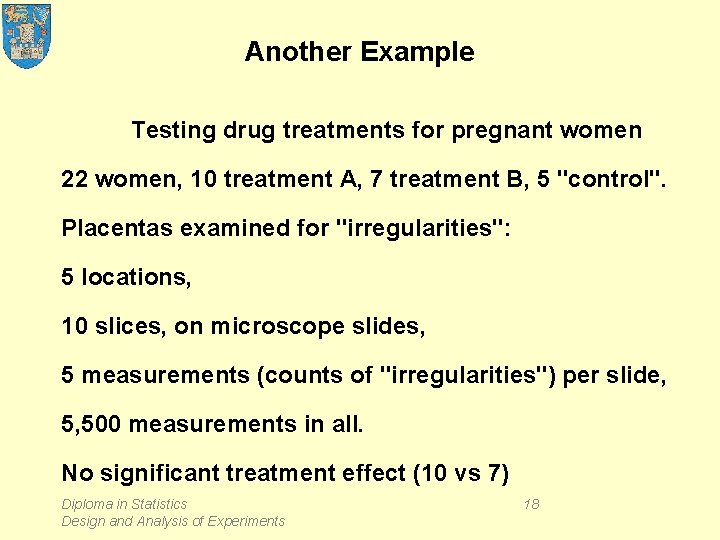

Another Example

Testing drug treatments for pregnant women

- 22 women: 10 treatment A, 7 treatment B, 5 "control"
- Placentas examined for "irregularities": 5 locations, 10 slices per location on microscope slides, 5 measurements (counts of "irregularities") per slide
- 5,500 measurements in all
- No significant treatment effect (10 vs 7)

Yet Another Example

Comparing schools on student performance

- Schools
- Classes within schools
- Students within classes

Design and Analysis of Experiments Lecture 4.2

Part 2: Measurement System Analysis
- Accuracy and Precision
- Repeatability and Reproducibility
- Components of measurement variation
- Analysis of Variance
- Case study: the MicroMeter

The MicroMeter optical comparator

The MicroMeter optical comparator

- Place object on stage of travel table
- Align cross-hair with one edge
- Move and re-align cross-hair with other edge
- Read the change in alignment
- Sources of variation:
  - instrument error
  - operator error
  - parts (manufacturing process) variation

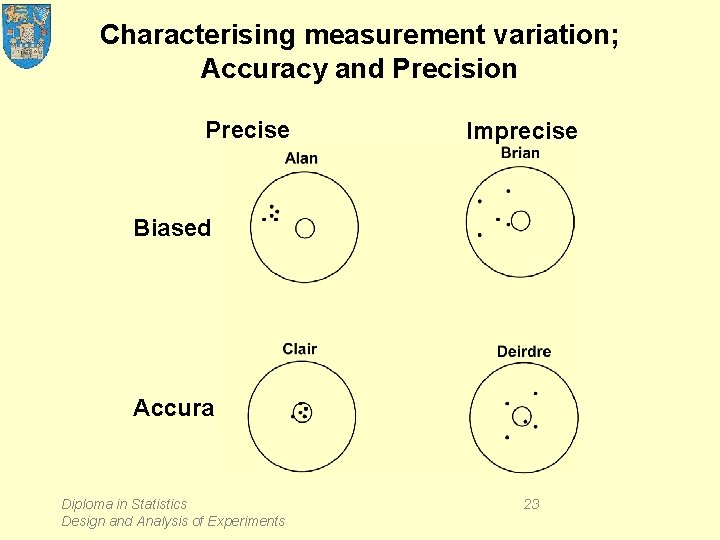

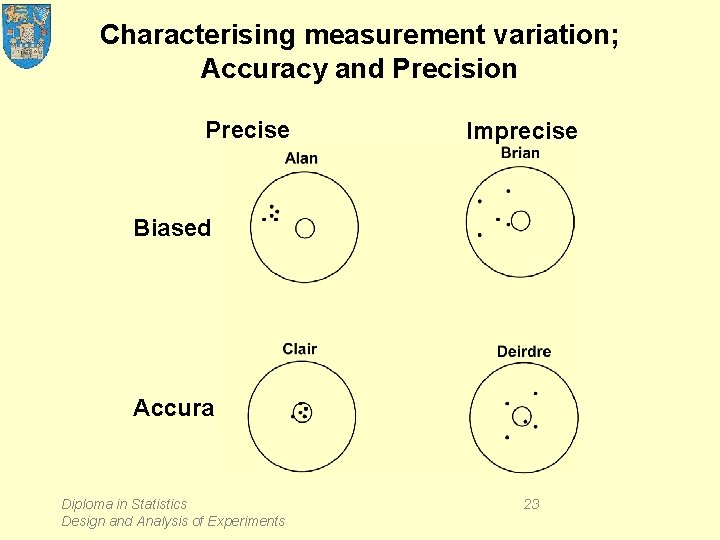

Characterising measurement variation; Accuracy and Precision

[Figure: 2 × 2 grid of panels labelled Precise / Imprecise and Biased / Accurate.]

Characterising measurement variation; Accuracy and Precision

Centre and Spread
- Accurate means centre of spread is on target;
- Precise means extent of spread is small;
- Averaging repeated measurements improves precision, $SE = \sigma/\sqrt{n}$,
  - but not accuracy; seek assignable cause.
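The last point can be illustrated numerically: the standard error of an average shrinks with the square root of the number of measurements, while a systematic bias is untouched by averaging. The measurement SD and bias below are hypothetical values chosen for illustration.

```python
from math import sqrt


def standard_error(sd, n):
    """Standard error of the mean of n independent measurements with SD sd."""
    return sd / sqrt(n)


# Hypothetical measurement process: SD of 0.4 units, constant bias of +0.3 units.
SD, BIAS = 0.4, 0.3

# Averaging changes the standard error but leaves the bias as it was.
table = [(n, standard_error(SD, n), BIAS) for n in (1, 4, 16)]
for n, se, bias in table:
    print(f"n = {n:2d}   SE = {se:.2f}   bias = {bias:.2f}")
```

Quadrupling the number of measurements halves the standard error; the bias column stays constant, which is why an assignable cause has to be sought instead.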

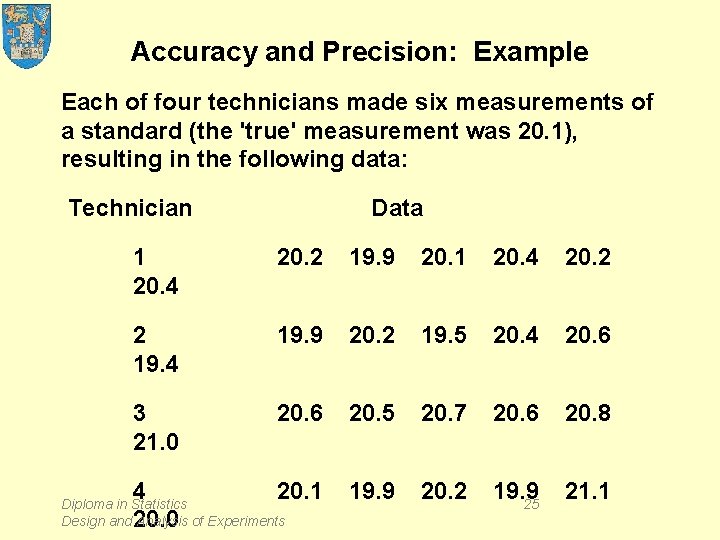

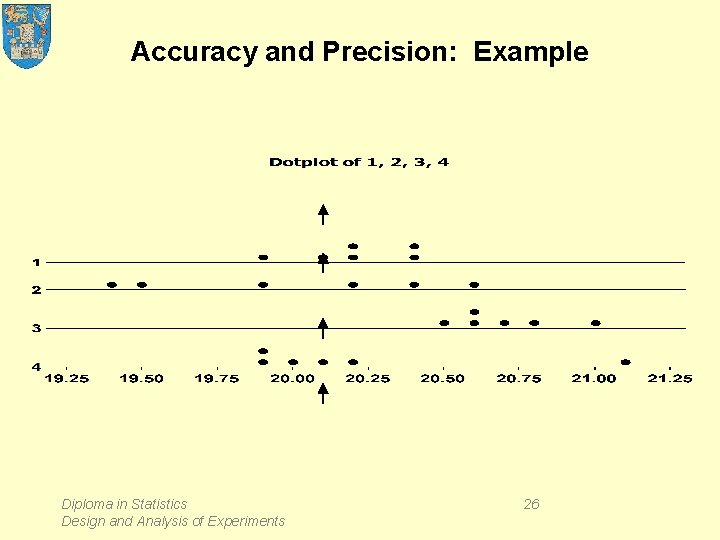

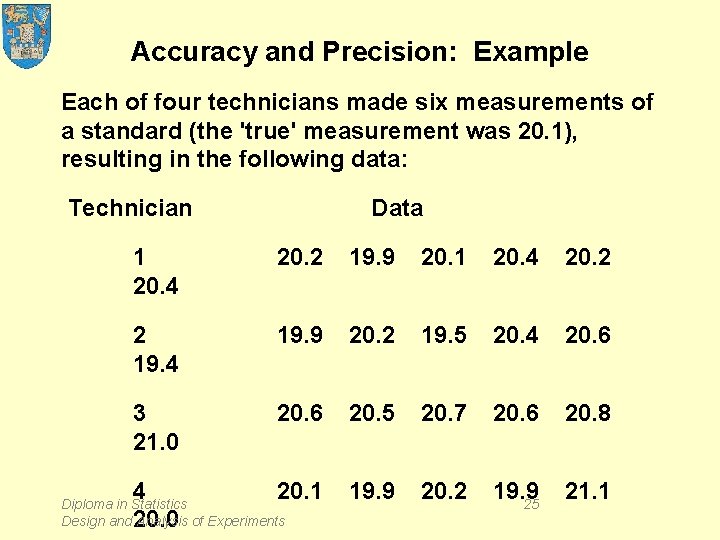

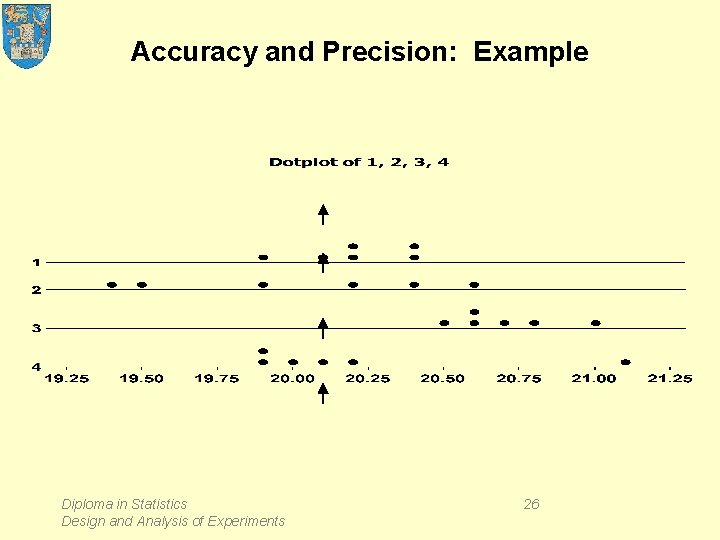

Accuracy and Precision: Example

Each of four technicians made six measurements of a standard (the 'true' measurement was 20.1), resulting in the following data:

| Technician | Data |
|---|---|
| 1 | 20.4, 20.2, 19.9, 20.1, 20.4, 20.2 |
| 2 | 19.4, 19.9, 20.2, 19.5, 20.4, 20.6 |
| 3 | 21.0, 20.6, 20.5, 20.7, 20.6, 20.8 |
| 4 | 20.0, 20.1, 19.9, 20.2, 19.9, 21.1 |
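One way to summarise these data is to estimate each technician's bias (mean minus the true value) and precision (sample standard deviation). The sketch below takes technician 4's six readings as 20.0, 20.1, 19.9, 20.2, 19.9, 21.1; the names are illustrative.

```python
from statistics import mean, stdev

TRUE_VALUE = 20.1

# Six measurements of the standard by each technician.
data = {
    1: [20.4, 20.2, 19.9, 20.1, 20.4, 20.2],
    2: [19.4, 19.9, 20.2, 19.5, 20.4, 20.6],
    3: [21.0, 20.6, 20.5, 20.7, 20.6, 20.8],
    4: [20.0, 20.1, 19.9, 20.2, 19.9, 21.1],
}

# Bias measures accuracy; the standard deviation measures precision.
summary = {
    tech: (round(mean(values) - TRUE_VALUE, 2), round(stdev(values), 2))
    for tech, values in data.items()
}

for tech, (bias, sd) in summary.items():
    print(f"Technician {tech}: bias = {bias:+.2f}, SD = {sd:.2f}")
```

On these figures technician 3 is the most biased but among the most precise, while technician 2 is nearly unbiased but imprecise. Technician 4's standard deviation is inflated by the single reading of 21.1, which a plot would show more clearly than the summary does.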

Accuracy and Precision: Example

Repeatability and Reproducibility

Factors affecting measurement accuracy and precision may include:
- instrument
- material
- operator
- environment
- laboratory
- parts (manufacturing)

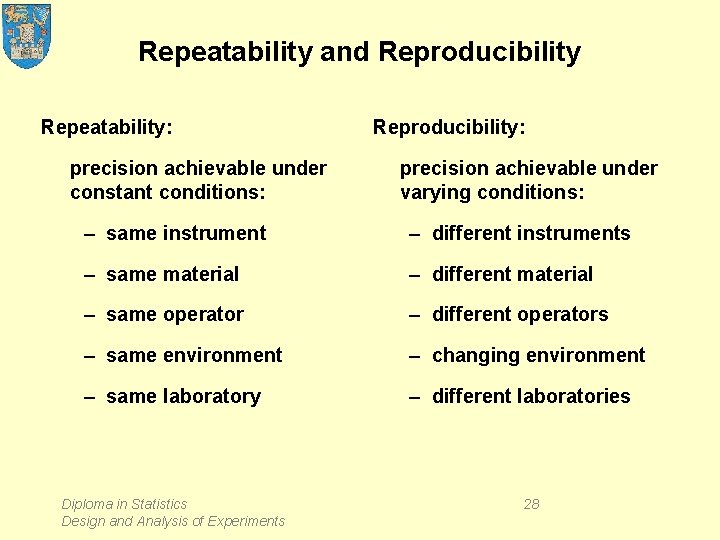

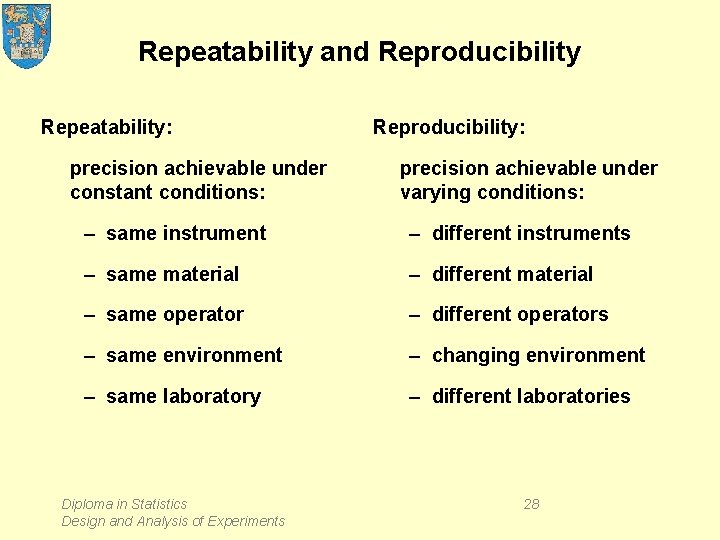

Repeatability and Reproducibility

| Repeatability: precision achievable under constant conditions | Reproducibility: precision achievable under varying conditions |
|---|---|
| same instrument | different instruments |
| same material | different material |
| same operator | different operators |
| same environment | changing environment |
| same laboratory | different laboratories |

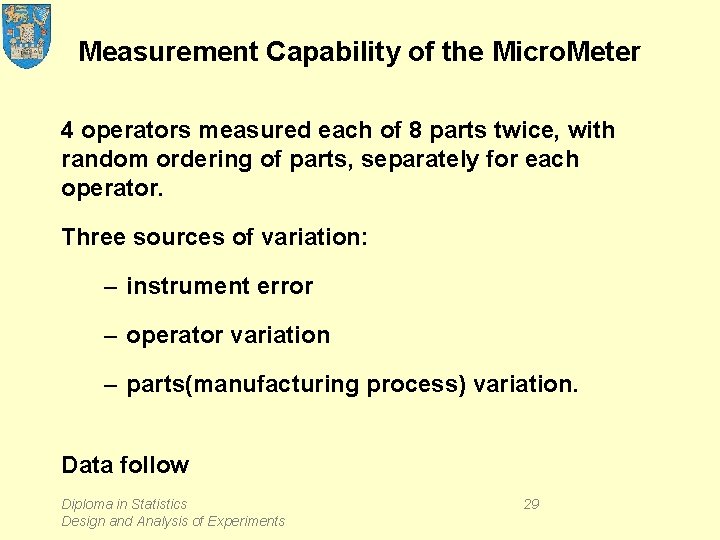

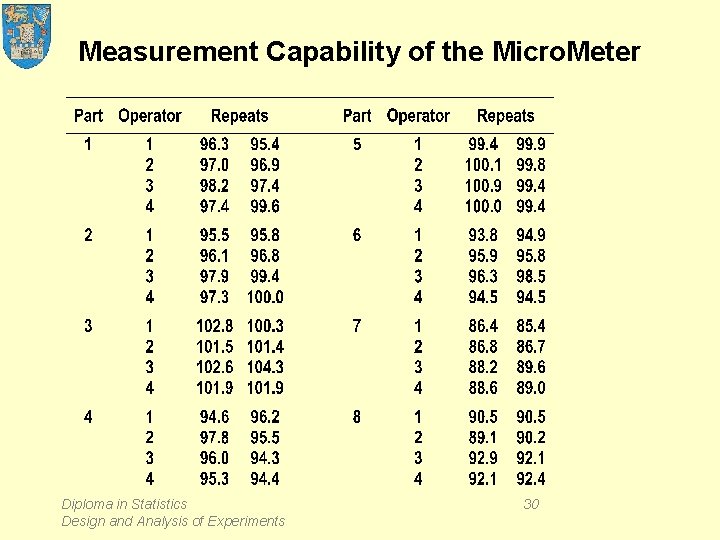

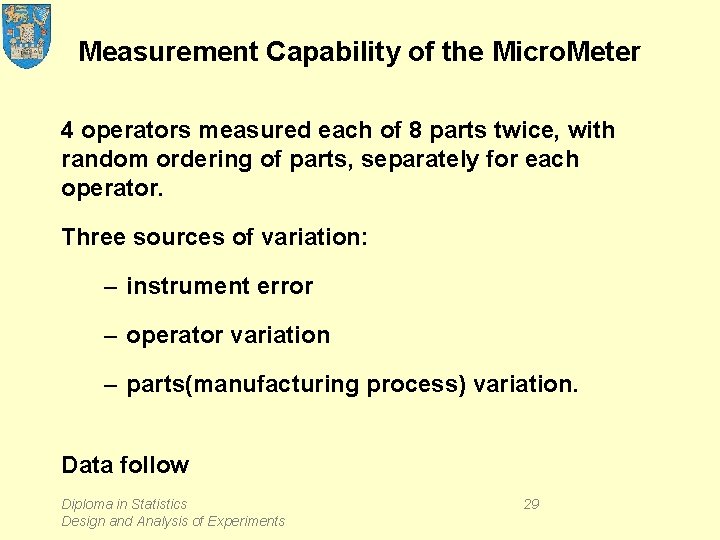

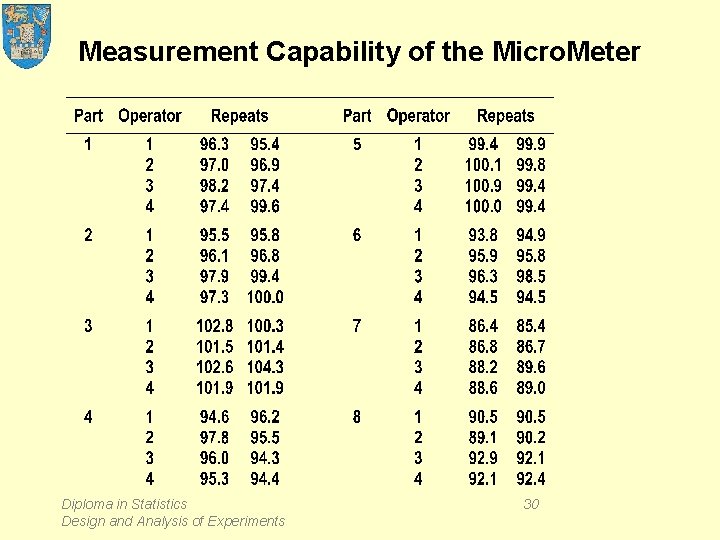

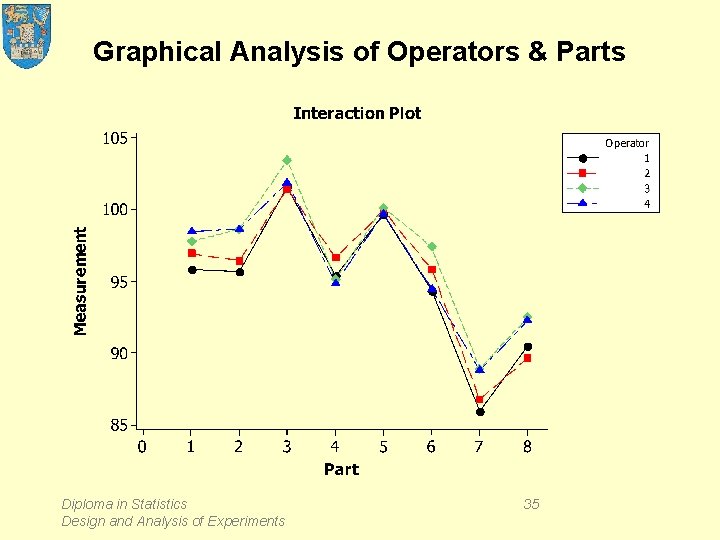

Measurement Capability of the MicroMeter

4 operators measured each of 8 parts twice, with random ordering of parts, separately for each operator.

Three sources of variation:
- instrument error
- operator variation
- parts (manufacturing process) variation

Data follow.

Measurement Capability of the MicroMeter

Quantifying the variation

Each measurement incorporates components of variation from
- Operator error
- Parts variation
- Instrument error

and also
- Operator by Parts Interaction

Measurement Differences

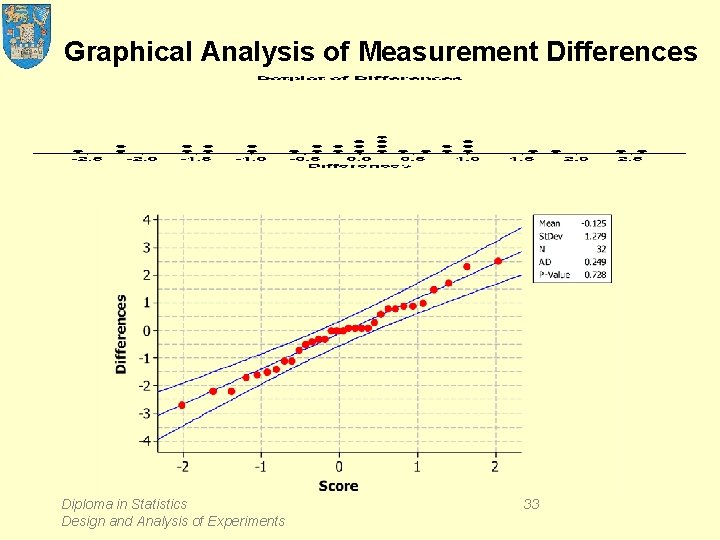

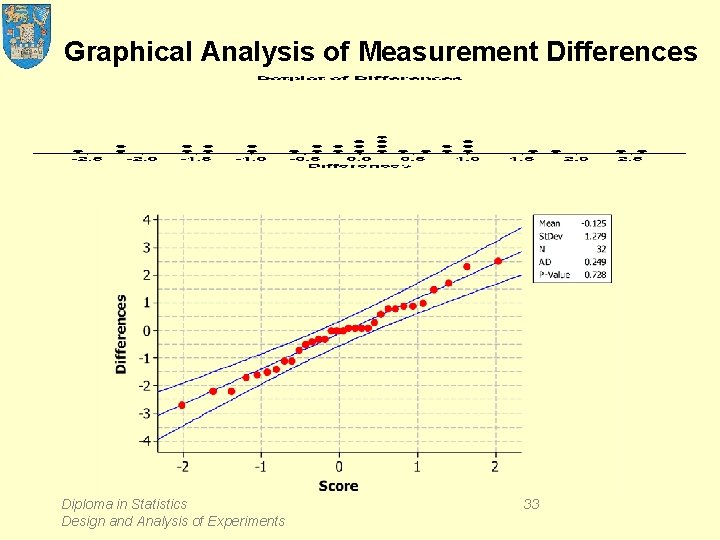

Graphical Analysis of Measurement Differences

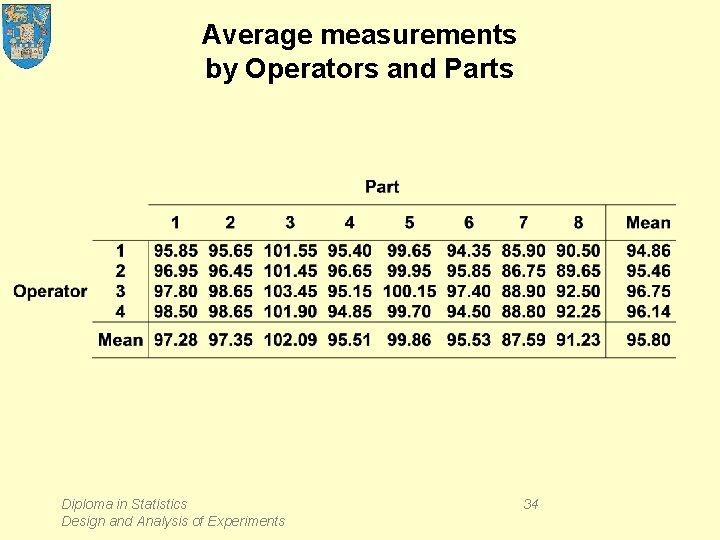

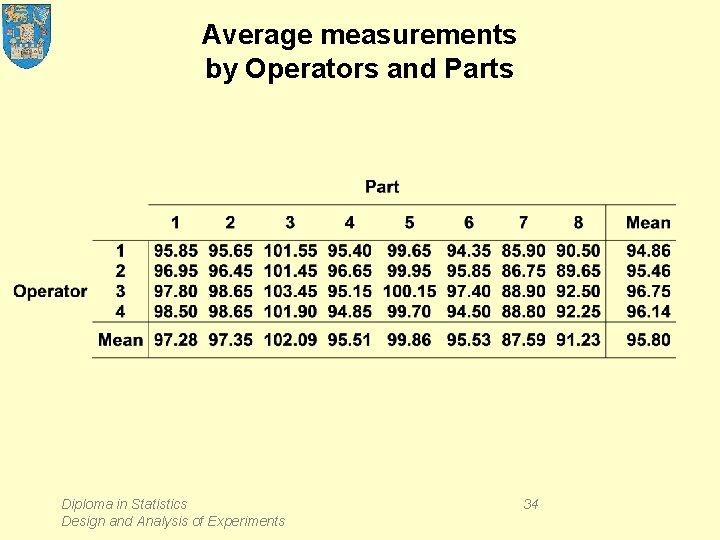

Average measurements by Operators and Parts

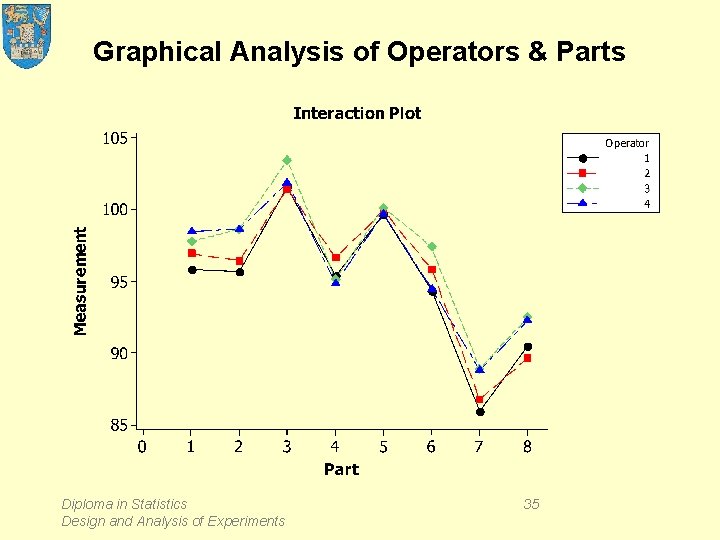

Graphical Analysis of Operators & Parts

Graphical Analysis of Operators & Ordered Parts

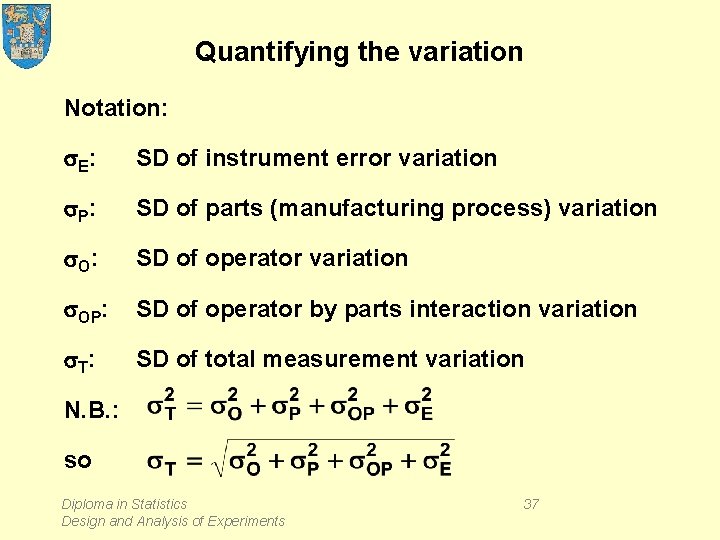

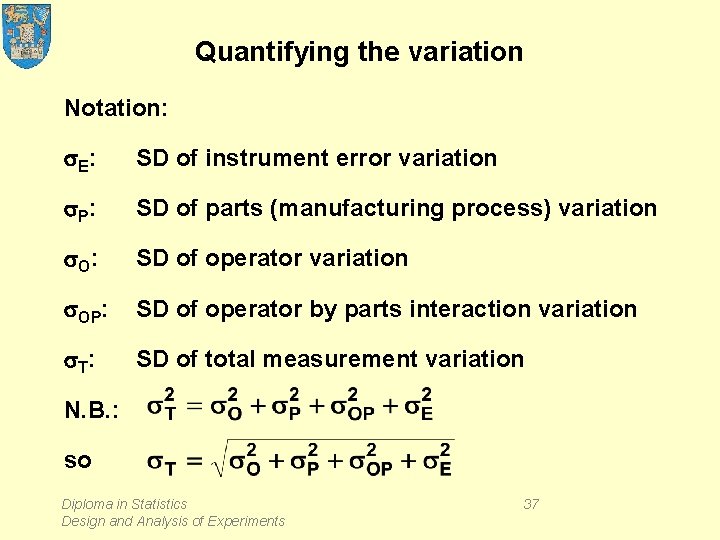

Quantifying the variation

Notation:
- $\sigma_E$: SD of instrument error variation
- $\sigma_P$: SD of parts (manufacturing process) variation
- $\sigma_O$: SD of operator variation
- $\sigma_{OP}$: SD of operator by parts interaction variation
- $\sigma_T$: SD of total measurement variation

N.B.: $\sigma_T^2 = \sigma_E^2 + \sigma_P^2 + \sigma_O^2 + \sigma_{OP}^2$, so $\sigma_T = \sqrt{\sigma_E^2 + \sigma_P^2 + \sigma_O^2 + \sigma_{OP}^2}$

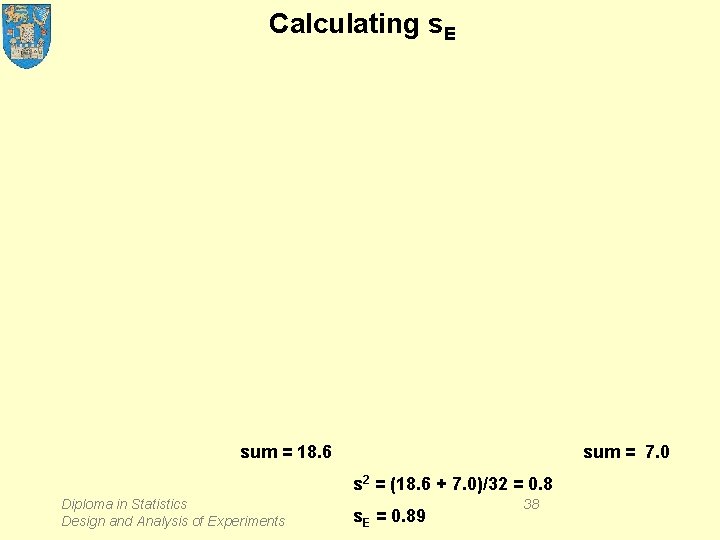

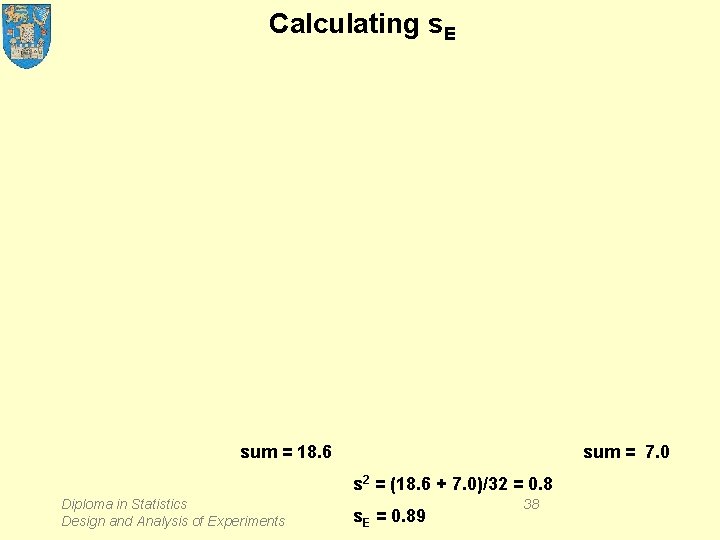

Calculating $\sigma_E$

sum = 18.6, sum = 7.0

$$s^2 = (18.6 + 7.0)/32 = 0.8$$

Estimated $\sigma_E = \sqrt{0.8} = 0.89$

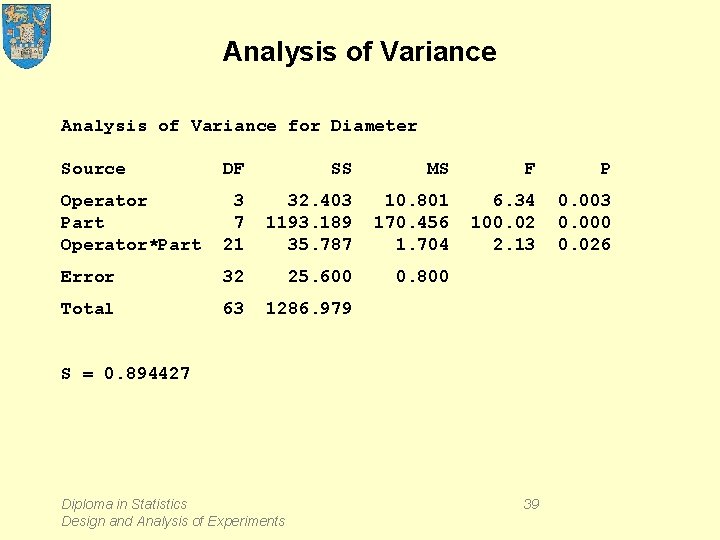

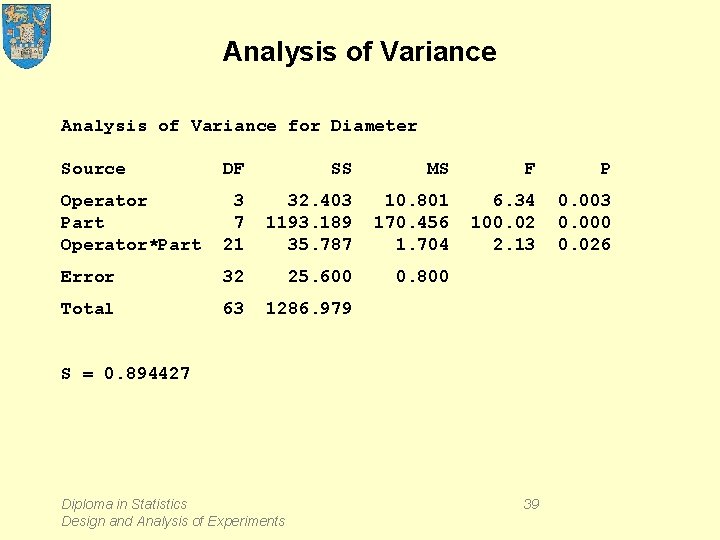

Analysis of Variance for Diameter

| Source | DF | SS | MS | F | P |
|---|---|---|---|---|---|
| Operator | 3 | 32.403 | 10.801 | 6.34 | |
| Part | 7 | 1193.189 | 170.456 | 100.02 | 0.000 |
| Operator*Part | 21 | 35.787 | 1.704 | 2.13 | 0.026 |
| Error | 32 | 25.600 | 0.800 | | |
| Total | 63 | 1286.979 | | | |

S = 0.894427

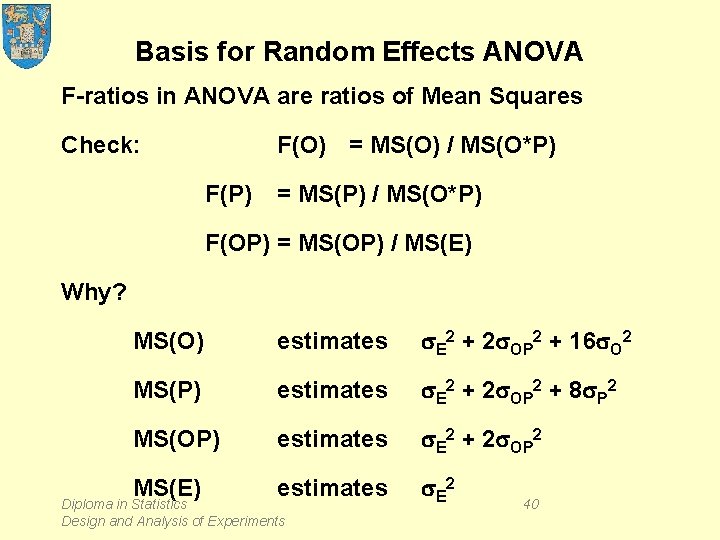

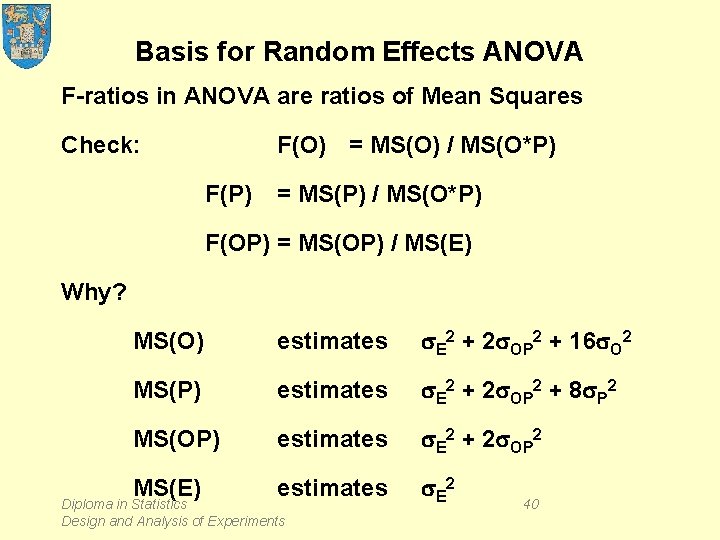

Basis for Random Effects ANOVA

F-ratios in ANOVA are ratios of Mean Squares.

Check:
- F(O) = MS(O) / MS(O*P)
- F(P) = MS(P) / MS(O*P)
- F(OP) = MS(OP) / MS(E)

Why?
- MS(O) estimates $\sigma_E^2 + 2\sigma_{OP}^2 + 16\sigma_O^2$
- MS(P) estimates $\sigma_E^2 + 2\sigma_{OP}^2 + 8\sigma_P^2$
- MS(OP) estimates $\sigma_E^2 + 2\sigma_{OP}^2$
- MS(E) estimates $\sigma_E^2$
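The "Check" on this slide can be carried out directly from the mean squares in the Analysis of Variance for Diameter. This is a minimal sketch with illustrative variable names; small discrepancies in the last digit are expected because the published mean squares are rounded.

```python
# Mean squares from the Analysis of Variance for Diameter.
ms = {"Operator": 10.801, "Part": 170.456, "Operator*Part": 1.704, "Error": 0.800}

# In the random effects model, main effects are tested against the
# interaction mean square, and the interaction against the error mean square.
f_operator = ms["Operator"] / ms["Operator*Part"]
f_part = ms["Part"] / ms["Operator*Part"]
f_interaction = ms["Operator*Part"] / ms["Error"]

print(f"F(O)  = {f_operator:.2f}")
print(f"F(P)  = {f_part:.2f}")
print(f"F(OP) = {f_interaction:.2f}")
```

Each denominator is the mean square whose expectation matches the numerator's expectation with the tested component removed, which is why MS(O*P), not MS(E), appears under the main effects.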

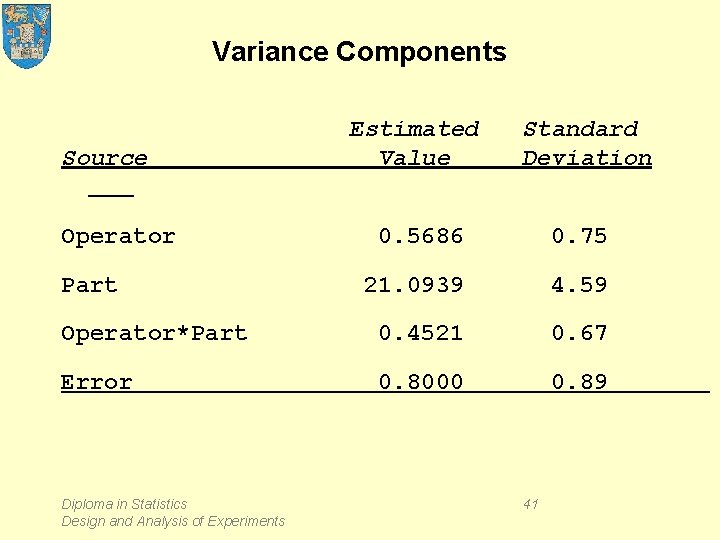

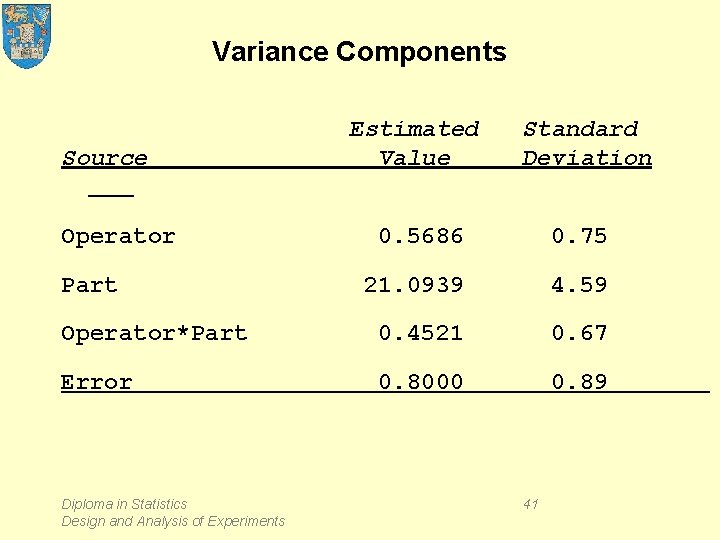

Variance Components

| Source | Estimated Value | Standard Deviation |
|---|---|---|
| Operator | 0.5686 | 0.75 |
| Part | 21.0939 | 4.59 |
| Operator*Part | 0.4521 | 0.67 |
| Error | 0.8000 | 0.89 |
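These variance components follow from the mean squares and their expected values (4 operators, 8 parts, 2 measurements per operator and part). The sketch below solves the four equations in turn; names are illustrative, and results differ from the table in the fourth decimal because the published mean squares are rounded.

```python
from math import sqrt

# Mean squares from the Analysis of Variance for Diameter.
ms = {"Operator": 10.801, "Part": 170.456, "Operator*Part": 1.704, "Error": 0.800}

N_OPERATORS, N_PARTS, N_REPEATS = 4, 8, 2

# Solve MS = expected MS for each component, starting from the error term.
var_error = ms["Error"]
var_interaction = (ms["Operator*Part"] - ms["Error"]) / N_REPEATS
var_operator = (ms["Operator"] - ms["Operator*Part"]) / (N_PARTS * N_REPEATS)
var_part = (ms["Part"] - ms["Operator*Part"]) / (N_OPERATORS * N_REPEATS)

components = {
    "Operator": var_operator,
    "Part": var_part,
    "Operator*Part": var_interaction,
    "Error": var_error,
}
for source, var in components.items():
    print(f"{source:14s} {var:8.4f} {sqrt(var):5.2f}")
```

The divisors 16 and 8 are the number of measurements behind each operator mean (8 parts × 2 repeats) and each part mean (4 operators × 2 repeats).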

Diagnostic Analysis

Diagnostic Analysis

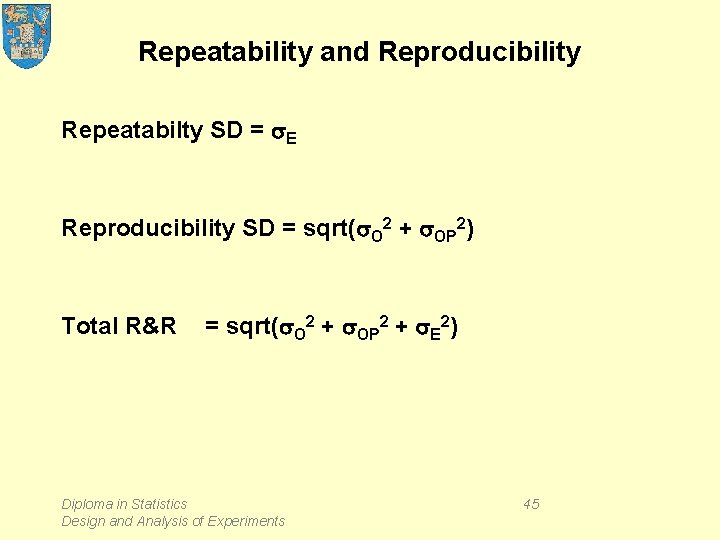

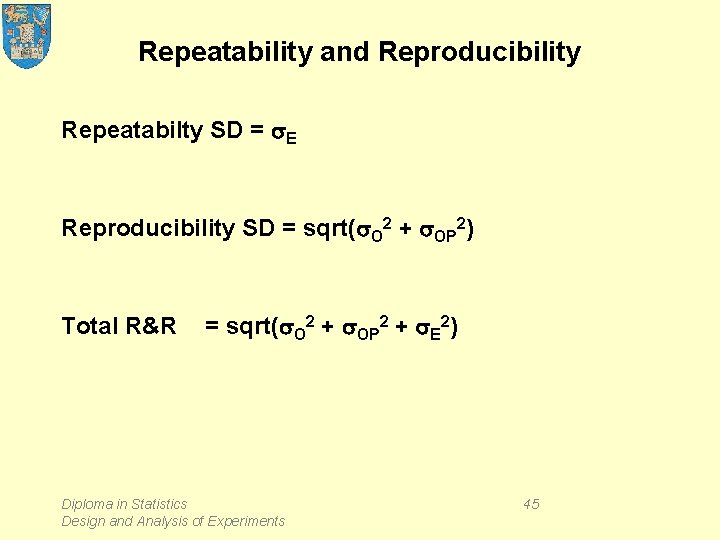

Measurement system capability

If $\sigma_E$ is comparable to $\sigma_P$, the measurement system cannot distinguish between different parts.

Need $\sigma_E \ll \sigma_P$.

Define $\sigma_{TP} = \sqrt{\sigma_E^2 + \sigma_P^2}$.

Capability ratio $= \sigma_{TP} / \sigma_E$ should exceed 5.

Repeatability and Reproducibility

- Repeatability SD $= \sigma_E$
- Reproducibility SD $= \sqrt{\sigma_O^2 + \sigma_{OP}^2}$
- Total R&R $= \sqrt{\sigma_O^2 + \sigma_{OP}^2 + \sigma_E^2}$
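Applying the capability ratio and the repeatability and reproducibility formulas to the estimated variance components for the MicroMeter gives the figures below. This is an illustrative sketch: the inputs are the estimates from the Variance Components table, and the variable names are not from the lecture.

```python
from math import sqrt

# Estimated variance components from the MicroMeter study.
var_operator = 0.5686
var_part = 21.0939
var_interaction = 0.4521
var_error = 0.8000

# Repeatability: instrument error only.
repeatability_sd = sqrt(var_error)
# Reproducibility: operator and operator-by-part variation.
reproducibility_sd = sqrt(var_operator + var_interaction)
# Total R&R combines both.
total_rr_sd = sqrt(var_operator + var_interaction + var_error)

# Capability ratio: sigma_TP / sigma_E, which the slide says should exceed 5.
sd_tp = sqrt(var_error + var_part)
capability_ratio = sd_tp / repeatability_sd

print(f"Repeatability SD   = {repeatability_sd:.2f}")
print(f"Reproducibility SD = {reproducibility_sd:.2f}")
print(f"Total R&R SD       = {total_rr_sd:.2f}")
print(f"Capability ratio   = {capability_ratio:.2f}")
```

On these estimates the capability ratio is a little above 5, so the criterion is met, though without much margin.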

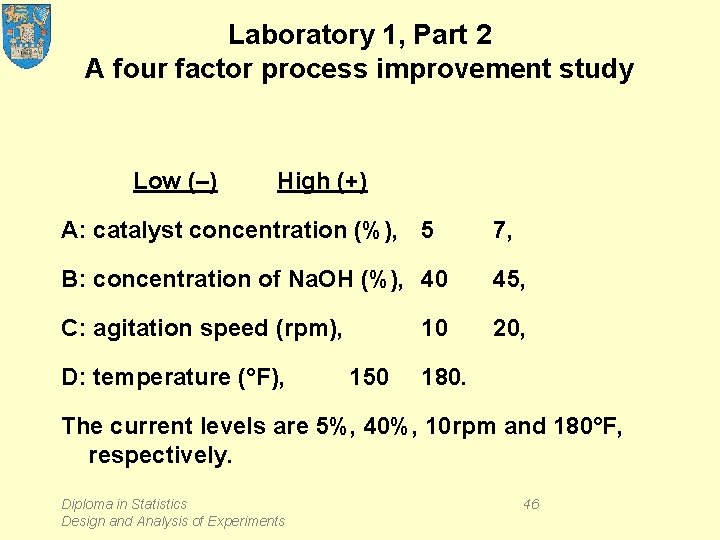

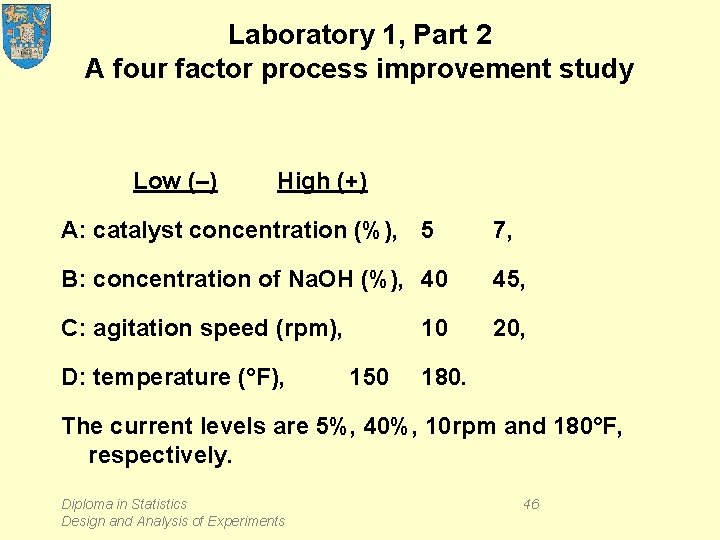

Laboratory 1, Part 2

A four factor process improvement study

| Factor | Low (–) | High (+) |
|---|---|---|
| A: catalyst concentration (%) | 5 | 7 |
| B: concentration of NaOH (%) | 40 | 45 |
| C: agitation speed (rpm) | 10 | 20 |
| D: temperature (°F) | 150 | 180 |

The current levels are 5%, 40%, 10 rpm and 180°F, respectively.
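For reference, the runs of a two-level design in these four factors can be enumerated as follows. This sketch assumes a full 2^4 factorial (16 runs) with factor A varying fastest, and reads the low level of C as 10 rpm, in line with the stated current levels; the actual design used in the laboratory is the one shown on the Design and Results slide.

```python
from itertools import product

# Low and high levels for each factor; C low = 10 rpm is inferred from the
# stated current levels (assumption).
levels = {"A": (5, 7), "B": (40, 45), "C": (10, 20), "D": (150, 180)}

# Enumerate all 2^4 combinations; the last index of product() varies fastest,
# so unpacking as (d, c, b, a) makes factor A change on every run.
runs = []
for d, c, b, a in product((0, 1), repeat=4):
    runs.append(
        {
            "A": levels["A"][a],
            "B": levels["B"][b],
            "C": levels["C"][c],
            "D": levels["D"][d],
        }
    )

for number, run in enumerate(runs, start=1):
    print(number, run)
```

The current operating conditions (5%, 40%, 10 rpm, 180°F) correspond to one of the 16 runs, with A, B and C low and D high.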

Design and Results

Pros and Cons of omitting "insignificant" terms

pro:
- the model is simplified
- the error term has more degrees of freedom, so that s is more precisely estimated
- in small samples, comparisons are more precisely made

Pros and Cons of omitting "insignificant" terms

con:
- statistical insignificance does not imply substantive insignificance, so that
  - when the excluded term has some effect below the statistically significant level, the residual standard deviation is likely to increase, giving less precise comparisons
  - (although this may be a pro if conservative conclusions are valued)
- predictions may be slightly biased.

Reading

- EM, § 1.5.3, § 7.5, § 8.2.1
- MS, Introduction to Measurement Systems Analysis
- BHH, § 9.3