
Derivative-based optimization
Vali Derhami, Yazd University, Computer Department, vderhami@yazd.ac.ir

Outline
- Descent methods
  - Gradient-based methods
- Three well-known gradient-based methods
  - Steepest descent
  - Newton's method
  - Levenberg-Marquardt method
- Nonlinear least-squares problems

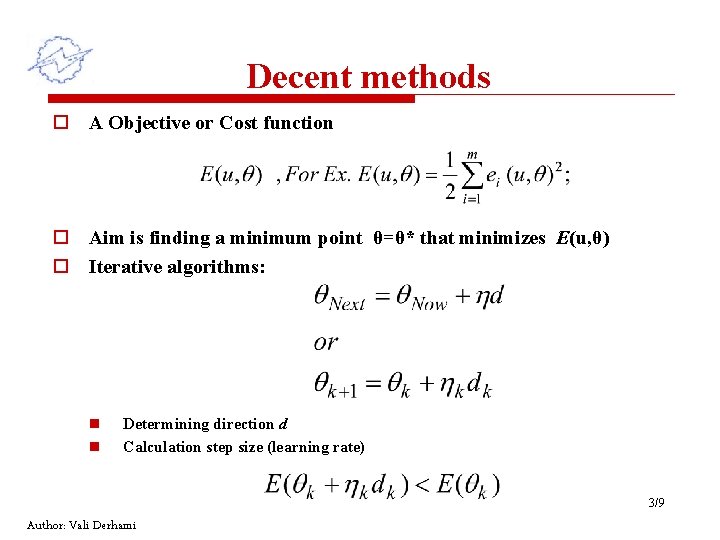

Descent methods
- An objective (or cost) function E(u, θ) is given.
- The aim is to find a minimum point θ = θ* that minimizes E(u, θ).
- Iterative algorithms repeat two steps:
  - Determine the direction d.
  - Calculate the step size (learning rate).
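The two steps of an iterative descent algorithm can be sketched as a short loop. This is a minimal sketch, not the slide's own formula (which is not visible in the text): it assumes the usual update θ_next = θ_now + η·d, and the objective `E`, the helper `neg_gradient`, and the values of `eta` and `steps` are hypothetical choices for illustration.

```python
def descend(theta, direction_fn, eta, steps):
    """Repeat theta_next = theta_now + eta * d for a fixed number of steps."""
    for _ in range(steps):
        d = direction_fn(theta)                              # step 1: choose a direction d
        theta = [t + eta * di for t, di in zip(theta, d)]    # step 2: move by step size eta
    return theta


# Hypothetical objective (assumption): E(theta) = (theta_0 - 3)^2 + (theta_1 + 1)^2
def E(theta):
    return (theta[0] - 3.0) ** 2 + (theta[1] + 1.0) ** 2


# One possible direction rule: the negative gradient of E
def neg_gradient(theta):
    return [-2.0 * (theta[0] - 3.0), -2.0 * (theta[1] + 1.0)]


theta_star = descend([0.0, 0.0], neg_gradient, eta=0.1, steps=100)
```

Here the direction rule is passed in as a function, so the same loop can host any of the methods listed in the outline.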

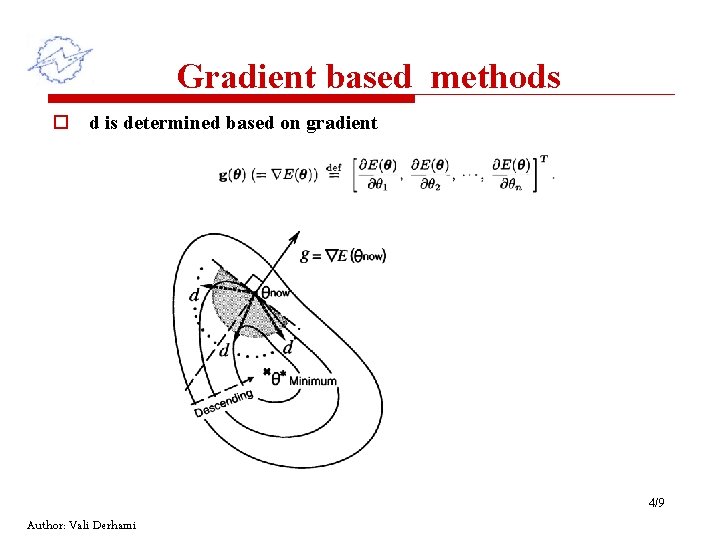

Gradient-based methods
- The direction d is determined from the gradient of the objective function.
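One way to see why the gradient is useful for choosing d: a direction points downhill, to first order, when its inner product with the gradient g is negative. The sketch below checks that condition; the objective, the helper names, and the test directions are assumptions made for illustration.

```python
def gradient(theta):
    # Analytic gradient of the hypothetical E(theta) = theta_0^2 + 10 * theta_1^2
    return [2.0 * theta[0], 20.0 * theta[1]]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def is_descent_direction(g, d):
    """True when the directional derivative g . d is negative."""
    return dot(g, d) < 0.0


g = gradient([1.0, 1.0])           # gradient at the current point
d_neg_grad = [-gi for gi in g]     # the negative gradient
d_uphill = [1.0, 0.0]              # a direction that increases E at this point
```

The negative gradient always passes this check when g is nonzero, since g · (−g) = −‖g‖² < 0.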

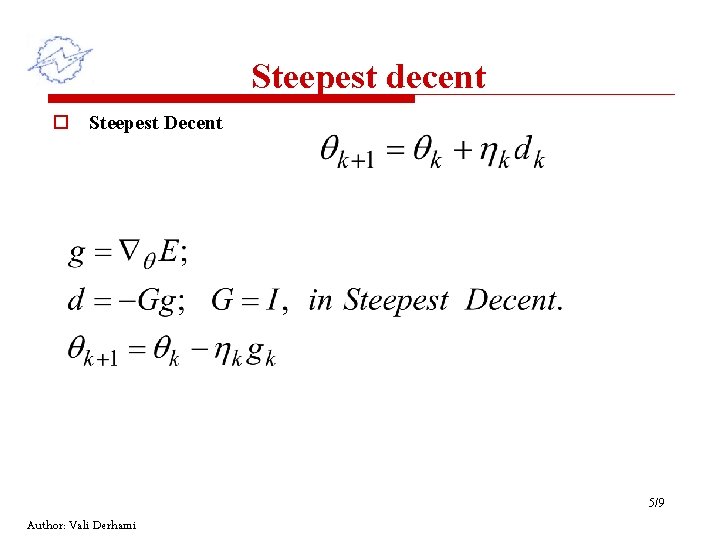

Steepest descent
- Steepest descent: the direction d is the negative gradient, d = -g.
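A minimal sketch of steepest descent, assuming the standard update θ_next = θ_now − η·g. The quadratic objective and the two learning rates are hypothetical; they are chosen to show that the method is sensitive to η.

```python
def steepest_descent(grad, theta, eta, steps):
    """Move against the gradient with a fixed learning rate eta."""
    for _ in range(steps):
        g = grad(theta)
        theta = [t - eta * gi for t, gi in zip(theta, g)]
    return theta


def grad(theta):
    # Gradient of the hypothetical E(theta) = theta_0^2 + 10 * theta_1^2
    return [2.0 * theta[0], 20.0 * theta[1]]


# eta = 0.09 shrinks both coordinates at every step
converged = steepest_descent(grad, [1.0, 1.0], eta=0.09, steps=200)

# eta = 0.11 multiplies theta_1 by (1 - 20 * 0.11) = -1.2 each step, so it grows
diverged = steepest_descent(grad, [1.0, 1.0], eta=0.11, steps=200)
```

For this objective the iteration is stable only while |1 − 20η| < 1, that is η < 0.1, which is why the choice of learning rate matters.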

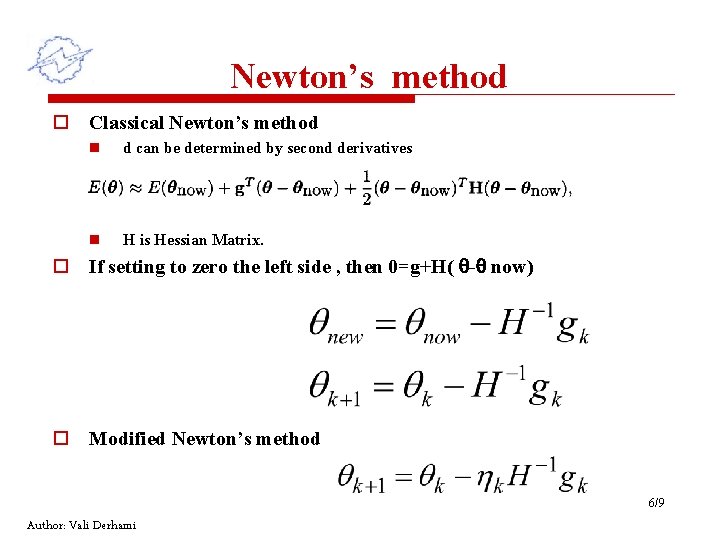

Newton's method
- Classical Newton's method
  - The direction d is determined using second derivatives of the objective function.
  - H is the Hessian matrix.
- Setting the derivative of the quadratic approximation of E to zero gives 0 = g + H(θ − θ_now), where g is the gradient at θ_now.
- Modified Newton's method
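Solving 0 = g + H(θ − θ_now) for θ gives θ = θ_now − H⁻¹g. The sketch below applies that step to a two-parameter problem. The objective, the helper `solve_2x2`, and the `eta` argument (a common way to write a modified Newton step, assumed here because the slide's own formula is not visible in the text) are illustrative assumptions.

```python
def solve_2x2(A, b):
    """Solve A x = b for a 2x2 matrix A using Cramer's rule."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    x0 = (b[0] * A[1][1] - A[0][1] * b[1]) / det
    x1 = (A[0][0] * b[1] - A[1][0] * b[0]) / det
    return [x0, x1]


def newton_step(theta, g, H, eta=1.0):
    """theta_next = theta_now + eta * d, where d solves H d = -g.

    eta = 1 is the classical step; eta != 1 is one assumed form of a modified step.
    """
    d = solve_2x2(H, [-g[0], -g[1]])
    return [t + eta * di for t, di in zip(theta, d)]


# Hypothetical quadratic: E(theta) = theta_0^2 + 10 * theta_1^2 + theta_0 * theta_1
theta_now = [1.0, 1.0]
g = [2.0 * theta_now[0] + theta_now[1], 20.0 * theta_now[1] + theta_now[0]]
H = [[2.0, 1.0], [1.0, 20.0]]

theta_next = newton_step(theta_now, g, H)
```

Because this objective is exactly quadratic, a single classical step lands on the minimum at the origin; for a general E the step is repeated from the new point.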

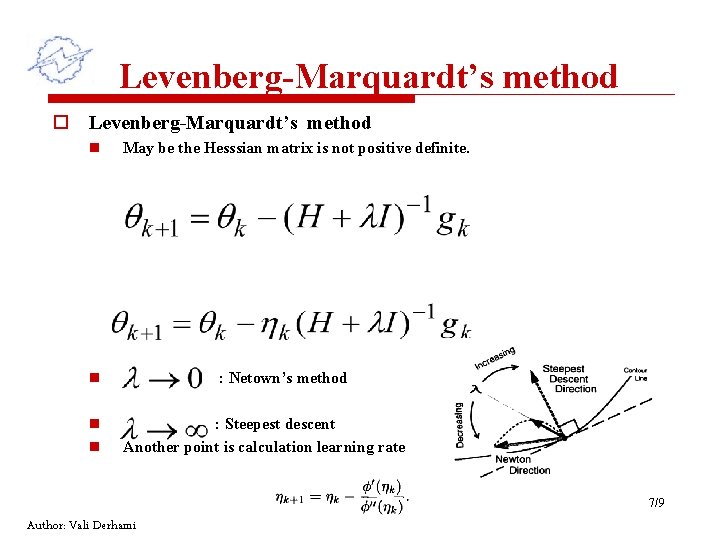

Levenberg-Marquardt method
- Levenberg-Marquardt method
  - The Hessian matrix may not be positive definite, so H is replaced by H + λI with λ ≥ 0.
  - Small λ (λ → 0): Newton's method
  - Large λ: steepest descent
  - Another issue is calculating the learning rate.
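The effect of the damping term can be sketched with a small example. This assumes the common form in which the direction solves (H + λI)d = −g; the indefinite Hessian, the gradient values, and the helper names are hypothetical.

```python
def solve_2x2(A, b):
    """Solve A x = b for a 2x2 matrix A using Cramer's rule."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    x0 = (b[0] * A[1][1] - A[0][1] * b[1]) / det
    x1 = (A[0][0] * b[1] - A[1][0] * b[0]) / det
    return [x0, x1]


def lm_direction(H, g, lam):
    """Direction d solving (H + lam * I) d = -g."""
    damped = [[H[0][0] + lam, H[0][1]],
              [H[1][0], H[1][1] + lam]]
    return solve_2x2(damped, [-g[0], -g[1]])


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# Hypothetical indefinite Hessian (one negative eigenvalue) and gradient
H = [[2.0, 0.0], [0.0, -1.0]]
g = [1.0, 2.0]

d_newton = lm_direction(H, g, lam=0.0)     # lam = 0 reduces to the Newton direction
d_damped = lm_direction(H, g, lam=3.0)     # H + 3I is positive definite
d_large = lm_direction(H, g, lam=1e6)      # approximately -g / lam
```

With λ = 0 the direction has g · d > 0 here, so it points uphill; with λ = 3 the damped matrix is positive definite and g · d < 0. For very large λ the direction is close to −g/λ, a short steepest-descent step.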

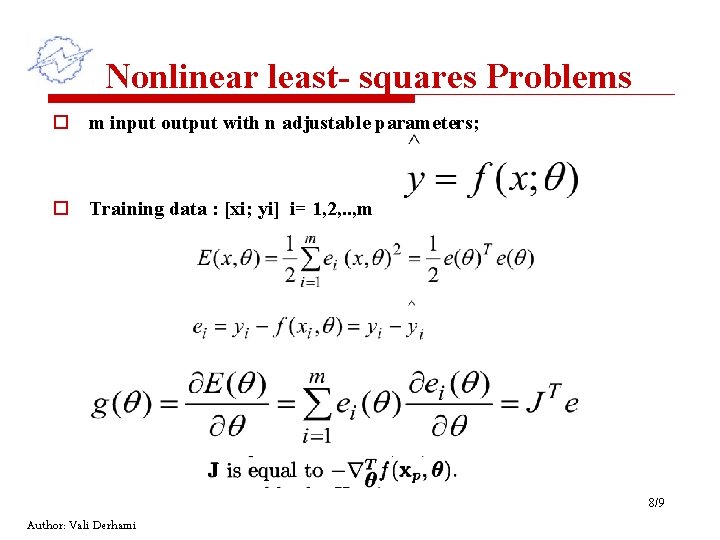

Nonlinear least-squares problems
- m input-output data pairs with n adjustable parameters.
- Training data: [x_i; y_i], i = 1, 2, ..., m
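A minimal sketch of such a problem, assuming the usual sum-of-squares objective E(θ) = Σ (y_i − f(x_i, θ))². The model f(x; a, b) = a·exp(b·x), the data, and the function names are hypothetical; here m = 4 and n = 2.

```python
import math


def model(x, theta):
    # Hypothetical nonlinear model with n = 2 adjustable parameters (a, b)
    a, b = theta
    return a * math.exp(b * x)


def sum_squared_error(xs, ys, theta):
    """E(theta) = sum over i of (y_i - f(x_i, theta))^2."""
    return sum((y - model(x, theta)) ** 2 for x, y in zip(xs, ys))


def gradient(xs, ys, theta):
    """Analytic gradient of E with respect to (a, b)."""
    a, b = theta
    ga = gb = 0.0
    for x, y in zip(xs, ys):
        r = y - a * math.exp(b * x)            # residual for this training pair
        ga += -2.0 * r * math.exp(b * x)       # dE/da contribution
        gb += -2.0 * r * a * x * math.exp(b * x)  # dE/db contribution
    return [ga, gb]


# m = 4 training pairs generated from the assumed parameters a = 2, b = 0.5
xs = [0.0, 1.0, 2.0, 3.0]
ys = [2.0 * math.exp(0.5 * x) for x in xs]

E_true = sum_squared_error(xs, ys, [2.0, 0.5])
E_guess = sum_squared_error(xs, ys, [1.5, 0.4])
g_guess = gradient(xs, ys, [1.5, 0.4])
```

The gradient computed this way is what any of the gradient-based methods above would consume when fitting the parameters.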

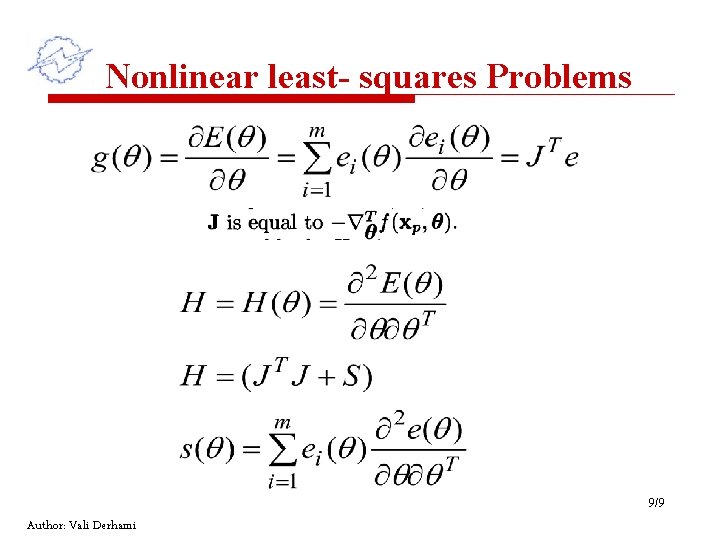

Nonlinear least-squares problems

- Slides: 9