Deployment, Deployment

March 2002

Randy Burris, Center for Computational Sciences, Oak Ridge National Laboratory

OAK RIDGE NATIONAL LABORATORY, U.S. DEPARTMENT OF ENERGY

Overview of this presentation

- Our goal: let scientists (our customers) do science without worrying about their computer environment
- Our clientele:
  - Four disciplines (climate, astrophysics, genomics and proteomics, high-energy physics)
  - National labs and universities
  - Using resources all over the country
  - Residing all over the place
- We must deploy the result (“Deploy or die”)

Well, OK. But…deploy what?

- Where are the commonalities in our space?
  - Security and trust: nonexistent to extreme
  - Network connectivity: dialup to OC-12
  - File sizes: bytes to terabytes
  - File location: local unit to partitions around the world
  - Visualization: static to dynamic real-time
  - And so on.
- We can’t do it all.
- So exactly what are we going to deploy?
- And how should we proceed?

Achieving successful deployment

- For each of the 4 projects, define basic steps:
  - Define target environment(s)
  - Characterize successful deployment (in each)
  - Prototype in a close-to-production environment
  - Deploy in production
- In parallel with the above:
  - Produce documentation at every step
  - Develop tools for support staff
- Start now.

Step 1: Define target environment(s)

- We cannot support all combinations.
  - Security: {DCE, Kerberos, PKI, gss}, firewalls, …
  - Compute resource: MPP, cluster, workstation, …
  - User platform: MPP, cluster, Unix/Linux, Windows, …
  - Storage
    - Storage resource: HPSS, PVFS, …?
    - User API for access to data
    - NetCDF, HDF5, both, something else?
    - HRM, pftp, GridFTP, hsi, …
  - Network
    - WAN: GigE/jumbo, FastE, OC-12, OC-3, ESnet, hops, …
    - LAN: GigE, FastE, iSCSI, FibreChannel, …
  - Visualization: CAVE, workstations, Palm Pilots, …
- We will have to choose.
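To see why choosing is unavoidable, the option lists on this slide can be multiplied out. The sketch below is illustrative only: it assumes exactly one choice per category, uses only the options the slide names (each list trails off with "…", so the real count is higher), and the names `ENVIRONMENT_OPTIONS`, `count_combinations` and `enumerate_combinations` are hypothetical.

```python
from itertools import product
from math import prod

# Options named on the slide. Every list there ends in "...", so this is an
# illustrative lower bound, not a complete inventory of environments.
ENVIRONMENT_OPTIONS = {
    "security": ["DCE", "Kerberos", "PKI", "gss"],
    "compute": ["MPP", "cluster", "workstation"],
    "user_platform": ["MPP", "cluster", "Unix/Linux", "Windows"],
    "storage": ["HPSS", "PVFS"],
    "data_api": ["NetCDF", "HDF5"],
    "transfer_tool": ["HRM", "pftp", "GridFTP", "hsi"],
    "wan": ["GigE/jumbo", "FastE", "OC-12", "OC-3"],
    "lan": ["GigE", "FastE", "iSCSI", "FibreChannel"],
    "visualization": ["CAVE", "workstation", "Palm Pilot"],
}


def count_combinations(options):
    """Number of distinct environments if one option is picked per category."""
    return prod(len(choices) for choices in options.values())


def enumerate_combinations(options):
    """Yield each candidate environment as a dict mapping category to choice."""
    categories = list(options)
    for values in product(*options.values()):
        yield dict(zip(categories, values))


if __name__ == "__main__":
    print("candidate environments:", count_combinations(ENVIRONMENT_OPTIONS))
```

Even this truncated inventory yields tens of thousands of candidate environments, which is the practical argument for picking a small set of targets and documenting them.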

Step 2: Characterize successful deployment

- A. Correct operation in the security environment
- B. Optimized performance in the target network environment
- C. Rugged infrastructure
- D. Unobtrusive infrastructure
- E. Thorough documentation for users and support staff

Step 2: Characterize A: Security

- I believe we must define the environment into which we intend to deploy.
  - Starting now
  - Because it will take a long time and will almost certainly require development.
- Questions to which we need answers:
  - Are we concerned with DOE sites or DOE+NSF+…?
  - Are there circumstances where clear-text passwords are OK? Where no security is OK?
  - Must we support authentication in PKI, GSI, DCE and/or Kerberos?
  - Will all of our infrastructure work with firewalls at one or both ends of a transfer? Whose firewalls, what filtering parameters, …

Step 2: Characterize B: Network

- On what network are the end nodes?
  - What is our target environment: ESnet, ESnet+Internet2, Grid, WWW, …
- What throughput is needed for effective science?
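One way to approach the throughput question is simple arithmetic on file size and link rate. The sketch below is a back-of-the-envelope aid, not a measurement: the link rates are nominal line rates, the `efficiency` parameter is an assumed fudge factor for protocol and end-host overhead, and all names are hypothetical.

```python
# Nominal line rates in megabits per second (decimal: 1 Mbit = 1e6 bits).
LINK_RATES_MBPS = {
    "dialup (56k)": 0.056,
    "Fast Ethernet": 100.0,
    "OC-3": 155.52,
    "OC-12": 622.08,
    "Gigabit Ethernet": 1000.0,
}


def transfer_seconds(size_bytes, rate_mbps, efficiency=1.0):
    """Ideal time to move size_bytes over a link of rate_mbps.

    efficiency is the assumed fraction of the line rate actually achieved
    (1.0 means the full nominal rate, which real transfers rarely reach).
    """
    if rate_mbps <= 0:
        raise ValueError("rate_mbps must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return (size_bytes * 8) / (rate_mbps * 1e6 * efficiency)


def required_mbps(size_bytes, deadline_seconds):
    """Sustained rate needed to move size_bytes within deadline_seconds."""
    if deadline_seconds <= 0:
        raise ValueError("deadline_seconds must be positive")
    return (size_bytes * 8) / deadline_seconds / 1e6


if __name__ == "__main__":
    one_terabyte = 10**12  # decimal terabyte, in bytes
    for name, rate in LINK_RATES_MBPS.items():
        hours = transfer_seconds(one_terabyte, rate) / 3600
        print(f"{name:>16}: {hours:10.2f} hours per TB at line rate")
```

At the nominal OC-12 line rate, a 1 TB file (10^12 bytes) takes roughly 3.6 hours, and moving the same file in one hour would need a sustained rate above 2 Gbit/s. Real transfers will be slower than these figures, so the numbers are best read as upper bounds on what a given link can deliver.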

Step 2: Characterize C: Rugged

- Must not crash (of course)
- Must be in service when needed
- Must be secure
- Must have a support plan (which does not require an army of support people)
- Must have a trouble-resolution mechanism and resources
- Must be survivable over normal maintenance
  - System software patches and upgrades
  - Equipment upgrades

Step 2: Characterize D: Unobtrusive

- User should need minimal knowledge
- The deeper the infrastructure, the less the user should need to know
- User should be protected from mistakes
  - Try not to let the user screw things up
  - Documentation and real-time warnings
  - Effective defaults

Step 2: Characterize E: Documentation

- White papers to inform larger community
- For users: how-to-use documents
- For system-admin staff:
  - How to install, debug, maintain, troubleshoot
- For user-support staff:
  - How to troubleshoot
  - Tuning knobs
- For programmers:
  - Overview documents to give context
  - Correct interface documents
  - Correct documentation for all appropriate platforms

Step 3: Prototype in close-to-production environment

- Example of deployment approach on Probe:
  - Deploy early prototypes in Oak Ridge and NERSC
    - Use Probe, Probe HPSS, Production HPSS and supercomputers
    - Use (and require) documented code and procedures
  - As development progresses, evaluate and address deployment issues such as security, network performance, system-admin documentation
  - As prototype becomes more robust, migrate more functions to Oak Ridge and NERSC production environments
  - Continue to evaluate and address deployment issues that now include user and user-support documentation
  - Iterate as necessary
- When this sequence is done, you’re in production.

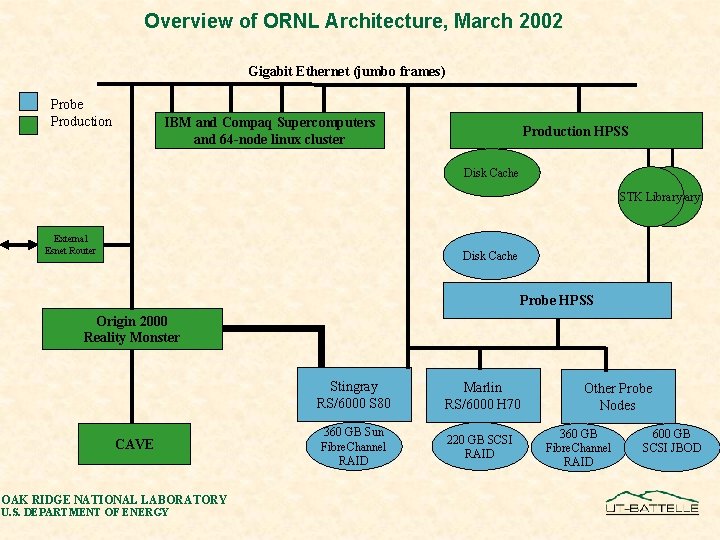

Overview of ORNL Architecture, March 2002

[Diagram. Labels: Gigabit Ethernet (jumbo frames); Probe; Production; IBM and Compaq supercomputers and 64-node Linux cluster; Production HPSS; Probe HPSS; disk caches; STK library; external ESnet router; Origin 2000 Reality Monster; CAVE; Stingray RS/6000 S80; Marlin RS/6000 H70; other Probe nodes; 360 GB Sun FibreChannel RAID; 220 GB SCSI RAID; 360 GB FibreChannel RAID; 600 GB SCSI JBOD.]

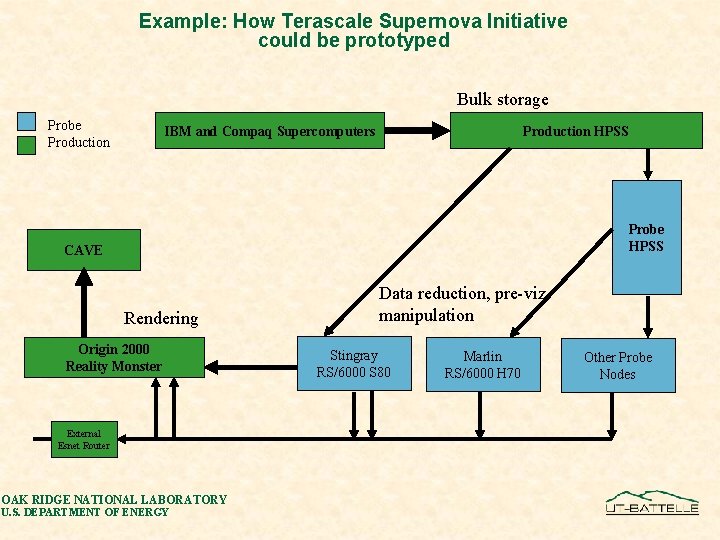

Example: How Terascale Supernova Initiative could be prototyped

[Diagram. Role labels: bulk storage; rendering; data reduction, pre-viz manipulation. Component labels: Probe; Production; IBM and Compaq supercomputers; Production HPSS; Probe HPSS; CAVE; Origin 2000 Reality Monster; external ESnet router; Stingray RS/6000 S80; Marlin RS/6000 H70; other Probe nodes.]

We should start right away:

- Select initial, intermediate and ultimate target environments
  - Including supported applications, platforms, security and target network
  - Describe in a white paper
- Seek common elements in supported applications
  - Develop a deployment plan for common elements
  - Write white paper describing deployment plan
    - Specify our approach to deploying support for those elements
    - Identify un-met requirements, and how to remedy them
    - Describe approach to ruggedness and unobtrusiveness
- Address non-common elements in supported applications
  - Seek to minimize their impact
  - Specify our approach to deploying support for those elements
  - Develop deployment plans and describe them
  - Write white paper describing deployment plan

DISCUSSION?

Serious questions for early resolution

- What is the role of HPSS?
  - HPSS will never be pervasive: it is expensive.
  - Treat HPSS sites as primary repositories?
- Which file transfer protocol(s) do we support?
  - GridFTP, pftp, hsi

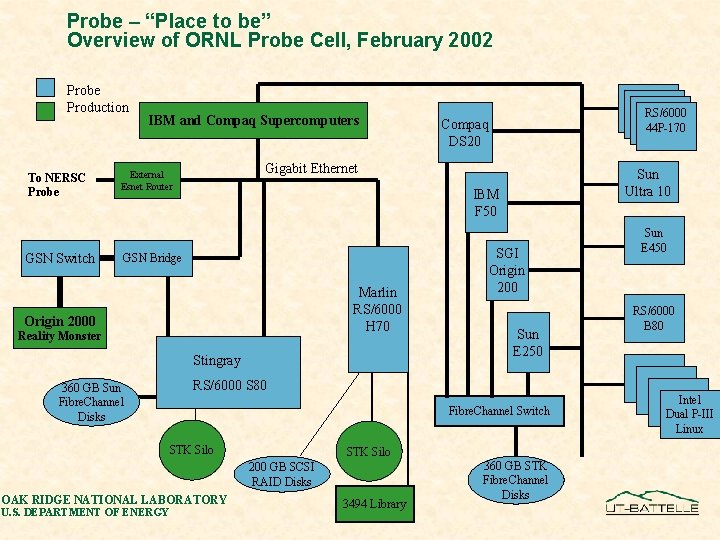

Probe: “Place to be”

Overview of ORNL Probe Cell, February 2002

[Diagram. Labels: Probe; Production; link to NERSC; Probe GSN switch; GSN bridge; Gigabit Ethernet; FibreChannel switch; external ESnet router; IBM and Compaq supercomputers; Marlin RS/6000 H70; Stingray RS/6000 S80; Origin 2000 Reality Monster; SGI Origin 200; Sun E250; Sun E450; Sun Ultra 10; IBM F50; RS/6000 44P-170; RS/6000 B80; Compaq DS20; Intel dual P-III Linux; STK silo; 3494 library; 200 GB SCSI RAID disks; 360 GB Sun FibreChannel disks; 360 GB STK FibreChannel disks.]

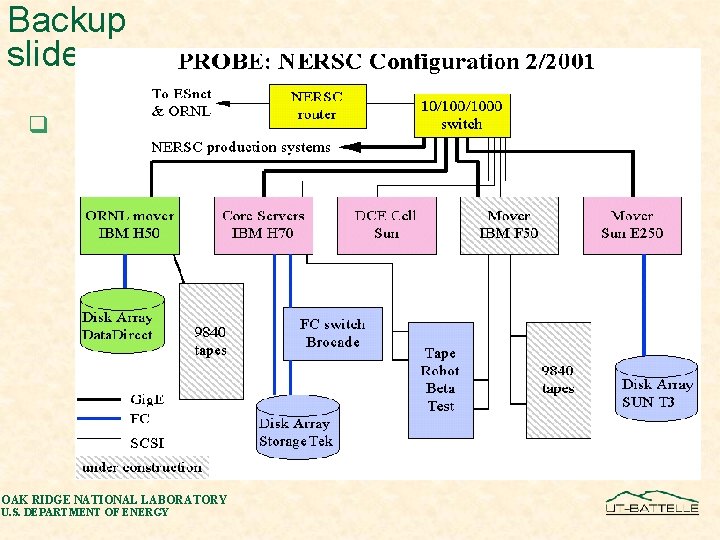

Backup slide

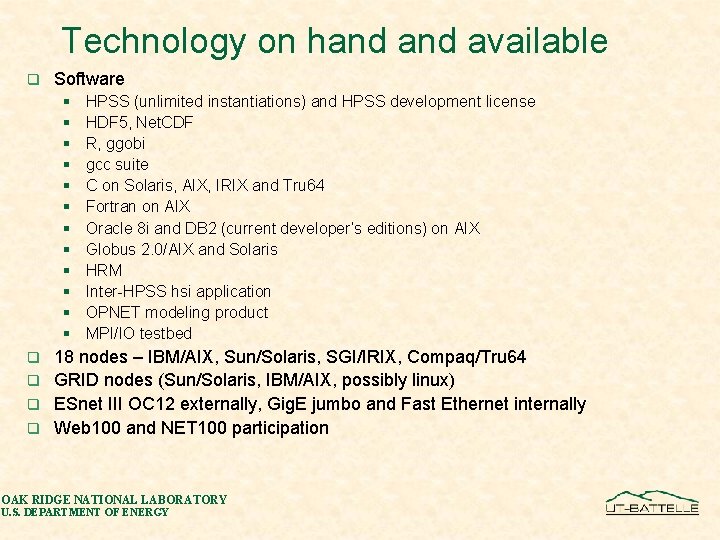

Technology available on hand

- Software
  - HPSS (unlimited instantiations) and HPSS development license
  - HDF5, NetCDF
  - R, ggobi
  - gcc suite
  - C on Solaris, AIX, IRIX and Tru64
  - Fortran on AIX
  - Oracle 8i and DB2 (current developer’s editions) on AIX
  - Globus 2.0/AIX and Solaris
  - HRM
  - Inter-HPSS hsi application
  - OPNET modeling product
  - MPI/IO testbed
- 18 nodes: IBM/AIX, Sun/Solaris, SGI/IRIX, Compaq/Tru64
- GRID nodes (Sun/Solaris, IBM/AIX, possibly Linux)
- ESnet III OC-12 externally, GigE jumbo and Fast Ethernet internally
- Web100 and Net100 participation

- Slides: 20