Dependency Models abstraction of Probability distributions A dependency

Dependency Models – abstraction of Probability distributions A dependency model M over a finite set of elements U is a rule that assigns truth values to the predicate IM(X, Z, Y) where X, Y, Z are (disjoint) subsets of U. 1

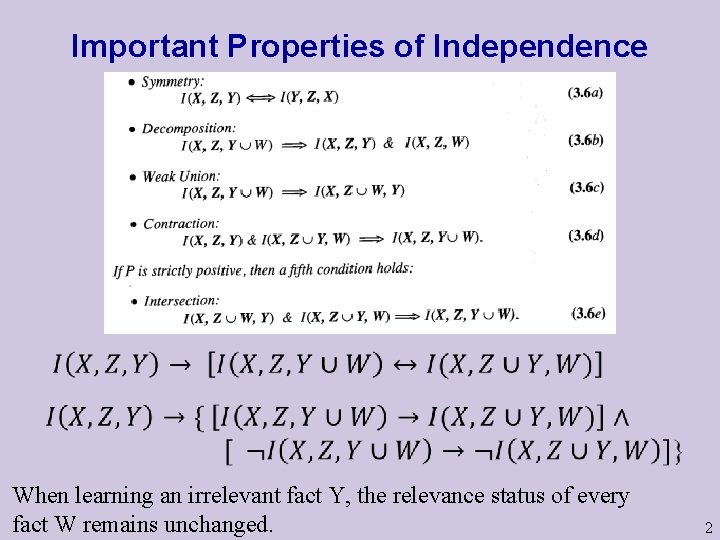

Important Properties of Independence When learning an irrelevant fact Y, the relevance status of every fact W remains unchanged. 2

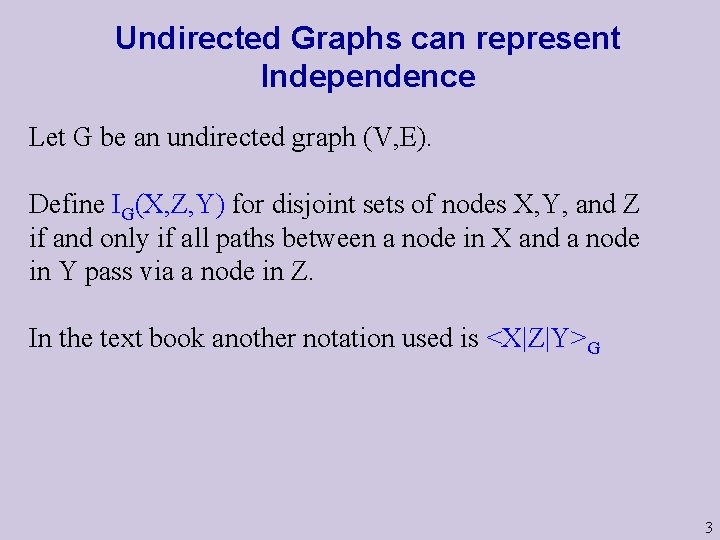

Undirected Graphs can represent Independence Let G be an undirected graph (V, E). Define IG(X, Z, Y) for disjoint sets of nodes X, Y, and Z if and only if all paths between a node in X and a node in Y pass via a node in Z. In the text book another notation used is <X|Z|Y>G 3

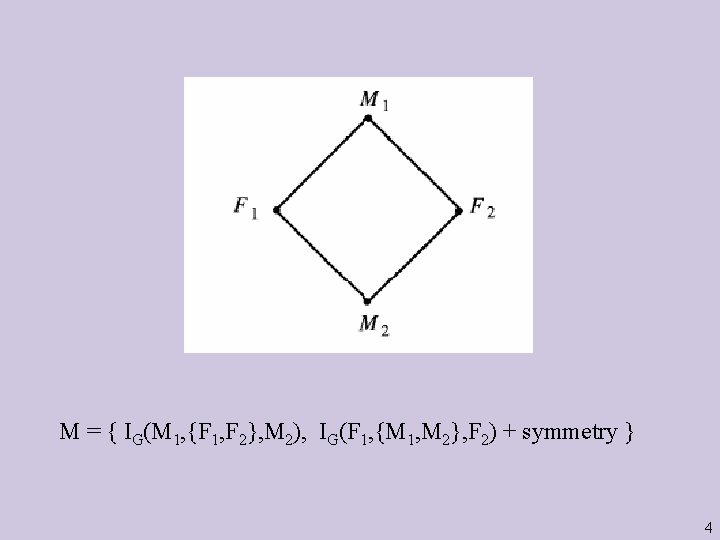

M = { IG(M 1, {F 1, F 2}, M 2), IG(F 1, {M 1, M 2}, F 2) + symmetry } 4

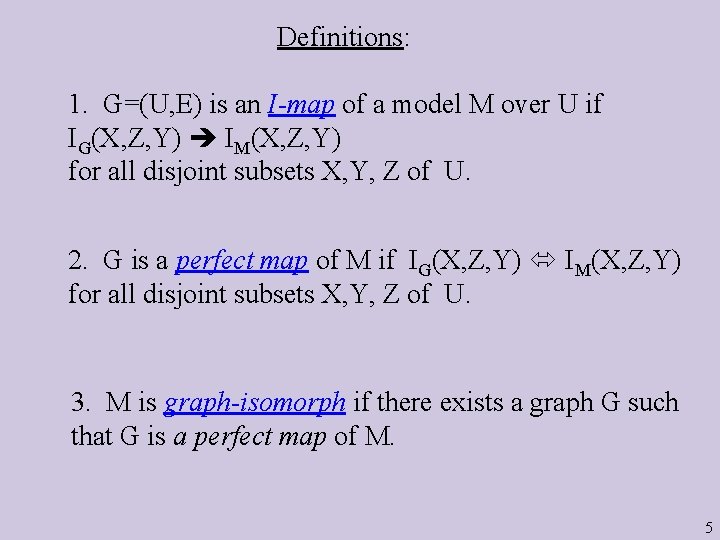

Definitions: 1. G=(U, E) is an I-map of a model M over U if IG(X, Z, Y) IM(X, Z, Y) for all disjoint subsets X, Y, Z of U. 2. G is a perfect map of M if IG(X, Z, Y) IM(X, Z, Y) for all disjoint subsets X, Y, Z of U. 3. M is graph-isomorph if there exists a graph G such that G is a perfect map of M. 5

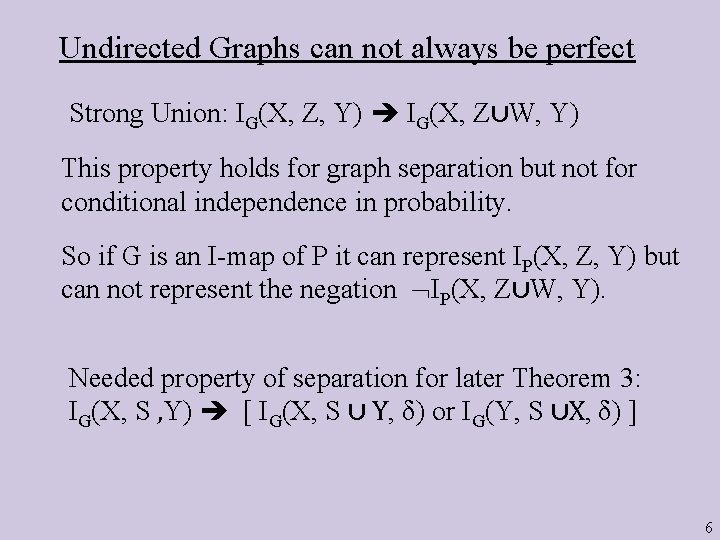

Undirected Graphs can not always be perfect Strong Union: IG(X, Z, Y) IG(X, Z∪W, Y) This property holds for graph separation but not for conditional independence in probability. So if G is an I-map of P it can represent IP(X, Z, Y) but can not represent the negation IP(X, Z∪W, Y). Needed property of separation for later Theorem 3: IG(X, S , Y) [ IG(X, S ∪ Y, δ) or IG(Y, S ∪X, δ) ] 6

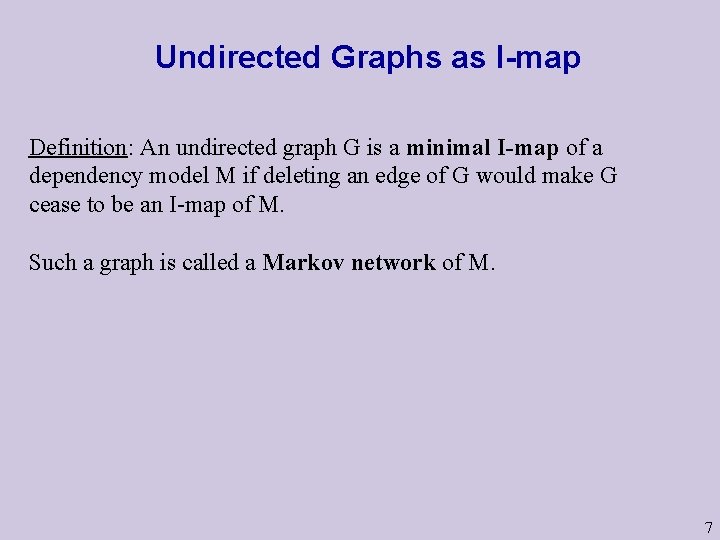

Undirected Graphs as I-map Definition: An undirected graph G is a minimal I-map of a dependency model M if deleting an edge of G would make G cease to be an I-map of M. Such a graph is called a Markov network of M. 7

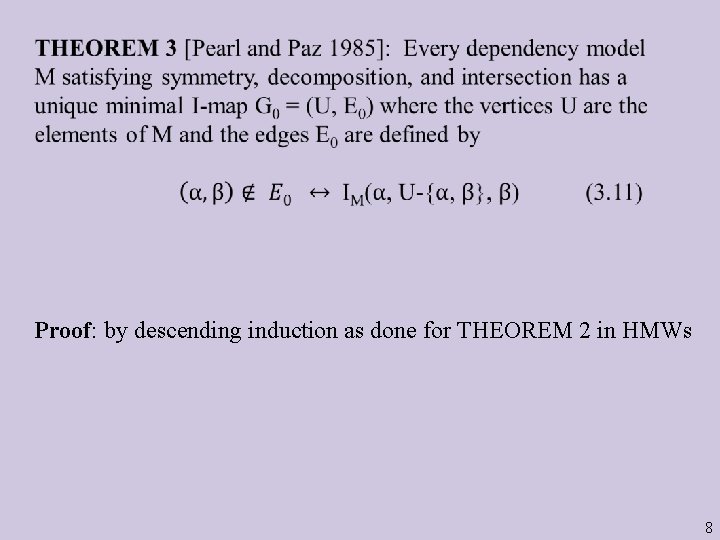

Proof: by descending induction as done for THEOREM 2 in HMWs 8

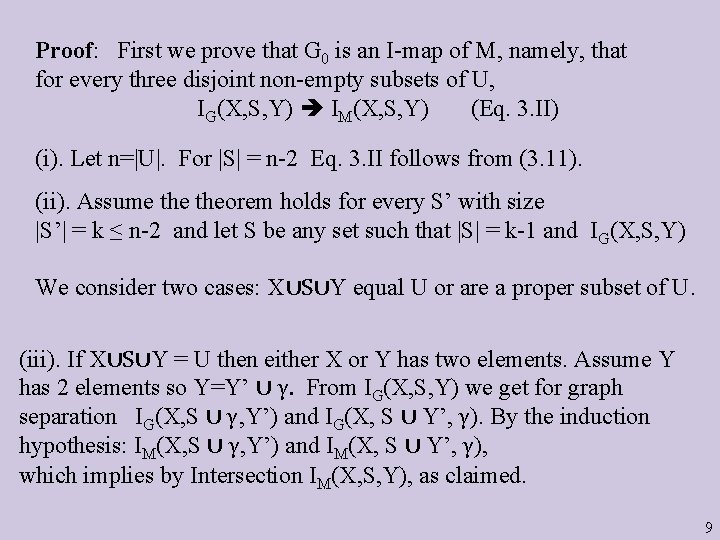

Proof: First we prove that G 0 is an I-map of M, namely, that for every three disjoint non-empty subsets of U, IG(X, S, Y) IM(X, S, Y) (Eq. 3. II) (i). Let n=|U|. For |S| = n-2 Eq. 3. II follows from (3. 11). (ii). Assume theorem holds for every S’ with size |S’| = k ≤ n-2 and let S be any set such that |S| = k-1 and IG(X, S, Y) We consider two cases: X∪S∪Y equal U or are a proper subset of U. (iii). If X∪S∪Y = U then either X or Y has two elements. Assume Y has 2 elements so Y=Y’ ∪ γ. From IG(X, S, Y) we get for graph separation IG(X, S ∪ γ, Y’) and IG(X, S ∪ Y’, γ). By the induction hypothesis: IM(X, S ∪ γ, Y’) and IM(X, S ∪ Y’, γ), which implies by Intersection IM(X, S, Y), as claimed. 9

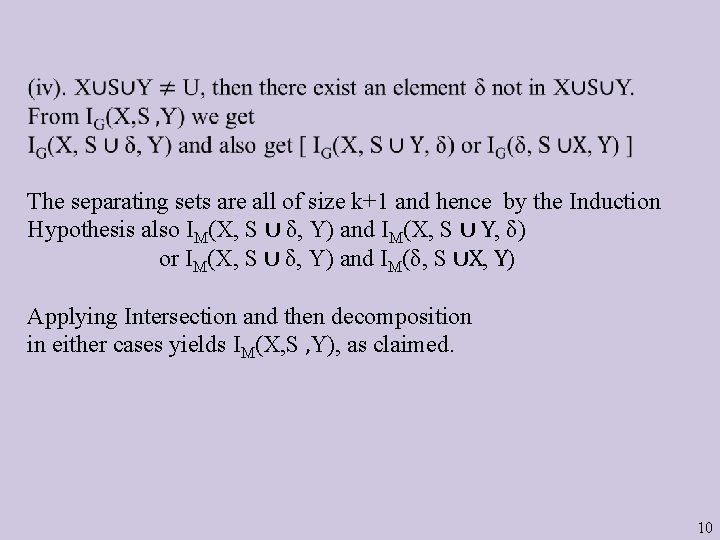

The separating sets are all of size k+1 and hence by the Induction Hypothesis also IM(X, S ∪ δ, Y) and IM(X, S ∪ Y, δ) or IM(X, S ∪ δ, Y) and IM(δ, S ∪X, Y) Applying Intersection and then decomposition in either cases yields IM(X, S , Y), as claimed. 10

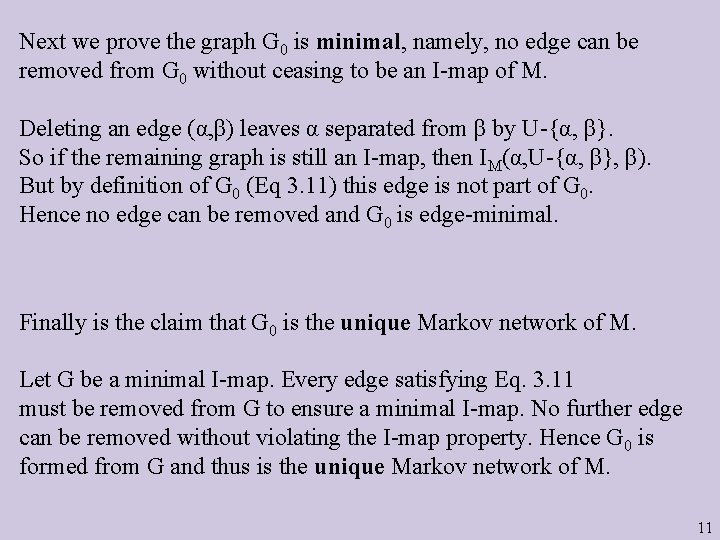

Next we prove the graph G 0 is minimal, namely, no edge can be removed from G 0 without ceasing to be an I-map of M. Deleting an edge (α, β) leaves α separated from β by U-{α, β}. So if the remaining graph is still an I-map, then IM(α, U-{α, β}, β). But by definition of G 0 (Eq 3. 11) this edge is not part of G 0. Hence no edge can be removed and G 0 is edge-minimal. Finally is the claim that G 0 is the unique Markov network of M. Let G be a minimal I-map. Every edge satisfying Eq. 3. 11 must be removed from G to ensure a minimal I-map. No further edge can be removed without violating the I-map property. Hence G 0 is formed from G and thus is the unique Markov network of M. 11

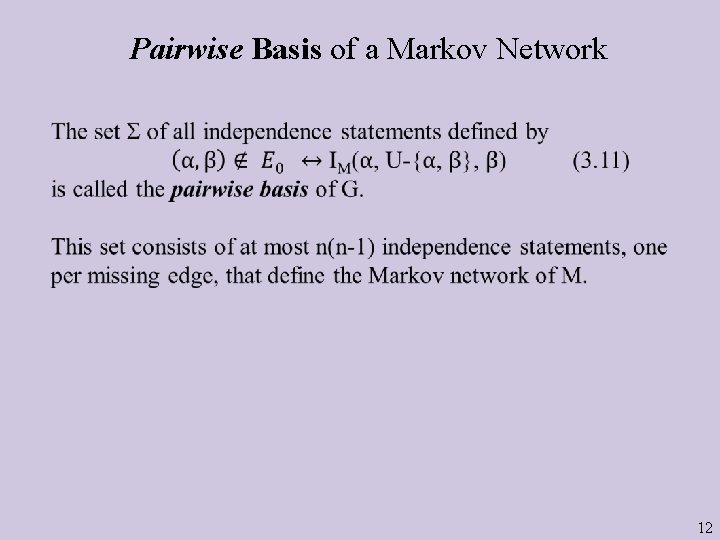

Pairwise Basis of a Markov Network 12

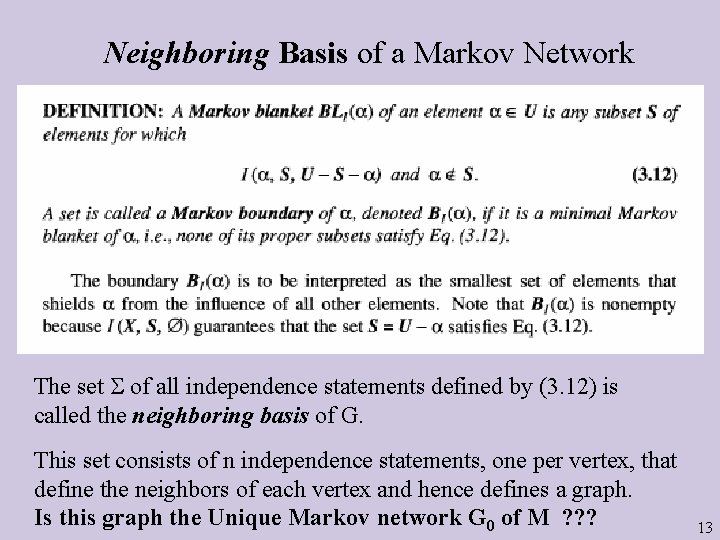

Neighboring Basis of a Markov Network The set of all independence statements defined by (3. 12) is called the neighboring basis of G. This set consists of n independence statements, one per vertex, that define the neighbors of each vertex and hence defines a graph. Is this graph the Unique Markov network G 0 of M ? ? ? 13

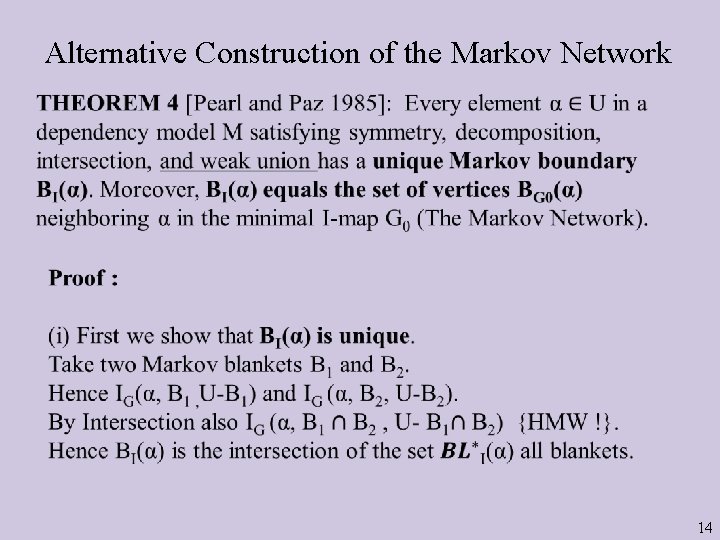

Alternative Construction of the Markov Network 14

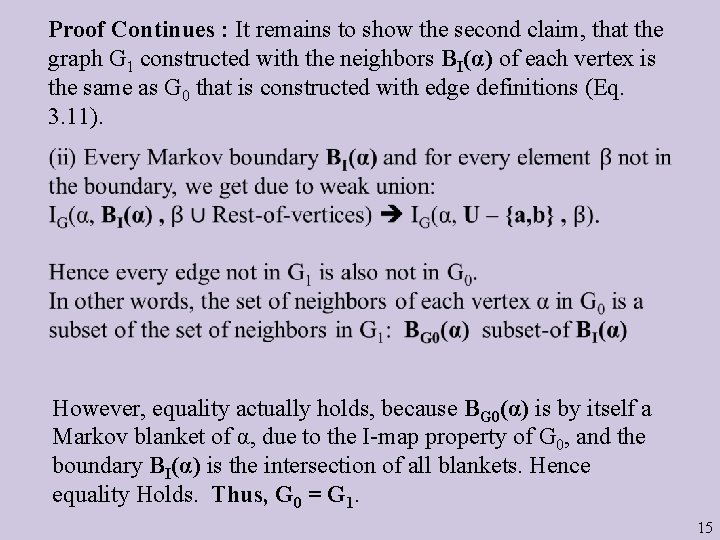

Proof Continues : It remains to show the second claim, that the graph G 1 constructed with the neighbors BI(α) of each vertex is the same as G 0 that is constructed with edge definitions (Eq. 3. 11). However, equality actually holds, because BG 0(α) is by itself a Markov blanket of α, due to the I-map property of G 0, and the boundary BI(α) is the intersection of all blankets. Hence equality Holds. Thus, G 0 = G 1. 15

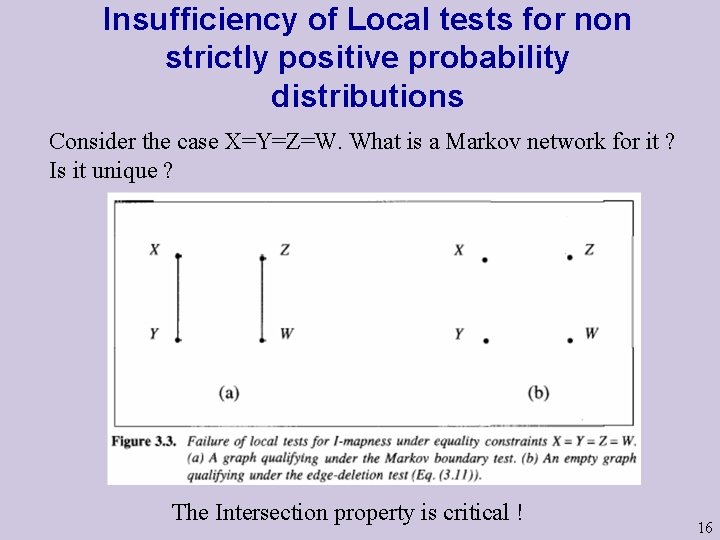

Insufficiency of Local tests for non strictly positive probability distributions Consider the case X=Y=Z=W. What is a Markov network for it ? Is it unique ? The Intersection property is critical ! 16

Markov Networks with probabilities 1. Define for each (maximal) clique Ci a nonnegative function g(Ci) called the compatibility function. 2. Take the product i g(Ci) over all cliques. 3. Define P(X 1, …, Xn) = K· i g(Ci) where K is a normalizing factor (inverse sum of the product). 17

![Theorem 6 [Hammersley and Clifford 1971]: If a probability function P is formed by Theorem 6 [Hammersley and Clifford 1971]: If a probability function P is formed by](http://slidetodoc.com/presentation_image_h2/546e847141f6b5314c2c2ab2fdcdd2c1/image-18.jpg)

Theorem 6 [Hammersley and Clifford 1971]: If a probability function P is formed by a normalized product of non negative functions on the cliques of G, then G is an I-map of P. Proof: It suffices to show (Theorem 4) that the neighborhood basis of G holds in P. Namely, show that I( , BG( ), U- -BG( )) hold in P, or just that: P( , BG( ), U- -BG( )) = f 1( , BG( )) f 2 (U- ) (*) Let J stand for the set of indices marking all cliques in G that include . = f 1( , BG( )) f 2 (U- ) The first product contains only variables adjacent to because Cj is a clique. The second product does not contain . Hence (*) holds. 18

Note: The theorem and its converse hold also for extreme probabilities but the presented proof does not apply due to the use of Intersection in Theorem 4. Theorem X: Every undirected graph G has a distribution P such that G is a perfect map of P. (In light of previous notes, it must have the form of a product over cliques). 19

Proof Sketch of Theorem X Theorem Y (Completeness): Given a graph G, for every independence statement = I( , Z, ) that does NOT hold in G, there exists a probability distribution P that satisfies all independence statements that hold in the graph G and does not satisfy = I( , Z, ). Proof of Theorem Y: Pick a path in G between and that does not contain a node from Z. Define a probability distribution that is a perfect map of the chain and multiply it by any marginal probabilities on all other nodes not on the path, forming P . Sketch for Theorem X (Strong Completeness): “Multiply” all P (via Armstrong relation) to obtain P that is a perfect map of G. (Continue here with “Proof by intimidation” --) 20

Interesting conclusion of Theorem Y: All independence statements that follow for strictly-positive probability from the neighborhood basis are derivable via symmetry, decomposition, intersection, and weak union. These axioms are (sound and) complete for neighborhood bases. These axioms are (sound and) complete also for pairwise bases. In fact for saturated statements conditional independence (whose span of variables is all of U) and vertex separation have exactly the same axioms. Isn’t that amazing ? (See paper P 2). 21

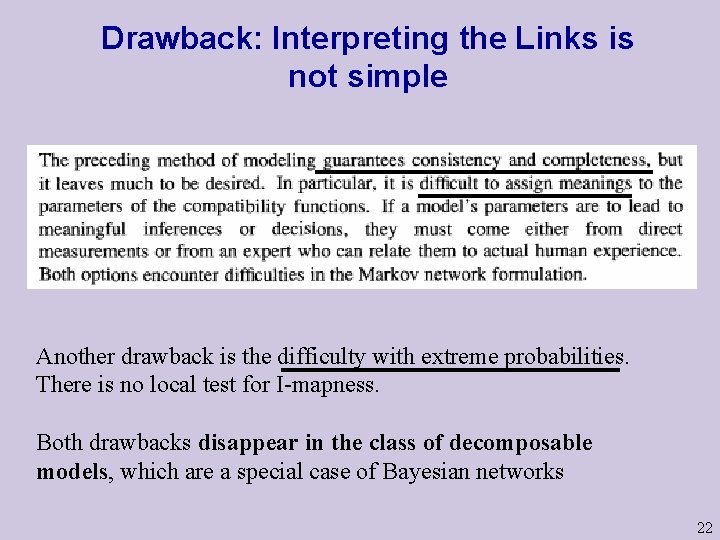

Drawback: Interpreting the Links is not simple Another drawback is the difficulty with extreme probabilities. There is no local test for I-mapness. Both drawbacks disappear in the class of decomposable models, which are a special case of Bayesian networks 22

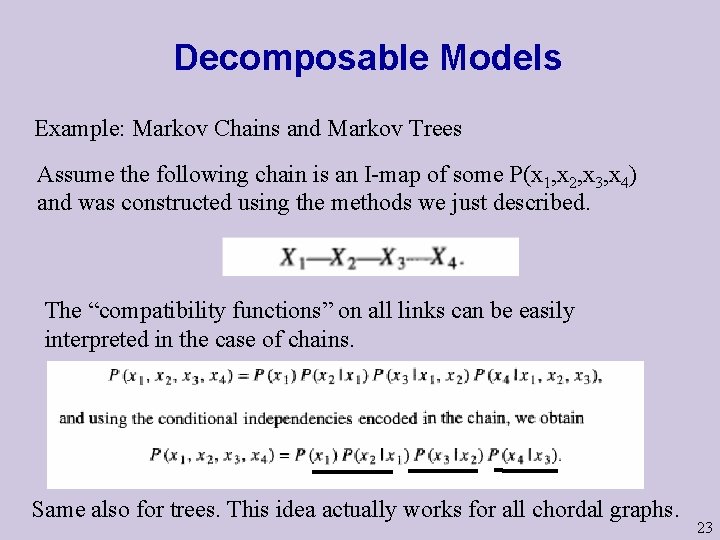

Decomposable Models Example: Markov Chains and Markov Trees Assume the following chain is an I-map of some P(x 1, x 2, x 3, x 4) and was constructed using the methods we just described. The “compatibility functions” on all links can be easily interpreted in the case of chains. Same also for trees. This idea actually works for all chordal graphs. 23

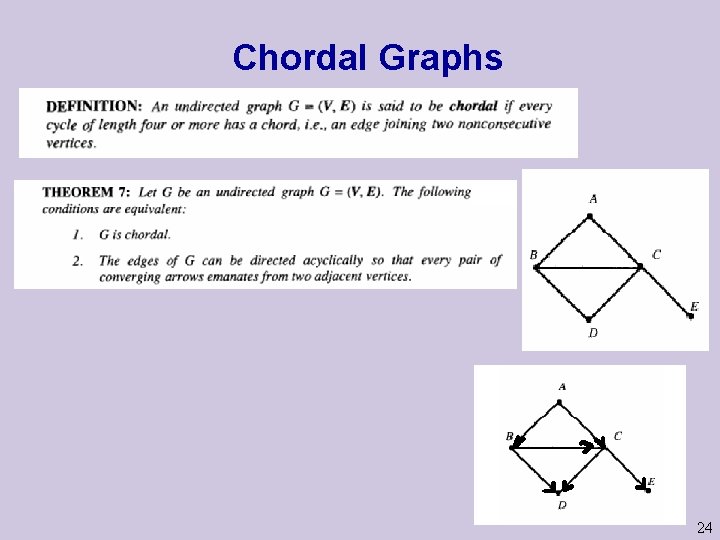

Chordal Graphs 24

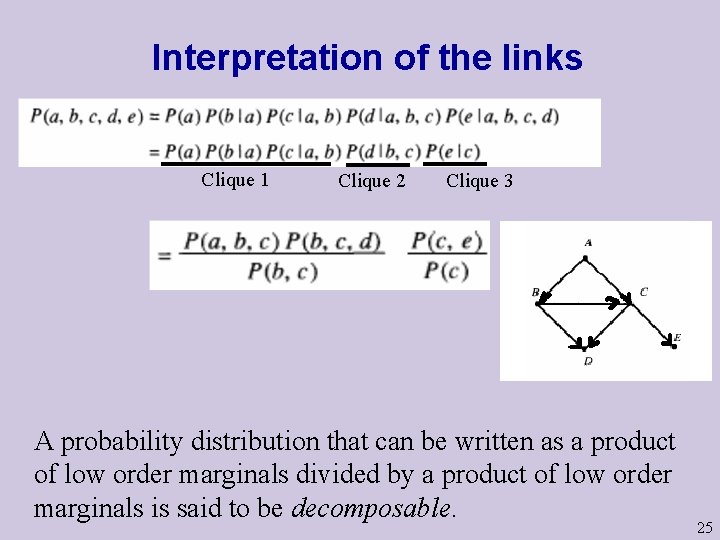

Interpretation of the links Clique 1 Clique 2 Clique 3 A probability distribution that can be written as a product of low order marginals divided by a product of low order marginals is said to be decomposable. 25

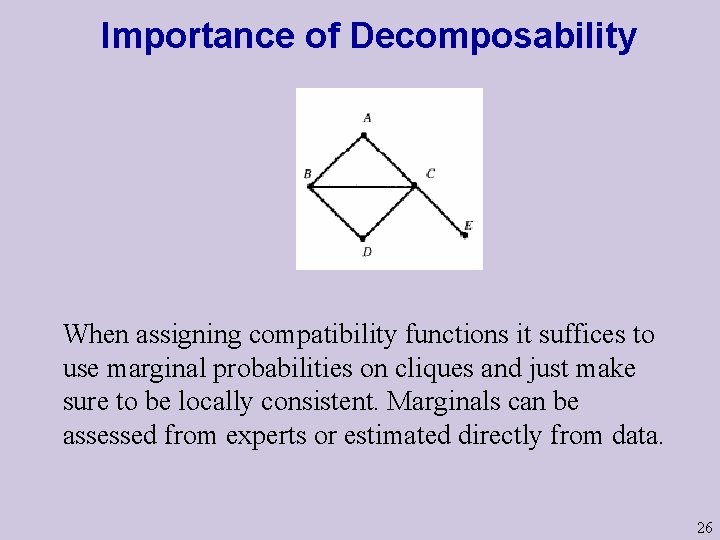

Importance of Decomposability When assigning compatibility functions it suffices to use marginal probabilities on cliques and just make sure to be locally consistent. Marginals can be assessed from experts or estimated directly from data. 26

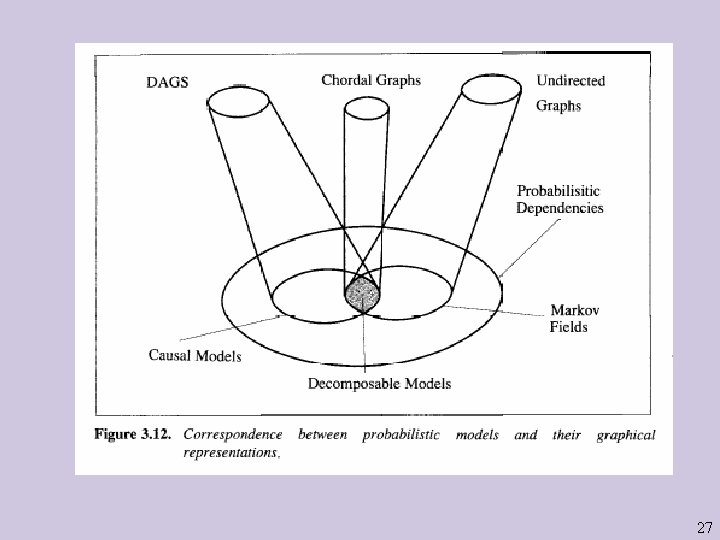

27

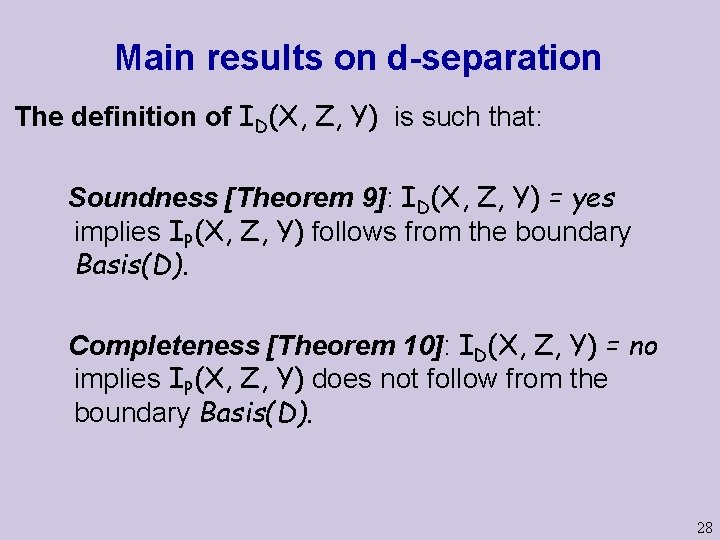

Main results on d-separation The definition of ID(X, Z, Y) is such that: Soundness [Theorem 9]: ID(X, Z, Y) = yes implies IP(X, Z, Y) follows from the boundary Basis(D). Completeness [Theorem 10]: ID(X, Z, Y) = no implies IP(X, Z, Y) does not follow from the boundary Basis(D). 28

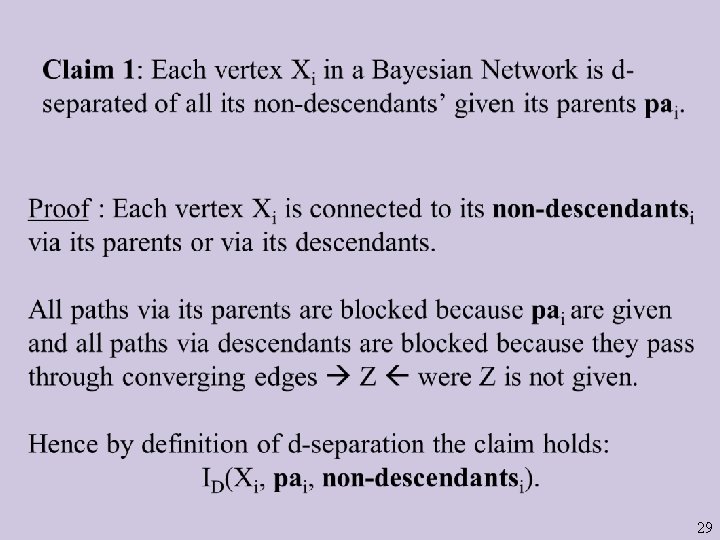

29

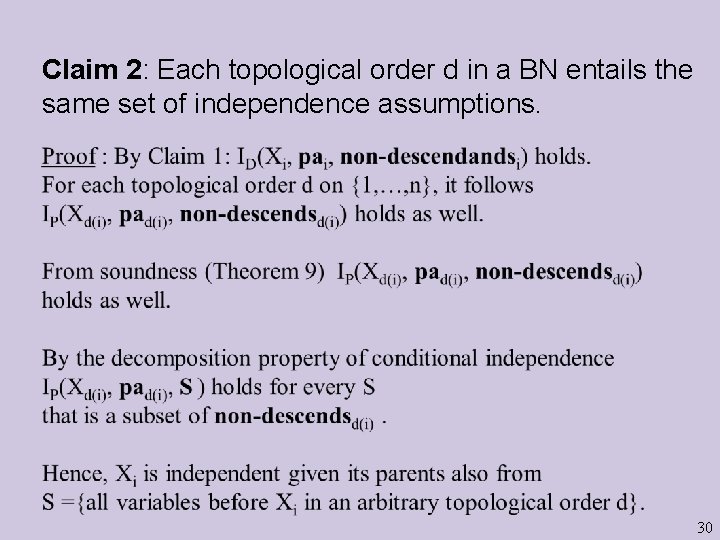

Claim 2: Each topological order d in a BN entails the same set of independence assumptions. 30

- Slides: 30