DENORMALIZATION CSCI 6442 Copyright 2015 David C Roberts

DENORMALIZATION CSCI 6442 © Copyright 2015, David C. Roberts, all rights reserved

2 Agenda • Review of normalization • Goals of denormalization • Denormalization example • Techniques • Guidelines

3 Normalization • Aims at reducing redundancy • Single representation of each fact • Single representation of each entity type • Normalization tends to reduce storage size • At one time this was important • At 10¢ per gigabyte, it’s often not an issue • Redundant data can be inconsistent data • Initial designers know to update all copies (usually) • Later programmers can easily make mistakes

4 . . . but • Normalization • Tends to increase the number of tables • Tends to require more joins in queries • Tends to reduce performance

5 Join • Database tables are independent • Join operations are inherently complex and resource-intensive • The goal of data independence relies on the independence of tables • In a sense, the basic advantages of relational database inflict performance penalties

6 Where We Are • Normalization leads to tables with fewer attributes, hence more joins • Joins are resource-intensive and tend to reduce performance

7 Denormalization Is the introduction of redundant information in a database in order to improve performance That redundancy is most often in a single database or it may be in multiple databases Special denormalized structures have been devised for use in Business Intelligence applications such as star schemas and OLAP

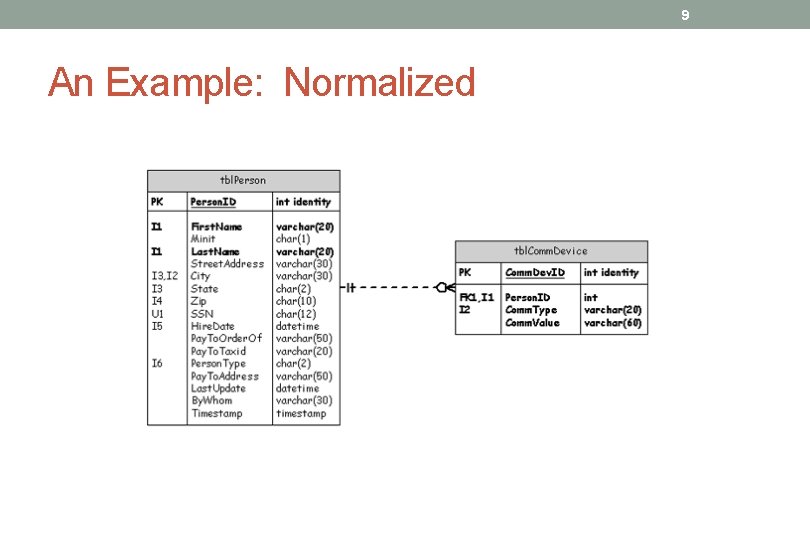

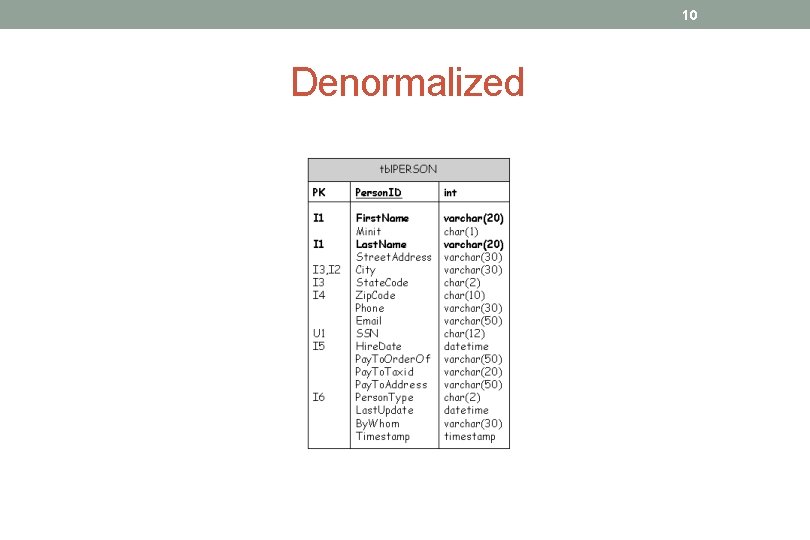

8 A Simple Example Consider a contact manager, with one table of people’s names and another table with their contact methods Each person may have many contact methods, such as cell phone, land line, pager, email, and so on, each with a separate row in the contact method table

9 An Example: Normalized

10 Denormalized

11 Denormalized • All the information for a single person comes from a single table, no join required • Simpler query • Only two types of contact method, email and phone, are now allowed in order to limit complexity • The denormalized data model is not as general and powerful as the normalized data model, but provides improved performance

12 Denormalization Techniques • Duplicate databases • If updates do not have to be available immediately, and reading is the bulk of use, use multiple copies of the whole database • Update a copy that’s not used for reading • Post updates at a time of low traffic • Duplicate tables • Especially useful if all reads can be directed to one table • For systems with lots of reads, can be multiple copies for read, one for write that is replicated to the read copies • Split tables • Used if parts of tables are used by different applications • Horizontal split • Vertical split • Combined tables • Join is eliminated by combining tables • Repeating groups across rows • All repeating groups in a single row • Pre-calculation of derived results • Used particularly for multi-row derivations

13 Duplicate Tables • If OLTP processing and decision support access the same table, decision support can slow up OLTP • Can duplicate a table, use second table for read-only (BI) use because OLTP requires perfect accuracy • For large-scale BI processing, more than two copies of data can be used • Tradeoff can be made of accuracy of the duplicate table vs. resources to keep it up-to-date • Cost: inaccuracy if duplicate table is not continuously updated, or processing resources to keep it up-to-date at all times

14 Split Tables Vertical split: attributes are divided between the two tables, primary key put into both tables • Particularly useful if one group of applications accesses some columns and another group accesses different columns • This can be a practical approach Horizontal split: rows are divided between two tables • Usually rows are divided by range of key values • Because performance drops as log of number of rows, this is often not a productive approach Cost: greater complexity of update, slower operation of some applications

15 Combined Tables If some join is a very popular query, then combine the tables that are often joined • Join is eliminated and previously joined information is obtained from a single query Cost: added complexity of update, slower operation of applications that need to use just one or the other table

16 Repeating Groups Across Rows Can put repeating groups into a single row, growing across rather than down • All repeating groups are obtained in a single row without a join • Oracle has a feature to do this called VARRAYs Cost: loss of flexibility, since an upper limit must be placed on the number of repeats

17 Pre-calculation of Derived Results If frequent queries do GROUP BY or use complex calculations on multiple rows, can do the computation in advance and retrieve results from a summary table • Oracle has a feature to do this called materialized views • Example: total income=salary + commission Cost: processing time is taken to do the computation before it’s needed

18 Summary • Denormalization can improve the performance of some operations and make others slower • Tradeoff of accuracy vs. processing cost can introduce hard-todiagnose problems • Don’t denormalize unless testing shows inability to meet performance standards when normalized • In the era of 3 GHz PCs, a DBMS on a typical laptop can easily query a table of more than 1, 000 rows in one second

19 Recommendation • First, normalize the database • Carefully tune for performance using tools that are available • Consider denormalization only if performance requirements can’t be met with a normalized database • Avoid suggestions to denormalize before the database is even implemented • Remember that “I denormalized for performance” is the argument used by designers who don’t understand normalization. Shun such people! Better, teach them!

20 Assessment • As computer costs decrease and performance improves, the need for denormalization is greatly reduced • Perhaps the era of denormalization is ending

- Slides: 20