
Delivery of Test Accessibility Tools and Accommodations Across 3 Platforms: Implementation, Success, and Lessons Learned

Trinell Bowman, Maryland; Melissa Gholson, West Virginia; Jen Paul, Michigan; Laurene Christensen, NCEO; Cara Laitusis, ETS

The Charlie’s Angels of Accessibility and Accommodations: Trinell Bowman, Melissa Gholson, Jen Paul

Overview of the presentation
Three big questions:
1. How were accessibility features and accommodations implemented?
2. Were there performance differences observed?
3. What were your state’s lessons learned?
Discussion: Cara Laitusis, ETS

How were accessibility tools and accommodations (for English learners, students with disabilities, and general education students) implemented on each platform?

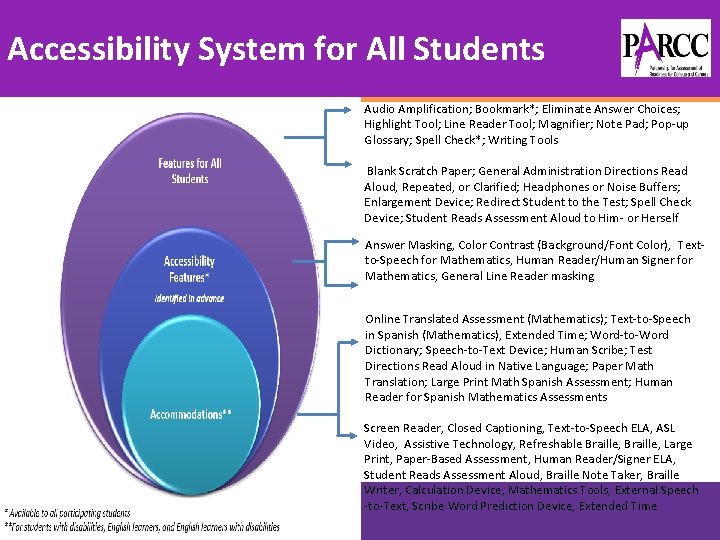

Accessibility System for All Students
Audio Amplification; Bookmark*; Eliminate Answer Choices; Highlight Tool; Line Reader Tool; Magnifier; Note Pad; Pop-up Glossary; Spell Check*; Writing Tools
Blank Scratch Paper; General Administration Directions Read Aloud, Repeated, or Clarified; Headphones or Noise Buffers; Enlargement Device; Redirect Student to the Test; Spell Check Device; Student Reads Assessment Aloud to Him- or Herself
Answer Masking, Color Contrast (Background/Font Color), Text-to-Speech for Mathematics, Human Reader/Human Signer for Mathematics, General Line Reader masking
Online Translated Assessment (Mathematics); Text-to-Speech in Spanish (Mathematics), Extended Time; Word-to-Word Dictionary; Speech-to-Text Device; Human Scribe; Test Directions Read Aloud in Native Language; Paper Math Translation; Large Print Math Spanish Assessment; Human Reader for Spanish Mathematics Assessments
Screen Reader, Closed Captioning, Text-to-Speech ELA, ASL Video, Assistive Technology, Refreshable Braille, Large Print, Paper-Based Assessment, Human Reader/Signer ELA, Student Reads Assessment Aloud, Braille Note Taker, Braille Writer, Calculation Device, Mathematics Tools, External Speech-to-Text, Scribe Word Prediction Device, Extended Time

Accessibility Features and Accommodations
• For Accessibility Features Identified in Advance (Tier 2) and Accommodations (Tier 3), PARCC implemented the use of a Personal Needs Profile (PNP).
• The Personal Needs Profile (PNP) is a collection of student information regarding a student’s testing condition, materials, or accessibility features and accommodations that are needed to take a PARCC assessment.
• During Year 2 of the PARCC assessment, the student registration file and the PNP were combined into one file layout, now called the Student Registration/Personal Needs Profile or SR/PNP.
• The testing platform used is Pearson’s TestNav system.

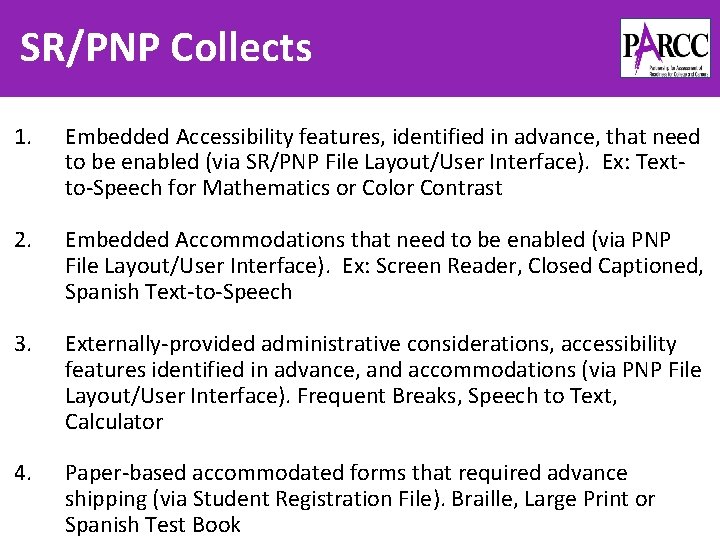

SR/PNP Collects
1. Embedded accessibility features, identified in advance, that need to be enabled (via SR/PNP File Layout/User Interface). Ex: Text-to-Speech for Mathematics or Color Contrast
2. Embedded accommodations that need to be enabled (via PNP File Layout/User Interface). Ex: Screen Reader, Closed Captioning, Spanish Text-to-Speech
3. Externally provided administrative considerations, accessibility features identified in advance, and accommodations (via PNP File Layout/User Interface). Ex: Frequent Breaks, Speech to Text, Calculator
4. Paper-based accommodated forms that require advance shipping (via Student Registration File). Ex: Braille, Large Print or Spanish Test Book
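The four categories above can be pictured as a small data model. The sketch below is only an illustration: the class, method, and dictionary names are hypothetical and are not taken from the actual SR/PNP file layout. Only the category descriptions and the example supports come from the slide.

```python
from dataclasses import dataclass, field
from enum import Enum


class Category(Enum):
    """The four kinds of information the slide says the SR/PNP collects."""
    EMBEDDED_FEATURE = "embedded accessibility feature identified in advance"
    EMBEDDED_ACCOMMODATION = "embedded accommodation"
    EXTERNAL = "externally provided consideration, feature, or accommodation"
    PAPER_FORM = "paper-based accommodated form shipped in advance"


# Example supports named on the slide; this lookup table is hypothetical.
EXAMPLES = {
    "Text-to-Speech for Mathematics": Category.EMBEDDED_FEATURE,
    "Color Contrast": Category.EMBEDDED_FEATURE,
    "Screen Reader": Category.EMBEDDED_ACCOMMODATION,
    "Closed Captioning": Category.EMBEDDED_ACCOMMODATION,
    "Spanish Text-to-Speech": Category.EMBEDDED_ACCOMMODATION,
    "Frequent Breaks": Category.EXTERNAL,
    "Speech to Text": Category.EXTERNAL,
    "Calculator": Category.EXTERNAL,
    "Braille": Category.PAPER_FORM,
    "Large Print": Category.PAPER_FORM,
    "Spanish Test Book": Category.PAPER_FORM,
}


@dataclass
class StudentProfile:
    """Hypothetical per-student record; not the real SR/PNP schema."""
    student_id: str
    supports: list = field(default_factory=list)

    def by_category(self):
        """Group this student's supports under the four slide categories."""
        grouped = {category: [] for category in Category}
        for name in self.supports:
            grouped[EXAMPLES[name]].append(name)
        return grouped

    def platform_enabled(self):
        """Supports that must be switched on inside the testing platform."""
        grouped = self.by_category()
        return (grouped[Category.EMBEDDED_FEATURE]
                + grouped[Category.EMBEDDED_ACCOMMODATION])

    def needs_advance_shipping(self):
        """True if any paper-based accommodated form has to be ordered."""
        return bool(self.by_category()[Category.PAPER_FORM])


profile = StudentProfile("S001", ["Color Contrast", "Braille", "Frequent Breaks"])
print(profile.platform_enabled())
print(profile.needs_advance_shipping())
```

Separating "enabled in the platform" from "shipped in advance" mirrors why the slide distinguishes the categories: each one triggers a different action before test day.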

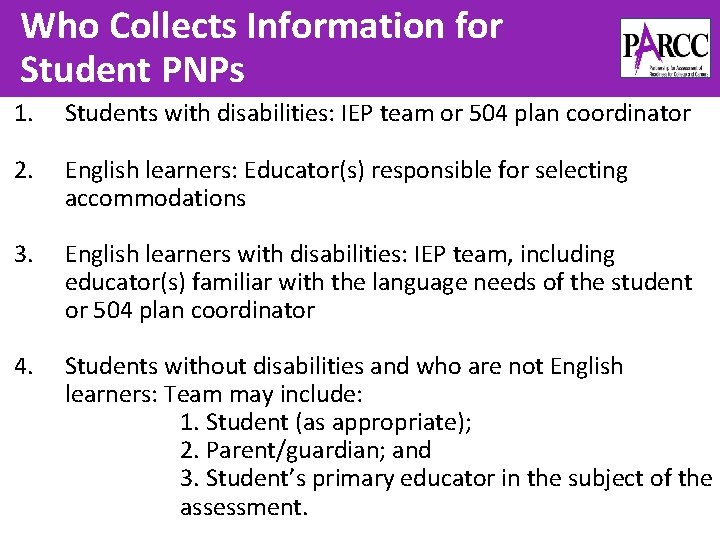

Who Collects Information for Student PNPs
1. Students with disabilities: IEP team or 504 plan coordinator
2. English learners: Educator(s) responsible for selecting accommodations
3. English learners with disabilities: IEP team, including educator(s) familiar with the language needs of the student, or 504 plan coordinator
4. Students without disabilities who are not English learners: Team may include:
   1. Student (as appropriate);
   2. Parent/guardian; and
   3. Student’s primary educator in the subject of the assessment.

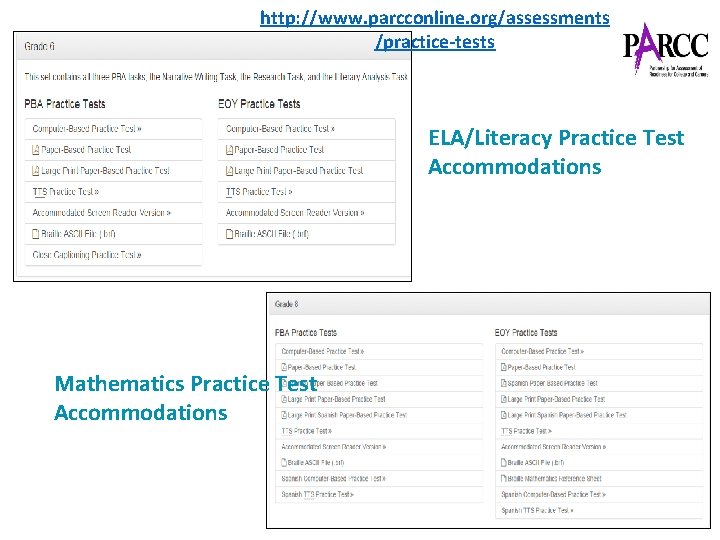

http://www.parcconline.org/assessments/practice-tests
ELA/Literacy Practice Test Accommodations
Mathematics Practice Test Accommodations

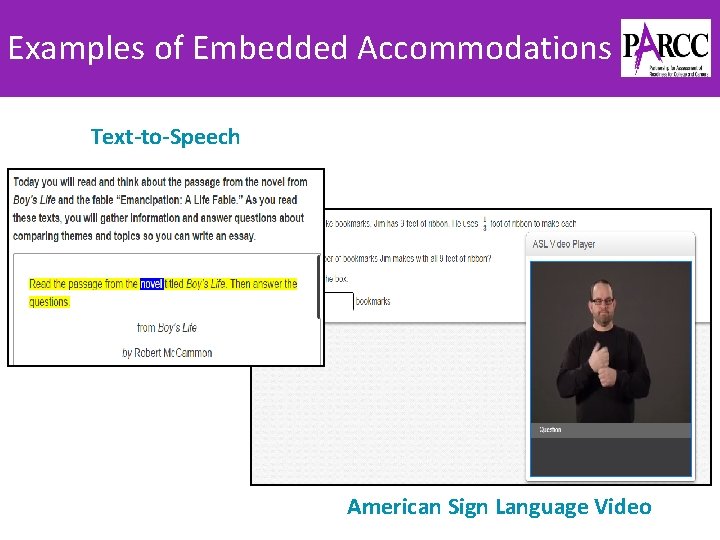

Examples of Embedded Accommodations Text-to-Speech American Sign Language Video

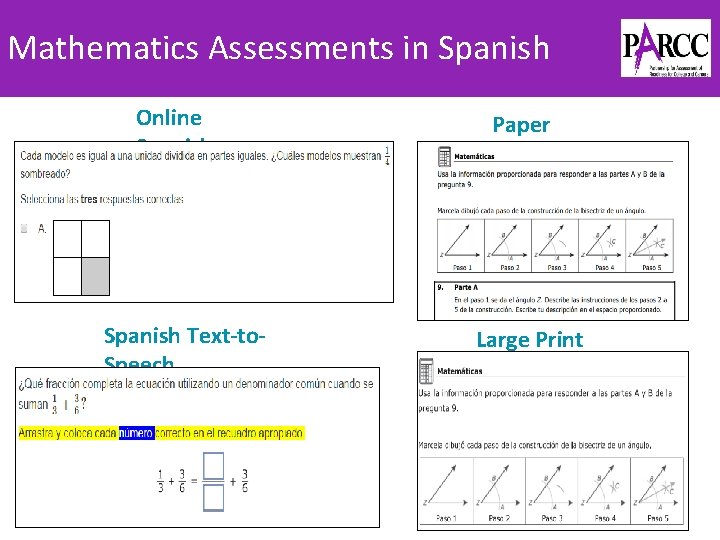

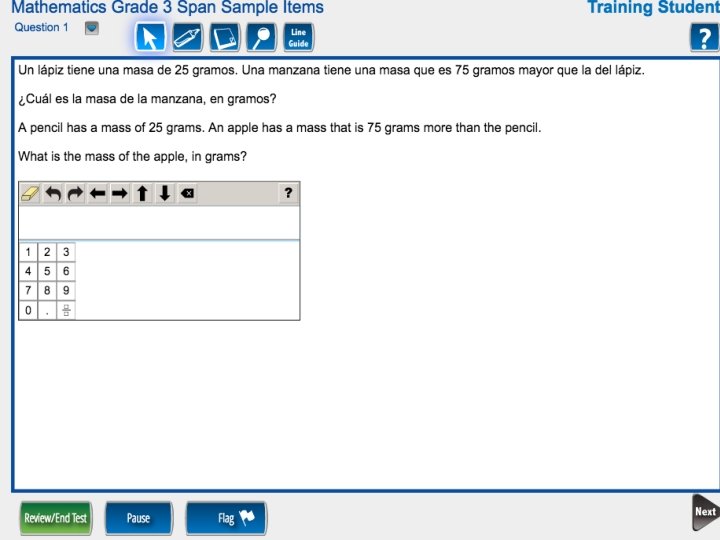

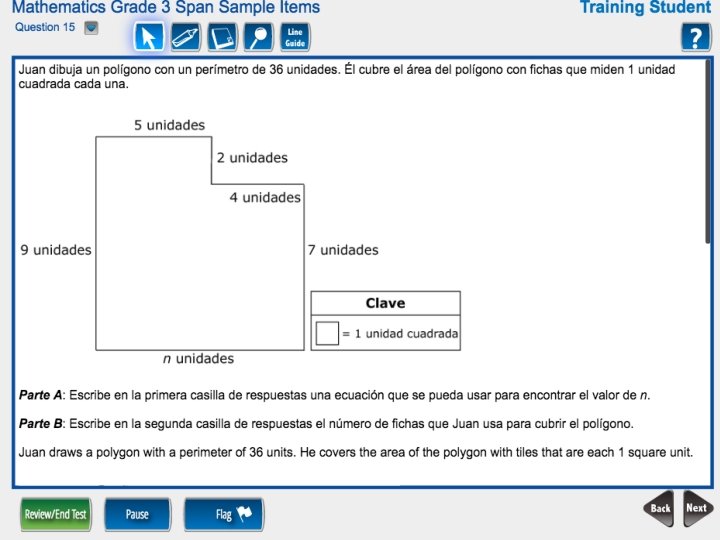

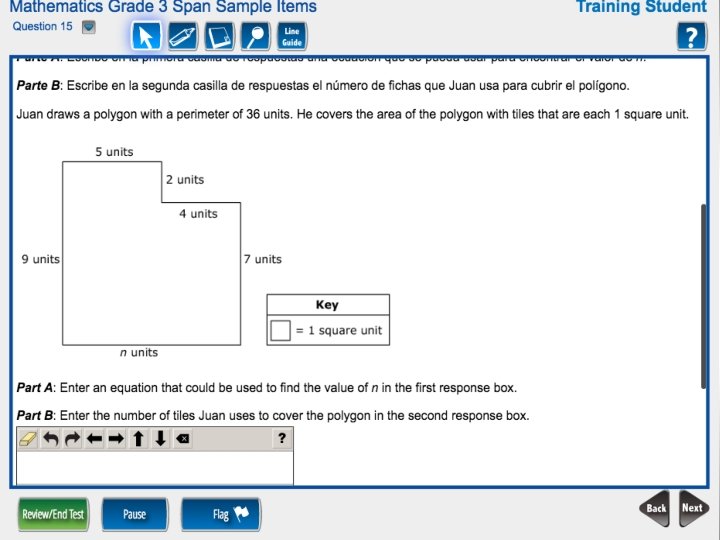

Mathematics Assessments in Spanish Online Spanish Text-to-Speech Paper Spanish Large Print Spanish

Michigan Department of Education Jennifer M. Paul EL & Accessibility Assessment Specialist

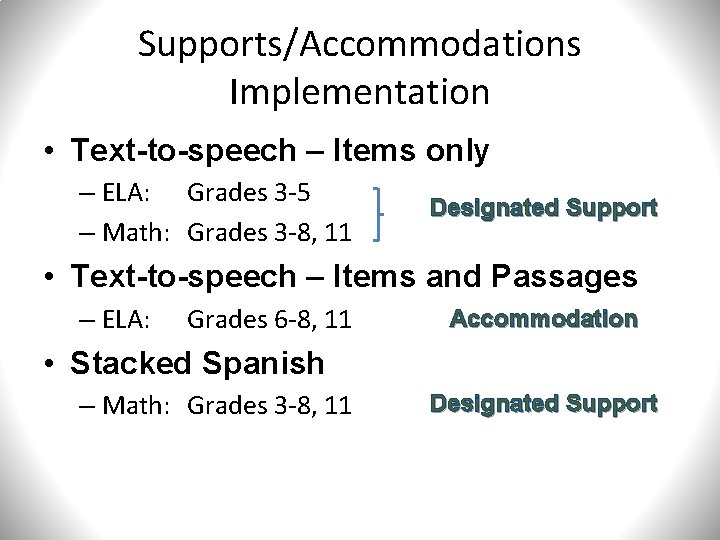

Supports/Accommodations Implementation
• Text-to-speech, items only (Designated Support)
  – ELA: Grades 3-5
  – Math: Grades 3-8, 11
• Text-to-speech, items and passages (Accommodation)
  – ELA: Grades 6-8, 11
• Stacked Spanish (Designated Support)
  – Math: Grades 3-8, 11

Accessibility and Accommodations: Implementation and Lessons Learned
Melissa Gholson, Ed.D., Coordinator, Office of Assessment

ACCESSIBILITY & IMPLEMENTATION

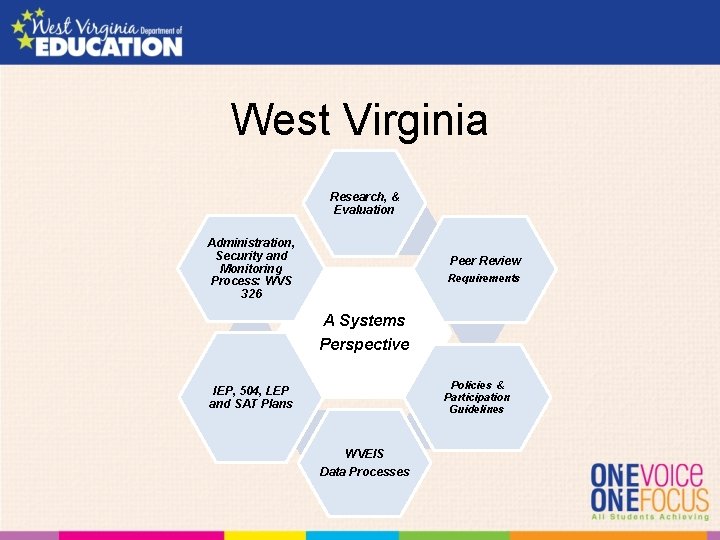

West Virginia: A Systems Perspective
• Research & Evaluation
• Administration, Security and Monitoring Process: WVS.326
• Peer Review Requirements
• Policies & Participation Guidelines
• IEP, 504, LEP and SAT Plans
• WVEIS Data Processes

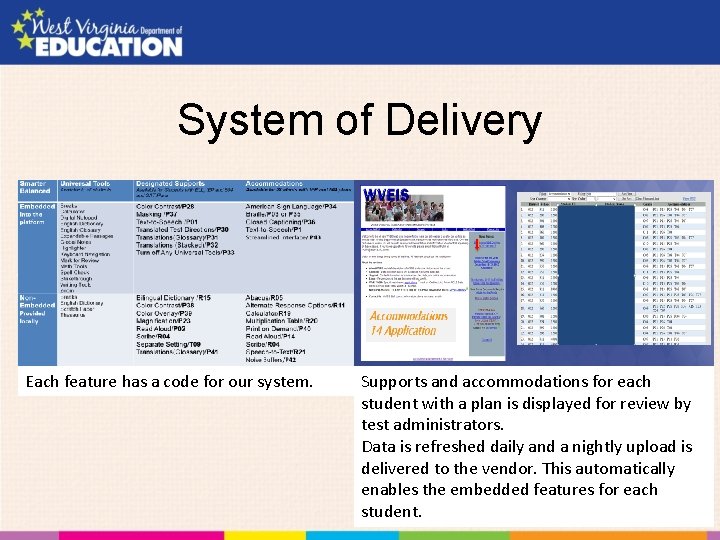

System of Delivery
Each feature has a code in our system. Supports and accommodations for each student with a plan are displayed for review by test administrators. Data is refreshed daily, and a nightly upload is delivered to the vendor. This automatically enables the embedded features for each student.
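The code-driven flow described above can be sketched roughly as follows. This is a minimal illustration, not West Virginia's actual process: the function names and the upload row shape are hypothetical, and the code list is limited to the embedded codes named elsewhere in this deck (P01, P13, P17, P34, P36, P30, P31, P32). Any other code is assumed here to be provided locally by the test administrator.

```python
# Embedded codes and descriptions as listed on the West Virginia slides.
EMBEDDED_CODES = {
    "P01": "Text-to-speech (math stimuli/items and ELA items, not passages)",
    "P13": "Text-to-speech including passages",
    "P17": "Braille online",
    "P34": "ASL video",
    "P36": "Closed captioning",
    "P30": "Translated test directions",
    "P31": "Translation glossaries",
    "P32": "Stacked translations",
}


def split_plan(codes):
    """Separate a student's codes into platform-embedded and locally provided.

    Assumption: any code not in EMBEDDED_CODES is handled outside the platform.
    """
    unique = set(codes)
    embedded = sorted(c for c in unique if c in EMBEDDED_CODES)
    local = sorted(c for c in unique if c not in EMBEDDED_CODES)
    return embedded, local


def build_nightly_upload(plans):
    """Build (student_id, code) rows for the embedded features only.

    `plans` maps a student ID to the codes on that student's plan.
    The row shape is hypothetical; a real vendor file will differ.
    """
    rows = []
    for student_id in sorted(plans):
        embedded, _ = split_plan(plans[student_id])
        rows.extend((student_id, code) for code in embedded)
    return rows


plans = {
    "S2": ["P13", "T09"],
    "S1": ["P32", "P01", "P03"],
}
for row in build_nightly_upload(plans):
    print(row)
```

Rebuilding the full upload each night, instead of sending changes only, is one simple way to cope with the frequent plan changes noted later in the deck, at the cost of a larger file.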

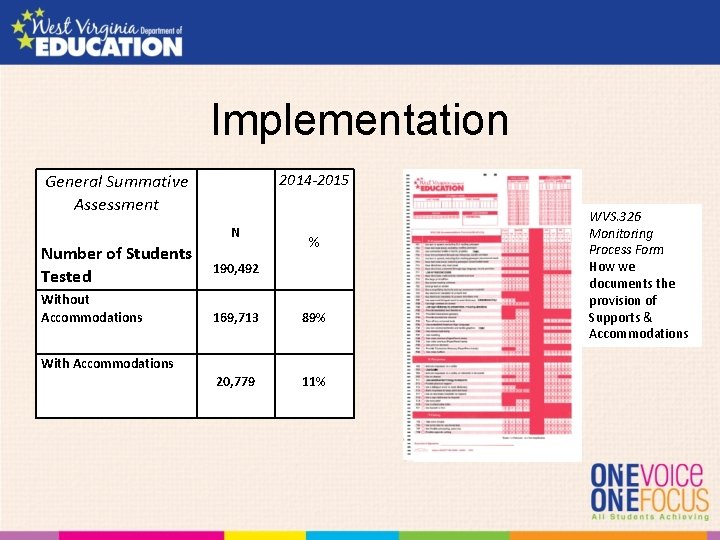

Implementation: 2014-2015 General Summative Assessment

| | N | % |
|---|---|---|
| Number of students tested | 190,492 | |
| Without accommodations | 169,713 | 89% |
| With accommodations | 20,779 | 11% |

WVS.326 Monitoring Process Form: how we document the provision of supports and accommodations

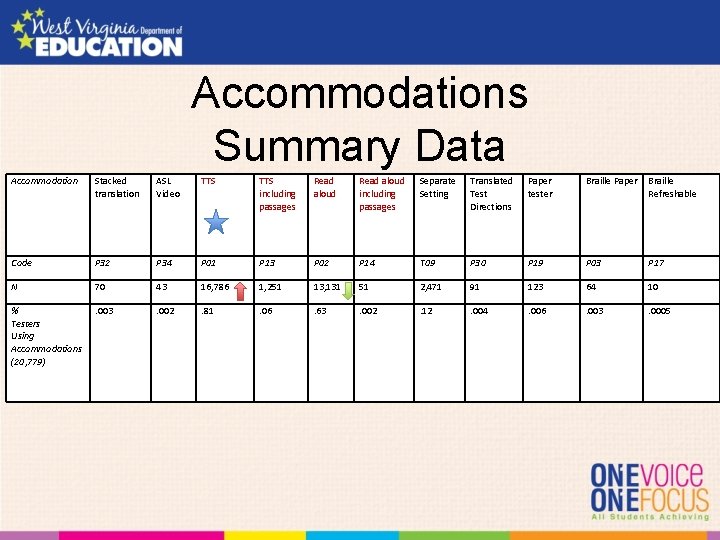

Accommodations Summary Data

| Accommodation | Code | N | Proportion of testers using accommodations (n = 20,779) |
|---|---|---|---|
| Stacked translation | P32 | 70 | .003 |
| ASL Video | P34 | 43 | .002 |
| TTS (items, not passages) | P01 | 16,786 | .81 |
| TTS including passages | P13 | 1,251 | .06 |
| | P02 | 13,131 | .63 |
| Read aloud including passages | P14 | 51 | .002 |
| Separate Setting | T09 | 2,471 | .12 |
| Translated Test Directions | P30 | 91 | .004 |
| Paper tester | P19 | 123 | .006 |
| Braille Paper | P03 | 64 | .003 |
| Braille Refreshable | P17 | 10 | .0005 |
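The final column of the summary data is each code's count divided by the 20,779 students tested with accommodations. The sketch below recomputes those shares as a check. It assumes the codes, counts, and reported values pair up in the order they appear on the slide; the variable and function names are illustrative only.

```python
TOTAL_WITH_ACCOMMODATIONS = 20_779

# Counts per code, paired in slide order (an assumption about the layout).
counts = {
    "P32": 70, "P34": 43, "P01": 16_786, "P13": 1_251, "P02": 13_131,
    "P14": 51, "T09": 2_471, "P30": 91, "P19": 123, "P03": 64, "P17": 10,
}

# Shares as reported on the slide, in the same order.
reported = {
    "P32": 0.003, "P34": 0.002, "P01": 0.81, "P13": 0.06, "P02": 0.63,
    "P14": 0.002, "T09": 0.12, "P30": 0.004, "P19": 0.006, "P03": 0.003,
    "P17": 0.0005,
}


def share(n, total=TOTAL_WITH_ACCOMMODATIONS):
    """Fraction of accommodated testers who used a given code."""
    return n / total


for code, n in counts.items():
    print(f"{code}: computed {share(n):.4f}, reported {reported[code]}")

# Counts add up to more than the number of accommodated testers, which is
# what one would expect if a student can have more than one code.
print(sum(counts.values()), ">", TOTAL_WITH_ACCOMMODATIONS)
```

Under that pairing, every recomputed share rounds to the reported value, which supports reading the column as proportions (for example, .81 is about 81%) and not as percentages.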

Challenges
• Assistive technology: JAWS, RBD, technology
• Lack of familiarity with system use
• Frequent plan changes
• Local set versus automatic upload
• Making sure test administrators checked that all features were working properly prior to testing

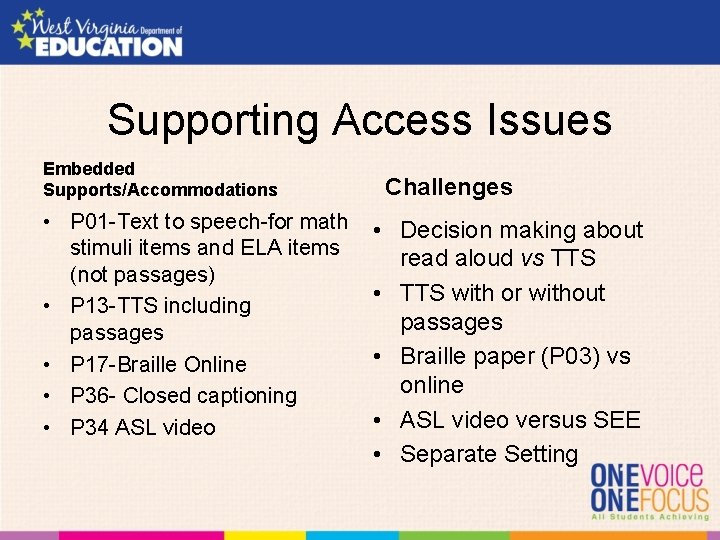

Supporting Access Issues
Embedded Supports/Accommodations
• P01: Text-to-speech for math stimuli/items and ELA items (not passages)
• P13: TTS including passages
• P17: Braille online
• P36: Closed captioning
• P34: ASL video
Challenges
• Decision making about read aloud vs. TTS
• TTS with or without passages
• Braille paper (P03) vs. online
• ASL video versus SEE
• Separate setting

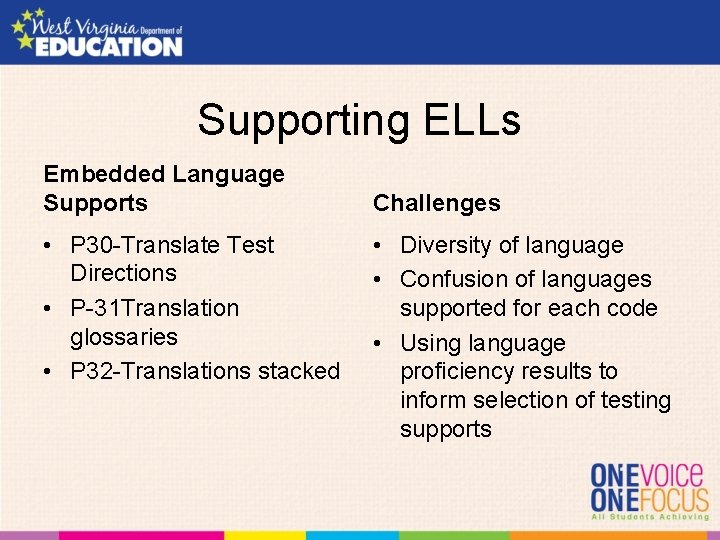

Supporting ELLs
Embedded Language Supports
• P30: Translated test directions
• P31: Translation glossaries
• P32: Stacked translations
Challenges
• Diversity of languages
• Confusion about the languages supported for each code
• Using language proficiency results to inform selection of testing supports

Were there performance differences across students who used the features and those who did not?

2) Performance differences between students who used the features and those who did not

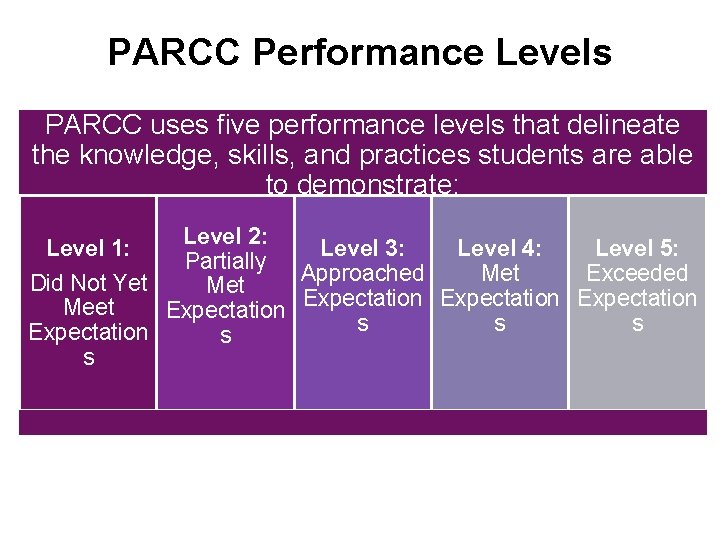

PARCC Performance Levels
PARCC uses five performance levels that delineate the knowledge, skills, and practices students are able to demonstrate:
Level 1: Did Not Yet Meet Expectations
Level 2: Partially Met Expectations
Level 3: Approached Expectations
Level 4: Met Expectations
Level 5: Exceeded Expectations

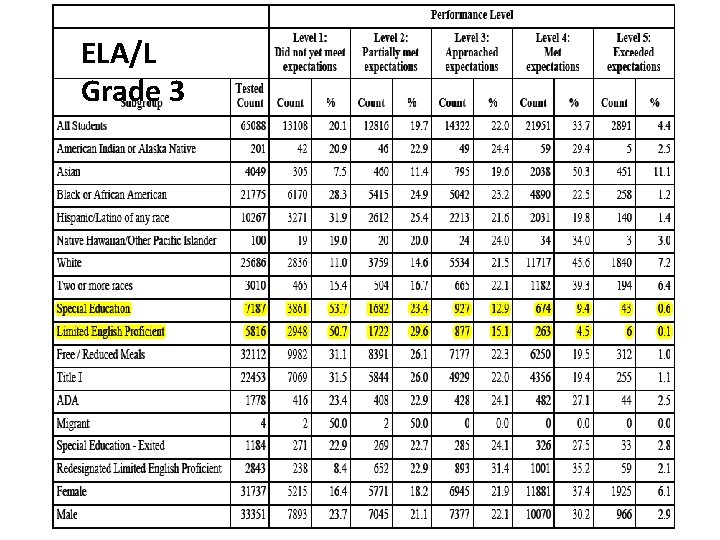

ELA/L Grade 3

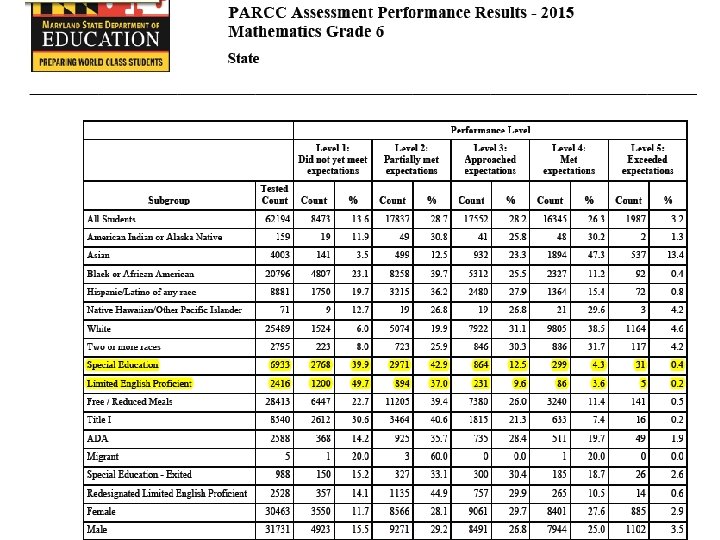

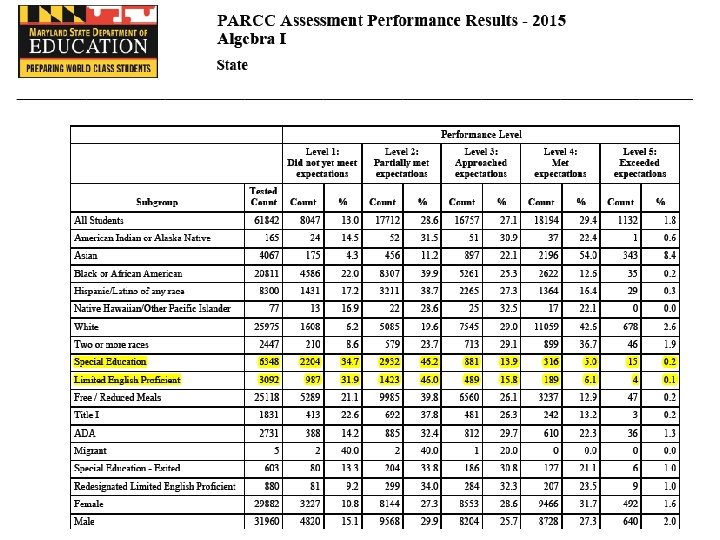

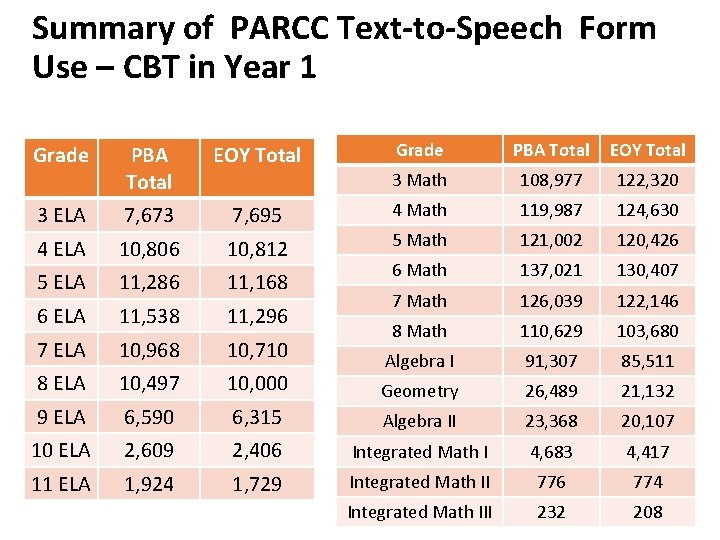

Summary of PARCC Text-to-Speech Form Use – CBT in Year 1

| Grade | PBA Total | EOY Total |
|---|---|---|
| 3 Math | 108,977 | 122,320 |
| 4 Math | 119,987 | 124,630 |
| 5 Math | 121,002 | 120,426 |
| 6 Math | 137,021 | 130,407 |
| 7 Math | 126,039 | 122,146 |
| 8 Math | 110,629 | 103,680 |
| Algebra I | 91,307 | 85,511 |
| Geometry | 26,489 | 21,132 |
| Algebra II | 23,368 | 20,107 |
| Integrated Math I | 4,683 | 4,417 |
| Integrated Math II | 776 | 774 |
| Integrated Math III | 232 | 208 |

| Grade | PBA Total | EOY Total |
|---|---|---|
| 3 ELA | 7,673 | 7,695 |
| 4 ELA | 10,806 | 10,812 |
| 5 ELA | 11,286 | 11,168 |
| 6 ELA | 11,538 | 11,296 |
| 7 ELA | 10,968 | 10,710 |
| 8 ELA | 10,497 | 10,000 |
| 9 ELA | 6,590 | 6,315 |
| 10 ELA | 2,609 | 2,406 |
| 11 ELA | 1,924 | 1,729 |

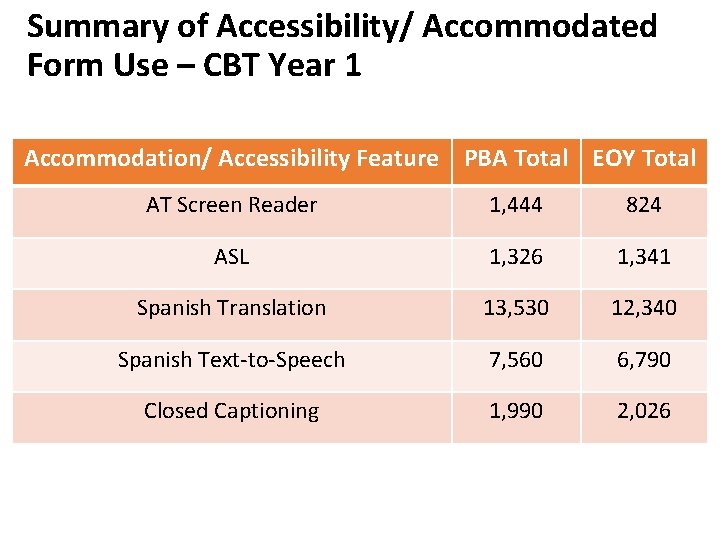

Summary of Accessibility/Accommodated Form Use – CBT Year 1

| Accommodation/Accessibility Feature | PBA Total | EOY Total |
|---|---|---|
| AT Screen Reader | 1,444 | 824 |
| ASL | 1,326 | 1,341 |
| Spanish Translation | 13,530 | 12,340 |
| Spanish Text-to-Speech | 7,560 | 6,790 |
| Closed Captioning | 1,990 | 2,026 |

Performance Differences
• Spring 2015 M-STEP student results: ELA and Math
• Performance Level Descriptors:
  – PL 1: Not proficient
  – PL 2: Partially proficient
  – PL 3: Proficient
  – PL 4: Advanced

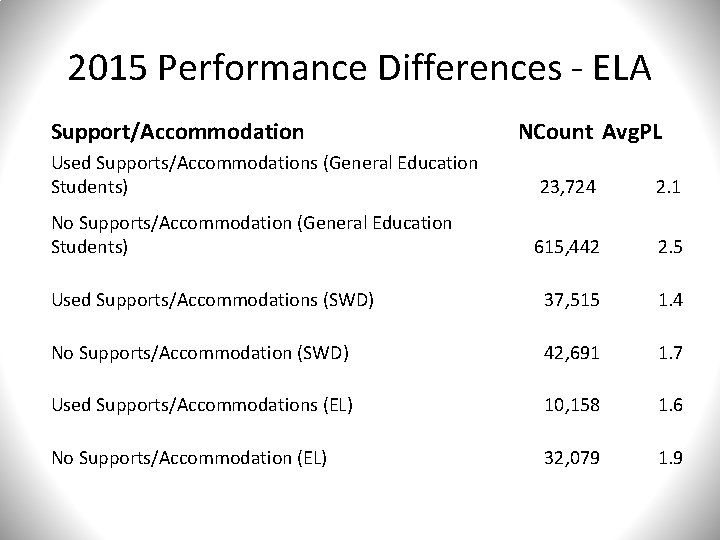

2015 Performance Differences - ELA

| Support/Accommodation | N count | Avg. PL |
|---|---|---|
| Used Supports/Accommodations (General Education Students) | 23,724 | 2.1 |
| No Supports/Accommodation (General Education Students) | 615,442 | 2.5 |
| Used Supports/Accommodations (SWD) | 37,515 | 1.4 |
| No Supports/Accommodation (SWD) | 42,691 | 1.7 |
| Used Supports/Accommodations (EL) | 10,158 | 1.6 |
| No Supports/Accommodation (EL) | 32,079 | 1.9 |

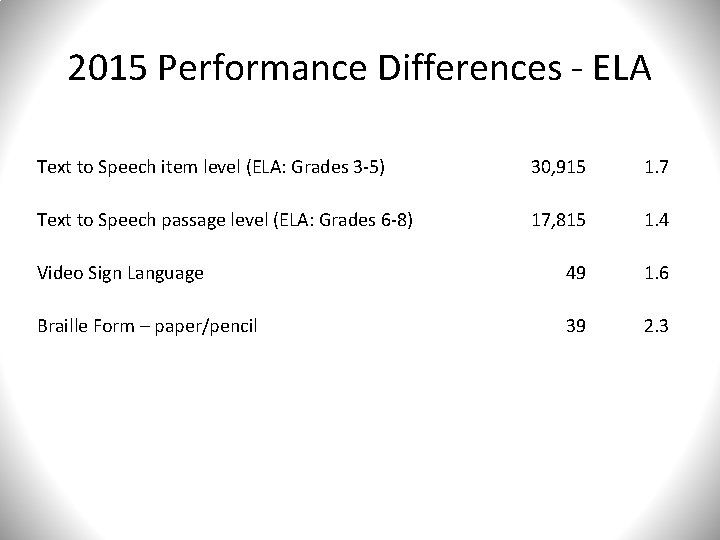

2015 Performance Differences - ELA

| Support/Accommodation | N count | Avg. PL |
|---|---|---|
| Text to Speech item level (ELA: Grades 3-5) | 30,915 | 1.7 |
| Text to Speech passage level (ELA: Grades 6-8) | 17,815 | 1.4 |
| Video Sign Language | 49 | 1.6 |
| Braille Form – paper/pencil | 39 | 2.3 |

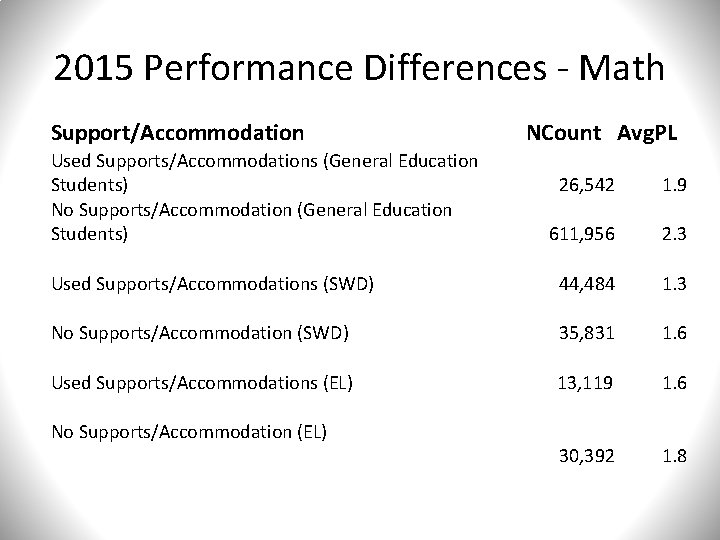

2015 Performance Differences - Math

| Support/Accommodation | N count | Avg. PL |
|---|---|---|
| Used Supports/Accommodations (General Education Students) | 26,542 | 1.9 |
| No Supports/Accommodation (General Education Students) | 611,956 | 2.3 |
| Used Supports/Accommodations (SWD) | 44,484 | 1.3 |
| No Supports/Accommodation (SWD) | 35,831 | 1.6 |
| Used Supports/Accommodations (EL) | 13,119 | 1.6 |
| No Supports/Accommodation (EL) | 30,392 | 1.8 |
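To compare groups directly, the average performance levels reported for ELA and Math can be turned into simple gaps. The sketch below uses only the averages from the two M-STEP slides; the variable and function names are illustrative. The gaps are descriptive and say nothing about cause, since students who receive supports are selected because they need them.

```python
# (avg PL with supports, avg PL without supports), from the 2015 M-STEP slides.
ela_pl = {
    "General Education": (2.1, 2.5),
    "SWD": (1.4, 1.7),
    "EL": (1.6, 1.9),
}
math_pl = {
    "General Education": (1.9, 2.3),
    "SWD": (1.3, 1.6),
    "EL": (1.6, 1.8),
}


def gap(used, not_used):
    """Average PL of non-users minus average PL of users, to one decimal."""
    return round(not_used - used, 1)


def gaps(table):
    """Compute the user/non-user gap for every student group in a table."""
    return {group: gap(used, not_used)
            for group, (used, not_used) in table.items()}


print("ELA:", gaps(ela_pl))
print("Math:", gaps(math_pl))
```

In both subjects the gap is between 0.2 and 0.4 performance levels, with students who used supports averaging lower in every group.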

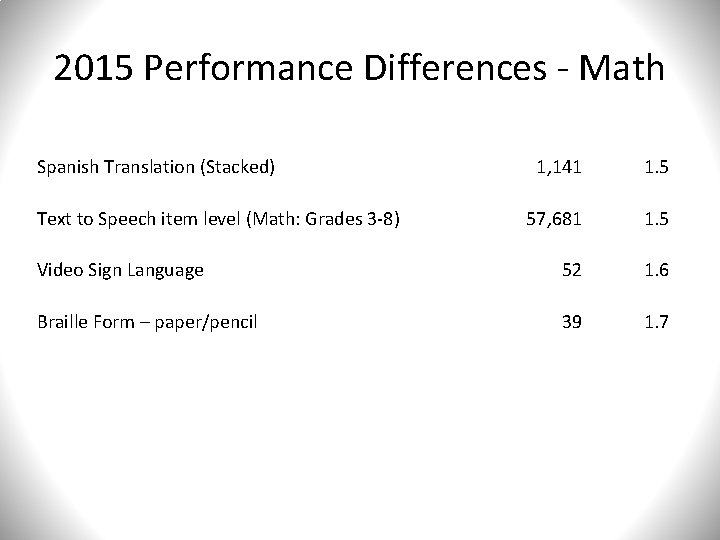

2015 Performance Differences - Math

| Support/Accommodation | N count | Avg. PL |
|---|---|---|
| Spanish Translation (Stacked) | 1,141 | 1.5 |
| Text to Speech item level (Math: Grades 3-8) | 57,681 | 1.5 |
| Video Sign Language | 52 | 1.6 |
| Braille Form – paper/pencil | 39 | 1.7 |

ACCOMMODATIONS & PERFORMANCE

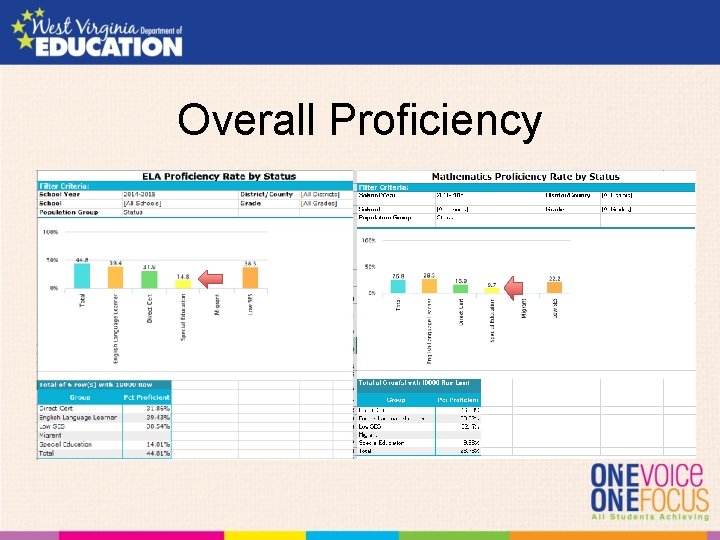

Overall Proficiency

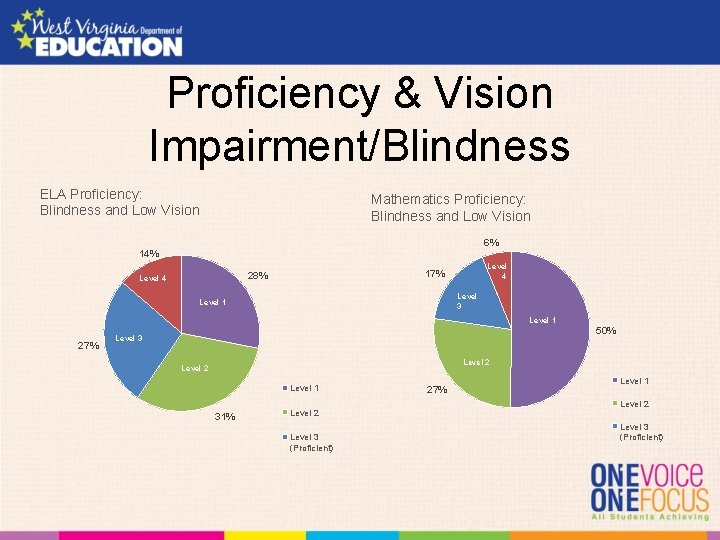

Proficiency & Vision Impairment/Blindness
[Pie charts: ELA Proficiency: Blindness and Low Vision; Mathematics Proficiency: Blindness and Low Vision. Segments: Level 1, Level 2, Level 3 (Proficient), Level 4]

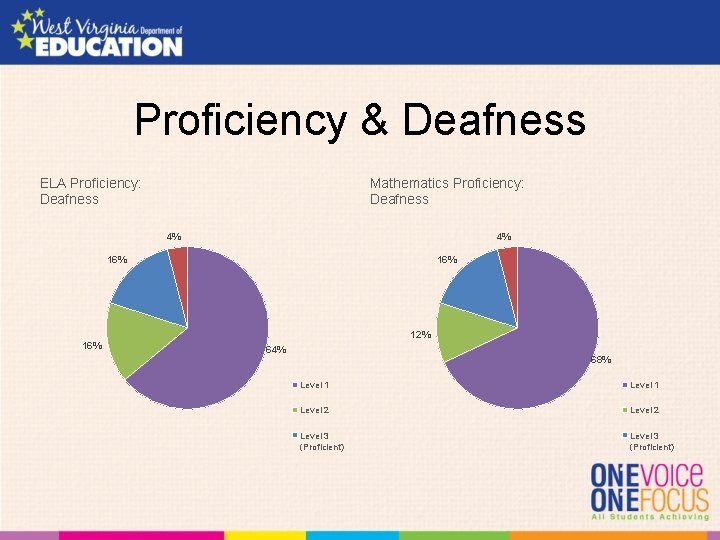

Proficiency & Deafness
[Pie charts: ELA Proficiency: Deafness; Mathematics Proficiency: Deafness. Segments: Level 1, Level 2, Level 3 (Proficient)]

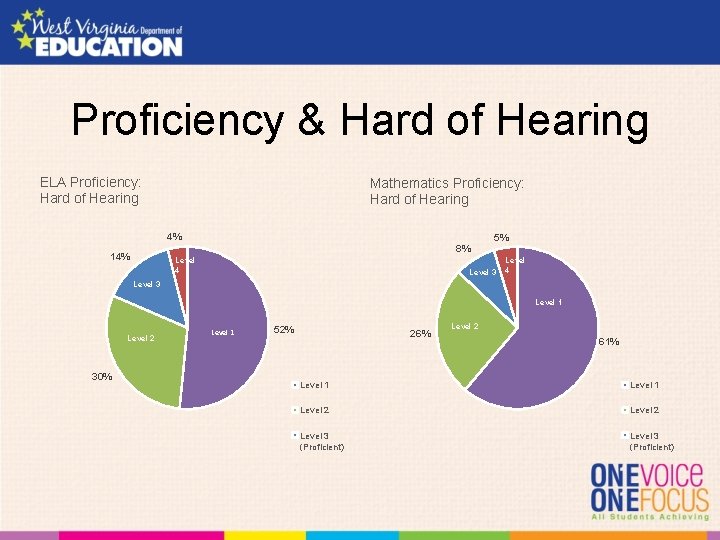

Proficiency & Hard of Hearing
[Pie charts: ELA Proficiency: Hard of Hearing; Mathematics Proficiency: Hard of Hearing. Segments: Level 1, Level 2, Level 3 (Proficient), Level 4]

What are the lessons learned about implementation and related challenges?

3) Lessons learned about implementation and related challenges

Lessons Learned from Implementation of SR/PNP and Testing Platform
• PARCC states have the ability to capture accessibility features, administrative considerations and accommodations data via the Student Registration/Personal Needs Profile (SR/PNP) file.
• Operational reports document all accessibility features, administrative considerations and accommodations at the school, district and state level.
• Some states are using this data to monitor the selection of certain accessibility features and accommodations.
• Some test administrators were not sure which accessibility feature or accommodation to select, and changes had to be made during testing to provide the student with the correct form.

Lessons Learned from Implementation of SR/PNP and Testing Platform
• Some schools and districts completed the SR/PNP file during the additional order window, which caused materials to arrive late in schools.
• Additional training and guidance continue to be refined based on input from the field each administration cycle. Some states will transition to Unified English Braille in Year 3.
• Additional guidance related to the SR/PNP and Before and After Testing was added to the PARCC Accessibility Features and Accommodations Manual.
• For Year 3, letter designations from the PARCC Accessibility Features and Accommodations Manual and SR/PNP File Layout document will be added to both documents.

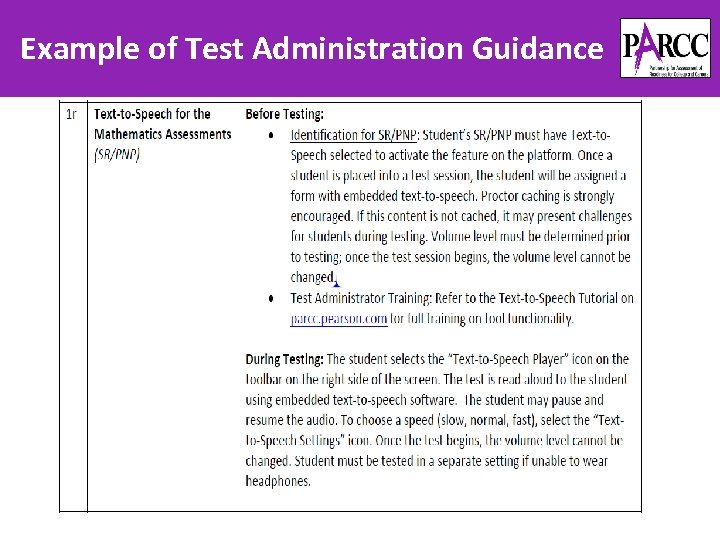

Example of Test Administration Guidance

Student Registration/Personal Needs Profile: Lessons Learned
The Student Registration File and Personal Needs Profile were combined into one file (SR/PNP) in Year 2 of the Operational Assessments.
Benefits:
• All student information will reside in one database versus two separate ones
• Test administrators can pull reports from the file to ensure every student has the necessary features/accommodations on testing day
• File serves as a record of the student’s testing profile
• Will reduce administrative burden

Training on the SR/PNP
• SR/PNP Educator Training Module
• Accessibility Features & Accommodations Training Module
• SR/PNP Field Definitions Guide
• State-Developed Trainings

Challenges
• Over-identification for use
• Lack of understanding of support/accommodation
• Lack of understanding of student’s abilities
• Only one fully translated language for Math: Spanish

LESSONS LEARNED & CHALLENGES

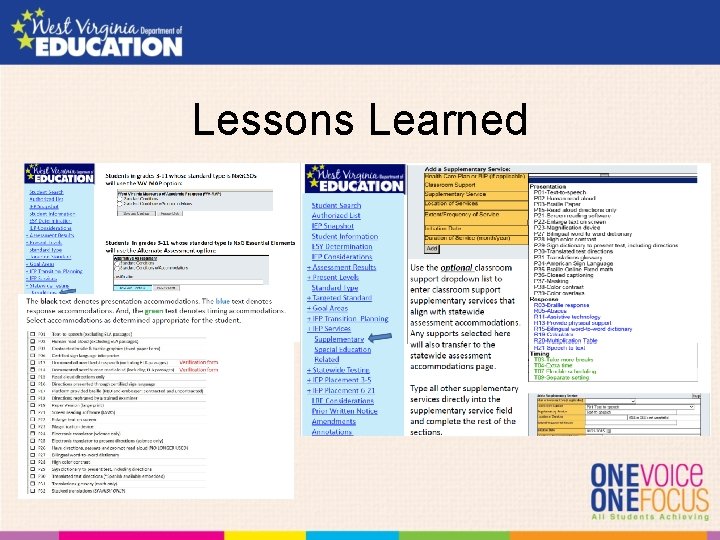

Lessons Learned

Looking Forward • Professional development for selection of tools, supports and accommodations • Interactive sandbox • Opportunities to use tools beyond the practice test • Emphasis on how interims and diagnostics support summative • Ongoing monitoring prior to summative window

Discussion Cara Laitusis

Last year’s themes
• Most Accessible Assessments
• “Too Much”
• Training is essential
• Surprises
• Researcher Envy
• Data and monitoring are impressive

One year later
• STILL impressed by how far we have come in terms of accessibility features that have survived through to Year 2
• Performance Gaps (all students vs. students with disabilities)
• Data
• I miss Audra!

Accessibility in K-12
• K-12 students are getting better accommodations and accessibility than test takers on any other type of assessment (licensure, admissions, certification, language testing)
• How do we know the accommodations are working for different groups?
  • TTS for auditory processing
• So many accommodations are now universal tools
  • How will this impact future accommodation requests?
  • “Past Testing Accommodations. Proof of past testing accommodations in similar test settings is generally sufficient to support a request for the same testing accommodations for a current standardized exam or other high-stakes test.”

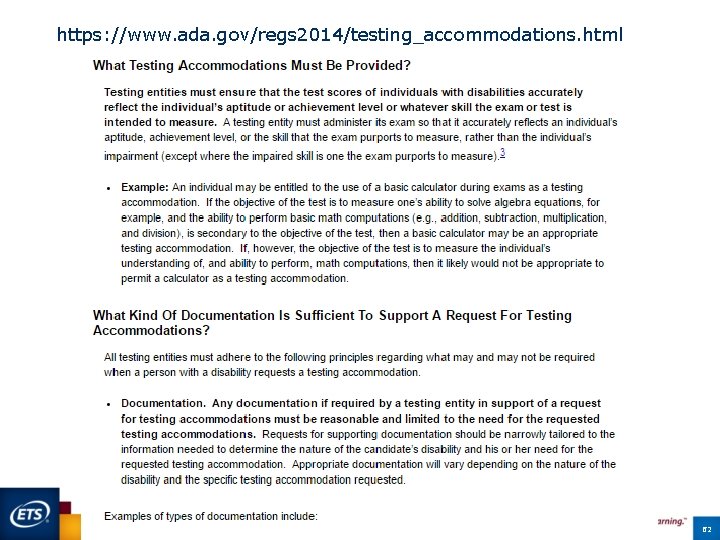

https://www.ada.gov/regs2014/testing_accommodations.html

Performance Gaps!
• Differences seem larger, but we need to know more about why.
  • Increased rigor of the state standards (i.e., harder tests)?
  • Ceiling effect on prior tests?
  • Adapting to computer-based testing?
  • Learning curve with new assistive technologies (e.g., text-to-speech instead of human readers)?
  • All of the above
• Need to dig a little more into this as trend data come in
  • e.g., looking into accommodation changes for the ELs and comparing those groups

Data
• Still a need for better data integration
• Promise of better data across low-incidence disabilities through consortia assessments not fully realized
• Still have “research envy”
• Suggest states work together through the ASES SCASS to summarize data across states
  • Start small (one accommodation across different groups)
• Progress on capturing features and accommodation needs is impressive, as is general use of features
• Still need better information on feature use at the item level
• Impressed by the WV process of collecting data on accommodation use, but hope we can make that automated in the future.

Data Capture
• Need for improved data structure to allow for data capture during testing. Lessons learned from NAEP
claitusis@ets.org @caralaitusis

Few more thoughts
• Lots of lessons learned from OECD tests on language translation
  • Screen formatting issues for longer languages
  • Right-to-left vs. left-to-right languages
  • TTS challenges
• Professional Development, Professional Development
  • What works best (online, in person, practice tests)?
  • How to transition knowledge with staff turnover?
• Language consistency across assessments

Trinell Bowman trinell.bowman@pgcps.org
Melissa Gholson mgholson@k12.wv.us
Jen Paul. J@michigan. gov
