
Delivering Parallel Programmability to the Masses via the Intel MIC Ecosystem: A Case Study
Kaixi Hou, Hao Wang, and Wu-chun Feng
Department of Computer Science, Virginia Tech
synergy.cs.vt.edu

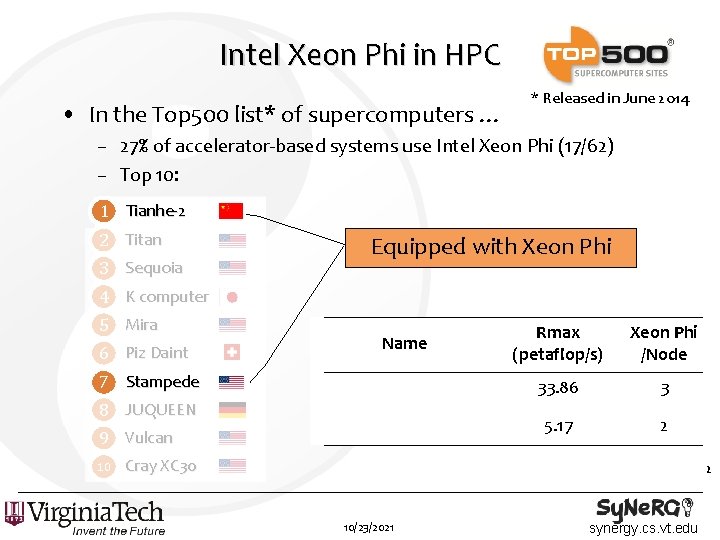

Intel Xeon Phi in HPC
• In the Top500 list of supercomputers (released in June 2014):
– 27% of accelerator-based systems use Intel Xeon Phi (17/62)
– Top 10: 1 Tianhe-2, 2 Titan, 3 Sequoia, 4 K computer, 5 Mira, 6 Piz Daint, 7 Stampede, 8 JUQUEEN, 9 Vulcan, 10 Cray XC30
– Equipped with Xeon Phi: Tianhe-2 (Rmax 33.86 petaflop/s, 3 Xeon Phi per node), Stampede (Rmax 5.17 petaflop/s), and the Cray XC30 at #10
10/23/2021 synergy.cs.vt.edu

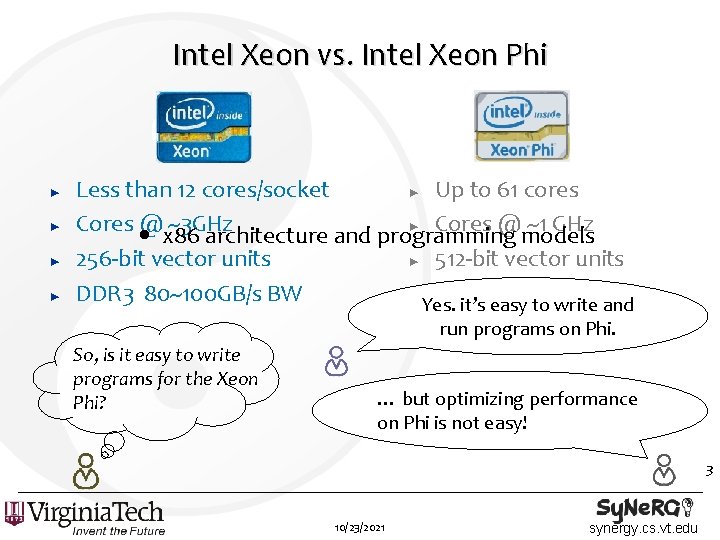

Intel Xeon vs. Intel Xeon Phi

| | Intel Xeon | Intel Xeon Phi |
|---|---|---|
| Cores | Less than 12 cores/socket | Up to 61 cores |
| Clock | ~3 GHz | ~1 GHz |
| Vector units | 256-bit | 512-bit |
| Memory | DDR3, 80~100 GB/s BW | GDDR5, 150 GB/s BW |

Both share the x86 architecture and programming models.
So, is it easy to write programs for the Xeon Phi? Yes, it's easy to write and run programs on Phi … but optimizing performance on Phi is not easy!


Architecture-Specific Solutions
• Transposition in FFT [Park 13]
– Reduce memory accesses via cross-lane intrinsics
• Swendsen-Wang multi-cluster algorithm [Wende 13]
– Maneuver the elements in registers via data-reordering intrinsics
• Linpack benchmark [Heinecke 13]
– Reorganize the computation patterns and instructions via assembly code
If the optimizations are Xeon Phi-specific, the code is neither easy to write nor portable.

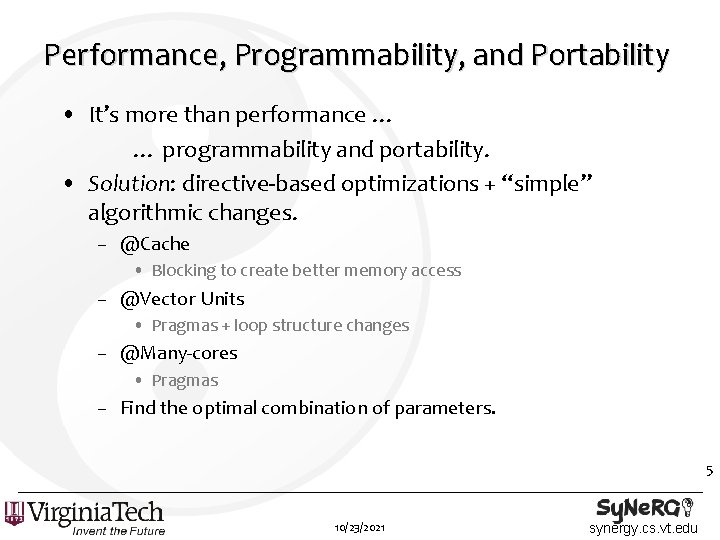

Performance, Programmability, and Portability
• It's more than performance … it's also programmability and portability.
• Solution: directive-based optimizations + "simple" algorithmic changes
– Cache: blocking to create better memory access patterns
– Vector units: pragmas + loop-structure changes
– Many cores: pragmas
– Find the optimal combination of parameters

Outline
• Introduction
– Intel Xeon Phi
– Architecture-Specific Solutions
• Case Study: Floyd-Warshall Algorithm
– Algorithmic Overview
– Optimizations for Xeon Phi
   • Cache Blocking
   • Vectorization via Data-Level Parallelism
   • Many Cores via Thread-Level Parallelism
– Performance Evaluation on Xeon Phi
• Conclusion

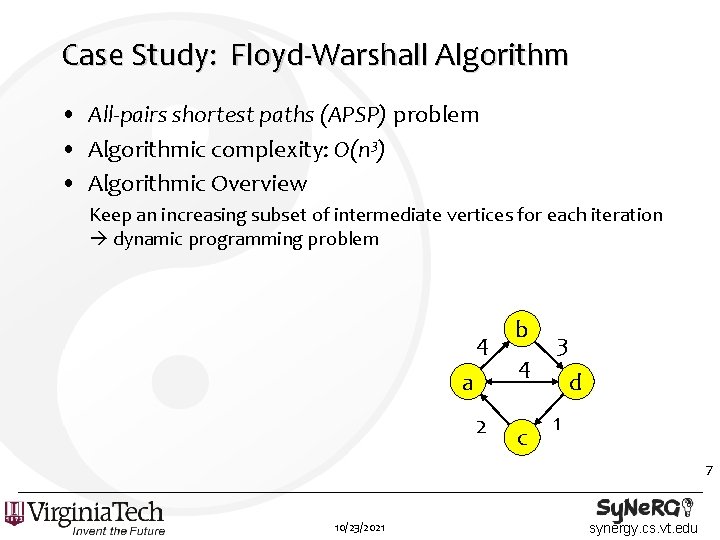

Case Study: Floyd-Warshall Algorithm
• Solves the all-pairs shortest paths (APSP) problem
• Algorithmic complexity: O(n³)
• Algorithmic overview: a dynamic-programming algorithm that keeps an increasing subset of intermediate vertices; iteration k adds vertex k to that subset
– Update rule for iteration k: d[i][j] = min(d[i][j], d[i][k] + d[k][j])
(Figure: example directed graph with vertices a, b, c, and d.)

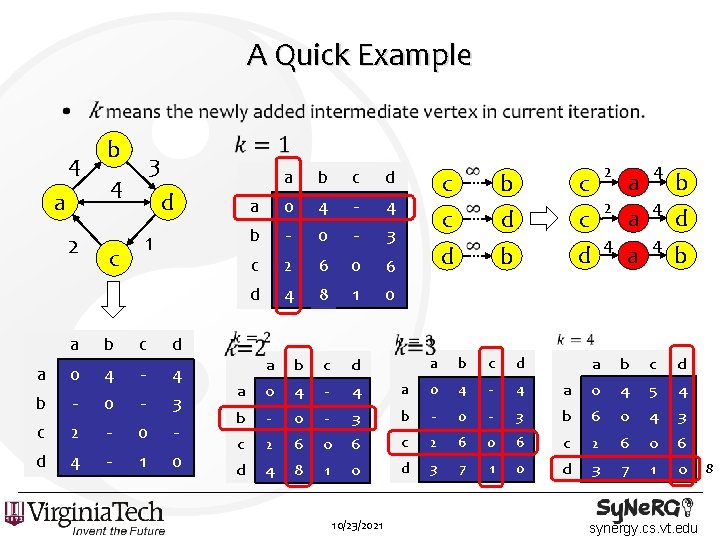

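A Quick Example
• Graph edges: a→b (4), a→d (4), b→d (3), c→a (2), d→a (4), d→c (1)
• Distance matrix, row = source, column = destination; "-" means no path found yet

Initial:

| | a | b | c | d |
|---|---|---|---|---|
| a | 0 | 4 | - | 4 |
| b | - | 0 | - | 3 |
| c | 2 | - | 0 | - |
| d | 4 | - | 1 | 0 |

After k = a (c→b = 6, c→d = 6, d→b = 8, all via a):

| | a | b | c | d |
|---|---|---|---|---|
| a | 0 | 4 | - | 4 |
| b | - | 0 | - | 3 |
| c | 2 | 6 | 0 | 6 |
| d | 4 | 8 | 1 | 0 |

After k = b: no change.

After k = c (d→a = 3, d→b = 7, both via c):

| | a | b | c | d |
|---|---|---|---|---|
| a | 0 | 4 | - | 4 |
| b | - | 0 | - | 3 |
| c | 2 | 6 | 0 | 6 |
| d | 3 | 7 | 1 | 0 |

After k = d, final (a→c = 5, b→a = 6, b→c = 4, all via d):

| | a | b | c | d |
|---|---|---|---|---|
| a | 0 | 4 | 5 | 4 |
| b | 6 | 0 | 4 | 3 |
| c | 2 | 6 | 0 | 6 |
| d | 3 | 7 | 1 | 0 |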
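The example can be checked with a minimal C sketch of the default (unblocked) algorithm. It assumes a dense, row-major `float` matrix with `INFINITY` standing in for "-" (no path); the function name `fw_default` is hypothetical and not taken from the authors' code.

```c
#include <assert.h>
#include <math.h>
#include <stddef.h>

/* Default (unblocked) Floyd-Warshall on an n x n row-major matrix, in place.
 * d[i*n + j] is the current shortest distance from i to j; INFINITY = no path. */
void fw_default(float *d, size_t n)
{
    assert(d != NULL);
    for (size_t k = 0; k < n; ++k)          /* admit vertex k as an intermediate */
        for (size_t i = 0; i < n; ++i)
            for (size_t j = 0; j < n; ++j) {
                float via_k = d[i * n + k] + d[k * n + j];
                if (via_k < d[i * n + j])
                    d[i * n + j] = via_k;
            }
}
```

Loading the initial matrix of the example (a, b, c, d as indices 0 to 3) and calling `fw_default(d, 4)` yields the final matrix of the example. The k-loop must stay outermost; the i- and j-loops are the ones the later optimizations block, vectorize, and parallelize.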

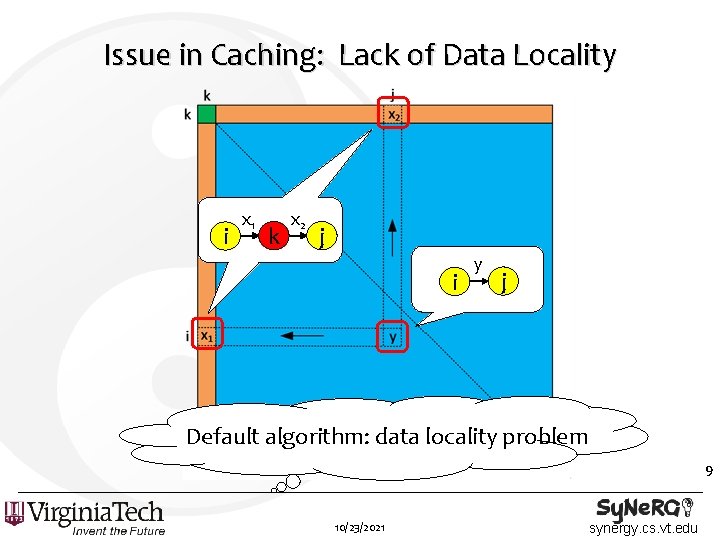

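Issue in Caching: Lack of Data Locality
• Default algorithm: data locality problem
– For every k, the update of y = d[i][j] reads x1 = d[i][k] and x2 = d[k][j] while sweeping the entire matrix, so on large inputs elements are evicted from cache before they are reused in the next k iteration.
(Figure: accesses to x1 at (i, k), x2 at (k, j), and y at (i, j) in the default algorithm.)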

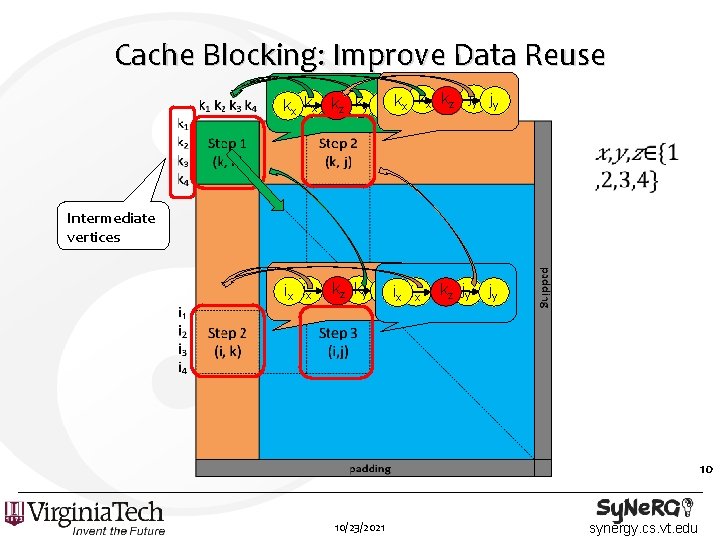

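Cache Blocking: Improve Data Reuse
• The distance matrix is partitioned into blocks, and a whole block of intermediate vertices is applied to a block while that block stays in cache.
(Figure: blocked Floyd-Warshall; block coordinates labeled kx, ky, kz, ix, and jy, with the k blocks holding the intermediate vertices.)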
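A minimal C sketch of blocking, assuming the standard three-step blocked Floyd-Warshall: for each block of intermediate vertices kb, step 1 updates the diagonal block, step 2 the blocks sharing its row or column, and step 3 all remaining blocks. This matches the "step 2 and step 3" named on later slides, but it is not the authors' code; `fw_blocked`, `relax_block`, and the block size `bs` are hypothetical names.

```c
#include <assert.h>
#include <math.h>
#include <stddef.h>

static size_t min_sz(size_t a, size_t b) { return a < b ? a : b; }

/* Relax block (ib, jb) using the intermediate vertices of block kb.
 * Ragged last blocks are clipped to n (a boundary check inside the loops). */
static void relax_block(float *d, size_t n, size_t bs,
                        size_t ib, size_t jb, size_t kb)
{
    size_t i1 = min_sz((ib + 1) * bs, n);
    size_t j1 = min_sz((jb + 1) * bs, n);
    size_t k1 = min_sz((kb + 1) * bs, n);
    for (size_t k = kb * bs; k < k1; ++k)       /* k stays outermost inside the block */
        for (size_t i = ib * bs; i < i1; ++i)
            for (size_t j = jb * bs; j < j1; ++j) {
                float via_k = d[i * n + k] + d[k * n + j];
                if (via_k < d[i * n + j])
                    d[i * n + j] = via_k;
            }
}

void fw_blocked(float *d, size_t n, size_t bs)
{
    assert(d != NULL && bs > 0);
    size_t nb = (n + bs - 1) / bs;              /* number of blocks per dimension */
    for (size_t kb = 0; kb < nb; ++kb) {
        relax_block(d, n, bs, kb, kb, kb);      /* step 1: diagonal block */
        for (size_t b = 0; b < nb; ++b) {       /* step 2: row kb and column kb */
            if (b == kb) continue;
            relax_block(d, n, bs, kb, b, kb);
            relax_block(d, n, bs, b, kb, kb);
        }
        for (size_t ib = 0; ib < nb; ++ib)      /* step 3: all remaining blocks */
            for (size_t jb = 0; jb < nb; ++jb)
                if (ib != kb && jb != kb)
                    relax_block(d, n, bs, ib, jb, kb);
    }
}
```

Each block is reused for a whole block of k values, which is the source of the better locality. Steps 2 and 3 redo work on block edges and carry the `min_sz` boundary checks, which is consistent with the overheads reported for plain cache blocking in the step-by-step evaluation.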

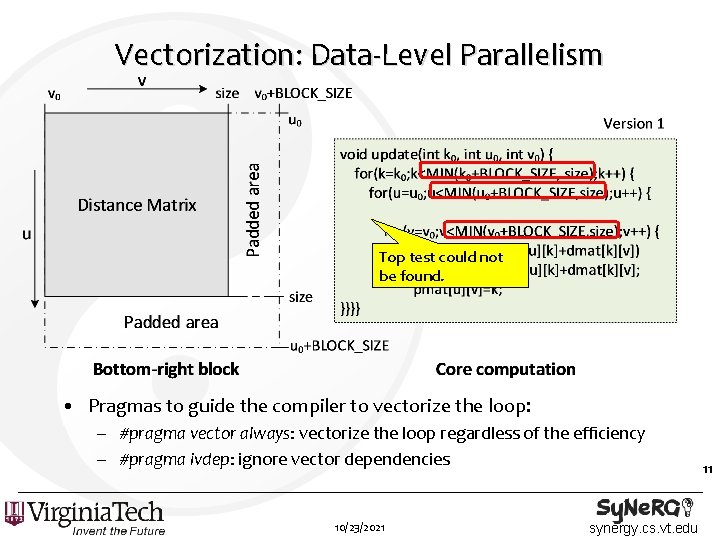

Vectorization: Data-Level Parallelism
• Compiler report for the original inner loop: "Top test could not be found." (the loop is not vectorized)
• Pragmas guide the compiler to vectorize the loop:
– #pragma vector always: vectorize the loop regardless of the compiler's efficiency heuristics
– #pragma ivdep: ignore assumed vector dependencies
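A minimal sketch of where the pragmas go, assuming the innermost loop of a block update is factored into a helper; `relax_row` is a hypothetical name. The Intel pragmas are guarded so that other compilers see their own dependence-ignoring hints (`#pragma GCC ivdep`, `#pragma clang loop vectorize(assume_safety)`) instead.

```c
#include <assert.h>
#include <stddef.h>

/* dij[j] = min(dij[j], dik + dkj[j]) for one row segment of length len.
 * dij and dkj may point into the same matrix, so the compiler assumes a
 * dependency; the pragmas tell it to ignore that assumption. */
void relax_row(float *dij, const float *dkj, float dik, size_t len)
{
    assert(len == 0 || (dij != NULL && dkj != NULL));
#if defined(__INTEL_COMPILER)
#pragma ivdep          /* ignore assumed vector dependencies */
#pragma vector always  /* vectorize regardless of efficiency heuristics */
#elif defined(__clang__)
#pragma clang loop vectorize(assume_safety)
#elif defined(__GNUC__)
#pragma GCC ivdep
#endif
    for (size_t j = 0; j < len; ++j) {
        float via_k = dik + dkj[j];
        dij[j] = via_k < dij[j] ? via_k : dij[j];
    }
}
```

Ignoring the dependency is safe here: even when the row being updated is row k itself (dij == dkj), element j only reads and writes index j, so there is no loop-carried dependency.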

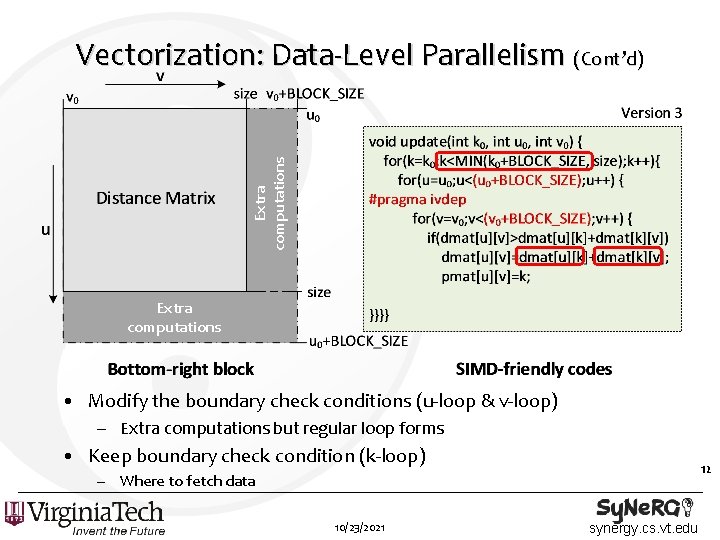

Vectorization: Data-Level Parallelism (Cont'd)
• Modify the boundary check conditions (u-loop & v-loop)
– Extra computations, but regular loop forms
• Keep the boundary check condition (k-loop)
– It determines where to fetch data
(Figure: extra computations introduced at the block boundaries.)
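One way to get regular u- and v-loops is sketched below, assuming the matrix is padded up to a multiple of the block size with `INFINITY`: the u/v loops always run exactly `BS` iterations (computing a few useless padded entries), while the k-loop keeps its `n` bound. `BS` (set to 4 here purely for illustration) and `relax_block_padded` are hypothetical.

```c
#include <assert.h>
#include <math.h>
#include <stddef.h>

#define BS 4  /* hypothetical compile-time block size */

/* d: np x np row-major matrix, np a multiple of BS; rows/columns n..np-1 are
 * padding filled with INFINITY. Relax block (ib, jb) with intermediates of block kb. */
void relax_block_padded(float *d, size_t n, size_t np,
                        size_t ib, size_t jb, size_t kb)
{
    assert(d != NULL && np % BS == 0 && n <= np);
    size_t k0 = kb * BS;
    size_t k1 = k0 + BS < n ? k0 + BS : n;   /* k-loop keeps its boundary check */
    for (size_t k = k0; k < k1; ++k)
        for (size_t u = 0; u < BS; ++u) {     /* fixed trip count: no boundary check */
            size_t i = ib * BS + u;
            float dik = d[i * np + k];
            float *dij = &d[i * np + jb * BS];
            const float *dkj = &d[k * np + jb * BS];
            for (size_t v = 0; v < BS; ++v) { /* fixed trip count: easy to vectorize */
                float via_k = dik + dkj[v];
                dij[v] = via_k < dij[v] ? via_k : dij[v];
            }
        }
}
```

Padded entries only ever see `INFINITY + x`, so they stay `INFINITY` and never shorten a real path; the k bound decides which real rows and columns are fetched.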

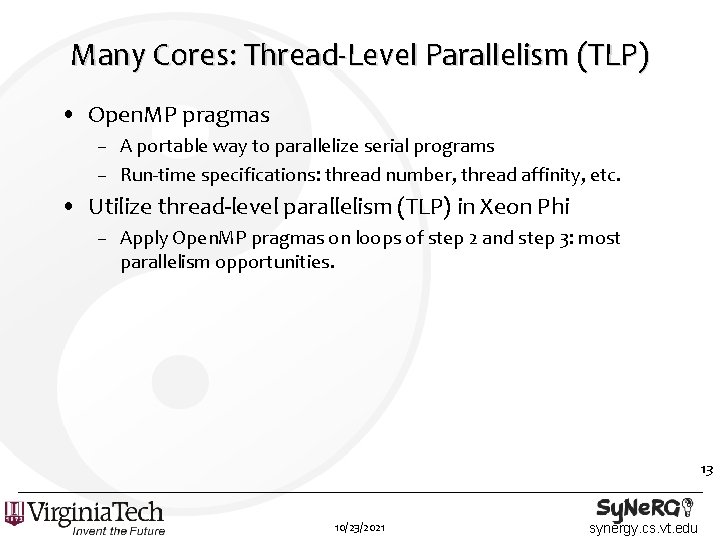

Many Cores: Thread-Level Parallelism (TLP)
• OpenMP pragmas
– A portable way to parallelize serial programs
– Run-time specifications: thread number, thread affinity, etc.
• Utilize thread-level parallelism (TLP) in Xeon Phi
– Apply OpenMP pragmas to the loops of step 2 and step 3, which offer the most parallelism
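A minimal sketch of parallelizing steps 2 and 3 with OpenMP, assuming the three-step blocked scheme (diagonal block, then its row and column, then the rest). It is an illustration, not the authors' implementation; `fw_blocked_omp` and `relax_block` are hypothetical names. Built without OpenMP support, the pragmas are ignored and the code runs serially.

```c
#include <assert.h>
#include <math.h>
#include <stddef.h>

/* Relax block (ib, jb) with the intermediates of block kb; last blocks clipped to n. */
static void relax_block(float *d, size_t n, size_t bs,
                        size_t ib, size_t jb, size_t kb)
{
    size_t i1 = (ib + 1) * bs < n ? (ib + 1) * bs : n;
    size_t j1 = (jb + 1) * bs < n ? (jb + 1) * bs : n;
    size_t k1 = (kb + 1) * bs < n ? (kb + 1) * bs : n;
    for (size_t k = kb * bs; k < k1; ++k)
        for (size_t i = ib * bs; i < i1; ++i)
            for (size_t j = jb * bs; j < j1; ++j) {
                float via_k = d[i * n + k] + d[k * n + j];
                if (via_k < d[i * n + j])
                    d[i * n + j] = via_k;
            }
}

void fw_blocked_omp(float *d, size_t n, size_t bs)
{
    assert(d != NULL && bs > 0);
    size_t nb = (n + bs - 1) / bs;
    for (size_t kb = 0; kb < nb; ++kb) {
        relax_block(d, n, bs, kb, kb, kb);    /* step 1: a single block, serial */

        /* Step 2: iterations write disjoint row-kb / column-kb blocks and
         * only read the diagonal block, so they can run concurrently. */
        #pragma omp parallel for schedule(static)
        for (size_t b = 0; b < nb; ++b) {
            if (b == kb) continue;
            relax_block(d, n, bs, kb, b, kb);
            relax_block(d, n, bs, b, kb, kb);
        }

        /* Step 3: each block reads only row-kb / column-kb blocks,
         * none of which is written in this step. */
        #pragma omp parallel for collapse(2) schedule(static)
        for (size_t ib = 0; ib < nb; ++ib)
            for (size_t jb = 0; jb < nb; ++jb)
                if (ib != kb && jb != kb)
                    relax_block(d, n, bs, ib, jb, kb);
    }
}
```

Step 3 holds (nb - 1)² independent blocks per round versus 2(nb - 1) in step 2 and one in step 1, which is why the parallelism is concentrated there. The thread count and affinity are then set at run time (for example through `OMP_NUM_THREADS`).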

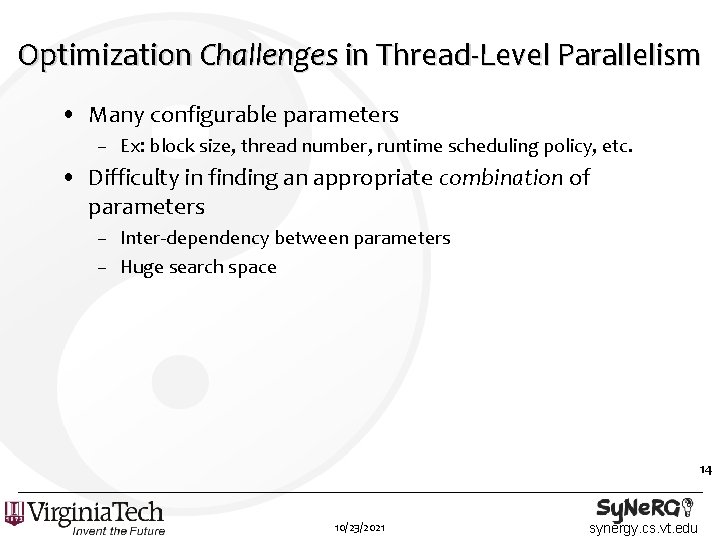

Optimization Challenges in Thread-Level Parallelism
• Many configurable parameters
– e.g., block size, thread number, runtime scheduling policy
• Difficulty in finding an appropriate combination of parameters
– Inter-dependencies between parameters
– Huge search space


Optimization Approach to Thread-Level Parallelism
• Starchart: tree-based partitioning [Jia 13]
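Starchart builds on recursive-partitioning regression trees. The core step of such a tree is sketched below: given sampled runs, pick the split of one numeric parameter that minimizes the summed squared error of the runtimes on each side. This is a generic illustration of that step, not Starchart's implementation; `best_split` and `group_sse` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>

/* Sum of squared errors of y over samples on one side of threshold t
 * (left != 0: x <= t, left == 0: x > t). */
static double group_sse(const double *x, const double *y, size_t m,
                        double t, int left)
{
    double sum = 0.0, sum2 = 0.0;
    size_t c = 0;
    for (size_t s = 0; s < m; ++s)
        if ((x[s] <= t) == left) { sum += y[s]; sum2 += y[s] * y[s]; ++c; }
    return c ? sum2 - sum * sum / (double)c : 0.0;
}

/* x[s]: parameter value of sample s, y[s]: its measured runtime.
 * Writes the best threshold and its error; returns 0 if no split exists. */
int best_split(const double *x, const double *y, size_t m,
               double *thr, double *err)
{
    assert(x != NULL && y != NULL && thr != NULL && err != NULL);
    int found = 0;
    for (size_t c = 0; c < m; ++c) {
        double t = x[c];                      /* candidate threshold */
        size_t nl = 0;
        for (size_t s = 0; s < m; ++s) nl += x[s] <= t;
        if (nl == m) continue;                /* both sides must be non-empty */
        double e = group_sse(x, y, m, t, 1) + group_sse(x, y, m, t, 0);
        if (!found || e < *err) { *thr = t; *err = e; found = 1; }
    }
    return found;
}
```

Applying this split recursively to each side, over every tunable parameter, produces the tree; the parameters chosen near the root are the ones with the largest effect on runtime.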

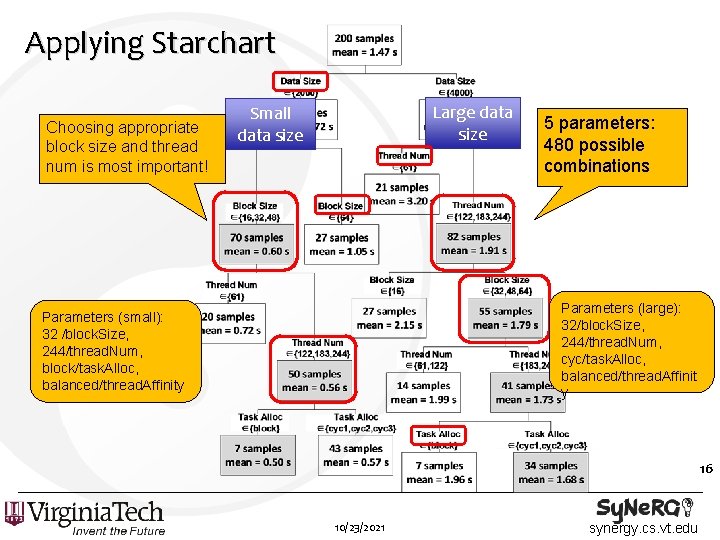

Applying Starchart
• 5 parameters: 480 possible combinations
• Choosing an appropriate block size and thread number is most important!
• Parameters (large data size): blockSize = 32, threadNum = 244, taskAlloc = cyc, threadAffinity = balanced
• Parameters (small data size): blockSize = 32, threadNum = 244, taskAlloc = block, threadAffinity = balanced
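The two taskAlloc values can be illustrated by which thread owns each task, assuming "cyc" means cyclic (round-robin) allocation and "block" means contiguous chunks. This reading is an assumption, and `owner_cyclic` and `owner_block` are hypothetical helpers.

```c
#include <assert.h>
#include <stddef.h>

/* Cyclic allocation: task t goes to thread t mod nthreads. */
size_t owner_cyclic(size_t t, size_t nthreads)
{
    assert(nthreads > 0);
    return t % nthreads;
}

/* Block allocation: tasks are split into nthreads contiguous chunks. */
size_t owner_block(size_t t, size_t ntasks, size_t nthreads)
{
    assert(nthreads > 0 && t < ntasks);
    size_t chunk = (ntasks + nthreads - 1) / nthreads;   /* ceiling division */
    return t / chunk;
}
```

In OpenMP terms these roughly correspond to `schedule(static, 1)` and `schedule(static)`; the exact chunking of the latter is implementation-defined.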

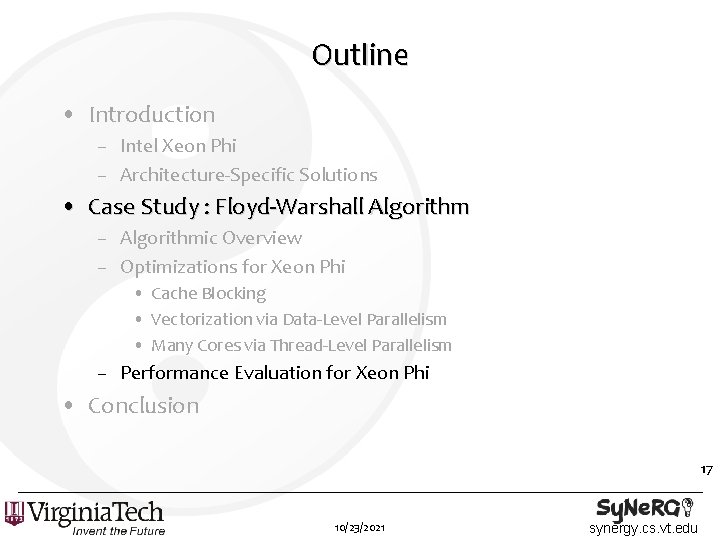


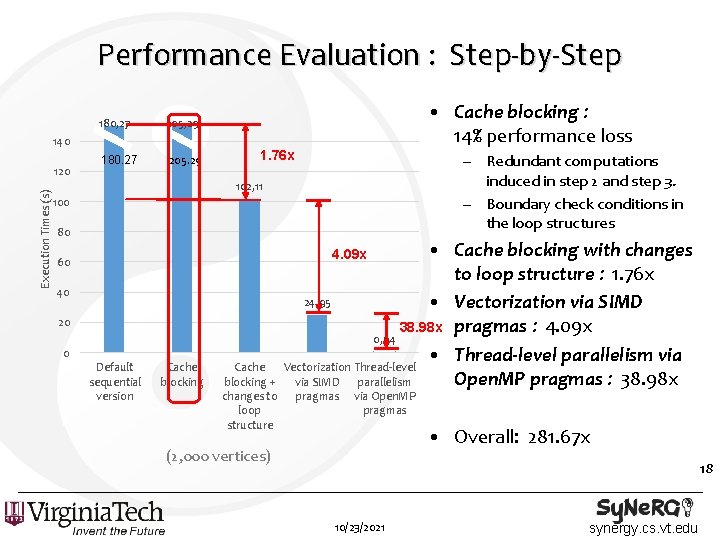

Performance Evaluation: Step-by-Step (2,000 vertices)

| Version | Execution time (s) |
|---|---|
| Default sequential version | 180.27 |
| Cache blocking | 205.29 |
| Cache blocking + changes to loop structure | 102.11 |
| Vectorization via SIMD pragmas | 24.95 |
| Thread-level parallelism via OpenMP pragmas | 0.64 |

• Cache blocking: 14% performance loss
– Redundant computations induced in step 2 and step 3
– Boundary check conditions in the loop structures
• Cache blocking with changes to loop structure: 1.76x
• Vectorization via SIMD pragmas: 4.09x
• Thread-level parallelism via OpenMP pragmas: 38.98x
• Overall: 281.67x (2,000 vertices)
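The stepwise speedups telescope into the overall figure. Each factor below divides the previous version's time by the next one's; the notation $T_{\text{default}}$, $T_{\text{OpenMP}}$ is introduced here only for this check.

$$
S_{\text{overall}} = \frac{T_{\text{default}}}{T_{\text{OpenMP}}}
= \frac{180.27}{102.11}\times\frac{102.11}{24.95}\times\frac{24.95}{0.64}
= \frac{180.27}{0.64} \approx 281.67,
\qquad
\frac{205.29}{180.27} \approx 1.14
$$

The second ratio is the 14% slowdown of plain cache blocking. Multiplying the rounded factors 1.76 × 4.09 × 38.98 gives about 280.6 rather than 281.67 only because of rounding.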

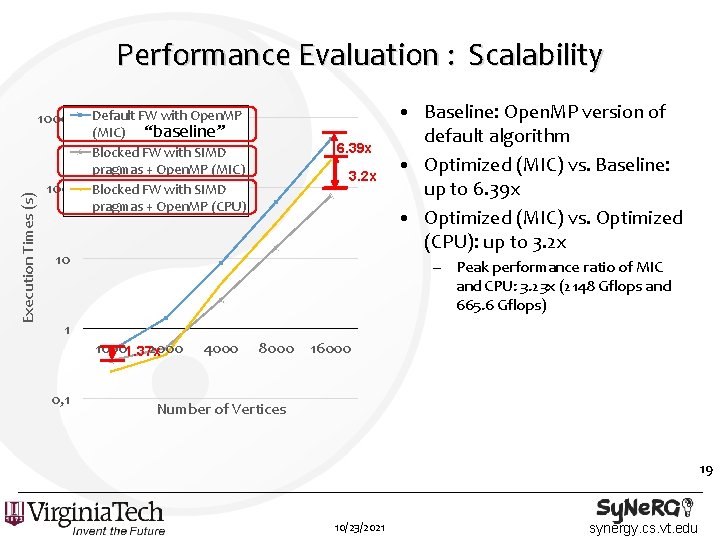

Performance Evaluation: Scalability
(Figure: execution time (s, log scale) vs. number of vertices (1,000 to 16,000) for Default FW with OpenMP (MIC) "baseline", Blocked FW with SIMD pragmas + OpenMP (MIC), and Blocked FW with SIMD pragmas + OpenMP (CPU); annotated speedups 6.39x, 3.2x, and 1.37x.)
• Baseline: OpenMP version of the default algorithm
• Optimized (MIC) vs. Baseline: up to 6.39x
• Optimized (MIC) vs. Optimized (CPU): up to 3.2x
– Peak performance ratio of MIC and CPU: 3.23x (2148 Gflops vs. 665.6 Gflops)

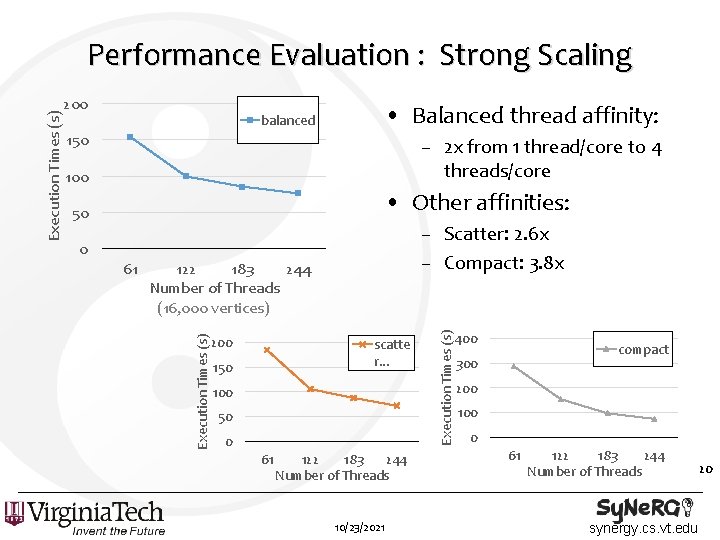

Performance Evaluation: Strong Scaling (16,000 vertices)
(Figure: three panels for balanced, scatter, and compact thread affinity; execution time (s) vs. number of threads (61, 122, 183, 244).)
• Balanced thread affinity: 2x from 1 thread/core to 4 threads/core
• Other affinities:
– Scatter: 2.6x
– Compact: 3.8x
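A simplified model of how the three affinity types (the `compact`, `scatter`, and `balanced` settings of `KMP_AFFINITY` in Intel's OpenMP runtime) place threads on 61 cores with 4 hardware threads each, assuming the thread count is a multiple of the core count as in the 61/122/183/244 sweep. `core_of` and `cores_used` are hypothetical helpers, and the real runtime handles more cases than this sketch.

```c
#include <assert.h>
#include <stddef.h>

typedef enum { AFF_COMPACT, AFF_SCATTER, AFF_BALANCED } affinity_t;

/* Core hosting OpenMP thread t under a simplified placement model. */
size_t core_of(affinity_t a, size_t t, size_t nthreads,
               size_t ncores, size_t per_core)
{
    assert(t < nthreads && nthreads <= ncores * per_core);
    assert(nthreads % ncores == 0);
    switch (a) {
    case AFF_COMPACT:  return t / per_core;             /* fill one core's contexts first */
    case AFF_SCATTER:  return t % ncores;               /* round-robin across cores */
    case AFF_BALANCED: return t / (nthreads / ncores);  /* spread out, neighbouring IDs share a core */
    }
    return 0;
}

/* Number of distinct cores occupied by nthreads threads. */
size_t cores_used(affinity_t a, size_t nthreads, size_t ncores, size_t per_core)
{
    unsigned char used[256] = { 0 };
    size_t count = 0;
    assert(ncores <= sizeof used);
    for (size_t t = 0; t < nthreads; ++t) {
        size_t c = core_of(a, t, nthreads, ncores, per_core);
        if (!used[c]) { used[c] = 1; ++count; }
    }
    return count;
}
```

Under this model, compact with 61 threads occupies only 16 of the 61 cores, while balanced and scatter already use all of them. That is one plausible reason why compact shows the largest relative gain from 61 to 244 threads.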

Conclusion
• CPU programs can be recompiled and run directly on Intel Xeon Phi, but achieving optimized performance requires considerable effort.
– Considerations: performance, programmability, and portability
• We use directive-based optimizations and certain algorithmic changes to achieve significant performance gains for the Floyd-Warshall algorithm as a case study.
– 6.4x speedup over a default OpenMP version of Floyd-Warshall on Xeon Phi
– 3.2x speedup over a 12-core multicore CPU (Sandy Bridge)
Thanks! Questions?
