Decision trees ◤ MARIO REGIN

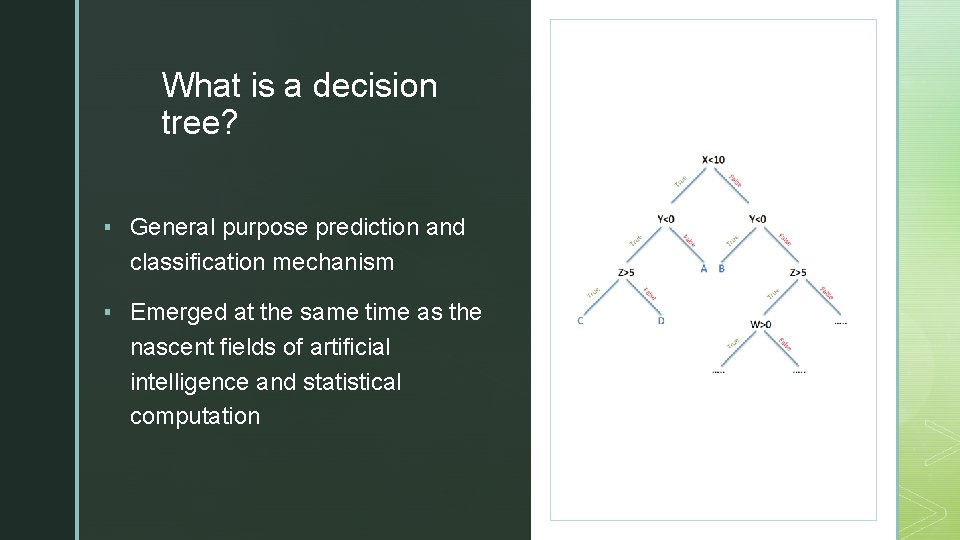

◤ What is a decision tree?
▪ A general-purpose prediction and classification mechanism.
▪ Emerged at the same time as the nascent fields of artificial intelligence and statistical computation.

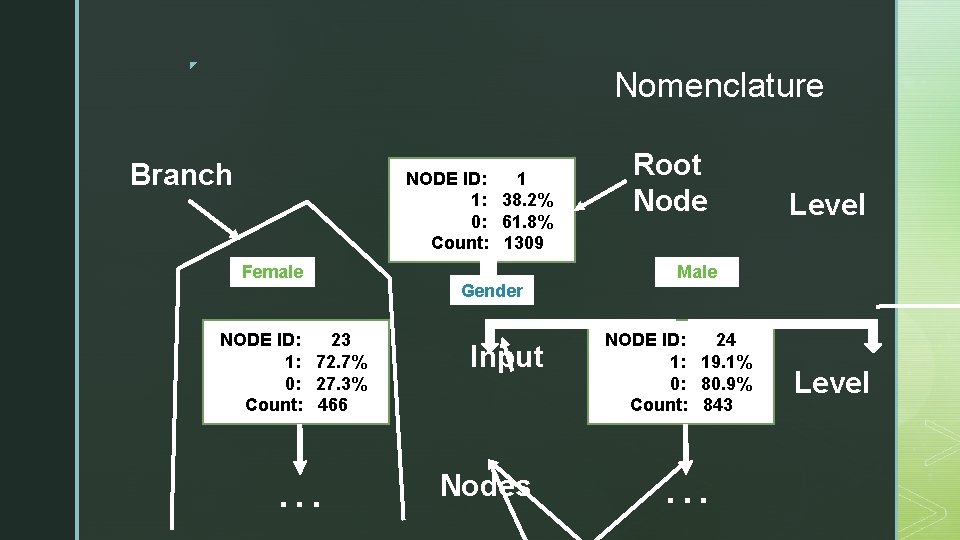

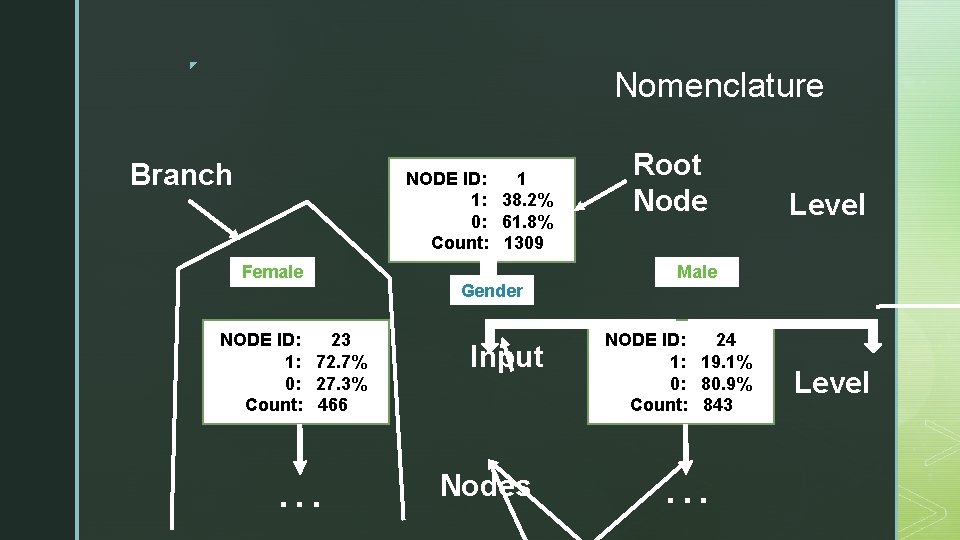

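◤ Nomenclature
Figure: a tree annotated with the names of its parts (Root Node, Branch, Input, Nodes, Level). Each node shows the percentage of cases with target value 1 and 0 and the number of cases. The root node (Node ID 1; 1: 38.2%, 0: 61.8%; Count: 1309) is split on the input Gender into two branches, Female (Node ID 23; 1: 72.7%, 0: 27.3%; Count: 466) and Male (Node ID 24; 1: 19.1%, 0: 80.9%; Count: 843), each of which continues to further levels.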

◤ Main Characteristic
▪ Recursive subsetting of a target field according to the values of associated input fields (predictors). Each split creates partitions, and associated descendant data subsets, whose target values are progressively more similar within a node and progressively more dissimilar between nodes at any given level of the tree.
▪ In other words, decision trees reduce the disorder of the data as we go from higher to lower levels of the tree.

◤ Creating a Decision Tree
1. Create the root node.
2. Search through the data set to discover the best partitioning input for the current node.
3. Use the best input to partition the current node to form branches.
4. Repeat steps 2 and 3 for each new node until one or more stopping conditions are met.
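The four steps above can be sketched as a short recursive function. This is a minimal, ID3-style sketch under assumptions the slides do not prescribe: categorical inputs, one branch per observed input value, the lowest weighted child entropy as the "best input" criterion, and a hypothetical `min_cases` threshold as one of the stop conditions. The data is the eight-row vampire table from the Tree Construction slides.

```python
import math
from collections import Counter

def entropy(labels):
    """Shannon entropy (base 2) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def split_score(rows, attr, target):
    """Weighted average entropy of the child nodes produced by splitting on attr."""
    groups = {}
    for row in rows:
        groups.setdefault(row[attr], []).append(row[target])
    return sum(len(g) / len(rows) * entropy(g) for g in groups.values())

def build_tree(rows, inputs, target, min_cases=2):
    """A leaf is a class label; an internal node is {"split": input, "branches": {...}}."""
    labels = [row[target] for row in rows]
    majority = Counter(labels).most_common(1)[0][0]
    # Assumed stop conditions: pure node, no inputs left, or too few cases to split.
    if len(set(labels)) == 1 or not inputs or len(rows) < min_cases:
        return majority
    # Step 2: the best input gives the lowest weighted child entropy.
    best = min(inputs, key=lambda attr: split_score(rows, attr, target))
    # Step 3: one branch per observed value; step 4: recurse into each branch.
    remaining = [attr for attr in inputs if attr != best]
    branches = {}
    for value in sorted({row[best] for row in rows}):
        subset = [row for row in rows if row[best] == value]
        branches[value] = build_tree(subset, remaining, target, min_cases)
    return {"split": best, "branches": branches}

COLUMNS = ("Cast Shadow", "Likes Garlic", "Complexion", "Vampire")
ROWS = [dict(zip(COLUMNS, values)) for values in [
    ("?", "Yes", "Pale", "No"),
    ("Yes", "Yes", "Healthy", "No"),
    ("?", "No", "Healthy", "Yes"),
    ("No", "No", "Average", "Yes"),
    ("?", "No", "Average", "Yes"),
    ("Yes", "No", "Pale", "No"),
    ("Yes", "No", "Average", "No"),
    ("?", "Yes", "Healthy", "No"),
]]

tree = build_tree(ROWS, list(COLUMNS[:3]), "Vampire")
print(tree)
```

With this data the sketch splits the root on Cast Shadow and the "?" branch on Likes Garlic, which is the same tree the Tree Construction slides arrive at.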

◤ Best Input
▪ The selection of the ‘best’ input field is an open subject of active research.
▪ Decision trees allow for a variety of computational approaches to input selection.
▪ Some possible approaches to selecting the best input:
  ▪ High-performance predictive model approach.
  ▪ Relationship analysis approach.

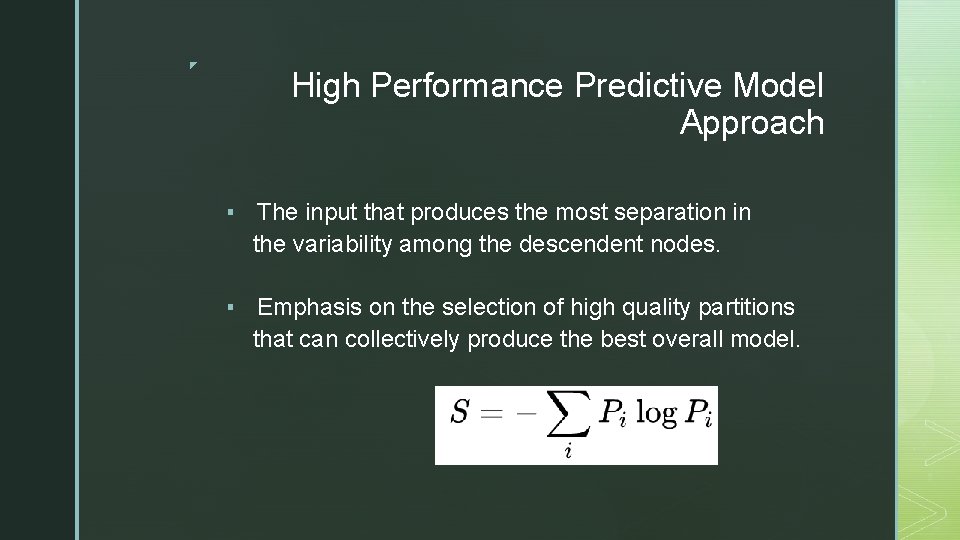

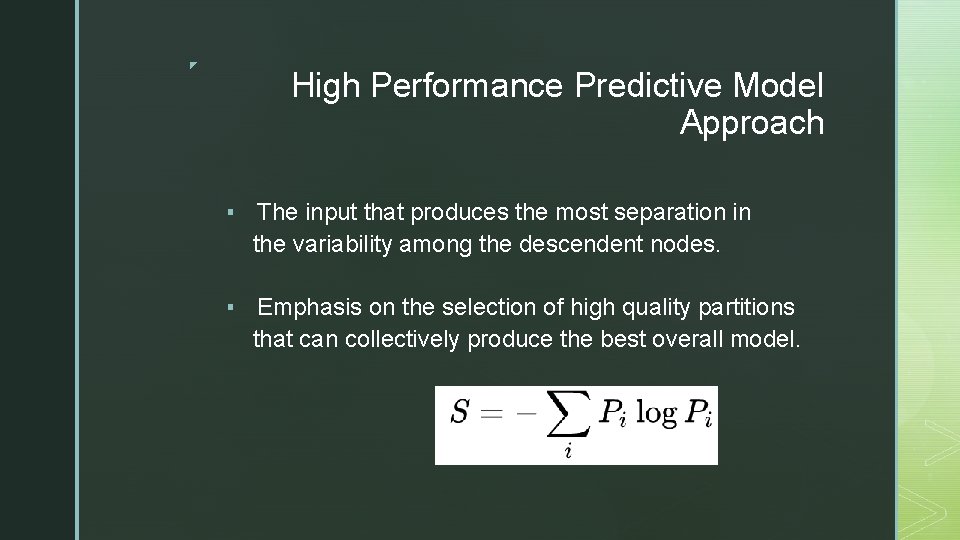

◤ High Performance Predictive Model Approach
▪ Choose the input that produces the greatest separation in the variability among the descendant nodes.
▪ Emphasis on selecting high-quality partitions that collectively produce the best overall model.

◤ Relationship Analysis Approach
▪ Guide the branching in order to analyze, discover, support, or confirm the conditional relations that are assumed to exist among the various inputs and the component nodes that they produce.
▪ Emphasis on analyzing the interactions among inputs as the tree is formed.

◤ Stopping Rule
▪ Stopping rules generally consist of thresholds on diminishing returns (in terms of test statistics) or on a diminishing supply of training cases (a minimum acceptable number of observations in a node).

◤ Tree Construction

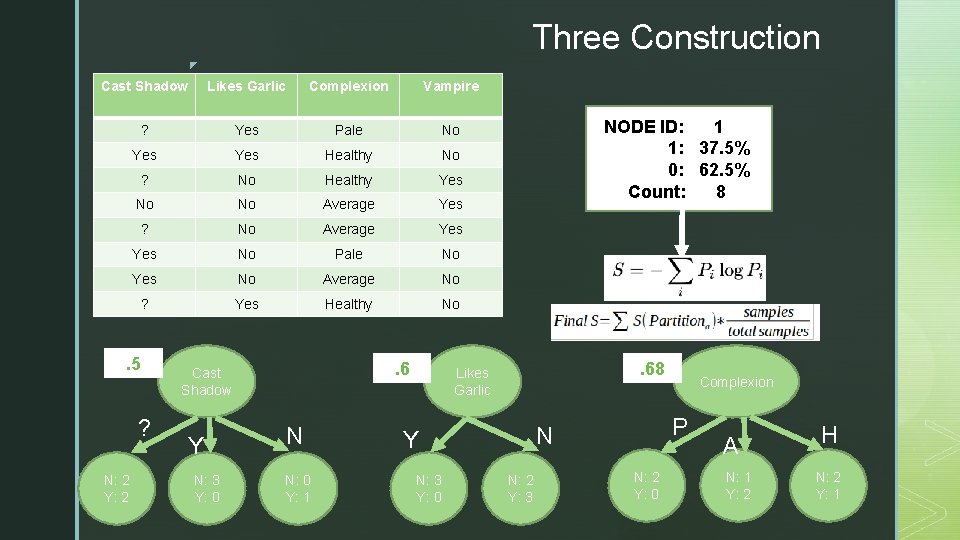

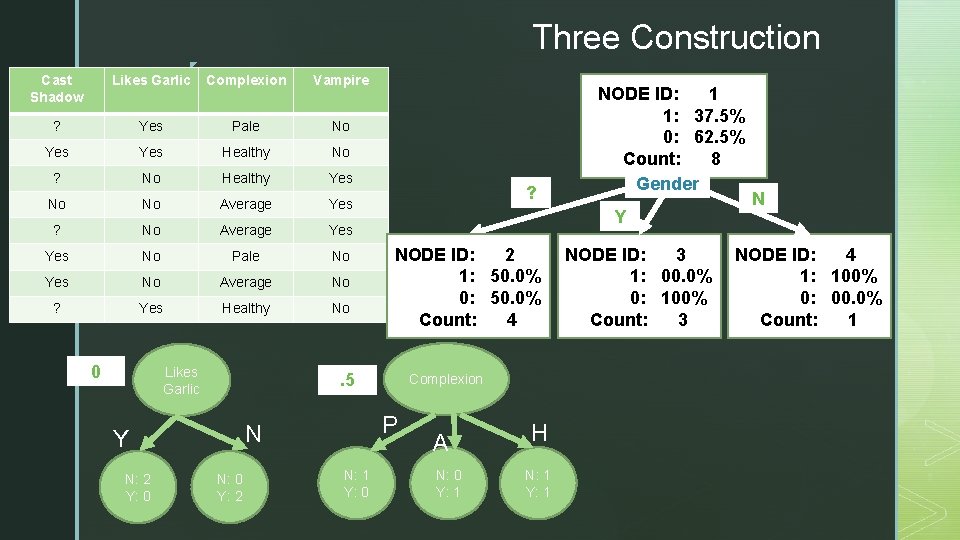

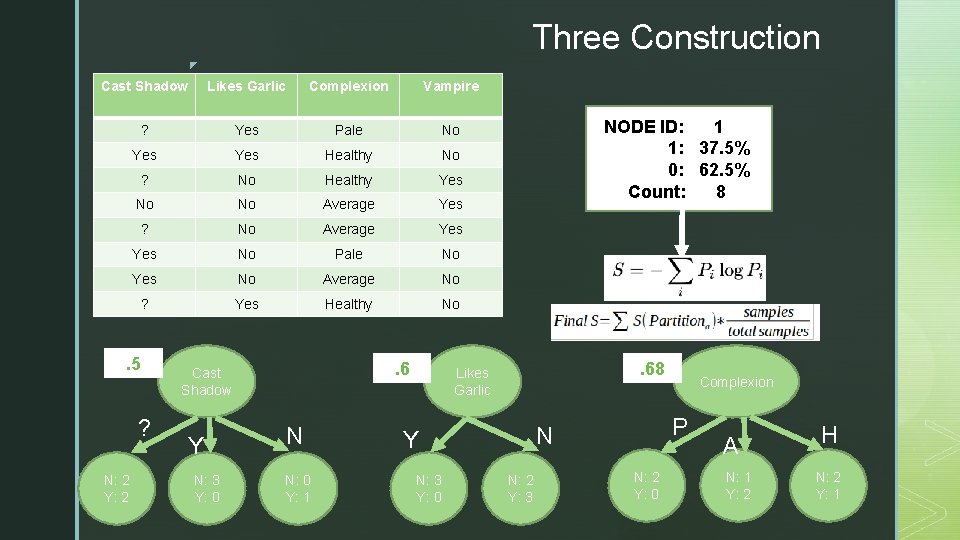

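◤ Tree Construction

| Cast Shadow | Likes Garlic | Complexion | Vampire |
| --- | --- | --- | --- |
| ? | Yes | Pale | No |
| Yes | Yes | Healthy | No |
| ? | No | Healthy | Yes |
| No | No | Average | Yes |
| ? | No | Average | Yes |
| Yes | No | Pale | No |
| Yes | No | Average | No |
| ? | Yes | Healthy | No |

Root node (Node ID 1: 1: 37.5%, 0: 62.5%, Count: 8). Candidate splits and their scores, where N and Y count the non-vampires and vampires in each branch:
▪ Cast Shadow (.5): ? → N: 2, Y: 2; Y → N: 3, Y: 0; N → N: 0, Y: 1
▪ Likes Garlic (.6): Y → N: 3, Y: 0; N → N: 2, Y: 3
▪ Complexion (.68): P → N: 2, Y: 0; A → N: 1, Y: 2; H → N: 2, Y: 1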
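The slide does not state how the scores .5, .6 and .68 are computed. Assuming they are the weighted average entropy of the child nodes (the deck's Extra slide concerns entropy), the sketch below reproduces them as 0.500, 0.607 and 0.689, which agree with the slide to the precision shown; lower is better, so Cast Shadow is selected. The `split_score` helper is hypothetical, not part of any library.

```python
import math
from collections import Counter

# Vampire table from the slide: (Cast Shadow, Likes Garlic, Complexion, Vampire).
COLUMNS = ("Cast Shadow", "Likes Garlic", "Complexion", "Vampire")
ROWS = [dict(zip(COLUMNS, values)) for values in [
    ("?", "Yes", "Pale", "No"),
    ("Yes", "Yes", "Healthy", "No"),
    ("?", "No", "Healthy", "Yes"),
    ("No", "No", "Average", "Yes"),
    ("?", "No", "Average", "Yes"),
    ("Yes", "No", "Pale", "No"),
    ("Yes", "No", "Average", "No"),
    ("?", "Yes", "Healthy", "No"),
]]

def entropy(labels):
    """Shannon entropy (base 2) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def split_score(rows, attr, target="Vampire"):
    """Assumed scoring rule: weighted average entropy of the children created by attr."""
    groups = {}
    for row in rows:
        groups.setdefault(row[attr], []).append(row[target])
    return sum(len(g) / len(rows) * entropy(g) for g in groups.values())

SCORES = {attr: split_score(ROWS, attr) for attr in COLUMNS[:3]}
for attr, score in SCORES.items():
    print(f"{attr}: {score:.3f}")
# Cast Shadow: 0.500
# Likes Garlic: 0.607
# Complexion: 0.689
print("best input:", min(SCORES, key=SCORES.get))  # best input: Cast Shadow

# Second level: the four cases with Cast Shadow = "?".
NODE2 = [row for row in ROWS if row["Cast Shadow"] == "?"]
print(f"{split_score(NODE2, 'Likes Garlic'):.1f}")  # 0.0
print(f"{split_score(NODE2, 'Complexion'):.1f}")    # 0.5
```

Applied to the four "?" cases, the same rule gives 0 for Likes Garlic and 0.5 for Complexion, matching the scores used to split Node ID 2.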

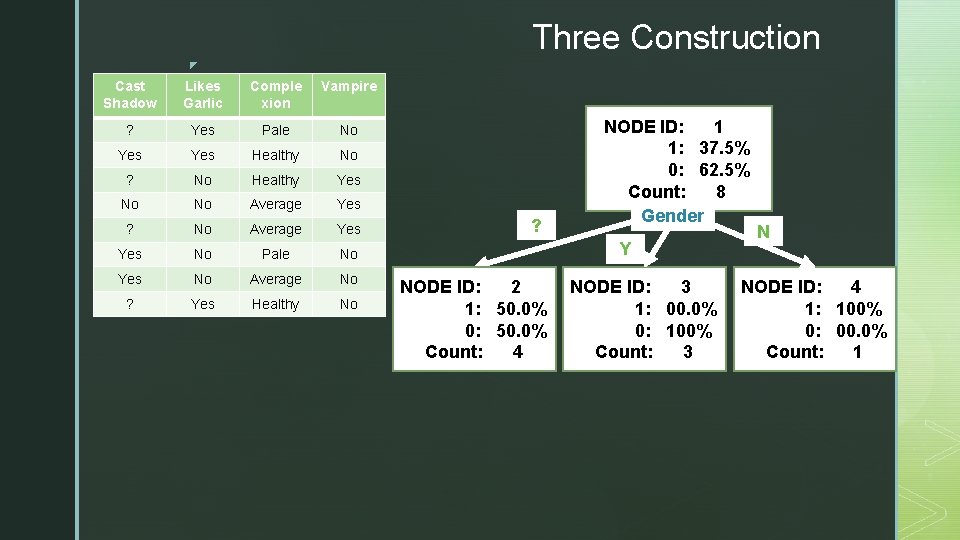

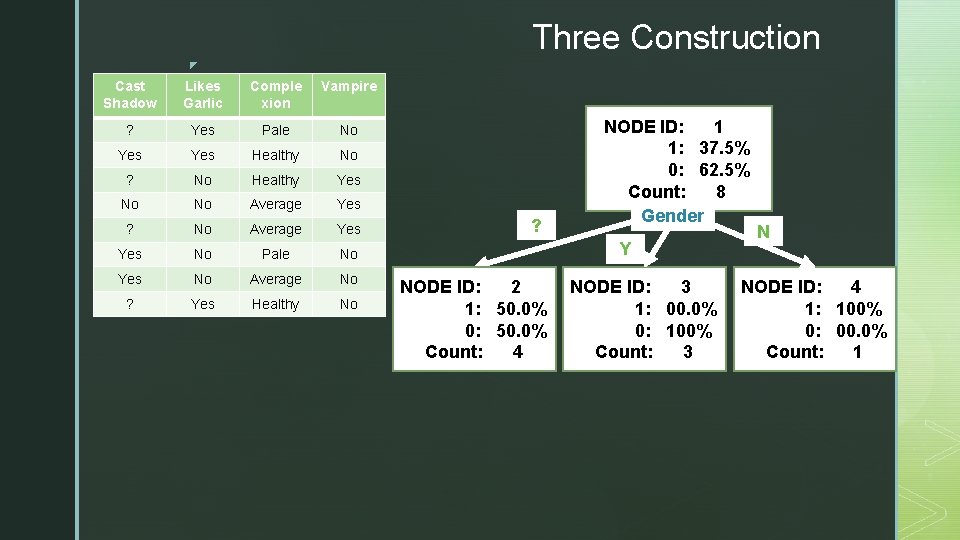

◤ Tree Construction
Cast Shadow has the lowest score, so it is used to split the root node (Node ID 1: 1: 37.5%, 0: 62.5%, Count: 8):
▪ ? → Node ID 2: 1: 50.0%, 0: 50.0%, Count: 4
▪ Y → Node ID 3: 1: 0.0%, 0: 100%, Count: 3
▪ N → Node ID 4: 1: 100%, 0: 0.0%, Count: 1

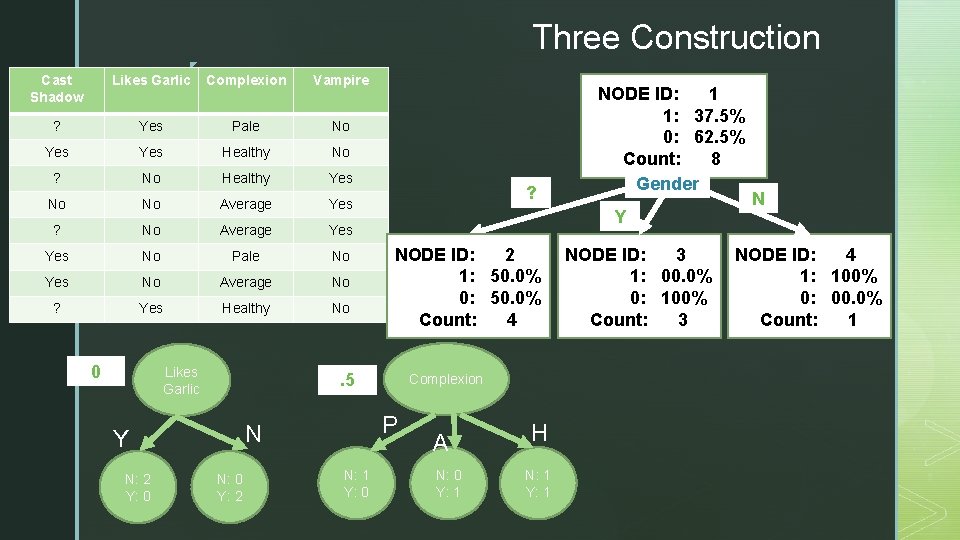

◤ Tree Construction
Node ID 2 (Cast Shadow = ?; 1: 50.0%, 0: 50.0%, Count: 4) is not pure, so the search is repeated on its four cases:
▪ Likes Garlic (0): Y → N: 2, Y: 0; N → N: 0, Y: 2
▪ Complexion (.5): P → N: 1, Y: 0; A → N: 0, Y: 1; H → N: 1, Y: 1
Node ID 3 (1: 0.0%, 0: 100%, Count: 3) and Node ID 4 (1: 100%, 0: 0.0%, Count: 1) are pure and are not split further.

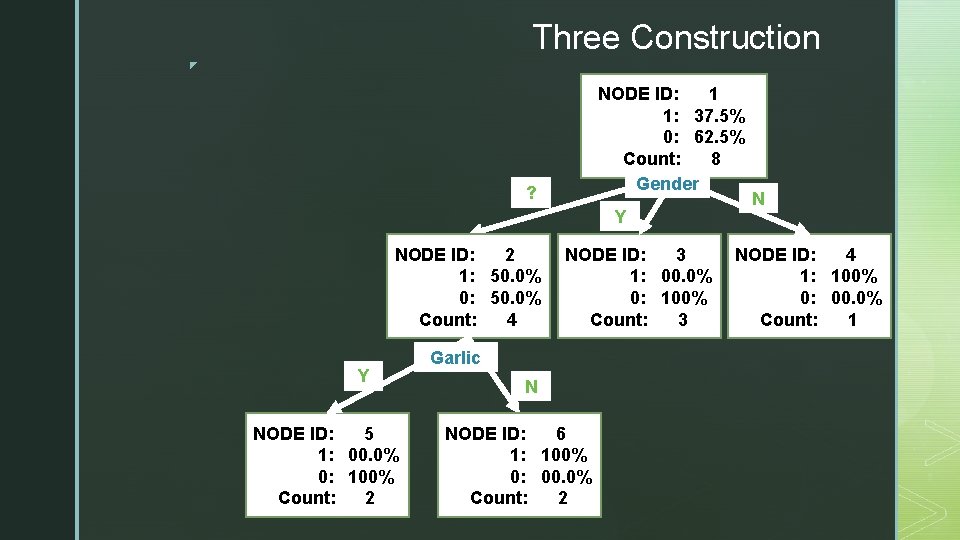

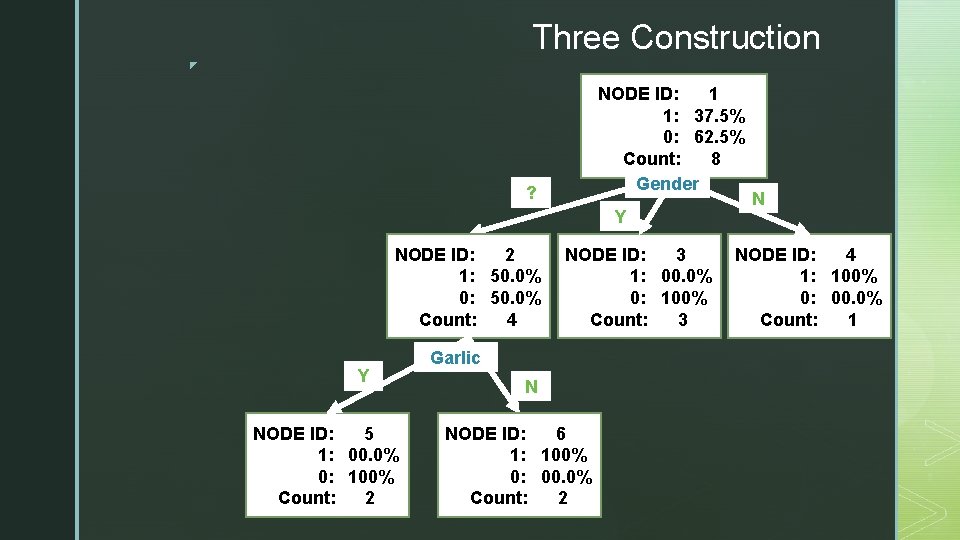

◤ Tree Construction
Final tree:
▪ Node ID 1 (1: 37.5%, 0: 62.5%, Count: 8), split on Cast Shadow
  ▪ ? → Node ID 2 (1: 50.0%, 0: 50.0%, Count: 4), split on Likes Garlic
    ▪ Y → Node ID 5 (1: 0.0%, 0: 100%, Count: 2)
    ▪ N → Node ID 6 (1: 100%, 0: 0.0%, Count: 2)
  ▪ Y → Node ID 3 (1: 0.0%, 0: 100%, Count: 3)
  ▪ N → Node ID 4 (1: 100%, 0: 0.0%, Count: 1)
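The final tree can be written as nested dictionaries and evaluated by walking from the root to a leaf. In this sketch the dictionary layout and the `classify` helper are illustrative choices, not taken from the slides; it shows that all eight training cases are classified correctly and that Complexion is never consulted. A value not seen during construction would raise a `KeyError` here.

```python
# Final tree: an internal node is {"split": input, "branches": {value: subtree}};
# a leaf is the predicted answer to "Vampire?".
TREE = {"split": "Cast Shadow", "branches": {
    "?": {"split": "Likes Garlic", "branches": {"Yes": "No",    # Node ID 5
                                                "No": "Yes"}},  # Node ID 6
    "Yes": "No",  # Node ID 3
    "No": "Yes",  # Node ID 4
}}

def classify(tree, case):
    """Follow the branch matching the case's value at each split until reaching a leaf."""
    while isinstance(tree, dict):
        tree = tree["branches"][case[tree["split"]]]
    return tree

COLUMNS = ("Cast Shadow", "Likes Garlic", "Complexion", "Vampire")
ROWS = [dict(zip(COLUMNS, values)) for values in [
    ("?", "Yes", "Pale", "No"),
    ("Yes", "Yes", "Healthy", "No"),
    ("?", "No", "Healthy", "Yes"),
    ("No", "No", "Average", "Yes"),
    ("?", "No", "Average", "Yes"),
    ("Yes", "No", "Pale", "No"),
    ("Yes", "No", "Average", "No"),
    ("?", "Yes", "Healthy", "No"),
]]

predictions = [classify(TREE, row) for row in ROWS]
accuracy = sum(p == row["Vampire"] for p, row in zip(predictions, ROWS)) / len(ROWS)
print(accuracy)  # 1.0

# A hypothetical new case: unknown shadow, dislikes garlic.
print(classify(TREE, {"Cast Shadow": "?", "Likes Garlic": "No", "Complexion": "Pale"}))  # Yes
```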

◤ Evaluation

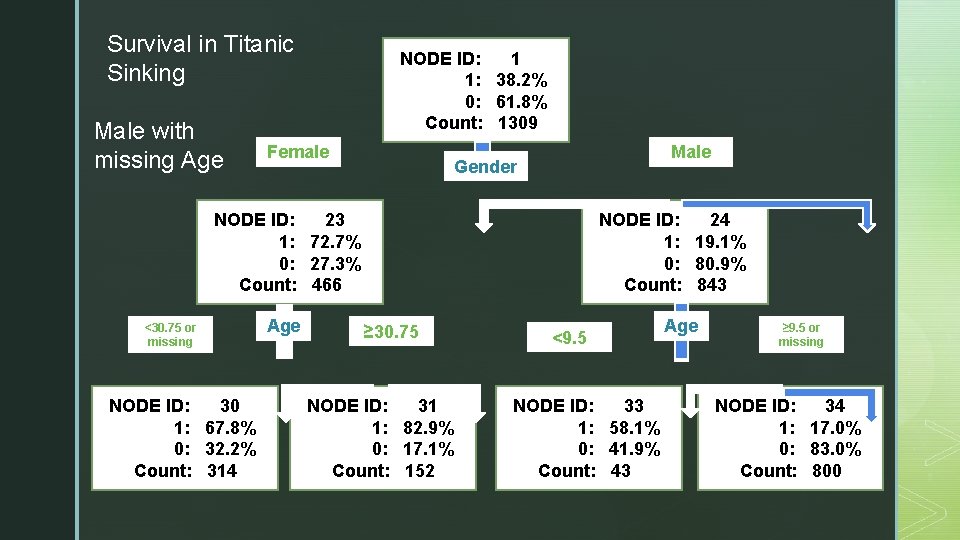

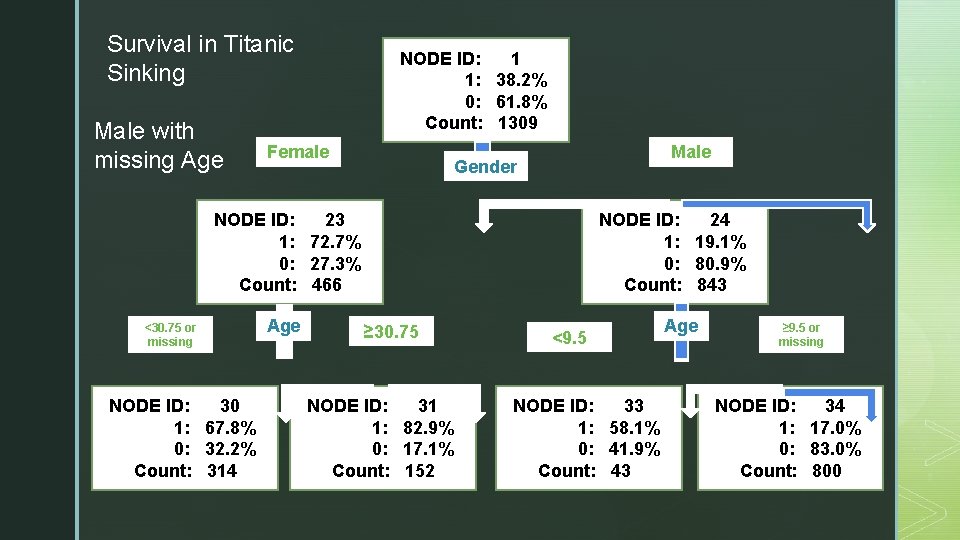

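Survival in Titanic Sinking (example: evaluating a male with missing Age)
▪ Node ID 1 (1: 38.2%, 0: 61.8%, Count: 1309), split on Gender
  ▪ Female → Node ID 23 (1: 72.7%, 0: 27.3%, Count: 466), split on Age
    ▪ < 30.75 or missing → Node ID 30 (1: 67.8%, 0: 32.2%, Count: 314)
    ▪ ≥ 30.75 → Node ID 31 (1: 82.9%, 0: 17.1%, Count: 152)
  ▪ Male → Node ID 24 (1: 19.1%, 0: 80.9%, Count: 843), split on Age
    ▪ < 9.5 → Node ID 33 (1: 58.1%, 0: 41.9%, Count: 43)
    ▪ ≥ 9.5 or missing → Node ID 34 (1: 17.0%, 0: 83.0%, Count: 800)
A male with missing Age follows Male and then "≥ 9.5 or missing", ending in Node ID 34, where 17.0% of the cases have target value 1 (survived).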
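Evaluating a case means following a single path from the root to a leaf. The sketch below is a hand-coded transcription of the Titanic tree; the function name `titanic_node` and the "female"/"male" encoding are hypothetical, while the cut-points, the routing of missing ages and the percentages are the ones on the slide.

```python
from typing import Optional, Tuple

def titanic_node(gender: str, age: Optional[float]) -> Tuple[int, float]:
    """Walk the Titanic tree; return (leaf node ID, share of that node's cases that survived)."""
    if gender == "female":                 # Node ID 23
        if age is None or age < 30.75:     # "< 30.75 or missing"
            return 30, 0.678
        return 31, 0.829                   # ">= 30.75"
    if age is not None and age < 9.5:      # Node ID 24 (male): "< 9.5"
        return 33, 0.581
    return 34, 0.170                       # ">= 9.5 or missing"

print(titanic_node("male", None))  # (34, 0.17)
```

Note that missing ages are not handled uniformly: they join the younger branch for females and the older branch for males, which is one of the per-node choices a tree is free to make.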

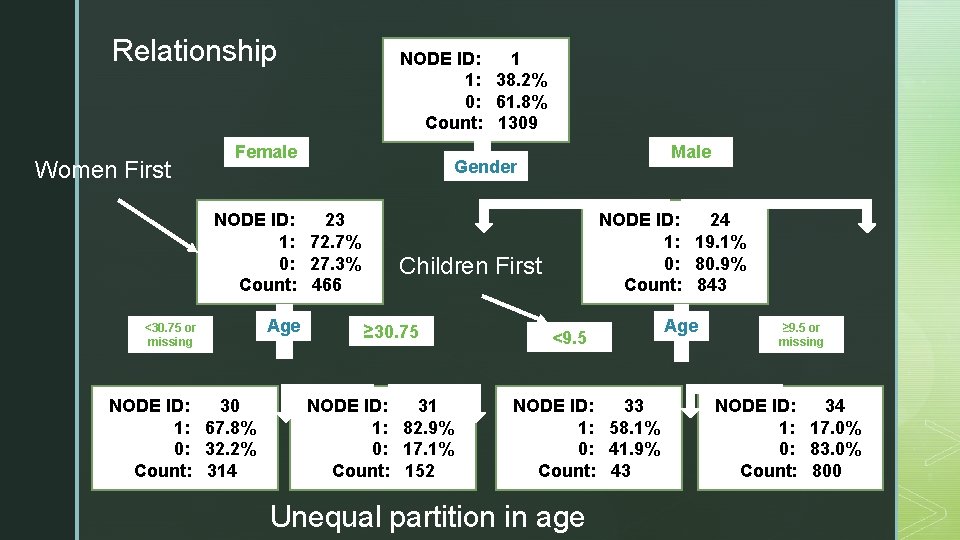

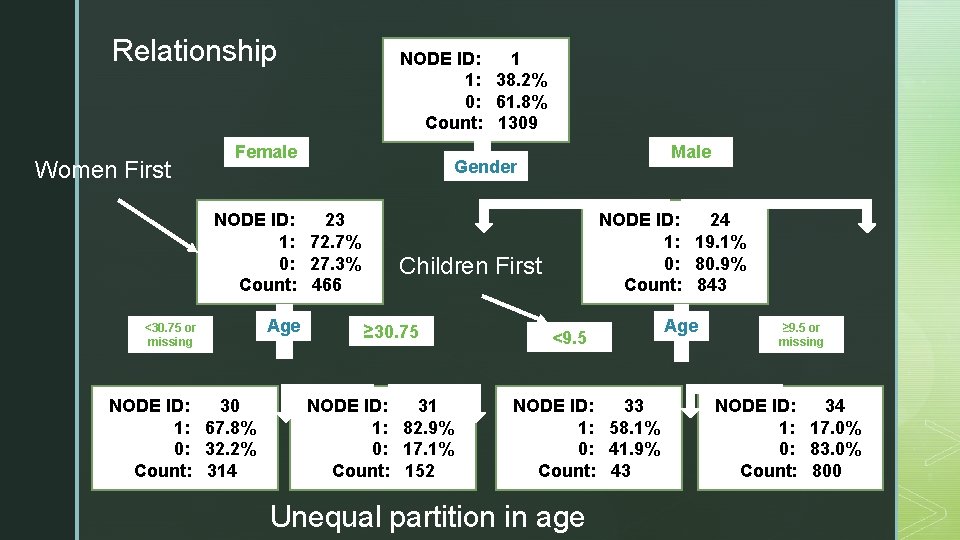

◤ Relationship
The same Titanic tree (Gender at the root, then Age within each gender), annotated with the relationships it reveals:
▪ Women first: 72.7% of females survived (Node ID 23, Count: 466) versus 19.1% of males (Node ID 24, Count: 843).
▪ Children first: among males, 58.1% of those younger than 9.5 survived (Node ID 33, Count: 43) versus 17.0% of those aged 9.5 or more or with missing Age (Node ID 34, Count: 800).
▪ Unequal partition in age: the Age cut-point is 30.75 for females (Node IDs 30 and 31) but 9.5 for males (Node IDs 33 and 34).

◤ Characteristics of Decision Trees
▪ Successive partitioning results in a tree-like visual display with a top node and descendant branches.
▪ Branch partitions may be two-way or multiway.
▪ Partitioning fields may be measured at the nominal, ordinal, or interval level.
▪ The final result can be a class (classification) or a number (regression).
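For an interval input, a two-way split needs a cut-point, while a nominal input can get one branch per category (a multiway split). One common convention, assumed here rather than taken from the slides, is to try the midpoints between consecutive distinct observed values as candidate cut-points. Both helper names are hypothetical and the ages are illustrative.

```python
def candidate_cut_points(values):
    """Midpoints between consecutive distinct non-missing values of an interval input."""
    distinct = sorted({v for v in values if v is not None})
    return [(lo + hi) / 2 for lo, hi in zip(distinct, distinct[1:])]

def multiway_branches(values):
    """One branch per category of a nominal input, listing the row indices in each branch."""
    branches = {}
    for i, v in enumerate(values):
        branches.setdefault(v, []).append(i)
    return branches

print(candidate_cut_points([31, 9, 30.5, None, 10, 9]))  # [9.5, 20.25, 30.75]
print(multiway_branches(["Pale", "Healthy", "Pale", "Average"]))
# {'Pale': [0, 2], 'Healthy': [1], 'Average': [3]}
```

Missing values are skipped when generating cut-points; where they go afterwards (with one side of the split or in their own partition) is a separate decision.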

◤ Characteristics of Decision Trees
▪ Missing values: can be grouped with other values or given their own partition.
▪ Symmetry: descendant nodes can be balanced and symmetrical, using the same set of predictors at each level of the subtree.
▪ Asymmetry: descendant nodes can be unbalanced, with each node's partition based on the most powerful predictor for that node.

◤ Advantages of Decision Trees
▪ Decision trees can detect and visually present contextual effects.
▪ They are easy to understand.
▪ The resulting model is a white box.
▪ Flexibility:
  ▪ Cut-points for the same input can be different in each node.
  ▪ Missing values are allowed.
  ▪ Numerical and nominal data can be used as input.
  ▪ Output can be nominal or numerical.

◤ Disadvantages of Decision Trees
▪ Deep trees tend to overfit the training data.
▪ Poor generalization to new data.

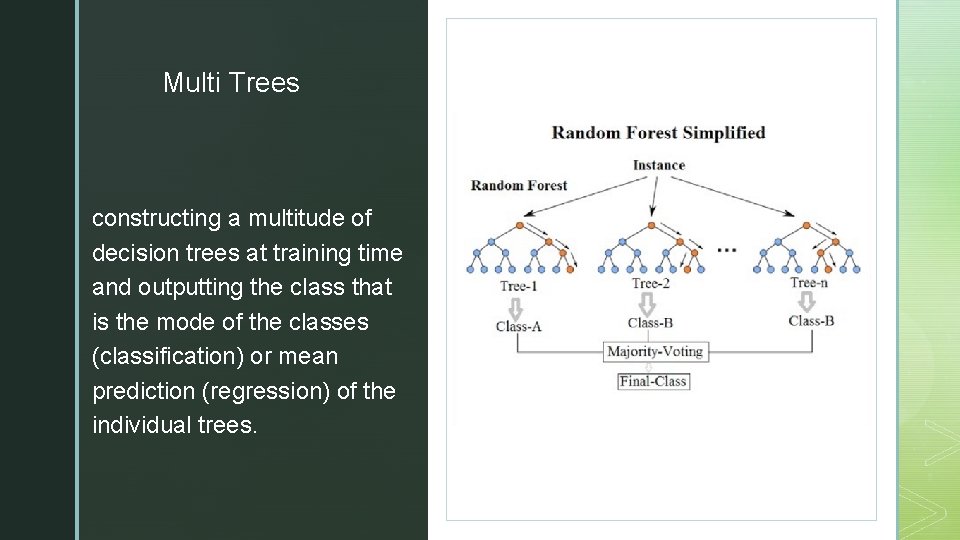

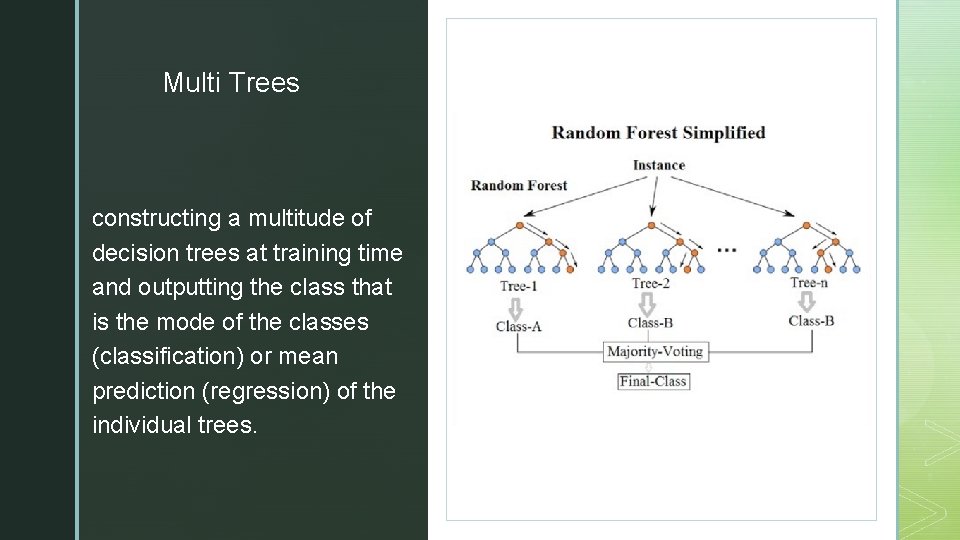

◤ Multi Trees
▪ Construct a multitude of decision trees at training time and output the class that is the mode of the individual trees' classes (classification) or the mean of their predictions (regression).

◤ Creation Method
The idea is to create multiple trees that are as uncorrelated as possible:
▪ Select a random subset of the training set.
▪ Create a tree Ti from this random subset.
▪ When creating the partitions of this tree, use only a random subset of the inputs to search for the best input.
▪ Repeat these steps for each tree Ti.
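A minimal sketch of this creation method, assuming categorical inputs and the same entropy-based split score as in the single-tree case. The scheme is essentially what is widely known as a random forest; random forests as usually described draw a bootstrap sample (with replacement), whereas this sketch follows the slide literally and draws a random subset without replacement. `n_trees`, `sample_frac`, `n_try` and the `"default"` fallback key are hypothetical choices.

```python
import math
import random
from collections import Counter

def entropy(labels):
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def split_score(rows, attr, target):
    """Weighted average entropy of the children created by splitting on attr."""
    groups = {}
    for row in rows:
        groups.setdefault(row[attr], []).append(row[target])
    return sum(len(g) / len(rows) * entropy(g) for g in groups.values())

def build_random_tree(rows, inputs, target, n_try, rng, min_cases=2):
    labels = [row[target] for row in rows]
    majority = Counter(labels).most_common(1)[0][0]
    if len(set(labels)) == 1 or not inputs or len(rows) < min_cases:
        return majority
    # Only a random subset of n_try inputs is searched for the best split at this node.
    candidates = rng.sample(inputs, min(n_try, len(inputs)))
    best = min(candidates, key=lambda attr: split_score(rows, attr, target))
    remaining = [attr for attr in inputs if attr != best]
    branches = {value: build_random_tree([r for r in rows if r[best] == value],
                                         remaining, target, n_try, rng, min_cases)
                for value in sorted({row[best] for row in rows})}
    # "default" holds the node's majority class for values this tree never saw.
    return {"split": best, "default": majority, "branches": branches}

def build_forest(rows, inputs, target, n_trees=10, sample_frac=0.75, n_try=1, seed=0):
    rng = random.Random(seed)
    k = max(1, int(sample_frac * len(rows)))
    # Each tree Ti is grown from its own random subset of the training cases.
    return [build_random_tree(rng.sample(rows, k), list(inputs), target, n_try, rng)
            for _ in range(n_trees)]

def predict(tree, case):
    """Walk one tree, falling back to the node's majority class for unseen values."""
    while isinstance(tree, dict):
        tree = tree["branches"].get(case[tree["split"]], tree["default"])
    return tree

COLUMNS = ("Cast Shadow", "Likes Garlic", "Complexion", "Vampire")
ROWS = [dict(zip(COLUMNS, values)) for values in [
    ("?", "Yes", "Pale", "No"), ("Yes", "Yes", "Healthy", "No"),
    ("?", "No", "Healthy", "Yes"), ("No", "No", "Average", "Yes"),
    ("?", "No", "Average", "Yes"), ("Yes", "No", "Pale", "No"),
    ("Yes", "No", "Average", "No"), ("?", "Yes", "Healthy", "No"),
]]

FOREST = build_forest(ROWS, COLUMNS[:3], "Vampire")
print(len(FOREST))  # 10
```

With only eight cases the individual trees are tiny; the point of the sketch is where the two sources of randomness enter, not the quality of the resulting model.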

◤ Evaluation
▪ If the output is a class (classification):
  ▪ Evaluate the sample in all the trees.
  ▪ Each tree votes for one class.
  ▪ The selected class is the one with the most votes.
▪ If the output is a number (regression):
  ▪ Evaluate the sample in all the trees.
  ▪ The final result is the average of the individual results.
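The two evaluation rules above reduce to a vote and an average over the individual trees. In this sketch each tree is represented, as an assumption, by any function from a sample to a prediction; the stand-in trees are hypothetical.

```python
from collections import Counter
from statistics import mean

def forest_classify(trees, sample):
    """Classification: each tree votes for one class and the most voted class is selected."""
    votes = Counter(tree(sample) for tree in trees)
    return votes.most_common(1)[0][0]

def forest_regress(trees, sample):
    """Regression: the final result is the average of the individual trees' results."""
    return mean(tree(sample) for tree in trees)

# Hypothetical stand-in trees, written as functions from a sample to a prediction.
classifiers = [lambda s: "Yes", lambda s: "No", lambda s: "Yes"]
regressors = [lambda s: 2.0, lambda s: 3.0, lambda s: 4.0]
print(forest_classify(classifiers, {}))  # Yes
print(forest_regress(regressors, {}))    # 3.0
```

With `Counter.most_common`, a tie between classes goes to the class that received its first vote earliest; a real implementation may want a more deliberate tie-breaking rule.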

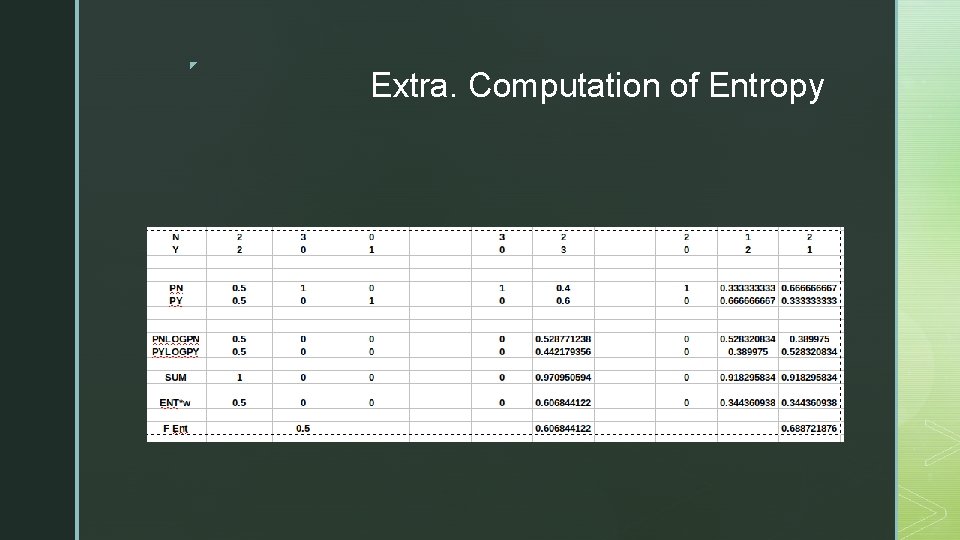

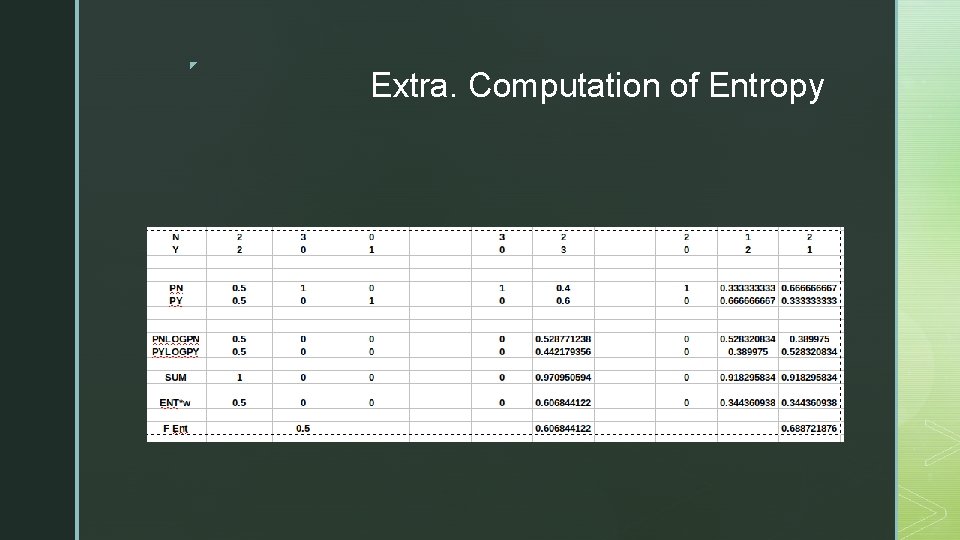

◤ Extra. Computation of Entropy
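A standard way to write the entropy of a node S whose cases fall into c classes with proportions p_1 to p_c, and the weighted entropy of the children produced by splitting S on an input A, is given below (base-2 logarithms assumed). The worked values use the vampire table; with these definitions the root-level scores come out as 0.5, about 0.607 and about 0.689, in line with the .5, .6 and .68 on the Tree Construction slide.

```latex
H(S) = -\sum_{i=1}^{c} p_i \log_2 p_i
\qquad
H(S \mid A) = \sum_{v \in \mathrm{values}(A)} \frac{|S_v|}{|S|}\, H(S_v)
\qquad
\mathrm{Gain}(S, A) = H(S) - H(S \mid A)

% Root: 3 vampires and 5 non-vampires out of 8 cases
H(S) = -\tfrac{3}{8}\log_2\tfrac{3}{8} - \tfrac{5}{8}\log_2\tfrac{5}{8} \approx 0.954

% Cast Shadow: ? has 2 vampires out of 4, Yes has 0 out of 3, No has 1 out of 1
H(S \mid \text{Cast Shadow}) = \tfrac{4}{8}(1) + \tfrac{3}{8}(0) + \tfrac{1}{8}(0) = 0.5

% Likes Garlic: Yes has 0 vampires out of 3, No has 3 out of 5
H(S \mid \text{Likes Garlic}) = \tfrac{3}{8}(0) + \tfrac{5}{8}\left(-\tfrac{3}{5}\log_2\tfrac{3}{5} - \tfrac{2}{5}\log_2\tfrac{2}{5}\right) \approx 0.607

% Complexion: Pale has 0 vampires out of 2, Average 2 out of 3, Healthy 1 out of 3
H(S \mid \text{Complexion}) = \tfrac{2}{8}(0) + \tfrac{3}{8}(0.918) + \tfrac{3}{8}(0.918) \approx 0.689
```

Because H(S) is the same for every candidate input at a given node, choosing the input with the lowest H(S | A) is equivalent to choosing the one with the highest information gain.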