Decision Making Based on Cohort Scores for Speaker Verification

Decision Making Based on Cohort Scores for Speaker Verification
Lantian Li
CSLT / RIIT, Tsinghua University
lilt13@mails.tsinghua.edu.cn
Joint work with Renyu Wang, Caixia Wang and Thomas Fang Zheng
IEEE APSIPA ASC, Dec. 13-16, 2016
3/3/2021 APSIPA ASC 2016 1

Outline
• Introduction
  • A single-score decision making
  • Solution by a multi-score decision making
• Cohort-based decision making framework
  • Cohort selection
  • Feature design
  • Discriminative model training
• Experiments
• Conclusions

Introduction
• Speaker recognition
• Decision making
  • The single-score decision is simple and efficient
  • Quite sensitive to variations (score variation)
    • Text contents, channel, speaking styles
  • Difficulty in choosing an appropriate threshold
  • Error-prone decision

Introduction
• Score normalization techniques
  • Bayes’ theorem (Z-norm, T-norm, etc.)
• Cohort normalization
  • A cohort replaces the UBM
  • It models the alternative hypothesis more accurately
  • The cohort scores are still simply averaged to normalize the target score
• Still a single-score approach
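The two normalizations named above can be sketched in a few lines. This is a minimal illustration, not the authors' code: the function names, the use of the population standard deviation, and the assumption that scores are log-likelihoods are all choices made here for the example.

```python
from statistics import mean, pstdev


def t_norm(target_score, cohort_scores):
    """T-norm style: standardise the target score with the cohort mean and std.

    Assumption: cohort_scores are the scores of the same test utterance
    against a set of impostor (cohort) models.
    """
    mu = mean(cohort_scores)
    sigma = pstdev(cohort_scores)
    # Fall back to mean subtraction if the cohort scores are all identical.
    return (target_score - mu) / sigma if sigma > 0 else target_score - mu


def cohort_norm(target_score, cohort_scores):
    """Cohort normalization: subtract the plain average of the cohort scores."""
    return target_score - mean(cohort_scores)


def decide(score, threshold):
    """Single-score decision: accept the claim if the score reaches the threshold."""
    return score >= threshold
```

Both functions collapse the whole cohort into one or two statistics, so the final decision still rests on a single number compared with a threshold.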

Motivations
• Our idea
  • Cohort scores offer more than a simple average
  • Cohort scores carry richer information: distributions, ranks, spanning areas, etc.
• A new cohort approach
  • Decision on the whole cohort set
  • Employ a powerful discriminative model
  • A true and reliable multi-score decision making

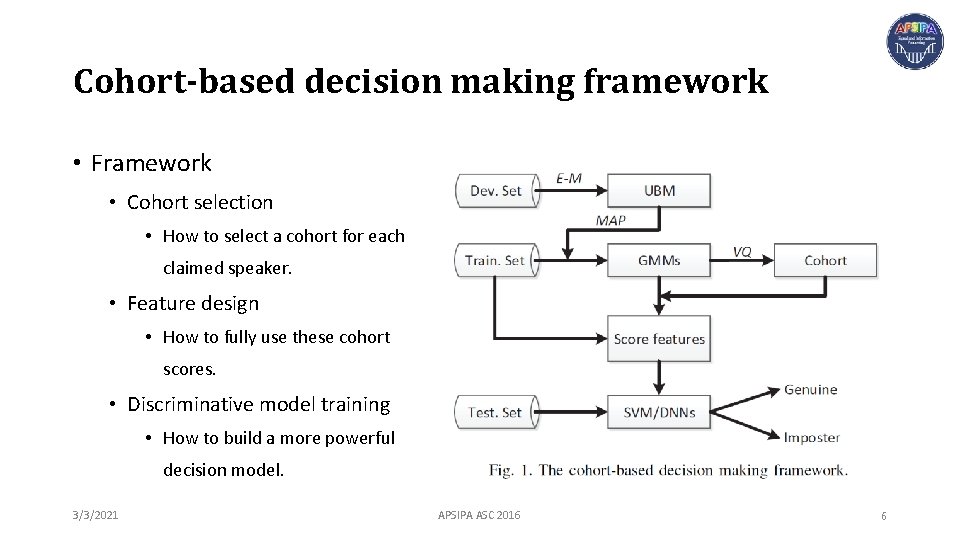

Cohort-based decision making framework
• Framework
• Cohort selection
  • How to select a cohort for each claimed speaker
• Feature design
  • How to fully use these cohort scores
• Discriminative model training
  • How to build a more powerful decision model

Cohort-based decision making framework
• Cohort selection
  • Vector quantization (VQ)
  • K-L distance
  • Minimize the within-class cost
  • Stopping criterion
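The clustering step above can be sketched as a k-means-style VQ loop. The slide does not give the speaker model, the centroid update, or the exact stopping rule, so everything specific here is an assumption: each speaker is represented by one diagonal Gaussian `(means, variances)`, the distance is the symmetric K-L divergence between such Gaussians, centroids are component-wise averages, and the loop stops when the within-class cost no longer drops by more than a relative tolerance `tol`.

```python
import random


def sym_kl(p, q):
    """Symmetric K-L distance between two diagonal Gaussians (means, variances)."""
    (mp, vp), (mq, vq) = p, q
    d = 0.0
    for a, s, b, t in zip(mp, vp, mq, vq):
        diff2 = (a - b) ** 2
        # KL(p||q) + KL(q||p); the log-variance terms cancel.
        d += 0.5 * ((s + diff2) / t + (t + diff2) / s - 2.0)
    return d


def centroid(members):
    """Component-wise average of member means and variances (a simplification)."""
    n, dim = len(members), len(members[0][0])
    avg_mean = [sum(m[0][i] for m in members) / n for i in range(dim)]
    avg_var = [sum(m[1][i] for m in members) / n for i in range(dim)]
    return (avg_mean, avg_var)


def vq_cluster(speakers, k, tol=1e-4, max_iter=50, seed=0):
    """Cluster speaker models; return (labels, within-class cost)."""
    rng = random.Random(seed)
    centroids = rng.sample(speakers, k)
    prev_cost = float("inf")
    labels, cost = [], float("inf")
    for _ in range(max_iter):
        # Assignment step: each speaker goes to the nearest centroid.
        labels = [min(range(k), key=lambda c: sym_kl(s, centroids[c]))
                  for s in speakers]
        cost = sum(sym_kl(s, centroids[l]) for s, l in zip(speakers, labels))
        # Stopping criterion: the cost no longer decreases noticeably.
        if prev_cost - cost <= tol * max(cost, 1e-12):
            break
        prev_cost = cost
        # Update step: recompute the centroid of every non-empty cluster.
        for c in range(k):
            members = [s for s, l in zip(speakers, labels) if l == c]
            if members:
                centroids[c] = centroid(members)
    return labels, cost
```

With the labels in hand, one plausible cohort for a claimed speaker is the set of other speakers that share its cluster label.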

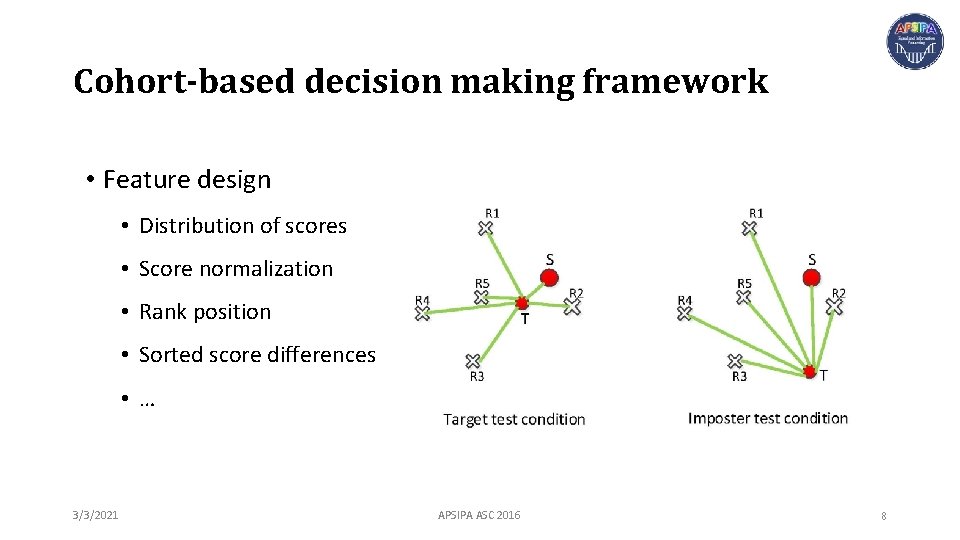

Cohort-based decision making framework
• Feature design
  • Distribution of scores
  • Score normalization
  • Rank position
  • Sorted score differences
  • …
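Two of the listed features are easy to illustrate. The slide only names them, so the definitions below are assumptions made for the example: the rank position is the 1-based rank of the target score among the cohort scores, and the sorted score differences are the target score minus each cohort score, with the cohort sorted from highest to lowest.

```python
def rank_position(target_score, cohort_scores):
    """1-based rank of the target score among the cohort scores (1 = highest)."""
    return 1 + sum(1 for s in cohort_scores if s > target_score)


def sorted_score_differences(target_score, cohort_scores):
    """Target score minus each cohort score, cohort sorted in descending order."""
    ranked = sorted(cohort_scores, reverse=True)
    return [target_score - s for s in ranked]
```

For a target score of 2.0 and cohort scores 1.0, 3.0 and 0.5, the rank position is 2 and the sorted score differences are [-1.0, 1.0, 1.5]. Unlike a normalized score, the difference vector keeps one value per cohort member, which is what makes a multi-score decision possible.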

Experiments
• Database (‘CSLT-DSDB’: all recordings are text-prompted digit strings)
  • Training set:
    • 200 females and 200 males for UBM training
  • Development set:
    • 145 speakers including 280 enrollment and 2,874 test utterances
    • Cohort selection and feature design
  • Evaluation set:
    • 92 speakers including 1,220 target trials and 111,020 non-target trials
• Experimental setups
  • 13-dim MFCCs + Δ + ΔΔ
  • 256 Gaussian components

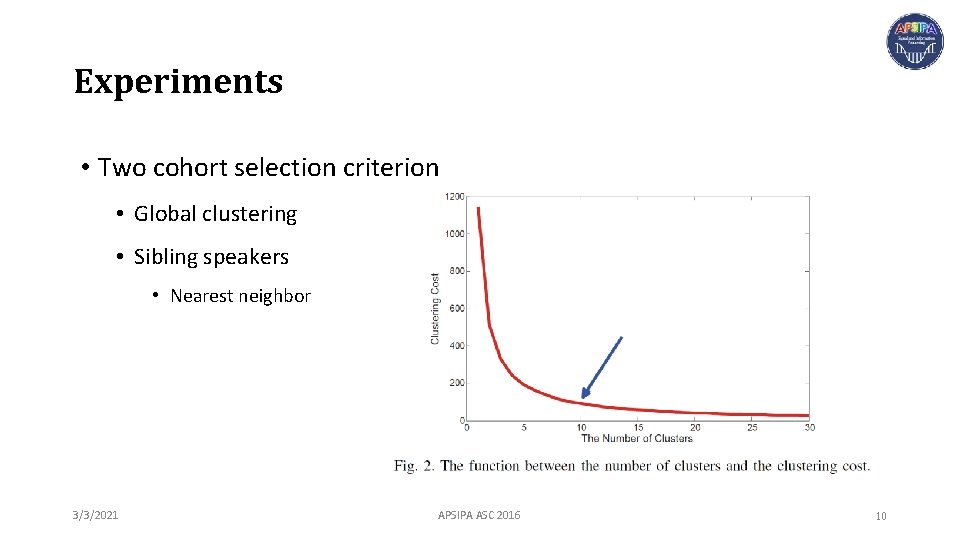

Experiments
• Two cohort selection criteria
• Global clustering
• Sibling speakers
• Nearest neighbor

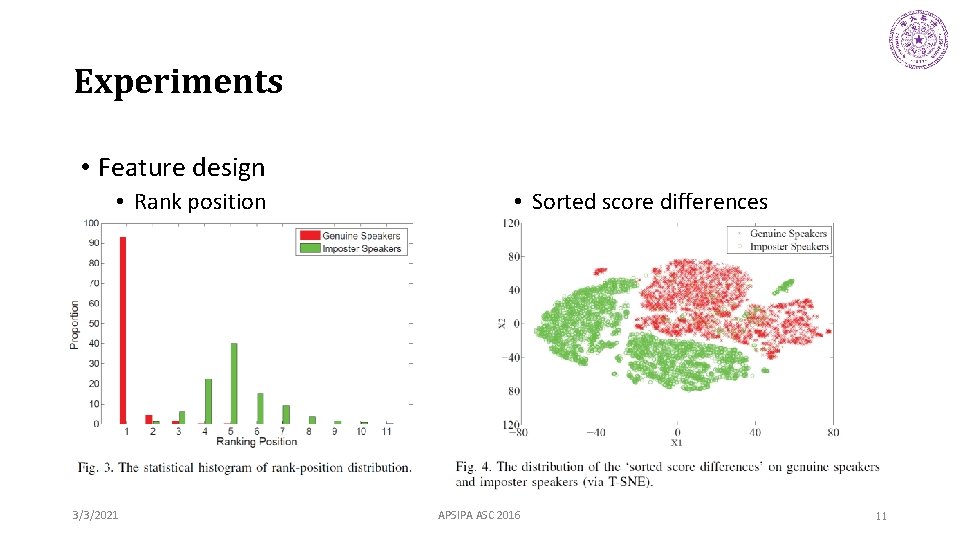

Experiments
• Feature design
  • Rank position
  • Sorted score differences

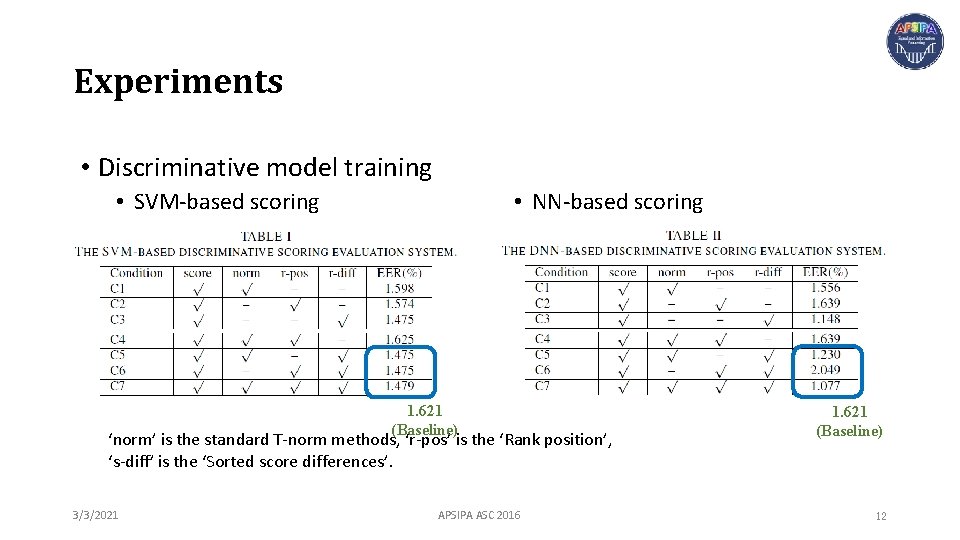

Experiments
• Discriminative model training
  • SVM-based scoring
  • NN-based scoring
• 1.621 (Baseline)
• ‘norm’ is the standard T-norm method, ‘r-pos’ is the ‘Rank position’, ‘s-diff’ is the ‘Sorted score differences’.
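To make the SVM-based scoring step concrete, here is a toy linear SVM trained by batch subgradient descent on the regularised hinge loss. The slides do not state the kernel, toolkit or hyperparameters used, so this is only a sketch under assumptions: the names `train_linear_svm`, `svm_score` and `accept`, and the values of `lam`, `lr` and `epochs`, are illustrative. Each row of `X` stands for one trial's cohort feature vector (for example its sorted score differences), and `y` is +1 for a target trial and -1 for a non-target trial.

```python
def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=200):
    """Minimise lam/2 * ||w||^2 + mean hinge loss by batch subgradient descent."""
    n, dim = len(X), len(X[0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w = [lam * wj for wj in w]  # gradient of the L2 regulariser
        grad_b = 0.0
        for xi, yi in zip(X, y):
            margin = yi * (sum(wj * xj for wj, xj in zip(w, xi)) + b)
            if margin < 1.0:  # only margin violators contribute to the hinge term
                for j in range(dim):
                    grad_w[j] -= yi * xi[j] / n
                grad_b -= yi / n
        w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def svm_score(w, b, x):
    """Signed distance-like score of a feature vector under the trained model."""
    return sum(wj * xj for wj, xj in zip(w, x)) + b


def accept(w, b, x):
    """Accept the claimed identity when the SVM score is non-negative."""
    return svm_score(w, b, x) >= 0.0
```

The model would be trained on feature vectors from development trials and then applied to evaluation trials, replacing the fixed threshold on a single normalized score with a learned decision over the whole cohort feature vector.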

Conclusions
• Decision making method
  • Distribution of cohort scores
  • Design score-level features (‘sorted score differences’)
  • Powerful discriminative models
  • Stable and better performance than the GMM-UBM baseline
• Future work
  • Feature design and cohort selection

Thank you
lilt.cslt.org
IEEE APSIPA ASC, Dec. 13-16, 2016, Jeju, Korea
