Deciding Under Probabilistic Uncertainty

Russell and Norvig: Sect. 17.1-3, Chap. 17

CS 121 – Winter 2003

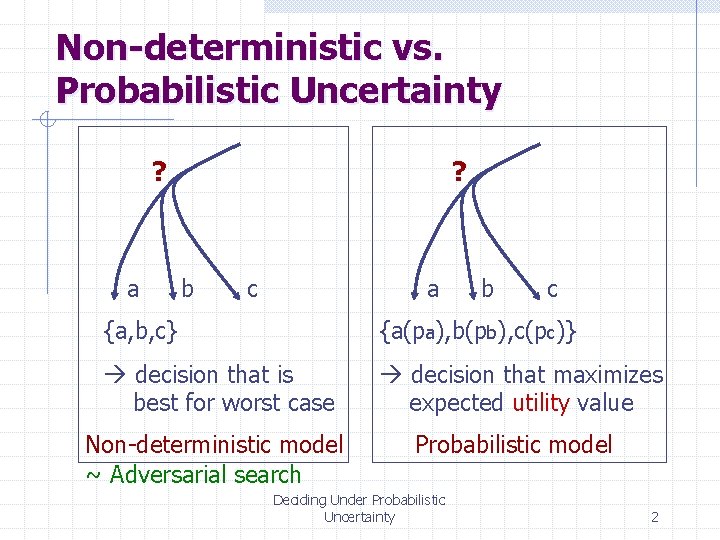

Non-deterministic vs. Probabilistic Uncertainty

- Non-deterministic model: an action may lead to any outcome in a set $\{a, b, c\}$. Choose the decision that is best for the worst case (~ adversarial search).
- Probabilistic model: each outcome has a probability, $\{a(p_a), b(p_b), c(p_c)\}$. Choose the decision that maximizes the expected utility value.

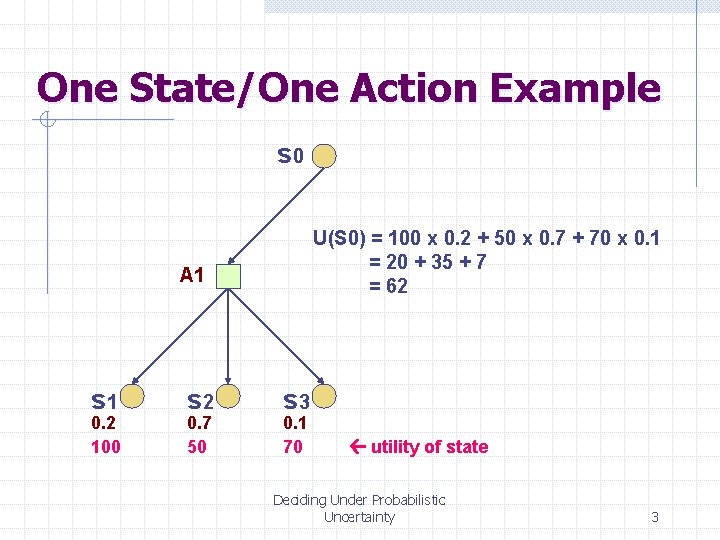

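One State/One Action Example

From state $s_0$, action $A_1$ leads to one of three states:

- $s_1$ with probability 0.2 (utility of state: 100)
- $s_2$ with probability 0.7 (utility of state: 50)
- $s_3$ with probability 0.1 (utility of state: 70)

$U(s_0) = 100 \times 0.2 + 50 \times 0.7 + 70 \times 0.1 = 20 + 35 + 7 = 62$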
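The computation above is a probability-weighted sum. A minimal sketch in Python, where the names `outcomes_a1` and `expected_utility` are illustrative and not from the slides:

```python
# Outcomes of action A1 from s0, as (probability, utility of resulting state).
outcomes_a1 = [(0.2, 100), (0.7, 50), (0.1, 70)]

def expected_utility(outcomes):
    """Return the probability-weighted sum of the outcome utilities."""
    total_p = sum(p for p, _ in outcomes)
    assert abs(total_p - 1.0) < 1e-9, "probabilities must sum to 1"
    return sum(p * u for p, u in outcomes)

print(expected_utility(outcomes_a1))  # approximately 62, up to floating-point rounding
```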

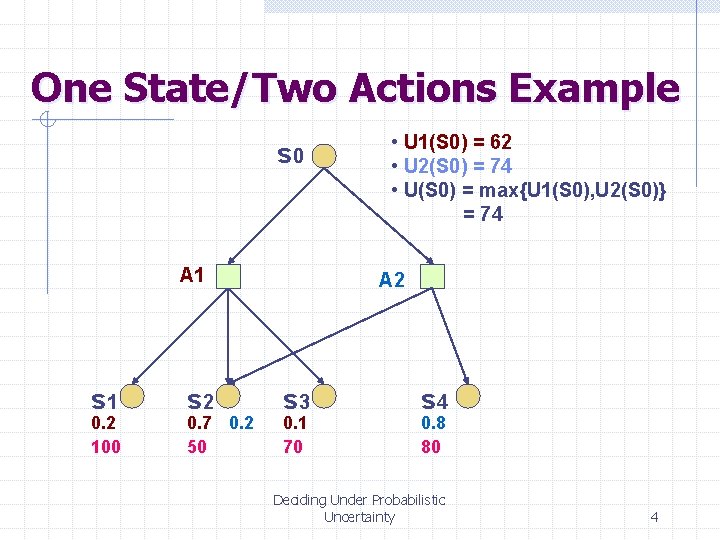

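One State/Two Actions Example

From state $s_0$:

- $A_1$ leads to $s_1$ (probability 0.2, utility 100), $s_2$ (probability 0.7, utility 50), or $s_3$ (probability 0.1, utility 70)
- $A_2$ leads to $s_2$ (probability 0.2, utility 50) or $s_4$ (probability 0.8, utility 80)

Utilities:

- $U_1(s_0) = 62$
- $U_2(s_0) = 0.2 \times 50 + 0.8 \times 80 = 74$
- $U(s_0) = \max\{U_1(s_0), U_2(s_0)\} = 74$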

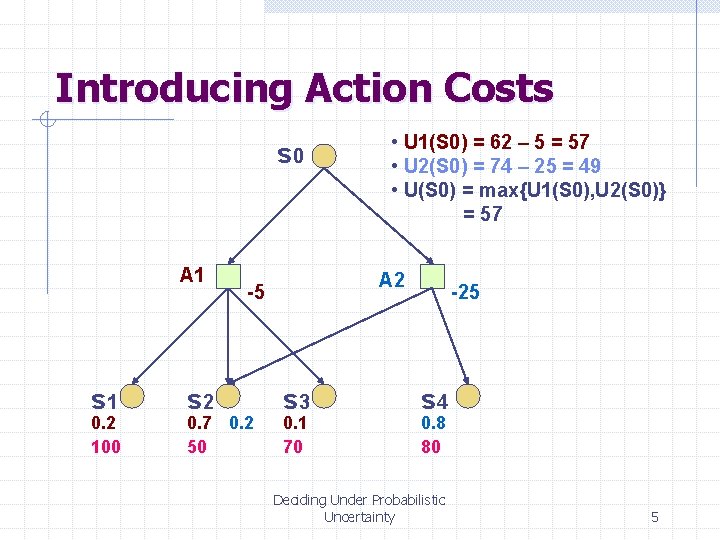

Introducing Action Costs

Same states and probabilities as before, but $A_1$ now costs 5 and $A_2$ costs 25:

- $U_1(s_0) = 62 - 5 = 57$
- $U_2(s_0) = 74 - 25 = 49$
- $U(s_0) = \max\{U_1(s_0), U_2(s_0)\} = 57$
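The choice between the two actions can be sketched as follows. This is only an illustration of "expected utility minus action cost, then take the max"; the dictionary layout and the names `actions` and `action_value` are assumptions made for the example.

```python
# Each action: its cost and its outcomes as (probability, utility of resulting state).
actions = {
    "A1": {"cost": 5, "outcomes": [(0.2, 100), (0.7, 50), (0.1, 70)]},
    "A2": {"cost": 25, "outcomes": [(0.2, 50), (0.8, 80)]},
}

def action_value(action):
    """Expected utility of the outcomes minus the cost of executing the action."""
    expected = sum(p * u for p, u in action["outcomes"])
    return expected - action["cost"]

values = {name: action_value(a) for name, a in actions.items()}
best = max(values, key=values.get)   # action with the highest value
print(values, best)
```

With the costs removed (both set to 0) the same code selects A2, which matches the previous slide.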

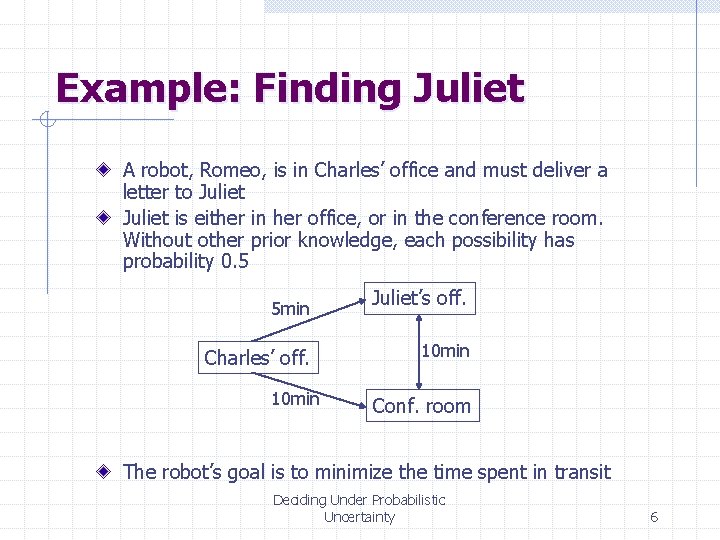

Example: Finding Juliet

A robot, Romeo, is in Charles’ office and must deliver a letter to Juliet. Juliet is either in her office or in the conference room. Without other prior knowledge, each possibility has probability 0.5.

The robot’s goal is to minimize the time spent in transit.

(Map: Charles’ office, Juliet’s office, and the conference room, connected by legs of 5 min, 10 min, and 10 min.)

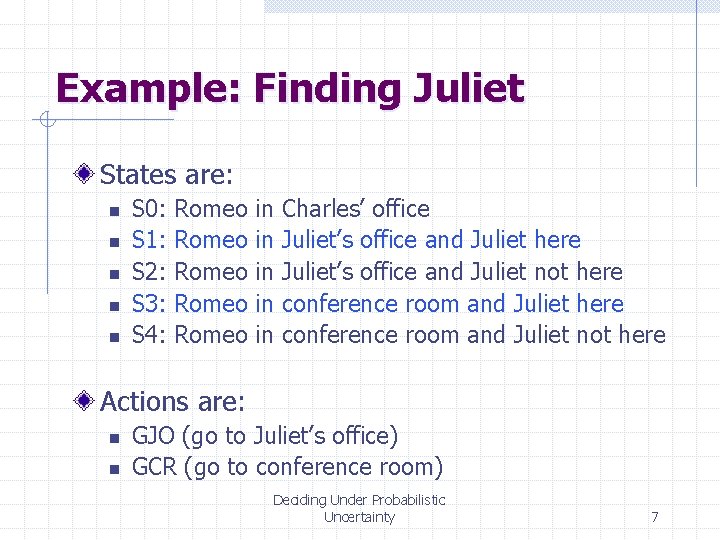

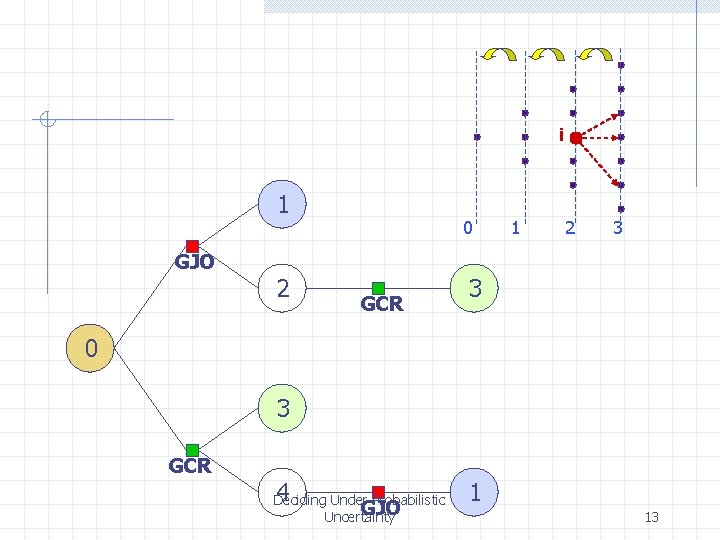

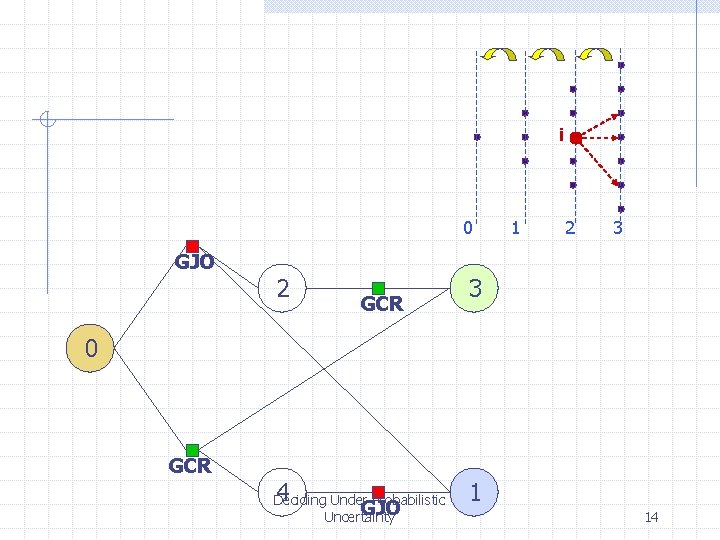

Example: Finding Juliet

States are:

- S0: Romeo in Charles’ office
- S1: Romeo in Juliet’s office and Juliet here
- S2: Romeo in Juliet’s office and Juliet not here
- S3: Romeo in conference room and Juliet here
- S4: Romeo in conference room and Juliet not here

Actions are:

- GJO (go to Juliet’s office)
- GCR (go to conference room)

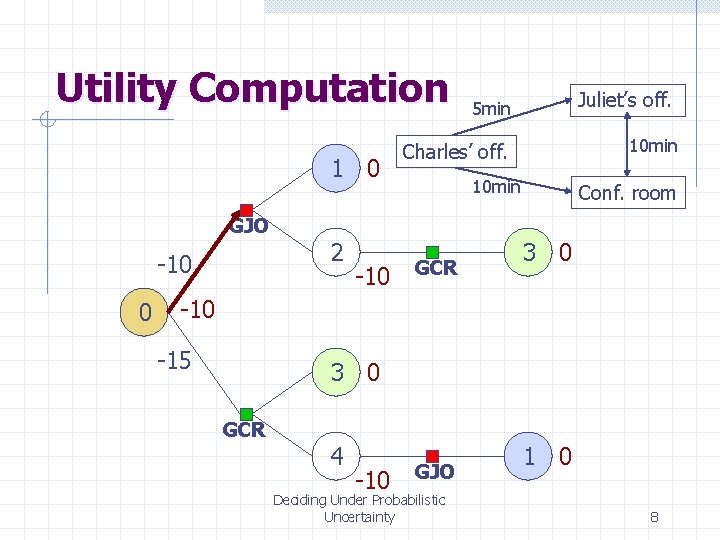

Utility Computation

(Figure: decision tree for the Finding Juliet example over states 0 to 4 with actions GJO and GCR, drawn next to the map with its 5 min, 10 min, and 10 min legs. The tree is annotated with the values 0, -10, and -15.)

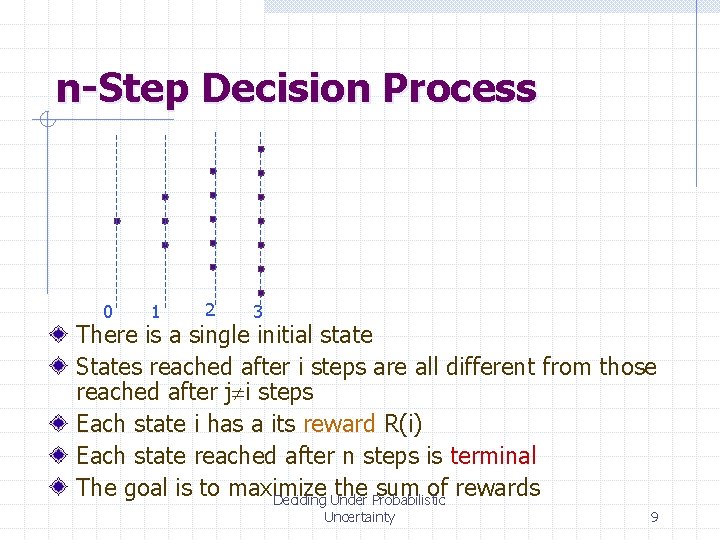

n-Step Decision Process

- There is a single initial state
- States reached after i steps are all different from those reached after j ≠ i steps
- Each state i has a reward R(i)
- Each state reached after n steps is terminal
- The goal is to maximize the sum of rewards

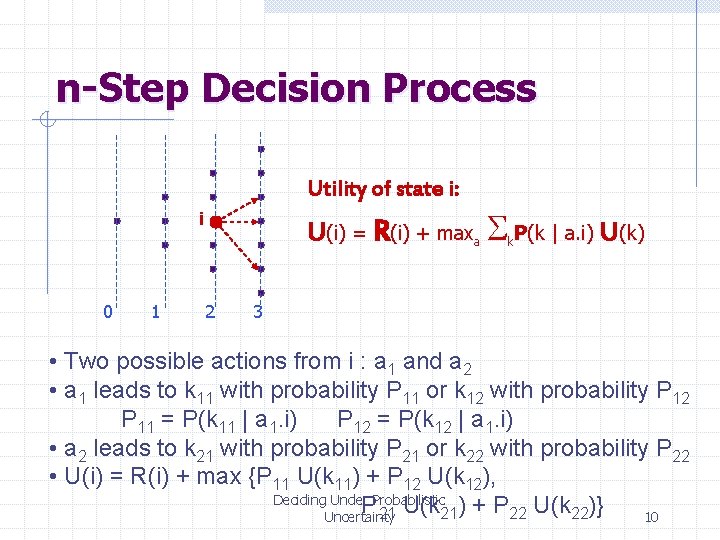

n-Step Decision Process

Utility of state i:

$$U(i) = R(i) + \max_a \sum_k P(k \mid a.i)\, U(k)$$

Here $P(k \mid a.i)$ is the probability of reaching state k when action a is executed in state i.

- Two possible actions from i: $a_1$ and $a_2$
- $a_1$ leads to $k_{11}$ with probability $P_{11}$ or $k_{12}$ with probability $P_{12}$, where $P_{11} = P(k_{11} \mid a_1.i)$ and $P_{12} = P(k_{12} \mid a_1.i)$
- $a_2$ leads to $k_{21}$ with probability $P_{21}$ or $k_{22}$ with probability $P_{22}$
- $U(i) = R(i) + \max\{P_{11} U(k_{11}) + P_{12} U(k_{12}),\; P_{21} U(k_{21}) + P_{22} U(k_{22})\}$

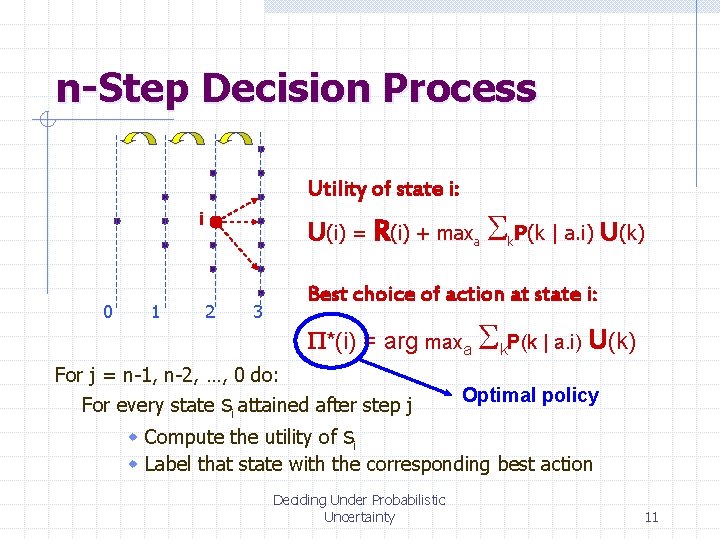

n-Step Decision Process

Utility of state i:

$$U(i) = R(i) + \max_a \sum_k P(k \mid a.i)\, U(k)$$

Best choice of action at state i:

$$\Pi^*(i) = \arg\max_a \sum_k P(k \mid a.i)\, U(k)$$

For j = n-1, n-2, …, 0 do:

- For every state $s_i$ attained after step j:
  - Compute the utility of $s_i$
  - Label that state with the corresponding best action

The resulting labeling is the optimal policy.
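The backward pass above can be sketched in Python. The function below follows the two formulas on this slide; the small two-step problem (`layers`, `reward`, `transitions`, and all the numbers in them) is hypothetical and only there to exercise the code.

```python
def backward_induction(layers, reward, transitions):
    """layers[j] lists the states reached after j steps; the last layer is terminal.

    transitions[state][action] is a list of (probability, next_state) pairs.
    Returns (utility, policy) dictionaries.
    """
    utility, policy = {}, {}
    for s in layers[-1]:                       # terminal states: utility is the reward
        utility[s] = reward[s]
    for j in range(len(layers) - 2, -1, -1):   # j = n-1, n-2, ..., 0
        for s in layers[j]:
            # Expected utility of the successors for each action.
            q = {a: sum(p * utility[k] for p, k in outs)
                 for a, outs in transitions[s].items()}
            policy[s] = max(q, key=q.get)
            utility[s] = reward[s] + q[policy[s]]
    return utility, policy

# Hypothetical 2-step problem (not from the slides).
layers = [["s0"], ["s1", "s2"], ["t1", "t2", "t3"]]
reward = {"s0": 0, "s1": 1, "s2": 2, "t1": 10, "t2": 0, "t3": 4}
transitions = {
    "s0": {"a1": [(0.5, "s1"), (0.5, "s2")], "a2": [(1.0, "s2")]},
    "s1": {"a1": [(0.9, "t1"), (0.1, "t2")], "a2": [(1.0, "t3")]},
    "s2": {"a1": [(0.2, "t1"), (0.8, "t2")], "a2": [(1.0, "t3")]},
}
utility, policy = backward_induction(layers, reward, transitions)
print(policy, utility["s0"])
```

Because states at different depths are all distinct, each state's utility is computed exactly once, after all of its successors.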

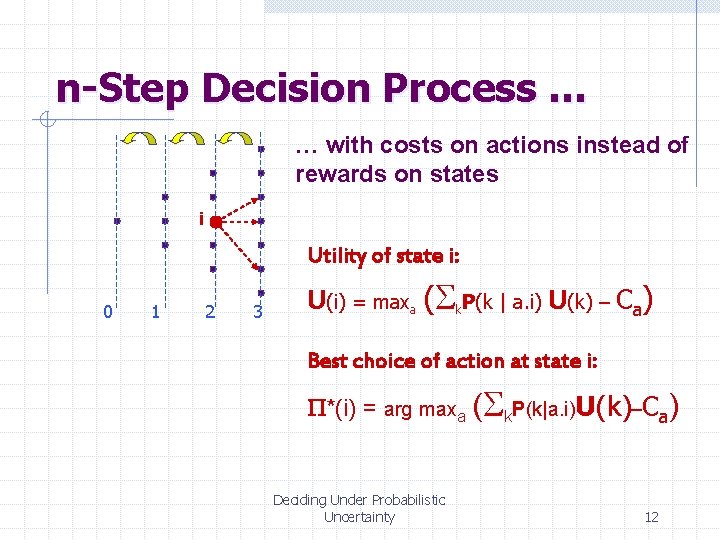

n-Step Decision Process … with costs on actions instead of rewards on states

Utility of state i:

$$U(i) = \max_a \Big( \sum_k P(k \mid a.i)\, U(k) - C_a \Big)$$

Best choice of action at state i:

$$\Pi^*(i) = \arg\max_a \Big( \sum_k P(k \mid a.i)\, U(k) - C_a \Big)$$

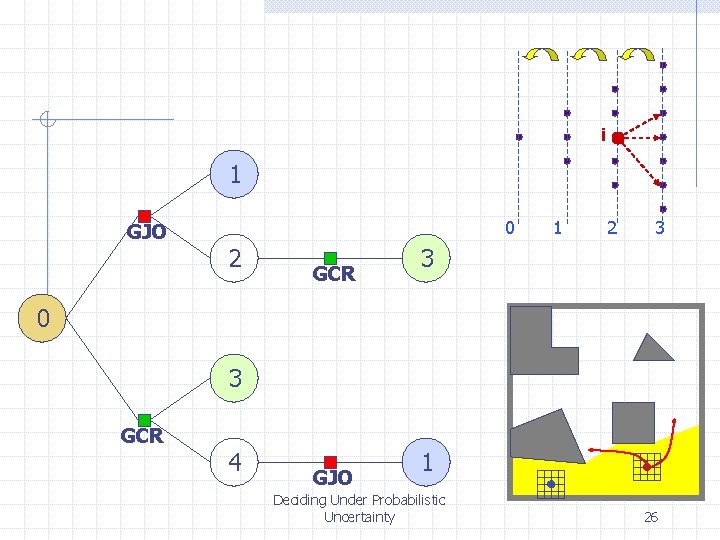

(Figure: decision tree for the Finding Juliet example, with states 0 to 4 and actions GJO and GCR.)

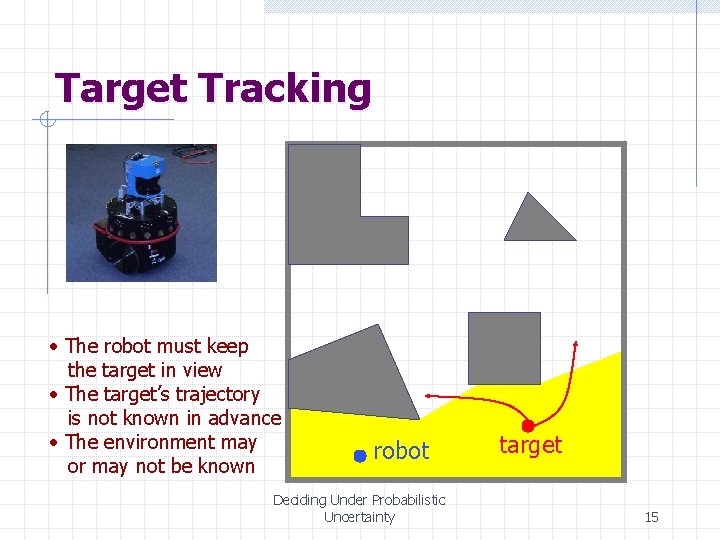

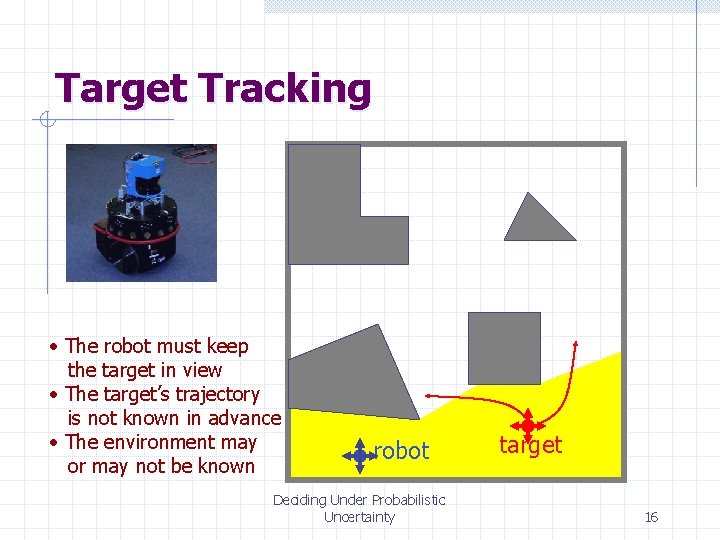

Target Tracking

- The robot must keep the target in view
- The target’s trajectory is not known in advance
- The environment may or may not be known

![States Are Indexed by Time](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-17.jpg)

States Are Indexed by Time

- State = (robot-position, target-position, time)
- Action = (stop, up, down, right, left)
- Outcome of an action = 5 possible states, each with probability 0.2
- Each state has 25 successors

For example, executing "right" in state ([i, j], [u, v], t) leads to one of:

- ([i+1, j], [u, v], t+1)
- ([i+1, j], [u-1, v], t+1)
- ([i+1, j], [u+1, v], t+1)
- ([i+1, j], [u, v-1], t+1)
- ([i+1, j], [u, v+1], t+1)
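A short sketch of how the 25 successors of a state could be enumerated. It assumes that the robot's own move is deterministic, that "up" increases the second coordinate, and that there are no walls; none of this is stated on the slide beyond the "right" example, and the names `MOVES` and `successors` are illustrative.

```python
# Displacement for each of the five moves (assumed convention: up = +1 in the second coordinate).
MOVES = {"stop": (0, 0), "up": (0, 1), "down": (0, -1), "right": (1, 0), "left": (-1, 0)}

def successors(state):
    """Yield (action, probability, next_state) for every action/outcome pair."""
    (i, j), (u, v), t = state
    for action, (di, dj) in MOVES.items():
        robot = (i + di, j + dj)
        # The target makes one of the same five moves, each with probability 0.2.
        for du, dv in MOVES.values():
            yield action, 0.2, (robot, (u + du, v + dv), t + 1)

succ = list(successors(((2, 3), (5, 5), 0)))
print(len(succ))  # 5 actions x 5 outcomes = 25
```

Since the time index always increases by one, no state can be revisited, which is what makes the state space a tree.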

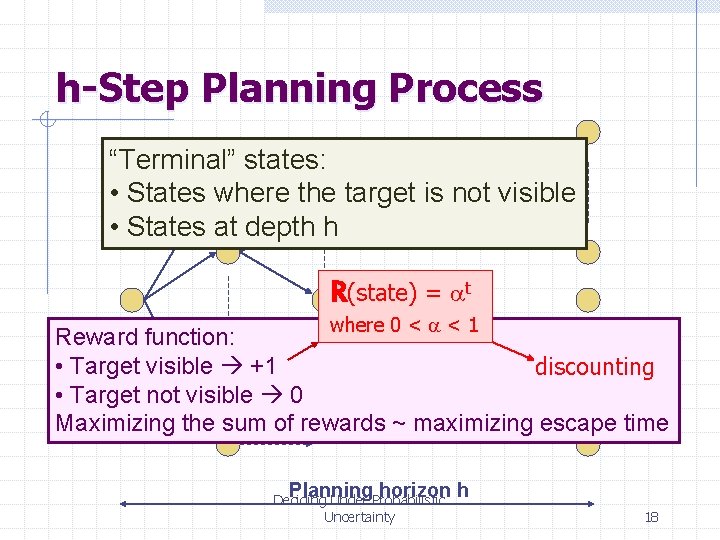

h-Step Planning Process

“Terminal” states:

- States where the target is not visible
- States at depth h (the planning horizon)

Reward function:

- Target visible: +1
- Target not visible: 0
- With discounting: $R(\text{state}) = \alpha^t$ where $0 < \alpha < 1$

Maximizing the sum of rewards ~ maximizing escape time

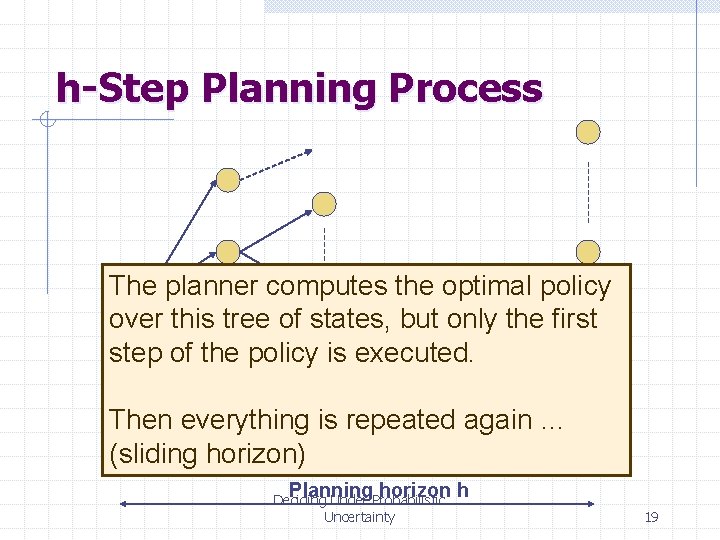

h-Step Planning Process

The planner computes the optimal policy over this tree of states (down to the planning horizon h), but only the first step of the policy is executed. Then everything is repeated again … (sliding horizon)

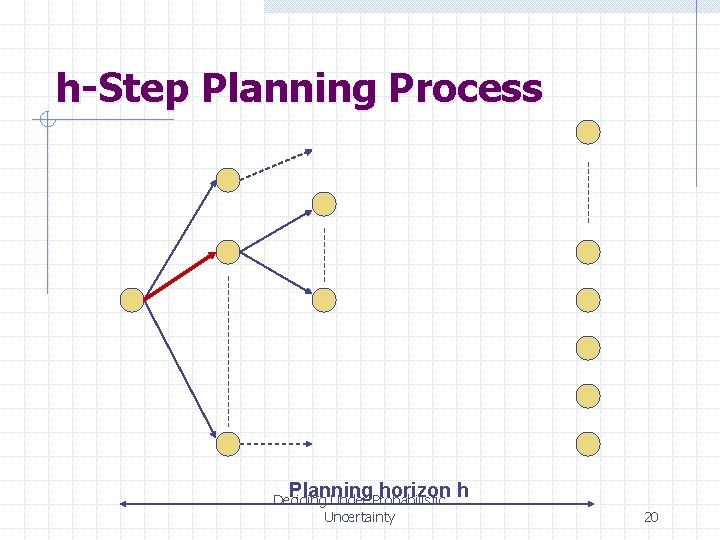

h-Step Planning Process

The planning horizon h is chosen so that the optimal policy over the tree can be computed in one increment of time.

Example With No Planner

Example With Planner

Other Example

h-Step Planning Process

The optimal policy over this tree is not the optimal policy that would have been computed if a prior model of the environment had been available, along with an arbitrarily fast computer.

Simple Robot Navigation Problem

- In each state, the possible actions are U, D, R, and L

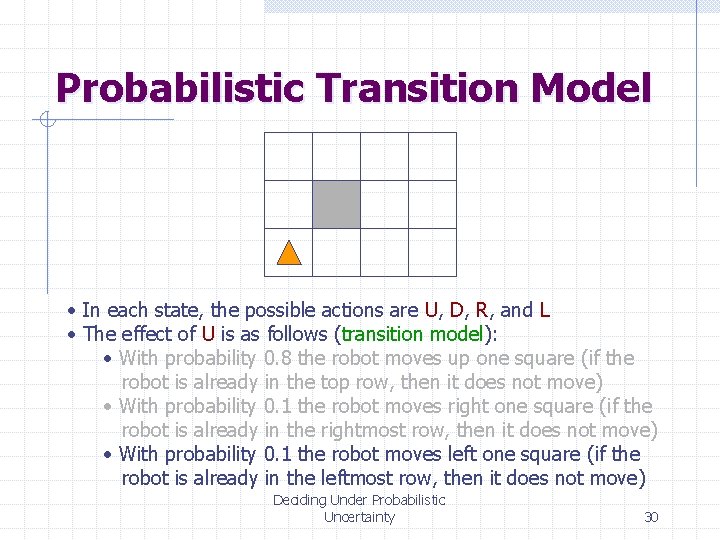

Probabilistic Transition Model

- In each state, the possible actions are U, D, R, and L
- The effect of U is as follows (transition model):
  - With probability 0.8 the robot moves up one square (if the robot is already in the top row, then it does not move)
  - With probability 0.1 the robot moves right one square (if the robot is already in the rightmost column, then it does not move)
  - With probability 0.1 the robot moves left one square (if the robot is already in the leftmost column, then it does not move)
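The transition model for U can be written as a small function returning a distribution over next states. The slide only spells out U; extending it to D, R, and L by symmetry (0.8 in the intended direction, 0.1 to each side) is an assumption here, as is the grid size of 4 columns by 3 rows, which is taken from the grid shown on the following slides. The optional `blocked` argument is a hypothetical hook for obstacle squares.

```python
COLS, ROWS = 4, 3   # assumed grid size: columns 1..4, rows 1..3
STEP = {"U": (0, 1), "D": (0, -1), "R": (1, 0), "L": (-1, 0)}
# Sideways directions for each intended action (assumed symmetric to the U case).
SLIPS = {"U": ("R", "L"), "D": ("L", "R"), "R": ("U", "D"), "L": ("D", "U")}

def move(state, direction, blocked=frozenset()):
    """Move one square; stay in place if the move leaves the grid or hits a blocked square."""
    x, y = state
    dx, dy = STEP[direction]
    nxt = (x + dx, y + dy)
    inside = 1 <= nxt[0] <= COLS and 1 <= nxt[1] <= ROWS
    return nxt if inside and nxt not in blocked else state

def transition(state, action, blocked=frozenset()):
    """Return {next_state: probability} for executing `action` in `state`."""
    dist = {}
    side_a, side_b = SLIPS[action]
    for direction, p in ((action, 0.8), (side_a, 0.1), (side_b, 0.1)):
        k = move(state, direction, blocked)
        dist[k] = dist.get(k, 0.0) + p      # merge outcomes that land on the same square
    return dist

print(transition((2, 1), "U"))
print(transition((1, 3), "U"))  # top-left corner: "up" and "left" both leave the robot in place
```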

Markov Property

The transition properties depend only on the current state, not on previous history (how that state was reached).

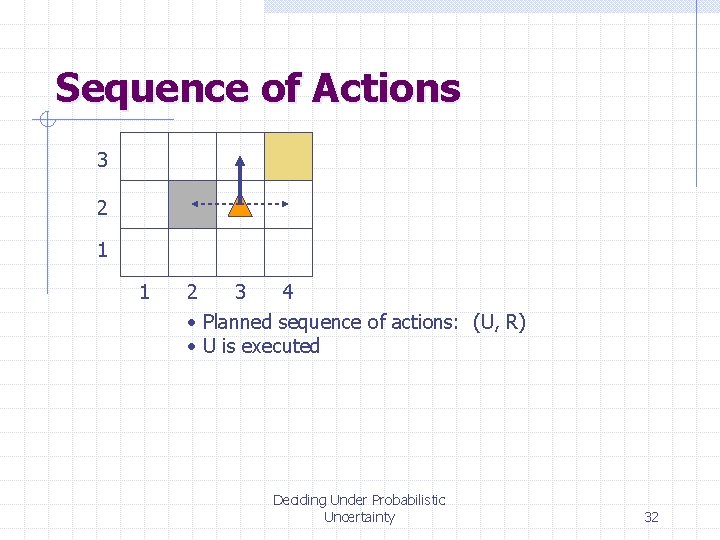

Sequence of Actions

(Figure: grid with columns 1 to 4 and rows 1 to 3.)

- Planned sequence of actions: (U, R)
- U is executed

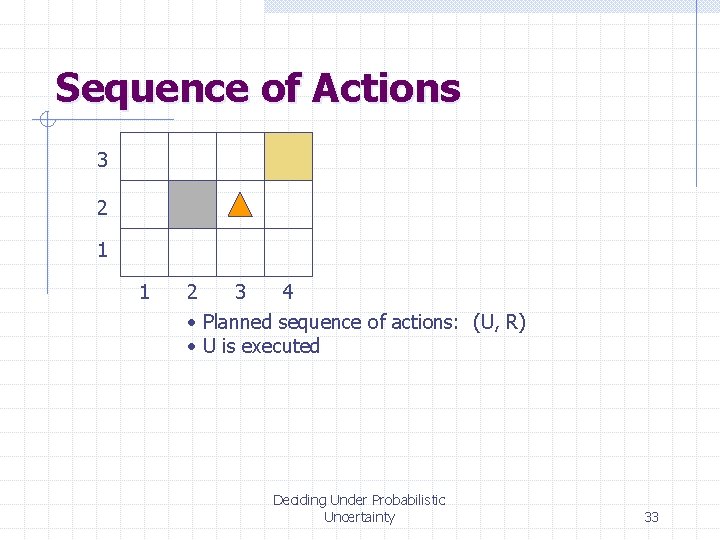

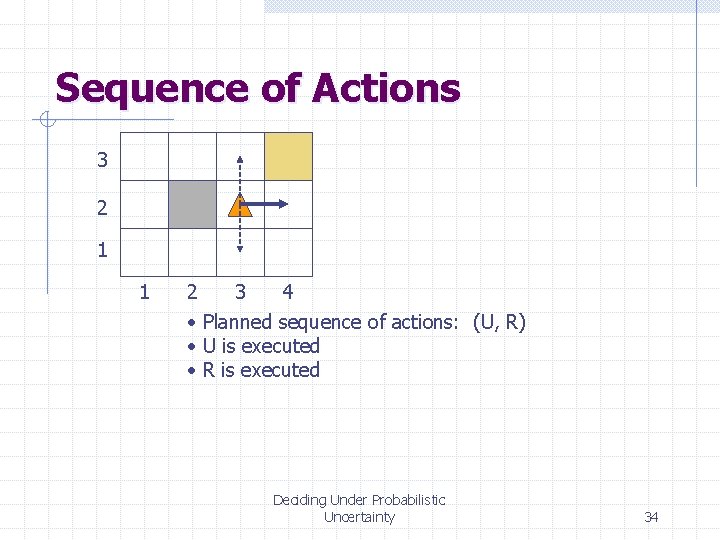

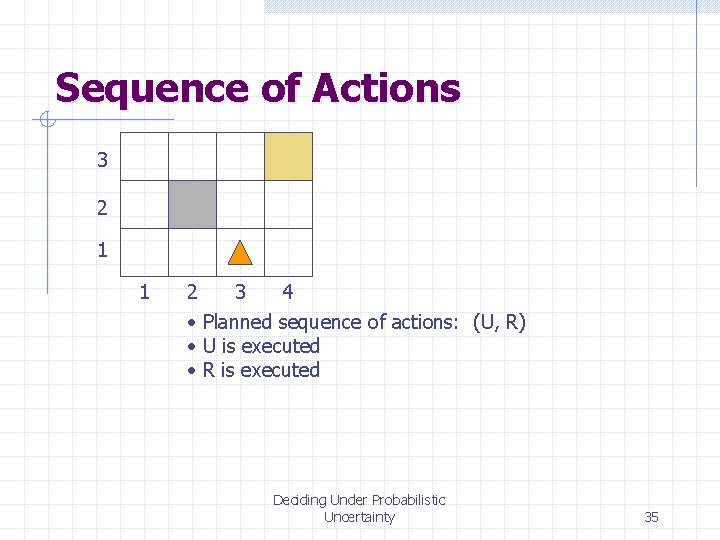

Sequence of Actions

- Planned sequence of actions: (U, R)
- U is executed
- R is executed

![Sequence of Actions](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-36.jpg)

Sequence of Actions

- The robot starts in [3, 2]
- Planned sequence of actions: (U, R)

![Sequence of Actions](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-37.jpg)

Sequence of Actions

- Planned sequence of actions: (U, R)
- U is executed: from [3, 2], the robot ends up in [3, 2], [3, 3], or [4, 2]

![Histories](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-38.jpg)

Histories

- Planned sequence of actions: (U, R)
- U has been executed: from [3, 2], the robot is in [3, 2], [3, 3], or [4, 2]
- R is executed: the robot ends up in [3, 1], [3, 2], [3, 3], [4, 1], [4, 2], or [4, 3]
- There are 9 possible sequences of states – called histories – and 6 possible final states for the robot!

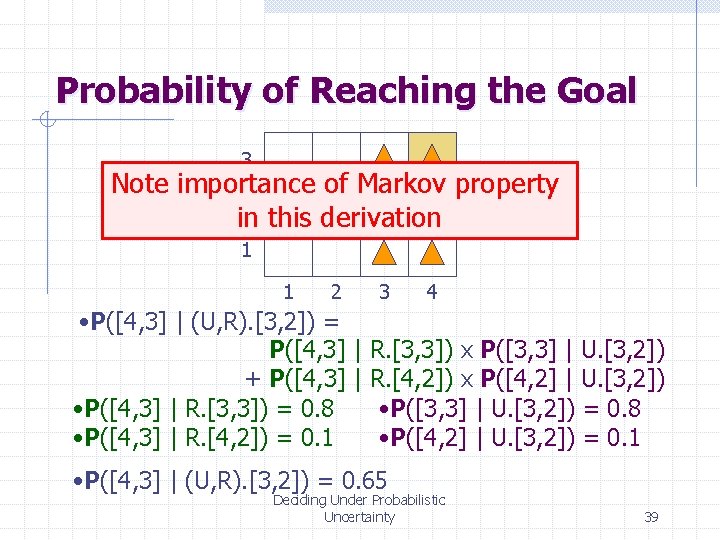

Probability of Reaching the Goal

$$P([4,3] \mid (U,R).[3,2]) = P([4,3] \mid R.[3,3]) \times P([3,3] \mid U.[3,2]) + P([4,3] \mid R.[4,2]) \times P([4,2] \mid U.[3,2])$$

- $P([4,3] \mid R.[3,3]) = 0.8$
- $P([3,3] \mid U.[3,2]) = 0.8$
- $P([4,3] \mid R.[4,2]) = 0.1$
- $P([4,2] \mid U.[3,2]) = 0.1$
- $P([4,3] \mid (U,R).[3,2]) = 0.8 \times 0.8 + 0.1 \times 0.1 = 0.65$

Note the importance of the Markov property in this derivation.
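The history count and the 0.65 figure can be checked by enumerating all histories of the open-loop sequence (U, R). This sketch assumes the usual 4x3 grid with an obstacle square at [2, 2]; the obstacle is not stated in the slide text, but it is consistent with U from [3, 2] having only the three outcomes [3, 2], [3, 3], and [4, 2]. The sideways behaviour of R is assumed symmetric to that of U. As in the count of 9 histories, [4, 2] is not yet treated as terminal here.

```python
COLS, ROWS = 4, 3
BLOCKED = {(2, 2)}   # assumption: obstacle square of the usual 4x3 grid world
STEP = {"U": (0, 1), "D": (0, -1), "R": (1, 0), "L": (-1, 0)}
SLIPS = {"U": "RL", "D": "LR", "R": "UD", "L": "DU"}   # sideways directions (assumed)

def move(state, direction):
    nxt = (state[0] + STEP[direction][0], state[1] + STEP[direction][1])
    inside = 1 <= nxt[0] <= COLS and 1 <= nxt[1] <= ROWS
    return nxt if inside and nxt not in BLOCKED else state

def transition(state, action):
    """Return {next_state: probability}: 0.8 intended direction, 0.1 to each side."""
    dist = {}
    for direction, p in ((action, 0.8), (SLIPS[action][0], 0.1), (SLIPS[action][1], 0.1)):
        k = move(state, direction)
        dist[k] = dist.get(k, 0.0) + p
    return dist

def histories(start, actions):
    """Return {history (tuple of states): probability} for a fixed action sequence."""
    result = {(start,): 1.0}
    for a in actions:
        extended = {}
        for h, p in result.items():
            # Markov property: the next-state distribution depends only on h[-1].
            for k, pk in transition(h[-1], a).items():
                extended[h + (k,)] = p * pk
        result = extended
    return result

hs = histories((3, 2), ["U", "R"])
finals = {}
for h, p in hs.items():
    finals[h[-1]] = finals.get(h[-1], 0.0) + p
print(len(hs), len(finals), finals[(4, 3)])
```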

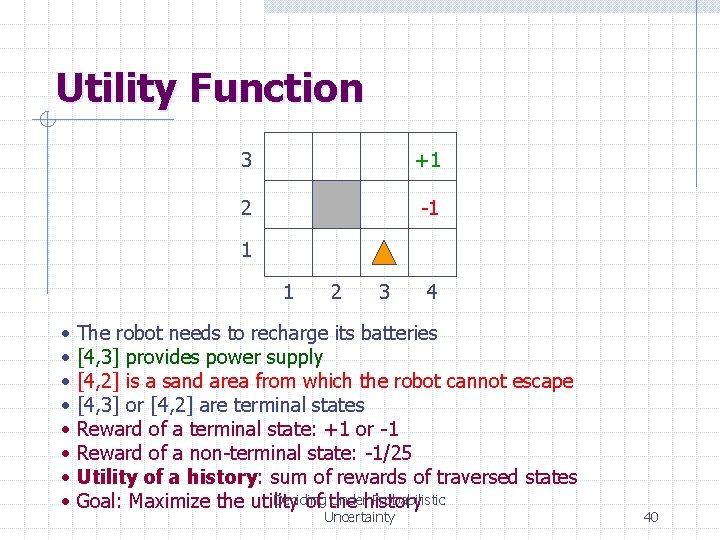

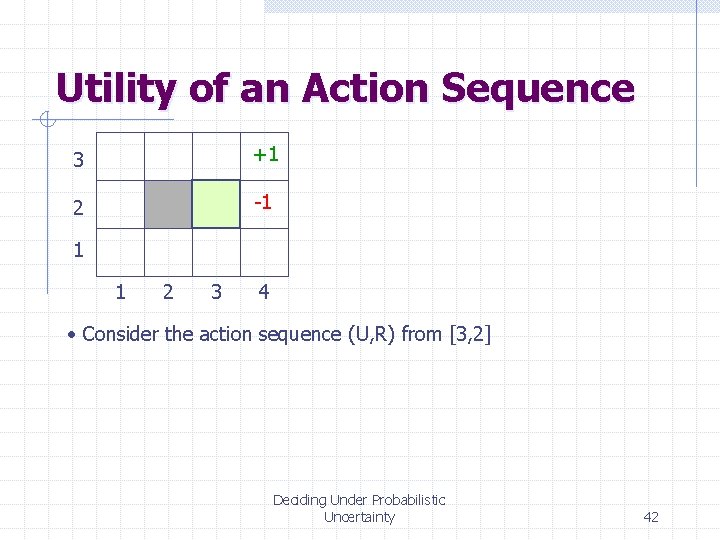

Utility Function

- The robot needs to recharge its batteries
- [4, 3] provides power supply
- [4, 2] is a sand area from which the robot cannot escape
- [4, 3] and [4, 2] are terminal states
- Reward of a terminal state: +1 or -1 (+1 for [4, 3], -1 for [4, 2])
- Reward of a non-terminal state: -1/25
- Utility of a history: sum of rewards of traversed states
- Goal: maximize the utility of the history

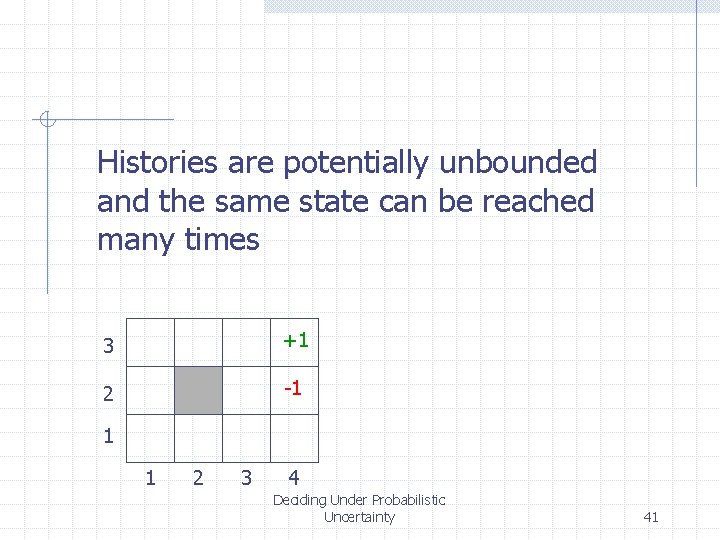

Histories are potentially unbounded and the same state can be reached many times.

![Utility of an Action Sequence](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-43.jpg)

![Utility of an Action Sequence](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-44.jpg)

Utility of an Action Sequence

- Consider the action sequence (U, R) from [3, 2]
- A run produces one among 7 possible histories, each with some probability
- The utility of the sequence is the expected utility of the histories: $U = \sum_h U_h P(h)$

![Optimal Action Sequence](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-45.jpg)

![Optimal Action Sequence](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-46.jpg)

Optimal Action Sequence

- Consider the action sequence (U, R) from [3, 2]
- A run produces one among 7 possible histories, each with some probability
- The utility of the sequence is the expected utility of the histories
- The optimal sequence is the one with maximal utility
- But is the optimal action sequence what we want to compute? NO!! Except if the sequence is executed blindly (open-loop strategy)

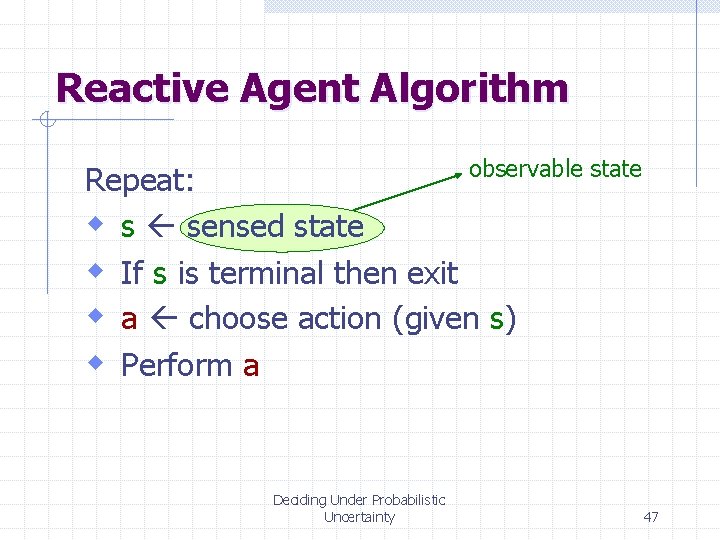

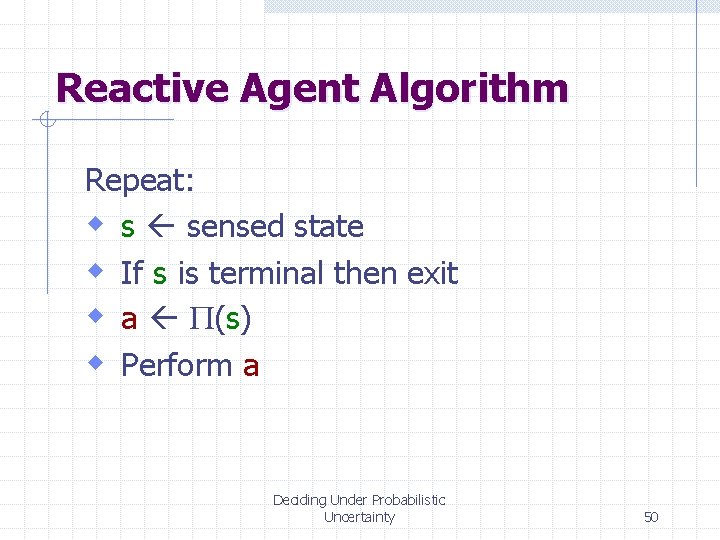

Reactive Agent Algorithm (observable state)

Repeat:

- s ← sensed state
- If s is terminal then exit
- a ← choose action (given s)
- Perform a

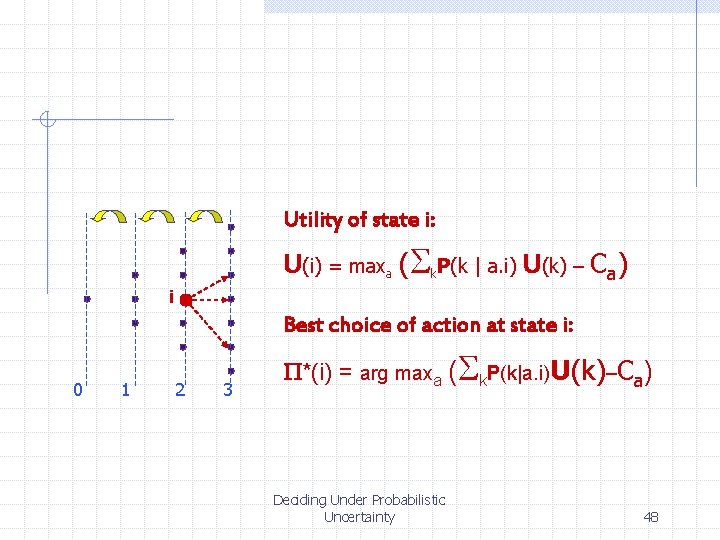

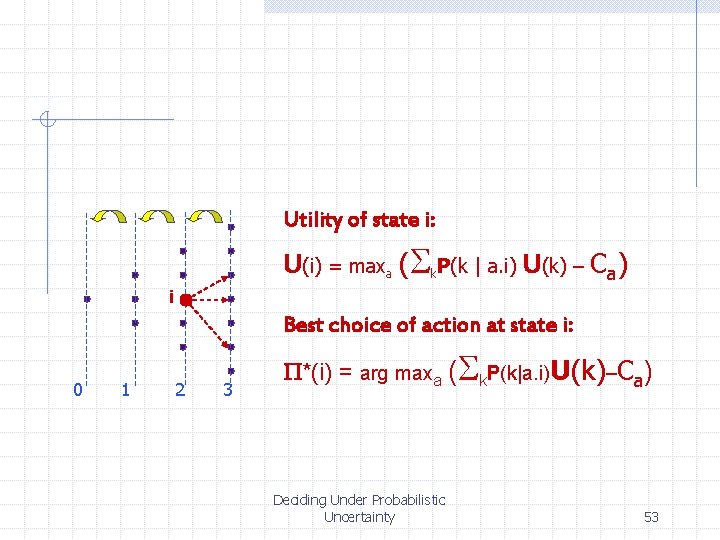

Utility of state i:

$$U(i) = \max_a \Big( \sum_k P(k \mid a.i)\, U(k) - C_a \Big)$$

Best choice of action at state i:

$$\Pi^*(i) = \arg\max_a \Big( \sum_k P(k \mid a.i)\, U(k) - C_a \Big)$$

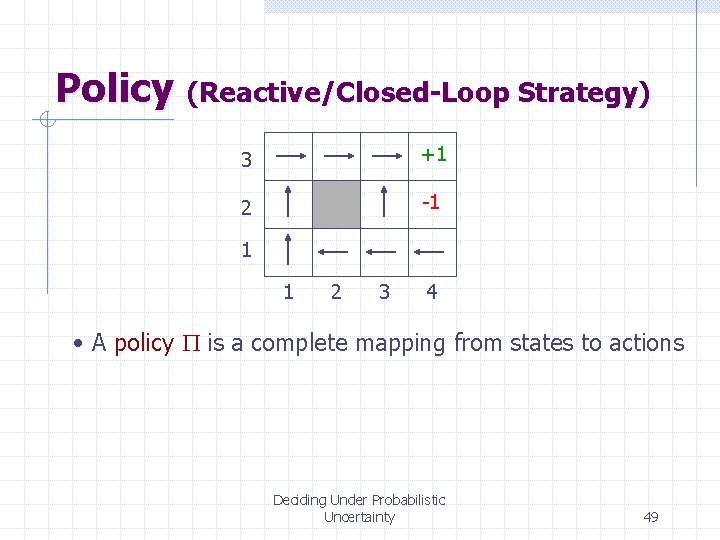

Policy (Reactive/Closed-Loop Strategy)

- A policy $\Pi$ is a complete mapping from states to actions

Reactive Agent Algorithm

Repeat:

- s ← sensed state
- If s is terminal then exit
- a ← $\Pi$(s)
- Perform a
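The loop above maps directly to code once the policy is stored as a lookup table. The sketch below is illustrative only: the `Corridor` world, its 0.8 success probability, and the `max_steps` safety cap are all hypothetical and not part of the slides.

```python
import random

def run_reactive_agent(policy, sense, is_terminal, perform, max_steps=1000):
    """Repeat: sense the state, stop if terminal, look up the action, perform it."""
    trace = []
    for _ in range(max_steps):        # safety cap, not part of the slide's loop
        s = sense()
        if is_terminal(s):
            break
        a = policy[s]                 # the policy is a complete state -> action mapping
        perform(a)
        trace.append((s, a))
    return trace

class Corridor:
    """Hypothetical toy world: cells 0..3, cell 3 is terminal; a move succeeds with probability 0.8."""
    def __init__(self, seed=0):
        self.pos = 0
        self.rng = random.Random(seed)

    def sense(self):
        return self.pos

    def perform(self, action):
        if action == "right" and self.rng.random() < 0.8:
            self.pos += 1

world = Corridor()
policy = {0: "right", 1: "right", 2: "right"}
trace = run_reactive_agent(policy, world.sense, lambda s: s == 3, world.perform)
print(len(trace), world.pos)
```

The agent never plans ahead at run time: whatever state the uncertain action leaves it in, it senses that state and looks up the next action.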

![Optimal Policy 3 +1 2 -1 1 1 2 3 4 that [3, 2] Optimal Policy 3 +1 2 -1 1 1 2 3 4 that [3, 2]](http://slidetodoc.com/presentation_image_h2/89eac0422bc0fee3bae003ca2c69677b/image-51.jpg)

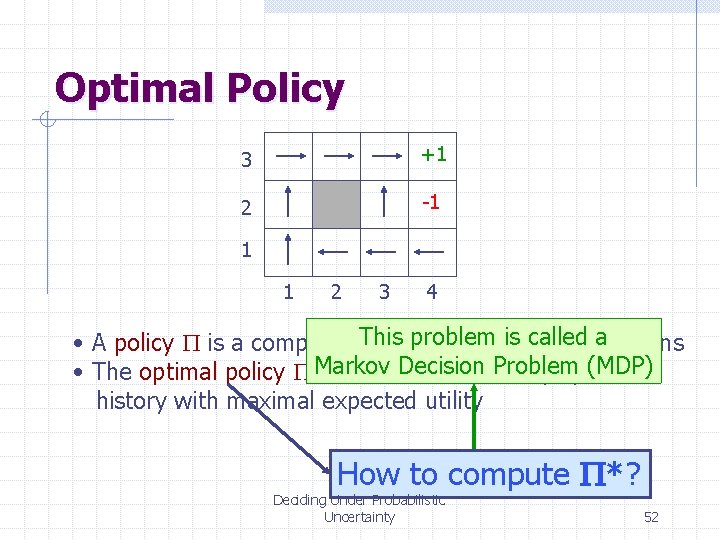

Optimal Policy 3 +1 2 -1 1 1 2 3 4 that [3, 2] a “dangerous” • A policy P is a complete. Note mapping from is states to actions state optimal policy • The optimal policy P* is the onethatthe always yields a tries to maximal avoid history (ending at a terminal state) with expected utility Makes sense because of Markov property Deciding Under Probabilistic Uncertainty 51

Optimal Policy

- A policy $\Pi$ is a complete mapping from states to actions
- The optimal policy $\Pi^*$ is the one that always yields a history with maximal expected utility

This problem is called a Markov Decision Problem (MDP).

How to compute $\Pi^*$?

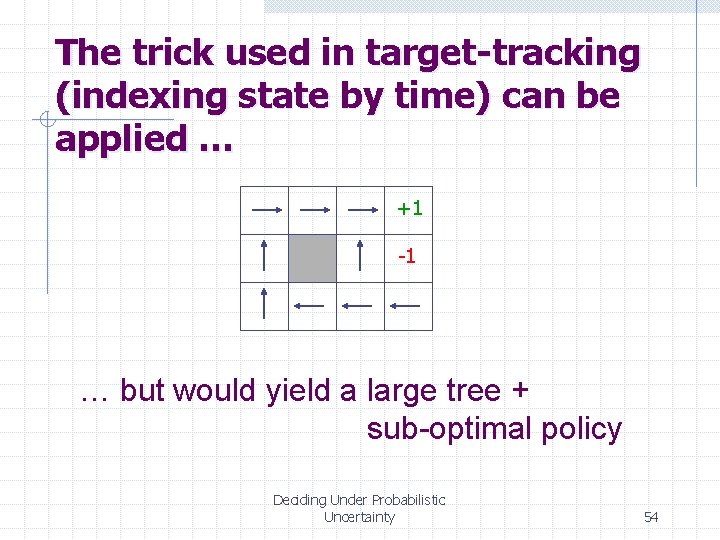

The trick used in target-tracking (indexing state by time) can be applied … but would yield a large tree + sub-optimal policy.

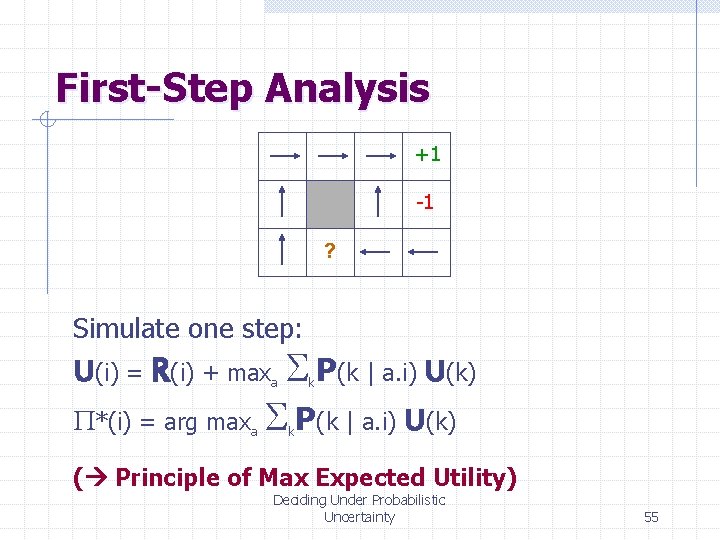

First-Step Analysis

Simulate one step:

$$U(i) = R(i) + \max_a \sum_k P(k \mid a.i)\, U(k)$$

$$\Pi^*(i) = \arg\max_a \sum_k P(k \mid a.i)\, U(k)$$

(Principle of Max Expected Utility)

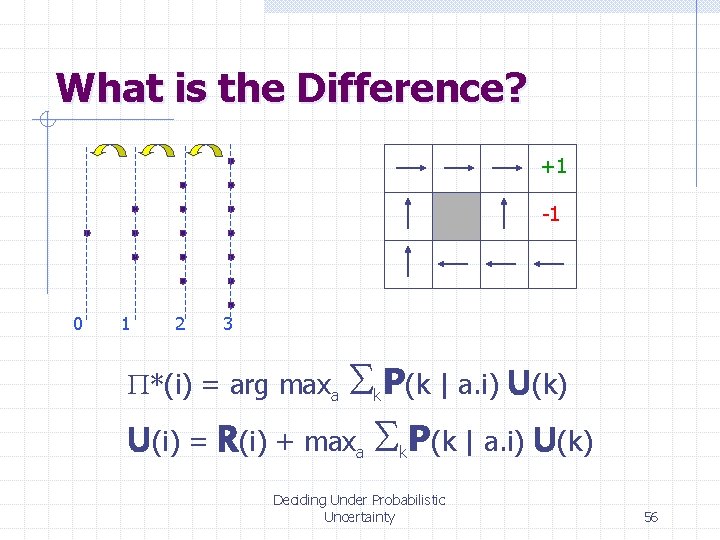

What is the Difference?

$$U(i) = R(i) + \max_a \sum_k P(k \mid a.i)\, U(k)$$

$$\Pi^*(i) = \arg\max_a \sum_k P(k \mid a.i)\, U(k)$$

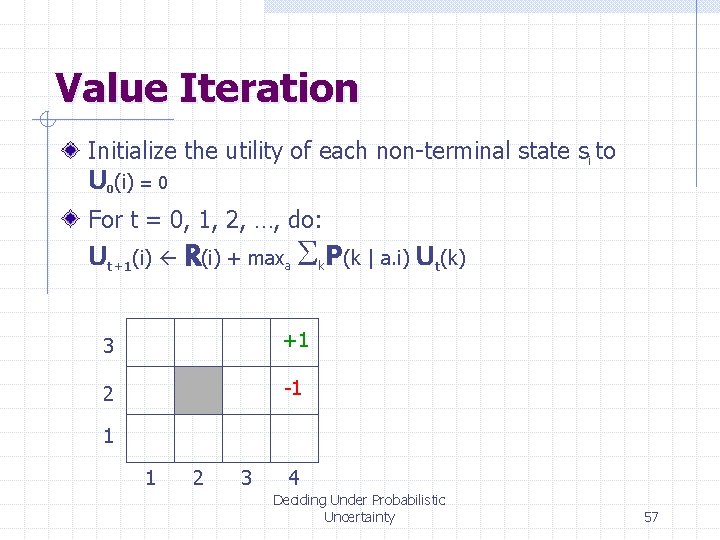

Value Iteration Initialize the utility of each non-terminal state si to U (i) = 0 0 For t = 0, 1, 2, …, do: Ut+1(i) R(i) + maxa Sk. P(k | a. i) Ut(k) 3 +1 2 -1 1 1 2 3 4 Deciding Under Probabilistic Uncertainty 57

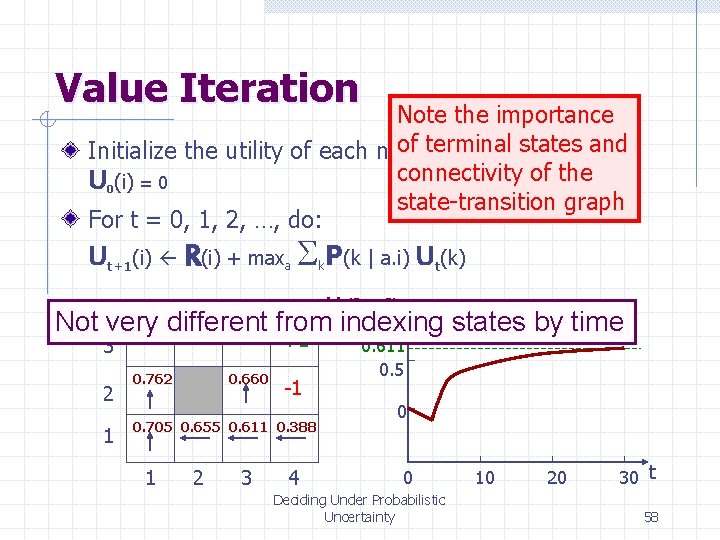

Value Iteration Note the importance of terminal statessand Initialize the utility of each non-terminal i to connectivity of the U 0(i) = 0 state-transition graph For t = 0, 1, 2, …, do: Ut+1(i) R(i) + maxa Sk. P(k | a. i) Ut(k) Ut([3, 1]) Not very 0. 812 different 0. 868 0. 918 from indexing states by time +1 3 2 1 0. 762 0. 660 -1 0. 705 0. 655 0. 611 0. 388 1 2 3 4 0. 611 0. 5 0 0 Deciding Under Probabilistic Uncertainty 10 20 30 t 58
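A compact sketch of value iteration on this grid, using the update rule above with the rewards from the Utility Function slide (+1, -1, and -1/25). Two things are assumptions: the obstacle square at [2, 2], and the extension of the transition model from U to the other three actions by symmetry. The fixed iteration count is an arbitrary choice for the example.

```python
COLS, ROWS = 4, 3
BLOCKED = {(2, 2)}                        # assumption: obstacle square
TERMINAL = {(4, 3): 1.0, (4, 2): -1.0}    # rewards of the two terminal states
STEP_REWARD = -1 / 25                     # reward of every non-terminal state
STEP = {"U": (0, 1), "D": (0, -1), "R": (1, 0), "L": (-1, 0)}
SLIPS = {"U": "RL", "D": "LR", "R": "UD", "L": "DU"}   # sideways directions (assumed)
STATES = [(x, y) for x in range(1, COLS + 1) for y in range(1, ROWS + 1)
          if (x, y) not in BLOCKED]

def move(state, direction):
    nxt = (state[0] + STEP[direction][0], state[1] + STEP[direction][1])
    inside = 1 <= nxt[0] <= COLS and 1 <= nxt[1] <= ROWS
    return nxt if inside and nxt not in BLOCKED else state

def transition(state, action):
    """Return {next_state: probability}: 0.8 intended direction, 0.1 to each side."""
    dist = {}
    for direction, p in ((action, 0.8), (SLIPS[action][0], 0.1), (SLIPS[action][1], 0.1)):
        k = move(state, direction)
        dist[k] = dist.get(k, 0.0) + p
    return dist

def value_iteration(iterations=200):
    # Terminal states keep their reward as utility; all other states start at 0.
    U = {s: TERMINAL.get(s, 0.0) for s in STATES}
    for _ in range(iterations):
        new_U = dict(U)
        for s in STATES:
            if s in TERMINAL:
                continue
            best = max(sum(p * U[k] for k, p in transition(s, a).items()) for a in STEP)
            new_U[s] = STEP_REWARD + best     # U_{t+1}(i) = R(i) + max_a sum_k P U_t(k)
        U = new_U
    return U

U = value_iteration()
for y in range(ROWS, 0, -1):
    print([round(U[(x, y)], 3) if (x, y) in U else None for x in range(1, COLS + 1)])
```

Unlike the time-indexed tree, the same utility table is reused at every step, so cycles in the state-transition graph cause no blow-up in size.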

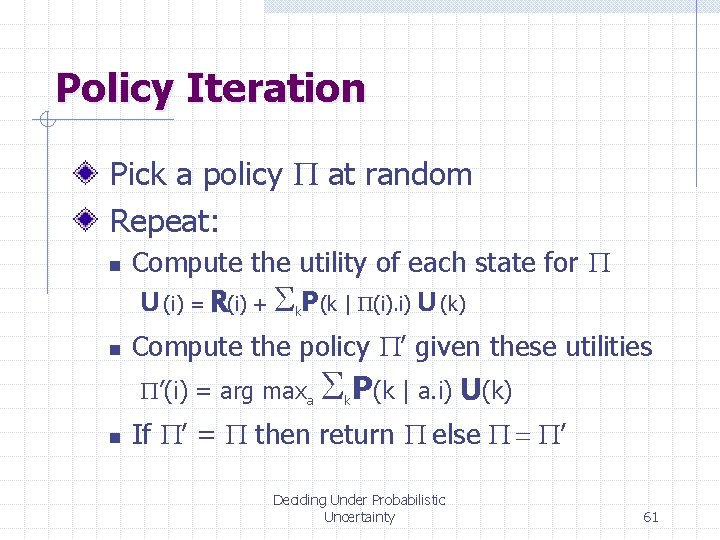

Policy Iteration

Pick a policy $\Pi$ at random.

Repeat:

- Compute the utility of each state for $\Pi$: $U(i) = R(i) + \sum_k P(k \mid \Pi(i).i)\, U(k)$. This is a set of linear equations (often a sparse system).
- Compute the policy $\Pi'$ given these utilities: $\Pi'(i) = \arg\max_a \sum_k P(k \mid a.i)\, U(k)$
- If $\Pi' = \Pi$ then return $\Pi$, else $\Pi \leftarrow \Pi'$
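The two alternating steps can be sketched on a very small problem. The MDP below (states A and B, terminals G and T, actions "safe" and "risky", and all probabilities) is hypothetical and chosen so that every policy eventually reaches a terminal state, which keeps the linear system solvable. The dense Gaussian elimination is a stand-in for whatever sparse solver a real implementation would use.

```python
import random

REWARD = {"A": -0.04, "B": -0.04, "G": 1.0, "T": -1.0}
TERMINAL = {"G", "T"}
# P[state][action] = {next_state: probability}  (hypothetical numbers)
P = {
    "A": {"safe": {"B": 0.9, "A": 0.1}, "risky": {"G": 0.5, "T": 0.5}},
    "B": {"safe": {"G": 0.8, "A": 0.2}, "risky": {"G": 0.6, "T": 0.4}},
}
STATES = list(P)

def solve(A, b):
    """Gauss-Jordan elimination with partial pivoting for a small system A x = b."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[pivot] = M[pivot], M[c]
        for r in range(n):
            if r != c:
                f = M[r][c] / M[c][c]
                M[r] = [x - f * y for x, y in zip(M[r], M[c])]
    return [M[i][n] / M[i][i] for i in range(n)]

def evaluate(policy):
    """Solve U(i) = R(i) + sum_k P(k | policy(i).i) U(k) for the non-terminal states."""
    A, b = [], []
    for i in STATES:
        row = [1.0 if j == i else 0.0 for j in STATES]
        rhs = REWARD[i]
        for k, p in P[i][policy[i]].items():
            if k in TERMINAL:
                rhs += p * REWARD[k]          # utility of a terminal state is its reward
            else:
                row[STATES.index(k)] -= p
        A.append(row)
        b.append(rhs)
    U = dict(zip(STATES, solve(A, b)))
    U.update({t: REWARD[t] for t in TERMINAL})
    return U

def policy_iteration(seed=0):
    rng = random.Random(seed)
    policy = {i: rng.choice(sorted(P[i])) for i in STATES}   # pick a policy at random
    while True:
        U = evaluate(policy)
        improved = {
            i: max(P[i], key=lambda a: sum(p * U[k] for k, p in P[i][a].items()))
            for i in STATES
        }
        if improved == policy:
            return policy, U
        policy = improved

print(policy_iteration())
```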

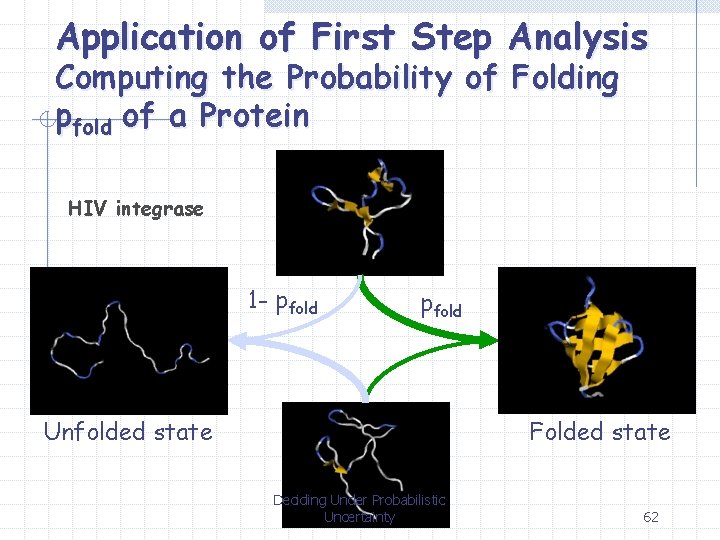

Application of First-Step Analysis: Computing the Probability of Folding $p_{fold}$ of a Protein

(Figure: HIV integrase. From a given conformation, the protein reaches the folded state with probability $p_{fold}$ and the unfolded state with probability $1 - p_{fold}$.)

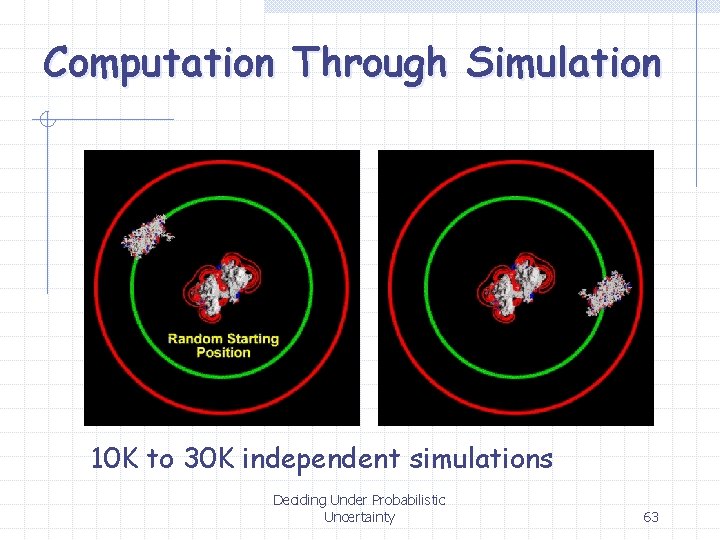

Computation Through Simulation

10K to 30K independent simulations

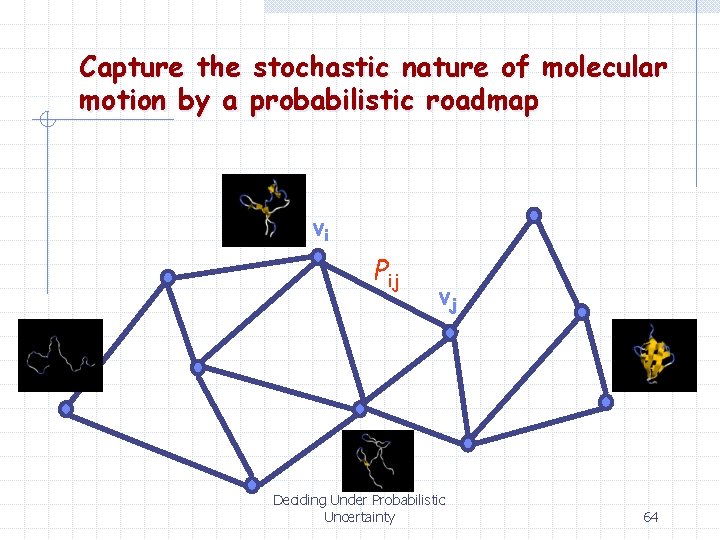

Capture the stochastic nature of molecular motion by a probabilistic roadmap.

(Figure: nodes $v_i$ and $v_j$ joined by an edge with transition probability $P_{ij}$.)

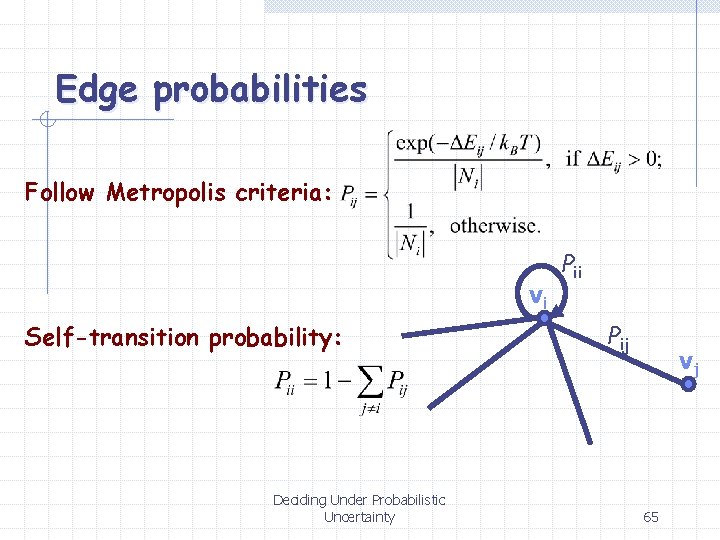

Edge probabilities

- The edge probabilities $P_{ij}$ follow Metropolis criteria
- Each node $v_i$ also has a self-transition probability $P_{ii}$

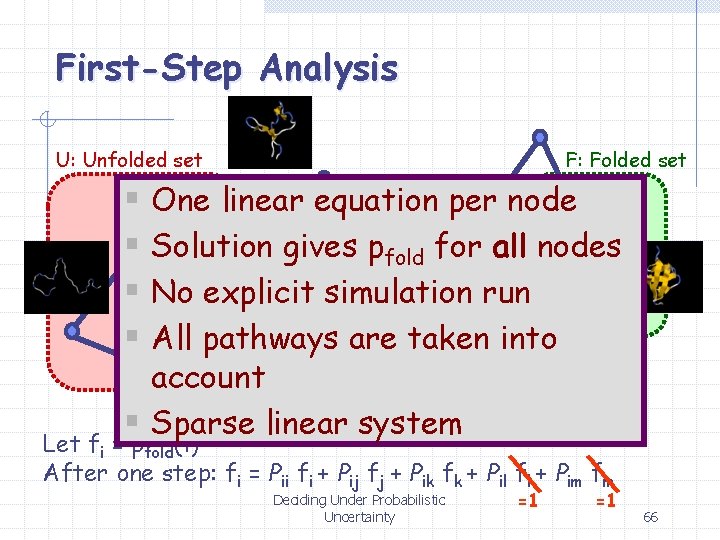

First-Step Analysis

U: Unfolded set, F: Folded set

Let $f_i = p_{fold}(i)$. After one step, for a node i with neighbors j, k, l, m:

$$f_i = P_{ii} f_i + P_{ij} f_j + P_{ik} f_k + P_{il} f_l + P_{im} f_m$$

Two of the terms are marked "= 1" in the figure (nodes in the folded set F have $f = 1$).

- One linear equation per node
- Solution gives $p_{fold}$ for all nodes
- No explicit simulation run
- All pathways are taken into account
- Sparse linear system
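A small sketch of the first-step equations on a hypothetical 5-node chain, with node 0 in the unfolded set and node 4 in the folded set. The chain, its probabilities, and the boundary value f = 0 on the unfolded set are assumptions for illustration. The system is solved here by simple repeated substitution rather than by a sparse linear solver.

```python
# P[i][j] is the transition probability from node i to node j; P[i][i] is the self-transition.
# Hypothetical chain 0 - 1 - 2 - 3 - 4 (node 0 unfolded, node 4 folded).
P = {
    1: {1: 0.2, 0: 0.4, 2: 0.4},
    2: {2: 0.2, 1: 0.4, 3: 0.4},
    3: {3: 0.2, 2: 0.4, 4: 0.4},
}
UNFOLDED, FOLDED = {0}, {4}

def pfold(P, unfolded, folded, sweeps=500):
    """Iterate f_i = sum_j P_ij f_j, holding f = 0 on the unfolded set and f = 1 on the folded set."""
    f = {i: 0.0 for i in P}
    f.update({i: 0.0 for i in unfolded})
    f.update({i: 1.0 for i in folded})
    for _ in range(sweeps):
        for i in P:                       # in-place update, one equation per node
            f[i] = sum(p * f[j] for j, p in P[i].items())
    return f

f = pfold(P, UNFOLDED, FOLDED)
print({i: round(v, 4) for i, v in sorted(f.items())})
```

For this symmetric chain the answer is linear in the node index (0.25, 0.5, 0.75 for nodes 1 to 3), which makes the result easy to check by hand.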

Partially Observable Markov Decision Problem

- Uncertainty in sensing: a sensing operation returns multiple states, with a probability distribution

Example: Target Tracking

- There is uncertainty in the robot’s and target’s positions, and this uncertainty grows with further motion
- There is a risk that the target escapes behind the corner, requiring the robot to move appropriately
- But there is a positioning landmark nearby. Should the robot try to reduce position uncertainty?

Summary

- Probabilistic uncertainty
- Utility function
- Optimal policy
- Maximal expected utility
- Value iteration
- Policy iteration
