
DB ES Experiment Support
WLCG Tier 0 – Tier 1 Service Coordination Meeting Update
Maria.Girone@cern.ch
WLCG Grid Deployment Board, 23rd March 2010
CERN IT Department, CH-1211 Geneva 23, Switzerland, www.cern.ch/it

Introduction
Ø Since January 2010 a Tier 1 Service Coordination meeting has been held covering medium-term issues (e.g. a few weeks), complementing the daily calls and ensuring good information flow between sites, the experiments and service providers
  • Every two weeks (the maximum practical frequency) at 15:30, using the same dial-in details as the daily call & lasting around 90’
  • Also helps communication between teams at a site – including Tier 0!
  • Clear service recommendations are given – e.g. on deployment of new releases – in conjunction with input from the experiments, as well as longer-term follow-up on service issues
• The daily Operations call continues at 15:00 Geneva time
  • These calls focus on short-term issues: those that have come up since the previous meeting and short-term fixes & last 20-30’

WLCG T1 SCM Summary
• 4 meetings held so far this year
• 5th is this week (tomorrow) – agenda has already been circulated
• We stick closely to agenda times – minutes available within a few days (max) of the meeting
• “Standing agenda” (next) + topical issues, including:
  • Service security issues;
  • Oracle 11g client issues (under “conditions data access”);
  • FTS 2.2.3 status and rollout;
  • CMS initiative on handling prolonged site downtimes;
  • Review of Alarm Handling & Incident Escalation for Critical Services;
Ø Highlights of each of these issues plus summary of the standing agenda items will follow: see minutes for details!
ü Good attendance from experiments, service providers at CERN and Tier 1s – attendance list will be added as from this week

WLCG T1 SCM Standing Agenda
• Data Management & Other Tier 1 Service Issues
  • Includes update on “baseline versions”, outstanding problems, release update etc.
• Conditions Data Access & related services
  • FroNTier, CORAL server, ...
• Experiment Database Service issues
  • Reports from experiments & DBA teams, Streams replication, …
• [ AOB ]
Ø As for the daily meeting, minutes are Twiki based and pre-reports are encouraged
Ø Experts help to compile the material for each topic & summarize it in the minutes, which are distributed soon after

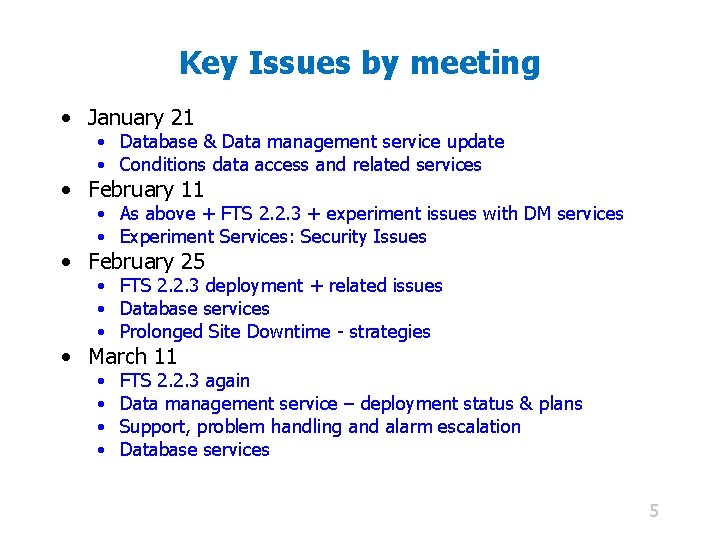

Key Issues by meeting
• January 21
  • Database & Data management service update
  • Conditions data access and related services
• February 11
  • As above + FTS 2.2.3 + experiment issues with DM services
  • Experiment Services: Security Issues
• February 25
  • FTS 2.2.3 deployment + related issues
  • Database services
  • Prolonged Site Downtime - strategies
• March 11
  • FTS 2.2.3 again
  • Data management service – deployment status & plans
  • Support, problem handling and alarm escalation
  • Database services

Service security for ATLAS – generalize?
• A lot of detailed work presented on security for ATLAS services – too detailed for discussion here
• Some – or possibly many – of the points could be applicable also to other experiments
• To be followed up at future meetings…

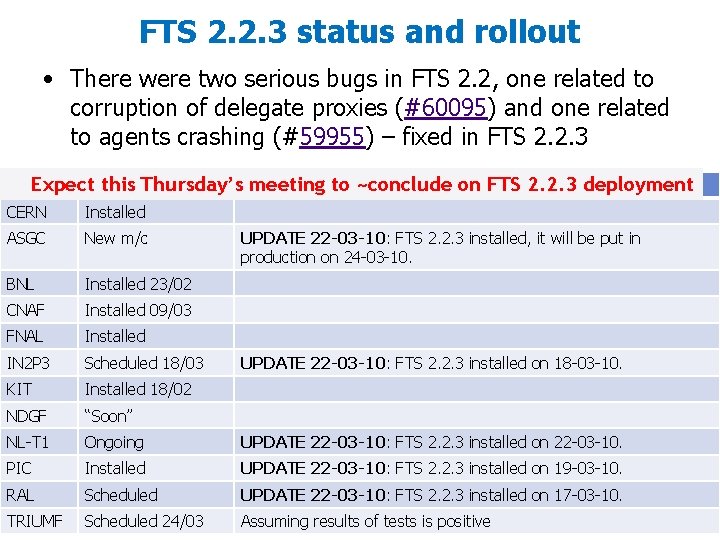

FTS 2.2.3 status and rollout
• There were two serious bugs in FTS 2.2, one related to corruption of delegated proxies (#60095) and one related to agents crashing (#59955) – fixed in FTS 2.2.3
• Expect this Thursday’s meeting to ~conclude on FTS 2.2.3 deployment

| Site | Status | Comment |
| --- | --- | --- |
| CERN | Installed | |
| ASGC | New m/c | |
| BNL | Installed 23/02 | |
| CNAF | Installed 09/03 | |
| FNAL | Installed | |
| IN2P3 | Scheduled 18/03 | |
| KIT | Installed 18/02 | |
| NDGF | “Soon” | |
| NL-T1 | Ongoing | UPDATE 22-03-10: FTS 2.2.3 installed on 22-03-10. |
| PIC | Installed | UPDATE 22-03-10: FTS 2.2.3 installed on 19-03-10. |
| RAL | Scheduled | UPDATE 22-03-10: FTS 2.2.3 installed on 17-03-10. |
| TRIUMF | Scheduled 24/03 | Assuming results of tests are positive. UPDATE 22-03-10: FTS 2.2.3 installed, it will be put in production on 24-03-10. |

UPDATE 22-03-10: FTS 2.2.3 installed on 18-03-10.

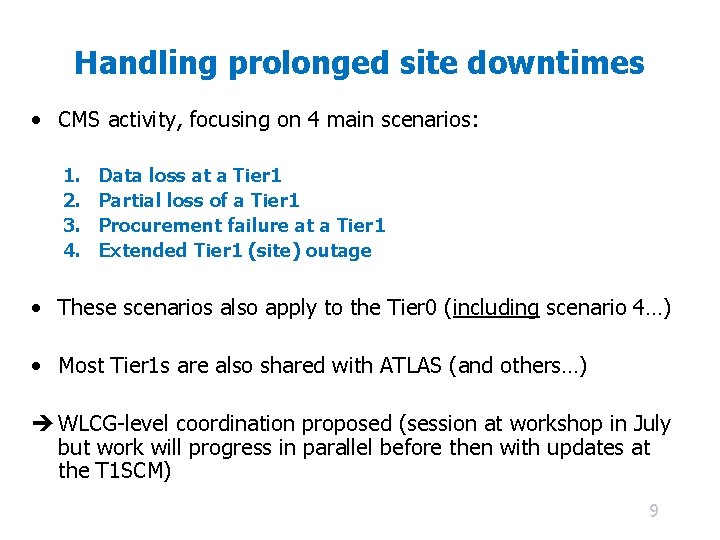

Handling prolonged site downtimes
• CMS activity, focusing on 4 main scenarios:
  1. Data loss at a Tier 1
  2. Partial loss of a Tier 1
  3. Procurement failure at a Tier 1
  4. Extended Tier 1 (site) outage
• These scenarios also apply to the Tier 0 (including scenario 4…)
• Most Tier 1s are also shared with ATLAS (and others…)
WLCG-level coordination proposed (session at workshop in July, but work will progress in parallel before then with updates at the T1 SCM)

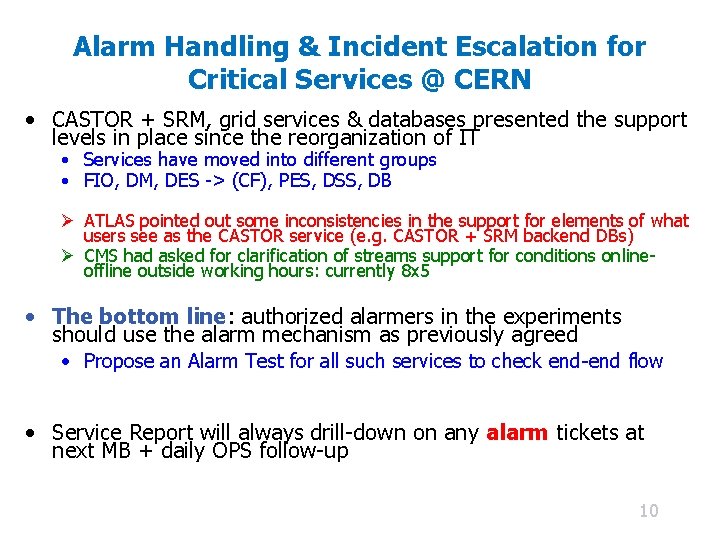

Alarm Handling & Incident Escalation for Critical Services @ CERN
• CASTOR + SRM, grid services & databases presented the support levels in place since the reorganization of IT
  • Services have moved into different groups
  • FIO, DM, DES -> (CF), PES, DSS, DB
Ø ATLAS pointed out some inconsistencies in the support for elements of what users see as the CASTOR service (e.g. CASTOR + SRM backend DBs)
Ø CMS had asked for clarification of Streams support for conditions online-offline outside working hours: currently 8x5
• The bottom line: authorized alarmers in the experiments should use the alarm mechanism as previously agreed
• Propose an Alarm Test for all such services to check the end-to-end flow
• Service Report will always drill down on any alarm tickets at next MB + daily OPS follow-up

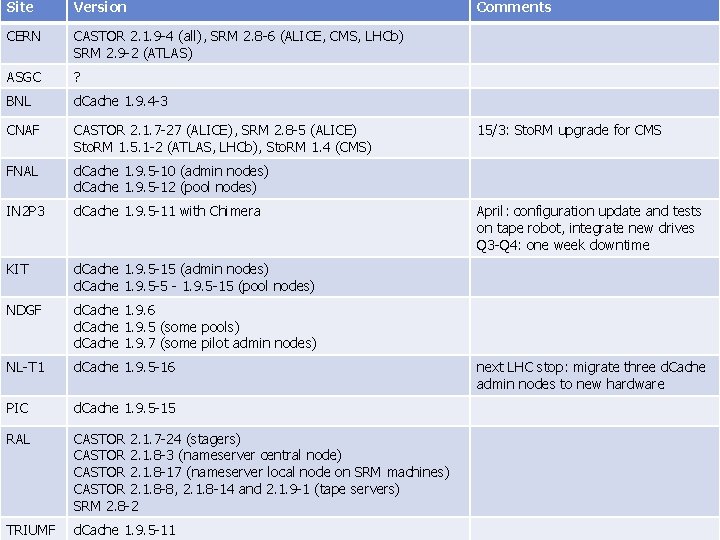

Data Management & Other Tier 1 Service Issues
• Regular updates on storage, transfer and data management services
  • FTS has been one of the main topics, but updates on versions of storage systems installed at sites, recommended versions, plans and major issues have also been covered
• The status of the installed versions (CASTOR, dCache, DPM, StoRM, …) and planned updates was covered in the last meeting, revealing quite some disparity (abridged table at end)
Ø Important to have this global overview of the status of services and medium-term planning at the sites…
• Release status handover “in practice”: from EGEE (IT-GT) to EGI (SP-PT) (Ibergrid)

Conditions Data Access & related services
• This includes COOL / CORAL server / FroNTier / Squid
• Issues reported not only (potentially) impact more than 1 VO but also sites supporting multiple VOs
• Discussions about caching strategies and issues, partitioning for conditions, support for CernVM at Tier 3s accessing squids at Tier 2s…
• The recommended versions for the FroNTier-squid server and for the FroNTier servlet are now included in the WLCG Baseline Versions Table
• Detailed report on problems seen with Oracle client and various attempts at their resolution (summarized next)

Oracle 11g client issues
• ATLAS reported crashes of the Oracle 11.2 client used by CORAL and COOL on AMD Opteron quad-core nodes at NDGF (ATLAS bug #62194).
• No progress from Oracle in several weeks – some suggested improvements in reporting and follow-up
• ATLAS rolled back to 10.2 client in February
  • No loss of functionality for current production releases, although the 11g client does provide new features that are currently being evaluated for CORAL, like client result caching and integration with TimesTen caching
• Final resolution still pending – and needs to be followed up on: should we maintain an action list?
  • Global or by area?

Experiment Database Service issues
• For a full DB service update – see IT-DB
• This slide just lists some of the key topics discussed in recent meetings:
  • Oracle patch update – status at the sites & plans;
  • Streams bugs, replication problems, status of storage and other upgrades (e.g. memory, RAC nodes);
  • Need for further consistency checks – e.g. in the case of replication of ATLAS conditions to BNL (followed up on experiment side);
• Having DBAs more exposed to the WLCG service perspective – as opposed to the pure DB-centric view – is felt to have been positive

Topics for Future Meetings
• Key issues from LHCb Tier 1 jamboree at NIKHEF
  • e.g. “Usability” plots used as a KPI in WLCG operations reports to MB and other dashboard / monitoring issues;
Ø Data access – a significant, long-standing problem! (All VOs)
  • (Update on Data access working group of Tech forum?)
• Update on GGUS ticket routing to the Tier 0
• Alarm testing of all Tier 0 critical services
  • Not the regular tests of the GGUS alarm chain…
• DB monitoring at Tier 1 sites – update (BNL)
Ø Jamborees provide very valuable input to the setting of Service Priorities for the coming months
• Preparation of July workshop + follow-on events

WLCG Collaboration Workshop
• The agenda for the July 7-9 workshop has been updated to give the experiments sequential slots for “jamborees” on Thursday 8th, plus a summary of the key issues on Friday 9th.
• Protection set so that the experiments can manage their own sessions: Federico, Latchezar, Kors, Ian, Marco, Philippe
Ø Non experiment-specific issues should be pulled out into the general sessions
• Sites are (presumably) welcome to attend all sessions that they feel are relevant – others too?
• There will be a small fee to cover refreshments + a sponsor for a “collaboration drink”
• Registration set to open 1st May

Summary
• WLCG T1 SCM is addressing a wide range of important service-related problems on a timescale of 1-2 weeks+ (longer in the case of Prolonged Site Downtime strategies)
• Complementary to other meetings such as daily operations calls, MB + GDB, workshops – to which regular summaries can be made – plus input to the WLCG Quarterly Reports
• Very good attendance so far and positive feedback received from the experiments – including suggestions for agenda items (also from sites)!
• Clear service recommendations are believed to be a strength…
Ø Further feedback welcome!

BACKUP

| Site | Version |
| --- | --- |
| CERN | CASTOR 2.1.9-4 (all); SRM 2.8-6 (ALICE, CMS, LHCb); SRM 2.9-2 (ATLAS) |
| ASGC | ? |
| BNL | dCache 1.9.4-3 |
| CNAF | CASTOR 2.1.7-27 (ALICE); SRM 2.8-5 (ALICE); StoRM 1.5.1-2 (ATLAS, LHCb); StoRM 1.4 (CMS) |
| FNAL | dCache 1.9.5-10 (admin nodes); dCache 1.9.5-12 (pool nodes) |
| IN2P3 | dCache 1.9.5-11 with Chimera |
| KIT | dCache 1.9.5-15 (admin nodes); dCache 1.9.5-5 - 1.9.5-15 (pool nodes) |
| NDGF | dCache 1.9.6; dCache 1.9.5 (some pools); dCache 1.9.7 (some pilot admin nodes) |
| NL-T1 | dCache 1.9.5-16 |
| PIC | dCache 1.9.5-15 |
| RAL | CASTOR 2.1.7-24 (stagers); CASTOR 2.1.8-3 (nameserver central node); CASTOR 2.1.8-17 (nameserver local node on SRM machines); CASTOR 2.1.8-8, 2.1.8-14 and 2.1.9-1 (tape servers); SRM 2.8-2 |
| TRIUMF | dCache 1.9.5-11 |

Comments
• 15/3: StoRM upgrade for CMS
• April: configuration update and tests on tape robot, integrate new drives
• Q3-Q4: one week downtime
• next LHC stop: migrate three dCache admin nodes to new hardware
