
Dave Probert, Ph.D., Windows Kernel Architect
Microsoft Windows Division

EVOLUTION OF THE WINDOWS KERNEL ARCHITECTURE

08.10.2009, Buenos Aires
Copyright Microsoft Corporation

About Me

- Ph.D. in Computer Engineering (Operating Systems w/o Kernels)
- Kernel Architect at Microsoft for over 13 years
- Managed platform-independent kernel development in Win2K/XP
- Working on multi-core & heterogeneous parallel computing support
  - Architect for UMS in Windows 7 / Windows Server 2008 R2
- Co-instigator of the Windows Academic Program
- Providing kernel source and curriculum materials to universities
- http://microsoft.com/WindowsAcademic or compsci@microsoft.com
- Wrote the Windows material for leading OS textbooks
  - Tanenbaum, Silberschatz, Stallings
  - Consulted on others, including a successful OS textbook in China

![UNIX vs NT Design Environments](http://slidetodoc.com/presentation_image_h/8fbc4fb71cc5e8478acf643bde9eacb3/image-3.jpg)

UNIX vs NT Design Environments

Environment which influenced fundamental design decisions

| UNIX [1969] | Windows (NT) [1989] |
| --- | --- |
| 16-bit program address space | 32-bit program address space |
| Kbytes of physical memory | Mbytes of physical memory |
| Swapping system with memory mapping | Virtual memory |
| Kbytes of disk, fixed disks | Mbytes of disk, removable disks |
| Uniprocessor | Multiprocessor (4-way) |
| State-machine based I/O devices | Micro-controller based I/O devices |
| Standalone interactive systems | Client/Server distributed computing |
| Small number of friendly users | Large, diverse user populations |

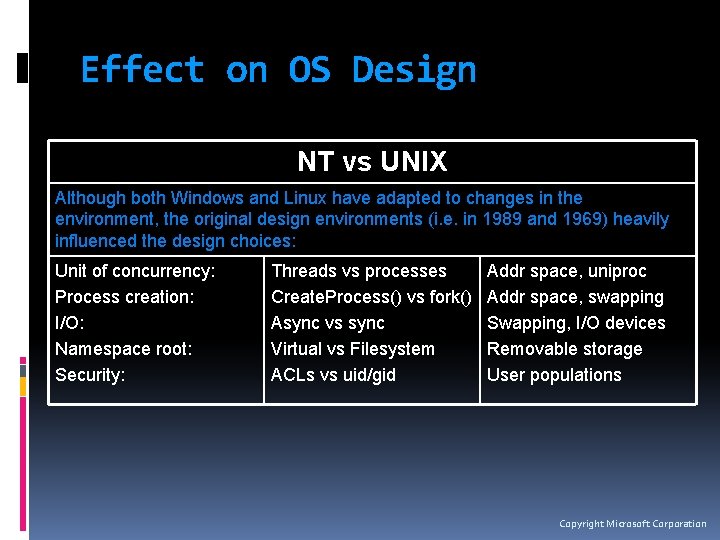

Effect on OS Design: NT vs UNIX

Although both Windows and Linux have adapted to changes in the environment, the original design environments (i.e. in 1989 and 1969) heavily influenced the design choices:

| Design choice | NT vs UNIX | Influenced by |
| --- | --- | --- |
| Unit of concurrency | Threads vs processes | Addr space, uniproc |
| Process creation | CreateProcess() vs fork() | Addr space, swapping |
| I/O | Async vs sync | Swapping, I/O devices |
| Namespace root | Virtual vs Filesystem | Removable storage |
| Security | ACLs vs uid/gid | User populations |
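As a rough illustration of the process-creation contrast, the sketch below shows the two models in Python. The helper names `spawn_child` and `fork_child` are hypothetical. `subprocess.run` stands in for a CreateProcess()-style call (a fresh program image started with explicit arguments), while `os.fork` clones the caller and exists only on UNIX-like systems. This is an analogy under those assumptions, not how either kernel implements process creation.

```python
import os
import subprocess
import sys


def spawn_child(message: str) -> str:
    """CreateProcess()-style: start a new program image and hand it arguments."""
    result = subprocess.run(
        [sys.executable, "-c", "import sys; print(sys.argv[1].upper())", message],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


def fork_child(message: str) -> str:
    """fork()-style: clone the caller; the child already has `message` in memory."""
    if not hasattr(os, "fork"):
        raise OSError("fork() is not available on this platform")
    read_fd, write_fd = os.pipe()
    pid = os.fork()
    if pid == 0:
        # Child: runs on a copy of the parent's address space.
        try:
            os.close(read_fd)
            os.write(write_fd, message.upper().encode())
        finally:
            os._exit(0)  # leave immediately, skipping the parent's cleanup code
    # Parent: close the unused write end, then read what the child produced.
    os.close(write_fd)
    with os.fdopen(read_fd, "rb") as reader:
        data = reader.read()
    os.waitpid(pid, 0)
    return data.decode()
```

In the spawn model everything the child needs is passed explicitly; in the fork model the child inherits it, which is cheap on a swapping uniprocessor but awkward once a process holds many threads.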

![Today’s Environment [2009]](http://slidetodoc.com/presentation_image_h/8fbc4fb71cc5e8478acf643bde9eacb3/image-5.jpg)

Today’s Environment [2009]

- 64-bit addresses
- GBytes of physical memory
- TBytes of rotational disk
- New storage hierarchies (SSDs)
- Hypervisors, virtual processors
- Multi-core/Many-core
- Heterogeneous CPU architectures, fixed-function hardware
- High-speed internet/intranet, Web Services
- Media-rich applications
- Single user, but vulnerable to hackers worldwide
- Convergence: Smartphone / Netbook / Laptop / Desktop / TV / Web / Cloud

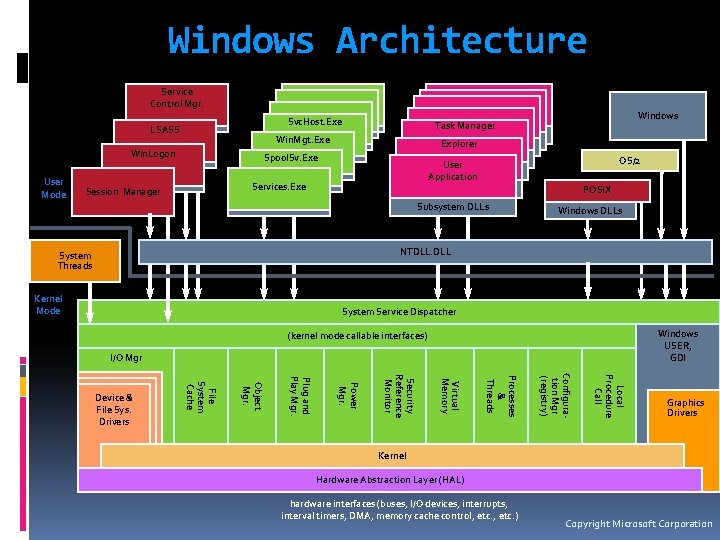

Windows Architecture

- User Mode
  - System Processes: Service Control Mgr., LSASS, WinLogon, Session Manager
  - Services: SvcHost.Exe, WinMgt.Exe, SpoolSv.Exe, Services.Exe
  - Applications: Task Manager, Explorer, User Application
  - Environment Subsystems: OS/2, POSIX, Windows
  - Subsystem DLLs, Windows DLLs
  - NTDLL.DLL
- Kernel Mode
  - System Threads
  - System Service Dispatcher
  - (kernel mode callable interfaces)
  - Windows USER, GDI
  - I/O Mgr, File System Cache, Object Mgr., Plug and Play Mgr., Power Mgr., Security Reference Monitor, Virtual Memory, Processes & Threads, Configuration Mgr (registry), Local Procedure Call
  - Device & File Sys. Drivers, Graphics Drivers
  - Kernel
  - Hardware Abstraction Layer (HAL)
- hardware interfaces (buses, I/O devices, interrupts, interval timers, DMA, memory cache control, etc.)

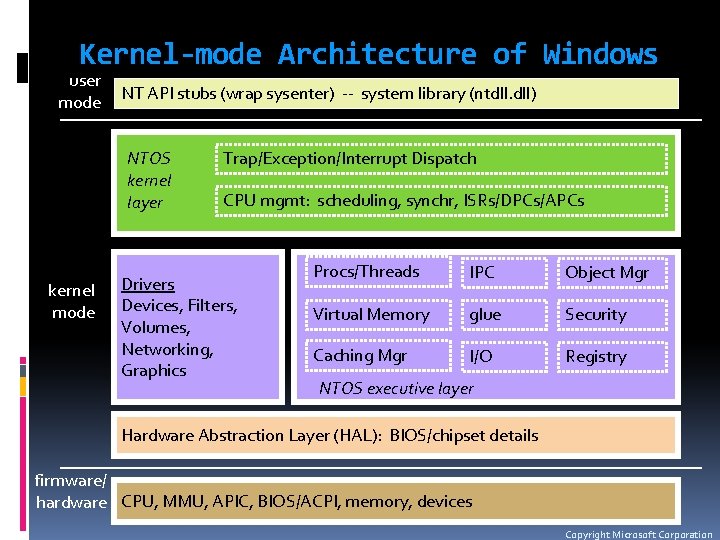

Kernel-mode Architecture of Windows

- user mode
  - NT API stubs (wrap sysenter), system library (ntdll.dll)
- kernel mode
  - NTOS kernel layer
    - Trap/Exception/Interrupt Dispatch
    - CPU mgmt: scheduling, synchr, ISRs/DPCs/APCs
  - Drivers: Devices, Filters, Volumes, Networking, Graphics
  - NTOS executive layer: Procs/Threads, IPC, Object Mgr, Virtual Memory, glue, Security, Caching Mgr, I/O, Registry
  - Hardware Abstraction Layer (HAL): BIOS/chipset details
- firmware/hardware
  - CPU, MMU, APIC, BIOS/ACPI, memory, devices

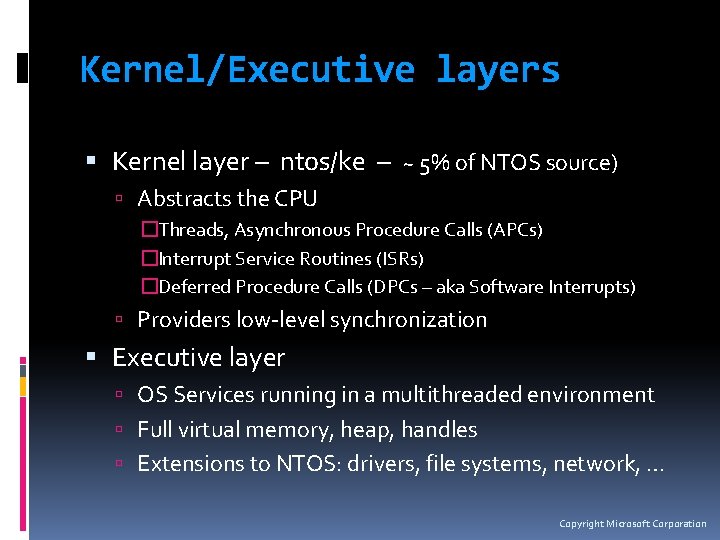

Kernel/Executive layers

- Kernel layer (ntos/ke, ~5% of NTOS source)
  - Abstracts the CPU
    - Threads, Asynchronous Procedure Calls (APCs)
    - Interrupt Service Routines (ISRs)
    - Deferred Procedure Calls (DPCs, aka Software Interrupts)
  - Provides low-level synchronization
- Executive layer
  - OS Services running in a multithreaded environment
  - Full virtual memory, heap, handles
- Extensions to NTOS: drivers, file systems, network, …

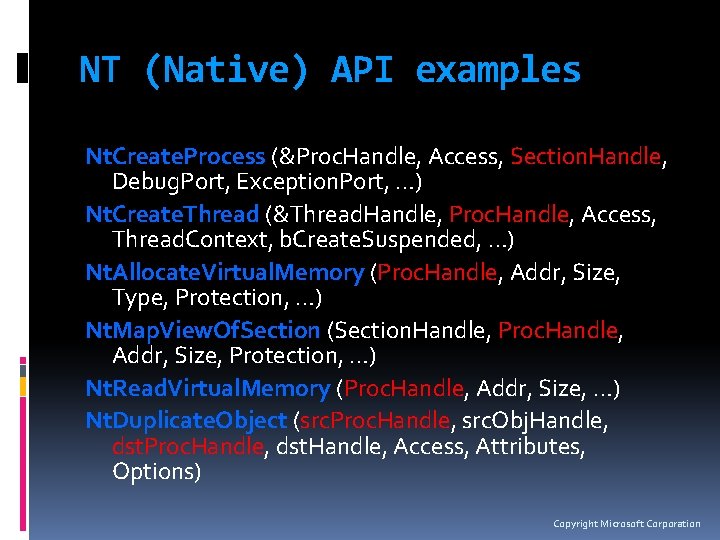

NT (Native) API examples

- NtCreateProcess(&ProcHandle, Access, SectionHandle, DebugPort, ExceptionPort, …)
- NtCreateThread(&ThreadHandle, ProcHandle, Access, ThreadContext, bCreateSuspended, …)
- NtAllocateVirtualMemory(ProcHandle, Addr, Size, Type, Protection, …)
- NtMapViewOfSection(SectionHandle, ProcHandle, Addr, Size, Protection, …)
- NtReadVirtualMemory(ProcHandle, Addr, Size, …)
- NtDuplicateObject(srcProcHandle, srcObjHandle, dstProcHandle, dstHandle, Access, Attributes, Options)

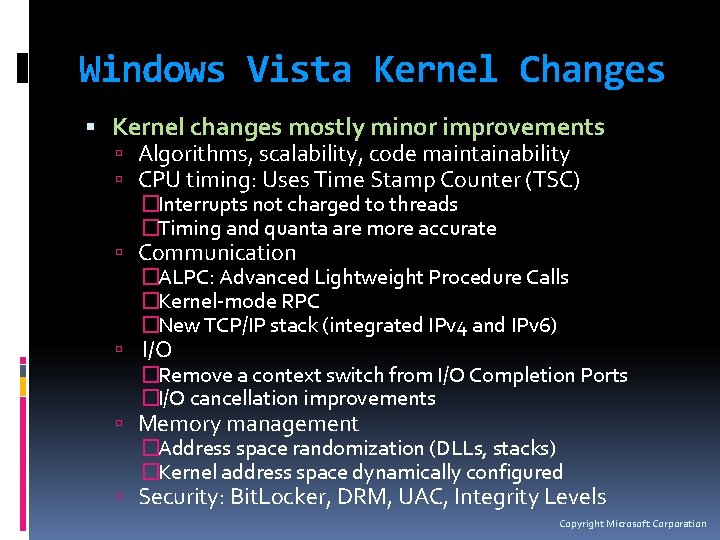

Windows Vista Kernel Changes

- Kernel changes mostly minor improvements
  - Algorithms, scalability, code maintainability
- CPU timing: Uses Time Stamp Counter (TSC)
  - Interrupts not charged to threads
  - Timing and quanta are more accurate
- Communication
  - ALPC: Advanced Lightweight Procedure Calls
  - Kernel-mode RPC
  - New TCP/IP stack (integrated IPv4 and IPv6)
- I/O
  - Remove a context switch from I/O Completion Ports
  - I/O cancellation improvements
- Memory management
  - Address space randomization (DLLs, stacks)
  - Kernel address space dynamically configured
- Security: BitLocker, DRM, UAC, Integrity Levels

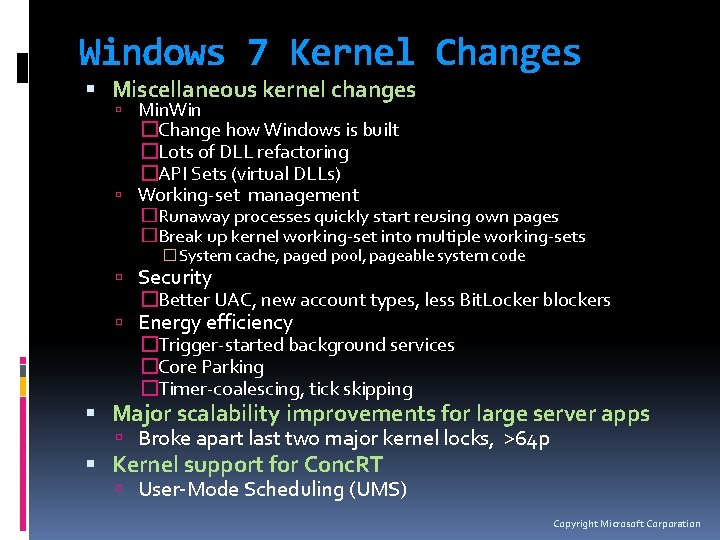

Windows 7 Kernel Changes

- Miscellaneous kernel changes
- MinWin
  - Change how Windows is built
  - Lots of DLL refactoring
  - API Sets (virtual DLLs)
- Working-set management
  - Runaway processes quickly start reusing own pages
  - Break up kernel working-set into multiple working-sets
  - System cache, paged pool, pageable system code
- Security
  - Better UAC, new account types, fewer BitLocker blockers
- Energy efficiency
  - Trigger-started background services
  - Core Parking
  - Timer-coalescing, tick skipping
- Major scalability improvements for large server apps
  - Broke apart last two major kernel locks, >64p
- Kernel support for ConcRT
  - User-Mode Scheduling (UMS)

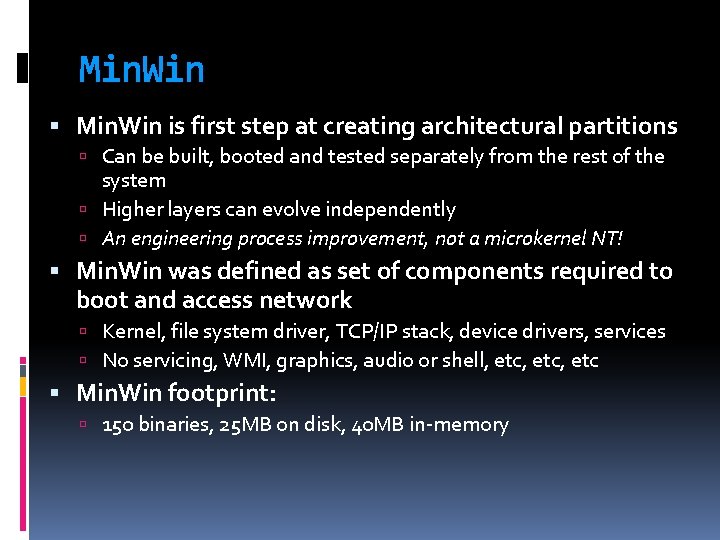

- MinWin is a first step toward creating architectural partitions
  - Can be built, booted and tested separately from the rest of the system
  - Higher layers can evolve independently
  - An engineering process improvement, not a microkernel NT!
- MinWin was defined as the set of components required to boot and access the network
  - Kernel, file system driver, TCP/IP stack, device drivers, services
  - No servicing, WMI, graphics, audio or shell, etc.
- MinWin footprint: 150 binaries, 25 MB on disk, 40 MB in-memory

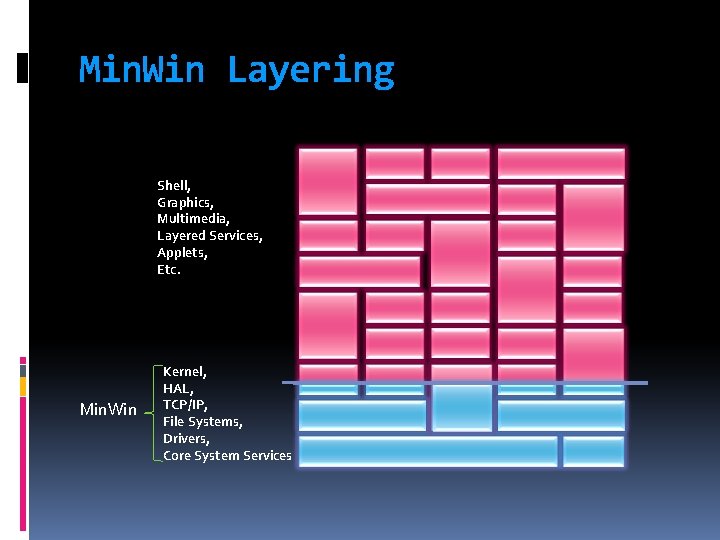

MinWin Layering

- Above MinWin: Shell, Graphics, Multimedia, Layered Services, Applets, etc.
- MinWin: Kernel, HAL, TCP/IP, File Systems, Drivers, Core System Services

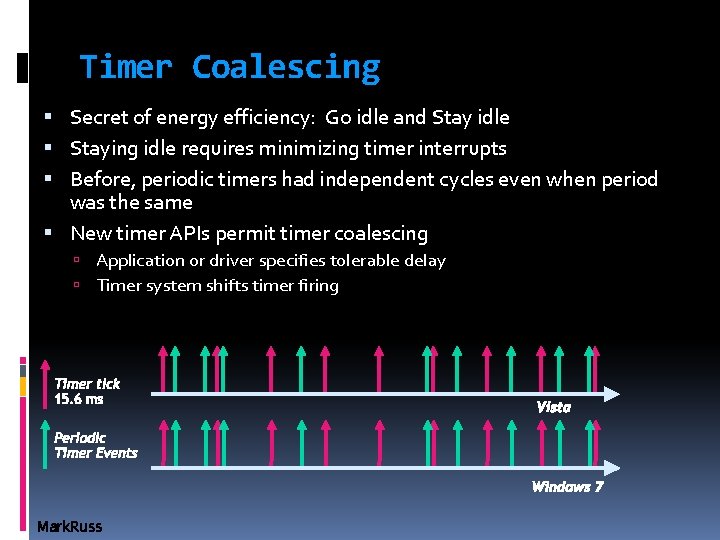

Timer Coalescing

- Secret of energy efficiency: go idle and stay idle
- Staying idle requires minimizing timer interrupts
- Before, periodic timers had independent cycles even when period was the same
- New timer APIs permit timer coalescing
  - Application or driver specifies tolerable delay
  - Timer system shifts timer firing

Figure: periodic timer events against the 15.6 ms timer tick, Vista vs Windows 7 (credit: MarkRuss)
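To make the effect of a tolerable delay concrete, here is a toy model that counts how many distinct instants the CPU has to wake at. It assumes coalescing works by delaying each expiration to the next shared alignment boundary whenever that delay fits within the tolerance; the actual Windows policy is not described in the slides, and the function name and parameters are hypothetical. On Windows 7 the tolerable delay is supplied through calls such as `SetWaitableTimerEx` (user mode) or `KeSetCoalescableTimer` (drivers), which this sketch does not call.

```python
def count_wakeups(timers, horizon_ms, tolerance_ms=0, align_ms=1):
    """Count distinct wakeup instants for a set of periodic timers.

    timers: list of (first_due_ms, period_ms) pairs, all integers.
    An expiration is delayed to the next multiple of align_ms only if
    that delay does not exceed tolerance_ms (assumed policy).
    """
    instants = set()
    for first_due, period in timers:
        due = first_due
        while due < horizon_ms:
            aligned = -(-due // align_ms) * align_ms  # round up to boundary
            fire = aligned if aligned - due <= tolerance_ms else due
            instants.add(fire)
            due += period
    return len(instants)


# Four timers with the same 100 ms period but independent phases.
TIMERS = [(3, 100), (17, 100), (42, 100), (88, 100)]
uncoalesced = count_wakeups(TIMERS, horizon_ms=1000)
coalesced = count_wakeups(TIMERS, horizon_ms=1000, tolerance_ms=50, align_ms=50)
```

Under these assumptions the four timers cause 40 wakeups per second when they fire independently and 20 when each tolerates up to 50 ms of delay, because three of them collapse onto the same boundary.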

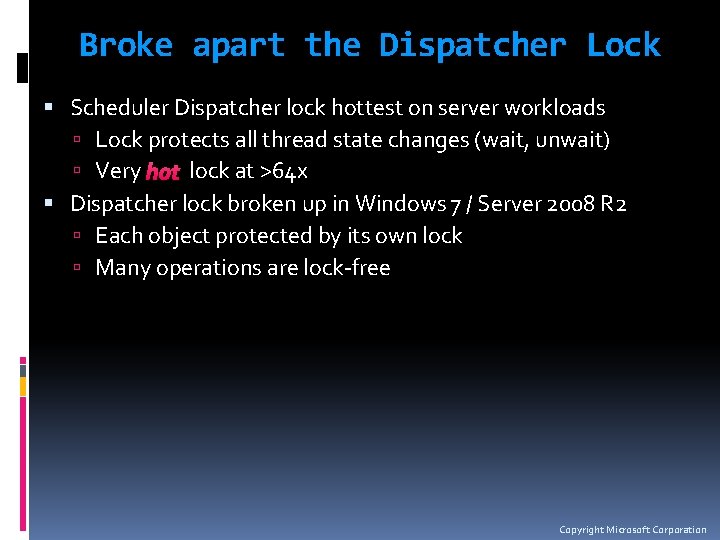

Broke apart the Dispatcher Lock

- Scheduler Dispatcher lock hottest on server workloads
  - Lock protects all thread state changes (wait, unwait)
  - Very hot lock at >64x
- Dispatcher lock broken up in Windows 7 / Server 2008 R2
  - Each object protected by its own lock
  - Many operations are lock-free
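The difference between one global lock and a lock per object can be sketched structurally as follows. The class and function names are hypothetical, and the code only shows where contention arises; Python's interpreter lock means it will not reproduce the scalability gain, and it does not model the lock-free paths mentioned above.

```python
import threading


class GlobalLockObjects:
    """All objects share one lock, like a single dispatcher lock."""

    def __init__(self, count):
        self._lock = threading.Lock()
        self.signal_counts = [0] * count

    def signal(self, index):
        with self._lock:  # every state change on any object serializes here
            self.signal_counts[index] += 1


class PerObjectLockObjects:
    """Each object carries its own lock, so unrelated objects do not contend."""

    def __init__(self, count):
        self._locks = [threading.Lock() for _ in range(count)]
        self.signal_counts = [0] * count

    def signal(self, index):
        with self._locks[index]:  # only users of this object wait for each other
            self.signal_counts[index] += 1


def hammer(objects, object_count, thread_count=8, signals_per_thread=1000):
    """Signal objects from several threads; return the total number of signals."""

    def work(thread_index):
        for step in range(signals_per_thread):
            objects.signal((thread_index + step) % object_count)

    threads = [threading.Thread(target=work, args=(i,)) for i in range(thread_count)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return sum(objects.signal_counts)
```

Both variants produce the same totals; the per-object version simply removes the single point every thread had to pass through.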

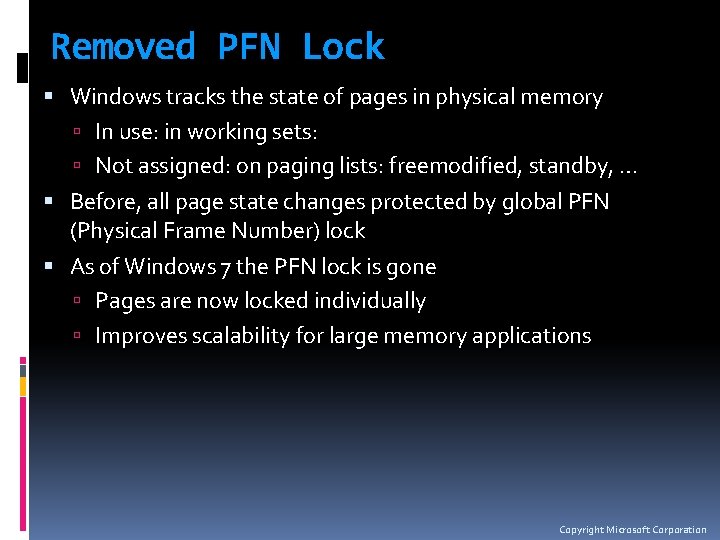

Removed PFN Lock

- Windows tracks the state of pages in physical memory
  - In use: in working sets
  - Not assigned: on paging lists (free, modified, standby, …)
- Before, all page state changes protected by global PFN (Physical Frame Number) lock
- As of Windows 7 the PFN lock is gone
  - Pages are now locked individually
  - Improves scalability for large memory applications

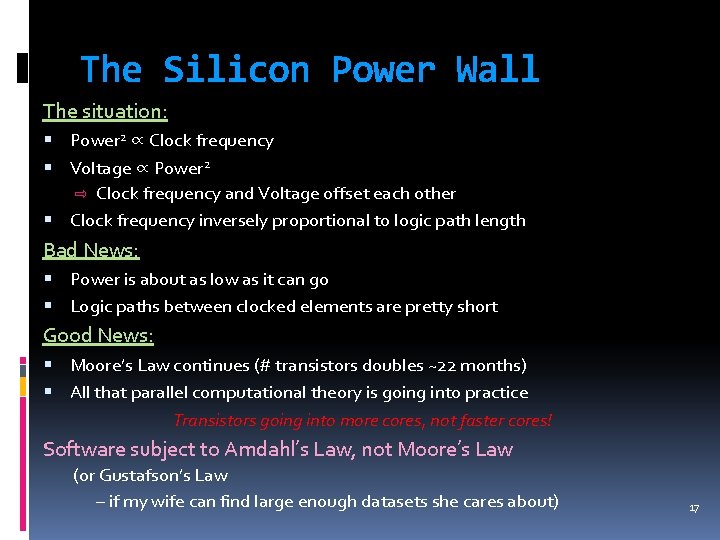

The Silicon Power Wall

- The situation:
  - Power ∝ Clock frequency
  - Power ∝ Voltage²
  - ⇨ Clock frequency and Voltage offset each other
  - Clock frequency inversely proportional to logic path length
- Bad News:
  - Power is about as low as it can go
  - Logic paths between clocked elements are pretty short
- Good News:
  - Moore’s Law continues (# transistors doubles ~22 months)
  - All that parallel computational theory is going into practice
- Transistors going into more cores, not faster cores!
- Software subject to Amdahl’s Law, not Moore’s Law (or Gustafson’s Law, if my wife can find large enough datasets she cares about)
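For reference, the two laws named above can be written as small functions. These are the standard textbook formulas rather than anything taken from the slides, and the function names are hypothetical. Amdahl's Law fixes the problem size, so the serial fraction caps the speedup; Gustafson's Law lets the problem grow with the core count, with the parallel fraction measured on the parallel run.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Fixed problem size: speedup is bounded by the serial fraction."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def gustafson_speedup(parallel_fraction, cores):
    """Problem size scales with cores: speedup grows nearly linearly."""
    serial_fraction = 1.0 - parallel_fraction
    return serial_fraction + parallel_fraction * cores
```

With 90% of the work parallel, `amdahl_speedup(0.9, cores)` can never exceed 10 no matter how many cores are added, which is why more cores alone do not speed up existing software.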

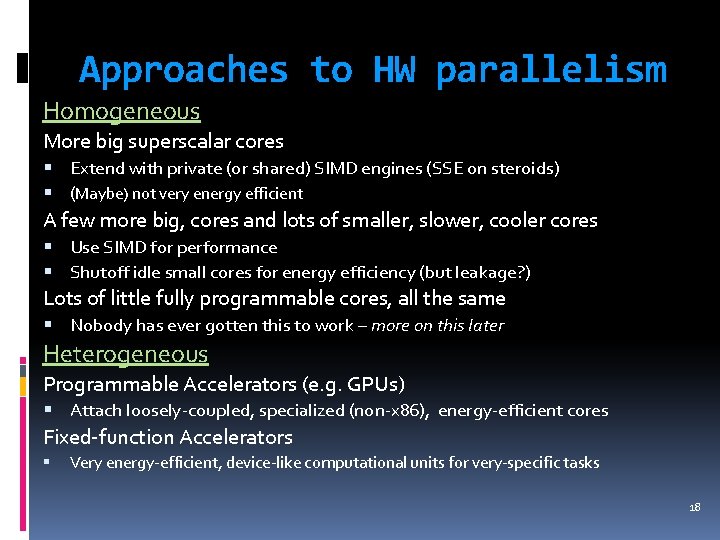

Approaches to HW parallelism

- Homogeneous
  - More big superscalar cores
    - Extend with private (or shared) SIMD engines (SSE on steroids)
    - (Maybe) not very energy efficient
  - A few more big cores and lots of smaller, slower, cooler cores
    - Use SIMD for performance
    - Shut off idle small cores for energy efficiency (but leakage?)
  - Lots of little fully programmable cores, all the same
    - Nobody has ever gotten this to work (more on this later)
- Heterogeneous
  - Programmable Accelerators (e.g. GPUs)
    - Attach loosely-coupled, specialized (non-x86), energy-efficient cores
  - Fixed-function Accelerators
    - Very energy-efficient, device-like computational units for very-specific tasks

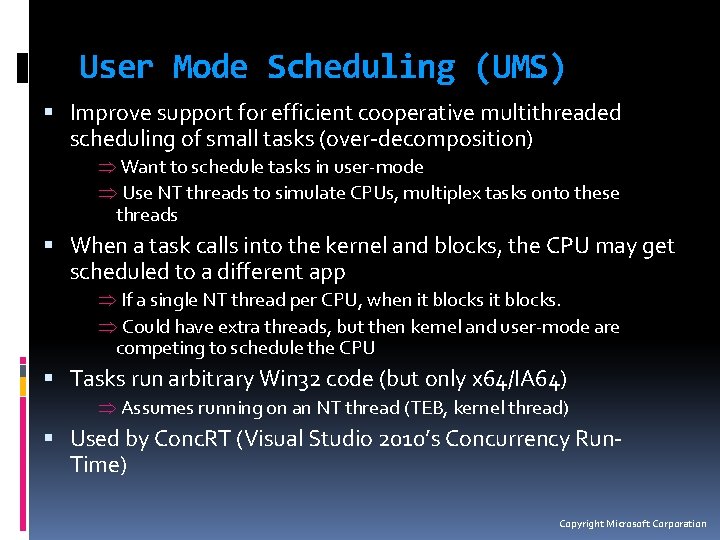

User Mode Scheduling (UMS)

- Improve support for efficient cooperative multithreaded scheduling of small tasks (over-decomposition)
  - Want to schedule tasks in user-mode
  - Use NT threads to simulate CPUs, multiplex tasks onto these threads
- When a task calls into the kernel and blocks, the CPU may get scheduled to a different app
  - With a single NT thread per CPU, when that thread blocks the app loses the CPU
  - Could have extra threads, but then kernel and user-mode are competing to schedule the CPU
- Tasks run arbitrary Win32 code (but only x64/IA64)
  - Assumes running on an NT thread (TEB, kernel thread)
- Used by ConcRT (Visual Studio 2010’s Concurrency RunTime)

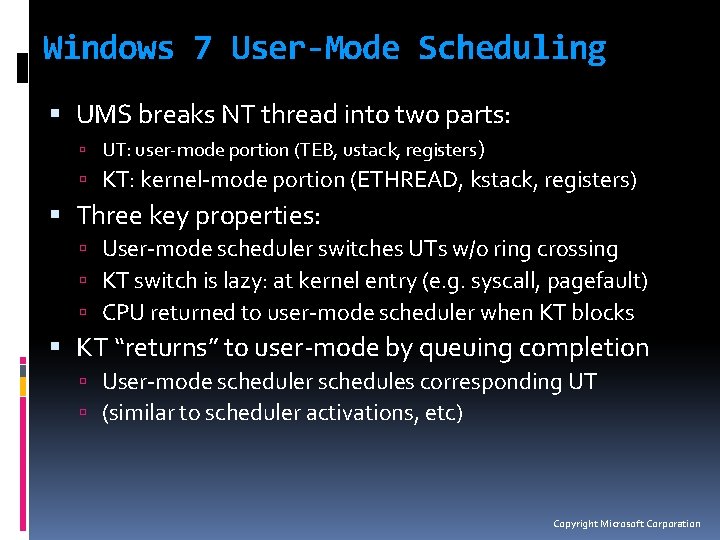

Windows 7 User-Mode Scheduling

- UMS breaks NT thread into two parts:
  - UT: user-mode portion (TEB, ustack, registers)
  - KT: kernel-mode portion (ETHREAD, kstack, registers)
- Three key properties:
  - User-mode scheduler switches UTs w/o ring crossing
  - KT switch is lazy: at kernel entry (e.g. syscall, pagefault)
  - CPU returned to user-mode scheduler when KT blocks
- KT “returns” to user-mode by queuing completion
  - User-mode scheduler schedules corresponding UT
  - (similar to scheduler activations, etc)

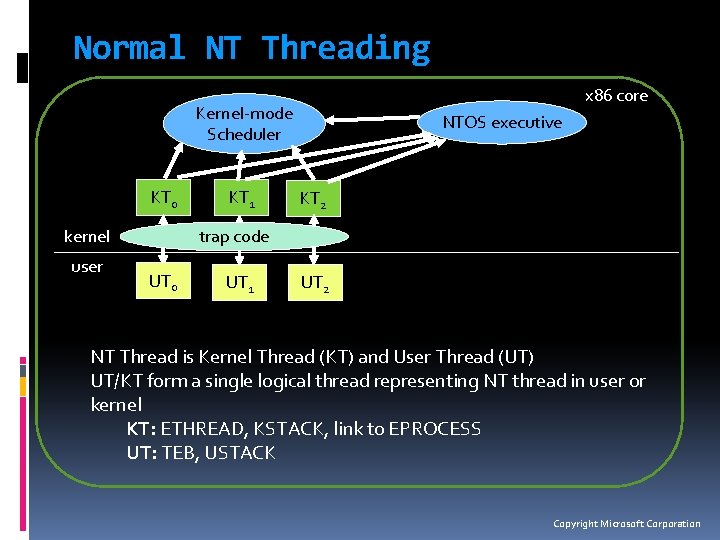

Normal NT Threading

Diagram: an x86 core runs the kernel-mode scheduler. On the kernel side, KT0, KT1 and KT2 sit above the NTOS executive and trap code; on the user side are UT0, UT1 and UT2.

- NT Thread is Kernel Thread (KT) and User Thread (UT)
- UT/KT form a single logical thread representing NT thread in user or kernel
  - KT: ETHREAD, KSTACK, link to EPROCESS
  - UT: TEB, USTACK

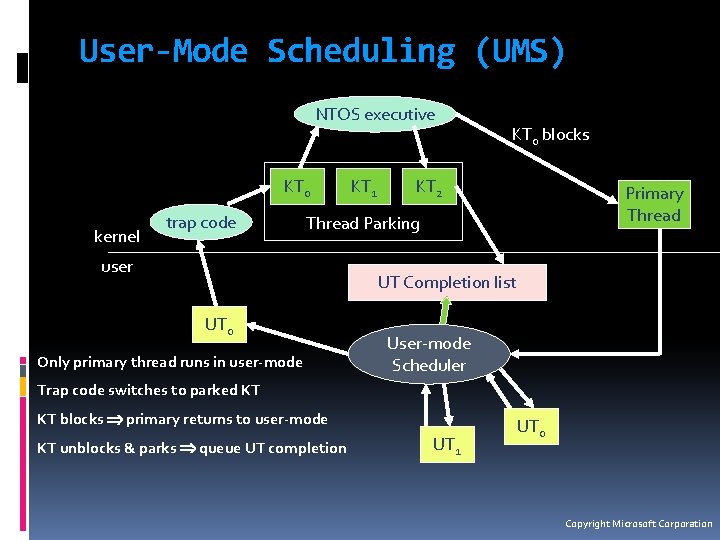

User-Mode Scheduling (UMS)

Diagram: on the kernel side, the NTOS executive, trap code, KT0, KT1, KT2, the Primary and Thread Parking; on the user side, the User-mode Scheduler, the UT Completion list, UT0 and UT1. The example shows KT0 blocking.

- Only primary thread runs in user-mode
- Trap code switches to parked KT
- KT blocks ⇨ primary returns to user-mode
- KT unblocks & parks ⇨ queue UT completion

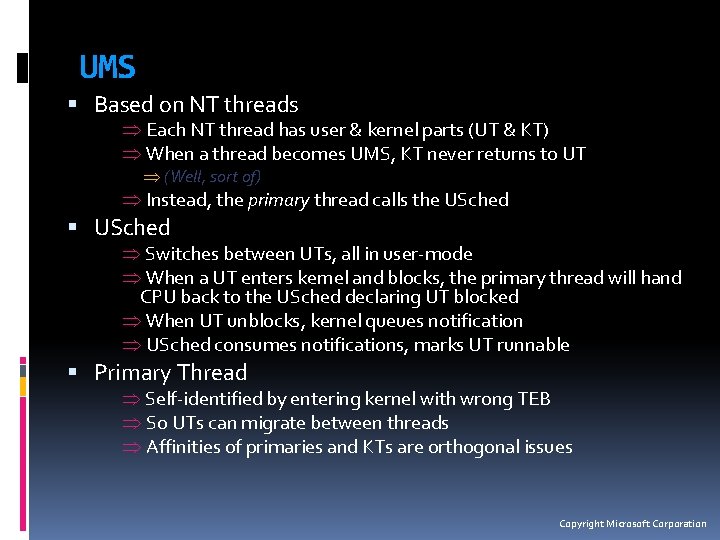

UMS

- Based on NT threads
  - Each NT thread has user & kernel parts (UT & KT)
  - When a thread becomes UMS, KT never returns to UT (well, sort of)
  - Instead, the primary thread calls the USched
  - Switches between UTs, all in user-mode
  - When a UT enters kernel and blocks, the primary thread will hand CPU back to the USched declaring UT blocked
  - When UT unblocks, kernel queues notification
  - USched consumes notifications, marks UT runnable
- Primary Thread
  - Self-identified by entering kernel with wrong TEB
  - So UTs can migrate between threads
  - Affinities of primaries and KTs are orthogonal issues
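The control flow described above (switch UTs in user mode, give the CPU back to the scheduler when a UT blocks in the kernel, then re-queue the UT from a completion list) can be mimicked with a small single-threaded simulation. Everything here is a hypothetical model: generators stand in for UTs, a countdown stands in for a blocking kernel call, and none of the names correspond to the Windows UMS API.

```python
from collections import deque


def task(steps, block_at=None, block_ticks=0):
    """A simulated UT: yields None to cooperate, or a tick count to block."""
    for step in range(steps):
        yield block_ticks if step == block_at else None


def run_usched(named_tasks):
    """Run (name, generator) pairs on one simulated primary; return the trace."""
    ready = deque(named_tasks)
    blocked = []               # [remaining_ticks, name, ut] inside the "kernel"
    completion_list = deque()  # UTs whose blocking call has finished
    trace = []
    while ready or blocked or completion_list:
        # USched consumes completion notifications and marks those UTs runnable.
        ready.extend(completion_list)
        completion_list.clear()
        if ready:
            name, ut = ready.popleft()
            trace.append(name)
            try:
                ticks = next(ut)  # run the UT until it yields or blocks
            except StopIteration:
                pass  # UT finished
            else:
                if ticks is None:
                    ready.append((name, ut))  # cooperative yield
                else:
                    blocked.append([ticks, name, ut])  # CPU returns to USched
        else:
            trace.append("idle")  # nothing runnable until a completion arrives
        # Simulated kernel: advance blocked calls and queue completions.
        still_blocked = []
        for entry in blocked:
            entry[0] -= 1
            if entry[0] <= 0:
                completion_list.append((entry[1], entry[2]))
            else:
                still_blocked.append(entry)
        blocked = still_blocked
    return trace
```

Running `run_usched([("A", task(3, block_at=1, block_ticks=2)), ("B", task(3))])` shows B continuing to run while A is blocked, with no idle slots; a lone blocking task, by contrast, leaves the simulated CPU idle until its completion is queued.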

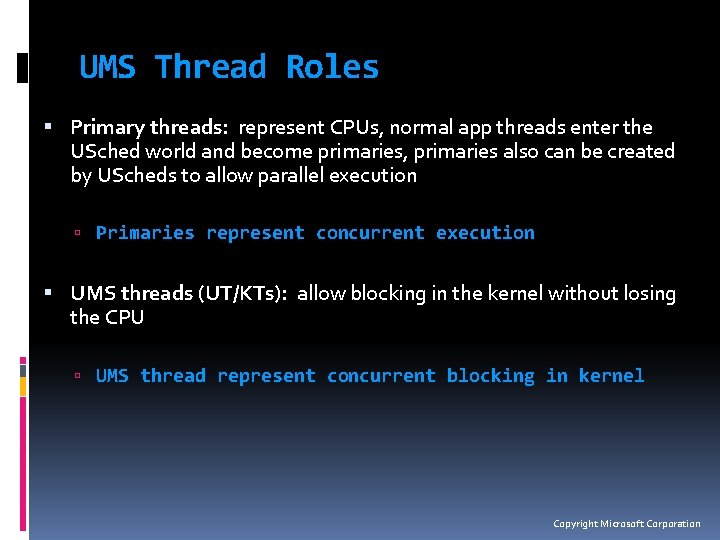

UMS Thread Roles

- Primary threads: represent CPUs. Normal app threads enter the USched world and become primaries; primaries can also be created by UScheds to allow parallel execution
  - Primaries represent concurrent execution
- UMS threads (UT/KTs): allow blocking in the kernel without losing the CPU
  - UMS threads represent concurrent blocking in kernel

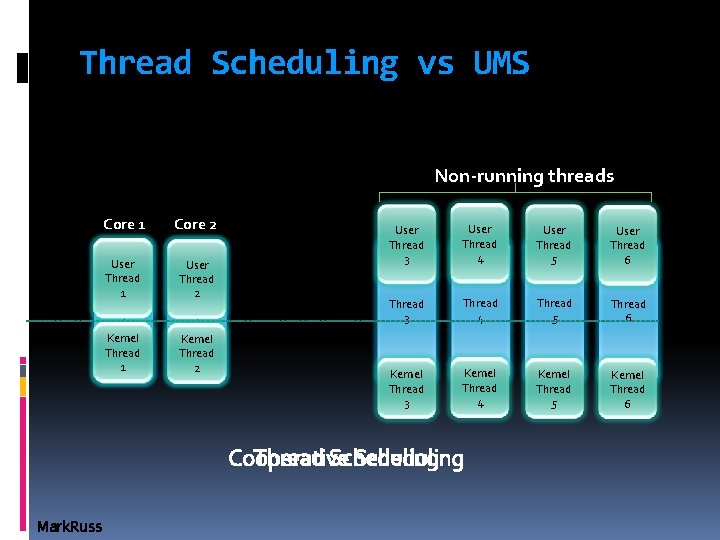

Thread Scheduling vs UMS

Figure: two cores (Core 1, Core 2) and a pool of non-running threads, shown for Thread Scheduling and for Cooperative Scheduling. The labels are Threads 3 to 6, User Threads 1 to 6 and Kernel Threads 1 to 6 (credit: MarkRuss)

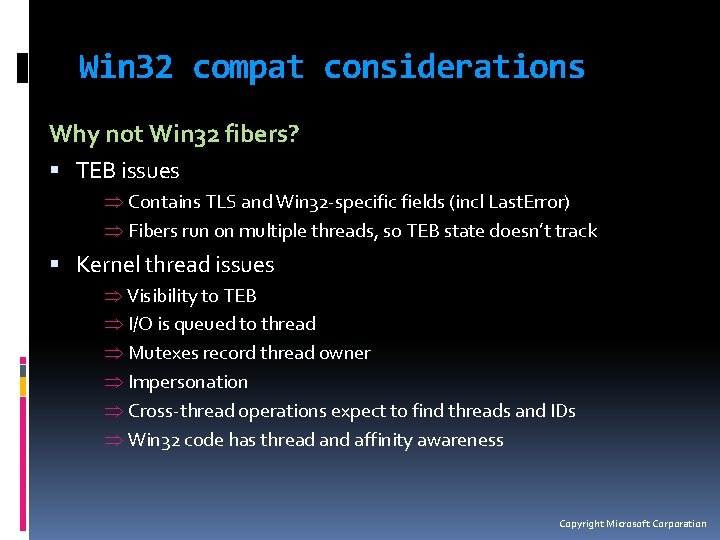

Win32 compat considerations

- Why not Win32 fibers?
- TEB issues
  - Contains TLS and Win32-specific fields (incl LastError)
  - Fibers run on multiple threads, so TEB state doesn’t track
- Kernel thread issues
  - Visibility to TEB
  - I/O is queued to thread
  - Mutexes record thread owner
  - Impersonation
  - Cross-thread operations expect to find threads and IDs
  - Win32 code has thread and affinity awareness

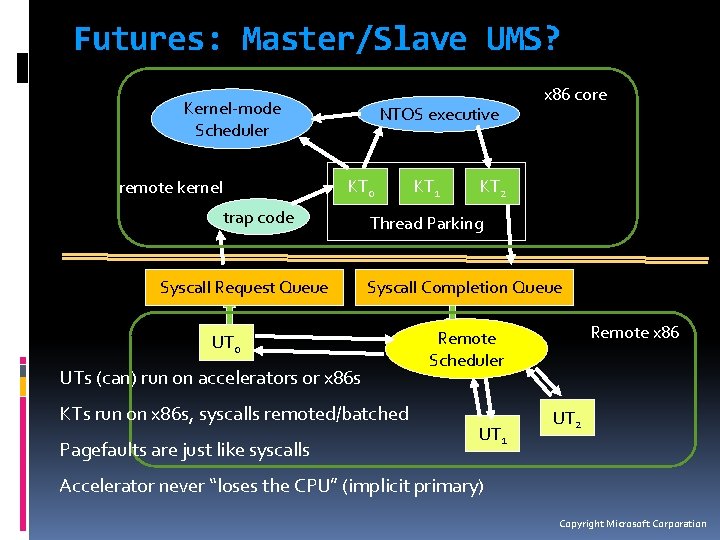

Futures: Master/Slave UMS?

Diagram: an x86 core runs the kernel-mode scheduler, NTOS executive, trap code, KT0, KT1, KT2 and Thread Parking; a remote x86 or accelerator runs the Remote Scheduler with UT0, UT1 and UT2. A Syscall Request Queue and a Syscall Completion Queue connect the two sides.

- UTs (can) run on accelerators or x86s
- KTs run on x86s, syscalls remoted/batched
- Pagefaults are just like syscalls
- Accelerator never “loses the CPU” (implicit primary)

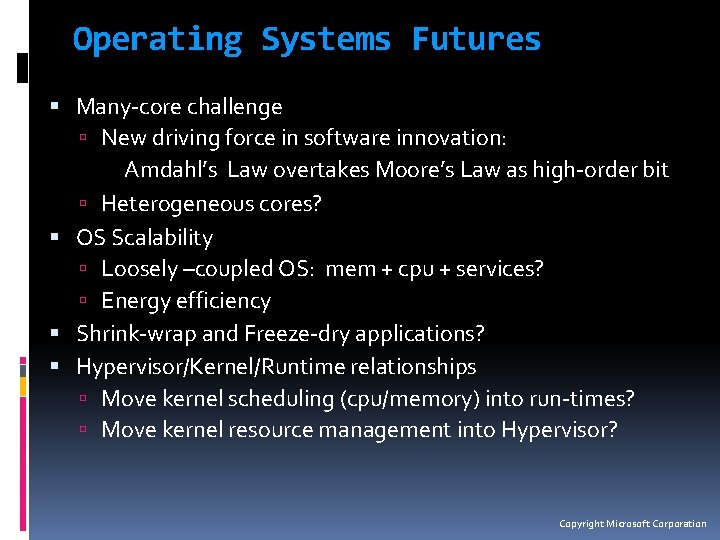

Operating Systems Futures

- Many-core challenge
  - New driving force in software innovation: Amdahl’s Law overtakes Moore’s Law as high-order bit
  - Heterogeneous cores?
- OS Scalability
  - Loosely-coupled OS: mem + cpu + services?
- Energy efficiency
  - Shrink-wrap and Freeze-dry applications?
- Hypervisor/Kernel/Runtime relationships
  - Move kernel scheduling (cpu/memory) into run-times?
  - Move kernel resource management into Hypervisor?

Windows Academic Program

- Windows Kernel Internals
  - Windows kernel in source (Windows Research Kernel, WRK)
  - Windows kernel in PowerPoint (Curriculum Resource Kit, CRK)
- Based on Windows Server 2003 Service Pack 1
  - Latest kernel at time of release
  - First kernel release with AMD64 support
- Joint program between Windows Product Group and MS Academic Groups
  - Program directed by Arkady Retik (Need a DVD? Have questions?)
- Information available at
  - http://microsoft.com/WindowsAcademic OR
  - compsci@microsoft.com
- Microsoft Academic Contacts in Buenos Aires
  - Miguel Saez (masaez@microsoft.com) or Ezequiel Glinsky (eglinsky@microsoft.com)

Thank you very much
