Data-Driven Algorithm Design

Tim Roughgarden (Columbia), joint work with Rishi Gupta

Selecting an Algorithm

“I need to solve problem X. Which algorithm should I use?”

Answer usually depends on the details of the application (i.e., the instances of interest).
• for most problems, no “silver bullet” algorithm

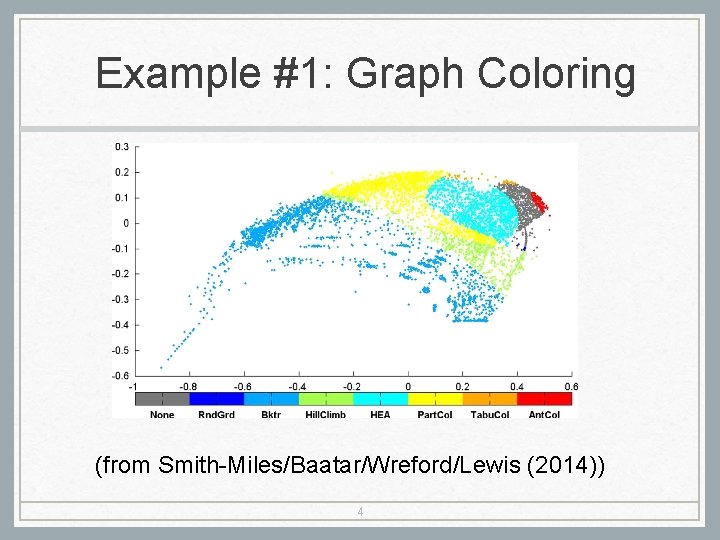

Example #1: Graph Coloring

(from Smith-Miles/Baatar/Wreford/Lewis (2014))

Example #2: SATzilla

Next-level idea: choose an algorithm on a per-instance basis, as a function of instance features. [Xu/Hutter/Hoos/Leyton-Brown]
• Algorithm portfolio of 7 SAT algorithms (widely varying performance).
• Identify coarse features of SAT instances (clause/variable ratio, Knuth’s search tree estimate, etc.).
• Use machine learning/statistics to learn a good mapping from inputs to algorithms. (spoiler: won multiple SAT competitions)

Example #3: Parameter Tuning

Case Study #1: machine learning.
• e.g., choosing the step size in gradient descent
• e.g., choosing a regularization parameter

Case Study #2: CPLEX (LP/IP solver).
• 135 parameters! (221-page reference manual)
• manual’s advice: “you may need to experiment with them” (gee, thanks...)

Algorithm Design via Learning

Question: what would a theory of “application-specific algorithm design” look like?
• need to go “beyond worst-case analysis”

Idea: model as a learning problem.
• algorithms play the role of concepts/hypotheses
• algorithm performance acts as the loss function
• two models: offline (batch) learning and online learning (i.e., regret-minimization)

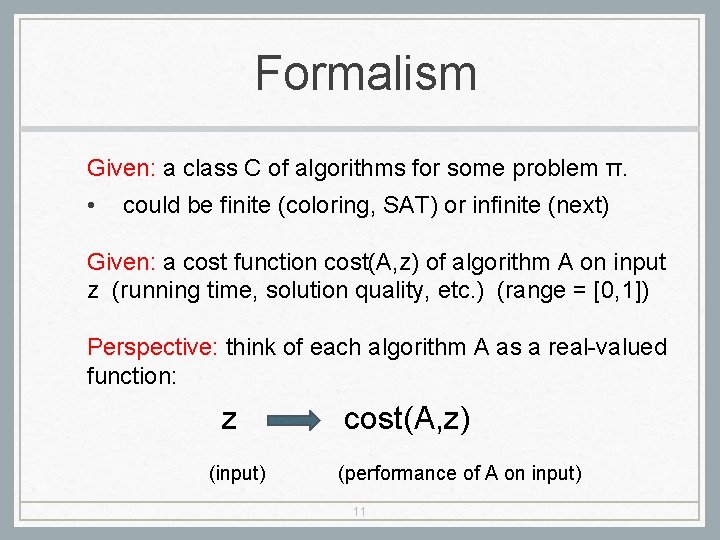

Formalism

Given: a class C of algorithms for some problem π.
• could be finite (coloring, SAT) or infinite (next)

Given: a cost function cost(A, z) of algorithm A on input z (running time, solution quality, etc.), with range [0, 1].

Perspective: think of each algorithm A as a real-valued function that maps an input z to cost(A, z), the performance of A on that input.

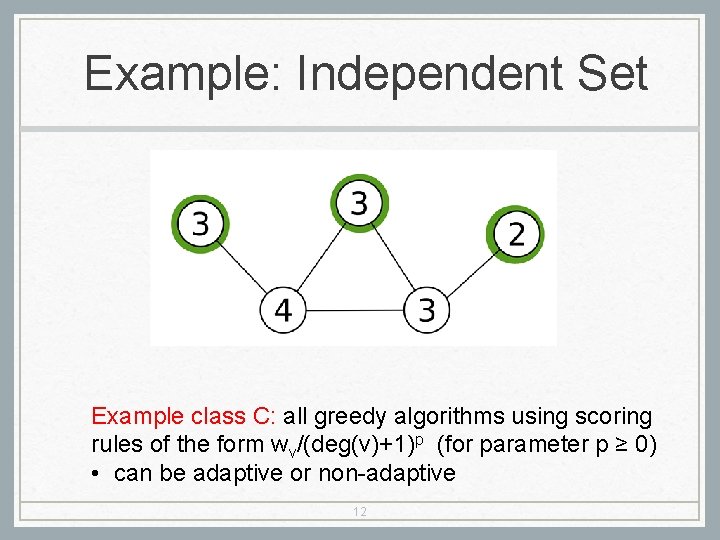

Example: Independent Set

Example class C: all greedy algorithms using scoring rules of the form $w_v / (\deg(v)+1)^p$ (for a parameter p ≥ 0)
• can be adaptive or non-adaptive
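A minimal sketch of one member of this class may help make the parameter p concrete. The graph representation (a dict of adjacency sets), the function name `greedy_wis`, and the tie-breaking rule (smallest vertex id) are assumptions made for illustration, not details from the slides. "Adaptive" is read here as recomputing degrees in the remaining graph after each pick.

```python
from typing import Dict, List, Set


def greedy_wis(adj: Dict[int, Set[int]], weights: Dict[int, float],
               p: float, adaptive: bool = True) -> List[int]:
    """Greedy weighted independent set with score w_v / (deg(v) + 1) ** p."""
    # Work on a copy so the caller's graph is left untouched.
    remaining = {v: set(nbrs) for v, nbrs in adj.items()}
    static_deg = {v: len(nbrs) for v, nbrs in adj.items()}
    chosen: List[int] = []
    while remaining:
        def score(v: int) -> float:
            # Adaptive: degree in the remaining graph; otherwise original degree.
            deg = len(remaining[v]) if adaptive else static_deg[v]
            return weights[v] / (deg + 1) ** p
        # max() keeps the first maximum, so ties go to the smallest vertex id.
        best = max(sorted(remaining), key=score)
        chosen.append(best)
        removed = remaining[best] | {best}
        for u in removed:
            remaining.pop(u, None)
        for nbrs in remaining.values():
            nbrs -= removed
    return chosen


# Star graph: heavy center (vertex 0) with three lighter leaves.
star = {0: {1, 2, 3}, 1: {0}, 2: {0}, 3: {0}}
w = {0: 3.0, 1: 2.0, 2: 2.0, 3: 2.0}
print(greedy_wis(star, w, p=0.0))  # [0]: pure max-weight rule, total weight 3
print(greedy_wis(star, w, p=1.0))  # [1, 2, 3]: degree-penalized rule, total weight 6
```

On this toy star, p = 0 and p = 1 give different solutions, which is the sense in which the best choice of p depends on the instances of interest.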

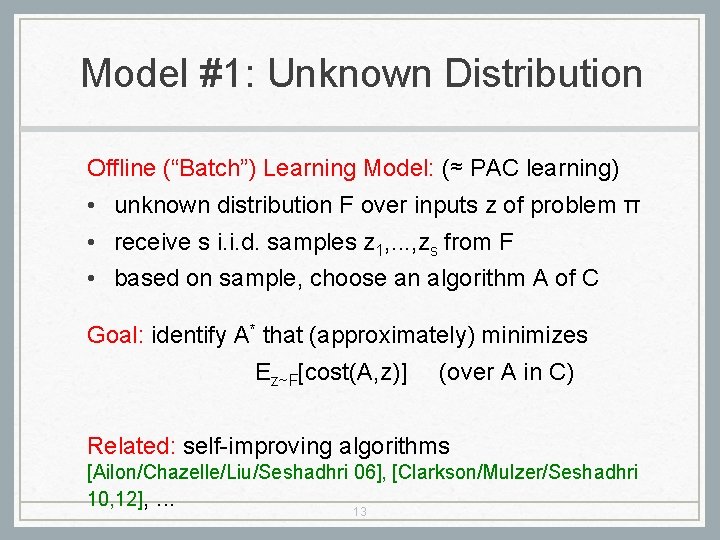

Model #1: Unknown Distribution

Offline (“Batch”) Learning Model: (≈ PAC learning)
• unknown distribution F over inputs z of problem π
• receive s i.i.d. samples $z_1, \ldots, z_s$ from F
• based on the sample, choose an algorithm A of C

Goal: identify A* that (approximately) minimizes $\mathbb{E}_{z \sim F}[\mathrm{cost}(A, z)]$ (over A in C)

Related: self-improving algorithms [Ailon/Chazelle/Liu/Seshadhri 06], [Clarkson/Mulzer/Seshadhri 10, 12], ...

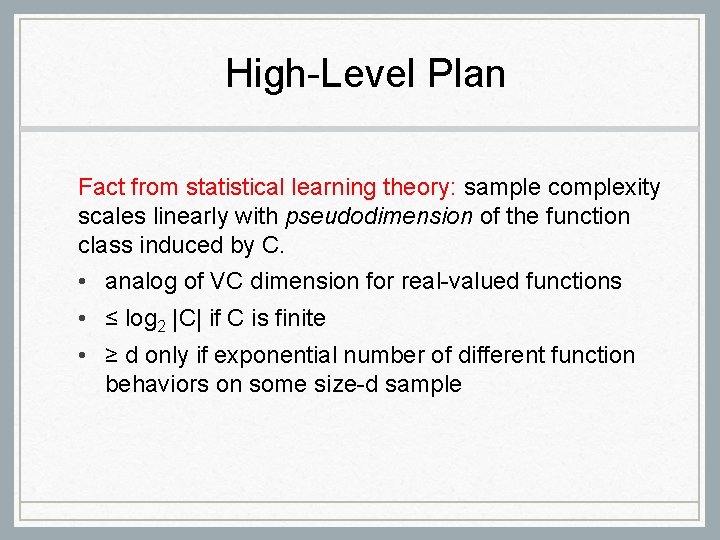

High-Level Plan

Fact from statistical learning theory: sample complexity scales linearly with the pseudodimension of the function class induced by C.
• analog of VC dimension for real-valued functions
• at most $\log_2 |C|$ if C is finite
• at least d only if the algorithms of C exhibit exponentially many (at least $2^d$) different behaviors on some sample of size d
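For reference, the standard definition behind these bullets can be written as follows. The symbols $r_i$ (the "witness" thresholds) and $T$ (an arbitrary subset of indices) are notation introduced here, not taken from the slides.

```latex
% A sample S = {z_1, ..., z_d} is shattered by C if there exist
% witnesses r_1, ..., r_d in R such that every above/below pattern occurs:
\forall\, T \subseteq \{1,\dots,d\}\ \ \exists\, A \in C:\quad
  \mathrm{cost}(A, z_i) \le r_i \iff i \in T .
% The pseudodimension of C is the size of the largest shattered sample:
\mathrm{pd}(C) \;=\; \max\bigl\{\, |S| \;:\; S \text{ is shattered by } C \,\bigr\}.
```

Shattering a sample of size d requires all $2^d$ patterns, so a finite class can shatter only samples of size at most $\log_2 |C|$.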

![Pseudodimension: Implications](http://slidetodoc.com/presentation_image_h2/4879f8c11561149bda8aad9bc6381793/image-15.jpg)

Pseudodimension: Implications

Theorem: [Haussler 92], [Anthony/Bartlett 99] if C has low pseudodimension, then a small number of samples is sufficient to learn the best algorithm in C.
• obtain s samples $z_1, \ldots, z_s$ from F, with s growing linearly in d (on the order of $d/\varepsilon^2$, up to logarithmic factors), where d = pseudodimension of C and cost(A, z) is in [0, 1]
• let A* = algorithm of C with the best average performance on the samples (i.e., ERM)

Guarantee: with high probability, the expected performance of A* (w.r.t. F) is within ε of that of the optimal algorithm in C.
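The ERM step can be sketched in a few lines. This sketch assumes a finite list of candidate algorithms (for an infinite class such as the greedy rules, that would be a finite grid of parameter values, which is an assumption and not something the theorem prescribes). The function name `erm` and the toy cost function are hypothetical stand-ins.

```python
from typing import Callable, Sequence, TypeVar

A = TypeVar("A")  # an algorithm (here: any object identifying one)
Z = TypeVar("Z")  # a problem instance


def erm(algorithms: Sequence[A], samples: Sequence[Z],
        cost: Callable[[A, Z], float]) -> A:
    """Return the candidate with the lowest average cost on the samples."""
    def average_cost(a: A) -> float:
        return sum(cost(a, z) for z in samples) / len(samples)
    return min(algorithms, key=average_cost)


# Toy stand-in: an "algorithm" is a parameter value, an "instance" is a
# number in [0, 1], and the cost is their distance (so cost lies in [0, 1]).
candidates = [0.0, 0.5, 1.0]
sample = [0.4, 0.5, 0.6, 0.9]
best = erm(candidates, sample, lambda p, z: abs(p - z))
print(best)  # 0.5 (average costs are 0.6, 0.15, and 0.4 respectively)
```

The guarantee above concerns how many samples are needed for this empirical minimizer to be near-optimal in expectation; it says nothing about how fast the minimization itself can be carried out.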

But...

$64K question: do interesting classes of algorithms have small pseudodimension?

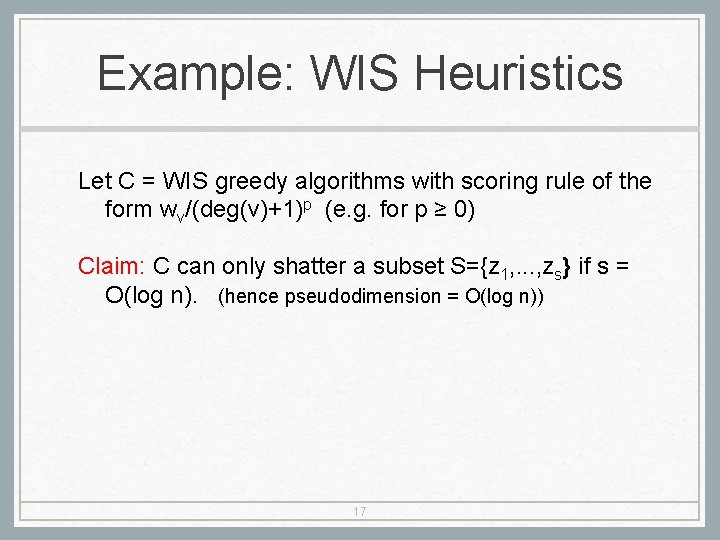

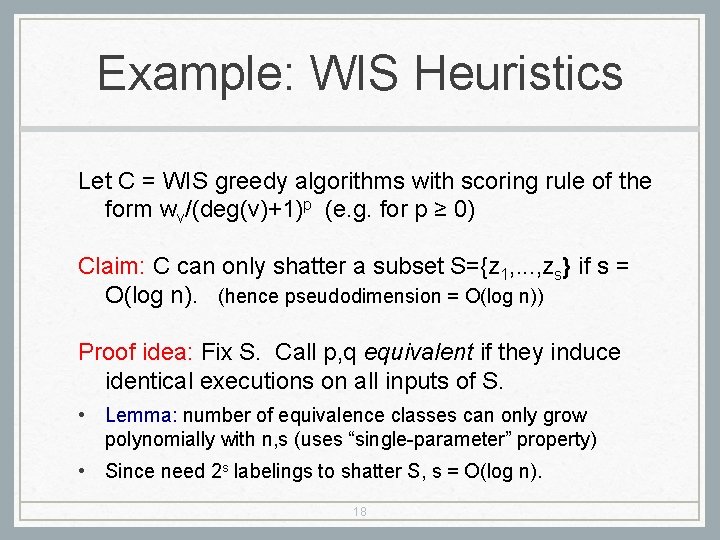

Example: WIS Heuristics

Let C = WIS (weighted independent set) greedy algorithms with scoring rule of the form $w_v / (\deg(v)+1)^p$ (for a parameter p ≥ 0), on graphs with n vertices.

Claim: C can only shatter a subset $S = \{z_1, \ldots, z_s\}$ if s = O(log n). (hence pseudodimension = O(log n))

Proof idea: Fix S. Call p, q equivalent if they induce identical executions on all inputs of S.
• Lemma: the number of equivalence classes can only grow polynomially with n, s (uses the “single-parameter” property)
• Shattering S requires $2^s$ distinct labelings, and equivalent parameters produce the same labeling, so $2^s \le \mathrm{poly}(n, s)$, which forces s = O(log n).

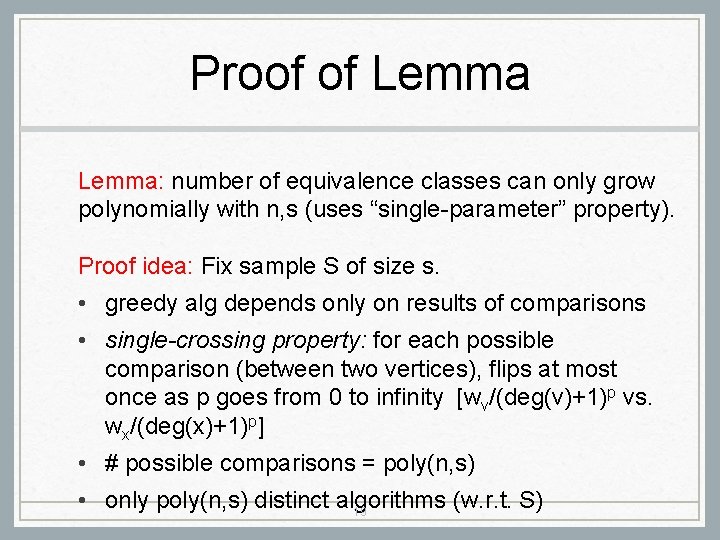

Proof of Lemma

Lemma: the number of equivalence classes can only grow polynomially with n, s (uses the “single-parameter” property).

Proof idea: Fix a sample S of size s.
• the greedy algorithm depends only on the results of comparisons
• single-crossing property: the outcome of each possible comparison (between two vertices) flips at most once as p goes from 0 to infinity [$w_v / (\deg(v)+1)^p$ vs. $w_x / (\deg(x)+1)^p$]
• number of possible comparisons = poly(n, s)
• hence only poly(n, s) distinct algorithms (w.r.t. S)
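The single-crossing argument can be made concrete by computing where each comparison flips. Setting $w_v / (d_v+1)^p = w_x / (d_x+1)^p$ and solving gives $p^* = \ln(w_v / w_x) / \ln((d_v+1)/(d_x+1))$. The sketch below covers only the non-adaptive variant on a single graph with positive weights; the function name `breakpoints` is hypothetical, and for a sample of s graphs one would take the union of the breakpoints of each graph.

```python
import math
from itertools import combinations
from typing import List, Sequence


def breakpoints(weights: Sequence[float], degrees: Sequence[int]) -> List[float]:
    """Values p > 0 at which some pairwise score comparison flips.

    Scores are w_v / (deg(v) + 1) ** p; weights are assumed positive.
    """
    points = set()
    for v, x in combinations(range(len(weights)), 2):
        dv, dx = degrees[v] + 1, degrees[x] + 1
        if dv == dx:
            continue  # the ratio of the two scores does not depend on p
        p_star = math.log(weights[v] / weights[x]) / math.log(dv / dx)
        if p_star > 0:
            points.add(p_star)
    return sorted(points)


# Star graph: center with weight 3 and degree 3, three leaves with weight 2.
pts = breakpoints([3.0, 2.0, 2.0, 2.0], [3, 1, 1, 1])
print(len(pts))      # 1: every center-vs-leaf comparison flips at the same p
print(len(pts) + 1)  # 2: number of intervals of p with identical behavior
```

With n vertices there are at most n(n-1)/2 pairs, so at most that many breakpoints per graph, and the greedy ordering is constant on each interval between consecutive breakpoints.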

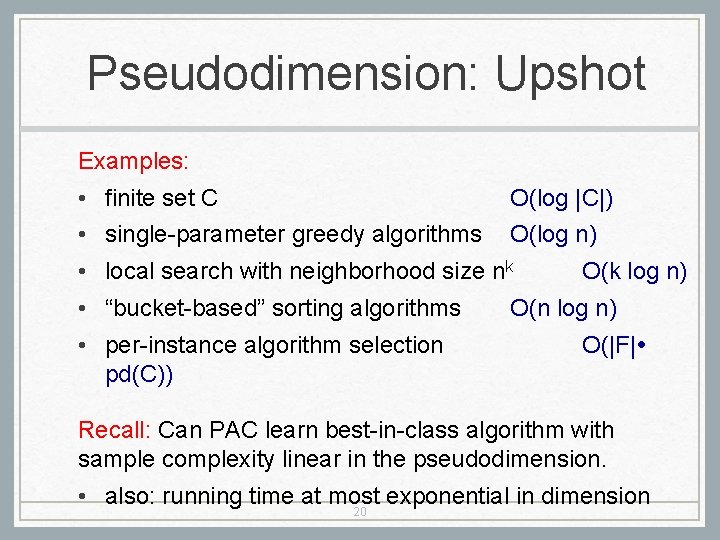

Pseudodimension: Upshot

Examples (class of algorithms: pseudodimension bound):
• finite set C: O(log |C|)
• single-parameter greedy algorithms: O(log n)
• local search with neighborhood size $n^k$: O(k log n)
• “bucket-based” sorting algorithms: O(n log n)
• per-instance algorithm selection: O(|F| pd(C))

Recall: can PAC learn the best-in-class algorithm with sample complexity linear in the pseudodimension.
• also: running time at most exponential in the dimension

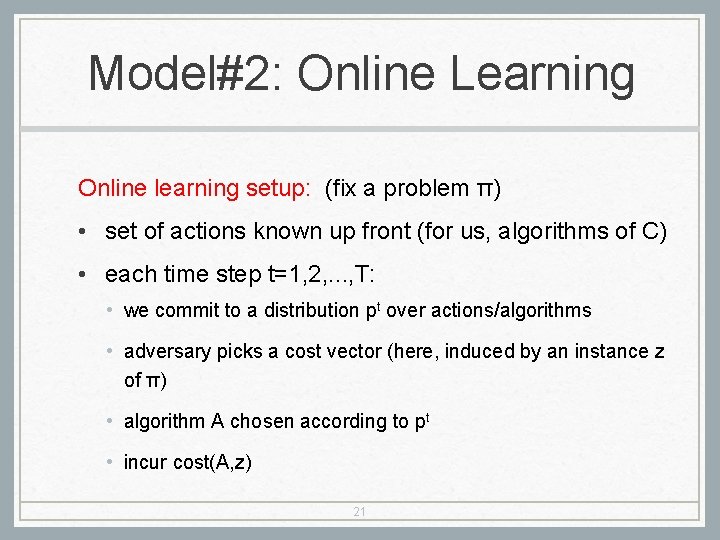

Model #2: Online Learning

Online learning setup: (fix a problem π)
• set of actions known up front (for us, algorithms of C)
• each time step t = 1, 2, ..., T:
  • we commit to a distribution $p_t$ over actions/algorithms
  • adversary picks a cost vector (here, induced by an instance z of π)
  • algorithm A chosen according to $p_t$
  • incur cost(A, z)
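For a finite set of algorithms, this protocol can be instantiated with the standard multiplicative-weights (Hedge) update. The sketch below assumes the full cost vector is observed after each round (that is, every algorithm can be evaluated on the revealed instance) and that costs lie in [0, 1]. The function name `hedge` and the learning rate choice are illustrative assumptions.

```python
import math
import random
from typing import List, Sequence, Tuple


def hedge(cost_vectors: Sequence[Sequence[float]], eta: float,
          rng: random.Random) -> Tuple[float, List[float]]:
    """Run multiplicative weights; return (total incurred cost, final weights)."""
    n = len(cost_vectors[0])
    weights = [1.0] * n
    total = 0.0
    for costs in cost_vectors:
        norm = sum(weights)
        p_t = [w / norm for w in weights]              # commit to a distribution
        choice = rng.choices(range(n), weights=p_t)[0]  # sample an algorithm
        total += costs[choice]                          # incur its cost
        # Exponentially down-weight each algorithm by the cost it would have paid.
        weights = [w * math.exp(-eta * c) for w, c in zip(weights, costs)]
    return total, weights


# Two algorithms over T = 200 rounds: the first always costs 1, the second 0.
T = 200
rounds = [[1.0, 0.0]] * T
eta = math.sqrt(math.log(2) / T)
total, final = hedge(rounds, eta, random.Random(0))
print(final[1] > final[0])  # True: weight concentrates on the better algorithm
```

This update needs one weight per algorithm, which is why an infinite class C needs additional ideas.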

Regret-Minimization

Benchmark: best fixed algorithm A of C (in hindsight) for the adversarially chosen inputs $z_1, \ldots, z_T$

Goal: online algorithm that, in expectation, always incurs average cost at most the benchmark, plus an o(1) error term.

Question #1: Weighted Majority/Multiplicative Weights?
• issue: what if C is an infinite set?

Question #2: extension to Lipschitz cost vectors?
• issue: not at all Lipschitz! (e.g., for greedy WIS)
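Written out, the goal is that the expected average regret vanishes. Here $A_t$ denotes the algorithm drawn in round t, which is notation introduced for this formula rather than taken from the slides.

```latex
\frac{1}{T}\,\mathbb{E}\!\left[\sum_{t=1}^{T} \mathrm{cost}(A_t, z_t)\right]
\;-\;
\min_{A \in C}\ \frac{1}{T}\sum_{t=1}^{T} \mathrm{cost}(A, z_t)
\;\le\; o(1) \quad \text{as } T \to \infty .
```

The expectation is over the online algorithm's own randomness; the inputs $z_1, \ldots, z_T$ are chosen adversarially.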

Positive and Negative Results

Theorem 1: there is no online algorithm with a non-trivial regret guarantee for choosing greedy WIS algorithms.
• (example crucially exploits non-Lipschitzness)

Theorem 2: for “smoothed WIS instances” (à la [Spielman/Teng 01]), can achieve vanishing expected regret as $T \to \infty$.

Proof idea: (i) run a no-regret algorithm using a “net” of the space of algorithms; (ii) smoothed instances imply that the optimal algorithm is typically equivalent to one of the net algorithms

![Recent Progress](http://slidetodoc.com/presentation_image_h2/4879f8c11561149bda8aad9bc6381793/image-29.jpg)

Recent Progress

Offline model: clustering and partitioning problems [Balcan/Nagarajan/Vitercik/White 17]; auctions and mechanisms [Morgenstern/Roughgarden 15, 16], [Balcan/Sandholm/Vitercik 16, 18]; mathematical programs [Balcan/Dick/Vitercik 18]

Online model: smoothed piecewise constant functions [Cohen-Addad/Kanade 17]; generalizations and unification with the offline case [Balcan/Dick/Vitercik 18]

Open Questions

• non-trivial learning algorithms? (or a proof that, under complexity assumptions, none exist)
• extend gradient descent analysis to more general hyperparameter optimization problems
• connections to more traditional measures of “algorithm complexity”?

- Slides: 30