Database System Implementation CSE 507: Query Processing and Query Optimization
Some slides adapted from Silberschatz, Korth and Sudarshan, Database System Concepts, 6th Edition, and Elmasri and Navathe, Fundamentals of Database Systems, 6th Edition.

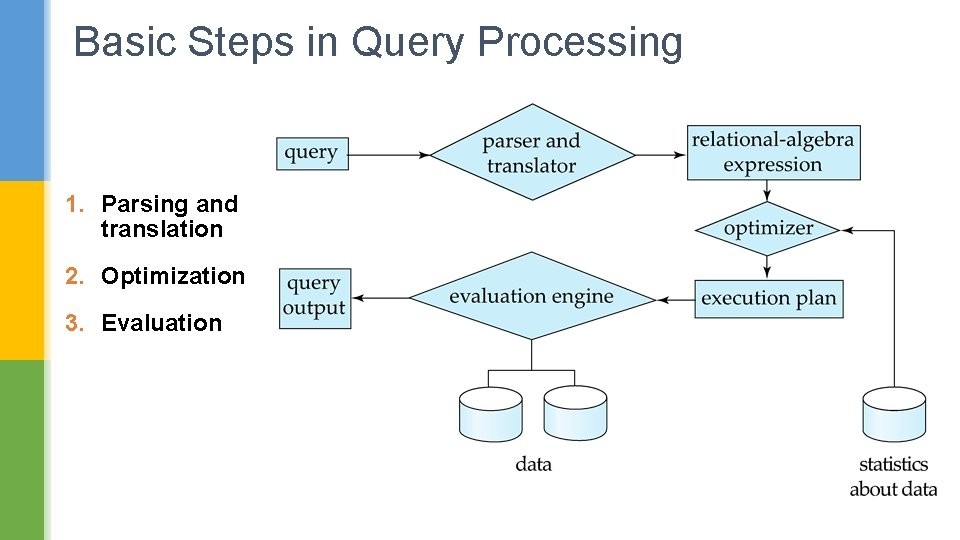

Basic Steps in Query Processing
1. Parsing and translation
2. Optimization
3. Evaluation

Basic Steps: Regarding Optimization (1/3)
§ One relational algebra expression has many equivalent expressions
§ E.g., for “Select salary from Instructor where salary < 75000”, $\sigma_{salary<75000}(\pi_{salary}(instructor))$ is equivalent to $\pi_{salary}(\sigma_{salary<75000}(instructor))$
§ Each relational algebra operator has many candidate algorithms
§ Thus, a relational-algebra expression can be evaluated in many ways.

Basic Steps: Regarding Optimization (2/3)
Query Optimization: Amongst all equivalent evaluation plans, choose the one with the lowest cost.
§ Cost is estimated using statistical information from the database catalog
§ e.g., #tuples in each relation, size of tuples, etc.

Basic Steps: Regarding Optimization (3/3)
§ Evaluation Plan: Annotated expression specifying a detailed evaluation strategy for each operator.
§ E.g., can use an index on salary to find instructors with salary < 75000,
§ or can perform a complete relation scan and discard instructors with salary ≥ 75000

Measures of Query Cost
§ Total cost = total elapsed time for answering the query
  § Many factors contribute to time cost, e.g., disk accesses, CPU, or even network communication
§ Typically disk access is the predominant cost, and is also relatively easy to estimate.
§ Measured by taking into account
  § Number of seeks × average-seek-cost
  § Number of blocks read × average-block-read-cost
  § Number of blocks written × average-block-write-cost

Measures of Query Cost
§ Simplifying assumption: average-block-read-cost = average-block-write-cost
§ An example:
  § $t_T$: time to transfer one block
  § $t_S$: time for one seek
  § Cost for $b$ block transfers plus $S$ seeks: $b \times t_T + S \times t_S$
§ Many times we will just work with the total #blocks accessed as the total cost of a query algorithm.
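As a rough illustration of the cost formula on this slide, the sketch below evaluates $b \times t_T + S \times t_S$. The function name `disk_cost` and the timing values (in microseconds) are assumptions made for this example, not figures from the slides.

```python
def disk_cost(b, S, t_T, t_S):
    """Estimated time for b block transfers plus S seeks."""
    return b * t_T + S * t_S


# Hypothetical disk: 100 microseconds per block transfer, 4000 per seek.
T_T_US = 100
T_S_US = 4000

# Scanning 100 contiguous blocks needs 100 transfers and a single seek.
scan_cost_us = disk_cost(b=100, S=1, t_T=T_T_US, t_S=T_S_US)
print(scan_cost_us)
```

With these assumed timings one seek costs as much as 40 block transfers, which is why the later slides track seeks separately from transfers.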

Query Processing Algorithms

Selection Operation
File scan
§ Algorithm A1 (linear search). Scan each file block and test all records to see whether they satisfy the selection condition.
§ We assume that all blocks of a file are stored in contiguous locations.
§ Cost estimate = $b_r$ block transfers + 1 seek
  § $b_r$ denotes #blocks containing records from relation $r$
§ If the selection is on a key attribute, can stop on finding the record
  § Average cost = $(b_r / 2)$ block transfers + 1 seek

Selection Operation
Index scan: search algorithms that use an index
§ Selection condition must be on the search-key of the index.
§ A2 (primary index, equality on key). Retrieve a single record that satisfies the corresponding equality condition
  § #blocks accessed = height + 1
  § Cost = (height + 1) × ($t_T + t_S$) (assuming that levels are not on the same cylinder)
§ A3 (primary index, equality on nonkey). Retrieve multiple records.
  § Records will be on consecutive blocks
  § Let $b$ = #blocks containing matching records
  § Cost = height × ($t_T + t_S$) + $t_S$ + $t_T \times b$; total blocks = height + $b$

Selection Operation
§ A4 (secondary index).
§ Retrieve a single record if the search-key is a candidate key
  § Total #blocks accessed = height + 1
  § Cost = (height + 1) × ($t_T + t_S$)
§ Retrieve multiple records if the search-key is not a candidate key
  § Each of $n$ matching records may be on a different block
  § Total #blocks accessed = height + $n$ + 1
  § Cost = (height + $n$ + 1) × ($t_T + t_S$)
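The selection cost estimates for A1 through A4 can be put side by side in a small sketch. The function names and the sample numbers are assumptions chosen for illustration; the formulas are the ones stated on the selection slides.

```python
def a1_linear_search(b_r, t_T, t_S, on_key=False):
    """A1: b_r transfers + 1 seek; about b_r / 2 transfers on average for a key."""
    transfers = b_r / 2 if on_key else b_r
    return transfers * t_T + t_S


def a2_primary_key(height, t_T, t_S):
    """A2: one seek and one transfer per index level, plus one for the record."""
    return (height + 1) * (t_T + t_S)


def a3_primary_nonkey(height, b, t_T, t_S):
    """A3: index traversal, then one seek and b consecutive block transfers."""
    return height * (t_T + t_S) + t_S + t_T * b


def a4_secondary(height, t_T, t_S, n=None):
    """A4: n is None for a candidate key, else the number of matching records."""
    blocks = height + 1 if n is None else height + n + 1
    return blocks * (t_T + t_S)


# Hypothetical setting: 1000-block relation, index of height 3,
# t_T = 100 and t_S = 4000 (microseconds).
t_T, t_S = 100, 4000
costs = {
    "A1": a1_linear_search(1000, t_T, t_S),
    "A2": a2_primary_key(3, t_T, t_S),
    "A3 (b=5)": a3_primary_nonkey(3, 5, t_T, t_S),
    "A4 (n=50)": a4_secondary(3, t_T, t_S, n=50),
}
for name, cost in costs.items():
    print(name, cost)
```

Under these assumed numbers the secondary index with 50 matches is more expensive than the full linear scan, because every match may cost a seek.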

Join Operation: Nested Loop Join
§ To compute the theta join $R \bowtie_\theta S$:
    for each tuple tr in R do begin
        for each tuple ts in S do begin
            test pair (tr, ts) to see if they satisfy the join condition
            if they do, add tr • ts to the result.
        end
    end
§ R is called the outer relation and S the inner relation of the join.
§ Requires no indices and can be used with any kind of join condition.
§ Expensive since it examines every pair of tuples in the two relations.
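The nested loop pseudocode translates almost line for line into Python. This is a minimal in-memory sketch: relations are assumed to be lists of tuples, `theta` is any two-argument predicate, and the sample data is made up for the example.

```python
def nested_loop_join(R, S, theta):
    """Theta join of R (outer) and S (inner) by testing every pair of tuples."""
    result = []
    for tr in R:
        for ts in S:
            if theta(tr, ts):
                result.append(tr + ts)  # tr • ts: concatenate the two tuples
    return result


# Hypothetical relations: instructor(name, dept_name), department(dept_name, building).
instructor = [("Einstein", "Physics"), ("Crick", "Biology")]
department = [("Biology", "Watson"), ("Physics", "Watson"), ("Music", "Packard")]

joined = nested_loop_join(instructor, department, lambda i, d: i[1] == d[0])
print(joined)
```

Because `theta` is an arbitrary predicate, the same function handles non-equality conditions, which is the flexibility the slide points out.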

Join Operation: Nested Loop Join
§ In the worst case, if there is enough memory only to hold one block of each relation, the estimated cost is $n_r \times b_s + b_r$ block transfers, plus $n_r + b_r$ seeks, where $n_r$ is the number of tuples in the outer relation
§ If the smaller relation fits entirely in memory, use that as the inner relation.
  § Reduces cost to $b_r + b_s$ block transfers and 2 seeks
§ Block nested-loops algorithm (next slide) is preferable.

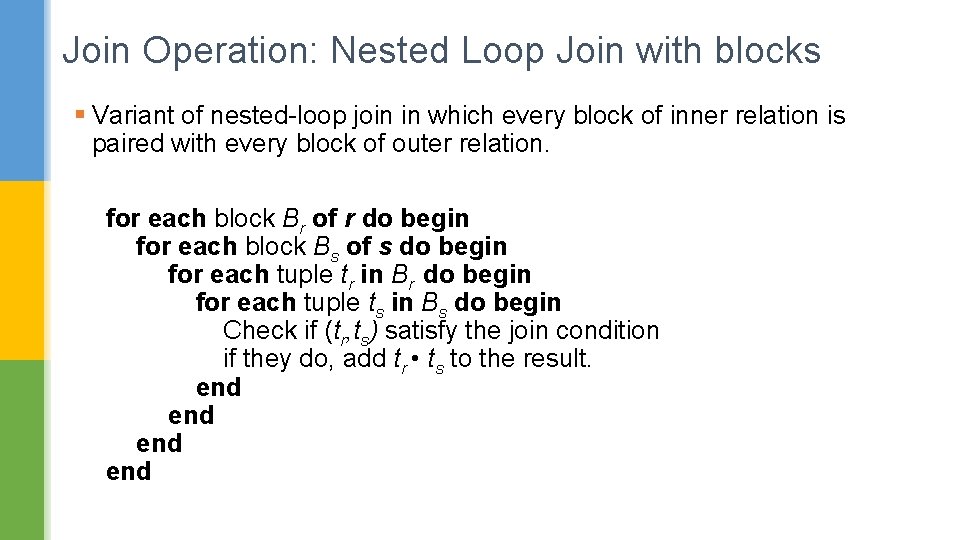

Join Operation: Nested Loop Join with blocks
§ Variant of nested-loop join in which every block of the inner relation is paired with every block of the outer relation.
    for each block Br of r do begin
        for each block Bs of s do begin
            for each tuple tr in Br do begin
                for each tuple ts in Bs do begin
                    check if (tr, ts) satisfy the join condition
                    if they do, add tr • ts to the result.
                end
            end
        end
    end
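A small sketch of the block-at-a-time variant follows. Blocks are modelled as Python lists of tuples, and the helper `to_blocks` and its `tuples_per_block` parameter are assumptions introduced only to build test data; a real system would read blocks from disk.

```python
def to_blocks(relation, tuples_per_block):
    """Split a list of tuples into fixed-size blocks (hypothetical helper)."""
    return [relation[i:i + tuples_per_block]
            for i in range(0, len(relation), tuples_per_block)]


def block_nested_loop_join(r_blocks, s_blocks, theta):
    """Pair every block of the outer relation with every block of the inner one."""
    result = []
    for Br in r_blocks:          # each outer block is fetched once
        for Bs in s_blocks:      # the inner relation is rescanned per outer block
            for tr in Br:
                for ts in Bs:
                    if theta(tr, ts):
                        result.append(tr + ts)
    return result


r = [(k, "r%d" % k) for k in range(6)]
s = [(k, "s%d" % k) for k in range(0, 6, 2)]
bnl_result = block_nested_loop_join(to_blocks(r, 2), to_blocks(s, 2),
                                    lambda tr, ts: tr[0] == ts[0])
print(bnl_result)
```

The result contains the same tuples as the tuple-at-a-time version; only the number of times the inner relation is scanned changes, from once per outer tuple to once per outer block.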

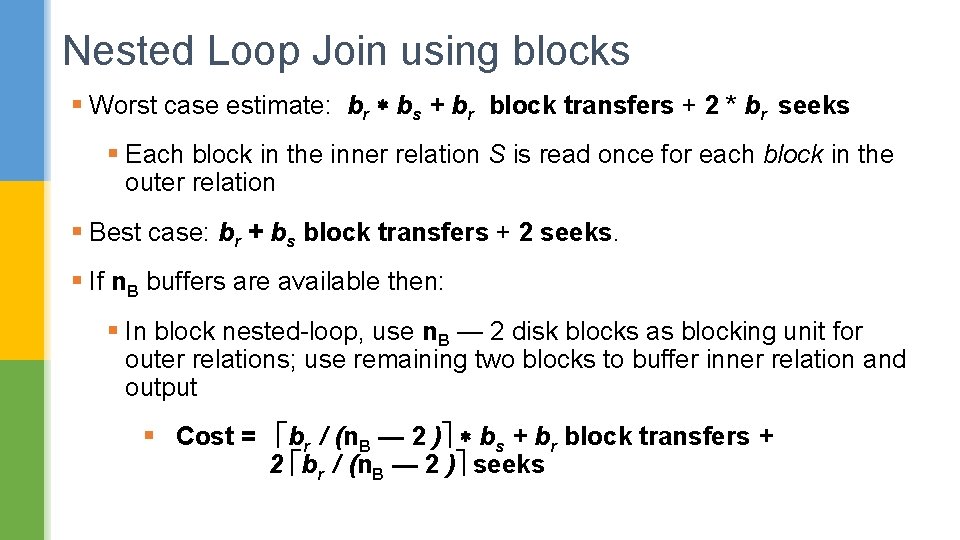

Nested Loop Join using blocks
§ Worst case estimate: $b_r \times b_s + b_r$ block transfers + $2 \times b_r$ seeks
  § Each block in the inner relation S is read once for each block in the outer relation
§ Best case: $b_r + b_s$ block transfers + 2 seeks.
§ If $n_B$ buffers are available, then:
  § In block nested-loop, use $n_B - 2$ disk blocks as the blocking unit for the outer relation; use the remaining two blocks to buffer the inner relation and the output
  § Cost = $\lceil b_r / (n_B - 2) \rceil \times b_s + b_r$ block transfers + $2 \lceil b_r / (n_B - 2) \rceil$ seeks
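To compare the estimates, the sketch below computes the worst-case cost of the tuple-at-a-time nested loop join and of the block nested loop join with $n_B$ buffers. The function names and the relation sizes are assumptions for illustration only.

```python
import math


def nested_loop_cost(n_r, b_r, b_s):
    """Worst case for tuple-at-a-time nested loop: (block transfers, seeks)."""
    return n_r * b_s + b_r, n_r + b_r


def block_nested_loop_cost(b_r, b_s, n_B=3):
    """Block nested loop with n_B buffers: (block transfers, seeks).

    n_B - 2 blocks hold a chunk of the outer relation; with n_B = 3 this
    reduces to the worst case b_r * b_s + b_r transfers and 2 * b_r seeks.
    """
    chunks = math.ceil(b_r / (n_B - 2))
    return chunks * b_s + b_r, 2 * chunks


# Hypothetical sizes: outer r has 5000 tuples in 100 blocks, inner s has 400 blocks.
print(nested_loop_cost(n_r=5000, b_r=100, b_s=400))
print(block_nested_loop_cost(b_r=100, b_s=400))
print(block_nested_loop_cost(b_r=100, b_s=400, n_B=52))
```

Giving the outer relation more buffer blocks reduces how many times the inner relation is rescanned, which is where most of the saving comes from.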

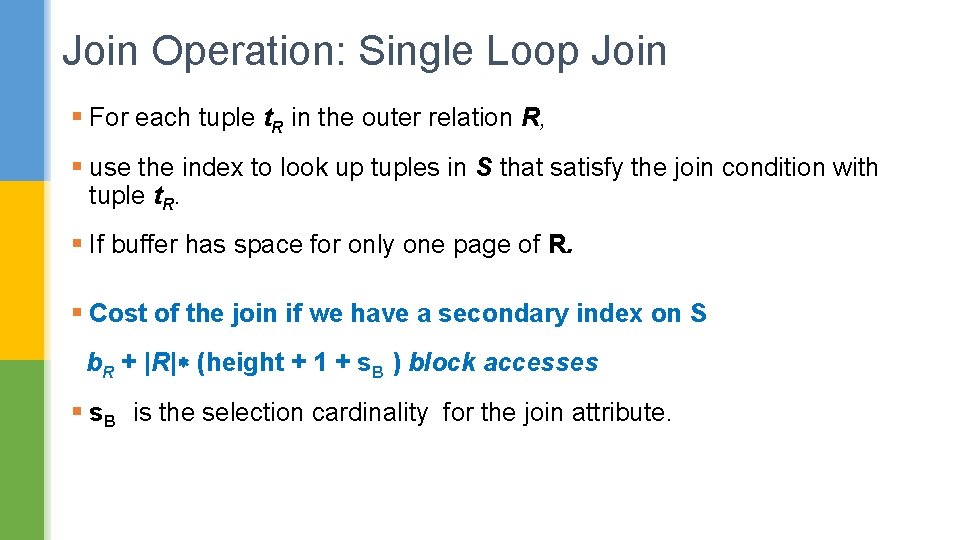

Join Operation: Single Loop Join
§ For each tuple $t_R$ in the outer relation R,
  § use the index to look up tuples in S that satisfy the join condition with tuple $t_R$.
§ Assume the buffer has space for only one page of R.
§ Cost of the join if we have a secondary index on S:
  § $b_R + |R| \times (\text{height} + 1 + s_B)$ block accesses
  § $s_B$ is the selection cardinality for the join attribute.

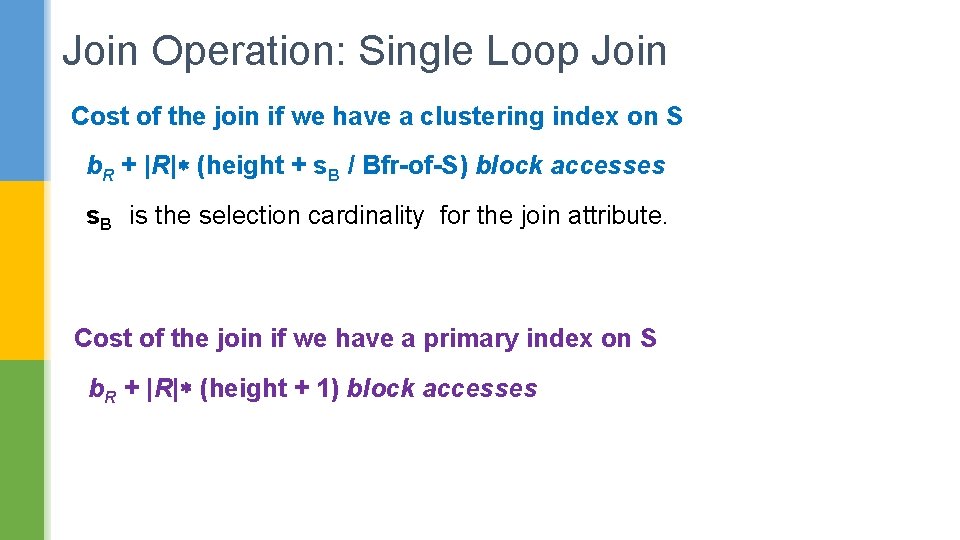

Join Operation: Single Loop Join
§ Cost of the join if we have a clustering index on S:
  § $b_R + |R| \times (\text{height} + s_B / \text{bfr}_S)$ block accesses
  § $s_B$ is the selection cardinality for the join attribute; $\text{bfr}_S$ is the blocking factor of S.
§ Cost of the join if we have a primary index on S:
  § $b_R + |R| \times (\text{height} + 1)$ block accesses
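The three single loop join estimates differ only in the per-probe term, which the sketch below makes explicit. The function name, the `index_kind` strings, and the sample values are assumptions for this example; the formulas follow the slides as written (no rounding of $s_B / \text{bfr}_S$).

```python
def single_loop_join_cost(b_R, n_R, height, index_kind, s_B=None, bfr_S=None):
    """Block accesses for a single loop (index) join with R as the outer relation.

    n_R stands for |R|, the number of tuples in R.
    """
    if index_kind == "secondary":
        per_probe = height + 1 + s_B
    elif index_kind == "clustering":
        per_probe = height + s_B / bfr_S
    elif index_kind == "primary":
        per_probe = height + 1
    else:
        raise ValueError("unknown index kind: %r" % index_kind)
    return b_R + n_R * per_probe


# Hypothetical numbers: R has 125 tuples in 13 blocks, the index on S has height 2.
print(single_loop_join_cost(13, 125, 2, "primary"))
print(single_loop_join_cost(13, 125, 2, "secondary", s_B=80))
print(single_loop_join_cost(13, 125, 2, "clustering", s_B=80, bfr_S=4))
```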

Join Operation: Merge Join Algorithm
1. Sort both relations on their join attribute (if not already sorted on the join attributes).
2. Merge the sorted relations to join them
   1. Join step is similar to the merge stage of the sort-merge algorithm.
   2. Main difference is the handling of duplicate values in the join attribute: every pair with the same value on the join attribute must be matched and reported in the result.

Join Operation: Merge Join Algorithm
§ Each block needs to be read only once.
  § Under the assumption that all tuples of one relation for any given value of the join attributes fit in memory
§ Let $b_b$ be the number of buffer blocks allocated to each relation.
§ The cost of merge join is: $b_r + b_s$ block transfers + $\lceil b_r / b_b \rceil + \lceil b_s / b_b \rceil$ seeks + the cost of sorting if the relations are unsorted.
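A minimal in-memory sketch of the merge join follows, with explicit handling of duplicate join values. It assumes an equality join, relations as lists of tuples, and key-extractor functions `key_r` and `key_s`; these names are illustrative and not from the slides.

```python
def merge_join(R, S, key_r, key_s):
    """Equi-join R and S by sorting both on the join key and merging."""
    R = sorted(R, key=key_r)  # step 1: sort (skipped in practice if already sorted)
    S = sorted(S, key=key_s)
    result = []
    i = j = 0
    while i < len(R) and j < len(S):
        kr, ks = key_r(R[i]), key_s(S[j])
        if kr < ks:
            i += 1
        elif kr > ks:
            j += 1
        else:
            # Find the run of S tuples sharing this join value.
            j_end = j
            while j_end < len(S) and key_s(S[j_end]) == kr:
                j_end += 1
            # Match every R tuple with this value against that whole run.
            while i < len(R) and key_r(R[i]) == kr:
                for ts in S[j:j_end]:
                    result.append(R[i] + ts)
                i += 1
            j = j_end
    return result


R = [(2, "b"), (1, "a"), (4, "d"), (2, "c")]
S = [(4, "w"), (2, "x"), (3, "z"), (2, "y")]
merged = merge_join(R, S, key_r=lambda t: t[0], key_s=lambda t: t[0])
print(merged)
```

The inner run `S[j:j_end]` corresponds to the slide's assumption that all tuples with one join value fit in memory.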

Join Operation: Hash Join Algorithm
Step 1: Partition Phase: $h$ maps JoinAttrs values to $\{0, 1, \ldots, n\}$, where JoinAttrs denotes the common attributes of r and s used in the join.
§ $r_0, r_1, \ldots, r_n$ denote partitions of R’s tuples
  § Each tuple $t_r \in R$ is put in partition $r_i$, where $i = h(t_r[\text{JoinAttrs}])$.
§ $s_0, s_1, \ldots, s_n$ denote partitions of S’s tuples
  § Each tuple $t_s \in S$ is put in partition $s_i$, where $i = h(t_s[\text{JoinAttrs}])$.
§ Each partition (of S or R) may spread across several disk blocks.

Join Operation: Hash Join Algorithm
Intuition of Partition Phase:
§ R tuples in $r_i$ need only be compared with S tuples in $s_i$
§ They need not be compared with S tuples in any other partition, since:
  § an R tuple and an S tuple that satisfy the join condition will have the same value for the join attributes.
  § If that value is hashed to some value $i$, the R tuple has to be in $r_i$ and the S tuple in $s_i$.

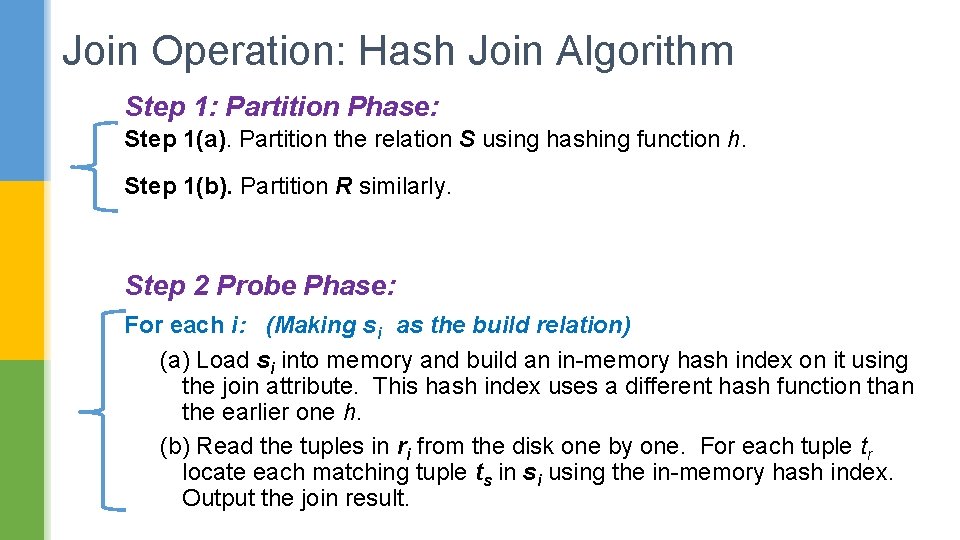

Join Operation: Hash Join Algorithm
Step 1: Partition Phase:
  Step 1(a). Partition the relation S using hashing function $h$.
  Step 1(b). Partition R similarly.
Step 2: Probe Phase: For each $i$ (making $s_i$ the build relation):
  (a) Load $s_i$ into memory and build an in-memory hash index on it using the join attribute. This hash index uses a different hash function than the earlier one, $h$.
  (b) Read the tuples in $r_i$ from the disk one by one. For each tuple $t_r$, locate each matching tuple $t_s$ in $s_i$ using the in-memory hash index. Output the join result.
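The partition and probe phases can be sketched in memory as follows. This is an illustration under assumptions: partitions are Python lists rather than disk files, `h` is taken to be Python's built-in `hash` modulo the partition count, and a `dict` plays the role of the in-memory hash index (it hashes keys with its own internal scheme rather than with `h`). The names `hash_join` and `n_partitions` are made up for this example.

```python
from collections import defaultdict


def hash_join(R, S, key_r, key_s, n_partitions=4):
    """Equi-join of R (probe relation) and S (build relation) via partitioning."""
    def h(value):
        return hash(value) % n_partitions

    # Step 1: partition S, then R, with the same function h.
    s_parts = [[] for _ in range(n_partitions)]
    r_parts = [[] for _ in range(n_partitions)]
    for ts in S:
        s_parts[h(key_s(ts))].append(ts)
    for tr in R:
        r_parts[h(key_r(tr))].append(tr)

    # Step 2: for each i, build an index on s_i and probe it with r_i.
    result = []
    for r_i, s_i in zip(r_parts, s_parts):
        index = defaultdict(list)
        for ts in s_i:
            index[key_s(ts)].append(ts)
        for tr in r_i:
            for ts in index.get(key_r(tr), []):
                result.append(tr + ts)
    return result


R = [(1, "a"), (2, "b"), (2, "c"), (7, "d")]
S = [(2, "x"), (7, "y"), (9, "z"), (2, "w")]
hj_result = hash_join(R, S, key_r=lambda t: t[0], key_s=lambda t: t[0])
print(sorted(hj_result))
```

Tuples are only ever compared within matching partitions, so the output order depends on the partitioning; sorting the result makes it easy to compare against another join algorithm.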

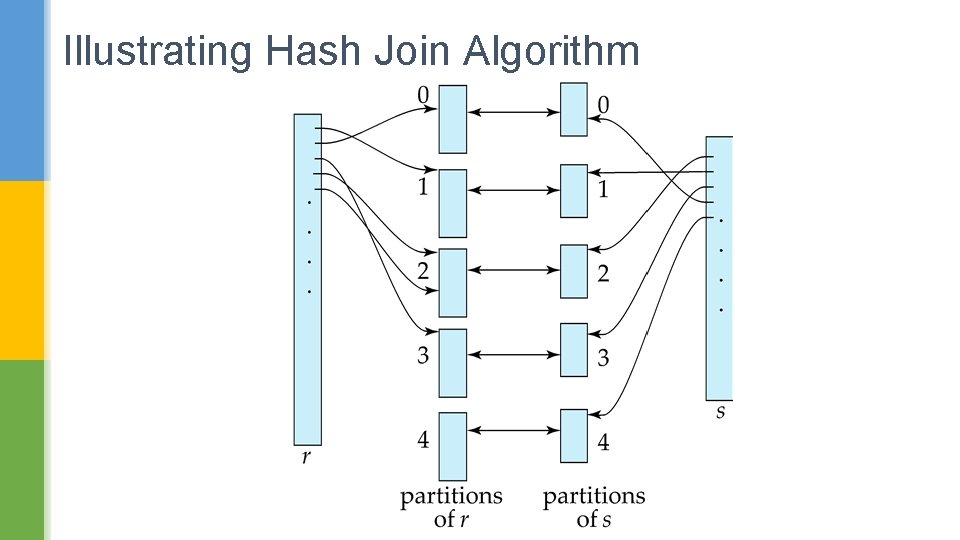

Illustrating Hash Join Algorithm

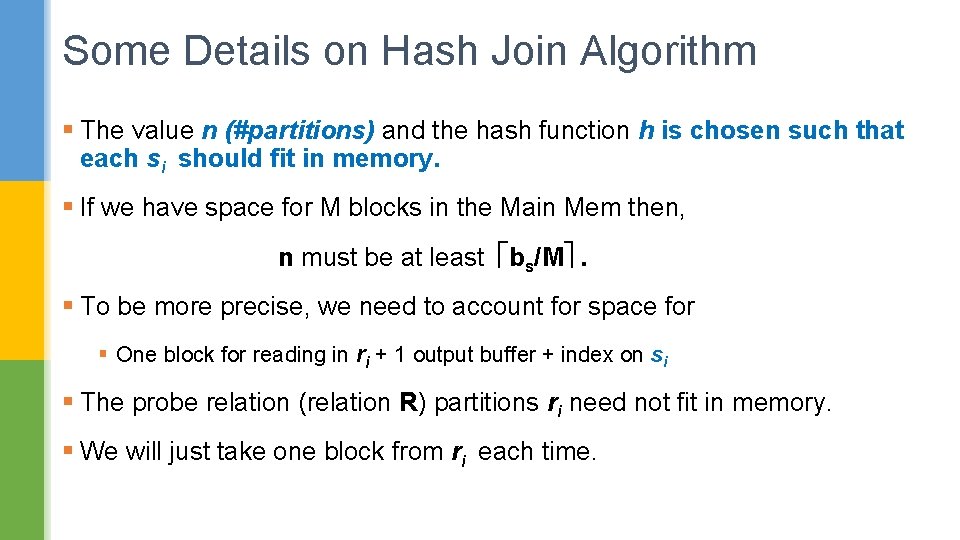

Some Details on Hash Join Algorithm
§ The value $n$ (#partitions) and the hash function $h$ are chosen such that each $s_i$ fits in memory.
  § If we have space for $M$ blocks in main memory, then $n$ must be at least $\lceil b_s / M \rceil$.
  § To be more precise, we need to account for space for
    § one block for reading in $r_i$ + 1 output buffer + the index on $s_i$
§ The probe relation (relation R) partitions $r_i$ need not fit in memory.
  § We will just take one block from $r_i$ each time.

Some Details on Hash Join Algorithm
Recursive partitioning
§ Required if #partitions $n$ is greater than #buffers $M$ available.
  § Instead of partitioning $n$ ways, use $M - 1$ partitions.
  § Further partition the $M - 1$ partitions using a different hash function
  § Use the same partitioning method on both R and S.

Join Operation: Hash Join Algorithm
§ Hash-table overflow occurs in partition $s_i$ if $s_i$ does not fit in memory despite choosing the number of partitions appropriately. Reasons could be
  § Many tuples in S with the same value for the join attributes
  § Bad hash function
§ Overflow resolution can be done in the build phase
  § Partition $s_i$ is further partitioned using a different hash function.
  § Partition $r_i$ must be similarly partitioned.

Join Operation: Hash Join Algorithm
§ Overflow avoidance performs partitioning carefully to avoid overflows during the build phase
  § E.g., partition the build relation into many partitions, then combine them
§ Both approaches fail with large numbers of duplicates
  § Fallback option: use block nested loops join on overflowed partitions.

Join Operation: Hash Join Algorithm Cost
§ If recursive partitioning is not required, the cost of hash join is $3(b_r + b_s) + 4n$ block transfers
  § $n$ is the number of partitions created on relations R and S.
  § The $4n$ term is small and can be ignored.
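The hash join transfer count can be broken into its parts, as in the sketch below. The breakdown in the comments is the usual textbook reading of the formula and is an interpretation added here; the function name and the sample sizes are assumptions.

```python
def hash_join_transfers(b_r, b_s, n):
    """Block transfers for hash join without recursive partitioning."""
    read_for_partitioning = b_r + b_s   # read both relations once
    write_partitions = b_r + b_s        # write every partition back to disk
    read_for_probe = b_r + b_s          # read every partition once more
    # Each of the n partitions of each relation may end in a partially
    # filled block that is written and later read: 2 relations * 2 * n.
    partial_blocks = 4 * n
    return (read_for_partitioning + write_partitions
            + read_for_probe + partial_blocks)


# Hypothetical sizes: b_r = 100, b_s = 400, n = 5 partitions.
print(hash_join_transfers(100, 400, 5))
```

For these assumed sizes the $4n$ term contributes 20 of the 1520 transfers, which illustrates why it is usually ignored.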

External Sorting Algorithm
N-way Sort-Merge strategy:
§ Starts by sorting small subfiles (runs) of the main file
§ Then merges the sorted runs, creating larger sorted subfiles that are merged in turn.
§ Each merge phase merges ≥ 2 runs.
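The sort-merge strategy can be simulated in memory to see how runs shrink in number from pass to pass. In this sketch, runs are Python lists instead of disk files, `run_size` stands in for the buffer capacity, and `d_M` is the number of runs merged at a time; the function name is an assumption for the example.

```python
import heapq


def external_sort(records, run_size, d_M):
    """Sort by creating sorted runs, then repeatedly merging d_M runs at a time.

    Returns the sorted records and the number of merge passes used.
    """
    if d_M < 2:
        raise ValueError("each merge phase must merge at least 2 runs")
    # Sorting phase: sort each chunk that fits in the (simulated) buffer.
    runs = [sorted(records[i:i + run_size])
            for i in range(0, len(records), run_size)]
    passes = 0
    # Merging phase: each pass merges groups of up to d_M runs.
    while len(runs) > 1:
        runs = [list(heapq.merge(*runs[i:i + d_M]))
                for i in range(0, len(runs), d_M)]
        passes += 1
    return (runs[0] if runs else []), passes


data = [9, 3, 7, 1, 8, 2, 6, 4, 5]
sorted_data, merge_passes = external_sort(data, run_size=2, d_M=2)
print(sorted_data, merge_passes)
```

`heapq.merge` consumes its inputs lazily, which mirrors how a real merge pass needs only one buffer block per input run plus one for output.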

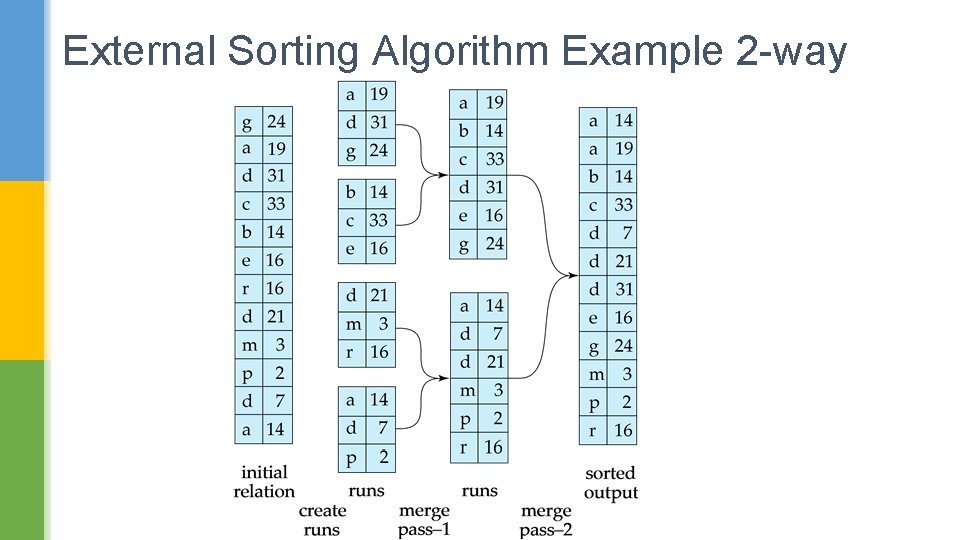

External Sorting Algorithm Example: 2-way

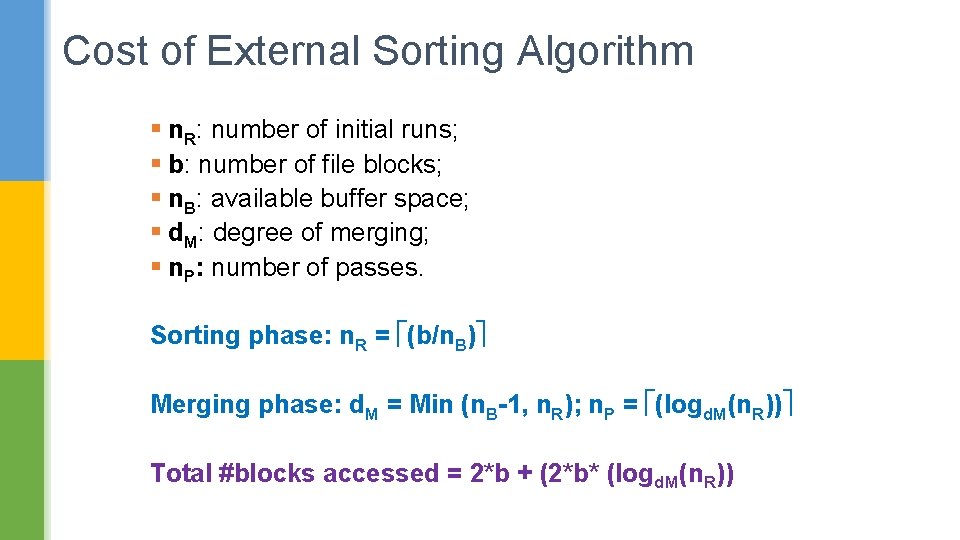

Cost of External Sorting Algorithm
§ $n_R$: number of initial runs;
§ $b$: number of file blocks;
§ $n_B$: available buffer space;
§ $d_M$: degree of merging;
§ $n_P$: number of passes.
Sorting phase: $n_R = \lceil b / n_B \rceil$
Merging phase: $d_M = \min(n_B - 1, n_R)$; $n_P = \lceil \log_{d_M}(n_R) \rceil$
Total #blocks accessed = $2b + 2b \times \lceil \log_{d_M}(n_R) \rceil$
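The external sorting cost formulas can be evaluated with a short sketch. The number of passes is computed by repeated division rather than a floating-point logarithm, which gives the same value as $\lceil \log_{d_M}(n_R) \rceil$ without rounding surprises. The function name and the sample file size are assumptions for illustration.

```python
import math


def external_sort_cost(b, n_B):
    """Return (n_R, d_M, n_P, total block accesses) for sorting b blocks."""
    if n_B < 3:
        raise ValueError("need at least 3 buffers to merge 2 runs")
    n_R = math.ceil(b / n_B)     # initial runs from the sorting phase
    d_M = min(n_B - 1, n_R)      # degree of merging
    n_P = 0                      # merge passes: ceil(log_{d_M}(n_R))
    runs = n_R
    while runs > 1:
        runs = math.ceil(runs / d_M)
        n_P += 1
    total = 2 * b + 2 * b * n_P  # each phase reads and writes every block
    return n_R, d_M, n_P, total


# Hypothetical file: 1024 blocks sorted with 5 buffer blocks.
print(external_sort_cost(b=1024, n_B=5))
```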

Some Meta-Level Evaluation Strategies

Materialization
Materialized evaluation:
§ Evaluate one operation at a time, starting at the lowest level.
§ Use intermediate results materialized into temporary relations to evaluate next-level operations.

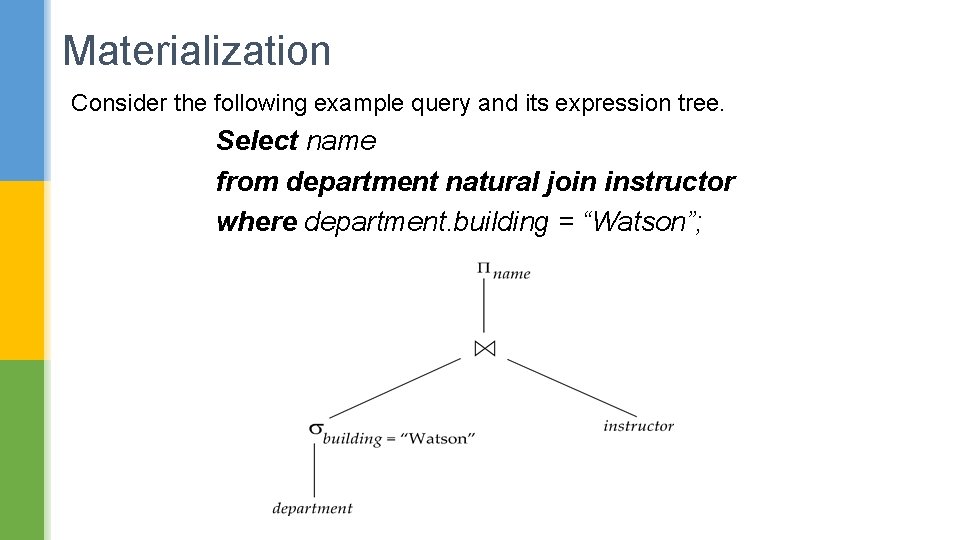

Materialization
Consider the following example query and its expression tree.
Select name from department natural join instructor where department.building = “Watson”;

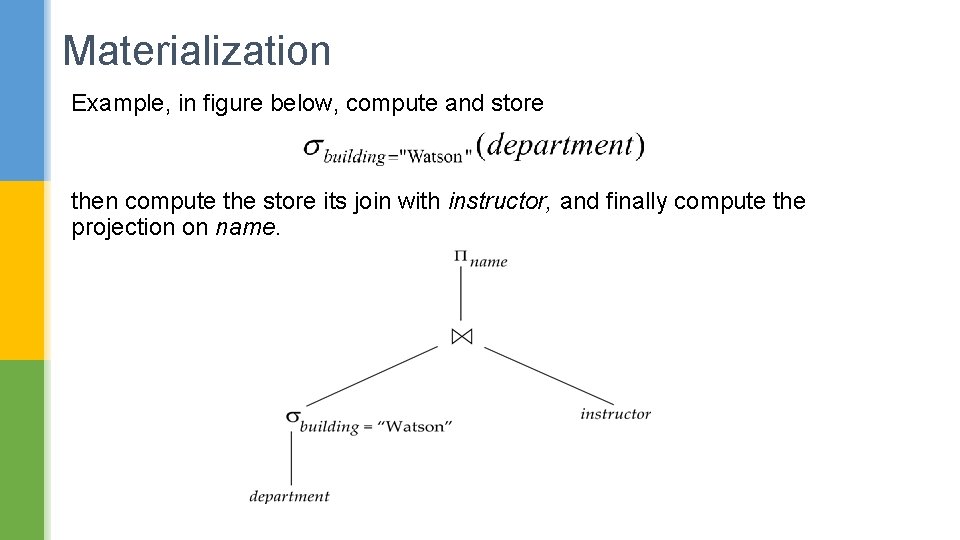

Materialization
Example: in the expression tree for this query, compute and store $\sigma_{building = \text{“Watson”}}(department)$, then compute and store its join with instructor, and finally compute the projection on name.

Materialization Contd.
§ Materialized evaluation is always applicable
§ Cost of writing results to disk and reading them back can be quite high
  § Our cost formulas for operations so far have ignored the cost of writing results to disk, so
  § Overall cost = sum of costs of individual operations + cost of writing intermediate results to disk

Pipelining
Pipelined evaluation:
§ Evaluate several operations simultaneously, passing the results of one operation on to the next.
§ E.g., in the previous expression tree, don’t store the result of $\sigma_{building = \text{“Watson”}}(department)$;
  § instead, pass tuples directly to the join.
  § Similarly, don’t store the result of the join; pass tuples directly to the projection.
§ Any thoughts on its applicability? Can it be used in all kinds of query processing algorithms?

Pipelining
§ Much cheaper than materialization: no need to store a temporary relation to disk.
§ Pipelining may not always be possible, e.g., sort, hash-join.
§ For pipelining to be effective, we need algorithms that generate output tuples even as tuples are received for inputs to the operation.
§ Pipelines can be executed in two ways: demand driven and producer driven

Pipelining: Demand Driven
§ In demand-driven or lazy evaluation
  § System repeatedly requests the next tuple from the top-level operation
  § Each operation requests the next tuple from its children operations as required, in order to output its next tuple
  § In between calls, an operation has to maintain “state” so it knows what to return next
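Python generators give a compact way to sketch demand-driven evaluation: each operator pulls the next tuple from its child only when its own parent asks for one, and the generator's suspended frame is the “state” kept between calls. The operator names, the tuple layouts, and the sample data below are assumptions for this example, which evaluates the “Watson” query from the materialization slides.

```python
def scan(relation):
    """Leaf operator: yield stored tuples one at a time."""
    for t in relation:
        yield t


def select(child, pred):
    """Pull tuples from the child and pass on those satisfying pred."""
    for t in child:
        if pred(t):
            yield t


def nl_join(outer, inner_relation, theta):
    """Pipelined on the outer input; the inner relation is rescanned per tuple."""
    for to in outer:
        for ti in inner_relation:
            if theta(to, ti):
                yield to + ti


def project(child, cols):
    """Keep only the listed column positions."""
    for t in child:
        yield tuple(t[c] for c in cols)


# Hypothetical data: department(dept_name, building), instructor(name, dept_name).
department = [("Biology", "Watson"), ("Comp. Sci.", "Taylor"), ("Physics", "Watson")]
instructor = [("Einstein", "Physics"), ("Crick", "Biology"), ("Katz", "Comp. Sci.")]

plan = project(
    nl_join(select(scan(department), lambda d: d[1] == "Watson"),
            instructor,
            lambda d, i: d[0] == i[1]),
    cols=[2],  # joined tuple is (dept_name, building, name, dept_name)
)
names = list(plan)  # the "system" repeatedly requests the next tuple
print(names)
```

No intermediate relation is ever stored: nothing runs until `list(plan)` starts requesting tuples from the top-level projection.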

Pipelining: Producer Driven
§ In producer-driven or eager pipelining
  § Operators produce tuples eagerly and pass them up to their parents
    § A buffer is maintained between operators; the child puts tuples in the buffer, and the parent removes tuples from the buffer
    § If the buffer is full, the child waits until there is space in the buffer, and then generates more tuples
  § System schedules operations that have space in their output buffer and can process more input tuples
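Producer-driven pipelining can be sketched with threads and bounded queues: each operator runs eagerly in its own thread, and `queue.Queue(maxsize=...)` provides the buffer that makes a child wait when it is full. This is one possible illustration, not how any particular database engine schedules operators; the names `DONE`, `scan_op`, `select_op`, and `run_pipeline` are made up for the example.

```python
import queue
import threading

DONE = object()  # sentinel marking the end of a tuple stream


def scan_op(relation, out):
    """Child operator: eagerly push tuples into its output buffer."""
    for t in relation:
        out.put(t)       # blocks while the buffer is full
    out.put(DONE)


def select_op(inp, out, pred):
    """Parent operator: remove tuples from the input buffer, push matches on."""
    while True:
        t = inp.get()    # blocks while the buffer is empty
        if t is DONE:
            out.put(DONE)
            return
        if pred(t):
            out.put(t)


def run_pipeline(relation, pred, buffer_size=2):
    scan_to_select = queue.Queue(maxsize=buffer_size)
    select_to_root = queue.Queue(maxsize=buffer_size)
    workers = [
        threading.Thread(target=scan_op, args=(relation, scan_to_select)),
        threading.Thread(target=select_op,
                         args=(scan_to_select, select_to_root, pred)),
    ]
    for w in workers:
        w.start()
    results = []
    while True:
        t = select_to_root.get()
        if t is DONE:
            break
        results.append(t)
    for w in workers:
        w.join()
    return results


evens = run_pipeline([(i,) for i in range(10)], lambda t: t[0] % 2 == 0)
print(evens)
```

Here the operating system's thread scheduler stands in for the query engine's scheduler; the small `buffer_size` forces the scan to pause until the selection has consumed some tuples.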
