
Database storage at CERN, IT Department

Agenda
• CERN introduction
• Our setup
• Caching technologies
• Snapshots
• Data motion, compression & deduplication
• Conclusions

CERN
• CERN: European Laboratory for Particle Physics
• Founded in 1954 by 12 countries for fundamental physics research in post-war Europe
• Today 21 member states + world-wide collaborations
• ~1000 MCHF yearly budget
• 2’300 CERN personnel
• 10’000 users from 110 countries

Fundamental Research
• What is 95% of the Universe made of?
• Why do particles have mass?
• Why is there no antimatter left in the Universe?
• What was the Universe like, just after the "Big Bang"?

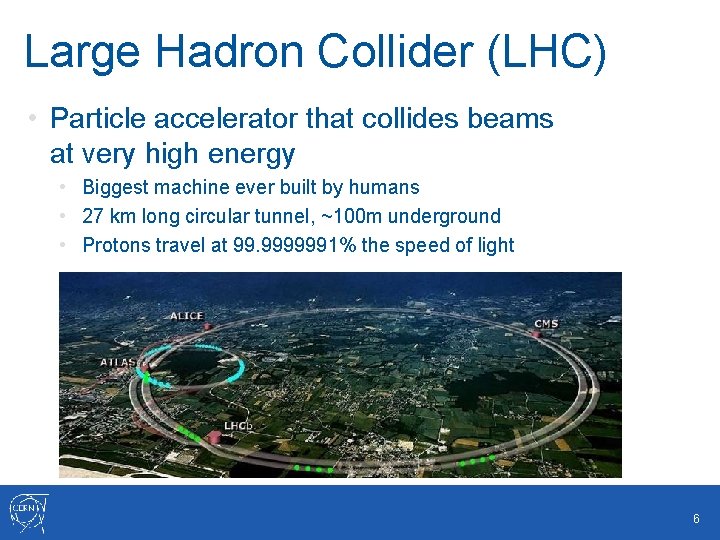

Large Hadron Collider (LHC)
• Particle accelerator that collides beams at very high energy
• Biggest machine ever built by humans
• 27 km long circular tunnel, ~100 m underground
• Protons travel at 99.9999991% of the speed of light

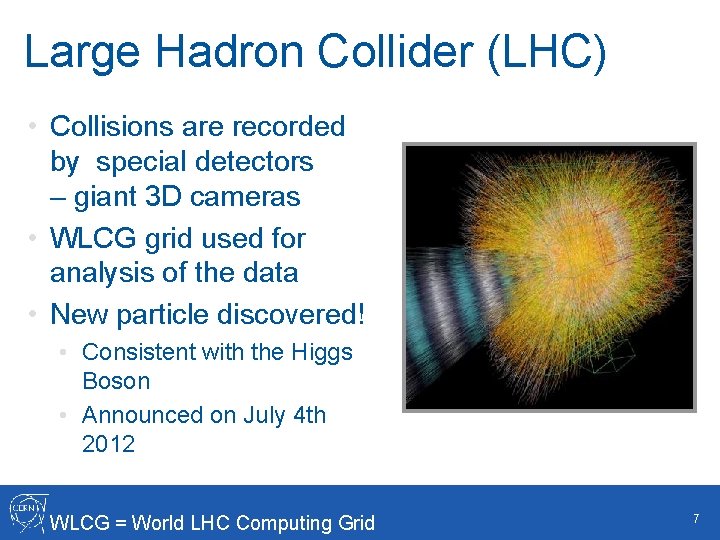

Large Hadron Collider (LHC)
• Collisions are recorded by special detectors (giant 3D cameras)
• WLCG (Worldwide LHC Computing Grid) is used for analysis of the data
• New particle discovered!
  • Consistent with the Higgs boson
  • Announced on July 4th, 2012

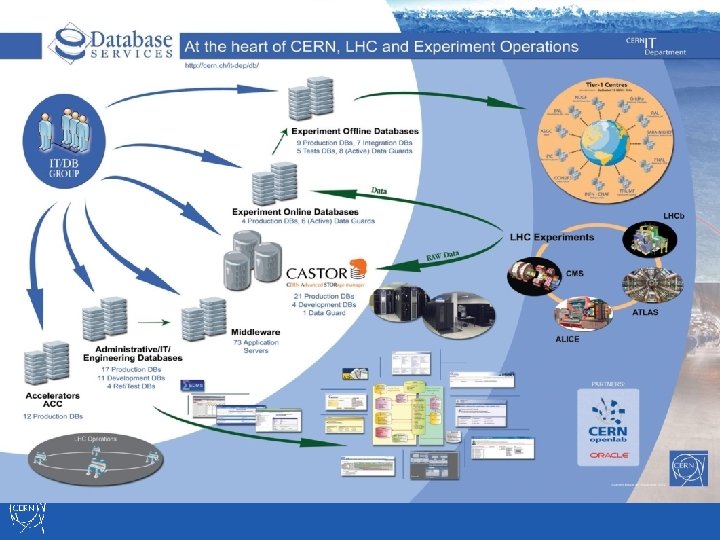


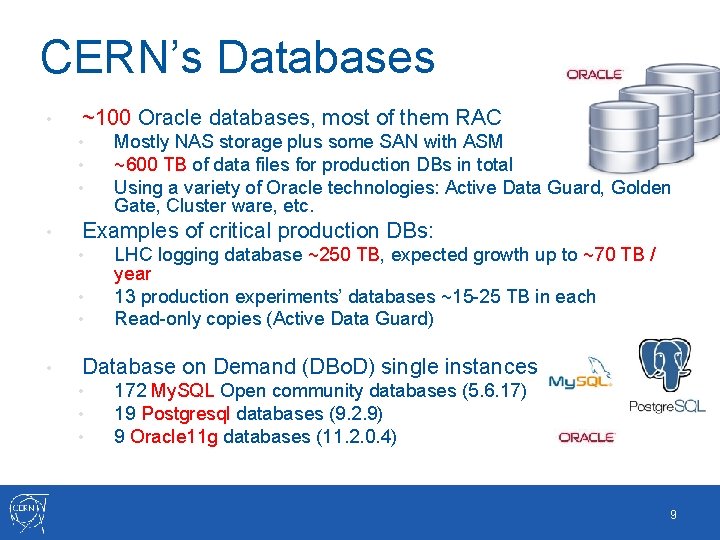

CERN’s Databases
• ~100 Oracle databases, most of them RAC
  • Mostly NAS storage plus some SAN with ASM
  • ~600 TB of data files for production DBs in total
  • Using a variety of Oracle technologies: Active Data Guard, GoldenGate, Clusterware, etc.
• Examples of critical production DBs:
  • LHC logging database: ~250 TB, expected growth up to ~70 TB/year
  • 13 production experiments’ databases: ~15-25 TB each
  • Read-only copies (Active Data Guard)
• Database on Demand (DBoD) single instances
  • 172 MySQL Community databases (5.6.17)
  • 19 PostgreSQL databases (9.2.9)
  • 9 Oracle 11g databases (11.2.0.4)

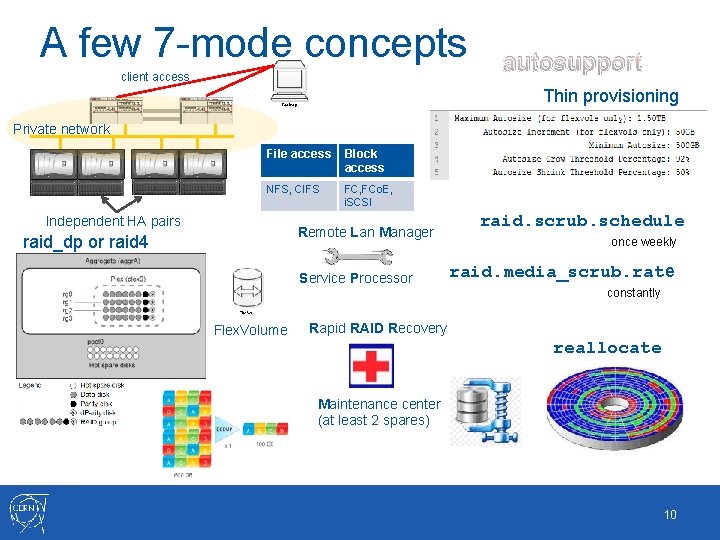

A few 7-mode concepts
• Client access
  • File access: NFS, CIFS
  • Block access: FC, FCoE, iSCSI
• Private network
• Independent HA pairs
• Remote LAN Manager
• Service Processor
• AutoSupport
• Thin provisioning
• FlexVolume
• raid_dp or raid4
• raid.scrub.schedule: once weekly
• raid.media_scrub.rate: constantly
• Rapid RAID Recovery
• reallocate
• Maintenance center (at least 2 spares)

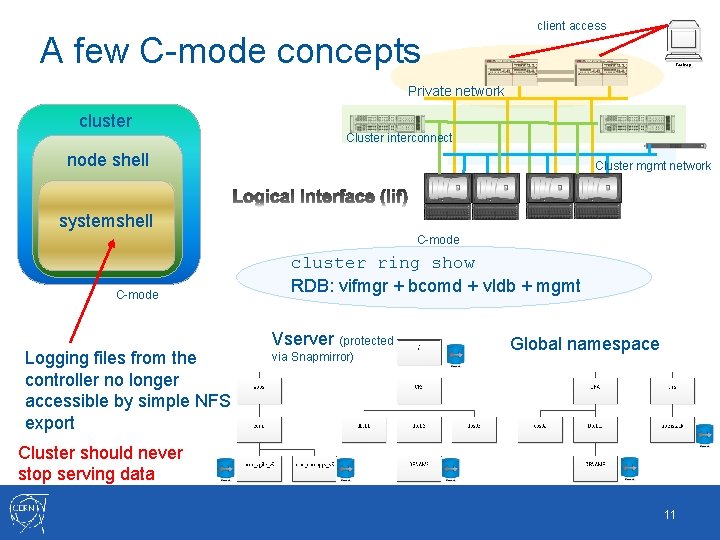

A few C-mode concepts
• Client access
• Private network
• Cluster interconnect
• Cluster mgmt network
• cluster, node shell, systemshell
• Logging files from the controller are no longer accessible by a simple NFS export
• cluster ring show
• RDB: vifmgr + bcomd + vldb + mgmt
• Vserver (protected via SnapMirror)
• Global namespace
• Cluster should never stop serving data

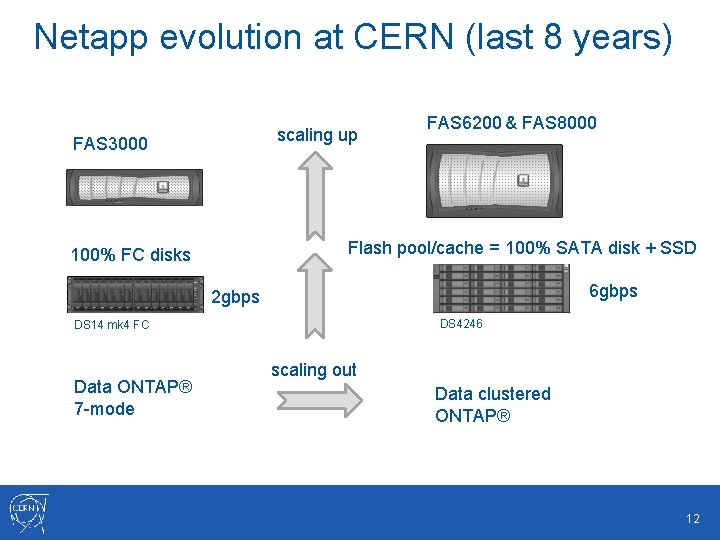

NetApp evolution at CERN (last 8 years)
• Scaling up → scaling out
• Controllers: FAS3000 → FAS6200 & FAS8000
• Disks: 100% FC disks → Flash Pool/Flash Cache = 100% SATA disks + SSD
• Shelves: DS14 mk4 FC (2 Gbps) → DS4246 (6 Gbps)
• Software: Data ONTAP® 7-mode → clustered Data ONTAP®


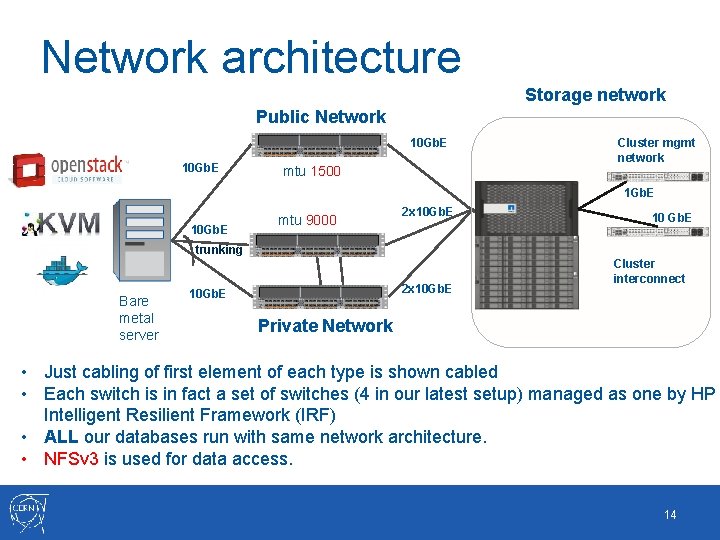

Network architecture
• Public network: 10 GbE, MTU 1500
• Storage network (private): 10 GbE, MTU 9000
• Cluster mgmt network: 1 GbE
• Cluster interconnect: 2 x 10 GbE
• Bare metal server: 2 x 10 GbE, 10 GbE trunking
• Only the cabling of the first element of each type is shown
• Each switch is in fact a set of switches (4 in our latest setup) managed as one by HP Intelligent Resilient Framework (IRF)
• All our databases run with the same network architecture
• NFSv3 is used for data access

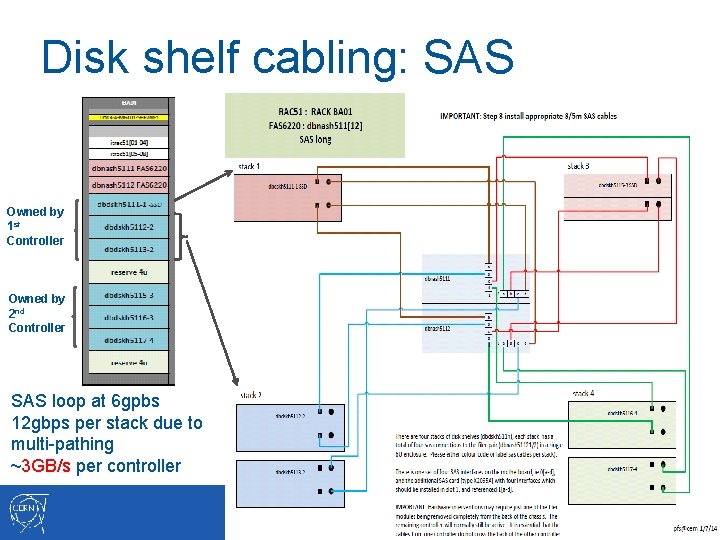

Disk shelf cabling: SAS
• Shelves owned by the 1st controller and shelves owned by the 2nd controller
• SAS loop at 6 Gbps
• 12 Gbps per stack due to multi-pathing
• ~3 GB/s per controller

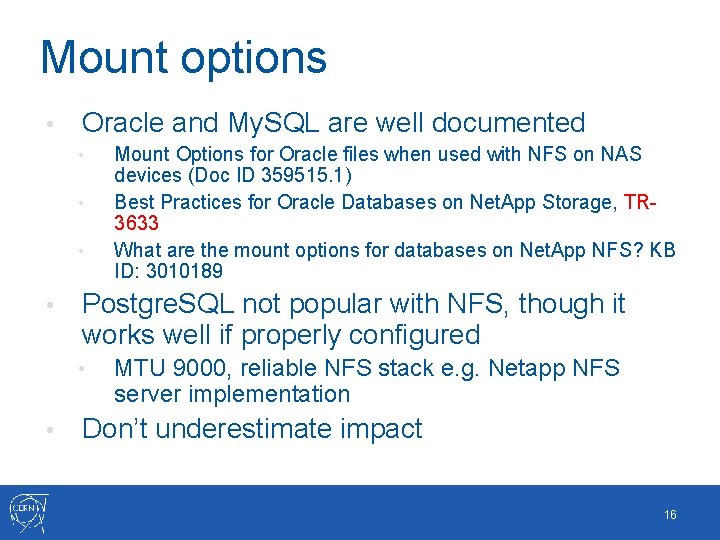

Mount options
• Oracle and MySQL are well documented
  • Mount Options for Oracle files when used with NFS on NAS devices (Doc ID 359515.1)
  • Best Practices for Oracle Databases on NetApp Storage, TR-3633
  • What are the mount options for databases on NetApp NFS? KB ID: 3010189
• PostgreSQL is not popular with NFS, though it works well if properly configured
  • MTU 9000, reliable NFS stack, e.g. the NetApp NFS server implementation
• Don’t underestimate the impact
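Because wrong mount options are easy to miss, a small check can compare the options of a mounted file system against the set recommended by the vendor notes listed above. The following is a minimal sketch: the function names are hypothetical, and the option values in `EXPECTED` are purely illustrative, not CERN's configuration and not a quotation of the Oracle or NetApp documents.

```python
def parse_mount_options(option_string):
    """Turn 'rw,hard,vers=3' into {'rw': None, 'hard': None, 'vers': '3'}."""
    options = {}
    for item in option_string.split(","):
        item = item.strip()
        if not item:
            continue
        key, sep, value = item.partition("=")
        options[key] = value if sep else None
    return options


def find_mismatches(option_string, expected):
    """Return {option: (expected, actual)} for every option that differs.

    `expected` maps an option name to its required value, or to None for
    a flag that only has to be present. A missing option is reported
    with the actual value "<missing>".
    """
    actual = parse_mount_options(option_string)
    mismatches = {}
    for key, want in expected.items():
        if key not in actual:
            mismatches[key] = (want, "<missing>")
        elif want is not None and actual[key] != want:
            mismatches[key] = (want, actual[key])
    return mismatches


# Illustrative values only (assumption), not a recommended configuration.
EXPECTED = {"hard": None, "vers": "3", "rsize": "65536", "wsize": "65536"}
current = "rw,hard,vers=3,rsize=32768,wsize=65536,tcp"
print(find_mismatches(current, EXPECTED))  # {'rsize': ('65536', '32768')}
```

On Linux, the option string for a real mount point could be taken from the fourth field of the matching line in `/proc/mounts`.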

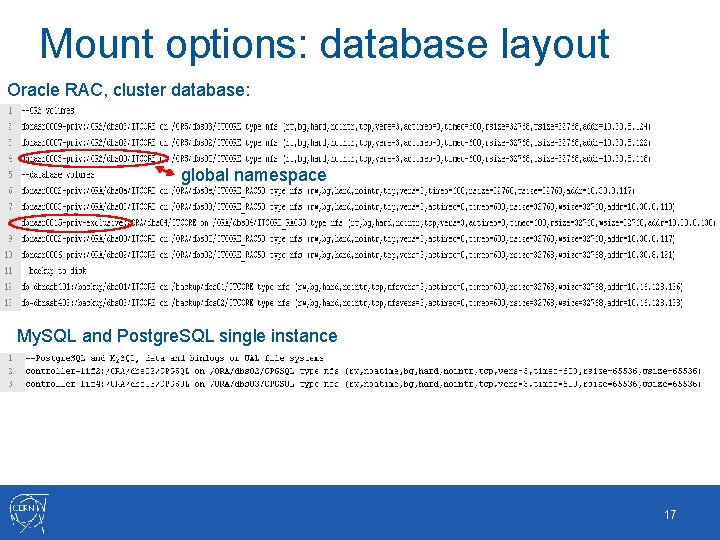

Mount options: database layout
• Oracle RAC, cluster database: global namespace
• MySQL and PostgreSQL single instance

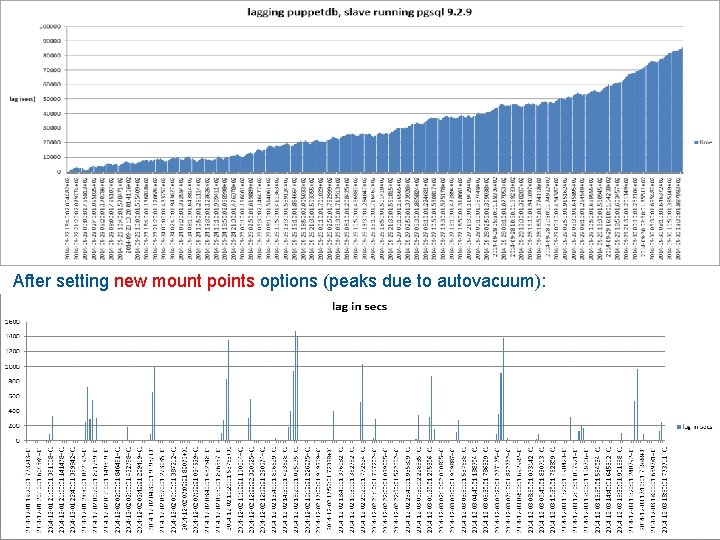

After setting the new mount options (peaks are due to autovacuum):

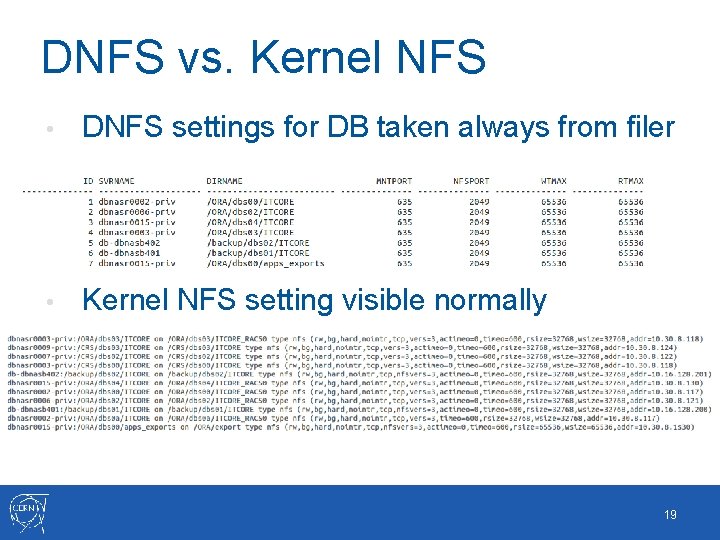

DNFS vs. kernel NFS
• DNFS settings for the DB are always taken from the filer
• Kernel NFS settings are visible in the normal way

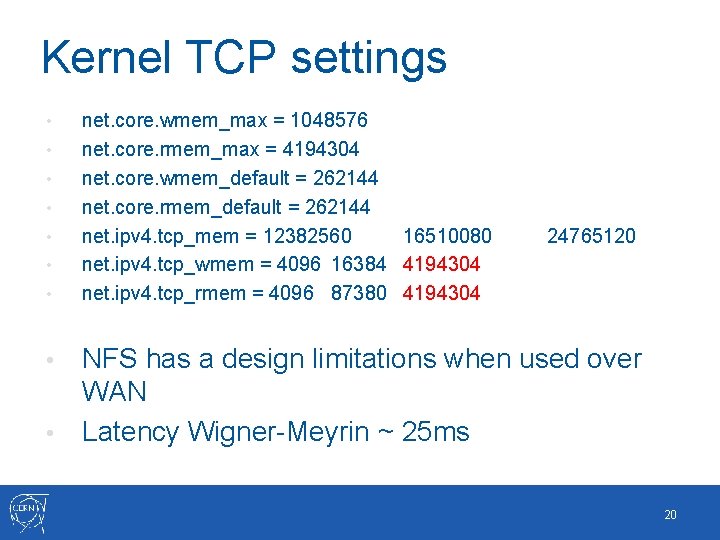

Kernel TCP settings
• net.core.wmem_max = 1048576
• net.core.rmem_max = 4194304
• net.core.wmem_default = 262144
• net.core.rmem_default = 262144
• net.ipv4.tcp_mem = 12382560 16510080 24765120
• net.ipv4.tcp_wmem = 4096 16384 4194304
• net.ipv4.tcp_rmem = 4096 87380 4194304
• NFS has design limitations when used over WAN
  • Latency Wigner-Meyrin ~25 ms
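The WAN limitation can be made concrete with the bandwidth-delay product: a single TCP connection cannot move more than one window of data per round trip. The sketch below assumes the ~25 ms Wigner-Meyrin figure is a round-trip time and that the receive window is capped by the 4194304-byte maximum in the settings above; both are assumptions for illustration.

```python
def max_throughput_bytes_per_s(window_bytes, rtt_seconds):
    """Upper bound for one TCP connection: one window per round trip."""
    return window_bytes / rtt_seconds


def window_needed_bytes(link_bits_per_s, rtt_seconds):
    """Bandwidth-delay product: window needed to keep the link full."""
    return link_bits_per_s / 8 * rtt_seconds


TCP_RMEM_MAX = 4194304      # bytes, third value of net.ipv4.tcp_rmem above
RTT_WAN = 0.025             # seconds, assumed round-trip time
LINK_10GBE = 10e9           # bits per second

wan = max_throughput_bytes_per_s(TCP_RMEM_MAX, RTT_WAN)
needed = window_needed_bytes(LINK_10GBE, RTT_WAN)

print(f"per-connection ceiling over WAN: {wan / 1e6:.1f} MB/s")   # about 167.8 MB/s
print(f"window to fill 10 GbE at 25 ms: {needed / 1e6:.2f} MB")   # about 31.25 MB
```

Under these assumptions a single connection tops out far below the 10 GbE line rate, which is one reason latency matters more than bandwidth for NFS between the two sites.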


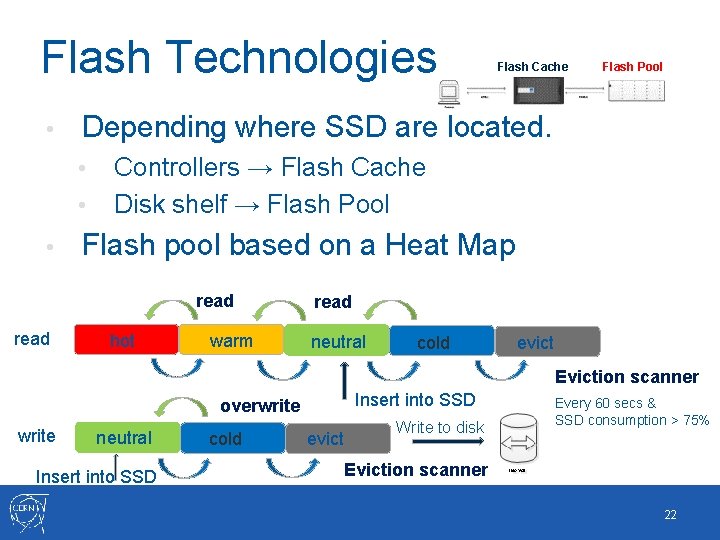

Flash Technologies
• Depending on where the SSDs are located:
  • Controllers → Flash Cache
  • Disk shelf → Flash Pool
• Flash Pool is based on a heat map
  • Read: insert into SSD at neutral; each read moves a block up (neutral → warm → hot); the eviction scanner moves it down (hot → warm → neutral → cold → evict)
  • Overwrite: insert into SSD at neutral; the eviction scanner moves it down (neutral → cold → evict), then write to disk
  • Eviction scanner: every 60 secs & SSD consumption > 75%
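The heat map behaviour can be illustrated with a toy model. This is only a sketch of the idea on the slide, not NetApp's implementation: the class and method names are hypothetical, each scanner pass is assumed to demote every block by exactly one level, and the 60-second period is left to the caller (the model only applies the 75% consumption condition).

```python
READ_LEVELS = ["cold", "neutral", "warm", "hot"]   # index 0 is next to be evicted
WRITE_LEVELS = ["cold", "neutral"]


class FlashPoolModel:
    """Toy heat map for an SSD cache holding `capacity_blocks` blocks."""

    def __init__(self, capacity_blocks, scan_threshold=0.75):
        self.capacity = capacity_blocks
        self.threshold = scan_threshold
        self.blocks = {}            # block id -> (kind, level index)
        self.flushed_to_disk = []   # overwrite blocks written back on eviction

    def read(self, block_id):
        kind, level = self.blocks.get(block_id, ("read", None))
        if level is None:
            # First read: insert into SSD at "neutral".
            self.blocks[block_id] = ("read", READ_LEVELS.index("neutral"))
        elif kind == "read":
            # Repeated read: one level hotter, capped at "hot".
            self.blocks[block_id] = ("read", min(level + 1, len(READ_LEVELS) - 1))
        # Reads of overwrite-cached blocks leave the level unchanged (assumption).

    def overwrite(self, block_id):
        # Overwrites are inserted at "neutral" and are never promoted.
        self.blocks[block_id] = ("write", WRITE_LEVELS.index("neutral"))

    def scan(self):
        """One eviction scanner pass; returns the ids evicted in this pass."""
        if len(self.blocks) / self.capacity <= self.threshold:
            return []               # SSD consumption not above the threshold
        evicted = []
        for block_id, (kind, level) in list(self.blocks.items()):
            if level == 0:          # already "cold": evict
                del self.blocks[block_id]
                evicted.append(block_id)
                if kind == "write":
                    self.flushed_to_disk.append(block_id)
            else:
                self.blocks[block_id] = (kind, level - 1)
        return evicted


pool = FlashPoolModel(capacity_blocks=4)
pool.read("a"); pool.read("a")      # "a" becomes warm
pool.read("b")                      # "b" is neutral
pool.overwrite("c"); pool.overwrite("d")
print(pool.scan())                  # [] : everything cools by one level
print(pool.scan())                  # ['b', 'c', 'd'] : only the warmer "a" survives
```

Frequently read blocks survive scanner passes that evict blocks touched only once, which is the point of tracking temperature instead of using plain FIFO eviction.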

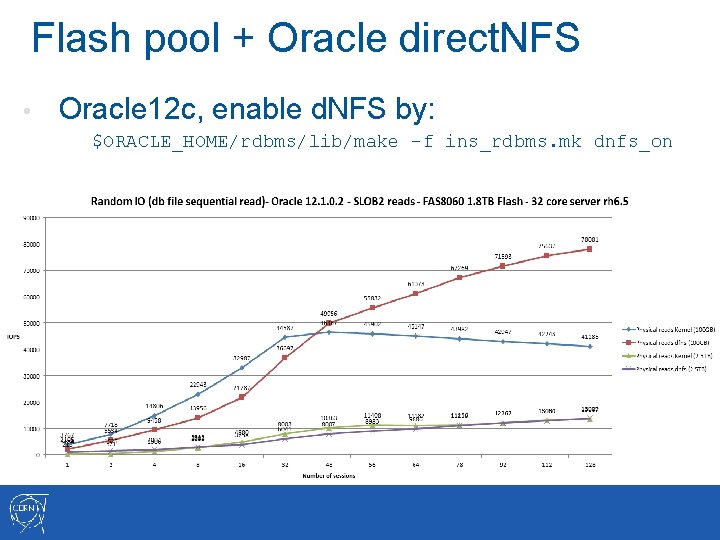

Flash pool + Oracle directNFS
• Oracle 12c, enable dNFS by: cd $ORACLE_HOME/rdbms/lib && make -f ins_rdbms.mk dnfs_on


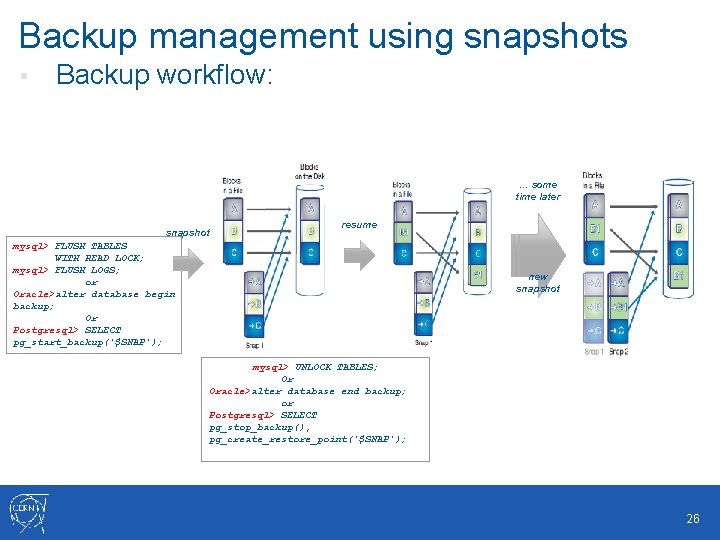

Backup management using snapshots
• Backup workflow: quiesce → snapshot → resume → … some time later → new snapshot
• Before the snapshot:
  • mysql> FLUSH TABLES WITH READ LOCK; mysql> FLUSH LOGS;
  • or Oracle> alter database begin backup;
  • or PostgreSQL> SELECT pg_start_backup('$SNAP');
• After the snapshot (resume):
  • mysql> UNLOCK TABLES;
  • or Oracle> alter database end backup;
  • or PostgreSQL> SELECT pg_stop_backup(), pg_create_restore_point('$SNAP');
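The workflow can be sketched as a small RDBMS-agnostic wrapper. This is an illustration under stated assumptions, not the API used at CERN: `execute` and `take_snapshot` are hypothetical callables supplied by the caller (one sends a statement to the database, the other triggers the storage snapshot), and the statements are the ones quoted on the slide.

```python
from contextlib import contextmanager

# Statements run before the storage snapshot, per RDBMS (from the slide).
QUIESCE = {
    "mysql": ["FLUSH TABLES WITH READ LOCK", "FLUSH LOGS"],
    "oracle": ["alter database begin backup"],
    "postgresql": ["SELECT pg_start_backup('{snap}')"],
}

# Statements run after the storage snapshot, per RDBMS (from the slide).
RESUME = {
    "mysql": ["UNLOCK TABLES"],
    "oracle": ["alter database end backup"],
    "postgresql": ["SELECT pg_stop_backup(), pg_create_restore_point('{snap}')"],
}


@contextmanager
def quiesced(execute, rdbms, snap):
    """Put the database in backup mode and always take it out again.

    `execute` must send every statement over the same session: the MySQL
    read lock is released as soon as the session that took it ends.
    """
    for statement in QUIESCE[rdbms]:
        execute(statement.format(snap=snap))
    try:
        yield
    finally:
        # Runs even if the snapshot fails, so the database is never left locked.
        for statement in RESUME[rdbms]:
            execute(statement.format(snap=snap))


def backup(execute, take_snapshot, rdbms, snap):
    """Quiesce, snapshot, resume."""
    with quiesced(execute, rdbms, snap):
        take_snapshot(snap)


# Dry run: record what would be executed instead of talking to a database.
log = []
backup(log.append, lambda name: log.append(f"<storage snapshot {name}>"), "mysql", "snap1")
print(log)
```

Putting the resume statements in a `finally` block is the main design choice: a failed snapshot call must not leave MySQL tables locked or an Oracle database stuck in backup mode.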

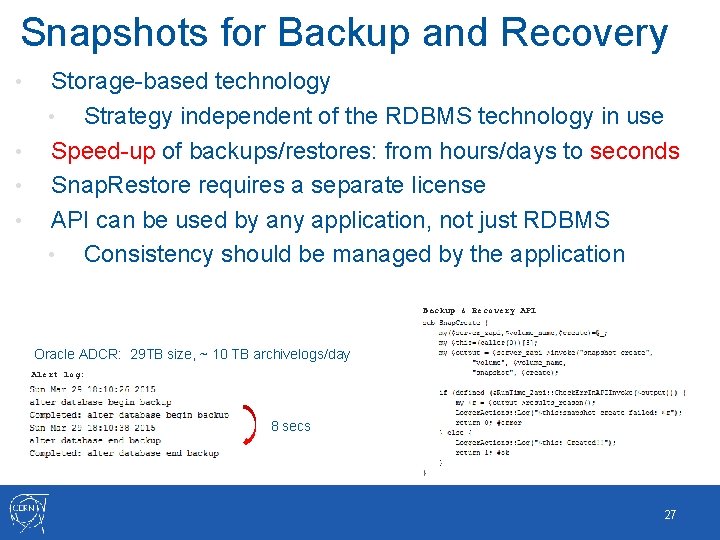

Snapshots for Backup and Recovery
• Storage-based technology
  • Strategy independent of the RDBMS technology in use
• Speed-up of backups/restores: from hours/days to seconds
• SnapRestore requires a separate license
• API can be used by any application, not just RDBMS
  • Consistency should be managed by the application
• Backup & Recovery API
• Oracle ADCR: 29 TB size, ~10 TB archivelogs/day
  • Alert log: 8 secs

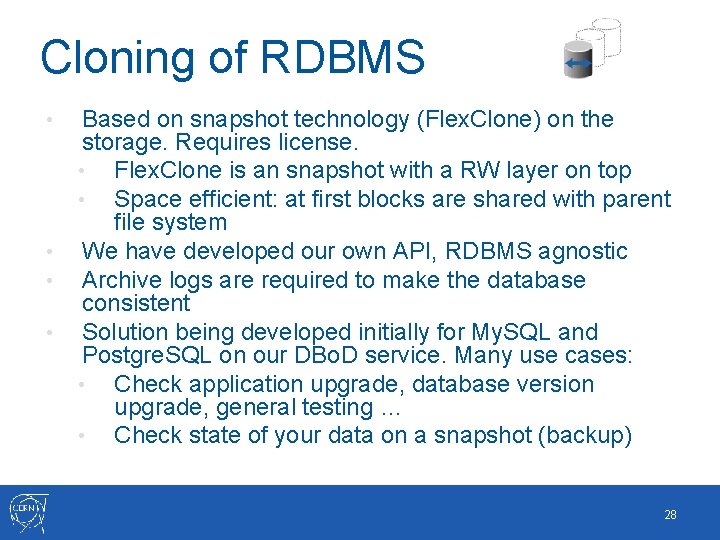

Cloning of RDBMS
• Based on snapshot technology (FlexClone) on the storage. Requires a license.
  • FlexClone is a snapshot with a RW layer on top
  • Space efficient: at first, blocks are shared with the parent file system
• We have developed our own API, RDBMS agnostic
• Archive logs are required to make the database consistent
• Solution being developed initially for MySQL and PostgreSQL on our DBoD service
• Many use cases:
  • Check application upgrade, database version upgrade, general testing …
  • Check state of your data on a snapshot (backup)
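The "snapshot with a RW layer on top" idea is copy-on-write, and a toy model shows why a fresh clone costs almost no space. The classes below are hypothetical and only model the concept; they say nothing about how FlexClone is implemented inside ONTAP.

```python
class Snapshot:
    """Read-only view of a volume's blocks at one point in time."""

    def __init__(self, blocks):
        self._blocks = dict(blocks)   # frozen copy of block number -> data

    def read(self, block_no):
        return self._blocks[block_no]


class Clone:
    """Writable layer on top of a snapshot; unmodified blocks stay shared."""

    def __init__(self, parent):
        self._parent = parent
        self._overlay = {}            # only blocks written through the clone

    def read(self, block_no):
        if block_no in self._overlay:
            return self._overlay[block_no]
        return self._parent.read(block_no)   # shared with the parent

    def write(self, block_no, data):
        self._overlay[block_no] = data       # the parent is never modified

    def private_blocks(self):
        """Number of blocks that consume space of their own."""
        return len(self._overlay)


snap = Snapshot({0: "header", 1: "table data", 2: "index"})
clone = Clone(snap)
print(clone.private_blocks())   # 0: a new clone shares every block
clone.write(1, "upgraded table data")
print(clone.read(1), "|", snap.read(1))
print(clone.private_blocks())   # 1: only the changed block is private
```

This is why a clone suits upgrade tests: the test can rewrite as much as it likes while the backup snapshot underneath stays intact.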

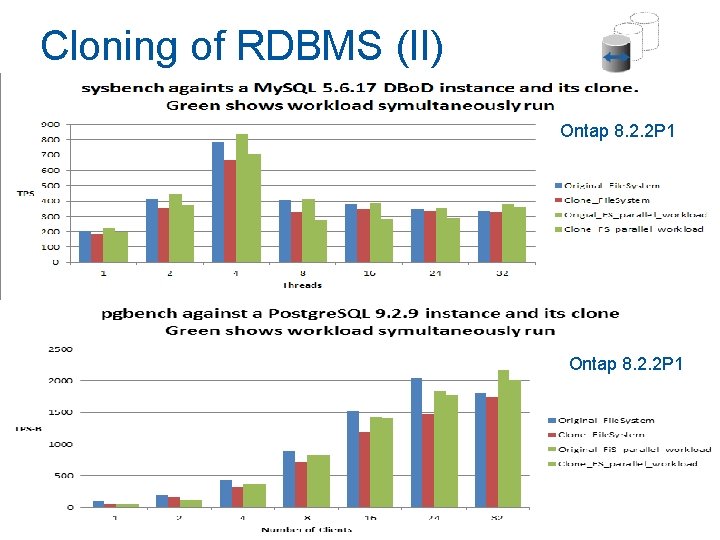

Cloning of RDBMS (II)
• ONTAP 8.2.2P1


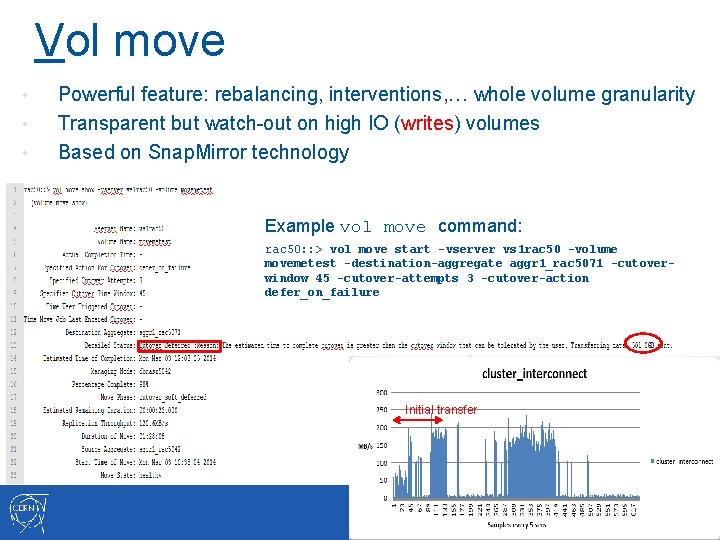

Vol move
• Powerful feature: rebalancing, interventions, … at whole-volume granularity
• Transparent, but watch out on volumes with high IO (writes)
• Based on SnapMirror technology
• Example vol move command:
  rac50::> vol move start -vserver vs1rac50 -volume movemetest -destination-aggregate aggr1_rac5071 -cutover-window 45 -cutover-attempts 3 -cutover-action defer_on_failure
• Initial transfer

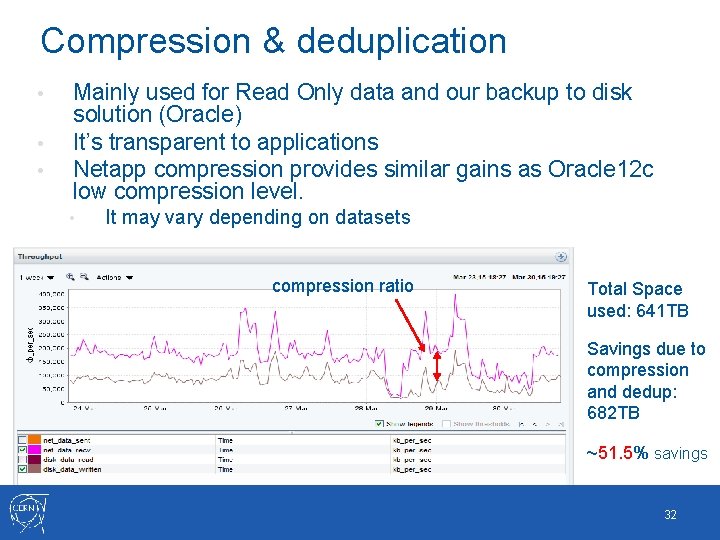

Compression & deduplication
• Mainly used for read-only data and our backup-to-disk solution (Oracle)
• It’s transparent to applications
• NetApp compression provides gains similar to the Oracle 12c low compression level
  • It may vary depending on the dataset's compression ratio
• Total space used: 641 TB
• Savings due to compression and dedup: 682 TB (~51.5% savings)
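The ~51.5% figure follows from the two totals if savings are measured against the logical size, i.e. the space the data would occupy without compression and dedup. The short check below makes that arithmetic explicit; the function name is hypothetical.

```python
def savings_percent(used_tb, saved_tb):
    """Savings as a share of the logical (pre-reduction) data size."""
    logical_tb = used_tb + saved_tb
    return 100.0 * saved_tb / logical_tb


# 641 TB used on disk, 682 TB saved: 682 / (641 + 682) = 682 / 1323
print(f"{savings_percent(641, 682):.1f}%")   # 51.5%
```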

Conclusions
• Positive experience so far running on C-mode
• Mid to high end NetApp NAS provide good performance using the Flash Pool SSD caching solution
• Flexibility with clustered ONTAP helps to reduce the investment
  • Same infrastructure used to provide iSCSI object storage via Cinder
• Design of stacks and network access requires careful planning
• Immortal cluster

Questions
