
Database replication policies for dynamic content applications

Gokul Soundararajan, Cristiana Amza, Ashvin Goel
University of Toronto

Presented by Ahmed Ataullah
Wednesday, November 22nd, 2006

The plan
- Motivation and Introduction
- Background
- Suggested Technique
- Optimization/Feedback ‘loop’
- Summary of Results
- Discussion

The Problem
- 3-Tier Framework
  - Recall: the benefits of partial/full database replication in dynamic content (web) applications
  - We need to address some issues in the framework as presented in ‘Ganymed’
- Problem:
  - How many replicas do we allocate?
  - How do we take care of overload situations while maximizing utilization and minimizing the number of replicas?
- Solution:
  - Dynamically (de)allocate replicas as needed

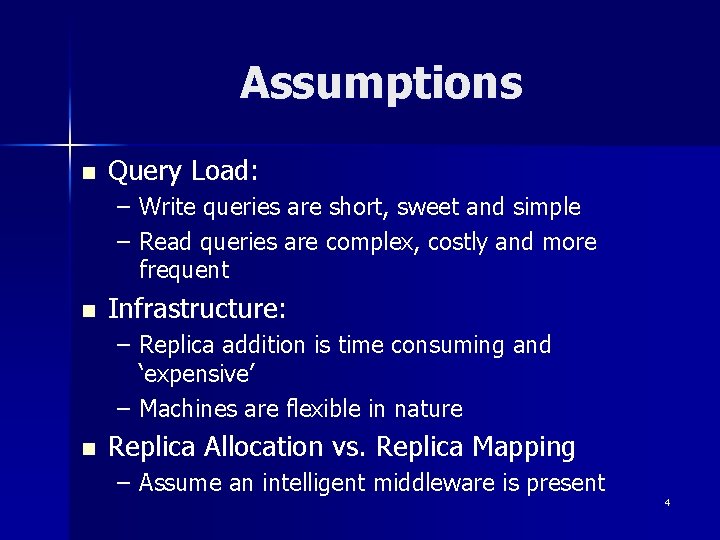

Assumptions
- Query load:
  - Write queries are short, sweet and simple
  - Read queries are complex, costly and more frequent
- Infrastructure:
  - Replica addition is time consuming and ‘expensive’
  - Machines are flexible in nature
- Replica allocation vs. replica mapping
  - Assume an intelligent middleware is present

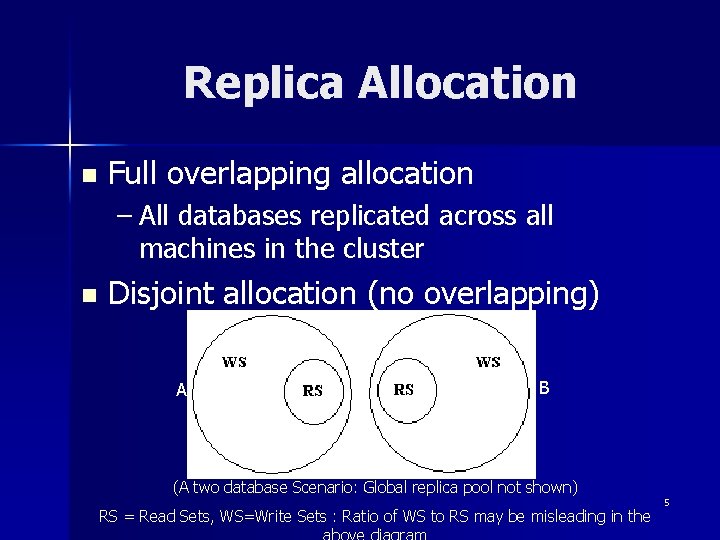

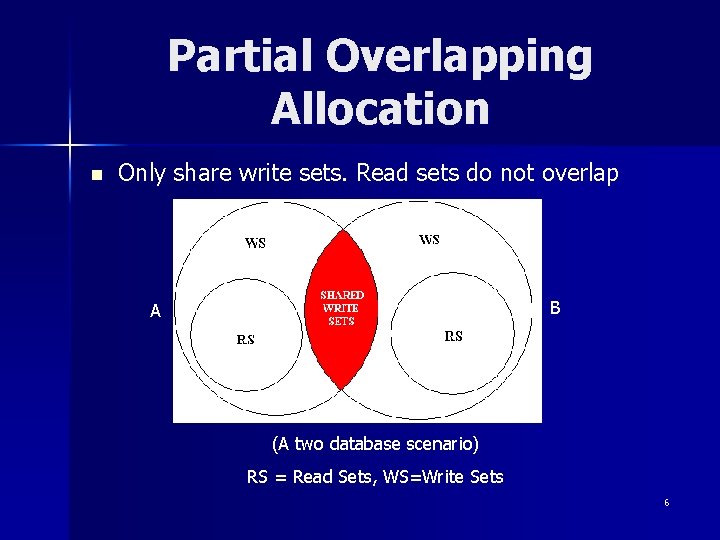

Replica Allocation
- Full overlapping allocation
  - All databases replicated across all machines in the cluster
- Disjoint allocation (no overlapping)

(Figure: databases A and B in a two-database scenario; global replica pool not shown. RS = read sets, WS = write sets. Ratio of WS to RS may be misleading in the …)

Partial Overlapping Allocation
- Only write sets are shared; read sets do not overlap

(Figure: databases A and B in a two-database scenario. RS = read sets, WS = write sets.)

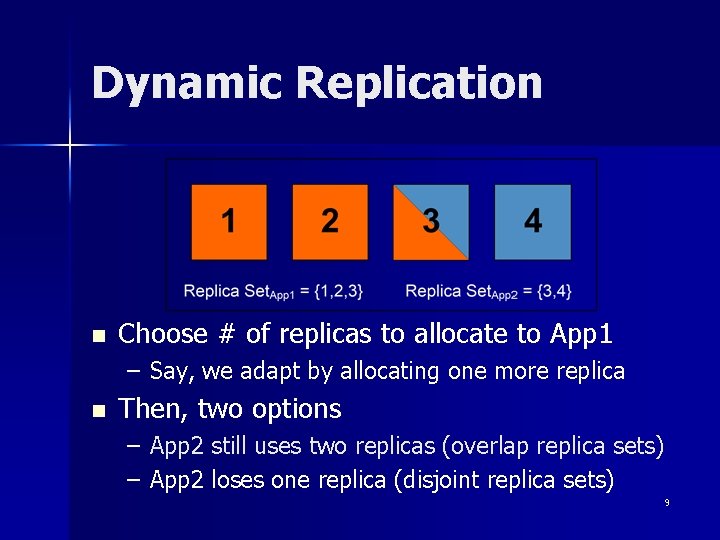

Dynamic Replication (Eurosys 2006 Slides)
- Assume a cluster hosts 2 applications
  - App 1 (Red) using 2 machines
  - App 2 (Blue) using 2 machines
- Assume App 1 has a load spike

Dynamic Replication
- Choose the number of replicas to allocate to App 1
  - Say, we adapt by allocating one more replica
- Then, two options
  - App 2 still uses two replicas (overlapping replica sets)
  - App 2 loses one replica (disjoint replica sets)
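The two options can be made concrete with a small sketch. The machine names and the `add_replica` helper are hypothetical and not from the slides. The sketch also assumes that the extra replica for App 1 is one of the machines App 2 currently uses. It only shows how the two replica sets differ under overlapping versus disjoint allocation.

```python
def add_replica(app1, app2, machine, disjoint):
    """Give `machine` to app1 (hypothetical helper, not from the slides).

    With disjoint=True, app2 gives the machine up; otherwise both
    applications keep using it (overlapping replica sets).
    """
    new_app1 = app1 | {machine}
    new_app2 = app2 - {machine} if disjoint else set(app2)
    return new_app1, new_app2


# Assumed starting point: each application uses 2 machines.
app1 = {"m1", "m2"}
app2 = {"m3", "m4"}

# App 1 has a load spike and receives one more replica, here "m3".
overlap = add_replica(app1, app2, "m3", disjoint=False)
disjoint = add_replica(app1, app2, "m3", disjoint=True)

print("overlap :", sorted(overlap[0]), sorted(overlap[1]))
print("disjoint:", sorted(disjoint[0]), sorted(disjoint[1]))
```

In the overlapping case App 2 keeps two replicas but shares one machine with App 1. In the disjoint case App 2 is left with a single replica.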

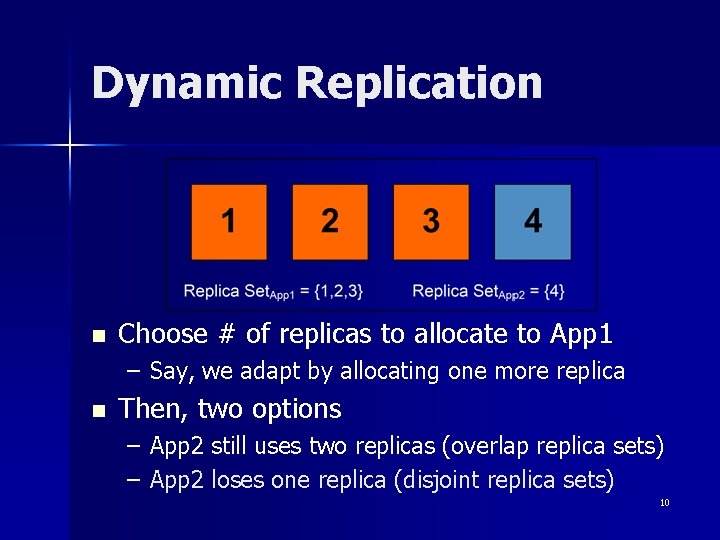



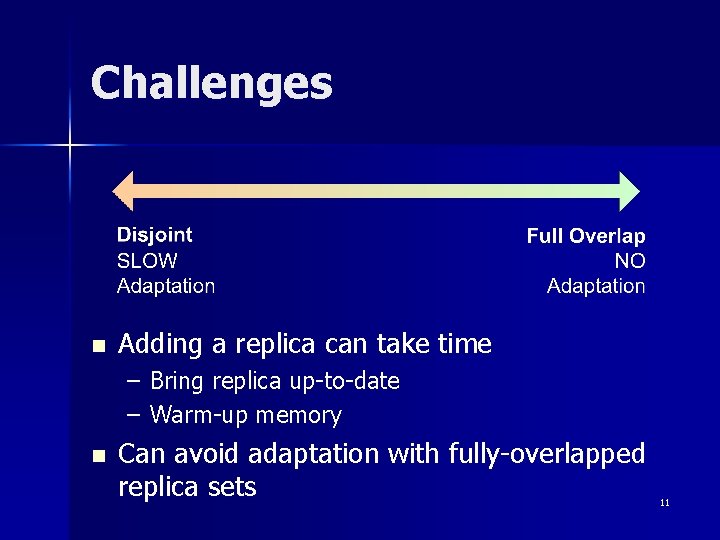

Challenges
- Adding a replica can take time
  - Bring replica up-to-date
  - Warm-up memory
- Can avoid adaptation with fully-overlapped replica sets

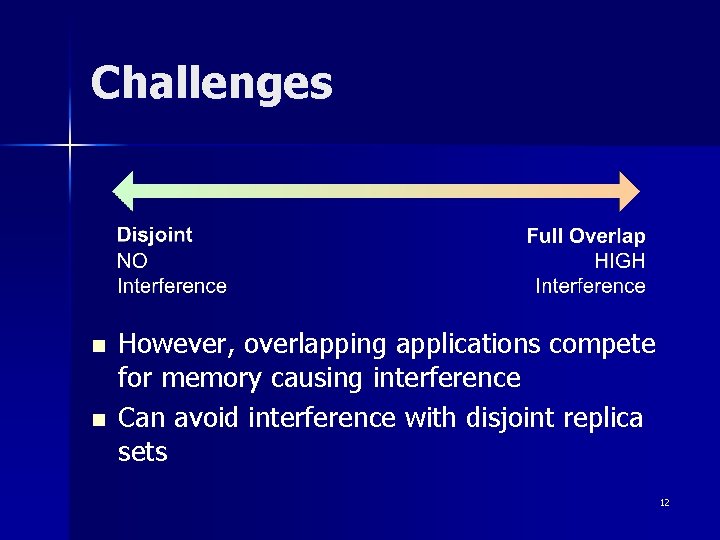


Challenges
- However, overlapping applications compete for memory, causing interference
- Can avoid interference with disjoint replica sets

Tradeoff between adaptation delay and interference

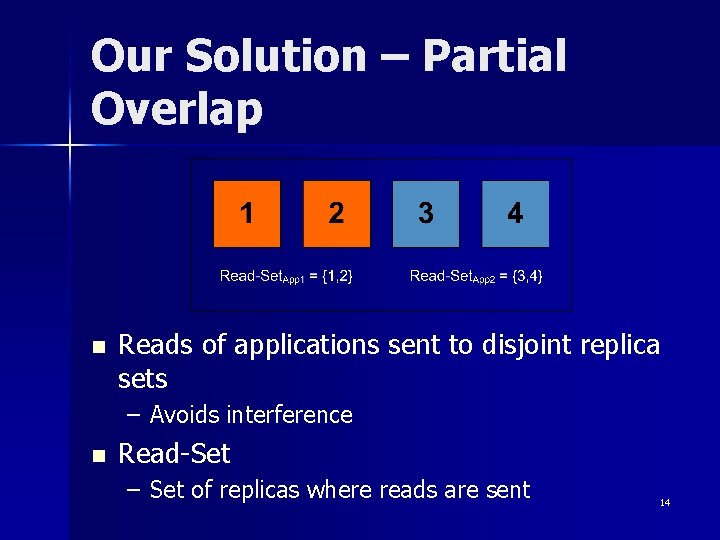

Our Solution: Partial Overlap
- Reads of applications sent to disjoint replica sets
  - Avoids interference
- Read-Set
  - Set of replicas where reads are sent

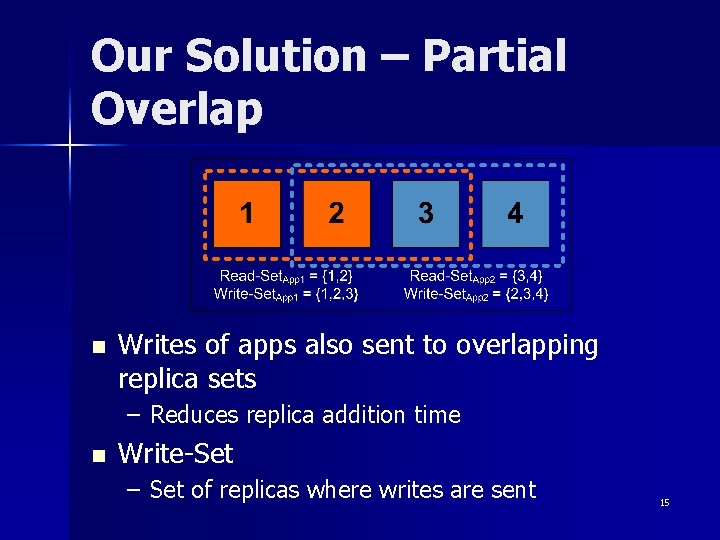

Our Solution: Partial Overlap
- Writes of apps also sent to overlapping replica sets
  - Reduces replica addition time
- Write-Set
  - Set of replicas where writes are sent
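A minimal sketch of how reads and writes might be routed under partial overlap. The `ReplicaRouter` class, the machine names, and the round-robin choice for reads are illustrative assumptions, not details from the slides. The sketch also assumes each application's read-set is contained in its write-set, so that reads only reach replicas that receive every write.

```python
import itertools


class ReplicaRouter:
    """Hypothetical per-application router (not from the slides)."""

    def __init__(self, read_set, write_set):
        # Assumption: reads only go to replicas that also receive all writes.
        if not set(read_set) <= set(write_set):
            raise ValueError("read-set must be a subset of the write-set")
        self.read_set = list(read_set)
        self.write_set = list(write_set)
        # Assumption: reads are balanced round-robin over the read-set.
        self._next_reader = itertools.cycle(self.read_set)

    def route(self, is_write):
        """Return the list of replicas that should execute the query."""
        if is_write:
            return list(self.write_set)  # every write-set replica applies it
        return [next(self._next_reader)]  # one read-set replica serves it


# Two applications: read-sets are disjoint, write-sets overlap.
app_a = ReplicaRouter(read_set=["m1", "m2"], write_set=["m1", "m2", "m3"])
app_b = ReplicaRouter(read_set=["m3", "m4"], write_set=["m2", "m3", "m4"])

print("A write ->", app_a.route(is_write=True))
print("B write ->", app_b.route(is_write=True))
```

Because `m3` already applies App A's writes, it could be added to App A's read-set without first being brought up to date, which is the reduction in replica addition time that the slide refers to.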

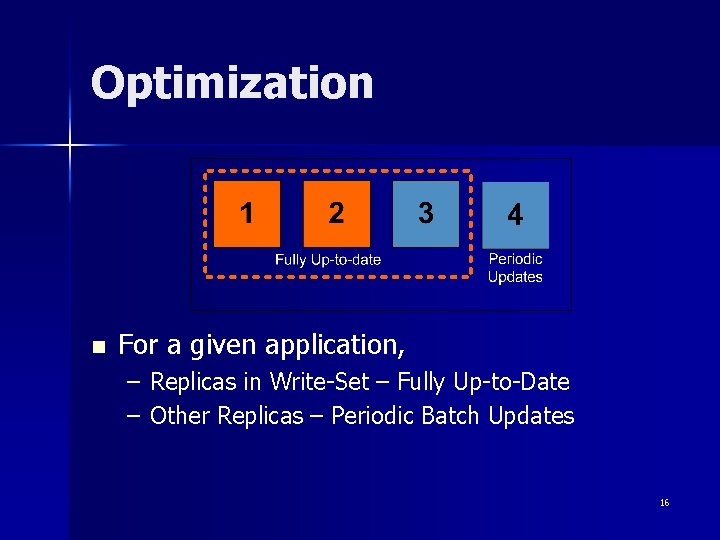

Optimization
- For a given application:
  - Replicas in the Write-Set: fully up-to-date
  - Other replicas: periodic batch updates
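The difference between the two kinds of replica can be sketched as follows. The `UpdatePropagator` class and its method names are hypothetical, and updates are modeled as plain strings. The slides do not say how batches are formed or how often they are sent, so `flush` is simply called by hand here.

```python
class UpdatePropagator:
    """Hypothetical model of update propagation for one application."""

    def __init__(self, write_set, other_replicas):
        self.write_set = list(write_set)
        # Updates already applied on each replica, in order.
        self.applied = {r: [] for r in list(write_set) + list(other_replicas)}
        # Updates queued for replicas outside the write-set.
        self.pending = {r: [] for r in other_replicas}

    def write(self, update):
        # Write-set replicas apply the update immediately.
        for replica in self.write_set:
            self.applied[replica].append(update)
        # Other replicas only queue it for the next batch.
        for queue in self.pending.values():
            queue.append(update)

    def flush(self):
        # Periodic batch update: apply everything queued so far.
        for replica, queue in self.pending.items():
            self.applied[replica].extend(queue)
            queue.clear()


p = UpdatePropagator(write_set=["m1", "m2"], other_replicas=["m3"])
p.write("u1")
p.write("u2")
print("before flush:", p.applied)
p.flush()
print("after flush: ", p.applied)
```

Until `flush` runs, `m3` lags behind the write-set replicas, which is why only write-set replicas are described as fully up-to-date.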

Secondary Implementation Details
- Scheduler(s):
  - Separate read-only from read/write queries
  - One-copy serializability is guaranteed
- Optimization:
  - Scheduler also stores some cached information (queries, write sets, etc.) to reduce warm-up/ready time
  - Conflict awareness at the scheduler layer

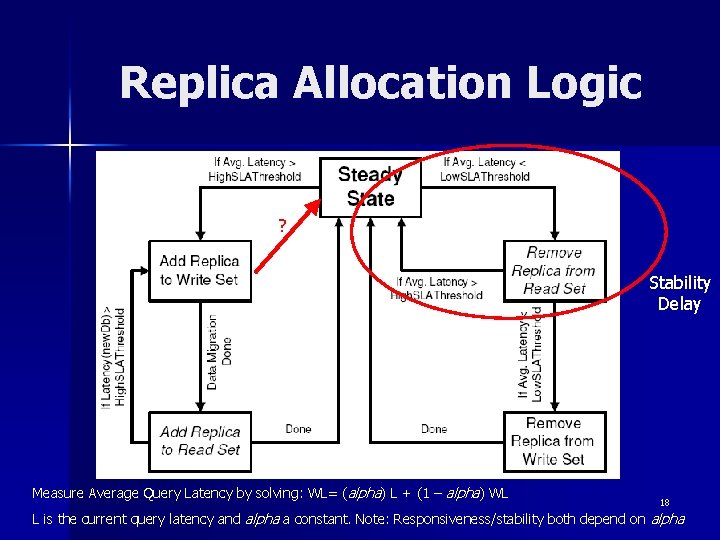

Replica Allocation Logic

(Flowchart labels: Stability Delay)

- Measure average query latency using: $WL = \alpha L + (1 - \alpha)\, WL$
  - $L$ is the current query latency and $\alpha$ is a constant
- Note: responsiveness and stability both depend on $\alpha$
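The latency formula on this slide has the form of an exponentially weighted moving average, where the WL on the right-hand side is the previous value. The sketch below shows the update and how two values of alpha react to a single latency spike. The function name, the sample latencies, and the two alpha values are made up for illustration.

```python
def update_weighted_latency(wl, latency, alpha):
    """One step of WL = alpha * L + (1 - alpha) * WL.

    `wl` is the previous weighted latency, `latency` the current sample.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return alpha * latency + (1.0 - alpha) * wl


# Made-up latency samples (ms) containing one spike.
samples = [100.0, 100.0, 400.0, 100.0, 100.0]

for alpha in (0.1, 0.9):
    wl = samples[0]  # assumption: start from the first sample
    trace = []
    for latency in samples[1:]:
        wl = update_weighted_latency(wl, latency, alpha)
        trace.append(round(wl, 1))
    print(f"alpha={alpha}: {trace}")
```

A small alpha smooths the spike away (more stable, slower to react), while a large alpha follows it closely (more responsive, less stable).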

Results
- It works…

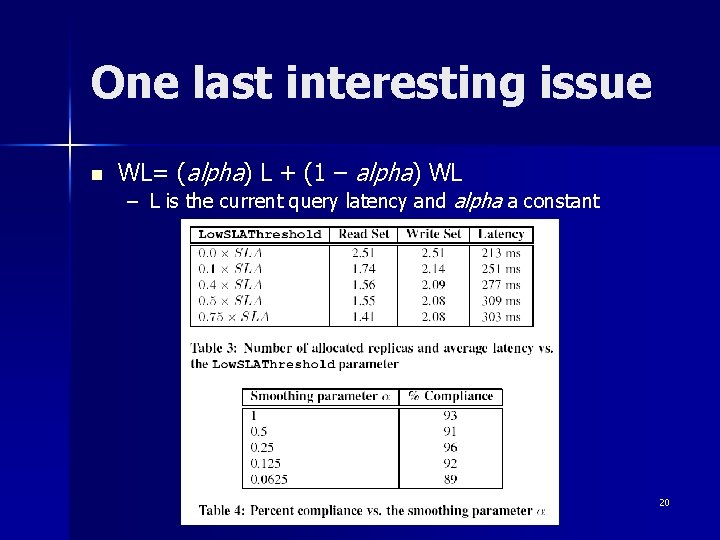

One last interesting issue
- $WL = \alpha L + (1 - \alpha)\, WL$
  - $L$ is the current query latency and $\alpha$ is a constant
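Writing the update with an explicit step index shows why the choice of alpha matters. The subscripts and the initial value $WL_0$ are added here for illustration and do not appear on the slide.

```latex
\begin{aligned}
WL_t &= \alpha L_t + (1-\alpha)\, WL_{t-1} \\
     &= \alpha L_t + \alpha (1-\alpha)\, L_{t-1} + (1-\alpha)^2\, WL_{t-2} \\
     &= \alpha \sum_{k=0}^{t-1} (1-\alpha)^k L_{t-k} + (1-\alpha)^t\, WL_0
\end{aligned}
```

A sample that is $k$ steps old carries weight $\alpha (1-\alpha)^k$. With alpha close to 1, old samples are forgotten almost immediately and WL tracks the current latency. With alpha close to 0, WL changes slowly and a load spike takes many samples to register.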

Discussion
- Questionable assumptions
  - Are write requests really (always) simple?
  - Scalability beyond 60 replicas (is it an issue?)
- How closely does this represent a real data center situation?
  - Load contention issues
  - Overlap assignment
  - Determination of alpha(s)
- Actual cost savings vs. implied cost savings
  - Depends on SLA etc.
  - Depends on hardware leasing agreements
- The issue of readiness in secondary replicas
  - What level of ‘warmth’ is good enough for each application? Can some machines be turned off?
  - What about contention in many databases trying to stay warm?
- Management concerns
  - Can we truly provide strong guarantees for keeping our end of the SLA promised?
