Database Normalization Revisited An informationtheoretic approach Leonid Libkin

Database Normalization Revisited: An information-theoretic approach Leonid Libkin Joint work with Marcelo Arenas and Solmaz Kolahi

Sources l M. Arenas, L. An information-theoretic approach to normal forms for relational and XML data, PODS’ 03; J. ACM, 2005. l S. Kolahi, L. Redundancy vs dependency- preservation in normalisation: an informationtheoretic analysis of 3 NF, PODS’ 06.

Outline l Part 1 Database Normalization from the 1970 s and 1980 s l Part 2: Classical theory re done: new justification for normal forms: • • BCNF and relatives (academic, eliminate redundancies) 3 NF (practical, may leave some redundancies) l Part 3: An XML application 2

If you haven’t taught “Intro to DB” lately… l Design: decide how to represent the information in a particular data model. • Even for simple application domains there is a large number of ways of representing the data of interest. l We have to design the schema of the database. • Set of relations. • Set of attributes for each relation. • Set of data dependencies. 3

Normalization Theory Today l Normalization theory for relational databases was developed in the 70 s and 80 s. l Why do we need normalization theory today? • New data models have emerged: XML. • XML documents can contain redundant information. l Redundant information in XML documents: • Can be discovered if the user provides semantic information. • Can be eliminated. 15

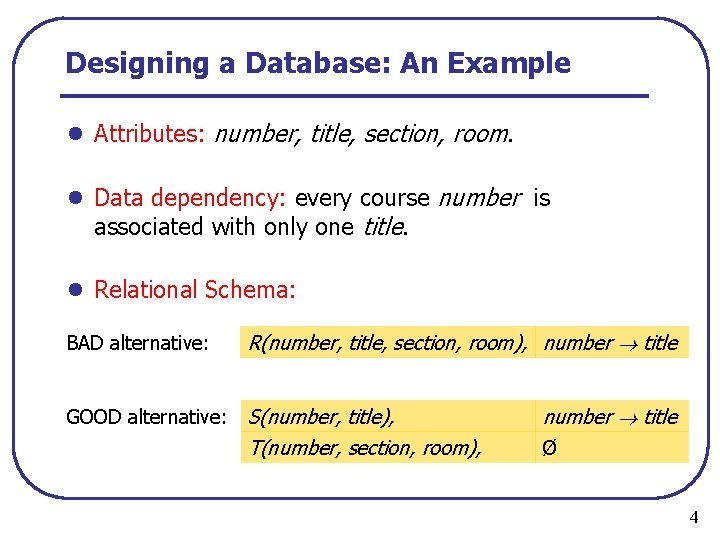

Designing a Database: An Example l Attributes: number, title, section, room. l Data dependency: every course number is associated with only one title. l Relational Schema: BAD alternative: R(number, title, section, room), number title GOOD alternative: S(number, title), T(number, section, room), number title Ø 4

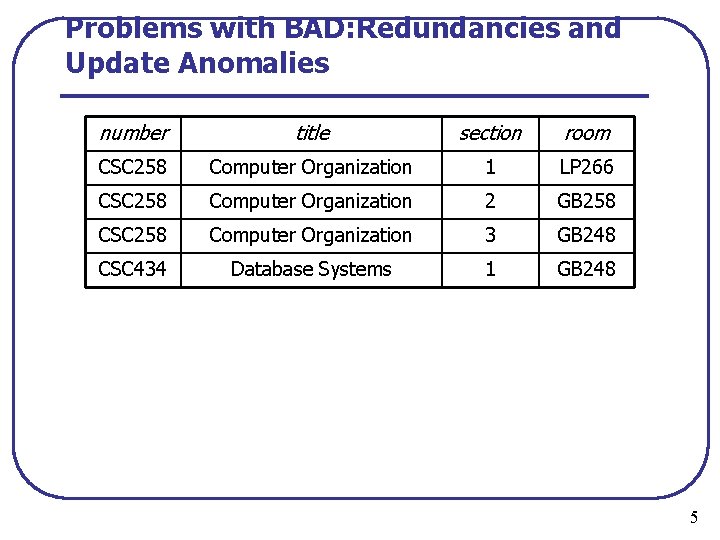

Problems with BAD: Redundancies and Update Anomalies number title section room CSC 258 Computer Organization 1 LP 266 CSC 258 Computer Organization 2 GB 258 CSC 258 Computer Organization 3 GB 248 CSC 434 Database Systems 1 GB 248 5

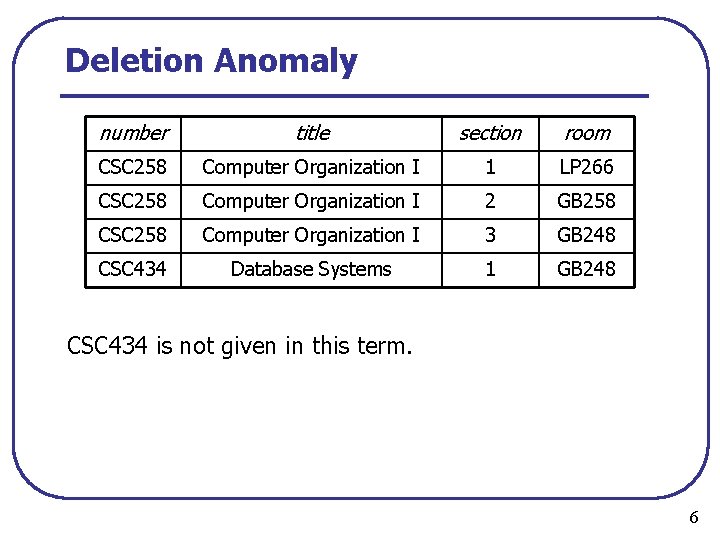

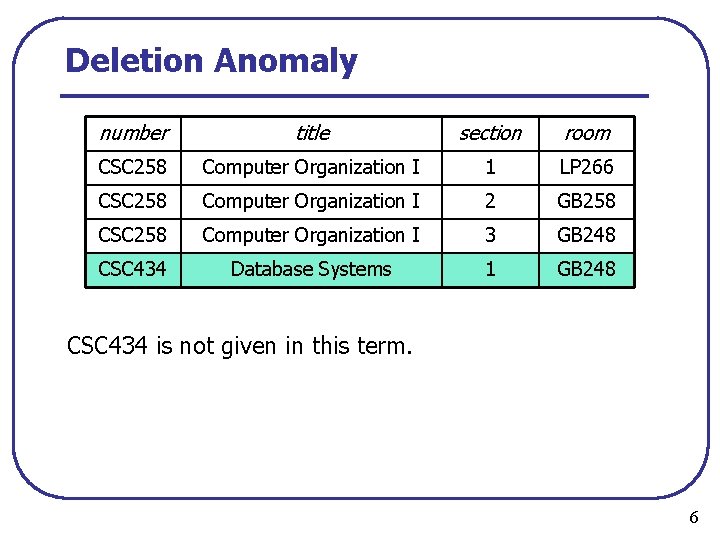

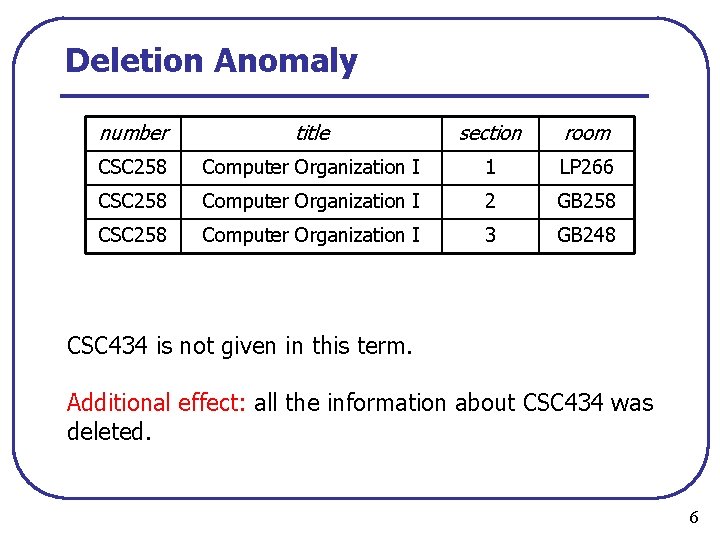

Deletion Anomaly number title section room CSC 258 Computer Organization I 1 LP 266 CSC 258 Computer Organization I 2 GB 258 CSC 258 Computer Organization I 3 GB 248 CSC 434 Database Systems 1 GB 248 CSC 434 is not given in this term. 6

Deletion Anomaly number title section room CSC 258 Computer Organization I 1 LP 266 CSC 258 Computer Organization I 2 GB 258 CSC 258 Computer Organization I 3 GB 248 CSC 434 Database Systems 1 GB 248 CSC 434 is not given in this term. 6

Deletion Anomaly number title section room CSC 258 Computer Organization I 1 LP 266 CSC 258 Computer Organization I 2 GB 258 CSC 258 Computer Organization I 3 GB 248 CSC 434 is not given in this term. Additional effect: all the information about CSC 434 was deleted. 6

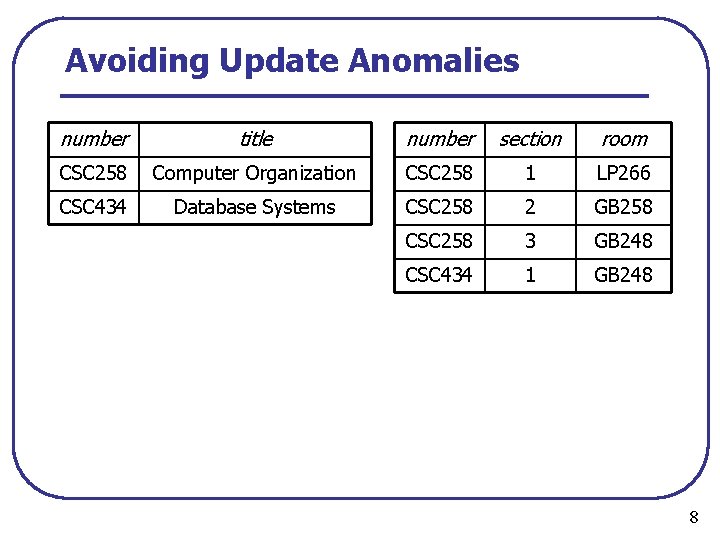

Avoiding Update Anomalies number title number section room CSC 258 Computer Organization CSC 258 1 LP 266 CSC 434 Database Systems CSC 258 2 GB 258 CSC 258 3 GB 248 CSC 434 1 GB 248 8

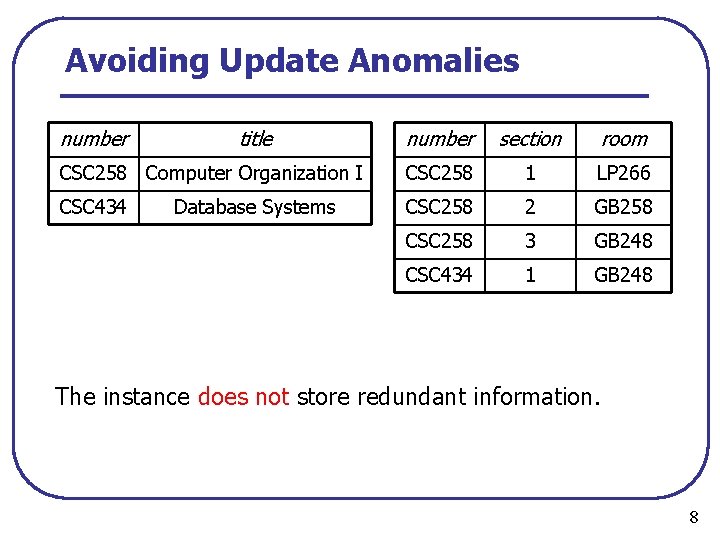

Avoiding Update Anomalies number title number section room CSC 258 Computer Organization I CSC 258 1 LP 266 CSC 434 CSC 258 2 GB 258 CSC 258 3 GB 248 CSC 434 1 GB 248 Database Systems The instance does not store redundant information. 8

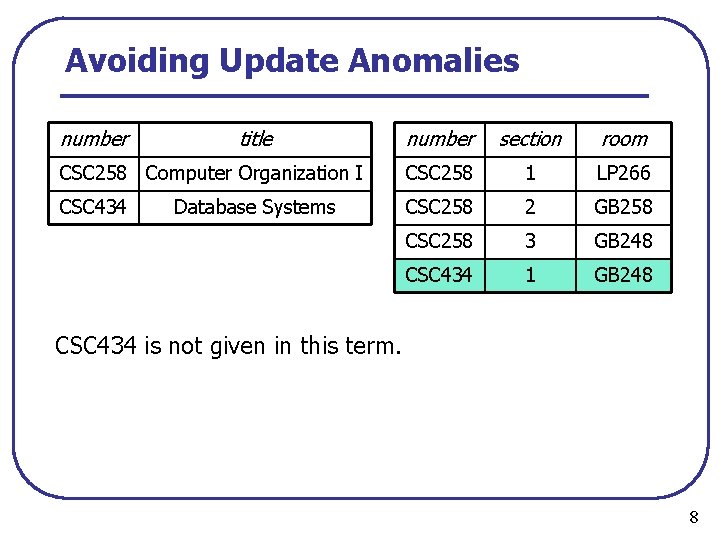

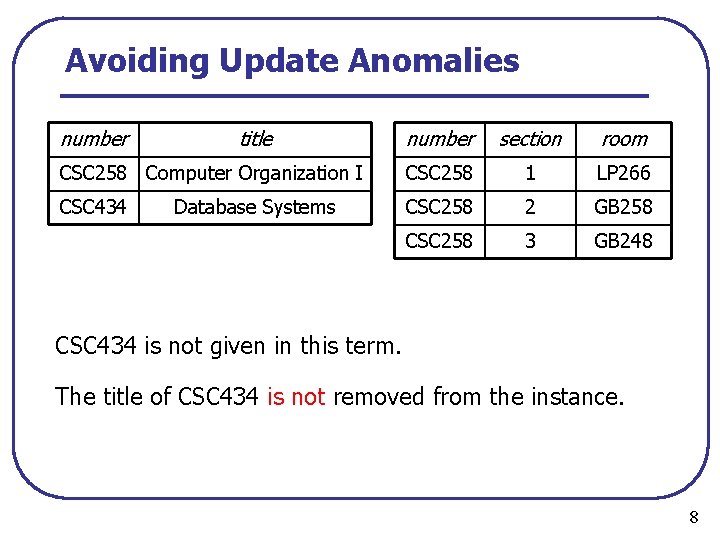

Avoiding Update Anomalies number title number section room CSC 258 Computer Organization I CSC 258 1 LP 266 CSC 434 CSC 258 2 GB 258 CSC 258 3 GB 248 CSC 434 1 GB 248 Database Systems CSC 434 is not given in this term. 8

Avoiding Update Anomalies number title number section room CSC 258 Computer Organization I CSC 258 1 LP 266 CSC 434 CSC 258 2 GB 258 CSC 258 3 GB 248 Database Systems CSC 434 is not given in this term. The title of CSC 434 is not removed from the instance. 8

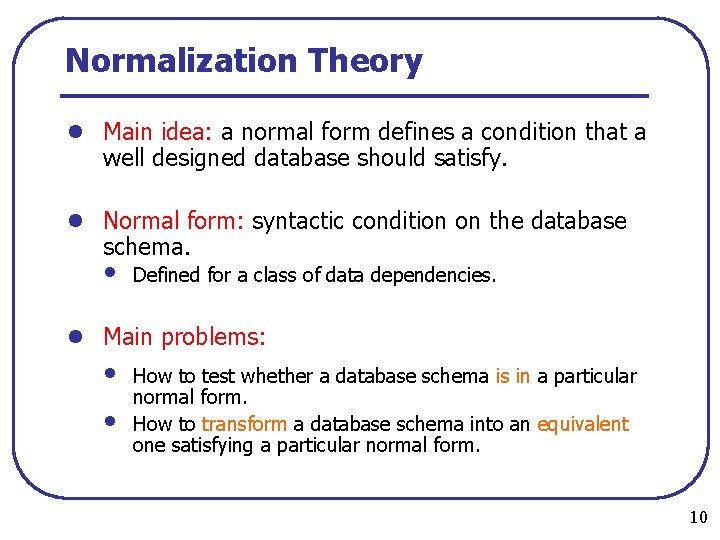

Normalization Theory l Main idea: a normal form defines a condition that a well designed database should satisfy. l Normal form: syntactic condition on the database schema. • Defined for a class of data dependencies. l Main problems: • • How to test whether a database schema is in a particular normal form. How to transform a database schema into an equivalent one satisfying a particular normal form. 10

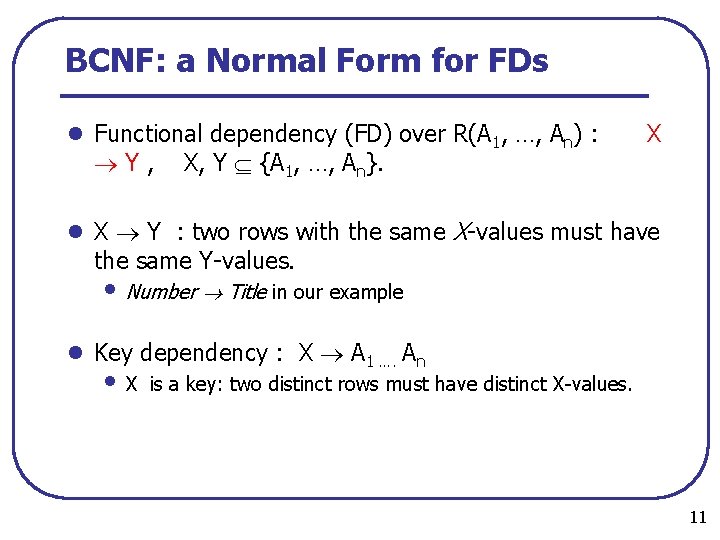

BCNF: a Normal Form for FDs l Functional dependency (FD) over R(A 1, …, An) : Y, X, Y {A 1, …, An}. X l X Y : two rows with the same X values must have the same Y values. • Number Title in our example l Key dependency : X A 1 …. An • X is a key: two distinct rows must have distinct X values. 11

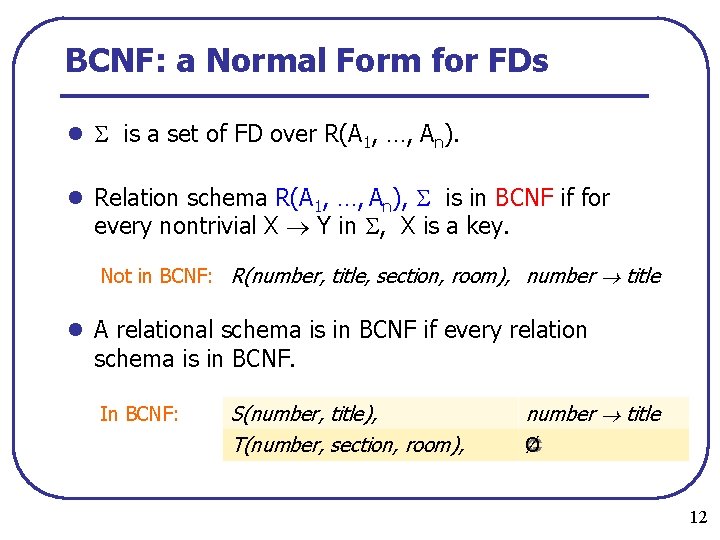

BCNF: a Normal Form for FDs l is a set of FD over R(A 1, …, An). l Relation schema R(A 1, …, An), is in BCNF if for every nontrivial X Y in , X is a key. Not in BCNF: R(number, title, section, room), number title l A relational schema is in BCNF if every relation schema is in BCNF. In BCNF: S(number, title), T(number, section, room), number title Ø 12

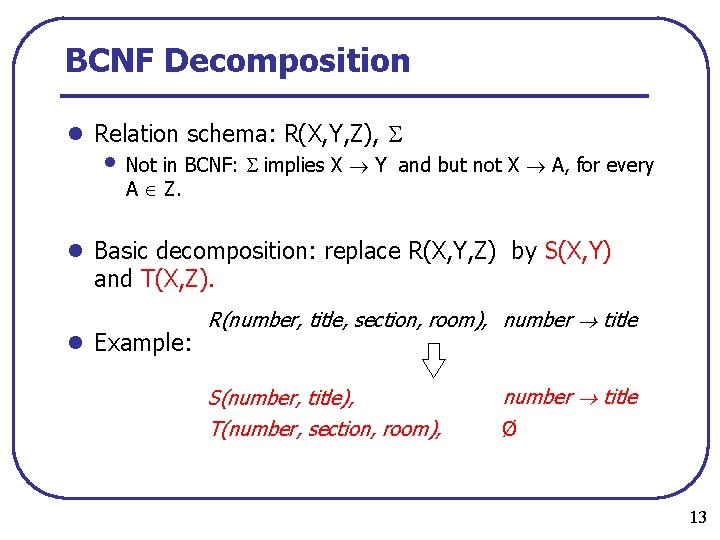

BCNF Decomposition l Relation schema: R(X, Y, Z), • Not in BCNF: implies X Y A Z. and but not X A, for every l Basic decomposition: replace R(X, Y, Z) by S(X, Y) and T(X, Z). l Example: R(number, title, section, room), number title S(number, title), T(number, section, room), number title Ø 13

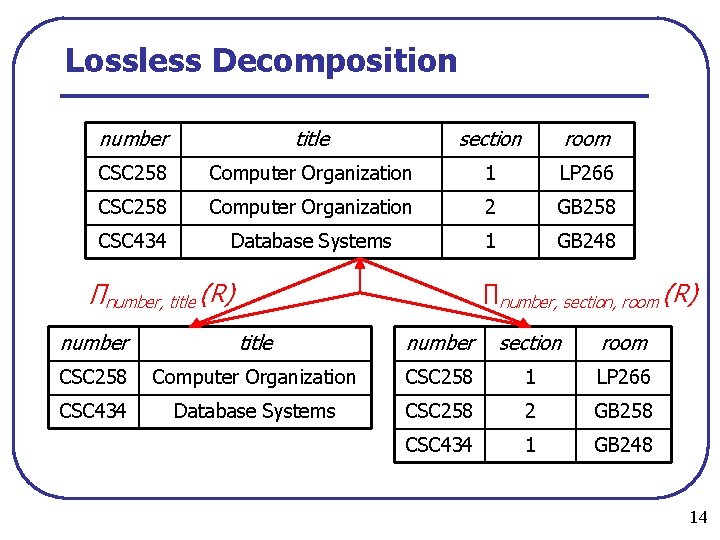

Lossless Decomposition number title section room CSC 258 Computer Organization 1 LP 266 CSC 258 Computer Organization 2 GB 258 CSC 434 Database Systems 1 GB 248 ∏number, title (R) ∏number, section, room (R) number title number section room CSC 258 Computer Organization CSC 258 1 LP 266 CSC 434 Database Systems CSC 258 2 GB 258 CSC 434 1 GB 248 14

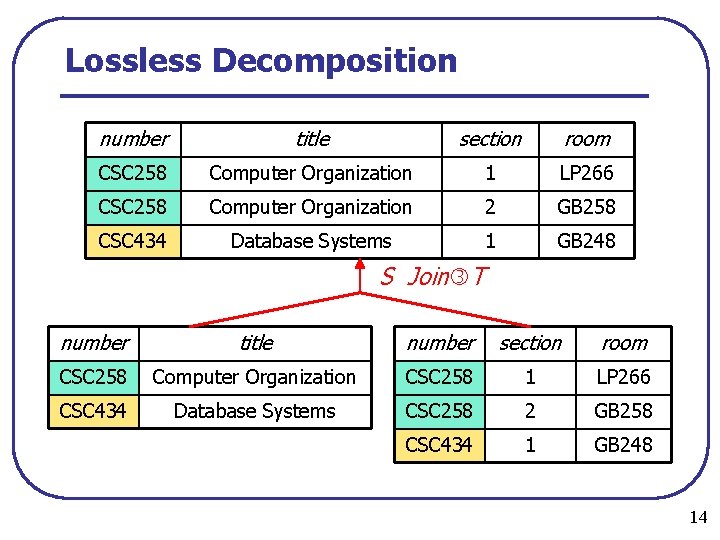

Lossless Decomposition number title section room CSC 258 Computer Organization 1 LP 266 CSC 258 Computer Organization 2 GB 258 CSC 434 Database Systems 1 GB 248 S Join T number title number section room CSC 258 Computer Organization CSC 258 1 LP 266 CSC 434 Database Systems CSC 258 2 GB 258 CSC 434 1 GB 248 14

How to justify good designs? l What is a good database design? • Well known solutions: BCNF, 4 NF, 3 NF… l But what is it that makes a database design good? • Elimination of update anomalies. • Existence of algorithms that produce good designs: lossless decomposition, dependency preservation. 34

Problems with traditional approaches l Many papers tried to justify normal forms. l Problem: tied very closely to the relational model. l Relied on well defined notions of queries/updates. l These days we want to deal with other data models, in particular XML. l We need an approach that extends to other models, in particular, XML.

Justification of Normal Forms l Problematic to evaluate XML normal forms. • No XML update language has been standardized. • No XML query language yet has the same “yardstick” status • as relational algebra. We do not even know if implication of XML FDs is decidable! l We need a different approach. • It must be based on some intrinsic characteristics of the • • data. It must be applicable to new data models. It must be independent of query/update/constraint issues. l Our approach is based on information theory. 35

Information Theory l Entropy measures the amount of information provided by a certain event. l Assume that an event can have n different outcomes with probabilities p 1, …, pn. Amount of information gained by knowing that event i occurred : Average amount of information gained (entropy) : Entropy is maximal if each pi = 1/n : 36

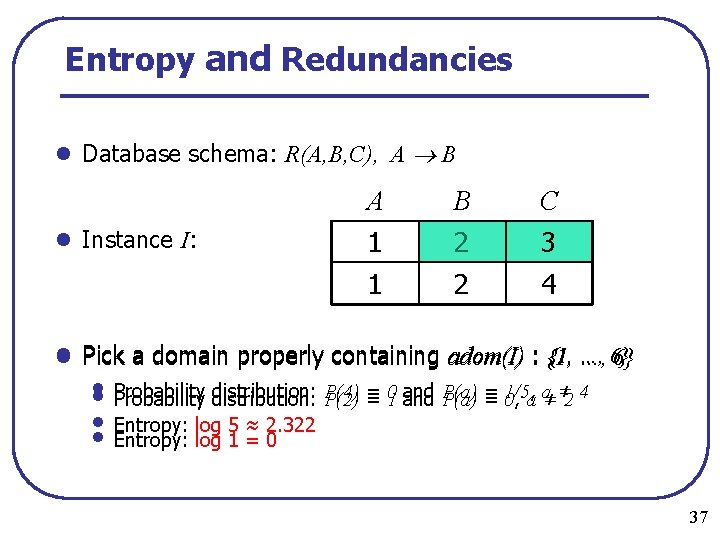

Entropy and Redundancies l Database schema: R(A, B, C), A B l Instance I: A B C 1 1 2 2 3 4 {1, …, l Pick a domain properly containing adom(I) : {1, …, 6} 6} • • Probability distribution: P(4) Probability distribution: P(2) = = 01 and P(a) = = 1/5, 0, a a≠ ≠ 2 4 • • Entropy: log 5 ≈ 2. 322 Entropy: log 1 = 0 37

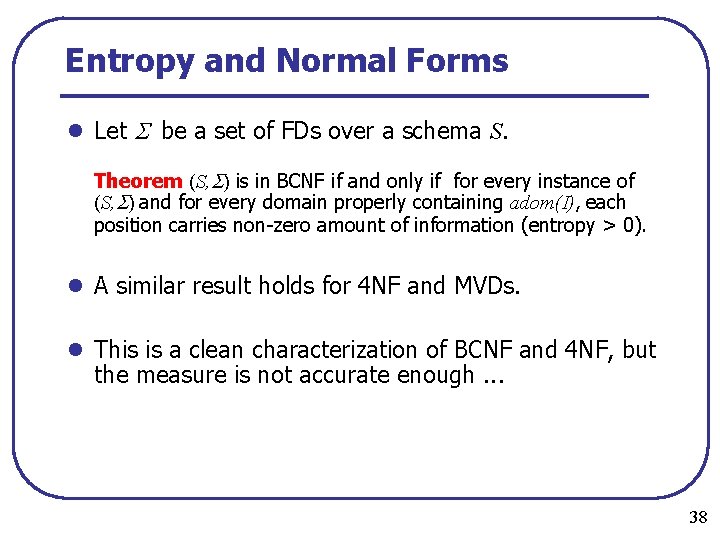

Entropy and Normal Forms l Let be a set of FDs over a schema S. Theorem (S, ) is in BCNF if and only if for every instance of (S, ) and for every domain properly containing adom(I), each position carries non zero amount of information (entropy > 0). l A similar result holds for 4 NF and MVDs. l This is a clean characterization of BCNF and 4 NF, but the measure is not accurate enough. . . 38

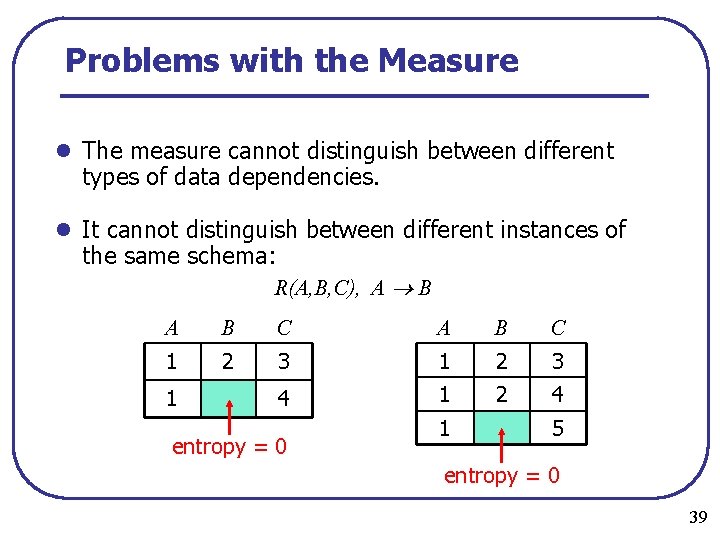

Problems with the Measure l The measure cannot distinguish between different types of data dependencies. l It cannot distinguish between different instances of the same schema: R(A, B, C), A B A B C 1 2 3 4 1 2 4 1 entropy = 0 1 5 entropy = 0 39

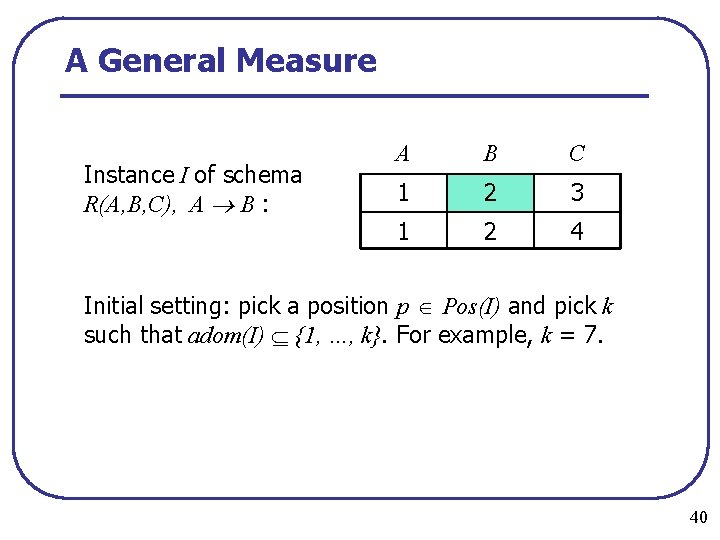

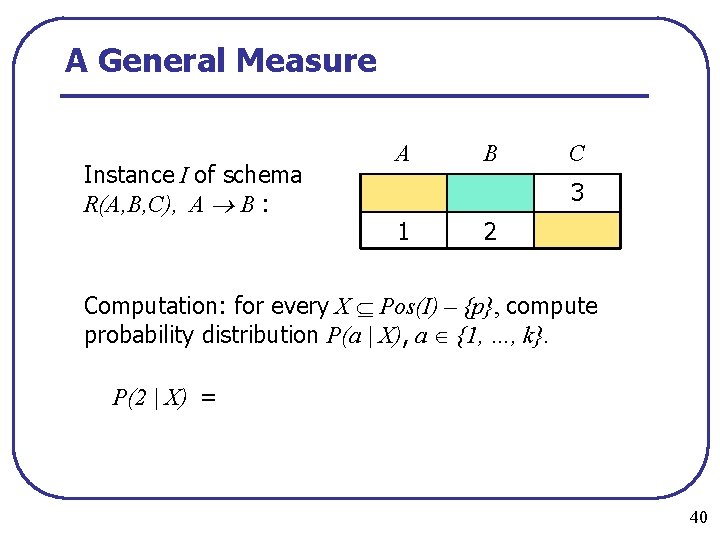

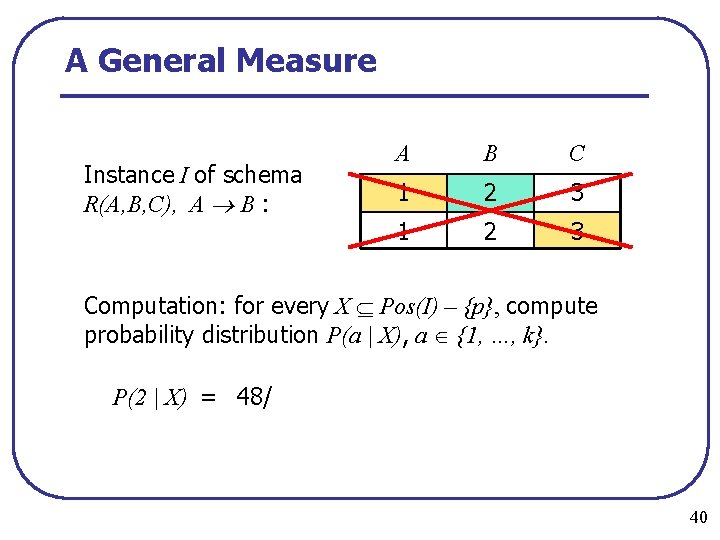

A General Measure Instance I of schema R(A, B, C), A B : A B C 1 2 3 1 2 4 40

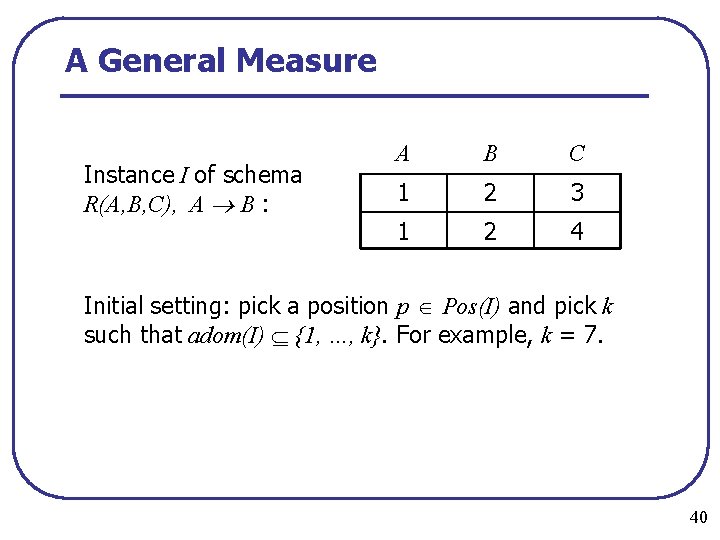

A General Measure Instance I of schema R(A, B, C), A B : A B C 1 2 3 1 2 4 Initial setting: pick a position p Pos(I) and pick k such that adom(I) {1, …, k}. For example, k = 7. 40

A General Measure Instance I of schema R(A, B, C), A B : A B C 1 2 3 1 2 4 Initial setting: pick a position p Pos(I) and pick k such that adom(I) {1, …, k}. For example, k = 7. 40

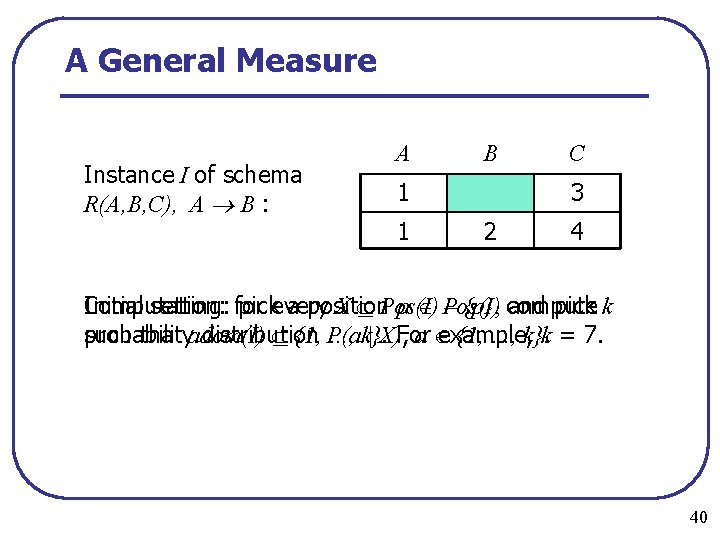

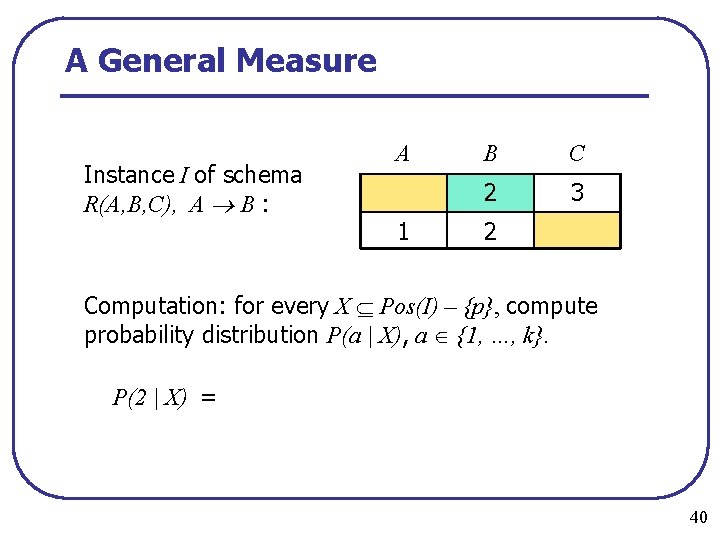

A General Measure Instance I of schema R(A, B, C), A B : A B 1 1 C 3 2 4 Computation: Initial setting: for pickevery a position X Pos(I) p Pos(I) – {p}, compute and pick k such that adom(I) probability distribution {1, P(a …, k}. | X), For a example, {1, …, k}. k = 7. 40

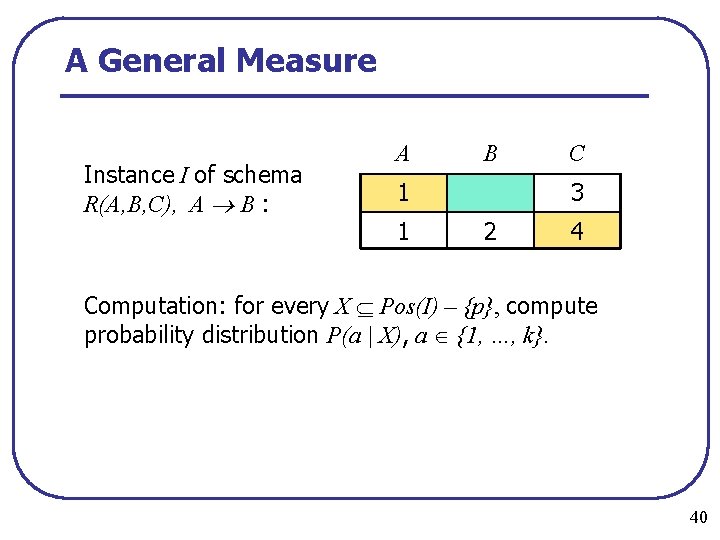

A General Measure Instance I of schema R(A, B, C), A B : A B 1 1 C 3 2 4 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. 40

A General Measure Instance I of schema R(A, B, C), A B : A B C 3 1 2 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. 40

A General Measure Instance I of schema R(A, B, C), A B : A B C 3 1 2 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 40

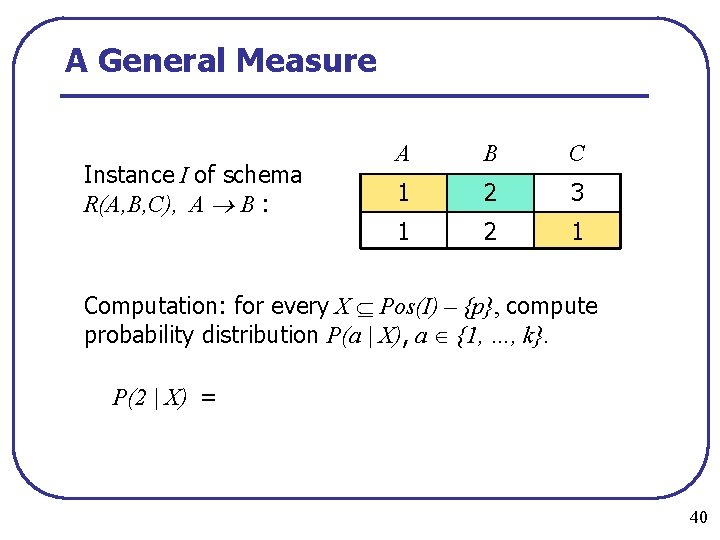

A General Measure Instance I of schema R(A, B, C), A B : A 1 B C 2 3 2 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 40

A General Measure Instance I of schema R(A, B, C), A B : A B C 1 2 3 1 2 1 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 40

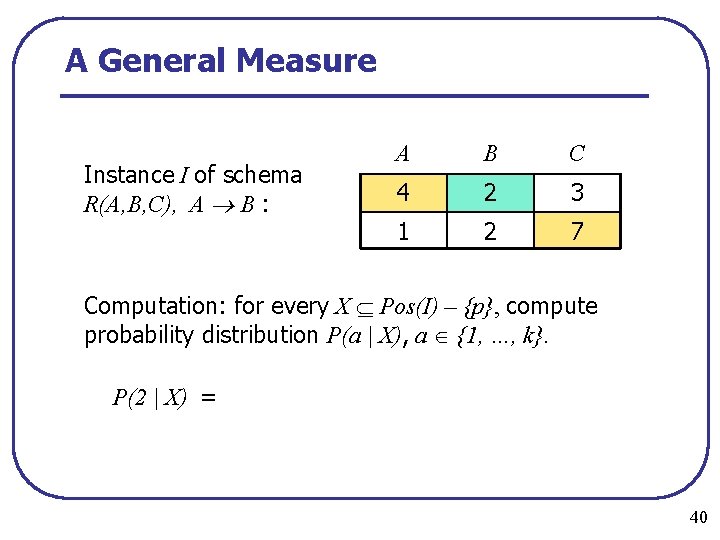

A General Measure Instance I of schema R(A, B, C), A B : A B C 4 2 3 1 2 7 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 40

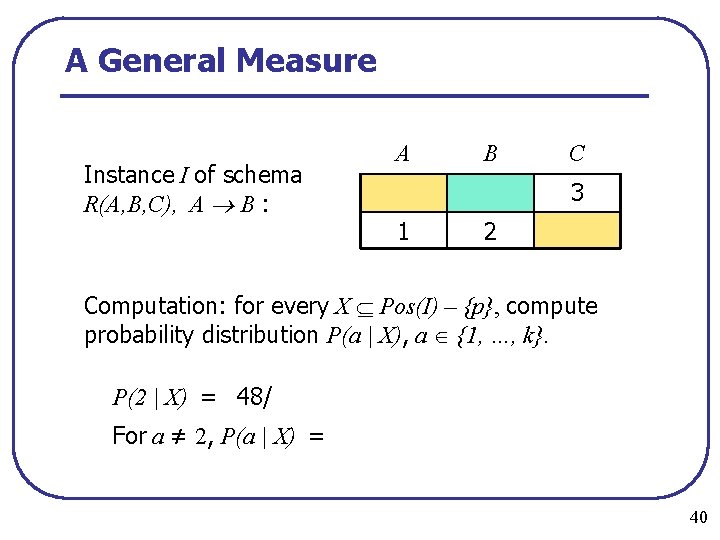

A General Measure Instance I of schema R(A, B, C), A B : A B C 1 2 3 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 48/ 40

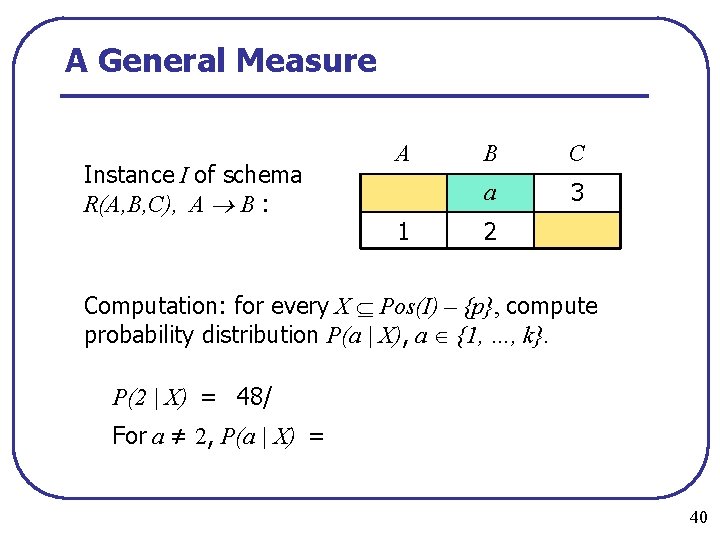

A General Measure Instance I of schema R(A, B, C), A B : A B C 3 1 2 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 48/ For a ≠ 2, P(a | X) = 40

A General Measure Instance I of schema R(A, B, C), A B : A 1 B C a 3 2 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 48/ For a ≠ 2, P(a | X) = 40

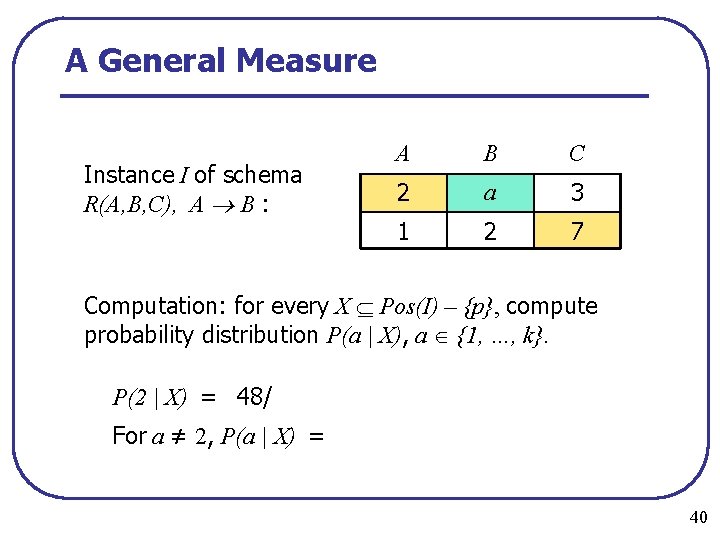

A General Measure Instance I of schema R(A, B, C), A B : A B C 2 a 3 1 2 7 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 48/ For a ≠ 2, P(a | X) = 40

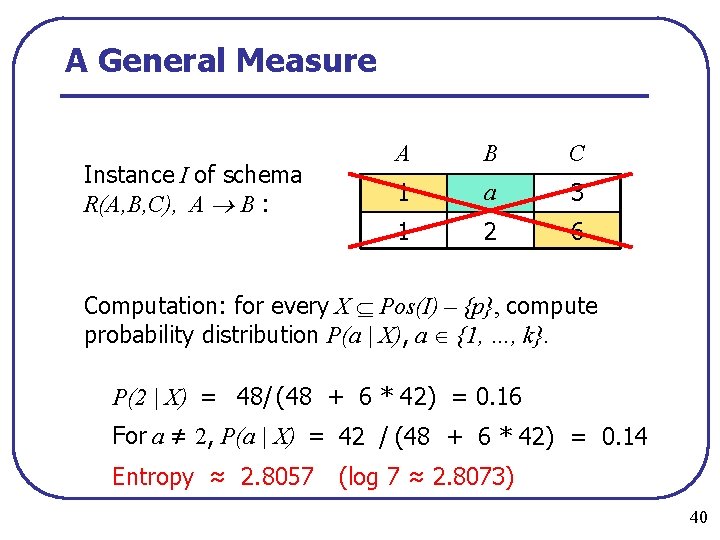

A General Measure Instance I of schema R(A, B, C), A B : A B C 1 a 3 1 2 6 Computation: for every X Pos(I) – {p}, compute probability distribution P(a | X), a {1, …, k}. P(2 | X) = 48/ (48 + 6 * 42) = 0. 16 For a ≠ 2, P(a | X) = 42 / (48 + 6 * 42) = 0. 14 Entropy ≈ 2. 8057 (log 7 ≈ 2. 8073) 40

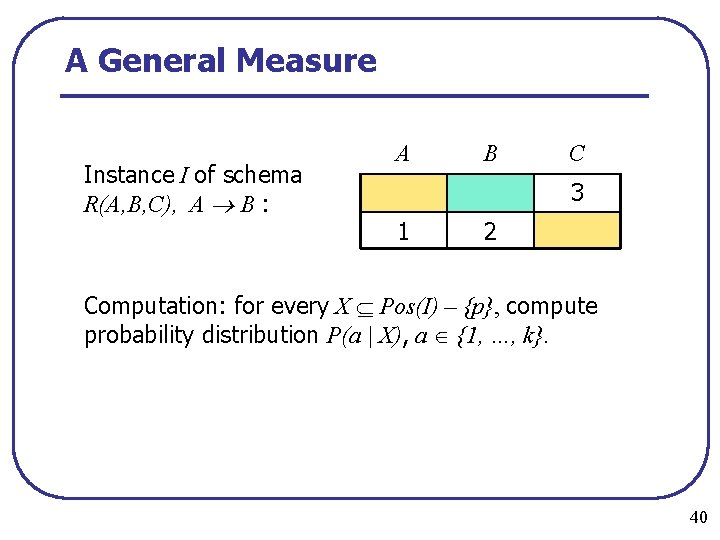

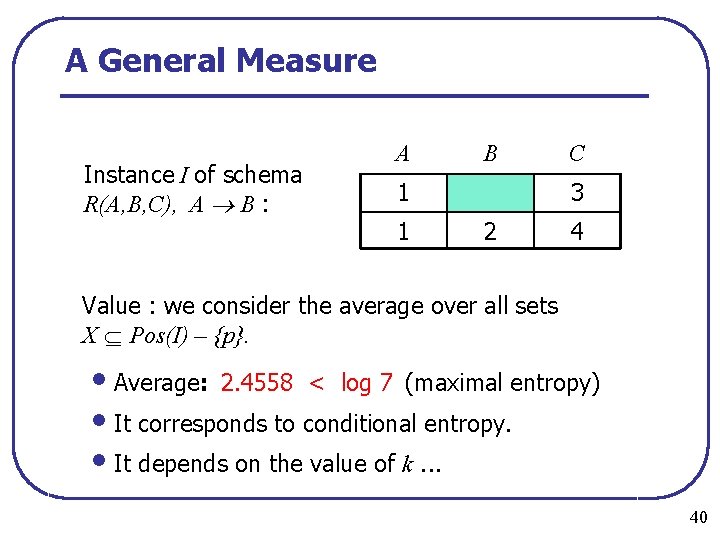

A General Measure Instance I of schema R(A, B, C), A B : A B 1 1 C 3 2 4 Value : we consider the average over all sets X Pos(I) – {p}. • Average: 2. 4558 < log 7 (maximal entropy) • It corresponds to conditional entropy. • It depends on the value of k. . . 40

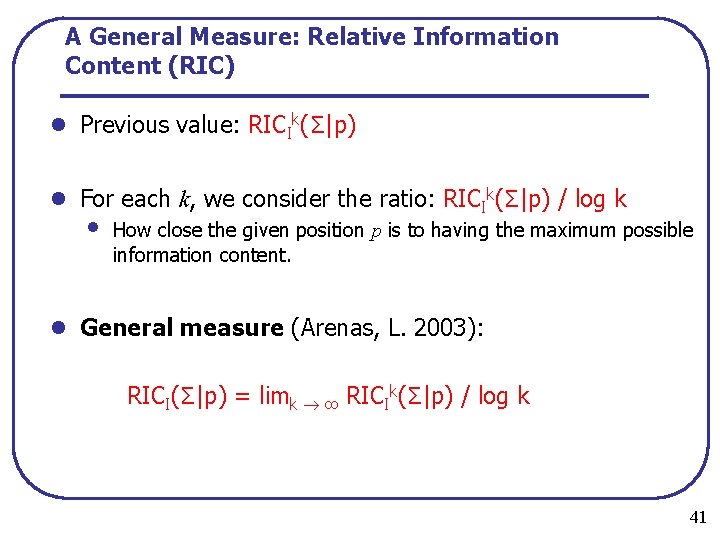

A General Measure: Relative Information Content (RIC) l Previous value: RICIk(Σ|p) l For each k, we consider the ratio: RICIk(Σ|p) / log k • How close the given position p is to having the maximum possible information content. l General measure (Arenas, L. 2003): RICI(Σ|p) = limk ∞ RICIk(Σ|p) / log k 41

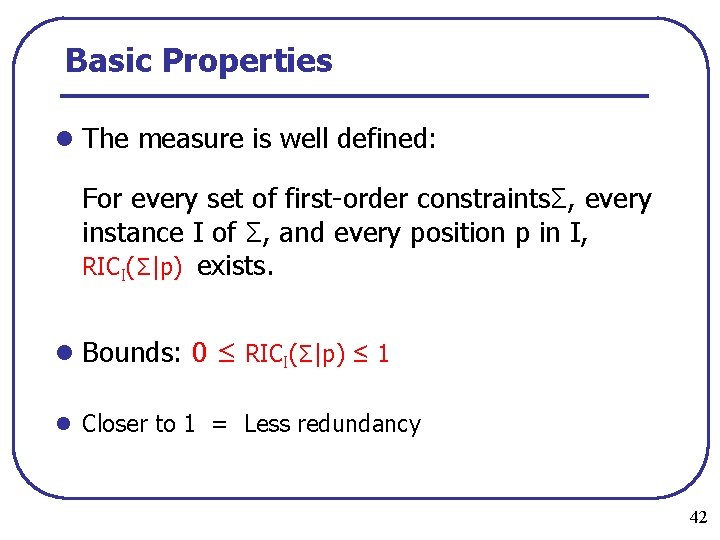

Basic Properties l The measure is well defined: For every set of first order constraintsΣ, every instance I of Σ, and every position p in I, RICI(Σ|p) exists. l Bounds: 0 ≤ RICI(Σ|p) ≤ 1 l Closer to 1 = Less redundancy 42

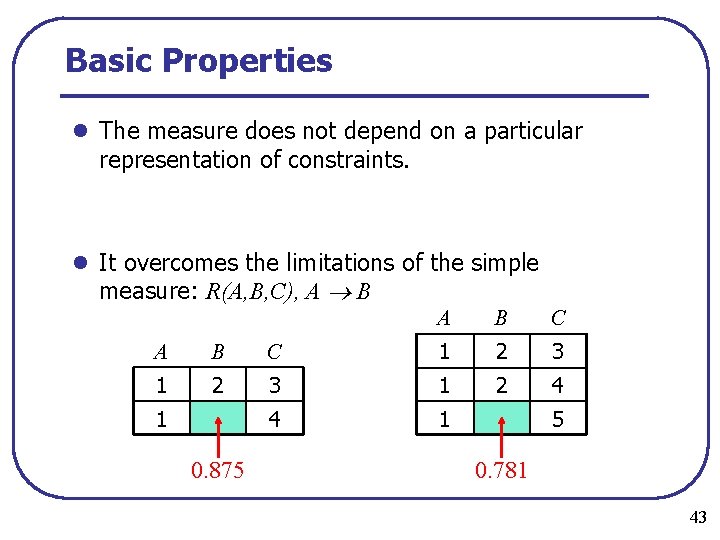

Basic Properties l The measure does not depend on a particular representation of constraints. l It overcomes the limitations of the simple measure: R(A, B, C), A B A B C 1 2 3 1 2 4 4 1 1 0. 875 5 0. 781 43

Well-Designed Databases Definition A database specification (S, ) is welldesigned if for every I inst(S, ) and every p Pos(I), RICI(Σ|p) = 1. In other words, every position in every instance carries the maximum possible amount of information. 44

Relational Databases (Arenas, L. ’ 03) is a set of data dependencies over a schema S: l = Ø: (S, ) is well designed. l is a set of FDs: (S, ) is well designed if and only if (S, ) is in BCNF. l is a set of FDs and MVDs: (S, ) is well designed if and only if (S, ) is in 4 NF. l is a set of FDs and JDs: • If (S, ) is in PJ/NF or in 5 NFR, then (S, ) is well designed. • The converse is not true. A syntactic characterization of being well designed is given in [AL 03]. 45

Decidability Issues l If Σ is a set of First Order integrity constraints, then the problem of verifying whether a relational schema is well designed is undecidable. l If Σ contains only universal constraints (FDs, MVDs, JDs, …), then the problem becomes decidable. l High complexity (co. NEXPTIME) by reduction to the (complement) of Bernays Schönfinkel satisfiability. 46

3 NF l BCNF is the most popular textbook normal form. l In practice 3 NF is much more common. l From Oracle's “General Database Design FAQ”: after defining 1 NF, 2 NF, and 3 NF, it says: that there are other normal forms but “their definitions are of academic concern only, and are rarely required for practical purposes”

Reminder: 3 NF l A candidate key: a minimal (wrt subset) key l A prime attribute: an attribute that belongs to a candidate key. l BCNF: For a nontrivial FD X A, where A is an attribute, X must be a key. l 3 NF (Bernstein/Zaniolo): For a nontrivial FD X A, X must be a key OR A must be prime.

Why 3 NF? l Because some relational schemas do not have decompositions that are both: • In BCNF, and • Preserve all functional dependencies l Example: ABC, AB C, C A l On the other hand, there always exists a lossless dependency preserving 3 NF decomposition.

Redundancies vs Dependency-Preservation l To achieve complete elimination of redundancies (BCNF), one has to pay in terms of dependency preservation. l Losing constraints is often undesirable (database integrity must be enforced). l What is we only consider normal form that guarantee lossless dependency preserving decomposition? l Which is best? Is it 3 NF?

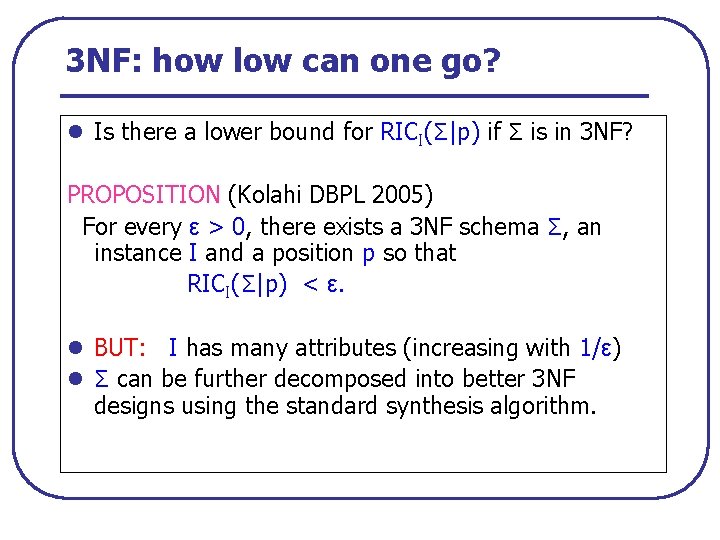

3 NF: how low can one go? l Is there a lower bound for RICI(Σ|p) if Σ is in 3 NF? PROPOSITION (Kolahi DBPL 2005) For every ε > 0, there exists a 3 NF schema Σ, an instance I and a position p so that RICI(Σ|p) < ε. l BUT: I has many attributes (increasing with 1/ε) l Σ can be further decomposed into better 3 NF designs using the standard synthesis algorithm.

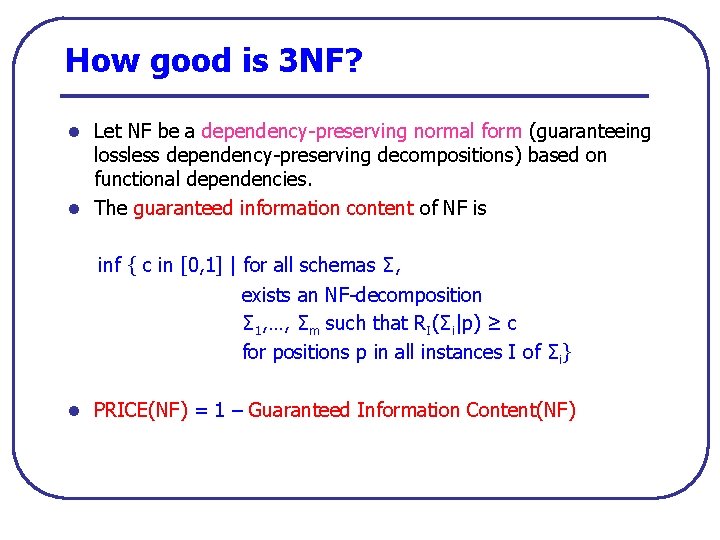

How good is 3 NF? l Let NF be a dependency preserving normal form (guaranteeing lossless dependency preserving decompositions) based on functional dependencies. l The guaranteed information content of NF is inf { c in [0, 1] | for all schemas Σ, exists an NF decomposition Σ 1, …, Σm such that RI(Σi|p) ≥ c for positions p in all instances I of Σi} l PRICE(NF) = 1 – Guaranteed Information Content(NF)

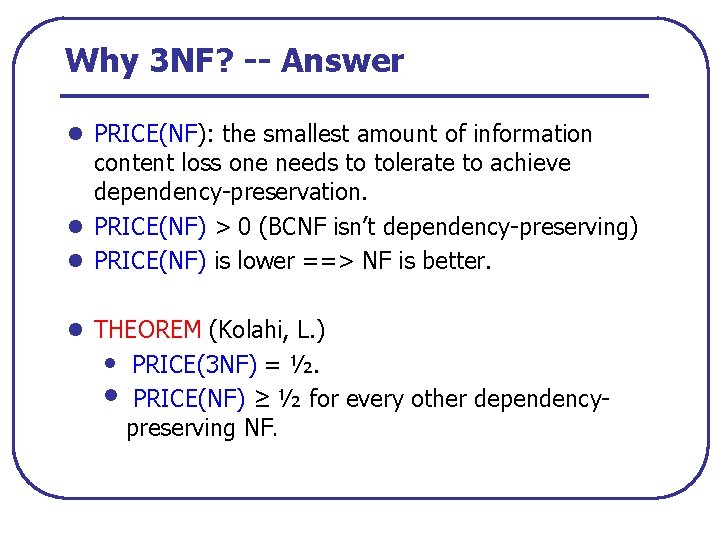

Why 3 NF? -- Answer l PRICE(NF): the smallest amount of information content loss one needs to tolerate to achieve dependency preservation. l PRICE(NF) > 0 (BCNF isn’t dependency preserving) l PRICE(NF) is lower ==> NF is better. l THEOREM (Kolahi, L. ) • PRICE(3 NF) = ½. • PRICE(NF) ≥ ½ for every other dependency preserving NF.

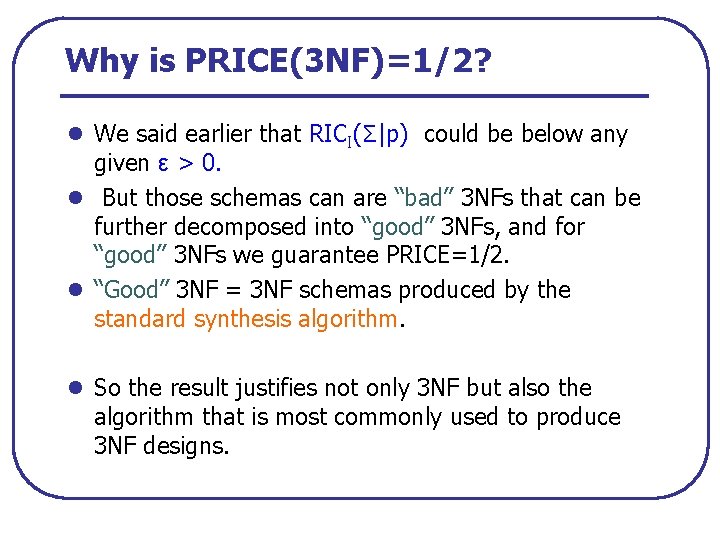

Why is PRICE(3 NF)=1/2? l We said earlier that RICI(Σ|p) could be below any given ε > 0. l But those schemas can are “bad” 3 NFs that can be further decomposed into “good” 3 NFs, and for “good” 3 NFs we guarantee PRICE=1/2. l “Good” 3 NF = 3 NF schemas produced by the standard synthesis algorithm. l So the result justifies not only 3 NF but also the algorithm that is most commonly used to produce 3 NF designs.

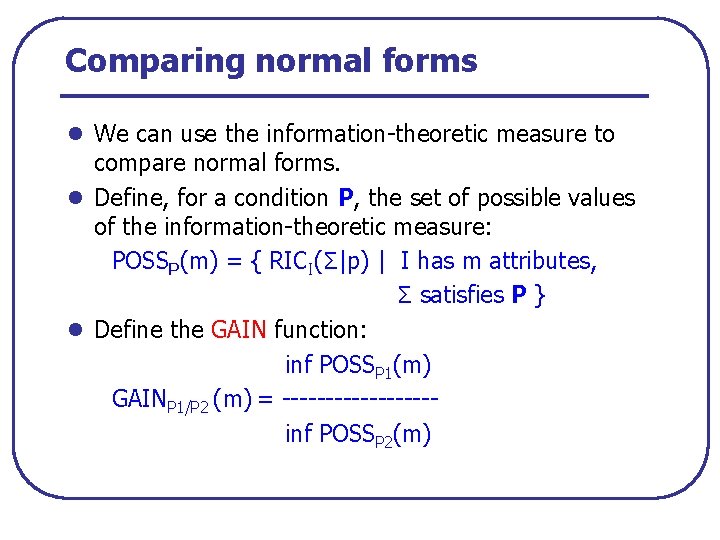

Comparing normal forms l We can use the information theoretic measure to compare normal forms. l Define, for a condition P, the set of possible values of the information theoretic measure: POSSP(m) = { RICI(Σ|p) | I has m attributes, Σ satisfies P } l Define the GAIN function: inf POSSP 1(m) GAINP 1/P 2 (m) = inf POSSP 2(m)

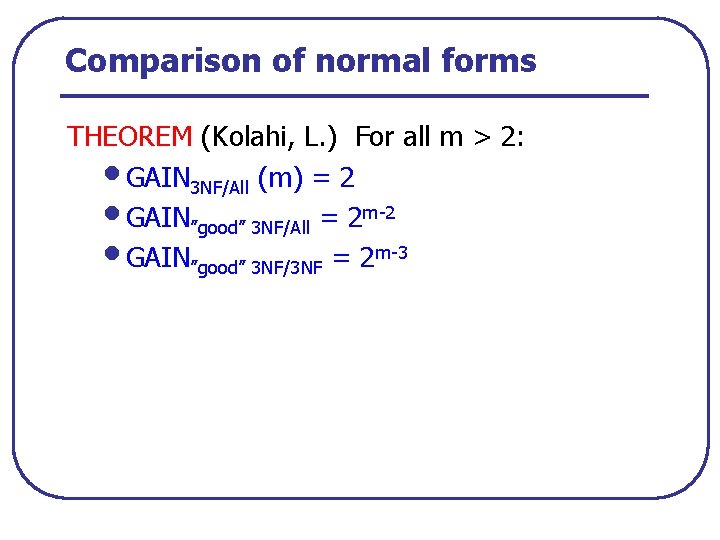

Comparison of normal forms THEOREM (Kolahi, L. ) For all m > 2: • GAIN 3 NF/All (m) = 2 • GAIN”good” 3 NF/All = 2 m 2 • GAIN”good” 3 NF/3 NF = 2 m 3

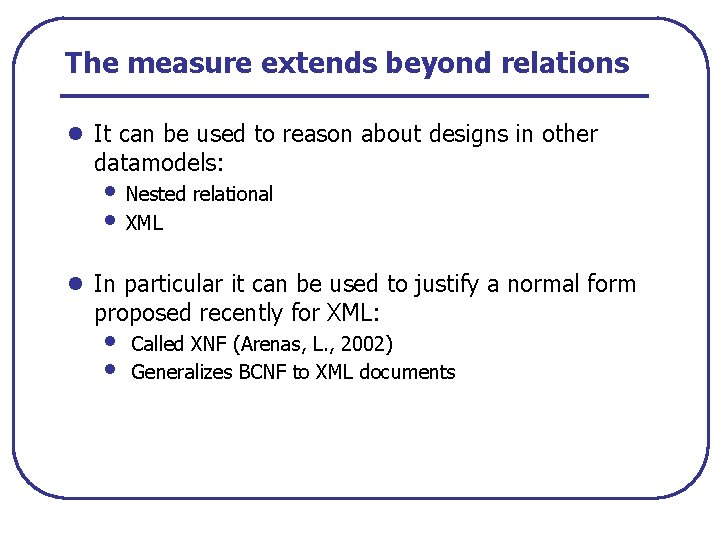

The measure extends beyond relations l It can be used to reason about designs in other datamodels: • Nested relational • XML l In particular it can be used to justify a normal form proposed recently for XML: • • Called XNF (Arenas, L. , 2002) Generalizes BCNF to XML documents

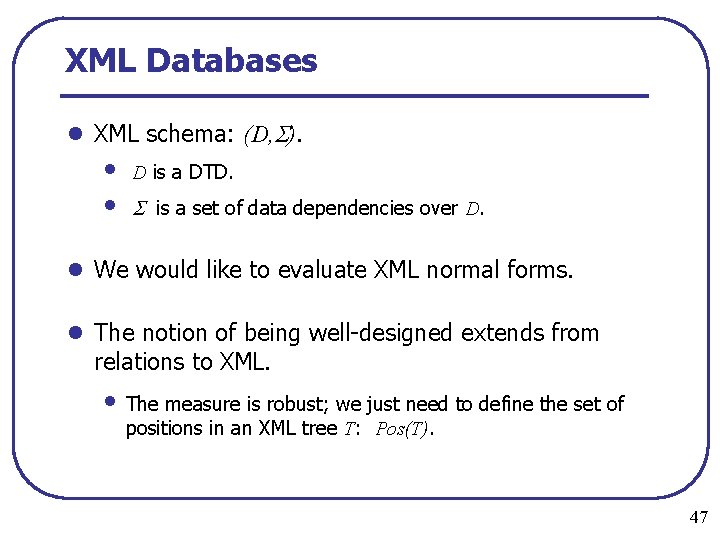

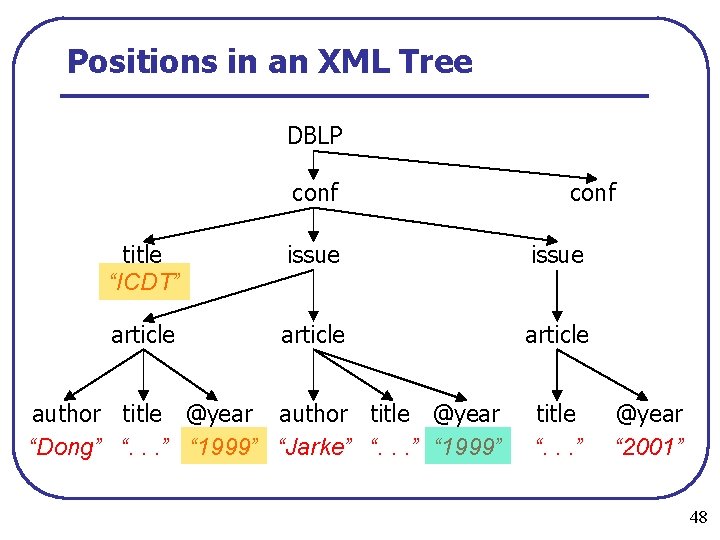

XML Databases l XML schema: (D, ). • • D is a DTD. is a set of data dependencies over D. l We would like to evaluate XML normal forms. l The notion of being well designed extends from relations to XML. • The measure is robust; we just need to define the set of positions in an XML tree T: Pos(T). 47

Positions in an XML Tree DBLP conf title “ICDT” issue article author title @year “Dong” “. . . ” “ 1999” “Jarke” “. . . ” “ 1999” title “. . . ” @year “ 2001” 48

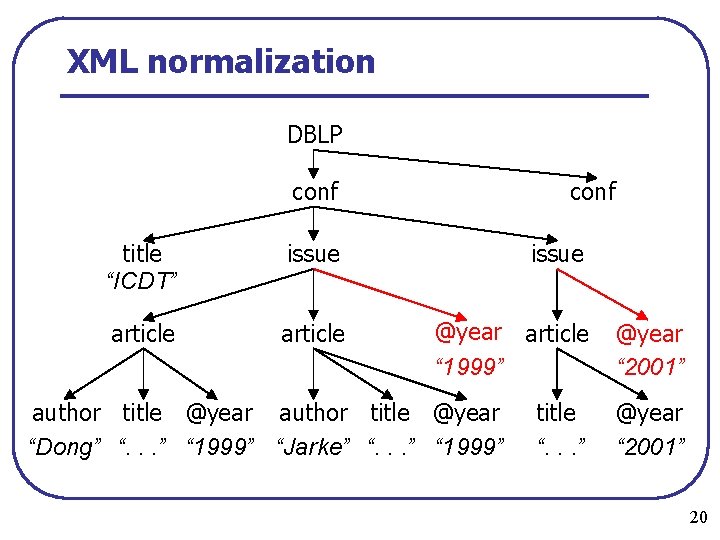

XML normalization DBLP conf title “ICDT” issue article @year article “ 1999” author title @year “Dong” “. . . ” “ 1999” “Jarke” “. . . ” “ 1999” title “. . . ” @year “ 2001” 20

XNF: an XML normal form l XNF is achieved by repeated transformations of two kinds: • As above in the DBLP example, and • Splitting multiple attributes of the same element type in the same manner as in the case of BCNF normalization for relations. l There is also a formal definition which is a natural analog of BCNF in the XML context.

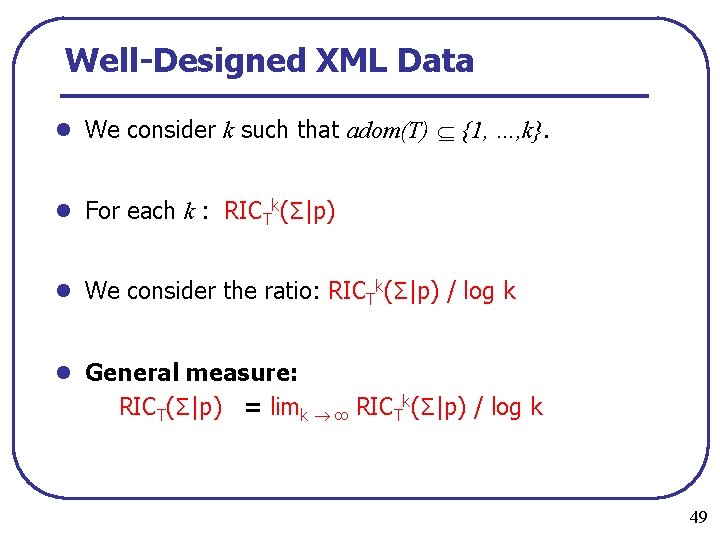

Well-Designed XML Data l We consider k such that adom(T) {1, …, k}. l For each k : RICTk(Σ|p) l We consider the ratio: RICTk(Σ|p) / log k l General measure: RICT(Σ|p) = limk ∞ RICTk(Σ|p) / log k 49

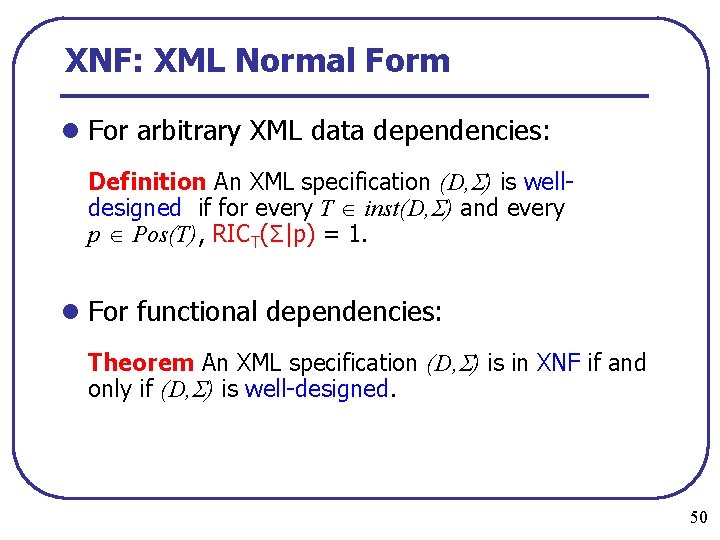

XNF: XML Normal Form l For arbitrary XML data dependencies: Definition An XML specification (D, ) is well designed if for every T inst(D, ) and every p Pos(T), RICT(Σ|p) = 1. l For functional dependencies: Theorem An XML specification (D, ) is in XNF if and only if (D, ) is well designed. 50

Future Work l What is an analog of 3 NF for XML? l We would like to develop better characterizations of normalization algorithms using our measure. • Why is the “usual” BCNF decomposition algorithm good? • Why does it always stop? l What else can this measure be used for? l What about nonuniform distributions? • Are they meaningful here? • If so, how do the results change? 52

- Slides: 67