
Data Pre-Processing … What about your data?
Ming-Yen Lin, IECS, FCU

Why Data Preprocessing?
- Real-world data is "dirty":
  - Incomplete: missing values, missing attributes of interest, or containing only aggregate data
  - Noisy: containing errors or outliers
  - Inconsistent: discrepancies in codes or names
- No quality data, no quality mining results!
  - Quality decisions must be based on quality data
  - A data warehouse needs consistent integration of quality data

Multi-Dimensional Measures of Data Quality
- Accuracy
- Completeness
- Consistency
- Timeliness
- Believability
- Value added
- Interpretability
- Accessibility
- Also: integrity, compactness

Major Tasks in Data Preprocessing
- Data cleaning
  - Fill in missing values, smooth noisy data, identify or remove outliers, and resolve inconsistencies
- Data integration
  - Integration of multiple databases, data cubes, or files
- Data transformation
  - Normalization and aggregation
- Data reduction
  - Obtains a representation that is reduced in volume but produces the same or similar analytical results
  - Data discretization
    - Part of data reduction but of particular importance, especially for numerical data

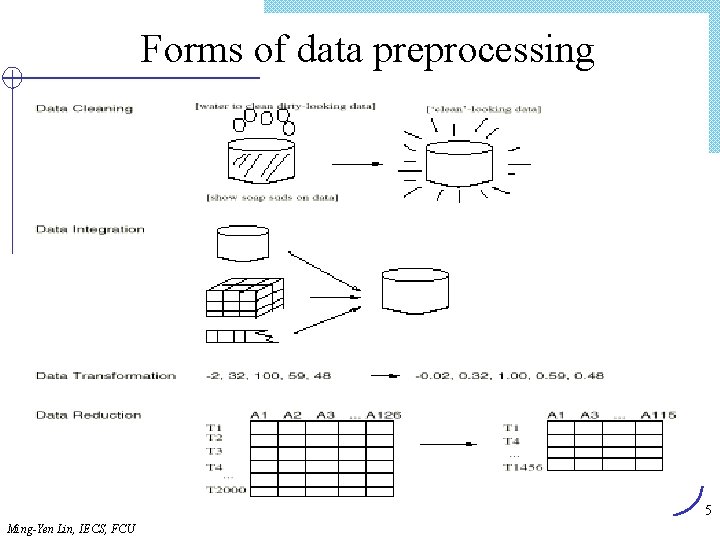

Forms of data preprocessing

Major Tasks in Data Cleaning
- Fill in missing values
- Identify outliers and smooth out noisy data
- Correct inconsistent data

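Missing Data
- Symptom: data is not always available
  - e.g., customer income in sales data
- Causes
  - Equipment malfunction
  - Deleted because it was inconsistent with other recorded data
  - Not entered due to a misunderstanding
  - Not considered important at the time of entry
- Missing data may need to be inferred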

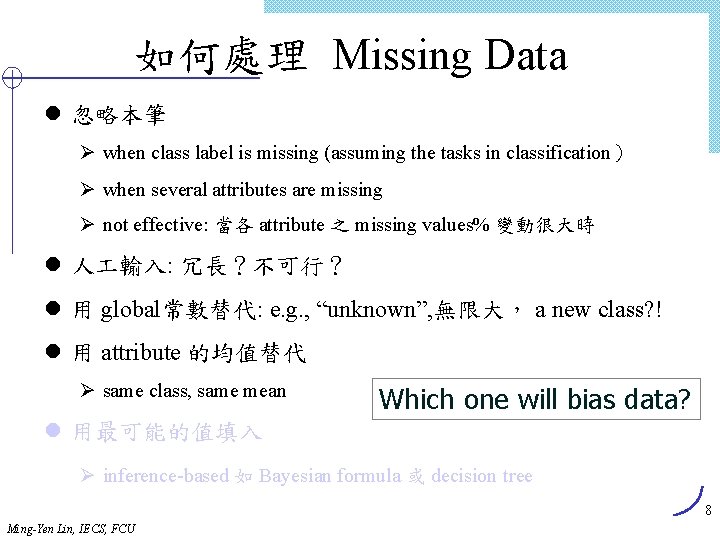

How to Handle Missing Data
- Ignore the tuple
  - when the class label is missing (assuming the task is classification)
  - when several attributes are missing
  - not effective when the percentage of missing values varies considerably across attributes
- Fill in the missing value manually: tedious? infeasible?
- Use a global constant: e.g., "unknown", infinity, a new class?!
- Use the attribute mean
  - Same class, same mean: use the mean of all samples belonging to the same class
  - Which of these methods will bias the data?
- Use the most probable value
  - Inference-based, e.g., a Bayesian formula or a decision tree
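The two mean-based strategies can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the author's method: missing values are assumed to be represented as `None`, the function names and the sample incomes are hypothetical, and every class is assumed to have at least one observed value.

```python
from statistics import mean


def fill_with_mean(values):
    """Replace each None with the mean of the observed (non-None) values."""
    observed = [v for v in values if v is not None]
    m = mean(observed)
    return [m if v is None else v for v in values]


def fill_with_class_mean(values, labels):
    """Replace each None with the mean of the observed values in the same class.

    Assumes every class label has at least one observed value.
    """
    sums, counts = {}, {}
    for v, c in zip(values, labels):
        if v is not None:
            sums[c] = sums.get(c, 0) + v
            counts[c] = counts.get(c, 0) + 1
    return [sums[c] / counts[c] if v is None else v
            for v, c in zip(values, labels)]


income = [30, None, 50, 70, None]           # hypothetical incomes
label = ["low", "low", "high", "high", "high"]
print(fill_with_mean(income))               # both gaps get the overall mean
print(fill_with_class_mean(income, label))  # each gap gets its own class mean
```

The class-conditional version usually introduces less bias than the global mean when classes differ, but both shrink the variance of the attribute.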

How to Handle Noisy Data
- Binning method
  - First sort the data and partition it into (equi-depth) bins
  - Then smooth by bin means, by bin medians, or by bin boundaries (see next slide)
- Clustering
  - Detect and remove outliers
- Combined computer and human inspection
  - Detect suspicious values automatically, then have a human check them
- Regression
  - Smooth by fitting the data into regression functions
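The binning method can be sketched as below: a minimal Python illustration, assuming the number of bins does not exceed the number of values (so no bin is empty). The function names and the sample price list are illustrative, not taken from the slides. Smoothing by boundaries replaces each value with the closer of its bin's minimum and maximum; ties go to the minimum here, which is an arbitrary choice.

```python
from statistics import median


def equi_depth_bins(values, n_bins):
    """Sort the values and split them into n_bins bins of (nearly) equal size.

    Assumes 1 <= n_bins <= len(values), so no bin is empty.
    """
    data = sorted(values)
    size, extra = divmod(len(data), n_bins)
    bins, start = [], 0
    for i in range(n_bins):
        end = start + size + (1 if i < extra else 0)  # spread leftovers over the first bins
        bins.append(data[start:end])
        start = end
    return bins


def smooth_by_means(bins):
    """Replace every value by the mean of its bin."""
    return [[sum(b) / len(b)] * len(b) for b in bins]


def smooth_by_medians(bins):
    """Replace every value by the median of its bin."""
    return [[median(b)] * len(b) for b in bins]


def smooth_by_boundaries(bins):
    """Replace every value by the closer of its bin's min and max (ties go to min)."""
    return [[b[0] if v - b[0] <= b[-1] - v else b[-1] for v in b] for b in bins]


prices = [4, 8, 9, 15, 21, 21, 24, 25, 26, 28, 29, 34]  # illustrative values
bins = equi_depth_bins(prices, 3)
print(bins)
print(smooth_by_means(bins))
print(smooth_by_boundaries(bins))
```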

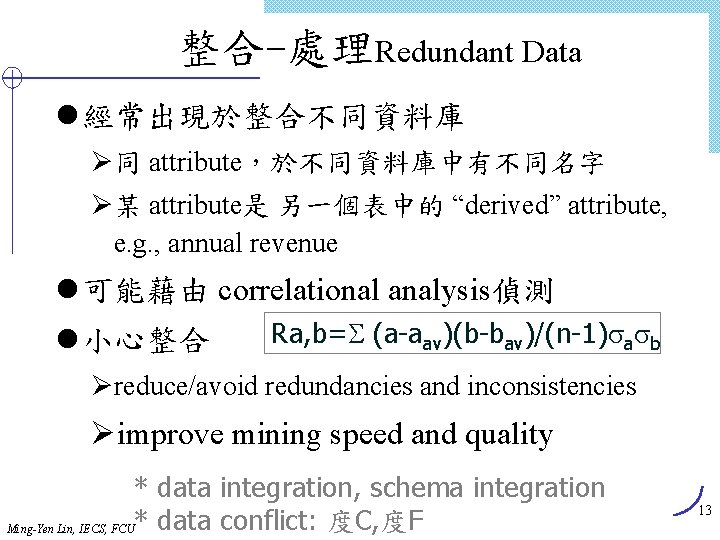

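Data Integration: Handling Redundant Data
- Redundant data often occur when multiple databases are integrated
  - The same attribute may have different names in different databases
  - One attribute may be a "derived" attribute in another table, e.g., annual revenue
- Redundant attributes may be detected by correlation analysis:

$$R_{A,B} = \frac{\sum (A - \bar{A})(B - \bar{B})}{(n-1)\,\sigma_A \sigma_B}$$

- Careful integration of data from multiple sources helps to
  - reduce/avoid redundancies and inconsistencies
  - improve mining speed and quality
- Related issues: data integration, schema integration
- Data value conflicts: e.g., temperatures recorded in °C vs. °F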
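A direct transcription of the correlation coefficient $R_{A,B}$ into Python might look like this. It is a sketch assuming at least two tuples and non-constant attributes (otherwise $\sigma$ is zero); the attribute names and revenue figures are hypothetical. A value of $|R_{A,B}|$ close to 1 suggests one attribute may be redundant; where to draw the threshold is a judgment call.

```python
from math import sqrt


def sample_std(xs):
    """Sample standard deviation with the (n - 1) denominator."""
    m = sum(xs) / len(xs)
    return sqrt(sum((x - m) ** 2 for x in xs) / (len(xs) - 1))


def correlation(a, b):
    """Correlation coefficient R_{A,B}; needs n >= 2 and non-constant inputs."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    co = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    return co / ((n - 1) * sample_std(a) * sample_std(b))


monthly = [10.0, 12.0, 9.0, 15.0]      # hypothetical monthly revenue
annual = [12 * m for m in monthly]     # a "derived" attribute
print(correlation(monthly, annual))    # close to 1.0: likely redundant
```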

Data Transformation
Transform the data into forms suitable for mining, including:
- Smoothing: remove noise from the data
- Aggregation: summarization, data cube construction
- Generalization: concept hierarchy climbing
- Normalization: scale values to fall within a small, specified range
  - min-max normalization
  - z-score normalization
  - normalization by decimal scaling
- Attribute/feature construction
  - New attributes constructed from the given ones
  - e.g., area = h × w

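Data Transformation: Normalization
- Min-max normalization:

$$v' = \frac{v - \min_A}{\max_A - \min_A}\,(\text{new\_max}_A - \text{new\_min}_A) + \text{new\_min}_A$$

- Z-score normalization (zero mean):

$$v' = \frac{v - \bar{A}}{\sigma_A}$$

- Normalization by decimal scaling:

$$v' = \frac{v}{10^j}$$

  where $j$ is the smallest integer such that $\max(|v'|) < 1$.

The standard deviation is

$$\sigma_A = \sqrt{\frac{\sum (A - \bar{A})^2}{n-1}}$$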
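The three normalization formulas can be sketched in Python as follows. This is a minimal illustration with hypothetical function names; it assumes $\max_A > \min_A$ for min-max, at least two values for the z-score (which uses the $(n-1)$ standard deviation defined for $\sigma_A$), and restricts $j \ge 0$ in decimal scaling, so values already below 1 in magnitude are left unchanged.

```python
from math import sqrt


def min_max(values, new_min=0.0, new_max=1.0):
    """Linearly map [min_A, max_A] onto [new_min, new_max] (assumes max_A > min_A)."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) * (new_max - new_min) + new_min for v in values]


def z_score(values):
    """(v - mean) / sigma, using the sample standard deviation (n - 1)."""
    n = len(values)
    mean = sum(values) / n
    sigma = sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    return [(v - mean) / sigma for v in values]


def decimal_scaling(values):
    """Divide by 10**j, with j the smallest integer >= 0 such that max|v'| < 1."""
    largest = max(abs(v) for v in values)
    j = 0
    while largest / 10 ** j >= 1:
        j += 1
    return [v / 10 ** j for v in values], j


print(min_max([200, 300, 400, 600, 1000]))  # maps onto [0, 1]
print(z_score([2, 4, 4, 4, 5, 5, 7, 9]))    # mean 0, sample std 1
print(decimal_scaling([-986, 917]))         # j = 3 here
```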

Data Reduction Strategies
- A data warehouse may store several terabytes of data
  - Mining the complete data set can be too time-consuming
- Data reduction: obtain a reduced representation of the data set
  - much smaller in volume, yet producing the same (or almost the same) analytical results
- Data reduction strategies
  - Data cube aggregation
  - Dimensionality reduction
  - Data compression
  - Numerosity reduction (turning big numbers into small ones!)
  - Discretization and concept hierarchy generation

Data Cube Aggregation
- The lowest level of a data cube
  - The aggregated data for an individual entity of interest
  - e.g., a customer in a phone-calling data warehouse
- Multiple levels of aggregation in data cubes
  - Further reduce the size of the data to deal with
- Reference appropriate levels
  - Use the smallest representation that is sufficient to solve the task
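Moving up the levels of a data cube amounts to grouping by fewer dimensions and summing the measure. The sketch below assumes raw per-call records stored as Python dicts; the field names (`customer`, `month`, `minutes`) and values are hypothetical. Each call to `roll_up` produces a smaller table than the one before it.

```python
from collections import defaultdict


def roll_up(records, dims, measure):
    """Sum `measure` over all records that share the same values on `dims`.

    Dropping a dimension from `dims` moves one level up the cube;
    an empty `dims` gives the grand total.
    """
    totals = defaultdict(float)
    for rec in records:
        totals[tuple(rec[d] for d in dims)] += rec[measure]
    return dict(totals)


# Hypothetical raw phone-call records.
calls = [
    {"customer": "c1", "month": "Jan", "minutes": 10},
    {"customer": "c1", "month": "Jan", "minutes": 5},
    {"customer": "c1", "month": "Feb", "minutes": 7},
    {"customer": "c2", "month": "Jan", "minutes": 3},
]
print(roll_up(calls, ("customer", "month"), "minutes"))  # per customer per month
print(roll_up(calls, ("customer",), "minutes"))          # per customer
print(roll_up(calls, (), "minutes"))                     # grand total
```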

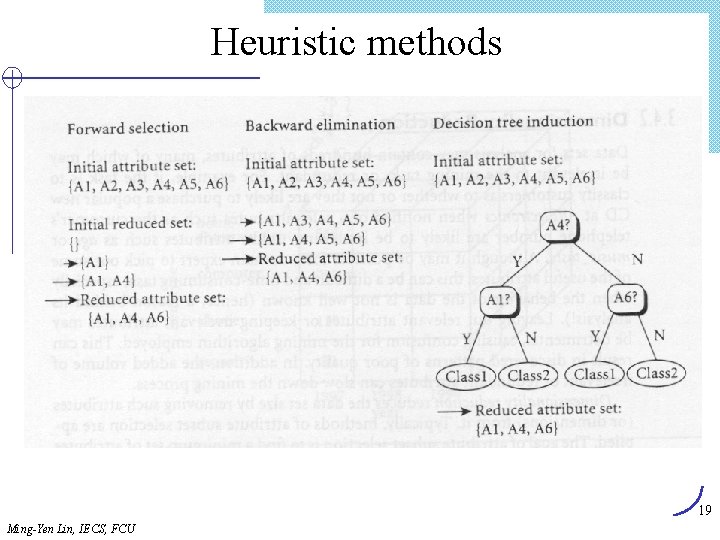

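Dimensionality Reduction
- Feature selection (i.e., attribute subset selection):
  - Select a minimum set of features such that the probability distribution of the classes, given the values of those features, is as close as possible to the original distribution given the values of all features
  - Reduces the number of attributes appearing in the discovered patterns, making the patterns easier to understand
- Heuristic methods (needed because d features have an exponential number, 2^d, of possible subsets):
  - step-wise forward selection
  - step-wise backward elimination
  - combining forward selection and backward elimination
  - decision-tree induction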
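Step-wise forward selection and backward elimination can be sketched as greedy loops. This is a minimal illustration, assuming a caller-supplied `score` function (hypothetical; in practice it might be the validation accuracy of a classifier trained on the given subset) where higher is better, and assuming feature names are unique. Neither loop is guaranteed to find the best subset; that is the price of avoiding the 2^d search.

```python
def forward_selection(features, score):
    """Start from the empty set; repeatedly add the feature that raises the
    score the most, stopping when no remaining feature improves it."""
    selected, remaining = [], list(features)
    best = score(selected)
    while remaining:
        gains = [(score(selected + [f]), f) for f in remaining]
        cand_score, cand = max(gains, key=lambda t: t[0])
        if cand_score <= best:
            break  # no single addition helps any more
        selected.append(cand)
        remaining.remove(cand)
        best = cand_score
    return selected, best


def backward_elimination(features, score):
    """Start from all features; repeatedly drop the feature whose removal
    hurts the score least, stopping when every removal lowers the score."""
    selected = list(features)
    best = score(selected)
    while selected:
        trials = [(score([g for g in selected if g != f]), f) for f in selected]
        cand_score, cand = max(trials, key=lambda t: t[0])
        if cand_score < best:
            break  # every removal would lower the score
        selected.remove(cand)
        best = cand_score
    return selected, best


# Toy additive score for illustration only: each feature has a fixed worth.
worth = {"a": 3, "b": 2, "c": -1}
print(forward_selection(["a", "b", "c"], lambda s: sum(worth[f] for f in s)))
print(backward_elimination(["a", "b", "c"], lambda s: sum(worth[f] for f in s)))
```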

Heuristic methods

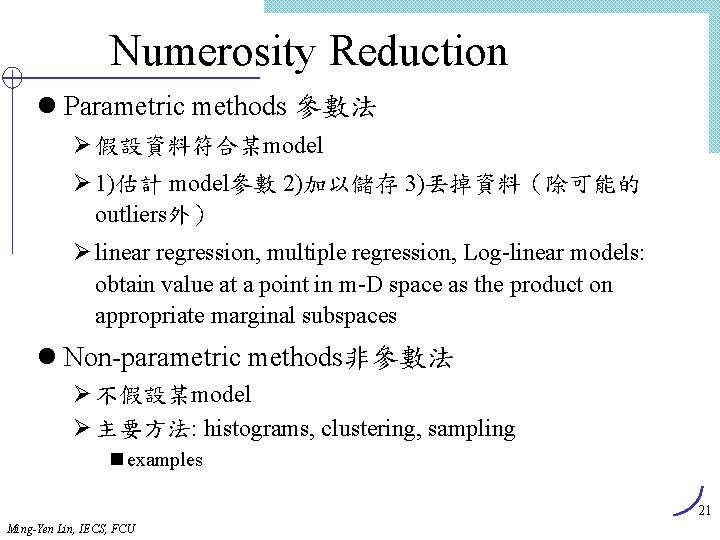

Numerosity Reduction
- Parametric methods
  - Assume the data fits some model
  - (1) Estimate the model parameters, (2) store only the parameters, (3) discard the data (except possible outliers)
  - Linear regression, multiple regression; log-linear models: obtain the value at a point in m-D space as the product on appropriate marginal subspaces
- Non-parametric methods
  - Do not assume a model
  - Major families: histograms, clustering, sampling
    - examples
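The three parametric steps can be illustrated with simple linear regression: fit y = w·x + b by least squares, keep only (w, b) plus the points that the line explains poorly, and discard the rest. This is a sketch under assumptions: the residual threshold `tol` and all function names are hypothetical, and least squares is itself sensitive to outliers, so a robust fit may be preferable on real data.

```python
def fit_line(xs, ys):
    """Least-squares fit of y = w * x + b; returns the parameters (w, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    w = sxy / sxx
    return w, my - w * mx


def reduce_with_regression(xs, ys, tol):
    """Keep (w, b) plus only the points whose residual exceeds tol."""
    w, b = fit_line(xs, ys)
    outliers = [(x, y) for x, y in zip(xs, ys) if abs(y - (w * x + b)) > tol]
    return (w, b), outliers


def reconstruct(params, xs):
    """Approximate the discarded y values from the stored parameters."""
    w, b = params
    return [w * x + b for x in xs]


xs = list(range(10))
ys = [2 * x + 1 for x in xs]
ys[5] += 20                      # one hypothetical outlier
params, kept = reduce_with_regression(xs, ys, tol=5.0)
print(params, kept)              # two numbers plus the outlier replace 10 points
print(reconstruct(params, xs))
```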

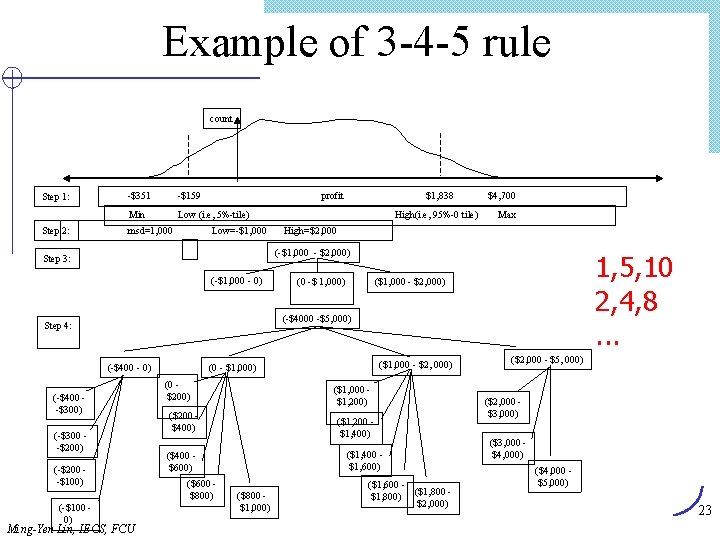

Discretization and Concept Hierarchy Generation
- Concept hierarchy, e.g., for price:
  - 0..1000
    - 0..200, 200..400, …, 800..1000
      - 0..100, 100..200, …
- (1) Discretization of numerical data
  - binning, histogram (3.15), clustering, natural partitioning (3-4-5 rule)
- (2) Concept hierarchy generation
  - partial ordering, portion of a hierarchy, set of attributes

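Example of the 3-4-5 rule (attribute: profit)
- Step 1: Min = -$351, Low (5th percentile) = -$159, High (95th percentile) = $1,838, Max = $4,700
- Step 2: the most significant digit is msd = $1,000, so Low is rounded down to -$1,000 and High is rounded up to $2,000
- Step 3: the range (-$1,000 to $2,000) covers 3 distinct msd values, so it is split into 3 equal-width intervals: (-$1,000 to 0), (0 to $1,000), ($1,000 to $2,000). By the rule, 3, 6, 7, or 9 distinct values give 3 intervals (7 as 2-3-2); 2, 4, or 8 give 4 intervals; 1, 5, or 10 give 5 intervals.
- Step 4: adjust to Min and Max: since Min = -$351, the first interval shrinks to (-$400 to 0); since Max = $4,700, a new interval ($2,000 to $5,000) is added, giving the overall range (-$400 to $5,000). Each interval is then partitioned recursively:
  - (-$400 to 0): (-$400 to -$300), (-$300 to -$200), (-$200 to -$100), (-$100 to 0)
  - (0 to $1,000): (0 to $200), ($200 to $400), ($400 to $600), ($600 to $800), ($800 to $1,000)
  - ($1,000 to $2,000): ($1,000 to $1,200), ($1,200 to $1,400), ($1,400 to $1,600), ($1,600 to $1,800), ($1,800 to $2,000)
  - ($2,000 to $5,000): ($2,000 to $3,000), ($3,000 to $4,000), ($4,000 to $5,000)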
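Steps 2 and 3 of the 3-4-5 rule can be sketched as one function that rounds the range at its most significant digit and splits it into 3, 4, or 5 intervals. This is a minimal sketch, not a complete implementation: it assumes `low < high`, takes the msd unit from the endpoint with the larger magnitude, raises an error for distinct-value counts the rule does not cover, and leaves the Step 4 Min/Max adjustment to the caller. The function name is hypothetical.

```python
import math


def three_four_five(low, high):
    """Top-level split of [low, high] by the 3-4-5 rule (no Min/Max adjustment)."""
    unit = 10 ** math.floor(math.log10(max(abs(low), abs(high))))  # msd unit
    lo = math.floor(low / unit) * unit   # round Low down at the msd
    hi = math.ceil(high / unit) * unit   # round High up at the msd
    distinct = round((hi - lo) / unit)   # distinct msd values covered
    if distinct == 7:
        cuts = [lo, lo + 2 * unit, lo + 5 * unit, hi]  # 2-3-2 grouping
    else:
        if distinct in (3, 6, 9):
            parts = 3
        elif distinct in (2, 4, 8):
            parts = 4
        elif distinct in (1, 5, 10):
            parts = 5
        else:
            raise ValueError(f"3-4-5 rule not defined for {distinct} msd values")
        width = (hi - lo) / parts
        cuts = [lo + i * width for i in range(parts)] + [hi]
    return list(zip(cuts[:-1], cuts[1:]))


print(three_four_five(-159, 1838))  # Steps 2-3 of the profit example
print(three_four_five(-400, 0))     # recursive split of the adjusted first interval
```

Applied to each adjusted interval of the profit example, the same function reproduces the second-level intervals listed in Step 4.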

Concept Hierarchy Generation for Categorical Data
- Specification of a partial ordering of attributes explicitly at the schema level by users or experts
  - e.g., street < city < state < country
- Specification of a portion of a hierarchy by explicit data grouping
  - e.g., an intermediate level such as "the five counties and cities of central Taiwan"
- Specification of a set of attributes, but not of their partial ordering
  - The system tries to generate the ordering
- Specification of only a partial set of attributes
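When only a set of attributes is given, one common heuristic (assumed here; the slide only says the system tries to generate the ordering) places the attribute with the most distinct values at the lowest level of the hierarchy. A minimal sketch with hypothetical records follows. The heuristic can fail: weekday has only 7 distinct values, yet it does not sit above month or year.

```python
def order_by_distinct_values(rows, attributes):
    """Order attributes from the top of the hierarchy (fewest distinct values)
    to the bottom (most distinct values). Ties keep their input order."""
    counts = {a: len({row[a] for row in rows}) for a in attributes}
    return sorted(attributes, key=lambda a: counts[a])


# Hypothetical location records; only the number of distinct values matters.
rows = [
    {"street": "s1", "city": "c1", "state": "p1", "country": "k1"},
    {"street": "s2", "city": "c1", "state": "p1", "country": "k1"},
    {"street": "s3", "city": "c2", "state": "p1", "country": "k1"},
    {"street": "s4", "city": "c3", "state": "p2", "country": "k1"},
]
order = order_by_distinct_values(rows, ["street", "city", "state", "country"])
print(" < ".join(reversed(order)))  # bottom-to-top, as in street < city < ...
```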

Summary
- Data preparation is a big issue for both warehousing and mining
- Data preparation includes
  - Data cleaning and data integration
  - Data reduction and feature selection
  - Discretization
- Many methods have been developed, but this is still an active area of research

Compare Web search with the KDD process.
