Data model and data organization Data Knowledge Base

Grigorieva M. A., 21/10/2015

Data Knowledge Base Outline

- We are working with existing data sources to build views and tools for the analysis process from a top-down (and drill-down) perspective.
- Metadata sources:
  - AMI (ATLAS Metadata Interface): http://ami.in2p3.fr/index.php/en/
  - GLANCE (search engine for the ATLAS publications): https://atglance.web.cern.ch/atglance/
  - Rucio (ATLAS Distributed Data Management): http://rucio.cern.ch/
  - ProdSys2 (DEFT + JEDI): http://iopscience.iop.org/article/10.1088/1742-6596/513/3/032078/pdf
  - JIRA ITS (ATLAS Issue Tracking Service): http://information-technology.web.cern.ch/services/JIRAservice
  - Indico (manages complex conferences, workshops and meetings): https://indico.cern.ch/
  - CERN Document Server: https://cds.cern.ch
  - CERN Twiki (a tool for collaborative writing of web pages)
- The goal is to aggregate and synthesize a range of primary information sources, augment them with flexible, schema-less addition of new information (e.g. user annotations, programmatically generated summary info, data mining results, and cross-correlations), and provide a dynamic and interactive knowledge base. This knowledge base serves as the intelligence behind GUIs and APIs that give users and services powerful views into the science data and the computing resources that host and process it.

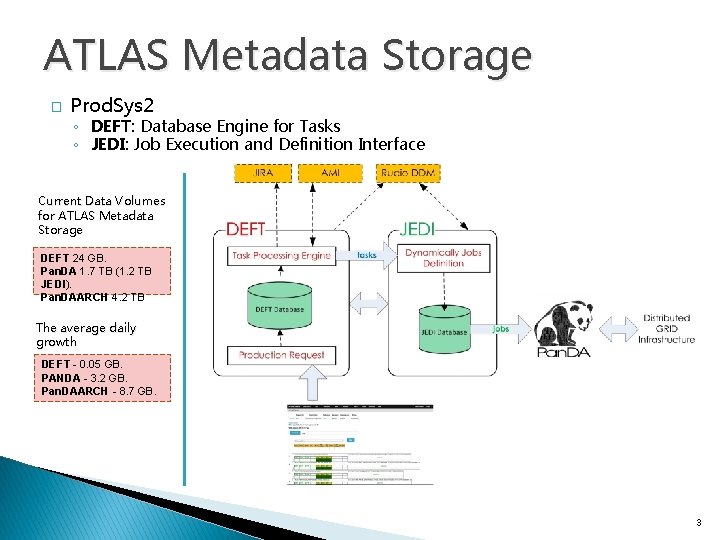

ATLAS Metadata Storage

- ProdSys2
  - DEFT: Database Engine for Tasks
  - JEDI: Job Execution and Definition Interface

Current data volumes for ATLAS metadata storage:

| Store | Current volume | Average daily growth |
|---|---|---|
| DEFT | 24 GB | 0.05 GB |
| PanDA | 1.7 TB (1.2 TB JEDI) | 3.2 GB |
| PanDAARCH | 4.2 TB | 8.7 GB |
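The volumes and growth rates above allow a rough capacity estimate. The sketch below is a simple linear projection, not a measured forecast: it assumes the daily growth stays constant and that 1 TB equals 1000 GB. The function name `projected_size_gb` is introduced here for illustration.

```python
# Figures copied from the slide; 1 TB is taken as 1000 GB (an assumption).
CURRENT_GB = {"DEFT": 24.0, "PanDA": 1700.0, "PanDAARCH": 4200.0}
DAILY_GROWTH_GB = {"DEFT": 0.05, "PanDA": 3.2, "PanDAARCH": 8.7}


def projected_size_gb(store: str, days: int) -> float:
    """Linear projection: current volume plus constant daily growth."""
    return CURRENT_GB[store] + DAILY_GROWTH_GB[store] * days


if __name__ == "__main__":
    for store in CURRENT_GB:
        size = projected_size_gb(store, 365)
        print(f"{store}: about {size:.1f} GB after one year")
```

Under these assumptions the archive (PanDAARCH) dominates both the current volume and the growth, which is the part of the metadata that benefits most from a store that scales out.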

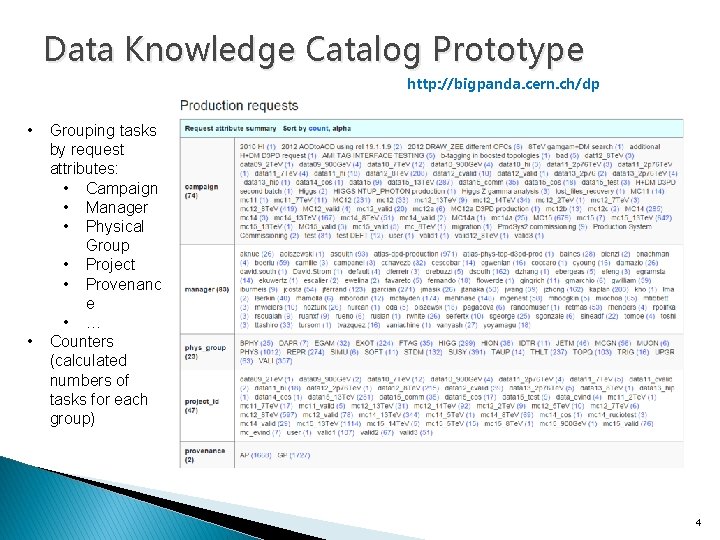

Data Knowledge Catalog Prototype: http://bigpanda.cern.ch/dp

- Grouping tasks by request attributes:
  - Campaign
  - Manager
  - Physical Group
  - Project
  - Provenance
  - …
- Counters (calculated numbers of tasks for each group)

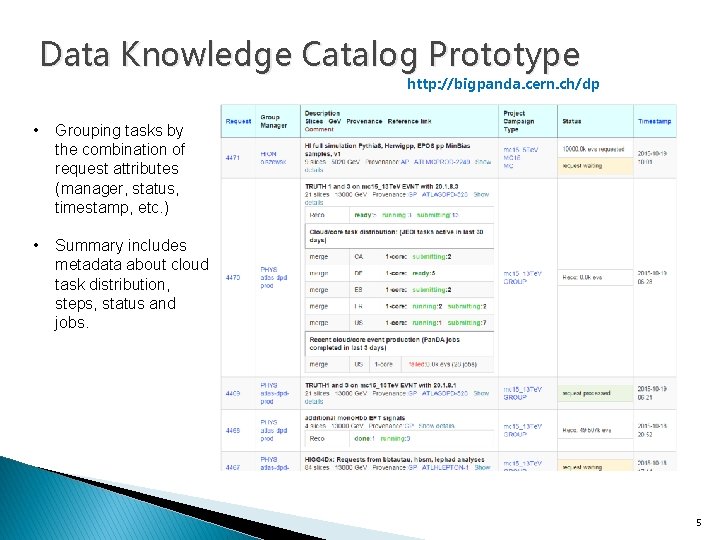

Data Knowledge Catalog Prototype: http://bigpanda.cern.ch/dp

- Grouping tasks by a combination of request attributes (manager, status, timestamp, etc.)
- The summary includes metadata about cloud task distribution, steps, status and jobs.

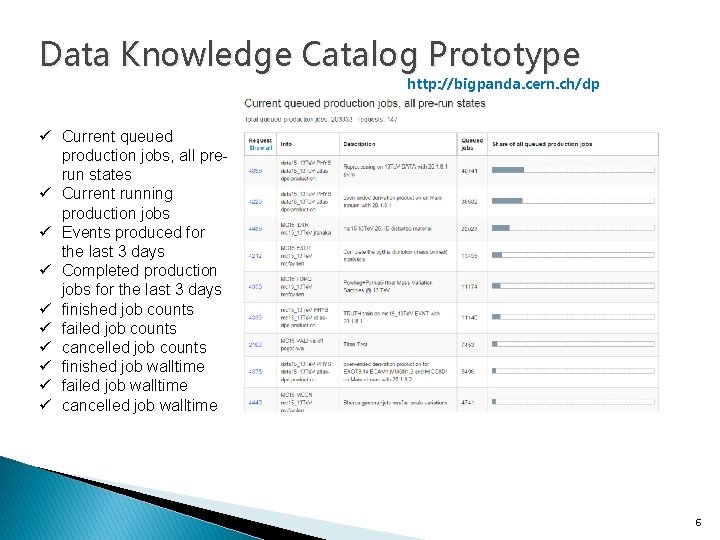

Data Knowledge Catalog Prototype: http://bigpanda.cern.ch/dp

- Current queued production jobs, all prerun states
- Current running production jobs
- Events produced for the last 3 days
- Completed production jobs for the last 3 days
- Finished job counts
- Failed job counts
- Cancelled job counts
- Finished job walltime
- Failed job walltime
- Cancelled job walltime

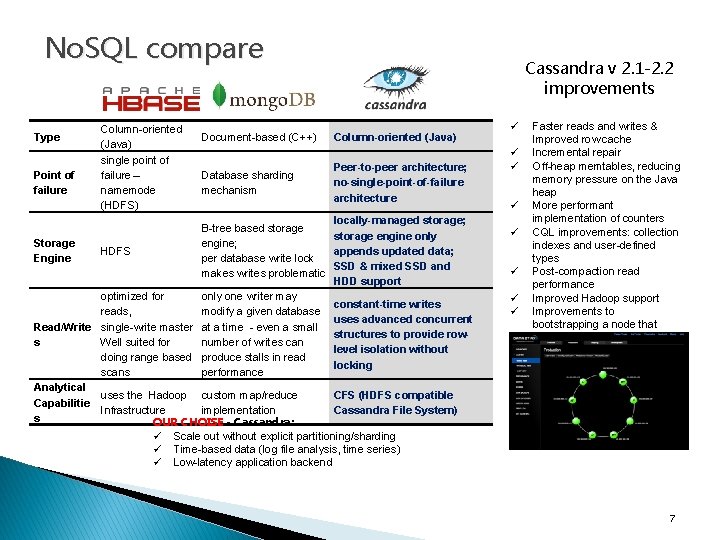

NoSQL comparison

| Type | Column-oriented (Java) | Document-based (C++) | Column-oriented (Java) |
|---|---|---|---|
| Point of failure | Single point of failure: namenode (HDFS) | Database sharding mechanism | Peer-to-peer architecture; no single point of failure |
| Storage engine | HDFS | B-tree based storage engine; per-database write lock makes writes problematic | Locally managed storage; only appends updated data; SSD and mixed SSD/HDD support |
| Concurrent read/write | Optimized for reads; single-write master; well suited for range-based scans | Only one writer may modify a given database at a time; even a small number of writes can produce stalls in read performance | Constant-time writes; uses advanced concurrent structures to provide row-level isolation without locking |
| Analytical capabilities | Uses the Hadoop infrastructure | Custom map/reduce implementation | CFS (HDFS-compatible Cassandra File System) |

Cassandra v2.1-2.2 improvements:

- Faster reads and writes, and an improved row cache
- Incremental repair
- Off-heap memtables, reducing memory pressure on the Java heap
- More performant implementation of counters
- CQL improvements: collection indexes and user-defined types
- Post-compaction read performance
- Improved Hadoop support
- Improvements to bootstrapping a node that ensure data consistency

Our choice: Cassandra

- Scale out without explicit partitioning/sharding
- Time-based data (log file analysis, time series)
- Low-latency application backend

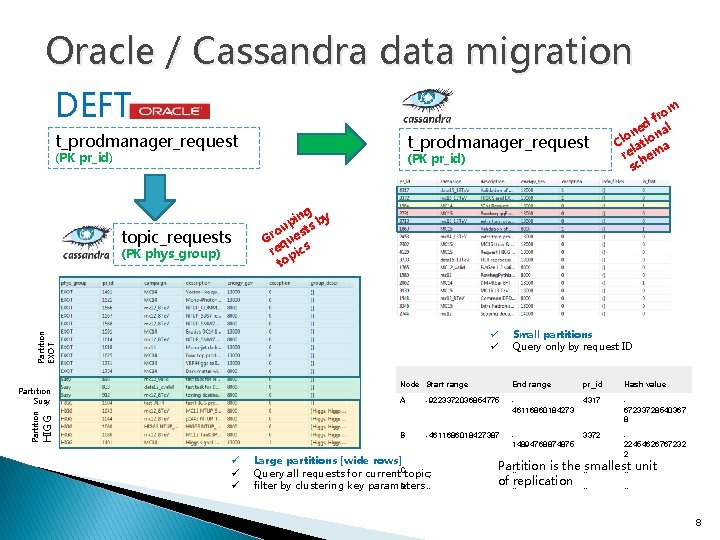

Oracle / Cassandra data migration

DEFT table t_prodmanager_request (PK pr_id), cloned from the relational schema:

- Small partitions
- Query only by request ID

Table topic_requests (PK phys_group, pr_id), grouping requests by topics, with one partition per topic (EXOT, HIGG, SUSY):

- Large partitions [wide rows]
- Query all requests for the current topic; filter by clustering key parameters

[Token-range table from the slide: columns Node, Start range, End range, pr_id, Hash value; rows for nodes A to D, with example pr_id values 4317 and 3372. The numeric range and hash values are truncated in the source.]

The partition is the smallest unit of replication.
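The token-range table on this slide reflects how a partition key is hashed to a signed 64-bit token and how each node owns a contiguous range of tokens. The following is a minimal sketch of that idea, with several simplifications that are assumptions rather than Cassandra's actual behaviour: MD5 stands in for the Murmur3 partitioner, ranges are equal-width and start-inclusive, and virtual nodes and replication are ignored. The helper names `token_for`, `build_ring` and `owner` are hypothetical.

```python
import bisect
import hashlib

TOKEN_MIN = -2**63      # lowest signed 64-bit token
TOKEN_MAX = 2**63 - 1   # highest signed 64-bit token


def token_for(partition_key: str) -> int:
    """Hash a partition key to a signed 64-bit token (MD5 as a stand-in)."""
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big", signed=True)


def build_ring(nodes):
    """Split the token space into equal contiguous ranges, one per node.

    Returns a list of (range_start, node) pairs sorted by range_start.
    """
    width = 2**64 // len(nodes)
    return [(TOKEN_MIN + i * width, node) for i, node in enumerate(nodes)]


def owner(ring, token: int) -> str:
    """Return the node whose range contains the token."""
    starts = [start for start, _ in ring]
    index = bisect.bisect_right(starts, token) - 1
    return ring[index][1]


if __name__ == "__main__":
    ring = build_ring(["A", "B", "C", "D"])
    for pr_id in ("4317", "3372"):
        token = token_for(pr_id)
        print(f"pr_id {pr_id} -> token {token} -> node {owner(ring, token)}")
```

With four nodes, the first two range starts in this sketch are -2^63 and -2^62. Because every row with the same partition key hashes to the same token, a whole partition always lands on the same node.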

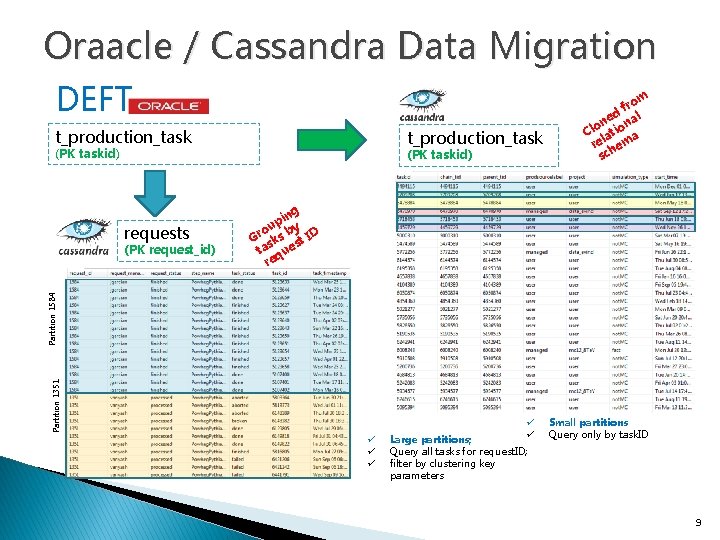

Oracle / Cassandra data migration

DEFT table t_production_task (PK taskid), cloned from the relational schema:

- Small partitions
- Query only by task ID

Table requests (PK request_id, taskid), grouping tasks by request ID, with one partition per request (for example, Partition 1351 and Partition 1584):

- Large partitions
- Query all tasks for a request ID; filter by clustering key parameters

Basic DKC modelling principles

- Production requests, tasks, steps, jobs and datasets must be grouped by different combinations of parameters.
- Each grouping should be translated into a single C* table:
  - Each grouping value = one C* table row [partition].
  - Partition size in C* is bounded by the 2 billion cell limit.
  - Controlling row size is one of the most important data model challenges for Cassandra users.
  - Partitions larger than 100 MB can cause significant pressure on the heap.
  - Determining the right row size for our data will be an iterative process and will require testing.
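To make the 2 billion cell limit concrete, the sketch below uses a commonly quoted estimate for the number of cells in a partition: rows multiplied by the number of regular (non-key, non-static) columns, plus the static columns. Treat the formula as an approximation and the function names and column counts as illustrative assumptions, not as part of the DKC design.

```python
MAX_CELLS_PER_PARTITION = 2_000_000_000  # limit quoted on the slide


def regular_columns(total_columns: int, primary_key_columns: int,
                    static_columns: int = 0) -> int:
    """Columns that store one cell per row (not keys, not static)."""
    return total_columns - primary_key_columns - static_columns


def cells_in_partition(rows: int, total_columns: int,
                       primary_key_columns: int,
                       static_columns: int = 0) -> int:
    """Estimate cells as rows * regular columns + static columns."""
    regular = regular_columns(total_columns, primary_key_columns, static_columns)
    return rows * regular + static_columns


def max_rows(total_columns: int, primary_key_columns: int,
             static_columns: int = 0) -> int:
    """Largest row count that stays within the cell limit."""
    regular = regular_columns(total_columns, primary_key_columns, static_columns)
    if regular <= 0:
        raise ValueError("the table needs at least one regular column")
    return (MAX_CELLS_PER_PARTITION - static_columns) // regular


if __name__ == "__main__":
    # Hypothetical table: 12 columns, 8 of them in the primary key.
    print(cells_in_partition(100_000, 12, 8))
    print(max_rows(12, 8))
```

The cell limit is only an upper bound. The 100 MB guideline is usually reached much earlier, which is why the right row size has to be found by testing.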

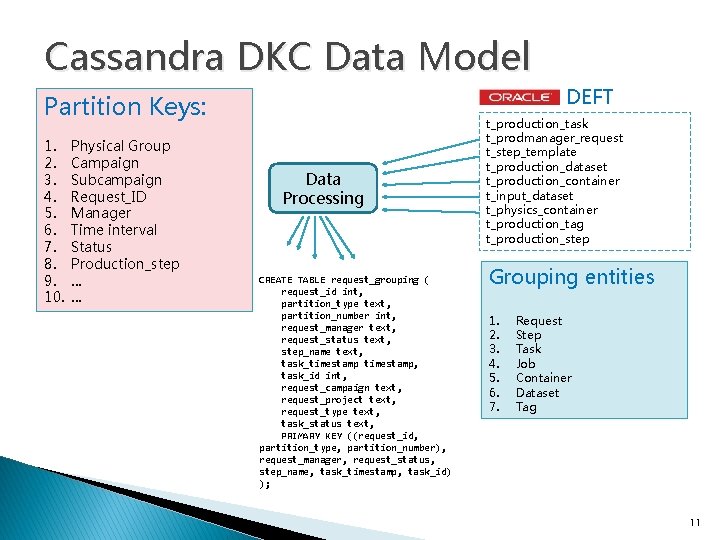

Cassandra DKC Data Model

Partition keys:

1. Physical Group
2. Campaign
3. Subcampaign
4. Request_ID
5. Manager
6. Time interval
7. Status
8. Production_step
9. …
10. …

DEFT tables used as input for data processing:

- t_production_task
- t_prodmanager_request
- t_step_template
- t_production_dataset
- t_production_container
- t_input_dataset
- t_physics_container
- t_production_tag
- t_production_step

Grouping entities:

1. Request
2. Step
3. Task
4. Job
5. Container
6. Dataset
7. Tag

Example table:

    CREATE TABLE request_grouping (
        request_id int,
        partition_type text,
        partition_number int,
        request_manager text,
        request_status text,
        step_name text,
        task_timestamp timestamp,  -- type is missing on the slide; timestamp is assumed
        task_id int,
        request_campaign text,
        request_project text,
        request_type text,
        task_status text,
        -- composite partition key, followed by the clustering columns
        PRIMARY KEY ((request_id, partition_type, partition_number),
                     request_manager, request_status, step_name,
                     task_timestamp, task_id)
    );

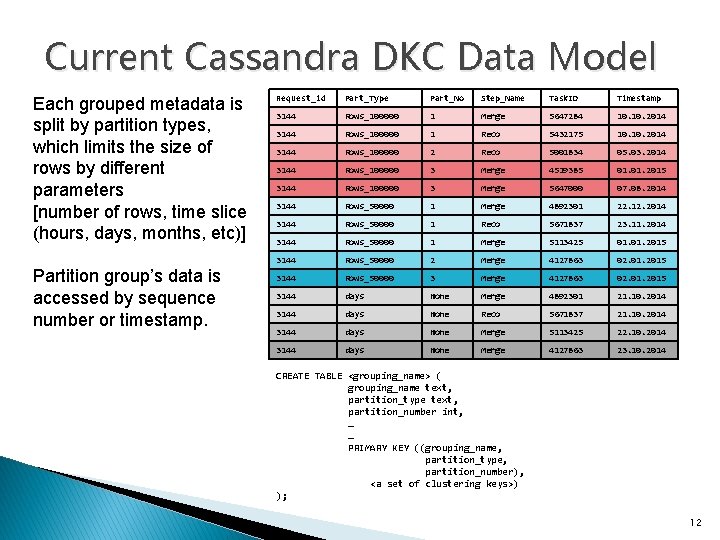

Current Cassandra DKC Data Model

Each grouped metadata set is split by partition type, which limits the row size by a chosen parameter [number of rows, time slice (hours, days, months, etc.)]. Data in a partition group is accessed by sequence number or timestamp.

| Request_id | Part_Type | Part_No | Step_Name | TaskID | Timestamp |
|---|---|---|---|---|---|
| 3144 | Rows_100000 | 1 | Merge | 5647284 | 10.2014 |
| 3144 | Rows_100000 | 1 | Reco | 5432175 | 10.2014 |
| 3144 | Rows_100000 | 2 | Reco | 5001834 | 05.03.2014 |
| 3144 | Rows_100000 | 3 | Merge | 4519385 | 01.2015 |
| 3144 | Rows_100000 | 3 | Merge | 5647000 | 07.08.2014 |
| 3144 | Rows_50000 | 1 | Merge | 4892301 | 22.12.2014 |
| 3144 | Rows_50000 | 1 | Reco | 5671837 | 23.11.2014 |
| 3144 | Rows_50000 | 1 | Merge | 5113425 | 01.2015 |
| 3144 | Rows_50000 | 2 | Merge | 4127863 | 02.01.2015 |
| 3144 | Rows_50000 | 3 | Merge | 4127863 | 02.01.2015 |
| 3144 | days | None | Merge | 4892301 | 21.10.2014 |
| 3144 | days | None | Reco | 5671837 | 21.10.2014 |
| 3144 | days | None | Merge | 5113425 | 22.10.2014 |
| 3144 | days | None | Merge | 4127863 | 23.10.2014 |

General table template:

    CREATE TABLE <grouping_name> (
        grouping_name text,
        partition_type text,
        partition_number int,
        … …
        PRIMARY KEY ((grouping_name, partition_type, partition_number),
                     <a set of clustering keys>)
    );
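The row-count partition types (Rows_100000, Rows_50000) can be read as a bucketing rule. The sketch below shows one way such a bucket could be computed. It assumes that a running, zero-based row counter is kept per grouping and that partition numbers start at 1 as in the table. The slides do not say how the number is actually derived, and the function names are hypothetical.

```python
def rows_bucket(row_index: int, rows_per_partition: int):
    """Return (partition_type, partition_number) for the row at row_index.

    row_index is zero-based; partition numbers start at 1.
    """
    if row_index < 0:
        raise ValueError("row_index must be non-negative")
    if rows_per_partition <= 0:
        raise ValueError("rows_per_partition must be positive")
    partition_type = f"Rows_{rows_per_partition}"
    partition_number = row_index // rows_per_partition + 1
    return partition_type, partition_number


def partition_key(request_id: int, row_index: int, rows_per_partition: int):
    """Build the composite partition key used by a grouping table."""
    partition_type, partition_number = rows_bucket(row_index, rows_per_partition)
    return request_id, partition_type, partition_number


if __name__ == "__main__":
    # The 250,001st task of request 3144 with 100,000 rows per partition.
    print(partition_key(3144, 250_000, 100_000))
```

Storing the same grouping under several partition types trades extra storage for the freedom to read the data either in fixed-size chunks or by time slice.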

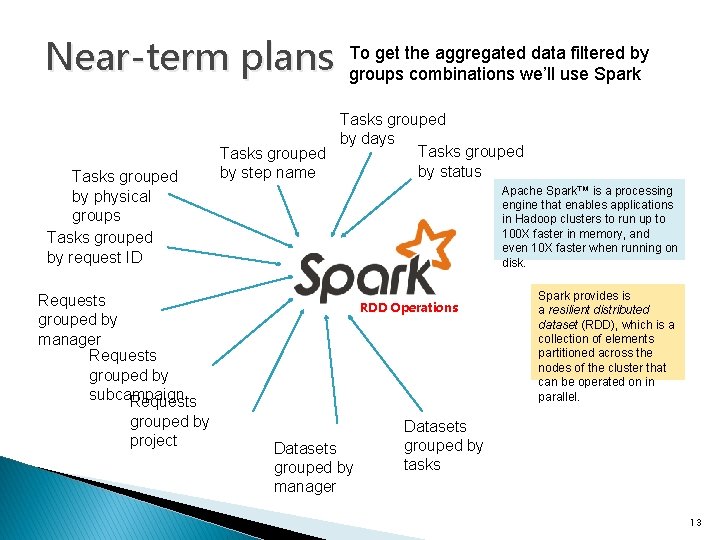

Near-term plans

Planned groupings:

- Tasks grouped by physical groups
- Tasks grouped by request ID
- Tasks grouped by days
- Tasks grouped by status and by step name
- Requests grouped by manager
- Requests grouped by subcampaign
- Requests grouped by project
- Datasets grouped by manager
- Datasets grouped by tasks

To get aggregated data filtered by combinations of groups, we will use Spark.

Apache Spark™ is a processing engine that enables applications in Hadoop clusters to run up to 100x faster than Hadoop MapReduce in memory, and up to 10x faster when running on disk.

RDD operations: the main abstraction Spark provides is a resilient distributed dataset (RDD), which is a collection of elements partitioned across the nodes of the cluster that can be operated on in parallel.
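The aggregation over group combinations follows the map and reduce-by-key pattern, which corresponds to the `map` and `reduceByKey` operations on a Spark RDD. The sketch below reproduces only the shape of that computation in plain, single-process Python; it does not use Spark, and the sample task records are made up for illustration.

```python
# Made-up task records; field names and values are illustrative only.
TASKS = [
    {"task_id": 1, "phys_group": "HIGG", "status": "finished"},
    {"task_id": 2, "phys_group": "HIGG", "status": "finished"},
    {"task_id": 3, "phys_group": "HIGG", "status": "failed"},
    {"task_id": 4, "phys_group": "SUSY", "status": "finished"},
    {"task_id": 5, "phys_group": "EXOT", "status": "failed"},
]


def map_to_pairs(records, key_fields):
    """Map step: emit ((field values...), 1) for every record."""
    return [(tuple(record[f] for f in key_fields), 1) for record in records]


def reduce_by_key(pairs, func):
    """Reduce step: combine all values that share a key with func."""
    result = {}
    for key, value in pairs:
        result[key] = func(result[key], value) if key in result else value
    return result


def count_by(records, key_fields):
    """Count records per combination of the given fields."""
    return reduce_by_key(map_to_pairs(records, key_fields), lambda a, b: a + b)


if __name__ == "__main__":
    counts = count_by(TASKS, ("phys_group", "status"))
    for key, count in sorted(counts.items()):
        print(key, count)
```

On a cluster the reduce step would run per partition of the data before the partial results are merged, which is what lets the same counters be computed over the full task history.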
