DATA MINING LECTURE 7 Minimum Description Length Principle

- Slides: 37

DATA MINING LECTURE 7
Minimum Description Length Principle
Information Theory
Co-Clustering

MINIMUM DESCRIPTION LENGTH

Occam’s razor
• Most data mining tasks can be described as creating a model for the data
• E.g., the EM algorithm models the data as a mixture of Gaussians; K-means models the data as a set of centroids
• Model vs. hypothesis
• What is the right model?
• Occam’s razor: all other things being equal, the simplest model is the best
• A good principle for life as well

Occam's Razor and MDL
• What is a simple model?
• Minimum Description Length Principle: every model provides a (lossless) encoding of our data. The model that gives the shortest encoding (best compression) of the data is the best.
• Related: Kolmogorov complexity. Find the shortest program that produces the data (uncomputable).
• MDL restricts the family of models considered
• Encoding cost: the cost for party A to transmit the data to party B

Minimum Description Length (MDL)
• The description length consists of two terms
  • The cost of describing the model (model cost)
  • The cost of describing the data given the model (data cost)
• L(D) = L(M) + L(D|M)
• There is a tradeoff between the two costs
  • Very complex models describe the data in a lot of detail but are expensive to describe
  • Very simple models are cheap to describe but require a lot of work to describe the data given the model

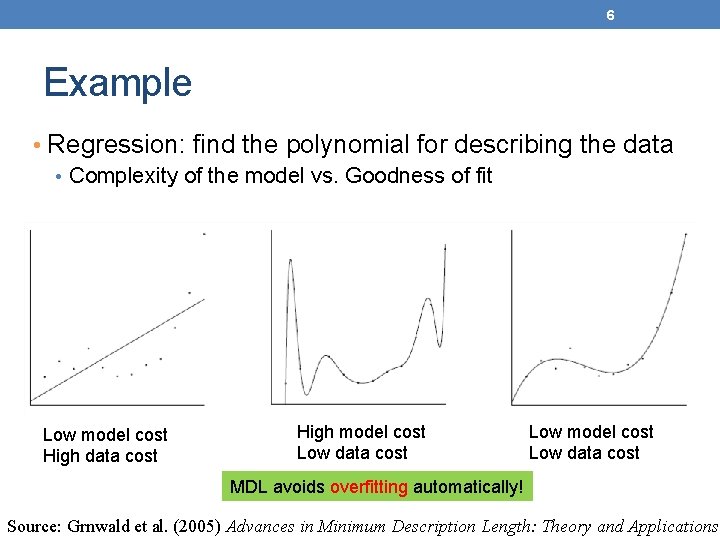

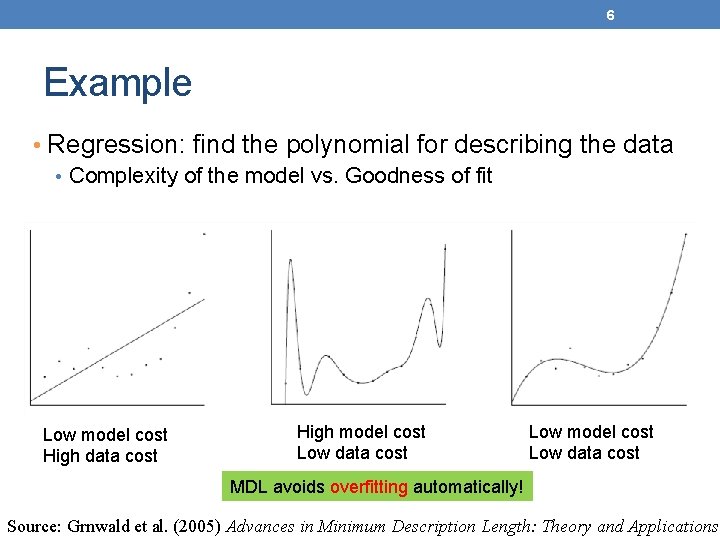

Example
• Regression: find the polynomial for describing the data
• Complexity of the model vs. goodness of fit
[Figure panels: low model cost, high data cost; high model cost, low data cost; low model cost, low data cost]
MDL avoids overfitting automatically!
Source: Grünwald et al. (2005), Advances in Minimum Description Length: Theory and Applications.
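The trade-off in the regression example can be sketched in a few lines. This is only an illustration under stated assumptions, not the encoding used in the cited source: the model cost is taken as (d + 1)/2 · log2(n) bits for a degree-d polynomial, the data cost as n/2 · log2(RSS/n) bits (both up to constants that do not depend on d), and the data set, the noise level and the helper names `fit_polynomial` and `description_length` are all made up for the demonstration.

```python
import math
import random

def fit_polynomial(xs, ys, degree):
    """Least-squares fit via the normal equations; returns c[0..degree]."""
    size = degree + 1
    # Augmented system (A^T A | A^T y) for the monomial basis 1, x, x^2, ...
    a = [[sum(x ** (i + j) for x in xs) for j in range(size)]
         + [sum(y * x ** i for x, y in zip(xs, ys))] for i in range(size)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(size):
        pivot = max(range(col, size), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(size):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [v - factor * w for v, w in zip(a[r], a[col])]
    return [a[i][size] / a[i][i] for i in range(size)]

def description_length(xs, ys, degree):
    """Assumed two-part cost L(M) + L(D|M) in bits, up to constants."""
    n = len(xs)
    coeffs = fit_polynomial(xs, ys, degree)
    rss = sum((y - sum(c * x ** p for p, c in enumerate(coeffs))) ** 2
              for x, y in zip(xs, ys))
    model_cost = 0.5 * (degree + 1) * math.log2(n)   # L(M): grows with degree
    data_cost = 0.5 * n * math.log2(rss / n)         # L(D|M): shrinks with fit
    return model_cost + data_cost

# Hypothetical data: a noisy quadratic sampled at 40 points.
random.seed(0)
xs = [-3 + 6 * i / 39 for i in range(40)]
ys = [1 + 2 * x - 1.5 * x * x + random.gauss(0, 0.5) for x in xs]

costs = {d: description_length(xs, ys, d) for d in range(6)}
best_degree = min(costs, key=costs.get)
print(costs, best_degree)
```

Degrees 0 and 1 pay a large data cost because they cannot follow the curvature, while higher degrees keep paying extra model cost for little gain in fit.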

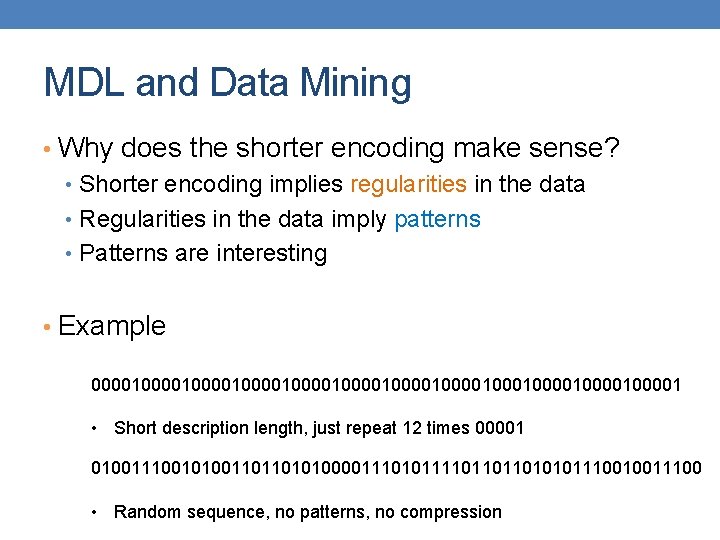

MDL and Data Mining
• Why does the shorter encoding make sense?
• Shorter encoding implies regularities in the data
• Regularities in the data imply patterns
• Patterns are interesting
• Example: 000010000100001000010000100001000010000100001000010000100001
  • Short description length: just repeat 00001 12 times
• Example: 0100111001010011011010100001110101111011011010101110010011100
  • Random sequence, no patterns, no compression
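A quick way to see the link between regularity and short encodings is to hand both kinds of sequence to a general-purpose compressor. The sketch below uses Python's `zlib` as a stand-in for description length, which is an assumption made for illustration (a practical compressor, not an MDL code). The strings are longer than the ones on the slide so that the compressor's fixed overhead does not dominate.

```python
import random
import zlib

random.seed(0)

# A regular sequence: the pattern 00001 repeated many times.
regular = "00001" * 2000
# A pseudo-random 0/1 sequence of the same length.
noisy = "".join(random.choice("01") for _ in range(len(regular)))

regular_size = len(zlib.compress(regular.encode("ascii"), 9))
noisy_size = len(zlib.compress(noisy.encode("ascii"), 9))

print("regular:", regular_size, "bytes")
print("random: ", noisy_size, "bytes")
```

The regular sequence shrinks to a small fraction of the size of the random one, because the random sequence still needs roughly one bit per symbol.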

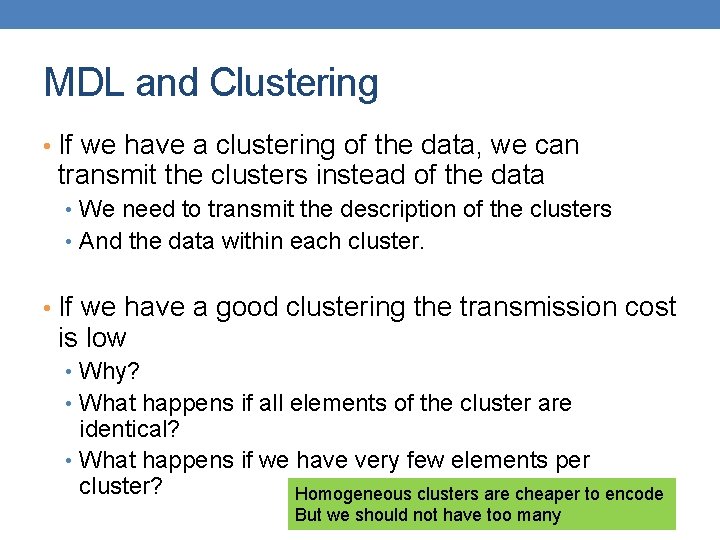

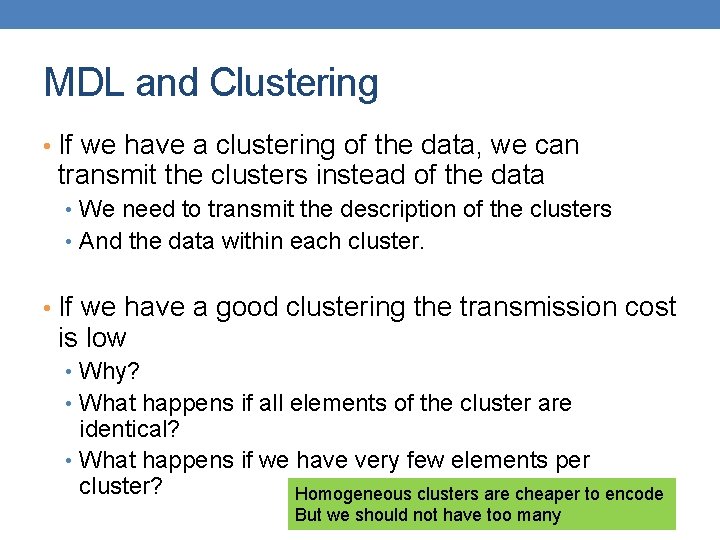

MDL and Clustering
• If we have a clustering of the data, we can transmit the clusters instead of the data
• We need to transmit the description of the clusters
• And the data within each cluster
• If we have a good clustering, the transmission cost is low
• Why?
• What happens if all elements of the cluster are identical?
• What happens if we have very few elements per cluster?
Homogeneous clusters are cheaper to encode, but we should not have too many of them.

Issues with MDL
• What is the right model family?
  • This determines the kind of solutions that we can have
  • E.g., polynomials
  • Clusterings
• What is the encoding cost?
  • Determines the function that we optimize
  • Information theory

INFORMATION THEORY A short introduction

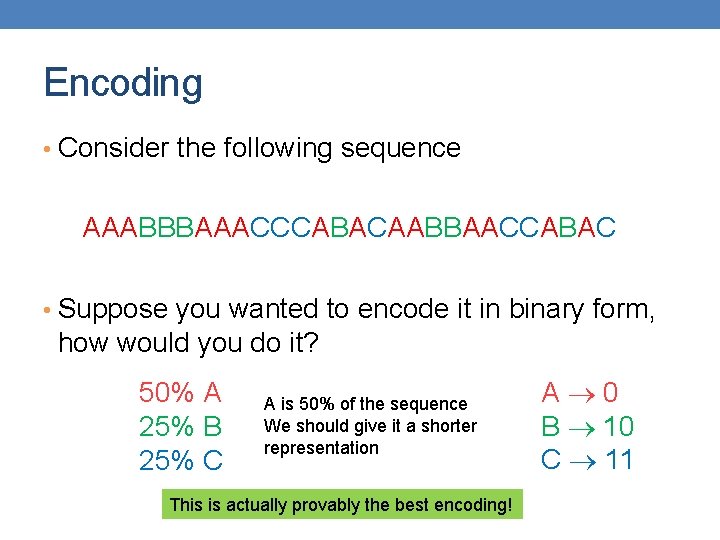

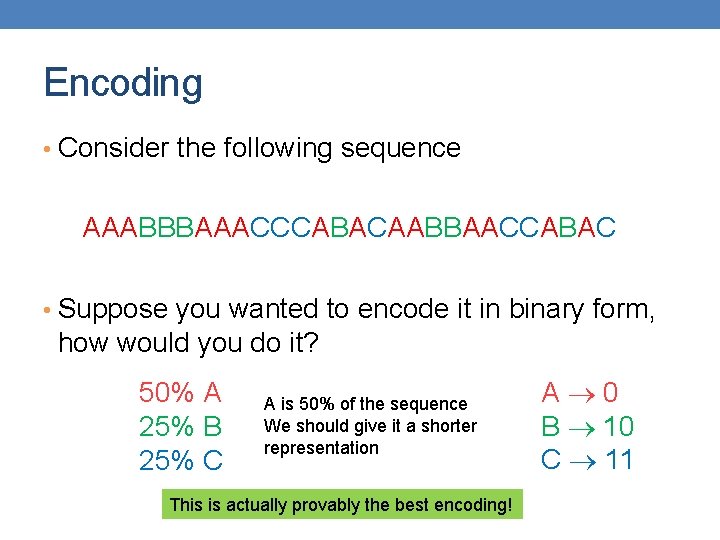

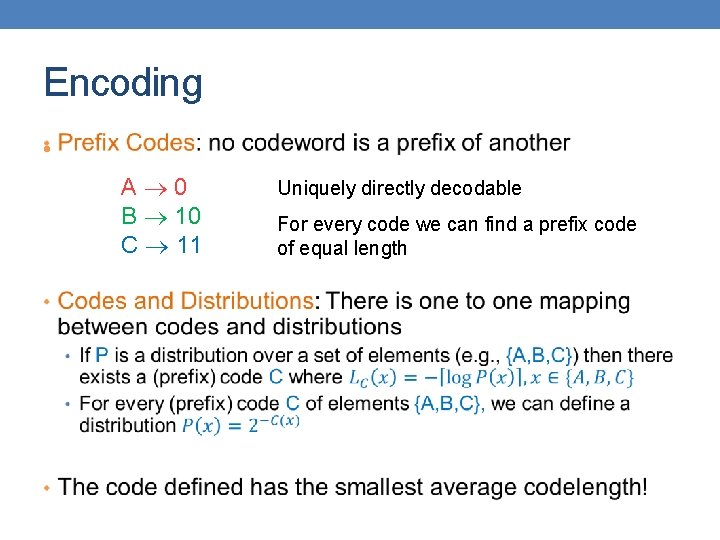

Encoding
• Consider the following sequence: AAABBBAAACCCABACAABBAACCABAC
• Suppose you wanted to encode it in binary form, how would you do it?
• Symbol frequencies: 50% A, 25% B, 25% C
• A is 50% of the sequence, so we should give it a shorter representation
• Code: A → 0, B → 10, C → 11
• This is actually provably the best encoding!

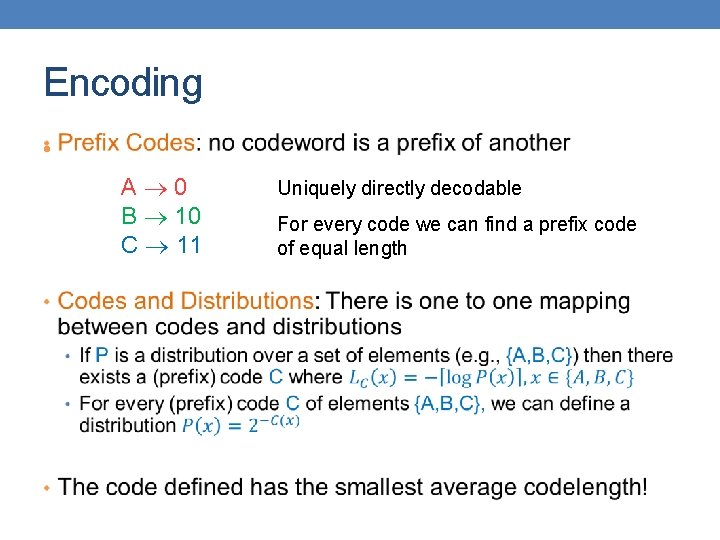

Encoding
• Code: A → 0, B → 10, C → 11
• This is a prefix code: uniquely and directly decodable
• For every uniquely decodable code we can find a prefix code of equal length
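The code A → 0, B → 10, C → 11 can be checked directly on the example sequence. The following is a minimal sketch (the function names `encode` and `decode` are chosen here for illustration): it encodes the sequence, decodes it bit by bit without any look-ahead, and compares the average number of bits per symbol with the Shannon entropy of the symbol frequencies.

```python
import math
from collections import Counter

CODE = {"A": "0", "B": "10", "C": "11"}

def encode(text):
    """Concatenate the codeword of every symbol."""
    return "".join(CODE[symbol] for symbol in text)

def decode(bits):
    """Decode greedily: a prefix code never needs look-ahead."""
    inverse = {word: symbol for symbol, word in CODE.items()}
    decoded, buffer = [], ""
    for bit in bits:
        buffer += bit
        if buffer in inverse:          # a complete codeword has been read
            decoded.append(inverse[buffer])
            buffer = ""
    return "".join(decoded)

sequence = "AAABBBAAACCCABACAABBAACCABAC"
bits = encode(sequence)

counts = Counter(sequence)
n = len(sequence)
entropy = -sum(c / n * math.log2(c / n) for c in counts.values())
bits_per_symbol = len(bits) / n

print(len(bits), bits_per_symbol, entropy)
```

For this sequence the code uses 42 bits, i.e. 1.5 bits per symbol, which equals the entropy of the 50/25/25 distribution; a fixed-length code would need 2 bits per symbol.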

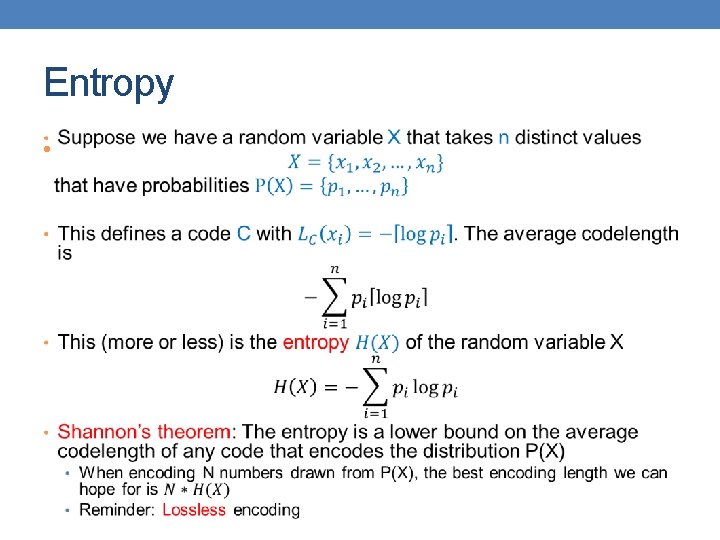

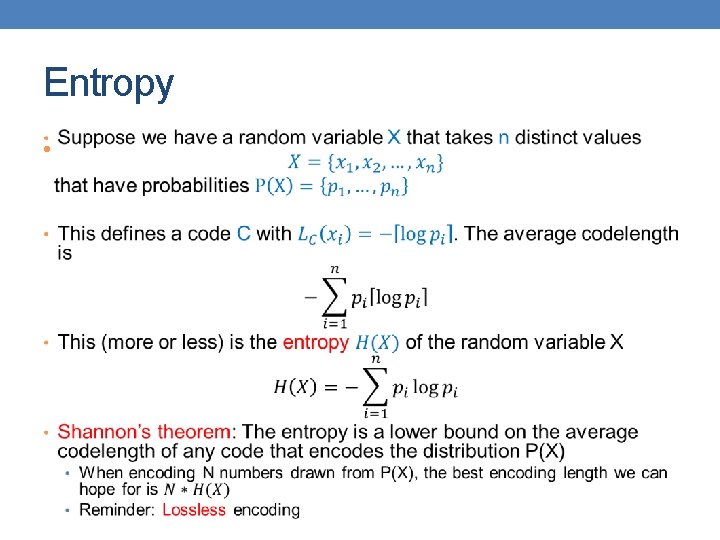

Entropy •

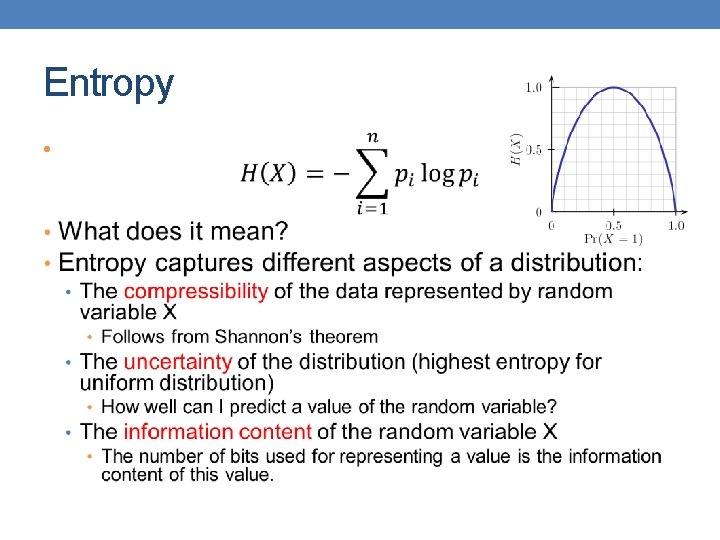

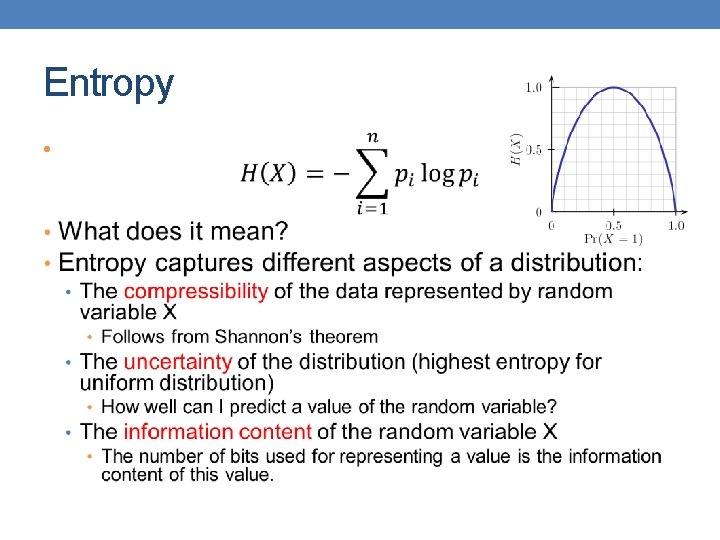

Entropy •

Claude Shannon
• Father of Information Theory
• Envisioned the idea of communication of information with 0/1 bits
• Introduced the word “bit”
• The word entropy was suggested by Von Neumann
  • Similarity to physics, but also “nobody really knows what entropy really is, so in any conversation you will have an advantage”

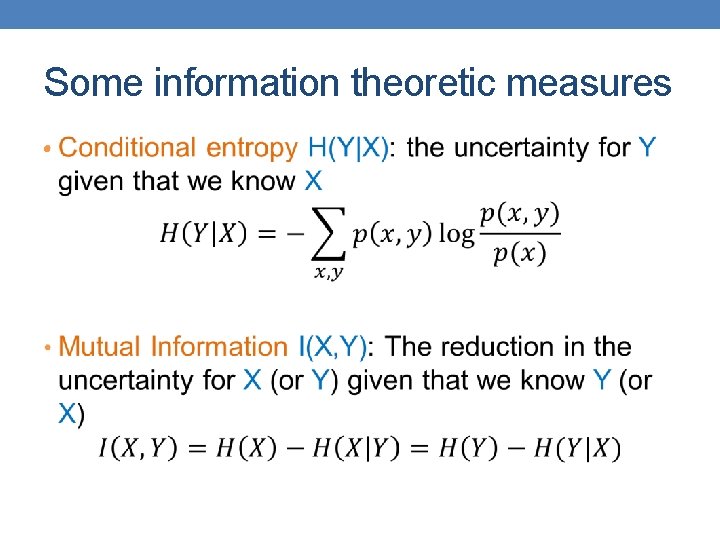

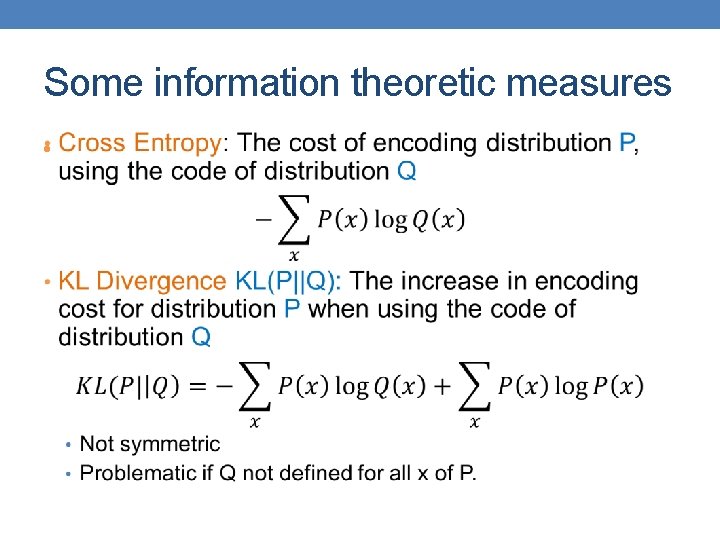

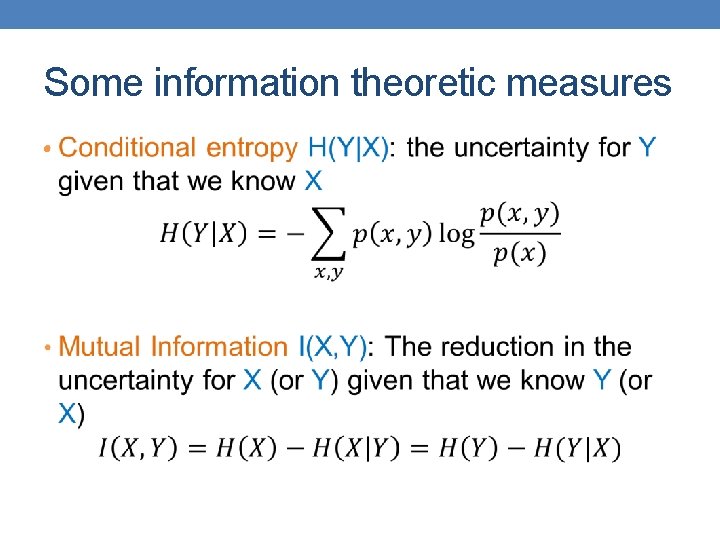

Some information theoretic measures •

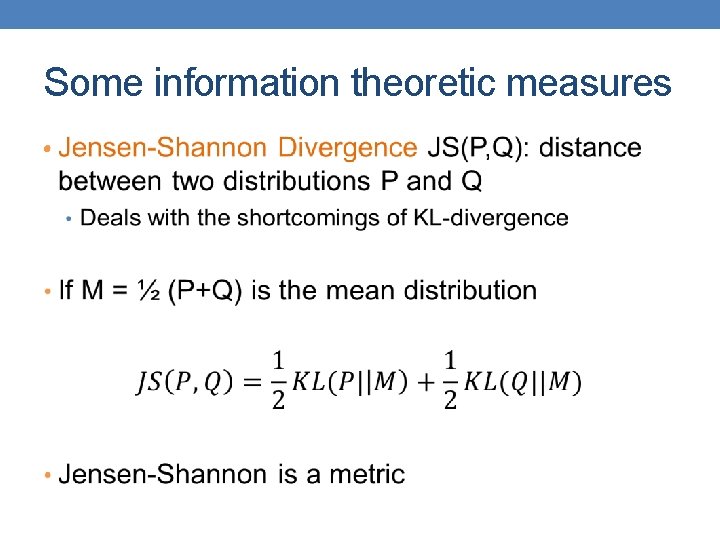

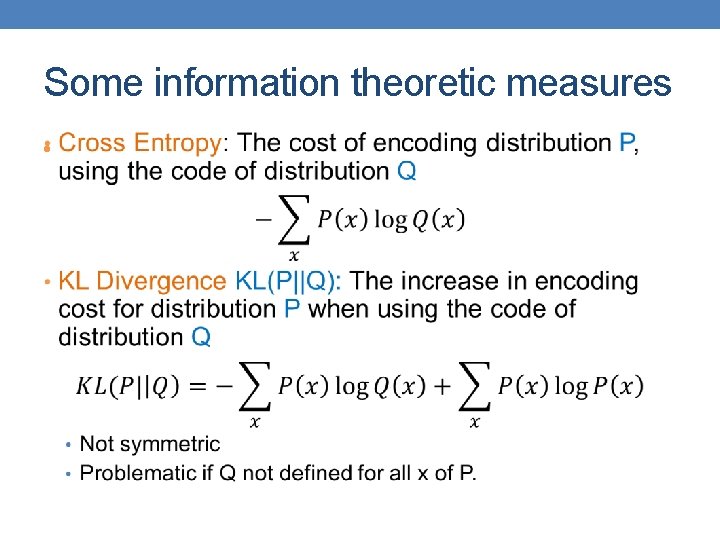

Some information theoretic measures •

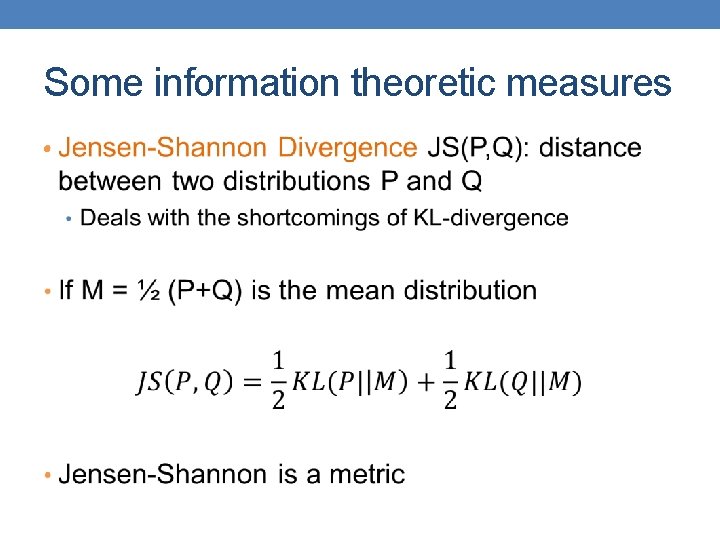

Some information theoretic measures •

USING MDL FOR CO-CLUSTERING (CROSS-ASSOCIATIONS) Thanks to Spiros Papadimitriou.

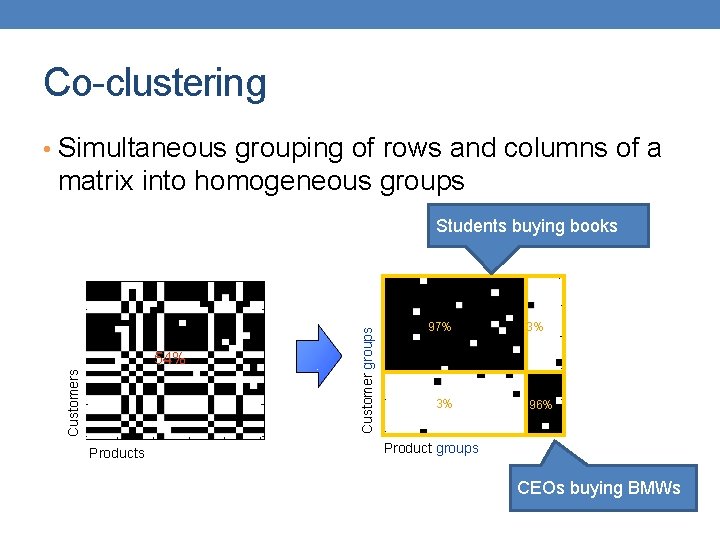

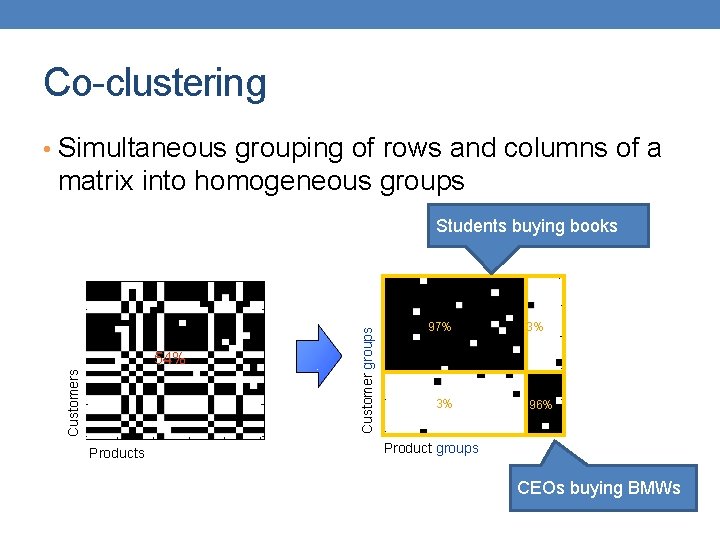

Co-clustering
• Simultaneous grouping of rows and columns of a matrix into homogeneous groups
[Figure: customers × products matrix, reordered into customer groups × product groups; block densities 97%, 3%, 54%, 3%, 96%; annotated blocks: "Students buying books", "CEOs buying BMWs"]

Co-clustering • Step 1: How to define a “good” partitioning? Intuition and formalization • Step 2: How to find it?

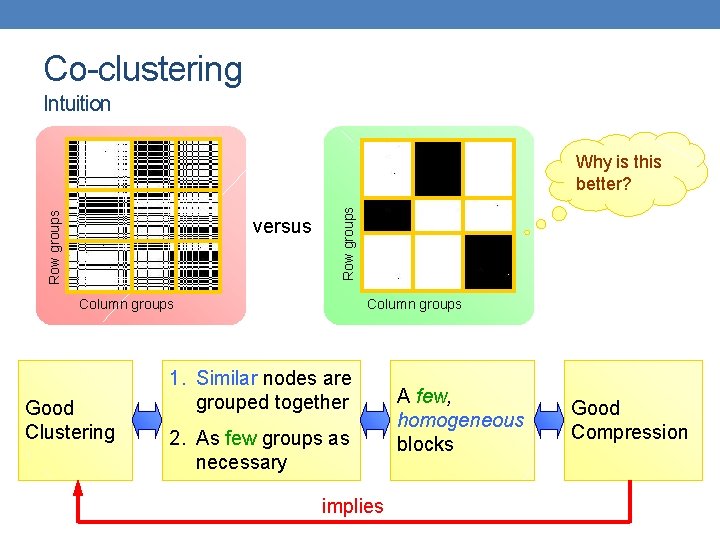

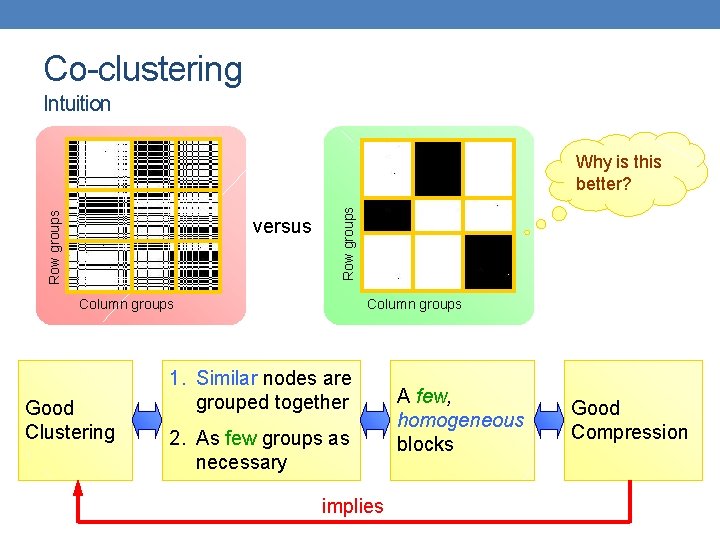

Co-clustering: intuition
[Figure: two groupings of the matrix into row groups and column groups, one versus the other. Why is this better?]
Good clustering:
1. Similar nodes are grouped together
2. As few groups as necessary
Good clustering implies a few, homogeneous blocks, which give good compression.

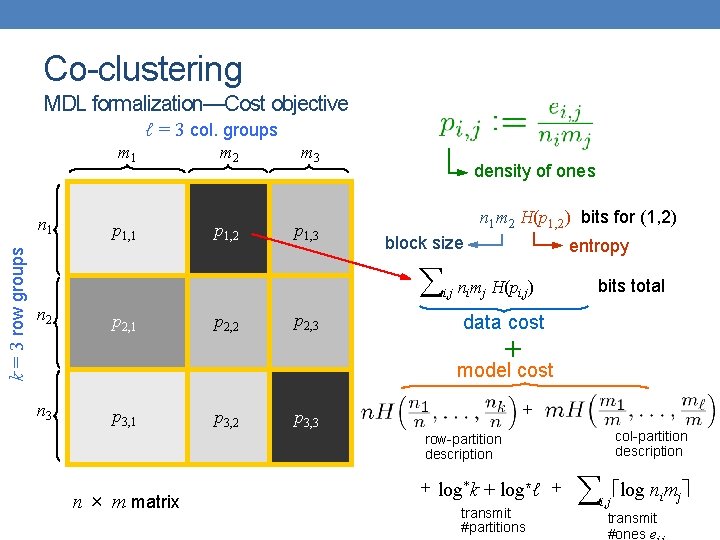

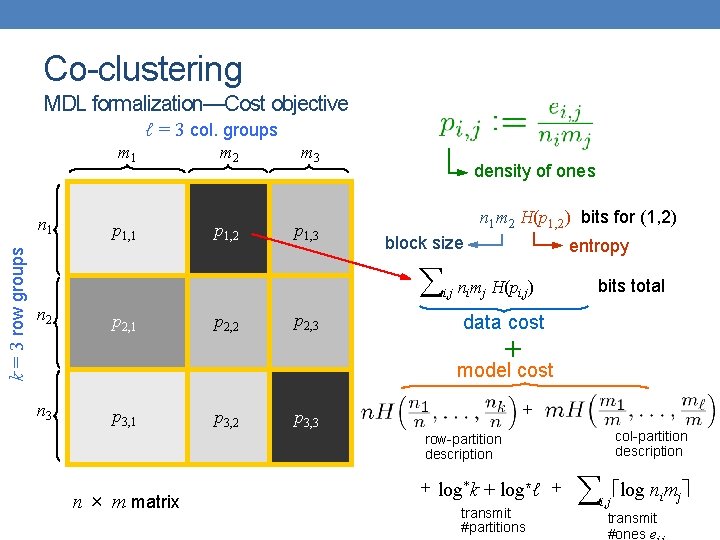

Co-clustering MDL formalization: cost objective
• An n × m matrix with k = 3 row groups (sizes $n_1, n_2, n_3$) and ℓ = 3 col. groups (sizes $m_1, m_2, m_3$)
• $p_{i,j}$: density of ones in block (i, j)
• Data cost: block (1, 2) has size $n_1 m_2$ and takes $n_1 m_2 H(p_{1,2})$ bits; in total, $\sum_{i,j} n_i m_j H(p_{i,j})$ bits
• Model cost: row-partition description + col-partition description + $\log^* k + \log^* \ell$ (transmit #partitions) + $\sum_{i,j} \log(n_i m_j)$ (transmit #ones $e_{i,j}$)
• Total cost = data cost + model cost
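The cost objective can be turned into a small function to see how it rewards homogeneous blocks. This is a sketch under several assumptions that the slide does not spell out: the partition descriptions are charged n · log2(k) + m · log2(ℓ) bits, log* is taken as the sum of the positive iterated logarithms, and the number of ones in a block is charged log2(n_i m_j + 1) bits so that empty counts are representable. The function names are hypothetical.

```python
import math

def binary_entropy(p):
    """H(p) in bits; 0 for a block that is all zeros or all ones."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def log_star(n):
    """Assumed universal integer code length: log2 n + log2 log2 n + ..."""
    total, x = 0.0, float(n)
    while True:
        x = math.log2(x)
        if x <= 0:
            return total
        total += x

def total_cost(matrix, row_group, col_group, k, l):
    """Data cost + model cost (bits) of a co-clustering of a 0/1 matrix."""
    n, m = len(matrix), len(matrix[0])
    row_sizes = [row_group.count(i) for i in range(k)]
    col_sizes = [col_group.count(j) for j in range(l)]
    ones = [[0] * l for _ in range(k)]
    for r in range(n):
        for c in range(m):
            ones[row_group[r]][col_group[c]] += matrix[r][c]

    model_cost = log_star(k) + log_star(l)               # number of groups
    model_cost += n * math.log2(k) + m * math.log2(l)    # assumed partition cost
    data_cost = 0.0
    for i in range(k):
        for j in range(l):
            size = row_sizes[i] * col_sizes[j]
            if size == 0:
                continue
            model_cost += math.log2(size + 1)            # number of ones in block
            data_cost += size * binary_entropy(ones[i][j] / size)
    return data_cost + model_cost

# Hypothetical 8 x 8 matrix with two dense diagonal blocks.
matrix = [[1 if (r < 4) == (c < 4) else 0 for c in range(8)] for r in range(8)]
halves = [0, 0, 0, 0, 1, 1, 1, 1]
blocked = total_cost(matrix, halves, halves, 2, 2)
single = total_cost(matrix, [0] * 8, [0] * 8, 1, 1)
print(blocked, single)
```

With the two-group partition every block is pure, so the data cost drops to zero and only the model cost remains; with a single group the half-full block costs one bit per cell.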

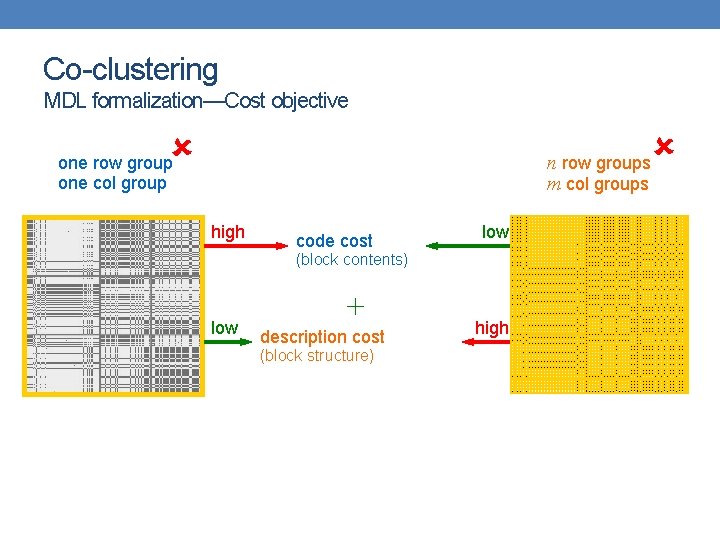

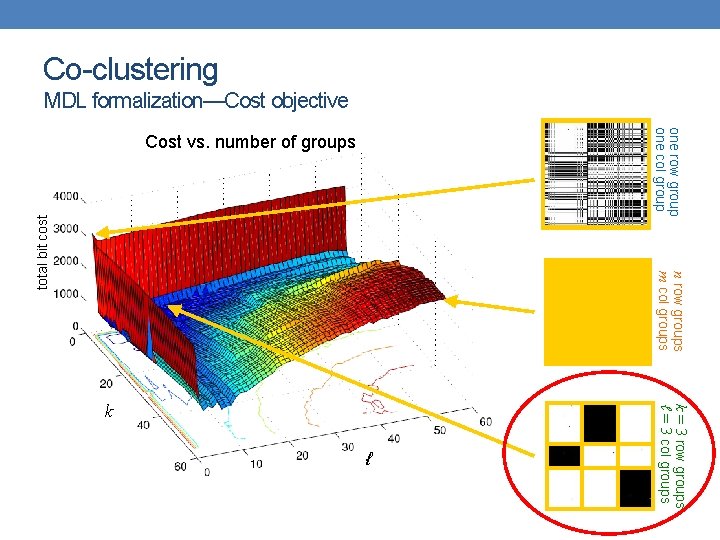

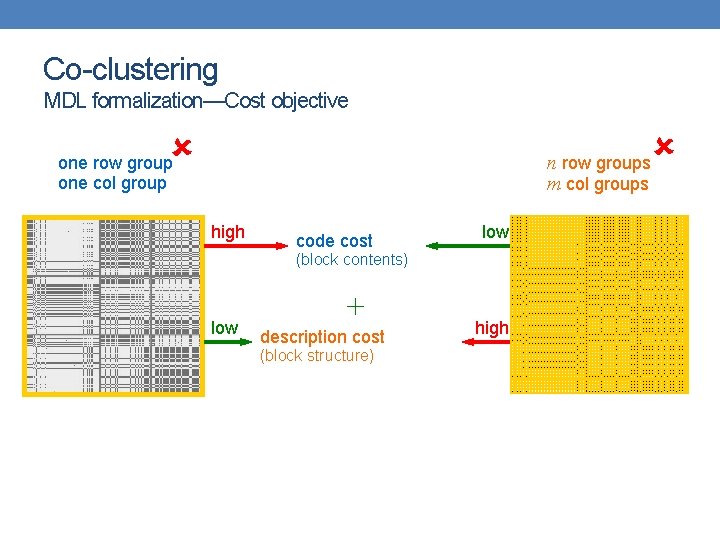

Co-clustering MDL formalization: cost objective
• One row group, one col group: high code cost (block contents) + low description cost (block structure)
• n row groups, m col groups: low code cost (block contents) + high description cost (block structure)

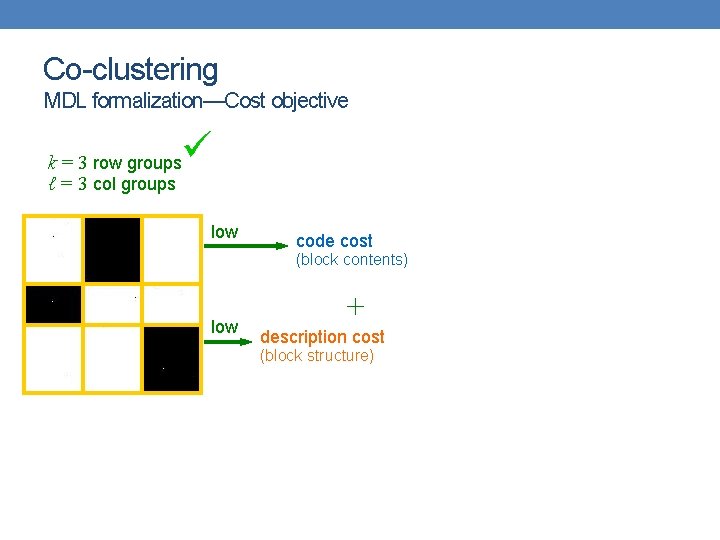

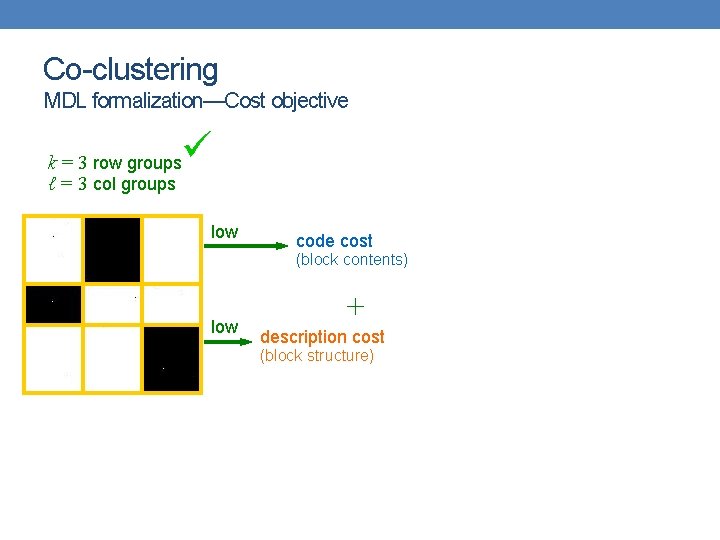

Co-clustering MDL formalization: cost objective
• k = 3 row groups, ℓ = 3 col groups: low code cost (block contents) + low description cost (block structure)

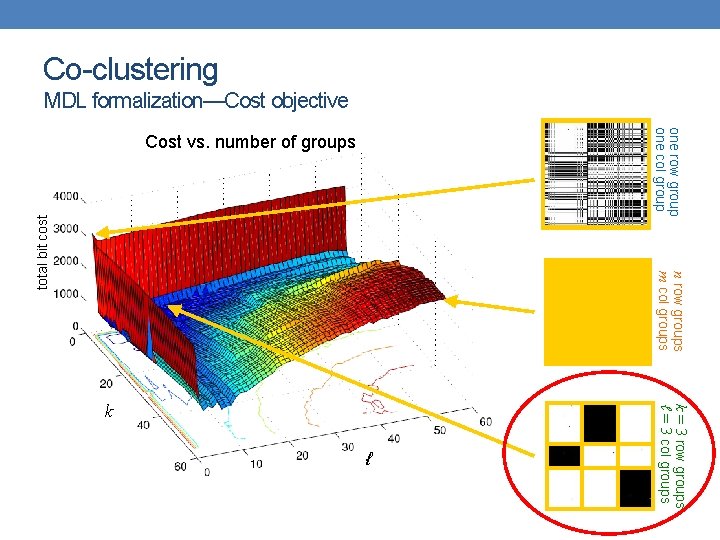

Co-clustering MDL formalization: cost objective
Cost vs. number of groups
[Figure: total bit cost as a function of k and ℓ, ranging from one row group and one col group to n row groups and m col groups; marked point: k = 3 row groups, ℓ = 3 col groups]

Co-clustering • Step 1: How to define a “good” partitioning? Intuition and formalization • Step 2: How to find it?

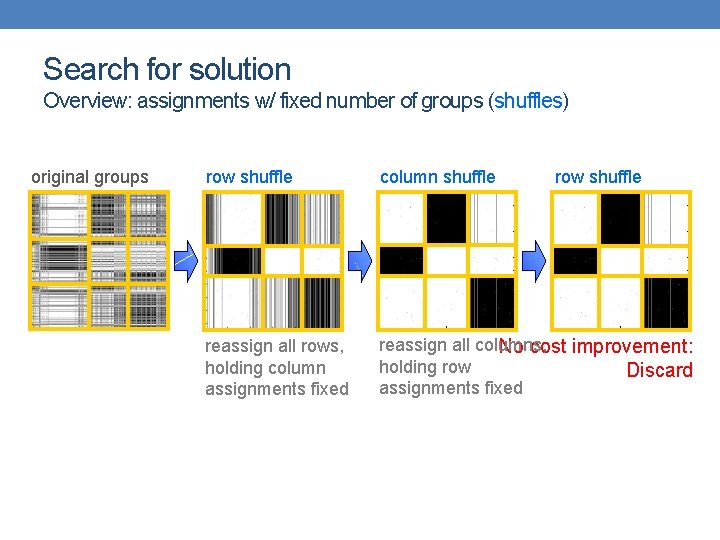

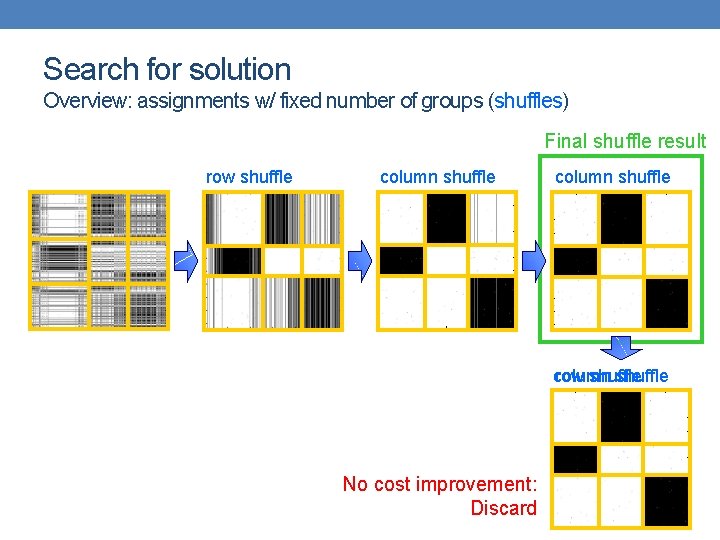

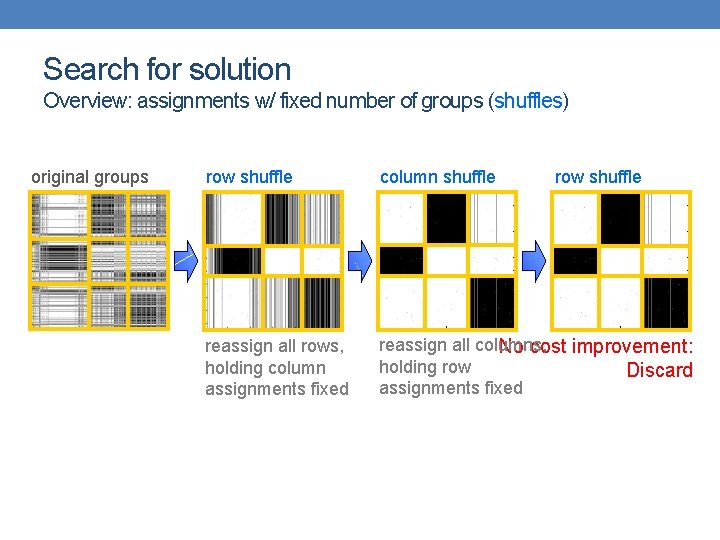

Search for solution. Overview: assignments w/ fixed number of groups (shuffles)
• Sequence: original groups → row shuffle → column shuffle → row shuffle
• Row shuffle: reassign all rows, holding column assignments fixed
• Column shuffle: reassign all columns, holding row assignments fixed
• No cost improvement: discard

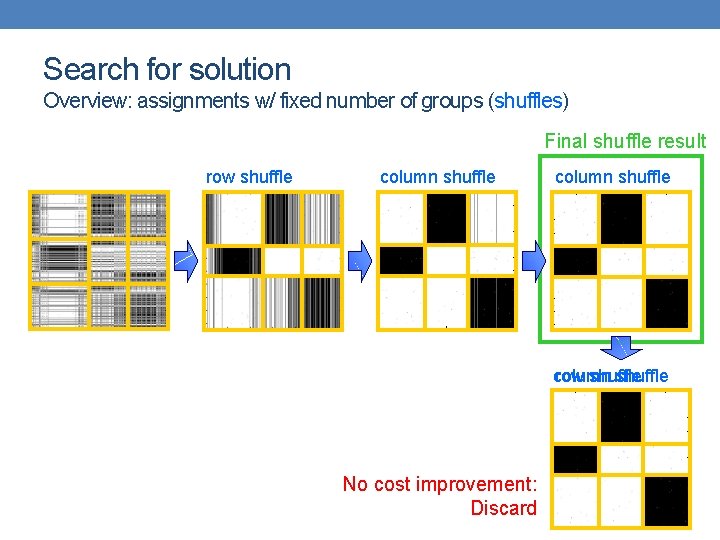

Search for solution. Overview: assignments w/ fixed number of groups (shuffles)
• Sequence: row shuffle → column shuffle → row shuffle
• No cost improvement: discard
• Final shuffle result

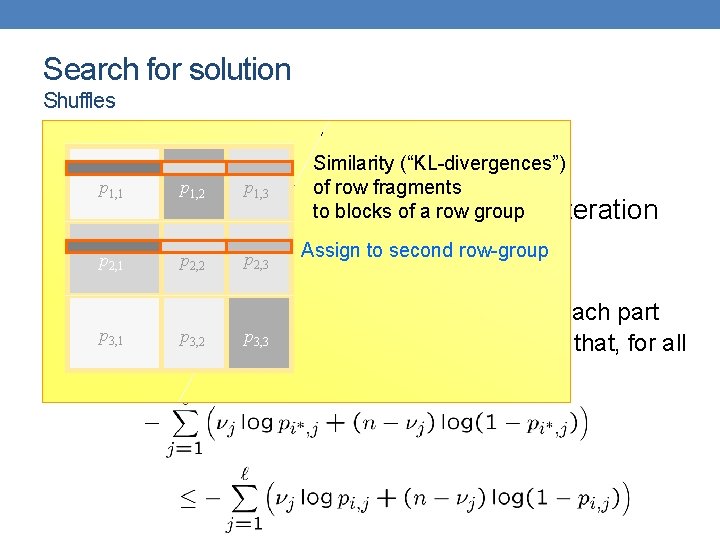

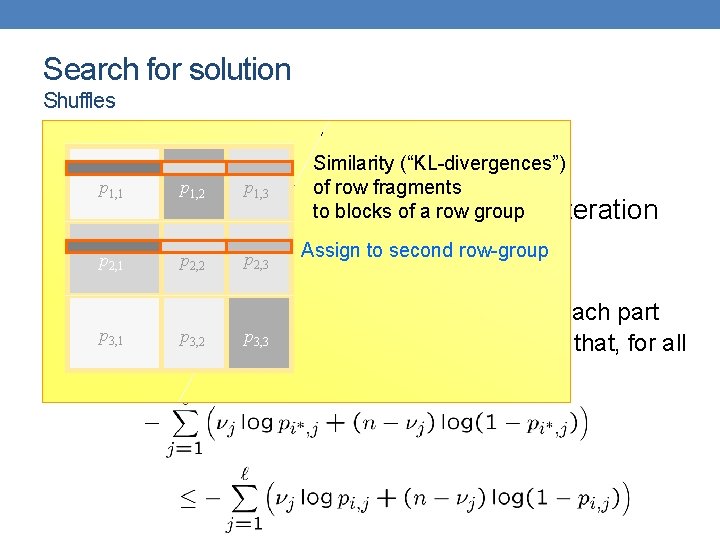

Search for solution: shuffles
• Take the row and col. partitions at the I-th iteration
• Fix the column partition and, for every row x:
  • Splice x into ℓ parts, one for each column group
  • Let $x_j$, for j = 1, …, ℓ, be the number of ones in each part
  • Assign row x to the row group $i^*$ (its group at iteration I+1) such that, for all i = 1, …, k, the fragments of x are at least as similar to the blocks of row group $i^*$ as to the blocks of row group i
• Similarity: “KL-divergences” of row fragments to the blocks of a row group
[Figure: 3 × 3 blocks with densities $p_{1,1}, \ldots, p_{3,3}$; the example row is assigned to the second row group]
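One row shuffle can be sketched as follows. The similarity measure here is an assumption: each row is charged the number of bits needed to encode its fragments with the block densities of a candidate row group, $\sum_j [x_j \log(1/p_{i,j}) + (m_j - x_j) \log(1/(1 - p_{i,j}))]$, and the row goes to the cheapest group. Densities are clamped away from 0 and 1 to keep the logarithms finite. The function name `row_shuffle` and the example matrix are hypothetical.

```python
import math

def row_shuffle(matrix, row_group, col_group, k, l, eps=1e-9):
    """Reassign every row to its cheapest row group, columns held fixed."""
    n, m = len(matrix), len(matrix[0])
    row_sizes = [row_group.count(i) for i in range(k)]
    col_sizes = [col_group.count(j) for j in range(l)]

    # Block densities p[i][j] under the current assignment.
    ones = [[0] * l for _ in range(k)]
    for r in range(n):
        for c in range(m):
            ones[row_group[r]][col_group[c]] += matrix[r][c]
    p = [[min(max(ones[i][j] / max(row_sizes[i] * col_sizes[j], 1), eps), 1 - eps)
          for j in range(l)] for i in range(k)]

    new_groups = []
    for r in range(n):
        # Splice the row into l fragments and count the ones in each.
        x = [0] * l
        for c in range(m):
            x[col_group[c]] += matrix[r][c]

        def bits(i):
            # Cost of encoding this row with the densities of row group i.
            return sum(-x[j] * math.log2(p[i][j])
                       - (col_sizes[j] - x[j]) * math.log2(1 - p[i][j])
                       for j in range(l))

        new_groups.append(min(range(k), key=bits))
    return new_groups

# Hypothetical example: two diagonal blocks, rows 3 and 7 start misassigned.
matrix = [[1 if (r < 4) == (c < 4) else 0 for c in range(8)] for r in range(8)]
col_group = [0, 0, 0, 0, 1, 1, 1, 1]
start = [0, 0, 0, 1, 1, 1, 1, 0]
shuffled = row_shuffle(matrix, start, col_group, 2, 2)
print(shuffled)
```

A column shuffle is the same step applied to the transposed matrix with the roles of the two partitions swapped.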

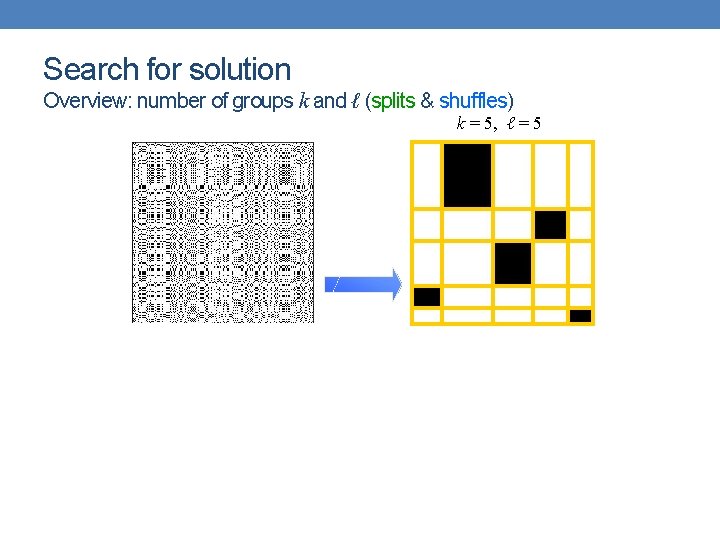

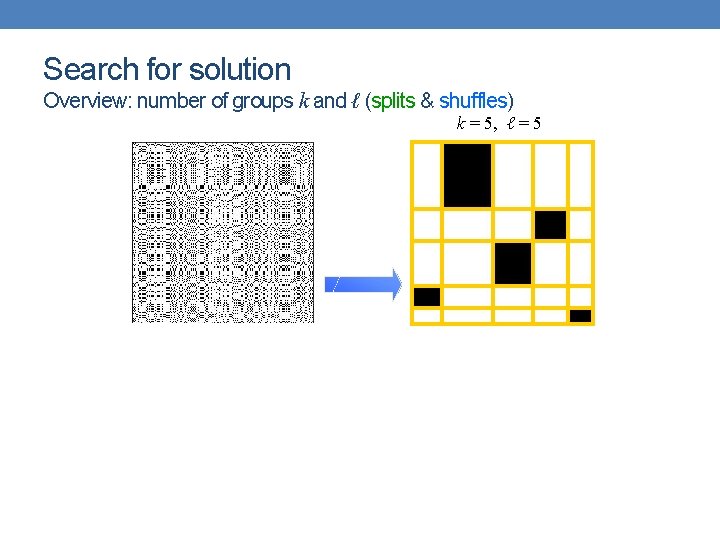

Search for solution Overview: number of groups k and ℓ (splits & shuffles) k = 5, ℓ = 5

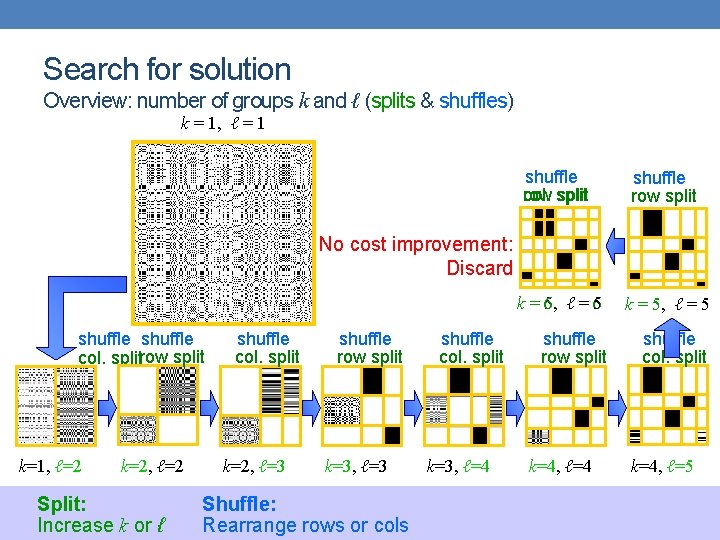

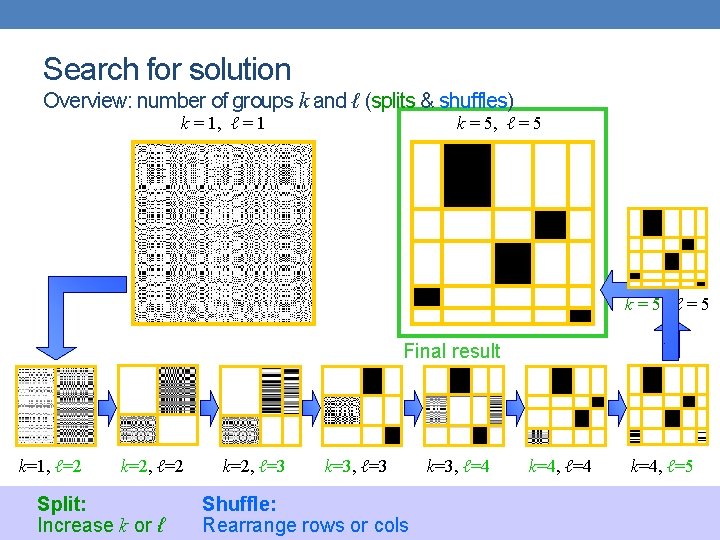

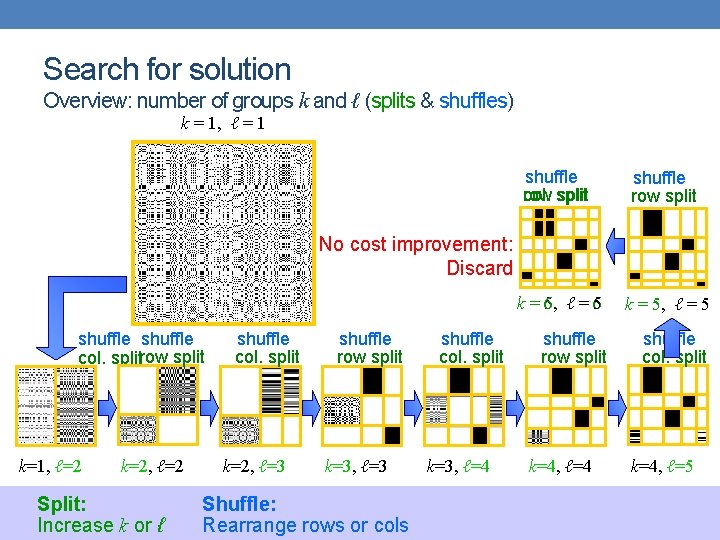

Search for solution. Overview: number of groups k and ℓ (splits & shuffles)
• Split: increase k or ℓ
• Shuffle: rearrange rows or cols
• Steps alternate a col. split and a row split, each followed by a shuffle
• Sequence: k = 1, ℓ = 1 → k = 1, ℓ = 2 → k = 2, ℓ = 2 → k = 2, ℓ = 3 → k = 3, ℓ = 3 → k = 3, ℓ = 4 → k = 4, ℓ = 5 → k = 5, ℓ = 5
• Further splits (k = 5, ℓ = 6 and k = 6, ℓ = 5) give no cost improvement: discard

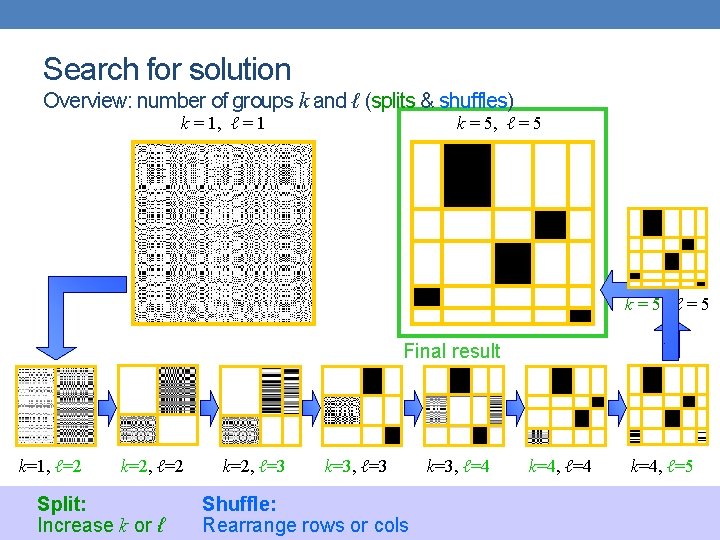

Search for solution. Overview: number of groups k and ℓ (splits & shuffles)
• Split: increase k or ℓ
• Shuffle: rearrange rows or cols
• Sequence: k = 1, ℓ = 1 → k = 1, ℓ = 2 → k = 2, ℓ = 2 → k = 2, ℓ = 3 → k = 3, ℓ = 3 → k = 3, ℓ = 4 → k = 4, ℓ = 5 → k = 5, ℓ = 5
• Final result: k = 5, ℓ = 5

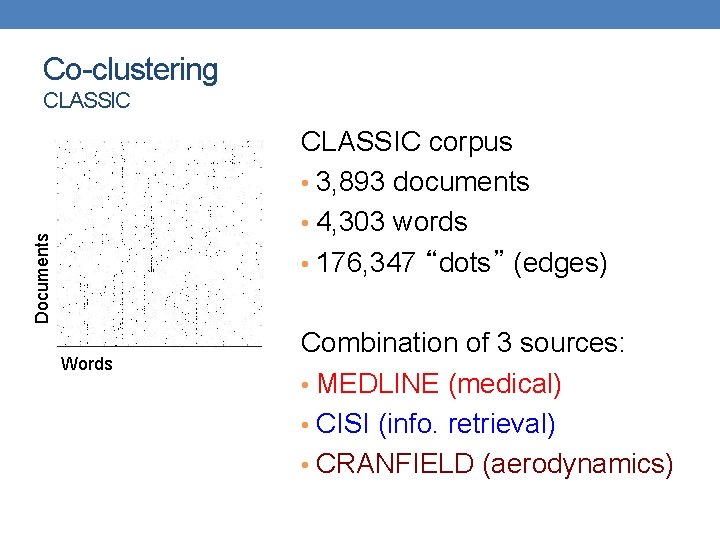

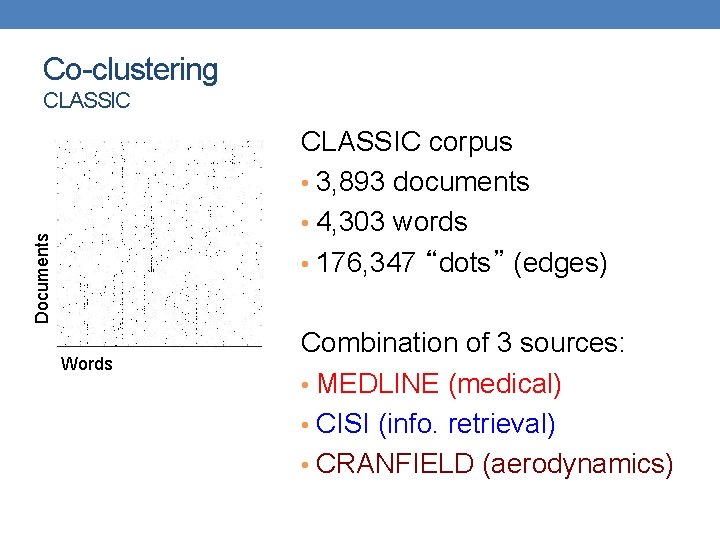

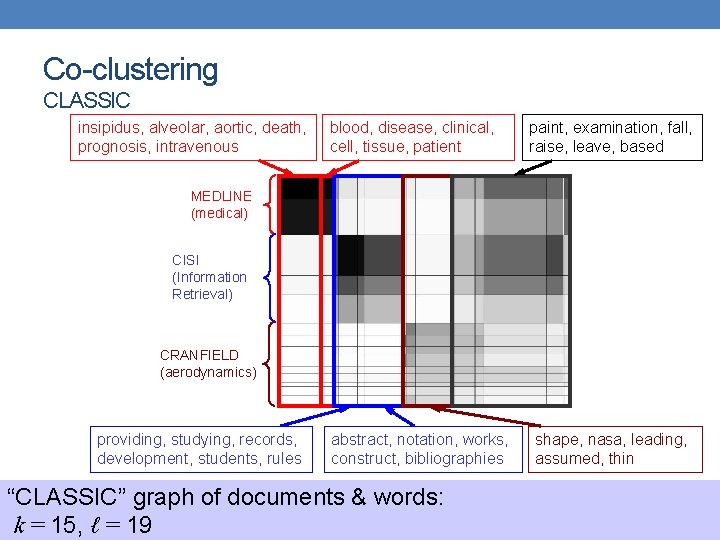

Co-clustering CLASSIC
CLASSIC corpus (documents × words)
• 3,893 documents
• 4,303 words
• 176,347 “dots” (edges)
Combination of 3 sources:
• MEDLINE (medical)
• CISI (info. retrieval)
• CRANFIELD (aerodynamics)

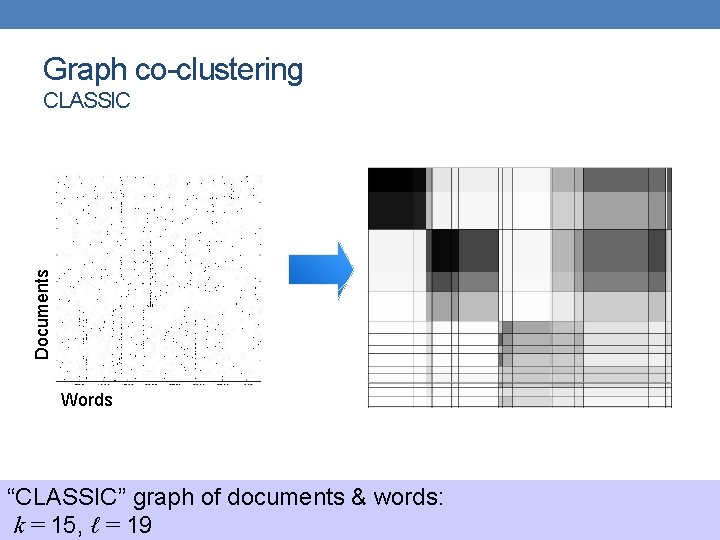

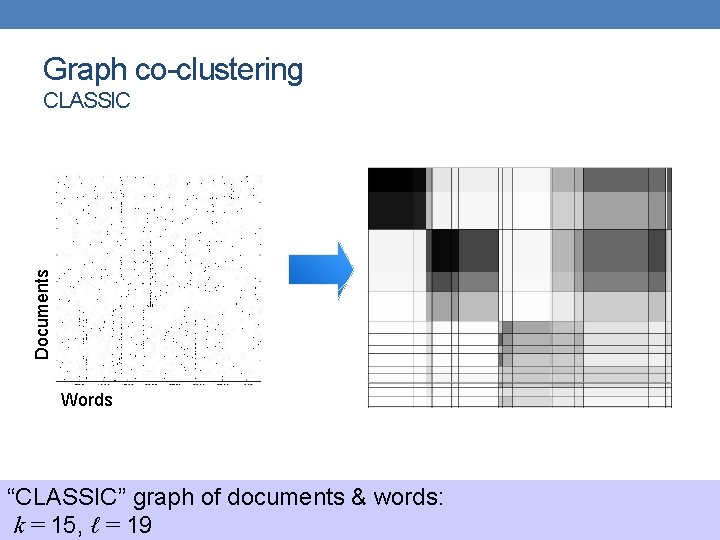

Graph co-clustering CLASSIC
[Figure: “CLASSIC” graph of documents & words (axes: documents, words): k = 15, ℓ = 19]

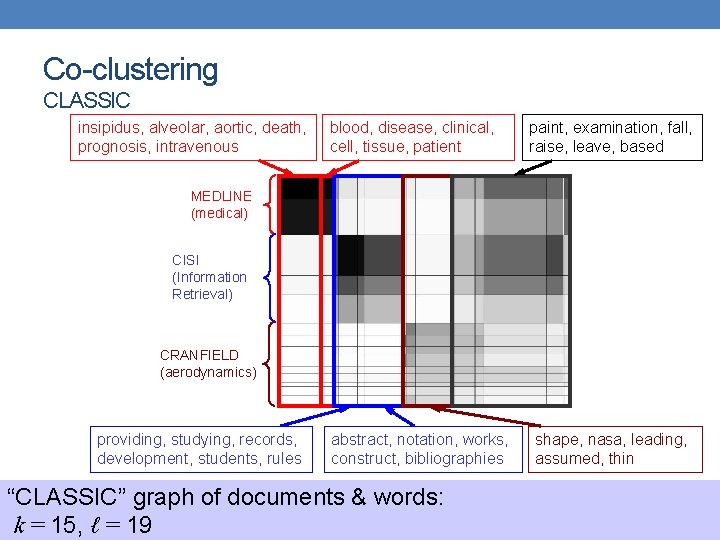

Co-clustering CLASSIC
“CLASSIC” graph of documents & words: k = 15, ℓ = 19
Document sources: MEDLINE (medical), CISI (Information Retrieval), CRANFIELD (aerodynamics)
Sample words from word clusters:
• insipidus, alveolar, aortic, death, prognosis, intravenous
• blood, disease, clinical, cell, tissue, patient
• paint, examination, fall, raise, leave, based
• providing, studying, records, development, students, rules
• abstract, notation, works, construct, bibliographies
• shape, nasa, leading, assumed, thin

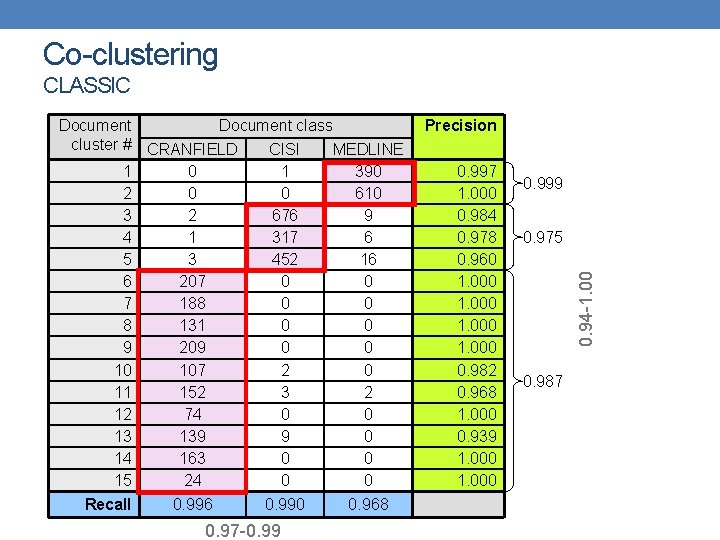

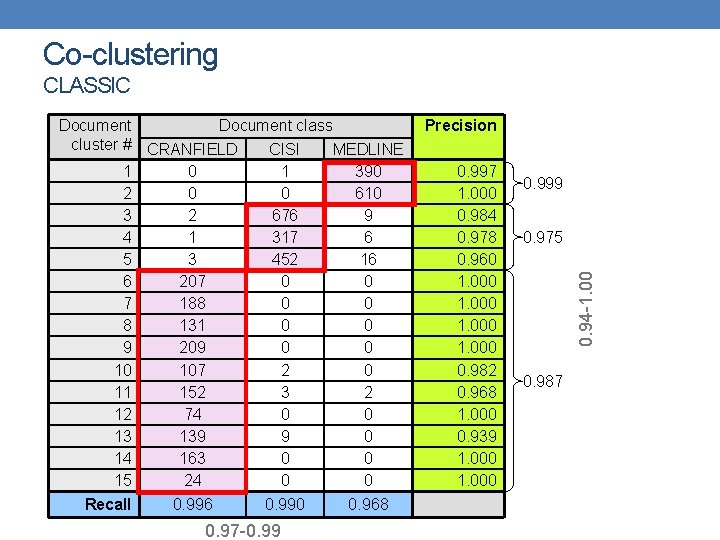

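Co-clustering CLASSIC

Documents per cluster, by document class:

| Cluster # | CRANFIELD | CISI | MEDLINE | Precision |
|---|---|---|---|---|
| 1 | 0 | 1 | 390 | 0.997 |
| 2 | 0 | 0 | 610 | 1.000 |
| 3 | 2 | 676 | 9 | 0.984 |
| 4 | 1 | 317 | 6 | 0.978 |
| 5 | 3 | 452 | 16 | 0.960 |
| 6 | 207 | 0 | 0 | 1.000 |
| 7 | 188 | 0 | 0 | 1.000 |
| 8 | 131 | 0 | 0 | 1.000 |
| 9 | 209 | 0 | 0 | 1.000 |
| 10 | 107 | 2 | 0 | 0.982 |
| 11 | 152 | 3 | 2 | 0.968 |
| 12 | 74 | 0 | 0 | 1.000 |
| 13 | 139 | 9 | 0 | 0.939 |
| 14 | 163 | 0 | 0 | 1.000 |
| 15 | 24 | 0 | 0 | 1.000 |
| Recall | 0.996 | 0.990 | 0.968 | |

Precision by class (clusters grouped by dominant class): MEDLINE 0.999, CISI 0.975, CRANFIELD 0.987.
Overall: precision 0.94-1.00, recall 0.97-0.99.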
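The per-cluster precision and per-class recall figures can be recomputed from the document counts. The sketch below assumes the usual convention, which the slide does not state explicitly: each cluster is labelled with its majority class, precision is the share of the cluster belonging to that class, and recall is the share of a class that lands in clusters labelled with it.

```python
CLASSES = ("CRANFIELD", "CISI", "MEDLINE")

# Document counts for clusters 1..15, in the order of CLASSES.
COUNTS = [
    (0, 1, 390), (0, 0, 610), (2, 676, 9), (1, 317, 6), (3, 452, 16),
    (207, 0, 0), (188, 0, 0), (131, 0, 0), (209, 0, 0), (107, 2, 0),
    (152, 3, 2), (74, 0, 0), (139, 9, 0), (163, 0, 0), (24, 0, 0),
]

def cluster_precision(row):
    """Share of the cluster that belongs to its majority class."""
    return max(row) / sum(row)

def class_recall(counts, class_index):
    """Share of a class that falls in clusters where it is the majority."""
    total = sum(row[class_index] for row in counts)
    captured = sum(row[class_index] for row in counts
                   if row[class_index] == max(row))
    return captured / total

precision = [round(cluster_precision(row), 3) for row in COUNTS]
recall = {name: round(class_recall(COUNTS, i), 3)
          for i, name in enumerate(CLASSES)}
total_documents = sum(sum(row) for row in COUNTS)

print(precision)
print(recall)
print(total_documents)
```

The counts add up to the 3,893 documents of the CLASSIC corpus, which is a useful sanity check on the table.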