Data Mining: Association Rule Mining
CSE 880: Database Systems

Data, data everywhere
- Walmart records ~20 million transactions per day
- Google indexed ~3 billion Web pages
- Yahoo collects ~10 GB of Web traffic data per hour
  - The U of M Computer Science department collected ~80 MB of Web traffic on April 28, 2003
- NASA Earth Observing Satellites (EOS) produce over 1 PB of Earth Science data per year
- NYSE trading volume: 1,443,627,000 (Oct 31, 2003)
- Scientific simulations can produce terabytes of data per hour

Data Mining - Motivation
- There is often information “hidden” in the data that is not readily evident
- Human analysts may take weeks to discover useful information
- Much of the data is never analyzed at all

Figure: The Data Gap (total new disk (TB) since 1995 vs. number of analysts). From: R. Grossman, C. Kamath, V. Kumar, “Data Mining for Scientific and Engineering Applications”

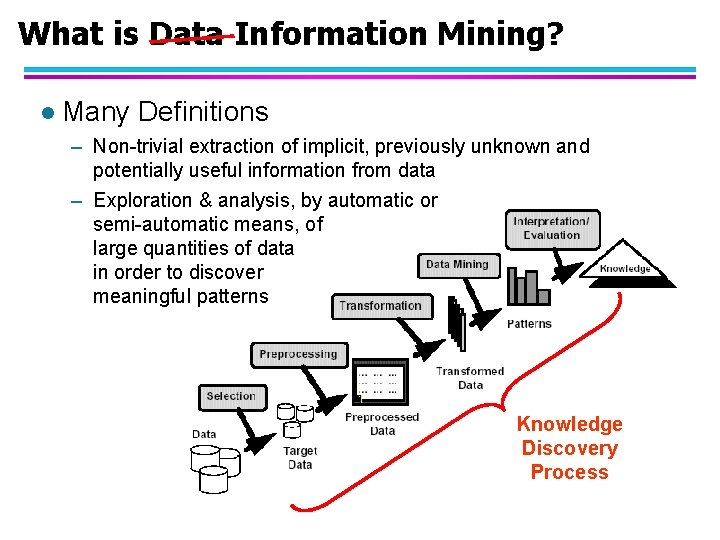

What is Data Mining?
- Many definitions
  - Non-trivial extraction of implicit, previously unknown and potentially useful information from data
  - Exploration & analysis, by automatic or semi-automatic means, of large quantities of data in order to discover meaningful patterns

Figure: Knowledge Discovery Process

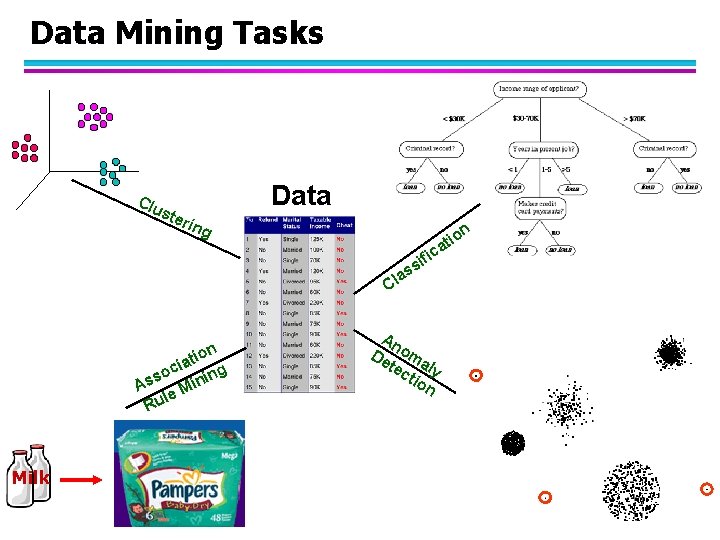

Data Mining Tasks
- Clustering
- Classification
- Association Rule Mining
- Anomaly Detection

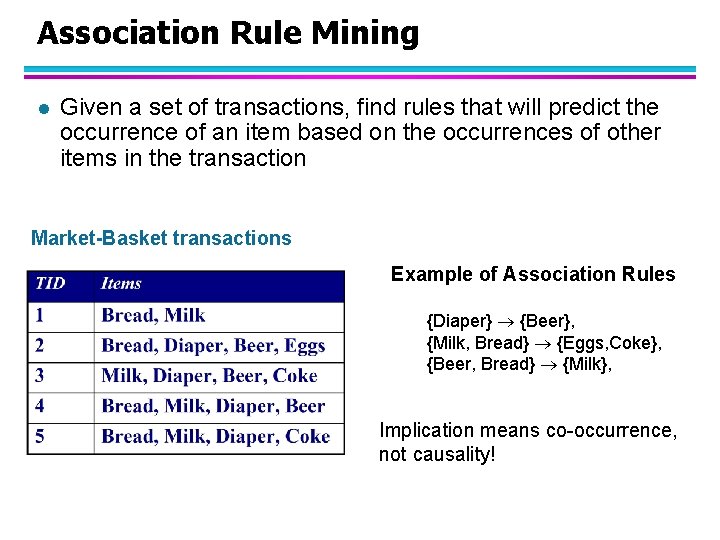

Association Rule Mining
- Given a set of transactions, find rules that will predict the occurrence of an item based on the occurrences of other items in the transaction

Figure: Market-basket transactions

Example of association rules:
- {Diaper} → {Beer}
- {Milk, Bread} → {Eggs, Coke}
- {Beer, Bread} → {Milk}

Implication means co-occurrence, not causality!

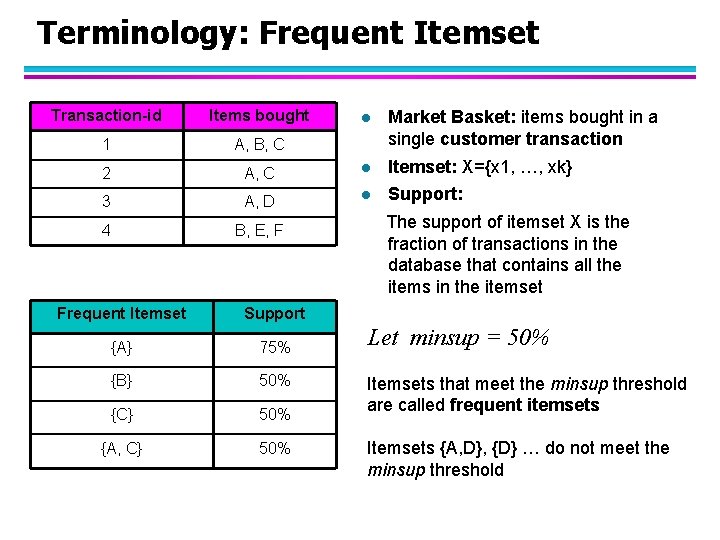

Terminology: Frequent Itemset
- Market basket: items bought in a single customer transaction
- Itemset: X = {x_1, …, x_k}
- Support: the support of itemset X is the fraction of transactions in the database that contain all the items in the itemset

| Transaction-id | Items bought |
|---|---|
| 1 | A, B, C |
| 2 | A, C |
| 3 | A, D |
| 4 | B, E, F |

Let minsup = 50%
- Itemsets that meet the minsup threshold are called frequent itemsets
- Itemsets {A, D}, {D}, … do not meet the minsup threshold

| Frequent itemset | Support |
|---|---|
| {A} | 75% |
| {B} | 50% |
| {C} | 50% |
| {A, C} | 50% |

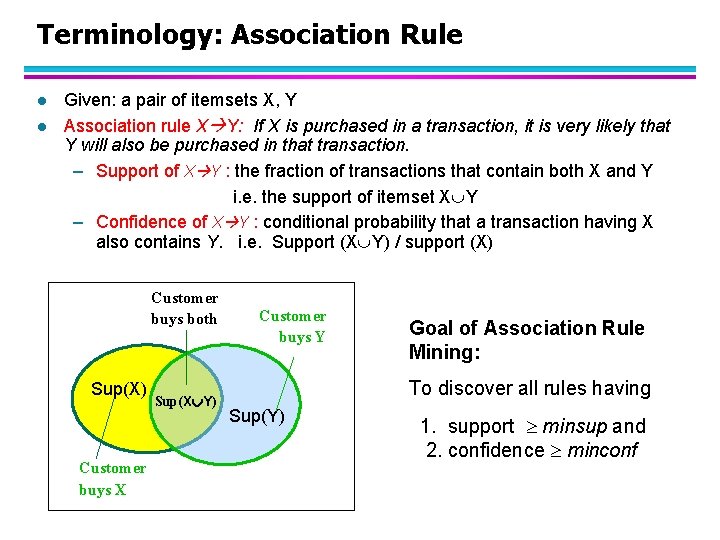
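The support definition can be checked directly against the slide's four transactions. The following is a minimal sketch; the function name `support` and the list-of-sets representation are illustrative choices, not something the slides prescribe.

```python
# Transactions from the slide's example table (one set of items per transaction).
transactions = [
    {"A", "B", "C"},
    {"A", "C"},
    {"A", "D"},
    {"B", "E", "F"},
]

def support(itemset, transactions):
    """Fraction of transactions that contain every item in `itemset`."""
    itemset = set(itemset)
    hits = sum(1 for t in transactions if itemset <= t)
    return hits / len(transactions)

minsup = 0.5
for candidate in [{"A"}, {"B"}, {"C"}, {"A", "C"}, {"A", "D"}, {"D"}]:
    s = support(candidate, transactions)
    label = "frequent" if s >= minsup else "infrequent"
    print(sorted(candidate), f"{s:.0%}", label)
```

With minsup = 50%, {A}, {B}, {C} and {A, C} pass the threshold while {A, D} and {D} do not, matching the table above.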

Terminology: Association Rule
- Given: a pair of itemsets X, Y
- Association rule X → Y: if X is purchased in a transaction, it is very likely that Y will also be purchased in that transaction
  - Support of X → Y: the fraction of transactions that contain both X and Y, i.e. the support of the itemset X ∪ Y
  - Confidence of X → Y: the conditional probability that a transaction having X also contains Y, i.e. support(X ∪ Y) / support(X)
- Goal of association rule mining: to discover all rules having
  1. support ≥ minsup, and
  2. confidence ≥ minconf

Figure: Venn diagram of "Customer buys X" (Sup(X)), "Customer buys Y" (Sup(Y)) and "Customer buys both" (Sup(X ∪ Y))

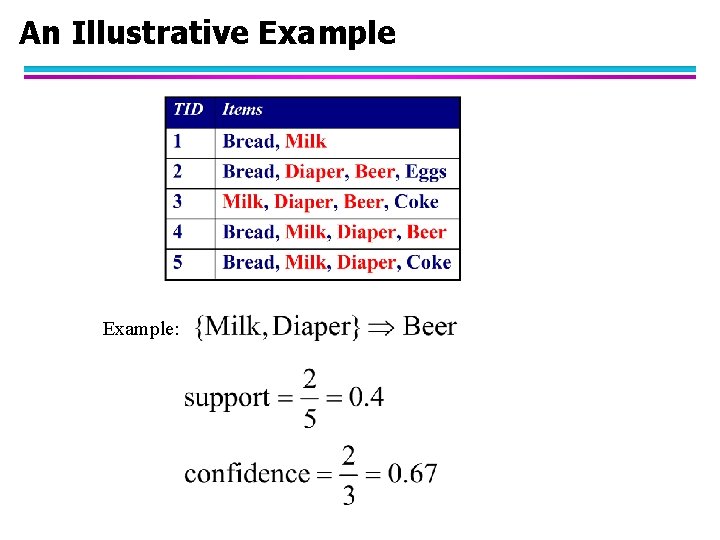
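As a small worked example of the two measures, the sketch below evaluates the rules A → C and C → A on the four transactions from the frequent-itemset slide. The helper names `support` and `rule_metrics` are hypothetical.

```python
# Same four transactions as in the frequent-itemset example.
transactions = [
    {"A", "B", "C"},
    {"A", "C"},
    {"A", "D"},
    {"B", "E", "F"},
]

def support(itemset):
    """Fraction of transactions containing all items of `itemset`."""
    return sum(1 for t in transactions if itemset <= t) / len(transactions)

def rule_metrics(lhs, rhs):
    """Return (support, confidence) of the rule lhs -> rhs."""
    s_lhs = support(lhs)
    if s_lhs == 0:
        raise ValueError("antecedent never occurs; confidence is undefined")
    s_rule = support(lhs | rhs)      # support of the itemset lhs ∪ rhs
    return s_rule, s_rule / s_lhs    # confidence = sup(lhs ∪ rhs) / sup(lhs)

print(rule_metrics({"A"}, {"C"}))  # support 0.5, confidence 0.5 / 0.75
print(rule_metrics({"C"}, {"A"}))  # support 0.5, confidence 0.5 / 0.5
```

Both rules share the support of {A, C}, but their confidences differ because the antecedents have different supports.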

An Illustrative Example:

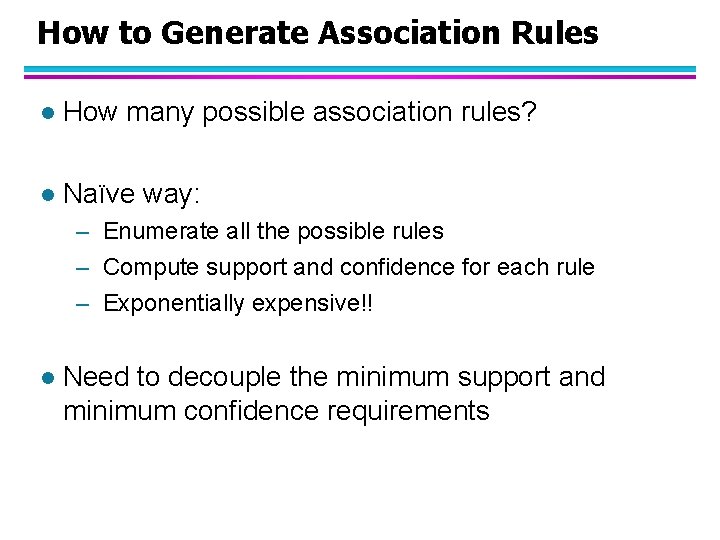

How to Generate Association Rules
- How many possible association rules?
- Naïve way:
  - Enumerate all the possible rules
  - Compute support and confidence for each rule
  - Exponentially expensive!
- Need to decouple the minimum support and minimum confidence requirements
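To get a feel for "exponentially expensive", the sketch below counts rules X → Y over d items by brute force, requiring X and Y to be non-empty and disjoint, and compares the count with the closed form 3^d - 2^(d+1) + 1. The closed form is not stated on the slide; it follows from assigning each item to X, to Y, or to neither, and then excluding the assignments where X or Y is empty.

```python
from itertools import product

def count_rules(d):
    """Count rules X -> Y over d items with X, Y non-empty and disjoint."""
    count = 0
    # Each item goes to the antecedent (0), the consequent (1), or neither (2).
    for assignment in product(range(3), repeat=d):
        if 0 in assignment and 1 in assignment:
            count += 1
    return count

for d in range(1, 7):
    # Inclusion-exclusion: all assignments, minus empty X, minus empty Y, plus both empty.
    assert count_rules(d) == 3**d - 2**(d + 1) + 1

print(count_rules(6))  # 602
```

Even six items already give 602 candidate rules, which is why rules are not enumerated directly.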

How to Generate Association Rules?

Example of rules:
- {Milk, Diaper} → {Beer} (s=0.4, c=0.67)
- {Milk, Beer} → {Diaper} (s=0.4, c=1.0)
- {Diaper, Beer} → {Milk} (s=0.4, c=0.67)
- {Diaper} → {Milk, Beer} (s=0.4, c=0.5)

Observations:
- All the rules above have identical support
- Why?

Approach: divide the rule generation process into 2 steps
- Generate the frequent itemsets first
- Generate association rules from the frequent itemsets

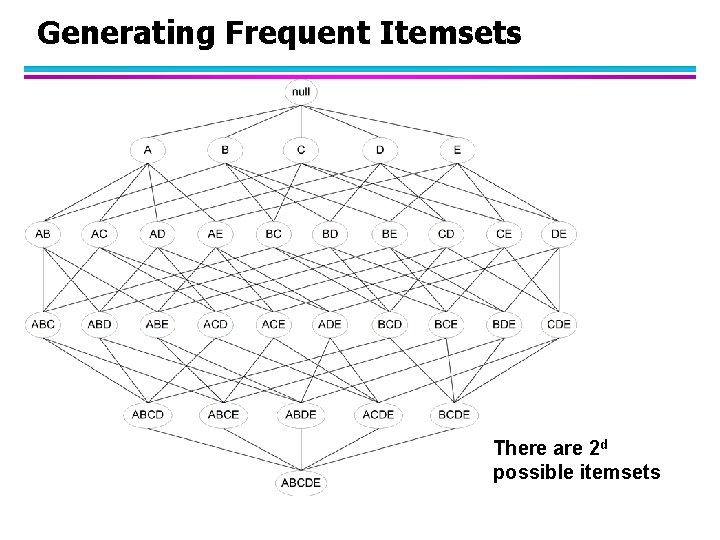
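The four example rules all partition the same itemset {Milk, Diaper, Beer}, so their support is the support of that one itemset; only the confidence depends on how the itemset is split. The sketch below illustrates this. The five baskets are an assumption: the slide's transaction table survives only as an image, so this is a hypothetical dataset chosen to be consistent with the quoted s and c values.

```python
# Hypothetical market-basket data (assumed; chosen to agree with the slide's numbers).
transactions = [
    {"Bread", "Milk"},
    {"Bread", "Diaper", "Beer", "Eggs"},
    {"Milk", "Diaper", "Beer", "Coke"},
    {"Bread", "Milk", "Diaper", "Beer"},
    {"Bread", "Milk", "Diaper", "Coke"},
]

def support(itemset):
    """Fraction of transactions containing all items of `itemset`."""
    return sum(1 for t in transactions if itemset <= t) / len(transactions)

rules = [
    ({"Milk", "Diaper"}, {"Beer"}),
    ({"Milk", "Beer"}, {"Diaper"}),
    ({"Diaper", "Beer"}, {"Milk"}),
    ({"Diaper"}, {"Milk", "Beer"}),
]

metrics = []
for lhs, rhs in rules:
    s = support(lhs | rhs)   # identical for every rule: always {Milk, Diaper, Beer}
    c = s / support(lhs)     # varies with the antecedent
    metrics.append((round(s, 2), round(c, 2)))
    print(sorted(lhs), "->", sorted(rhs), f"s={s:.2f}", f"c={c:.2f}")
```

This is what makes the two-step approach work: support only needs to be computed once per itemset, and the confidence check can be deferred to rule generation.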

Generating Frequent Itemsets
Given d items, there are $2^d$ possible itemsets

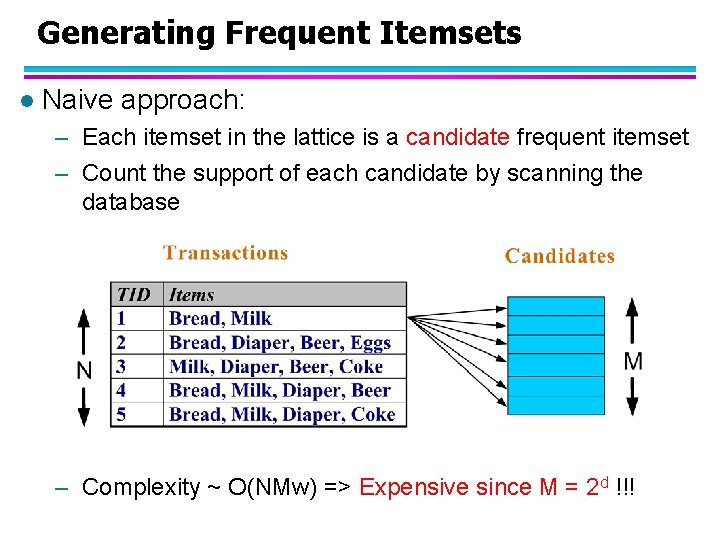

Generating Frequent Itemsets
- Naive approach:
  - Each itemset in the lattice is a candidate frequent itemset
  - Count the support of each candidate by scanning the database
  - Complexity ~ O(NMw), where N is the number of transactions, M the number of candidates and w the maximum transaction width; this is expensive since $M = 2^d$

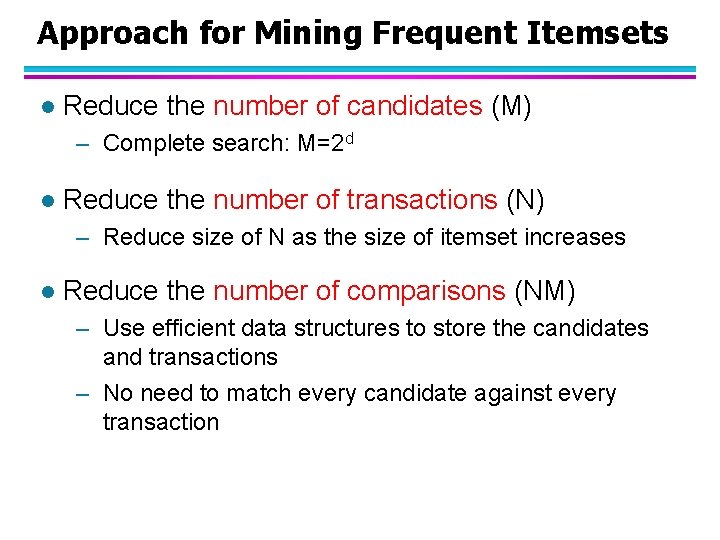

Approach for Mining Frequent Itemsets
- Reduce the number of candidates (M)
  - Complete search: $M = 2^d$
- Reduce the number of transactions (N)
  - Reduce the size of N as the size of the itemset increases
- Reduce the number of comparisons (NM)
  - Use efficient data structures to store the candidates and transactions
  - No need to match every candidate against every transaction

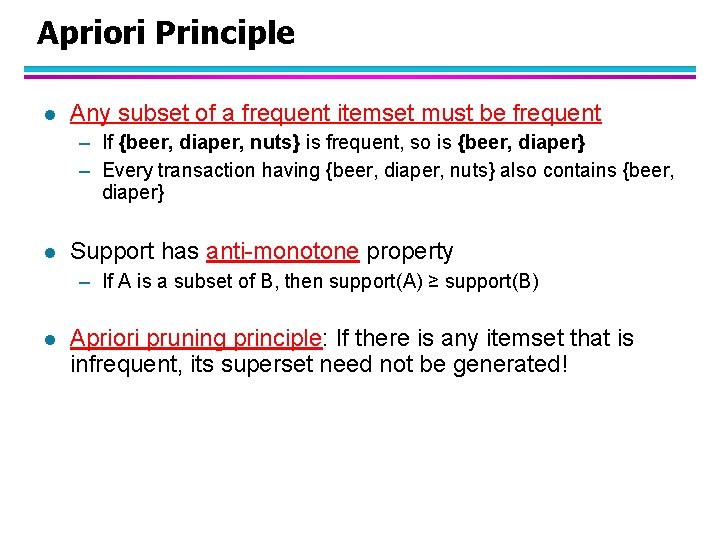

Apriori Principle
- Any subset of a frequent itemset must be frequent
  - If {beer, diaper, nuts} is frequent, so is {beer, diaper}
  - Every transaction having {beer, diaper, nuts} also contains {beer, diaper}
- Support has an anti-monotone property
  - If A is a subset of B, then support(A) ≥ support(B)
- Apriori pruning principle: if an itemset is infrequent, its supersets need not be generated!

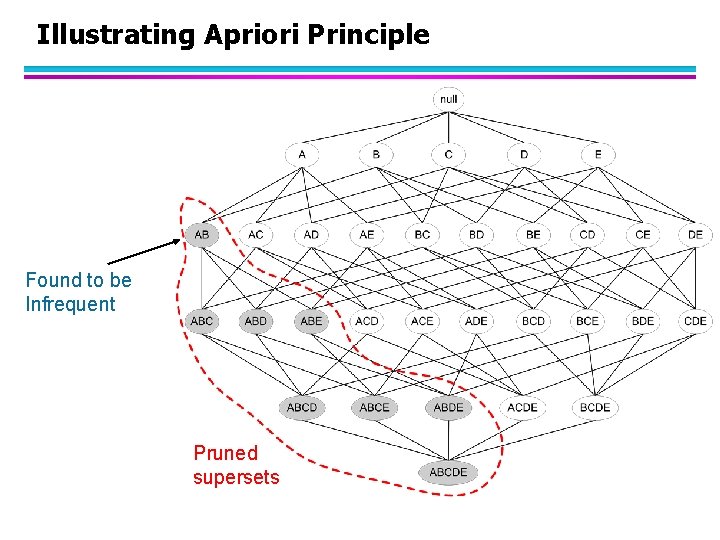

Illustrating Apriori Principle
Figure: itemset lattice in which one itemset is found to be infrequent and all of its supersets are pruned

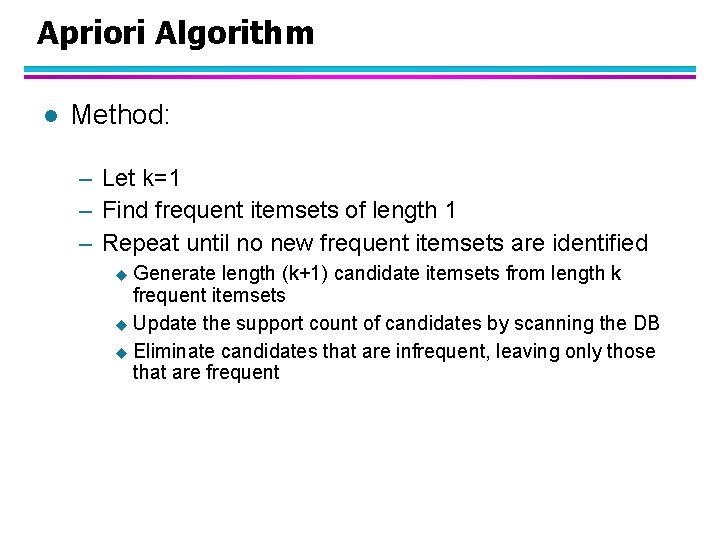

Apriori Algorithm
- Method:
  - Let k = 1
  - Find frequent itemsets of length 1
  - Repeat until no new frequent itemsets are identified
    - Generate length (k+1) candidate itemsets from length k frequent itemsets
    - Update the support count of candidates by scanning the DB
    - Eliminate candidates that are infrequent, leaving only those that are frequent

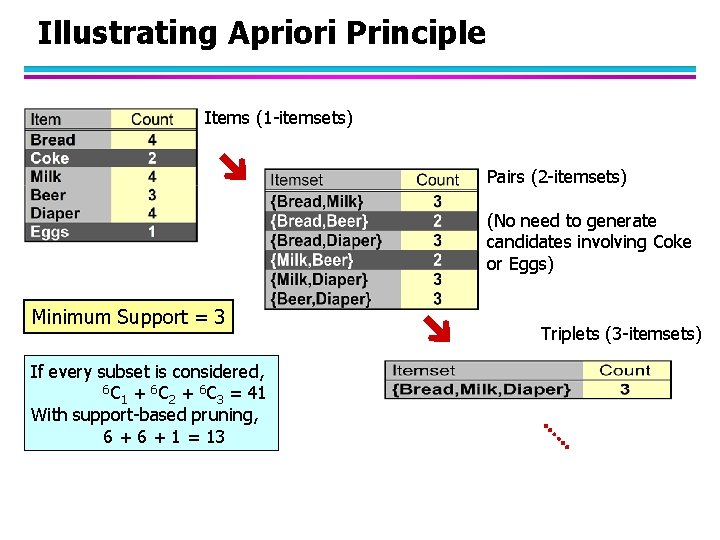
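The level-wise loop above can be sketched in a few lines of Python. This is a minimal illustration under simplifying assumptions, not an optimized implementation: candidates are formed by taking unions of pairs of frequent k-itemsets, every candidate is matched against every transaction, and the function name `apriori` and the absolute threshold `min_count` are illustrative choices.

```python
from itertools import combinations

def apriori(transactions, min_count):
    """Return {frozenset: support count} for all frequent itemsets."""
    transactions = [frozenset(t) for t in transactions]

    # k = 1: count single items with one scan.
    counts = {}
    for t in transactions:
        for item in t:
            key = frozenset([item])
            counts[key] = counts.get(key, 0) + 1
    frequent = {s: c for s, c in counts.items() if c >= min_count}
    result = dict(frequent)

    k = 1
    while frequent:
        # Generate (k+1)-candidates from frequent k-itemsets, keeping only
        # those whose k-subsets are all frequent (Apriori pruning).
        candidates = set()
        for a, b in combinations(list(frequent), 2):
            union = a | b
            if len(union) == k + 1 and all(
                frozenset(sub) in frequent for sub in combinations(union, k)
            ):
                candidates.add(union)

        # Update support counts by scanning the DB.
        counts = {c: 0 for c in candidates}
        for t in transactions:
            for c in candidates:
                if c <= t:
                    counts[c] += 1

        # Eliminate infrequent candidates.
        frequent = {s: c for s, c in counts.items() if c >= min_count}
        result.update(frequent)
        k += 1
    return result

db = [{"A", "B", "C"}, {"A", "C"}, {"A", "D"}, {"B", "E", "F"}]
for itemset, count in apriori(db, min_count=2).items():
    print(sorted(itemset), count)
```

On the four-transaction example with a minimum count of 2 (50%), this yields {A}, {B}, {C} and {A, C}.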

Illustrating Apriori Principle
Minimum support = 3
- Items (1-itemsets)
- Pairs (2-itemsets): no need to generate candidates involving Coke or Eggs
- Triplets (3-itemsets)
- If every subset is considered: $\binom{6}{1} + \binom{6}{2} + \binom{6}{3} = 41$
- With support-based pruning: 6 + 6 + 1 = 13

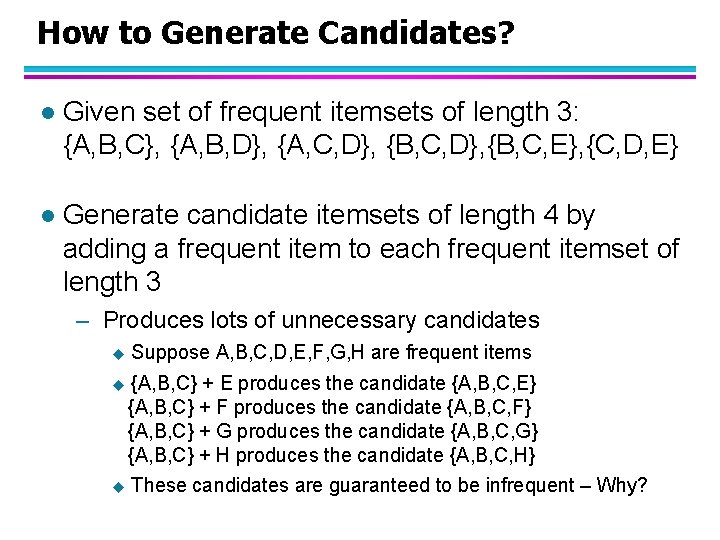
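The candidate counts on this slide can be checked with a few lines of arithmetic. The sketch assumes six items of which four remain frequent, which is inferred from the note that Coke and Eggs are dropped; the single surviving triplet candidate is taken from the slide's total of 13.

```python
from math import comb

# Without pruning: every 1-, 2- and 3-itemset over 6 items is a candidate.
without_pruning = comb(6, 1) + comb(6, 2) + comb(6, 3)   # 6 + 15 + 20

# With pruning: 6 single items, pairs only among the 4 frequent items,
# and the one triplet candidate that survives (count taken from the slide).
pairs_after_pruning = comb(4, 2)
with_pruning = 6 + pairs_after_pruning + 1

print(without_pruning, with_pruning)  # 41 13
```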

How to Generate Candidates?
- Given set of frequent itemsets of length 3: {A, B, C}, {A, B, D}, {A, C, D}, {B, C, E}, {C, D, E}
- Generate candidate itemsets of length 4 by adding a frequent item to each frequent itemset of length 3
  - Produces lots of unnecessary candidates
    - Suppose A, B, C, D, E, F, G, H are frequent items
    - {A, B, C} + E produces the candidate {A, B, C, E}
    - {A, B, C} + F produces the candidate {A, B, C, F}
    - {A, B, C} + G produces the candidate {A, B, C, G}
    - {A, B, C} + H produces the candidate {A, B, C, H}
    - These candidates are guaranteed to be infrequent
  - Why?

How to Generate Candidates?
- Given set of frequent itemsets of length 3: {A, B, C}, {A, B, D}, {A, C, D}, {B, C, E}, {C, D, E}
- Generate candidate itemsets of length 4 by joining pairs of frequent itemsets of length 3
  - Joining {A, B, C} with {A, B, D} will produce the candidate {A, B, C, D}
  - Problem 1: duplicate/redundant candidates
    - Joining {A, B, C} with {B, C, D} will produce the same candidate {A, B, C, D}
  - Problem 2: unnecessary candidates
    - Joining {A, B, C} with {B, C, E} will produce the candidate {A, B, C, E}, which is guaranteed to be infrequent

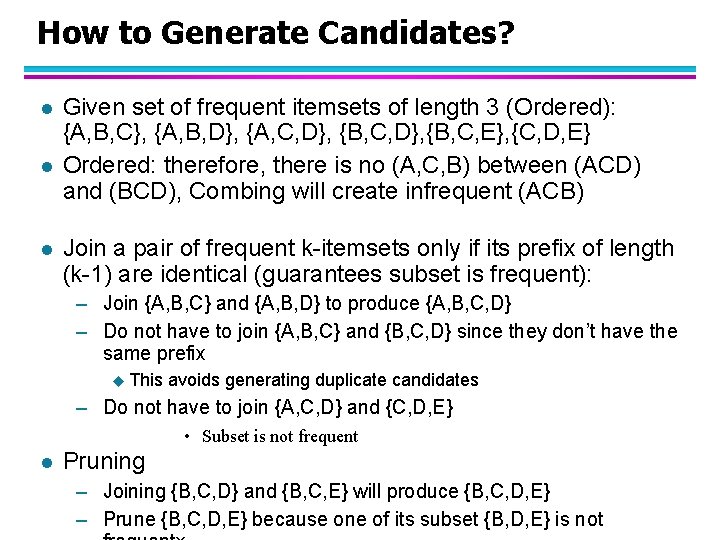

How to Generate Candidates?
- Given set of frequent itemsets of length 3 (ordered): {A, B, C}, {A, B, D}, {A, C, D}, {B, C, E}, {C, D, E}
- Ordered: therefore there is no (A, C, B) between (A, C, D) and (B, C, D); combining would create the infrequent (A, C, B)
- Join a pair of frequent k-itemsets only if their prefixes of length (k-1) are identical (this guarantees that the subset is frequent):
  - Join {A, B, C} and {A, B, D} to produce {A, B, C, D}
  - Do not have to join {A, B, C} and {B, C, D} since they don't have the same prefix
    - This avoids generating duplicate candidates
  - Do not have to join {A, C, D} and {C, D, E}
    - Subset is not frequent
- Pruning
  - Joining {B, C, D} and {B, C, E} will produce {B, C, D, E}
  - Prune {B, C, D, E} because one of its subsets, {B, D, E}, is not frequent

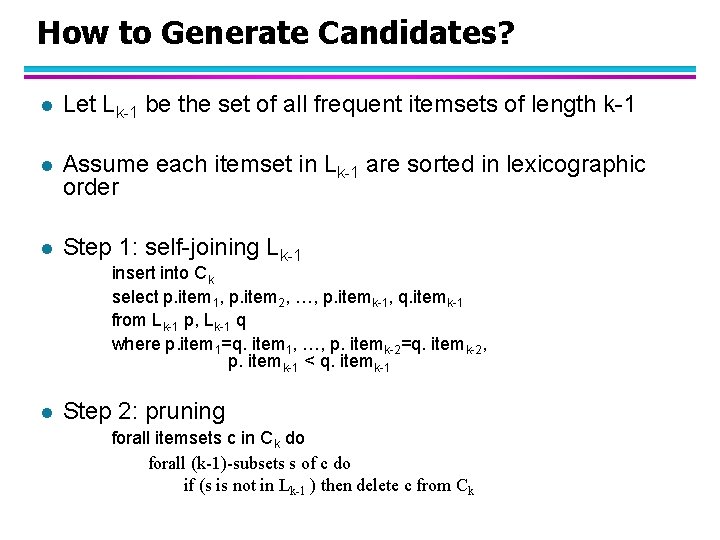

How to Generate Candidates?
- Let L_{k-1} be the set of all frequent itemsets of length k-1
- Assume the items in each itemset of L_{k-1} are sorted in lexicographic order
- Step 1: self-joining L_{k-1}

      insert into C_k
      select p.item_1, p.item_2, …, p.item_{k-1}, q.item_{k-1}
      from L_{k-1} p, L_{k-1} q
      where p.item_1 = q.item_1, …, p.item_{k-2} = q.item_{k-2}, p.item_{k-1} < q.item_{k-1}

- Step 2: pruning

      forall itemsets c in C_k do
          forall (k-1)-subsets s of c do
              if (s is not in L_{k-1}) then delete c from C_k
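The self-join and prune steps translate fairly directly into Python. The sketch below is one possible rendering, with itemsets held as sorted tuples; the name `apriori_gen` is an illustrative choice. The input adds {B, C, D} to the running example as an assumption, because the earlier bullets join and prune with it as if it were frequent even though it is missing from the listed itemsets.

```python
from itertools import combinations

def apriori_gen(prev_frequent):
    """Return (joined, pruned) candidate lists built from frequent (k-1)-itemsets."""
    prev = sorted(tuple(sorted(s)) for s in prev_frequent)
    prev_set = set(prev)
    size = len(prev[0]) if prev else 0

    # Step 1: self-join. Two itemsets are joined only if they agree on all
    # but the last item; sorting guarantees p's last item < q's last item.
    joined = []
    for i, p in enumerate(prev):
        for q in prev[i + 1:]:
            if p[:-1] == q[:-1]:
                joined.append(p + (q[-1],))

    # Step 2: prune candidates that have an infrequent (k-1)-subset.
    pruned = [
        c for c in joined
        if all(sub in prev_set for sub in combinations(c, size))
    ]
    return joined, pruned

# Running example, with {B, C, D} added as an assumption (see lead-in).
L3 = ["ABC", "ABD", "ACD", "BCD", "BCE", "CDE"]
joined, pruned = apriori_gen(L3)
print(joined)  # candidates after the join step
print(pruned)  # candidates that survive pruning
```

Here the join produces {A, B, C, D} and {B, C, D, E}, and pruning removes {B, C, D, E} because {B, D, E} is not frequent.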

How to Count Support of Candidates?
- Why is counting the supports of candidates a problem?
  - The total number of candidates can be huge
  - Each transaction may contain many candidates
- Method:
  - Store candidate itemsets in a hash tree
  - A leaf node of the hash tree contains a list of itemsets and their respective support counts
  - An interior node contains a hash table

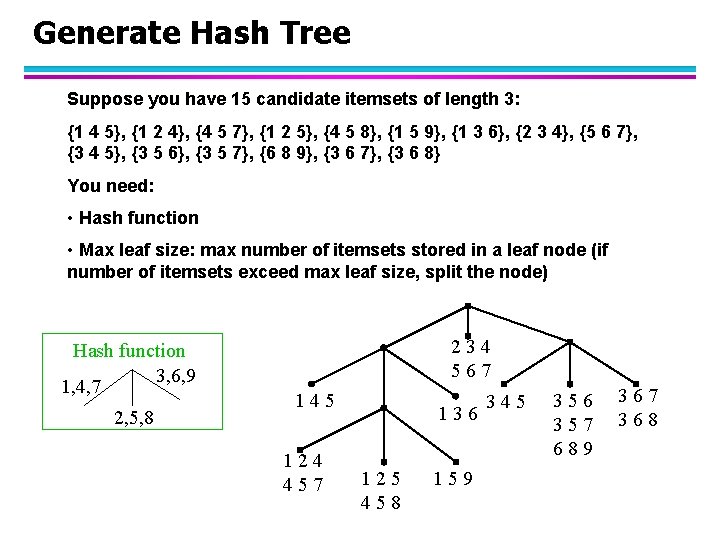

Generate Hash Tree
Suppose you have 15 candidate itemsets of length 3:
{1 4 5}, {1 2 4}, {4 5 7}, {1 2 5}, {4 5 8}, {1 5 9}, {1 3 6}, {2 3 4}, {5 6 7}, {3 4 5}, {3 5 6}, {3 5 7}, {6 8 9}, {3 6 7}, {3 6 8}

You need:
- Hash function
- Max leaf size: the maximum number of itemsets stored in a leaf node (if the number of itemsets exceeds the max leaf size, split the node)

Figure: hash tree over the 15 candidates; the hash function sends items 1, 4, 7 to one branch, items 2, 5, 8 to a second branch and items 3, 6, 9 to a third

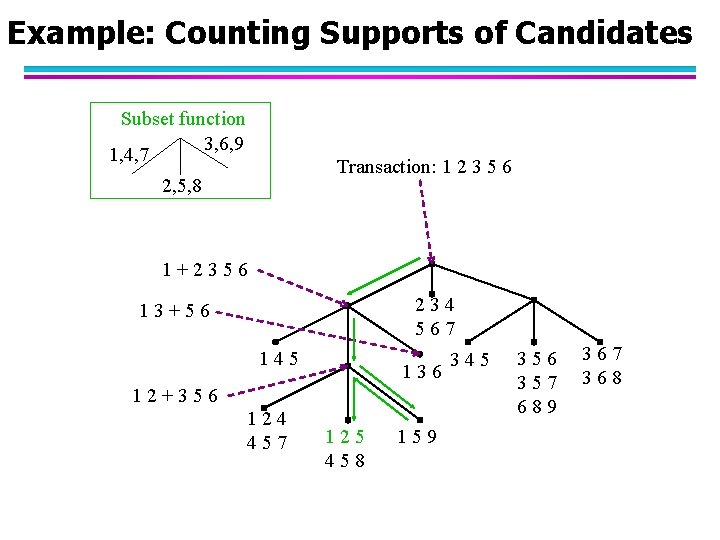

Example: Counting Supports of Candidates
Figure: the subset function applied to the transaction 1 2 3 5 6 on the hash tree (branches 1, 4, 7 / 2, 5, 8 / 3, 6, 9); the transaction is expanded as 1 + 2 3 5 6 at the root, then as 1 2 + 3 5 6 and 1 3 + 5 6 at the next level

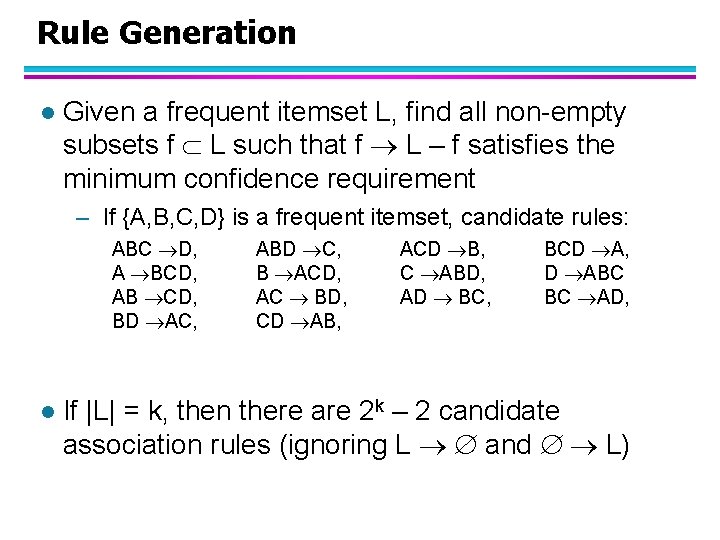
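A simplified hash tree for the 15 candidates can be sketched as follows. Several details are assumptions rather than something the slides state: the hash function is taken to be `item % 3`, which reproduces the 1,4,7 / 2,5,8 / 3,6,9 grouping; the max leaf size is set to 3; and the names `Node`, `insert` and `contained` are hypothetical. The resulting tree shape may differ from the one drawn in the figure.

```python
MAX_LEAF = 3  # assumed max number of itemsets per leaf before splitting

def h(item):
    return item % 3  # assumed hash: groups {1,4,7}, {2,5,8}, {3,6,9}

class Node:
    def __init__(self):
        self.children = {}   # hash value -> child Node (interior nodes)
        self.itemsets = []   # stored candidates (leaf nodes)
        self.is_leaf = True

def insert(node, itemset, depth=0):
    """Insert a sorted candidate tuple, hashing on its depth-th item."""
    if not node.is_leaf:
        child = node.children.setdefault(h(itemset[depth]), Node())
        insert(child, itemset, depth + 1)
        return
    node.itemsets.append(itemset)
    # Split an overfull leaf while there is still an item left to hash on.
    if len(node.itemsets) > MAX_LEAF and depth < len(itemset):
        stored, node.itemsets, node.is_leaf = node.itemsets, [], False
        for s in stored:
            insert(node, s, depth)

def contained(node, transaction, start, found):
    """Collect into `found` every stored candidate contained in `transaction`."""
    if node.is_leaf:
        items = set(transaction)
        found.update(s for s in node.itemsets if items.issuperset(s))
        return
    # Hash on each remaining item; only matching branches are visited.
    for i in range(start, len(transaction)):
        child = node.children.get(h(transaction[i]))
        if child is not None:
            contained(child, transaction, i + 1, found)

candidates = [(1, 4, 5), (1, 2, 4), (4, 5, 7), (1, 2, 5), (4, 5, 8),
              (1, 5, 9), (1, 3, 6), (2, 3, 4), (5, 6, 7), (3, 4, 5),
              (3, 5, 6), (3, 5, 7), (6, 8, 9), (3, 6, 7), (3, 6, 8)]
root = Node()
for c in candidates:
    insert(root, c)

found = set()
contained(root, (1, 2, 3, 5, 6), 0, found)
print(sorted(found))
```

Collecting matches in a set keeps a candidate from being counted twice when the same leaf is reached along different paths; each matched candidate would then have its support count incremented once for this transaction.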

Rule Generation
- Given a frequent itemset L, find all non-empty subsets f ⊂ L such that f → L - f satisfies the minimum confidence requirement
  - If {A, B, C, D} is a frequent itemset, the candidate rules are:
    ABC → D, ABD → C, ACD → B, BCD → A,
    A → BCD, B → ACD, C → ABD, D → ABC,
    AB → CD, AC → BD, AD → BC, BC → AD, BD → AC, CD → AB
- If |L| = k, then there are $2^k - 2$ candidate association rules (ignoring L → ∅ and ∅ → L)

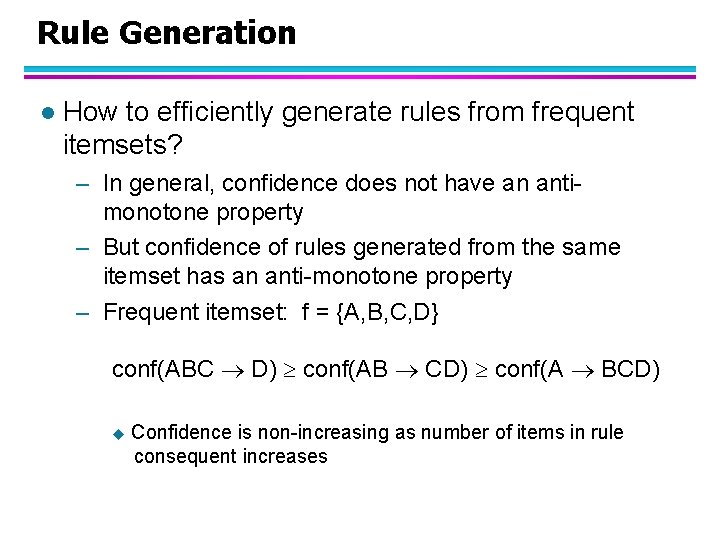
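Enumerating the candidate rules of one frequent itemset, and keeping those that meet minconf, might look like the sketch below. The function names are hypothetical, and the support table passed to `confident_rules` uses the values from the earlier four-transaction example.

```python
from itertools import combinations

def candidate_rules(itemset):
    """All rules f -> L - f with f a non-empty proper subset of L."""
    items = sorted(itemset)
    rules = []
    for size in range(1, len(items)):
        for lhs in combinations(items, size):
            rhs = tuple(i for i in items if i not in lhs)
            rules.append((lhs, rhs))
    return rules

def confident_rules(itemset, support, minconf):
    """Keep rules whose confidence sup(L) / sup(f) reaches minconf.

    `support` maps frozensets to support values and must cover L and its subsets.
    """
    whole = support[frozenset(itemset)]
    return [
        (lhs, rhs)
        for lhs, rhs in candidate_rules(itemset)
        if whole / support[frozenset(lhs)] >= minconf
    ]

print(len(candidate_rules("ABCD")))  # 2**4 - 2 = 14

support = {frozenset("A"): 0.75, frozenset("C"): 0.5, frozenset("AC"): 0.5}
print(confident_rules("AC", support, minconf=0.8))  # only C -> A qualifies
```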

Rule Generation
- How to efficiently generate rules from frequent itemsets?
  - In general, confidence does not have an anti-monotone property
  - But the confidence of rules generated from the same itemset has an anti-monotone property
  - Frequent itemset: f = {A, B, C, D}: conf(ABC → D) ≥ conf(AB → CD) ≥ conf(A → BCD)
    - Confidence is non-increasing as the number of items in the rule consequent increases

Rule Generation for Apriori Algorithm
Figure: lattice of rules

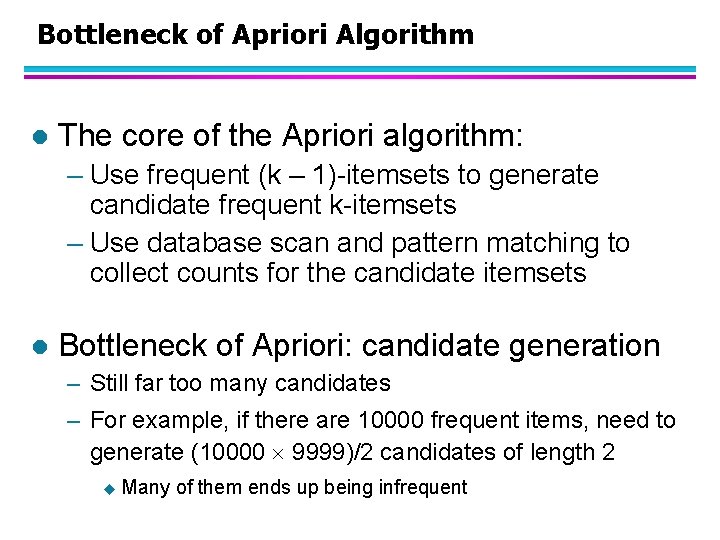

Bottleneck of Apriori Algorithm
- The core of the Apriori algorithm:
  - Use frequent (k - 1)-itemsets to generate candidate frequent k-itemsets
  - Use database scans and pattern matching to collect counts for the candidate itemsets
- Bottleneck of Apriori: candidate generation
  - Still far too many candidates
  - For example, if there are 10000 frequent items, we need to generate (10000 × 9999)/2 candidates of length 2
    - Many of them end up being infrequent

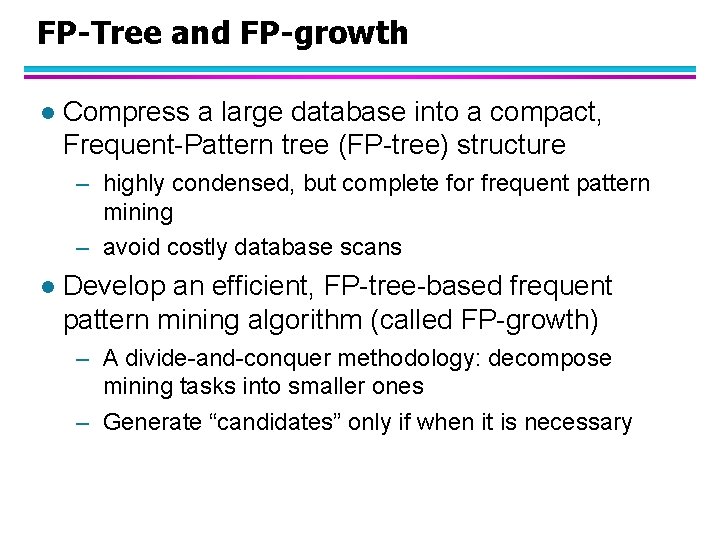

FP-Tree and FP-growth
- Compress a large database into a compact Frequent-Pattern tree (FP-tree) structure
  - Highly condensed, but complete for frequent pattern mining
  - Avoids costly database scans
- Develop an efficient, FP-tree-based frequent pattern mining algorithm (called FP-growth)
  - A divide-and-conquer methodology: decompose mining tasks into smaller ones
  - Generate “candidates” only when necessary

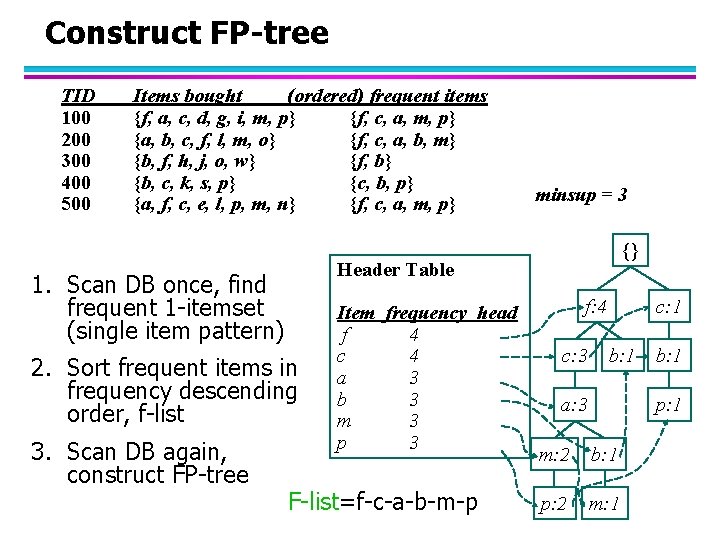

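Construct FP-tree (minsup = 3)

| TID | Items bought | (Ordered) frequent items |
|---|---|---|
| 100 | {f, a, c, d, g, i, m, p} | {f, c, a, m, p} |
| 200 | {a, b, c, f, l, m, o} | {f, c, a, b, m} |
| 300 | {b, f, h, j, o, w} | {f, b} |
| 400 | {b, c, k, s, p} | {c, b, p} |
| 500 | {a, f, c, e, l, p, m, n} | {f, c, a, m, p} |

1. Scan the DB once, find the frequent 1-itemsets (single item patterns)
2. Sort the frequent items in frequency descending order to obtain the f-list
3. Scan the DB again, construct the FP-tree

Header table (each entry also holds the head of that item's node-links):

| Item | Frequency |
|---|---|
| f | 4 |
| c | 4 |
| a | 3 |
| b | 3 |
| m | 3 |
| p | 3 |

F-list = f-c-a-b-m-p

FP-tree, written as root-to-leaf paths:
- {} → f:4 → c:3 → a:3 → m:2 → p:2
- {} → f:4 → c:3 → a:3 → b:1 → m:1
- {} → f:4 → b:1
- {} → c:1 → b:1 → p:1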
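The two-scan construction can be sketched as below. The class and function names are illustrative. Ties between equally frequent items can be broken arbitrarily, so the slide's f-list is passed in explicitly to reproduce the same tree; when no f-list is given, this sketch falls back to alphabetical tie-breaking, which is an assumption and not the slide's rule.

```python
from collections import Counter

class FPNode:
    def __init__(self, item, parent):
        self.item = item
        self.count = 0
        self.parent = parent
        self.children = {}   # item -> FPNode

def build_fp_tree(transactions, min_count, f_list=None):
    # Scan 1: count items and keep the frequent ones.
    counts = Counter(item for t in transactions for item in set(t))
    if f_list is None:
        f_list = sorted(counts, key=lambda i: (-counts[i], i))
    f_list = [i for i in f_list if counts[i] >= min_count]
    rank = {item: r for r, item in enumerate(f_list)}

    # Scan 2: insert each transaction's frequent items in f-list order.
    root = FPNode(None, None)
    header = {item: [] for item in f_list}   # item -> its nodes (node-links)
    for t in transactions:
        ordered = sorted((i for i in set(t) if i in rank), key=rank.get)
        node = root
        for item in ordered:
            child = node.children.get(item)
            if child is None:
                child = FPNode(item, node)
                node.children[item] = child
                header[item].append(child)
            child.count += 1
            node = child
    return root, header

def leaf_paths(node, prefix=()):
    """Yield each root-to-leaf path as a string like 'f:4 c:3 ...'."""
    for child in node.children.values():
        path = prefix + (f"{child.item}:{child.count}",)
        if child.children:
            yield from leaf_paths(child, path)
        else:
            yield " ".join(path)

db = ["facdgimp", "abcflmo", "bfhjow", "bcksp", "afcelpmn"]
root, header = build_fp_tree(db, min_count=3, f_list="fcabmp")
for path in leaf_paths(root):
    print(path)
```

Shared prefixes such as f-c-a are stored once with accumulated counts, which is where the compression comes from.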

Benefits of the FP-tree Structure
- Completeness
  - Preserves the information needed for frequent pattern mining
- Compactness
  - Reduces irrelevant info: infrequent items are gone
  - Items are in frequency descending order: the more frequently an item occurs, the more likely its node is shared
  - Never larger than the original database (if we exclude node-links and the count field)
    - For some dense data sets, the compression ratio can be more than a factor of 100

Partition Patterns and Databases
- Frequent patterns can be partitioned into subsets according to the f-list (efficient partitioning because ordered)
  - F-list = f-c-a-b-m-p
  - Patterns containing p
  - Patterns having m but no p
  - …
  - Patterns having c but no a nor b, m, p
  - Pattern f
- Completeness and no redundancy

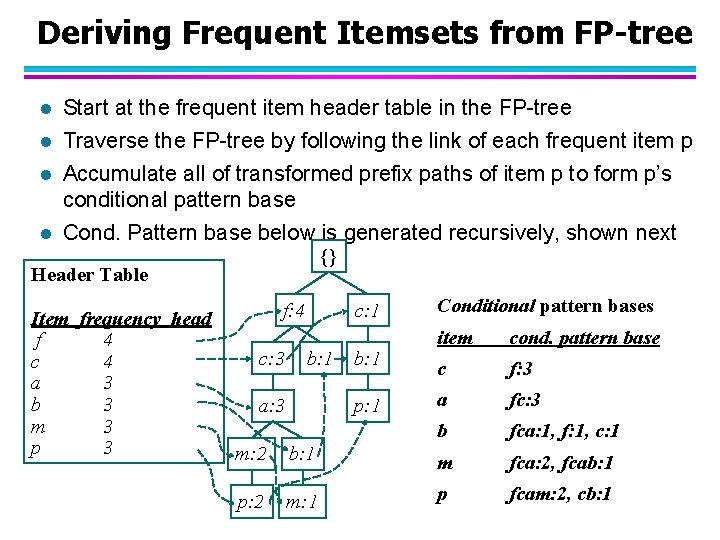

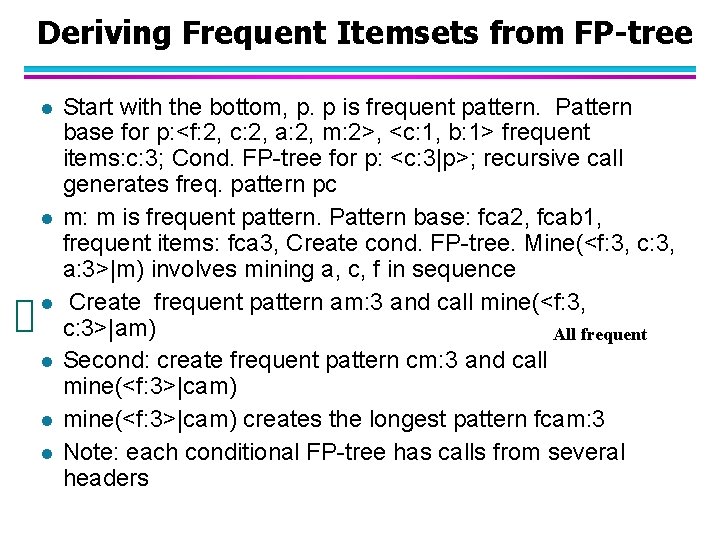

Deriving Frequent Itemsets from FP-tree
- Start at the frequent item header table in the FP-tree
- Traverse the FP-tree by following the link of each frequent item p
- Accumulate all of the transformed prefix paths of item p to form p’s conditional pattern base
- The conditional pattern bases below are generated recursively, as shown next

Header table: f 4, c 4, a 3, b 3, m 3, p 3

FP-tree, written as root-to-leaf paths:
- {} → f:4 → c:3 → a:3 → m:2 → p:2
- {} → f:4 → c:3 → a:3 → b:1 → m:1
- {} → f:4 → b:1
- {} → c:1 → b:1 → p:1

Conditional pattern bases:

| Item | Cond. pattern base |
|---|---|
| c | f:3 |
| a | fc:3 |
| b | fca:1, f:1, c:1 |
| m | fca:2, fcab:1 |
| p | fcam:2, cb:1 |
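The conditional pattern bases in this example can be reproduced with a short sketch. As a simplification, it reads the prefixes off the f-list-ordered transactions instead of following node-links in the tree; the counts agree because each FP-tree path simply aggregates the transactions that share that prefix. The function name is hypothetical.

```python
from collections import Counter

# Transactions restricted to frequent items, in f-list order (from the FP-tree slide).
ordered = ["fcamp", "fcabm", "fb", "cbp", "fcamp"]

def conditional_pattern_base(item, ordered_transactions):
    """Map each non-empty prefix that precedes `item` to its accumulated count."""
    base = Counter()
    for t in ordered_transactions:
        if item in t:
            prefix = t[:t.index(item)]
            if prefix:
                base[prefix] += 1
    return dict(base)

for item in "cabmp":
    print(item, conditional_pattern_base(item, ordered))
```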

Deriving Frequent Itemsets from FP-tree
- Start with the bottom item, p. p is a frequent pattern. Pattern base for p: <f:2, c:2, a:2, m:2>, <c:1, b:1>; frequent items: c:3; cond. FP-tree for p: <c:3|p>; the recursive call generates the frequent pattern pc
- m: m is a frequent pattern. Pattern base: fca:2, fcab:1; frequent items: f:3, c:3, a:3; create the cond. FP-tree. Mine(<f:3, c:3, a:3>|m) involves mining a, c, f in sequence
  - First: create frequent pattern am:3 and call mine(<f:3, c:3>|am), in which all items are frequent
  - Second: create frequent pattern cam:3 and call mine(<f:3>|cam), which creates the longest pattern fcam:3
- Note: each conditional FP-tree has calls from several headers

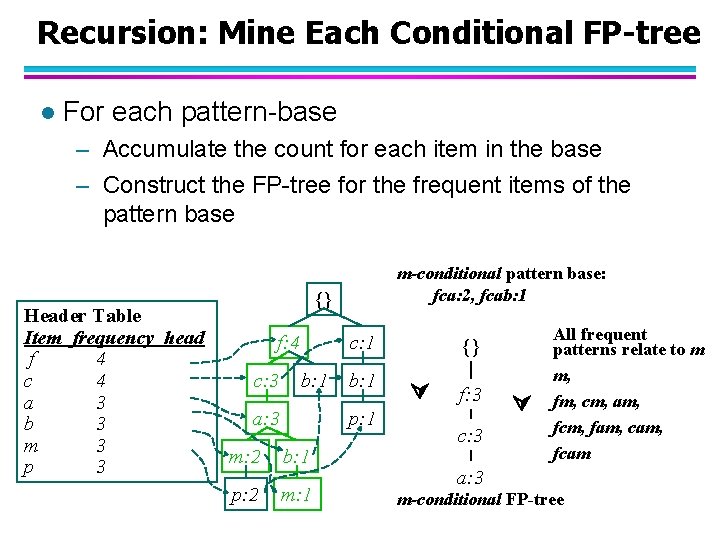

Recursion: Mine Each Conditional FP-tree
- For each pattern base
  - Accumulate the count for each item in the base
  - Construct the FP-tree for the frequent items of the pattern base

m-conditional pattern base: fca:2, fcab:1
m-conditional FP-tree: {} → f:3 → c:3 → a:3
All frequent patterns related to m: m, fm, cm, am, fcm, fam, cam, fcam

Figure: the global FP-tree with its header table (f 4, c 4, a 3, b 3, m 3, p 3) next to the m-conditional FP-tree

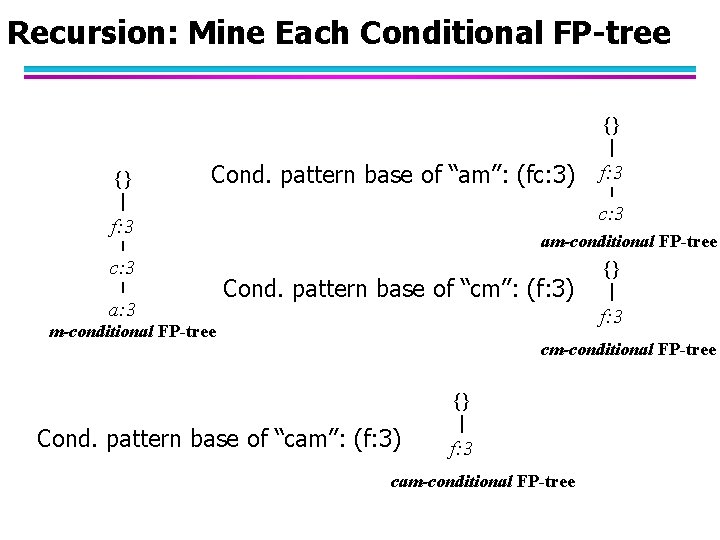

Recursion: Mine Each Conditional FP-tree
- m-conditional FP-tree: {} → f:3 → c:3 → a:3
- Cond. pattern base of “am”: (fc:3); am-conditional FP-tree: {} → f:3 → c:3
- Cond. pattern base of “cm”: (f:3); cm-conditional FP-tree: {} → f:3
- Cond. pattern base of “cam”: (f:3); cam-conditional FP-tree: {} → f:3

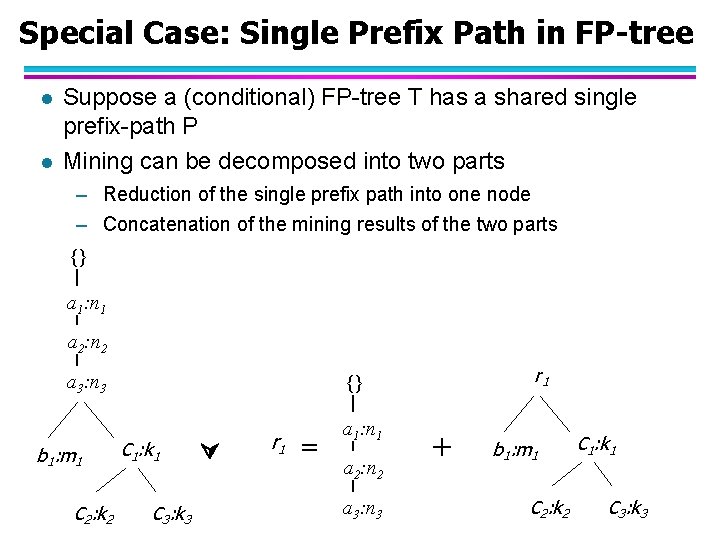

Special Case: Single Prefix Path in FP-tree
- Suppose a (conditional) FP-tree T has a shared single prefix path P
- Mining can be decomposed into two parts
  - Reduction of the single prefix path into one node
  - Concatenation of the mining results of the two parts

Figure: a tree whose single prefix path {} → a1:n1 → a2:n2 → a3:n3 is followed by a branching part (b1:m1, C1:k1, C2:k2, C3:k3); it is split into the prefix path, reduced to the node r1, plus the branching part rooted at r1

Mining Frequent Patterns With FP-trees
- Idea: frequent pattern growth
  - Recursively grow frequent patterns by pattern and database partition
- Method
  - For each frequent item, construct its conditional pattern base, and then its conditional FP-tree
  - Repeat the process on each newly created conditional FP-tree
  - Stop when the resulting FP-tree is empty, or when it contains only one path: a single path generates all the combinations of its sub-paths, each of which is a frequent pattern
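The recursion can be illustrated with a compact pattern-growth sketch. It is a simplification of FP-growth, not the full algorithm: each conditional pattern base is kept as a plain list of (prefix, count) pairs instead of being compressed into a conditional FP-tree, and the single-path shortcut is not implemented. The function name `pattern_growth` is hypothetical.

```python
from collections import Counter

def pattern_growth(pattern_base, min_count, suffix=()):
    """Yield (pattern, count) pairs for every frequent pattern ending in `suffix`.

    `pattern_base` is a list of (items, count) pairs, where `items` is a
    tuple of items in f-list order.
    """
    # Accumulate the count of each item in the base.
    counts = Counter()
    for items, n in pattern_base:
        for item in items:
            counts[item] += n

    for item, total in counts.items():
        if total < min_count:
            continue
        pattern = (item,) + suffix
        yield pattern, total
        # Conditional pattern base of `pattern`: the prefixes preceding `item`.
        conditional = [
            (items[:items.index(item)], n)
            for items, n in pattern_base
            if item in items and items.index(item) > 0
        ]
        yield from pattern_growth(conditional, min_count, pattern)

# Ordered frequent items of the five example transactions, each with count 1.
db = [(tuple(t), 1) for t in ["fcamp", "fcabm", "fb", "cbp", "fcamp"]]
result = dict(pattern_growth(db, min_count=3))
for pattern, count in sorted(result.items()):
    print("".join(pattern), count)
```

Each frequent pattern is produced exactly once, under its last item in f-list order, which mirrors the partitioning of patterns by f-list described earlier.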

Scaling FP-growth by DB Projection
- What if the FP-tree cannot fit in memory? Use DB projection
- First partition the database into a set of projected DBs
- Then construct and mine an FP-tree for each projected DB

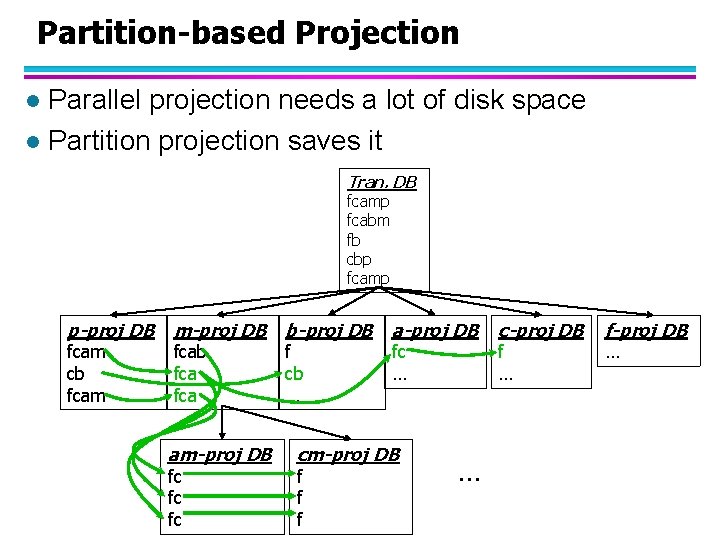

Partition-based Projection
- Parallel projection needs a lot of disk space
- Partition projection saves it

Figure: the transaction DB (fcamp, fcabm, fb, cbp, fcamp) split into projected DBs: p-proj DB (fcam, cb, fcam), m-proj DB (fcab, fca), b-proj DB (f, cb, …), a-proj DB (fc, …), c-proj DB (f, …), f-proj DB (…); the m-proj DB is further split into am-proj DB (fc, fc, fc), cm-proj DB (f, f, f), …

References
- Agrawal and Srikant, “Fast Algorithms for Mining Association Rules”
- Han, Pei, Yin, “Mining Frequent Patterns without Candidate Generation”

- Slides: 42