Data Grid Projects in HENP
R. Pordes, Fermilab

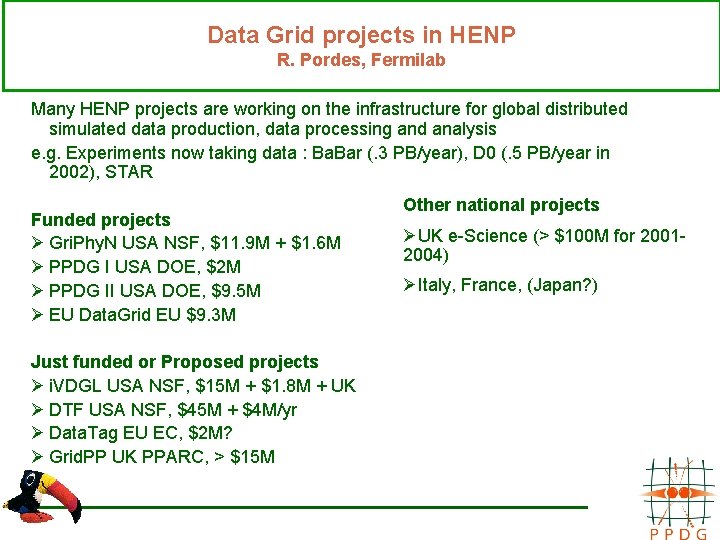

Many HENP projects are working on the infrastructure for global distributed simulated data production, data processing and analysis, e.g.:

Experiments now taking data:
- BaBar (0.3 PB/year)
- D0 (0.5 PB/year in 2002)
- STAR

Funded projects:
- GriPhyN: USA NSF, $11.9M + $1.6M
- PPDG I: USA DOE, $2M
- PPDG II: USA DOE, $9.5M
- EU DataGrid: EU, $9.3M

Just funded or proposed projects:
- iVDGL: USA NSF, $15M + $1.8M + UK
- DTF: USA NSF, $45M + $4M/yr
- DataTag: EU EC, $2M?
- GridPP: UK PPARC, > $15M

Other national projects:
- UK e-Science (> $100M for 2001-2004)
- Italy, France (Japan?)

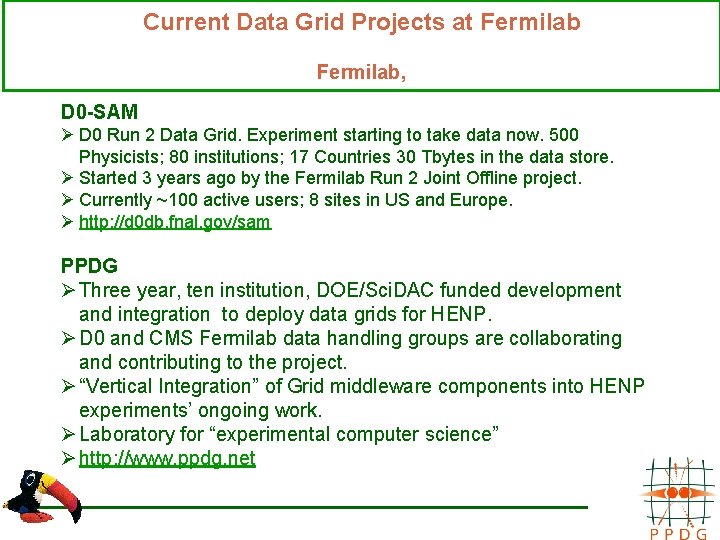

Current Data Grid Projects at Fermilab

D0-SAM:
- The D0 Run 2 data grid. The experiment is starting to take data now: 500 physicists, 80 institutions, 17 countries; 30 TB in the data store.
- Started 3 years ago by the Fermilab Run 2 Joint Offline project.
- Currently ~100 active users; 8 sites in the US and Europe.
- http://d0db.fnal.gov/sam

PPDG:
- A three-year, ten-institution, DOE/SciDAC-funded development and integration project to deploy data grids for HENP.
- The D0 and CMS Fermilab data handling groups are collaborating and contributing to the project.
- “Vertical integration” of Grid middleware components into the HENP experiments’ ongoing work.
- A laboratory for “experimental computer science”.
- http://www.ppdg.net

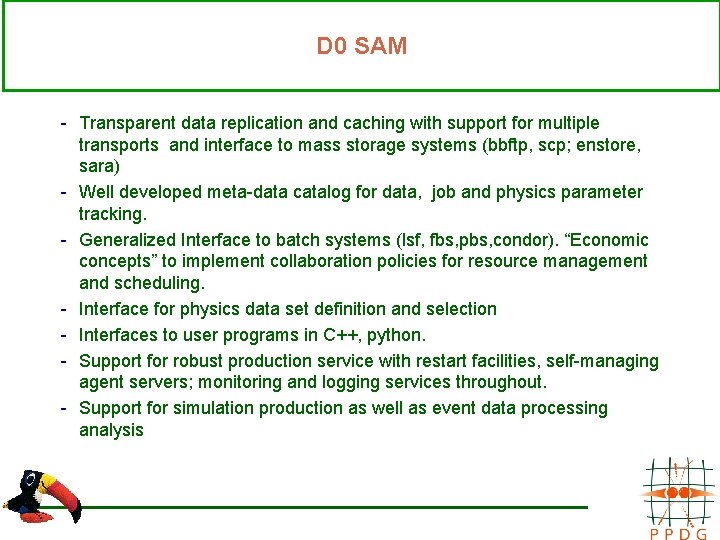

D0 SAM
- Transparent data replication and caching, with support for multiple transports (bbftp, scp) and interfaces to mass storage systems (enstore, sara).
- Well-developed metadata catalog for tracking data, jobs and physics parameters.
- Generalized interface to batch systems (lsf, fbs, pbs, condor).
- “Economic concepts” to implement collaboration policies for resource management and scheduling.
- Interface for physics dataset definition and selection.
- Interfaces to user programs in C++ and Python.
- Support for a robust production service, with restart facilities, self-managing agent servers, and monitoring and logging services throughout.
- Support for simulation production as well as event data processing and analysis.
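The transparent caching described above can be illustrated with a least-recently-used (LRU) disk cache for a single station. This is a minimal sketch under stated assumptions: the class name `StationCache`, the `fetch` callback and the LRU eviction policy are all hypothetical, chosen for illustration; the slide does not say which replacement policy SAM stations actually use.

```python
from collections import OrderedDict


class StationCache:
    """Toy disk cache for one station (hypothetical, not the SAM API).

    Files are kept in least-recently-used order; when adding a file would
    exceed the capacity, the least recently used files are evicted first.
    """

    def __init__(self, capacity_bytes):
        self.capacity = capacity_bytes
        self.used = 0
        self.files = OrderedDict()  # file name -> size in bytes

    def get(self, name, size, fetch):
        """Return the name of a cached file, calling fetch(name) on a miss."""
        if name in self.files:
            self.files.move_to_end(name)  # mark as most recently used
            return name
        if size > self.capacity:
            raise ValueError(f"{name} is larger than the whole cache")
        # Evict least recently used files until the new file fits.
        while self.used + size > self.capacity:
            _, old_size = self.files.popitem(last=False)
            self.used -= old_size
        fetch(name)  # e.g. stage from tape or copy from another station
        self.files[name] = size
        self.used += size
        return name


# Usage: a 300-byte cache holding 100-byte files.
fetched = []
cache = StationCache(capacity_bytes=300)
for f in ["a", "b", "a", "c", "d"]:
    cache.get(f, 100, fetched.append)
# fetched == ["a", "b", "c", "d"]; "b" was evicted, cache holds a, c, d
```

Only misses trigger a transfer, so the second request for `a` is served locally and also protects `a` from the next eviction.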

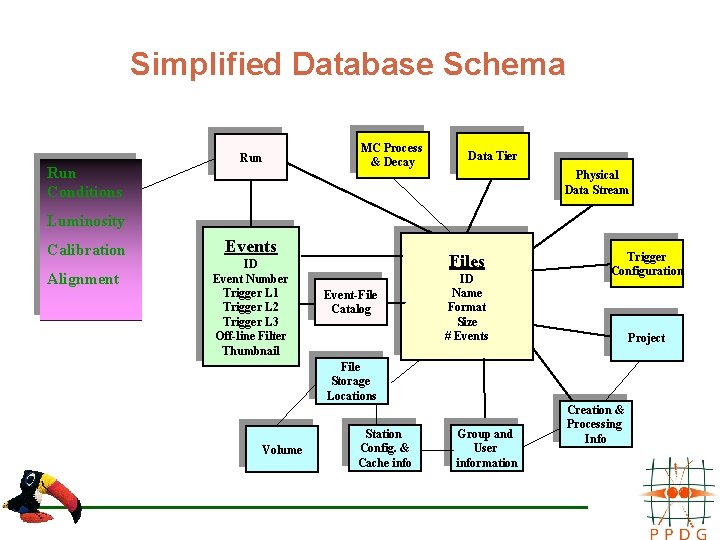

Simplified Database Schema

Entities shown in the schema diagram:
- Events: ID, Event Number, Trigger L1, Trigger L2, Trigger L3, Off-line Filter, Thumbnail
- Files: ID, Name, Format, Size, # Events
- Event-File Catalog (links events to files)
- Other entities: Run, Run Conditions, MC Process & Decay, Data Tier, Physical Data Stream, Luminosity, Calibration, Alignment, Trigger Configuration, Project, File Storage Locations, Volume, Station Config. & Cache Info, Group and User Information, Creation & Processing Info
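A reduced version of the events/files part of this schema can be expressed in SQL. The sketch below uses Python's built-in `sqlite3` module; the table and column names, and the `run_number` column used to identify an event, are assumptions for illustration, not the actual SAM schema.

```python
import sqlite3

# Hypothetical, heavily reduced subset of the schema; names are illustrative.
SCHEMA = """
CREATE TABLE files (
    file_id   INTEGER PRIMARY KEY,
    name      TEXT NOT NULL UNIQUE,
    format    TEXT,
    size      INTEGER,      -- bytes
    n_events  INTEGER
);
CREATE TABLE events (
    event_id     INTEGER PRIMARY KEY,
    run_number   INTEGER NOT NULL,   -- assumed column
    event_number INTEGER NOT NULL,
    UNIQUE (run_number, event_number)
);
-- Event-file catalog: which file(s) hold a given event.
CREATE TABLE event_file_catalog (
    event_id INTEGER REFERENCES events(event_id),
    file_id  INTEGER REFERENCES files(file_id),
    PRIMARY KEY (event_id, file_id)
);
"""


def files_for_event(conn, run_number, event_number):
    """Return the names of all files that contain the given event."""
    rows = conn.execute(
        """SELECT f.name
           FROM events e
           JOIN event_file_catalog c ON c.event_id = e.event_id
           JOIN files f ON f.file_id = c.file_id
           WHERE e.run_number = ? AND e.event_number = ?
           ORDER BY f.name""",
        (run_number, event_number),
    )
    return [name for (name,) in rows]


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.execute("INSERT INTO files VALUES (1, 'raw_0001.dat', 'raw', 1000, 2)")
conn.execute("INSERT INTO files VALUES (2, 'thumb_0001.dat', 'thumbnail', 100, 2)")
conn.execute("INSERT INTO events VALUES (10, 123, 1)")
conn.executemany("INSERT INTO event_file_catalog VALUES (?, ?)", [(10, 1), (10, 2)])
print(files_for_event(conn, 123, 1))  # ['raw_0001.dat', 'thumb_0001.dat']
```

The many-to-many catalog table is what lets one event appear in several files, for example a raw file and a thumbnail file.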

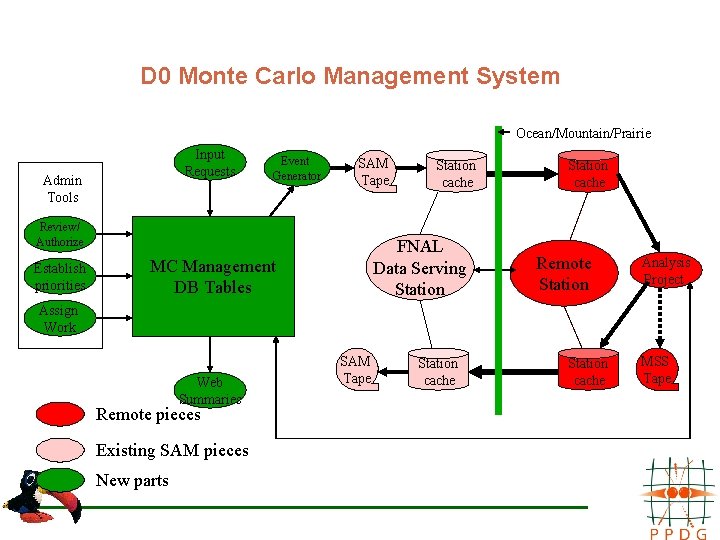

D0 Monte Carlo Management System

Components shown in the diagram: Ocean/Mountain/Prairie; input requests; admin tools; review/authorize; establish priorities; MC management DB tables; assign work; web summaries; event generator; remote station with station cache; FNAL data serving station with station cache; SAM; tape/MSS; analysis project. The legend distinguishes remote pieces, existing SAM pieces and new parts.
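The request-handling steps named in the diagram (review/authorize, establish priorities, assign work) can be sketched as a priority queue. Everything here is hypothetical: the `MCRequestQueue` class, the integer priority scale (lower number = more urgent) and the one-request-per-free-station assignment rule are assumptions, not a description of the D0 system.

```python
import heapq
from itertools import count


class MCRequestQueue:
    """Hypothetical queue of Monte Carlo production requests.

    Requests are only queued after review/authorization; lower priority
    numbers are served first, ties broken by submission order.
    """

    def __init__(self):
        self._heap = []
        self._order = count()  # tie-breaker preserving submission order

    def submit(self, request_id, priority, authorized):
        if not authorized:
            return False  # rejected at the review/authorize step
        heapq.heappush(self._heap, (priority, next(self._order), request_id))
        return True

    def assign_work(self, stations):
        """Pair pending requests with free stations, highest priority first."""
        assignments = []
        for station in stations:
            if not self._heap:
                break
            _, _, request_id = heapq.heappop(self._heap)
            assignments.append((request_id, station))
        return assignments


queue = MCRequestQueue()
queue.submit("ttbar-10k", priority=2, authorized=True)
queue.submit("minbias-50k", priority=1, authorized=True)
queue.submit("test-run", priority=0, authorized=False)
print(queue.assign_work(["Lyon", "NIKHEF"]))
# [('minbias-50k', 'Lyon'), ('ttbar-10k', 'NIKHEF')]
```

The unauthorized request never enters the queue, even though it carries the most urgent priority.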

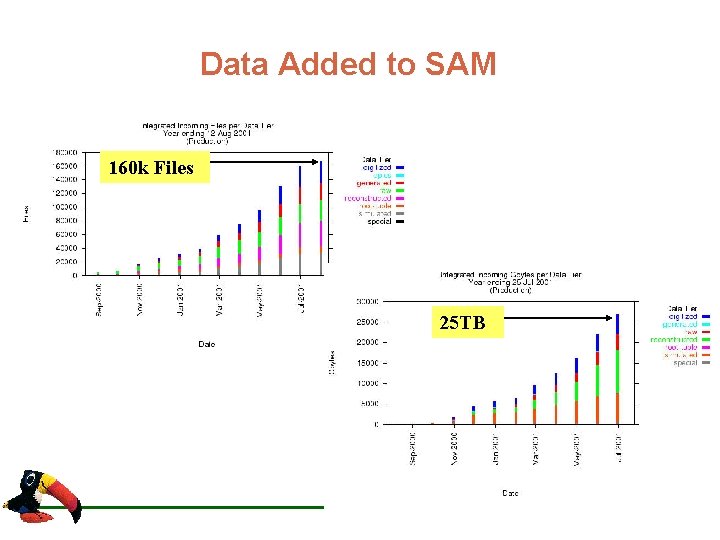

Data Added to SAM: 160k files, 25 TB
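As a rough consistency check, these two figures imply an average file size. Assuming decimal units (1 TB = 10^12 bytes) and that “160 k” means 160,000 files:

$$
\bar{s} = \frac{25 \times 10^{12}\ \text{B}}{1.6 \times 10^{5}\ \text{files}} \approx 1.56 \times 10^{8}\ \text{B} \approx 156\ \text{MB per file}
$$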

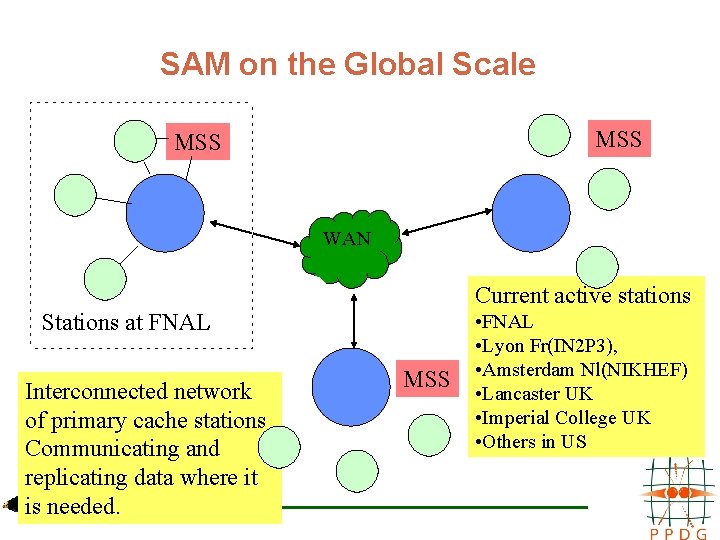

SAM on the Global Scale

An interconnected network of primary cache stations, communicating and replicating data where it is needed. The diagram shows the stations at FNAL and the other current active stations linked over the WAN, together with mass storage systems (MSS). Current active stations:
- FNAL
- Lyon, France (IN2P3)
- Amsterdam, Netherlands (NIKHEF)
- Lancaster, UK
- Imperial College, UK
- Others in the US
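One way a station could decide where to obtain a file in such a network is to prefer its own cache, then the cheapest remote cache that already holds a replica, and only then stage from mass storage. The function below is a minimal sketch of that policy; the name `choose_source`, the cost table and the fallback to a generic `"MSS"` are assumptions, not SAM's actual replica-selection logic.

```python
def choose_source(file_name, local_station, station_caches, network_cost):
    """Pick where a station should get a file from (hypothetical policy).

    station_caches: dict station -> set of file names in its cache.
    network_cost:   dict (src, dst) -> relative transfer cost; missing pairs
                    are treated as unreachable.
    Returns a station name, or "MSS" if no reachable cache holds the file.
    """
    if file_name in station_caches.get(local_station, set()):
        return local_station  # already cached locally: no transfer needed
    candidates = [
        (network_cost[(src, local_station)], src)
        for src, files in station_caches.items()
        if src != local_station
        and file_name in files
        and (src, local_station) in network_cost
    ]
    if candidates:
        return min(candidates)[1]  # cheapest cached replica
    return "MSS"  # fall back to staging from mass storage (tape)


caches = {"FNAL": {"f1", "f2"}, "Lyon": {"f2"}, "Lancaster": set()}
cost = {("FNAL", "Lancaster"): 10, ("Lyon", "Lancaster"): 3}
print(choose_source("f2", "Lancaster", caches, cost))  # Lyon
print(choose_source("f3", "Lancaster", caches, cost))  # MSS
```

Replicating from a nearby cache rather than always going back to FNAL's tape store is the point of the cache-station network; the cost numbers here are made up.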

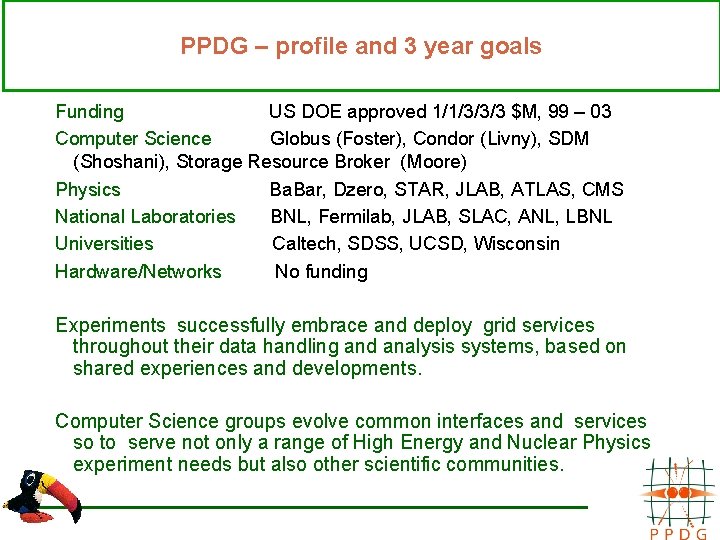

PPDG: profile and 3-year goals

- Funding: US DOE approved $1M/$1M/$3M/$3M/$3M, 1999-2003.
- Computer science: Globus (Foster), Condor (Livny), SDM (Shoshani), Storage Resource Broker (Moore).
- Physics: BaBar, Dzero, STAR, JLAB, ATLAS, CMS.
- National laboratories: BNL, Fermilab, JLAB, SLAC, ANL, LBNL.
- Universities: Caltech, SDSS, UCSD, Wisconsin.
- Hardware/networks: no funding.

Goals:
- Experiments successfully embrace and deploy grid services throughout their data handling and analysis systems, based on shared experiences and developments.
- Computer science groups evolve common interfaces and services so as to serve not only a range of High Energy and Nuclear Physics experiment needs but also other scientific communities.
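Reading the funding profile “1/1/3/3/3 $M, 99 – 03” as yearly amounts for 1999 through 2003, the total approved DOE funding over the five years is:

$$
\$1\text{M} + \$1\text{M} + \$3\text{M} + \$3\text{M} + \$3\text{M} = \$11\text{M}
$$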

PPDG main areas of work

Extending Grid services:
- Storage resource management and interfacing.
- Robust file replication and information services.
- Intelligent job and resource management.
- System monitoring and information capture.

End-to-end applications:
- Experiments' data handling systems, in use now and in the near future, to give real-world requirements, testing and feedback.
- Error reporting and response.
- Fault-tolerant integration of complex components.

Cross-project activities:
- Authentication and certificate authorisation and exchange.
- European Data Grid common project for data transfer (Grid Data Management Pilot).
- SC 2001 demo with GriPhyN.
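The “robust file replication” item largely comes down to retrying failed transfers without overloading the network. A common technique is retry with exponential backoff and random jitter, sketched below; the `transfer` callable, the `TransferError` exception and the default limits are hypothetical, and the slide does not say PPDG used this exact scheme.

```python
import random
import time


class TransferError(Exception):
    """Raised by a transfer function when a copy attempt fails."""


def replicate_with_retry(transfer, source, dest, max_attempts=5,
                         base_delay=1.0, sleep=time.sleep):
    """Copy source -> dest, retrying failed attempts with exponential backoff.

    `transfer` is any callable (e.g. a wrapper around bbftp or scp) that
    raises TransferError on failure. Returns the number of attempts used.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            transfer(source, dest)
            return attempt
        except TransferError:
            if attempt == max_attempts:
                raise  # give up and report the error upstream
            # Wait base_delay * 2**(attempt - 1), plus random jitter so that
            # many clients do not retry in lock-step.
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))


# Usage: a transfer that fails twice, then succeeds (sleep disabled here).
failures = iter([True, True, False])

def flaky(src, dst):
    if next(failures):
        raise TransferError("connection reset")

attempts = replicate_with_retry(flaky, "fnal:/a", "lyon:/a", sleep=lambda s: None)
# attempts == 3
```

Passing `sleep` as a parameter keeps the function testable without real waits.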

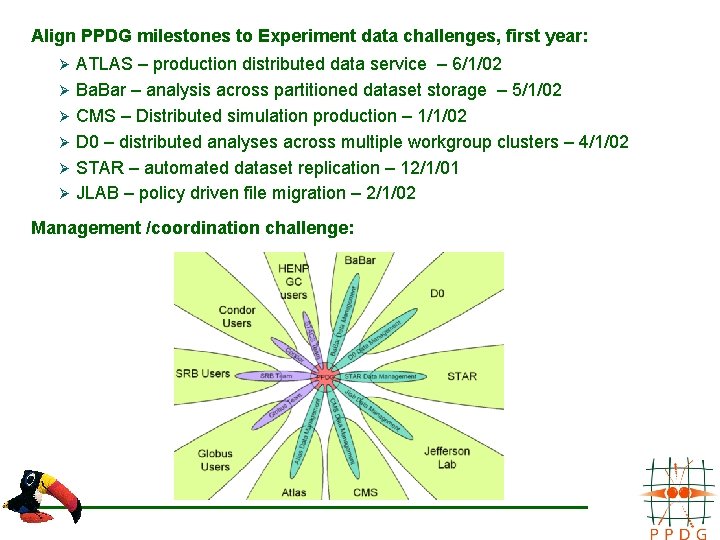

Align PPDG milestones to experiment data challenges, first year:
- ATLAS: production distributed data service, 6/1/02
- BaBar: analysis across partitioned dataset storage, 5/1/02
- CMS: distributed simulation production, 1/1/02
- D0: distributed analyses across multiple workgroup clusters, 4/1/02
- STAR: automated dataset replication, 12/1/01
- JLAB: policy-driven file migration, 2/1/02

Management/coordination challenge:

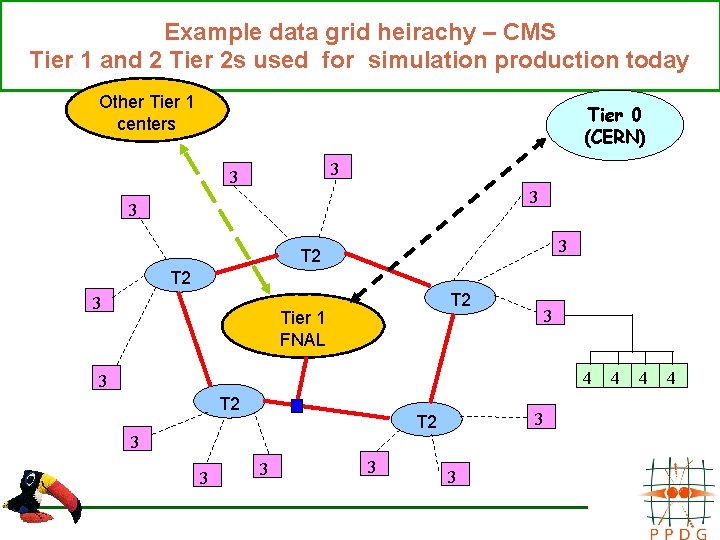

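Example data grid hierarchy: CMS Tier 1 and 2

The diagram shows Tier 0 (CERN) at the top, the Tier 1 centers (FNAL and other Tier 1 centers) below it, Tier 2 (T2) centers under each Tier 1, and Tier 3 and Tier 4 sites below those. Tier 2s are used for simulation production today.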
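Data lookup in a tiered hierarchy like this can be sketched as walking up from a site toward Tier 0 until a site holding the dataset is found. The Tier 2 site name, the `PARENT` map and the dataset labels are hypothetical; this illustrates the shape of the hierarchy, not how CMS actually located data.

```python
# Hypothetical tier tree: each site points to its parent (Tier 0 has none).
PARENT = {
    "T2-a": "FNAL",  # a Tier 2 center under the FNAL Tier 1
    "FNAL": "CERN",  # Tier 1 under Tier 0
    "CERN": None,    # Tier 0
}


def find_dataset(site, dataset, holdings, parent=PARENT):
    """Walk up the hierarchy from `site` until a site holding `dataset` is found.

    Returns the list of sites visited, ending at the one that holds the data,
    or None if not even Tier 0 has it.
    """
    path = []
    while site is not None:
        path.append(site)
        if dataset in holdings.get(site, set()):
            return path
        site = parent[site]
    return None


holdings = {"CERN": {"raw"}, "FNAL": {"sim", "reco"}, "T2-a": {"sim"}}
print(find_dataset("T2-a", "reco", holdings))  # ['T2-a', 'FNAL']
print(find_dataset("T2-a", "raw", holdings))   # ['T2-a', 'FNAL', 'CERN']
```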

PPDG views cross-project coordination as important:

Other Grid projects in our field:
- GriPhyN: Grid Physics Network
- European DataGrid
- Storage Resource Management collaboratory
- HENP Data Grid Coordination Committee

Deployed systems:
- ATLAS, BaBar, CMS, D0, STAR, JLAB experiment data handling systems
- iVDGL: International Virtual Data Grid Laboratory
- Use DTF computational facilities?

Standards committees:
- Internet2 High Energy and Nuclear Physics Working Group
- Global Grid Forum
