Data Center TCP (DCTCP)
Mohammad Alizadeh, Albert Greenberg, David A. Maltz, Jitendra Padhye, Parveen Patel, Balaji Prabhakar, Sudipta Sengupta, Murari Sridharan
Presented and Adapted by Yikai Lin
EECS 582 – W 16

Outline
1. Problem Statement
2. Design Goals
3. DCTCP Algorithm
4. Analysis
5. Evaluation
6. Conclusions
7. Q&A

Data Center Packet Transport
• Cloud computing service providers
• Amazon, Microsoft, Google
• Transport inside the DC
• TCP rules (99.9% of traffic)
• How’s TCP doing?

TCP in the Data Center
• We’ll see that TCP does not meet the demands of apps.
• Incast
  ‒ Suffers from bursty packet drops.
• Builds up large queues:
  ‒ Adds significant latency.
  ‒ Wastes precious buffers, especially bad with shallow-buffered switches.
• Operators work around TCP problems.
  ‒ Ad-hoc, inefficient, often expensive solutions
• “Our” solution: Data Center TCP

Case Study: Microsoft Bing
• Measurements from a 6000-server production cluster
• Instrumentation passively collects logs
  ‒ Application-level
  ‒ Socket-level
  ‒ Selected packet-level
• More than 150 TB of compressed data over a month

![Workloads](http://slidetodoc.com/presentation_image/3ce860dddf3b1a08d0de6f84bece8e7c/image-6.jpg)

Workloads
• Partition/Aggregate (Query): delay-sensitive
• Short messages [50 KB–1 MB] (Coordination, Control state): delay-sensitive
• Large flows [1 MB–50 MB] (Data update): throughput-sensitive

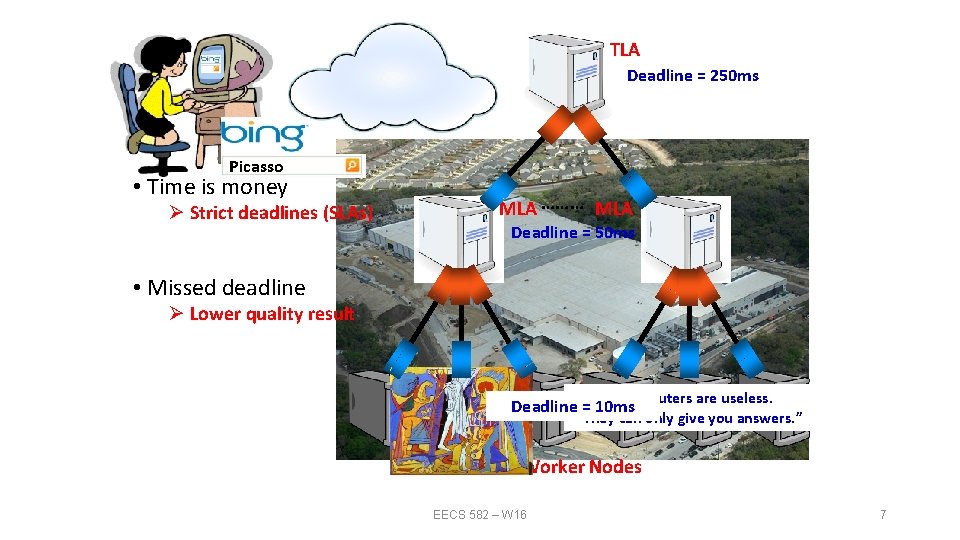

[Figure: Partition/Aggregate tree for the example query “Picasso”. A top-level aggregator (TLA, deadline = 250 ms) fans out to mid-level aggregators (MLA, deadline = 50 ms), which fan out to worker nodes (deadline = 10 ms); the workers return Picasso quotes as results.]
• Time is money
  ‒ Strict deadlines (SLAs)
• Missed deadline
  ‒ Lower quality result

Impairments
• Incast
• Queue Buildup
• Buffer Pressure

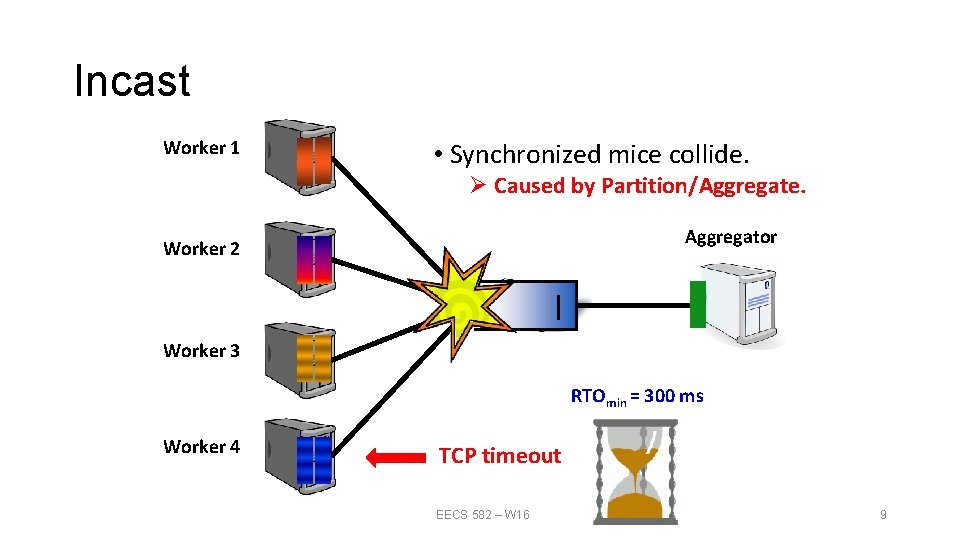

Incast
• Synchronized mice collide.
  ‒ Caused by Partition/Aggregate.
[Figure: Workers 1–4 respond to one Aggregator at the same time; the result is a TCP timeout (RTOmin = 300 ms).]

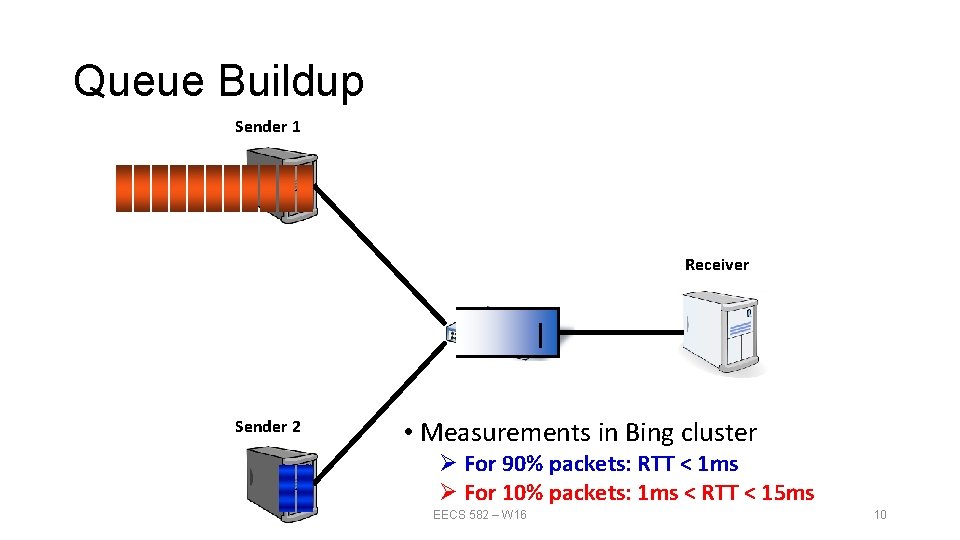

Queue Buildup
• Measurements in Bing cluster
  ‒ For 90% of packets: RTT < 1 ms
  ‒ For 10% of packets: 1 ms < RTT < 15 ms
[Figure: Sender 1 and Sender 2 share a switch queue toward one Receiver.]

Data Center Transport Requirements
1. High Burst Tolerance: Incast due to Partition/Aggregate is common.
2. Low Latency: short flows, queries
3. High Throughput: large file transfers

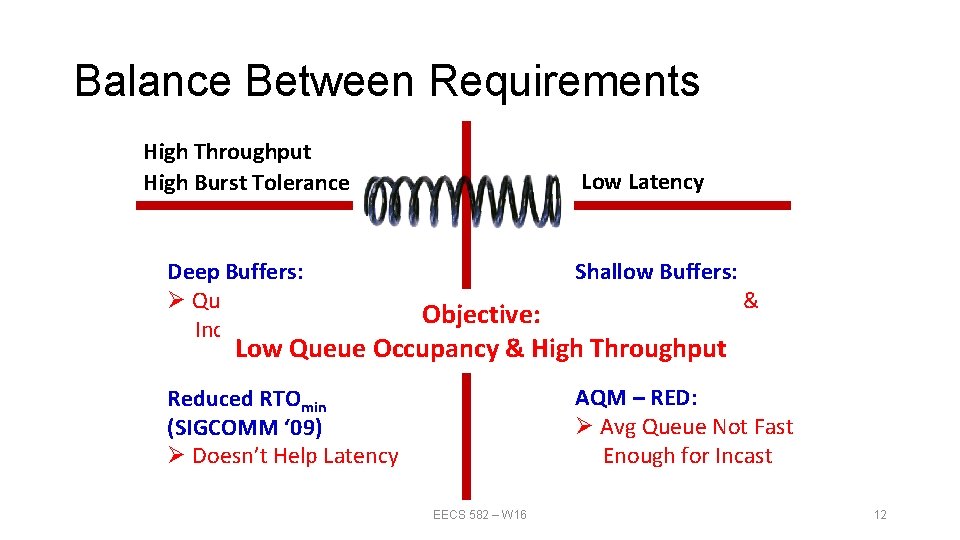

Balance Between Requirements
[Figure: trade-off among High Throughput, High Burst Tolerance, and Low Latency.]
• Deep Buffers:
  ‒ Queuing delays increase latency
• Shallow Buffers:
  ‒ Bad for bursts & throughput
• Reduced RTOmin (SIGCOMM ’09):
  ‒ Doesn’t help latency
• AQM – RED:
  ‒ Average queue not fast enough for Incast
• Objective: low queue occupancy & high throughput → DCTCP

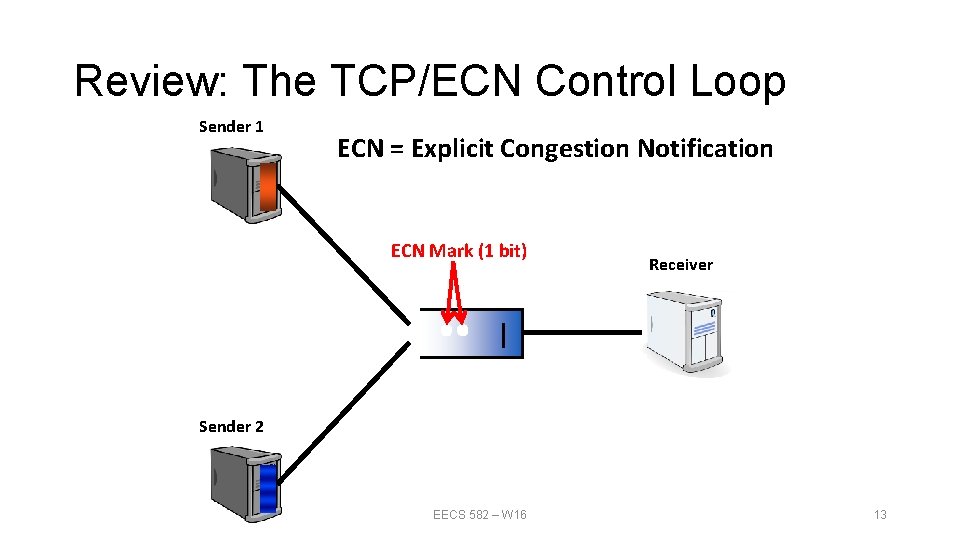

Review: The TCP/ECN Control Loop
• ECN = Explicit Congestion Notification
[Figure: Sender 1 and Sender 2 share a switch toward the Receiver; congestion is signaled with a 1-bit ECN mark.]
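As a refresher on the control loop, the sketch below models the two-bit ECN field defined in RFC 3168. A congested switch sets Congestion Experienced (CE) on an ECN-capable packet instead of dropping it, and the receiver echoes the mark back on its ACK. The names `switch_forward` and `receiver_echo` are hypothetical and only illustrate the idea; they are not taken from any real network stack.

```python
from enum import IntEnum
from typing import Optional


class ECN(IntEnum):
    """Two-bit ECN field in the IP header (RFC 3168)."""
    NOT_ECT = 0b00  # transport is not ECN-capable
    ECT_1 = 0b01    # ECN-capable transport
    ECT_0 = 0b10    # ECN-capable transport
    CE = 0b11       # congestion experienced, set by a switch or router


def switch_forward(ecn: ECN, congested: bool) -> Optional[ECN]:
    """Hypothetical switch: mark ECN-capable packets, drop the rest (None)."""
    if not congested:
        return ecn
    if ecn == ECN.NOT_ECT:
        return None  # without ECN support the only congestion signal is a drop
    return ECN.CE


def receiver_echo(ecn: ECN) -> bool:
    """Receiver sets the ECN-Echo flag on its ACK when the packet carries CE."""
    return ecn == ECN.CE
```

The single bit of feedback per packet is all the sender sees, which is why the way it is interpreted matters so much.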

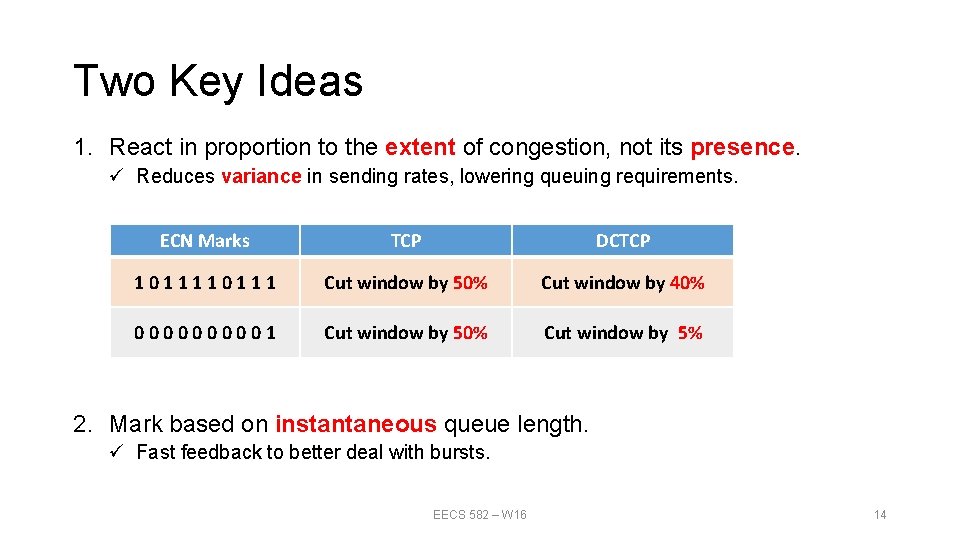

Two Key Ideas
1. React in proportion to the extent of congestion, not its presence.
   ✓ Reduces variance in sending rates, lowering queuing requirements.

| ECN Marks | TCP | DCTCP |
| --- | --- | --- |
| 10111 | Cut window by 50% | Cut window by 40% |
| 000001 | Cut window by 50% | Cut window by 5% |

2. Mark based on instantaneous queue length.
   ✓ Fast feedback to better deal with bursts.
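The first idea can be sketched in a few lines. The example assumes the cut is half of the fraction of marked packets in a window and ignores the running average that DCTCP actually keeps. The two 10-packet mark strings are hypothetical, chosen so that the marked fractions are 80% and 10%.

```python
def tcp_cut(marks: str) -> float:
    """Standard TCP with ECN: any mark in the window halves cwnd."""
    return 0.5 if "1" in marks else 0.0


def dctcp_cut(marks: str) -> float:
    """DCTCP idea: cut by half of the fraction of marked packets."""
    fraction = marks.count("1") / len(marks)
    return fraction / 2


heavy = "1011110111"  # hypothetical window: 8 of 10 packets marked
light = "0000000001"  # hypothetical window: 1 of 10 packets marked
for marks in (heavy, light):
    print(marks, f"TCP cut {tcp_cut(marks):.0%},", f"DCTCP cut {dctcp_cut(marks):.0%}")
```

TCP reacts the same way to both windows, while the proportional rule backs off only slightly when congestion is mild.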

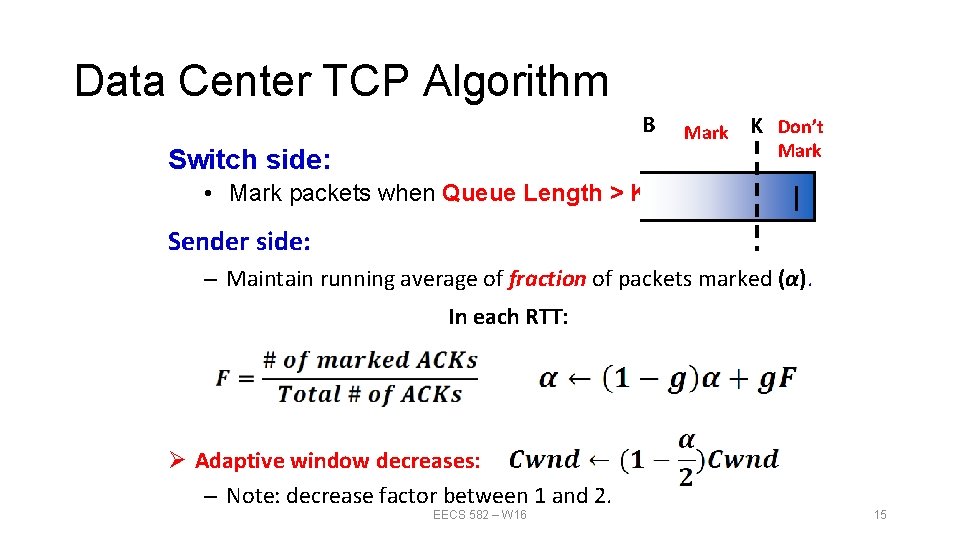

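Data Center TCP Algorithm
Switch side:
• Mark packets when Queue Length > K.
[Figure: switch buffer of size B with marking threshold K; mark above K, don’t mark below.]
Sender side:
• Maintain a running average of the fraction of packets marked (α). In each RTT:
  α ← (1 − g)·α + g·F, where F is the fraction of packets marked in the last RTT and g is the averaging weight.
• Adaptive window decrease:
  cwnd ← cwnd × (1 − α/2)
  ‒ Note: the window is divided by a factor between 1 and 2.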
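A minimal sketch of both sides follows. It assumes the update rules from the DCTCP paper: α ← (1 − g)·α + g·F once per RTT, and cwnd ← cwnd × (1 − α/2) when marks were seen. The class and function names, the starting window, and the per-RTT calling convention are illustrative assumptions. Slow start, loss recovery, and delayed ACKs are left out.

```python
from dataclasses import dataclass


def switch_marks(queue_len: float, k: float) -> bool:
    """Switch side: mark when the instantaneous queue length exceeds K."""
    return queue_len > k


@dataclass
class DctcpSender:
    cwnd: float = 10.0   # congestion window in packets (illustrative start value)
    alpha: float = 0.0   # running estimate of the fraction of marked packets
    g: float = 1 / 16    # averaging weight (assumed setting)

    def on_rtt_end(self, acked: int, marked: int) -> None:
        """Update alpha once per RTT, then shrink or grow the window."""
        frac = marked / acked if acked else 0.0
        self.alpha = (1 - self.g) * self.alpha + self.g * frac
        if marked:
            # Proportional decrease: divide cwnd by a factor between 1 and 2.
            self.cwnd = max(1.0, self.cwnd * (1 - self.alpha / 2))
        else:
            # No congestion signal: additive increase of one packet per RTT.
            self.cwnd += 1.0


sender = DctcpSender()
sender.on_rtt_end(acked=10, marked=0)    # no marks: cwnd grows by one packet
sender.on_rtt_end(acked=11, marked=11)   # all marked: alpha moves toward 1 by g
print(sender.cwnd, sender.alpha)
```

Because α moves slowly, one fully marked RTT cuts the window only slightly; the cut approaches TCP's 50% only under sustained congestion.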

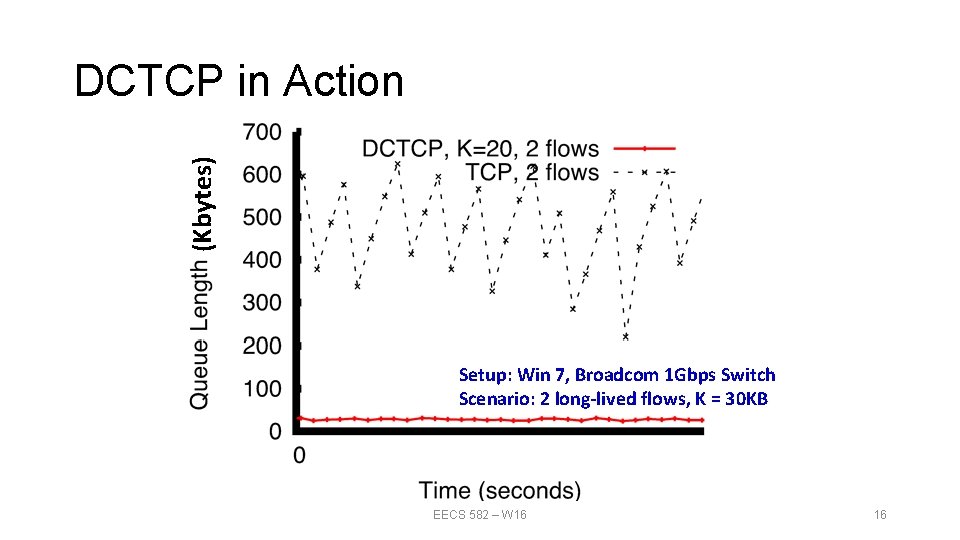

DCTCP in Action
• Setup: Win 7, Broadcom 1 Gbps Switch
• Scenario: 2 long-lived flows, K = 30 KB
[Figure: plot with axis label “(Kbytes)”.]

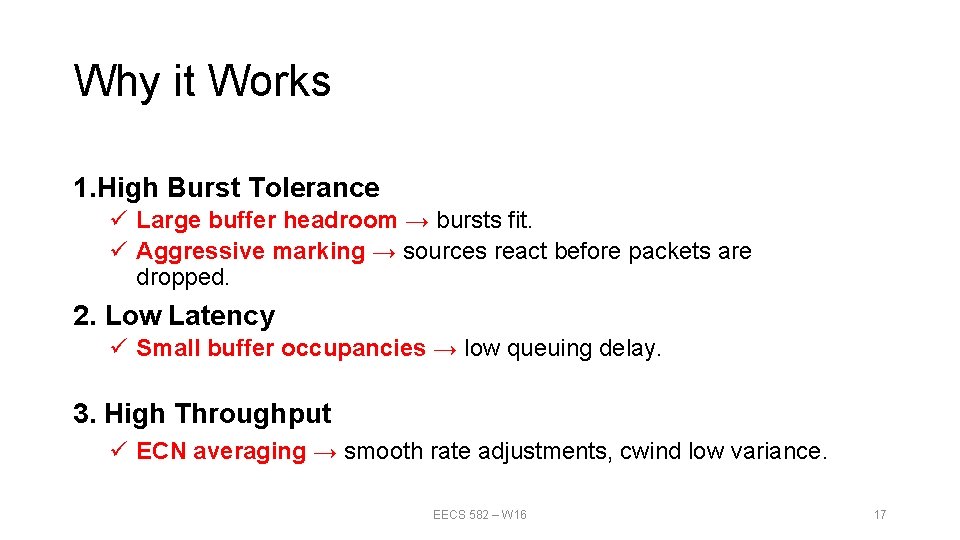

Why it Works
1. High Burst Tolerance
   ✓ Large buffer headroom → bursts fit.
   ✓ Aggressive marking → sources react before packets are dropped.
2. Low Latency
   ✓ Small buffer occupancies → low queuing delay.
3. High Throughput
   ✓ ECN averaging → smooth rate adjustments, low variance in cwnd.

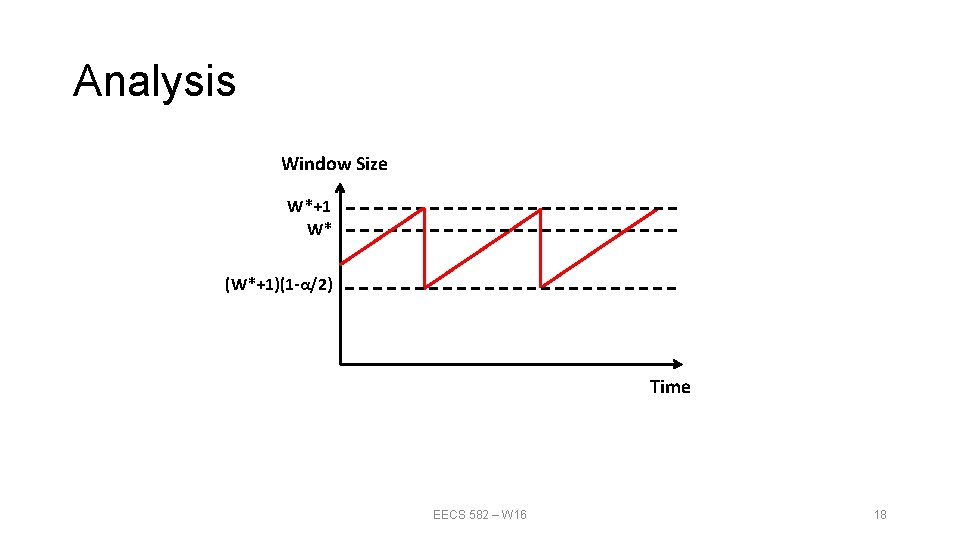

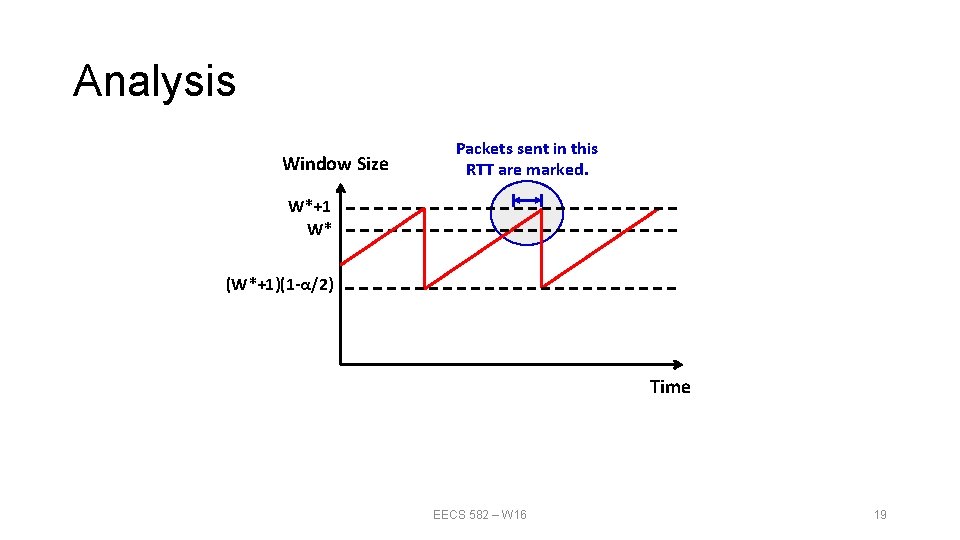

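Analysis
[Figure: sawtooth of Window Size over Time, rising to W*+1 and then dropping to (W*+1)(1 − α/2); W* is marked on the window axis.]
• Packets sent in the RTT in which the window grows from W* to W*+1 are marked.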
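The sawtooth determines α. The sketch below follows the steady-state argument in the DCTCP paper as I understand it. Over one period the window grows by one packet per RTT from (W*+1)(1 − α/2) to W*+1, and only the packets of the last RTT are marked. Setting α to marked packets over total packets gives α²(1 − α/4) = (2W* + 1)/(W* + 1)², which is roughly α ≈ √(2/W*) for large W*. The function name and the bisection solver are illustrative choices.

```python
import math


def steady_state_alpha(w_star: float) -> float:
    """Solve alpha^2 * (1 - alpha/4) = (2W* + 1) / (W* + 1)^2 by bisection."""
    target = (2 * w_star + 1) / (w_star + 1) ** 2
    lo, hi = 0.0, 1.0
    for _ in range(60):
        mid = (lo + hi) / 2
        # The left-hand side is increasing in alpha on [0, 1].
        if mid * mid * (1 - mid / 4) < target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


for w_star in (10, 100, 1000):
    exact = steady_state_alpha(w_star)
    approx = math.sqrt(2 / w_star)  # small-alpha approximation
    print(f"W*={w_star}: alpha={exact:.3f}, sqrt(2/W*)={approx:.3f}")
```

Larger windows mean a smaller α, so each cut is gentler and the queue oscillates less.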

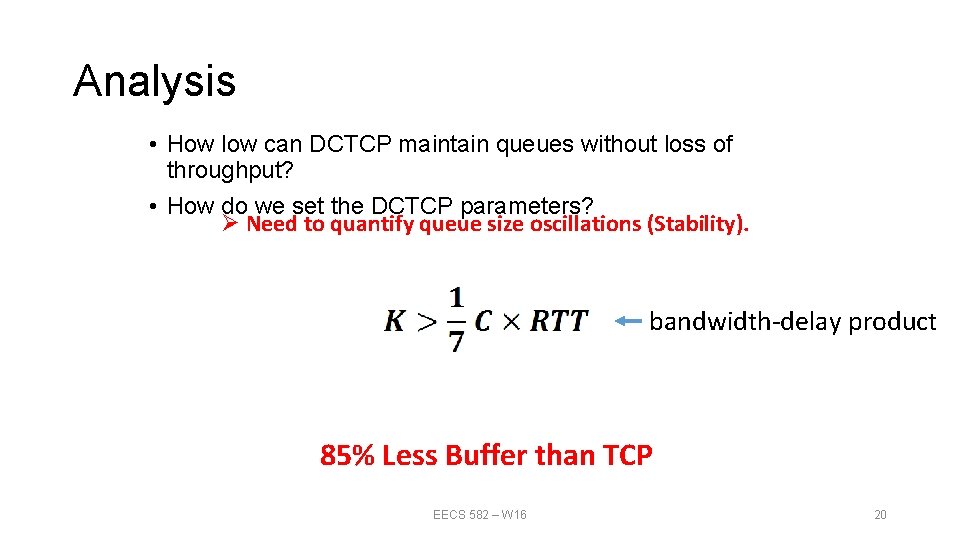

Analysis
• How low can DCTCP maintain queues without loss of throughput?
• How do we set the DCTCP parameters?
  ‒ Need to quantify queue size oscillations (Stability).
• Result, relative to the bandwidth-delay product: 85% less buffer than TCP
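For the parameter question, the DCTCP paper gives the guideline K > C × RTT / 7, about one seventh of the bandwidth-delay product, which is consistent with the roughly 85% buffer saving quoted on the slide. The sketch below evaluates that bound; the 100 µs RTT and 1500-byte packet size are assumed values for illustration, and the function name is hypothetical.

```python
def min_marking_threshold(link_bps: float, rtt_s: float, pkt_bytes: int = 1500) -> float:
    """Lower bound on K in packets, from K > (C * RTT) / 7."""
    bdp_packets = link_bps * rtt_s / (8 * pkt_bytes)  # bandwidth-delay product
    return bdp_packets / 7


for gbps in (1, 10):
    k = min_marking_threshold(gbps * 1e9, rtt_s=100e-6)  # RTT is an assumed value
    print(f"{gbps} Gbps: K > {k:.1f} packets")
```

This is only a lower bound from the fluid analysis; bursty traffic may call for a larger K in practice.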

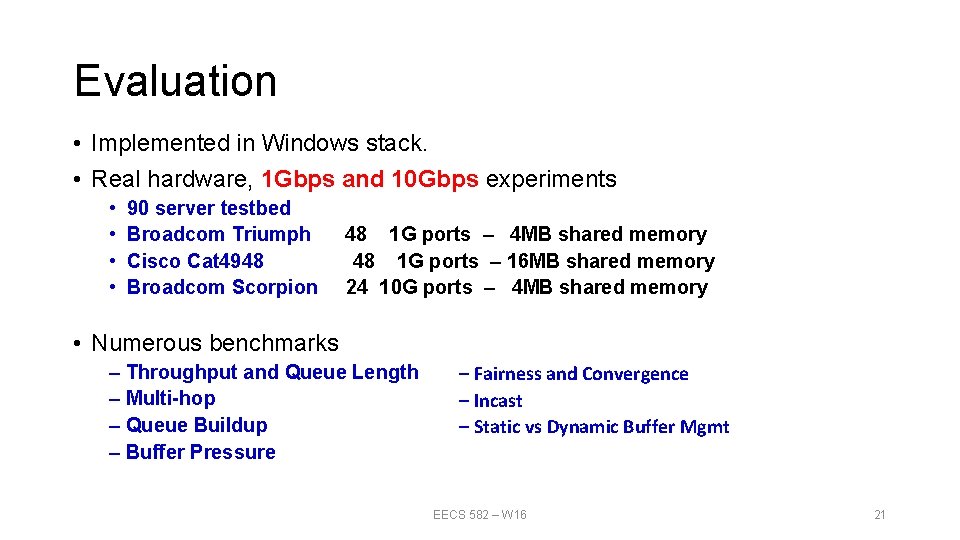

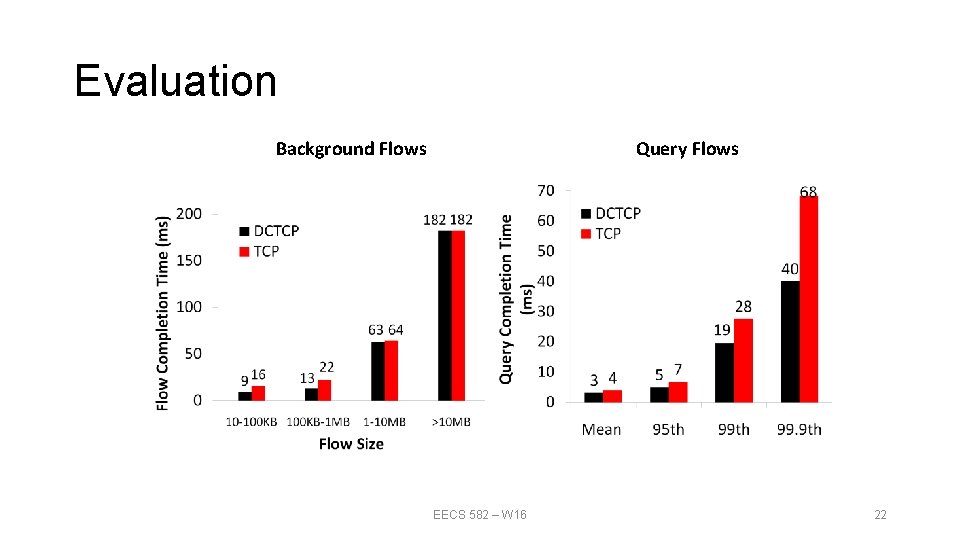

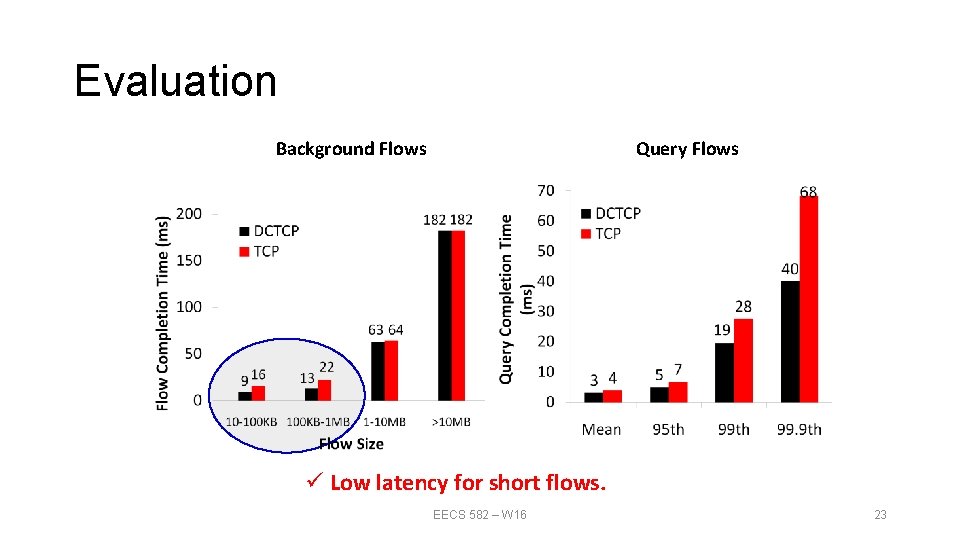

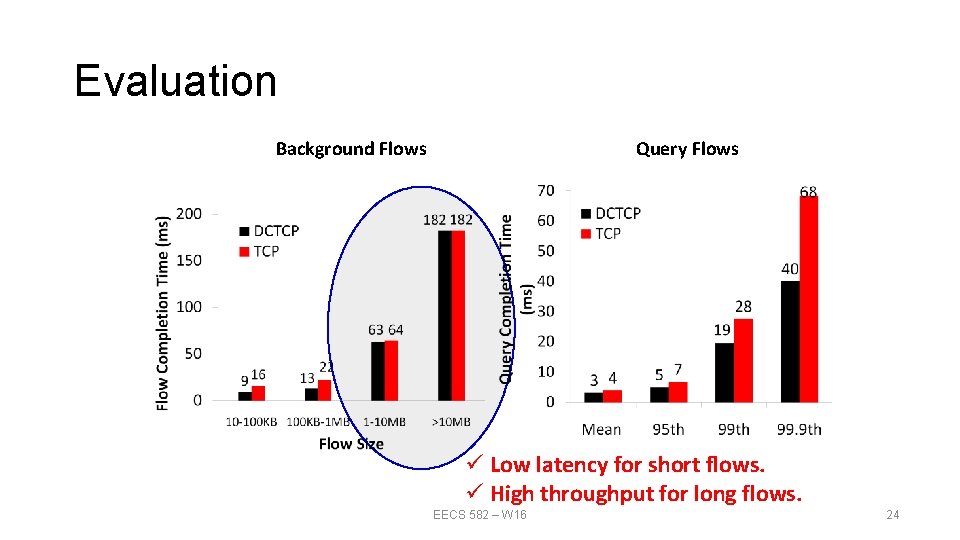

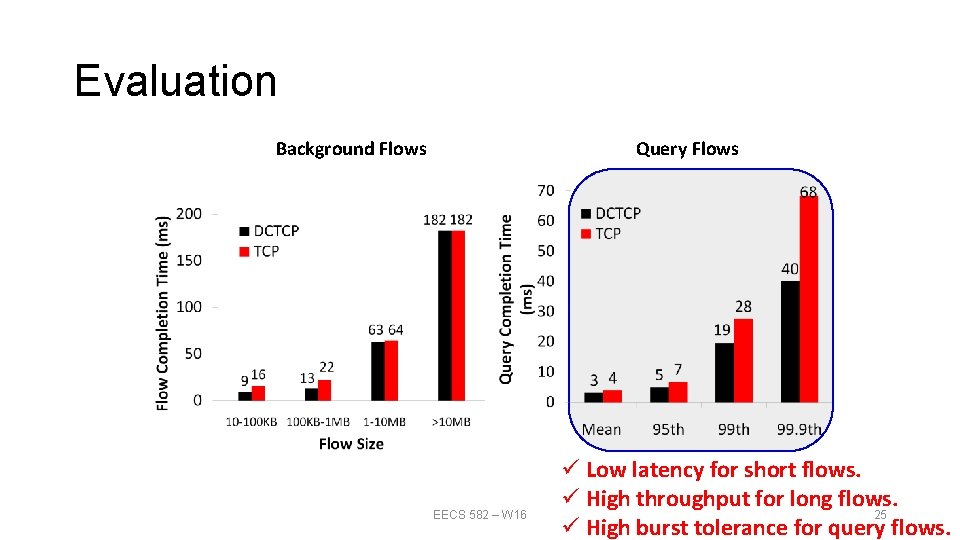

Evaluation
• Implemented in Windows stack.
• Real hardware, 1 Gbps and 10 Gbps experiments
  ‒ 90 server testbed
  ‒ Broadcom Triumph: 48 1G ports, 4 MB shared memory
  ‒ Cisco Cat 4948: 48 1G ports, 16 MB shared memory
  ‒ Broadcom Scorpion: 24 10G ports, 4 MB shared memory
• Numerous benchmarks
  ‒ Throughput and Queue Length
  ‒ Multi-hop
  ‒ Queue Buildup
  ‒ Buffer Pressure
  ‒ Fairness and Convergence
  ‒ Incast
  ‒ Static vs Dynamic Buffer Mgmt

Evaluation
[Figure: results for Background Flows and Query Flows.]
✓ Low latency for short flows.
✓ High throughput for long flows.
✓ High burst tolerance for query flows.

Conclusions
• DCTCP satisfies all our requirements for Data Center packet transport.
  ✓ Handles bursts well
  ✓ Keeps queuing delays low
  ✓ Achieves high throughput
• Features:
  ✓ Very simple change to TCP and a single switch parameter K.
  ✓ Based on ECN mechanisms already available in commodity switches.

Discussions
1. Will DCTCP perform worse on the Internet?
2. How about SDN using fine-grained TE? OpenTCP?
3. Not compatible with SACK, which reduces the number of ACKs
4. Does convergence really not matter?
5. RTT-fairness (favors small-RTT flows)? See “Analysis of DCTCP: Stability, Convergence, and Fairness”

Q&A
