DATA AND MEASUREMENT Tuesday, August 27

BRIEF REVIEW • Research design: how we go about answering research questions • Hypotheses (predictions) often written as statistical models • Models always include error • Types of error: • Sampling error • Randomization error • Measurement error: systematic or random

OPERATIONALIZING CONCEPTS • Concepts: idea or mental construct representing some phenomenon in the real world • Examples: democracy, justice, liberalism, consumerism • Often have to define the concept itself before we can move forward with research • Key characteristics and who they apply to • Example: what do we mean by “justice”?

OPERATIONALIZING CONCEPTS • Cannot stop with a definition of the concept; also need to figure out how it applies to our research • Operationalization: how we intend to measure the concepts involved in our research question • Make sure it captures the key elements you described for your concept • Determines what data we are going to collect and how we will perform our analysis

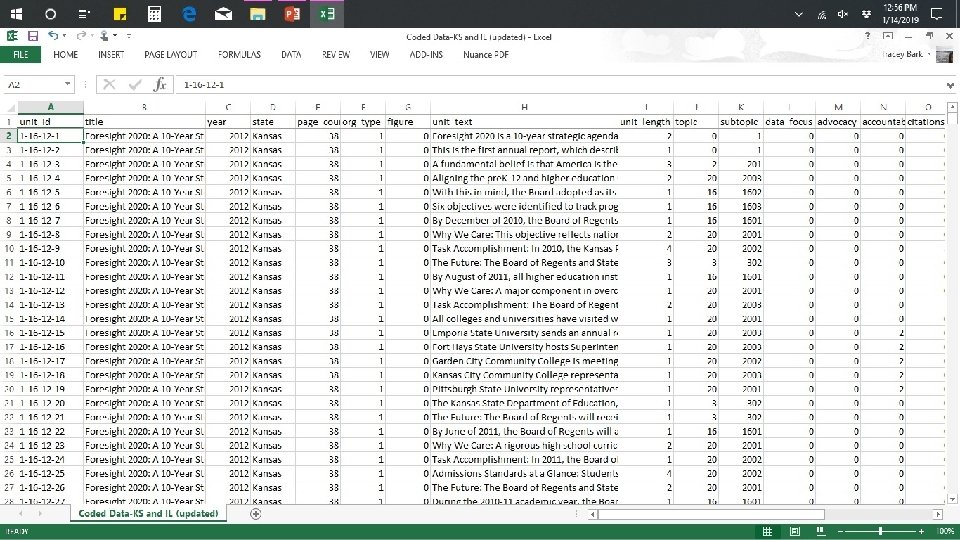

WHAT DATA ARE AND AREN’T • Data are… • Measurements of a concept • Raw numbers or text in a spreadsheet • Data are not… • Graphics or tables • Preprocessed in any way

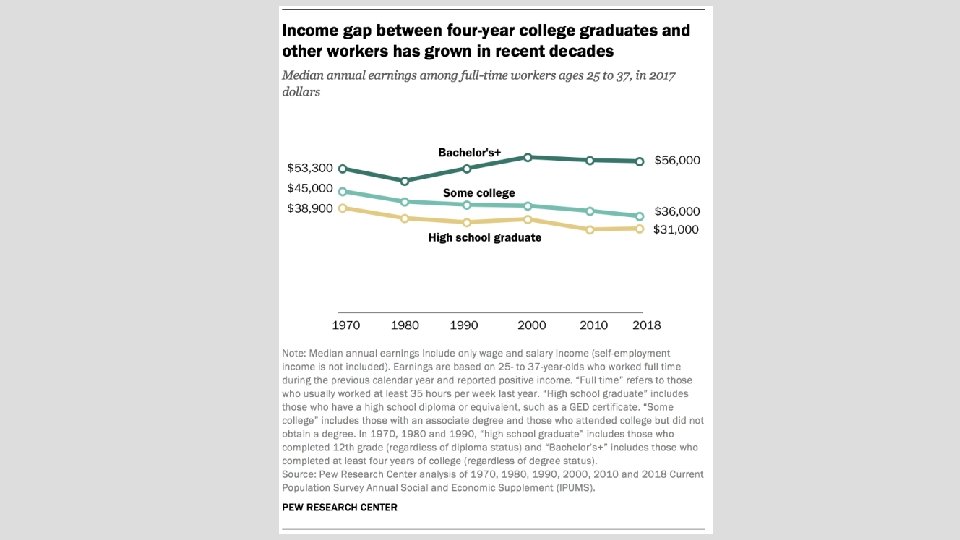

USING DATA • Subsetting: limiting a dataset to just what is useful for a particular purpose • Result is known as a “subset” • Aggregating: combines or summarizes individual observations for assessment at a higher level • Analyzing: calculating statistics and examining relationships within or between variables • Visualizing: representing the data or its characteristics in a visual summary • Can be in the form of a table, graphic, chart, etc.
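The first two operations above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed workflow: the `rows` records, the `state`, `age`, and `approve` fields, and the under-50 cutoff are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey data: one row per respondent (individual level).
rows = [
    {"state": "OH", "age": 34, "approve": 1},
    {"state": "OH", "age": 61, "approve": 0},
    {"state": "TX", "age": 45, "approve": 1},
    {"state": "TX", "age": 29, "approve": 1},
    {"state": "TX", "age": 52, "approve": 0},
]

# Subsetting: keep only the respondents useful for a particular purpose.
under_50 = [r for r in rows if r["age"] < 50]

# Aggregating: summarize individual observations at a higher level (state).
approve_by_state = defaultdict(list)
for r in rows:
    approve_by_state[r["state"]].append(r["approve"])
approval_share = {state: mean(vals) for state, vals in approve_by_state.items()}
```

Here `under_50` is the subset, and `approval_share` holds one aggregated value per state rather than one value per respondent.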

SAMPLES VS. POPULATIONS • Population: every possible unit within the group you’re studying • Ex: every adult in America, every country in the world • Sample: subset of the population on which we’ve collected data • Ex: people who responded to our survey, countries that have collected the type of data we’re using • Most research is done on samples for feasibility purposes
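To make the distinction concrete, the sketch below draws a simple random sample from a simulated population and compares the two means. The population (10,000 simulated ages), the sample size of 100, and the seed are assumptions chosen only for illustration.

```python
import random
from statistics import mean

random.seed(27)  # fixed seed so the illustration is repeatable

# Hypothetical population: an age for every unit in the group being studied.
population = [random.gauss(45, 15) for _ in range(10_000)]

# Sample: the subset of units we actually collected data on.
sample = random.sample(population, 100)

population_mean = mean(population)
sample_mean = mean(sample)

# The gap between the two is sampling error for this particular draw.
sampling_error = sample_mean - population_mean
```

In real research the population mean is usually unknown, which is why the sample estimate always carries some sampling error.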

VARIABLES • Once you’ve operationalized and measured your concept, it becomes a variable • Empirical measurement of a characteristic • Variable name: title of the characteristic being measured • Example: marital status • What is this person’s _____? • Variable value: one of the possible measurements of the characteristic • Examples: married/divorced/single • Answers to the above question

LABELING VARIABLES • Shortened versions of variable names for easier data entry and analysis • Best approach is to keep them short and not include spaces • Can include underscores between words instead • Goal is to be as descriptive as possible without being wordy • Binary variables use a different system • Usually labelled with one of the two values
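One way to apply these conventions consistently is a small helper that turns a variable name into a label. This is only a sketch: `make_label` is a hypothetical function, and lowercasing is an added assumption the slide does not require.

```python
import re

def make_label(name: str) -> str:
    """Turn a variable name into a short label with no spaces."""
    label = name.strip().lower()
    # Replace every run of spaces or punctuation with a single underscore.
    label = re.sub(r"[^a-z0-9]+", "_", label)
    # Drop any underscore left at the start or end.
    return label.strip("_")

marital_label = make_label("Marital status")
sentence_label = make_label("Length of prison sentence")
```

Long results such as `sentence_label` may still be worth shortening by hand so they stay descriptive without being wordy.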

LEVELS OF MEASUREMENT • How precisely a variable measures the characteristic • Determines what statistics and analyses are appropriate for a given variable • Three major types: • Nominal • Ordinal • Interval/ratio

CATEGORICAL LEVELS OF MEASUREMENT • Nominal: only communicates that differences exist between observations • Cannot be ordered • Categories are simply names of different groups • Example: race/ethnicity • Ordinal: communicates relative differences between observations • Values can be ranked • Does not tell us how much difference there is between categories • Example: like or dislike of something

CONTINUOUS LEVELS OF MEASUREMENT • Interval: communicates exact differences between observations • Values can be added and subtracted • Example: temperature in degrees Celsius or Fahrenheit • Ratio: specific type of interval variable where zero signifies an absence of the characteristic being measured • Values can be multiplied and divided; proportions between them are accurate and meaningful • Example: age
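Because the level of measurement determines which statistics are appropriate, a summary routine can branch on it. The sketch below uses a common textbook convention (mode for nominal, median for ordinal, mean for interval/ratio); that mapping, the `summarize` function, and the example values are illustrative assumptions rather than rules stated in the slides.

```python
from statistics import mean, median_low, mode

def summarize(values, level):
    """Return a central-tendency statistic suited to the level of measurement."""
    if level == "nominal":
        # Categories are only names, so the most common category is the summary.
        return mode(values)
    if level == "ordinal":
        # Values are ranks; the (low) median respects order without
        # assuming equal distances between categories.
        return median_low(values)
    if level in ("interval", "ratio"):
        # Exact differences are meaningful, so the mean is appropriate.
        return mean(values)
    raise ValueError(f"unknown level of measurement: {level}")

party = ["Dem", "Rep", "Ind", "Dem"]   # nominal
liking = [1, 2, 2, 4, 5]               # ordinal, coded 1 (dislike) to 5 (like)
age = [20, 30, 40]                     # ratio

party_summary = summarize(party, "nominal")
liking_summary = summarize(liking, "ordinal")
age_summary = summarize(age, "ratio")
```

Taking the mean of `liking` would run without error, but it would treat the gaps between categories as equal, which an ordinal variable does not guarantee.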

DETERMINING LEVEL OF MEASUREMENT • Gender • Socio-economic status • Political party • Length of prison sentence • Religious denomination • Income • Generation • SAT scores

TYPES OF VARIABLES • Dependent: variable you are trying to explain • Commonly thought of as the “effect” variable • Usually written in models as Y • Independent: variable(s) expected to explain a change in the dependent variable • Remember, you can have more than one independent variable at a time • Commonly thought of as the “cause” variable • Usually written in models as X and/or Z
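As a sketch of how these pieces fit together in a model, assuming a simple linear form (the coefficients $\beta$ and the error term $\varepsilon$ are notation introduced here, not taken from the slides):

```latex
Y_i = \beta_0 + \beta_1 X_i + \beta_2 Z_i + \varepsilon_i
```

Here $Y_i$ is the dependent variable for observation $i$, $X_i$ and $Z_i$ are independent variables, and $\varepsilon_i$ is the error that every statistical model includes.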

TYPES OF VARIABLES • Binary: nominal variables with only two categories • Examples: gender, yes/no survey questions • Dummy variables: • Especially useful for regression analysis • Also called indicators • Coded to indicate the presence (1) or absence (0) of a characteristic
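Dummy coding can be sketched as follows. The `dummy` and `dummy_set` helpers and the marital status values are hypothetical and only illustrate the 1/0 presence/absence coding described above.

```python
def dummy(values, category):
    """Code 1 where the characteristic is present, 0 where it is absent."""
    return [1 if v == category else 0 for v in values]

def dummy_set(values):
    """Build one indicator variable per category of a nominal variable."""
    return {c: dummy(values, c) for c in sorted(set(values))}

marital_status = ["married", "single", "divorced", "married"]

married = dummy(marital_status, "married")
indicators = dummy_set(marital_status)
```

In a regression, one of the indicators is typically left out as the reference category so the remaining dummies are interpreted relative to it.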

UNIT OF ANALYSIS • Entity being described or analyzed in a study • Can be at the individual or aggregate level • Individual level: lowest possible level at which to measure a concept • Example: people responding to a survey • Aggregate level: collection of individual entities • Example: national opinion polls • Important for discussing results of your analysis

ECOLOGICAL FALLACY • Occurs when aggregate-level phenomena or data are used to make inferences at the individual level • Example: You read an article showing that states with a greater proportion of citizens who smoke spend less on healthcare than states with a lower proportion of citizens who smoke. The article concludes people who smoke have lower healthcare costs and must be healthier than people who do not smoke.
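The smoking example can be reproduced with made-up numbers. In the sketch below, every record is invented for illustration: state A has more smokers and lower average costs than state B, yet within each state smokers cost more than non-smokers.

```python
from statistics import mean

# Hypothetical individual-level records: (state, smokes, healthcare_cost).
people = (
    [("A", True, 5)] * 6 + [("A", False, 3)] * 4
    + [("B", True, 9)] * 2 + [("B", False, 7)] * 8
)

def state_summary(state):
    """Aggregate level: smoking rate and average cost for one state."""
    rows = [p for p in people if p[0] == state]
    smoking_rate = mean([1 if smokes else 0 for _, smokes, _ in rows])
    avg_cost = mean([cost for _, _, cost in rows])
    return smoking_rate, avg_cost

def cost_by_smoking(state):
    """Individual level: average cost of smokers vs. non-smokers in a state."""
    smokers = mean([c for s, smokes, c in people if s == state and smokes])
    non_smokers = mean([c for s, smokes, c in people if s == state and not smokes])
    return smokers, non_smokers

rate_a, cost_a = state_summary("A")
rate_b, cost_b = state_summary("B")
smokers_a, non_smokers_a = cost_by_smoking("A")
smokers_b, non_smokers_b = cost_by_smoking("B")
```

Comparing only `(rate_a, cost_a)` with `(rate_b, cost_b)` suggests smoking goes with lower costs, while the individual-level comparison inside each state points the other way. Inferring individual behavior from the state-level pattern is the ecological fallacy.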

RELIABILITY • Extent to which a measurement is consistent • Reliable measure should not change if repeated • Increasing reliability means decreasing random measurement error • Can be evaluated over time (apply measure at multiple points) or internally (two halves of the sample should produce consistent results)
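One common way to evaluate reliability over time is to correlate scores from two administrations of the same measure. The sketch below assumes a Pearson correlation as the consistency statistic (the slides do not name one), and the `time1` and `time2` scores are invented.

```python
from math import sqrt
from statistics import mean

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

# Hypothetical scores for the same five respondents at two points in time.
time1 = [12, 15, 9, 20, 17]
time2 = [13, 14, 10, 19, 18]

test_retest_r = pearson_r(time1, time2)
```

A value of `test_retest_r` close to 1 indicates the measure gives consistent results when repeated. The same function could be applied to scores from two halves of a sample or instrument for an internal check.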

VALIDITY • Extent to which measurement is accurate • Valid measure includes characteristic you’re interested in without including any unintended characteristics • Increasing validity requires minimizing both types of measurement error (systematic and random)
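The difference between the two types of measurement error can be seen in a small simulation. Everything here is assumed for illustration: the true value of 50, a systematic bias of 3, random noise with standard deviation 2, and the seed.

```python
import random
from statistics import mean

random.seed(27)  # fixed seed so the illustration is repeatable

TRUE_VALUE = 50.0   # the characteristic we are trying to measure
BIAS = 3.0          # systematic error: pushes every measurement the same way
NOISE_SD = 2.0      # random error: scatters measurements in both directions
N = 10_000

# Measurements with random error only.
random_only = [TRUE_VALUE + random.gauss(0, NOISE_SD) for _ in range(N)]

# Measurements with both systematic and random error.
with_bias = [TRUE_VALUE + BIAS + random.gauss(0, NOISE_SD) for _ in range(N)]

mean_random_only = mean(random_only)
mean_with_bias = mean(with_bias)
```

Averaged over many measurements, the random error largely cancels and `mean_random_only` lands near the true value, while `mean_with_bias` stays off by roughly the bias no matter how many measurements are taken.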

TYPES OF VALIDITY • Face validity: using informed judgment to decide whether operationalization measures what it should • Example: measuring running ability by mile times • Construct validity: operationalization is compared to other variables to which it should be related • Example: relationship of SAT and ACT scores to college GPAs

TYPES OF VALIDITY • Internal validity: effects of the independent variable (IV) on the dependent variable (DV) are isolated, no room for rival explanations • External validity: findings of a study can be applied to the outside world • Also called generalizability • Tradeoff exists between these two • These types most commonly discussed in experimental settings

EXAMPLE 1: FLAWED RESEARCH DESIGN • A team of researchers is coding speeches made by the United Nations Secretary General to examine what issues are viewed as most pressing by the UN over time. After coding approximately half of the speeches, the researchers are awarded a grant to work on another project and no longer have time to finish the coding. In order to complete the UN project, they hire a student to finish coding for them. They provide the student with a list of topics to choose from and no further information about the coding process or what to look for. Once the coding is finished, they combine both halves of the data for their analysis. • What is the problem with this research design?

EXAMPLE 2: FLAWED RESEARCH DESIGN • A student is collecting data for a class project. She wants to know whether people with a previous conviction for drug use are more likely to support the sale of marijuana for recreational purposes. To collect data, she decides to conduct an in-person survey and compare the responses from people with no drug convictions to the responses from people with prior drug convictions. After surveying 100 people, the student notices she only has 3 respondents with previous drug convictions in her data. Though surprised, she draws her conclusions based on the data she collected, thinking the small number of respondents with drug convictions means there are simply not many people in her area with previous drug convictions. • What is the problem with this research design?

EXAMPLE 3: FLAWED RESEARCH DESIGN • A researcher is investigating whether Americans have positive or negative views toward subsidized student loans provided by the federal government. Specifically, the researcher is interested in how much these viewpoints change with increases in education. The researcher does not have funding to field a new survey, so instead decides to use data gathered by a national polling organization. The survey does not include a question specifically about respondents’ feelings toward subsidized student loans, so the researcher plans to use the following question as a proxy measure for respondent views: Do high amounts of student loan debt have a negative impact on the U.S. economy? The researcher then tests the effect of increased education on responses to this question. • What is the problem with this research design?
