DAQ and Trigger upgrade U Marconi INFN Bologna

DAQ and Trigger upgrade

U. Marconi, INFN Bologna

Firenze, March 2014

DAQ and Trigger TDR

• TDR planned for June 2014
• Sub-systems involved:
  – Long distance optical fibres
  – Readout boards (PCIe40) and Event Builder Farm
  – The Event Filter Farm for the HLT
  – Firmware, LLT, TFC

Readout System Review

• Held on Feb 25th, 9:00 – 16:00
• 4 reviewers
  – Christoph Schwick (PH/CMD – CMS DAQ)
  – Stefan Haas (PH/ESE)
  – Guido Haefeli (EPFL)
  – Jan Troska (PH/ESE)
• https://indico.cern.ch/event/297003/
• All documents can be found in EDMS: https://edms.cern.ch/document/1357418/1

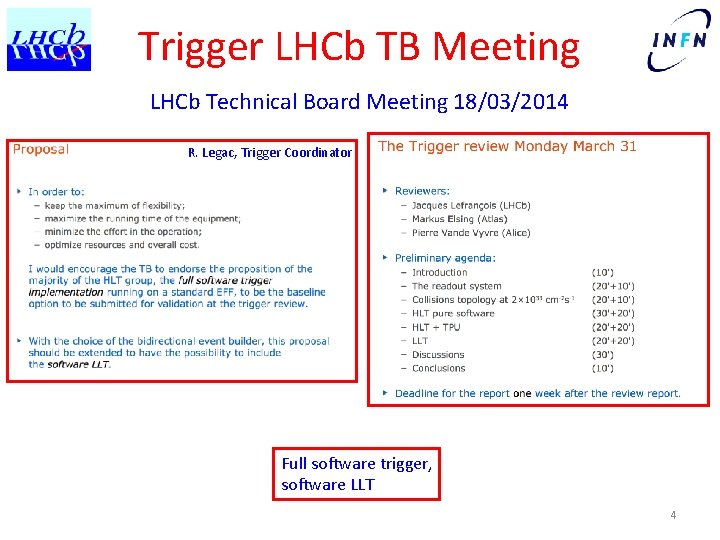

Trigger

LHCb Technical Board Meeting, 18/03/2014

R. Legac, Trigger Coordinator

Full software trigger, software LLT

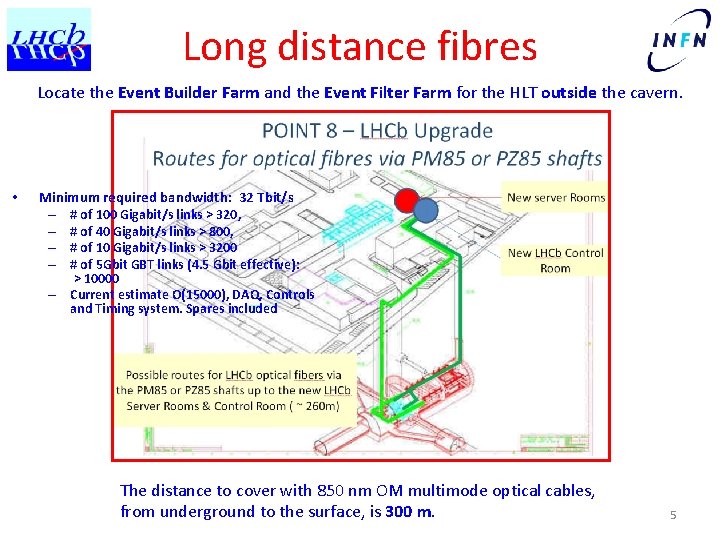

Long distance fibres

Locate the Event Builder Farm and the Event Filter Farm for the HLT outside the cavern.

• Minimum required bandwidth: 32 Tbit/s
  – # of 100 Gbit/s links > 320
  – # of 40 Gbit/s links > 800
  – # of 10 Gbit/s links > 3200
  – # of 5 Gbit/s GBT links (4.5 Gbit/s effective) > 10000
• Current estimate: O(15000) fibres for the DAQ, Controls and Timing system, spares included.
• The distance to cover with 850 nm OM multimode optical cables, from underground to the surface, is 300 m.
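The link counts quoted on this slide follow from dividing the required bandwidth by the rate of a single link. A minimal sketch of that arithmetic is given here; the helper name `min_links` is illustrative and does not come from the slides. For the GBT links the bare minimum at 4.5 Gbit/s effective comes out below the quoted "> 10000". The slides do not spell out the difference, which presumably reflects links that are not fully loaded.

```python
import math

# Minimum required bandwidth from the slide: 32 Tbit/s, expressed in Gbit/s.
REQUIRED_BW_GBIT = 32_000


def min_links(link_rate_gbit: float) -> int:
    """Smallest number of links whose summed rate covers the required bandwidth."""
    return math.ceil(REQUIRED_BW_GBIT / link_rate_gbit)


# 4.5 Gbit/s is the effective GBT rate quoted on the slide.
for rate in (100, 40, 10, 4.5):
    print(f"{rate:>5} Gbit/s links: at least {min_links(rate)}")
```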

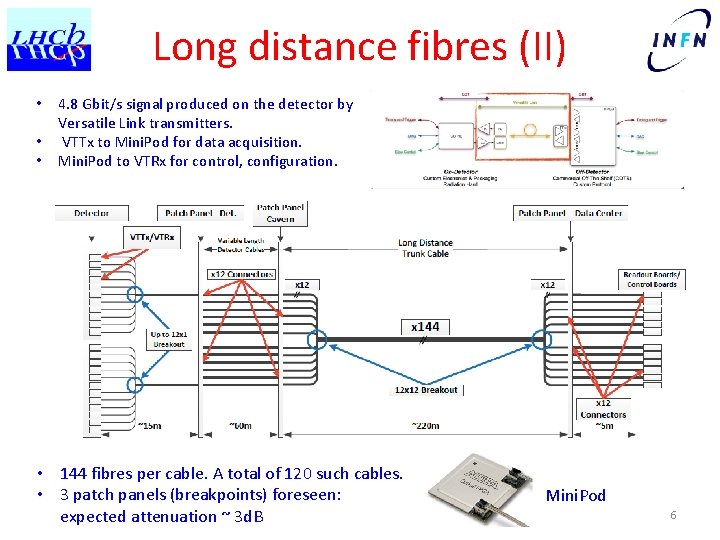

Long distance fibres (II)

• 4.8 Gbit/s signal produced on the detector by Versatile Link transmitters.
• VTTx to MiniPod for data acquisition.
• MiniPod to VTRx for control, configuration.
• 144 fibres per cable. A total of 120 such cables.
• 3 patch panels (breakpoints) foreseen: expected attenuation ~3 dB
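As a quick consistency check, the cable plan can be compared with the O(15000) fibre estimate from the previous slide. This is only a sketch of the arithmetic; the variable names are illustrative.

```python
FIBRES_PER_CABLE = 144
CABLES = 120
ESTIMATED_FIBRES = 15_000  # O(15000) estimate, spares included (previous slide)

installed = FIBRES_PER_CABLE * CABLES
headroom = installed - ESTIMATED_FIBRES

print(f"installed fibres: {installed}")
print(f"headroom over the estimate: {headroom} ({headroom / ESTIMATED_FIBRES:.1%})")
```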

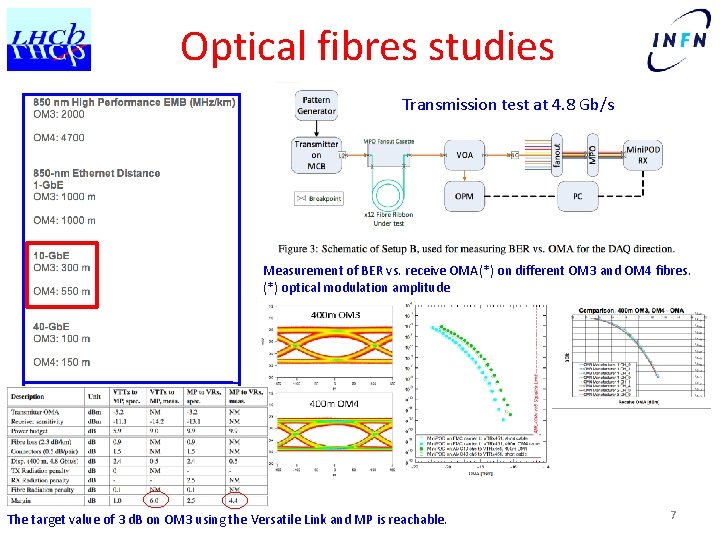

Optical fibres studies

Transmission test at 4.8 Gb/s: measurement of BER vs. receive OMA (optical modulation amplitude) on different OM3 and OM4 fibres.

The target value of 3 dB on OM3 using the Versatile Link and MiniPod (MP) is reachable.

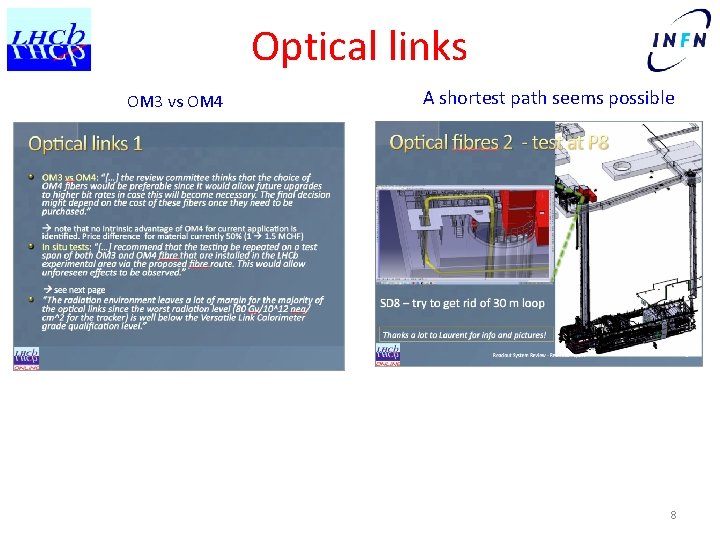

Optical links: OM3 vs OM4

A shorter path seems possible.

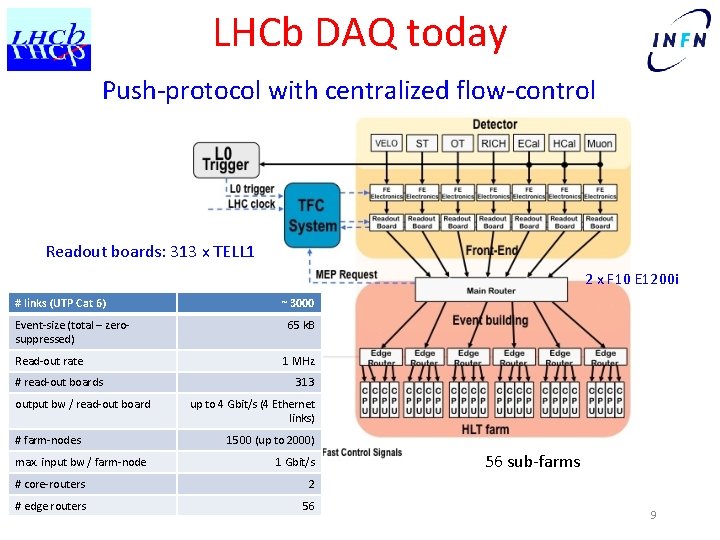

LHCb DAQ today

Push-protocol with centralized flow-control

| Parameter | Value |
| --- | --- |
| # links (UTP Cat 6) | ~3000 |
| Event-size (total, zero-suppressed) | 65 kB |
| Read-out rate | 1 MHz |
| # read-out boards | 313 |
| Output bw / read-out board | up to 4 Gbit/s (4 Ethernet links) |
| # farm-nodes | 1500 (up to 2000) |
| Max. input bw / farm-node | 1 Gbit/s |
| # core-routers | 2 |
| # edge routers | 56 |

Diagram labels: readout boards 313 × TELL1; 2 × F10 E1200i; 56 sub-farms.
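The figures for the present system can be cross-checked against each other. The sketch below assumes 1 kB = 1000 bytes and a perfectly uniform share of the traffic across boards and farm nodes. Both are simplifying assumptions, not statements from the slide.

```python
EVENT_SIZE_BYTES = 65e3   # 65 kB zero-suppressed event (assuming 1 kB = 1000 B)
READOUT_RATE_HZ = 1e6     # 1 MHz read-out rate
N_BOARDS = 313            # TELL1 read-out boards
N_FARM_NODES = 1500       # farm nodes (up to 2000)

# Aggregate throughput in Gbit/s.
total_gbit = EVENT_SIZE_BYTES * 8 * READOUT_RATE_HZ / 1e9

# Average load under the uniform-sharing assumption.
per_board_gbit = total_gbit / N_BOARDS
per_node_gbit = total_gbit / N_FARM_NODES

print(f"aggregate throughput: {total_gbit:.0f} Gbit/s")
print(f"average per read-out board: {per_board_gbit:.2f} Gbit/s (limit: 4 Gbit/s)")
print(f"average per farm node: {per_node_gbit:.2f} Gbit/s (limit: 1 Gbit/s)")
```

Under these assumptions both averages stay below the per-board and per-node limits quoted in the table.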

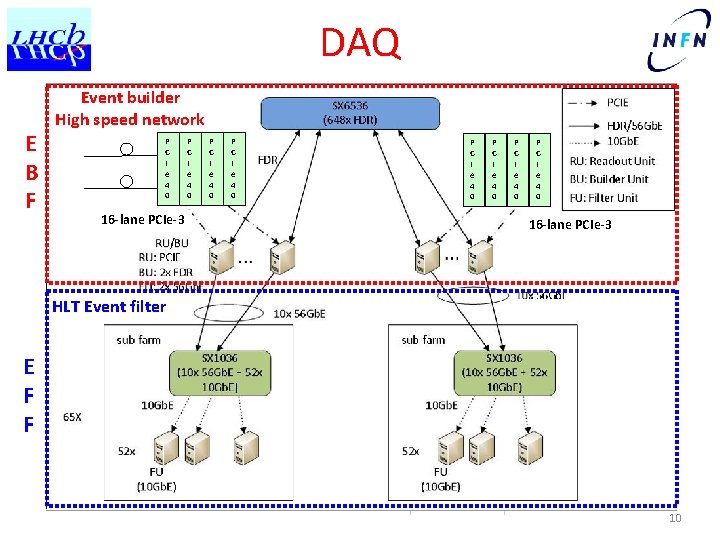

DAQ

[Diagram: PCIe40 readout boards, each on a 16-lane PCIe-3 slot, feed the event builder farm (EBF); a high speed network connects the event builder to the HLT event filter farm (EFF).]

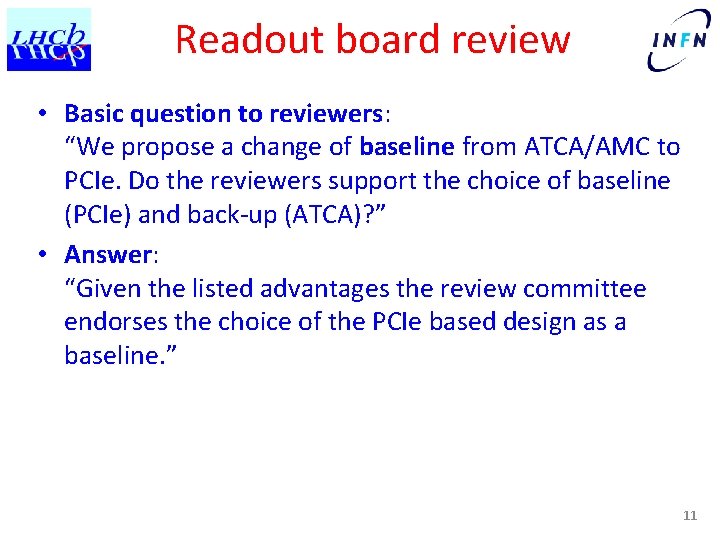

Readout board review

• Basic question to reviewers: “We propose a change of baseline from ATCA/AMC to PCIe. Do the reviewers support the choice of baseline (PCIe) and back-up (ATCA)?”
• Answer: “Given the listed advantages the review committee endorses the choice of the PCIe based design as a baseline.”

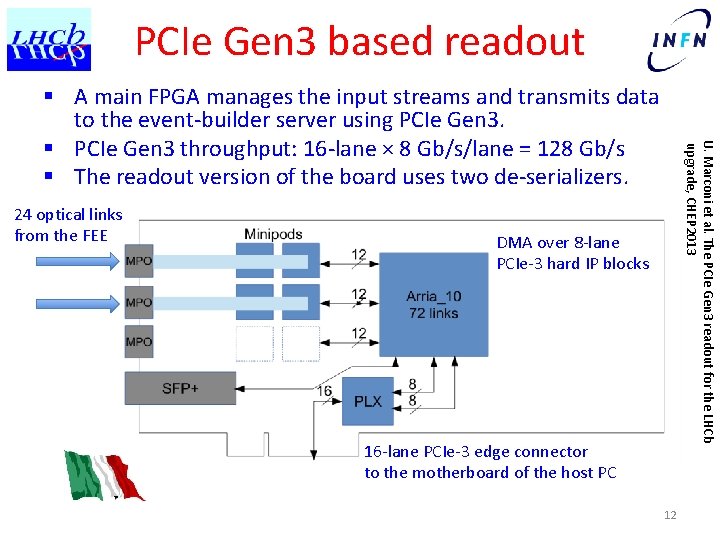

PCIe Gen3 based readout

U. Marconi et al., The PCIe Gen3 readout for the LHCb upgrade, CHEP 2013

• 24 optical links from the FEE.
• A main FPGA manages the input streams and transmits data to the event-builder server using PCIe Gen3.
• PCIe Gen3 throughput: 16 lanes × 8 Gb/s/lane = 128 Gb/s
• The readout version of the board uses two de-serializers.
• DMA over 8-lane PCIe-3 hard IP blocks.
• 16-lane PCIe-3 edge connector to the motherboard of the host PC.
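The 128 Gb/s figure is the raw line rate. PCIe Gen3 uses 128b/130b line encoding, so the usable rate is slightly lower even before packet overheads. The sketch below works this out and compares it with the input load of 24 links at the 4.8 Gbit/s line rate quoted on the fibre slides. Treating every input link as fully loaded is an assumption made here for illustration.

```python
LANES = 16
RAW_GBIT_PER_LANE = 8.0           # PCIe Gen3 signalling rate per lane
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b line encoding

raw_gbit = LANES * RAW_GBIT_PER_LANE
after_encoding_gbit = raw_gbit * ENCODING_EFFICIENCY

N_INPUT_LINKS = 24
LINK_RATE_GBIT = 4.8              # optical link line rate (assumed fully loaded)
input_load_gbit = N_INPUT_LINKS * LINK_RATE_GBIT

print(f"raw PCIe rate:       {raw_gbit:.1f} Gb/s")
print(f"after 128b/130b:     {after_encoding_gbit:.1f} Gb/s")
print(f"worst-case input:    {input_load_gbit:.1f} Gb/s")
print(f"input fits on PCIe:  {input_load_gbit < after_encoding_gbit}")
```

Packet headers and flow control reduce the usable rate further, which is in line with the roughly 110 Gb/s reported for the long-term PLX bridge test later in the talk.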

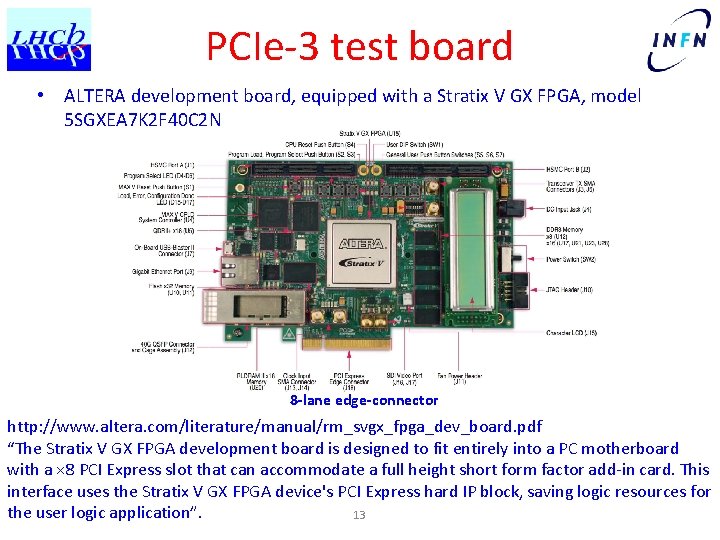

PCIe-3 test board

• ALTERA development board, equipped with a Stratix V GX FPGA, model 5SGXEA7K2F40C2N
• 8-lane edge-connector
• http://www.altera.com/literature/manual/rm_svgx_fpga_dev_board.pdf

“The Stratix V GX FPGA development board is designed to fit entirely into a PC motherboard with a ×8 PCI Express slot that can accommodate a full height short form factor add-in card. This interface uses the Stratix V GX FPGA device's PCI Express hard IP block, saving logic resources for the user logic application.”

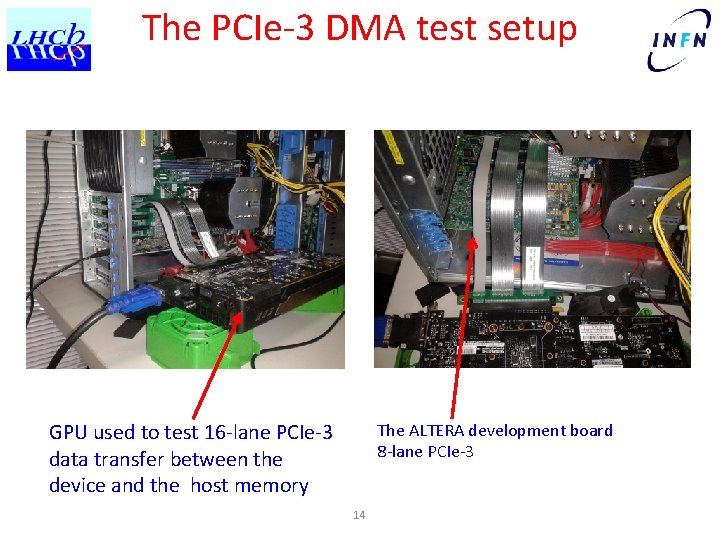

The PCIe-3 DMA test setup

• GPU used to test 16-lane PCIe-3 data transfer between the device and the host memory
• The ALTERA development board: 8-lane PCIe-3

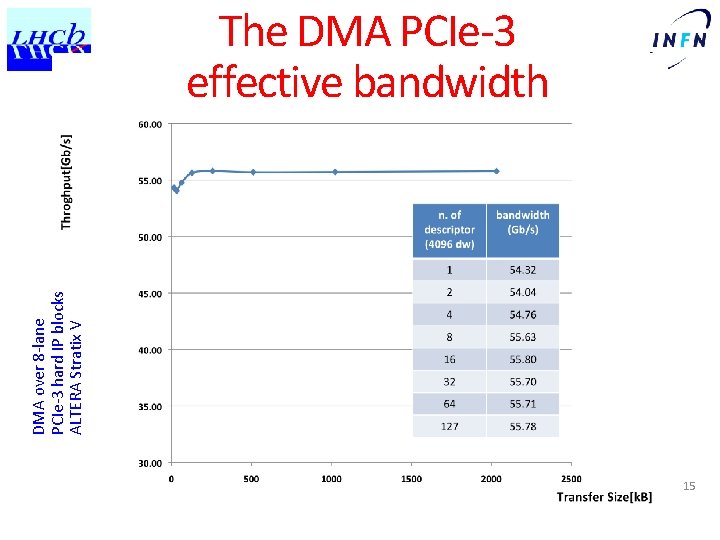

DMA over 8-lane PCIe-3 hard IP blocks (ALTERA Stratix V)

[Plot: the DMA PCIe-3 effective bandwidth]

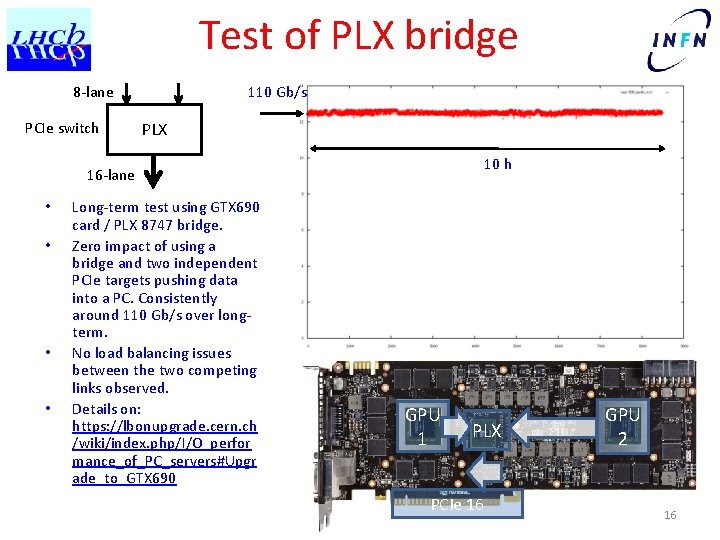

Test of PLX bridge

• Long-term test using GTX 690 card / PLX 8747 bridge.
• Zero impact of using a bridge and two independent PCIe targets pushing data into a PC.
• Consistently around 110 Gb/s over the long term.
• No load balancing issues between the two competing links observed.
• Details on: https://lbonupgrade.cern.ch/wiki/index.php/I/O_performance_of_PC_servers#Upgrade_to_GTX690

[Diagram labels: GPU 1 and GPU 2, PLX PCIe switch, 8-lane and 16-lane links; plot: 110 Gb/s over 10 h]

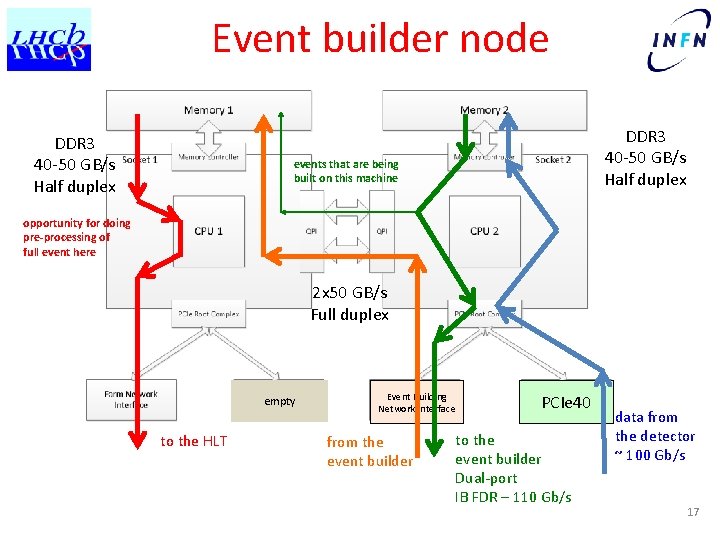

Event builder node

[Diagram of an event builder node; recoverable labels:]

• PCIe40: data from the detector, ~100 Gb/s
• Event Building Network Interface (dual-port IB FDR, 110 Gb/s): to and from the event builder
• Output to the HLT
• DDR3: 40-50 GB/s, half duplex
• 2 × 50 GB/s, full duplex
• Events that are being built on this machine: opportunity for doing pre-processing of the full event here

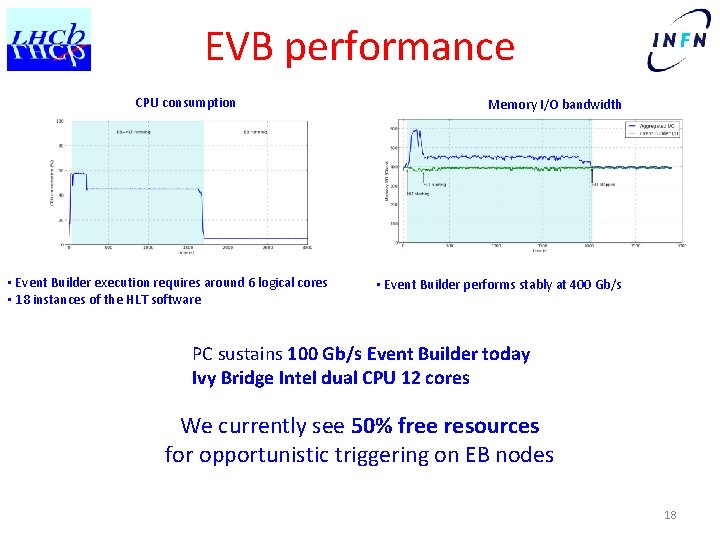

EVB performance

• CPU consumption
  – Event Builder execution requires around 6 logical cores
  – 18 instances of the HLT software
• Memory I/O bandwidth
  – Event Builder performs stably at 400 Gb/s
• PC sustains a 100 Gb/s Event Builder today (Ivy Bridge, Intel dual CPU, 12 cores)
• We currently see 50% free resources for opportunistic triggering on EB nodes

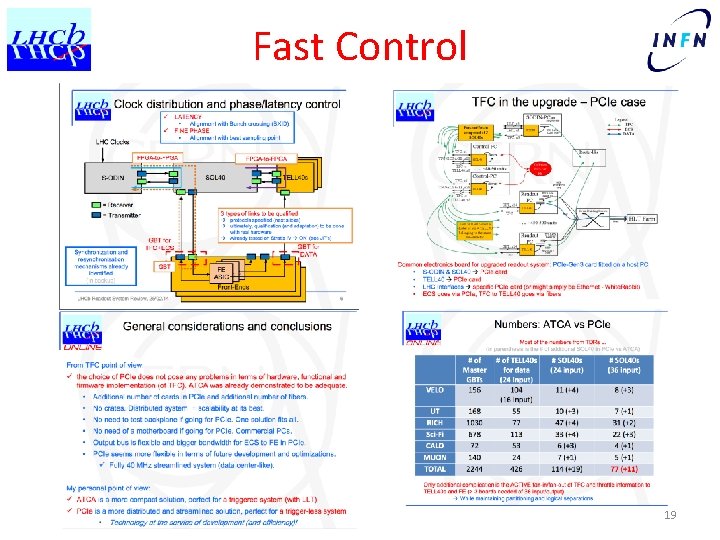

Fast Control

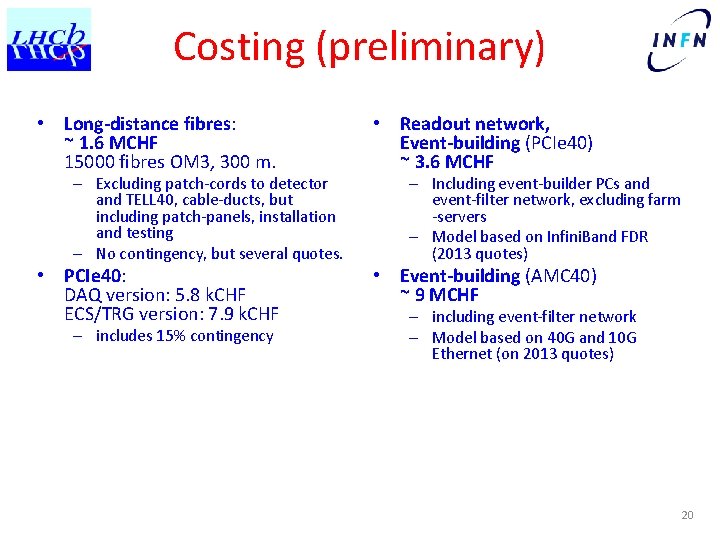

Costing (preliminary)

• Long-distance fibres: ~1.6 MCHF (15000 fibres OM3, 300 m)
  – Excluding patch-cords to detector and TELL40, cable-ducts, but including patch-panels, installation and testing
  – No contingency, but several quotes
• PCIe40: DAQ version 5.8 kCHF, ECS/TRG version 7.9 kCHF
  – includes 15% contingency
• Readout network, Event-building (PCIe40): ~3.6 MCHF
  – Including event-builder PCs and event-filter network, excluding farm-servers
  – Model based on InfiniBand FDR (2013 quotes)
• Event-building (AMC40): ~9 MCHF
  – including event-filter network
  – Model based on 40G and 10G Ethernet (on 2013 quotes)

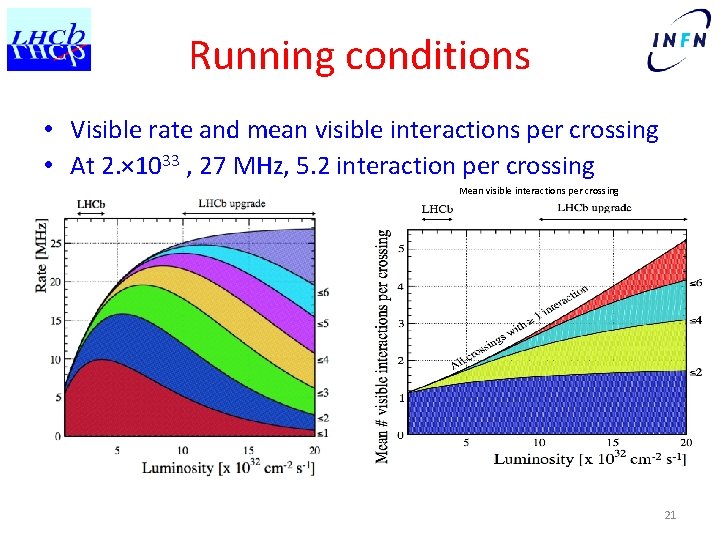

Running conditions

• Visible rate and mean visible interactions per crossing
• At a luminosity of 2 × 10^33 cm^-2 s^-1: 27 MHz visible rate, 5.2 interactions per crossing

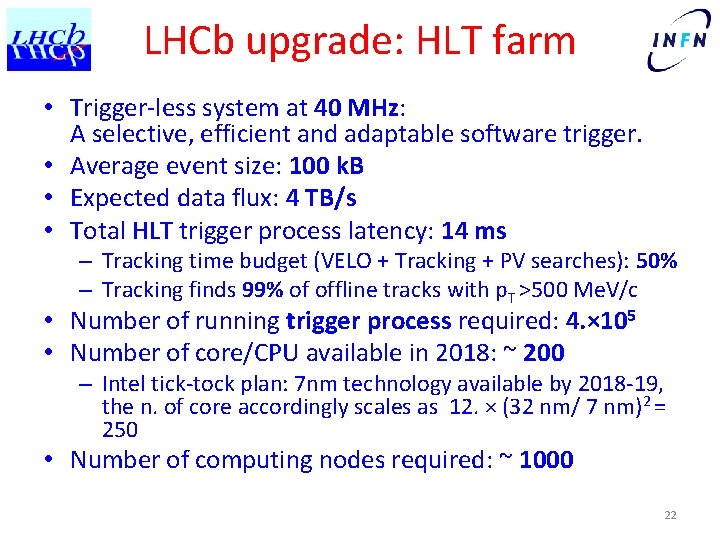

LHCb upgrade: HLT farm

• Trigger-less system at 40 MHz: a selective, efficient and adaptable software trigger.
• Average event size: 100 kB
• Expected data flux: 4 TB/s
• Total HLT trigger process latency: 14 ms
  – Tracking time budget (VELO + Tracking + PV searches): 50%
  – Tracking finds 99% of offline tracks with pT > 500 MeV/c
• Number of running trigger processes required: 4 × 10^5
• Number of cores/CPU available in 2018: ~200
  – Intel tick-tock plan: 7 nm technology available by 2018-19; the number of cores accordingly scales as 12 × (32 nm / 7 nm)^2 ≈ 250
• Number of computing nodes required: ~1000
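The farm-sizing numbers on this slide can be reproduced with a few lines of arithmetic. The sketch below rests on two assumptions that the slide does not state: that the process count is the 27 MHz visible rate (from the running conditions slide) times the 14 ms latency, and that each node hosts two CPUs. These choices happen to reproduce the quoted ~4 × 10^5 processes and ~1000 nodes, but they are a reading of the slide, not the author's stated method.

```python
import math

CROSSING_RATE_HZ = 40e6    # trigger-less readout rate
EVENT_SIZE_BYTES = 100e3   # average event size, 100 kB
VISIBLE_RATE_HZ = 27e6     # visible rate (assumed to drive the process count)
LATENCY_S = 14e-3          # total HLT process latency
CPUS_PER_NODE = 2          # assumption: dual-socket nodes

# Data flux in TB/s.
data_flux_tb = CROSSING_RATE_HZ * EVENT_SIZE_BYTES / 1e12

# Events in flight at any time, one trigger process each.
processes = VISIBLE_RATE_HZ * LATENCY_S

# Core-count scaling from 12 cores at 32 nm to a 7 nm process.
cores_scaled = 12 * (32 / 7) ** 2

# Node count using the slide's rounded figures.
nodes = math.ceil(4e5 / (200 * CPUS_PER_NODE))

print(f"data flux: {data_flux_tb:.1f} TB/s")
print(f"concurrent trigger processes: {processes:.3g}")
print(f"scaled cores per CPU: {cores_scaled:.1f}")
print(f"computing nodes: {nodes}")
```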

LLT

Readout reviewers’ opinion: “… only the software option should be pursued, if any.”

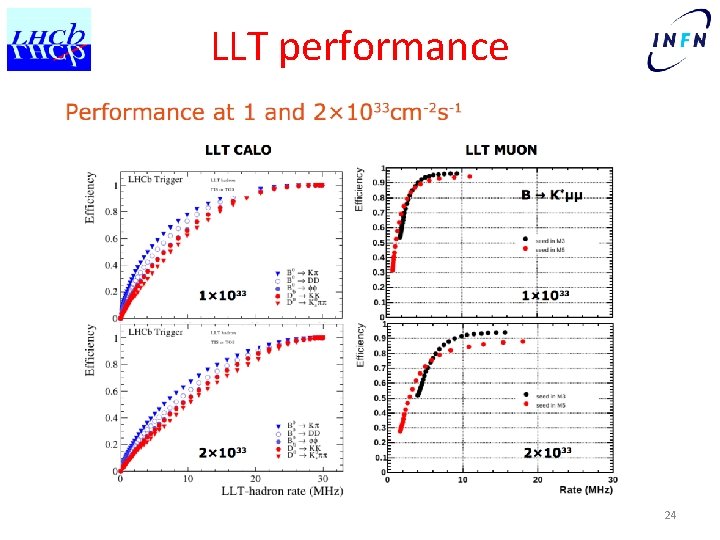

LLT performance

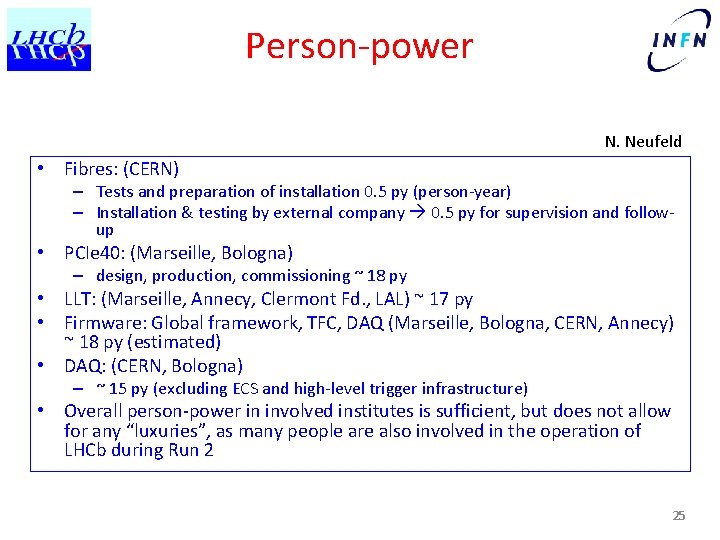

Person-power (N. Neufeld)

• Fibres: (CERN)
  – Tests and preparation of installation: 0.5 py (person-year)
  – Installation & testing by external company: 0.5 py for supervision and follow-up
• PCIe40: (Marseille, Bologna)
  – design, production, commissioning: ~18 py
• LLT: (Marseille, Annecy, Clermont Fd., LAL) ~17 py
• Firmware: Global framework, TFC, DAQ (Marseille, Bologna, CERN, Annecy) ~18 py (estimated)
• DAQ: (CERN, Bologna)
  – ~15 py (excluding ECS and high-level trigger infrastructure)
• Overall person-power in the involved institutes is sufficient, but does not allow for any “luxuries”, as many people are also involved in the operation of LHCb during Run 2

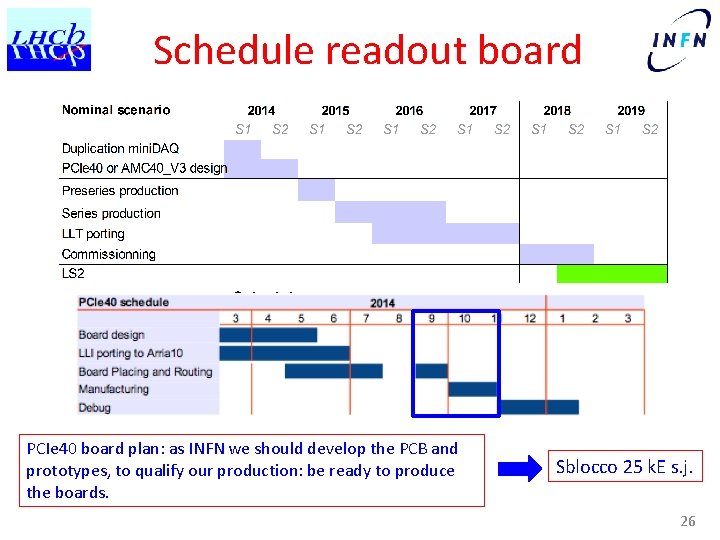

Schedule readout board

[Figure: schedule TELL40]

PCIe40 board plan: as INFN we should develop the PCB and prototypes to qualify our production, and be ready to produce the boards.

Release of 25 k€ s.j. (sub judice).

Schedule DAQ (N. Neufeld)

• Assume start of data-taking in 2020
  – System for SD-tests ready whenever needed; minimal investment
• 2013 – 16: technology following (PC and network)
• 2015 – 16: Large scale network IB and Ethernet tests
• 2017: tender preparations
• 2018: Acquisition of minimal system to be able to read out every GBT
  – Acquisition of modular data-center
• 2019: Acquisition and Commissioning of full system
  – starting with network
  – farm as needed

Schedule: firmware, LLT, TFC

• All these are FPGA firmware projects
• First versions of global firmware and TFC ready now (for MiniDAQ test-systems)
  – then ongoing development
• LLT
  – Software 2014 – 2015
  – Hardware 2016 – 2017 (?)

Schedule long-distance fibres

• Test installation 2014
• Assumptions:
  – validate installation procedure and pre-select provider
  – Installation can be done without termination in UX (cables terminated on at least one end); if blown, fibres can be blown from bottom to top
• Long-term fibre test with AMC40/PCIe40 on long distance 2014/2015
• Full installation in LS1.5 or during winter shutdowns, to be finished before LS2

- Slides: 29