CV 201 Introduction to Deep Learning Oren Freifeld

- Slides: 44

CV 201: Introduction to Deep Learning. Oren Freifeld, Meitar Ronen. Computer Science, Ben-Gurion University. [Figure from previous slide taken from https://ai.googleblog.com/2015/06/inceptionism-going-deeper-into-neural.html]

Contents

- Introduction – What is Deep Learning?
- Linear Binary Perceptron
- Multi-Layer Perceptron

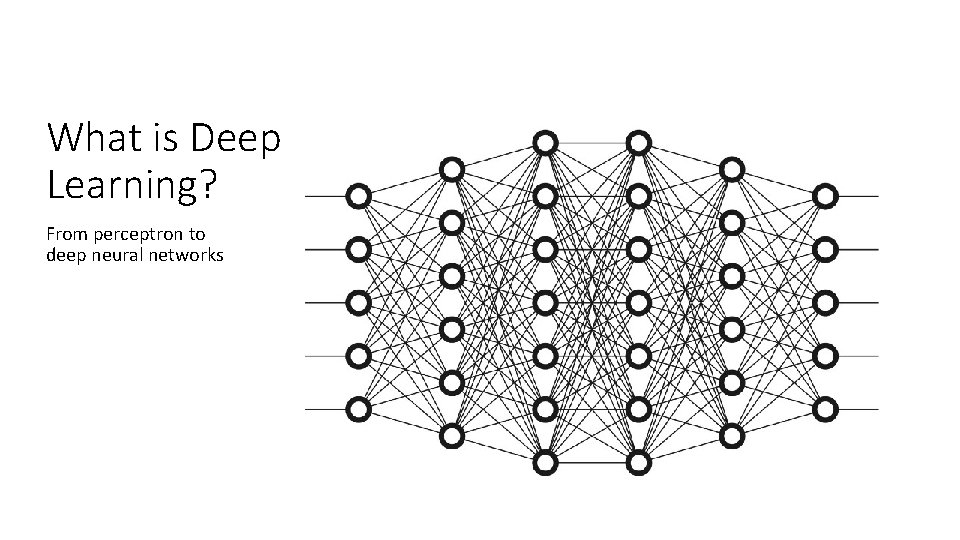

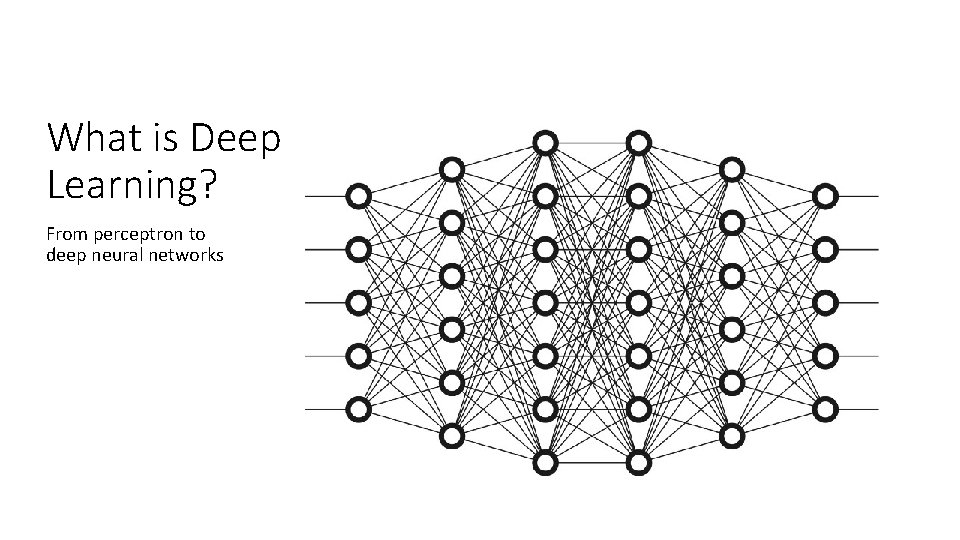

What is Deep Learning? From perceptron to deep neural networks

Deep Learning

- Dominant ML method, especially (but not only) in computer vision
- Supervised learning approach
- Not new at all: first mentioned in the 1950s
- Advances in GPUs made the comeback possible
- Different tasks, impressive results

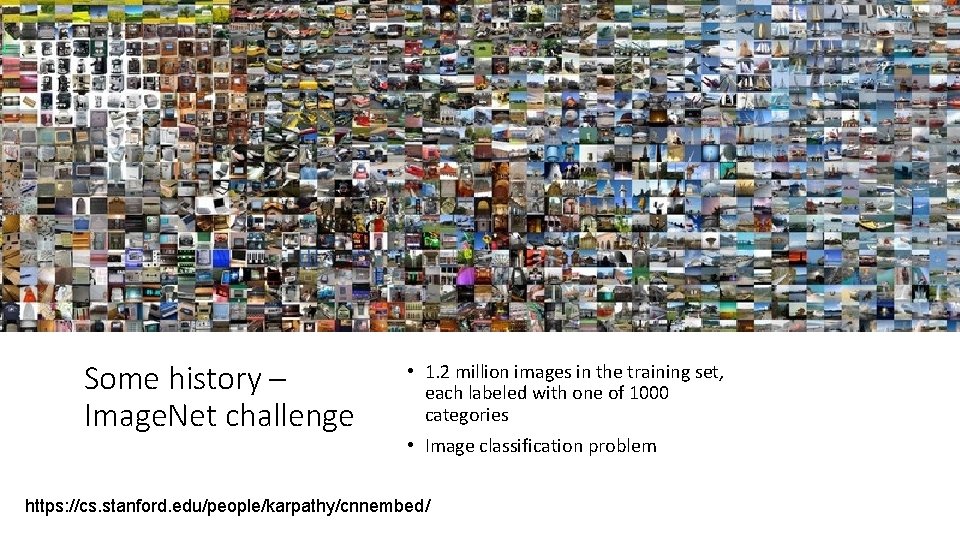

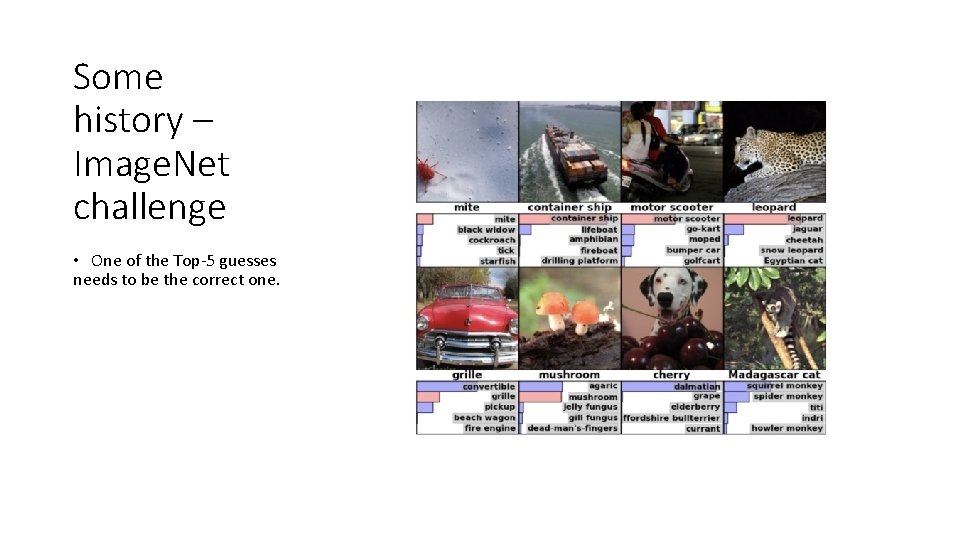

Some history – ImageNet challenge

- 1.2 million images in the training set, each labeled with one of 1000 categories
- Image classification problem

https://cs.stanford.edu/people/karpathy/cnnembed/

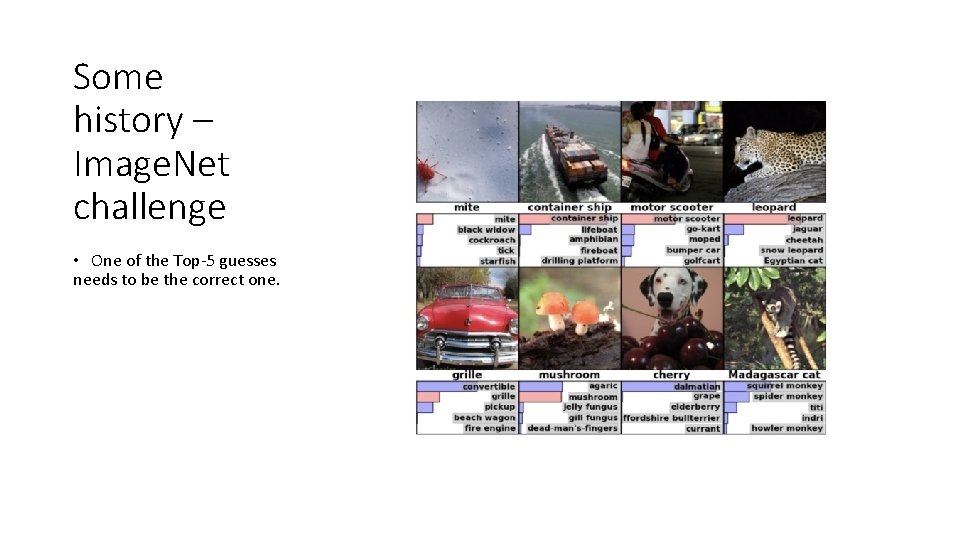

Some history – ImageNet challenge

- One of the Top-5 guesses needs to be the correct one.

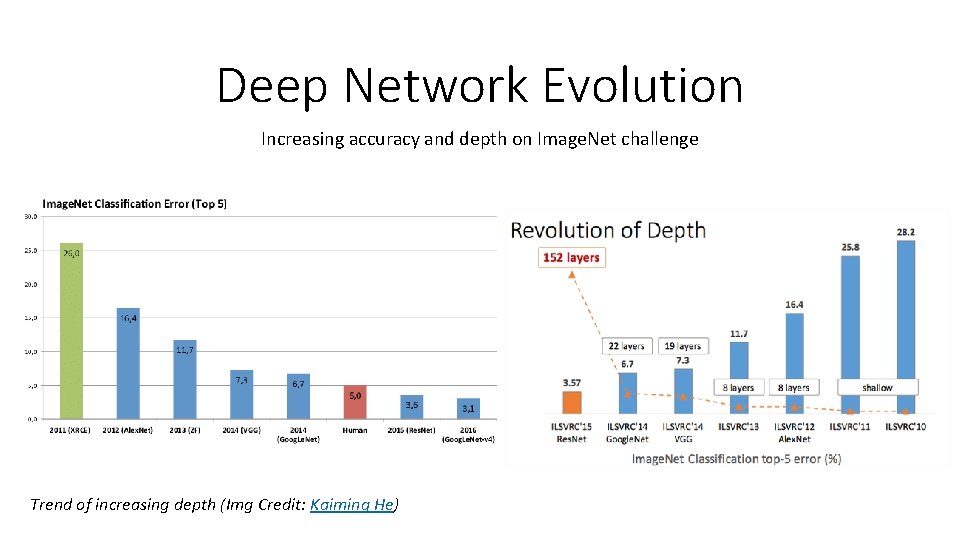

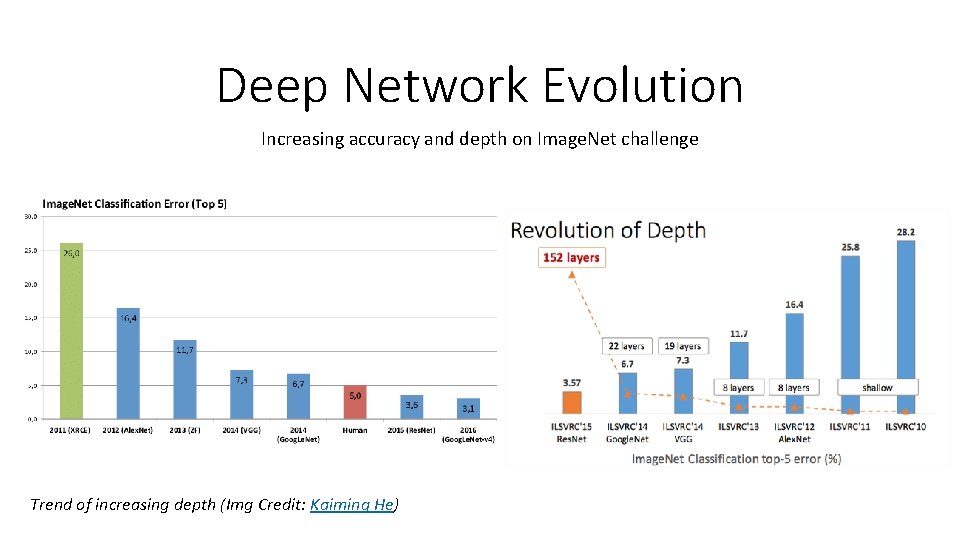
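The Top-5 rule above can be sketched in a few lines. This is a minimal illustration rather than the challenge's official evaluation code: the function names `top_k_correct` and `top_k_accuracy` are hypothetical, and the toy scores (3 images, 4 classes) are made up.

```python
import numpy as np

def top_k_correct(scores, label, k=5):
    """Return True if `label` is among the k highest-scoring classes."""
    # argsort is ascending, so the last k indices belong to the k largest scores
    top_k = np.argsort(scores)[-k:]
    return bool(label in top_k)

def top_k_accuracy(score_matrix, labels, k=5):
    """Fraction of examples whose true label is among the top-k guesses."""
    hits = [top_k_correct(s, y, k) for s, y in zip(score_matrix, labels)]
    return float(np.mean(hits))

# Toy data (made up): one row of class scores per image
scores = np.array([
    [0.10, 0.55, 0.20, 0.15],
    [0.40, 0.05, 0.35, 0.20],
    [0.05, 0.15, 0.30, 0.50],
])
labels = np.array([1, 2, 0])

print("top-1 accuracy:", top_k_accuracy(scores, labels, k=1))
print("top-2 accuracy:", top_k_accuracy(scores, labels, k=2))
```

Raising k can only keep or increase the accuracy, which is why Top-5 numbers are always at least as high as Top-1 numbers.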

Deep Network Evolution: increasing accuracy and depth on the ImageNet challenge. Trend of increasing depth (Img Credit: Kaiming He)

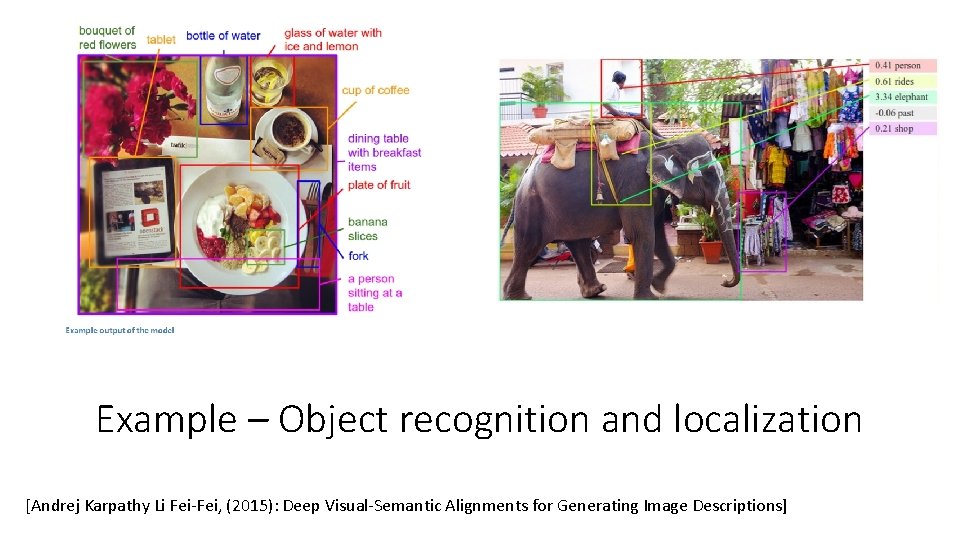

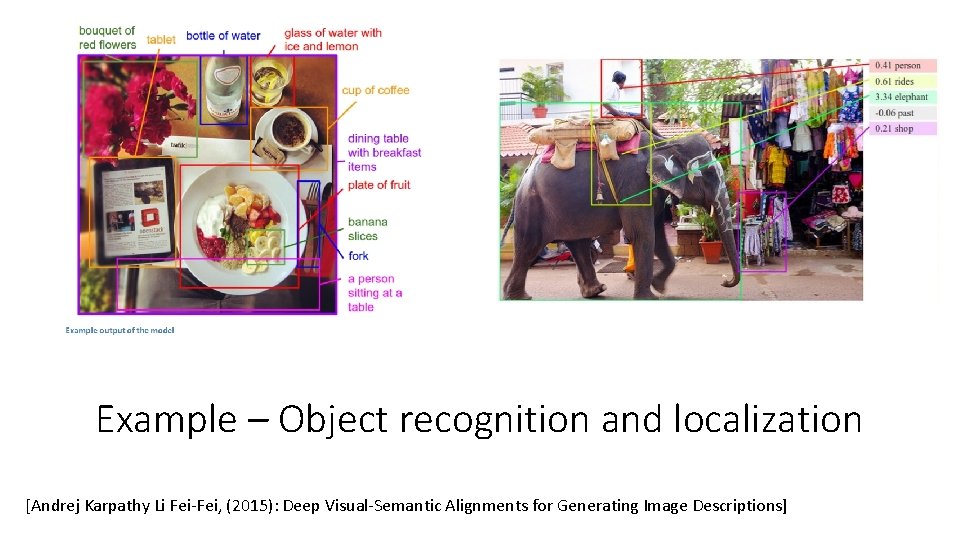

Example – Object recognition and localization [Andrej Karpathy, Li Fei-Fei (2015): Deep Visual-Semantic Alignments for Generating Image Descriptions]

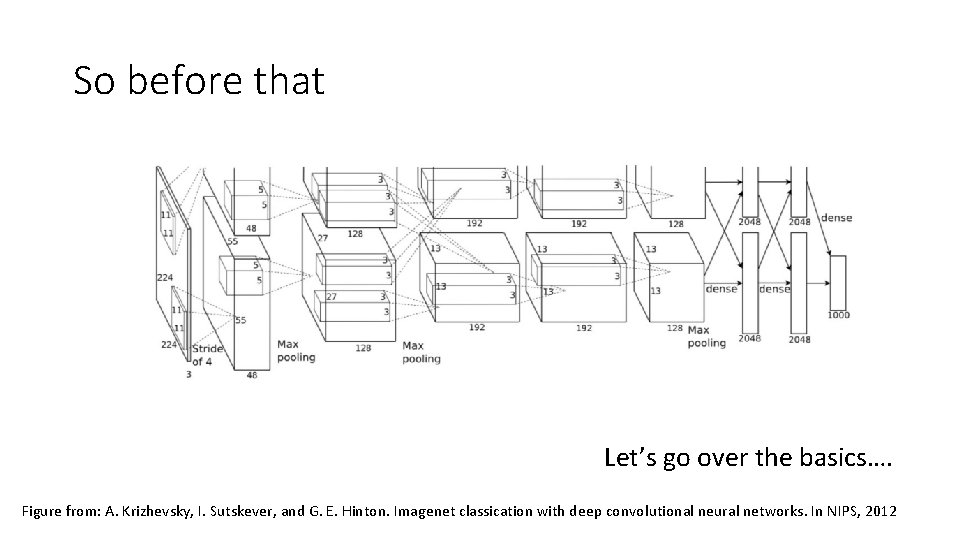

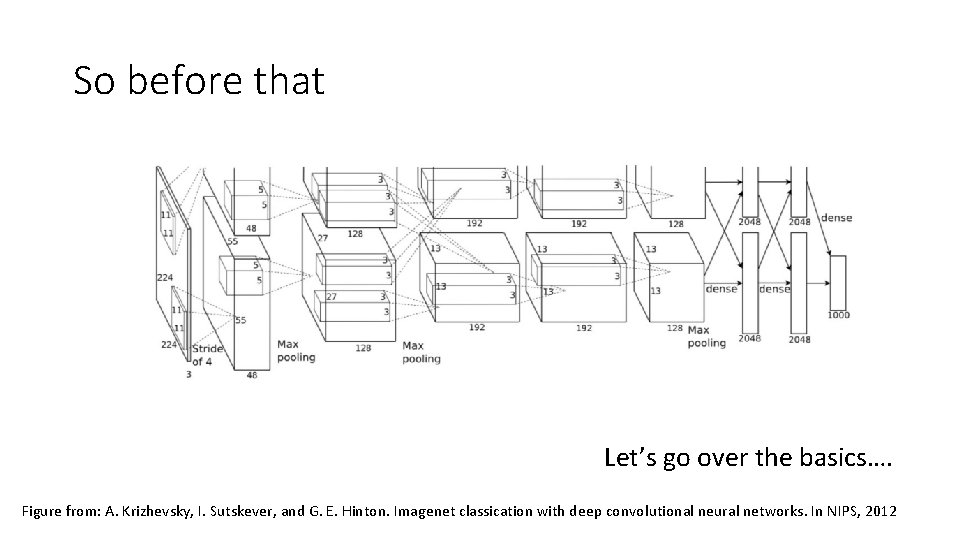

But before that, let’s go over the basics. [Figure from: A. Krizhevsky, I. Sutskever, and G. E. Hinton. ImageNet classification with deep convolutional neural networks. In NIPS, 2012]

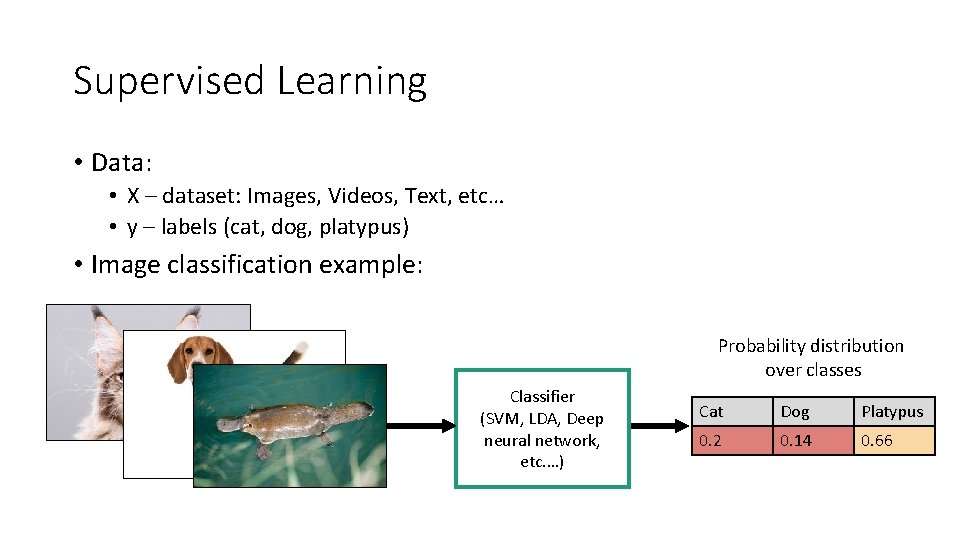

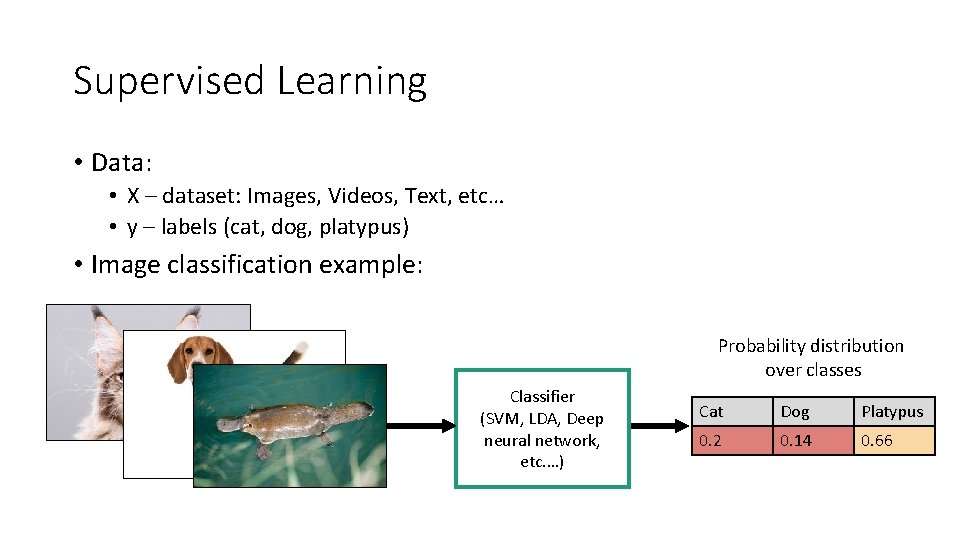

Supervised Learning

- Data:
  - X – dataset: images, videos, text, etc.
  - y – labels (cat, dog, platypus)
- Image classification example: a classifier (SVM, LDA, deep neural network, etc.) outputs a probability distribution over classes:

| Class | Probability |
|---|---|
| Cat | 0.2 |
| Dog | 0.14 |
| Platypus | 0.66 |

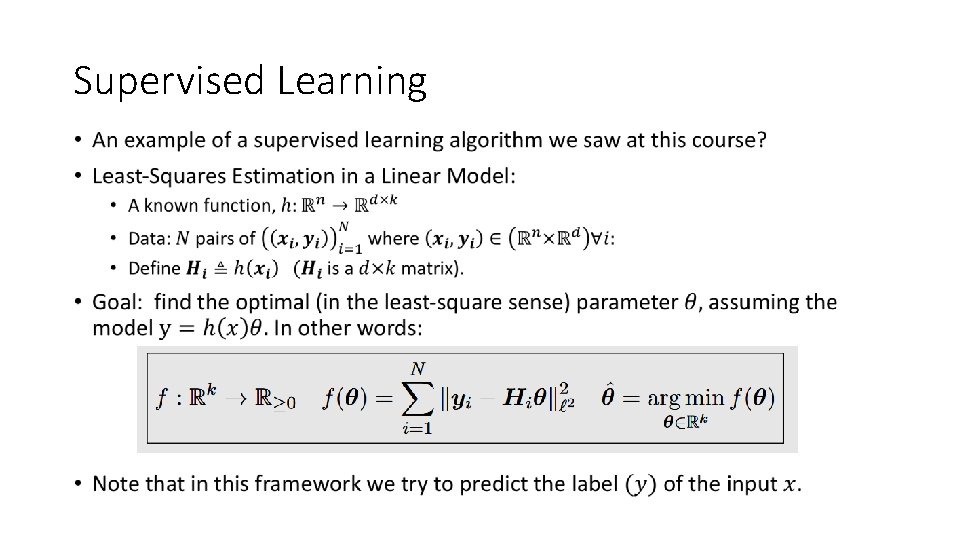

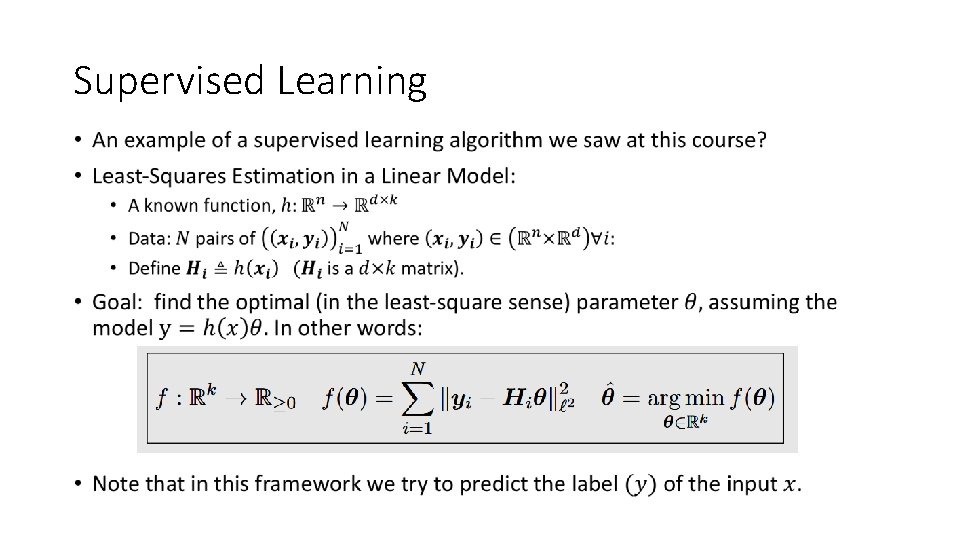

Supervised Learning

Unsupervised Learning

- Solve some task given “unlabeled” data.
- Can anyone think of an example?

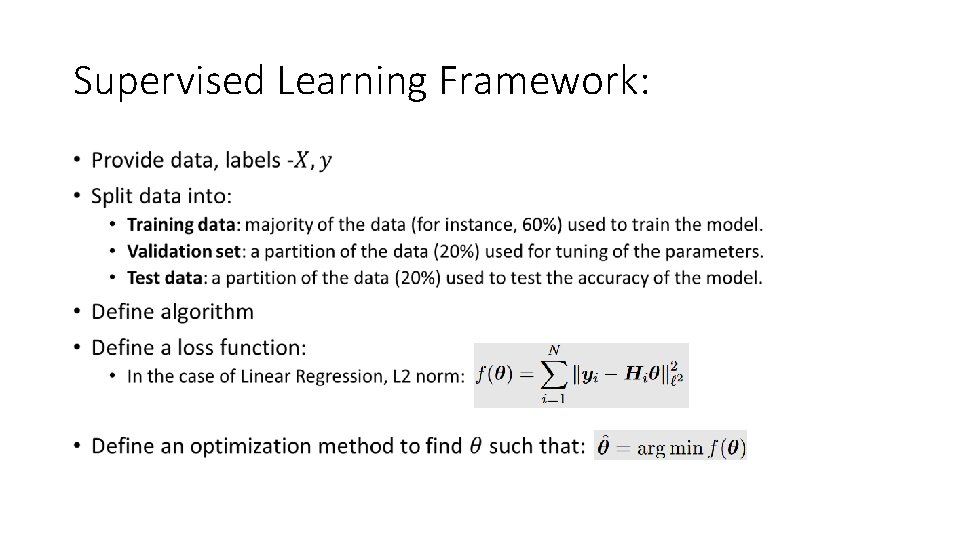

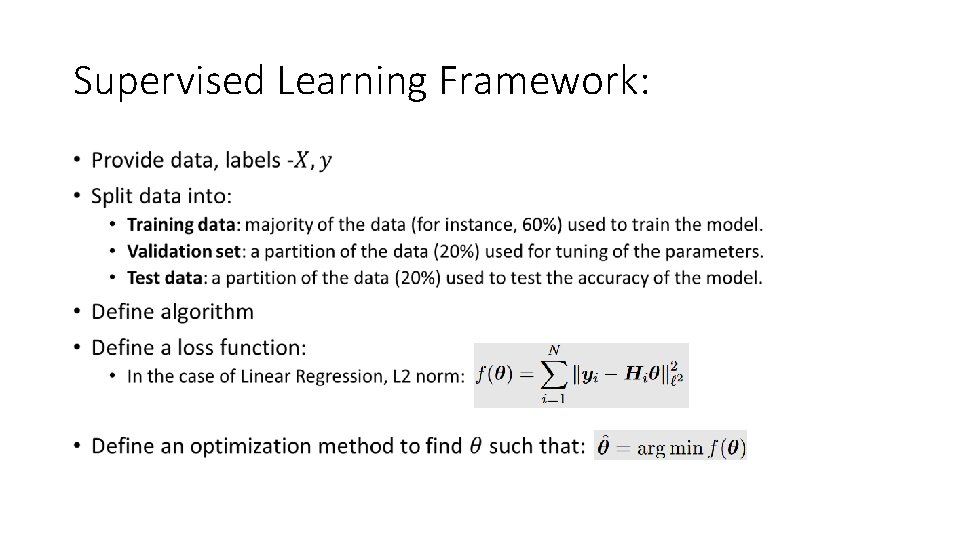

Supervised Learning Framework:

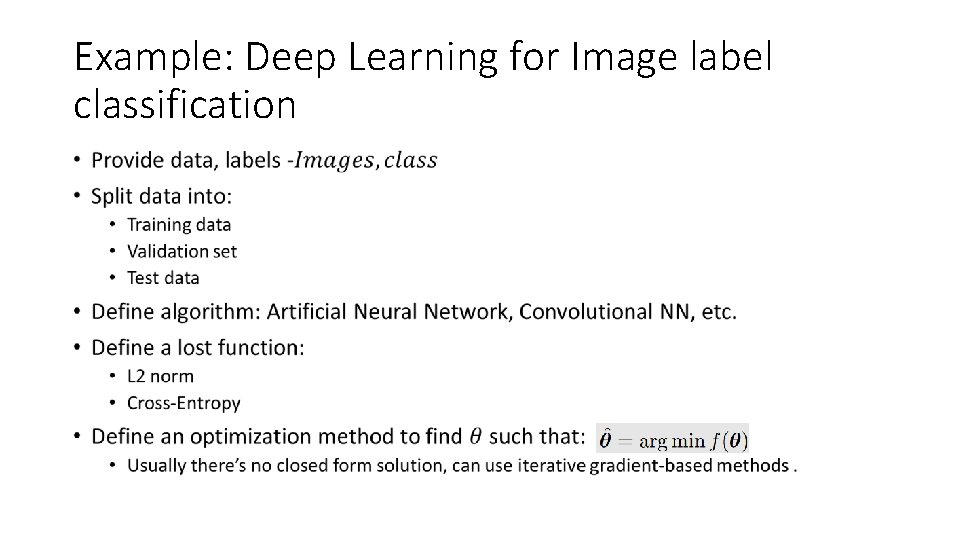

Example: Deep Learning for Image label classification

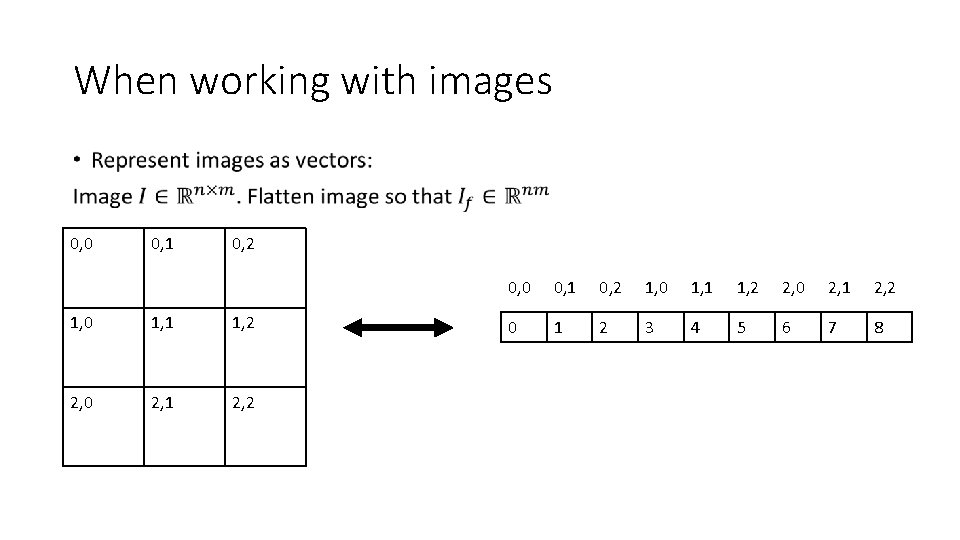

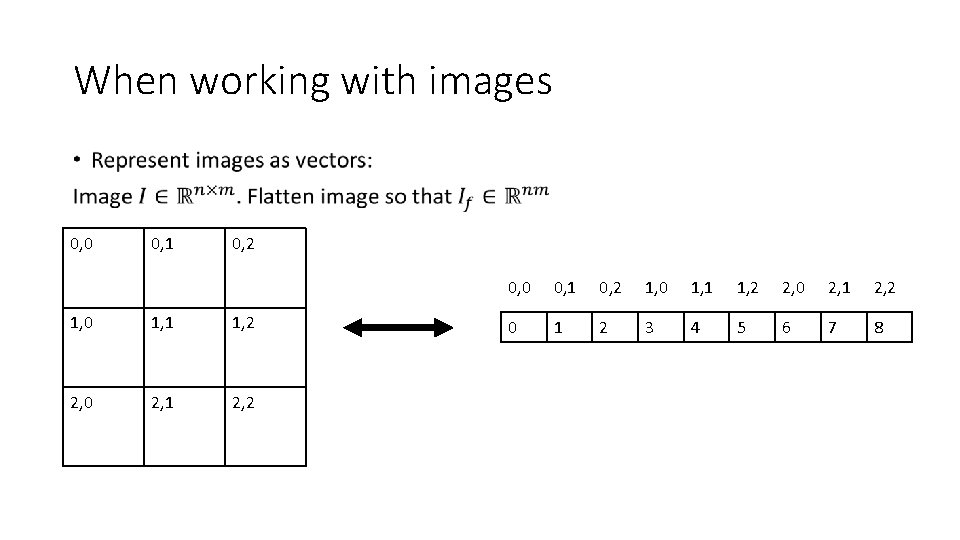

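When working with images

- A 3×3 image has pixel coordinates (row, column) from (0, 0) to (2, 2); flattening it row by row gives a vector with indices 0 to 8:

| Pixel (row, col) | 0, 0 | 0, 1 | 0, 2 | 1, 0 | 1, 1 | 1, 2 | 2, 0 | 2, 1 | 2, 2 |
|---|---|---|---|---|---|---|---|---|---|
| Flat index | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |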

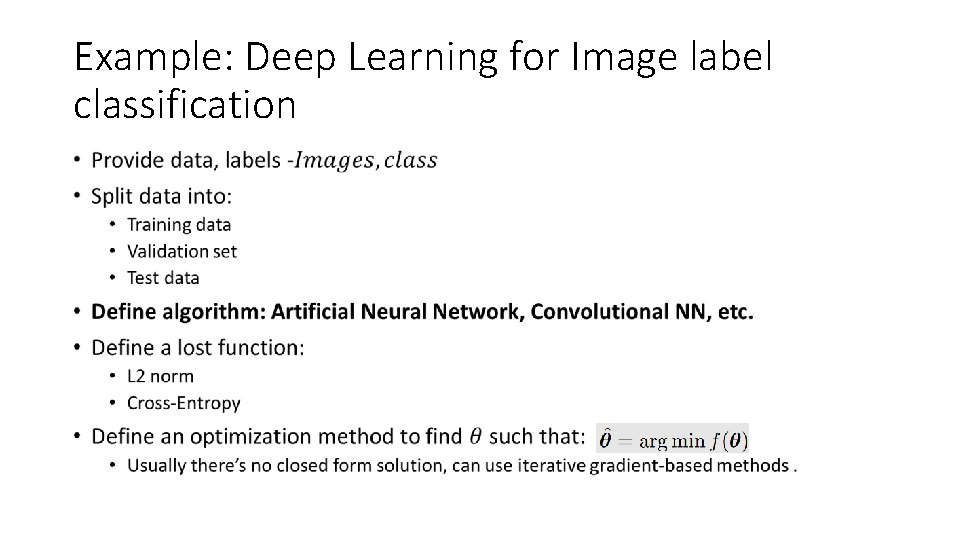
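The pixel-coordinate-to-index mapping on this slide corresponds to row-major flattening. The sketch below assumes that reading (coordinates are (row, column), indices run 0 to 8); the helper name `flat_index` is hypothetical.

```python
import numpy as np

# A 3x3 "image" whose entry at (row, col) equals its flat index,
# so the mapping is easy to read off.
image = np.arange(9).reshape(3, 3)

# Row-major flattening: rows are concatenated one after another.
flat = image.reshape(-1)

def flat_index(row, col, width=3):
    """Index of pixel (row, col) in the flattened vector (row-major order)."""
    return row * width + col

print(image)
print(flat)
print(flat_index(1, 2))
```

Flattening lets a classifier that expects a vector input take an image, at the cost of discarding the explicit 2D neighborhood structure.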

Example: Deep Learning for Image label classification

The linear perceptron: how it all began

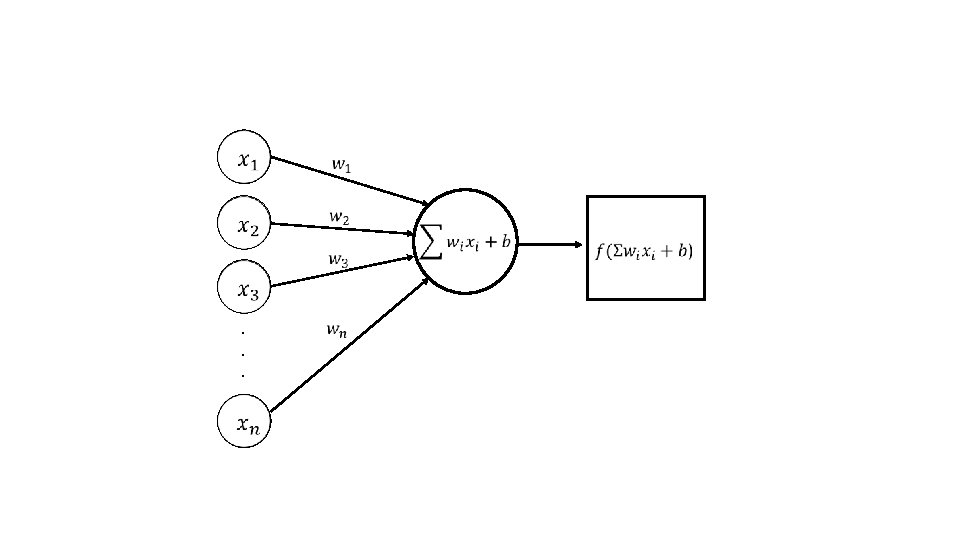

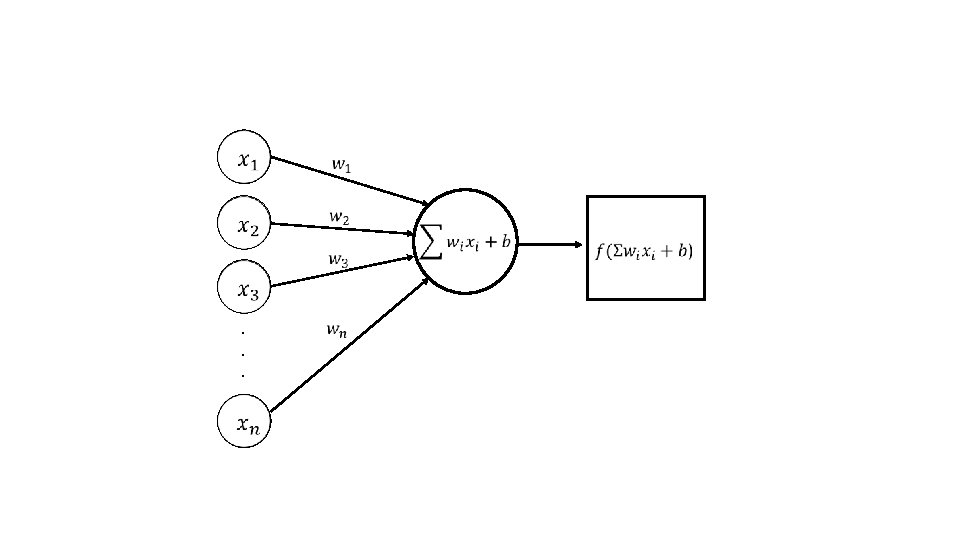

Perceptron

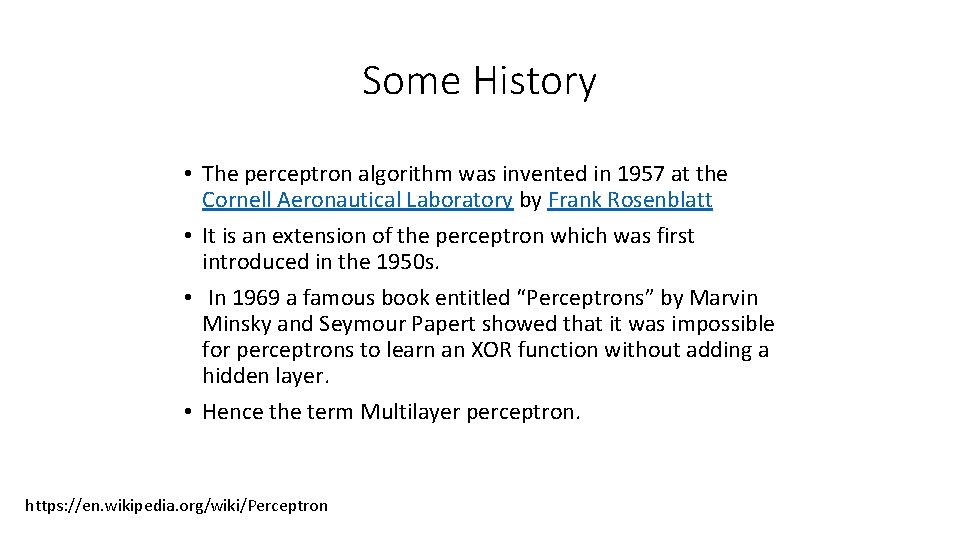

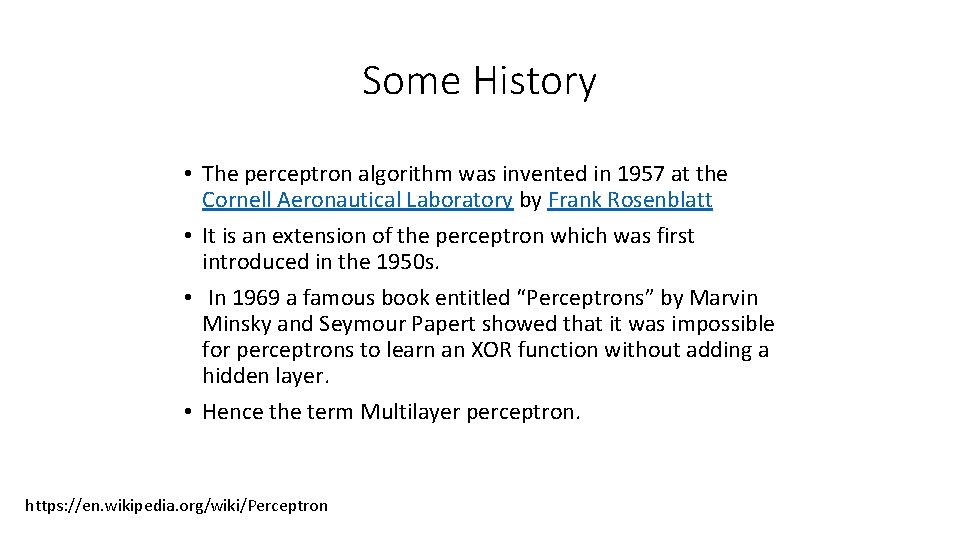

Some History

- The perceptron algorithm was invented in 1957 at the Cornell Aeronautical Laboratory by Frank Rosenblatt.
- It is an extension of the perceptron which was first introduced in the 1950s.
- In 1969, a famous book entitled “Perceptrons” by Marvin Minsky and Seymour Papert showed that it was impossible for perceptrons to learn an XOR function without adding a hidden layer.
- Hence the term multilayer perceptron.

https://en.wikipedia.org/wiki/Perceptron

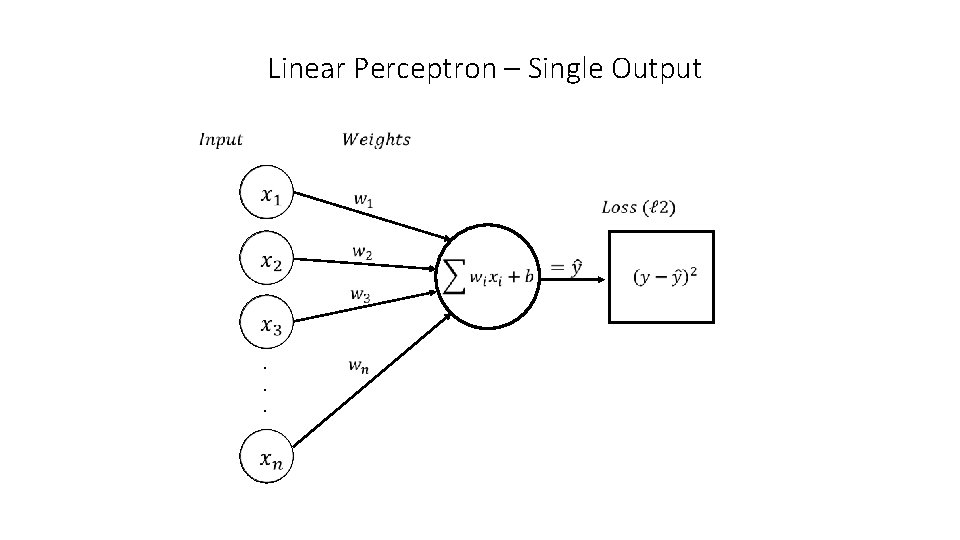

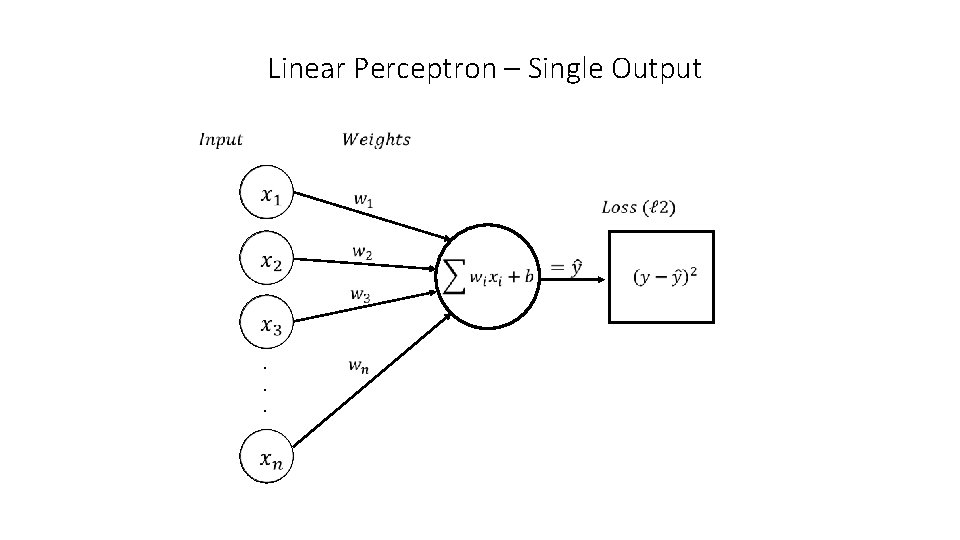

Linear Perceptron – Single Output

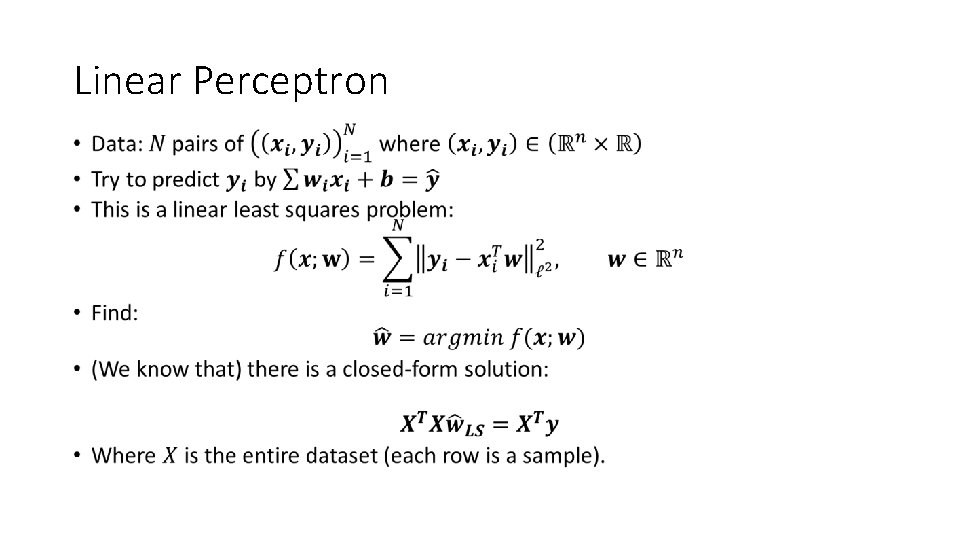

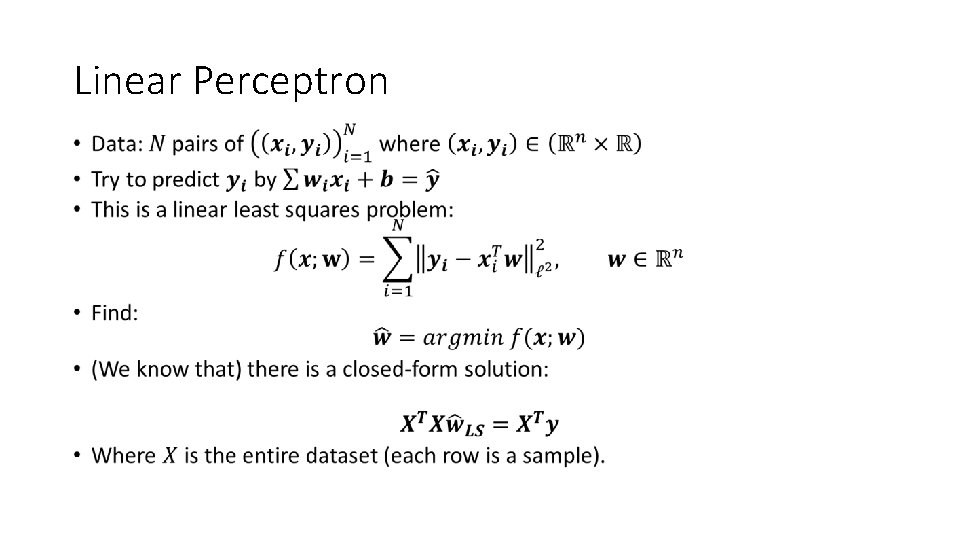

Linear Perceptron

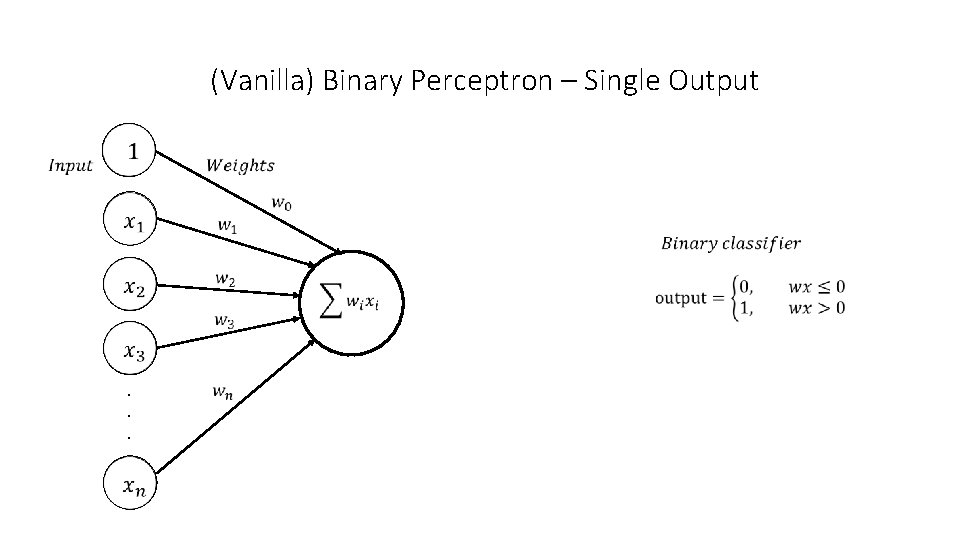

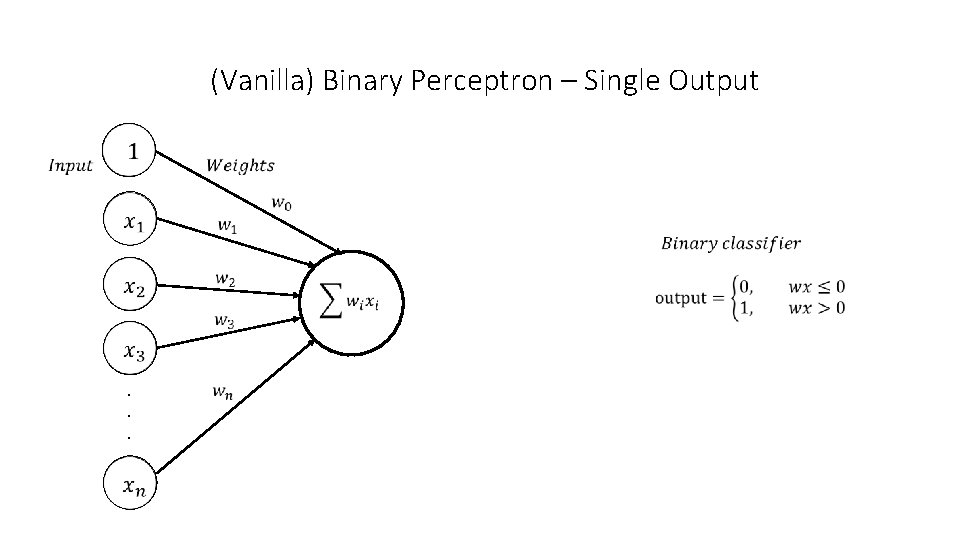

(Vanilla) Binary Perceptron – Single Output

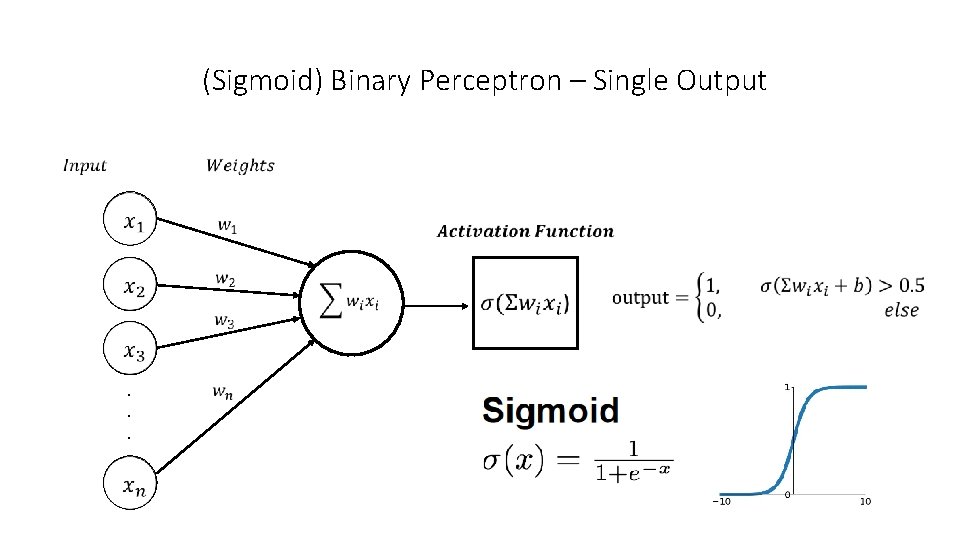

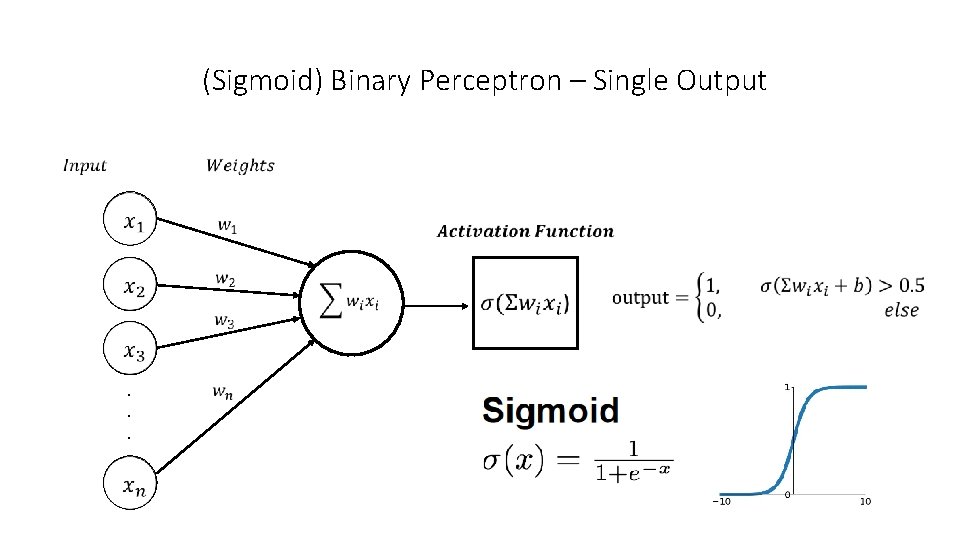

(Sigmoid) Binary Perceptron – Single Output

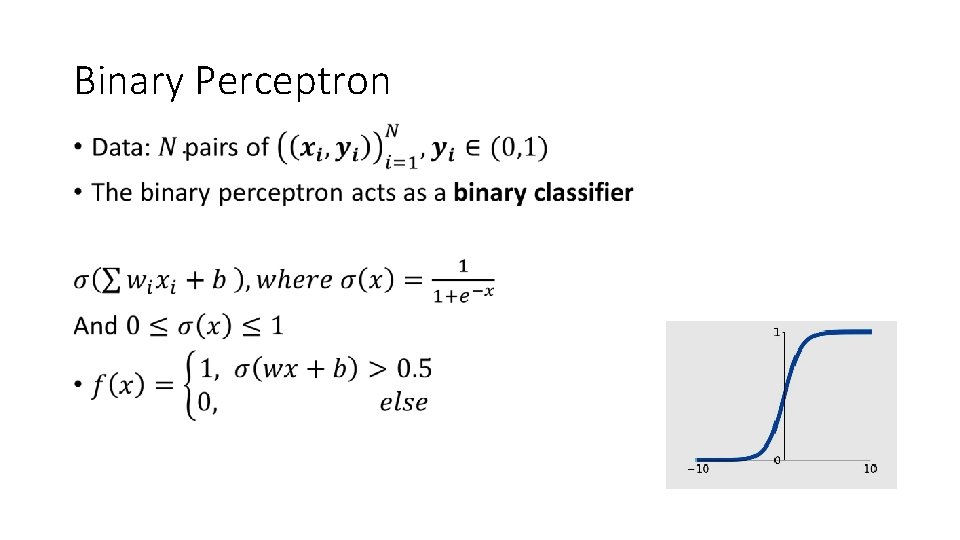

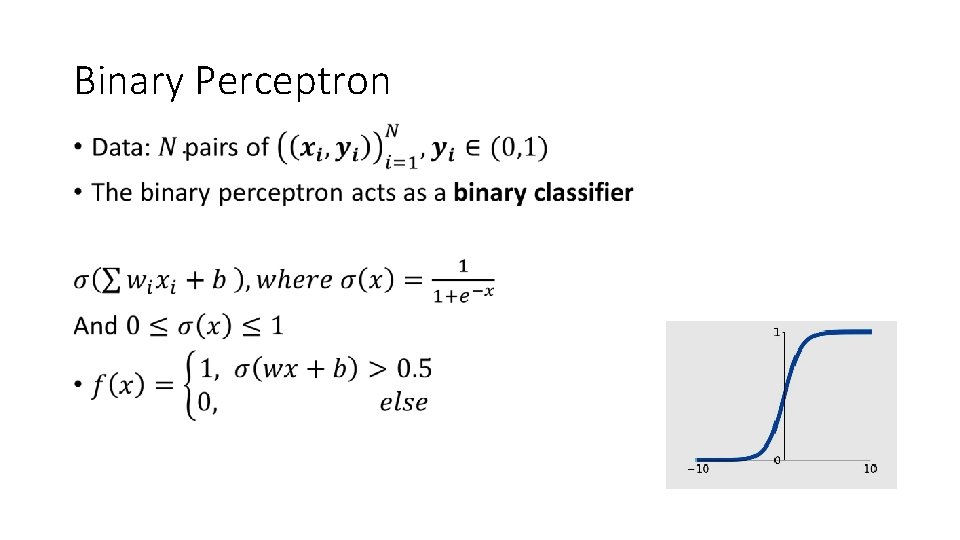
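The slide formulas for the vanilla and sigmoid perceptrons did not survive extraction, so the sketch below assumes the standard definitions: a linear score w·x + b followed by either a hard threshold (vanilla) or a sigmoid. The function names and the hand-picked weights (which implement logical AND) are illustrative assumptions, not taken from the slides.

```python
import numpy as np

def sigmoid(z):
    """Logistic function: squashes any real score into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def linear_score(x, w, b):
    """The linear part shared by both variants: w . x + b."""
    return float(np.dot(w, x) + b)

def vanilla_perceptron(x, w, b):
    """Hard decision: 1 if the score is positive, otherwise 0."""
    return 1 if linear_score(x, w, b) > 0 else 0

def sigmoid_perceptron(x, w, b):
    """Soft decision: a value in (0, 1), readable as P(class = 1 | x)."""
    return float(sigmoid(linear_score(x, w, b)))

# Hand-picked weights (assumption): the score is positive only for x = (1, 1)
w = np.array([1.0, 1.0])
b = -1.5

for x in ([0, 0], [0, 1], [1, 0], [1, 1]):
    x = np.array(x, dtype=float)
    print(x, vanilla_perceptron(x, w, b), round(sigmoid_perceptron(x, w, b), 3))
```

Both variants share the same linear decision boundary; the sigmoid version only replaces the hard 0/1 output with a smooth, differentiable one.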

Binary Perceptron

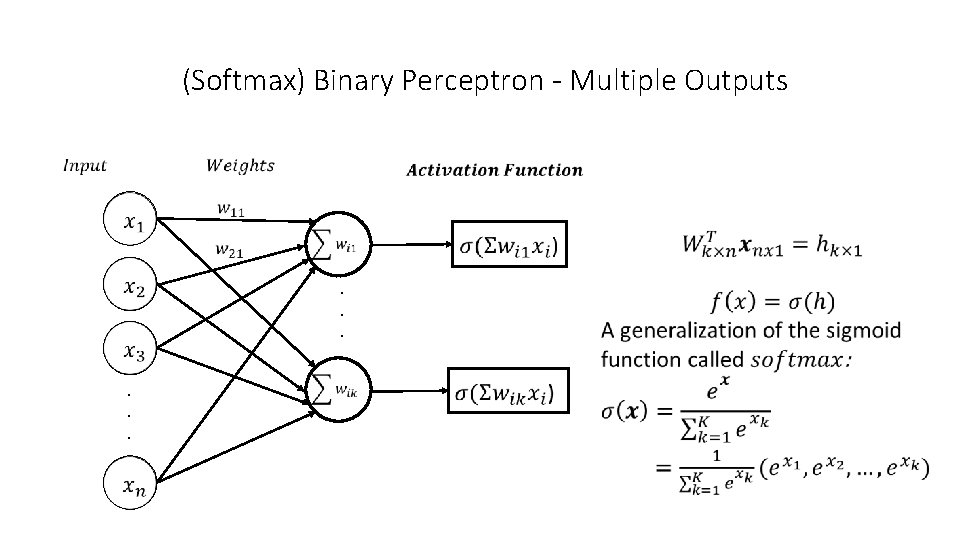

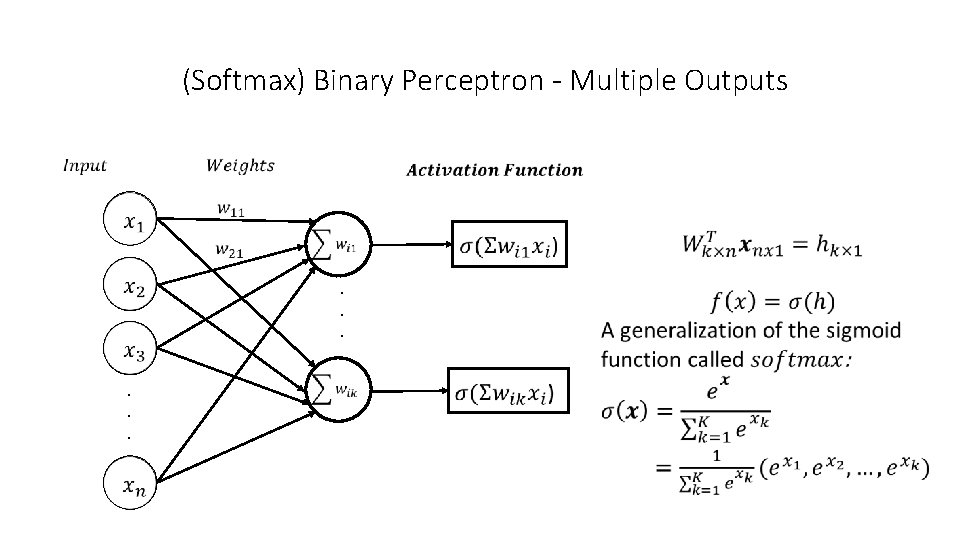

(Softmax) Binary Perceptron - Multiple Outputs

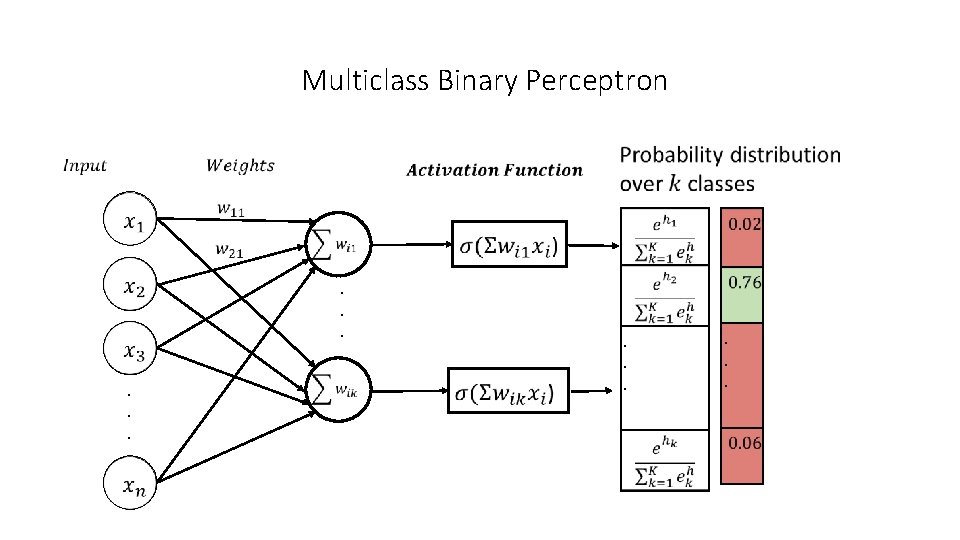

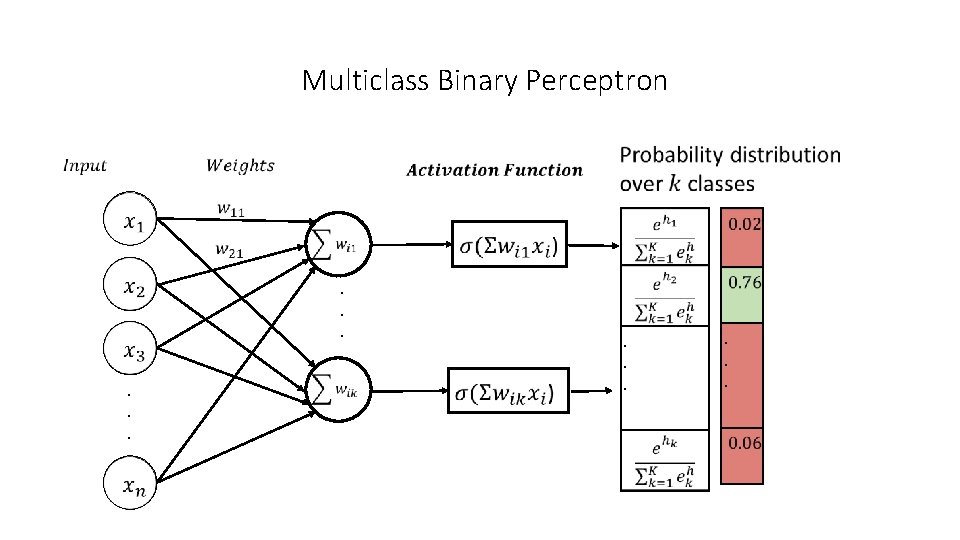

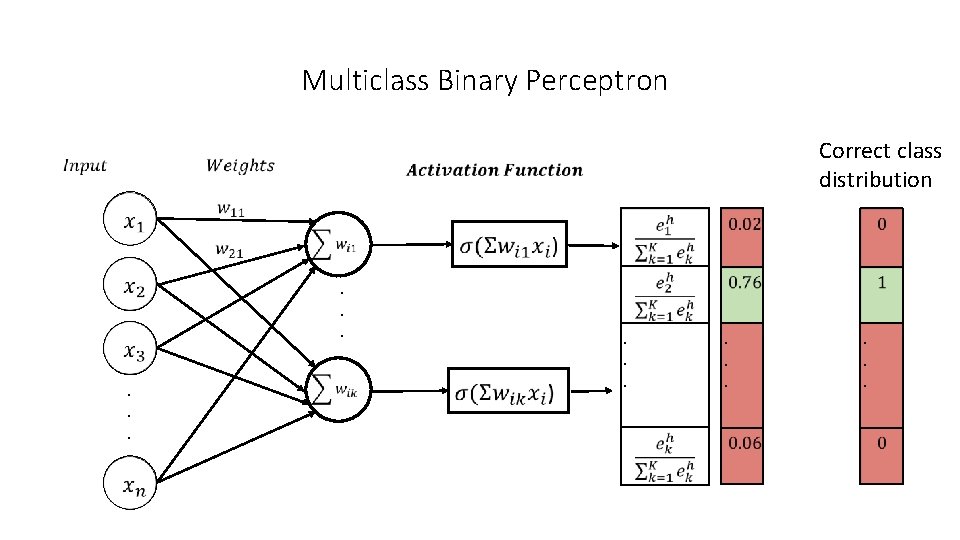
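For the multiple-output case, a softmax turns one score per class into a probability distribution. The sketch below assumes the standard softmax definition (the slide's own formula was lost in extraction); the example scores are made up.

```python
import numpy as np

def softmax(scores):
    """Map a vector of class scores to probabilities that sum to 1."""
    # Subtracting the maximum does not change the result but avoids overflow in exp
    shifted = scores - np.max(scores)
    exps = np.exp(shifted)
    return exps / np.sum(exps)

# Made-up scores for three classes
scores = np.array([1.0, 0.5, 2.0])
probs = softmax(scores)

print(probs)
print(probs.sum())
```

The class with the largest score always receives the largest probability, and adding a constant to every score leaves the output unchanged.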

Multiclass Binary Perceptron

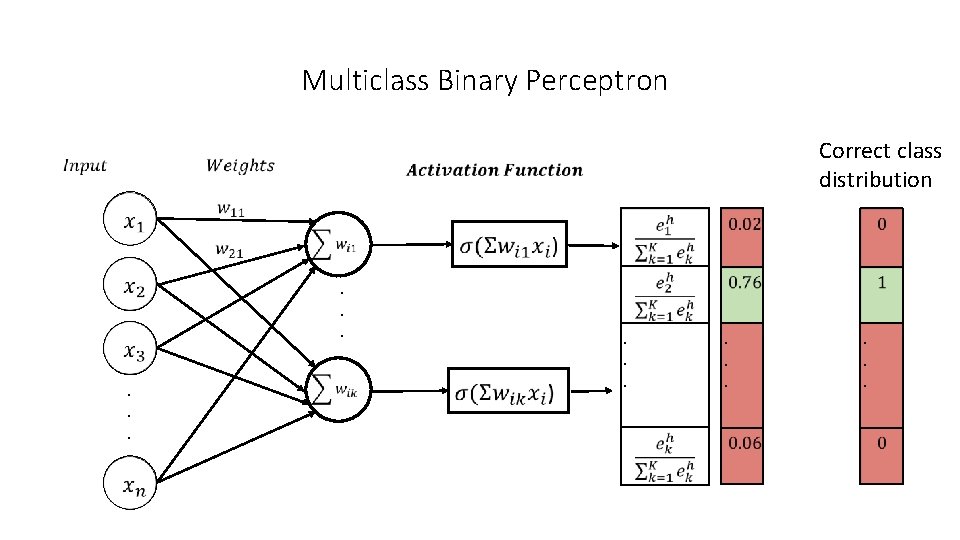

Multiclass Binary Perceptron: correct class distribution

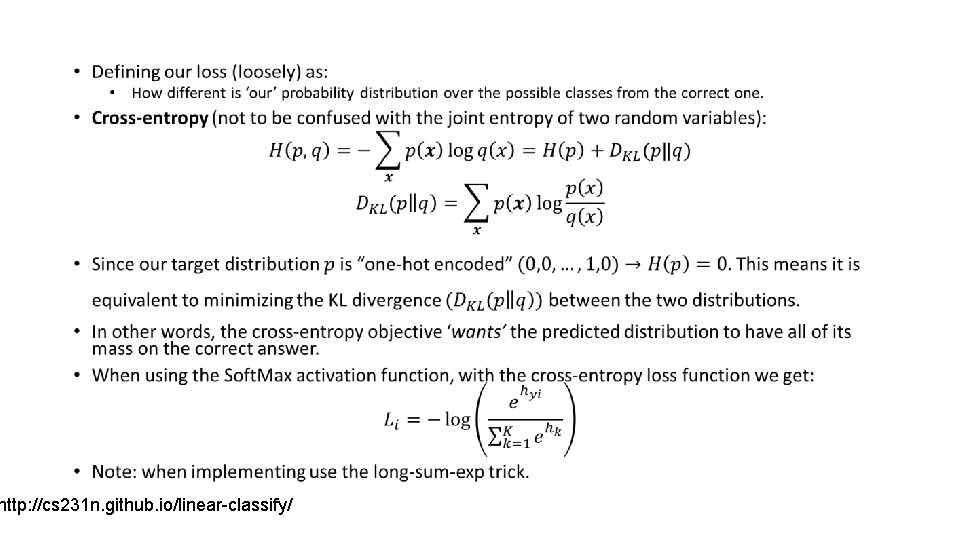

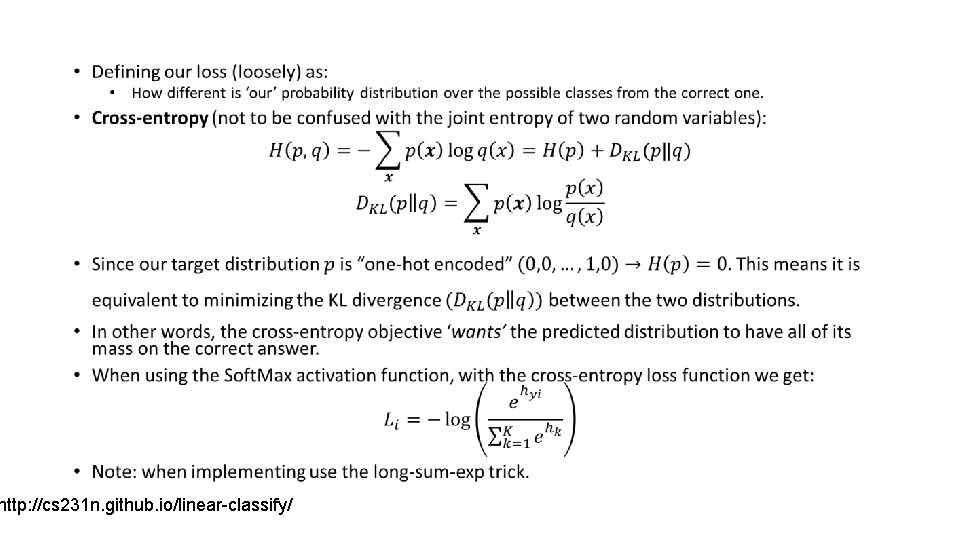
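One common way to compare a predicted distribution with the correct class distribution is the cross-entropy loss. Whether these slides use exactly this loss is an assumption here, since the formulas were lost in extraction. The predicted values reuse the cat/dog/platypus probabilities from the supervised learning slide, and the choice of platypus as the true class is hypothetical.

```python
import math

def cross_entropy(predicted, correct):
    """H(correct, predicted) = -sum_k correct[k] * log(predicted[k])."""
    # Terms with correct[k] == 0 contribute nothing, so they are skipped
    return -sum(q * math.log(p) for p, q in zip(predicted, correct) if q > 0)

predicted = [0.2, 0.14, 0.66]   # cat, dog, platypus
correct = [0.0, 0.0, 1.0]       # one-hot target; true class assumed to be platypus

loss = cross_entropy(predicted, correct)
print(loss)
```

With a one-hot target the loss reduces to the negative log-probability assigned to the true class, so it shrinks toward 0 as that probability approaches 1.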

http://cs231n.github.io/linear-classify/

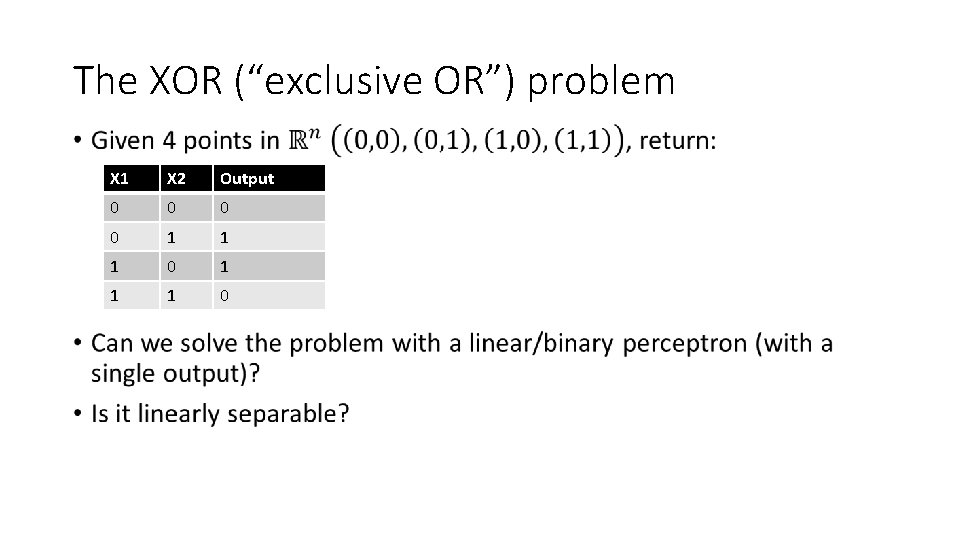

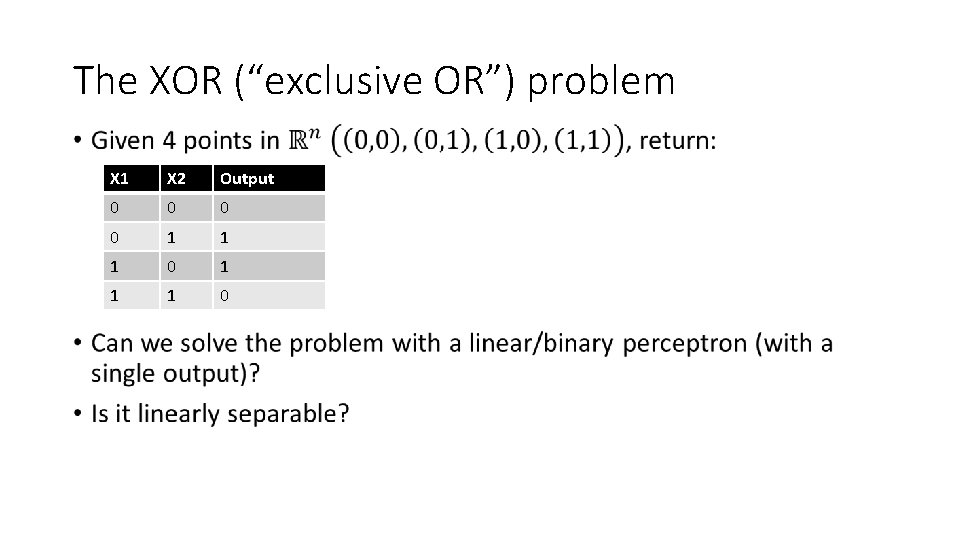

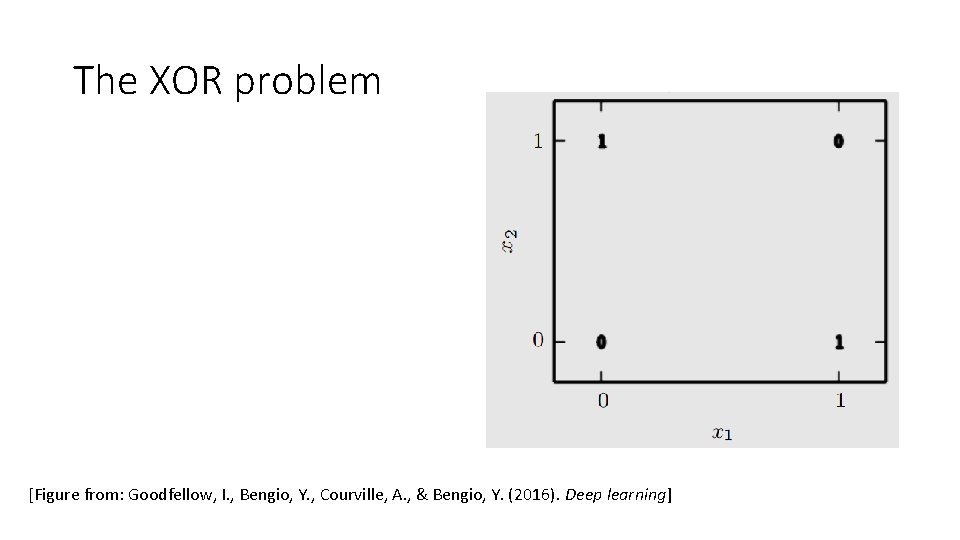

The XOR (“exclusive OR”) problem

| X1 | X2 | Output |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

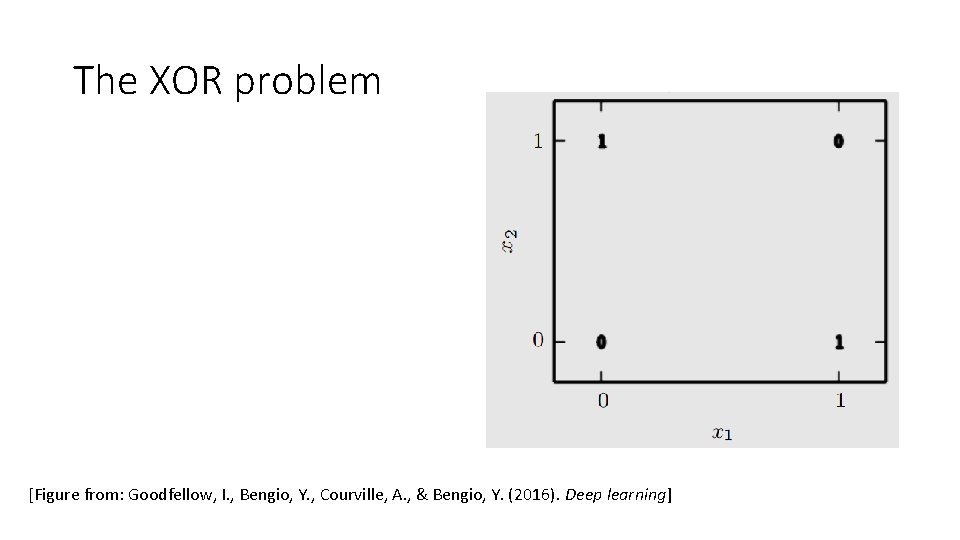

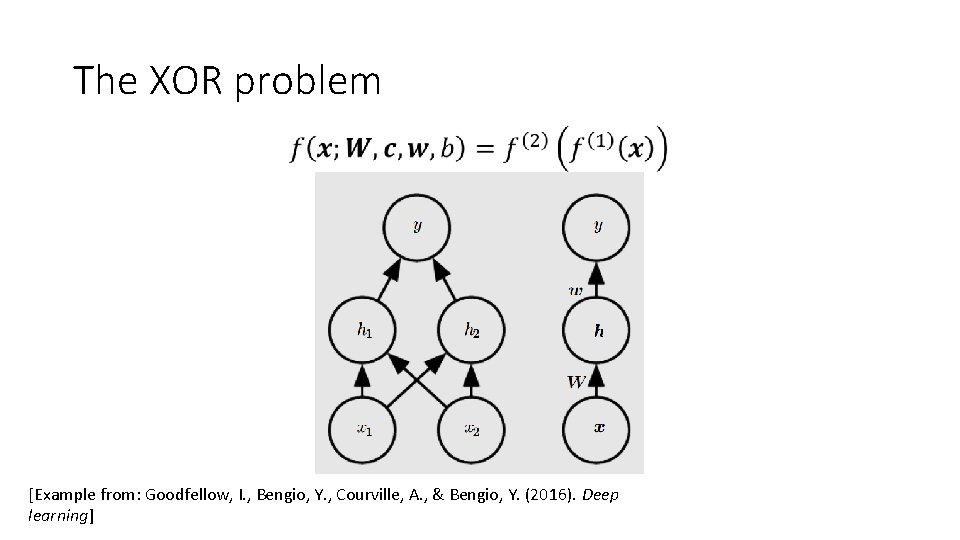
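One way to see why a purely linear model struggles with XOR is to fit one by least squares to the four input/output pairs. This is a sketch, not the procedure used in the slides; the variable names are illustrative.

```python
import numpy as np

# The four XOR inputs and their targets
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
y = np.array([0, 1, 1, 0], dtype=float)

# Append a column of ones so the bias is learned together with the weights
A = np.hstack([X, np.ones((4, 1))])

# Least-squares fit of the linear model y ~ w . x + b
params, *_ = np.linalg.lstsq(A, y, rcond=None)
w, b = params[:2], params[2]
predictions = A @ params

print("w =", w, "b =", b)
print("predictions =", predictions)
```

The best linear fit gives the same prediction for all four inputs, so no threshold on it can separate the two classes.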

The XOR problem [Figure from: Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning]

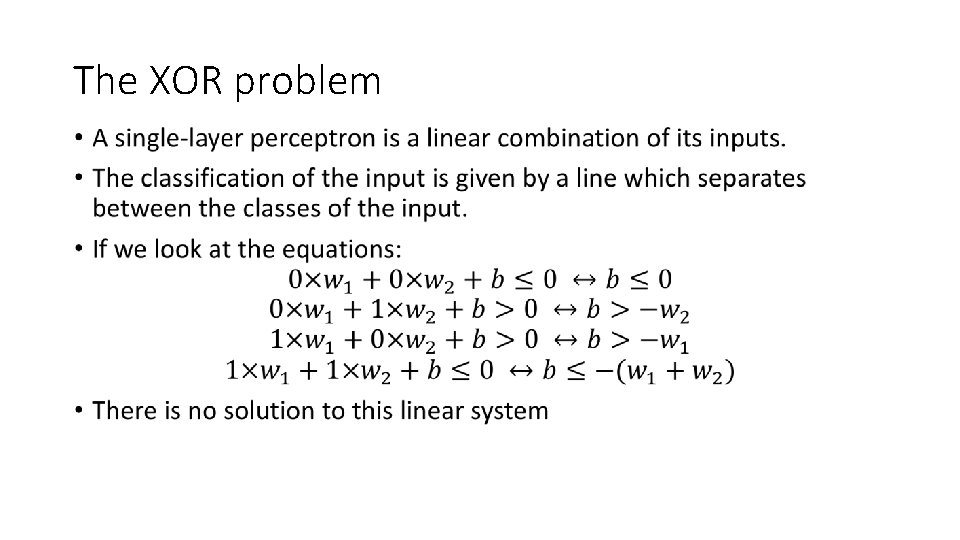

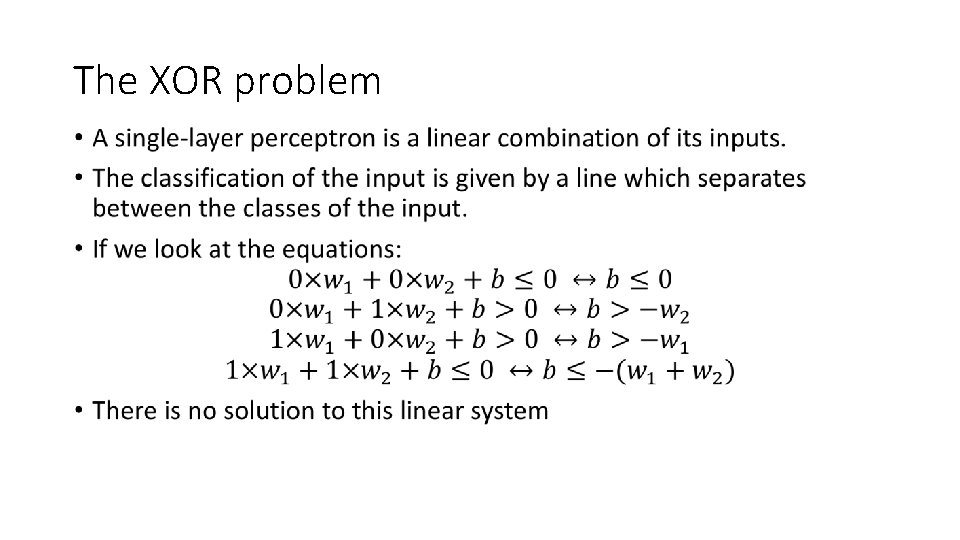

The XOR problem

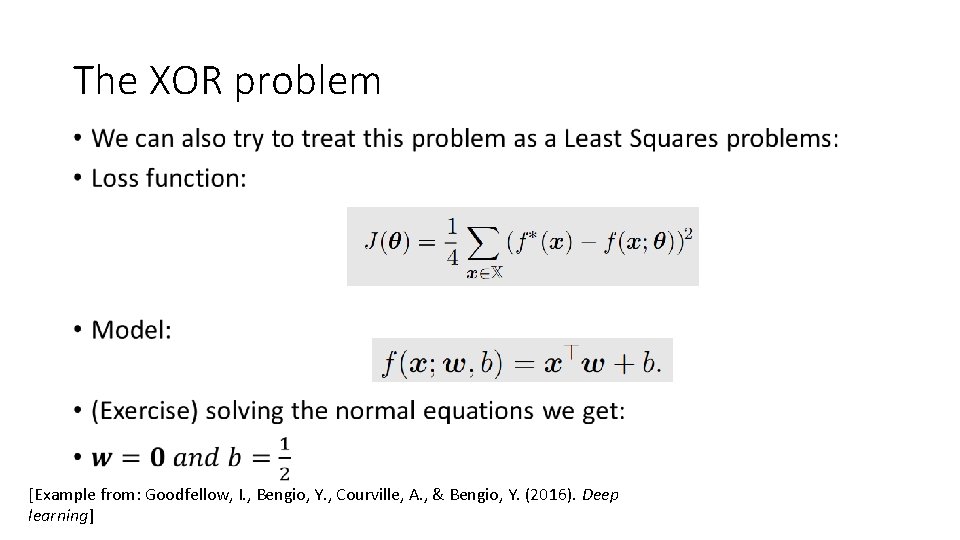

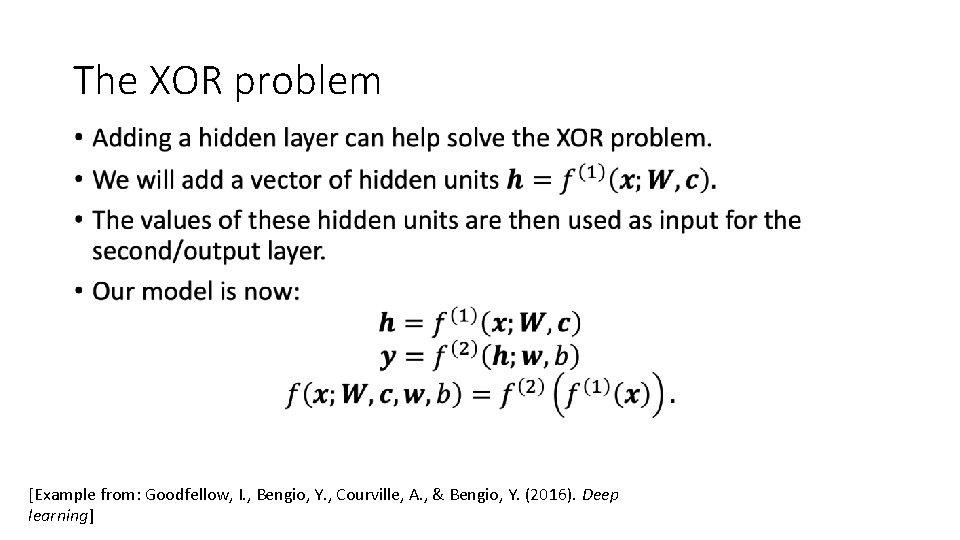

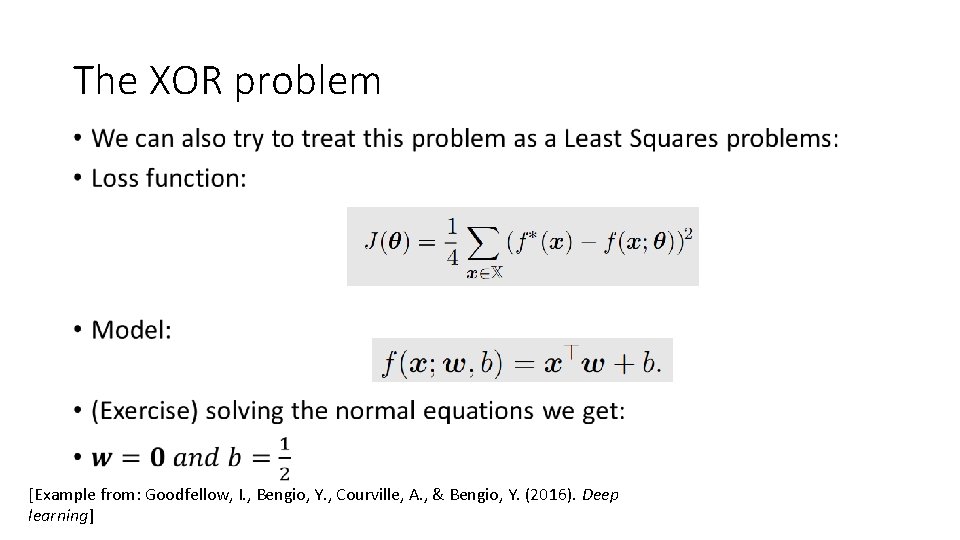

The XOR problem [Example from: Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning]

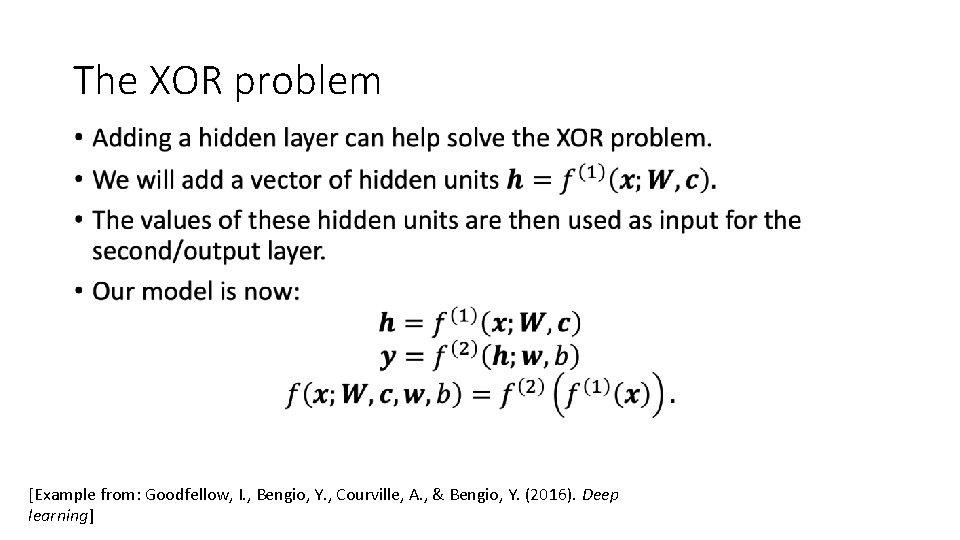

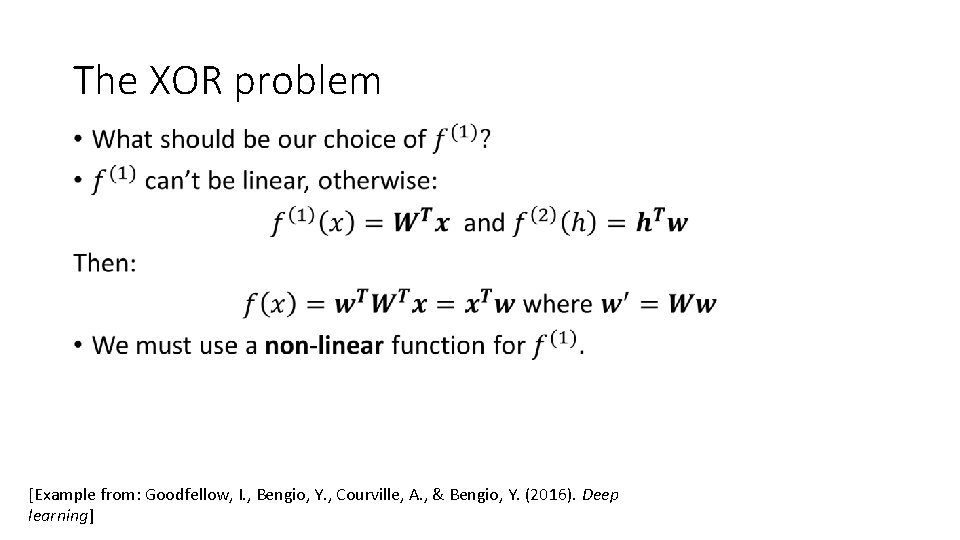

The XOR problem [Example from: Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning]

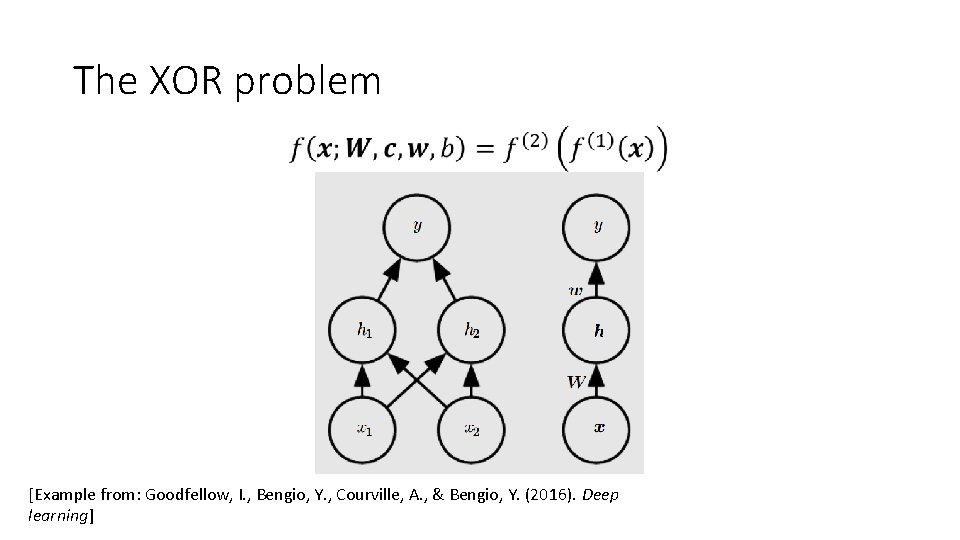

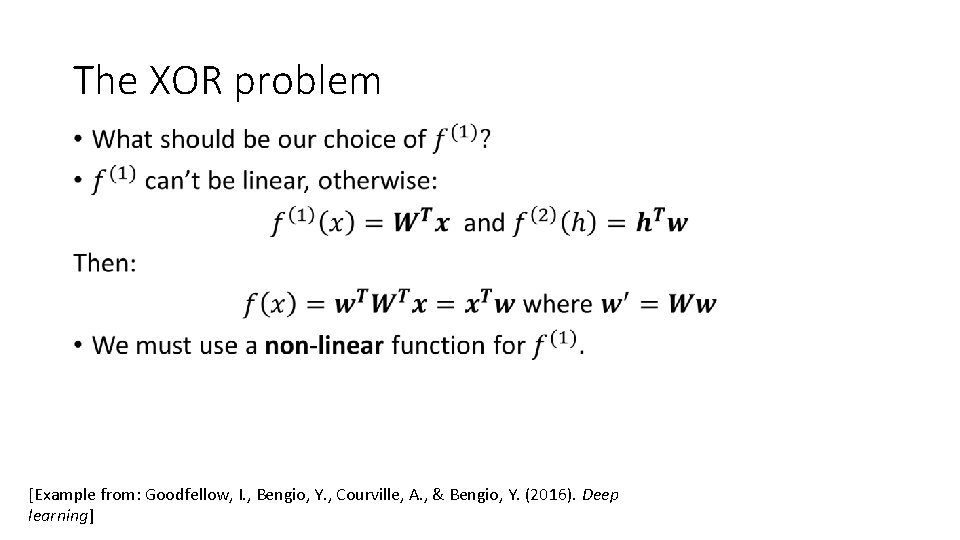

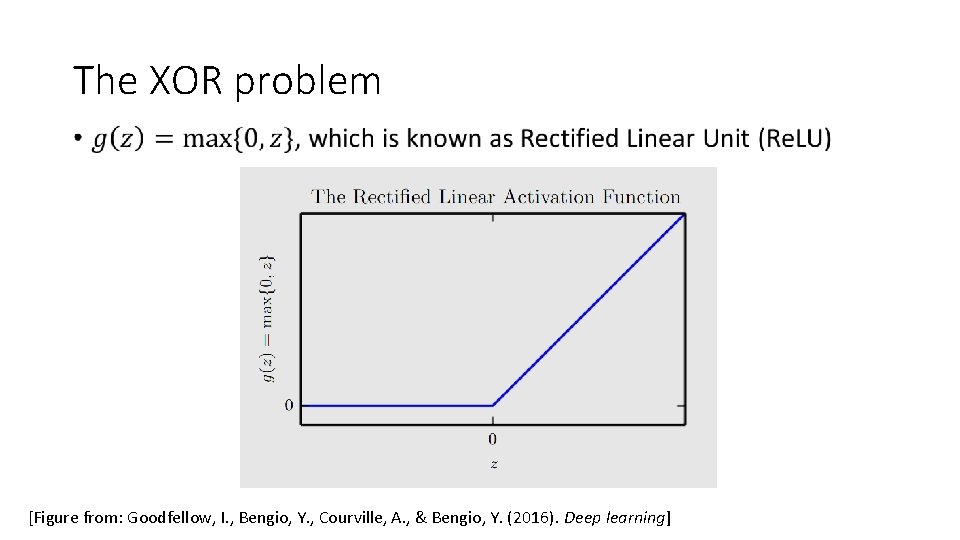

The XOR problem [Example from: Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning]

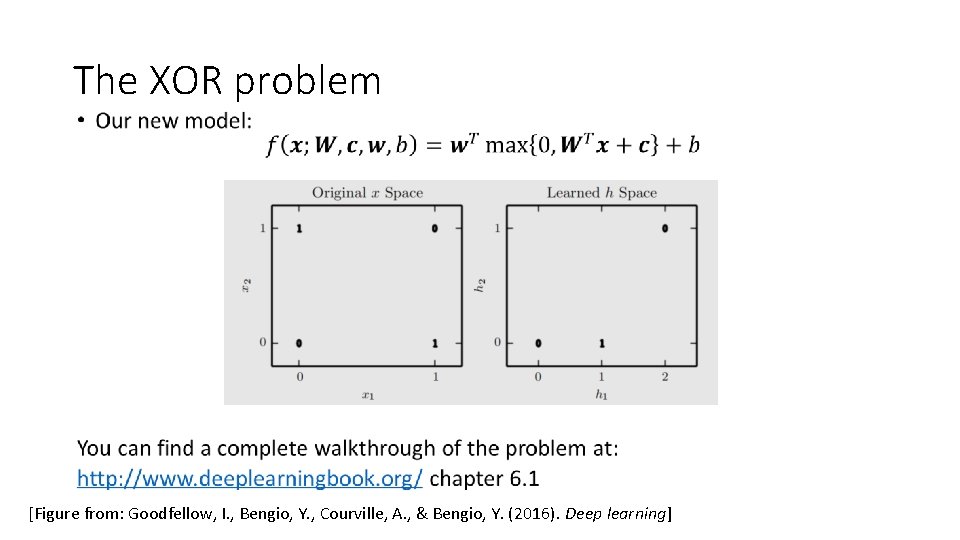

The XOR problem [Example from: Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning]

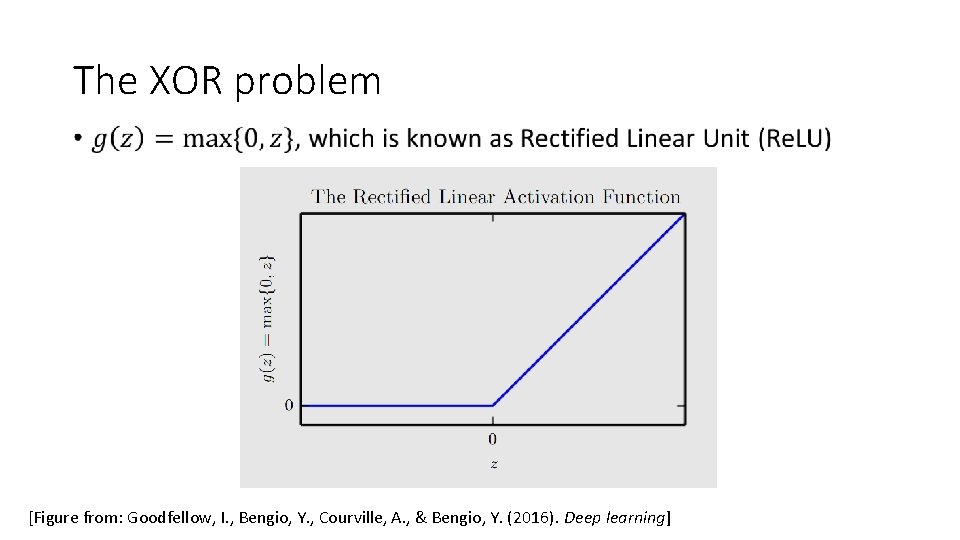

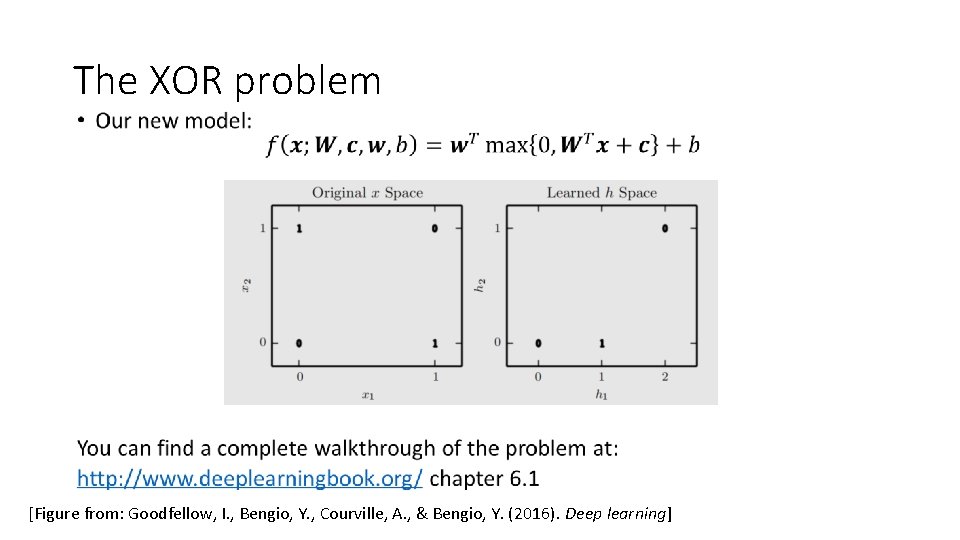
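A network with one hidden layer of two ReLU units can represent XOR exactly. The weights below are one hand-picked solution; they are believed to match the one given in the cited textbook example, but treat the specific values as an assumption, since the slide content itself was lost in extraction.

```python
import numpy as np

def relu(z):
    """Rectified linear unit, applied elementwise."""
    return np.maximum(z, 0.0)

# The four XOR inputs, one per row
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)

# Hand-picked parameters (assumed values, not learned here)
W = np.array([[1.0, 1.0],
              [1.0, 1.0]])      # input -> hidden weights
c = np.array([0.0, -1.0])       # hidden biases
w = np.array([1.0, -2.0])       # hidden -> output weights
b = 0.0                         # output bias

def hidden(X):
    """Hidden representation h = relu(X W + c)."""
    return relu(X @ W + c)

def mlp(X):
    """Network output f(x) = w . h + b."""
    return hidden(X) @ w + b

print(hidden(X))   # inputs mapped into the hidden space
print(mlp(X))      # network outputs for the four inputs
```

In the hidden space the inputs (0, 1) and (1, 0) collapse onto the same point, which is what makes the problem solvable by the linear output layer.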

The XOR problem [Figure from: Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning]

The XOR problem [Figure from: Goodfellow, I., Bengio, Y., & Courville, A. (2016). Deep Learning]

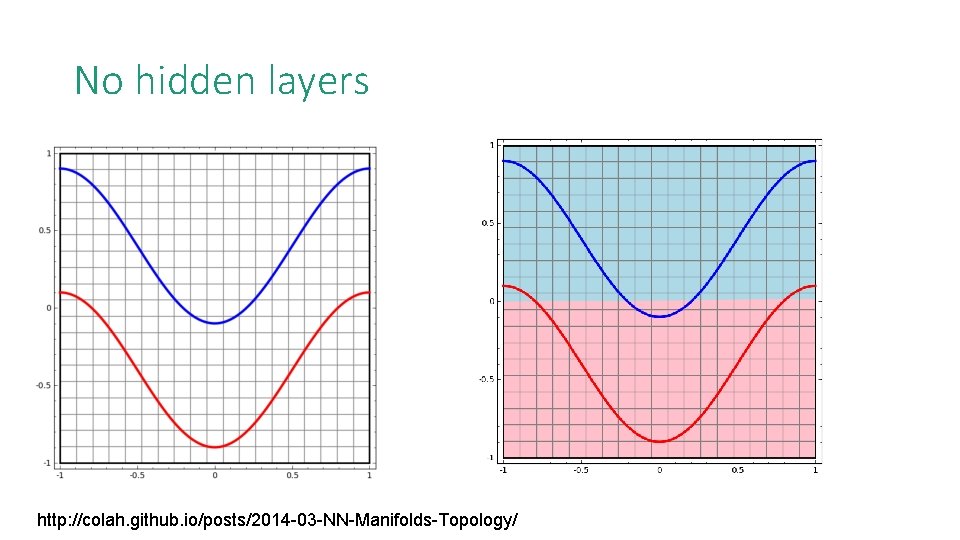

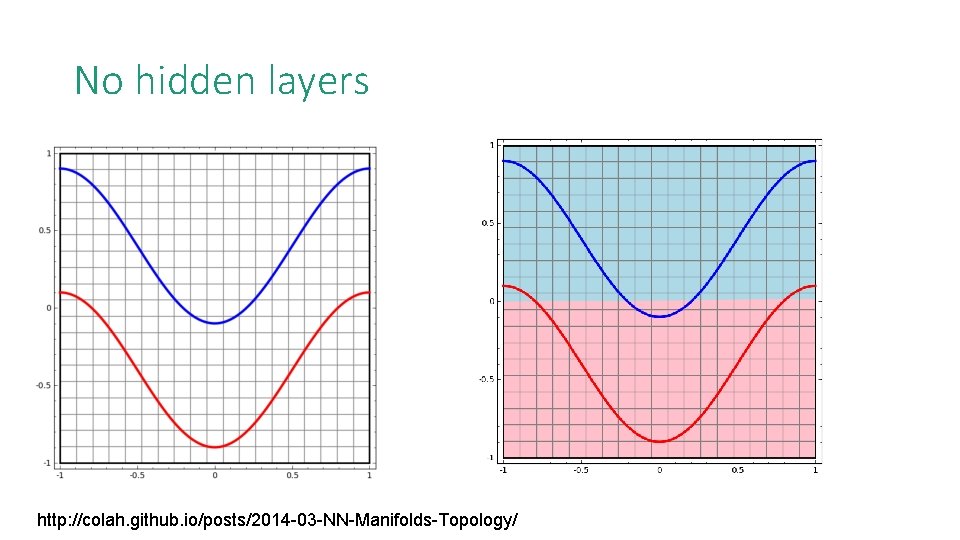

No hidden layers http://colah.github.io/posts/2014-03-NN-Manifolds-Topology/

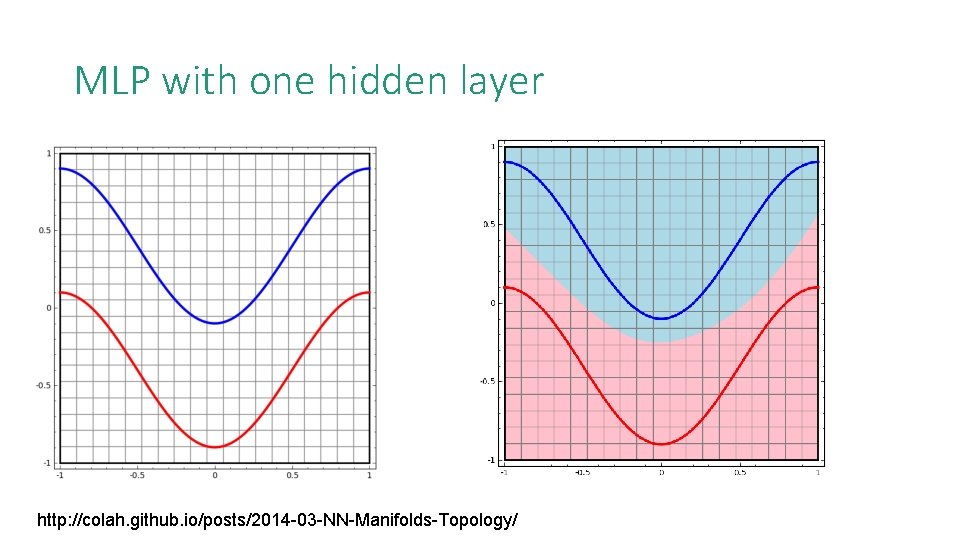

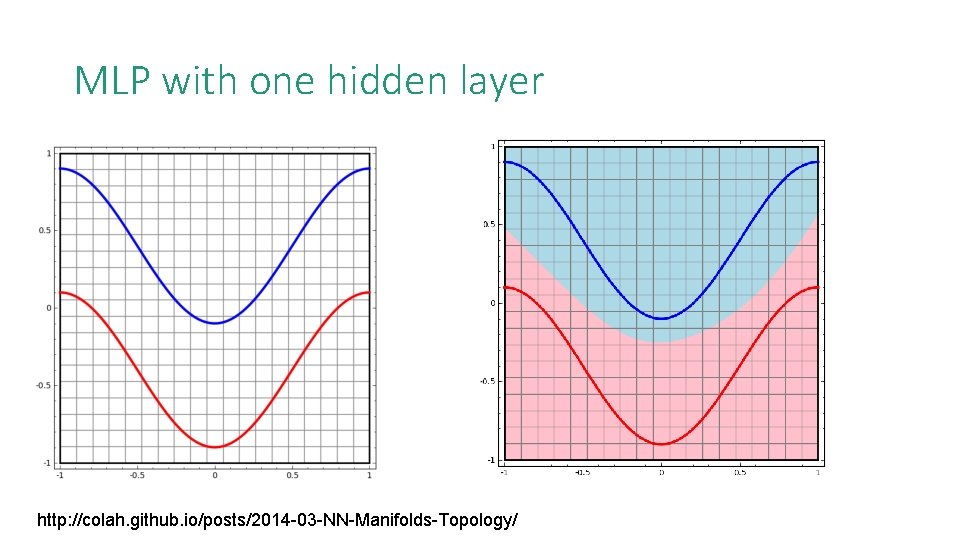

MLP with one hidden layer http://colah.github.io/posts/2014-03-NN-Manifolds-Topology/

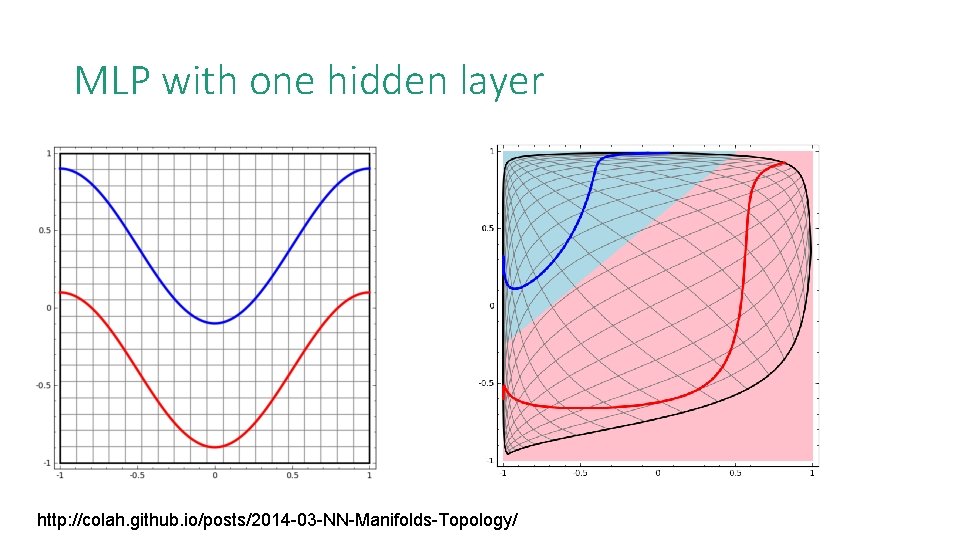

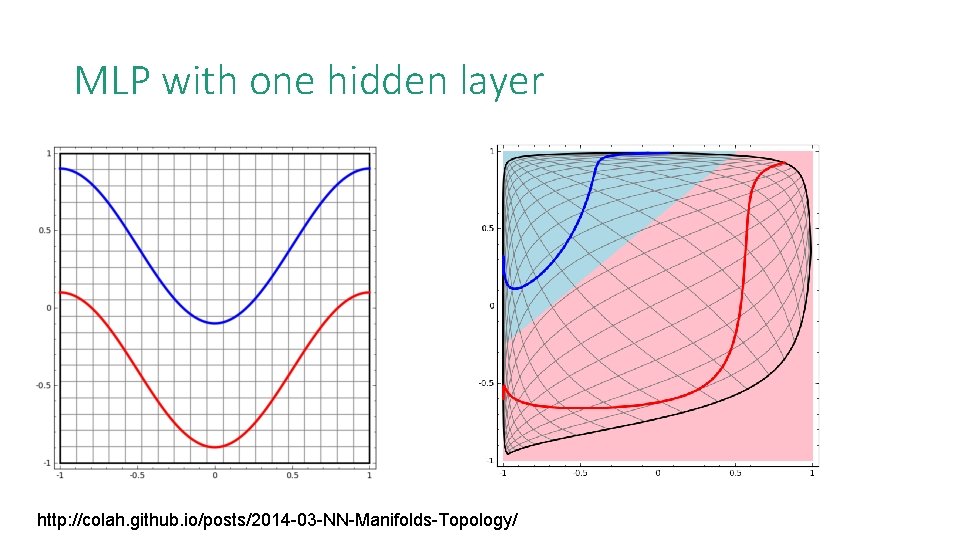

MLP with one hidden layer http://colah.github.io/posts/2014-03-NN-Manifolds-Topology/

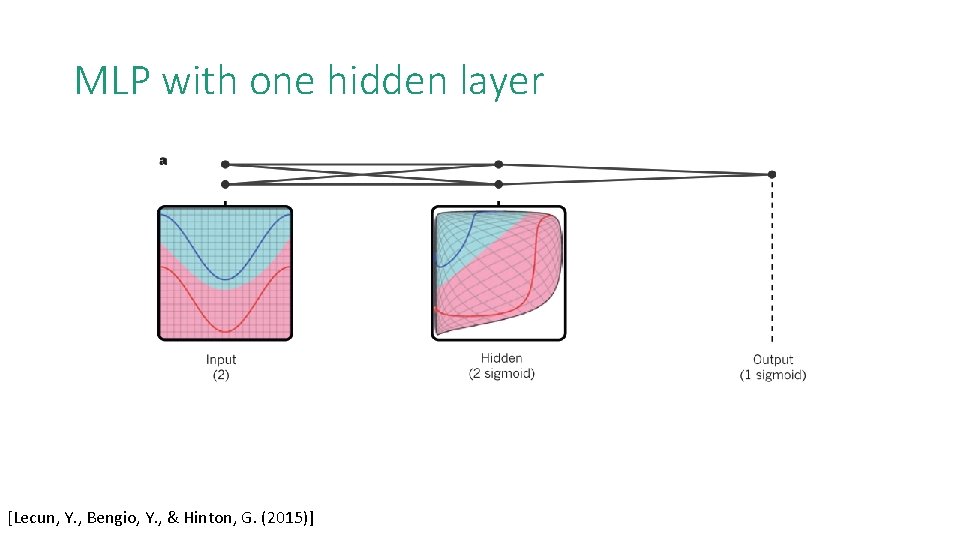

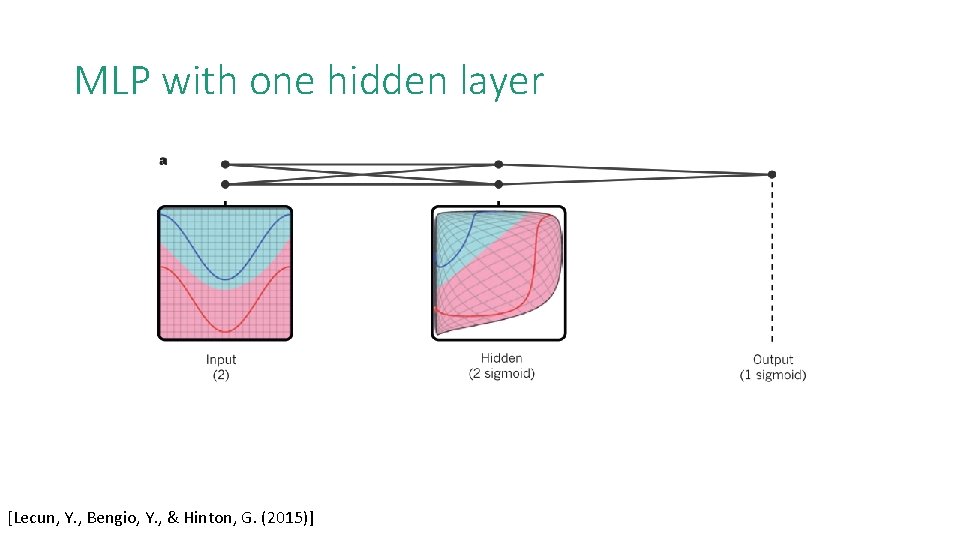

MLP with one hidden layer [LeCun, Y., Bengio, Y., & Hinton, G. (2015)]

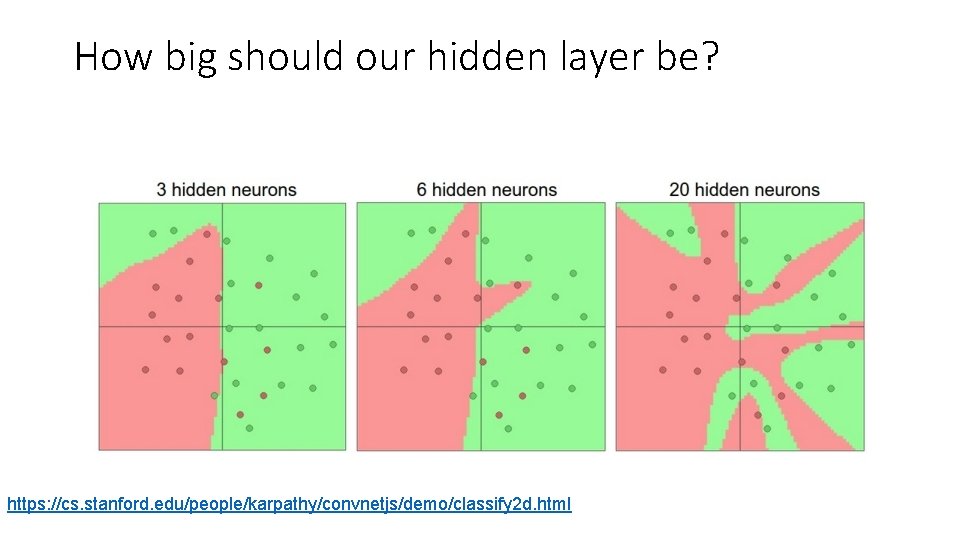

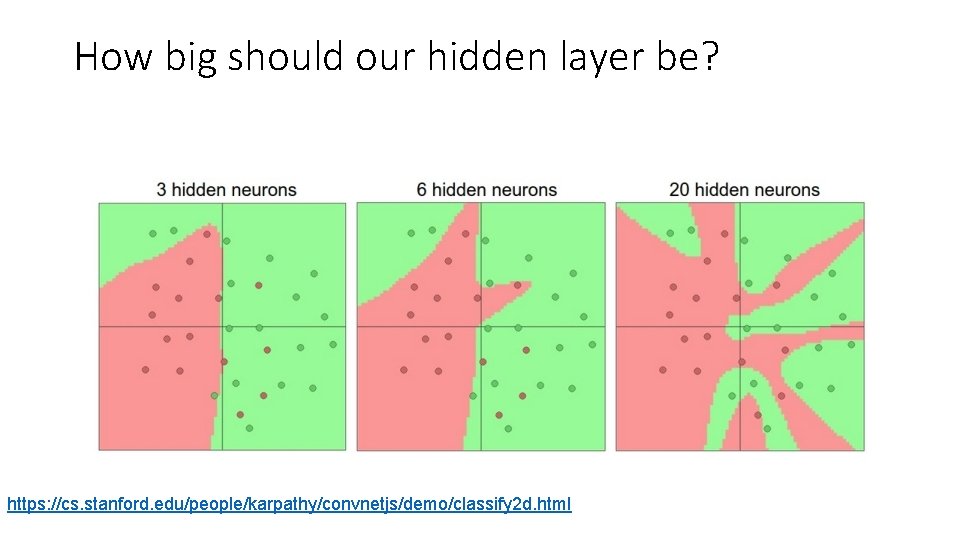

How big should our hidden layer be? https://cs.stanford.edu/people/karpathy/convnetjs/demo/classify2d.html

Summary

- Deep learning is a class of supervised learning algorithms.
- A linear binary perceptron acts as a linear classifier.
- Hidden layers (each followed by a non-linear activation function) allow a nonlinear transformation of the input, so that it can become linearly separable.
- The number of neurons and connections in each layer determines our model capacity.
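As a rough illustration of the last point, the number of trainable parameters in a fully connected network grows with the layer sizes. Parameter count is only one crude proxy for capacity, and the helper name `count_parameters` is hypothetical.

```python
def count_parameters(layer_sizes):
    """Count weights and biases of a fully connected network.

    layer_sizes lists the number of units per layer, input first,
    e.g. [2, 2, 1] for 2 inputs, 2 hidden units, and 1 output.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        total += n_in * n_out   # one weight per connection
        total += n_out          # one bias per unit in the receiving layer
    return total

print(count_parameters([2, 2, 1]))   # a small network with two hidden units
print(count_parameters([2, 8, 1]))   # a wider hidden layer
```

Widening the hidden layer from 2 to 8 units increases the count from 9 to 33 parameters, giving the model more freedom to bend its decision boundary.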