
Curse your bones, Availability Zones!

Introduction/Background
● Craig Anderson, Principal Cloud Architect, AT&T
● The target audience is OpenStack providers that want to understand Availability Zones, how to get the most out of them, and associated challenges
● The first half of the talk will cover high-level Availability Zone concept and design
● The second half of the talk will cover low-level details of how Availability Zones are implemented in three OpenStack projects
● “AZ(s)” will be used as shorthand for Availability Zone(s)

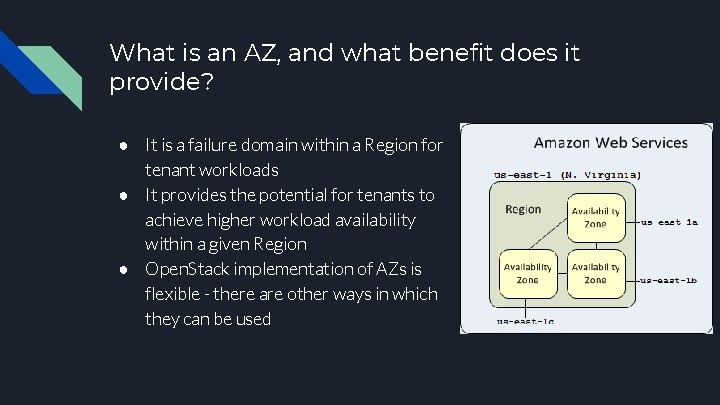

What is an AZ, and what benefit does it provide?
● It is a failure domain within a Region for tenant workloads
● It provides the potential for tenants to achieve higher workload availability within a given Region
● OpenStack implementation of AZs is flexible - there are other ways in which they can be used

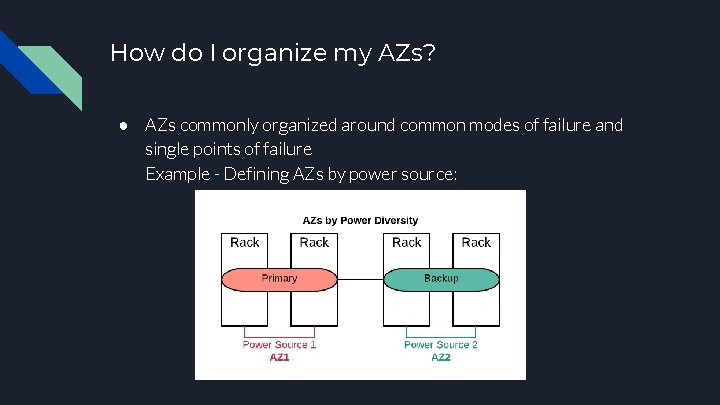

How do I organize my AZs?
● AZs are commonly organized around common modes of failure and single points of failure
Example - Defining AZs by power source:

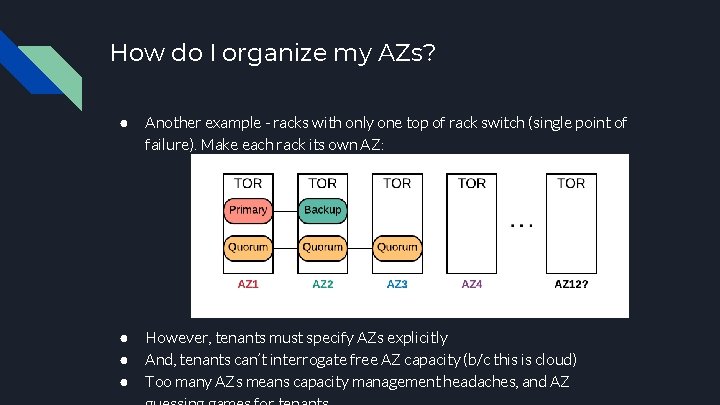

How do I organize my AZs?
● Another example - racks with only one top of rack switch (single point of failure). Make each rack its own AZ:
● However, tenants must specify AZs explicitly
● And, tenants can’t interrogate free AZ capacity (b/c this is cloud)
● Too many AZs means capacity management headaches, and AZ

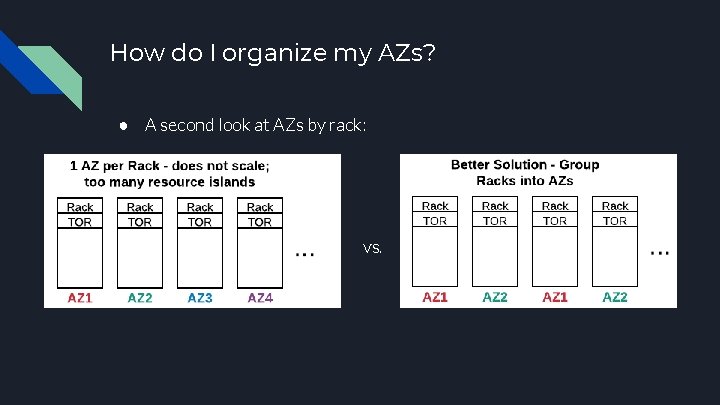

How do I organize my AZs? ● A second look at AZs by rack: vs.

What is the real value proposition for my AZs?
● But, it’s hard to pin down the value provided by your AZs for these kinds of infrequent failure scenarios
  ○ Data on failure modes is often sparse or unavailable
● Also, you may not have clear single points of failure to define AZs against
  ○ It’s not uncommon for data centers to be built with power diversity (A/B side) to the rack, redundant server PSUs, primary & backup TORs in each rack, etc.

A better value proposition: Planned Maintenance
Planned maintenance activities often account for more downtime than random hardware failures. Ex:
● Data center maintenance - moving & upgrading equipment, recabling, rebuilding a rack, HVAC & electrical work, etc.
● Disruptive software updates - for the kernel, QEMU, certain security patches, OpenStack and operating system upgrades, etc.
The catch is that these maintenance processes need to be aware of your AZs and need to be adapted to take advantage of them

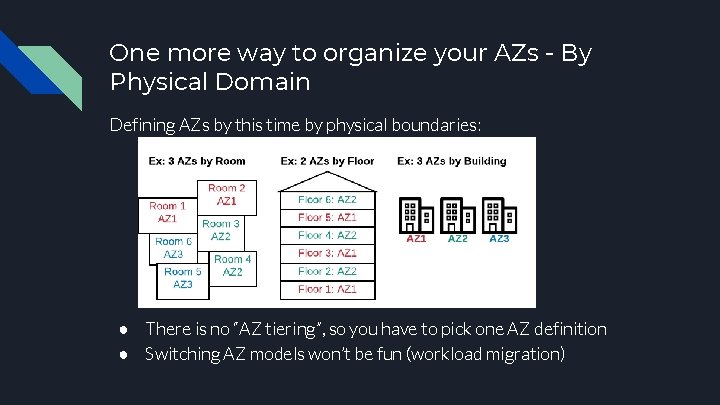
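
The AZ-awareness requirement for maintenance can be sketched in a few lines of Python. This is a minimal illustration, not an OpenStack tool: the function name `maintenance_batches` and the host names are hypothetical, and a real process would also drain or migrate workloads before touching each batch.

```python
from collections import defaultdict


def maintenance_batches(host_to_az):
    """Group hosts into one batch per AZ so only one AZ is disrupted at a time."""
    hosts_by_az = defaultdict(list)
    for host, az in host_to_az.items():
        hosts_by_az[az].append(host)
    # Sort AZs and hosts so the maintenance order is deterministic
    return [(az, sorted(hosts)) for az, hosts in sorted(hosts_by_az.items())]


# Hypothetical inventory: host name -> AZ
inventory = {
    "compute1": "AZ1",
    "compute2": "AZ1",
    "compute3": "AZ2",
    "compute4": "AZ2",
}

for az, batch in maintenance_batches(inventory):
    print(f"Maintenance window for {az}: {', '.join(batch)}")
```

Tenants that spread their workload across AZs then lose at most one AZ's worth of capacity during any single window.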

One more way to organize your AZs - By Physical Domain
Defining AZs this time by physical boundaries:
● There is no “AZ tiering”, so you have to pick one AZ definition
● Switching AZ models won’t be fun (workload migration)

Other uses for AZs
● Using AZs to distinguish cloud offerings - e.g., “VMWare AZ 1” and “KVM AZ 1”
  ○ Instead can use hypervisor_type image metadata; private flavors & volume types
● Special tenant(s) with their own “private” AZ(s); security concern
  ○ Better to use multi-tenancy isolation filter; private flavors. AZs don’t provide security; they are usable and visible to everyone
● One AZ per compute host (targeted workload stack per node)
  ○ Affinity / anti-affinity; sameHost / differentHost filters

AZ Implementations in OpenStack

The implementation of AZs varies between OpenStack projects
● Most OpenStack projects have some concept of AZs
● However, the implementation of AZs varies from project to project
● Therefore, planning your AZs requires a case-by-case look at the OpenStack projects you will be using
● We will look at the AZ implementation in detail for just three projects - Nova, Cinder, and Neutron

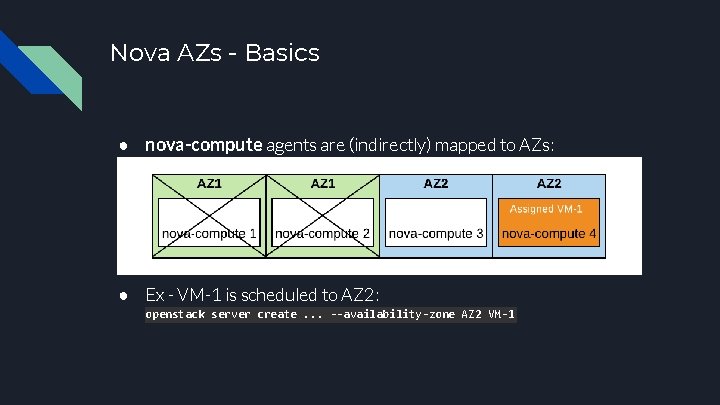

Nova AZs - Basics
● nova-compute agents are (indirectly) mapped to AZs:
● Ex - VM-1 is scheduled to AZ2:
    openstack server create ... --availability-zone AZ2 VM-1

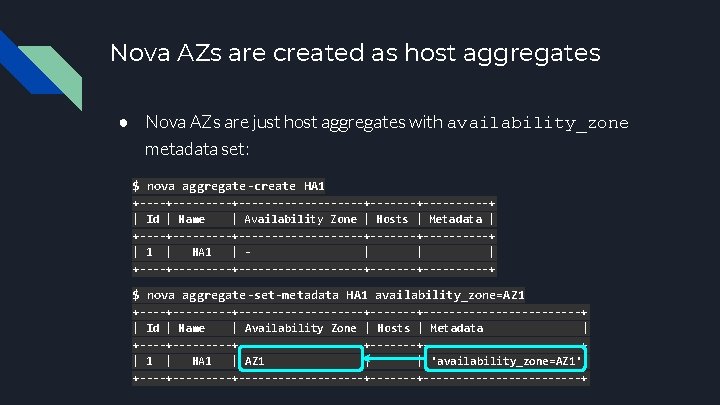

Nova AZs are created as host aggregates
● Nova AZs are just host aggregates with availability_zone metadata set:
    $ nova aggregate-create HA1
    +----+------+-------------------+-------+----------+
    | Id | Name | Availability Zone | Hosts | Metadata |
    +----+------+-------------------+-------+----------+
    | 1  | HA1  |                   |       |          |
    +----+------+-------------------+-------+----------+
    $ nova aggregate-set-metadata HA1 availability_zone=AZ1
    +----+------+-------------------+-------+-------------------------+
    | Id | Name | Availability Zone | Hosts | Metadata                |
    +----+------+-------------------+-------+-------------------------+
    | 1  | HA1  | AZ1               |       | 'availability_zone=AZ1' |
    +----+------+-------------------+-------+-------------------------+

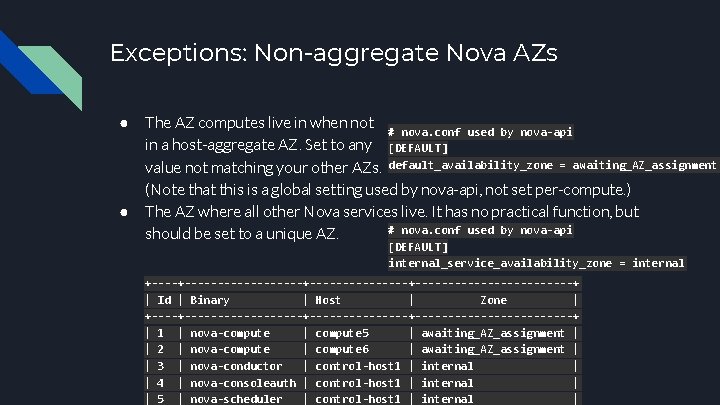

Exceptions: Non-aggregate Nova AZs
● The AZ computes live in when not in a host-aggregate AZ. Set to any value not matching your other AZs.
    # nova.conf used by nova-api
    [DEFAULT]
    default_availability_zone = awaiting_AZ_assignment
  (Note that this is a global setting used by nova-api, not set per-compute.)
● The AZ where all other Nova services live. It has no practical function, but should be set to a unique AZ.
    # nova.conf used by nova-api
    [DEFAULT]
    internal_service_availability_zone = internal

    +----+------------------+---------------+------------------------+
    | Id | Binary           | Host          | Zone                   |
    +----+------------------+---------------+------------------------+
    | 1  | nova-compute     | compute5      | awaiting_AZ_assignment |
    | 2  | nova-compute     | compute6      | awaiting_AZ_assignment |
    | 3  | nova-conductor   | control-host1 | internal               |
    | 4  | nova-consoleauth | control-host1 | internal               |
    | 5  | nova-scheduler   | control-host1 | internal               |
    +----+------------------+---------------+------------------------+

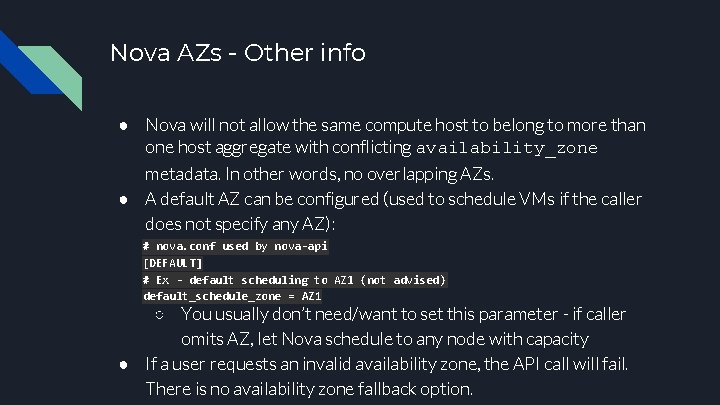
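
How the two settings decide the zone shown for each service can be sketched as follows. This is a simplified model for illustration, not Nova's code: `service_zone` and the `aggregate_az_by_host` mapping are hypothetical names, and the defaults shown ("nova" and "internal") are assumed to match Nova's stock option defaults.

```python
def service_zone(binary, host, aggregate_az_by_host,
                 default_availability_zone="nova",
                 internal_service_availability_zone="internal"):
    """Return the zone a Nova service would be listed under (illustrative only)."""
    if binary != "nova-compute":
        # All non-compute services share the internal service AZ
        return internal_service_availability_zone
    # Computes report their aggregate's AZ, else the configured default AZ
    return aggregate_az_by_host.get(host, default_availability_zone)


# Hypothetical state: only compute1 has been added to an AZ host aggregate
aggregate_az_by_host = {"compute1": "AZ1"}

services = [
    ("nova-compute", "compute1"),
    ("nova-compute", "compute5"),
    ("nova-scheduler", "control-host1"),
]
for binary, host in services:
    zone = service_zone(binary, host, aggregate_az_by_host,
                        default_availability_zone="awaiting_AZ_assignment")
    print(f"{binary:16} {host:14} {zone}")
```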

Nova AZs - Other info
● Nova will not allow the same compute host to belong to more than one host aggregate with conflicting availability_zone metadata. In other words, no overlapping AZs.
● A default AZ can be configured (used to schedule VMs if the caller does not specify any AZ):
    # nova.conf used by nova-api
    [DEFAULT]
    # Ex - default scheduling to AZ1 (not advised)
    default_schedule_zone = AZ1
  ○ You usually don’t need/want to set this parameter - if caller omits AZ, let Nova schedule to any node with capacity
● If a user requests an invalid availability zone, the API call will fail. There is no availability zone fallback option.

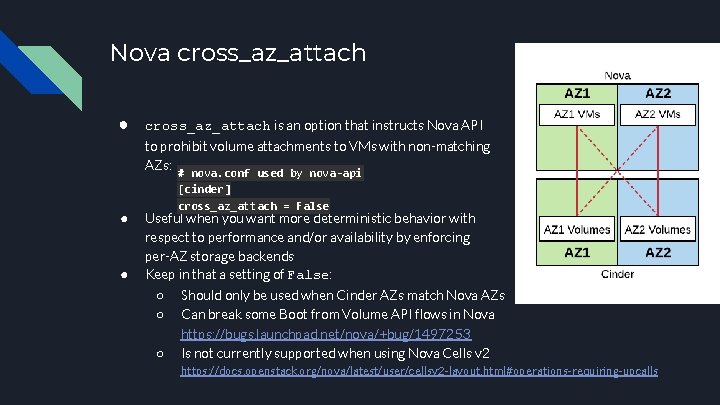
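
The no-overlap rule can be checked offline before creating aggregates. The sketch below is a hypothetical helper, not part of Nova: it assumes each aggregate is described by a plain dict with `name`, `hosts`, and `metadata` keys, and reports any host that would end up in more than one AZ.

```python
def find_az_conflicts(aggregates):
    """Return {host: [az, ...]} for hosts that appear in more than one AZ."""
    azs_by_host = {}
    for aggregate in aggregates:
        az = aggregate["metadata"].get("availability_zone")
        if az is None:
            continue  # A plain host aggregate, not an AZ
        for host in aggregate["hosts"]:
            azs_by_host.setdefault(host, set()).add(az)
    return {host: sorted(azs) for host, azs in azs_by_host.items() if len(azs) > 1}


# Hypothetical plan: compute2 is listed under two different AZs
planned = [
    {"name": "HA1", "hosts": ["compute1", "compute2"],
     "metadata": {"availability_zone": "AZ1"}},
    {"name": "HA2", "hosts": ["compute2", "compute3"],
     "metadata": {"availability_zone": "AZ2"}},
    {"name": "ssd-hosts", "hosts": ["compute1", "compute3"], "metadata": {}},
]

print(find_az_conflicts(planned))
```

A host may still belong to several aggregates, as long as at most one of them carries availability_zone metadata (or they all agree on it).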

Nova cross_az_attach
● cross_az_attach is an option that instructs Nova API to prohibit volume attachments to VMs with non-matching AZs:
    # nova.conf used by nova-api
    [cinder]
    cross_az_attach = False
● Useful when you want more deterministic behavior with respect to performance and/or availability by enforcing per-AZ storage backends
● Keep in mind that a setting of False:
  ○ Should only be used when Cinder AZs match Nova AZs
  ○ Can break some Boot from Volume API flows in Nova
    https://bugs.launchpad.net/nova/+bug/1497253
  ○ Is not currently supported when using Nova Cells v2
    https://docs.openstack.org/nova/latest/user/cellsv2-layout.html#operations-requiring-upcalls

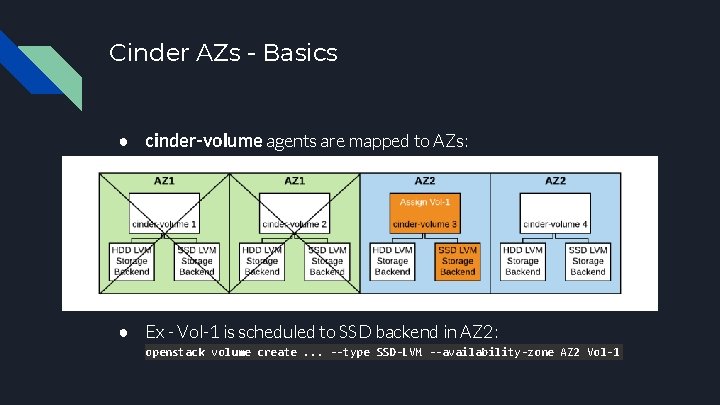
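
The effect of the option can be modelled as a simple comparison of AZ names. This is a sketch of the behavior described on the slide, not Nova's implementation: `check_attach` is a hypothetical name, and the default of True is assumed to match Nova's stock setting.

```python
def check_attach(instance_az, volume_az, cross_az_attach=True):
    """Raise ValueError if the attachment would cross AZs while that is prohibited."""
    if not cross_az_attach and instance_az != volume_az:
        raise ValueError(
            f"Instance AZ '{instance_az}' and volume AZ '{volume_az}' do not match"
        )


# Allowed by default, even across AZs
check_attach("AZ1", "AZ2")

# With cross_az_attach = False the AZ names must be identical
check_attach("AZ1", "AZ1", cross_az_attach=False)
try:
    check_attach("AZ1", "AZ2", cross_az_attach=False)
except ValueError as exc:
    print(f"Attach rejected: {exc}")
```

Because the check is a plain name comparison, it only makes sense when Cinder and Nova use the same AZ names.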

Cinder AZs - Basics
● cinder-volume agents are mapped to AZs:
● Ex - Vol-1 is scheduled to SSD backend in AZ2:
    openstack volume create ... --type SSD-LVM --availability-zone AZ2 Vol-1

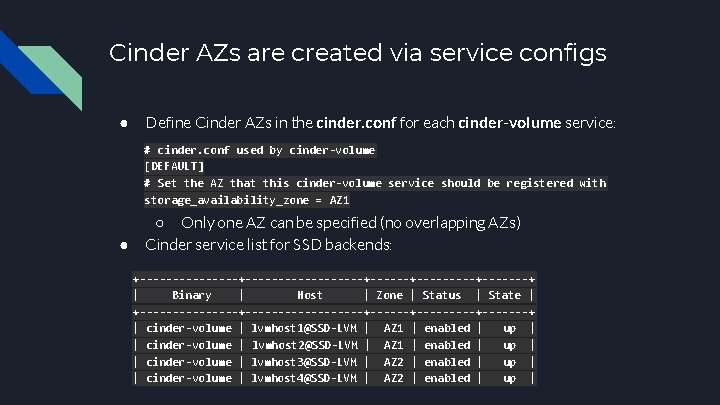

Cinder AZs are created via service configs
● Define Cinder AZs in the cinder.conf for each cinder-volume service:
    # cinder.conf used by cinder-volume
    [DEFAULT]
    # Set the AZ that this cinder-volume service should be registered with
    storage_availability_zone = AZ1
  ○ Only one AZ can be specified (no overlapping AZs)
● Cinder service list for SSD backends:
    +---------------+------------------+------+---------+-------+
    | Binary        | Host             | Zone | Status  | State |
    +---------------+------------------+------+---------+-------+
    | cinder-volume | lvmhost1@SSD-LVM | AZ1  | enabled | up    |
    | cinder-volume | lvmhost2@SSD-LVM | AZ1  | enabled | up    |
    | cinder-volume | lvmhost3@SSD-LVM | AZ2  | enabled | up    |
    | cinder-volume | lvmhost4@SSD-LVM | AZ2  | enabled | up    |
    +---------------+------------------+------+---------+-------+

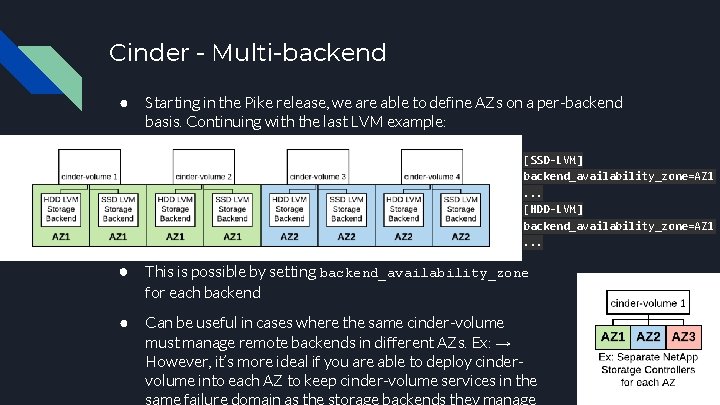

Cinder - Multi-backend
● Starting in the Pike release, we are able to define AZs on a per-backend basis. Continuing with the last LVM example:
    [SSD-LVM]
    backend_availability_zone=AZ1
    ...
    [HDD-LVM]
    backend_availability_zone=AZ1
    ...
● This is possible by setting backend_availability_zone for each backend
● Can be useful in cases where the same cinder-volume must manage remote backends in different AZs. Ex:
→ However, it’s more ideal if you are able to deploy cinder-volume into each AZ to keep cinder-volume services in the

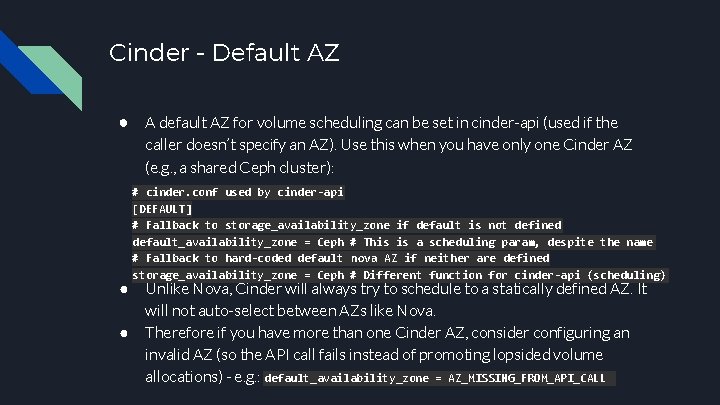
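
To see which AZ each backend ends up in, the per-backend override can be resolved with Python's standard configparser. This is a rough sketch under stated assumptions: the sample file contents are hypothetical, `backend_azs` is an illustrative helper rather than Cinder code, and it assumes that a backend without backend_availability_zone falls back to storage_availability_zone, which in turn defaults to "nova".

```python
import configparser

# Hypothetical cinder.conf fragment for one cinder-volume service
SAMPLE_CONF = """
[DEFAULT]
enabled_backends = SSD-LVM,HDD-LVM
storage_availability_zone = AZ1

[SSD-LVM]
backend_availability_zone = AZ2

[HDD-LVM]
"""


def backend_azs(conf_text):
    """Map each enabled backend to the AZ it would be registered with."""
    config = configparser.ConfigParser()
    config.read_string(conf_text)
    defaults = config["DEFAULT"]
    service_az = defaults.get("storage_availability_zone", "nova")
    backends = [name.strip() for name in defaults["enabled_backends"].split(",")]
    # A per-backend value overrides the service-wide AZ
    return {
        name: config[name].get("backend_availability_zone", service_az)
        for name in backends
    }


for backend, az in backend_azs(SAMPLE_CONF).items():
    print(f"{backend}: {az}")
```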

Cinder - Default AZ
● A default AZ for volume scheduling can be set in cinder-api (used if the caller doesn’t specify an AZ). Use this when you have only one Cinder AZ (e.g., a shared Ceph cluster):
    # cinder.conf used by cinder-api
    [DEFAULT]
    # Fallback to storage_availability_zone if default is not defined
    default_availability_zone = Ceph   # This is a scheduling param, despite the name
    # Fallback to hard-coded default nova AZ if neither are defined
    storage_availability_zone = Ceph   # Different function for cinder-api (scheduling)
● Unlike Nova, Cinder will always try to schedule to a statically defined AZ. It will not auto-select between AZs like Nova.
● Therefore if you have more than one Cinder AZ, consider configuring an invalid AZ (so the API call fails instead of promoting lopsided volume allocations) - e.g.:
    default_availability_zone = AZ_MISSING_FROM_API_CALL

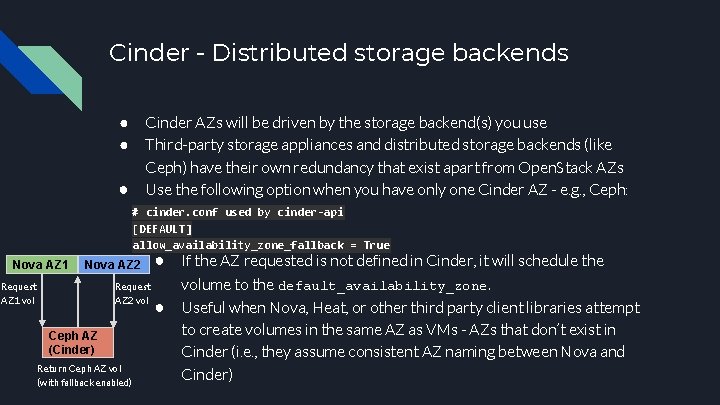

Cinder - Distributed storage backends
● Cinder AZs will be driven by the storage backend(s) you use
● Third-party storage appliances and distributed storage backends (like Ceph) have their own redundancy that exists apart from OpenStack AZs
● Use the following option when you have only one Cinder AZ - e.g., Ceph:
    # cinder.conf used by cinder-api
    [DEFAULT]
    allow_availability_zone_fallback = True
● If the AZ requested is not defined in Cinder, it will schedule the volume to the default_availability_zone.
● Useful when Nova, Heat, or other third party client libraries attempt to create volumes in the same AZ as VMs - AZs that don’t exist in Cinder (i.e., they assume consistent AZ naming between Nova and Cinder)
(Diagram: Nova AZ1 and Nova AZ2 send “Request AZ1 vol” and “Request AZ2 vol” to the single Ceph AZ (Cinder), which returns a Ceph AZ vol with fallback enabled.)

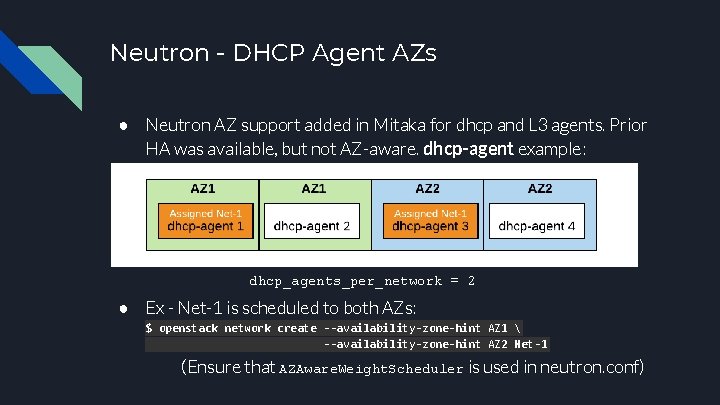
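
The Cinder default and fallback rules from the last two slides can be combined into one small decision function. This is a sketch of the behavior as described in the talk, not Cinder's code: `resolve_volume_az` is a hypothetical name, its keyword arguments simply mirror the cinder.conf option names, and the "nova" default for storage_availability_zone is assumed from the slide's "hard-coded default nova AZ".

```python
def resolve_volume_az(requested, cinder_azs,
                      default_availability_zone=None,
                      storage_availability_zone="nova",
                      allow_availability_zone_fallback=False):
    """Pick the AZ a volume would be scheduled to, or raise ValueError."""
    # default_availability_zone falls back to storage_availability_zone
    default_az = default_availability_zone or storage_availability_zone
    if requested is None:
        chosen = default_az  # Caller omitted the AZ
    elif requested in cinder_azs:
        chosen = requested
    elif allow_availability_zone_fallback:
        chosen = default_az  # Unknown AZ, but fallback is enabled
    else:
        raise ValueError(f"Availability zone '{requested}' is invalid")
    if chosen not in cinder_azs:
        raise ValueError(f"Availability zone '{chosen}' is invalid")
    return chosen


# Single shared Ceph AZ: a request for a Nova AZ name falls back to Ceph
print(resolve_volume_az("AZ1", {"Ceph"},
                        default_availability_zone="Ceph",
                        allow_availability_zone_fallback=True))

# Several Cinder AZs with a deliberately invalid default: omitting the AZ fails
try:
    resolve_volume_az(None, {"AZ1", "AZ2"},
                      default_availability_zone="AZ_MISSING_FROM_API_CALL")
except ValueError as exc:
    print(f"Rejected: {exc}")
```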

Neutron - DHCP Agent AZs
● Neutron AZ support was added in Mitaka for DHCP and L3 agents. Prior to that, HA was available, but not AZ-aware. dhcp-agent example:
    dhcp_agents_per_network = 2
● Ex - Net-1 is scheduled to both AZs:
    $ openstack network create --availability-zone-hint AZ1 --availability-zone-hint AZ2 Net-1
(Ensure that AZAwareWeightScheduler is used in neutron.conf)

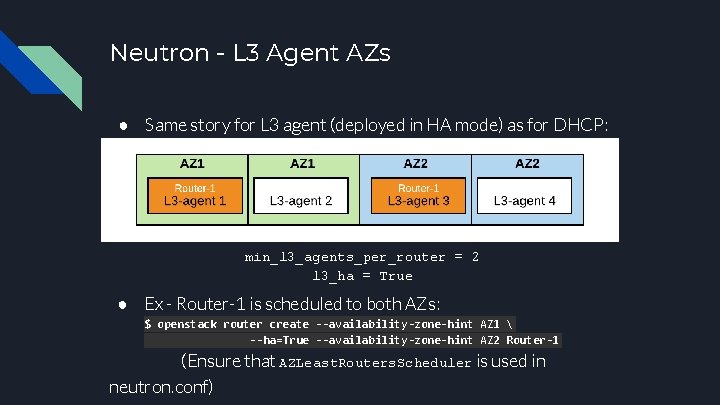

Neutron - L3 Agent AZs
● Same story for L3 agent (deployed in HA mode) as for DHCP:
    min_l3_agents_per_router = 2
    l3_ha = True
● Ex - Router-1 is scheduled to both AZs:
    $ openstack router create --availability-zone-hint AZ1 --ha --availability-zone-hint AZ2 Router-1
(Ensure that AZLeastRoutersScheduler is used in neutron.conf)

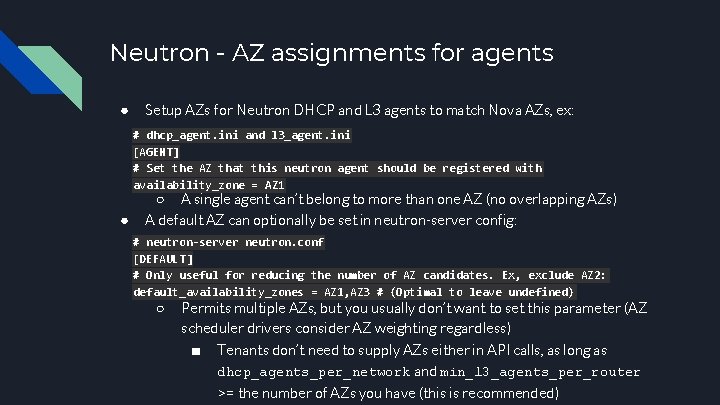

Neutron - AZ assignments for agents
● Set up AZs for Neutron DHCP and L3 agents to match Nova AZs, ex:
    # dhcp_agent.ini and l3_agent.ini
    [AGENT]
    # Set the AZ that this neutron agent should be registered with
    availability_zone = AZ1
  ○ A single agent can’t belong to more than one AZ (no overlapping AZs)
● A default AZ can optionally be set in neutron-server config:
    # neutron-server neutron.conf
    [DEFAULT]
    # Only useful for reducing the number of AZ candidates. Ex, exclude AZ2:
    # (Optimal to leave undefined)
    default_availability_zones = AZ1,AZ3
  ○ Permits multiple AZs, but you usually don’t want to set this parameter (AZ scheduler drivers consider AZ weighting regardless)
    ■ Tenants don’t need to supply AZs either in API calls, as long as dhcp_agents_per_network and min_l3_agents_per_router >= the number of AZs you have (this is recommended)

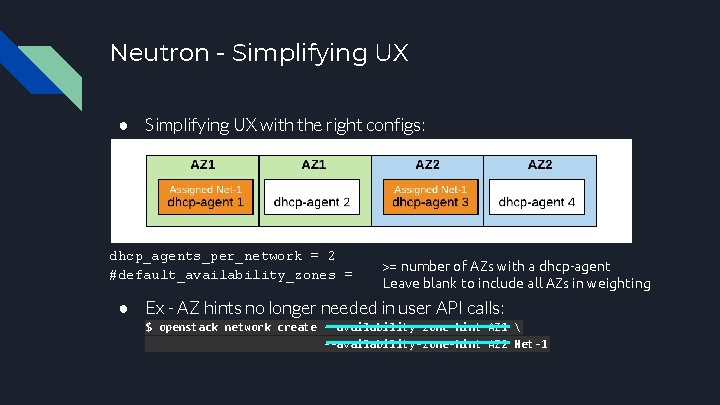

Neutron - Simplifying UX
● Simplifying UX with the right configs:
    dhcp_agents_per_network = 2     # >= number of AZs with a dhcp-agent
    #default_availability_zones =   # Leave blank to include all AZs in weighting
● Ex - AZ hints no longer needed in user API calls:
    $ openstack network create Net-1
  (previously: --availability-zone-hint AZ1 --availability-zone-hint AZ2)

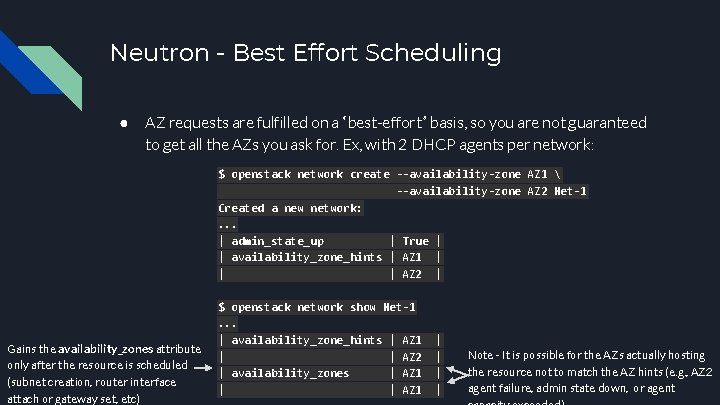

Neutron - Best Effort Scheduling
● AZ requests are fulfilled on a “best-effort” basis, so you are not guaranteed to get all the AZs you ask for. Ex, with 2 DHCP agents per network:
    $ openstack network create --availability-zone-hint AZ1 --availability-zone-hint AZ2 Net-1
    Created a new network:
    ...
    | admin_state_up          | True |
    | availability_zone_hints | AZ1  |
    |                         | AZ2  |
● Gains the availability_zones attribute only after the resource is scheduled (subnet creation, router interface attach or gateway set, etc)
    $ openstack network show Net-1
    ...
    | availability_zone_hints | AZ1 |
    |                         | AZ2 |
    | availability_zones      | AZ1 |
    |                         |     |
● Note - It is possible for the AZs actually hosting the resource not to match the AZ hints (e.g., AZ2 agent failure, admin state down, or agent

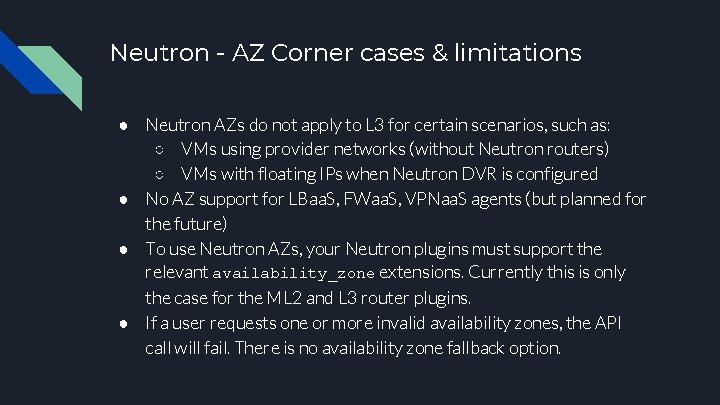
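
Why the hints and the resulting availability_zones can differ is easier to see in a toy model. The sketch below is not Neutron's scheduler: `schedule_agents` and the agent dicts are hypothetical, and the selection rule (at most one live agent per requested AZ, silently skipping AZs with none) is a deliberate simplification of best-effort placement.

```python
def schedule_agents(agents, az_hints, agents_per_resource):
    """Best-effort pick of one live agent per requested AZ (toy model)."""
    live = [agent for agent in agents if agent["alive"]]
    # Without hints, consider every AZ that has a live agent
    target_azs = list(az_hints) or sorted({agent["az"] for agent in live})
    chosen = []
    for az in target_azs:
        if len(chosen) >= agents_per_resource:
            break
        match = next((agent for agent in live if agent["az"] == az), None)
        if match is not None:
            chosen.append(match)  # AZs with no live agent are skipped, not failed
    return chosen


# Hypothetical agents: the only DHCP agent in AZ2 is down
agents = [
    {"name": "dhcp-agent-1", "az": "AZ1", "alive": True},
    {"name": "dhcp-agent-2", "az": "AZ2", "alive": False},
]

scheduled = schedule_agents(agents, az_hints=["AZ1", "AZ2"], agents_per_resource=2)
print("availability_zone_hints:", ["AZ1", "AZ2"])
print("availability_zones:", sorted({agent["az"] for agent in scheduled}))
```

The request still succeeds, so callers that depend on multi-AZ placement need to compare availability_zones against their hints after scheduling.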

Neutron - AZ Corner cases & limitations
● Neutron AZs do not apply to L3 for certain scenarios, such as:
  ○ VMs using provider networks (without Neutron routers)
  ○ VMs with floating IPs when Neutron DVR is configured
● No AZ support for LBaaS, FWaaS, VPNaaS agents (but planned for the future)
● To use Neutron AZs, your Neutron plugins must support the relevant availability_zone extensions. Currently this is only the case for the ML2 and L3 router plugins.
● If a user requests one or more invalid availability zones, the API call will fail. There is no availability zone fallback option.

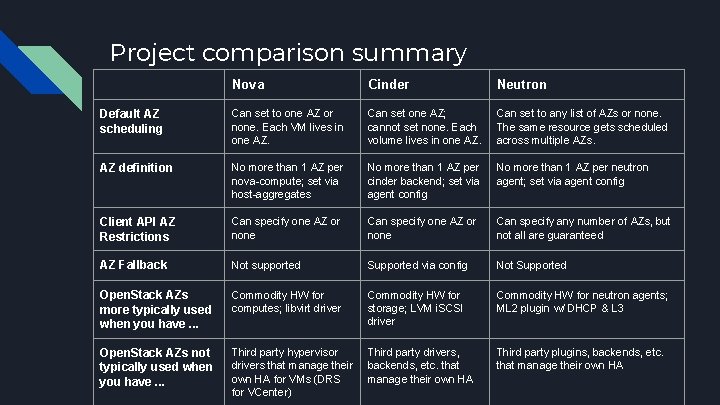

Project comparison summary

| | Nova | Cinder | Neutron |
|---|---|---|---|
| Default AZ scheduling | Can set to one AZ or none. Each VM lives in one AZ. | Can set one AZ; cannot set none. Each volume lives in one AZ. | Can set to any list of AZs or none. The same resource gets scheduled across multiple AZs. |
| AZ definition | No more than 1 AZ per nova-compute; set via host-aggregates | No more than 1 AZ per cinder backend; set via agent config | No more than 1 AZ per neutron agent; set via agent config |
| Client API AZ Restrictions | Can specify one AZ or none | Can specify one AZ or none | Can specify any number of AZs, but not all are guaranteed |
| AZ Fallback | Not supported | Supported via config | Not supported |
| OpenStack AZs more typically used when you have... | Commodity HW for computes; libvirt driver | Commodity HW for storage; LVM iSCSI driver | Commodity HW for neutron agents; ML2 plugin w/ DHCP & L3 |
| OpenStack AZs not typically used when you have... | Third party hypervisor drivers that manage their own HA for VMs (DRS for VCenter) | Third party drivers, backends, etc. that manage their own HA | Third party plugins, backends, etc. that manage their own HA |

Summary of the AZ Curse (challenges)
● Good AZ design requires careful planning and coordination at all layers of the solution stack:
  ○ End-users / tenant applications must be built for HA and be AZ-capable, and the AZ design informed by their availability/application requirements
  ○ AZs should be analyzed on a case-by-case basis for each OpenStack project in the scope of deployment with respect to current limitations, implementation differences, and general UX
  ○ AZ-aware software update/upgrade processes are needed to get the most out of AZs (to the extent that they are the leading cause of service interruptions)
  ○ Storage and Network architecture designed with AZs in mind
  ○ AZ-aware planned data center maintenance activities (e.g., evacuations for node servicing, rewiring or relocating physical equipment, etc)
  ○ Informed AZ planning from the understanding of likely data center modes of failure (ideally backed up with supporting data)

Don’t forget the cost-benefit analysis
● Defining AZs is one thing. Actually achieving higher application availability is another.
● Don’t use AZs for the sake of using AZs. You need to be able to show some tangible value of having them, or a plan to get there (e.g., developing AZ-aware update/upgrade processes, maintenance procedures, etc)

Thank You
Questions?
Craig Anderson
craig.anderson@att.com
