CSE P 501 Compiler Construction: Compiler Backend

CSE P 501 – Compiler Construction

- Compiler Backend Organization
  - Instruction Selection
  - Instruction Scheduling
  - Register Allocation
- Instruction Selection
  - Peephole Optimization
  - Peephole Instruction Selection

Spring 2014, Jim Hogg - UW - CSE - P 501

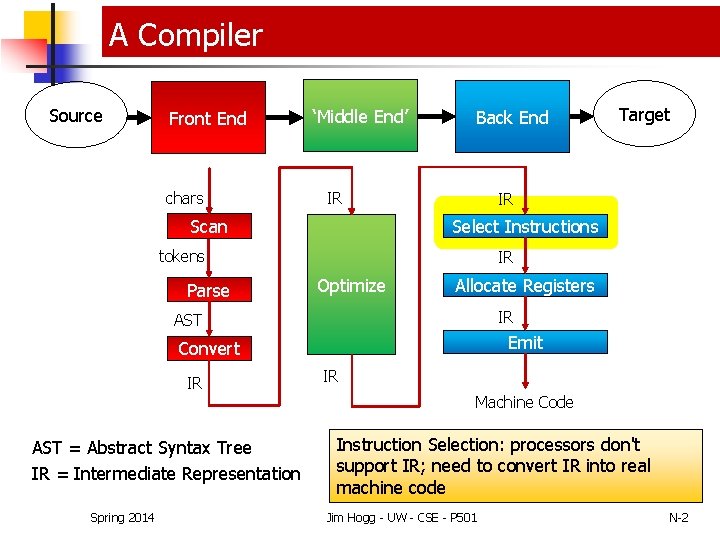

A Compiler

- Pipeline: Source → (chars) → Scan → (tokens) → Parse → (AST) → Convert → (IR) → Optimize → (IR) → Select Instructions → (IR) → Allocate Registers → (IR) → Emit → (Machine Code) → Target
- The stages are grouped into Front End, 'Middle End' and Back End
- AST = Abstract Syntax Tree
- IR = Intermediate Representation
- Instruction Selection: processors don't support IR; need to convert IR into real machine code

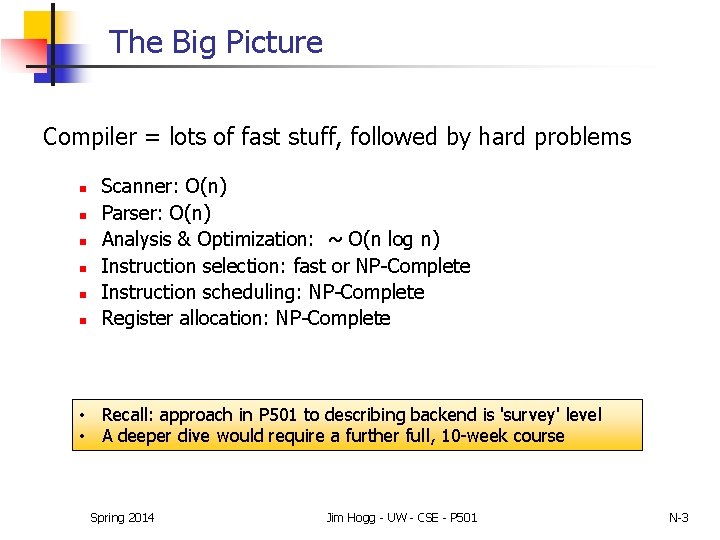

The Big Picture

Compiler = lots of fast stuff, followed by hard problems

- Scanner: O(n)
- Parser: O(n)
- Analysis & Optimization: ~ O(n log n)
- Instruction selection: fast or NP-Complete
- Instruction scheduling: NP-Complete
- Register allocation: NP-Complete

Recall:

- The approach in P 501 to describing the backend is 'survey' level
- A deeper dive would require a further full, 10-week course

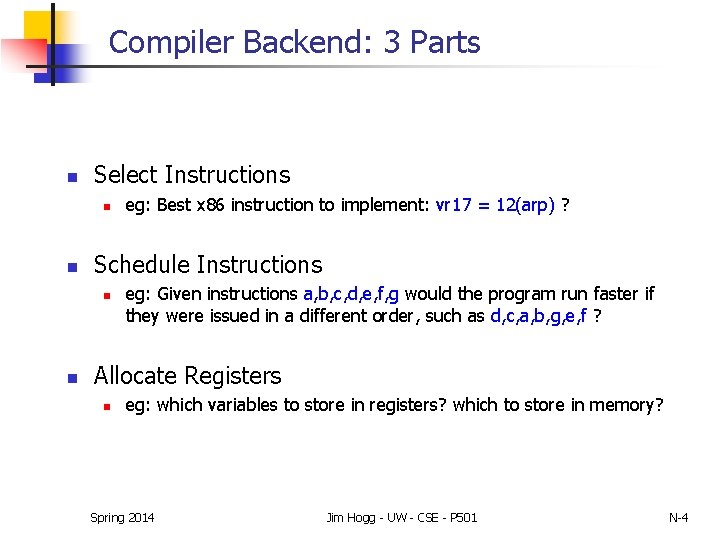

Compiler Backend: 3 Parts

- Select Instructions
  - eg: Best x86 instruction to implement: vr17 = 12(arp) ?
- Schedule Instructions
  - eg: Given instructions a, b, c, d, e, f, g would the program run faster if they were issued in a different order, such as d, c, a, b, g, e, f ?
- Allocate Registers
  - eg: which variables to store in registers? which to store in memory?

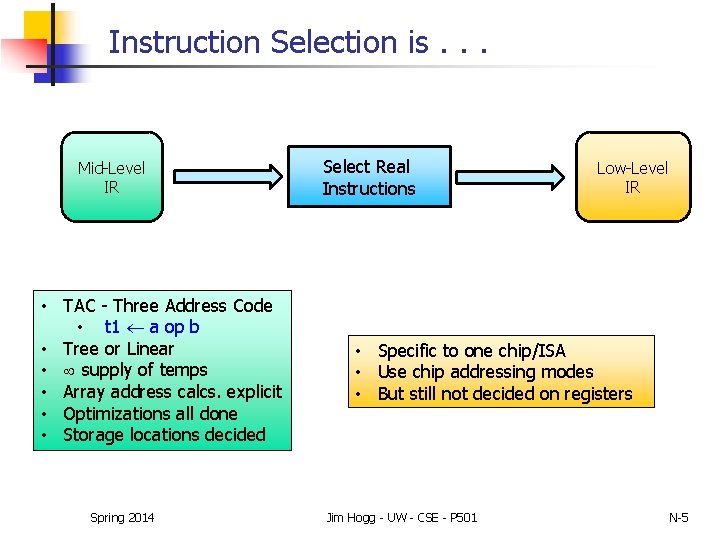

Instruction Selection is...

Mid-Level IR → Select Real Instructions → Low-Level IR

- Mid-Level IR
  - TAC - Three Address Code
  - t1 ← a op b
  - Tree or Linear
  - Unlimited supply of temps
  - Array address calcs. explicit
  - Optimizations all done
  - Storage locations decided
- Low-Level IR
  - Specific to one chip/ISA
  - Use chip addressing modes
  - But still not decided on registers

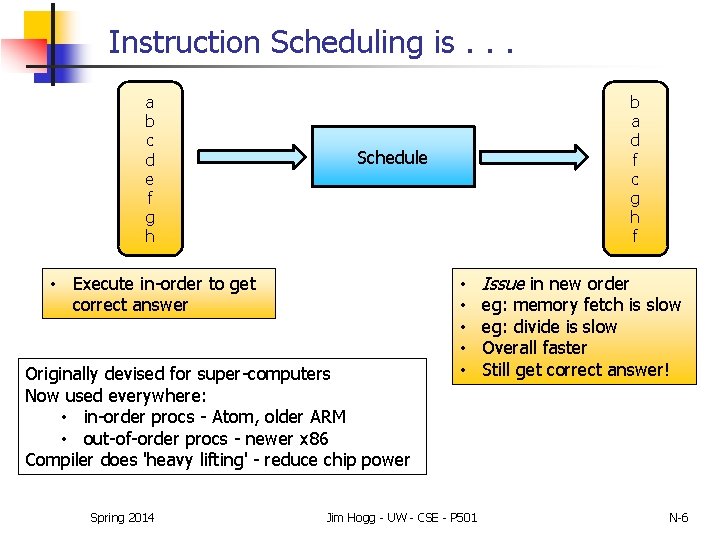

Instruction Scheduling is...

Original order → Schedule → New order

- Original order: a b c d e f g h
  - Execute in-order to get correct answer
- New order: b a d f c g h f
  - Issue in new order
  - eg: memory fetch is slow
  - eg: divide is slow
  - Overall faster
  - Still get correct answer!
- Originally devised for super-computers
- Now used everywhere:
  - in-order procs - Atom, older ARM
  - out-of-order procs - newer x86
- Compiler does 'heavy lifting' - reduce chip power

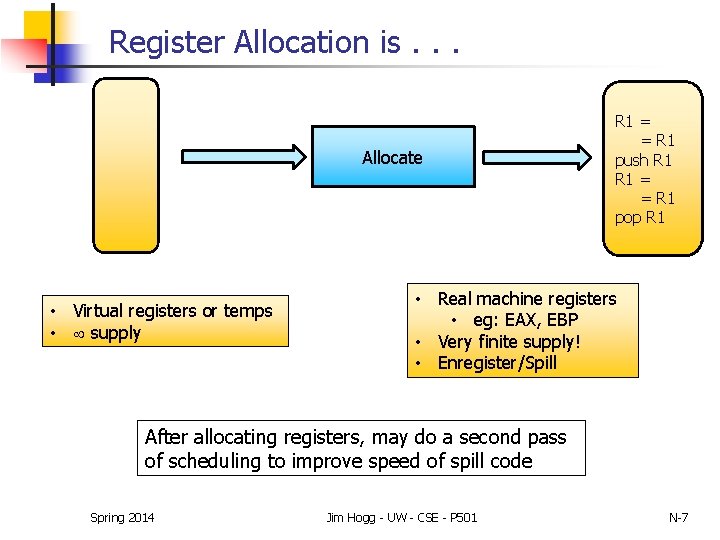

Register Allocation is...

Virtual registers → Allocate → Real machine registers

- Virtual registers or temps
  - Unlimited supply
- Real machine registers
  - eg: EAX, EBP
  - Very finite supply!
  - Enregister/Spill
- Spill illustration from the slide (one register reused by saving and restoring it): R1 = ; = R1 ; push R1 ; R1 = ; = R1 ; pop R1
- After allocating registers, may do a second pass of scheduling to improve speed of spill code

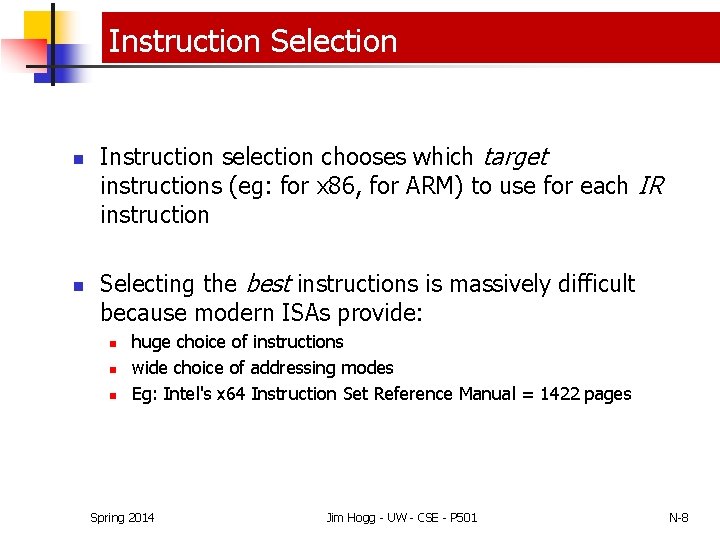

Instruction Selection

- Instruction selection chooses which target instructions (eg: for x86, for ARM) to use for each IR instruction
- Selecting the best instructions is massively difficult because modern ISAs provide:
  - huge choice of instructions
  - wide choice of addressing modes
- Eg: Intel's x64 Instruction Set Reference Manual = 1422 pages

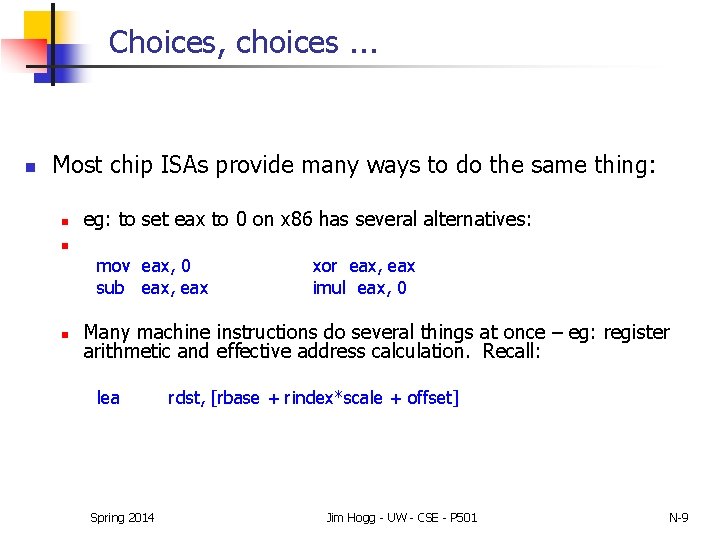

Choices, choices...

- Most chip ISAs provide many ways to do the same thing
- eg: x86 has several ways to set eax to 0:
  - mov eax, 0
  - sub eax, eax
  - xor eax, eax
  - imul eax, 0
- Many machine instructions do several things at once, eg: register arithmetic and effective address calculation. Recall:
  - lea rdst, [rbase + rindex*scale + offset]

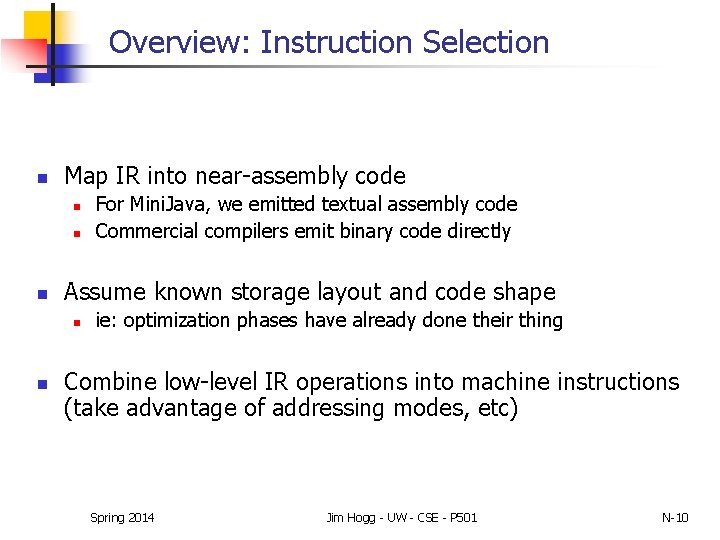

Overview: Instruction Selection

- Map IR into near-assembly code
  - For MiniJava, we emitted textual assembly code
  - Commercial compilers emit binary code directly
- Assume known storage layout and code shape
  - ie: optimization phases have already done their thing
- Combine low-level IR operations into machine instructions (take advantage of addressing modes, etc)

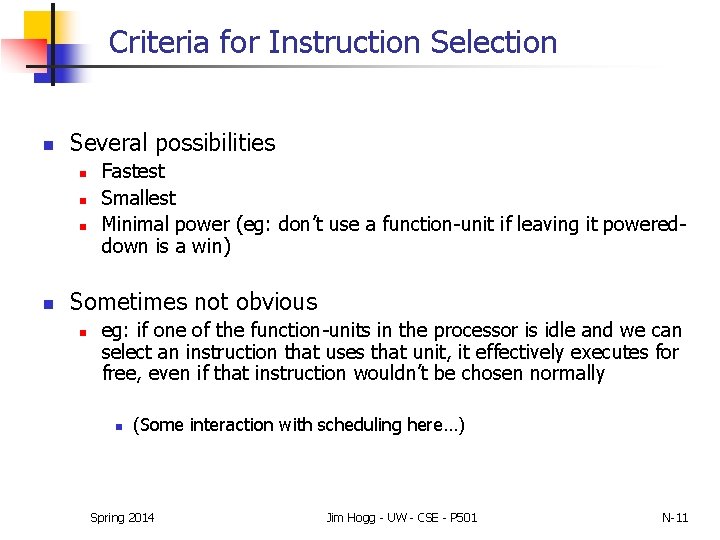

Criteria for Instruction Selection

- Several possibilities
  - Fastest
  - Smallest
  - Minimal power (eg: don’t use a function-unit if leaving it powered down is a win)
- Sometimes not obvious
  - eg: if one of the function-units in the processor is idle and we can select an instruction that uses that unit, it effectively executes for free, even if that instruction wouldn’t be chosen normally
  - (Some interaction with scheduling here…)

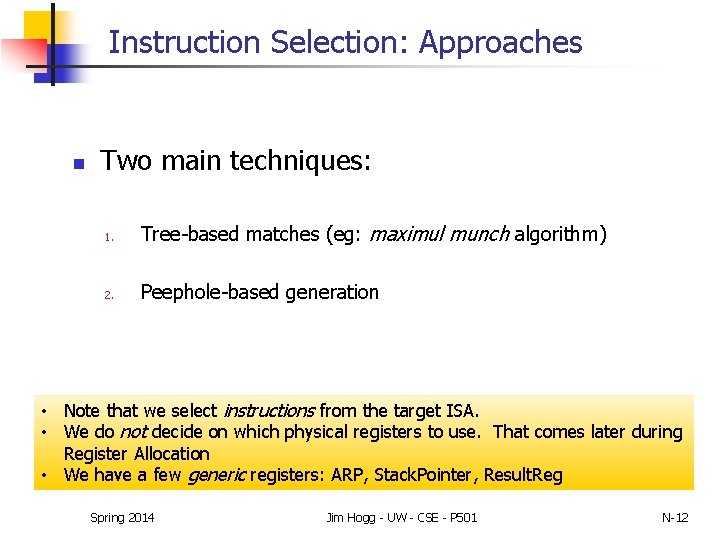

Instruction Selection: Approaches

Two main techniques:

1. Tree-based matches (eg: maximal munch algorithm)
2. Peephole-based generation

Notes:

- We select instructions from the target ISA
- We do not decide on which physical registers to use. That comes later, during Register Allocation
- We have a few generic registers: ARP, StackPointer, ResultReg

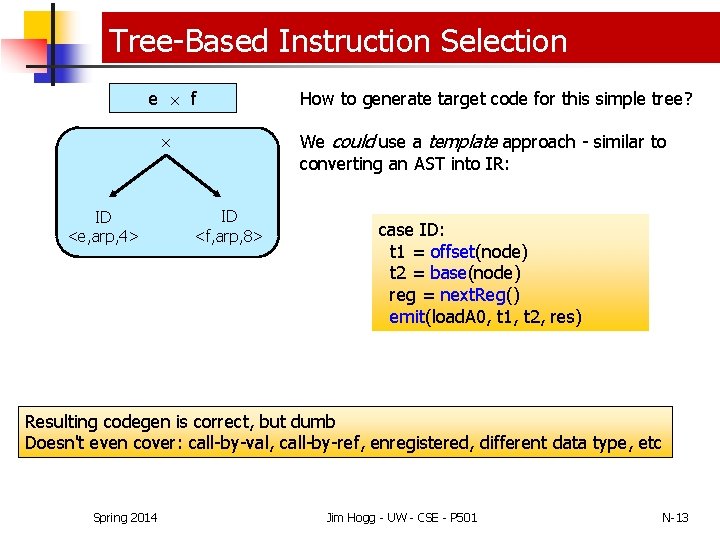

Tree-Based Instruction Selection

- Tree: an operator node over two leaves, e = ID <e, arp, 4> and f = ID <f, arp, 8>
- How to generate target code for this simple tree?
- We could use a template approach, similar to converting an AST into IR:
  - case ID:
    - t1 = offset(node)
    - t2 = base(node)
    - reg = nextReg()
    - emit(loadAO, t1, t2, reg)
- Resulting codegen is correct, but dumb
- Doesn't even cover: call-by-val, call-by-ref, enregistered, different data type, etc

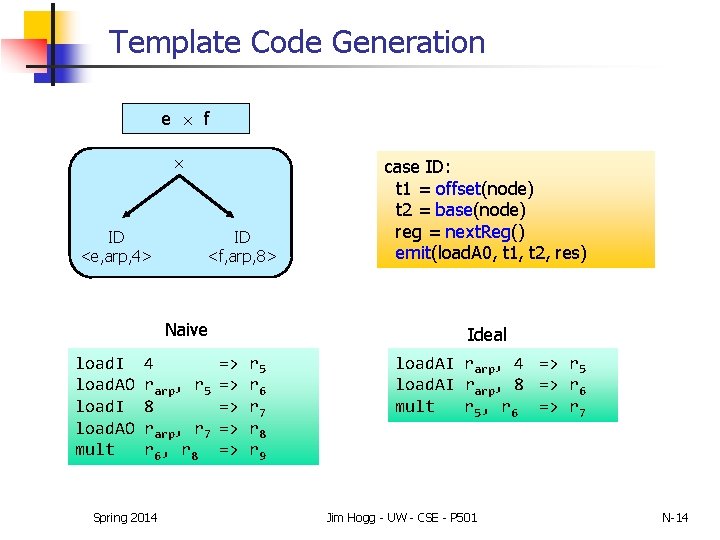

Template Code Generation

- Tree: leaves e = ID <e, arp, 4> and f = ID <f, arp, 8>, combined by a multiply
- Template for each leaf:
  - case ID:
    - t1 = offset(node)
    - t2 = base(node)
    - reg = nextReg()
    - emit(loadAO, t1, t2, reg)
- Naive (what the template produces):
  - loadI 4 => r5
  - loadAO rarp, r5 => r6
  - loadI 8 => r7
  - loadAO rarp, r7 => r8
  - mult r6, r8 => r9
- Ideal:
  - loadAI rarp, 4 => r5
  - loadAI rarp, 8 => r6
  - mult r5, r6 => r7
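The naive column can be reproduced with a few lines of code. The following is a minimal sketch, assuming a tuple encoding for tree nodes (`("ID", name, base, offset)` and `("MUL", left, right)`) and a helper named `make_codegen`; both are illustrative choices, not part of the slides.

```python
from itertools import count

def make_codegen():
    """Return (gen, code); gen(node) appends ILOC text to code and returns the result register."""
    code = []
    regs = count(5)  # start at r5 only to mirror the slide's register numbers

    def next_reg():
        return f"r{next(regs)}"

    def gen(node):
        kind = node[0]
        if kind == "ID":
            # Template from the slide: put the offset in a register, then loadAO
            _, _name, base, offset = node
            t1 = next_reg()
            code.append(f"loadI {offset} => {t1}")
            reg = next_reg()
            code.append(f"loadAO r{base}, {t1} => {reg}")
            return reg
        if kind == "MUL":
            left = gen(node[1])
            right = gen(node[2])
            reg = next_reg()
            code.append(f"mult {left}, {right} => {reg}")
            return reg
        raise ValueError(f"no template for node kind {kind!r}")

    return gen, code

gen, code = make_codegen()
gen(("MUL", ("ID", "e", "arp", 4), ("ID", "f", "arp", 8)))
print("\n".join(code))
```

Each node is handled in isolation, which is why the template never notices that a constant offset could be folded into a single loadAI.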

IR (3-address Code) Tree-lets

Rules for Tree-to-Target Conversion (prefix notation; ♦ = memory dereference)

| Rule | Production | ILOC Template |
|---|---|---|
| 5 | ... | |
| 6 | Reg → Lab1 | loadI l1 => rn |
| 8 | Reg → Num1 | loadI n1 => rn |
| 9 | Reg → ♦ Reg1 | load r1 => rn |
| 10 | Reg → ♦ + Reg1 Reg2 | loadAO r1, r2 => rn |
| 11 | Reg → ♦ + Reg1 Num2 | loadAI r1, n2 => rn |
| 14 | Reg → ♦ + Lab1 Reg2 | loadAI r2, l1 => rn |
| 15 | Reg → + Reg1 Reg2 | add r1, r2 => rn |
| 16 | Reg → + Reg1 Num2 | addI r1, n2 => rn |
| 19 | Reg → + Lab1 Reg2 | addI r2, l1 => rn |
| 20 | ... | |

Example tree-let for rule 11: ♦ over +, with leaves Reg1 and Num2

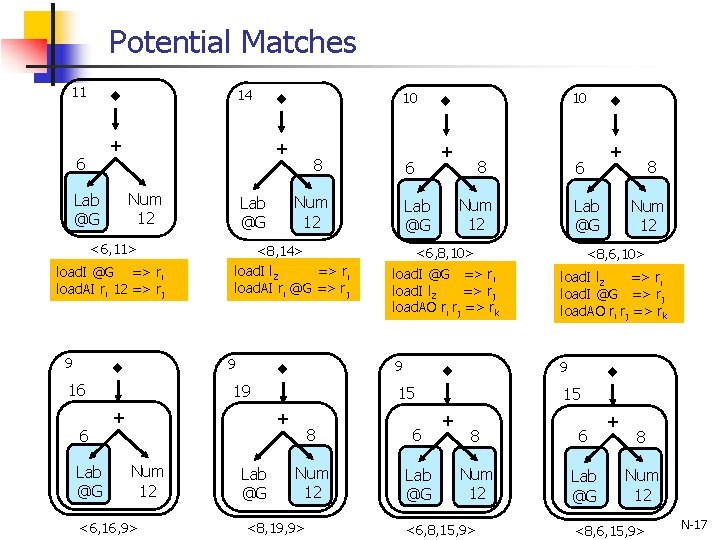

Example: Tiling a tiny 4-node tree

- Tree for loading variable <c, @G, 12>: a memory dereference whose child is +, with leaves Lab @G and Num 12
- The tree shows code to access variable c, stored at offset 12 bytes from label @G
- How many ways can we tile this tree into equivalent ILOC code?

Potential Matches

Eight rule sequences tile the tree (numbers are rule numbers). The slide shows code for four of them:

- <6, 11>
  - loadI @G => ri
  - loadAI ri, 12 => rj
- <8, 14>
  - loadI 12 => ri
  - loadAI ri, @G => rj
- <6, 8, 10>
  - loadI @G => ri
  - loadI 12 => rj
  - loadAO ri, rj => rk
- <8, 6, 10>
  - loadI 12 => ri
  - loadI @G => rj
  - loadAO ri, rj => rk
- <6, 16, 9>
- <8, 19, 9>
- <6, 8, 15, 9>
- <8, 6, 15, 9>
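The matches can also be found mechanically. Below is a minimal sketch, assuming a tuple encoding of trees and patterns and the spelling `deref` for the memory-dereference node (both are assumptions of this sketch). It enumerates each distinct tiling once, with sub-tiles listed left to right, so it reports six tilings; the slide's count of eight also includes the swapped leaf orders <8, 6, 10> and <8, 6, 15, 9>.

```python
from itertools import product

# Rule number -> pattern that produces a Reg.
# "Reg" marks a subtree that must itself be tiled; "Lab"/"Num" match leaves directly.
RULES = {
    6: "Lab",
    8: "Num",
    9: ("deref", "Reg"),
    10: ("deref", ("+", "Reg", "Reg")),
    11: ("deref", ("+", "Reg", "Num")),
    14: ("deref", ("+", "Lab", "Reg")),
    15: ("+", "Reg", "Reg"),
    16: ("+", "Reg", "Num"),
    19: ("+", "Lab", "Reg"),
}

def match(pattern, node):
    """Return the subtrees still to be tiled if pattern fits at node, else None."""
    if pattern == "Reg":
        return [node]
    if isinstance(pattern, str):              # leaf pattern: Lab or Num
        return [] if node[0] == pattern else None
    if node[0] != pattern[0] or len(node) != len(pattern):
        return None
    pending = []
    for sub_pattern, child in zip(pattern[1:], node[1:]):
        sub = match(sub_pattern, child)
        if sub is None:
            return None
        pending += sub
    return pending

def tilings(node):
    """All rule sequences that reduce node to a Reg (children first, root rule last)."""
    results = []
    for number, pattern in RULES.items():
        pending = match(pattern, node)
        if pending is None:
            continue
        for combo in product(*(tilings(sub) for sub in pending)):
            results.append(tuple(r for seq in combo for r in seq) + (number,))
    return results

tree = ("deref", ("+", ("Lab", "@G"), ("Num", 12)))
for t in tilings(tree):
    print(t)
```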

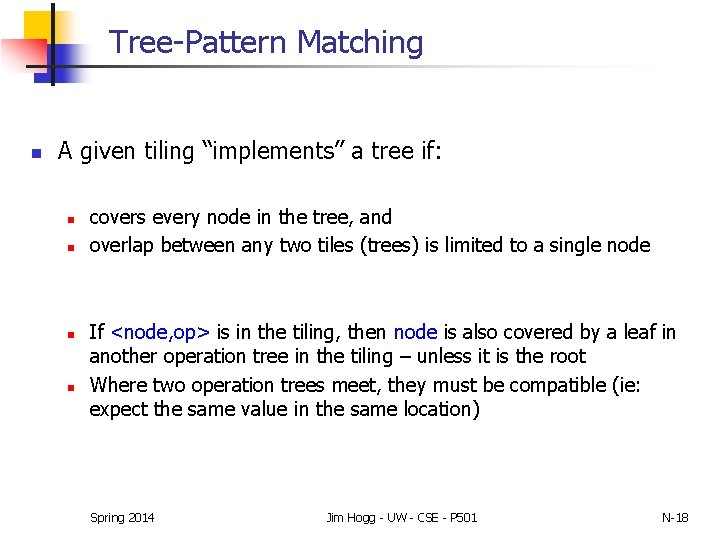

Tree-Pattern Matching

- A given tiling “implements” a tree if:
  - it covers every node in the tree, and
  - the overlap between any two tiles (trees) is limited to a single node
- If <node, op> is in the tiling, then node is also covered by a leaf in another operation tree in the tiling, unless it is the root
- Where two operation trees meet, they must be compatible (ie: expect the same value in the same location)

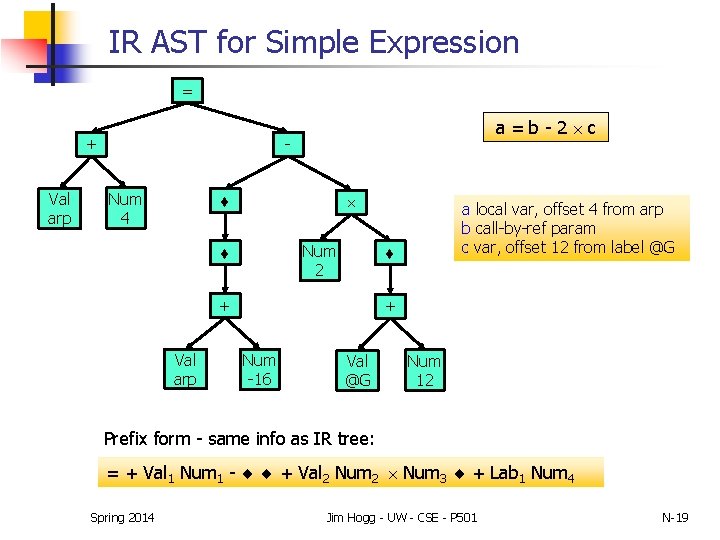

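IR AST for Simple Expression

- Source statement: a = b - 2 * c
- Variables:
  - a: local var, offset 4 from arp
  - b: call-by-ref param (offset -16 from arp)
  - c: var, offset 12 from label @G
- IR tree: = at the root
  - left child: + (Val arp, Num 4), the address of a
  - right child: - whose operands are b, reached through + (Val arp, Num -16), and Num 2 times c, reached through + (Lab @G, Num 12)
- Prefix form, same info as IR tree (♦ = memory dereference):
  - = + Val1 Num1 - ♦ ♦ + Val2 Num2 * Num3 ♦ + Lab1 Num4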

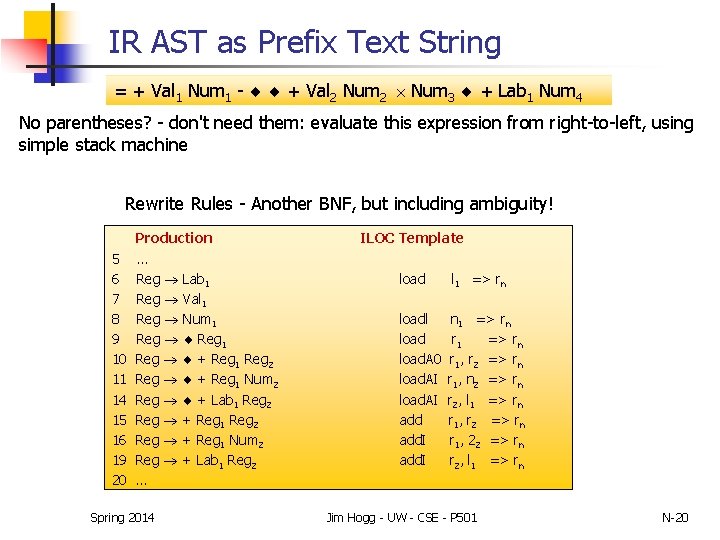

IR AST as Prefix Text String

- = + Val1 Num1 - ♦ ♦ + Val2 Num2 * Num3 ♦ + Lab1 Num4 (♦ = memory dereference)
- No parentheses? Don't need them: evaluate this expression from right-to-left, using a simple stack machine

Rewrite Rules - Another BNF, but including ambiguity!

| Rule | Production | ILOC Template |
|---|---|---|
| 5 | ... | |
| 6 | Reg → Lab1 | loadI l1 => rn |
| 7 | Reg → Val1 | |
| 8 | Reg → Num1 | loadI n1 => rn |
| 9 | Reg → ♦ Reg1 | load r1 => rn |
| 10 | Reg → ♦ + Reg1 Reg2 | loadAO r1, r2 => rn |
| 11 | Reg → ♦ + Reg1 Num2 | loadAI r1, n2 => rn |
| 14 | Reg → ♦ + Lab1 Reg2 | loadAI r2, l1 => rn |
| 15 | Reg → + Reg1 Reg2 | add r1, r2 => rn |
| 16 | Reg → + Reg1 Num2 | addI r1, n2 => rn |
| 19 | Reg → + Lab1 Reg2 | addI r2, l1 => rn |
| 20 | ... | |
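The right-to-left stack evaluation can be sketched as below. This is only an illustration: it rebuilds the tree rather than computing a value, it spells the memory-dereference operator as `deref` and multiplication as `*`, and the `ARITY` table and function name are assumptions of the sketch.

```python
# Number of operands each operator takes; anything else is treated as a leaf.
ARITY = {"=": 2, "+": 2, "-": 2, "*": 2, "deref": 1}

def prefix_to_tree(tokens):
    """Rebuild the expression tree by scanning the prefix tokens right to left."""
    stack = []
    for tok in reversed(tokens):
        if tok in ARITY:
            # Operands were pushed later-first, so the first operand is on top
            args = [stack.pop() for _ in range(ARITY[tok])]
            stack.append((tok, *args))
        else:
            stack.append(tok)
    if len(stack) != 1:
        raise ValueError("malformed prefix expression")
    return stack[0]

text = "= + Val1 Num1 - deref deref + Val2 Num2 * Num3 deref + Lab1 Num4"
print(prefix_to_tree(text.split()))
```

Because every operator has a fixed number of operands, the token order alone determines the tree, which is why the prefix string needs no parentheses.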

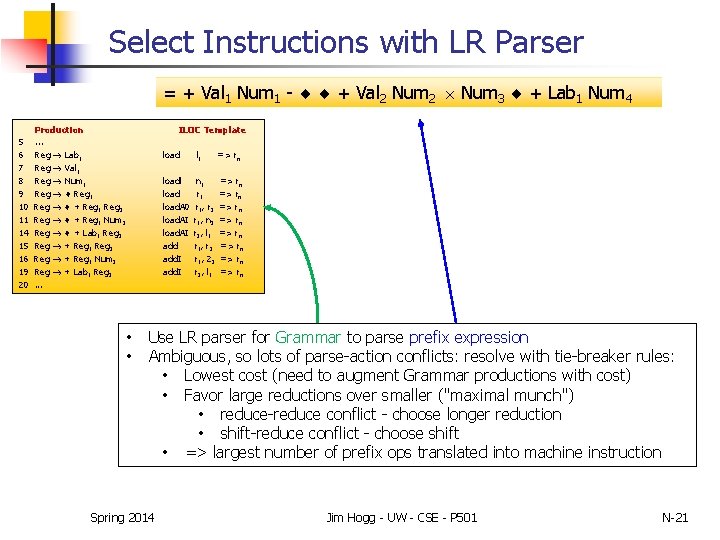

Select Instructions with LR Parser

- Input: = + Val1 Num1 - ♦ ♦ + Val2 Num2 * Num3 ♦ + Lab1 Num4 (♦ = memory dereference)
- Grammar: the rewrite rules (productions 5 to 20, each with its ILOC template) from the previous slide
- Use an LR parser for the Grammar to parse the prefix expression
- Ambiguous, so lots of parse-action conflicts: resolve with tie-breaker rules:
  - Lowest cost (need to augment Grammar productions with cost)
  - Favor large reductions over smaller ("maximal munch")
    - reduce-reduce conflict - choose longer reduction
    - shift-reduce conflict - choose shift
    - => largest number of prefix ops translated into machine instruction
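The "favor large" tie-breaker can be illustrated without building an LR parser. The sketch below is a different, simpler technique: a top-down greedy tiler that tries the largest matching pattern first at each node. The tuple encoding, the `deref` spelling, the helper names and the choice to break size ties by table order are all assumptions of this sketch.

```python
from itertools import count

# (rule number, pattern, ILOC template). "Reg" = operand tiled recursively;
# "Lab"/"Num" = leaf folded into the instruction as an immediate.
RULES = [
    (6,  "Lab",                          "loadI {0} => {dst}"),
    (8,  "Num",                          "loadI {0} => {dst}"),
    (9,  ("deref", "Reg"),               "load {0} => {dst}"),
    (10, ("deref", ("+", "Reg", "Reg")), "loadAO {0}, {1} => {dst}"),
    (11, ("deref", ("+", "Reg", "Num")), "loadAI {0}, {1} => {dst}"),
    (14, ("deref", ("+", "Lab", "Reg")), "loadAI {1}, {0} => {dst}"),
    (15, ("+", "Reg", "Reg"),            "add {0}, {1} => {dst}"),
    (16, ("+", "Reg", "Num"),            "addI {0}, {1} => {dst}"),
    (19, ("+", "Lab", "Reg"),            "addI {1}, {0} => {dst}"),
]

def size(pattern):
    """Number of tree nodes a pattern covers (Reg placeholders cover none)."""
    if pattern == "Reg":
        return 0
    if isinstance(pattern, str):
        return 1
    return 1 + sum(size(p) for p in pattern[1:])

def match(pattern, node):
    """Return operands [("reg", subtree) | ("imm", value)] if pattern fits, else None."""
    if pattern == "Reg":
        return [("reg", node)]
    if isinstance(pattern, str):
        return [("imm", node[1])] if node[0] == pattern else None
    if node[0] != pattern[0] or len(node) != len(pattern):
        return None
    operands = []
    for sub_pattern, child in zip(pattern[1:], node[1:]):
        sub = match(sub_pattern, child)
        if sub is None:
            return None
        operands += sub
    return operands

def munch(node, code, regs):
    """Tile node greedily, append ILOC to code, return the result register."""
    # sorted() is stable, so equally large patterns keep their table order
    for _number, pattern, template in sorted(RULES, key=lambda r: -size(r[1])):
        operands = match(pattern, node)
        if operands is None:
            continue
        args = [munch(v, code, regs) if kind == "reg" else v for kind, v in operands]
        dst = f"r{next(regs)}"
        code.append(template.format(*args, dst=dst))
        return dst
    raise ValueError(f"no rule matches {node!r}")

code = []
munch(("deref", ("+", ("Lab", "@G"), ("Num", 12))), code, count(1))
print("\n".join(code))
```

On the four-node tree this picks rule 11 at the root, giving the two-instruction tiling <6, 11>. A cost-driven selector could choose differently when instruction costs are not uniform.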

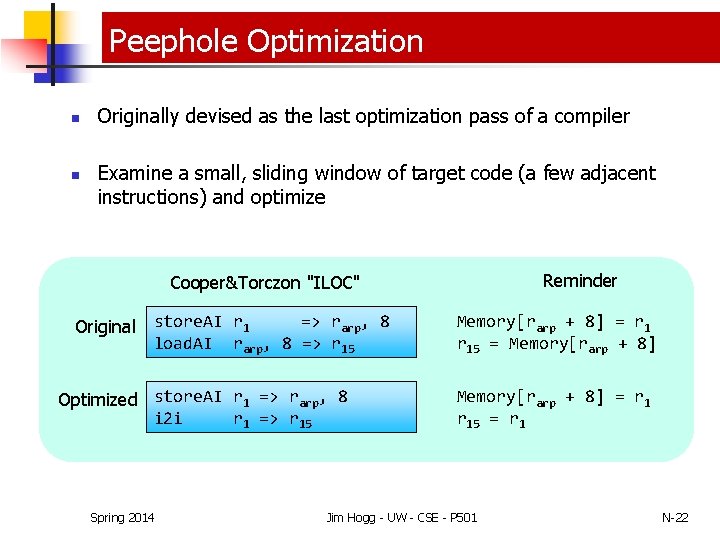

Peephole Optimization

- Originally devised as the last optimization pass of a compiler
- Examine a small, sliding window of target code (a few adjacent instructions) and optimize
- Original:
  - storeAI r1 => rarp, 8
  - loadAI rarp, 8 => r15
- Optimized:
  - storeAI r1 => rarp, 8
  - i2i r1 => r15
- Reminder (Cooper & Torczon "ILOC"):
  - storeAI r1 => rarp, 8 means Memory[rarp + 8] = r1
  - i2i r1 => r15 means r15 = r1

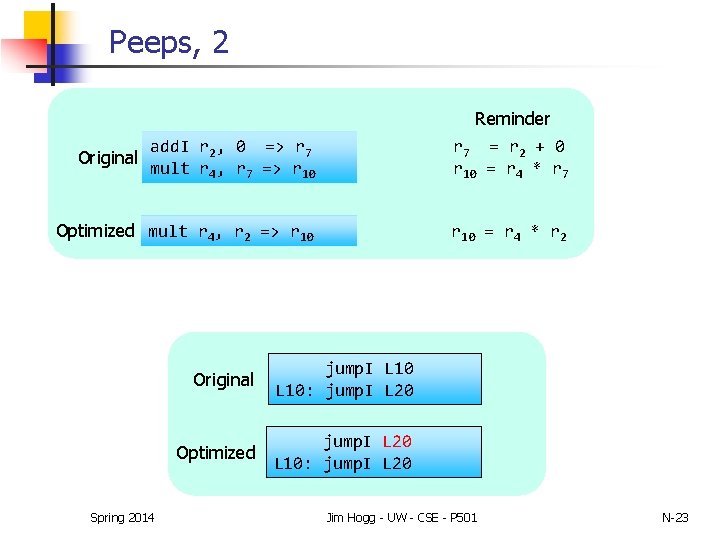

Peeps, 2

Example 1:

- Original:
  - addI r2, 0 => r7 (reminder: r7 = r2 + 0)
  - mult r4, r7 => r10 (reminder: r10 = r4 * r7)
- Optimized:
  - mult r4, r2 => r10 (reminder: r10 = r4 * r2)

Example 2:

- Original:
  - jumpI L10
  - L10: jumpI L20
- Optimized:
  - jumpI L20
  - L10: jumpI L20
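Two of these patterns can be sketched as a single pass with a two-instruction window. This is a minimal illustration under stated assumptions: instructions are encoded as `(op, sources, destinations)` tuples (an encoding invented here), and the add-zero rewrite deletes the addI on the assumption that its result register is not used again.

```python
def peephole(code):
    """One pass over (op, sources, dests) tuples using a 2-instruction window."""
    out = []
    for inst in code:
        if out:
            prev = out[-1]
            # storeAI rX => rB, c ; loadAI rB, c => rY  ==>  keep the store ; i2i rX => rY
            if prev[0] == "storeAI" and inst[0] == "loadAI" and prev[2] == inst[1]:
                inst = ("i2i", prev[1], inst[2])
            # addI rX, 0 => rY ; op ..rY.. => rZ  ==>  op ..rX.. => rZ
            elif prev[0] == "addI" and prev[1][1] == 0 and prev[2][0] in inst[1]:
                x, y = prev[1][0], prev[2][0]
                inst = (inst[0], tuple(x if s == y else s for s in inst[1]), inst[2])
                out.pop()  # ASSUMPTION: rY is dead after this use
        out.append(inst)
    return out

store_load = [
    ("storeAI", ("r1",), ("rarp", 8)),
    ("loadAI", ("rarp", 8), ("r15",)),
]
add_zero = [
    ("addI", ("r2", 0), ("r7",)),
    ("mult", ("r4", "r7"), ("r10",)),
]
print(peephole(store_load))
print(peephole(add_zero))
```

A production optimizer would consult liveness information before deleting the addI, and would need label handling for the jump-to-jump case, which this sketch leaves out.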

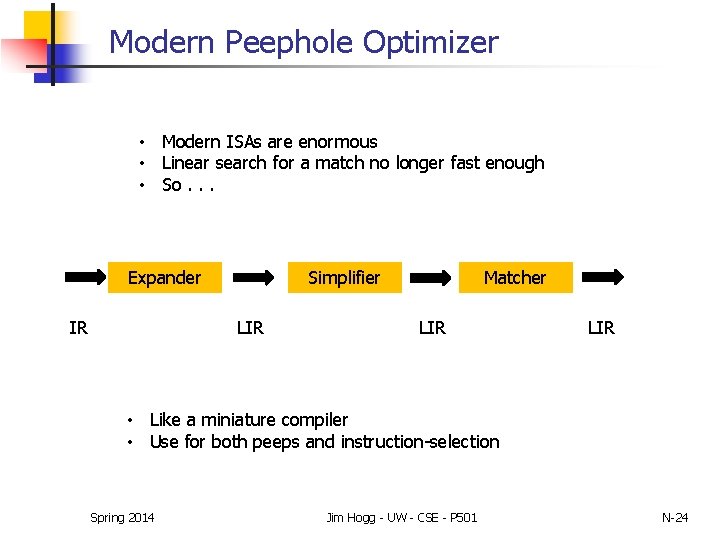

Modern Peephole Optimizer

- Modern ISAs are enormous
- Linear search for a match no longer fast enough
- So...
  - IR → Expander → LIR → Simplifier → LIR → Matcher
- Like a miniature compiler
- Use for both peeps and instruction-selection

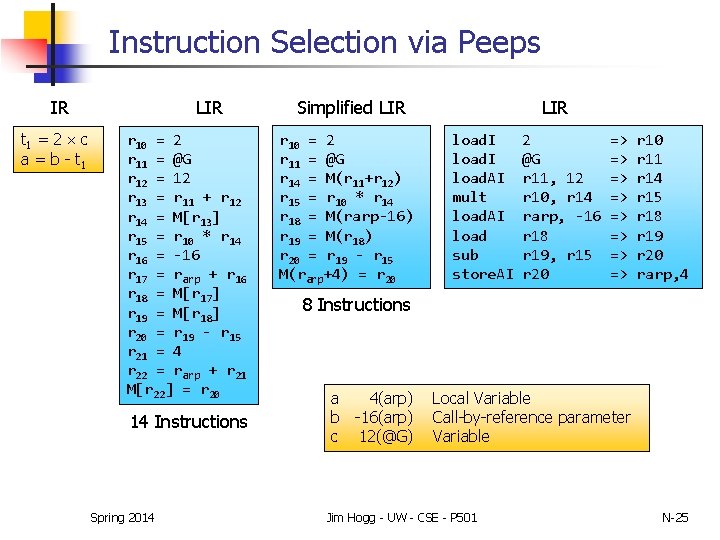

Instruction Selection via Peeps

IR:

- t1 = 2 * c
- a = b - t1

Variables:

- a: 4(arp), Local Variable
- b: -16(arp), Call-by-reference parameter
- c: 12(@G), Variable

LIR (14 Instructions):

- r10 = 2
- r11 = @G
- r12 = 12
- r13 = r11 + r12
- r14 = M[r13]
- r15 = r10 * r14
- r16 = -16
- r17 = rarp + r16
- r18 = M[r17]
- r19 = M[r18]
- r20 = r19 - r15
- r21 = 4
- r22 = rarp + r21
- M[r22] = r20

Simplified LIR and the matching ILOC (8 Instructions):

| Simplified LIR | ILOC |
|---|---|
| r10 = 2 | loadI 2 => r10 |
| r11 = @G | loadI @G => r11 |
| r14 = M[r11 + 12] | loadAI r11, 12 => r14 |
| r15 = r10 * r14 | mult r10, r14 => r15 |
| r18 = M[rarp - 16] | loadAI rarp, -16 => r18 |
| r19 = M[r18] | load r18 => r19 |
| r20 = r19 - r15 | sub r19, r15 => r20 |
| M[rarp + 4] = r20 | storeAI r20 => rarp, 4 |

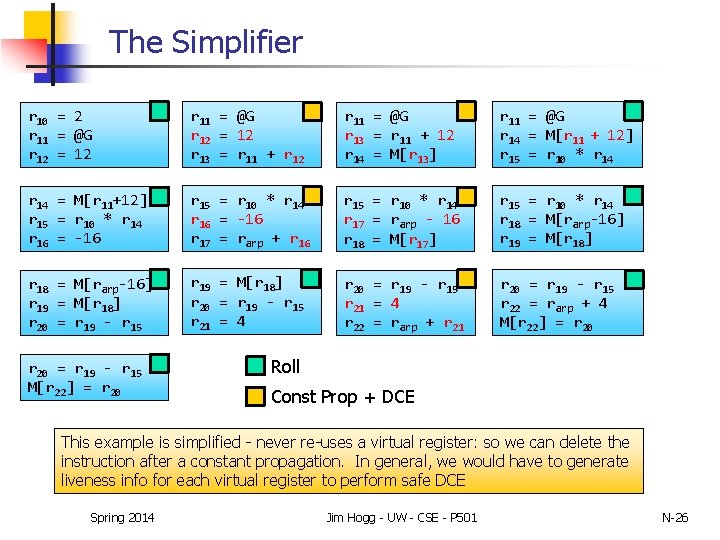

The Simplifier

The simplifier slides a 3-instruction window over the LIR. At each position it either rolls the window forward ("Roll") or rewrites the window using constant propagation followed by dead-code elimination ("Const Prop + DCE"). The rewrites in this example:

- r12 = 12 ; r13 = r11 + r12 becomes r13 = r11 + 12
- r13 = r11 + 12 ; r14 = M[r13] becomes r14 = M[r11 + 12]
- r16 = -16 ; r17 = rarp + r16 becomes r17 = rarp - 16
- r17 = rarp - 16 ; r18 = M[r17] becomes r18 = M[rarp - 16]
- r21 = 4 ; r22 = rarp + r21 becomes r22 = rarp + 4
- r22 = rarp + 4 ; M[r22] = r20 becomes M[rarp + 4] = r20

All other window positions (eg: r15 = r10 * r14, r19 = M[r18], r20 = r19 - r15) are rolls.

This example is simplified: it never re-uses a virtual register, so we can delete the defining instruction after a constant propagation. In general, we would have to generate liveness info for each virtual register to perform safe DCE.
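The windowed rewriting can be sketched as follows. This is a minimal illustration, not the slide's exact algorithm: the tuple encoding of LIR, the `fold` and `simplify` names, and the restriction to three rewrite rules are assumptions of the sketch. Like the slide's example, it assumes every virtual register is defined once and each folded value is used once, so the absorbed instruction can be deleted without liveness analysis.

```python
def fold(prev, inst):
    """Return inst rewritten to absorb prev, or None if the two do not combine."""
    if prev[0] == "const" and isinstance(prev[2], int):
        # rX = c ; rY = rA + rX  ==>  rY = rA + c
        if inst[0] == "add" and inst[3] == prev[1]:
            return ("add", inst[1], inst[2], prev[2])
    if prev[0] == "add" and isinstance(prev[3], int):
        # rY = rA + c ; rZ = M[rY]  ==>  rZ = M[rA + c]
        if inst[0] == "load" and inst[2] == prev[1] and inst[3] == 0:
            return ("load", inst[1], prev[2], prev[3])
        # rY = rA + c ; M[rY] = rZ  ==>  M[rA + c] = rZ
        if inst[0] == "store" and inst[1] == prev[1] and inst[2] == 0:
            return ("store", prev[2], prev[3], inst[3])
    return None

def simplify(lir, window=3):
    """Slide a window over lir; the new instruction may absorb one of the previous window-1."""
    out = []
    for inst in lir:
        for i in range(len(out) - 1, max(len(out) - window, -1), -1):
            folded = fold(out[i], inst)
            if folded is not None:
                del out[i]      # DCE; safe only under the single-use assumption
                inst = folded
                break
        out.append(inst)
    return out

LIR = [
    ("const", "r10", 2), ("const", "r11", "@G"), ("const", "r12", 12),
    ("add", "r13", "r11", "r12"), ("load", "r14", "r13", 0),
    ("mul", "r15", "r10", "r14"), ("const", "r16", -16),
    ("add", "r17", "rarp", "r16"), ("load", "r18", "r17", 0),
    ("load", "r19", "r18", 0), ("sub", "r20", "r19", "r15"),
    ("const", "r21", 4), ("add", "r22", "rarp", "r21"),
    ("store", "r22", 0, "r20"),
]
for inst in simplify(LIR):
    print(inst)
```

Here `("load", dst, base, off)` stands for dst = M[base + off] and `("store", base, off, src)` for M[base + off] = src. The label constant @G and the multiply operand r10 are left alone because the sketch folds only integer constants into additions.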

Next

- Instruction Scheduling
- Register Allocation
- And more…
