CSE 431 Computer Architecture Fall 2005 Lecture 22

- Slides: 18

CSE 431 Computer Architecture, Fall 2005. Lecture 22. Virtual Memory Hardware Support

Mary Jane Irwin (www.cse.psu.edu/~mji)

www.cse.psu.edu/~cg431

[Adapted from Computer Organization and Design, Patterson & Hennessy, © 2005]

CSE 431 L22 TLBs.1 Irwin, PSU, 2005

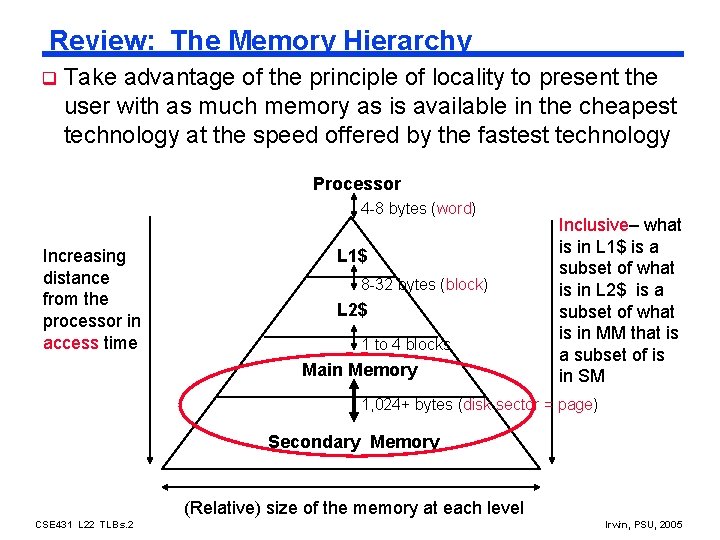

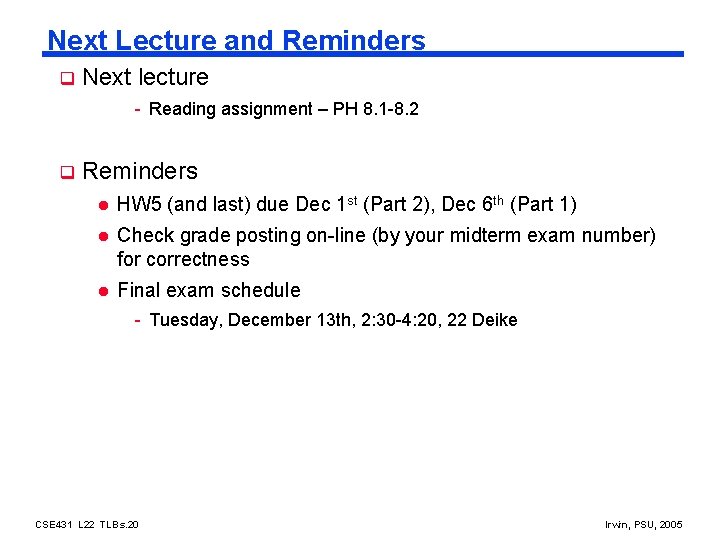

Review: The Memory Hierarchy

- Take advantage of the principle of locality to present the user with as much memory as is available in the cheapest technology at the speed offered by the fastest technology
- Inclusive: what is in L1$ is a subset of what is in L2$, which is a subset of what is in main memory (MM), which is a subset of what is in secondary memory (SM)

[Figure: the hierarchy from Processor through L1$, L2$, and Main Memory to Secondary Memory. Transfer units: 4-8 bytes (word) between processor and L1$; 8-32 bytes (block) between L1$ and L2$; 1 to 4 blocks between L2$ and main memory; 1,024+ bytes (disk sector = page) between main memory and secondary memory. Access time increases with distance from the processor, and the (relative) size of the memory grows at each level.]

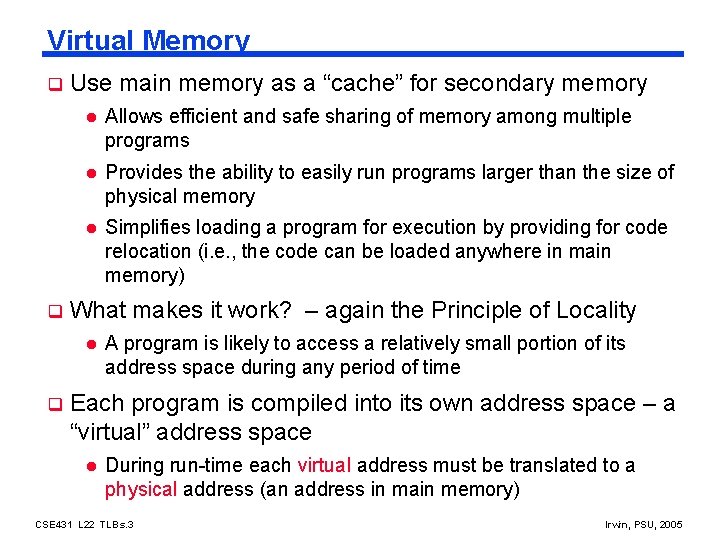

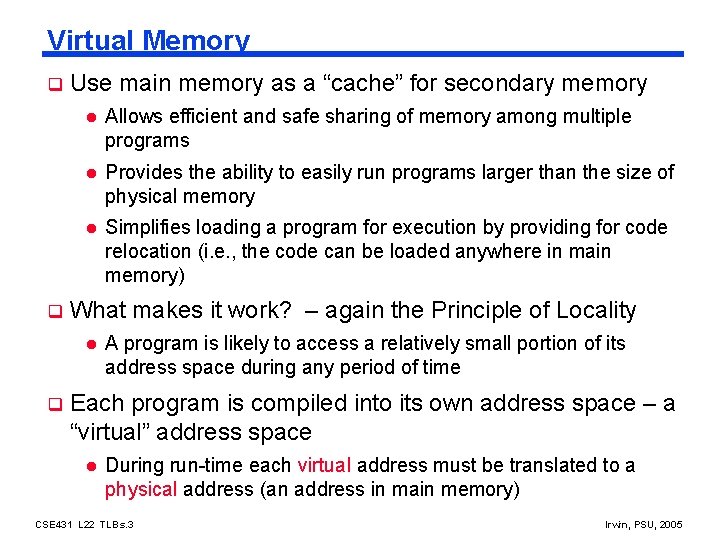

Virtual Memory

- Use main memory as a “cache” for secondary memory
  - Allows efficient and safe sharing of memory among multiple programs
  - Provides the ability to easily run programs larger than the size of physical memory
  - Simplifies loading a program for execution by providing for code relocation (i.e., the code can be loaded anywhere in main memory)
- What makes it work? Again, the Principle of Locality
  - A program is likely to access a relatively small portion of its address space during any period of time
- Each program is compiled into its own address space, a “virtual” address space
  - During run-time each virtual address must be translated to a physical address (an address in main memory)

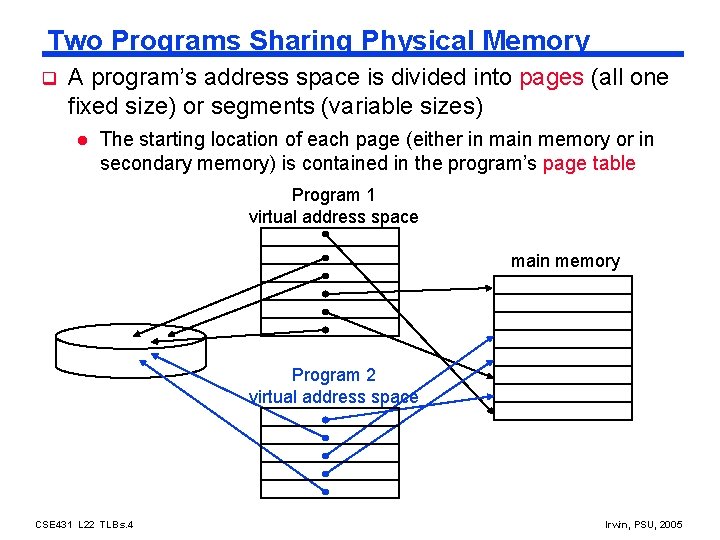

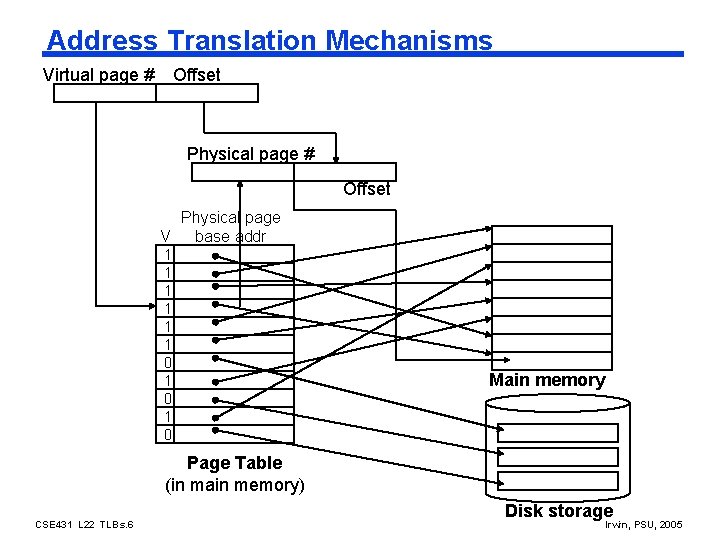

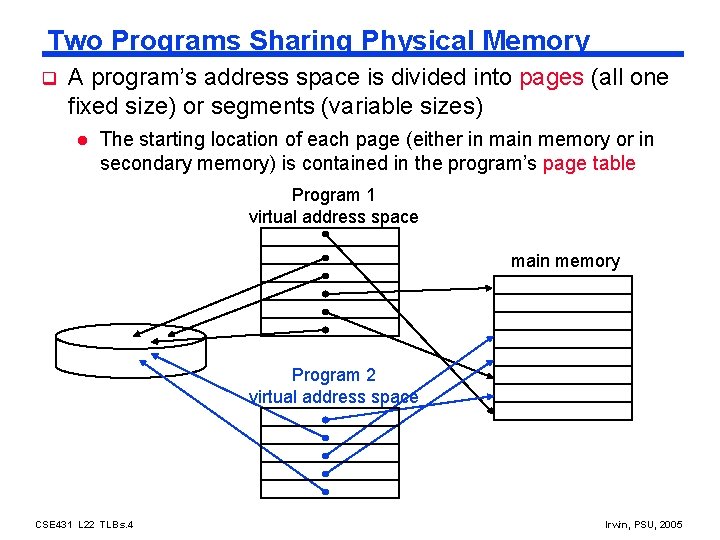

Two Programs Sharing Physical Memory

- A program’s address space is divided into pages (all one fixed size) or segments (variable sizes)
  - The starting location of each page (either in main memory or in secondary memory) is contained in the program’s page table

[Figure: pages of the Program 1 and Program 2 virtual address spaces mapped into main memory.]

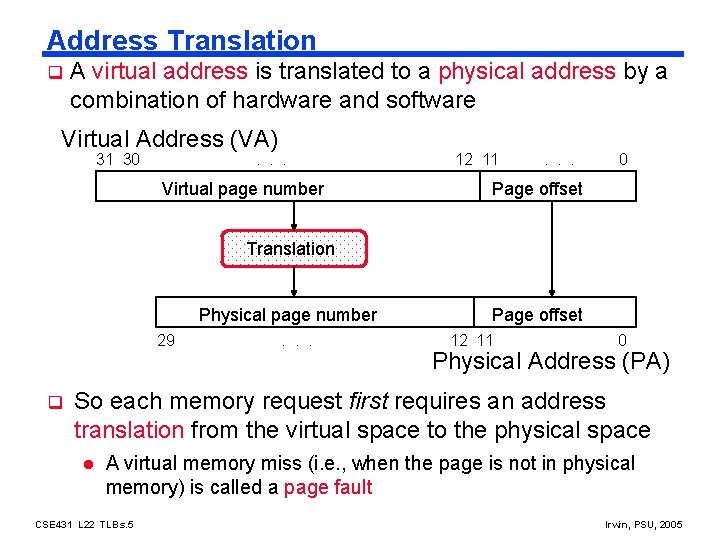

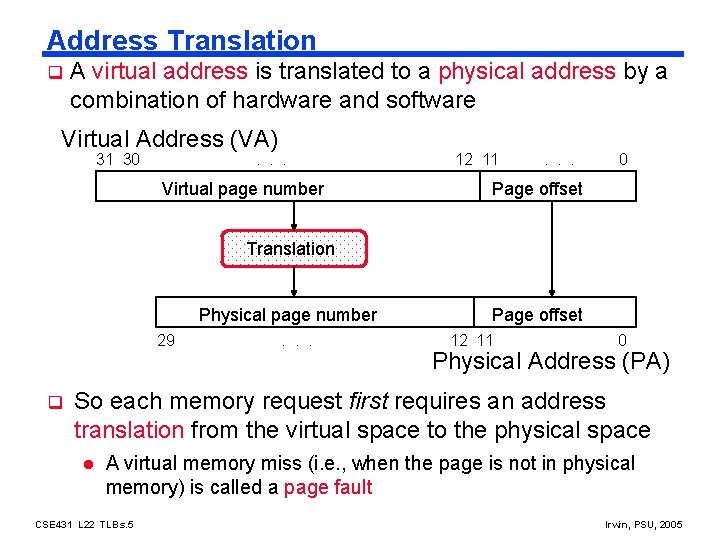

Address Translation

- A virtual address is translated to a physical address by a combination of hardware and software
- So each memory request first requires an address translation from the virtual space to the physical space
  - A virtual memory miss (i.e., when the page is not in physical memory) is called a page fault

[Figure: the Virtual Address (VA) has the virtual page number in bits 31-12 and the page offset in bits 11-0. Translation maps the virtual page number to a physical page number. The Physical Address (PA) has the physical page number in bits 29-12 and the same page offset in bits 11-0.]
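
The bit fields on this slide can be sketched in a few lines of Python. This is only an illustration, assuming the 12-bit page offset shown above (4 KB pages); the function names and the physical page number `0x42` are made up for the example.

```python
PAGE_OFFSET_BITS = 12                 # bits 11-0 of the address, as on the slide
PAGE_SIZE = 1 << PAGE_OFFSET_BITS     # 4096 bytes

def split_virtual_address(va):
    """Return (virtual page number, page offset) for a virtual address."""
    return va >> PAGE_OFFSET_BITS, va & (PAGE_SIZE - 1)

def make_physical_address(ppn, offset):
    """Concatenate a physical page number with the unchanged page offset."""
    return (ppn << PAGE_OFFSET_BITS) | offset

vpn, offset = split_virtual_address(0x12345ABC)
pa = make_physical_address(0x42, offset)   # 0x42 is a hypothetical physical page number
print(hex(vpn), hex(offset), hex(pa))
```

Only the page number changes during translation; the offset is copied straight through.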

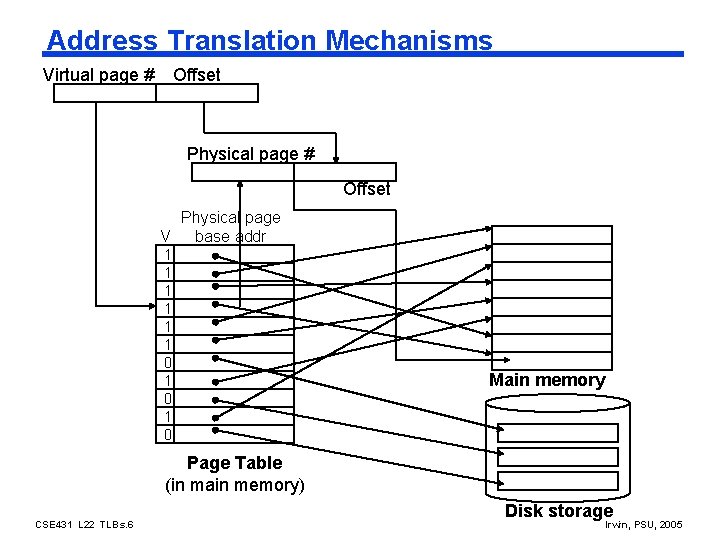

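Address Translation Mechanisms

[Figure: the virtual page number indexes the page table (in main memory). Each entry holds a valid bit (V) and a physical page base address. Entries with V = 1 point to pages in main memory; entries with V = 0 refer to pages in disk storage. The offset is carried over unchanged.]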
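
A page table lookup along the lines of this figure might look like the sketch below. It assumes a single-level table indexed by virtual page number and 12-bit page offsets; the table contents, the `PageFault` exception, and the `translate` function are hypothetical names chosen for illustration.

```python
PAGE_OFFSET_BITS = 12

class PageFault(Exception):
    """Raised when the referenced page is not resident in main memory."""

# Hypothetical page table: index = virtual page number,
# entry = (valid bit, physical page number if valid, else a disk location).
page_table = [
    (1, 0x2A),
    (0, "disk block 7"),
    (1, 0x03),
]

def translate(va):
    """Translate a virtual address, raising PageFault if V = 0."""
    vpn = va >> PAGE_OFFSET_BITS
    offset = va & ((1 << PAGE_OFFSET_BITS) - 1)
    valid, location = page_table[vpn]
    if not valid:
        raise PageFault(f"virtual page {vpn} is on disk at {location}")
    return (location << PAGE_OFFSET_BITS) | offset

print(hex(translate(0x2010)))   # virtual page 2 is resident in physical page 0x03
```

Because the table itself lives in main memory, each call to `translate` stands for one extra memory access.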

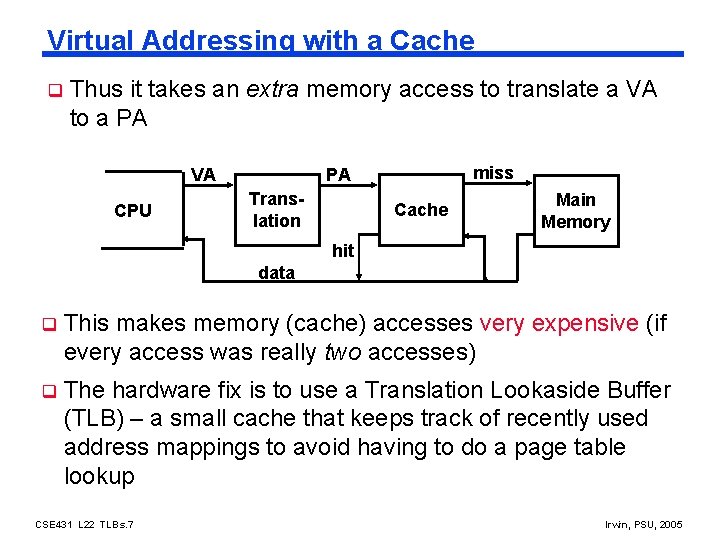

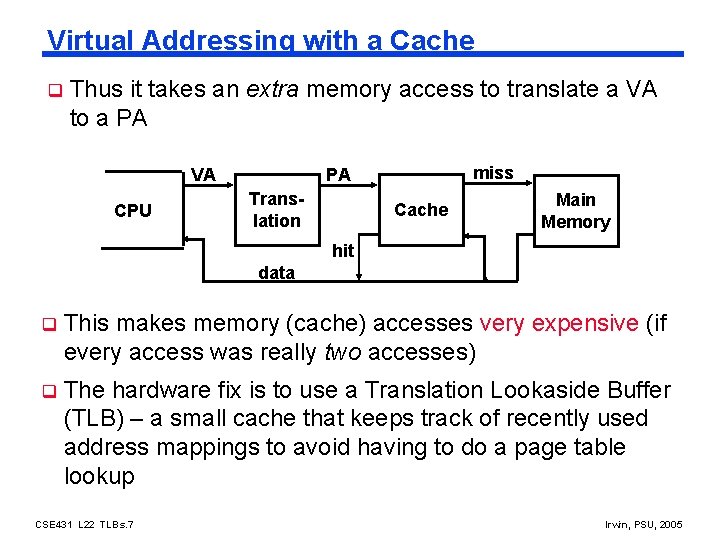

Virtual Addressing with a Cache

- Thus it takes an extra memory access to translate a VA to a PA
- This makes memory (cache) accesses very expensive (if every access were really two accesses)
- The hardware fix is to use a Translation Lookaside Buffer (TLB): a small cache that keeps track of recently used address mappings to avoid having to do a page table lookup

[Figure: the CPU sends a VA to Translation, which sends a PA to the Cache. A cache hit returns data to the CPU; a cache miss goes to Main Memory.]

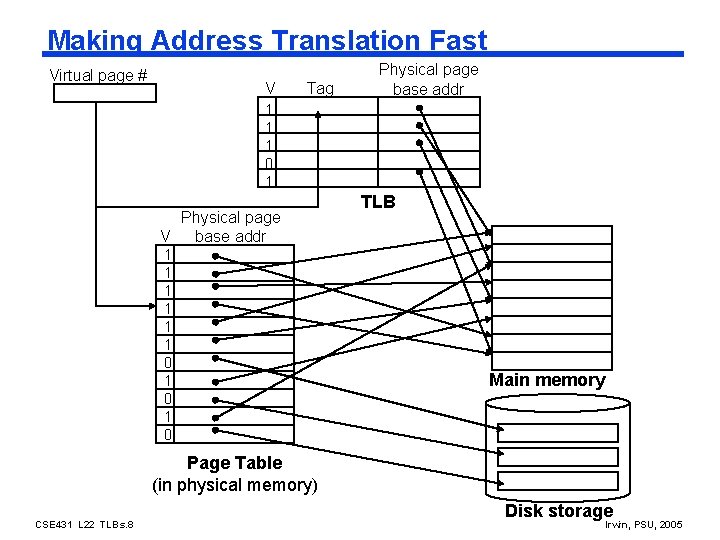

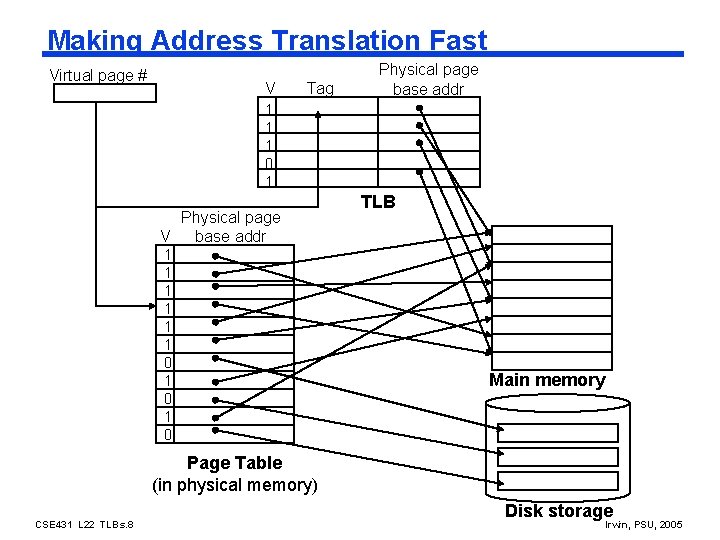

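Making Address Translation Fast

[Figure: the virtual page number is looked up in the TLB, whose entries hold a valid bit (V), a tag, and a physical page base address. The TLB caches entries of the page table (in physical memory), whose entries hold a valid bit (V) and a physical page base address pointing to main memory or disk storage.]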

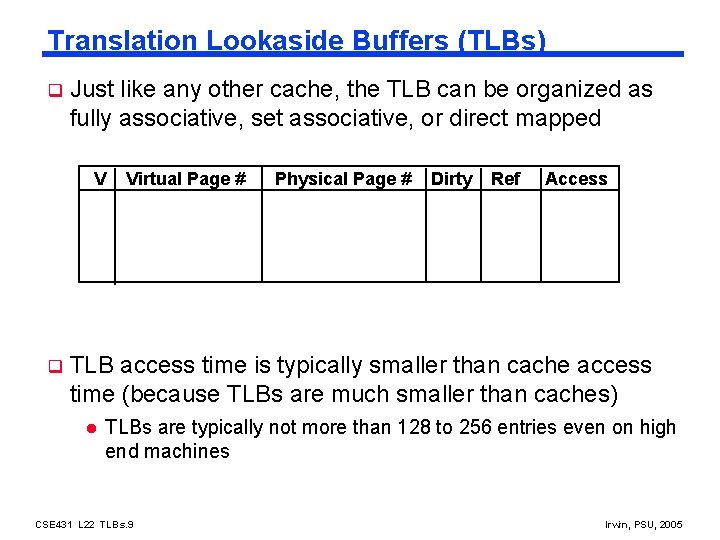

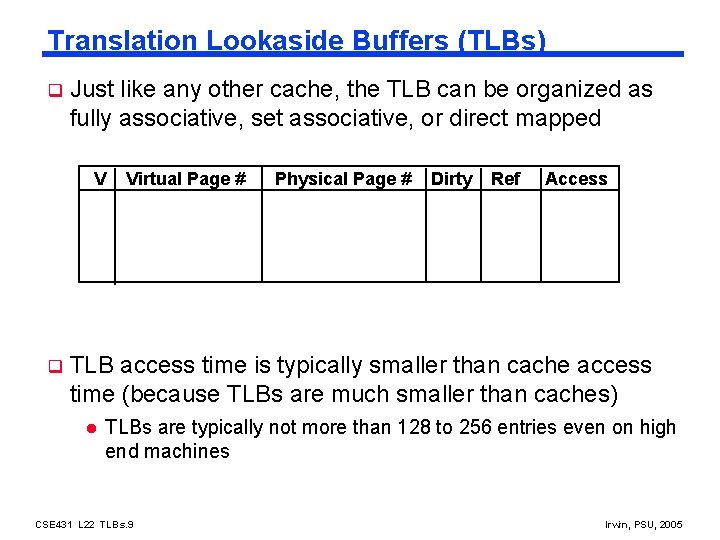

Translation Lookaside Buffers (TLBs)

- Just like any other cache, the TLB can be organized as fully associative, set associative, or direct mapped
  - TLB entry fields: V, Virtual Page #, Physical Page #, Dirty, Ref, Access
- TLB access time is typically smaller than cache access time (because TLBs are much smaller than caches)
  - TLBs are typically not more than 128 to 256 entries even on high end machines
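
As a rough software model of one of these organizations, the sketch below implements a fully associative TLB. It assumes true LRU replacement for simplicity (real hardware usually approximates LRU) and omits the Dirty, Ref, and Access fields; the class and method names are made up for the example.

```python
from collections import OrderedDict

class TLB:
    """Fully associative TLB with true LRU replacement (a simplification)."""

    def __init__(self, num_entries):
        self.num_entries = num_entries
        self.entries = OrderedDict()   # vpn -> ppn, least recently used first

    def lookup(self, vpn):
        """Return the cached physical page number on a hit, or None on a miss."""
        if vpn not in self.entries:
            return None
        self.entries.move_to_end(vpn)  # mark as most recently used
        return self.entries[vpn]

    def insert(self, vpn, ppn):
        """Install a translation, evicting the LRU entry if the TLB is full."""
        if vpn in self.entries:
            self.entries.move_to_end(vpn)
        elif len(self.entries) >= self.num_entries:
            self.entries.popitem(last=False)
        self.entries[vpn] = ppn

tlb = TLB(num_entries=2)
tlb.insert(1, 10)
tlb.insert(2, 20)
tlb.lookup(1)        # touch page 1, so page 2 becomes least recently used
tlb.insert(3, 30)    # evicts the mapping for page 2
```

A set associative or direct mapped TLB would restrict which entries a given virtual page number may occupy, trading hit rate for a cheaper lookup.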

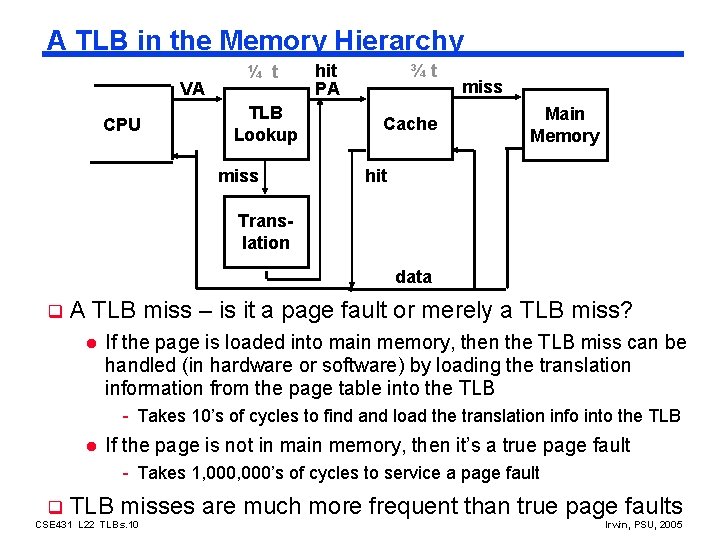

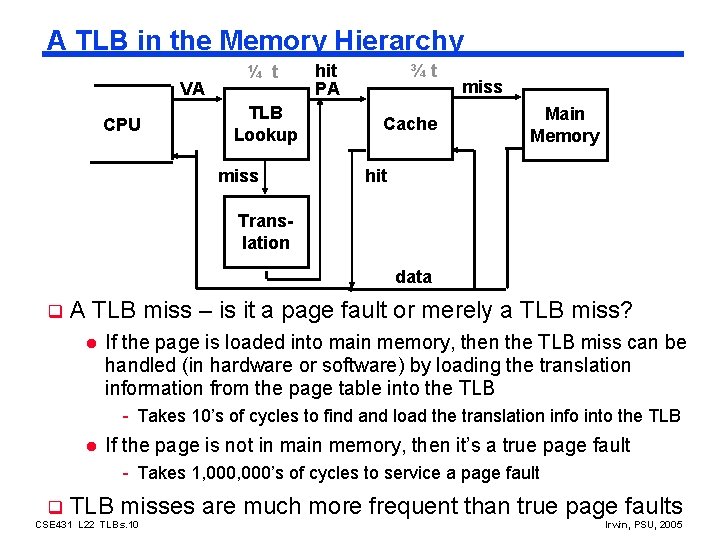

A TLB in the Memory Hierarchy

[Figure: the CPU sends a VA to the TLB Lookup (¼ t). A TLB hit sends the PA to the Cache (¾ t); a TLB miss goes to Translation. A cache hit returns data; a cache miss goes to Main Memory.]

- A TLB miss: is it a page fault or merely a TLB miss?
  - If the page is loaded into main memory, then the TLB miss can be handled (in hardware or software) by loading the translation information from the page table into the TLB
    - Takes 10’s of cycles to find and load the translation info into the TLB
  - If the page is not in main memory, then it’s a true page fault
    - Takes 1,000’s of cycles to service a page fault
- TLB misses are much more frequent than true page faults
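
The distinction between a plain TLB miss and a true page fault can be sketched as a small classifier. This is an illustration only: the TLB is modeled as an unbounded dictionary, the page table holds only resident pages, and the names and reference trace are hypothetical.

```python
def reference(vpn, tlb, page_table):
    """Classify one reference as 'TLB hit', 'TLB miss', or 'page fault'."""
    if vpn in tlb:
        return "TLB hit"
    if vpn in page_table:            # page is resident in main memory
        tlb[vpn] = page_table[vpn]   # refill the TLB from the page table
        return "TLB miss"
    return "page fault"              # the OS must bring the page in from disk

# Hypothetical resident pages (vpn -> ppn) and an initially empty TLB.
page_table = {0: 7, 1: 3}
tlb = {}
events = [reference(vpn, tlb, page_table) for vpn in [0, 0, 1, 5, 1]]
print(events)
```

In this trace only the reference to virtual page 5 is a page fault; the first touches of pages 0 and 1 are TLB misses that are serviced from the page table.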

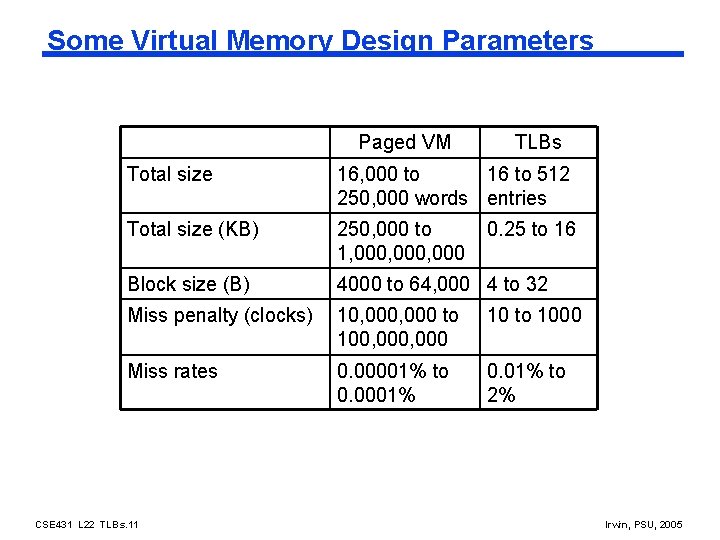

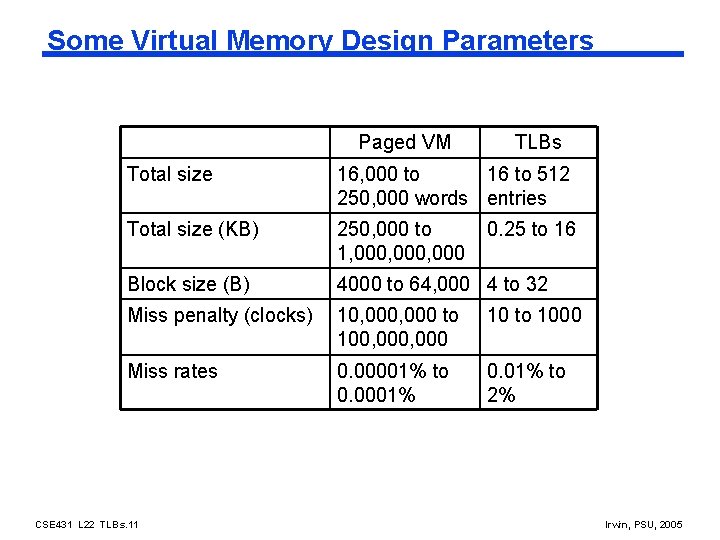

Some Virtual Memory Design Parameters

| | Paged VM | TLBs |
|---|---|---|
| Total size | 16,000 to 250,000 words | 16 to 512 entries |
| Total size (KB) | 250,000 to 1,000,000 | 0.25 to 16 |
| Block size (B) | 4000 to 64,000 | 4 to 32 |
| Miss penalty (clocks) | 10,000 to 100,000 | 10 to 1000 |
| Miss rates | 0.00001% to 0.0001% | 0.01% to 2% |

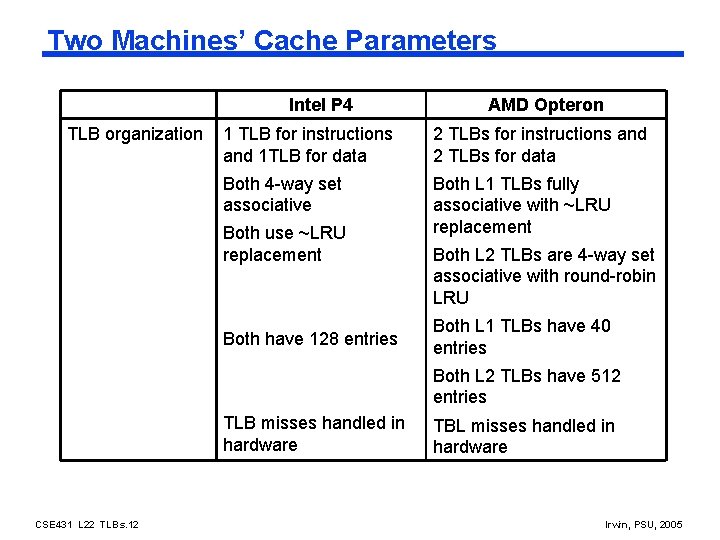

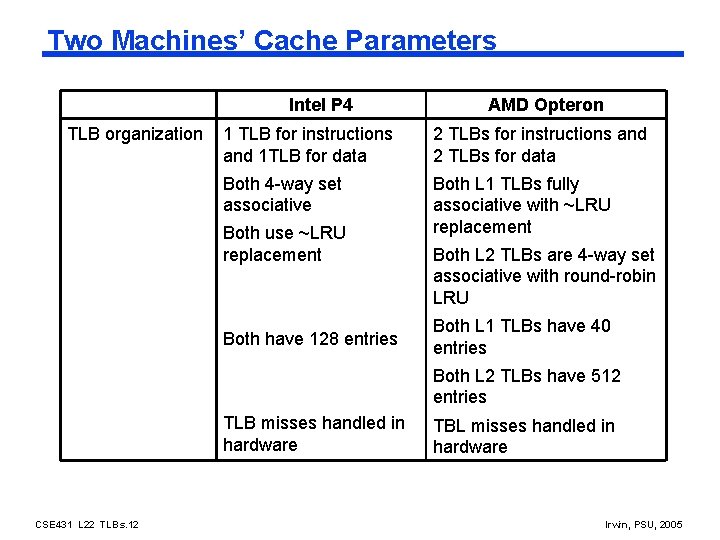

Two Machines’ Cache Parameters

TLB organization, Intel P4:

- 1 TLB for instructions and 1 TLB for data
- Both 4-way set associative
- Both use ~LRU replacement
- Both have 128 entries
- TLB misses handled in hardware

TLB organization, AMD Opteron:

- 2 TLBs for instructions and 2 TLBs for data
- Both L1 TLBs fully associative with ~LRU replacement
- Both L2 TLBs are 4-way set associative with round-robin LRU
- Both L1 TLBs have 40 entries
- Both L2 TLBs have 512 entries
- TLB misses handled in hardware

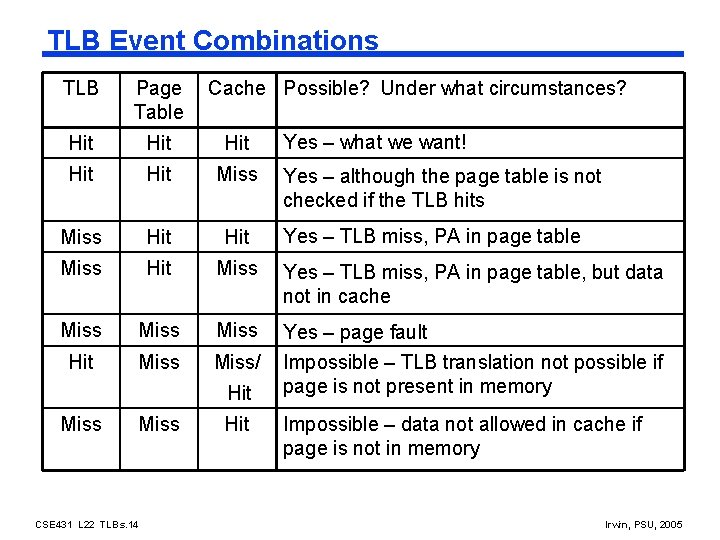

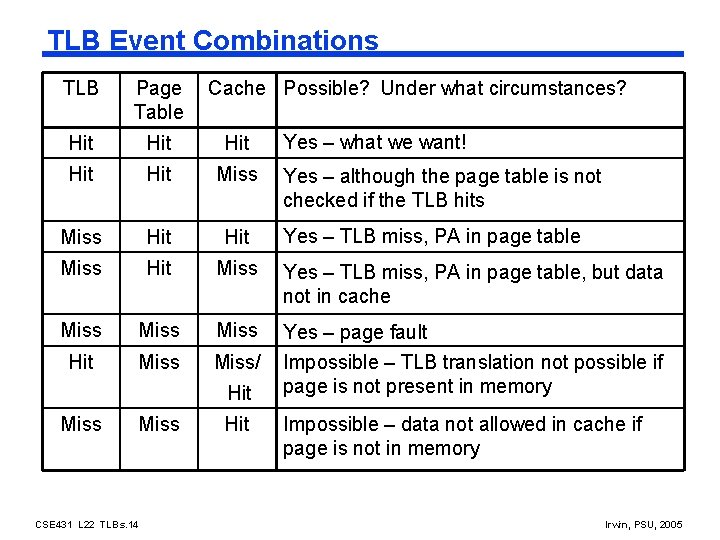

TLB Event Combinations

| TLB | Page Table | Cache | Possible? Under what circumstances? |
|---|---|---|---|
| Hit | Hit | Hit | Yes: what we want! |
| Hit | Hit | Miss | Yes: although the page table is not checked if the TLB hits |
| Miss | Hit | Hit | Yes: TLB miss, PA in page table |
| Miss | Hit | Miss | Yes: TLB miss, PA in page table, but data not in cache |
| Miss | Miss | Miss | Yes: page fault |
| Hit | Miss | Miss/Hit | Impossible: TLB translation not possible if page is not present in memory |
| Miss | Miss | Hit | Impossible: data not allowed in cache if page is not in memory |
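
The rule behind these combinations can be checked by enumeration. The sketch below assumes, as the slide states, that a TLB entry or a cached block can exist only for a page that is present in memory; the function name `is_possible` is made up for the example.

```python
from itertools import product

def is_possible(tlb_hit, page_in_memory, cache_hit):
    """A TLB hit or a cache hit requires the page to be resident in memory."""
    return page_in_memory or (not tlb_hit and not cache_hit)

label = {True: "Hit", False: "Miss"}
possible = []
for tlb_hit, pt_hit, cache_hit in product([True, False], repeat=3):
    ok = is_possible(tlb_hit, pt_hit, cache_hit)
    possible.append(ok)
    print(label[tlb_hit], label[pt_hit], label[cache_hit],
          "possible" if ok else "impossible")
```

Of the eight combinations, five are possible and three are not; the only possible case with a page table miss is the page fault (miss, miss, miss).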

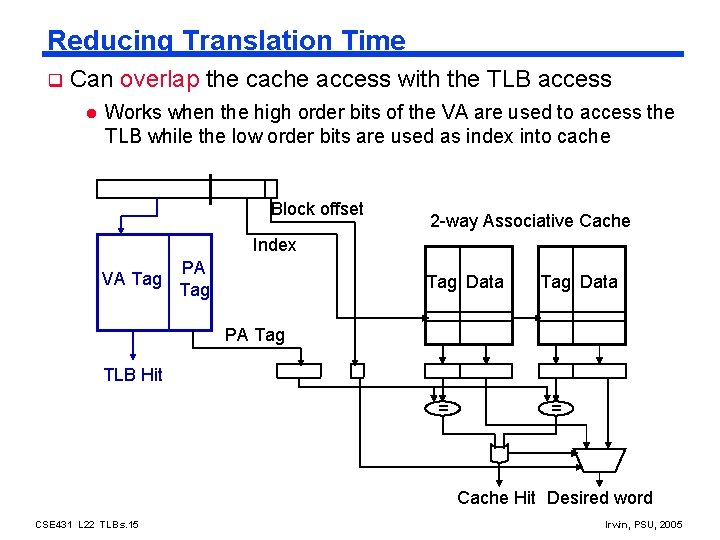

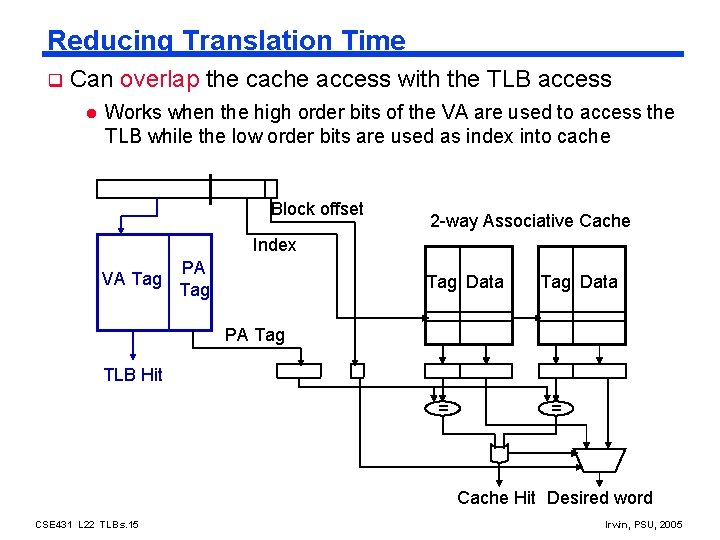

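Reducing Translation Time

- Can overlap the cache access with the TLB access
  - Works when the high order bits of the VA are used to access the TLB while the low order bits are used as index into cache

[Figure: the VA is split into VA tag, index, and block offset. The VA tag goes to the TLB, which produces the PA tag and the TLB hit signal. The index selects a set in a 2-way associative cache; each way's stored PA tag is compared (=) with the PA tag from the TLB to produce the cache hit signal and select the desired word.]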
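
One way to read the condition above: the cache index and block offset must fit entirely within the page offset, since those bits are the same in the VA and the PA. The sketch below checks that for a given geometry, assuming power-of-two sizes; the function names and example cache sizes are chosen for illustration and are not from the slides.

```python
def log2_exact(n):
    """Return log2(n) for a power-of-two n."""
    assert n > 0 and n & (n - 1) == 0, "expected a power of two"
    return n.bit_length() - 1

def can_overlap(cache_bytes, block_bytes, ways, page_bytes):
    """True if the index and block-offset bits lie within the page offset."""
    sets = cache_bytes // (block_bytes * ways)
    index_bits = log2_exact(sets)
    block_offset_bits = log2_exact(block_bytes)
    page_offset_bits = log2_exact(page_bytes)
    return index_bits + block_offset_bits <= page_offset_bits

print(can_overlap(8 * 1024, 32, 2, 4096))    # 7 index + 5 offset bits fit in 12
print(can_overlap(16 * 1024, 32, 2, 4096))   # 8 index + 5 offset bits do not
```

Equivalently, the capacity of one way (cache size divided by associativity) must not exceed the page size, which is one reason higher associativity helps here.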

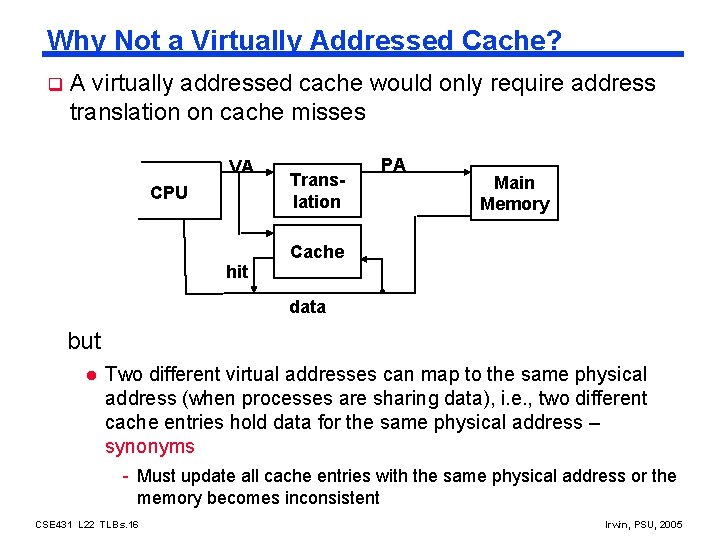

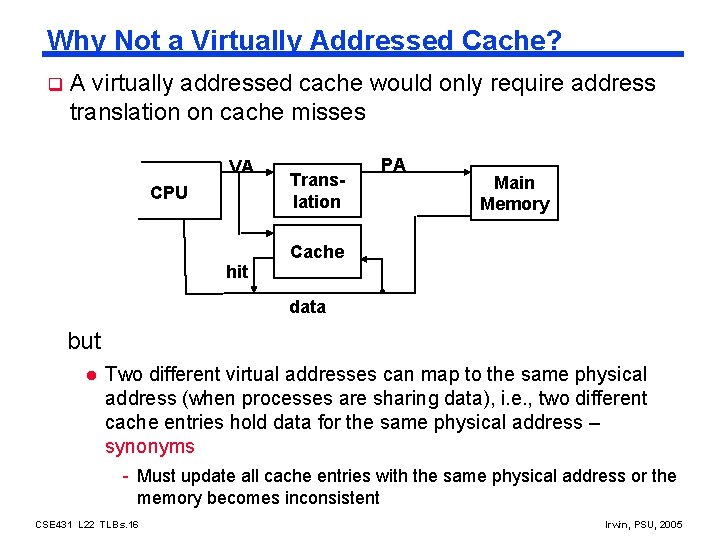

Why Not a Virtually Addressed Cache?

- A virtually addressed cache would only require address translation on cache misses
- but two different virtual addresses can map to the same physical address (when processes are sharing data), i.e., two different cache entries hold data for the same physical address: synonyms
  - Must update all cache entries with the same physical address or the memory becomes inconsistent

[Figure: the CPU sends a VA directly to the Cache. A cache hit returns data; a cache miss goes through Translation to obtain the PA for Main Memory.]
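
The synonym problem can be reproduced with a toy model. The sketch assumes a write-back cache keyed by virtual address and two hypothetical virtual addresses, `0x1000` and `0x9000`, that map to the same physical address; all names and values are made up for illustration.

```python
memory = {0x5000: "old"}                      # physical memory, keyed by PA
page_map = {0x1000: 0x5000, 0x9000: 0x5000}   # two VAs map to one PA (synonyms)
cache = {}                                    # virtually addressed: keyed by VA

def read(va):
    """Return cached data, translating and fetching only on a miss."""
    if va not in cache:
        cache[va] = memory[page_map[va]]
    return cache[va]

def write(va, value):
    """Write-back policy: update only this VA's cache entry."""
    cache[va] = value

read(0x1000)
read(0x9000)               # both synonyms are now cached separately
write(0x1000, "new")
stale = read(0x9000)       # still sees the old value through the other VA
print(read(0x1000), stale)
```

The two cache entries now disagree about one physical location, which is the inconsistency the slide warns about unless every synonym is updated.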

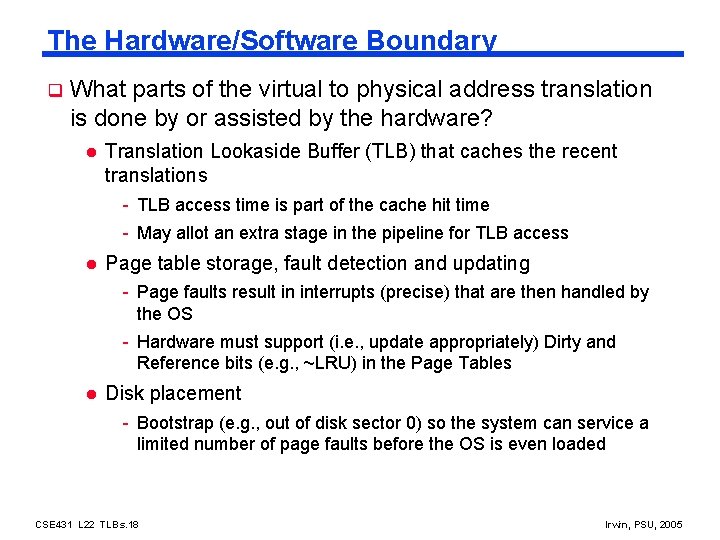

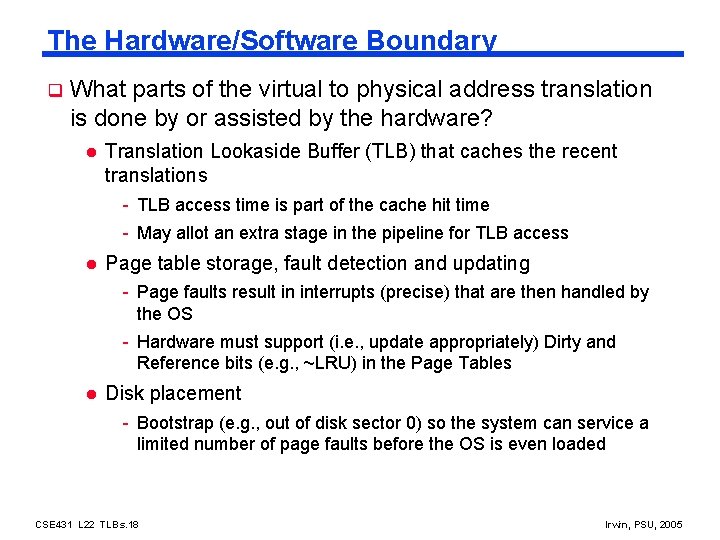

The Hardware/Software Boundary

- What parts of the virtual to physical address translation are done by or assisted by the hardware?
  - Translation Lookaside Buffer (TLB) that caches the recent translations
    - TLB access time is part of the cache hit time
    - May allot an extra stage in the pipeline for TLB access
  - Page table storage, fault detection and updating
    - Page faults result in interrupts (precise) that are then handled by the OS
    - Hardware must support (i.e., update appropriately) Dirty and Reference bits (e.g., ~LRU) in the Page Tables
  - Disk placement
    - Bootstrap (e.g., out of disk sector 0) so the system can service a limited number of page faults before the OS is even loaded

Summary

- The Principle of Locality:
  - Program likely to access a relatively small portion of the address space at any instant of time.
    - Temporal Locality: Locality in Time
    - Spatial Locality: Locality in Space
- Caches, TLBs, Virtual Memory all understood by examining how they deal with the four questions
  1. Where can block be placed?
  2. How is block found?
  3. What block is replaced on miss?
  4. How are writes handled?
- Page tables map virtual address to physical address
  - TLBs are important for fast translation

Next Lecture and Reminders

- Next lecture
  - Reading assignment: PH 8.1-8.2
- Reminders
  - HW5 (and last) due Dec 1st (Part 2), Dec 6th (Part 1)
  - Check grade posting on-line (by your midterm exam number) for correctness
  - Final exam schedule
    - Tuesday, December 13th, 2:30-4:20, 22 Deike