CSCI1680 Transport Layer III Congestion Control Strikes Back

CSCI-1680 Transport Layer III: Congestion Control Strikes Back. Theophilus Benson. Based partly on lecture notes by Rodrigo Fonseca, David Mazières, Phil Levis, John Jan

This Week • Congestion Control Continued – Quick Review • TCP Friendliness – Equation Based Rate Control • TCP’s Inherent unfairness • TCP on Lossy Links • Congestion Control versus Avoidance – Getting help from the network • Cheating TCP

Glossary of Terms • RTT = Round Trip Time • MSS = Maximum Segment Size – Largest amount of TCP data in a packet • Sequence Numbers • RTO = Timeout • Dup-Ack = a duplicate Acknowledgement • Transmission Rounds

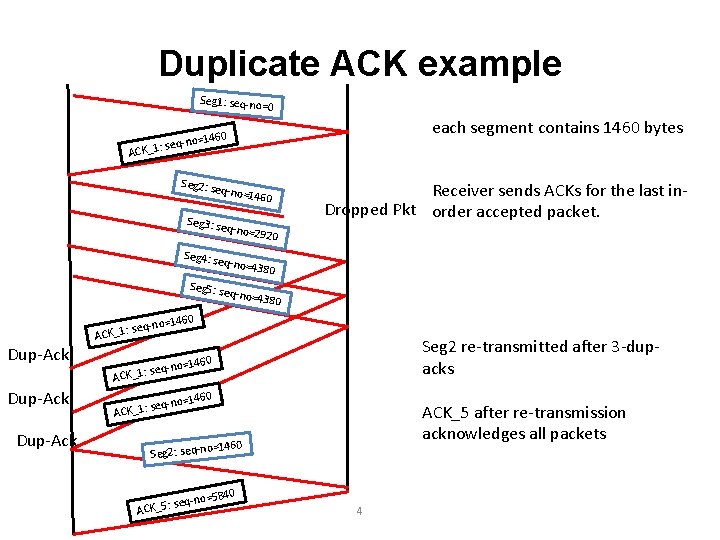

Duplicate ACK example • Each segment contains 1460 bytes • Receiver sends ACKs for the last in-order accepted packet • Seg 1: seq-no=0 → ACK_1: seq-no=1460 • Seg 2: seq-no=1460 (dropped packet) • Seg 3: seq-no=2920 → Dup-Ack ACK_1: seq-no=1460 • Seg 4: seq-no=4380 → Dup-Ack ACK_1: seq-no=1460 • Seg 5: seq-no=5840 → Dup-Ack ACK_1: seq-no=1460 • Seg 2 (seq-no=1460) re-transmitted after 3 dup-acks • ACK_5 after re-transmission acknowledges all packets: seq-no=7300

MSS + Sequence Numbers • Your code wants to send 146 KB over the network • Network MTU = 1500 B • Protocol header = IP (20 B) + TCP (20 B) = 40 B • What about the link-layer header (Ethernet or ATM)? • MSS = 1500 B − 40 B = 1460 B • Number of TCP data segments = 146 KB / 1460 B = 100 • Each TCP data segment has a sequence number: the location of its first byte in the data stream.
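
The MSS arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function name `segment_sequence_numbers` is hypothetical, 146 KB is taken as 146,000 bytes, and sequence numbers start at 0 for readability (a real TCP connection starts from a randomly chosen initial sequence number).

```python
def segment_sequence_numbers(total_bytes, mtu=1500, ip_header=20, tcp_header=20):
    """Return (MSS, list of sequence numbers), one per data segment.

    Assumption: sequence numbers start at 0; real TCP picks a random
    initial sequence number during the handshake.
    """
    mss = mtu - ip_header - tcp_header          # 1500 - 40 = 1460 bytes
    # Each segment's sequence number is the offset of its first byte.
    seqs = list(range(0, total_bytes, mss))
    return mss, seqs


mss, seqs = segment_sequence_numbers(146_000)
print(f"MSS={mss} B, segments={len(seqs)}, first seq-nos={seqs[:4]}")
```

The first few values (0, 1460, 2920, 4380) are the same sequence numbers that appear in the duplicate ACK example.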

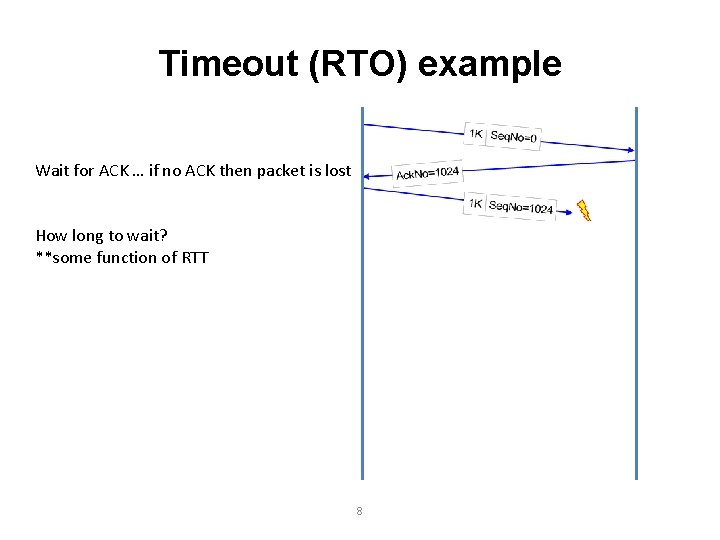

Timeout (RTO) example • Wait for ACK … if no ACK arrives, then the packet is considered lost • How long to wait? Some function of RTT
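
The slide only says that the timeout is "some function of RTT". One common choice, sketched here as an assumption rather than as the method used in this lecture, is the smoothed estimator standardized in RFC 6298: keep a running mean (SRTT) and a running deviation (RTTVAR) of the RTT samples and set RTO = SRTT + 4 × RTTVAR. The class name `RtoEstimator` is hypothetical, and the sketch omits the clock-granularity term of the RFC.

```python
class RtoEstimator:
    """Sketch of an RFC 6298 style RTO estimator (clock granularity omitted)."""

    def __init__(self, alpha=1 / 8, beta=1 / 4, k=4, min_rto=1.0):
        self.alpha = alpha      # gain for the smoothed RTT
        self.beta = beta        # gain for the RTT variation
        self.k = k              # multiplier on the variation
        self.min_rto = min_rto  # lower bound on the timeout, in seconds
        self.srtt = None
        self.rttvar = None

    def update(self, sample):
        """Feed one RTT sample (seconds) and return the new RTO (seconds)."""
        if self.srtt is None:
            # First measurement: initialise directly from the sample.
            self.srtt = sample
            self.rttvar = sample / 2
        else:
            # Update the variation first, using the old smoothed RTT.
            self.rttvar = (1 - self.beta) * self.rttvar + self.beta * abs(self.srtt - sample)
            self.srtt = (1 - self.alpha) * self.srtt + self.alpha * sample
        return max(self.min_rto, self.srtt + self.k * self.rttvar)


est = RtoEstimator(min_rto=0.0)  # floor disabled so the formula is visible
first_rto = est.update(0.100)
second_rto = est.update(0.100)
print(first_rto, second_rto)
```

With stable RTT samples the variation term shrinks, so the timeout drifts down toward the measured RTT.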

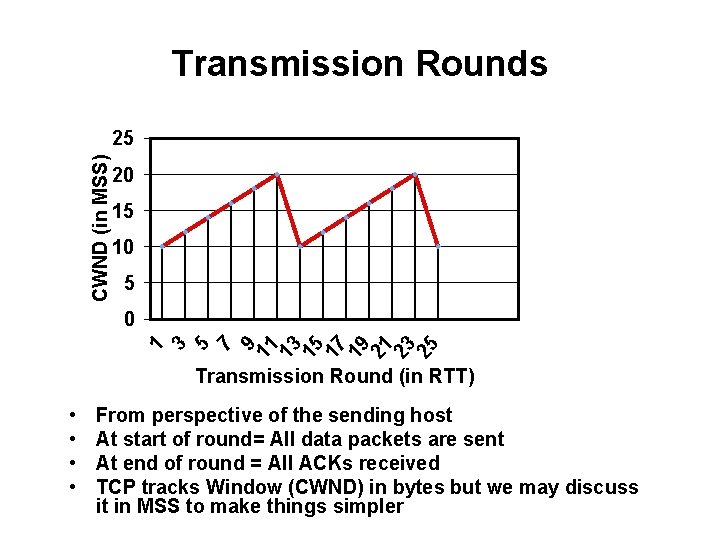

Transmission Rounds • From the perspective of the sending host • At start of round = all data packets are sent • At end of round = all ACKs received • TCP tracks the window (CWND) in bytes, but we may discuss it in MSS to make things simpler (figure: CWND (in MSS) versus transmission round (in RTT))

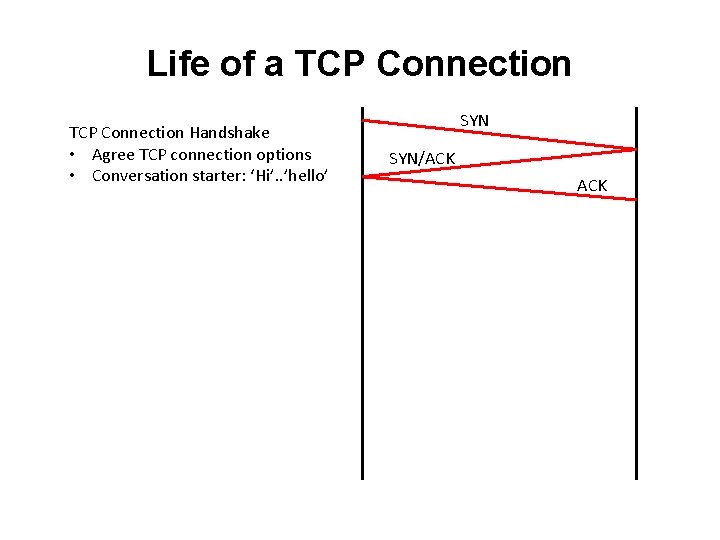

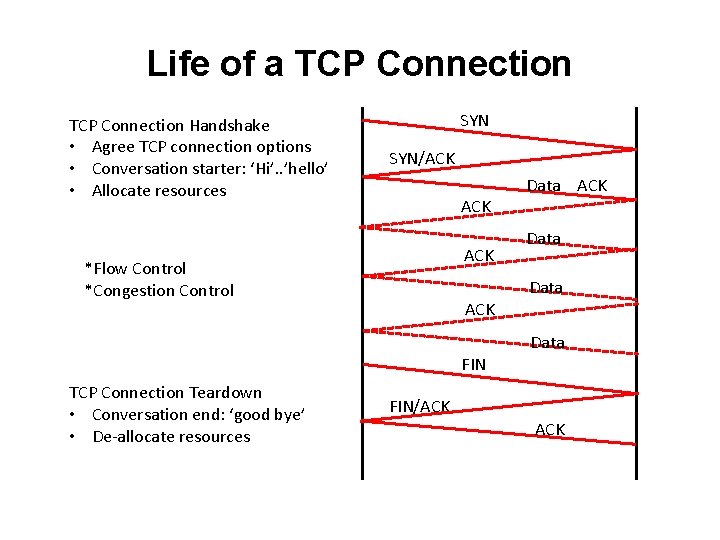

Life of a TCP Connection: Handshake • Agree on TCP connection options • Conversation starter: ‘Hi’ … ‘hello’ (figure: SYN/ACK exchange)

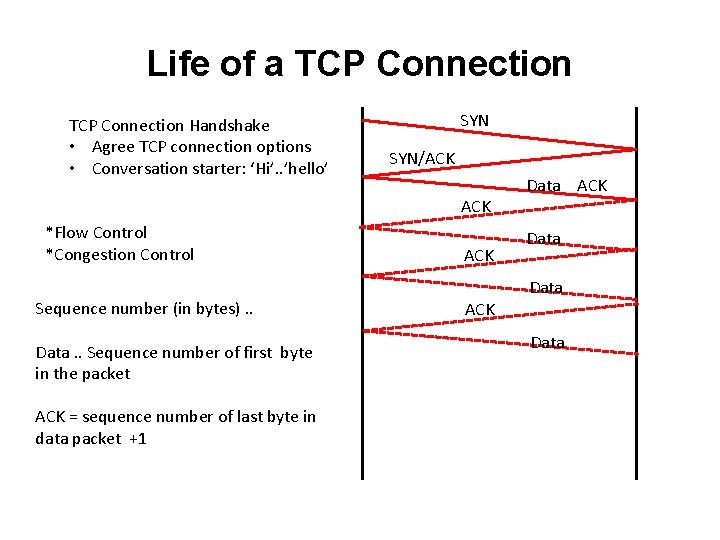

Life of a TCP Connection: Handshake • Agree on TCP connection options • Conversation starter: ‘Hi’ … ‘hello’ Data transfer (*Flow Control, *Congestion Control) • Sequence number (in bytes) = sequence number of the first byte in the packet • ACK = sequence number of the last byte in the data packet + 1 (figure: SYN/ACK, then alternating Data and ACK)

Life of a TCP Connection: Handshake • Agree on TCP connection options • Conversation starter: ‘Hi’ … ‘hello’ • Allocate resources Data transfer (*Flow Control, *Congestion Control) TCP Connection Teardown • Conversation end: ‘good bye’ • De-allocate resources (figure: SYN/ACK, ACK, alternating Data and ACK, FIN, FIN/ACK)

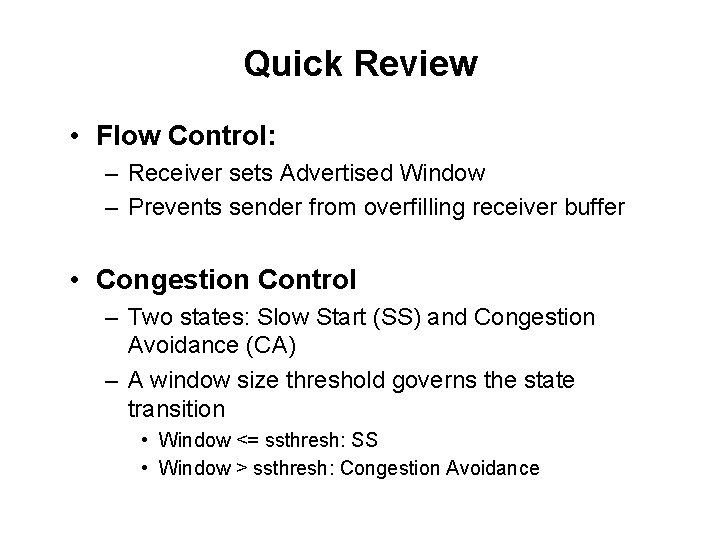

Quick Review • Flow Control: – Receiver sets Advertised Window – Prevents sender from overfilling receiver buffer • Congestion Control – Two states: Slow Start (SS) and Congestion Avoidance (CA) – A window size threshold governs the state transition • Window <= ssthresh: SS • Window > ssthresh: Congestion Avoidance

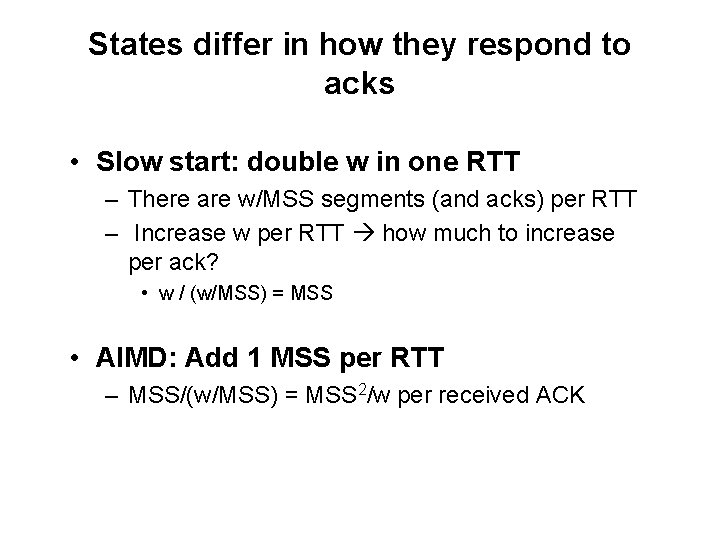

States differ in how they respond to ACKs • Slow start: double w in one RTT – There are w/MSS segments (and ACKs) per RTT – To increase w by w per RTT, how much should w increase per ACK? • w / (w/MSS) = MSS • AIMD: add 1 MSS per RTT – MSS / (w/MSS) = $MSS^2/w$ per received ACK
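
A minimal sketch of the two per-ACK rules, assuming one ACK per segment and no losses. The function names `on_ack` and `one_round` are illustrative only; the state test (`cwnd <= ssthresh` means slow start) follows the Quick Review slide.

```python
def on_ack(cwnd, ssthresh, mss):
    """Return the new congestion window (bytes) after one new ACK."""
    if cwnd <= ssthresh:
        # Slow start: +1 MSS per ACK, so the window doubles every RTT.
        return cwnd + mss
    # Congestion avoidance (AIMD): +MSS^2/cwnd per ACK, about +1 MSS per RTT.
    return cwnd + mss * mss / cwnd


def one_round(cwnd, ssthresh, mss):
    """Apply on_ack once for every segment sent in this transmission round."""
    acks = int(cwnd // mss)  # w/MSS segments (and ACKs) per RTT
    for _ in range(acks):
        cwnd = on_ack(cwnd, ssthresh, mss)
    return cwnd


MSS = 1460
after_slow_start = one_round(4 * MSS, ssthresh=10**9, mss=MSS)
after_avoidance = one_round(10 * MSS, ssthresh=0, mss=MSS)
print(after_slow_start / MSS, after_avoidance / MSS)
```

In congestion avoidance the per-round gain comes out slightly under 1 MSS, because the window grows a little with every ACK within the round.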

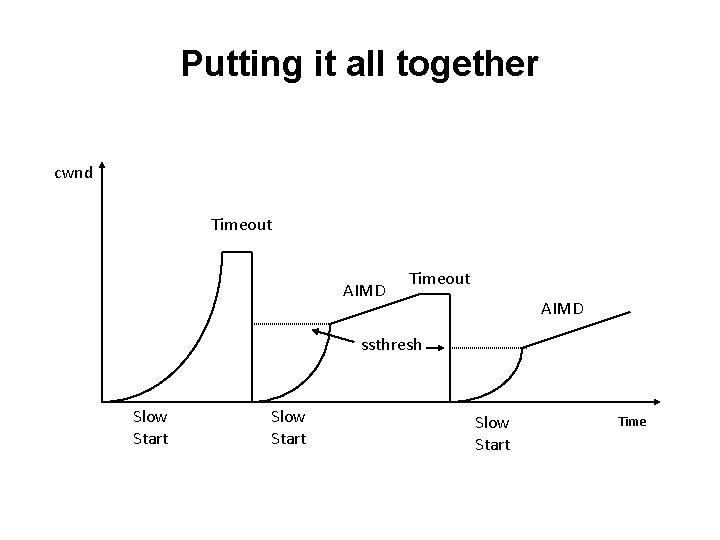

Putting it all together (figure: cwnd versus time, with labels Slow Start, ssthresh, AIMD, and Timeout)

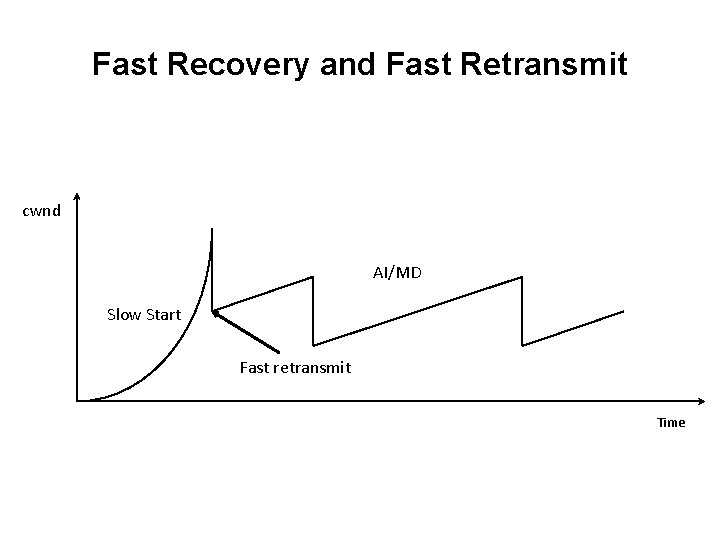

Fast Recovery and Fast Retransmit (figure: cwnd versus time, with labels Slow Start, AI/MD, and Fast retransmit)

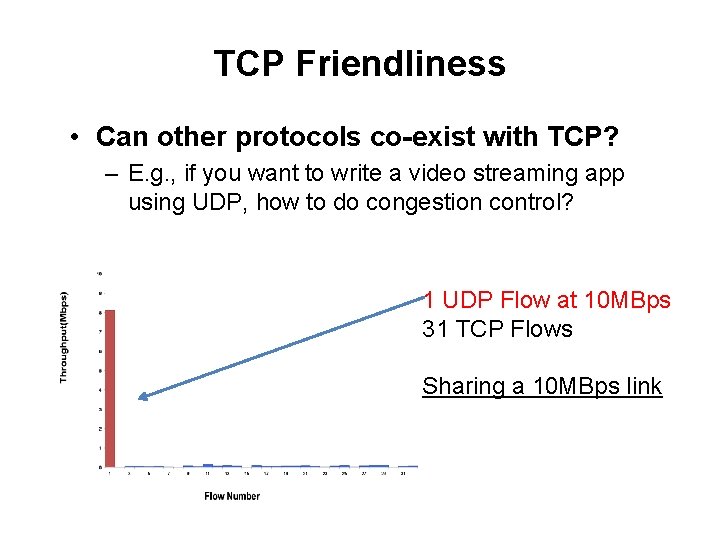

TCP Friendliness • Can other protocols co-exist with TCP? – E.g., if you want to write a video streaming app using UDP, how do you do congestion control? (figure: 1 UDP flow at 10 MBps and 31 TCP flows sharing a 10 MBps link)

TCP Friendliness • Can other protocols co-exist with TCP? – E.g., if you want to write a video streaming app using UDP, how do you do congestion control? • Equation-based Congestion Control – Instead of implementing TCP’s CC, estimate the rate at which TCP would send. Function of what? – RTT, MSS, Loss • Measure RTT and Loss, then send at that rate!

TCP Friendliness • Can other protocols co-exist with TCP? – E.g., if you want to write a video streaming app using UDP, how do you do congestion control? • Equation-based Congestion Control – Instead of implementing TCP’s CC, estimate the rate at which TCP would send. Function of what? – RTT, MSS, Loss – Fair Throughput = function(?, ?) • Measure RTT and Loss, then send at that rate!

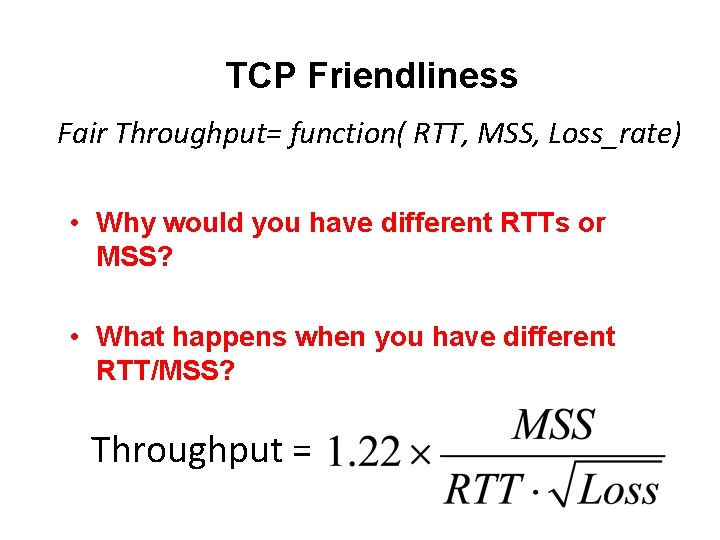

TCP Friendliness • Can other protocols co-exist with TCP? – E.g., if you want to write a video streaming app using UDP, how do you do congestion control? • Equation-based Congestion Control – Instead of implementing TCP’s CC, estimate the rate at which TCP would send. Function of what? – Fair Throughput = function(RTT, MSS, Loss_rate) • Measure RTT, Loss, MSS – Calculate and send at the fair share rate!

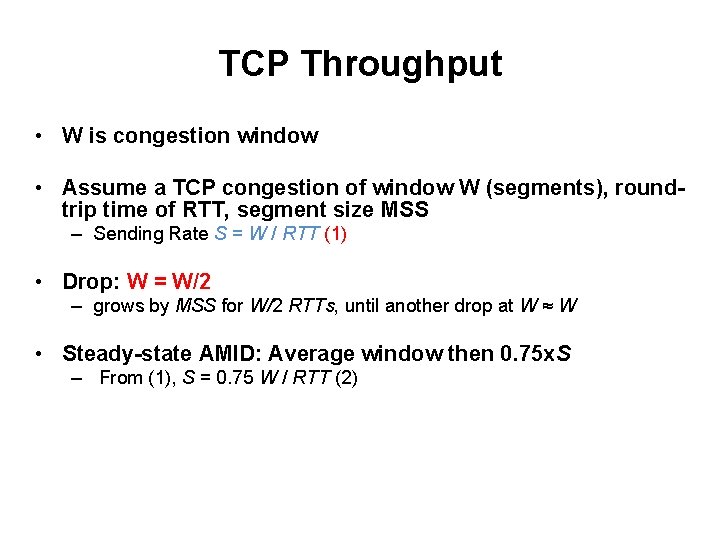

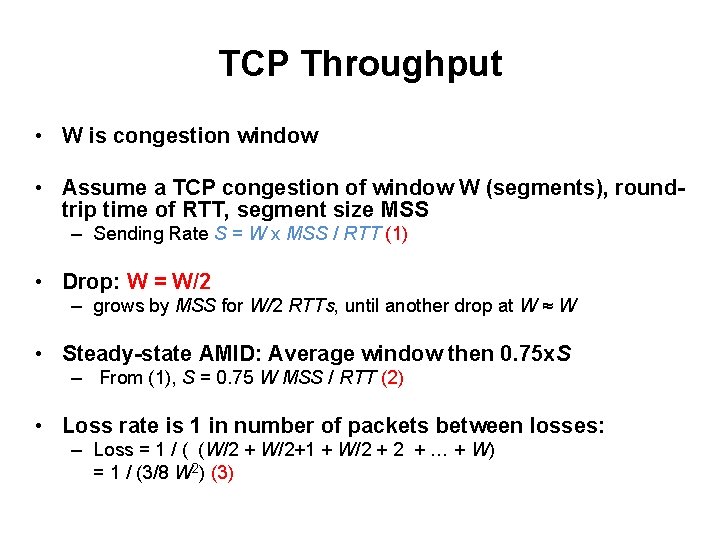

TCP Throughput • W is the congestion window • Assume a TCP congestion window of W (segments), round-trip time of RTT, segment size MSS – Sending rate $S = W / RTT$ (1) • Drop: $W \to W/2$ – Grows by 1 MSS per RTT for W/2 RTTs, until another drop at window ≈ W • Steady-state AIMD: the average window is then $0.75\,W$ – From (1), $S = 0.75\,W / RTT$ (2) • Loss rate is 1 in the number of packets between losses: – $Loss = 1 / (W/2 + (W/2+1) + (W/2+2) + \dots + W) \approx 1 / (\tfrac{3}{8} W^2)$ (3)

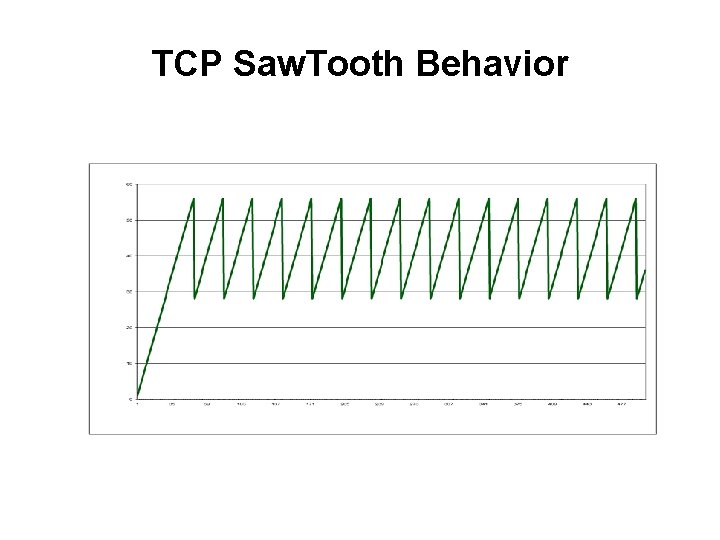

TCP Sawtooth Behavior

TCP Throughput • W is the congestion window • Assume a TCP congestion window of W (segments), round-trip time of RTT, segment size MSS – Sending rate $S = W \cdot MSS / RTT$ (1) • Drop: $W \to W/2$ – Grows by 1 MSS per RTT for W/2 RTTs, until another drop at window ≈ W • Steady-state AIMD: the average window is then $0.75\,W$ – From (1), $S = 0.75\,W \cdot MSS / RTT$ (2) • Loss rate is 1 in the number of packets between losses: – $Loss = 1 / (W/2 + (W/2+1) + (W/2+2) + \dots + W) \approx 1 / (\tfrac{3}{8} W^2)$ (3)

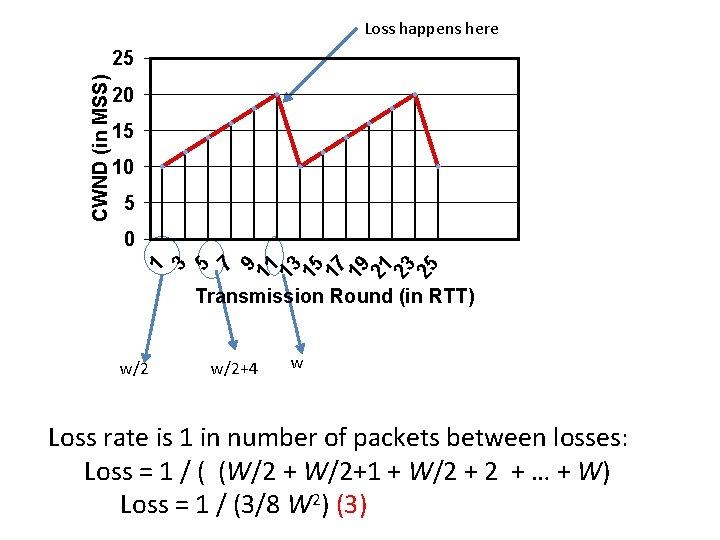

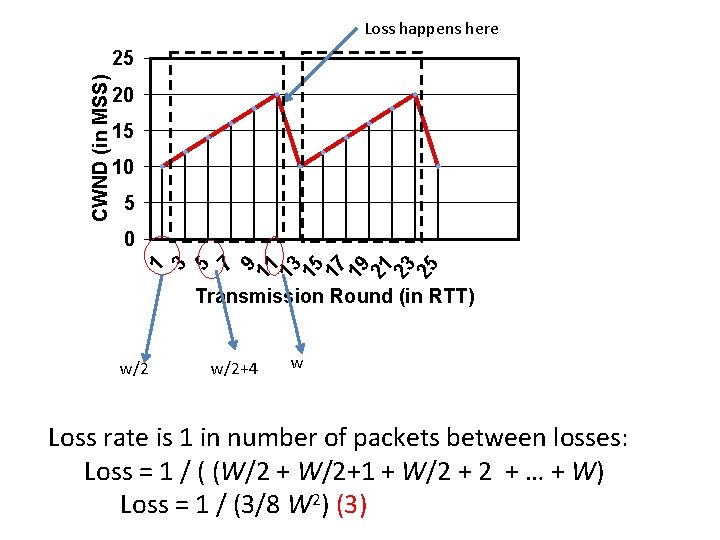

(Figure: CWND (in MSS) versus transmission round (in RTT); the window climbs from w/2 up to w, where the loss happens.) Loss rate is 1 in the number of packets between losses: $Loss = 1 / (W/2 + (W/2+1) + (W/2+2) + \dots + W) \approx 1 / (\tfrac{3}{8} W^2)$ (3)

(Figure: CWND (in MSS) versus transmission round (in RTT))

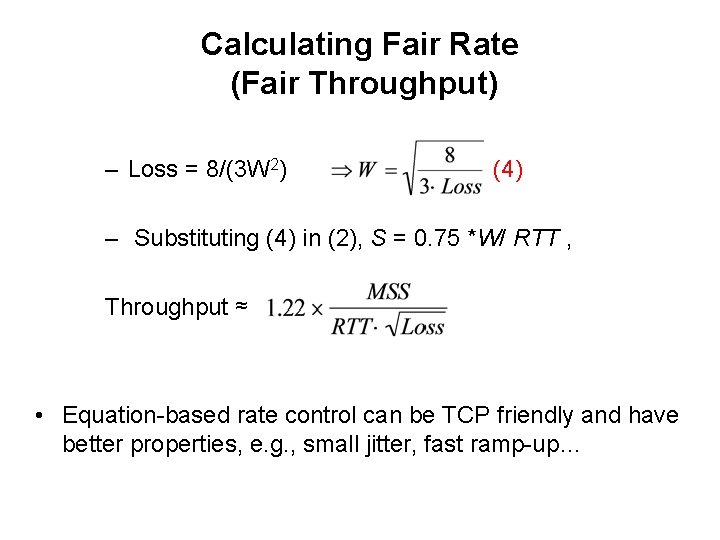

Calculating Fair Rate (Fair Throughput) – From (3), $Loss = 8/(3 W^2)$, so $W = \sqrt{8/(3 \cdot Loss)}$ (4) – Substituting (4) in (2), $S = 0.75 \cdot W \cdot MSS / RTT$, gives $Throughput \approx 1.22 \cdot MSS / (RTT \cdot \sqrt{Loss})$ • Equation-based rate control can be TCP friendly and have better properties, e.g., small jitter, fast ramp-up…
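
The derivation can be turned into a small helper that an equation-based sender might call after measuring RTT and loss. This is a sketch of the simplified sawtooth model only: the name `tcp_friendly_rate` is hypothetical, and the model ignores timeouts and delayed ACKs.

```python
import math


def tcp_friendly_rate(mss_bytes, rtt_s, loss_rate):
    """Estimated TCP throughput in bytes/second under the sawtooth model."""
    if loss_rate <= 0:
        raise ValueError("the model needs a strictly positive loss rate")
    # Peak window in segments, from Loss = 8 / (3 W^2).
    w = math.sqrt(8 / (3 * loss_rate))
    # Average window is 0.75 W; convert to bytes and divide by the RTT.
    return 0.75 * w * mss_bytes / rtt_s


rate_1pct = tcp_friendly_rate(1460, 0.100, 0.01)
rate_4pct = tcp_friendly_rate(1460, 0.100, 0.04)
print(f"{rate_1pct:.0f} B/s at 1% loss, {rate_4pct:.0f} B/s at 4% loss")
```

Because the rate scales with 1/sqrt(Loss), quadrupling the loss rate halves the fair rate, and doubling the RTT halves it as well.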

Assumptions Made by TCP • Loss == congestion – Loss only happens when: – Aggregate sending Rate > capacity • All flows in the network have the same: – RTT – MSS – Same loss rates • Everyone Plays Nicely

TCP Friendliness • Fair Throughput = function(RTT, MSS, Loss_rate) • Why would you have different RTTs or MSS? • What happens when you have different RTT/MSS? • $Throughput \approx 1.22 \cdot MSS / (RTT \cdot \sqrt{Loss})$

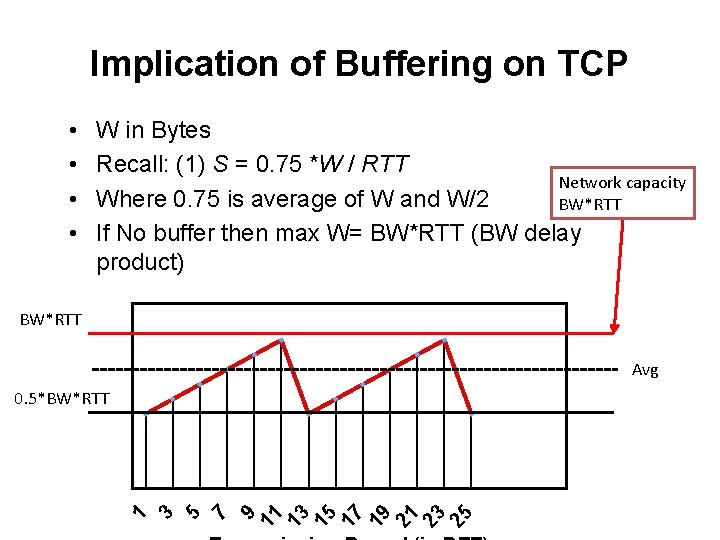

Implication of Buffering on TCP • W in bytes • Recall: (1) S = 0.75 × W / RTT, where 0.75 × W is the average of W and W/2 • If no buffer, then max W = BW × RTT (bandwidth-delay product)

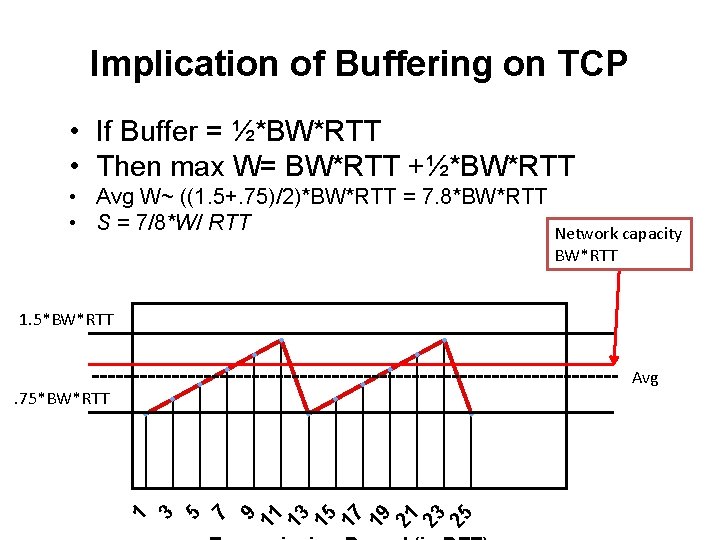

Implication of Buffering on TCP • If buffer = ½ × BW × RTT • Then max W = BW × RTT + ½ × BW × RTT • Avg W ≈ ((1.5 + 0.75)/2) × BW × RTT = 9/8 × BW × RTT • S = Avg W / RTT • If no buffer, then W × MSS = capacity – S = 0.75 × Capacity/RTT • What is the ideal sending rate? – (2) S = Capacity/RTT • What is the ideal buffering?

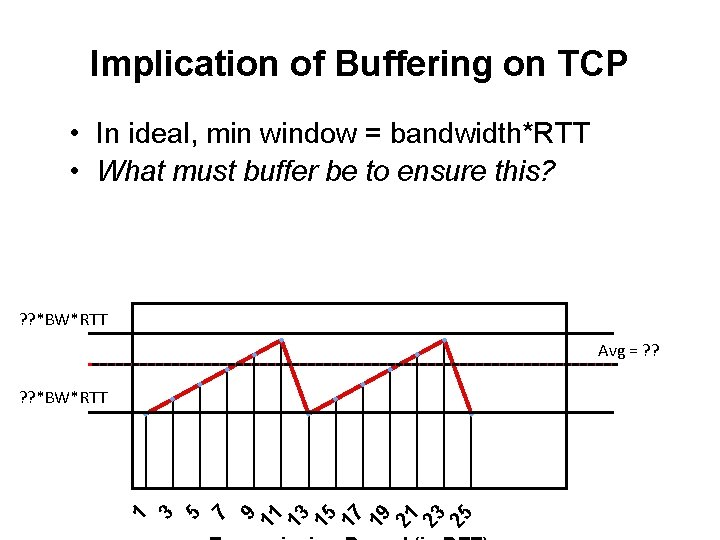

Implication of Buffering on TCP • Ideally, min window = bandwidth × RTT • What must the buffer be to ensure this?

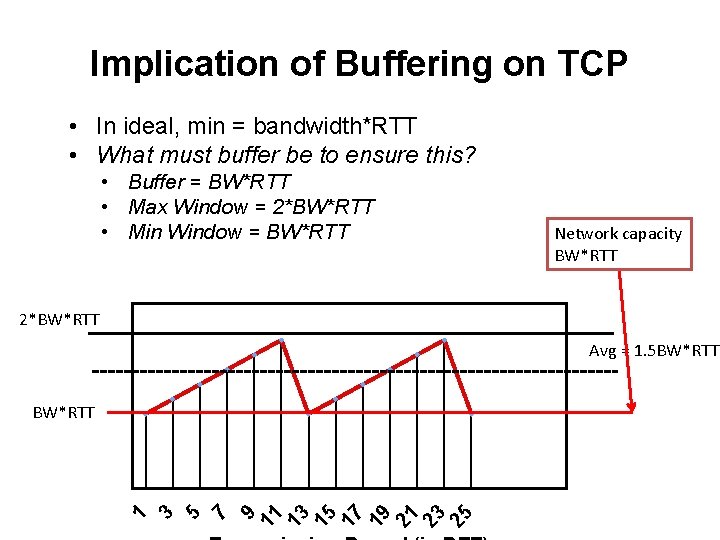

Implication of Buffering on TCP • Ideally, min window = bandwidth × RTT • What must the buffer be to ensure this? • Buffer = BW × RTT • Max window = 2 × BW × RTT • Min window = BW × RTT

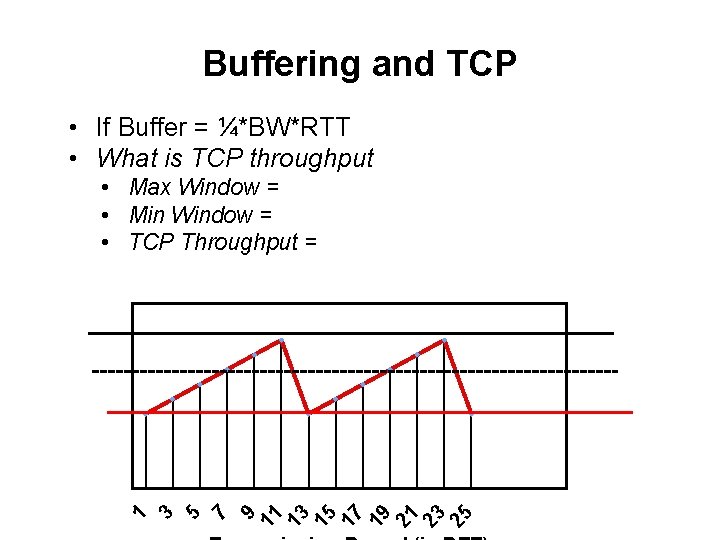

Buffering and TCP • If buffer = ¼ × BW × RTT • What is the TCP throughput? • Max window = ? • Min window = ? • TCP throughput = ?

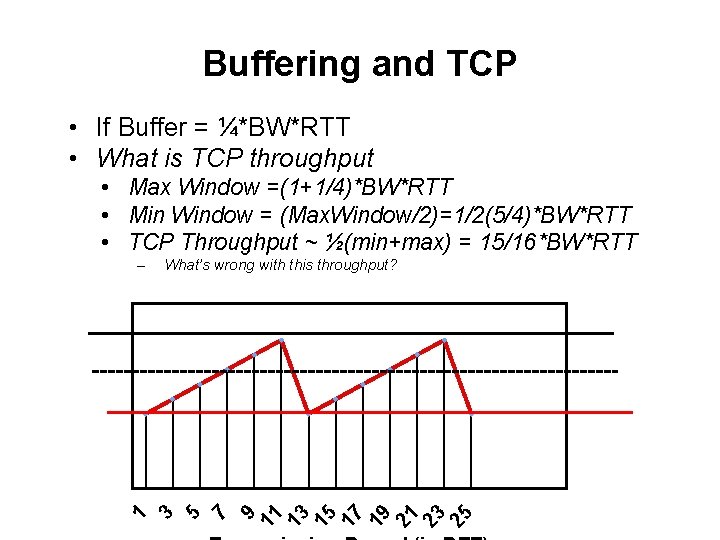

Buffering and TCP • If buffer = ¼ × BW × RTT • What is the TCP throughput? • Max window = (1 + 1/4) × BW × RTT • Min window = (max window / 2) = ½ × (5/4) × BW × RTT • TCP throughput ~ ½ (min + max) = 15/16 × BW × RTT – What’s wrong with this throughput?

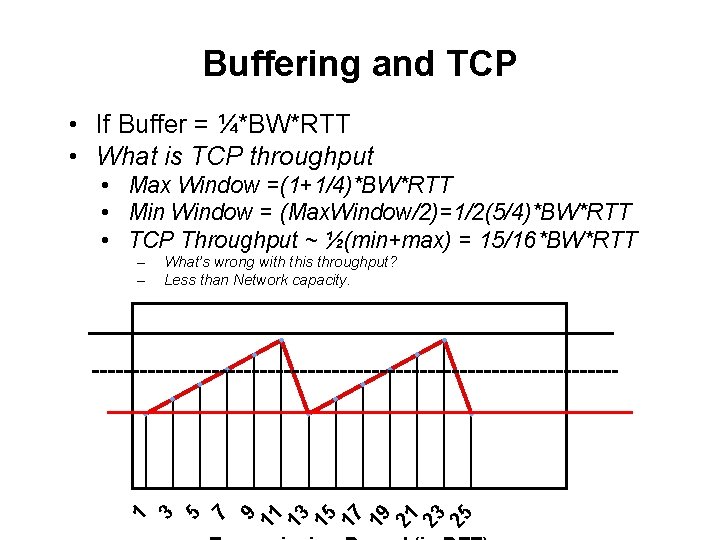

Buffering and TCP • If buffer = ¼ × BW × RTT • What is the TCP throughput? • Max window = (1 + 1/4) × BW × RTT • Min window = (max window / 2) = ½ × (5/4) × BW × RTT • TCP throughput ~ ½ (min + max) = 15/16 × BW × RTT – What’s wrong with this throughput? – Less than network capacity.

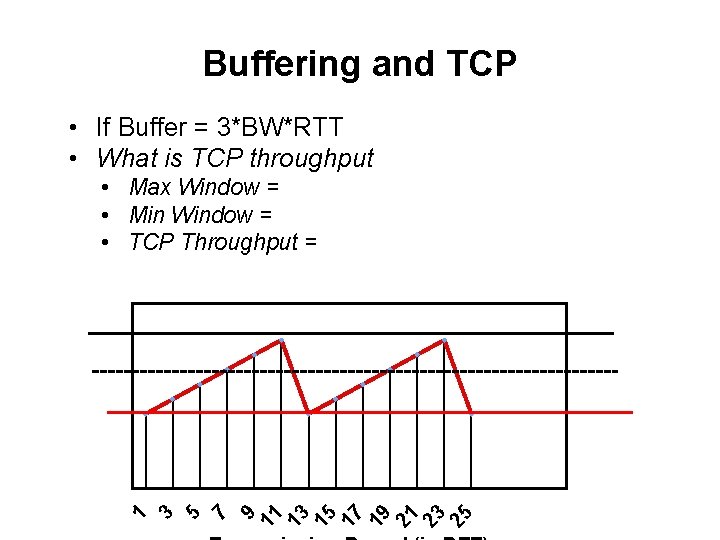

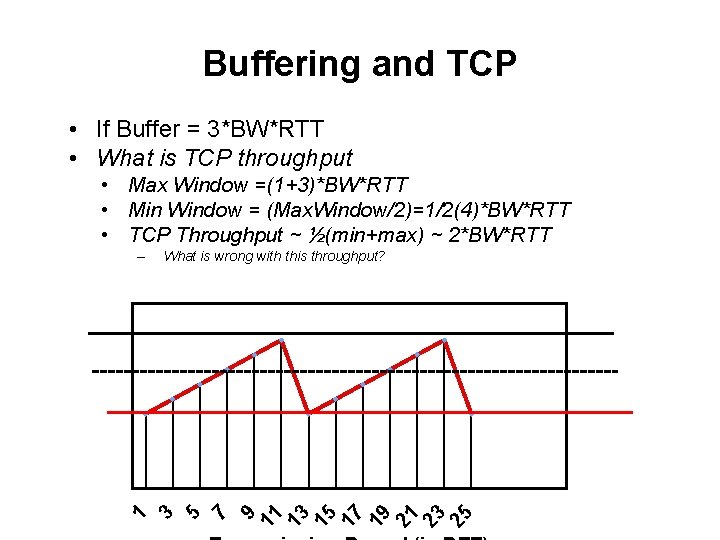

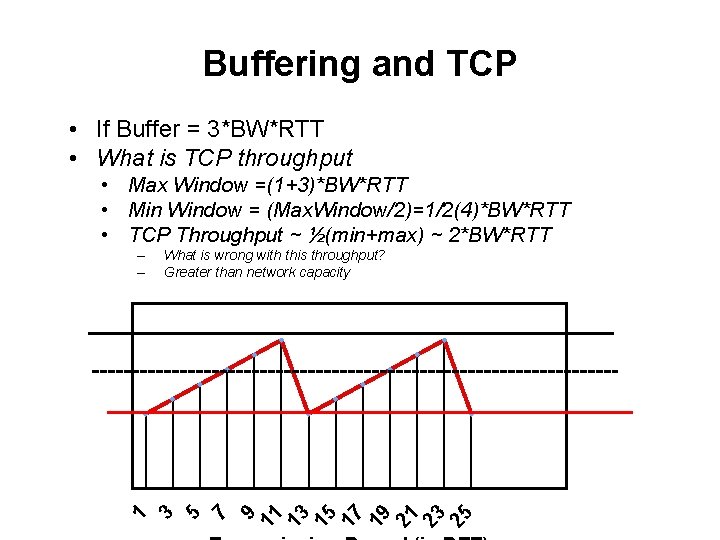

Buffering and TCP • If buffer = 3 × BW × RTT • What is the TCP throughput? • Max window = ? • Min window = ? • TCP throughput = ?

Buffering and TCP • If buffer = 3 × BW × RTT • What is the TCP throughput? • Max window = (1 + 3) × BW × RTT • Min window = (max window / 2) = ½ × 4 × BW × RTT • TCP throughput ~ ½ (min + max) ~ 3 × BW × RTT – What is wrong with this throughput?

Buffering and TCP • If buffer = 3 × BW × RTT • What is the TCP throughput? • Max window = (1 + 3) × BW × RTT • Min window = (max window / 2) = ½ × 4 × BW × RTT • TCP throughput ~ ½ (min + max) ~ 3 × BW × RTT – What is wrong with this throughput? – Greater than network capacity.
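
The buffer exercises all follow the same recipe: the maximum window is the bandwidth-delay product plus the buffer, the minimum is half of that, and the average is ½(min + max). A small sketch, working in units of BW × RTT with exact fractions; the function name `sawtooth_windows` is hypothetical.

```python
from fractions import Fraction


def sawtooth_windows(buffer_fraction):
    """Return (max, min, average) window in units of BW*RTT.

    buffer_fraction is the buffer size as a fraction of BW*RTT.
    """
    b = Fraction(buffer_fraction)
    max_w = 1 + b            # pipe (BW*RTT) plus the buffer
    min_w = max_w / 2        # multiplicative decrease halves the window
    avg_w = (max_w + min_w) / 2
    return max_w, min_w, avg_w


for b in (Fraction(0), Fraction(1, 4), Fraction(1, 2), Fraction(1), Fraction(3)):
    max_w, min_w, avg_w = sawtooth_windows(b)
    # The link stays busy only if the window never drops below BW*RTT.
    busy = "yes" if min_w >= 1 else "no"
    print(f"buffer={b}: max={max_w}, min={min_w}, avg={avg_w}, link always full: {busy}")
```

A buffer of exactly BW × RTT is the smallest one for which the minimum window still fills the pipe. Anything larger only adds queueing delay, since the link cannot deliver more than its capacity.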

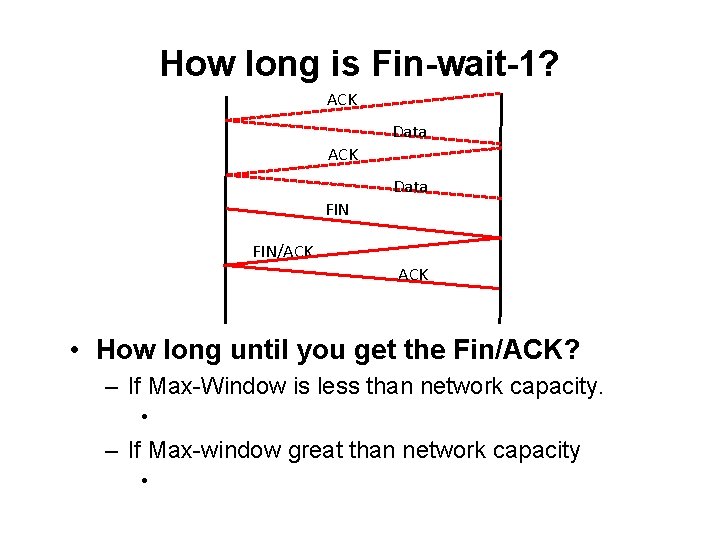

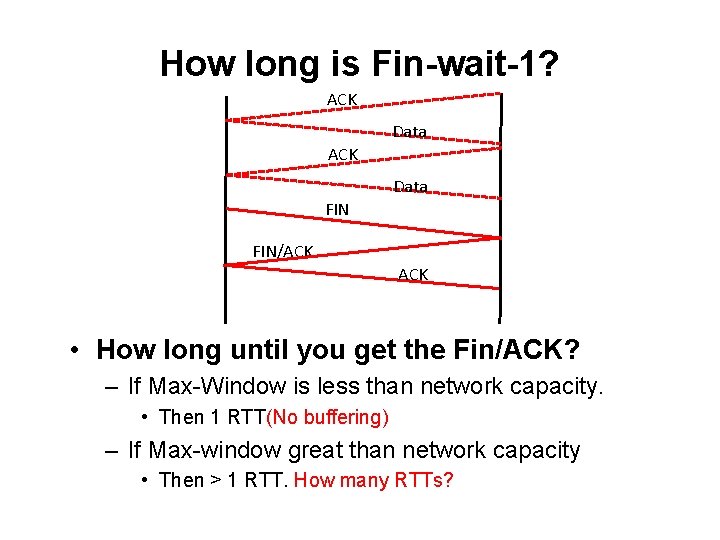

How long is Fin-wait-1? • How long until you get the FIN/ACK? – If max window is less than network capacity: ? – If max window is greater than network capacity: ? (figure: ACK, Data, FIN/ACK)

How long is Fin-wait-1? • How long until you get the FIN/ACK? – If max window is less than network capacity: • Then 1 RTT (no buffering) – If max window is greater than network capacity: • Then > 1 RTT. How many RTTs? (figure: ACK, Data, FIN/ACK)

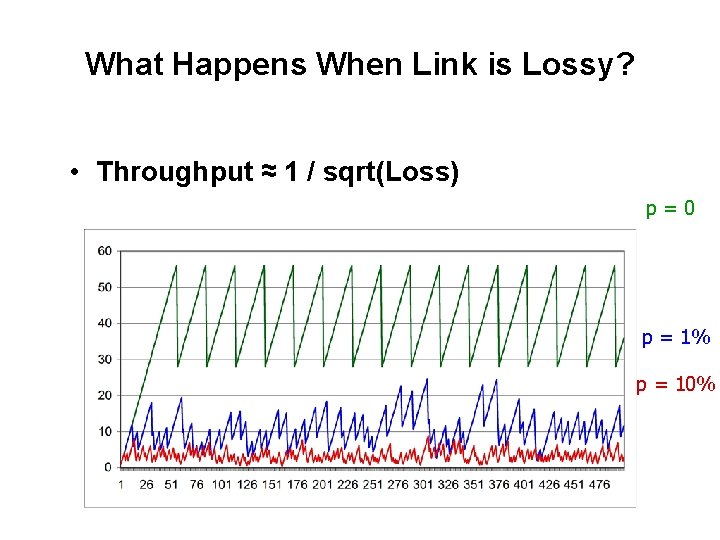

What Happens When Link is Lossy? • Throughput ≈ 1 / sqrt(Loss) (figure: throughput for loss rates p = 0, p = 1%, p = 10%)

Assumptions Made by TCP • TCP works extremely well when its assumptions are valid • Loss == congestion – Loss only happens when: – Aggregate sending Rate > capacity • All flows in the network have the same: – RTT – MSS – Same loss rates • Everyone Plays Nicely – Everyone implements TCP correctly

What can we do about it? • Two types of losses: congestion and corruption • One option: mask corruption losses from TCP – Retransmissions at the link layer – E.g., Snoop TCP: intercept duplicate acknowledgments, retransmit locally, filter them from the sender • Another option: – Network tells the sender about the cause of the drop – Requires modification to the TCP endpoints

Congestion Avoidance • TCP creates congestion to then back off – Queues at bottleneck link are often full: increased delay – Sawtooth pattern: jitter • Alternative strategy – Predict when congestion is about to happen – Reduce rate early • Two approaches – Host centric: TCP Vegas – Router-centric: RED, ECN, DECBit, DCTCP

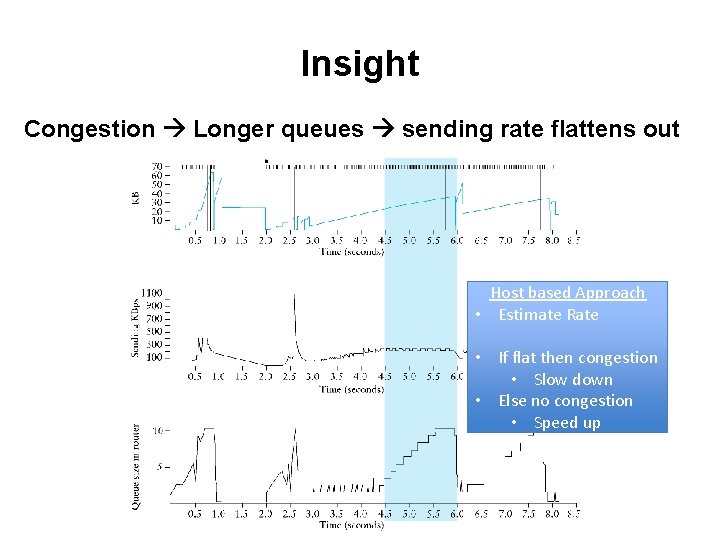

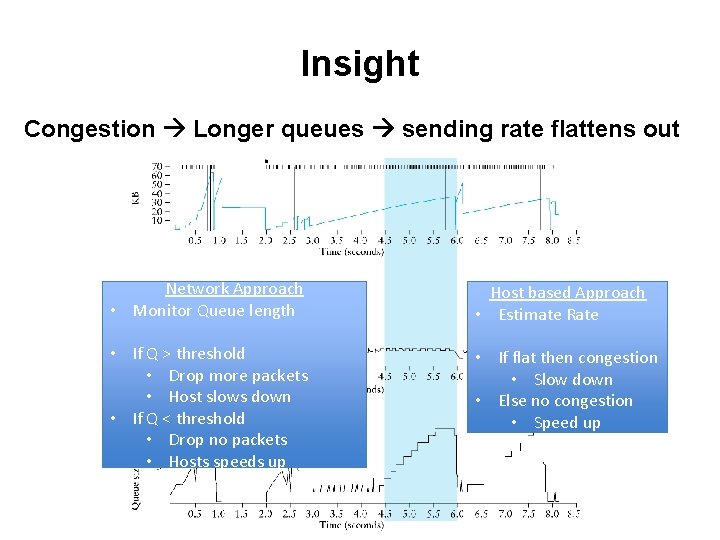

Insight: congestion → longer queues → sending rate flattens out. Host-based approach • Estimate rate • If flat, then congestion: slow down • Else, no congestion: speed up

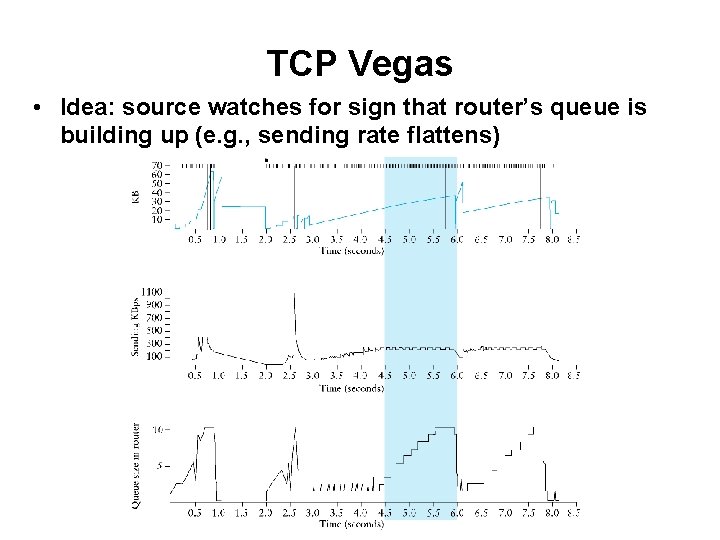

TCP Vegas • Idea: source watches for signs that the router’s queue is building up (e.g., sending rate flattens)

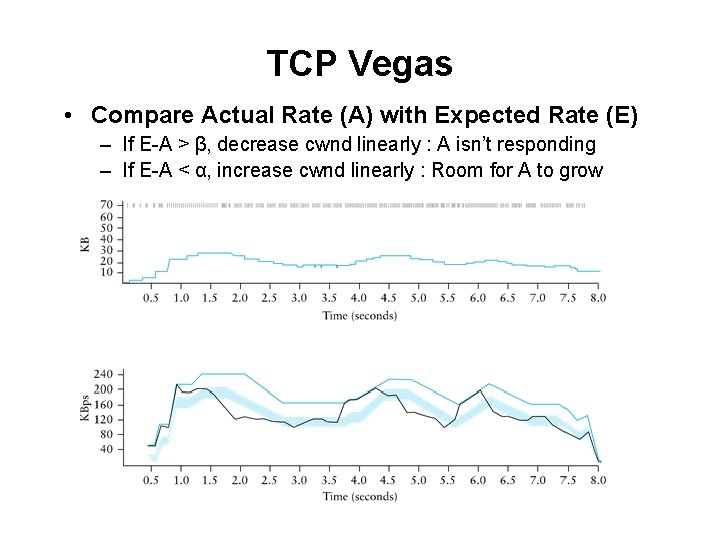

TCP Vegas • Compare Actual Rate (A) with Expected Rate (E) – If E-A > β, decrease cwnd linearly : A isn’t responding – If E-A < α, increase cwnd linearly : Room for A to grow
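
A minimal sketch of the Vegas comparison. Two details are assumptions here, since the slide does not define them: the expected rate is taken as cwnd divided by the smallest RTT seen so far (`base_rtt`), and the actual rate as cwnd divided by the latest RTT. The window is in segments and α, β are in segments per second; the function name `vegas_adjust` is hypothetical.

```python
def vegas_adjust(cwnd, base_rtt, rtt, alpha, beta):
    """Return the new cwnd (segments) after one Vegas comparison."""
    expected = cwnd / base_rtt   # rate if no queueing were happening
    actual = cwnd / rtt          # rate actually achieved this round
    diff = expected - actual
    if diff > beta:
        return cwnd - 1          # queue is building: back off linearly
    if diff < alpha:
        return cwnd + 1          # little queueing: room to grow
    return cwnd                  # between the thresholds: hold steady


print(vegas_adjust(20, base_rtt=0.10, rtt=0.10, alpha=10, beta=30))  # no queueing
print(vegas_adjust(20, base_rtt=0.10, rtt=0.11, alpha=10, beta=30))  # mild queueing
print(vegas_adjust(20, base_rtt=0.10, rtt=0.20, alpha=10, beta=30))  # heavy queueing
```

The sender reacts to rising delay before any packet is dropped, which is why it yields to a loss-based sender sharing the same queue.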

Vegas • Shorter router queues • Lower jitter • Problem: – Doesn’t compete well with Reno. Why? – Reacts earlier, Reno is more aggressive, ends up with higher bandwidth…

Insight: congestion → longer queues → sending rate flattens out. Network approach • Monitor queue length • If Q > threshold: drop more packets, hosts slow down • If Q < threshold: drop no packets, hosts speed up. Host-based approach • Estimate rate • If flat, then congestion: slow down • Else, no congestion: speed up

Help from the network • What if routers could tell TCP that congestion is happening? – Congestion causes queues to grow: rate mismatch • TCP responds to drops • Idea: Random Early Drop (RED) – Rather than wait for queue to become full, drop packet with some probability that increases with queue length – TCP will react by reducing cwnd – Could also mark instead of dropping: ECN

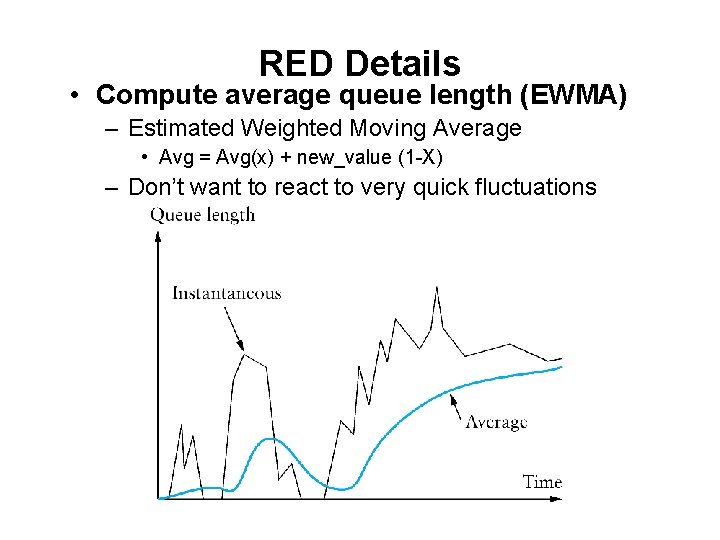

RED Details • Compute average queue length (EWMA) – Exponentially Weighted Moving Average • $Avg = x \cdot Avg + (1 - x) \cdot new\_value$ – Don’t want to react to very quick fluctuations

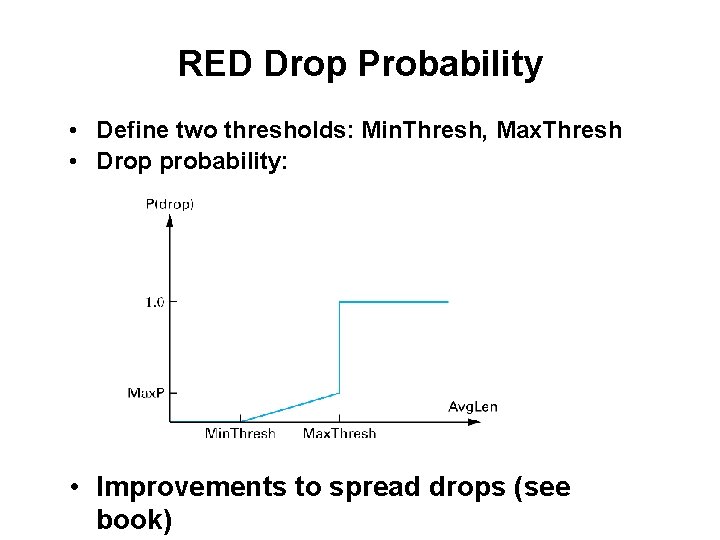

RED Drop Probability • Define two thresholds: MinThresh, MaxThresh • Drop probability: a function of the average queue length relative to the two thresholds (see figure) • Improvements to spread drops (see book)
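
A sketch of how the averaged queue length and the two thresholds could be combined. The linear ramp between the thresholds and the `max_p` parameter follow the usual textbook description of RED and are assumptions here, since the slide's figure is not reproduced in the text. The function names are hypothetical, and the weight `x` is the weight on the old average.

```python
def ewma(avg, sample, x):
    """Exponentially weighted moving average: x weights the old average."""
    return x * avg + (1 - x) * sample


def red_drop_probability(avg_queue, min_thresh, max_thresh, max_p):
    """Drop probability for an arriving packet given the average queue length."""
    if avg_queue < min_thresh:
        return 0.0                      # short queue: never drop
    if avg_queue >= max_thresh:
        return 1.0                      # long queue: always drop
    # In between: ramp linearly from 0 up to max_p (assumed textbook form).
    return max_p * (avg_queue - min_thresh) / (max_thresh - min_thresh)


avg = 10.0
for instantaneous in (12, 40, 40, 40):  # a burst arrives
    avg = ewma(avg, instantaneous, x=0.9)
    p = red_drop_probability(avg, min_thresh=10, max_thresh=30, max_p=0.1)
    print(f"queue={instantaneous} avg={avg:.2f} drop probability={p:.3f}")
```

Because the average moves slowly, a short burst barely raises the drop probability, which matches the goal of not reacting to very quick fluctuations.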

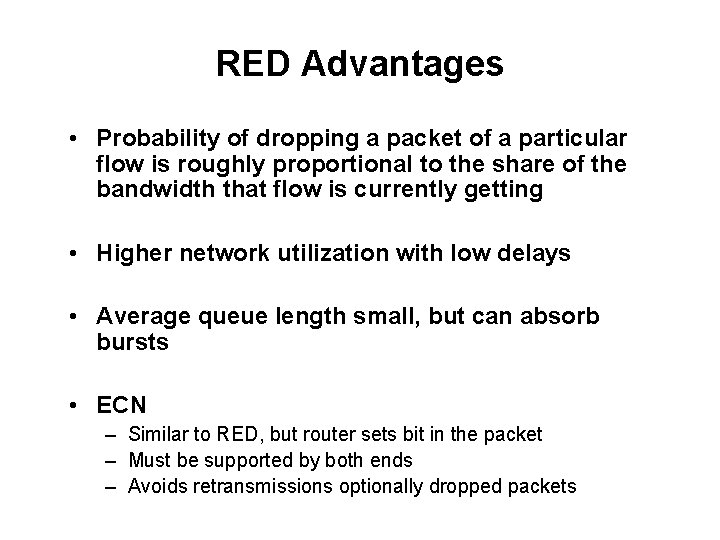

RED Advantages • Probability of dropping a packet of a particular flow is roughly proportional to the share of the bandwidth that flow is currently getting • Higher network utilization with low delays • Average queue length small, but can absorb bursts • ECN – Similar to RED, but router sets a bit in the packet instead of dropping it – Must be supported by both ends – Avoids retransmitting packets that would otherwise have been dropped

More help from the network • Problem: still vulnerable to malicious flows! – RED will drop packets from large flows preferentially, but they don’t have to respond appropriately • Idea: Multiple Queues (one per flow) – Serve queues in Round-Robin – Nagle (1987) – Good: protects against misbehaving flows – Disadvantage? Flows with larger packets get higher bandwidth

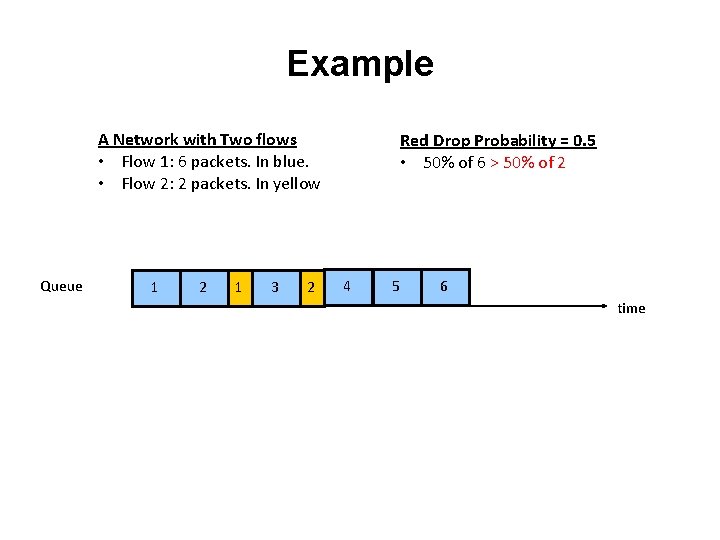

Example: A Network with Two Flows • Flow 1: 6 packets (blue) • Flow 2: 2 packets (yellow) • RED drop probability = 0.5 • 50% of 6 > 50% of 2 (figure: one shared queue over time holding packets 1–6 of Flow 1 interleaved with packets 1–2 of Flow 2)

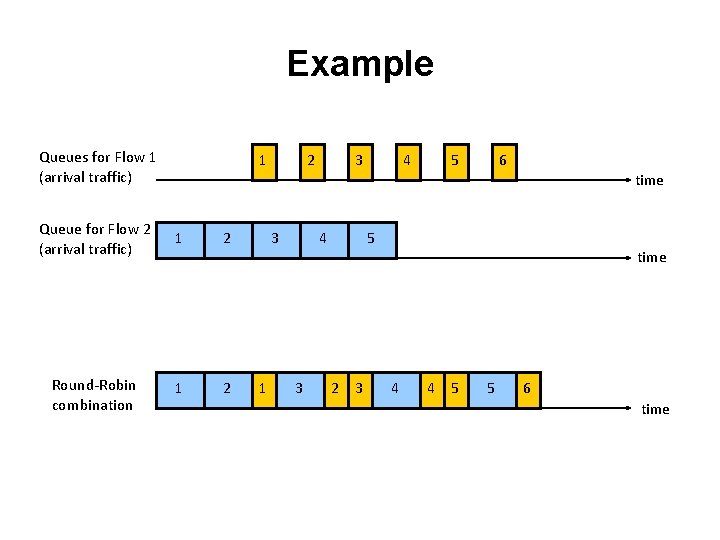

Example (figure: per-flow queues of arrival traffic over time, Flow 1 with packets 1–6 and Flow 2 with packets 1–2, and the resulting round-robin combination on the output link)
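
The round-robin idea can be sketched as follows, under the simplifying assumption that all packets are already queued when service starts (the figure also shows arrival times, which this sketch ignores). The function name `round_robin` and the flow labels are hypothetical.

```python
from collections import deque


def round_robin(flows):
    """Serve one packet per non-empty flow queue in turn.

    flows maps a flow name to its list of queued packet ids.
    Returns the service order as (flow, packet) pairs.
    """
    queues = {name: deque(packets) for name, packets in flows.items()}
    order = []
    while any(queues.values()):          # stop once every queue is empty
        for name, queue in queues.items():
            if queue:
                order.append((name, queue.popleft()))
    return order


order = round_robin({"flow1": [1, 2, 3, 4, 5, 6], "flow2": [1, 2]})
print(order)
```

Flow 2 gets both of its packets out within the first two rounds, no matter how many packets Flow 1 has queued. That is the isolation property, and it also shows the stated drawback: turns are counted in packets, so a flow with larger packets gets more bytes per turn.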

Big Picture • Network Queuing doesn’t eliminate congestion: just manages it • You need both, ideally: – End-host congestion control to adapt – Router congestion control to provide isolation and ensure sharing

What happens if not everyone cooperates? • Possible ways to cheat – Increasing cwnd faster – Large initial cwnd – Opening many connections – ACK Division Attack

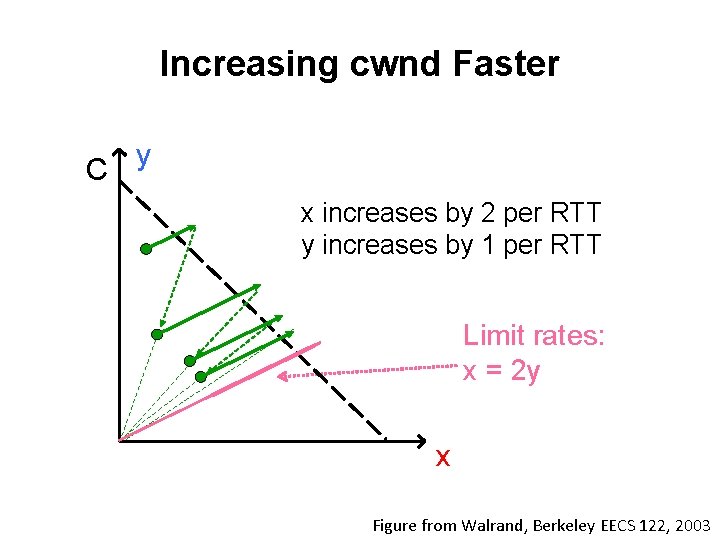

Increasing cwnd Faster • x increases by 2 per RTT • y increases by 1 per RTT • Limit rates: x = 2y (Figure from Walrand, Berkeley EECS 122, 2003: flows x and y sharing a link of capacity C)

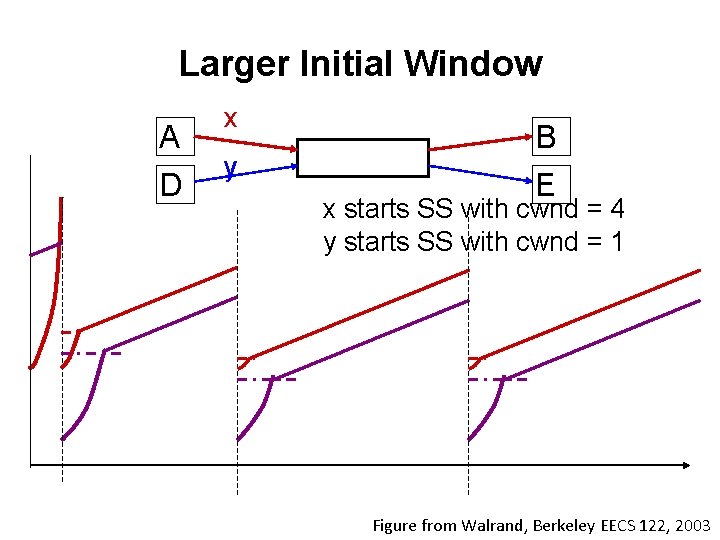

Larger Initial Window • x starts SS with cwnd = 4 • y starts SS with cwnd = 1 (Figure from Walrand, Berkeley EECS 122, 2003: hosts A, B, D, E with flows x and y)

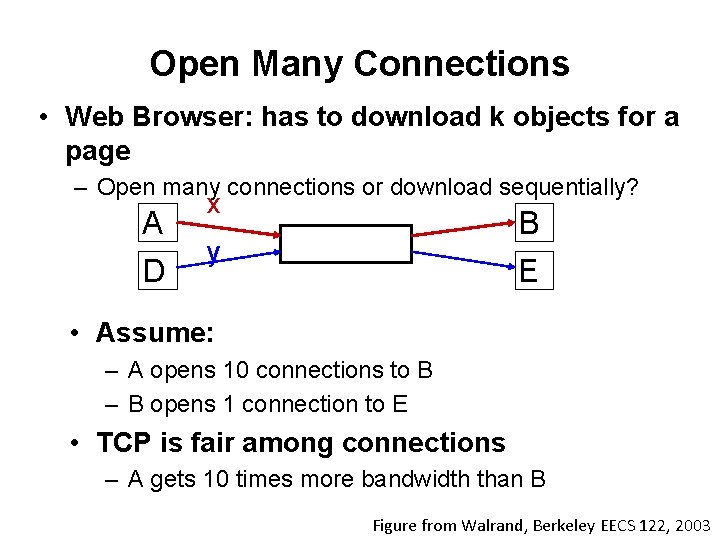

Open Many Connections • Web browser: has to download k objects for a page – Open many connections or download sequentially? • Assume: – A opens 10 connections to B – B opens 1 connection to E • TCP is fair among connections – A gets 10 times more bandwidth than B (Figure from Walrand, Berkeley EECS 122, 2003: hosts A, B, D, E with flows x and y)

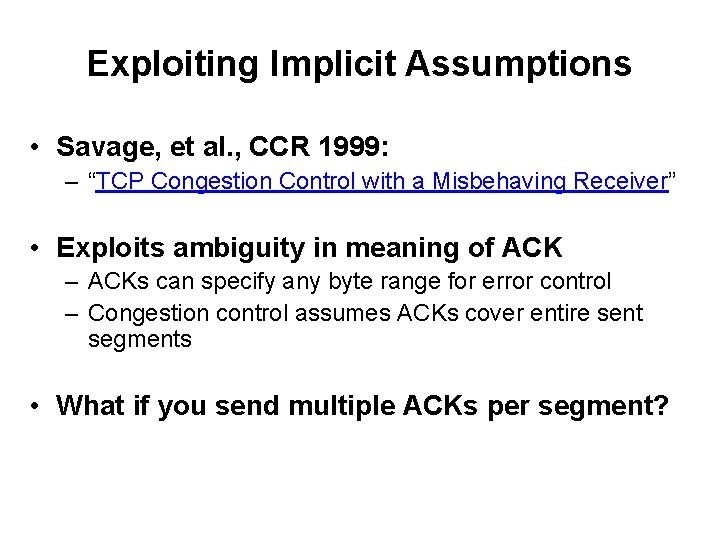

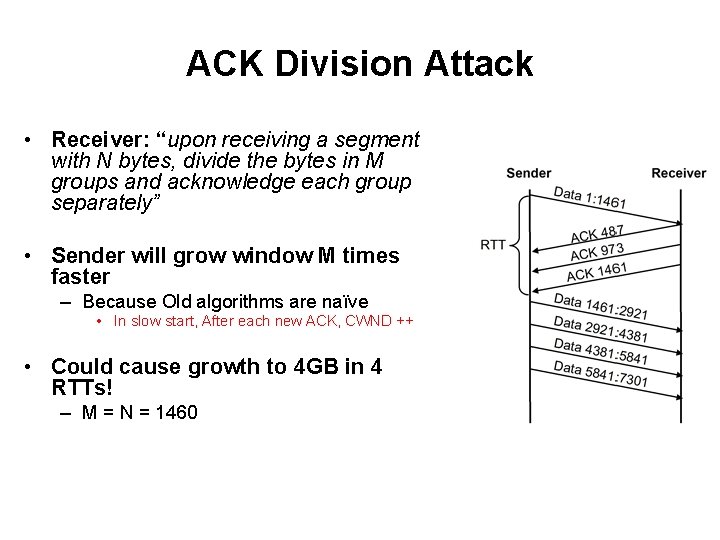

Exploiting Implicit Assumptions • Savage et al., CCR 1999: – “TCP Congestion Control with a Misbehaving Receiver” • Exploits ambiguity in the meaning of ACK – ACKs can specify any byte range for error control – Congestion control assumes ACKs cover entire sent segments • What if you send multiple ACKs per segment?

What happens if not everyone cooperates? • Possible ways to cheat – Increasing cwnd faster – Large initial cwnd – Opening many connections – ACK Division Attack • Evaluating incentives and trade-offs of cheating

ACK Division Attack • Receiver: “upon receiving a segment with N bytes, divide the bytes into M groups and acknowledge each group separately” • Sender will grow its window M times faster – Because old algorithms are naïve • In slow start, after each new ACK, cwnd++ • Could cause growth to 4 GB in 4 RTTs! – M = N = 1460

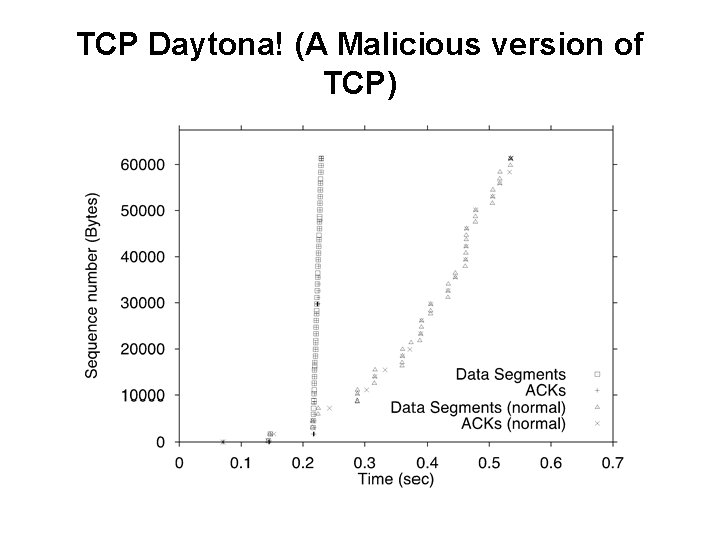

TCP Daytona! (A Malicious version of TCP)

Defense • Old TCP – In slow start, cwnd++ per ACK • Appropriate Byte Counting – [RFC 3465 (2003), RFC 5681 (2009)] – In slow start, cwnd += min(N, MSS), where N is the number of newly acknowledged bytes in the received ACK
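
The effect of the attack and of the defense can be compared with a small simulation of one slow-start round. This is a sketch with hypothetical names: `acks_per_segment` plays the role of M, each ACK is assumed to cover an equal share of the segment, and no losses occur.

```python
def slow_start_round(cwnd, mss, acks_per_segment, byte_counting):
    """Return cwnd (bytes) after one loss-free slow-start round."""
    segments = cwnd // mss                    # segments sent this round
    bytes_per_ack = mss // acks_per_segment   # each ACK covers N/M bytes
    for _ in range(segments * acks_per_segment):
        if byte_counting:
            # Appropriate Byte Counting: grow by the bytes actually ACKed.
            cwnd += min(bytes_per_ack, mss)
        else:
            # Naive rule: every ACK is worth a full MSS.
            cwnd += mss
    return cwnd


MSS = 1460
naive = slow_start_round(MSS, MSS, acks_per_segment=4, byte_counting=False)
defended = slow_start_round(MSS, MSS, acks_per_segment=4, byte_counting=True)
print(naive // MSS, defended // MSS)
```

With the naive rule the window is multiplied by 1 + M each round instead of by 2. With byte counting, splitting a segment into M ACKs adds up to the same growth as a single honest ACK.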

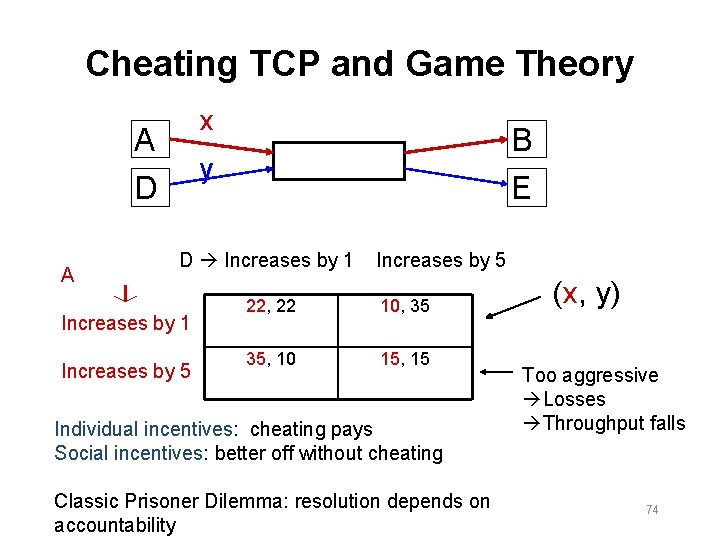

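Cheating TCP and Game Theory • Payoffs (x, y) for the two flows (figure: hosts A, B, D, E with flows x and y): – Both increase by 1: (22, 22) – x increases by 1, y increases by 5: (10, 35) – x increases by 5, y increases by 1: (35, 10) – Both increase by 5: (15, 15) • Individual incentives: cheating pays • Social incentives: better off without cheating • Classic Prisoner’s Dilemma: resolution depends on accountability • Too aggressive → losses → throughput falls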

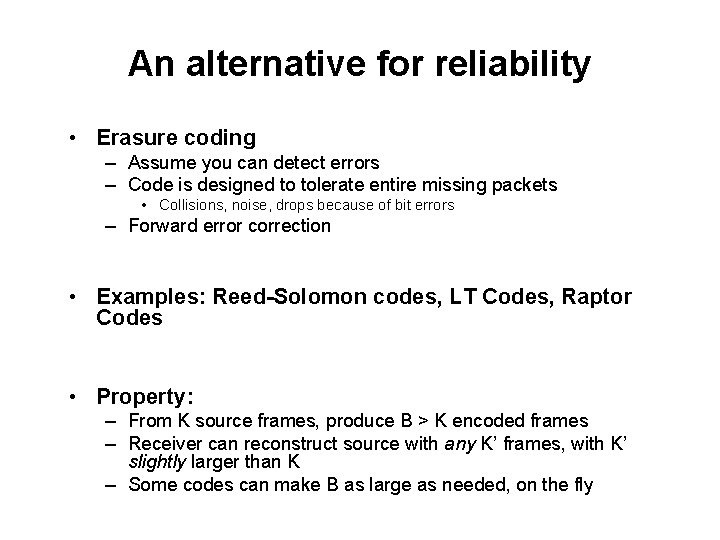

An alternative for reliability • Erasure coding – Assume you can detect errors – Code is designed to tolerate entire missing packets • Collisions, noise, drops because of bit errors – Forward error correction • Examples: Reed-Solomon codes, LT Codes, Raptor Codes • Property: – From K source frames, produce B > K encoded frames – Receiver can reconstruct source with any K’ frames, with K’ slightly larger than K – Some codes can make B as large as needed, on the fly

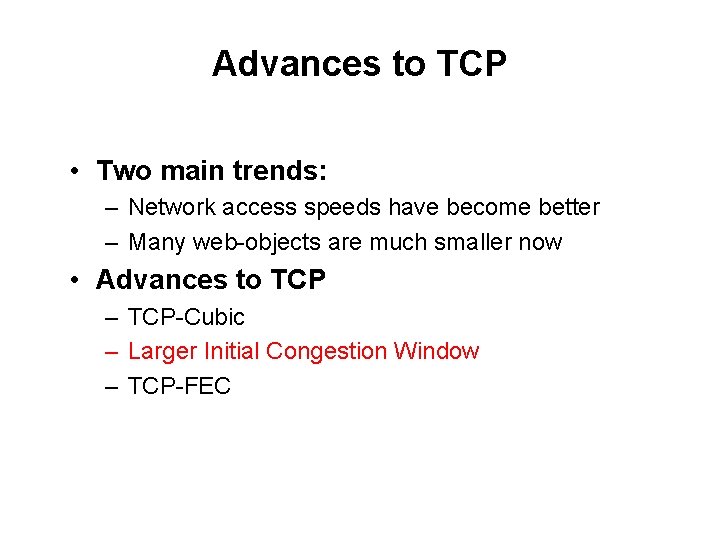

Advances to TCP • Two main trends: – Network access speeds have become better – Many web-objects are much smaller now • Advances to TCP – TCP-Cubic – Larger Initial Congestion Window – TCP-FEC

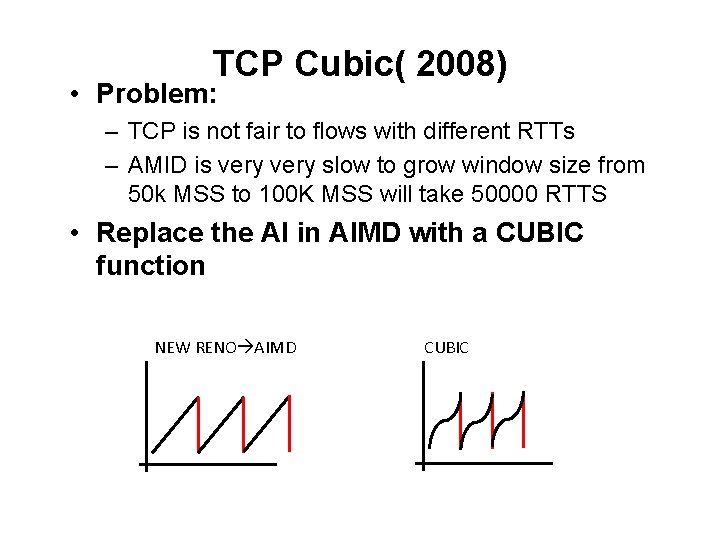

TCP Cubic (2008) • Problem: – TCP is not fair to flows with different RTTs – AIMD is very slow to grow the window: going from 50K MSS to 100K MSS takes 50,000 RTTs • Replace the AI in AIMD with a CUBIC function (figure: New Reno AIMD versus CUBIC window growth)
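
A sketch of the cubic growth curve next to the AIMD arithmetic from the slide. The window function and the constants C = 0.4 and β = 0.7 are taken from the CUBIC specification (RFC 8312), not from the slide, so treat them as assumptions. Time is in seconds since the last loss and windows are in MSS; the function names are hypothetical.

```python
def cubic_window(t, w_max, c=0.4, beta=0.7):
    """CUBIC window (in MSS) t seconds after a loss at window w_max."""
    # K is the time at which the curve climbs back to w_max.
    k = (w_max * (1 - beta) / c) ** (1 / 3)
    return c * (t - k) ** 3 + w_max


def aimd_rounds(start_mss, target_mss):
    """RTTs additive increase needs to go from start to target (+1 MSS/RTT)."""
    return target_mss - start_mss


w_max = 100
k = (w_max * 0.3 / 0.4) ** (1 / 3)
print(cubic_window(0, w_max), cubic_window(k, w_max), cubic_window(2 * k, w_max))
print(aimd_rounds(50_000, 100_000))
```

Right after a loss the window restarts at β × w_max, flattens out near w_max, and then probes upward faster and faster. Growth depends on elapsed time rather than on the number of ACKs, which is what reduces the dependence on RTT.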

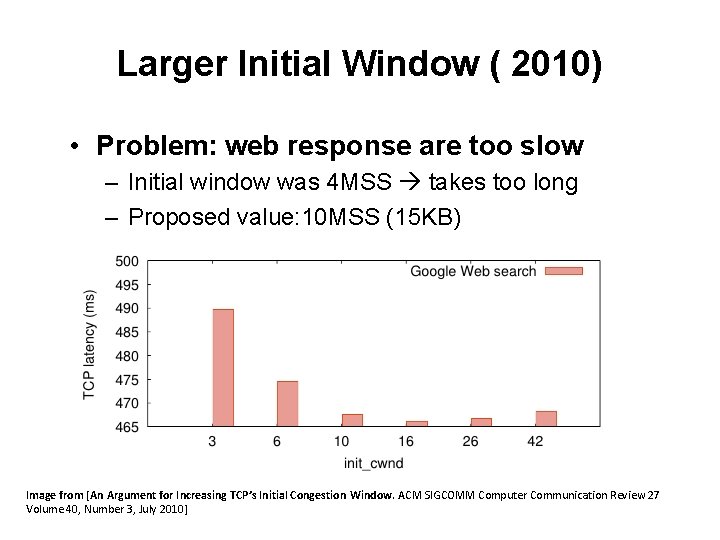

Larger Initial Window (2010) • Problem: web responses are too slow – An initial window of 4 MSS takes too long – Proposed value: 10 MSS (15 KB) Image from [An Argument for Increasing TCP’s Initial Congestion Window. ACM SIGCOMM Computer Communication Review, Volume 40, Number 3, July 2010]

TCP+FEC (2014) • Problem: high probability of dropping one of the last data packets – When this happens you may have a timeout (RTO) – No duplicate ACKs, because there is no more data to generate dup-acks • Important because: – Most objects fit in the initial congestion window • So less than 15 KB – So they can be sent in one RTT – If a timeout occurs, you wait an additional 2 RTTs (1 to learn, 1 to retransmit) • So now response time triples! • Solution: – Use FEC: send N+1 packets (assume only one packet is dropped) – Use the last packet for correction
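
One simple way to build the extra correction packet is a byte-wise XOR of the N data packets. The slide does not say which code is used, so XOR parity is an assumption here, chosen because it can repair exactly one missing packet. The function names are hypothetical and all packets are assumed to have the same length.

```python
def xor_parity(packets):
    """Return the byte-wise XOR of equal-length packets."""
    parity = bytearray(len(packets[0]))
    for packet in packets:
        for i, byte in enumerate(packet):
            parity[i] ^= byte
    return bytes(parity)


def recover(received, parity):
    """Rebuild the single missing packet (marked None) from the rest.

    Returns (index of the missing packet, reconstructed bytes).
    """
    missing = received.index(None)
    present = [p for p in received if p is not None]
    # XOR of the parity with every surviving packet leaves the lost one.
    return missing, xor_parity(present + [parity])


data = [b"abcd", b"efgh", b"ijkl"]
parity = xor_parity(data)                 # the (N+1)-th packet
index, rebuilt = recover([b"abcd", None, b"ijkl"], parity)
print(index, rebuilt)
```

The receiver repairs the loss locally, so the sender avoids the timeout and the retransmission round, at the cost of one extra packet per window.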

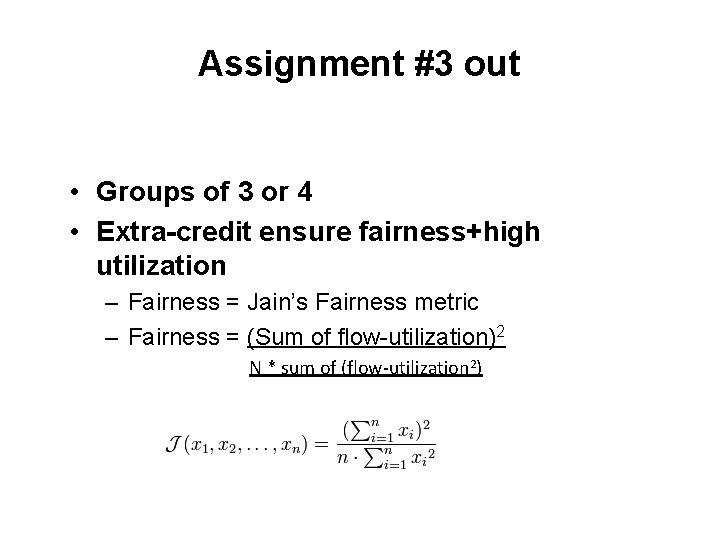

Assignment #3 out • Groups of 3 or 4 • Extra credit: ensure fairness + high utilization – Fairness = Jain’s fairness metric – $Fairness = (\sum_i u_i)^2 / (N \cdot \sum_i u_i^2)$, where $u_i$ is the utilization of flow $i$ and $N$ is the number of flows
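
Jain's fairness metric is easy to compute directly from the per-flow utilizations. A minimal sketch; the function name `jain_fairness` is hypothetical and not part of the assignment code.

```python
def jain_fairness(utilizations):
    """Jain's index: (sum u_i)^2 / (N * sum u_i^2), in the range (0, 1]."""
    n = len(utilizations)
    squares = sum(u * u for u in utilizations)
    if n == 0 or squares == 0:
        raise ValueError("need at least one flow with non-zero utilization")
    total = sum(utilizations)
    return total * total / (n * squares)


print(jain_fairness([1, 1, 1, 1]))   # equal shares
print(jain_fairness([1, 0, 0, 0]))   # one flow takes everything
print(jain_fairness([4, 2, 1, 1]))   # somewhere in between
```

The index is 1 when all flows get the same share and drops to 1/N when a single flow takes the whole link.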

Next Time • Move into the application layer • DNS, Web, Security, and more… • Next week Guest lectures – DNS + CDN • Bruce Maggs – Co-Founder of Akamai (largest public CDN)
