CSC 3050 Computer Architecture: Memory and Cache

- Slides: 75

CSC 3050 – Computer Architecture: Memory and Cache

Prof. Yeh-Ching Chung, School of Science and Engineering, Chinese University of Hong Kong, Shenzhen

National Tsing Hua University ® copyright OIA

Outline

- Memory hierarchy
- The basics of caches
  - Direct-mapped cache
  - Address sub-division
  - Cache hit and miss
  - Memory support
- Measuring cache performance
- Improving cache performance
  - Set associative cache
  - Multiple level cache

Memory Technology

- Random access
  - Access time is the same for all locations
  - SRAM (Static Random Access Memory)
    - Low density, high power, expensive, fast
    - Static: contents are retained as long as power is supplied (no refresh needed)
    - Address is not divided
    - Used for caches
  - DRAM (Dynamic Random Access Memory)
    - High density, low power, cheap, slow
    - Dynamic: must be refreshed regularly
    - Address is sent in two halves (memory organized as a 2D matrix)
      - RAS/CAS (Row/Column Access Strobe)
    - Used for main memory
- Magnetic disk

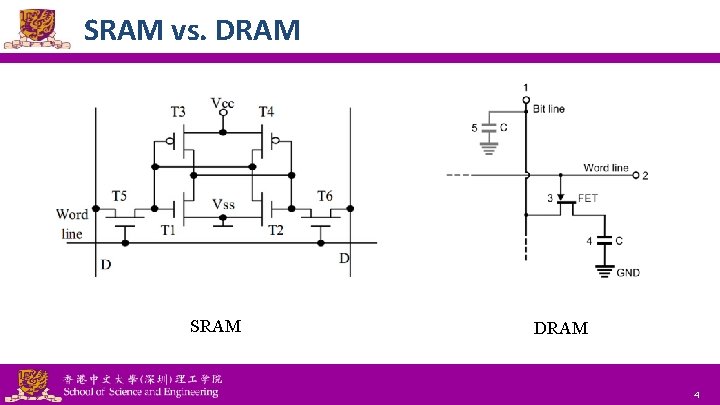

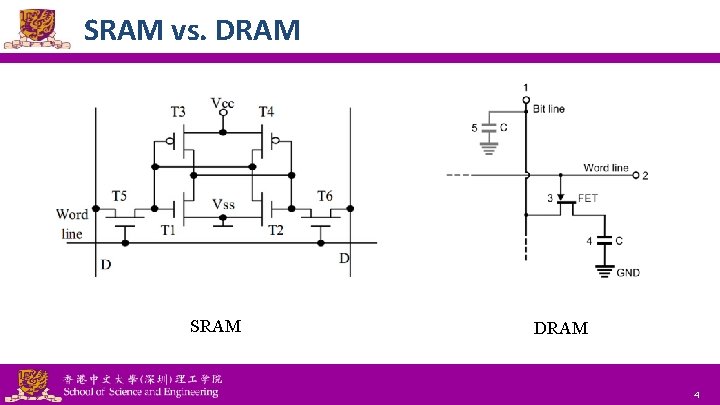

SRAM vs. DRAM

(Figure panels: SRAM, DRAM)

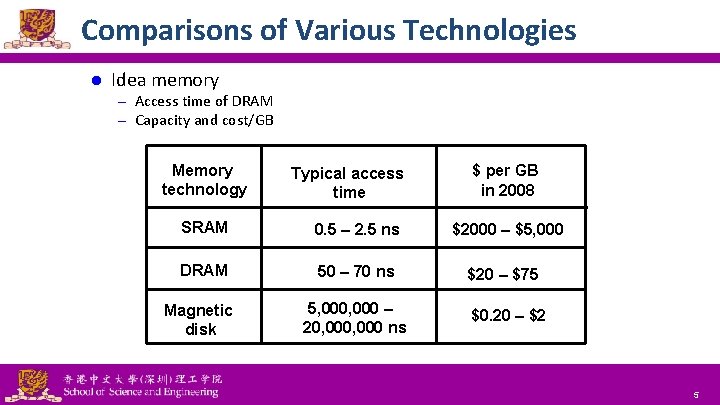

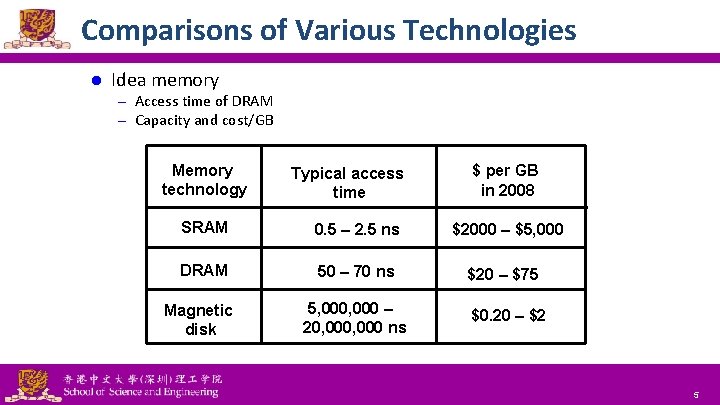

Comparisons of Various Technologies

- Ideal memory
  - Access time of SRAM
  - Capacity and cost/GB of disk

| Memory technology | Typical access time | $ per GB in 2008 |
|---|---|---|
| SRAM | 0.5–2.5 ns | $2,000–$5,000 |
| DRAM | 50–70 ns | $20–$75 |
| Magnetic disk | 5,000,000–20,000,000 ns | $0.20–$2 |

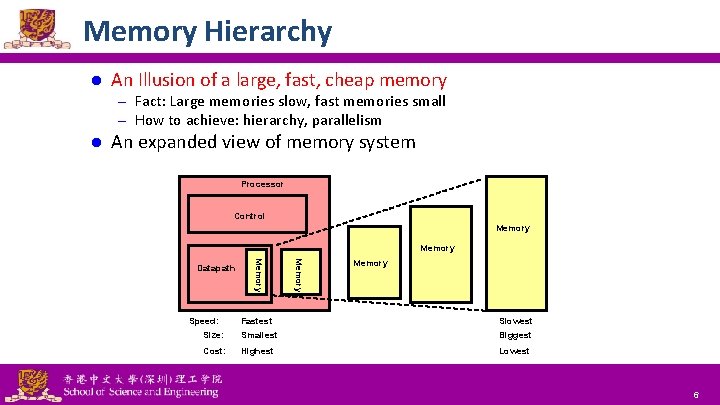

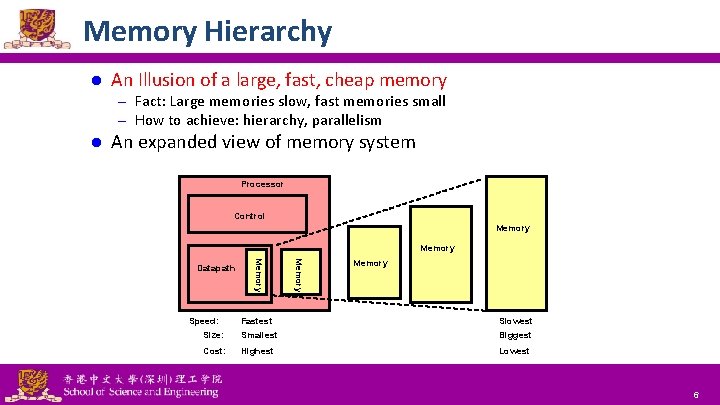

Memory Hierarchy

- An illusion of a large, fast, cheap memory
  - Fact: large memories are slow, fast memories are small
  - How to achieve it: hierarchy, parallelism
- An expanded view of the memory system (figure: processor with control and datapath, followed by successive levels of memory)
  - Speed: fastest (nearest the processor) to slowest
  - Size: smallest to biggest
  - Cost: highest to lowest

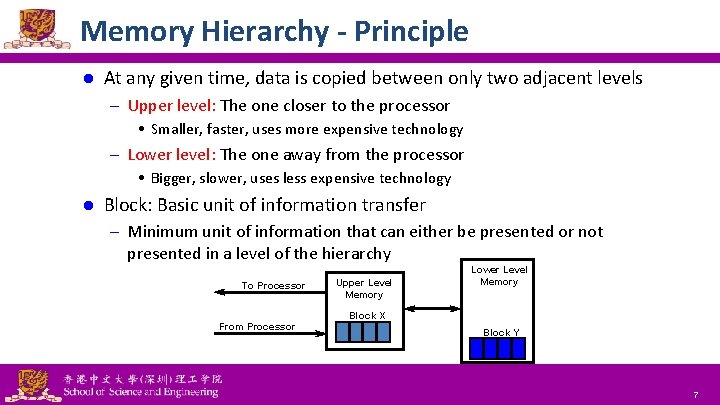

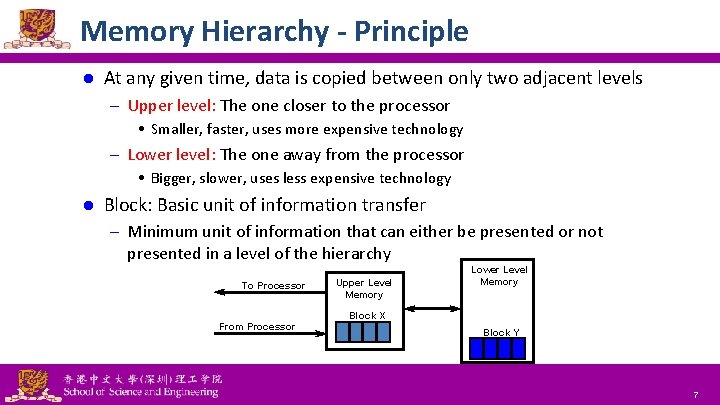

Memory Hierarchy - Principle

- At any given time, data is copied between only two adjacent levels
  - Upper level: the one closer to the processor
    - Smaller, faster, uses more expensive technology
  - Lower level: the one farther from the processor
    - Bigger, slower, uses less expensive technology
- Block: basic unit of information transfer
  - Minimum unit of information that can be either present or not present in a level of the hierarchy

(Figure: Block X moves between the processor and upper-level memory; Block Y moves between upper-level and lower-level memory)

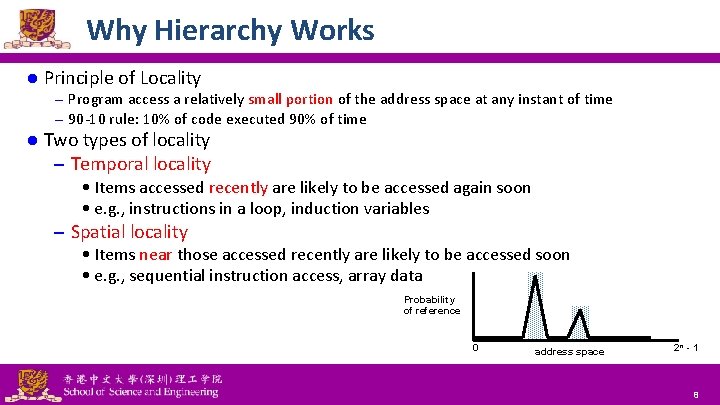

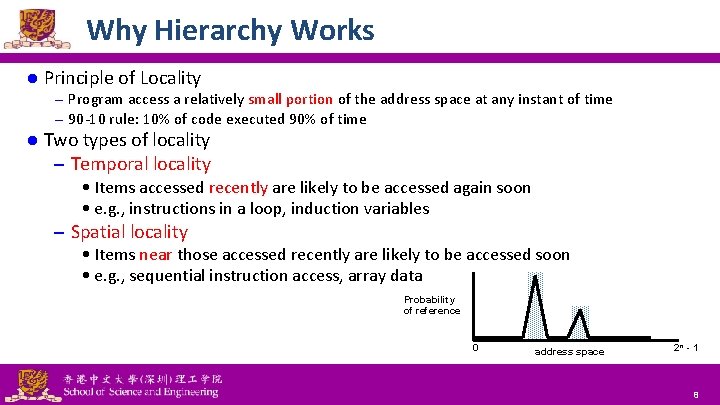

Why Hierarchy Works

- Principle of locality
  - Programs access a relatively small portion of the address space at any instant of time
  - 90-10 rule: 10% of the code is executed 90% of the time
- Two types of locality
  - Temporal locality
    - Items accessed recently are likely to be accessed again soon
    - e.g., instructions in a loop, induction variables
  - Spatial locality
    - Items near those accessed recently are likely to be accessed soon
    - e.g., sequential instruction access, array data

(Figure: probability of reference vs. address space, from 0 to 2^n - 1)

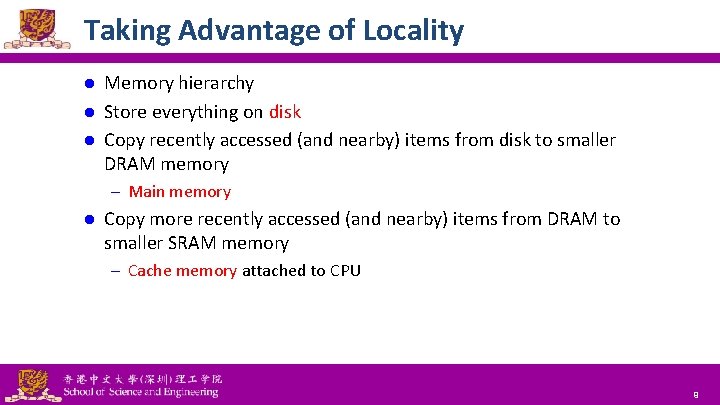

Taking Advantage of Locality

- Memory hierarchy
- Store everything on disk
- Copy recently accessed (and nearby) items from disk to smaller DRAM memory
  - Main memory
- Copy more recently accessed (and nearby) items from DRAM to smaller SRAM memory
  - Cache memory attached to CPU

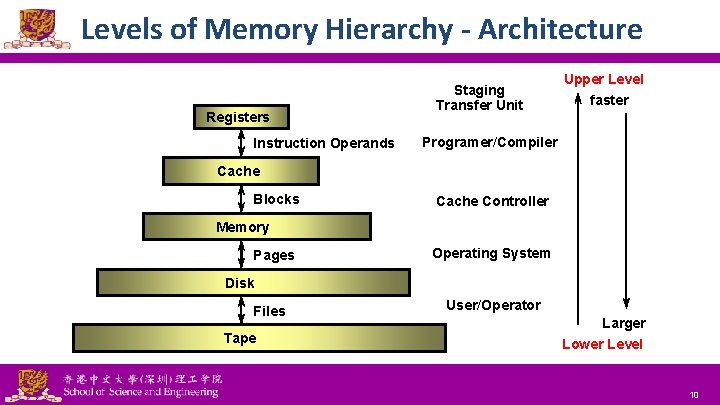

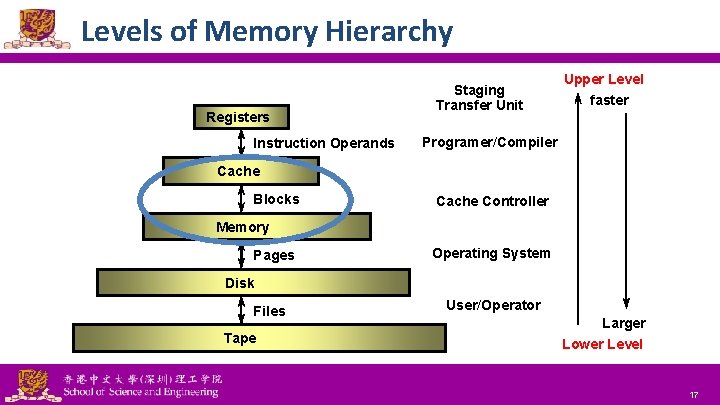

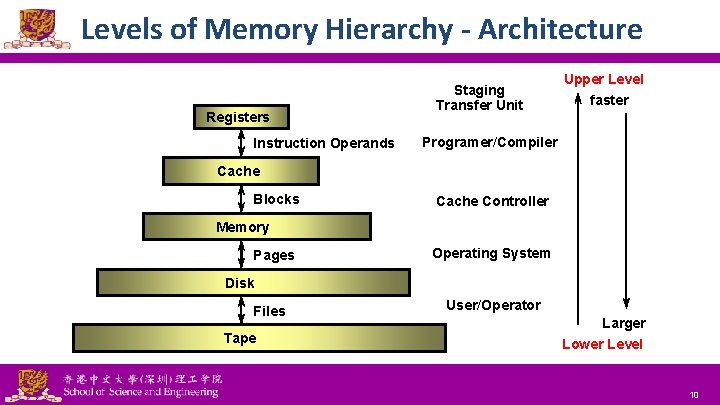

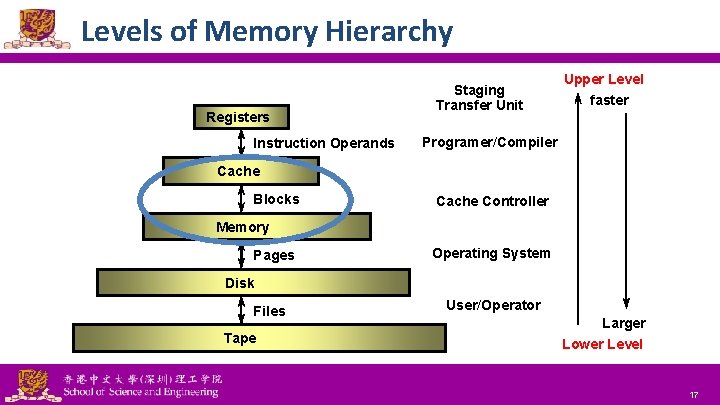

Levels of Memory Hierarchy - Architecture

| Between levels | Staging transfer unit | Managed by |
|---|---|---|
| Registers and cache | Instruction operands | Programmer/compiler |
| Cache and memory | Blocks | Cache controller |
| Memory and disk | Pages | Operating system |
| Disk and tape | Files | User/operator |

Upper levels are faster; lower levels are larger.

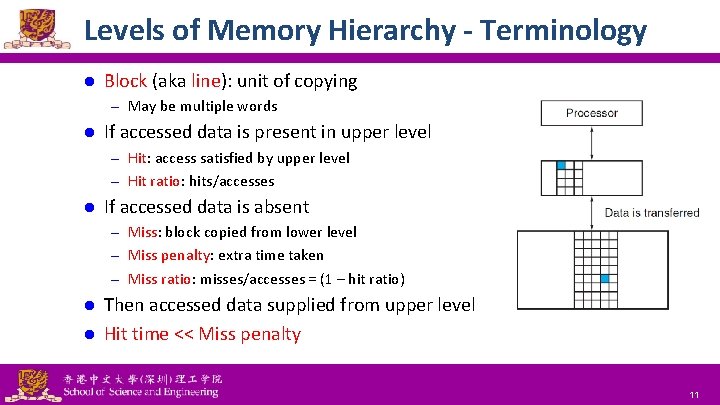

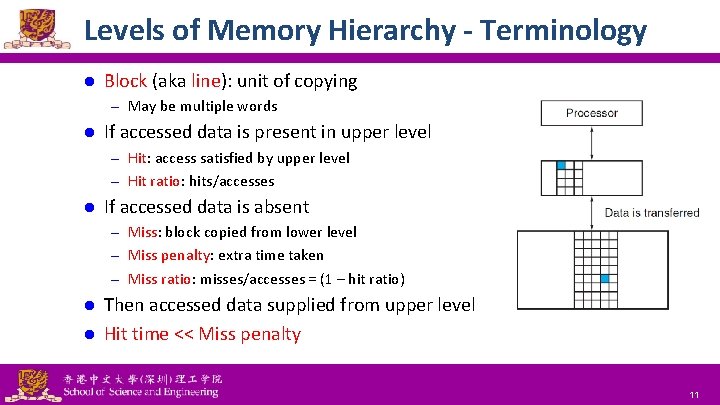

Levels of Memory Hierarchy - Terminology

- Block (aka line): unit of copying
  - May be multiple words
- If accessed data is present in upper level
  - Hit: access satisfied by upper level
  - Hit ratio: hits/accesses
- If accessed data is absent
  - Miss: block copied from lower level
    - Then accessed data supplied from upper level
  - Miss penalty: extra time taken
  - Miss ratio: misses/accesses = 1 – hit ratio
- Hit time << miss penalty

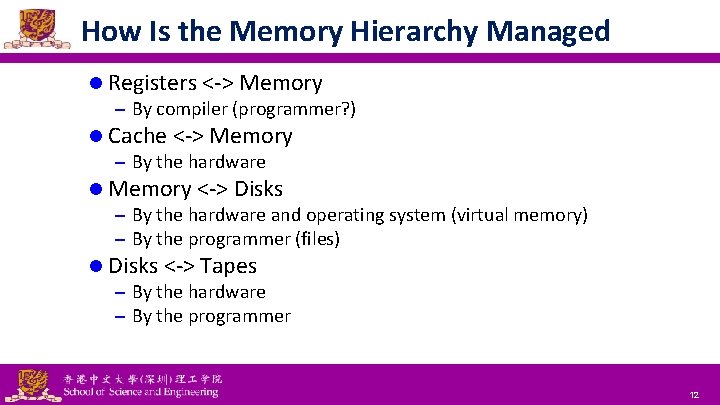

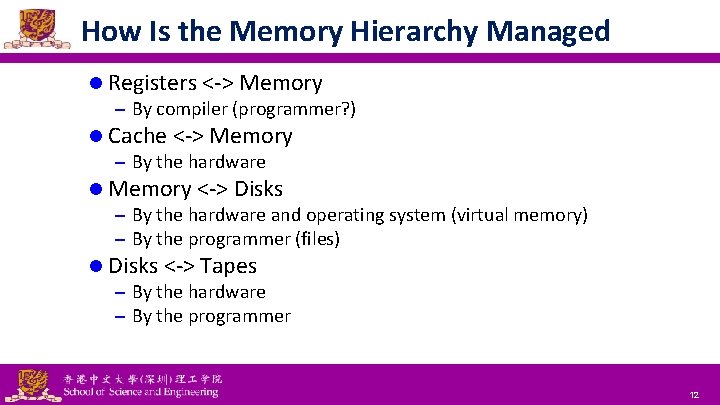

How Is the Memory Hierarchy Managed?

- Registers <-> Memory
  - By the compiler (and sometimes the programmer)
- Cache <-> Memory
  - By the hardware
- Memory <-> Disks
  - By the hardware and operating system (virtual memory)
  - By the programmer (files)
- Disks <-> Tapes
  - By the hardware
  - By the programmer

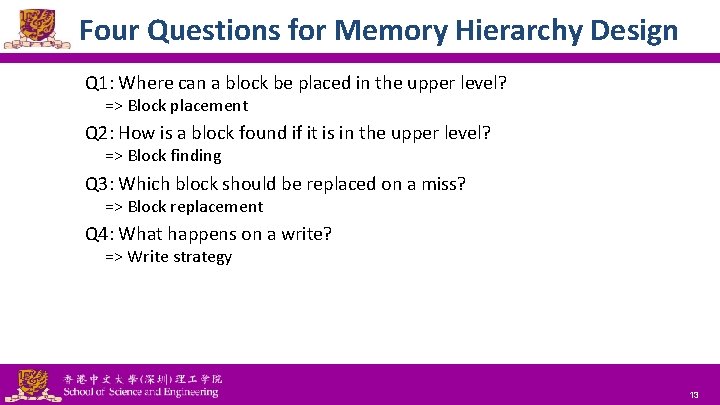

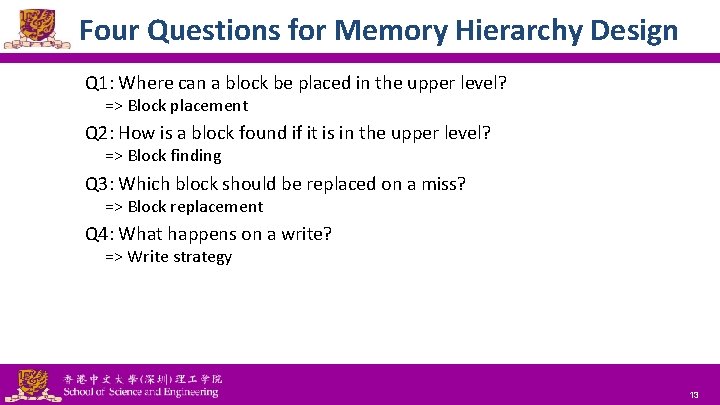

Four Questions for Memory Hierarchy Design

- Q1: Where can a block be placed in the upper level? => Block placement
- Q2: How is a block found if it is in the upper level? => Block finding
- Q3: Which block should be replaced on a miss? => Block replacement
- Q4: What happens on a write? => Write strategy

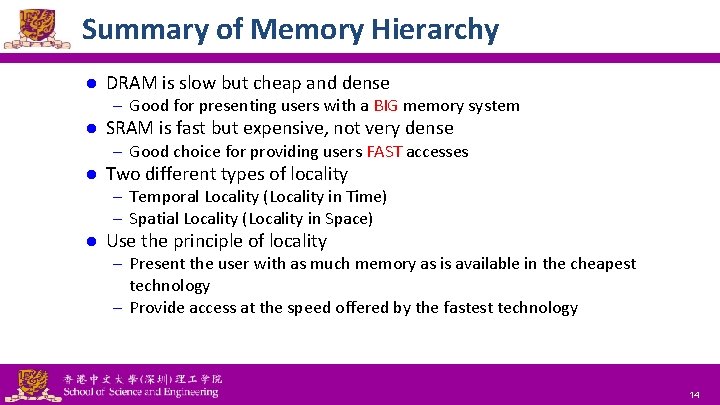

Summary of Memory Hierarchy

- DRAM is slow but cheap and dense
  - Good for presenting users with a BIG memory system
- SRAM is fast but expensive and not very dense
  - Good choice for providing users FAST accesses
- Two different types of locality
  - Temporal locality (locality in time)
  - Spatial locality (locality in space)
- Use the principle of locality
  - Present the user with as much memory as is available in the cheapest technology
  - Provide access at the speed offered by the fastest technology


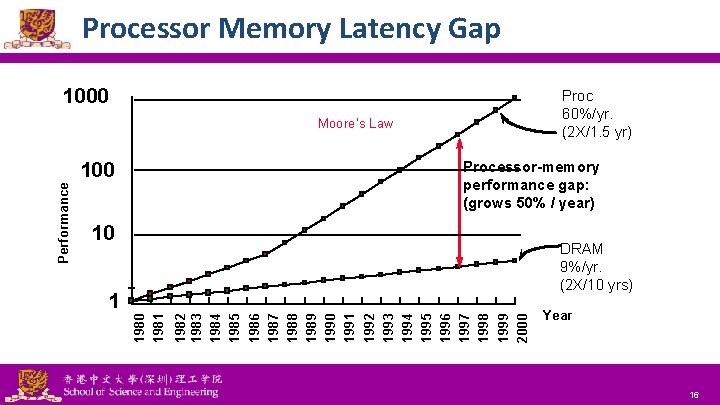

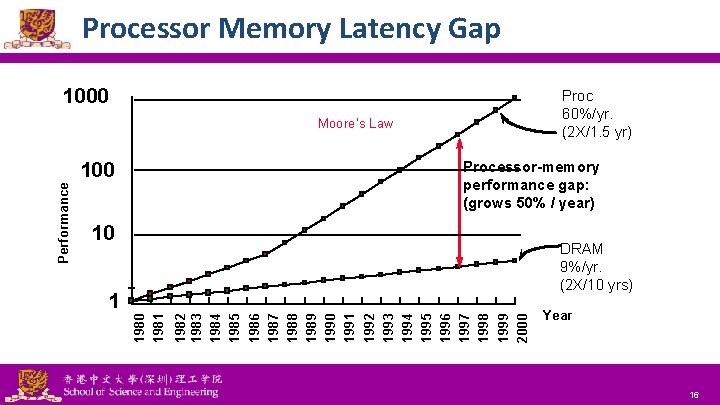

Processor-Memory Latency Gap

(Figure: relative performance on a log scale from 1 to 1000 vs. year, 1981 to 2000. Processor: 60%/yr (2X every 1.5 years, Moore's Law). DRAM: 9%/yr (2X every 10 years). The processor-memory performance gap grows 50% per year.)


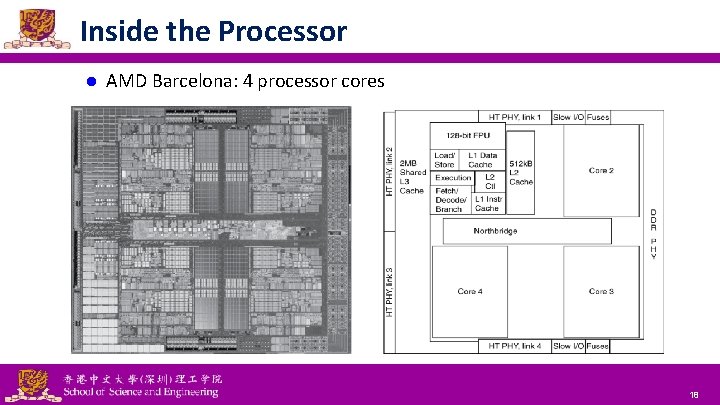

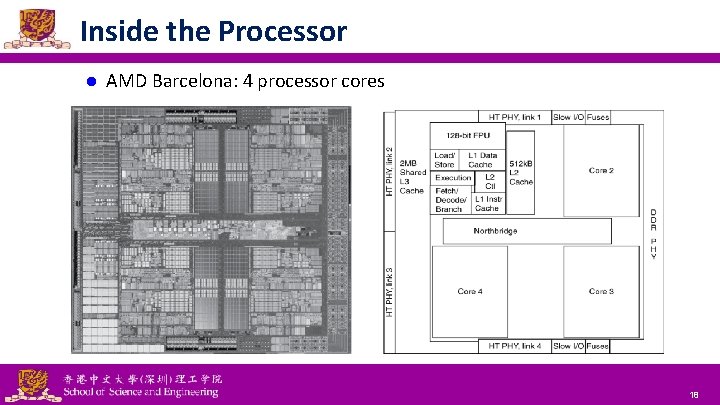

Inside the Processor

- AMD Barcelona: 4 processor cores

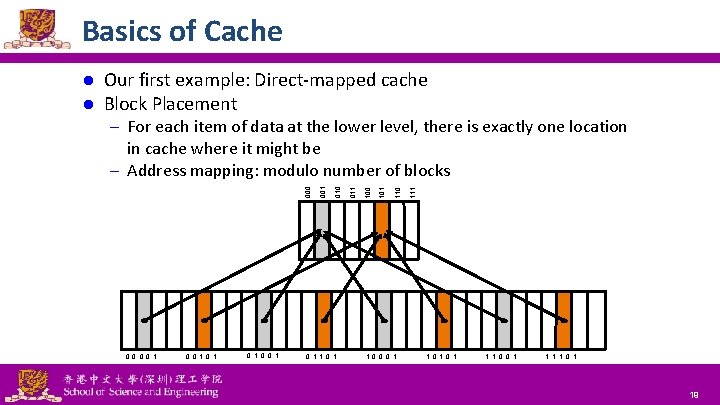

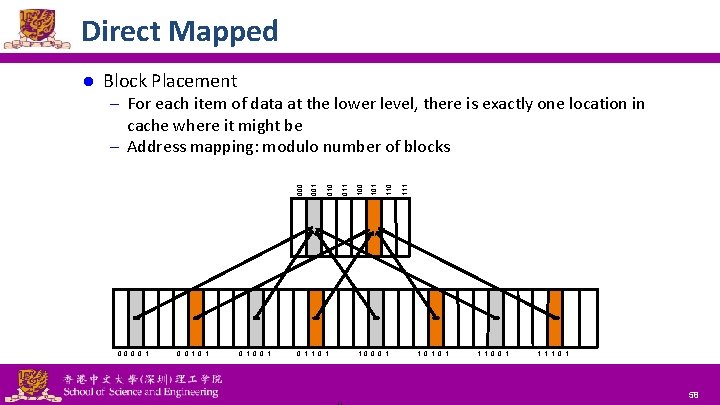

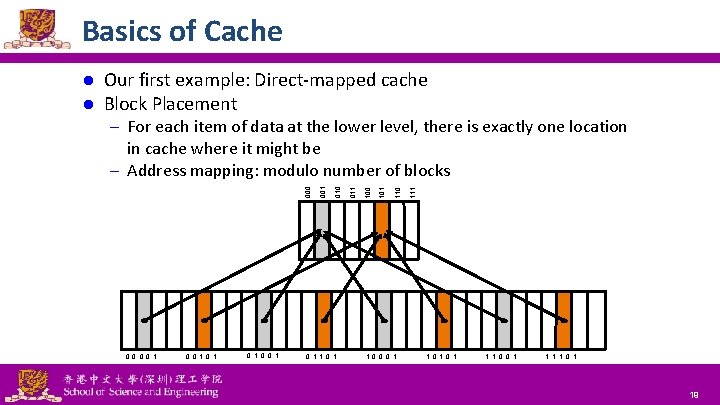

Basics of Cache

- Our first example: direct-mapped cache
- Block placement
  - For each item of data at the lower level, there is exactly one location in the cache where it might be
  - Address mapping: (block address) modulo (number of blocks in cache)

(Figure: an 8-entry direct-mapped cache with indices 000 to 111; each memory address maps to the entry given by its low-order 3 bits)

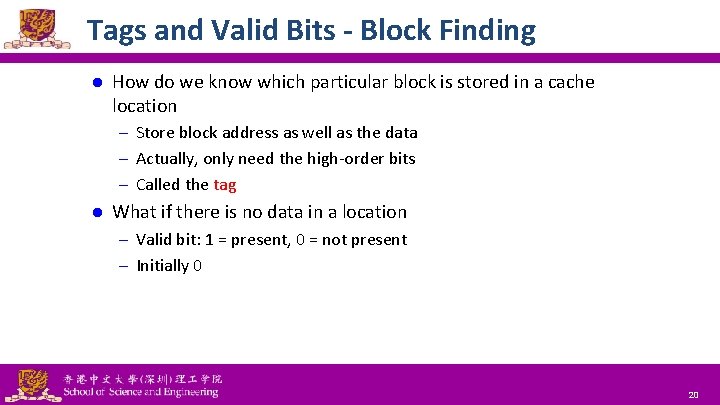

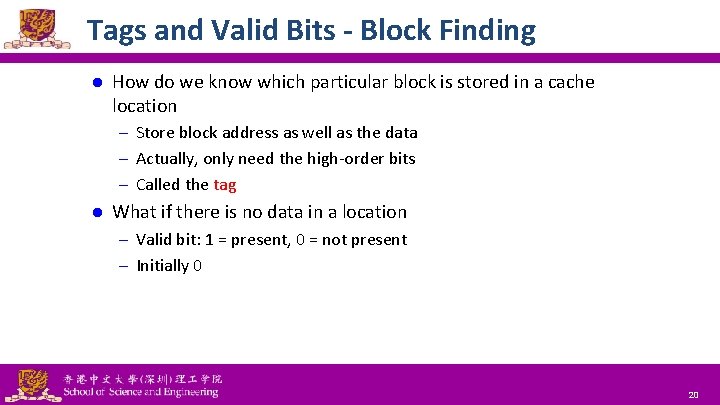

Tags and Valid Bits - Block Finding

- How do we know which particular block is stored in a cache location?
  - Store the block address as well as the data
  - Actually, only the high-order bits are needed
  - Called the tag
- What if there is no data in a location?
  - Valid bit: 1 = present, 0 = not present
  - Initially 0

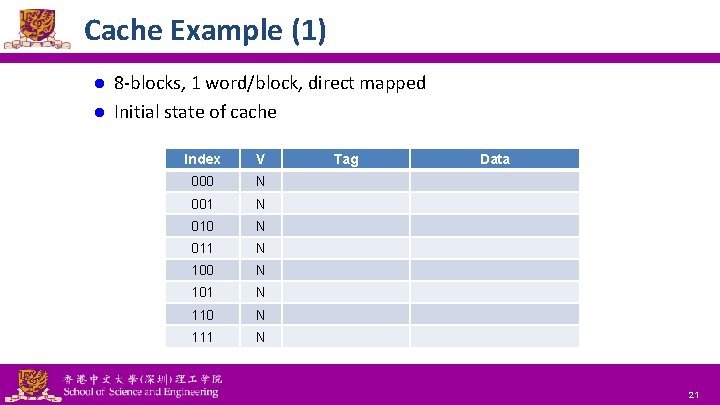

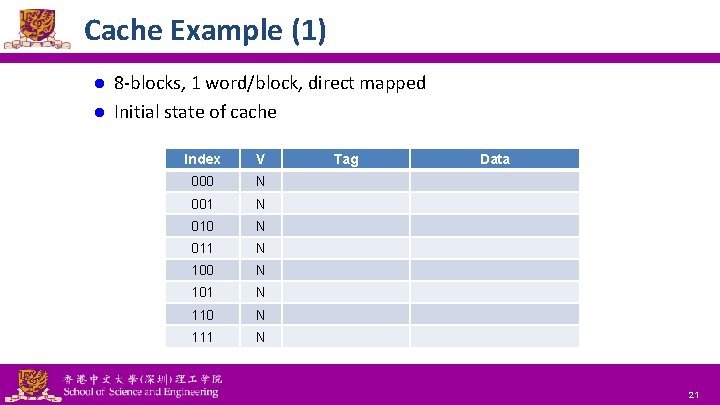

Cache Example (1)

- 8 blocks, 1 word/block, direct mapped
- Initial state of cache

| Index | V | Tag | Data |
|---|---|---|---|
| 000 | N | | |
| 001 | N | | |
| 010 | N | | |
| 011 | N | | |
| 100 | N | | |
| 101 | N | | |
| 110 | N | | |
| 111 | N | | |

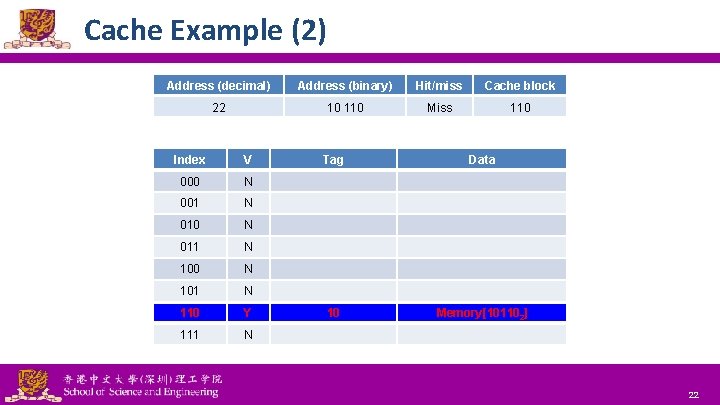

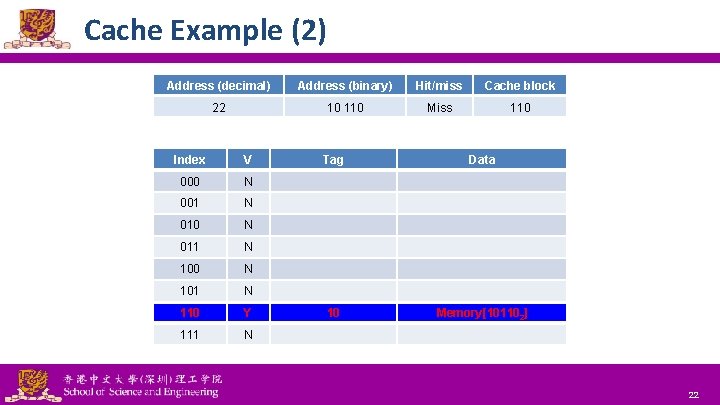

Cache Example (2)

| Word address (decimal) | Binary address | Hit/miss | Cache block |
|---|---|---|---|
| 22 | 10 110 | Miss | 110 |

| Index | V | Tag | Data |
|---|---|---|---|
| 000 | N | | |
| 001 | N | | |
| 010 | N | | |
| 011 | N | | |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Memory[10110₂] |
| 111 | N | | |

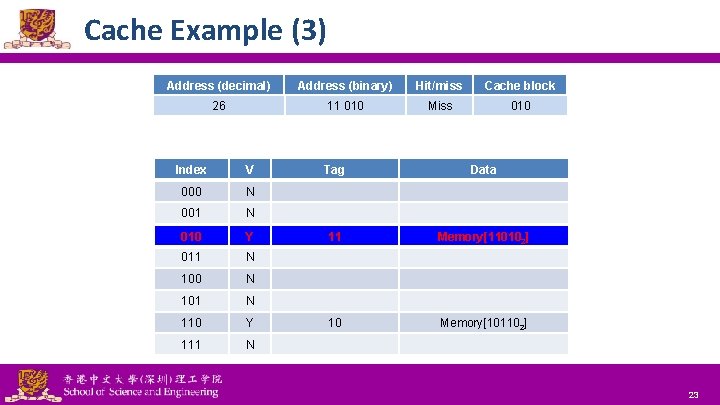

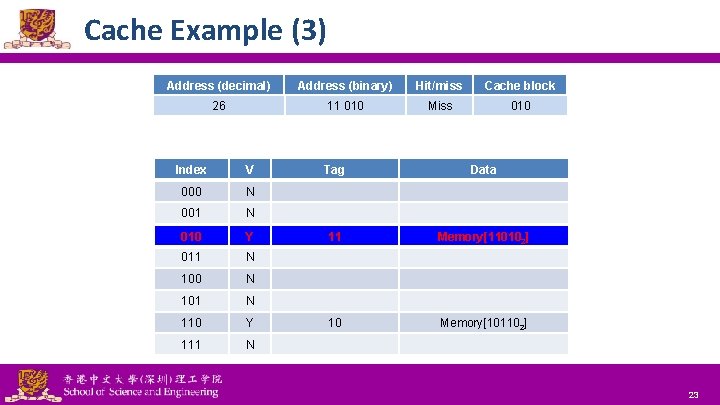

Cache Example (3)

| Word address (decimal) | Binary address | Hit/miss | Cache block |
|---|---|---|---|
| 26 | 11 010 | Miss | 010 |

| Index | V | Tag | Data |
|---|---|---|---|
| 000 | N | | |
| 001 | N | | |
| 010 | Y | 11 | Memory[11010₂] |
| 011 | N | | |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Memory[10110₂] |
| 111 | N | | |

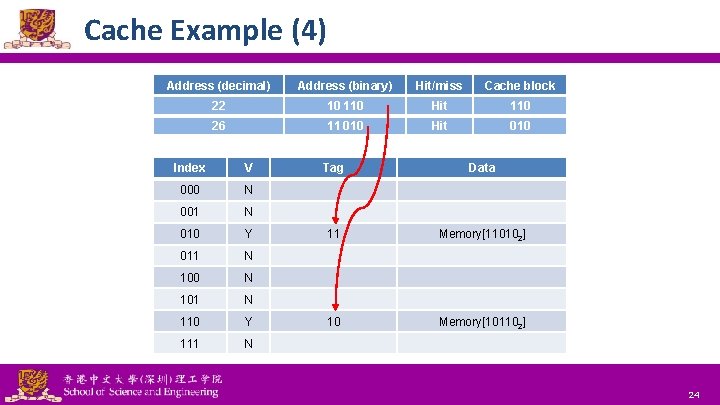

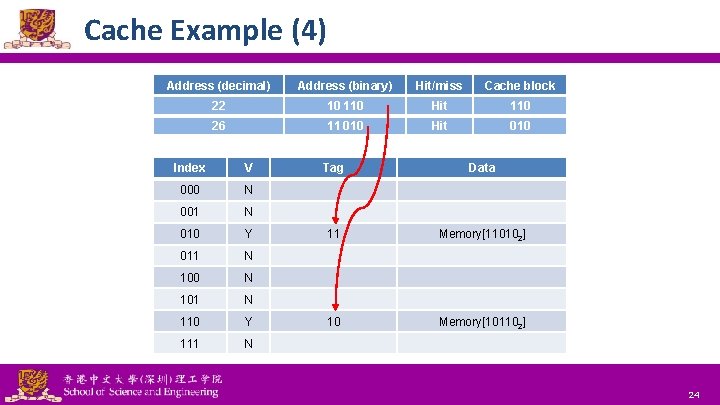

Cache Example (4)

| Word address (decimal) | Binary address | Hit/miss | Cache block |
|---|---|---|---|
| 22 | 10 110 | Hit | 110 |
| 26 | 11 010 | Hit | 010 |

| Index | V | Tag | Data |
|---|---|---|---|
| 000 | N | | |
| 001 | N | | |
| 010 | Y | 11 | Memory[11010₂] |
| 011 | N | | |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Memory[10110₂] |
| 111 | N | | |

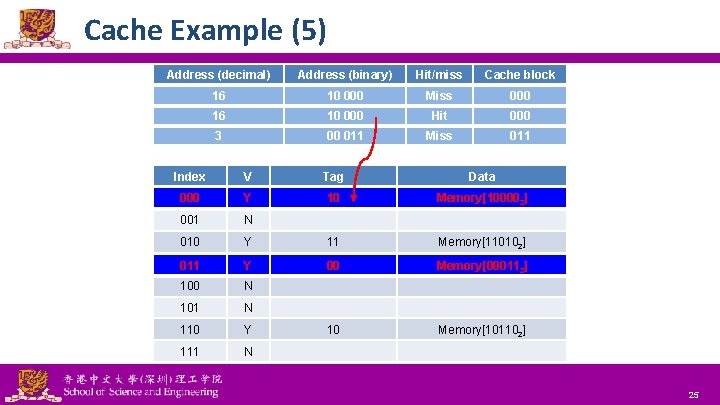

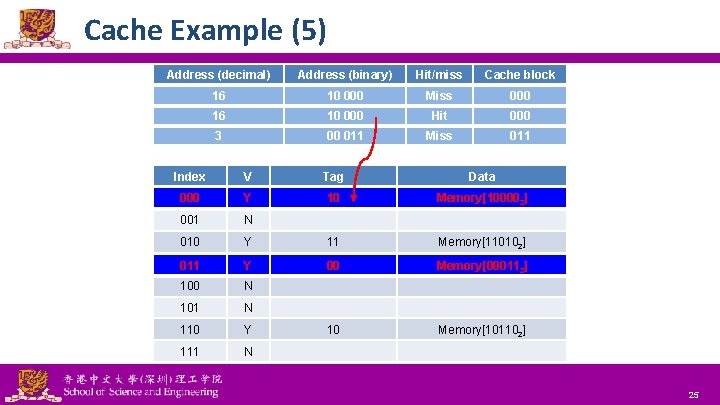

Cache Example (5)

| Word address (decimal) | Binary address | Hit/miss | Cache block |
|---|---|---|---|
| 16 | 10 000 | Miss | 000 |
| 16 | 10 000 | Hit | 000 |
| 3 | 00 011 | Miss | 011 |

| Index | V | Tag | Data |
|---|---|---|---|
| 000 | Y | 10 | Memory[10000₂] |
| 001 | N | | |
| 010 | Y | 11 | Memory[11010₂] |
| 011 | Y | 00 | Memory[00011₂] |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Memory[10110₂] |
| 111 | N | | |

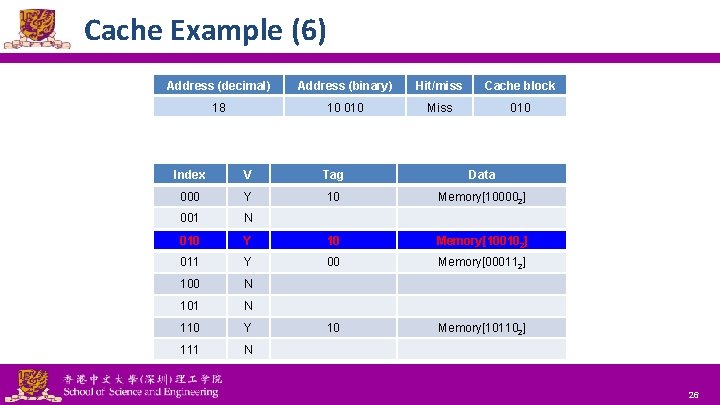

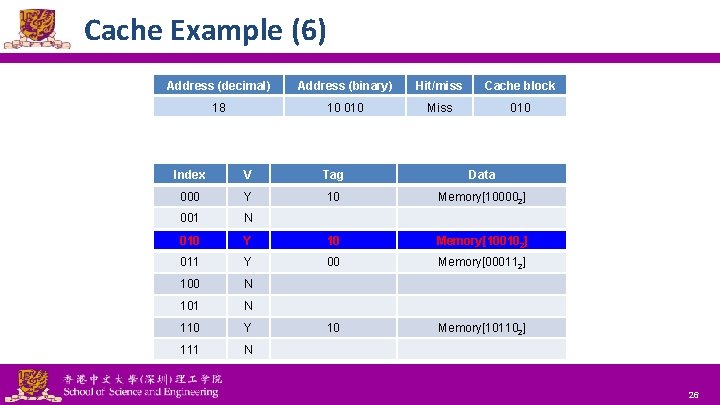

Cache Example (6)

| Word address (decimal) | Binary address | Hit/miss | Cache block |
|---|---|---|---|
| 18 | 10 010 | Miss | 010 |

| Index | V | Tag | Data |
|---|---|---|---|
| 000 | Y | 10 | Memory[10000₂] |
| 001 | N | | |
| 010 | Y | 10 | Memory[10010₂] |
| 011 | Y | 00 | Memory[00011₂] |
| 100 | N | | |
| 101 | N | | |
| 110 | Y | 10 | Memory[10110₂] |
| 111 | N | | |
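The access sequence in Cache Examples (2) to (6) can be replayed with a short simulation. This is a minimal sketch, assuming word addresses and one word per block, so the block address equals the word address; `simulate_direct_mapped` is a hypothetical helper, not something from the slides. It keeps one valid bit and one tag per entry and uses the address modulo 8 as the index, as in the examples.

```python
def simulate_direct_mapped(addresses, num_blocks=8):
    """Replay word addresses through a direct-mapped cache with 1-word blocks.

    Returns one "hit"/"miss" string per access.
    """
    valid = [False] * num_blocks  # all valid bits start at 0
    tags = [0] * num_blocks
    results = []
    for addr in addresses:
        index = addr % num_blocks   # low-order bits select the entry
        tag = addr // num_blocks    # remaining high-order bits form the tag
        if valid[index] and tags[index] == tag:
            results.append("hit")
        else:
            results.append("miss")
            valid[index] = True     # fill (or replace) the entry
            tags[index] = tag
    return results


# Access sequence in the order shown in Cache Examples (2) to (6)
print(simulate_direct_mapped([22, 26, 22, 26, 16, 16, 3, 18]))
# ['miss', 'miss', 'hit', 'hit', 'miss', 'hit', 'miss', 'miss']
```

The final access to 18 misses because index 010 still holds tag 11 (address 26), so the block is replaced.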


Memory Address

(Figure: consecutive memory addresses, 100000 to 101000 in binary)

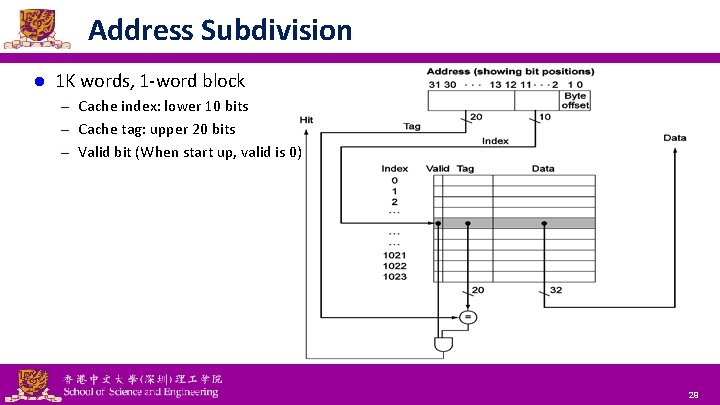

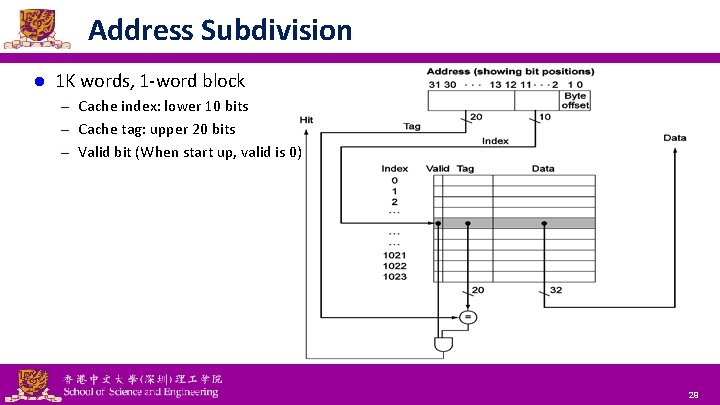

Address Subdivision

- 1K words, 1-word blocks
  - Cache index: 10 bits (address bits 11–2, just above the 2-bit byte offset)
  - Cache tag: upper 20 bits (address bits 31–12)
  - Valid bit (when the system starts up, valid is 0)

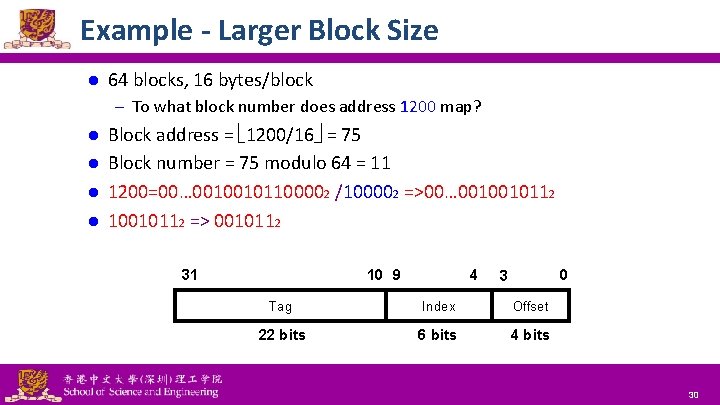

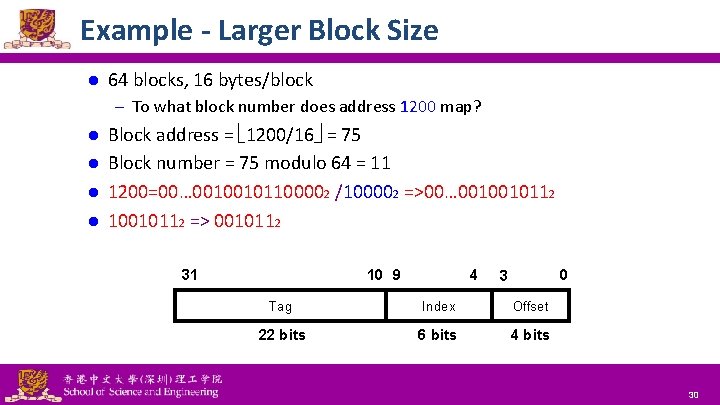

Example - Larger Block Size

- 64 blocks, 16 bytes/block
  - To what block number does address 1200 map?
- Block address = ⌊1200/16⌋ = 75
- Block number = 75 modulo 64 = 11
- 1200 = 00…0010010110000₂; dividing by 10000₂ (16) gives 00…001001011₂ (75)
- Keeping the low 6 bits: 1001011₂ => 001011₂ (11)
- Address fields of a 32-bit address: Tag = bits 31–10 (22 bits), Index = bits 9–4 (6 bits), Offset = bits 3–0 (4 bits)
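The same arithmetic can be written with shifts and masks, which mirrors how hardware takes bit fields of the address. A minimal sketch, assuming power-of-two block and cache sizes; `split_address` is a hypothetical name.

```python
def split_address(addr, num_blocks=64, block_bytes=16):
    """Split a byte address into (tag, index, offset) for a direct-mapped cache.

    Assumes num_blocks and block_bytes are powers of two.
    """
    offset_bits = block_bytes.bit_length() - 1        # log2(16) = 4
    index_bits = num_blocks.bit_length() - 1          # log2(64) = 6
    offset = addr & (block_bytes - 1)                 # low 4 bits
    index = (addr >> offset_bits) & (num_blocks - 1)  # next 6 bits
    tag = addr >> (offset_bits + index_bits)          # everything from bit 10 up
    return tag, index, offset


block_address = 1200 // 16                            # 75
print(block_address, block_address % 64, split_address(1200))  # 75 11 (1, 11, 0)
```

The index from the bit fields (11) equals the block address modulo 64, and the tag (1) is the block address divided by 64.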

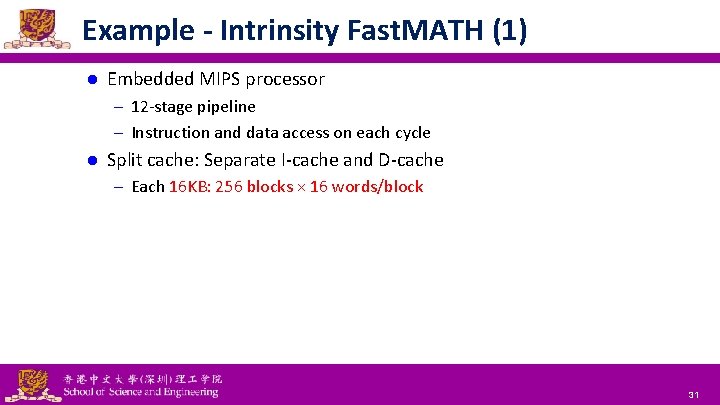

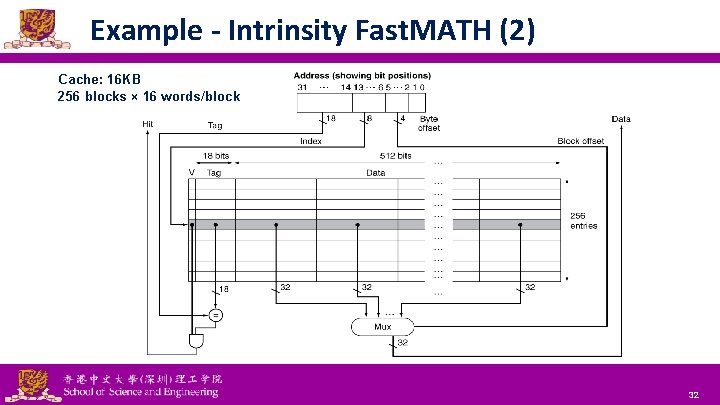

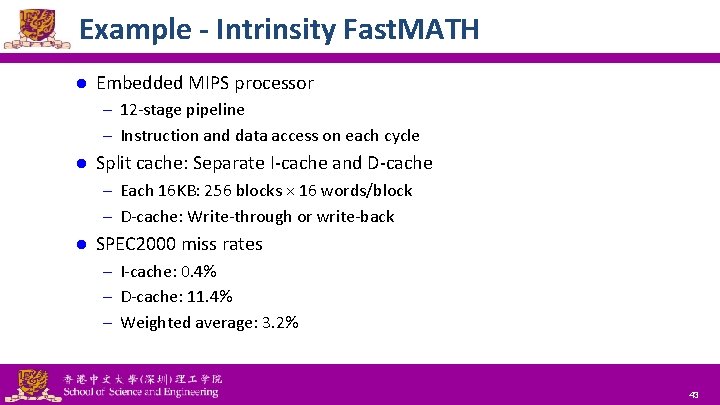

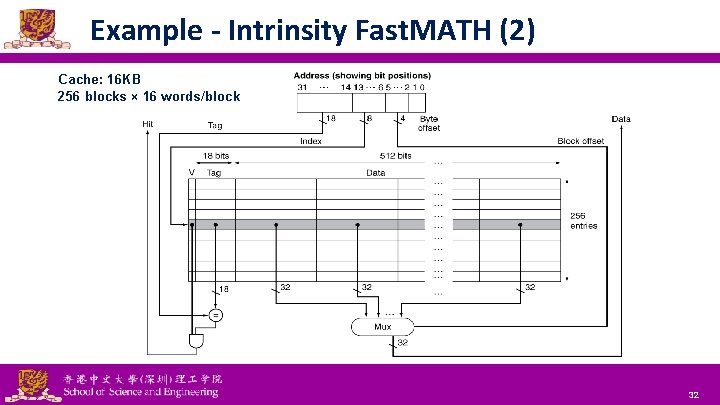

Example - Intrinsity FastMATH (1)

- Embedded MIPS processor
  - 12-stage pipeline
  - Instruction and data access on each cycle
- Split cache: separate I-cache and D-cache
  - Each 16 KB: 256 blocks × 16 words/block

Example - Intrinsity FastMATH (2)

- Cache: 16 KB, 256 blocks × 16 words/block

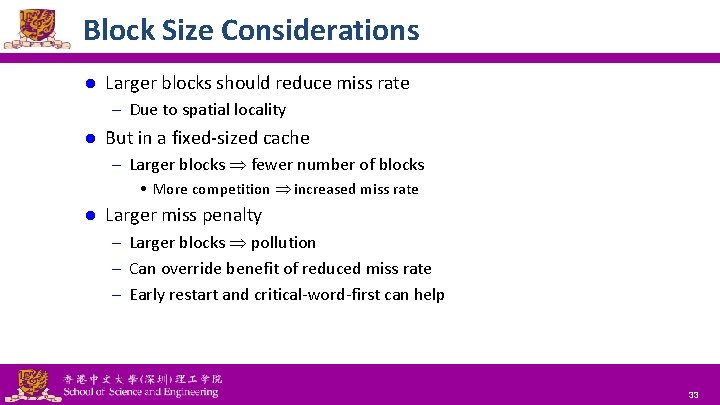

Block Size Considerations

- Larger blocks should reduce miss rate
  - Due to spatial locality
- But in a fixed-sized cache
  - Larger blocks => fewer blocks
    - More competition => increased miss rate
  - Larger blocks => pollution
- Larger miss penalty
  - Can override benefit of reduced miss rate
  - Early restart and critical-word-first can help

Block Size on Performance

- Increasing block size tends to decrease miss rate


Cache Hit and Miss

- Cache read
  - Read hit
  - Read miss
- Cache write
  - Write hit
    - Write-through
    - Write-around
    - Write-back
  - Write miss

Cache Read

- Read hit
  - On a cache hit, the CPU proceeds normally
- Read miss
  - On a cache miss
    - Stall the CPU pipeline
    - Fetch the block from the next level of the hierarchy
    - Instruction cache miss: restart instruction fetch
    - Data cache miss: complete data access

Write Hit - Write-Through Policy

- Write-through: also update the data in memory
  - Advantage: ensures fast retrieval while making sure the data is in the backing store and is not lost if the cache is disrupted
  - Disadvantage: writes incur extra latency, because every write goes to two places
    - Increases traffic to memory
    - Makes writes take longer
  - e.g., if base CPI = 1, 10% of instructions are stores, and a write to memory takes 100 cycles, then Effective CPI = 1 + 0.1 × 100 = 11
- Solution: write buffer
  - Holds data waiting to be written to memory
  - CPU continues immediately
    - Only stalls on a write if the write buffer is already full

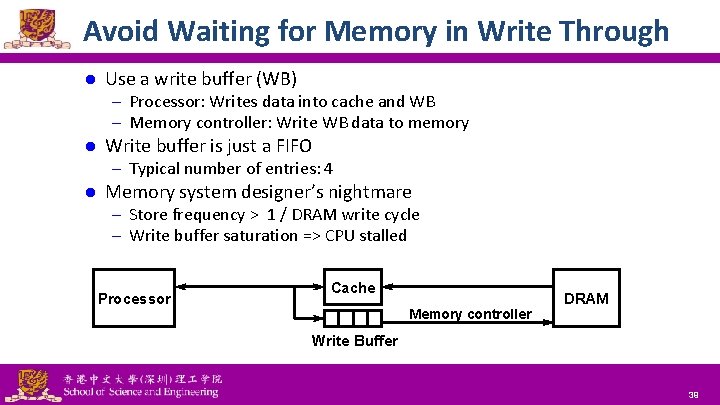

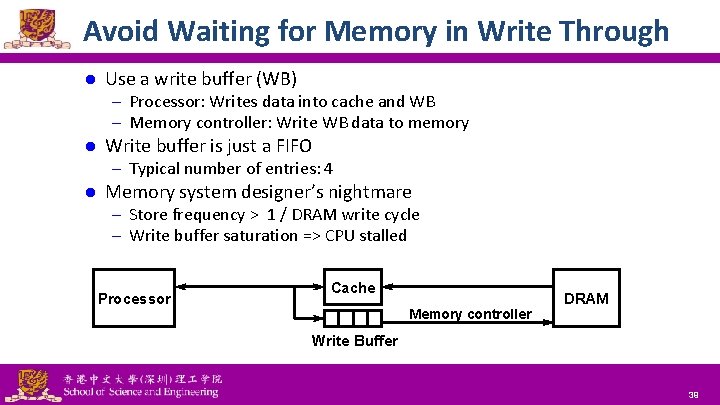

Avoid Waiting for Memory in Write-Through

- Use a write buffer (WB)
  - Processor: writes data into the cache and the WB
  - Memory controller: writes WB data to memory
- Write buffer is just a FIFO
  - Typical number of entries: 4
- Memory system designer's nightmare
  - Store frequency > 1 / DRAM write cycle
  - Write buffer saturation => CPU stalled

(Figure: Processor, Cache, Write Buffer, Memory controller, DRAM)
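The saturation effect can be illustrated with a small timing model. This is a sketch under simplifying assumptions that are not from the slides: one instruction issues per cycle, the memory controller drains buffered writes one at a time, and each write takes `write_cycles`. The function `run_with_write_buffer` is hypothetical.

```python
from collections import deque


def run_with_write_buffer(is_store, entries=4, write_cycles=100):
    """Return the cycles needed to issue a stream of instructions.

    Assumed model: one instruction issues per cycle, stores enter a FIFO
    write buffer, and the memory controller retires buffered writes one
    at a time, each taking write_cycles. The CPU stalls only when a store
    finds the buffer full.
    """
    t = 0                # current cycle
    pending = deque()    # completion times of buffered writes, oldest first
    for store in is_store:
        while pending and pending[0] <= t:
            pending.popleft()             # these writes have reached DRAM
        if store:
            if len(pending) == entries:
                t = pending.popleft()     # stall until the oldest write completes
            start = max(t, pending[-1]) if pending else t
            pending.append(start + write_cycles)
        t += 1                            # issue this instruction
    return t


# Every instruction is a store: the buffer saturates and the CPU stalls.
print(run_with_write_buffer([True] * 10, entries=4, write_cycles=10))   # 61
# One store every 20 instructions: each write drains before the next one.
sparse = [i % 20 == 0 for i in range(100)]
print(run_with_write_buffer(sparse, entries=4, write_cycles=10))        # 100
```

Once stores arrive faster than one per `write_cycles`, the four entries only delay the stall; after that the CPU advances at the DRAM write rate.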

Write Hit - Write-Around Policy

- Data is written only to the backing store, without writing to the cache
  - I/O completion is confirmed as soon as the data is written to the backing store
- Advantage: good for not flooding the cache with data that may not subsequently be re-read
- Disadvantage: reading recently written data will result in a cache miss (and so a higher latency), because the data can only be read from the slower backing store

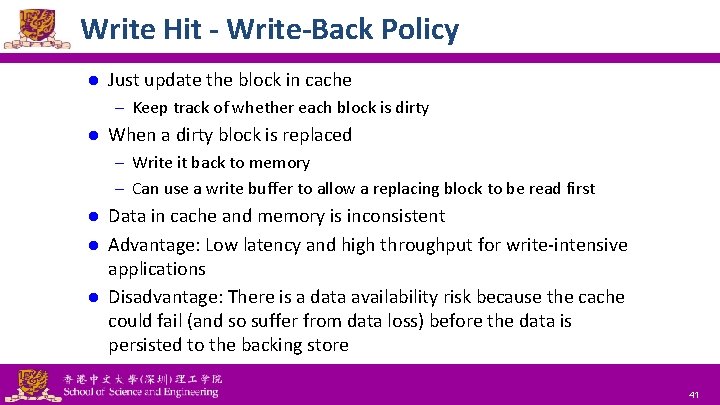

Write Hit - Write-Back Policy

- Just update the block in the cache
  - Keep track of whether each block is dirty
- When a dirty block is replaced
  - Write it back to memory
  - Can use a write buffer to allow the replacing block to be read first
- Data in cache and memory is inconsistent until the dirty block is written back
- Advantage: low latency and high throughput for write-intensive applications
- Disadvantage: there is a data availability risk, because the cache could fail (and so suffer data loss) before the data is persisted to the backing store

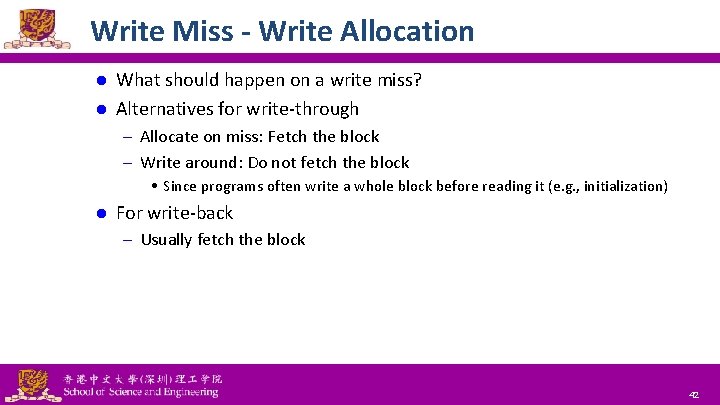

Write Miss - Write Allocation

- What should happen on a write miss?
- Alternatives for write-through
  - Allocate on miss: fetch the block
  - Write around: do not fetch the block
    - Since programs often write a whole block before reading it (e.g., initialization)
- For write-back
  - Usually fetch the block
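The difference in memory write traffic between the two common pairings can be counted with a toy direct-mapped cache. A minimal sketch, assuming block-granularity accesses and pairing write-through with write-around and write-back with write-allocate; `memory_writes` is a hypothetical function, and read traffic is not counted.

```python
def memory_writes(accesses, num_blocks, policy):
    """Count write transactions sent to memory by a direct-mapped cache.

    accesses: list of (op, block_address) pairs, op is "R" or "W".
    policy: "write-through" (write-around on a write miss) or
            "write-back" (write-allocate on a write miss).
    Dirty blocks still resident at the end are not flushed.
    """
    tags = [None] * num_blocks
    dirty = [False] * num_blocks
    writes = 0
    for op, block in accesses:
        index, tag = block % num_blocks, block // num_blocks
        hit = tags[index] == tag
        if policy == "write-through":
            if op == "W":
                writes += 1            # every store also updates memory
            elif not hit:
                tags[index] = tag      # only read misses allocate
        elif policy == "write-back":
            if not hit:
                if dirty[index]:
                    writes += 1        # write the dirty victim back first
                tags[index] = tag      # allocate on read or write miss
                dirty[index] = False
            if op == "W":
                dirty[index] = True    # update only the cached copy
        else:
            raise ValueError(f"unknown policy: {policy}")
    return writes


# Ten stores to block 0, then a read of block 4 (same index in a 4-block cache)
trace = [("W", 0)] * 10 + [("R", 4)]
print(memory_writes(trace, 4, "write-through"))  # 10
print(memory_writes(trace, 4, "write-back"))     # 1
```

Repeated stores to one block cost one memory write per store under write-through, but only one block write-back on eviction under write-back.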

Example - Intrinsity FastMATH

- Embedded MIPS processor
  - 12-stage pipeline
  - Instruction and data access on each cycle
- Split cache: separate I-cache and D-cache
  - Each 16 KB: 256 blocks × 16 words/block
  - D-cache: write-through or write-back
- SPEC2000 miss rates
  - I-cache: 0.4%
  - D-cache: 11.4%
  - Weighted average: 3.2%


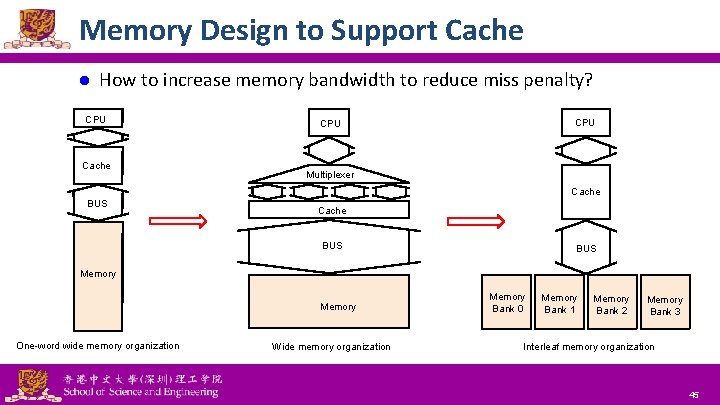

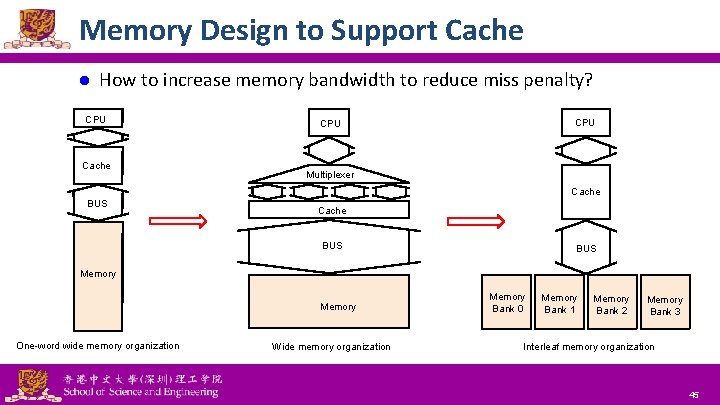

Memory Design to Support Cache

- How can memory bandwidth be increased to reduce the miss penalty?

(Figure: three organizations. One-word-wide memory organization: CPU, cache, bus, memory. Wide memory organization: CPU, multiplexer, cache, bus, memory. Interleaved memory organization: CPU, cache, bus, memory banks 0 to 3.)

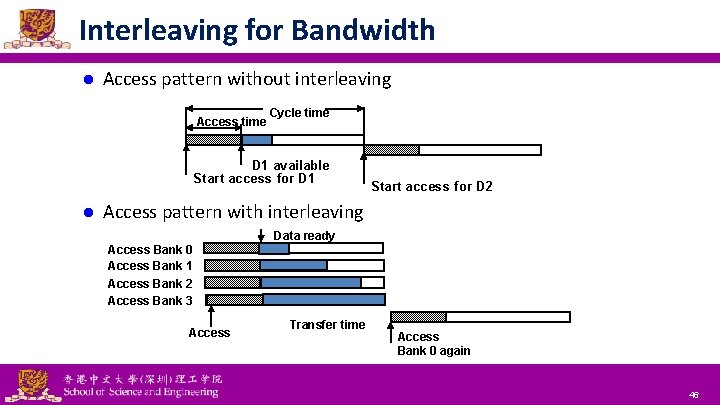

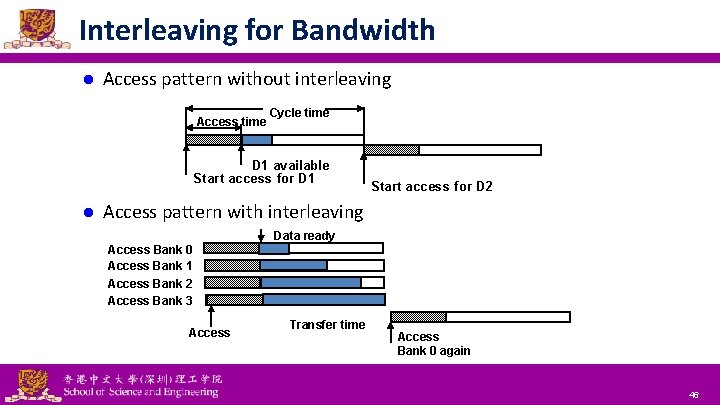

Interleaving for Bandwidth

- Access pattern without interleaving (figure): start access for D1; after the access time, D1 is available; only after the full cycle time can the access for D2 start
- Access pattern with interleaving (figure): accesses to bank 0, bank 1, bank 2, and bank 3 overlap; data is transferred from each bank in turn (transfer time), after which bank 0 can be accessed again

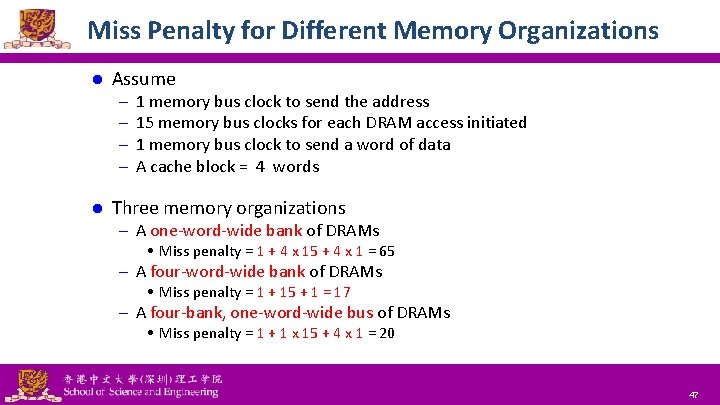

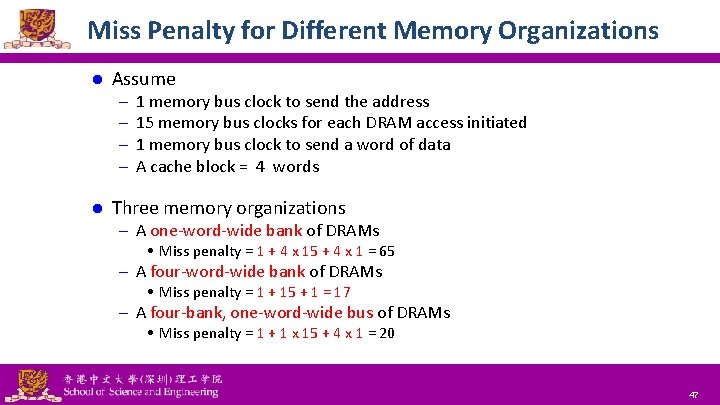

Miss Penalty for Different Memory Organizations

- Assume
  - 1 memory bus clock to send the address
  - 15 memory bus clocks for each DRAM access initiated
  - 1 memory bus clock to send a word of data
  - A cache block = 4 words
- Three memory organizations
  - A one-word-wide bank of DRAMs
    - Miss penalty = 1 + 4 × 15 + 4 × 1 = 65
  - A four-word-wide bank of DRAMs
    - Miss penalty = 1 + 15 + 1 = 17
  - A four-bank, one-word-wide bus of DRAMs
    - Miss penalty = 1 + 1 × 15 + 4 × 1 = 20
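The three formulas differ only in which terms are serialized per word. A minimal sketch that parameterizes them with the slide's assumptions as defaults; `miss_penalty` and the organization labels are hypothetical names chosen for illustration.

```python
def miss_penalty(organization, words_per_block=4,
                 addr_clocks=1, dram_clocks=15, word_clocks=1):
    """Miss penalty in memory-bus clocks for the three organizations."""
    if organization == "one-word-wide":
        # one DRAM access and one bus transfer per word, fully serialized
        return (addr_clocks + words_per_block * dram_clocks
                + words_per_block * word_clocks)
    if organization == "four-word-wide":
        # the whole block is read and transferred at once
        return addr_clocks + dram_clocks + word_clocks
    if organization == "interleaved":
        # all banks access in parallel, then words cross the bus one at a time
        return addr_clocks + dram_clocks + words_per_block * word_clocks
    raise ValueError(f"unknown organization: {organization}")


for org in ("one-word-wide", "four-word-wide", "interleaved"):
    print(org, miss_penalty(org))   # 65, 17, 20
```

Interleaving recovers most of the benefit of a wide memory while keeping a one-word bus: only the DRAM access time is overlapped, not the word transfers.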

Access of DRAM

(Figure: a 2048 × 2048 DRAM cell array addressed by address bits 21–0)

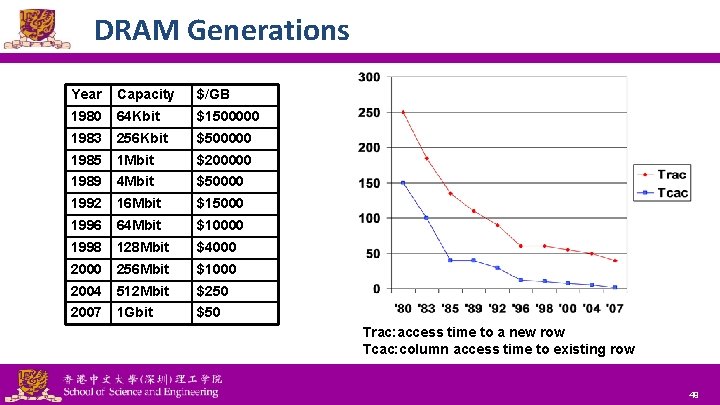

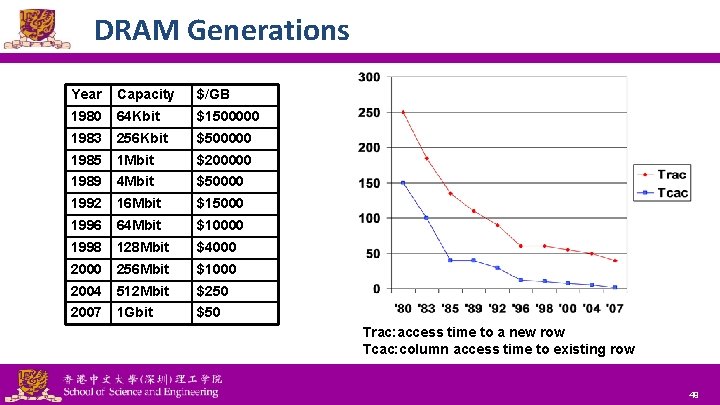

DRAM Generations

| Year | Capacity | $/GB |
|---|---|---|
| 1980 | 64 Kbit | $1,500,000 |
| 1983 | 256 Kbit | $500,000 |
| 1985 | 1 Mbit | $200,000 |
| 1989 | 4 Mbit | $50,000 |
| 1992 | 16 Mbit | $15,000 |
| 1996 | 64 Mbit | $10,000 |
| 1998 | 128 Mbit | $4,000 |
| | 256 Mbit | $1,000 |
| 2004 | 512 Mbit | $250 |
| 2007 | 1 Gbit | $50 |

(Figure legend: Trac = access time to a new row; Tcac = column access time to an existing row)


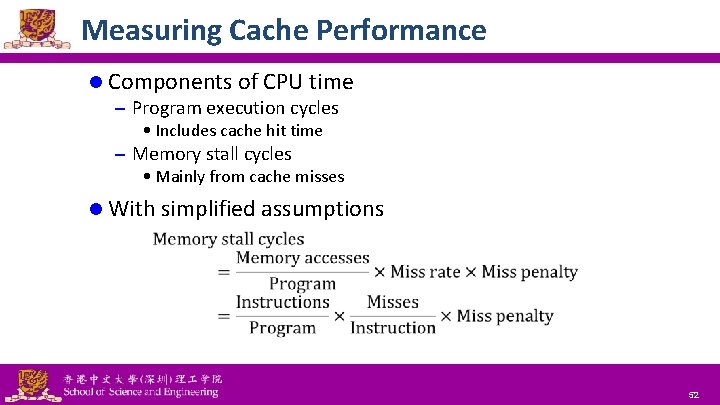

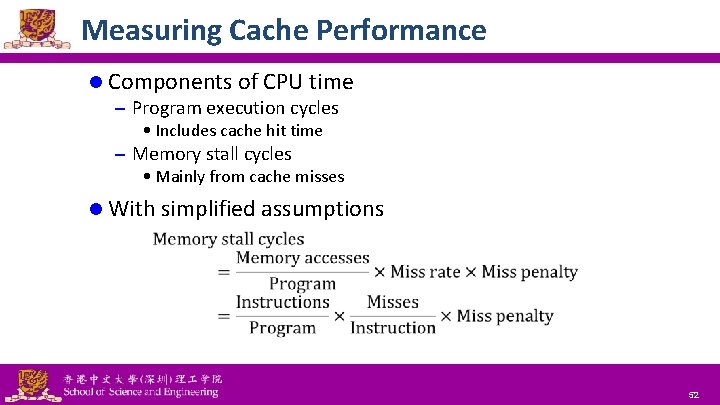

Measuring Cache Performance

- Components of CPU time
  - Program execution cycles
    - Includes cache hit time
  - Memory stall cycles
    - Mainly from cache misses
- With simplified assumptions:
  - Memory stall cycles = (Memory accesses / Program) × Miss rate × Miss penalty
  - = (Instructions / Program) × (Misses / Instruction) × Miss penalty

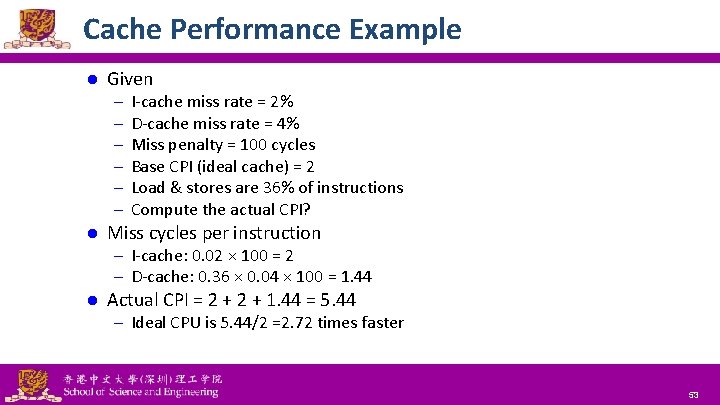

Cache Performance Example

- Given
  - I-cache miss rate = 2%
  - D-cache miss rate = 4%
  - Miss penalty = 100 cycles
  - Base CPI (ideal cache) = 2
  - Loads and stores are 36% of instructions
- Compute the actual CPI
- Miss cycles per instruction
  - I-cache: 0.02 × 100 = 2
  - D-cache: 0.36 × 0.04 × 100 = 1.44
- Actual CPI = 2 + 2 + 1.44 = 5.44
  - A CPU with an ideal cache is 5.44/2 = 2.72 times faster
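The calculation adds one stall term per cache to the base CPI. A minimal sketch of that arithmetic; `actual_cpi` is a hypothetical helper, and it assumes, as the example does, that every instruction is fetched through the I-cache while only loads and stores access the D-cache.

```python
def actual_cpi(base_cpi, i_miss_rate, d_miss_rate, mem_fraction, miss_penalty):
    """CPI including memory stall cycles from I-cache and D-cache misses."""
    i_stalls = i_miss_rate * miss_penalty                  # every instruction is fetched
    d_stalls = mem_fraction * d_miss_rate * miss_penalty   # only loads/stores use the D-cache
    return base_cpi + i_stalls + d_stalls


cpi = actual_cpi(base_cpi=2, i_miss_rate=0.02, d_miss_rate=0.04,
                 mem_fraction=0.36, miss_penalty=100)
print(round(cpi, 2), round(cpi / 2, 2))   # 5.44 2.72
```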

Average Access Time

- Hit time is also important for performance
- Average memory access time (AMAT)
  - AMAT = Hit time + Miss rate × Miss penalty
- Example
  - CPU with 1 ns clock, hit time = 1 cycle, miss penalty = 20 cycles, I-cache miss rate = 5%
  - AMAT = 1 + 0.05 × 20 = 2 cycles = 2 ns
    - i.e., 2 cycles per instruction fetch
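The AMAT formula is a one-liner; a sketch that keeps everything in cycles and converts to time at the end. The `amat` name is hypothetical, and the 1 ns clock and other values are the example's.

```python
def amat(hit_time, miss_rate, miss_penalty):
    """Average memory access time; all times in the same unit (here, cycles)."""
    return hit_time + miss_rate * miss_penalty


clock_ns = 1.0                                           # 1 ns clock from the example
cycles = amat(hit_time=1, miss_rate=0.05, miss_penalty=20)
print(cycles, cycles * clock_ns)                         # 2 cycles = 2 ns
```

Keeping hit time, miss penalty, and AMAT in the same unit avoids mixing cycles and nanoseconds in one expression.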

Performance Summary

- As CPU performance increases
  - Miss penalty becomes more significant
- Decreasing base CPI
  - Greater proportion of time spent on memory stalls
- Increasing clock rate
  - Memory stalls account for more CPU cycles
- Cache behavior cannot be neglected when evaluating system performance


Improving Cache Performance

- Reduce the time to hit in the cache
- Decrease the miss ratio
- Decrease the miss penalty

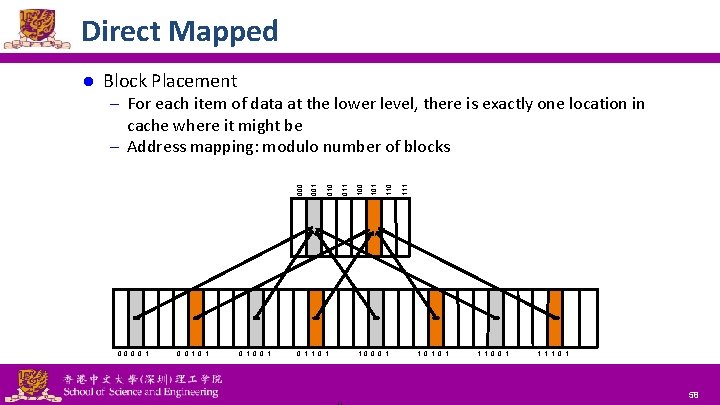

Direct Mapped

- Block placement
  - For each item of data at the lower level, there is exactly one location in the cache where it might be
  - Address mapping: (block address) modulo (number of blocks in cache)

(Figure: an 8-entry direct-mapped cache with indices 000 to 111; each memory address maps to the entry given by its low-order 3 bits)

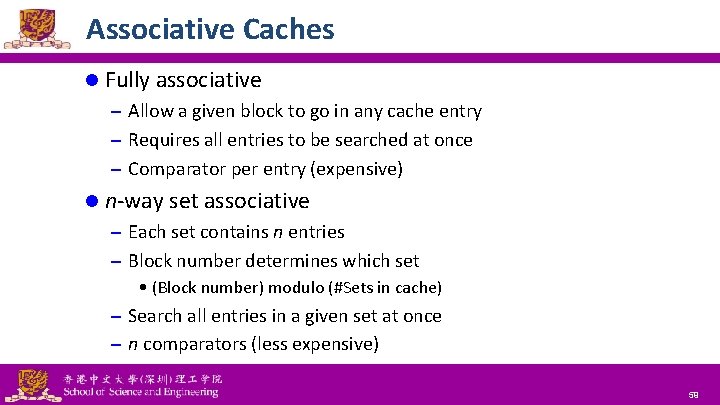

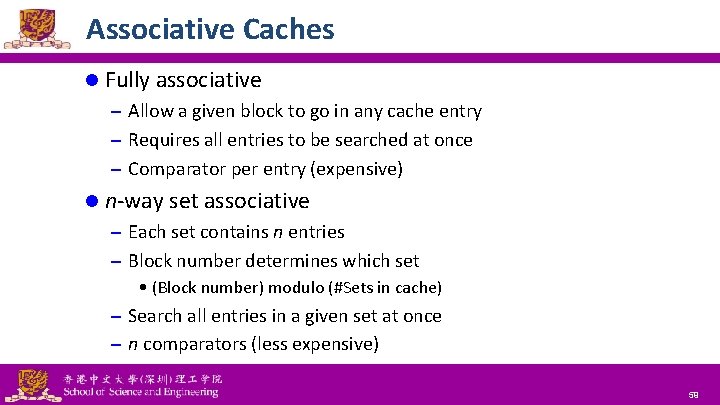

Associative Caches

- Fully associative
  - Allow a given block to go in any cache entry
  - Requires all entries to be searched at once
  - Comparator per entry (expensive)
- n-way set associative
  - Each set contains n entries
  - Block number determines which set
    - (Block number) modulo (#Sets in cache)
  - Search all entries in a given set at once
  - n comparators (less expensive)

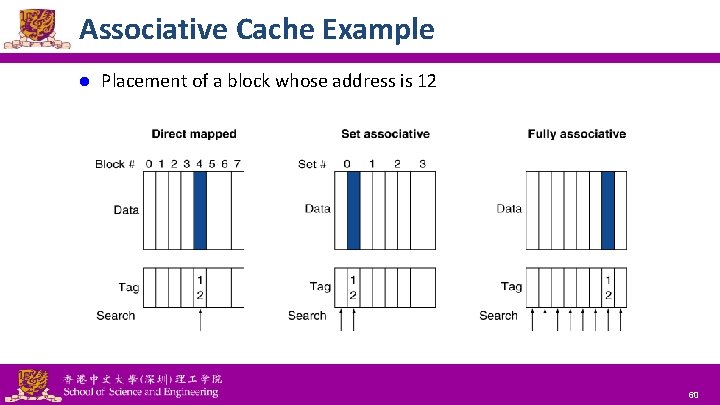

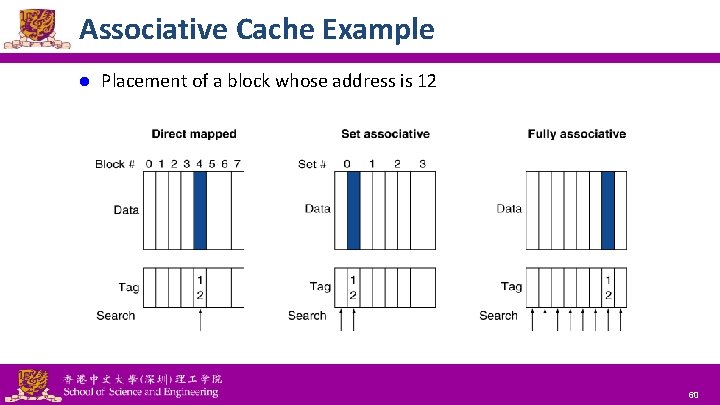

Associative Cache Example

- Placement of a block whose address is 12

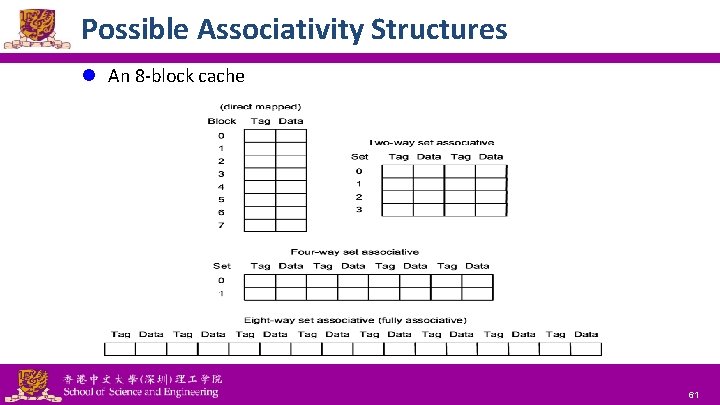

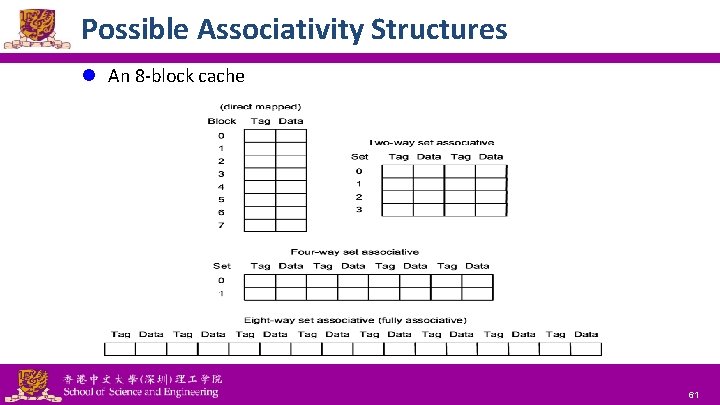

Possible Associativity Structures

- An 8-block cache

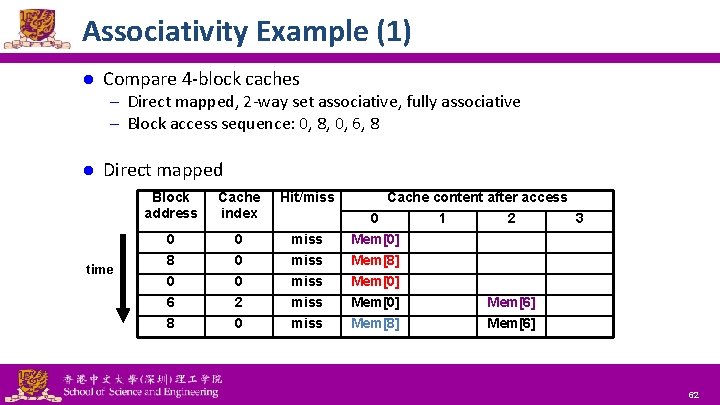

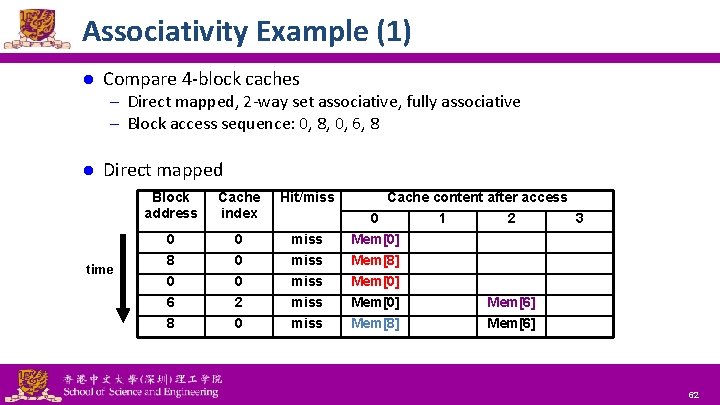

Associativity Example (1)

- Compare 4-block caches
  - Direct mapped, 2-way set associative, fully associative
  - Block access sequence: 0, 8, 0, 6, 8
- Direct mapped (cache content after each access)

| Block address | Cache index | Hit/miss | Index 0 | Index 1 | Index 2 | Index 3 |
|---|---|---|---|---|---|---|
| 0 | 0 | miss | Mem[0] | | | |
| 8 | 0 | miss | Mem[8] | | | |
| 0 | 0 | miss | Mem[0] | | | |
| 6 | 2 | miss | Mem[0] | | Mem[6] | |
| 8 | 0 | miss | Mem[8] | | Mem[6] | |

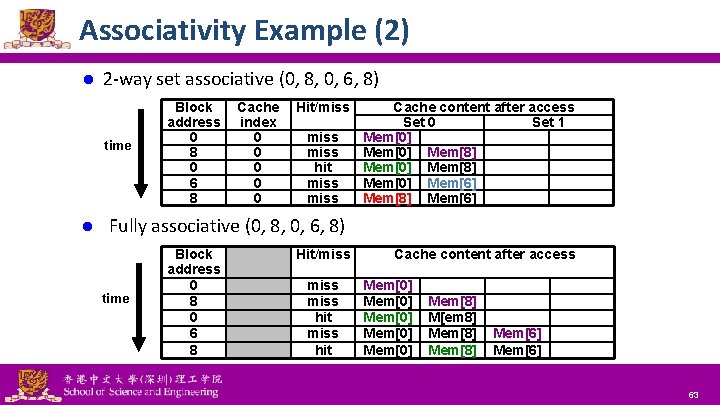

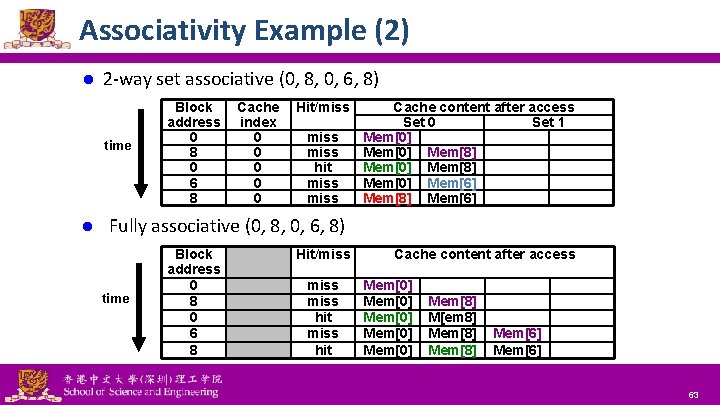

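Associativity Example (2)

- 2-way set associative (0, 8, 0, 6, 8), LRU replacement

| Block address | Cache index | Hit/miss | Set 0 content after access | Set 1 content after access |
|---|---|---|---|---|
| 0 | 0 | miss | Mem[0] | |
| 8 | 0 | miss | Mem[0], Mem[8] | |
| 0 | 0 | hit | Mem[0], Mem[8] | |
| 6 | 0 | miss | Mem[0], Mem[6] | |
| 8 | 0 | miss | Mem[8], Mem[6] | |

- Fully associative (0, 8, 0, 6, 8)

| Block address | Hit/miss | Cache content after access |
|---|---|---|
| 0 | miss | Mem[0] |
| 8 | miss | Mem[0], Mem[8] |
| 0 | hit | Mem[0], Mem[8] |
| 6 | miss | Mem[0], Mem[8], Mem[6] |
| 8 | hit | Mem[0], Mem[8], Mem[6] |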
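The three configurations in Associativity Examples (1) and (2) are the same structure with different numbers of ways. A minimal sketch, assuming LRU replacement within each set and storing whole block addresses in place of tags; `simulate_set_associative` is a hypothetical helper. With `ways=1` it behaves as a direct-mapped cache, and with `ways=num_blocks` as a fully associative one.

```python
def simulate_set_associative(block_addresses, num_blocks=4, ways=1):
    """Replay block addresses through an N-way set-associative cache with LRU."""
    num_sets = num_blocks // ways
    sets = [[] for _ in range(num_sets)]   # each list is ordered LRU ... MRU
    results = []
    for block in block_addresses:
        s = sets[block % num_sets]          # (block number) modulo (#sets)
        if block in s:                      # stands in for comparing tags in the set
            results.append("hit")
            s.remove(block)
        else:
            results.append("miss")
            if len(s) == ways:
                s.pop(0)                    # evict the least recently used block
        s.append(block)                     # accessed block becomes most recently used
    return results


seq = [0, 8, 0, 6, 8]
for ways, name in [(1, "direct mapped"), (2, "2-way"), (4, "fully associative")]:
    r = simulate_set_associative(seq, num_blocks=4, ways=ways)
    print(name, r, "misses =", r.count("miss"))
```

This reproduces 5, 4, and 3 misses for direct mapped, 2-way, and fully associative, matching the tables.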

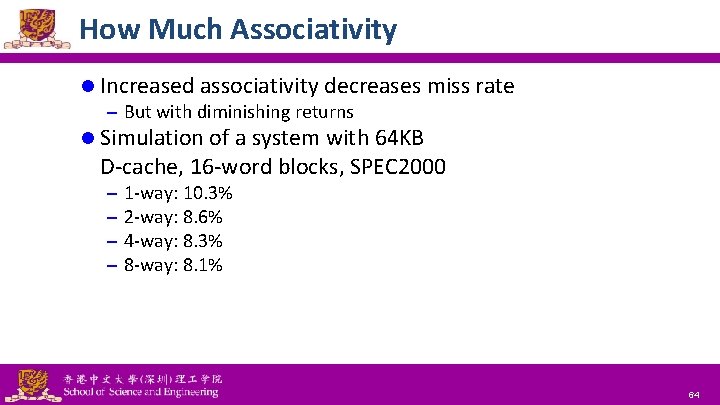

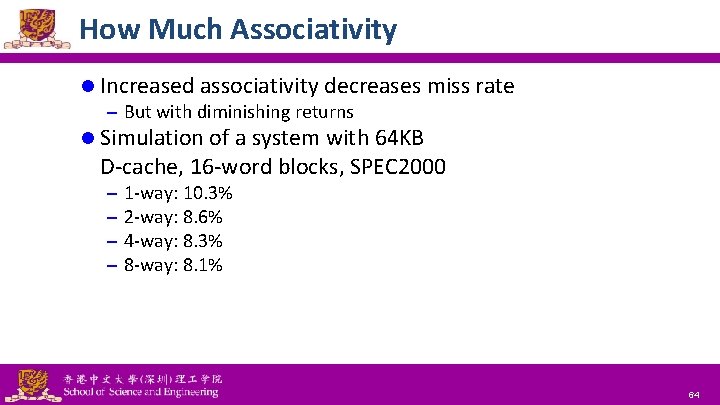

How Much Associativity?

- Increased associativity decreases miss rate
  - But with diminishing returns
- Simulation of a system with a 64 KB D-cache, 16-word blocks, SPEC2000
  - 1-way: 10.3%
  - 2-way: 8.6%
  - 4-way: 8.3%
  - 8-way: 8.1%

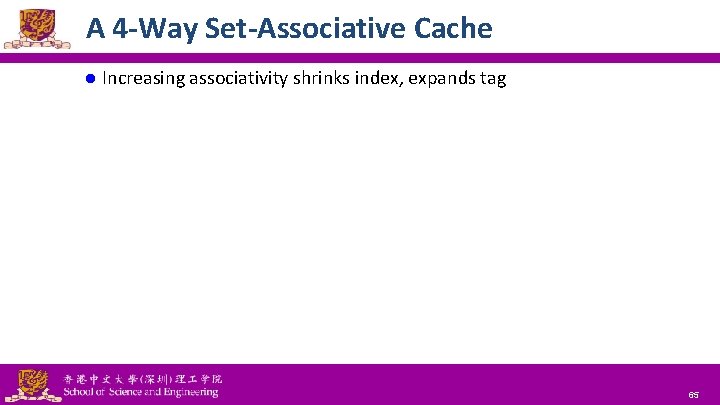

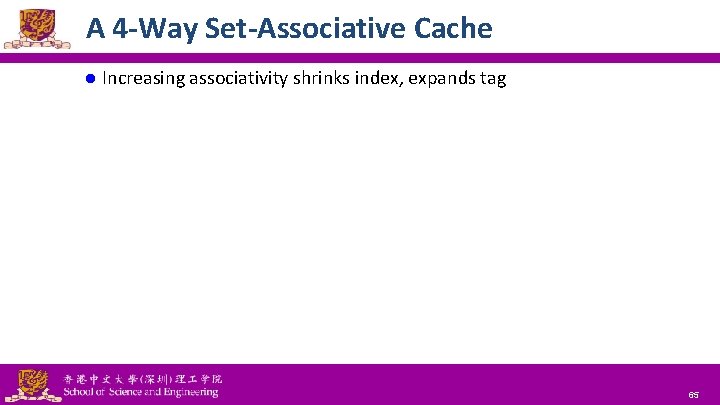

A 4-Way Set-Associative Cache

- Increasing associativity shrinks the index and expands the tag
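The trade-off between index and tag bits follows from the number of sets. A minimal sketch for a hypothetical 4 KB cache with 16-byte blocks and 32-bit byte addresses (these sizes are illustrative, not from the slide); `field_widths` is a hypothetical helper and assumes power-of-two sizes.

```python
def field_widths(cache_bytes, block_bytes, ways, addr_bits=32):
    """Return (tag, index, offset) widths in bits; sizes must be powers of two."""
    num_sets = cache_bytes // (block_bytes * ways)
    offset_bits = block_bytes.bit_length() - 1
    index_bits = num_sets.bit_length() - 1
    tag_bits = addr_bits - index_bits - offset_bits
    return tag_bits, index_bits, offset_bits


# Hypothetical 4 KB cache with 16-byte blocks (256 blocks in total)
for ways in (1, 2, 4, 8, 256):
    print(ways, field_widths(4096, 16, ways))
```

Each doubling of associativity halves the number of sets, moving one bit from the index to the tag; at 256 ways (fully associative) the index disappears and the tag is 28 bits.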

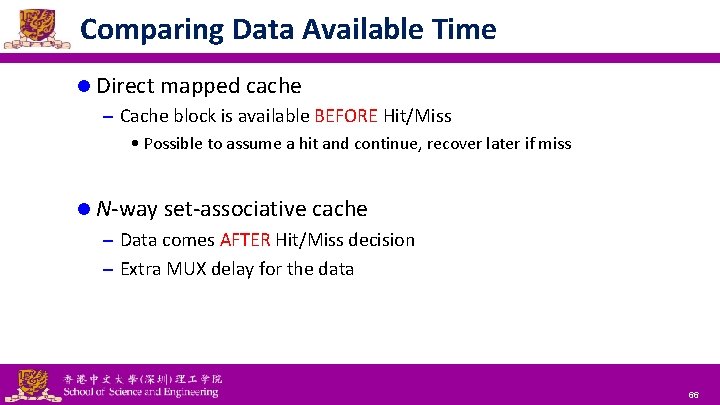

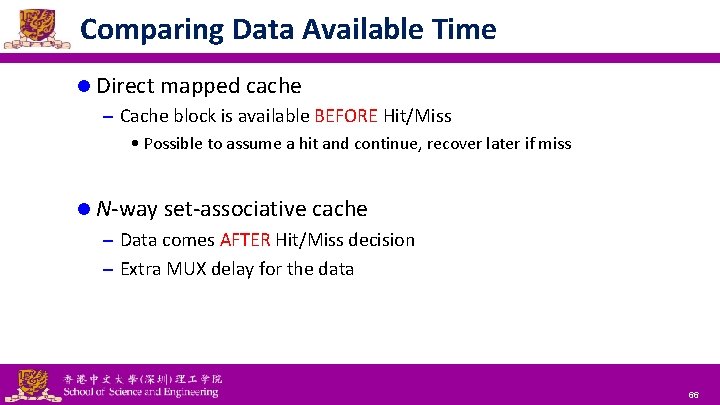

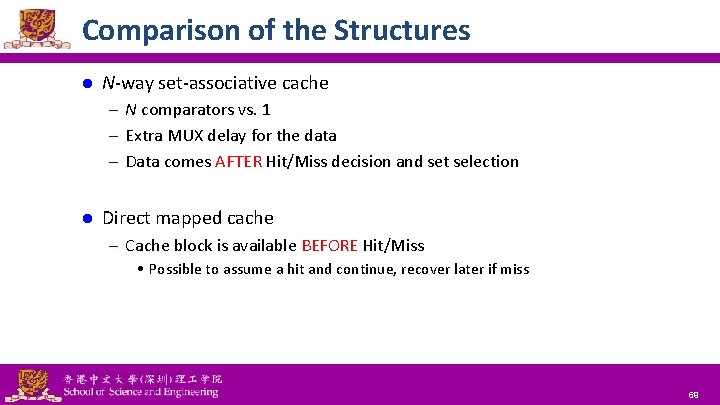

Comparing Data Available Time

- Direct mapped cache
  - Cache block is available BEFORE the hit/miss decision
    - Possible to assume a hit and continue, recovering later on a miss
- N-way set-associative cache
  - Data comes AFTER the hit/miss decision
  - Extra MUX delay for the data

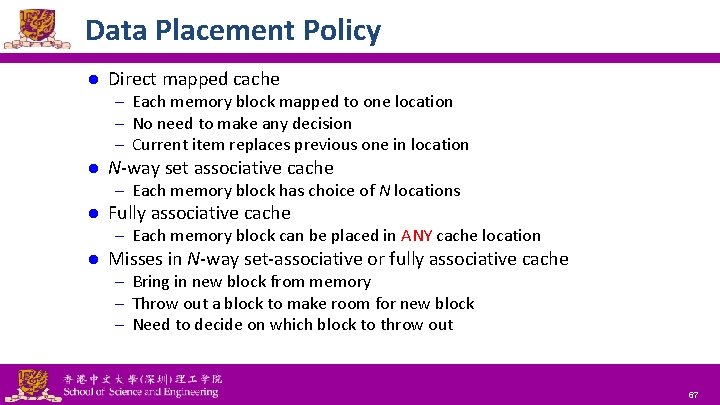

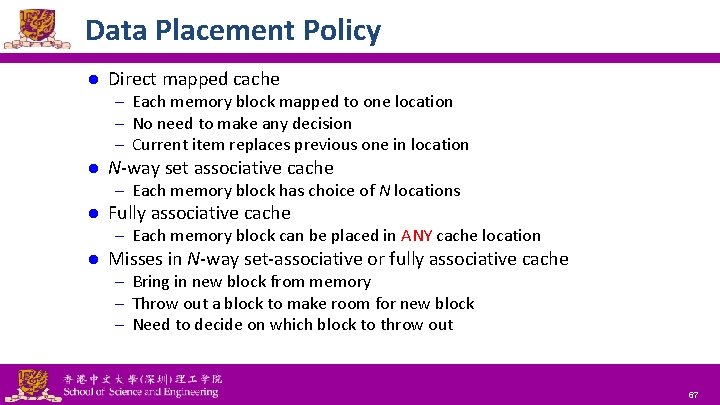

Data Placement Policy

- Direct mapped cache
  - Each memory block is mapped to one location
  - No need to make any decision
  - Current item replaces the previous one in that location
- N-way set-associative cache
  - Each memory block has a choice of N locations
- Fully associative cache
  - Each memory block can be placed in ANY cache location
- Misses in an N-way set-associative or fully associative cache
  - Bring in the new block from memory
  - Throw out a block to make room for the new block
  - Need to decide which block to throw out

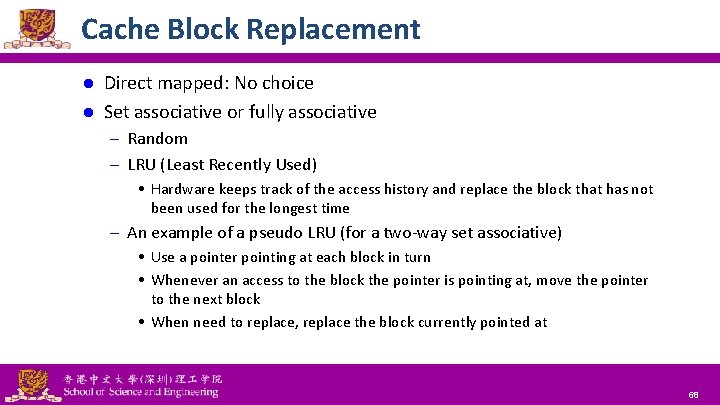

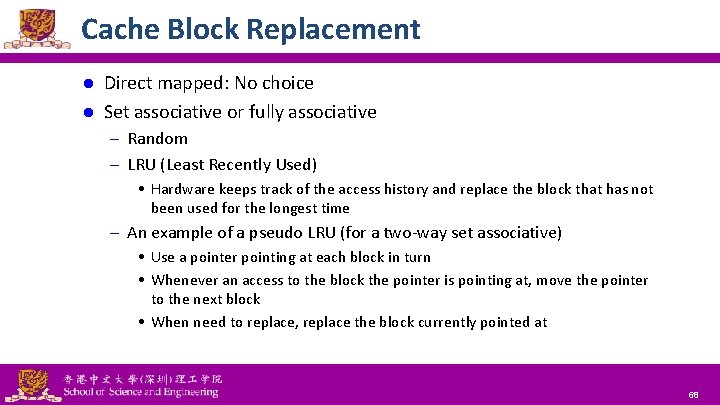

Cache Block Replacement

- Direct mapped: no choice
- Set associative or fully associative
  - Random
  - LRU (Least Recently Used)
    - Hardware keeps track of the access history and replaces the block that has not been used for the longest time
  - An example of pseudo-LRU (for a two-way set-associative cache)
    - Use a pointer that points at each block in turn
    - Whenever the block the pointer is pointing at is accessed, move the pointer to the next block
    - When a replacement is needed, replace the block currently pointed at
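The pointer scheme needs only one small counter per set. A minimal sketch of it for a single set; the class `PointerReplacement` and its methods are hypothetical names. For two ways, once both ways have been accessed, the pointer always names the least recently used way; with more ways it only approximates LRU.

```python
class PointerReplacement:
    """Pointer-based replacement state for one set (the slide's pseudo-LRU)."""

    def __init__(self, ways=2):
        self.ways = ways
        self.pointer = 0            # way that will be replaced next

    def access(self, way):
        # Only an access to the way under the pointer advances it.
        if way == self.pointer:
            self.pointer = (self.pointer + 1) % self.ways

    def victim(self):
        return self.pointer


state = PointerReplacement(ways=2)
state.access(0)          # pointer was on way 0, so it moves to way 1
state.access(0)          # way 1 is not touched: pointer stays on way 1
print(state.victim())    # 1, the least recently used way
state.access(1)          # pointer moves back to way 0
print(state.victim())    # 0
```

After a replacement, the cache controller would fill the victim way and call `access` on it, which moves the pointer past the newly filled block.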

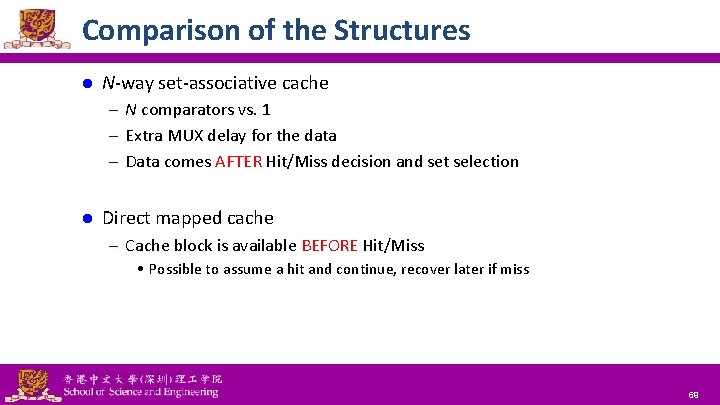

Comparison of the Structures

- N-way set-associative cache
  - N comparators vs. 1
  - Extra MUX delay for the data
  - Data comes AFTER the hit/miss decision and set selection
- Direct mapped cache
  - Cache block is available BEFORE the hit/miss decision
    - Possible to assume a hit and continue, recovering later on a miss


Multilevel Caches

- Primary cache attached to CPU
  - Small, but fast
- Level-2 cache services misses from primary cache
  - Larger, slower, but still faster than main memory
- Main memory services L-2 cache misses
- Some high-end systems include an L-3 cache

Multilevel Cache Example (1)

- Given
  - CPU base CPI = 1, clock rate = 4 GHz
  - Miss rate/instruction = 2%
  - Main memory access time = 100 ns
- With just a primary cache
  - Miss penalty = 100 ns / 0.25 ns = 400 cycles
  - Effective CPI = 1 + 0.02 × 400 = 9

Multilevel Cache Example (2)

- Now add an L-2 cache
  - L-1 miss rate to L-2 = 2% (with one cache: to main memory)
  - L-2 access time = 5 ns (to main memory: 100 ns)
  - Miss rate to main memory (misses in both L-1 and L-2, per instruction) = 0.5%
- Primary miss with L-2 hit (2%)
  - Penalty to access L-2 = 5 ns / 0.25 ns = 20 cycles
- Primary miss with L-2 miss (0.5%)
  - Penalty to access memory = 400 cycles
- CPI = 1 + 0.02 × 20 + 0.005 × 400 = 3.4
- Performance ratio = 9/3.4 = 2.6
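Both CPIs follow the same pattern: convert each access time to cycles, then weight each penalty by how often it is paid per instruction. A minimal sketch using the example's numbers as defaults; `multilevel_cpi` and its parameter names are hypothetical, and it assumes the 0.5% figure is the per-instruction rate of misses that reach main memory.

```python
def multilevel_cpi(clock_ghz=4.0, base_cpi=1.0, l1_miss_rate=0.02,
                   mem_ns=100.0, l2_ns=5.0, global_l2_miss_rate=0.005):
    """Return (CPI with L1 only, CPI with L1 + L2) under the example's model."""
    cycle_ns = 1.0 / clock_ghz                # 0.25 ns at 4 GHz
    mem_penalty = mem_ns / cycle_ns           # 400 cycles
    l2_penalty = l2_ns / cycle_ns             # 20 cycles
    cpi_l1_only = base_cpi + l1_miss_rate * mem_penalty
    cpi_with_l2 = (base_cpi
                   + l1_miss_rate * l2_penalty           # every L1 miss pays the L2 access
                   + global_l2_miss_rate * mem_penalty)  # L2 misses also pay main memory
    return cpi_l1_only, cpi_with_l2


one, two = multilevel_cpi()
print(f"{one:.1f} {two:.1f} {one / two:.1f}")   # 9.0 3.4 2.6
```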

Multilevel Cache Considerations

- Primary cache
  - Focus on minimal hit time
- L-2 cache
  - Focus on low miss rate to avoid main memory access
  - Hit time has less overall impact
- Results
  - L-1 cache usually smaller than a single-level cache
  - L-1 block size smaller than L-2 block size

Interactions with Advanced CPUs

- Out-of-order CPUs can execute instructions during a cache miss
  - Pending store stays in the load/store unit
  - Dependent instructions wait in reservation stations
    - Independent instructions continue
- Effect of a miss depends on program data flow
  - Much harder to analyze
  - Use system simulation

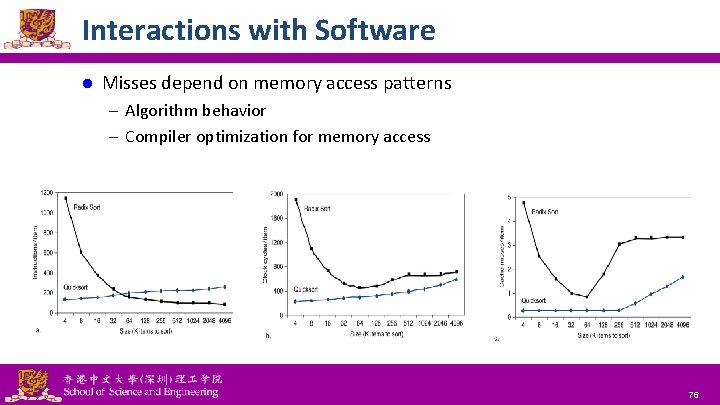

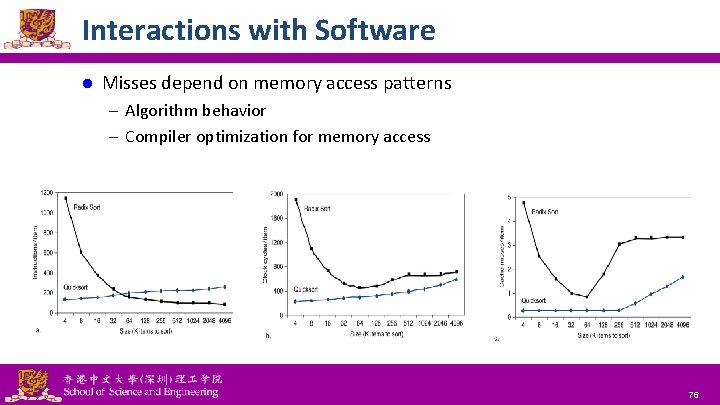

Interactions with Software

- Misses depend on memory access patterns
  - Algorithm behavior
  - Compiler optimization for memory access
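How much the access pattern matters can be seen by traversing the same array in two orders. A sketch under stated assumptions, none of which come from the slides: a 64 × 64 array of 4-byte elements stored row-major at address 0, and a hypothetical 1 KB direct-mapped cache with 64 blocks of 16 bytes. The function `count_misses` is hypothetical.

```python
def count_misses(order, n=64, elem_bytes=4, num_blocks=64, block_bytes=16):
    """Count misses when an n x n row-major array is walked row by row
    ("row") or column by column ("column") through a direct-mapped cache."""
    tags = [None] * num_blocks
    misses = 0
    for outer in range(n):
        for inner in range(n):
            i, j = (outer, inner) if order == "row" else (inner, outer)
            addr = (i * n + j) * elem_bytes        # row-major layout at address 0
            block = addr // block_bytes
            index, tag = block % num_blocks, block // num_blocks
            if tags[index] != tag:
                misses += 1
                tags[index] = tag                  # fill the entry on a miss
    return misses


print(count_misses("row"), count_misses("column"))   # 1024 4096
```

Row order misses once per 16-byte block (4 elements) and hits on the rest. In column order, consecutive accesses are 256 bytes apart, so they cycle through only 4 cache entries and every access misses; interchanging the loops is one of the compiler optimizations the slide refers to.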