CS 603 Process Synchronization
February 11, 2002

Synchronization: Basics
• Problem: Shared Resources
  – Generally data
  – But could be others
• Approaches:
  – Model as sequential process
  – Model as parallel process
• Models assume global state!
[Figure: processes P1, P2, P3]

Global State
• Plausible in sequential system
  – But expensive
  – Solution: Send only needed portions of state
• What about Parallel model?
  – Distributed Virtual Memory!
  – With all the advantages and problems
  – What about non-memory resources?
• Question: What is global state?

Alternative: Mutual Exclusion
• Control access to shared resources
  – Only one process allowed to use resource at a time
  – Doesn’t require global state
• Sequential simulation can be viewed as mutual exclusion on entire state
  – But we can do better
  – Control only when resource in contention

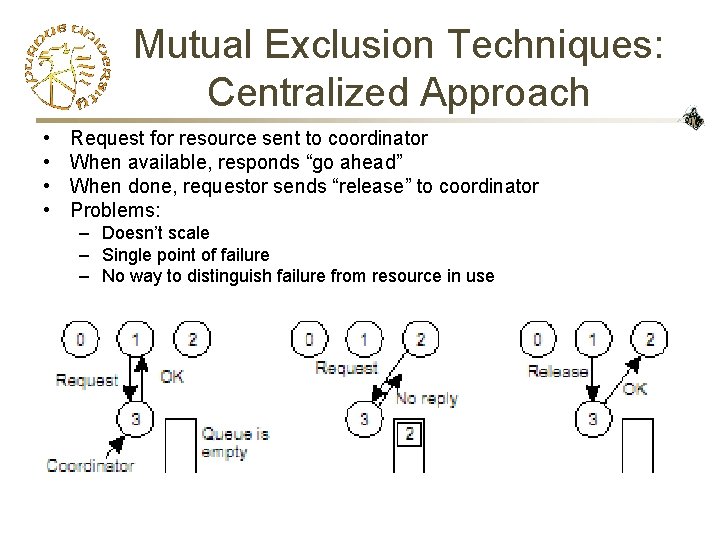

Mutual Exclusion Techniques: Centralized Approach
• Request for resource sent to coordinator
• When resource is available, coordinator responds “go ahead”
• When done, requestor sends “release” to coordinator
• Problems:
  – Doesn’t scale
  – Single point of failure
  – No way to distinguish failure from resource in use
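The request / “go ahead” / “release” exchange can be sketched as a small in-memory simulation. This is only an illustration under stated assumptions: the `Coordinator` class, its method names, and the FIFO queue for waiting requests are hypothetical and not taken from the slides, and method calls stand in for messages.

```python
from collections import deque


class Coordinator:
    """Grants one resource to one requester at a time (hypothetical sketch)."""

    def __init__(self):
        self.holder = None        # process currently using the resource
        self.waiting = deque()    # requests queued while the resource is busy

    def request(self, pid):
        """Handle a request message; return True if "go ahead" is sent now."""
        if self.holder is None:
            self.holder = pid
            return True
        self.waiting.append(pid)  # no reply yet: the requester keeps waiting
        return False

    def release(self, pid):
        """Handle a release message; return the next process granted, if any."""
        if pid != self.holder:
            raise ValueError(f"{pid} does not hold the resource")
        self.holder = self.waiting.popleft() if self.waiting else None
        return self.holder


coord = Coordinator()
granted_p1 = coord.request("P1")   # resource free: "go ahead" sent immediately
granted_p2 = coord.request("P2")   # resource busy: P2 is queued, no reply
next_holder = coord.release("P1")  # P1 is done: coordinator grants to P2
```

Note that a queued requester simply receives no reply, which is why it cannot tell a busy resource from a crashed coordinator.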

Evaluating Mutual Exclusion Techniques
• Does it guarantee mutual exclusion?
• Does it prevent starvation?
  – If a process requests a resource, it is guaranteed to eventually get it
  – Assumes resource use bounded
• Is it fair?
  – What do we mean by fair?
• Does it scale?
• Does it handle failures?

Mutual Exclusion: Token Passing
• Token associated with resource
  – Initially held by “first” process
  – When resource not in use, token passed to next process
• Problems:
  – Potential delay
    • Resource need and path of token unrelated
  – Communication cost
  – Failure causing “loss of token”
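A minimal sketch of token passing on a ring, to make the delay problem concrete. The function name `token_ring`, its parameters, and the `max_hops` safety limit are assumptions introduced for illustration; processes are numbered 0 to n-1 and the token starts at process 0.

```python
def token_ring(n, wants, start=0, max_hops=100):
    """Pass a token around a ring of n processes.

    wants: set of process indices that need the resource.
    Returns (order in which processes used the resource, number of token hops).
    """
    if any(p not in range(n) for p in wants):
        raise ValueError("wants must contain indices in range(n)")
    pending = set(wants)
    order = []
    holder = start
    hops = 0
    while pending and hops < max_hops:
        if holder in pending:
            order.append(holder)       # holder uses the resource, then is done
            pending.discard(holder)
        if pending:
            holder = (holder + 1) % n  # pass the token to the next process
            hops += 1
    return order, hops


# Processes 3 and 1 both need the resource; the token starts at process 0.
order, hops = token_ring(n=5, wants={3, 1})
```

Here process 1 is served before process 3 purely because of its position on the ring, regardless of which one asked first.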

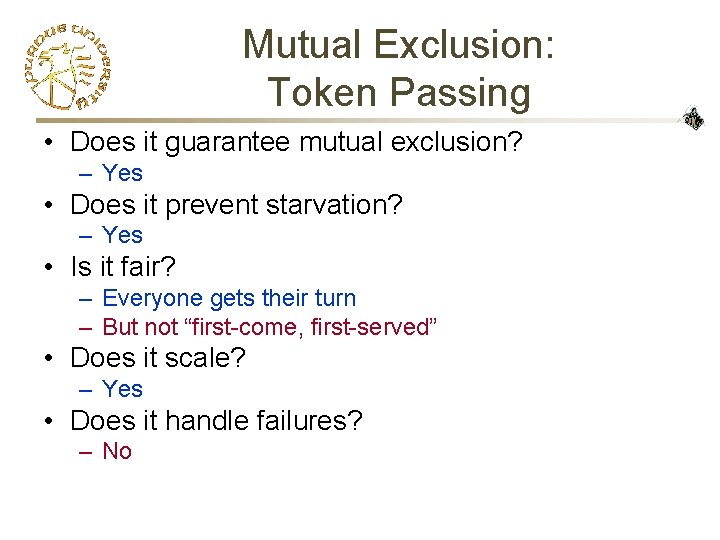

Mutual Exclusion: Token Passing
• Does it guarantee mutual exclusion?
  – Yes
• Does it prevent starvation?
  – Yes
• Is it fair?
  – Everyone gets their turn
  – But not “first-come, first-served”
• Does it scale?
  – Yes
• Does it handle failures?
  – No

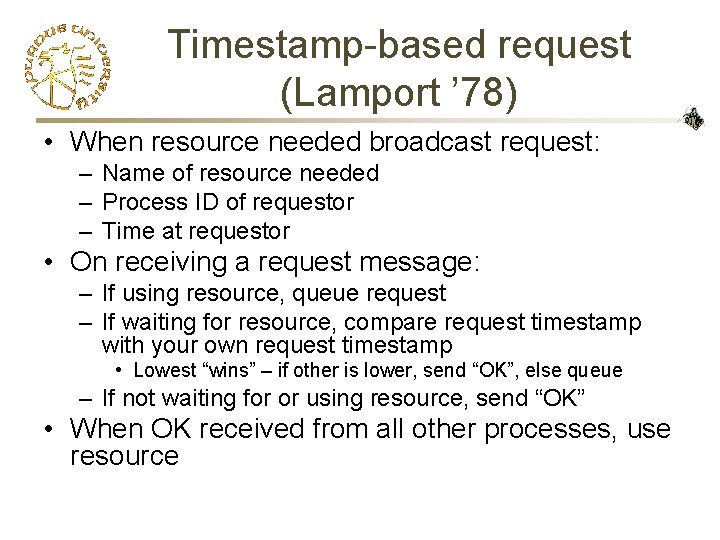

Timestamp-based request (Lamport ’78)
• When resource needed, broadcast request:
  – Name of resource needed
  – Process ID of requestor
  – Time at requestor
• On receiving a request message:
  – If using resource, queue request
  – If waiting for resource, compare request timestamp with your own request timestamp
    • Lowest “wins”: if other is lower, send “OK”, else queue
  – If not waiting for or using resource, send “OK”
• When OK received from all other processes, use resource
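The receive rules above can be sketched as a small simulation in which method calls stand in for messages. Several things here are assumptions rather than content from the slides: the `Process` class and its method names, the single unnamed resource, and breaking timestamp ties by process ID. This queued-reply formulation is often presented under the name Ricart-Agrawala.

```python
class Process:
    def __init__(self, pid):
        self.pid = pid
        self.state = "idle"      # "idle", "waiting", or "using"
        self.my_request = None   # (timestamp, pid) of own outstanding request
        self.deferred = []       # requesters whose "OK" is queued
        self.oks = set()         # processes that have replied "OK"

    def start_request(self, timestamp):
        self.state = "waiting"
        self.my_request = (timestamp, self.pid)
        self.oks = set()
        return self.my_request

    def on_request(self, request):
        """Return True to send "OK" now, False if the request was queued."""
        sender = request[1]
        if self.state == "using":
            self.deferred.append(sender)
            return False
        # Tuple comparison: lower timestamp wins, ties go to the lower pid
        # (the tie-break is an assumption of this sketch).
        if self.state == "waiting" and self.my_request < request:
            self.deferred.append(sender)
            return False
        return True

    def on_ok(self, sender, n):
        self.oks.add(sender)
        if self.state == "waiting" and len(self.oks) == n - 1:
            self.state = "using"  # OK received from all other processes

    def release(self):
        """Stop using the resource; return the requesters now owed an "OK"."""
        self.state = "idle"
        self.my_request = None
        owed, self.deferred = self.deferred, []
        return owed


procs = [Process(i) for i in range(3)]
n = len(procs)


def broadcast(sender, request):
    """Deliver a request to every other process and collect immediate OKs."""
    for p in procs:
        if p.pid != sender.pid and p.on_request(request):
            sender.on_ok(p.pid, n)


# P0 and P2 both want the resource; P0's timestamp is lower, so P0 wins.
r0 = procs[0].start_request(timestamp=8)
r2 = procs[2].start_request(timestamp=12)
broadcast(procs[0], r0)
broadcast(procs[2], r2)
states_before = [p.state for p in procs]

# P0 finishes and sends the queued "OK" to P2, which can then proceed.
for pid in procs[0].release():
    procs[pid].on_ok(0, n)
states_after = [p.state for p in procs]
```

Each request costs n-1 request messages plus n-1 “OK” replies, and a single unresponsive process blocks every requester.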

Timestamp-based request (Lamport ’78)
• Does it guarantee mutual exclusion?
  – Yes
• Does it prevent starvation?
  – Yes
• Is it fair?
  – First-come, first-served
  – Where “first” defined by clock synchronization
• Does it scale?
  – Requires all processes to respond to any request
• Does it handle failures?
  – No single point of failure
  – Instead, n points of failure!

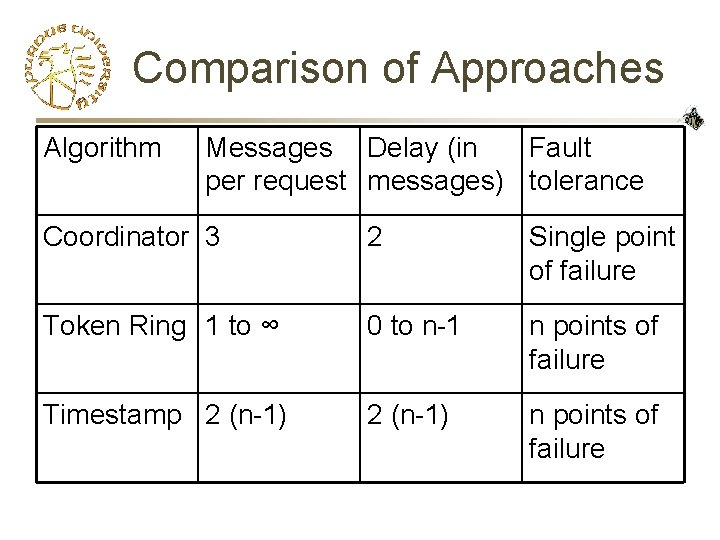

Comparison of Approaches

| Algorithm | Messages per request | Delay (in messages) | Fault tolerance |
|---|---|---|---|
| Coordinator | 3 | 2 | Single point of failure |
| Token Ring | 1 to ∞ | 0 to n-1 | n points of failure |
| Timestamp | 2(n-1) | | n points of failure |
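To see how the message counts in the comparison above grow with the number of processes, a short calculation can help. The function name `messages_per_request` is hypothetical; the token ring entry shows only the lower bound, since the upper bound is unbounded when no process wants the resource.

```python
def messages_per_request(n):
    """Messages needed per request for n processes, per the comparison."""
    return {
        "Coordinator": 3,             # request, "go ahead", release
        "Token Ring (minimum)": 1,    # lower bound only; upper bound is unbounded
        "Timestamp": 2 * (n - 1),     # n-1 requests plus n-1 "OK" replies
    }


timestamp_counts = {n: messages_per_request(n)["Timestamp"] for n in (3, 10, 100)}
```

The coordinator's cost stays constant while the timestamp approach grows linearly with n.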

What’s next?
• Fault tolerant solutions
  – Colored Ticket Algorithm
• Multiple resources
  – Dining philosophers problem
