CS 594 Empirical Methods in HCC Experimental Research

- Slides: 27

CS 594: Empirical Methods in HCC. Experimental Research in HCI (Part 1)

Dr. Debaleena Chattopadhyay, Department of Computer Science, debchatt@uic.edu, debaleena.com, hci.cs.uic.edu

Agenda

- Overview
- Sampling
- Significance Testing
- Study Design
- Parametric Statistics
  - Correlation
  - Regression
  - T-test
  - ANOVA
  - Multilevel Linear Modeling

Overview

- Experimental research is used in HCI to answer questions of causality.
- The goal is to show that the manipulation of one variable of interest has a direct causal influence on another variable of interest.
- Experimental research in HCI builds upon the traditions of psychology, sociology, cognitive science, information science, and the social sciences more broadly.
- Experimental research in HCI can be theoretically driven or engineering driven.

Advantages and Limitations of Experimental Research

- Advantages
  - Internal validity
  - Demonstrates a strong causal connection
  - Provides a systematic process to test theoretical propositions and advance theory
- Disadvantages
  - Requires well-defined, testable hypotheses and a small set of well-controlled variables.
  - Risk of low external and ecological validity
  - A poorly executed experiment may have the veneer of “scientific validity” because of the methodological rigor, but ultimately provides little more than well-measured noise.

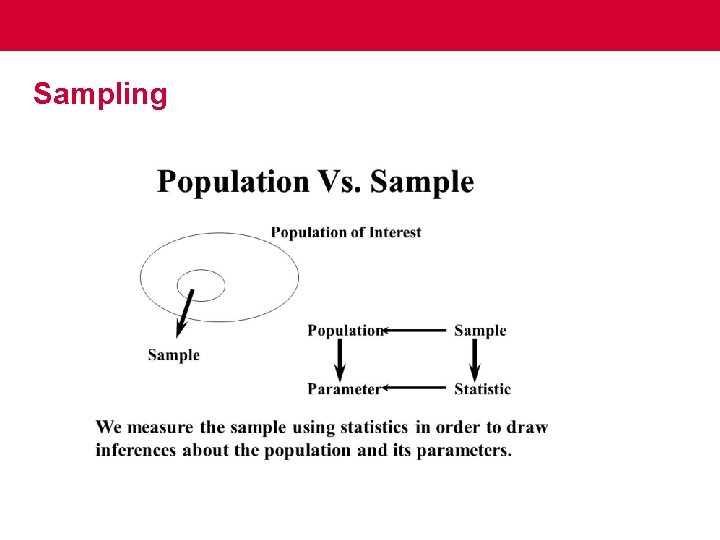

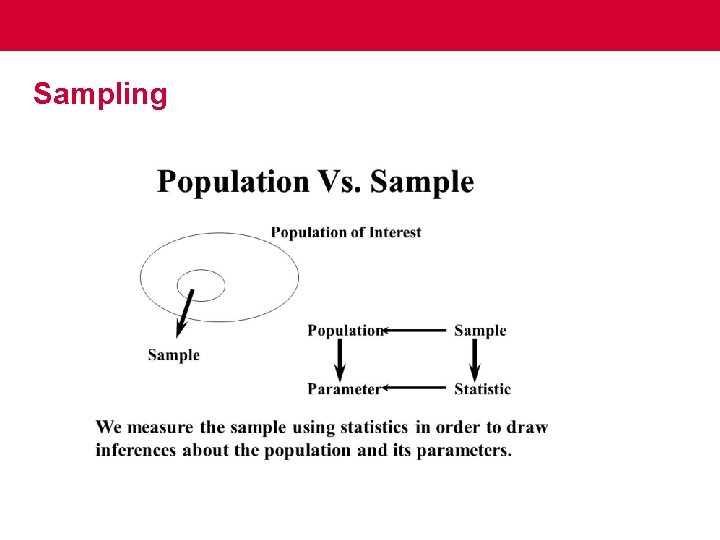

Sampling

Sampling (cont…)

- Non-probability sampling
  - Snowball sampling
  - Quota sampling
- Probability sampling
  - Random selection
  - To err is human, to randomly err is statistically divine.
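Random selection can be illustrated with a short sketch. It assumes a hypothetical sampling frame of 200 participant IDs (the `frame` list and the helper name `simple_random_sample` are made up for illustration) and draws a simple random sample in which every member has the same chance of being chosen.

```python
import random


def simple_random_sample(population, k, seed=None):
    """Draw k distinct members; each member is equally likely to be selected."""
    rng = random.Random(seed)  # a seed makes the draw reproducible
    return rng.sample(list(population), k)


# Hypothetical sampling frame: 200 participant IDs (not from the slides).
frame = [f"P{i:03d}" for i in range(1, 201)]
sample = simple_random_sample(frame, k=20, seed=42)
print(sample)
```

Snowball and quota sampling have no equivalent one-liner, because who ends up in the sample depends on recruitment decisions rather than on a random draw.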

Hypothesis Formulation

- Precise
- Meaningful
- Testable
- Falsifiable

Study Design

- Independent and dependent variables
- Operational definition
- Manipulation check and check for operational confounds
- Reliability and validity of the dependent variable
  - Rules for quantifying
  - Scope and boundaries of what is to be measured
  - Face validity, concurrent validity, predictive validity
  - Use standardized measures whenever you can
  - Consider sensitivity and practicality

Significance Testing

- The first step in null hypothesis significance testing is to formulate the original research hypothesis as a null hypothesis and an alternative hypothesis.
- The null hypothesis (H0) is set up as a falsifiable statement that predicts no difference between experimental conditions.
- The alternative hypothesis (often written as HA or H1) captures departures from the null hypothesis.

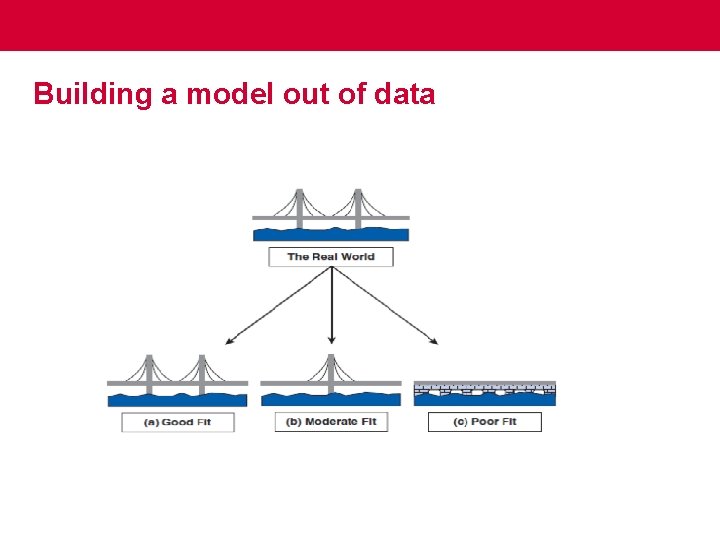

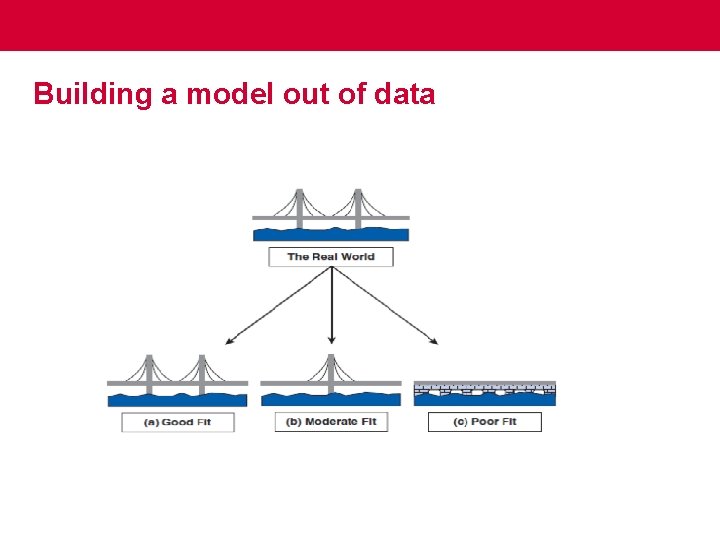

Building a model out of data

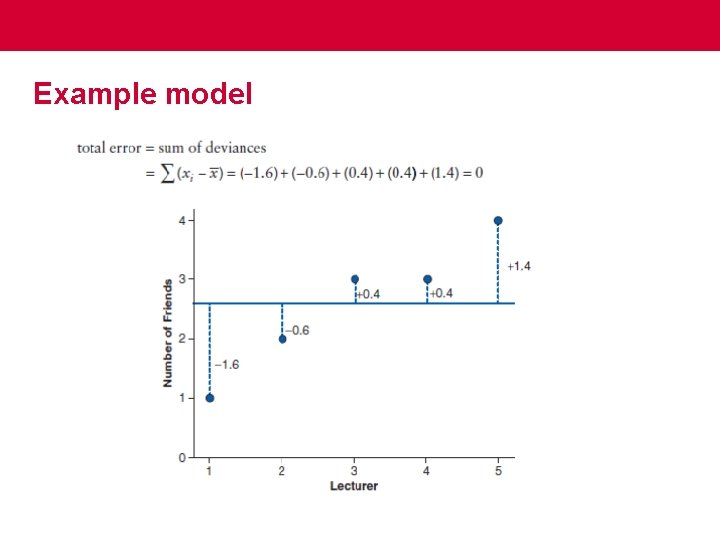

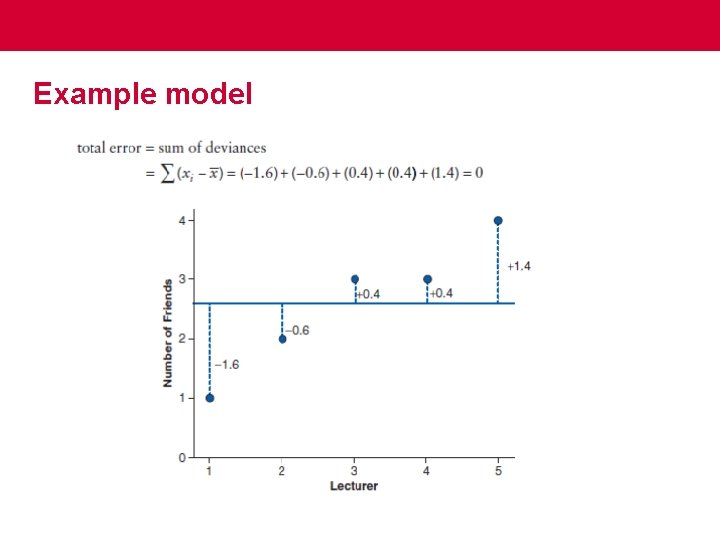

Example model

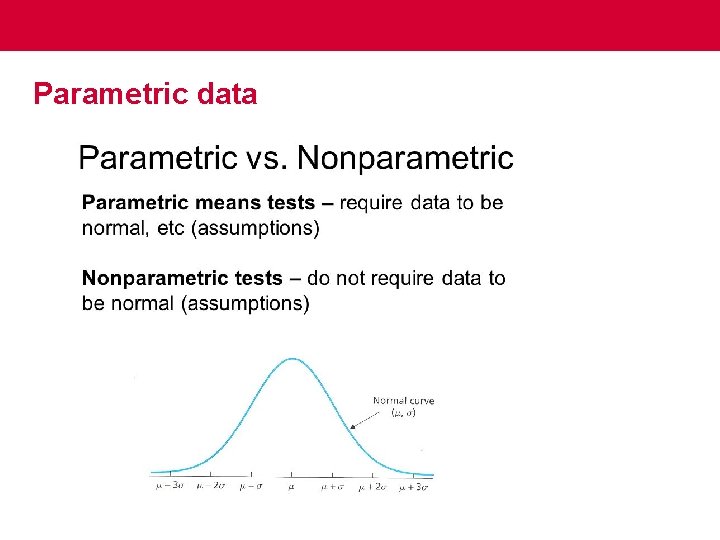

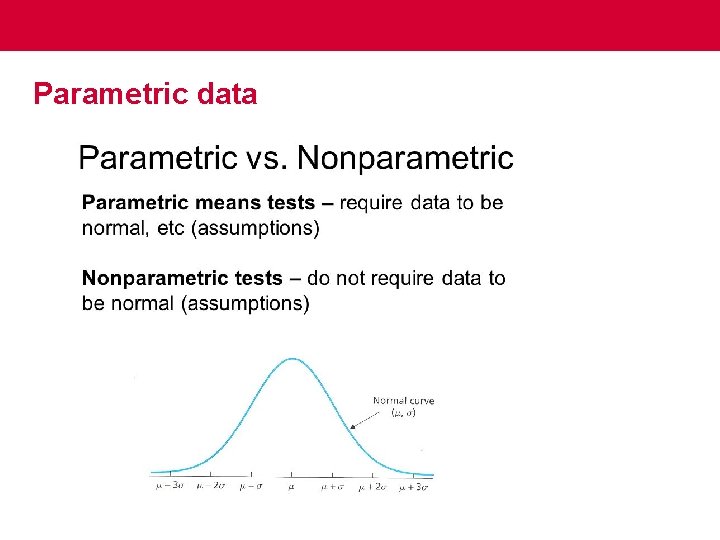

Parametric data

Skewness and Kurtosis

- Positively skewed
- Negatively skewed
- Platykurtic
- Leptokurtic
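The four terms can be tied to numbers with a minimal sketch. It uses the moment-based (population) formulas for skewness and excess kurtosis, which is one common convention among several; the function names and the toy data are assumptions for illustration.

```python
from statistics import fmean


def skewness(xs):
    """Moment-based skewness: > 0 is positively skewed, < 0 negatively skewed."""
    m = fmean(xs)
    m2 = fmean([(x - m) ** 2 for x in xs])
    m3 = fmean([(x - m) ** 3 for x in xs])
    return m3 / m2 ** 1.5


def excess_kurtosis(xs):
    """Kurtosis minus 3: < 0 is platykurtic, > 0 is leptokurtic."""
    m = fmean(xs)
    m2 = fmean([(x - m) ** 2 for x in xs])
    m4 = fmean([(x - m) ** 4 for x in xs])
    return m4 / m2 ** 2 - 3.0


# Toy data sets, made up for illustration.
flat = [1, 2, 3, 4, 5]                         # symmetric and flat
long_right_tail = [1, 1, 1, 2, 10]             # one large value on the right
heavy_tails = [0, 0, 0, 0, 0, 0, 0, 0, -10, 10]

print(skewness(flat), excess_kurtosis(flat))
print(skewness(long_right_tail))
print(excess_kurtosis(heavy_tails))
```

Statistical packages often report bias-corrected versions of these statistics, so values from other tools may differ slightly on small samples.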

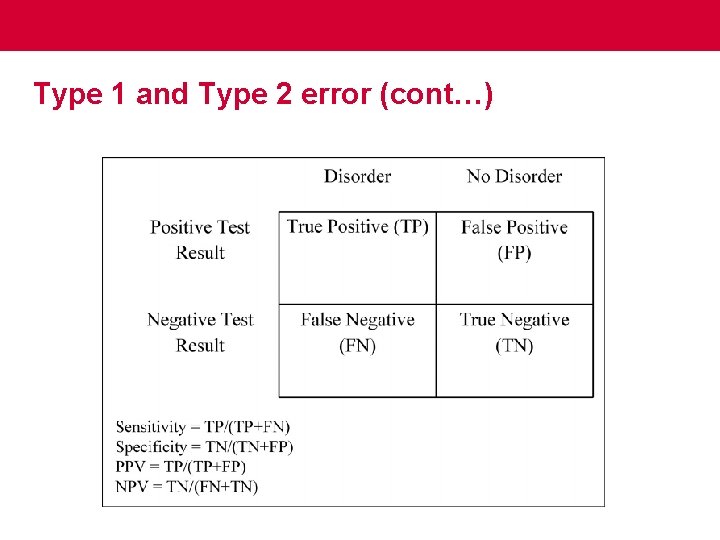

Significance Testing (cont…)

- Type I error rate = Pr(reject H0 | H0 true), also called the significance level or alpha (α).
- The p value is the probability of obtaining the observed data, or more extreme data, if the null hypothesis were true: Pr(data at least as extreme as observed | H0 true).
- p < .05 means that data this extreme would occur less than 5% of the time if H0 were true. Rejecting H0 only when p < .05 holds the Type I error rate at 5%.
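The definition of the p value can be made concrete with a permutation test, sketched below. This is an illustrative technique chosen because it needs no distributional assumptions, not a method named on the slides; the function name and the two groups of task times are hypothetical.

```python
import random
from statistics import fmean


def permutation_p_value(a, b, n_perm=10_000, seed=0):
    """Two-sided p value for a difference in means under H0 (labels exchangeable)."""
    rng = random.Random(seed)
    observed = abs(fmean(a) - fmean(b))
    pooled = list(a) + list(b)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # reassign group labels at random, as H0 allows
        diff = abs(fmean(pooled[:len(a)]) - fmean(pooled[len(a):]))
        if diff >= observed - 1e-12:  # small tolerance for float rounding
            extreme += 1
    # Count the observed labeling itself so the estimate is never exactly 0.
    return (extreme + 1) / (n_perm + 1)


# Hypothetical task completion times (seconds) for two interface conditions.
times_a = [12.1, 11.4, 13.0, 12.7, 11.9, 12.5]
times_b = [14.2, 13.8, 15.1, 14.6, 13.9, 14.9]
p = permutation_p_value(times_a, times_b)
print(f"p = {p:.4f}; reject H0 at alpha = .05: {p < 0.05}")
```

The returned value is the share of random relabelings that produce a difference at least as large as the observed one, which is the "observed or more extreme data, if H0 were true" reading of p.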

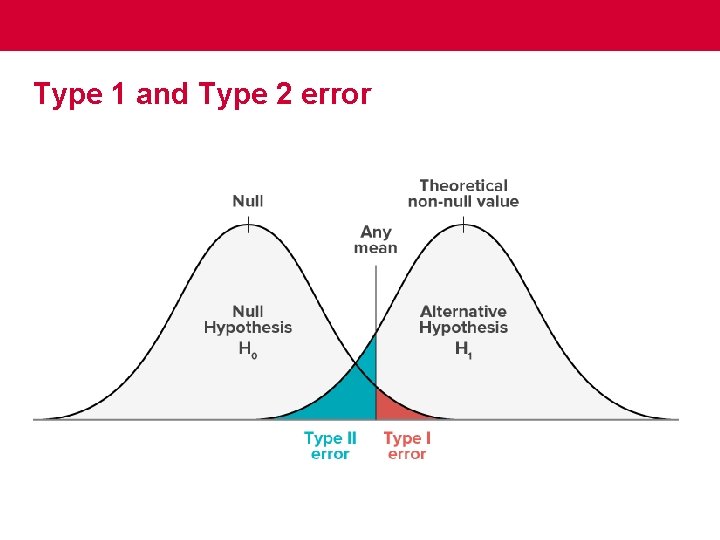

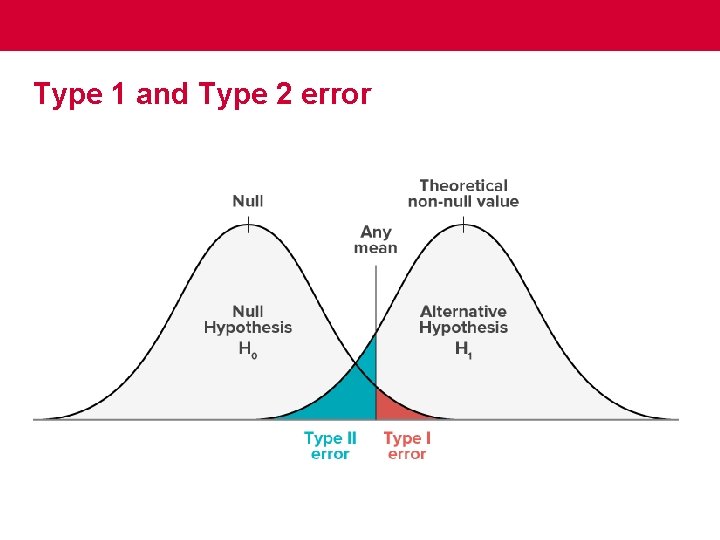

Type 1 and Type 2 error

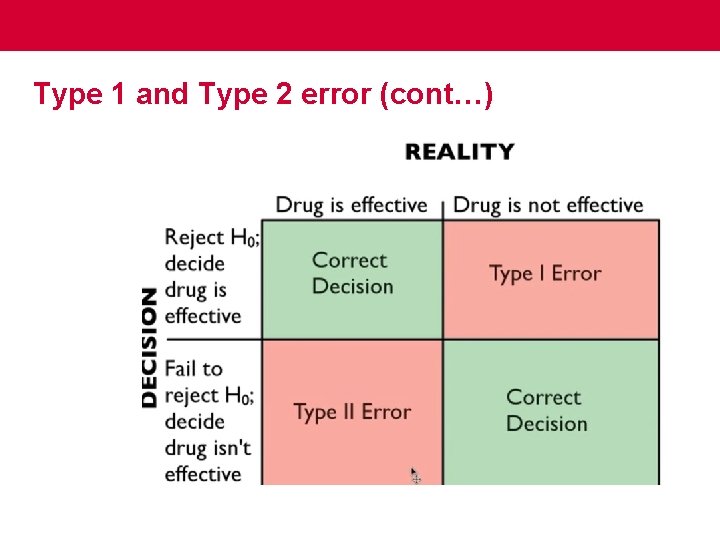

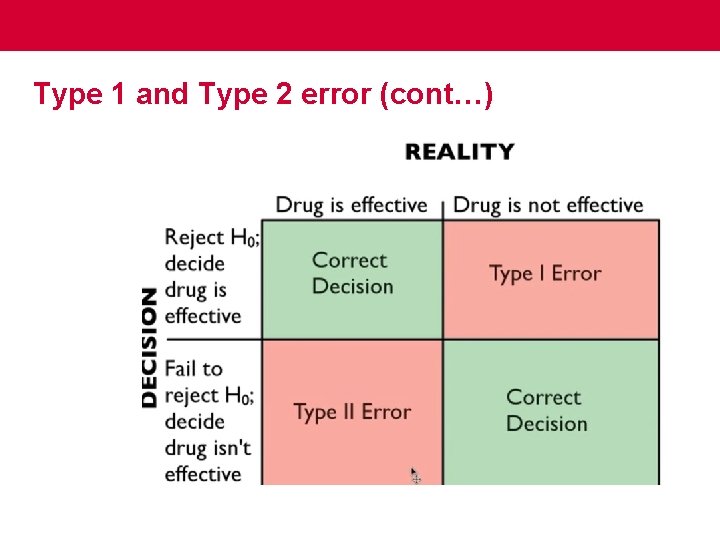

Type 1 and Type 2 error (cont…)

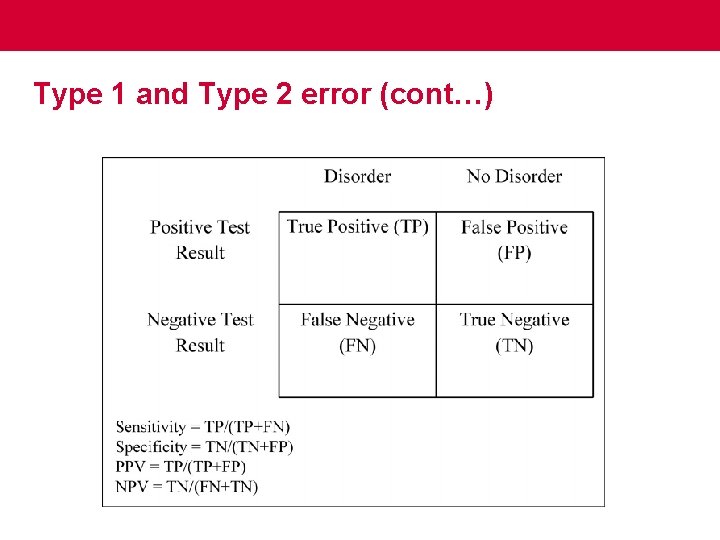

Type 1 and Type 2 error (cont…)

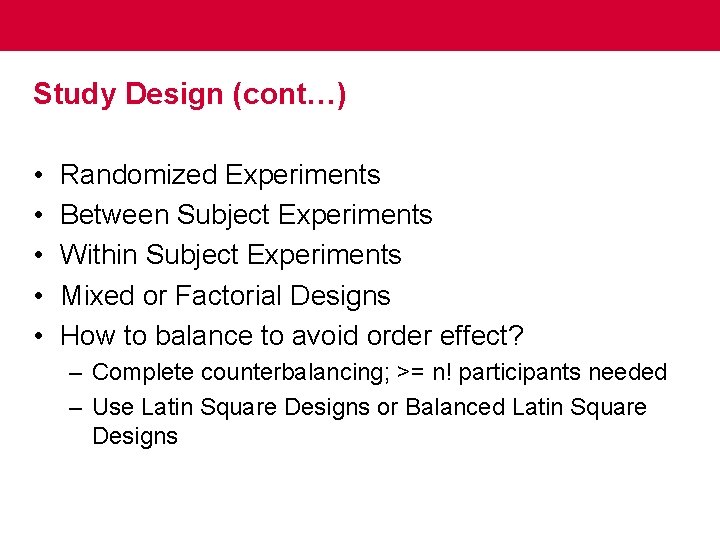

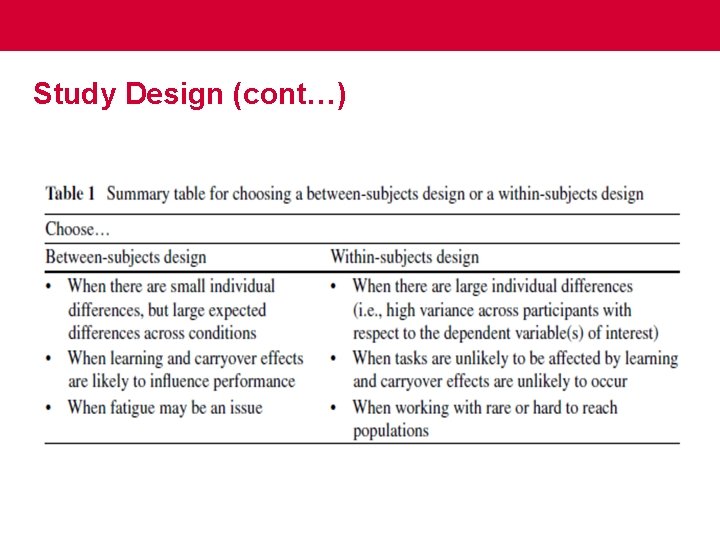

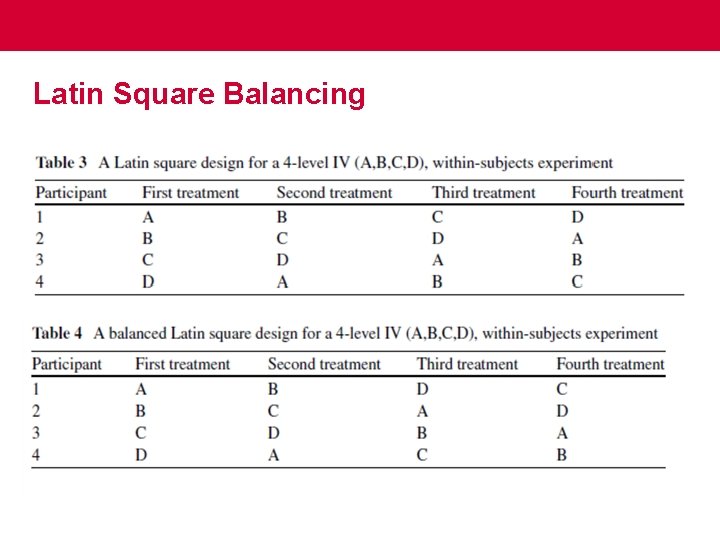

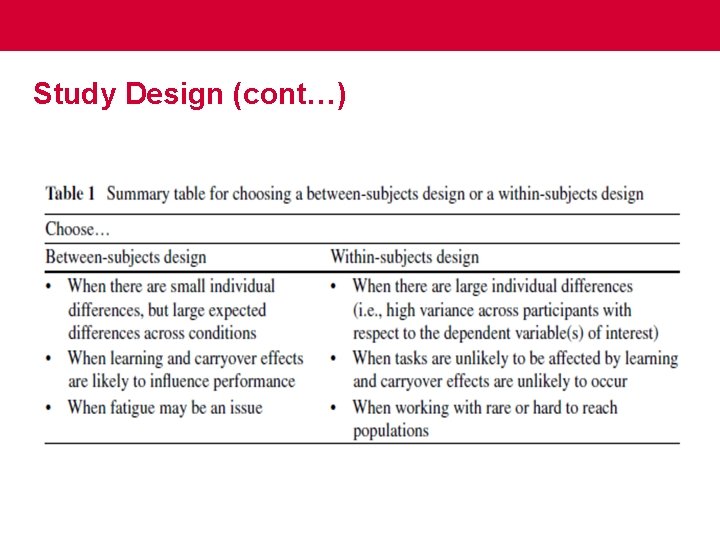

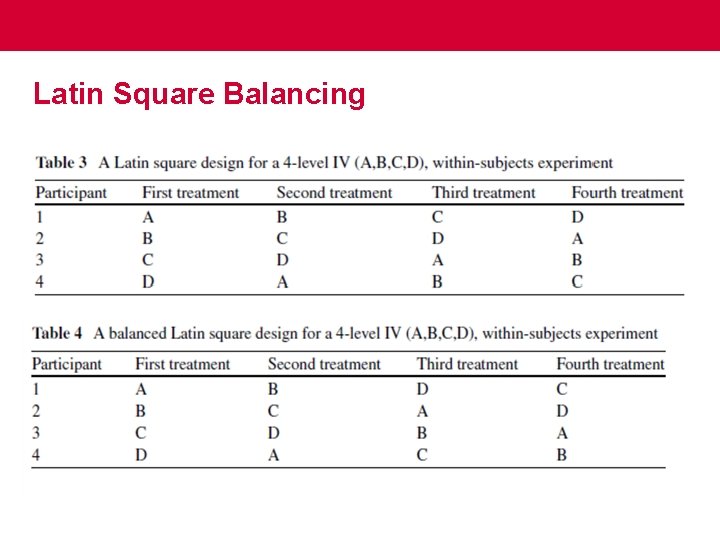

Study Design (cont…)

- Randomized experiments
- Between-subject experiments
- Within-subject experiments
- Mixed or factorial designs
- How to balance conditions to avoid order effects?
  - Complete counterbalancing: n conditions have n! possible orders, so at least n! participants are needed
  - Use Latin square designs or balanced Latin square designs
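A balanced Latin square can be generated with the common interleaving construction, sketched here under the assumption that conditions are indexed 0 to n-1. The function name and the four condition labels are hypothetical. For an odd number of conditions the sketch appends the reversed rows, a usual workaround that doubles the number of orders.

```python
from math import factorial


def balanced_latin_square(n):
    """Return condition orders (lists of 0..n-1), one per participant slot.

    Even n yields n orders. Odd n yields 2n orders (each row plus its
    reverse), because a single square cannot balance which condition
    immediately precedes which when n is odd.
    """
    # Base order 0, 1, n-1, 2, n-2, ... interleaves low and high indices.
    base = [0]
    lo, hi = 1, n - 1
    while lo <= hi:
        base.append(lo)
        lo += 1
        if lo <= hi:
            base.append(hi)
            hi -= 1
    # Every further row shifts each condition index by one (mod n).
    rows = [[(c + shift) % n for c in base] for shift in range(n)]
    if n % 2 == 1:
        rows += [list(reversed(r)) for r in rows]
    return rows


conditions = ["A", "B", "C", "D"]  # hypothetical interface conditions
for order in balanced_latin_square(len(conditions)):
    print(" ".join(conditions[i] for i in order))
print("complete counterbalancing needs", factorial(len(conditions)), "orders")
```

With four conditions this gives four orders instead of the 24 that complete counterbalancing requires, while every condition still appears once in each position and follows every other condition equally often.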

Study Design (cont…)

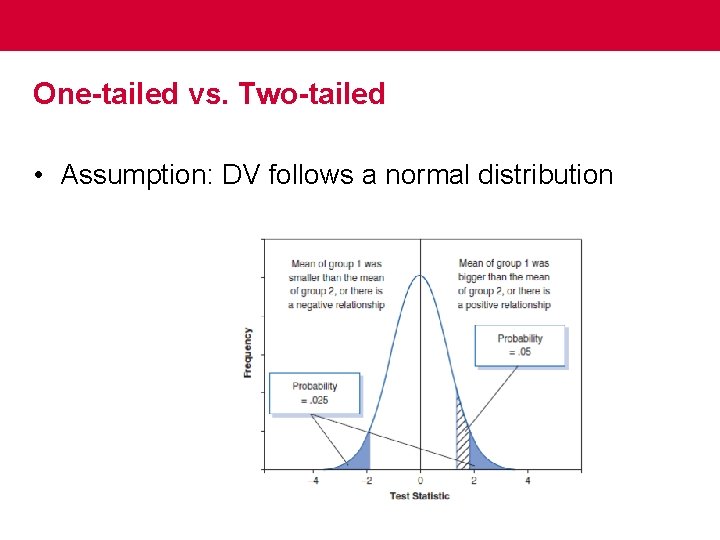

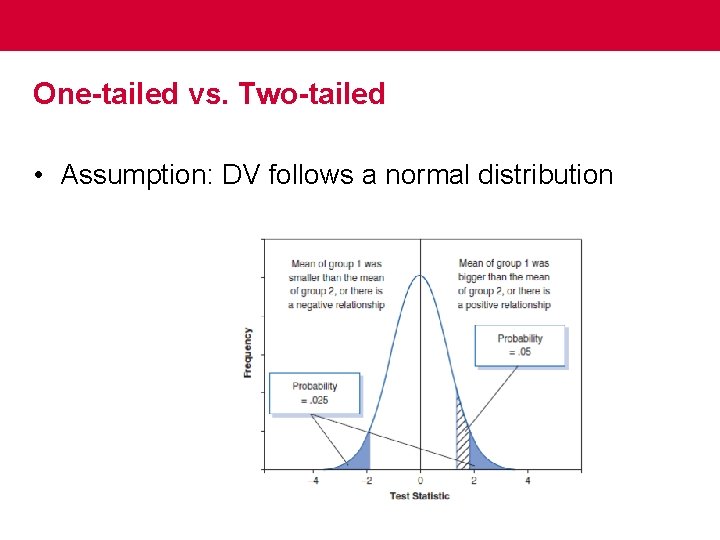

One-tailed vs. Two-tailed

- Assumption: the dependent variable (DV) follows a normal distribution
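The difference between the two kinds of test shows up directly in the p value. The sketch below assumes a test statistic z that follows a standard normal distribution under H0; the helper name and the value z = 1.8 are made up for illustration.

```python
from statistics import NormalDist


def p_values_from_z(z):
    """Return (one-tailed, two-tailed) p values for a standard normal statistic."""
    std = NormalDist()  # mean 0, standard deviation 1
    one_tailed = 1.0 - std.cdf(z)             # H1: effect is greater than zero
    two_tailed = 2.0 * (1.0 - std.cdf(abs(z)))  # H1: effect differs from zero
    return one_tailed, two_tailed


one, two = p_values_from_z(1.8)  # hypothetical observed statistic
print(f"one-tailed p = {one:.3f}, two-tailed p = {two:.3f}")
```

For z = 1.8 the one-tailed p is about .036 and the two-tailed p about .072, so the same data are significant at α = .05 only under the directional hypothesis. That is why the direction has to be fixed before looking at the data.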

Latin Square Balancing

Quasi-Experimental Designs in HCI

- Non-equivalent group designs
  - Pre-test/post-test design
- Interrupted time-series design
  - Infers the effects of an independent variable by comparing multiple measures obtained before and after an intervention takes place.
  - Major threats to internal validity

Writing up Experimental Research

- Answer “Why should anyone care?”
- Use APA conventions (mostly accepted)
- Results should contain the study design and statistical tests
- Discussion should explain how the results address the HCI research question that was operationalized using the metrics and tested.
- Report effect size.
- Pre-register large experiments with OSF

Assumptions of parametric data

- Normally distributed data
- Homogeneity of variance
- Interval data
- Independence

Statistical Power and Effect Sizes

- Effect sizes are useful because they provide an objective, standardized measure of the magnitude of an effect.
- Effect size is linked to three other quantities, and fixing any three of the four determines the remaining one:
  - Sample size
  - Alpha
  - Statistical power of the test used
- The power of a test is the probability that it will find an effect, assuming one exists in the population. If β is the probability of a Type II error, then power = 1 − β.
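The link between effect size, sample size, alpha, and power can be sketched numerically. The example assumes Cohen's d as the effect size measure and uses a normal approximation to the power of a two-sided, two-group comparison with equal group sizes; both choices, and the function names, are assumptions for illustration. An exact calculation would use the noncentral t distribution.

```python
from math import sqrt
from statistics import NormalDist, fmean, variance


def cohens_d(a, b):
    """Standardized mean difference using the pooled sample standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (fmean(a) - fmean(b)) / sqrt(pooled_var)


def approx_power(d, n_per_group, alpha=0.05):
    """Approximate power (1 - beta) of a two-sided two-group test."""
    std = NormalDist()
    z_crit = std.inv_cdf(1.0 - alpha / 2.0)
    shift = abs(d) * sqrt(n_per_group / 2.0)  # expected size of the test statistic
    # Probability that the statistic lands in either rejection region.
    return (1.0 - std.cdf(z_crit - shift)) + std.cdf(-z_crit - shift)


print(cohens_d([2, 4, 6], [1, 3, 5]))  # toy data, made up for illustration
for n in (16, 32, 64, 128):
    print(n, round(approx_power(0.5, n), 2))
```

Under this approximation a medium effect (d = 0.5) needs roughly 64 participants per group to reach power of about 0.8 at α = .05, and power approaches α as d approaches zero.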

Parametric tests

- Correlation
- Regression
- T-test
- ANOVA
- GLM

Upcoming:

- Proposal due Sep 24, 11:59 pm
- Start working on your annotated bibliography