CS 541 Artificial Intelligence Lecture II Problem Solving

CS 541: Artificial Intelligence Lecture II: Problem Solving and Search

Course Information (1) � CS 541 Artificial Intelligence � Term: Fall 2013 � Instructor: Prof. Gang Hua � Class time: Tuesday 2: 00 pm— 4: 30 pm � Location: EAS 330 � Office Hour: Wednesday 4: 00 pm— 5: 00 pm by appointment � Office: Lieb/Room 305 � Course Assistant: Yizhou Lin � Course Website: � http: //www. cs. stevens. edu/~ghua/ghweb/ cs 541_artificial_intelligence_fall_2013. htm

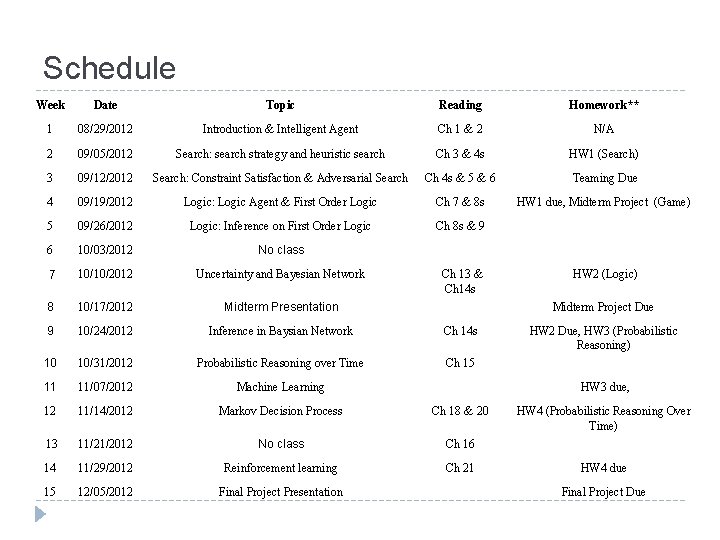

Schedule Week Date Topic Reading Homework** 1 08/29/2012 Introduction & Intelligent Agent Ch 1 & 2 N/A 2 09/05/2012 Search: search strategy and heuristic search Ch 3 & 4 s HW 1 (Search) 3 09/12/2012 Search: Constraint Satisfaction & Adversarial Search Ch 4 s & 5 & 6 Teaming Due 4 09/19/2012 Logic: Logic Agent & First Order Logic Ch 7 & 8 s HW 1 due, Midterm Project (Game) 5 09/26/2012 Logic: Inference on First Order Logic Ch 8 s & 9 6 10/03/2012 No class 7 10/10/2012 Uncertainty and Bayesian Network HW 2 (Logic) 8 10/17/2012 Midterm Presentation Ch 13 & Ch 14 s 9 10/24/2012 Inference in Baysian Network Ch 14 s 10 10/31/2012 Probabilistic Reasoning over Time Ch 15 HW 2 Due, HW 3 (Probabilistic Reasoning) 11 11/07/2012 Machine Learning HW 3 due, 12 11/14/2012 Markov Decision Process Ch 18 & 20 13 11/21/2012 No class Ch 16 HW 4 (Probabilistic Reasoning Over Time) 14 11/29/2012 Reinforcement learning Ch 21 HW 4 due 15 12/05/2012 Final Project Presentation Final Project Due Midterm Project Due

Re-cap Lecture I � Agents interact with environments through actuators and sensors � The agent function describes what the agent does in all circumstances � The performance measure evaluates the environment sequence � A perfectly rational agent maximizes expected performance � Agent programs implement (some) agent functions � PEAS descriptions define task environments � Environments are categorized along several dimensions: � Observable? Deterministic? Episodic? Static? Discrete? Singleagent? � Several basic agent architectures exist:

Outline � Problem-solving agents � Problem types � Problem formulation � Example problems � Basic search algorithms

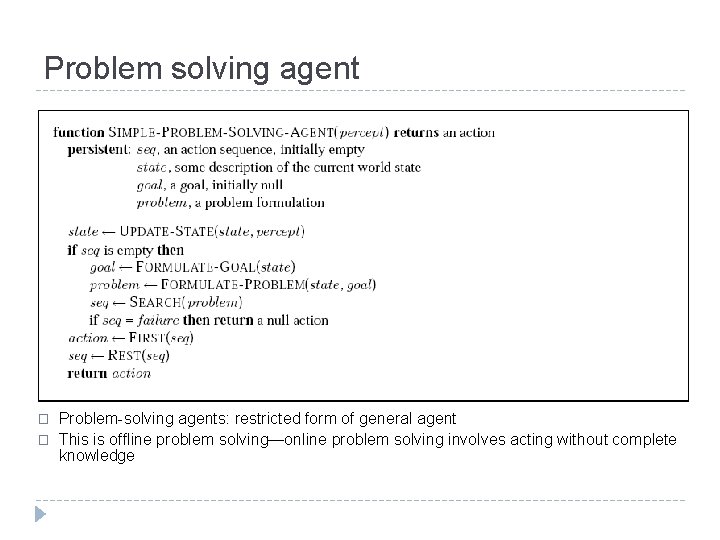

Problem solving agent � � Problem-solving agents: restricted form of general agent This is offline problem solving—online problem solving involves acting without complete knowledge

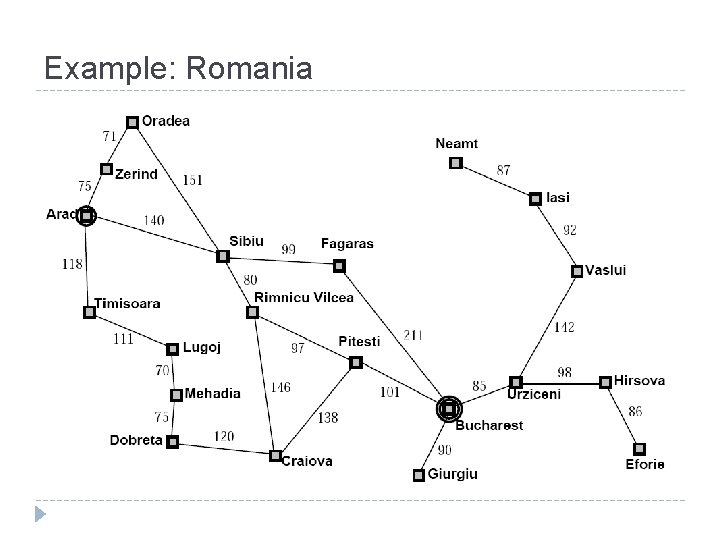

Example: Romania

Example: Romania � On holiday in Romania; currently in Arad � Flight leaves tomorrow from Bucharest � Formulate goal: � Be in Bucharest � Formulate problem: � States: various cities � Actions: drive between cities � Find solution: � Sequence of cities, e. g. , Arad, Sibiu, Fagaras, Bucharest

Problem types � Deterministic, fully observable single-state problem � Agent knows exactly which state it will be in; solution is a sequence � Non-observable conformant problem � Agent may have no idea where it is; solution (if any) is a sequence � Nondeterministic and/or partially observable contingency problem � Percepts provide new information about current state � Solution is a contingent plan or a policy � Often interleave search, execution � Unknown state space exploration problem (“online”)

![Example: vacuum world � Single-state, start in #5. Solution? ? � � [Right, Suck] Example: vacuum world � Single-state, start in #5. Solution? ? � � [Right, Suck]](http://slidetodoc.com/presentation_image/d8bb025750ce2a3680dbcce414917308/image-10.jpg)

Example: vacuum world � Single-state, start in #5. Solution? ? � � [Right, Suck] Conformant, start in [1, 2, 3, 4, 5, 6, 7, 8], e. g. , right to [2, 4, 6, 8]. Solution? ? � [Right, Suck, Left, Suck] Contingency, start in #5 Non-deterministic: Suck can dirty a clean carpet Local sensing: dirt, location only. Solution? ? � � [Right, if dirt then Suck]

Single state problem formulation � A problem is defined by four items � Initial state � E. g. , “at Arad” � Action or successor function S(x)= set of action-state pair, � E. g. , S(Arad)={<Arad Zerind, Zerind>, …} � Goal test, can be � Explicit, e. g. , x=“at Bucharest” � Implicit, e. g. , No. Dirt(x) � Path cost (additive) � E. g. , sum of distances, number of actions executed, etc. � c(x, a, y) is the step cost, assumed to be ≥ 0 � A Solution is a sequence of actions � Leads from the initial state to a goal state

Selecting a state space � Real world is absurdly complex � State space must be abstracted for problem solving � (Abstract) state = set of real states � (Abstract) action = complex combination of real actions � E. g. , “Arad Zerind” represents a complex set of possible routes, detours, rest stops, etc. � For guaranteed realizability, any real state “in Arad” must get to some real state “in Zerind” � (Abstract) solution = � Set of real paths that are solutions in the real world � Each abstract action should be “easier” than the original problem

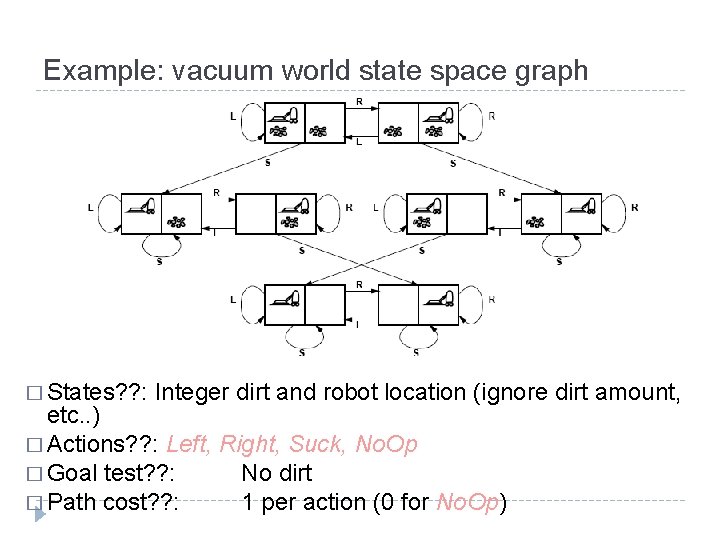

Example: vacuum world state space graph � States? ? : Integer dirt and robot location (ignore dirt amount, etc. . ) � Actions? ? : Left, Right, Suck, No. Op � Goal test? ? : No dirt � Path cost? ? : 1 per action (0 for No. Op)

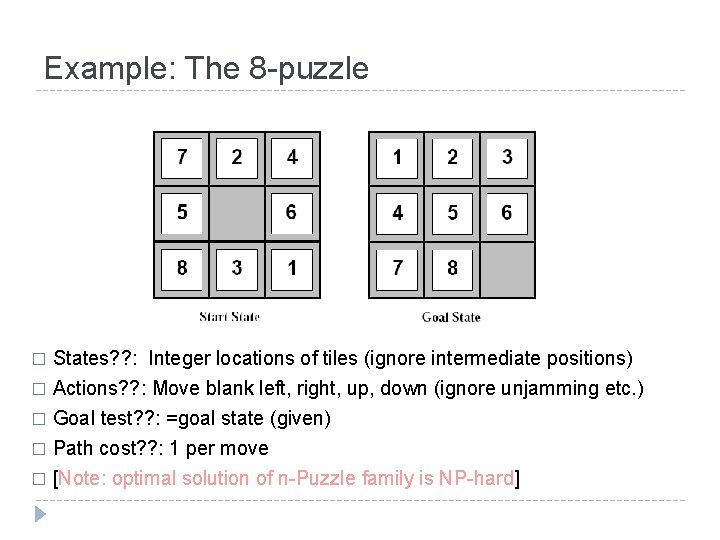

Example: The 8 -puzzle States? ? : Integer locations of tiles (ignore intermediate positions) � Actions? ? : Move blank left, right, up, down (ignore unjamming etc. ) � Goal test? ? : =goal state (given) � Path cost? ? : 1 per move � [Note: optimal solution of n-Puzzle family is NP-hard] �

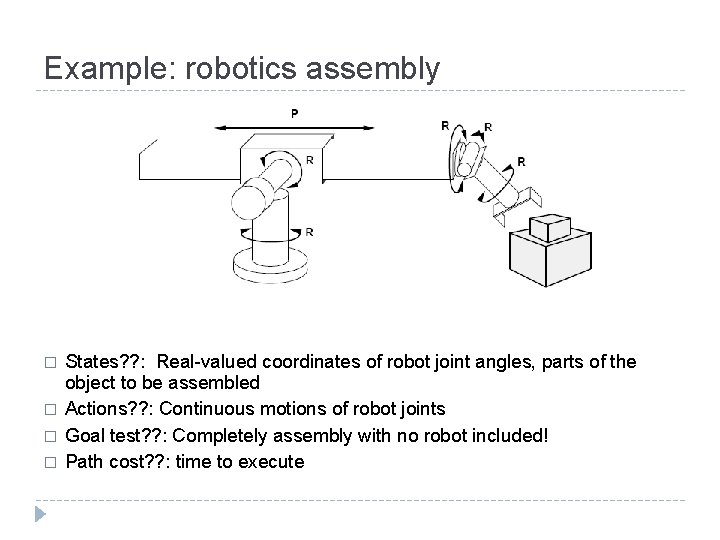

Example: robotics assembly � � States? ? : Real-valued coordinates of robot joint angles, parts of the object to be assembled Actions? ? : Continuous motions of robot joints Goal test? ? : Completely assembly with no robot included! Path cost? ? : time to execute

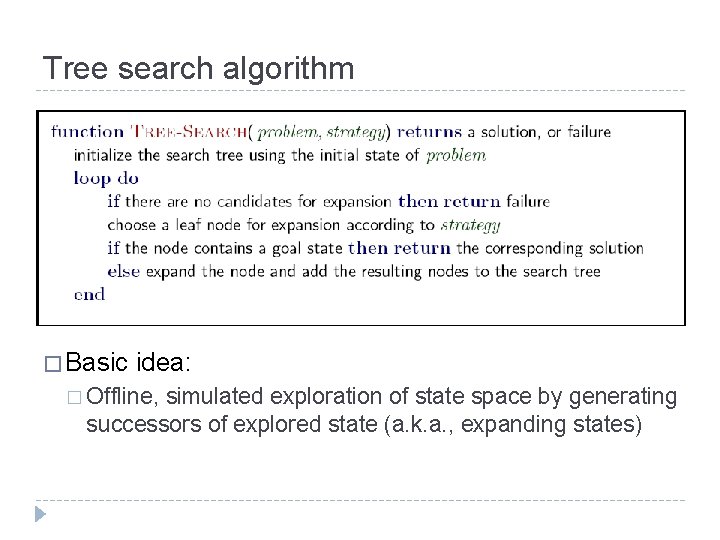

Tree search algorithm � Basic idea: � Offline, simulated exploration of state space by generating successors of explored state (a. k. a. , expanding states)

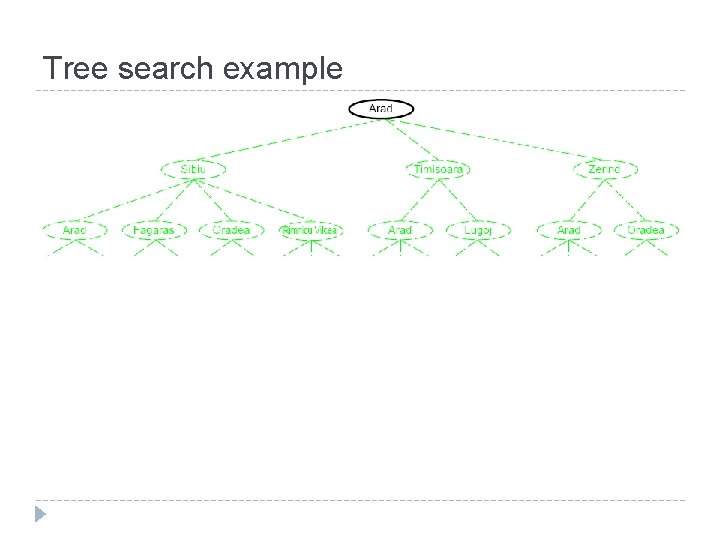

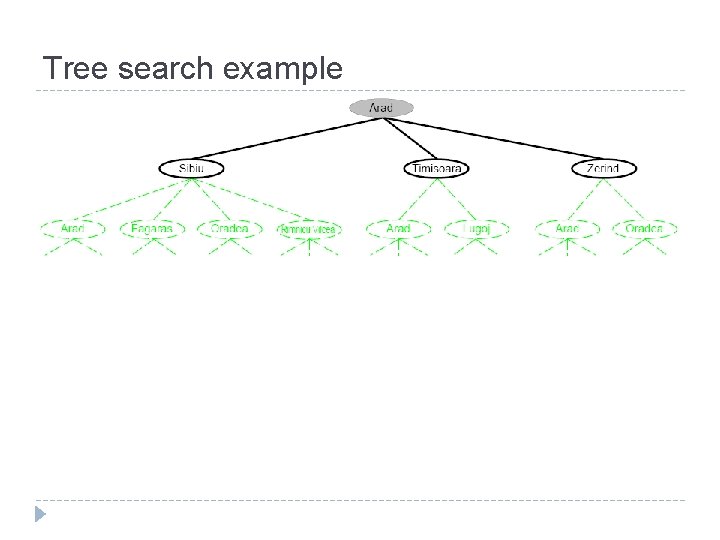

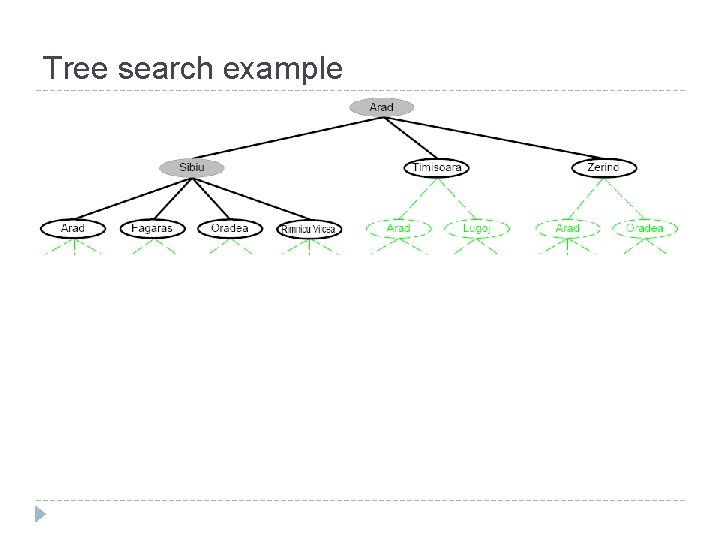

Tree search example

Tree search example

Tree search example

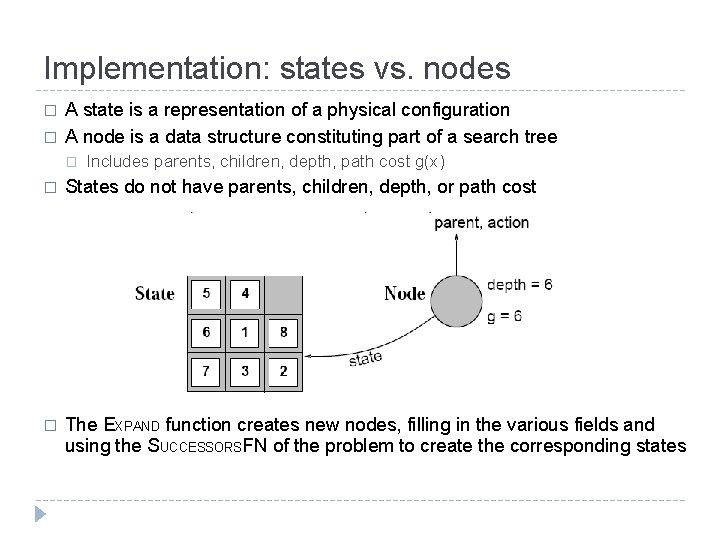

Implementation: states vs. nodes � � A state is a representation of a physical configuration A node is a data structure constituting part of a search tree � Includes parents, children, depth, path cost g(x) � States do not have parents, children, depth, or path cost � The EXPAND function creates new nodes, filling in the various fields and using the SUCCESSORSFN of the problem to create the corresponding states

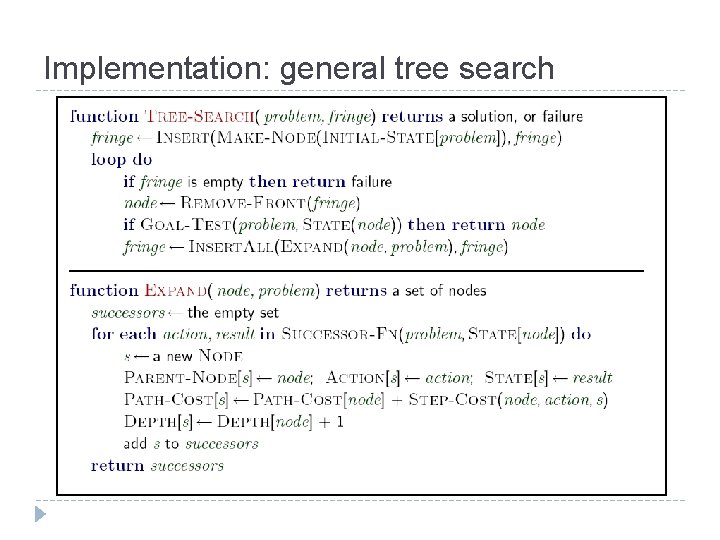

Implementation: general tree search

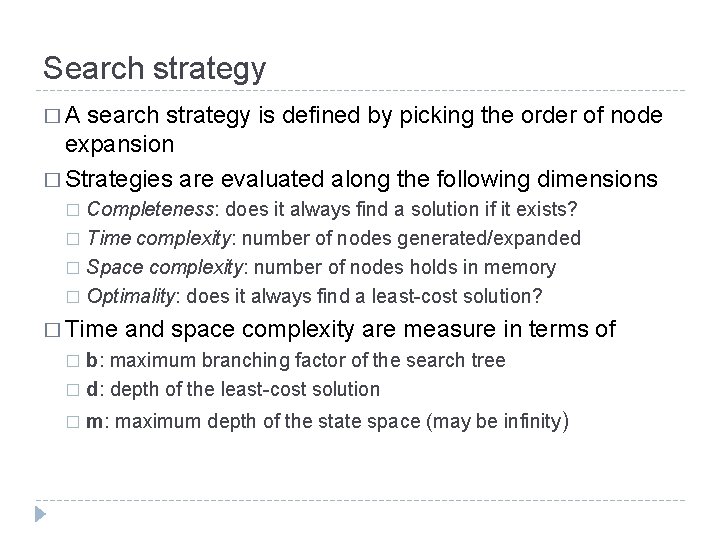

Search strategy � A search strategy is defined by picking the order of node expansion � Strategies are evaluated along the following dimensions Completeness: does it always find a solution if it exists? � Time complexity: number of nodes generated/expanded � Space complexity: number of nodes holds in memory � Optimality: does it always find a least-cost solution? � � Time and space complexity are measure in terms of b: maximum branching factor of the search tree � d: depth of the least-cost solution � � m: maximum depth of the state space (may be infinity)

Uninformed search strategies � Uninformed search strategies use only the information available in the problem definition � List of uninformed search strategies � Breadth-first search � Uniform-cost search � Depth-first search � Depth-limited search � Iterative deepening search

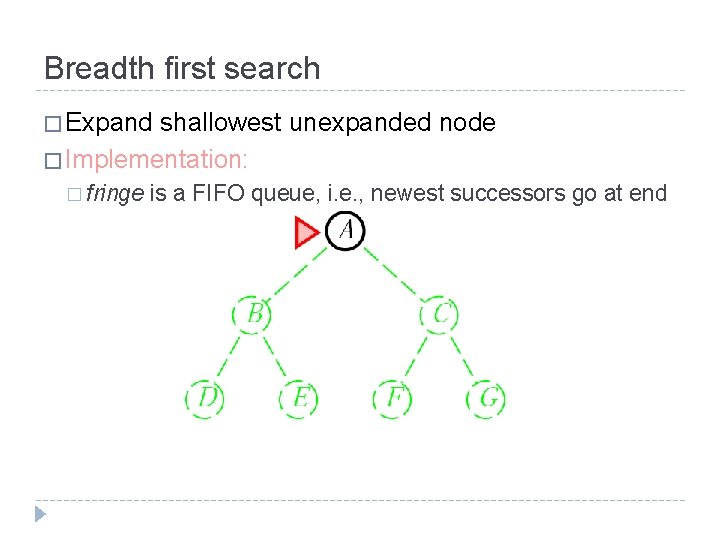

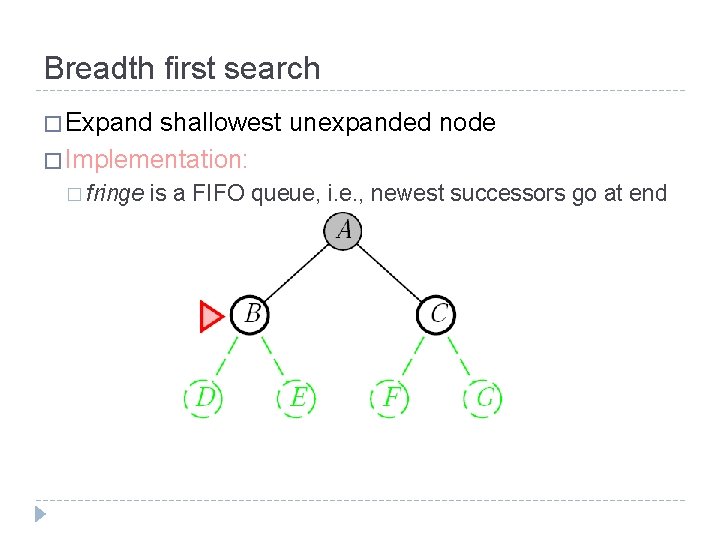

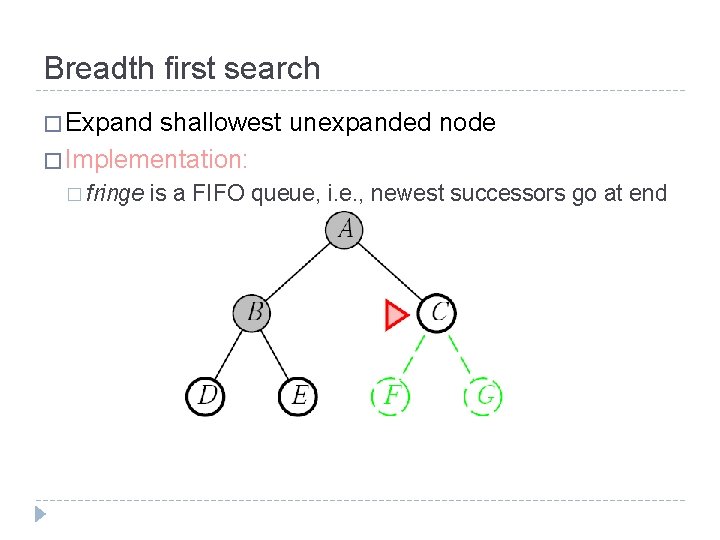

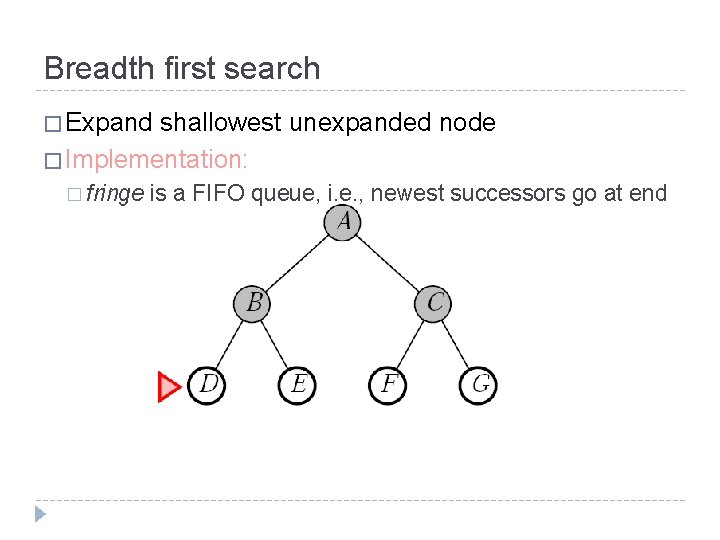

Breadth first search � Expand shallowest unexpanded node � Implementation: � fringe is a FIFO queue, i. e. , newest successors go at end

Breadth first search � Expand shallowest unexpanded node � Implementation: � fringe is a FIFO queue, i. e. , newest successors go at end

Breadth first search � Expand shallowest unexpanded node � Implementation: � fringe is a FIFO queue, i. e. , newest successors go at end

Breadth first search � Expand shallowest unexpanded node � Implementation: � fringe is a FIFO queue, i. e. , newest successors go at end

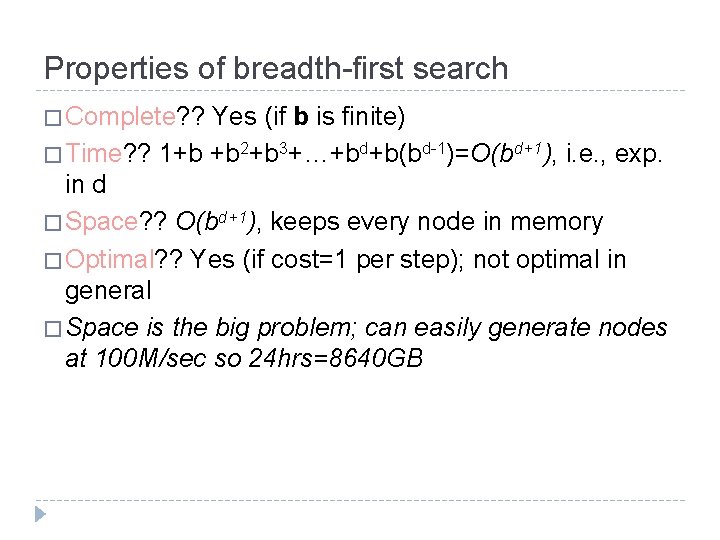

Properties of breadth-first search � Complete? ? Yes (if b is finite) � Time? ? 1+b +b 2+b 3+…+bd+b(bd-1)=O(bd+1), i. e. , exp. in d � Space? ? O(bd+1), keeps every node in memory � Optimal? ? Yes (if cost=1 per step); not optimal in general � Space is the big problem; can easily generate nodes at 100 M/sec so 24 hrs=8640 GB

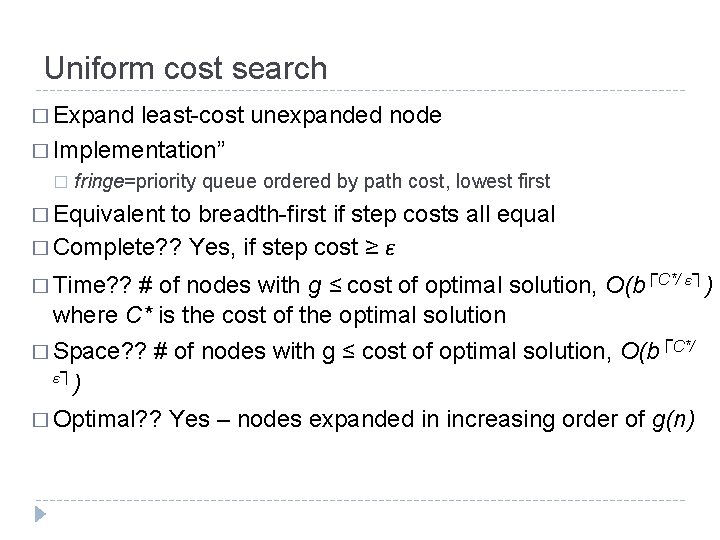

Uniform cost search � Expand least-cost unexpanded node � Implementation” � fringe=priority queue ordered by path cost, lowest first � Equivalent to breadth-first if step costs all equal � Complete? ? Yes, if step cost ≥ ε ≤ cost of optimal solution, O(b┌C*/ ε┐) where C* is the cost of the optimal solution � Time? ? # of nodes with g ┌ � Space? ? # of nodes with g ≤ cost of optimal solution, O(b C*/ ε ┐) � Optimal? ? Yes – nodes expanded in increasing order of g(n)

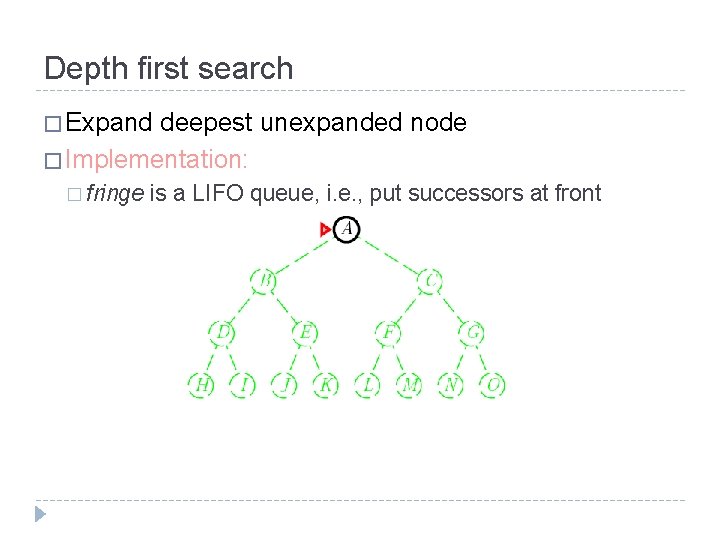

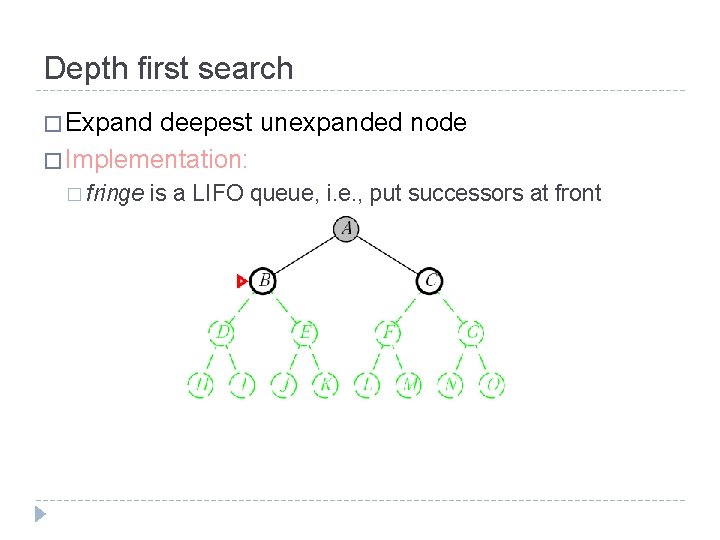

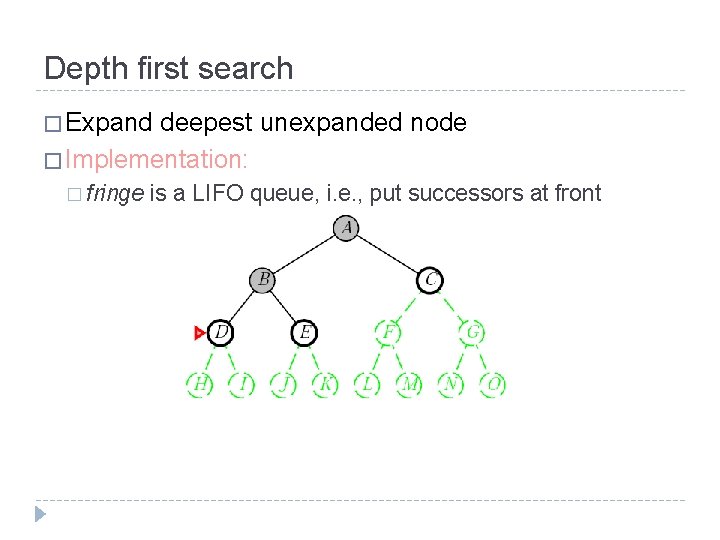

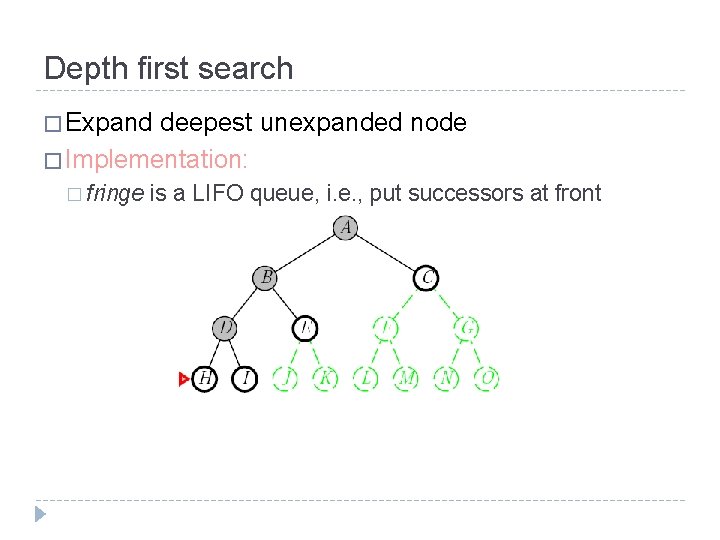

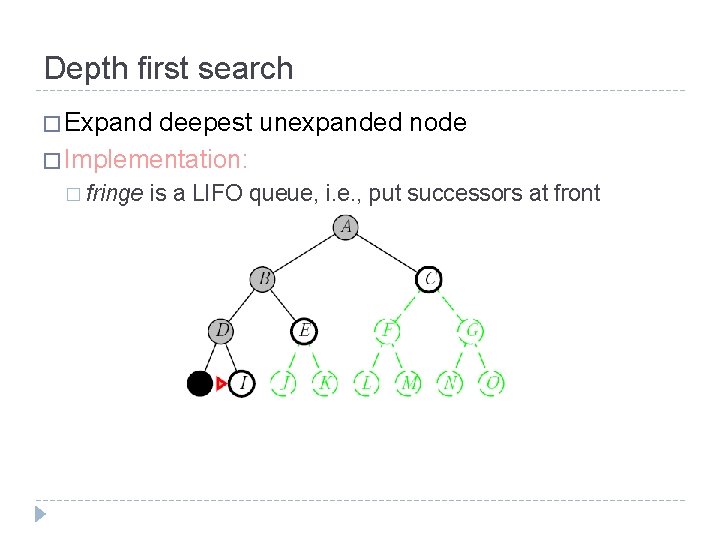

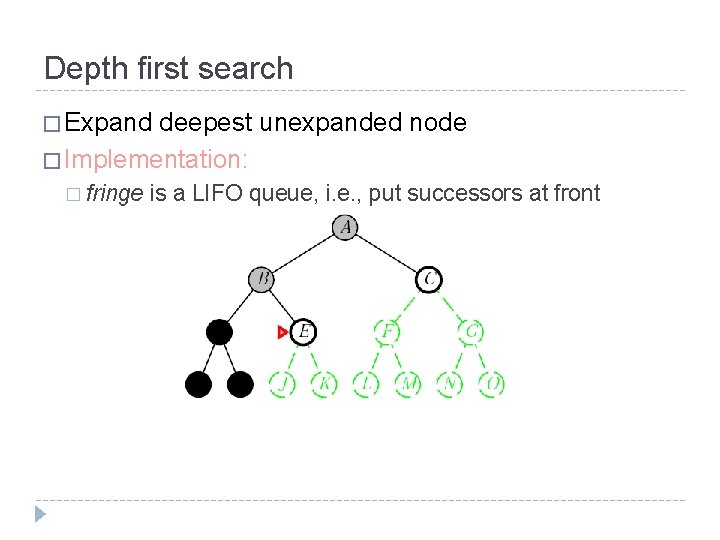

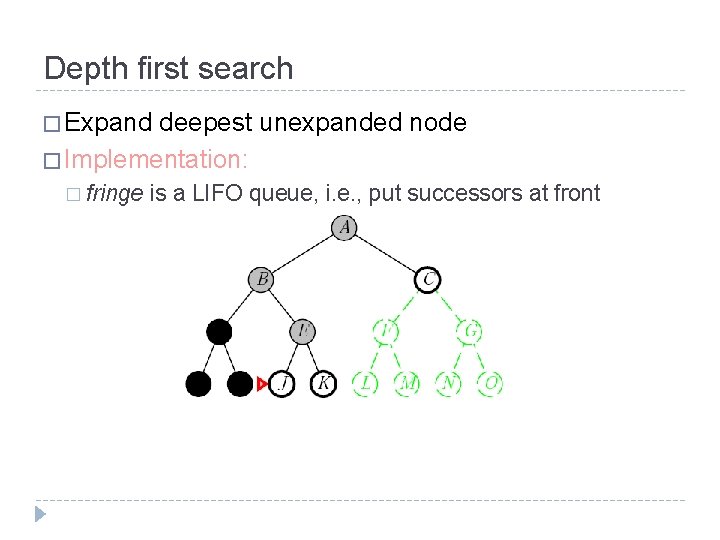

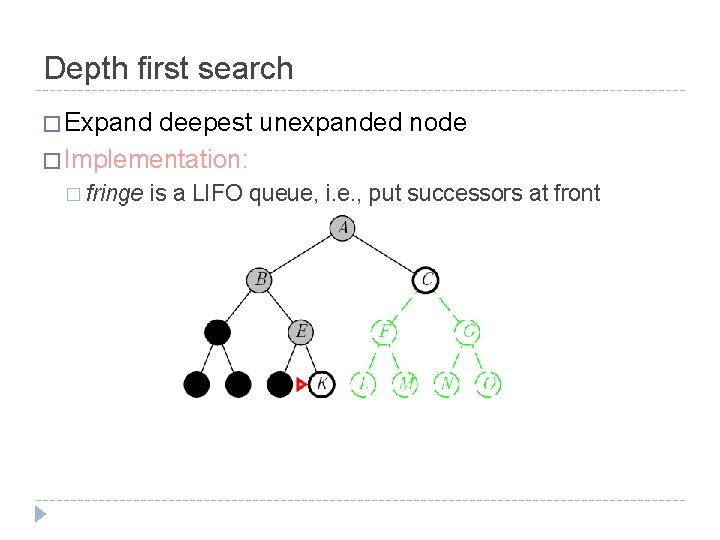

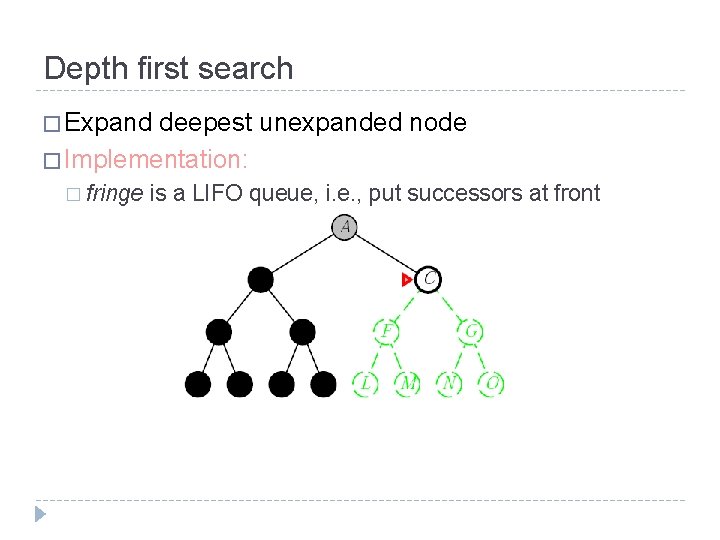

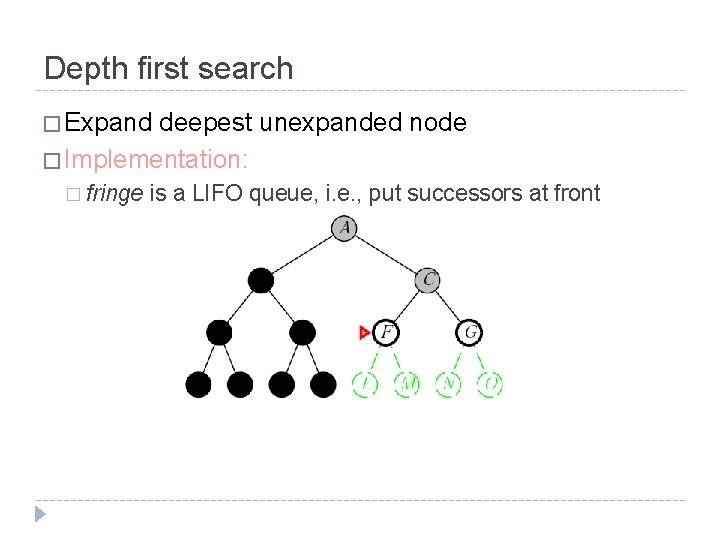

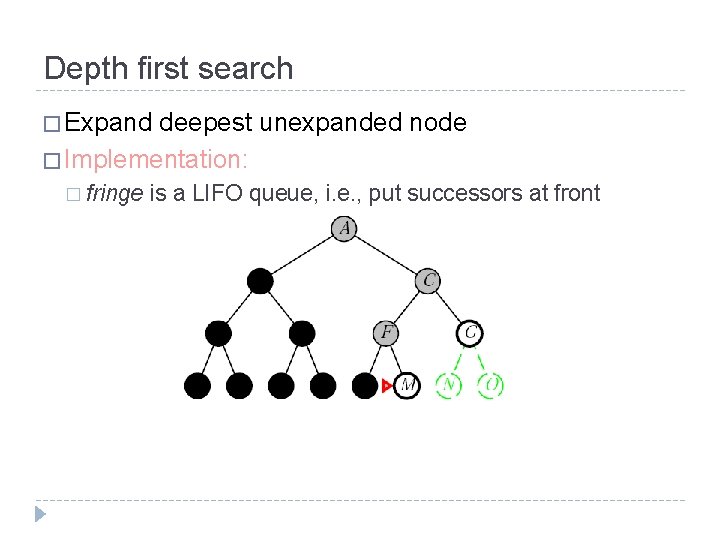

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

Depth first search � Expand deepest unexpanded node � Implementation: � fringe is a LIFO queue, i. e. , put successors at front

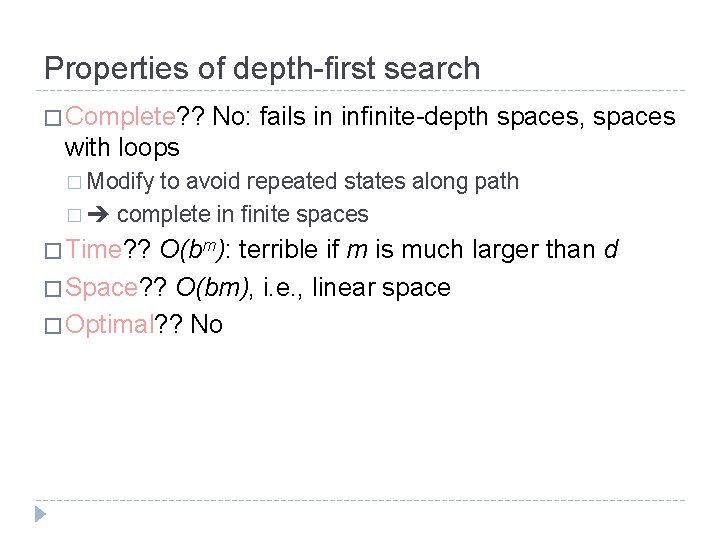

Properties of depth-first search � Complete? ? No: fails in infinite-depth spaces, spaces with loops � Modify to avoid repeated states along path � complete in finite spaces � Time? ? O(bm): terrible if m is much larger than d � Space? ? O(bm), i. e. , linear space � Optimal? ? No

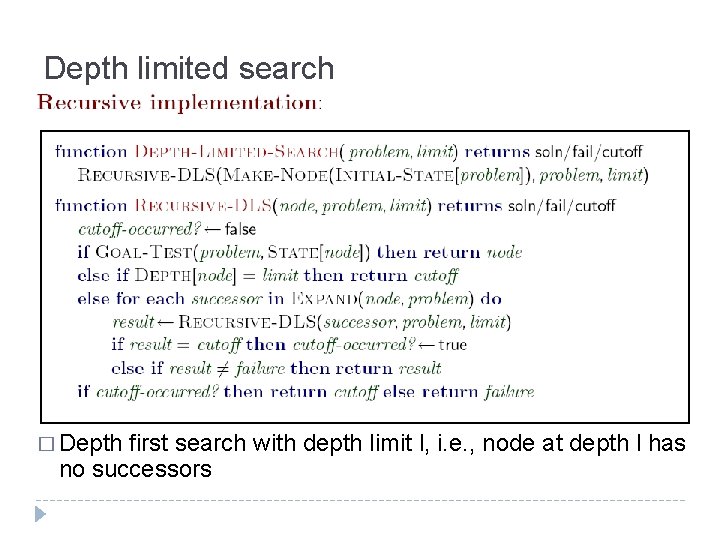

Depth limited search � Depth first search with depth limit l, i. e. , node at depth l has no successors

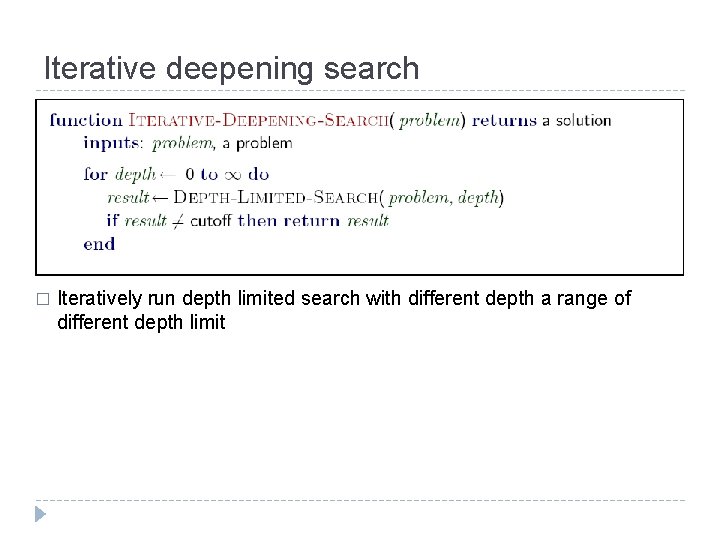

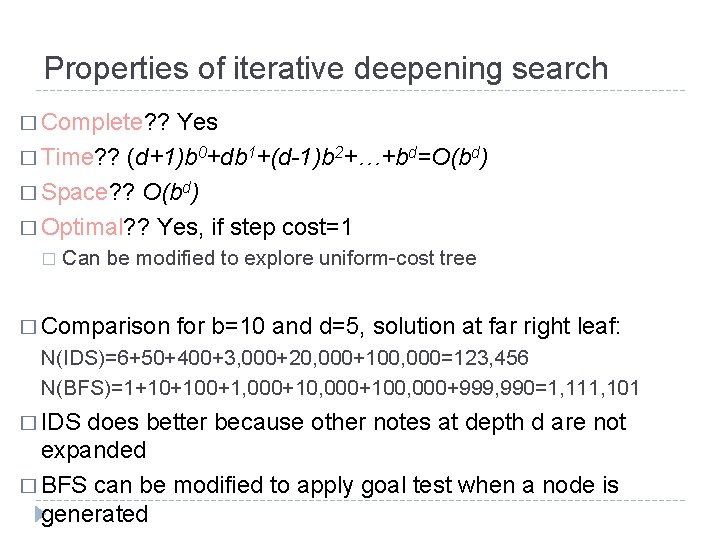

Iterative deepening search � Iteratively run depth limited search with different depth a range of different depth limit

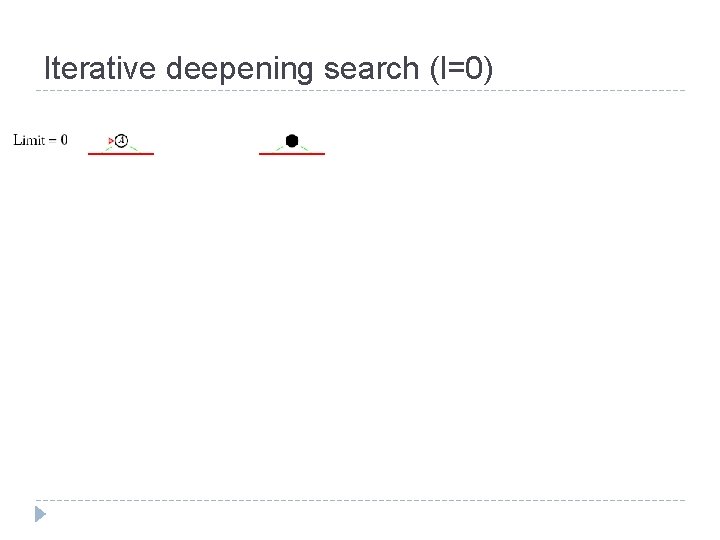

Iterative deepening search (l=0)

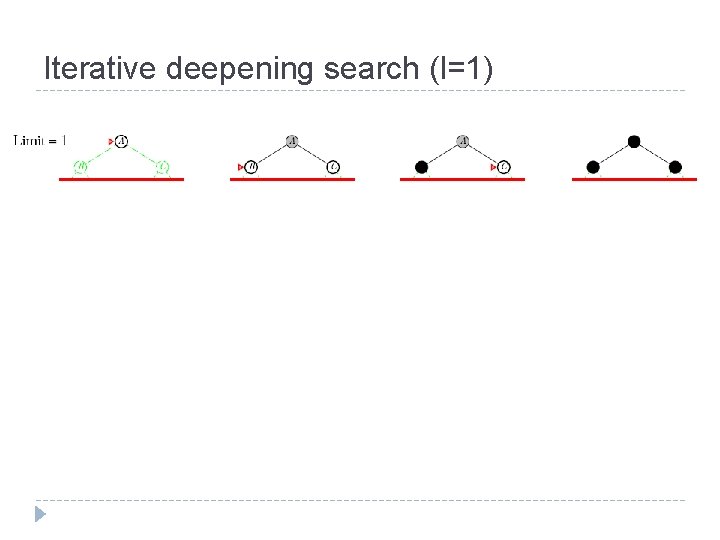

Iterative deepening search (l=1)

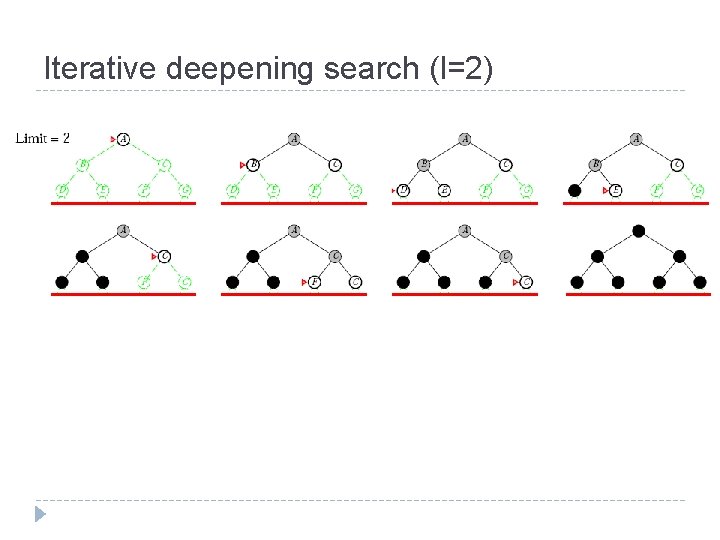

Iterative deepening search (l=2)

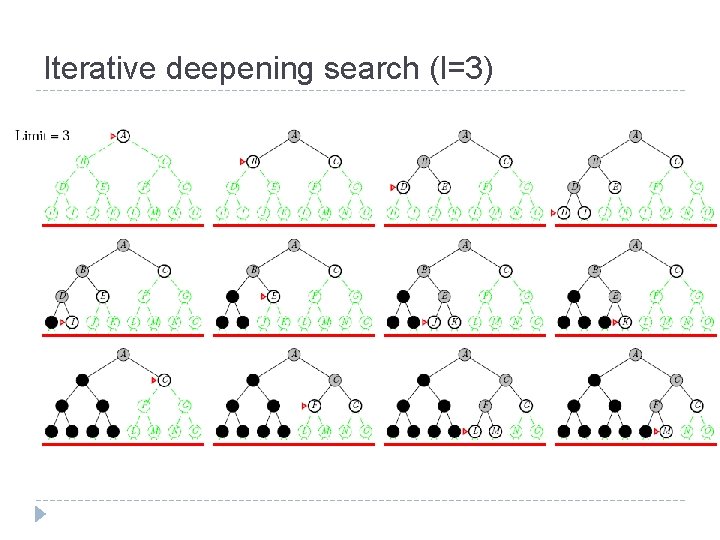

Iterative deepening search (l=3)

Properties of iterative deepening search � Complete? ? Yes � Time? ? (d+1)b 0+db 1+(d-1)b 2+…+bd=O(bd) � Space? ? O(bd) � Optimal? ? Yes, if step cost=1 � Can be modified to explore uniform-cost tree � Comparison for b=10 and d=5, solution at far right leaf: N(IDS)=6+50+400+3, 000+20, 000+100, 000=123, 456 N(BFS)=1+10+100+1, 000+100, 000+999, 990=1, 111, 101 � IDS does better because other notes at depth d are not expanded � BFS can be modified to apply goal test when a node is generated

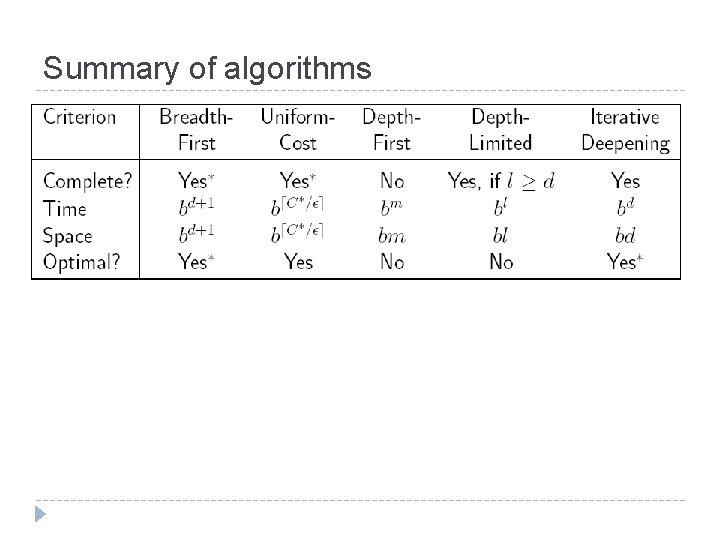

Summary of algorithms

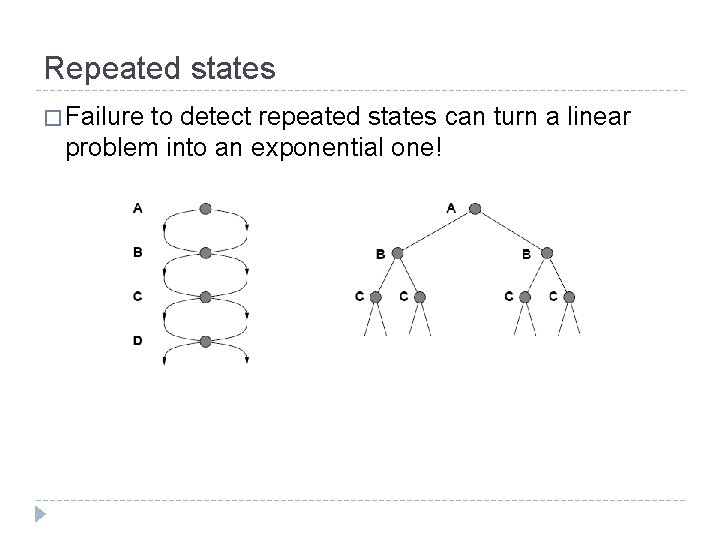

Repeated states � Failure to detect repeated states can turn a linear problem into an exponential one!

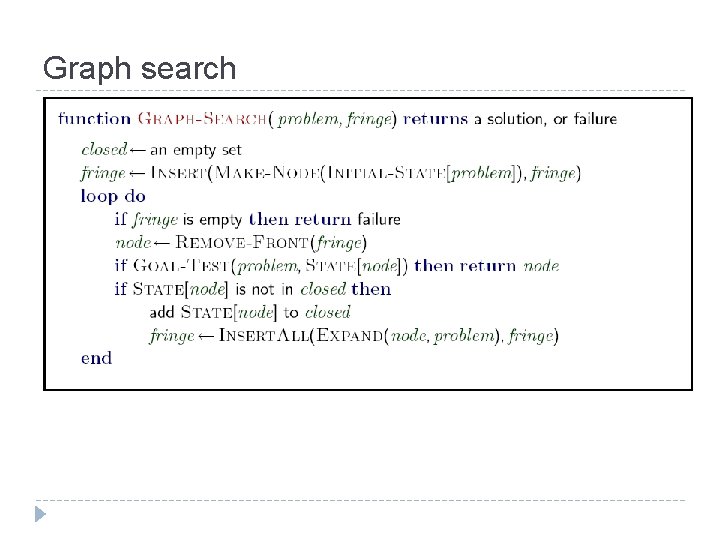

Graph search

Summary � Problem formulation usually requires abstracting away real -world details to define a state space that can feasibly be explored � Variety of uninformed search strategies � Iterative deepening search uses only linear space and not much more time than other uninformed algorithms � Graph search can be exponentially more efficient than tree search

Informed search & non-classical search Lecture II: Part II

Outline � Informed search � Best first search � A* search � Heuristics � Non-classical search � Hill climbing � Simulated annealing � Genetic algorithms (briefly) � Local search in continuous spaces (very briefly)

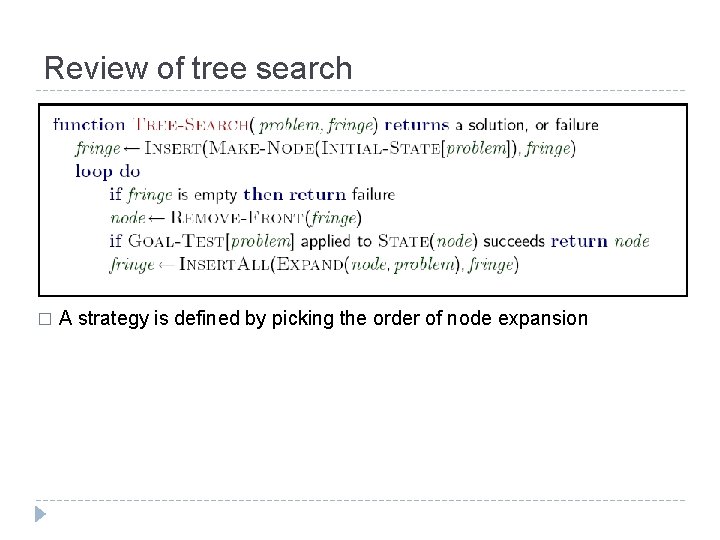

Review of tree search � A strategy is defined by picking the order of node expansion

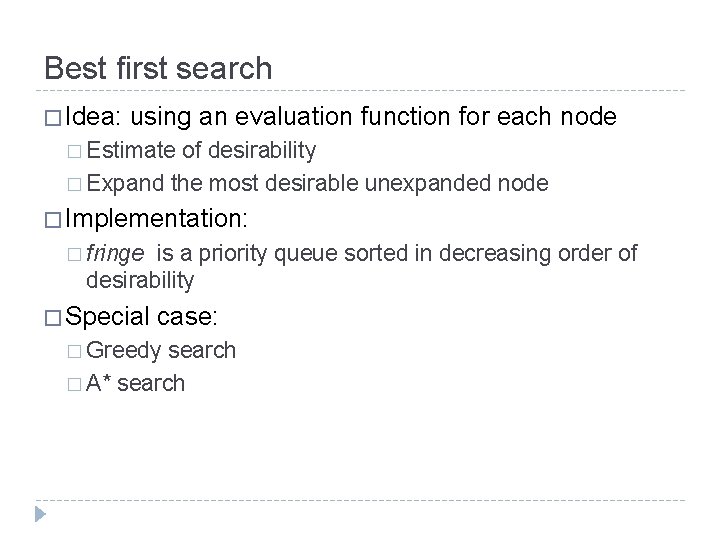

Best first search � Idea: using an evaluation function for each node � Estimate of desirability � Expand the most desirable unexpanded node � Implementation: � fringe is a priority queue sorted in decreasing order of desirability � Special case: � Greedy search � A* search

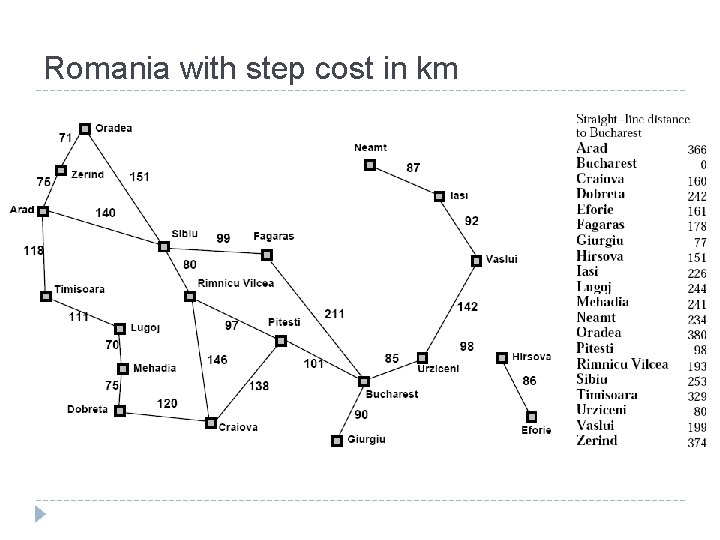

Romania with step cost in km

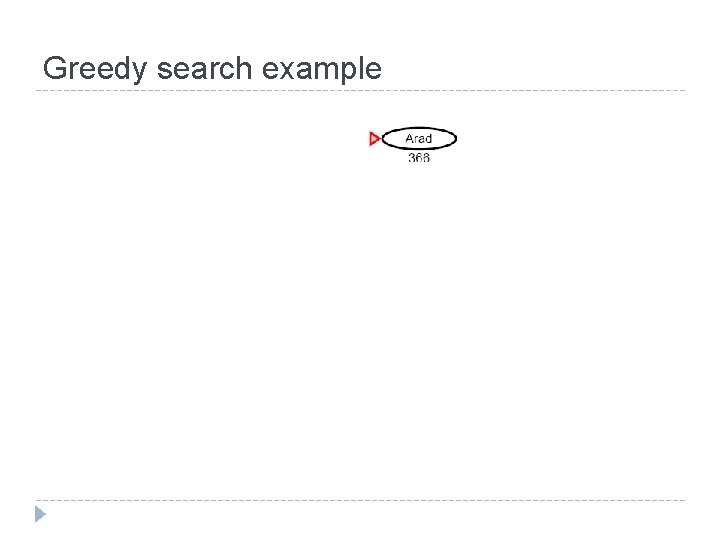

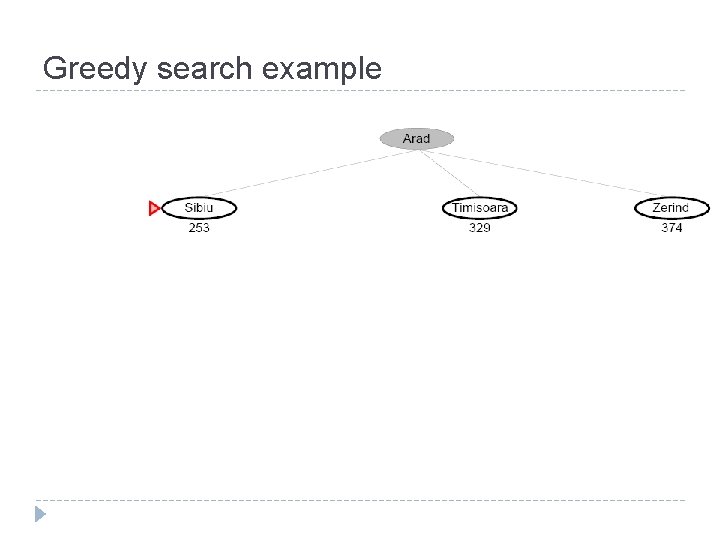

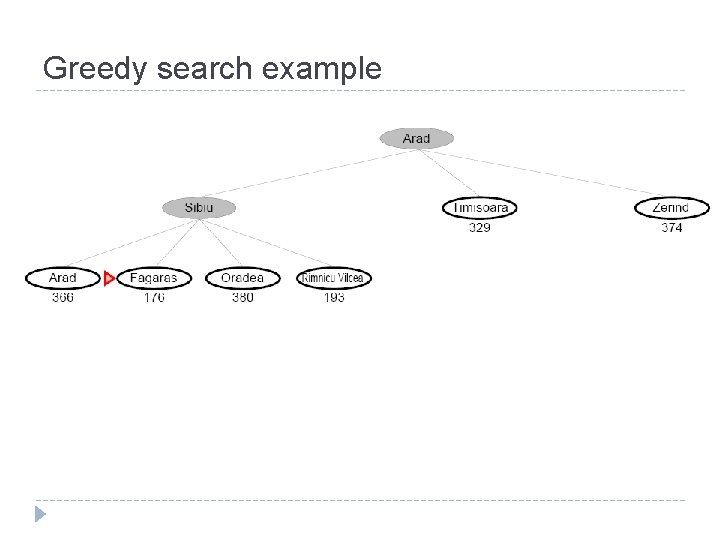

Greedy search � Evaluation function h(n) (heuristic) � Estimate of cost from n to the closest goal � E. g. , h. SLD(n)=straight-line distance from n to Bucharest � Greedy search expands the node that appears to be closest to the goal

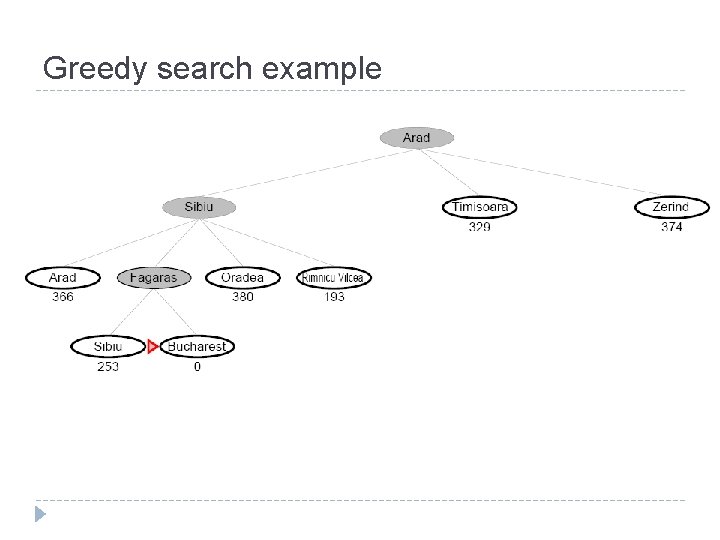

Greedy search example

Greedy search example

Greedy search example

Greedy search example

Properties of greedy search � Complete? ? No: can get stuck in loops � Lasi Neamt (when Oradea is the goal) � Time? ? O(bm): but a good heuristic can give dramatic improvement � Space? ? O(bm), i. e. , keep everything in memory � Optimal? ? No

A* search � Idea: avoid expanding paths that are already expensive � Evaluation function f(n)=g(n)+h(n) g(n)=cost so far to reach n � h(n)=estimated cost to goal from n � f(n)= estimated total cost of path through n to goal � � A* search uses an admissible heuristic i. e. , h(n)≤h*(n) where h*(n) is the true cost from n � also require h(n)≥ 0, so h(G)=0 for any goal G. � � E. g. , h. SLD(n) never overestimates the actual road distance � Theorem: A* search is optimal (given h(n) is admissible in tree search, and consistent in graph search

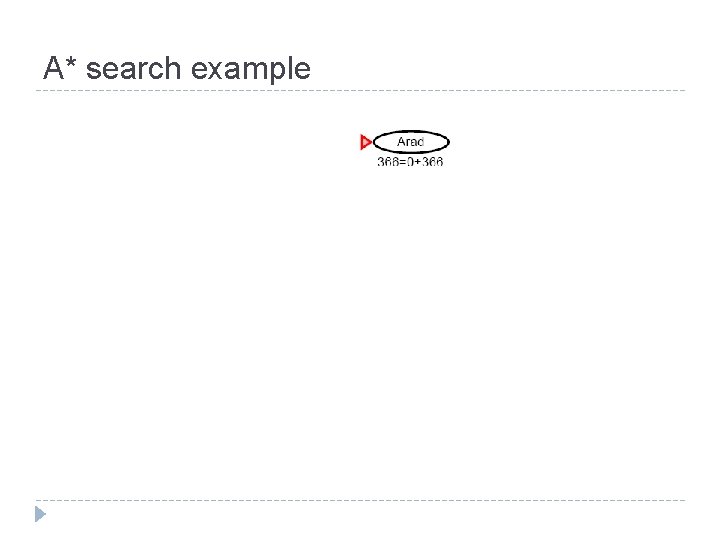

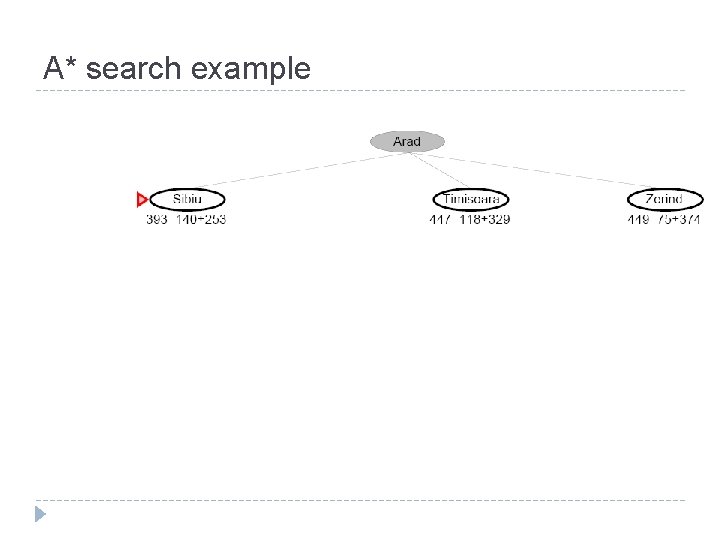

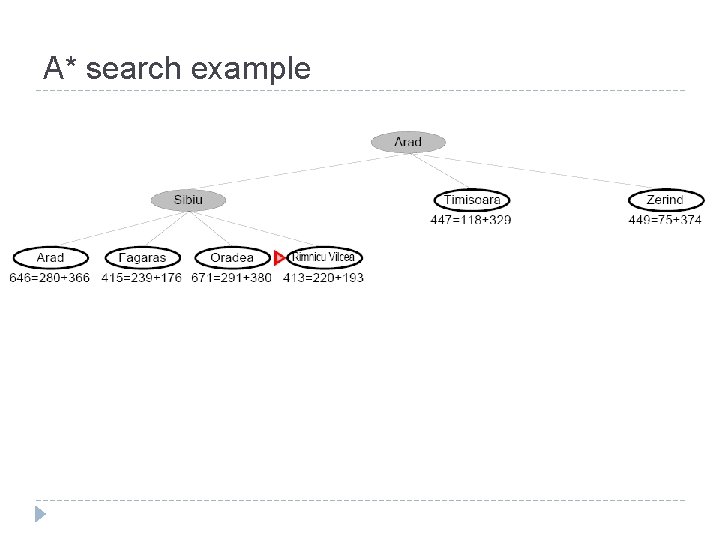

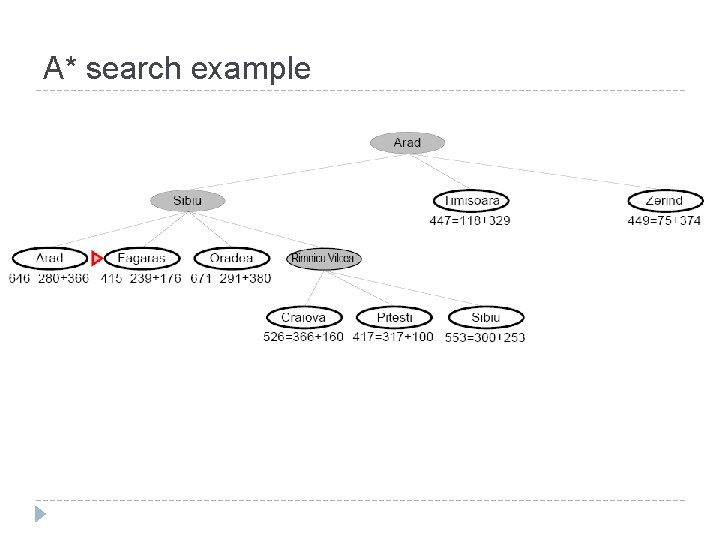

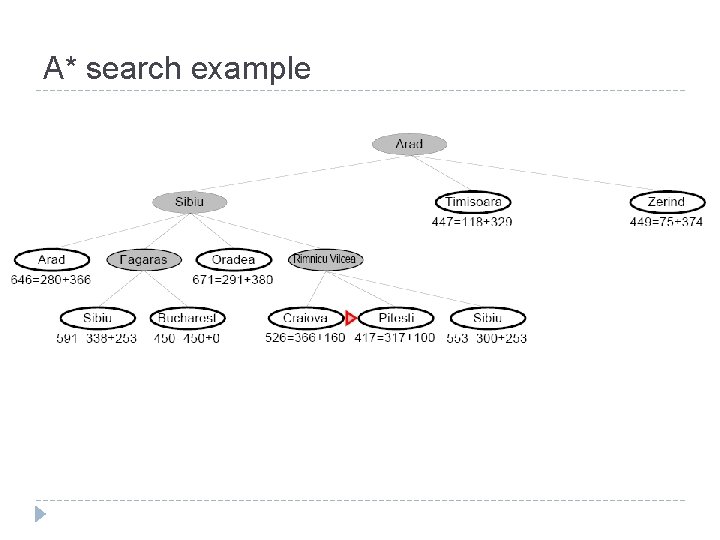

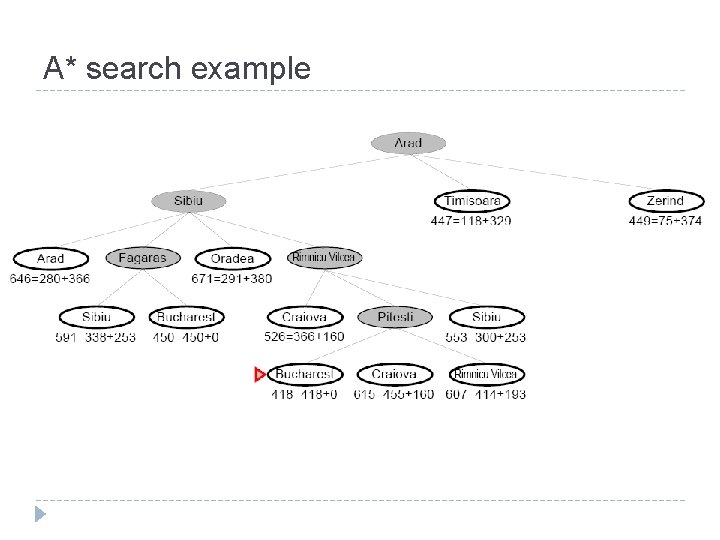

A* search example

A* search example

A* search example

A* search example

A* search example

A* search example

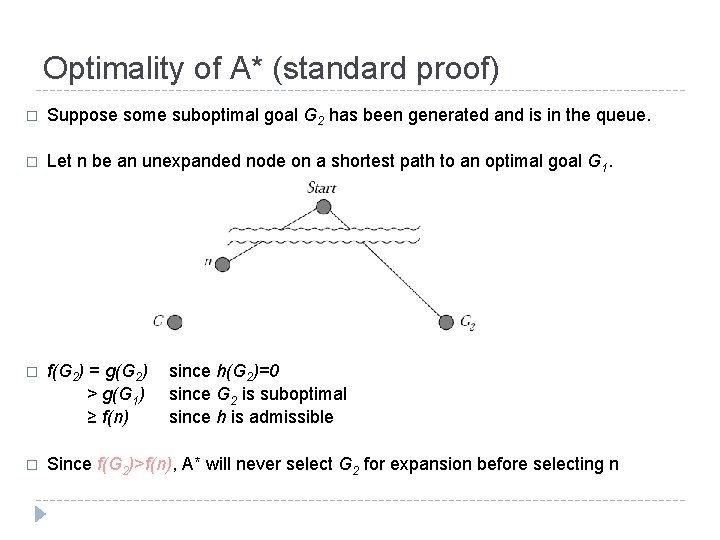

Optimality of A* (standard proof) � Suppose some suboptimal goal G 2 has been generated and is in the queue. � Let n be an unexpanded node on a shortest path to an optimal goal G 1. � f(G 2) = g(G 2) > g(G 1) ≥ f(n) � Since f(G 2)>f(n), A* will never select G 2 for expansion before selecting n since h(G 2)=0 since G 2 is suboptimal since h is admissible

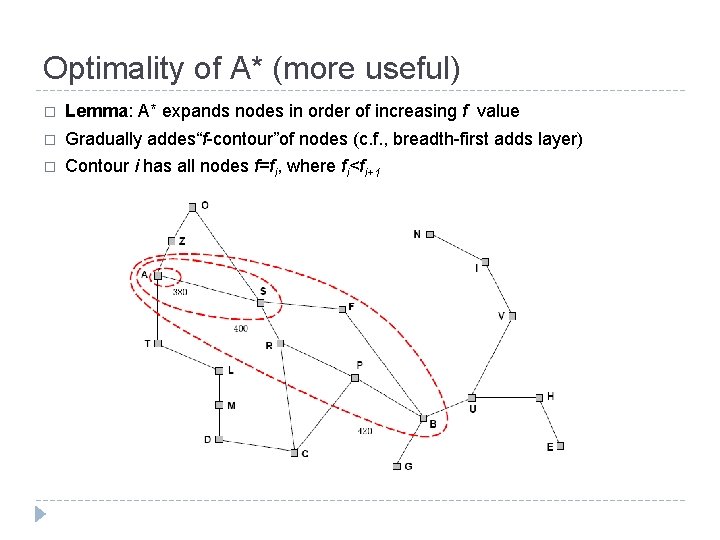

Optimality of A* (more useful) � Lemma: A* expands nodes in order of increasing f value � Gradually addes“f-contour”of nodes (c. f. , breadth-first adds layer) � Contour i has all nodes f=fi, where fi<fi+1

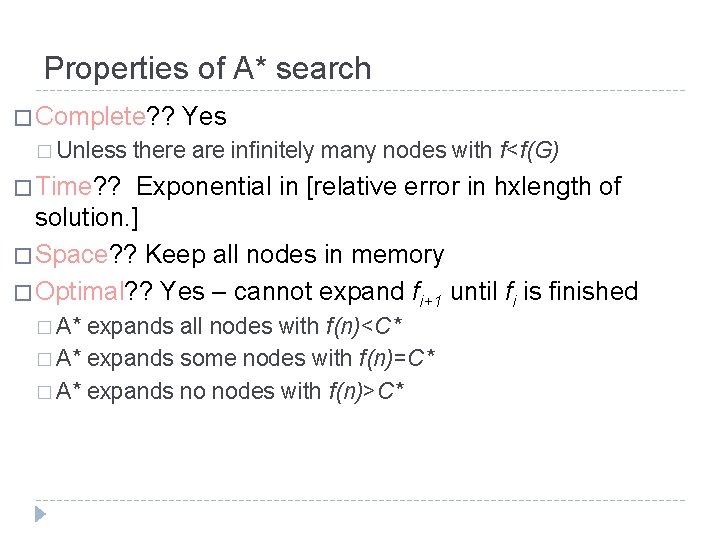

Properties of A* search � Complete? ? Yes � Unless there are infinitely many nodes with f<f(G) � Time? ? Exponential in [relative error in hxlength of solution. ] � Space? ? Keep all nodes in memory � Optimal? ? Yes – cannot expand fi+1 until fi is finished � A* expands all nodes with f(n)<C* � A* expands some nodes with f(n)=C* � A* expands no nodes with f(n)>C*

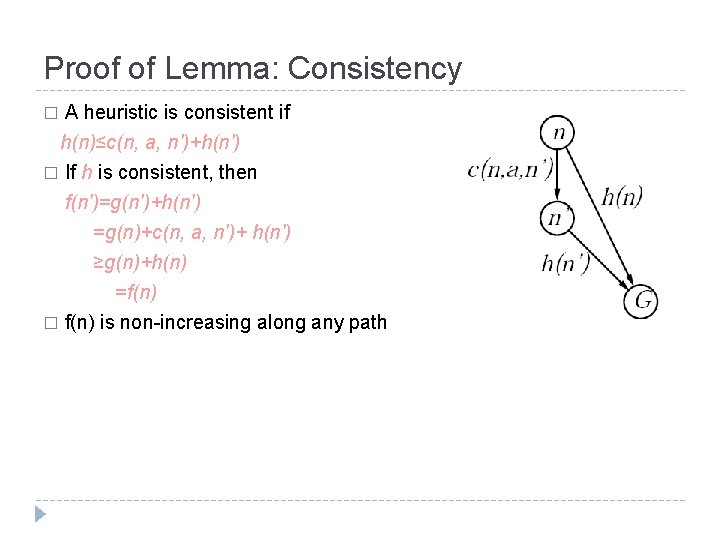

Proof of Lemma: Consistency A heuristic is consistent if h(n)≤c(n, a, n')+h(n') � If h is consistent, then f(n')=g(n')+h(n') =g(n)+c(n, a, n')+ h(n') ≥g(n)+h(n) =f(n) � f(n) is non-increasing along any path �

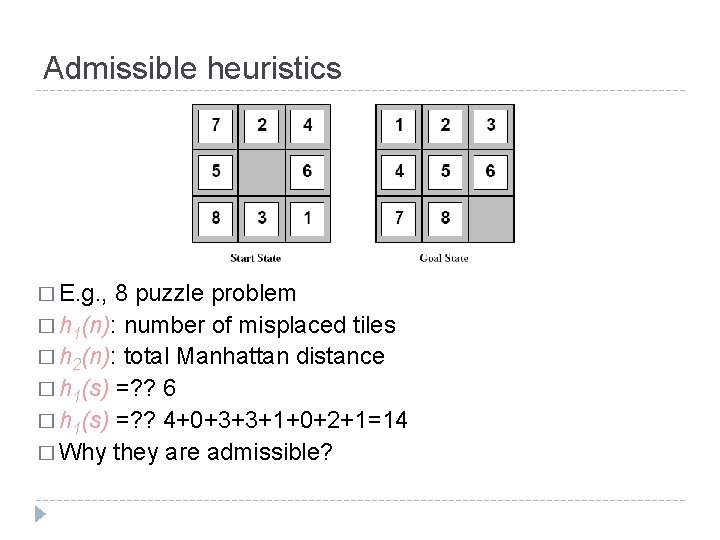

Admissible heuristics � E. g. , 8 puzzle problem � h 1(n): number of misplaced tiles � h 2(n): total Manhattan distance � h 1(s) =? ? 6 � h 1(s) =? ? 4+0+3+3+1+0+2+1=14 � Why they are admissible?

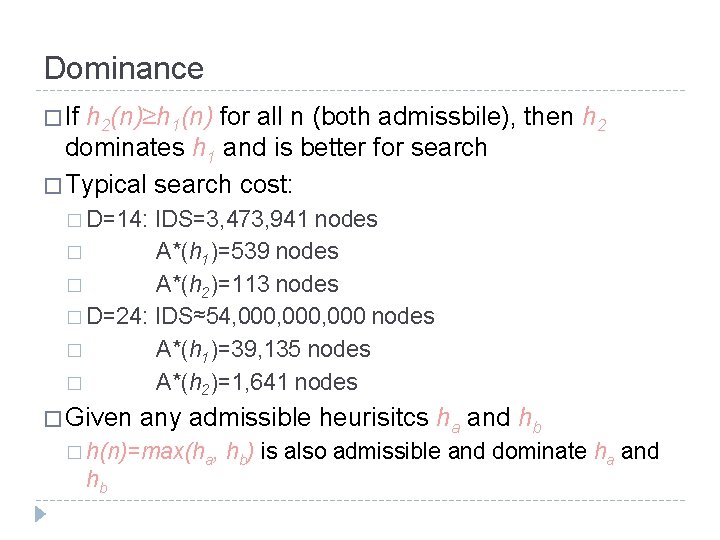

Dominance � If h 2(n)≥h 1(n) for all n (both admissbile), then h 2 dominates h 1 and is better for search � Typical search cost: � D=14: IDS=3, 473, 941 nodes � A*(h 1)=539 nodes � A*(h 2)=113 nodes � D=24: IDS≈54, 000, 000 nodes � A*(h 1)=39, 135 nodes � A*(h 2)=1, 641 nodes � Given any admissible heurisitcs ha and hb � h(n)=max(ha, hb hb) is also admissible and dominate ha and

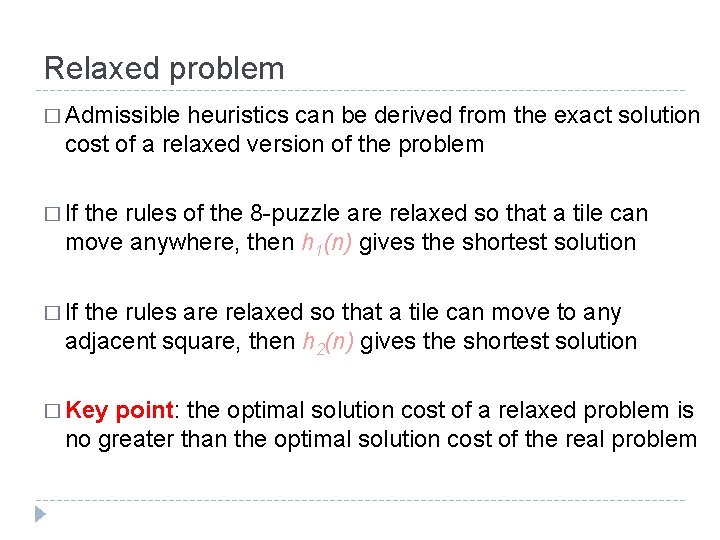

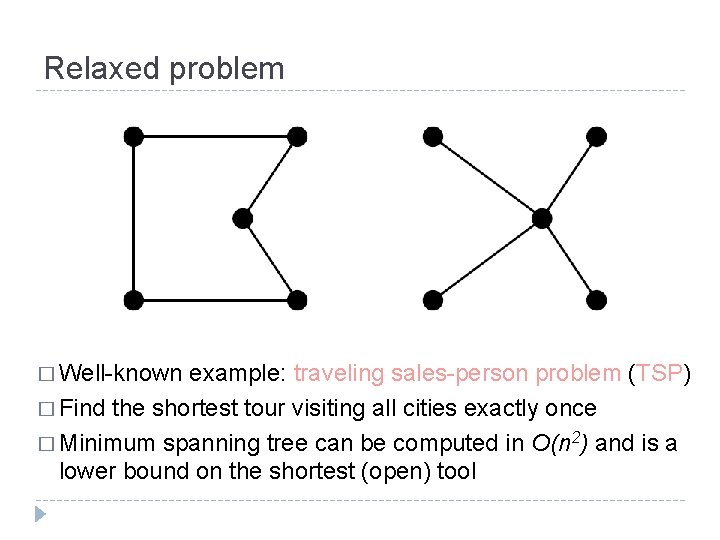

Relaxed problem � Admissible heuristics can be derived from the exact solution cost of a relaxed version of the problem � If the rules of the 8 -puzzle are relaxed so that a tile can move anywhere, then h 1(n) gives the shortest solution � If the rules are relaxed so that a tile can move to any adjacent square, then h 2(n) gives the shortest solution � Key point: the optimal solution cost of a relaxed problem is no greater than the optimal solution cost of the real problem

Relaxed problem � Well-known example: traveling sales-person problem (TSP) � Find the shortest tour visiting all cities exactly once � Minimum spanning tree can be computed in O(n 2) and is a lower bound on the shortest (open) tool

Summary � Heuristic functions estimate costs of shortest paths � Good heuristics can dramatically reduce search cost � Greedy best-first search expands lowest h � Incomplete and not always optimal � A* search expands lowest g + h complete and optimal � also optimally efficient (up to tie-breaks, forward search) � � Admissible heuristics can be derived from exact solution of relaxed problems

Local search algorithms Beyond classical search

Iterative improvement algorithms � In many optimization problems, path is irrelevant; � The goal state itself is the solution � Then state space = set of "complete" configurations; Find optimal configuration, e. g. , TSP � Or, find configuration satisfying constraints, e. g. , timetable � � In such cases, can use iterative improvement algorithms; � keep a single "current" state, try to improve it � Constant space, suitable for online as well as online search

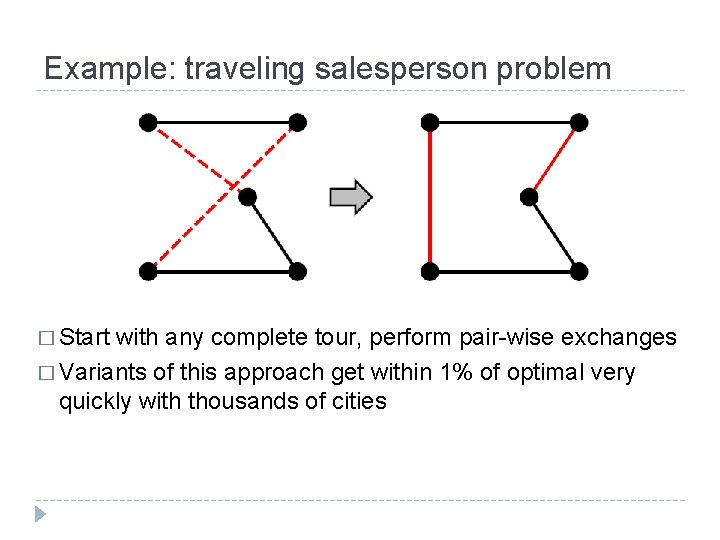

Example: traveling salesperson problem � Start with any complete tour, perform pair-wise exchanges � Variants of this approach get within 1% of optimal very quickly with thousands of cities

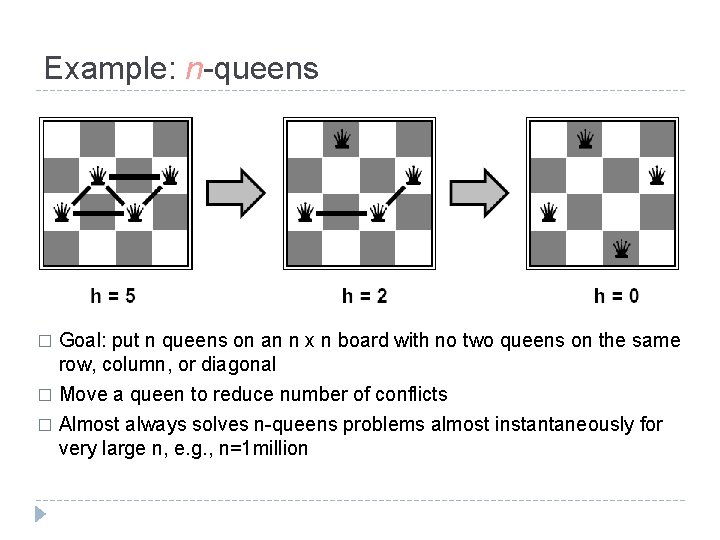

Example: n-queens � Goal: put n queens on an n x n board with no two queens on the same row, column, or diagonal Move a queen to reduce number of conflicts � Almost always solves n-queens problems almost instantaneously for very large n, e. g. , n=1 million �

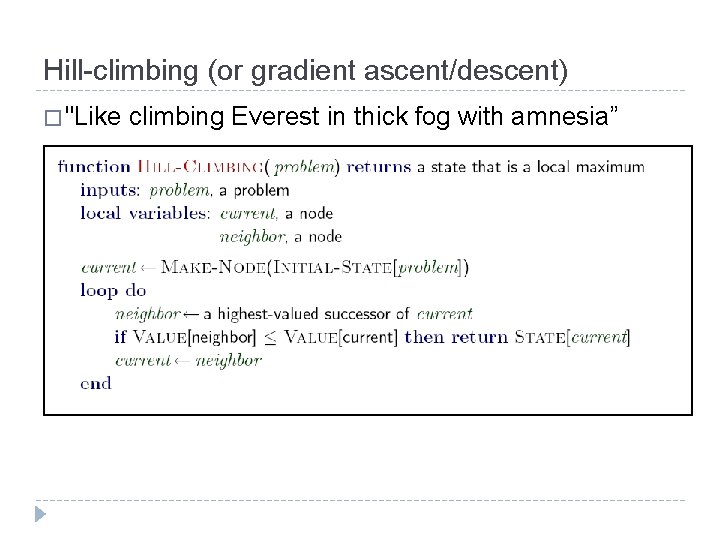

Hill-climbing (or gradient ascent/descent) � "Like climbing Everest in thick fog with amnesia”

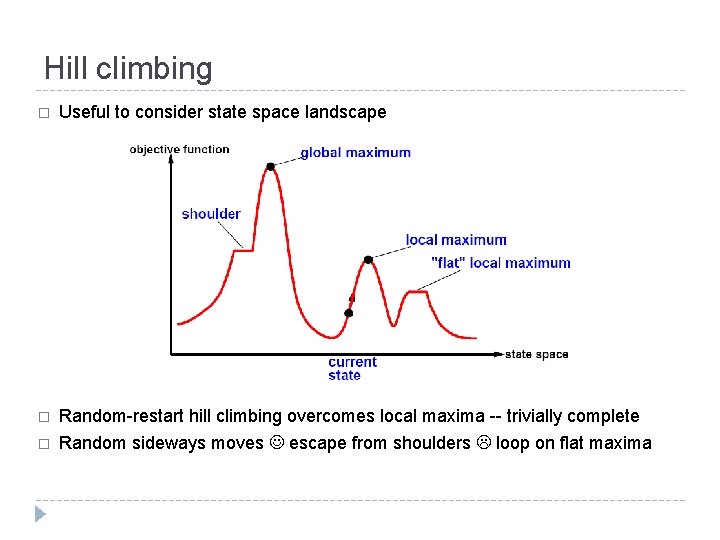

Hill climbing � Useful to consider state space landscape � Random-restart hill climbing overcomes local maxima -- trivially complete � Random sideways moves escape from shoulders loop on flat maxima

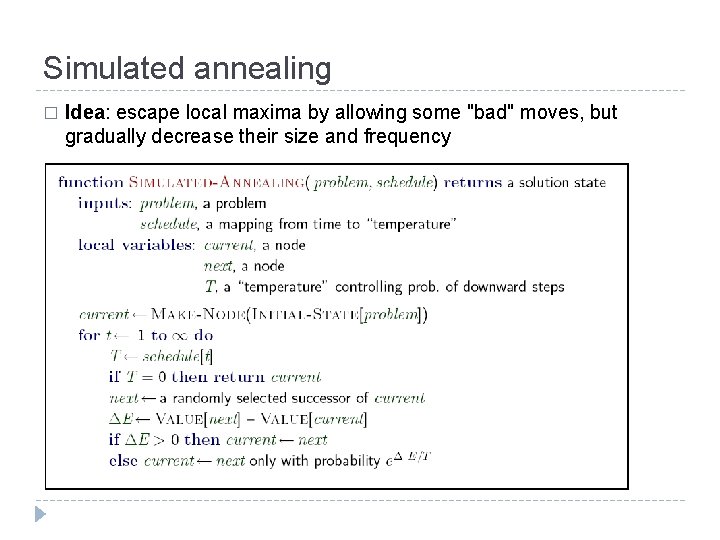

Simulated annealing � Idea: escape local maxima by allowing some "bad" moves, but gradually decrease their size and frequency

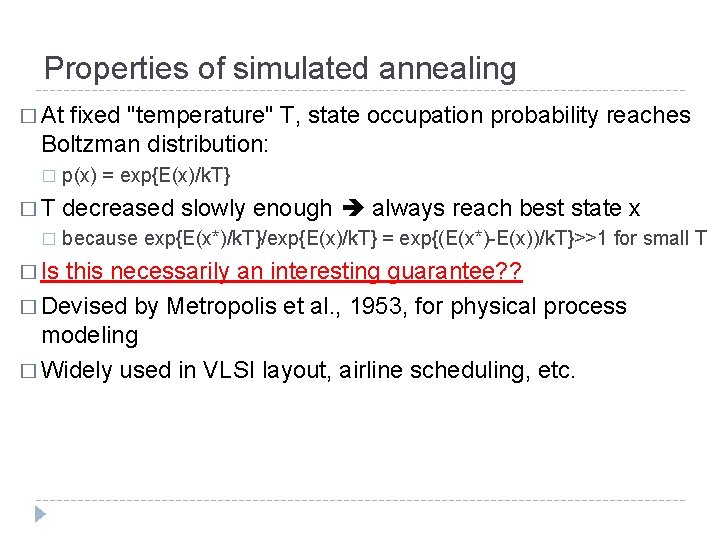

Properties of simulated annealing � At fixed "temperature" T, state occupation probability reaches Boltzman distribution: � p(x) = exp{E(x)/k. T} � T decreased slowly enough always reach best state x � because exp{E(x*)/k. T}/exp{E(x)/k. T} = exp{(E(x*)-E(x))/k. T}>>1 for small T � Is this necessarily an interesting guarantee? ? � Devised by Metropolis et al. , 1953, for physical process modeling � Widely used in VLSI layout, airline scheduling, etc.

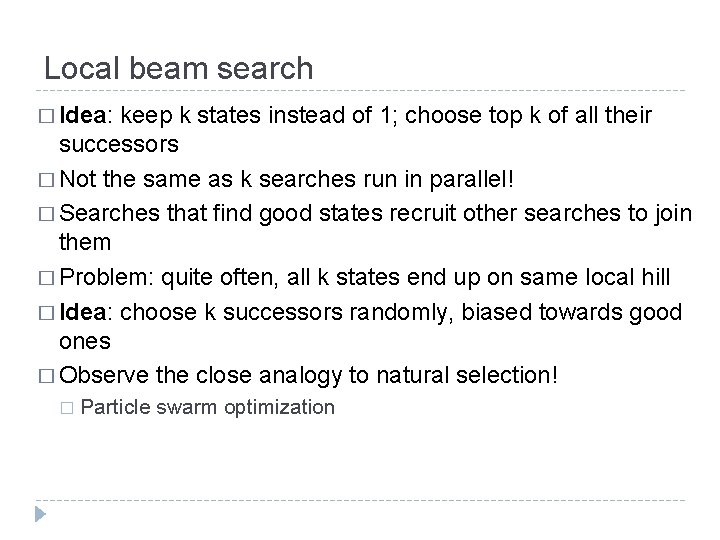

Local beam search � Idea: keep k states instead of 1; choose top k of all their successors � Not the same as k searches run in parallel! � Searches that find good states recruit other searches to join them � Problem: quite often, all k states end up on same local hill � Idea: choose k successors randomly, biased towards good ones � Observe the close analogy to natural selection! � Particle swarm optimization

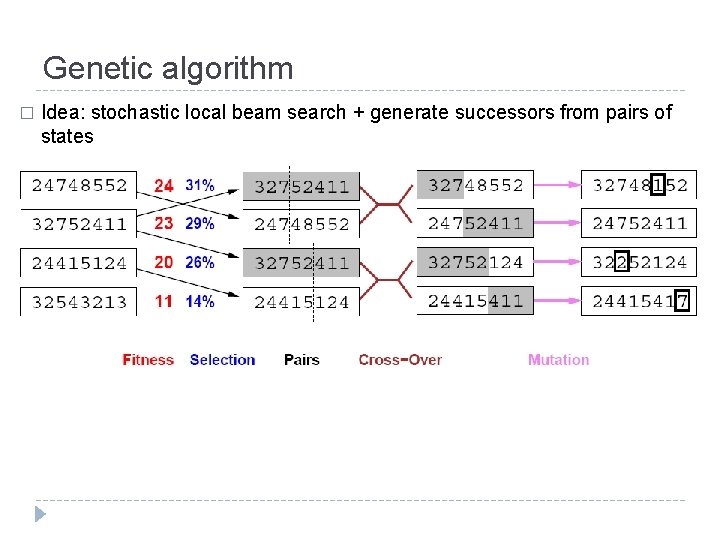

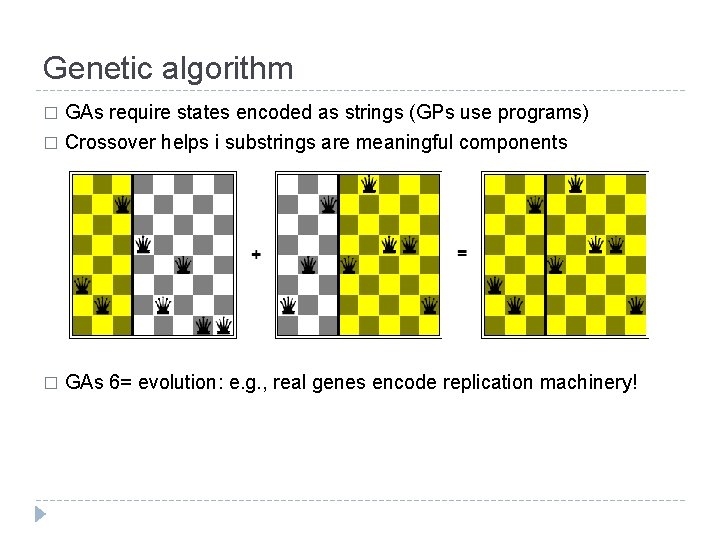

Genetic algorithm � Idea: stochastic local beam search + generate successors from pairs of states

Genetic algorithm GAs require states encoded as strings (GPs use programs) � Crossover helps i substrings are meaningful components � � GAs 6= evolution: e. g. , real genes encode replication machinery!

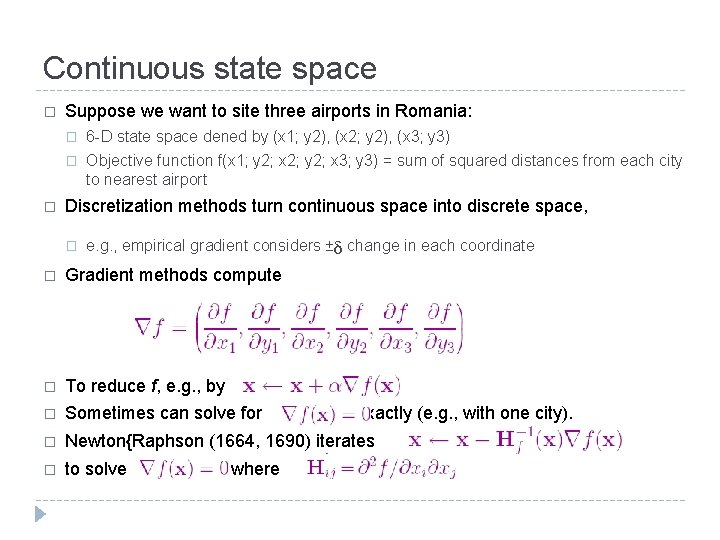

Continuous state space � � Suppose we want to site three airports in Romania: � 6 -D state space dened by (x 1; y 2), (x 2; y 2), (x 3; y 3) � Objective function f(x 1; y 2; x 2; y 2; x 3; y 3) = sum of squared distances from each city to nearest airport Discretization methods turn continuous space into discrete space, � ᵟ e. g. , empirical gradient considers ± change in each coordinate � Gradient methods compute � To reduce f, e. g. , by � Sometimes can solve for exactly (e. g. , with one city). � Newton{Raphson (1664, 1690) iterates � to solve , where

Assignment Reading Chapter 1&2 � Reading Chapter 3&4 � � Required: � Program Outcome 31. 5: Given a search problem, I am able to analyze and formalize the problem (as a state space, graph, etc. ) and select the appropriate search method � � Program Outcome 31. 11: I am able to find appropriate idealizations for converting real world problems into AI search problems formulated using the appropriate search algorithm. � � Problem 3: Russel & Norvig Book Problem 3. 3 (2 points) Program outcome 31. 9: I am able to explain important search concepts, such as the difference between informed and uninformed search, the definitions of admissible and consistent heuristics and completeness and optimality. I am able to give the time and space complexities for standard search algorithms, and write the algorithm for it. � � Problem 2: Russel & Norvig Book Problem 3. 2 (2 points) Program outcome 31. 12: I am able to implement A* and iterative deepening search. I am able to derive heuristic functions for A* search that are appropriate for a given problem. � � Problem 1: Russel & Norvig Book Problem 3. 9 (programming needed) (4 points) Problem 4: Russel & Norvig Book Problem 3. 18 (2 points) Optional bonus you can gain: � Russel & Norvig Book Problem 4. 3 � � Outlining algorithm, 1 piont Real programming, 3 point

- Slides: 93