CS 430 INFO 430 Information Retrieval Lecture 3

- Slides: 29

CS 430 / INFO 430 Information Retrieval Lecture 3 Searching Full Text 3 1

Course Administration 2

Books on Information Retrieval

Ricardo Baeza-Yates and Berthier Ribeiro-Neto, Modern Information Retrieval, Addison Wesley, 1999. Covers most of the standard topics. Chapters vary in quality.

William B. Frakes and Ricardo Baeza-Yates, Information Retrieval: Data Structures and Algorithms, Prentice Hall, 1992. Good coverage of algorithms, but out of date. Several good chapters.

G. Salton and M. J. McGill, Introduction to Modern Information Retrieval, McGraw-Hill, 1983. The classic description of the underlying methods. 3

Books on Information Retrieval

Amy Langville and Carl Meyer, Google's PageRank and Beyond: The Science of Search Engine Rankings, Princeton University Press, 2006. A mathematical treatment of link-based methods with summaries for the non-mathematician. 4

Observations from Discussion Class 1

• The quality of a search service can be no better than the quality of information in the underlying document collection.
• The interface presented to a trained user can offer more features than the interface presented to an untrained user.
• Very different methods are needed for searching a full text index of online materials (which are immediately available) and a catalog of physical items (which must be retrieved).
• Subject headings are a form of controlled vocabulary that can be very effective in specialized domains, e.g., medicine. 5

From the Library of Congress FAQ

13. How does relevance ranking work and where is it available?

The results of a Keyword search on the "Basic Search" screen are displayed in order of their relevance to your search. In the Title List following a Keyword search, the display will include a column with a "Relevance" indicator; the most relevant items display first. The relevance is determined by three factors:

* Uniqueness of search terms within the database.
* Proximity of search terms to each other within the catalog record.
* Number of different search terms present in a catalog record. 6

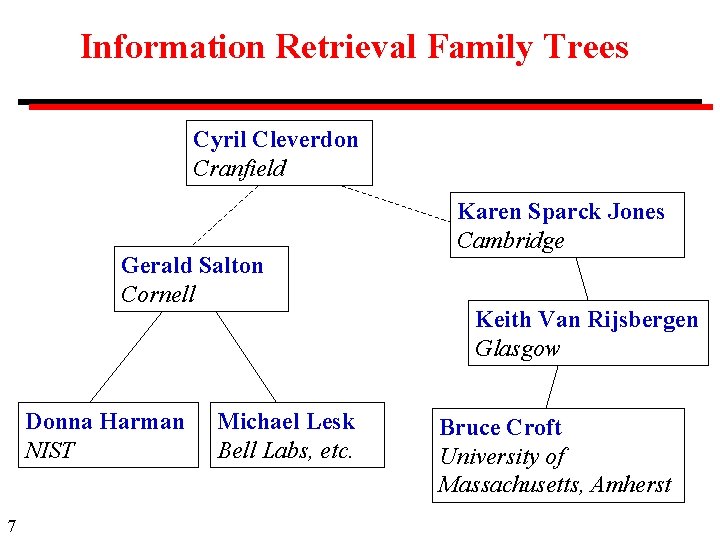

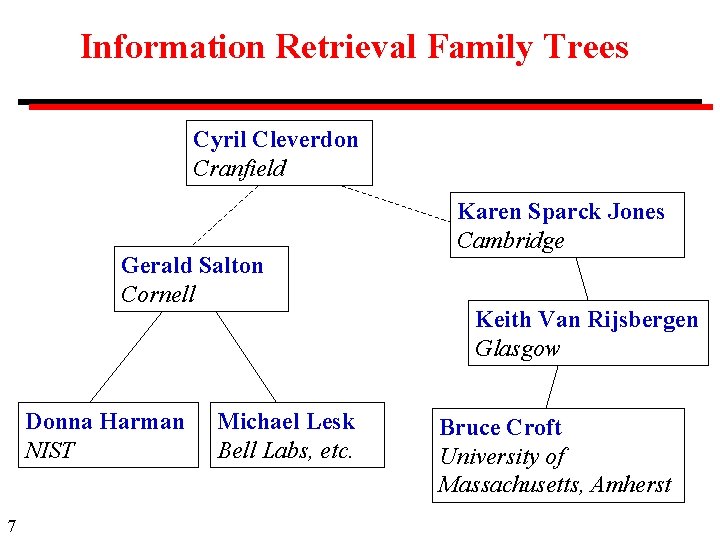

Information Retrieval Family Trees

• Cyril Cleverdon (Cranfield)
• Gerald Salton (Cornell)
• Donna Harman (NIST)
• Michael Lesk (Bell Labs, etc.)
• Karen Sparck Jones (Cambridge)
• Keith Van Rijsbergen (Glasgow)
• Bruce Croft (University of Massachusetts, Amherst) 7

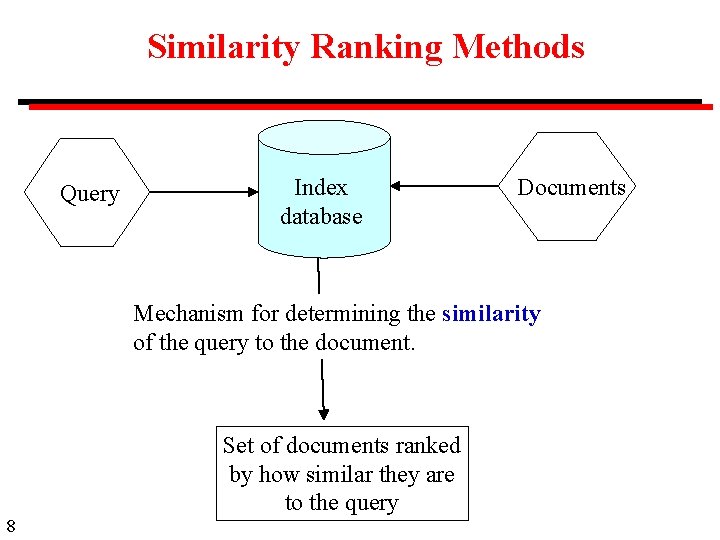

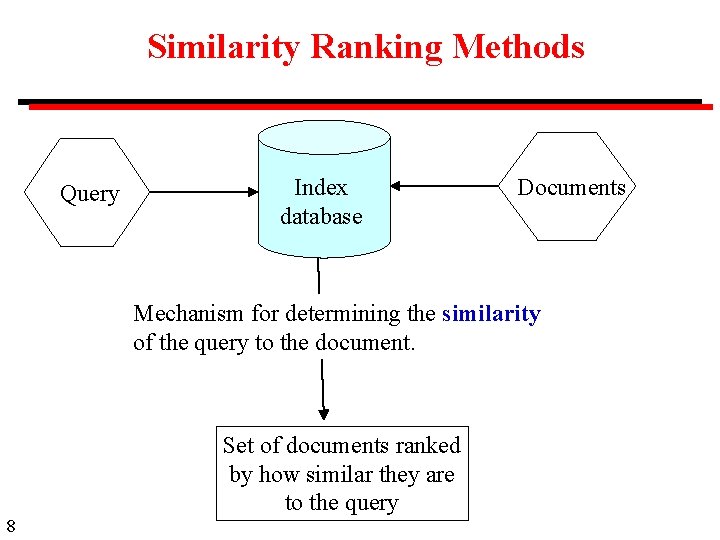

Similarity Ranking Methods

[Diagram: the query and the index database of documents are inputs to a mechanism for determining the similarity of the query to each document. The output is a set of documents ranked by how similar they are to the query.] 8

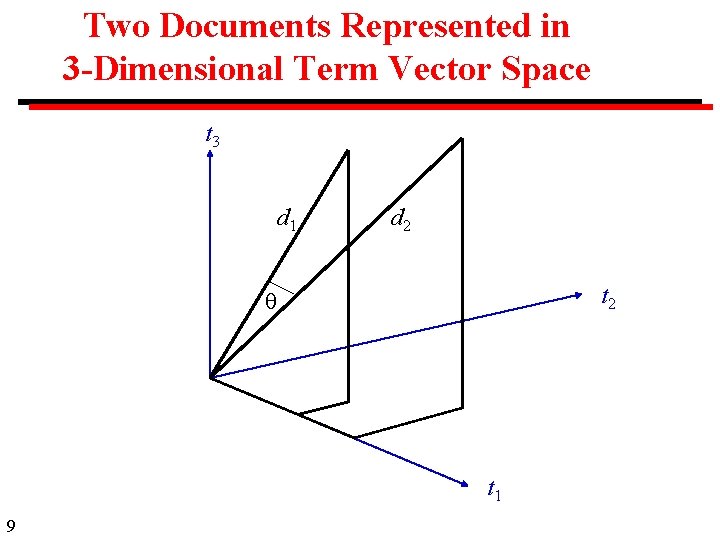

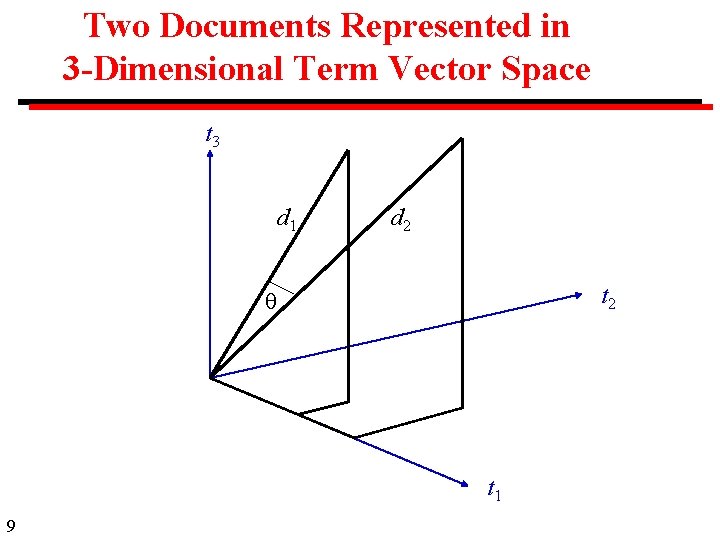

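Two Documents Represented in 3-Dimensional Term Vector Space

[Figure: documents d1 and d2 drawn as vectors in a space whose axes are terms; the axis labels t1 and t3 survive in the extracted text.] 9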

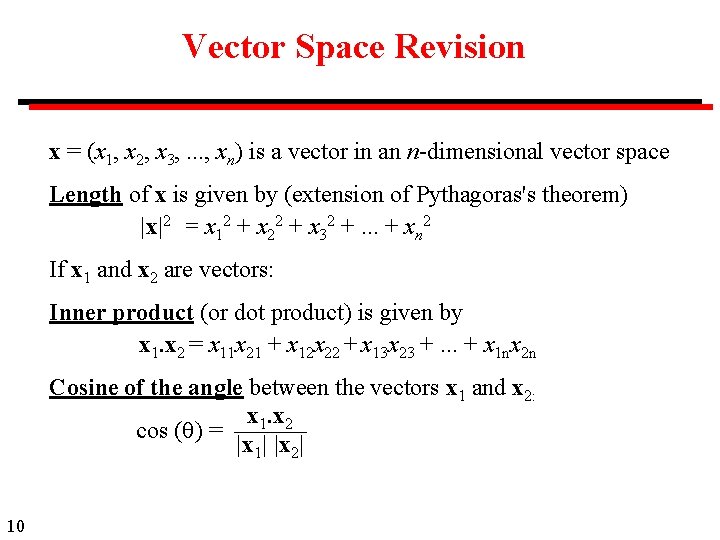

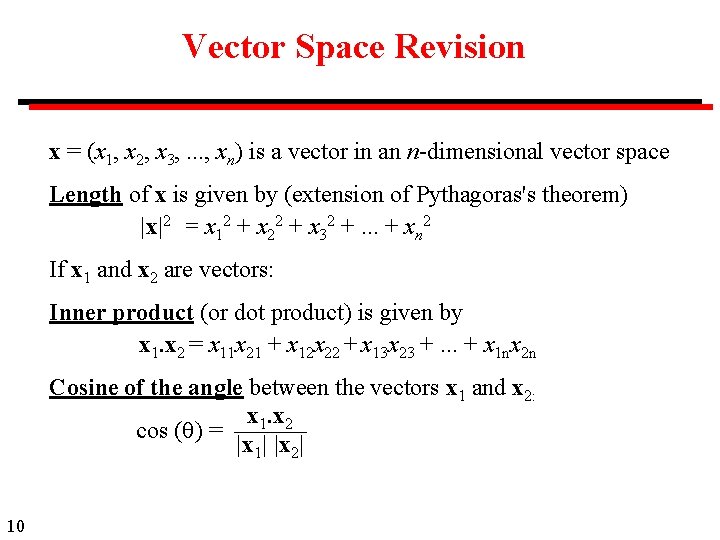

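Vector Space Revision

$\mathbf{x} = (x_1, x_2, x_3, \ldots, x_n)$ is a vector in an n-dimensional vector space.

The length of $\mathbf{x}$ is given by (extension of Pythagoras's theorem):

$$|\mathbf{x}|^2 = x_1^2 + x_2^2 + x_3^2 + \cdots + x_n^2$$

If $\mathbf{x}_1$ and $\mathbf{x}_2$ are vectors, the inner product (or dot product) is given by:

$$\mathbf{x}_1 \cdot \mathbf{x}_2 = x_{11} x_{21} + x_{12} x_{22} + x_{13} x_{23} + \cdots + x_{1n} x_{2n}$$

The cosine of the angle $\theta$ between the vectors $\mathbf{x}_1$ and $\mathbf{x}_2$ is:

$$\cos(\theta) = \frac{\mathbf{x}_1 \cdot \mathbf{x}_2}{|\mathbf{x}_1| \, |\mathbf{x}_2|}$$

10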
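The three definitions on this slide (length, inner product, cosine) can be sketched in a few lines of Python. The function names `dot`, `length` and `cosine` are illustrative choices, not taken from the lecture, and the sketch assumes both vectors are non-zero and have the same number of components.

```python
import math

def dot(x, y):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(x, y))

def length(x):
    """Euclidean length: square root of the sum of squared components."""
    return math.sqrt(dot(x, x))

def cosine(x, y):
    """Cosine of the angle between x and y (both assumed non-zero)."""
    return dot(x, y) / (length(x) * length(y))

# Two vectors 45 degrees apart: the cosine is 1/sqrt(2), about 0.707.
print(cosine([1.0, 0.0, 0.0], [1.0, 1.0, 0.0]))
```

Identical directions give a cosine of 1 and vectors with no shared non-zero component give 0, which is why the measure is convenient for comparing term vectors.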

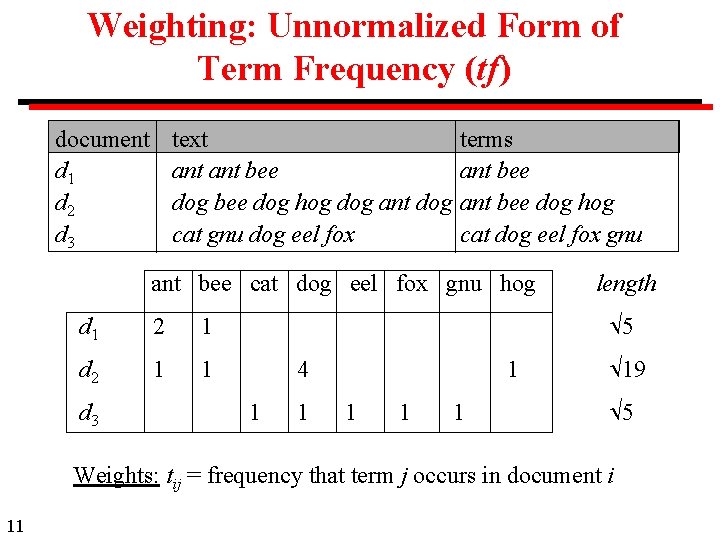

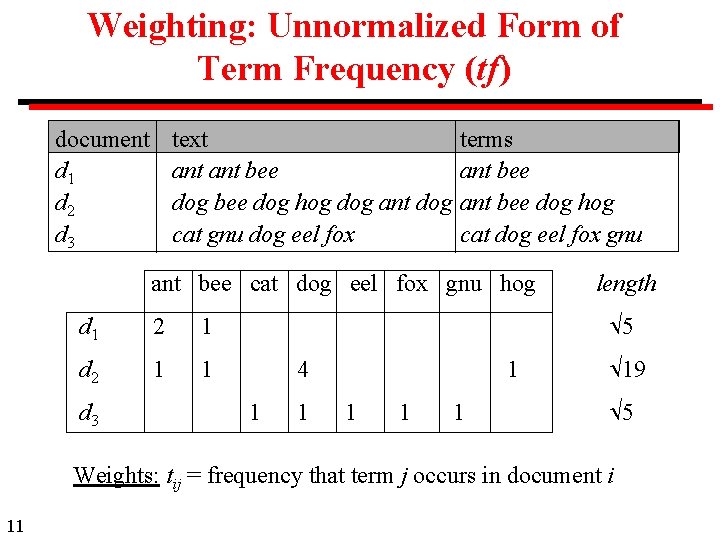

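Weighting: Unnormalized Form of Term Frequency (tf)

| document | text | terms |
|---|---|---|
| d1 | ant ant bee | ant bee |
| d2 | dog bee dog hog dog ant dog | ant bee dog hog |
| d3 | cat gnu dog eel fox | cat dog eel fox gnu |

| | ant | bee | cat | dog | eel | fox | gnu | hog | length |
|---|---|---|---|---|---|---|---|---|---|
| d1 | 2 | 1 | | | | | | | √5 |
| d2 | 1 | 1 | | 4 | | | | 1 | √19 |
| d3 | | | 1 | 1 | 1 | 1 | 1 | | √5 |

Weights: $t_{ij}$ = number of times that term j occurs in document i 11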
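As a rough sketch of how the unnormalized weights in this example could be computed, the code below counts terms with `collections.Counter`. The three document texts are my reading of the flattened example table and should be treated as an assumption; they are chosen so that the counts reproduce the lengths √5, √19 and √5 given on the slide.

```python
from collections import Counter

# Document texts as reconstructed from the example table (an assumption).
texts = {
    "d1": "ant ant bee",
    "d2": "dog bee dog hog dog ant dog",
    "d3": "cat gnu dog eel fox",
}

# Unnormalized term frequency: t_ij = number of times term j occurs in document i.
tf = {doc: Counter(text.split()) for doc, text in texts.items()}

# Squared vector length is the sum of the squared counts.
squared_length = {
    doc: sum(count * count for count in counts.values())
    for doc, counts in tf.items()
}

print(squared_length)  # {'d1': 5, 'd2': 19, 'd3': 5}
```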

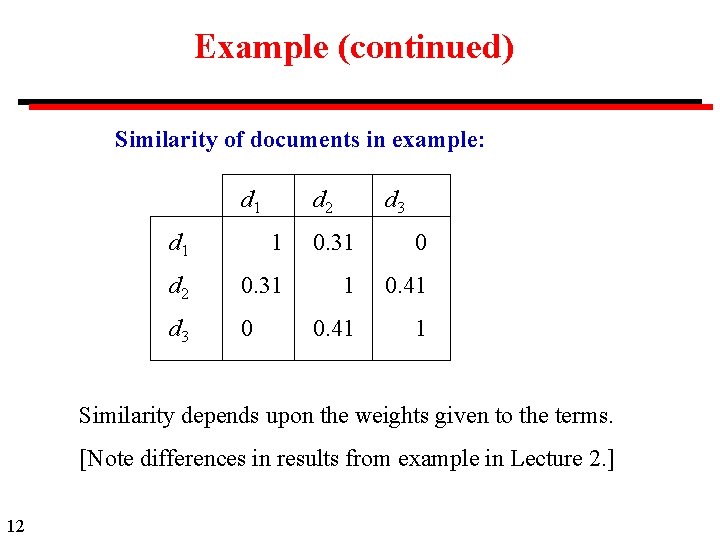

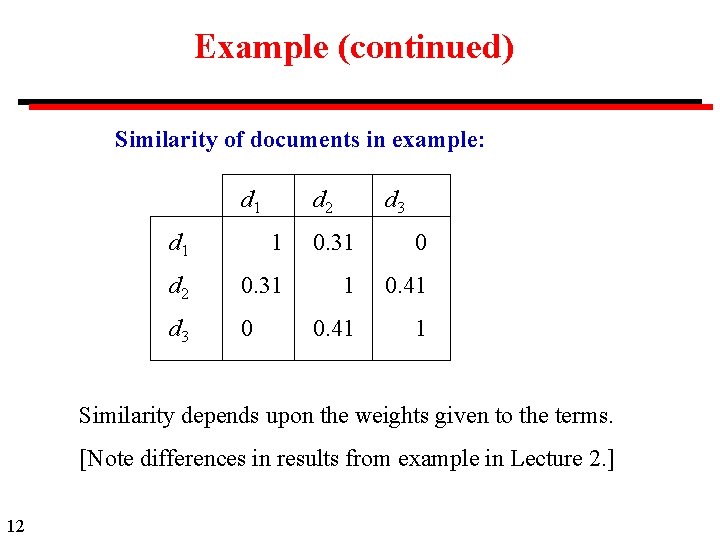

Example (continued)

Similarity of documents in example:

| | d1 | d2 | d3 |
|---|---|---|---|
| d1 | 1 | 0.31 | 0 |
| d2 | 0.31 | 1 | 0.41 |
| d3 | 0 | 0.41 | 1 |

Similarity depends upon the weights given to the terms. [Note differences in results from example in Lecture 2.] 12

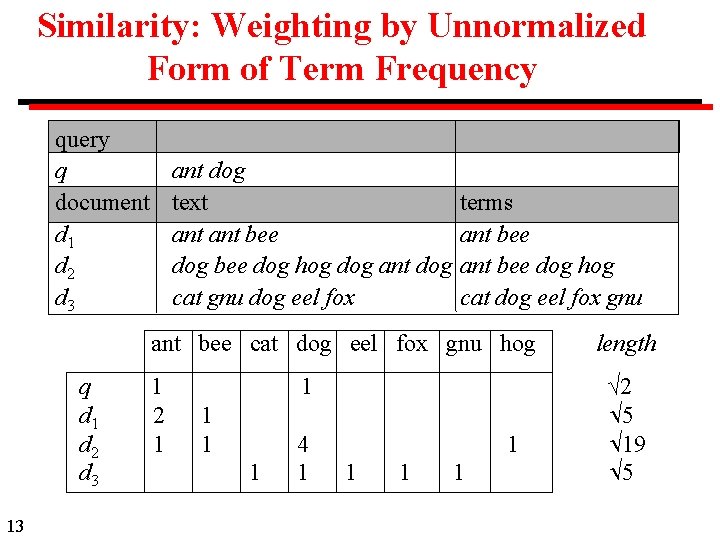

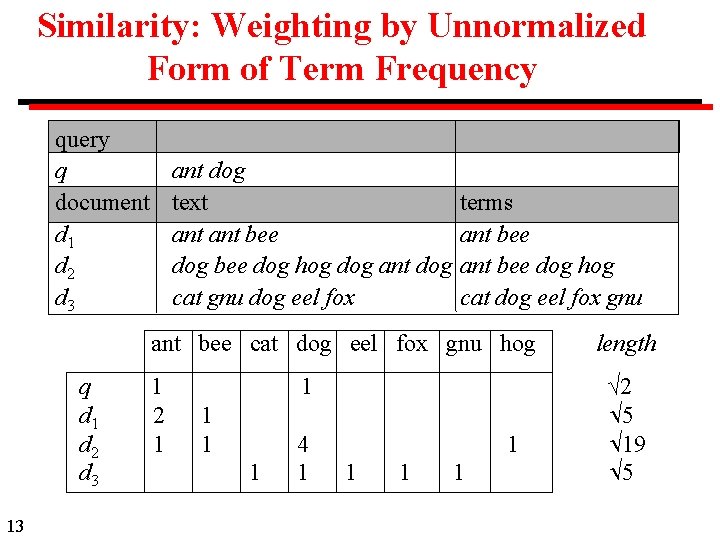

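Similarity: Weighting by Unnormalized Form of Term Frequency

| | text | terms |
|---|---|---|
| query q | ant dog | ant dog |
| d1 | ant ant bee | ant bee |
| d2 | dog bee dog hog dog ant dog | ant bee dog hog |
| d3 | cat gnu dog eel fox | cat dog eel fox gnu |

| | ant | bee | cat | dog | eel | fox | gnu | hog | length |
|---|---|---|---|---|---|---|---|---|---|
| q | 1 | | | 1 | | | | | √2 |
| d1 | 2 | 1 | | | | | | | √5 |
| d2 | 1 | 1 | | 4 | | | | 1 | √19 |
| d3 | | | 1 | 1 | 1 | 1 | 1 | | √5 |

13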

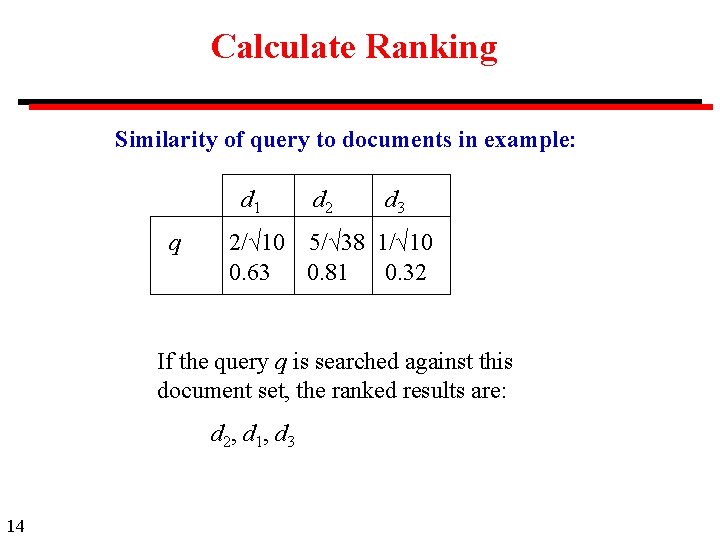

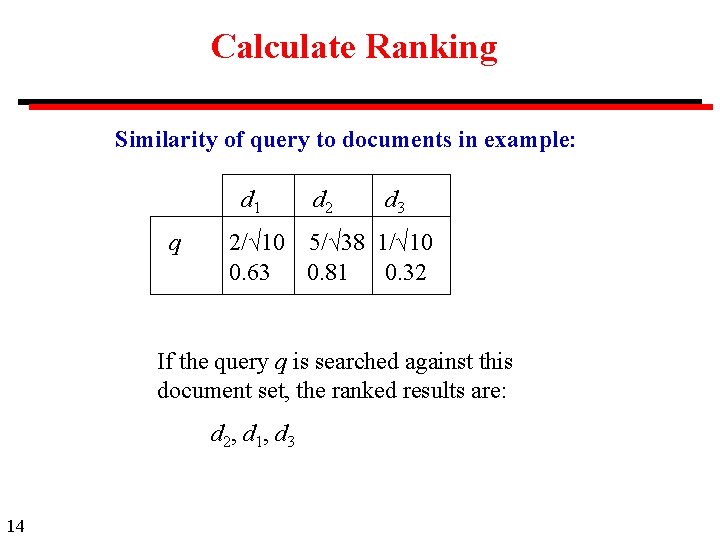

Calculate Ranking

Similarity of query to documents in example:

| | d1 | d2 | d3 |
|---|---|---|---|
| q | 2/√10 = 0.63 | 5/√38 = 0.81 | 1/√10 = 0.32 |

If the query q is searched against this document set, the ranked results are: d2, d1, d3 14
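The ranking can be checked with a short sketch that stores each vector as a dictionary of raw term counts and sorts the documents by cosine similarity to the query. The counts are the ones read from the example (q contains ant and dog once each); the helper name `cosine` and the sparse-dictionary layout are illustrative assumptions.

```python
import math

# Raw term counts for the query and the three example documents.
query = {"ant": 1, "dog": 1}
docs = {
    "d1": {"ant": 2, "bee": 1},
    "d2": {"ant": 1, "bee": 1, "dog": 4, "hog": 1},
    "d3": {"cat": 1, "dog": 1, "eel": 1, "fox": 1, "gnu": 1},
}

def cosine(u, v):
    """Cosine of the angle between two sparse term vectors (dicts)."""
    dot = sum(weight * v.get(term, 0) for term, weight in u.items())
    length_u = math.sqrt(sum(w * w for w in u.values()))
    length_v = math.sqrt(sum(w * w for w in v.values()))
    return dot / (length_u * length_v)

scores = {name: cosine(query, vec) for name, vec in docs.items()}
ranked = sorted(scores, key=scores.get, reverse=True)

print(ranked)  # ['d2', 'd1', 'd3']
print({name: round(score, 2) for name, score in scores.items()})
```

Only terms shared by the query and a document contribute to the inner product, so d3 scores above zero solely because it contains dog.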

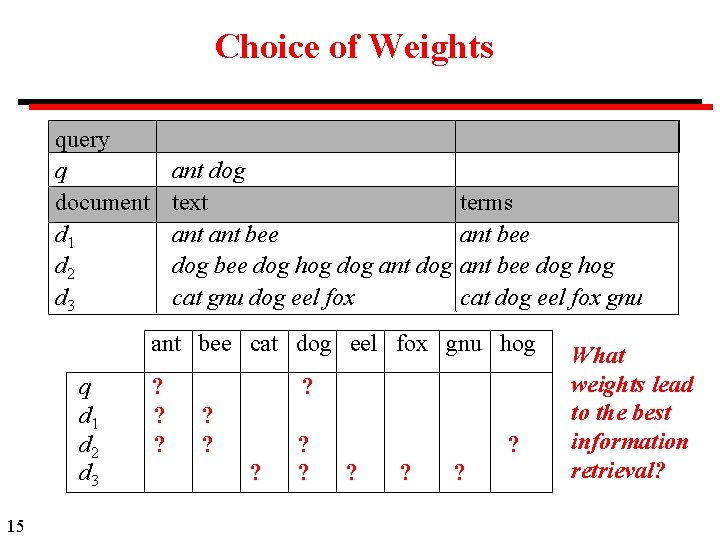

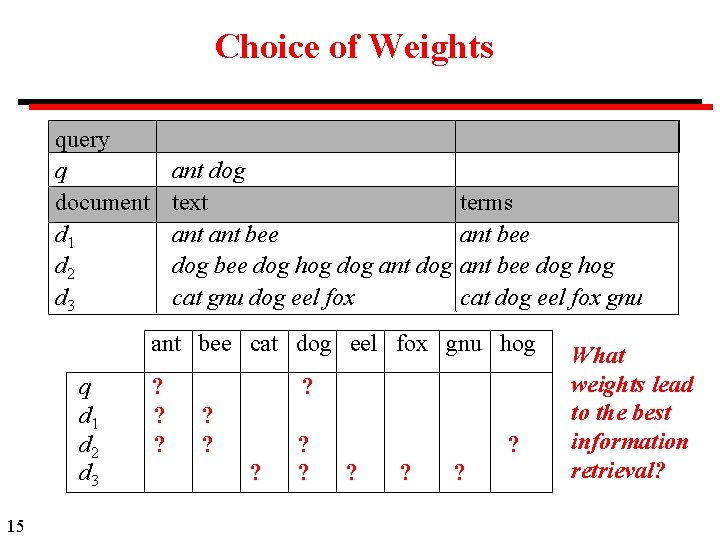

Choice of Weights

[Table: the same query q (ant dog) and documents d1, d2, d3 as in the preceding example, with the term weights for q, d1, d2 and d3 shown as "?".]

What weights lead to the best information retrieval? 15

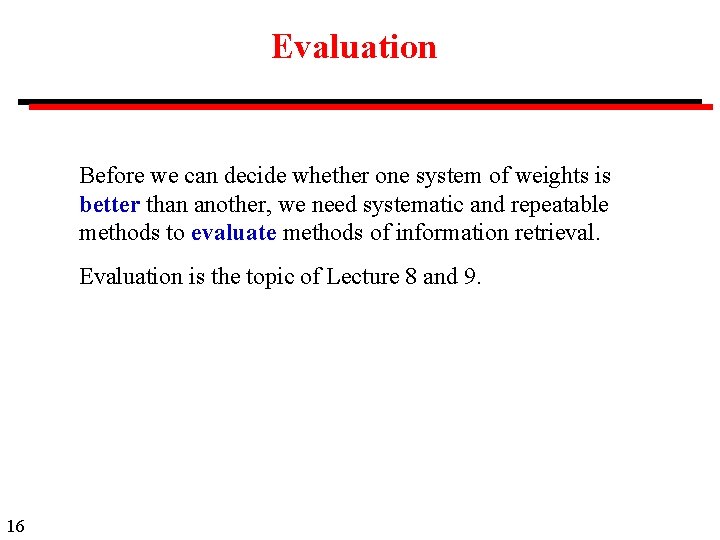

Evaluation

Before we can decide whether one system of weights is better than another, we need systematic and repeatable ways to evaluate methods of information retrieval. Evaluation is the topic of Lectures 8 and 9. 16

Methods for Selecting Weights

Empirical: Test a large number of possible weighting schemes with actual data. (This lecture, based on work of Salton, et al.)

Model based: Develop a mathematical model of word distribution and derive the weighting scheme theoretically. (Probabilistic model of information retrieval.) 17

Weighting Term Frequency (tf)

Suppose term j appears $f_{ij}$ times in document i. What weighting should be given to a term j?

Term Frequency: Concept

A term that appears many times within a document is likely to be more important than a term that appears only once. 18

Normalized Form of Term Frequency: Free-text Document

Length of document

The unnormalized method is to use $f_{ij}$ as the term frequency...

...but, in free-text documents, terms are likely to appear more often in long documents. Therefore $f_{ij}$ should be scaled by some variable related to document length. 19

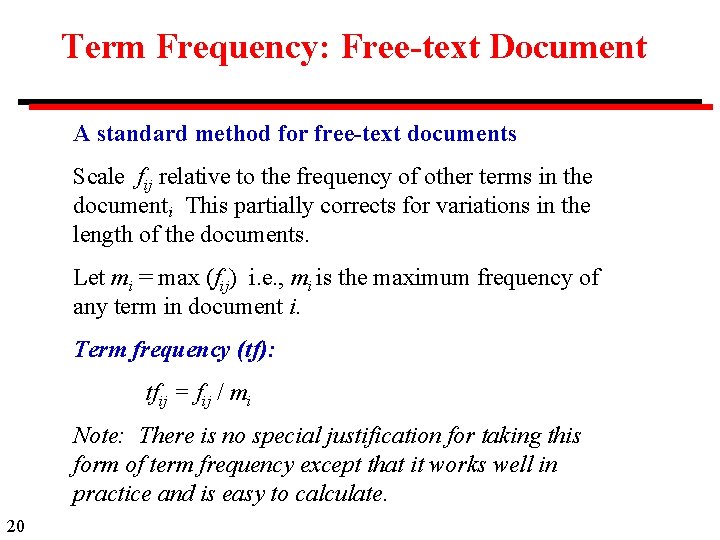

Term Frequency: Free-text Document

A standard method for free-text documents

Scale $f_{ij}$ relative to the frequency of other terms in the document. This partially corrects for variations in the length of the documents.

Let $m_i = \max_j f_{ij}$, i.e., $m_i$ is the maximum frequency of any term in document i.

Term frequency (tf):

$$tf_{ij} = \frac{f_{ij}}{m_i}$$

Note: There is no special justification for taking this form of term frequency except that it works well in practice and is easy to calculate. 20
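A minimal sketch of this normalization, assuming a document arrives as a non-empty list of tokens. The function name `normalized_tf` is hypothetical, and the sample text is an illustrative document rather than one prescribed by this slide.

```python
from collections import Counter

def normalized_tf(tokens):
    """Return tf_ij = f_ij / m_i for every term in one non-empty document."""
    counts = Counter(tokens)           # f_ij for each term j
    m_i = max(counts.values())         # maximum frequency of any term
    return {term: f_ij / m_i for term, f_ij in counts.items()}

# Illustrative document: "dog" occurs 4 times, every other term once.
tf_d2 = normalized_tf("dog bee dog hog dog ant dog".split())
print(tf_d2)  # {'dog': 1.0, 'bee': 0.25, 'hog': 0.25, 'ant': 0.25}
```

The most frequent term in a document always receives tf = 1, so documents of different lengths end up on a comparable scale.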

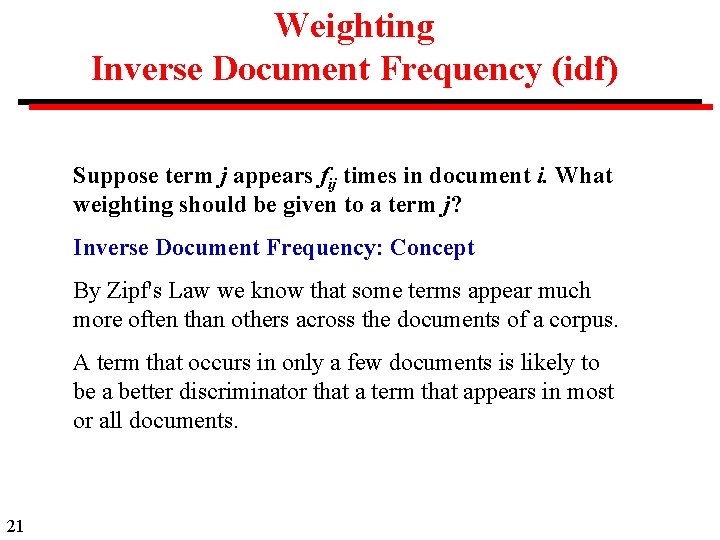

Weighting Inverse Document Frequency (idf)

Suppose term j appears $f_{ij}$ times in document i. What weighting should be given to a term j?

Inverse Document Frequency: Concept

By Zipf's Law we know that some terms appear much more often than others across the documents of a corpus. A term that occurs in only a few documents is likely to be a better discriminator than a term that appears in most or all documents. 21

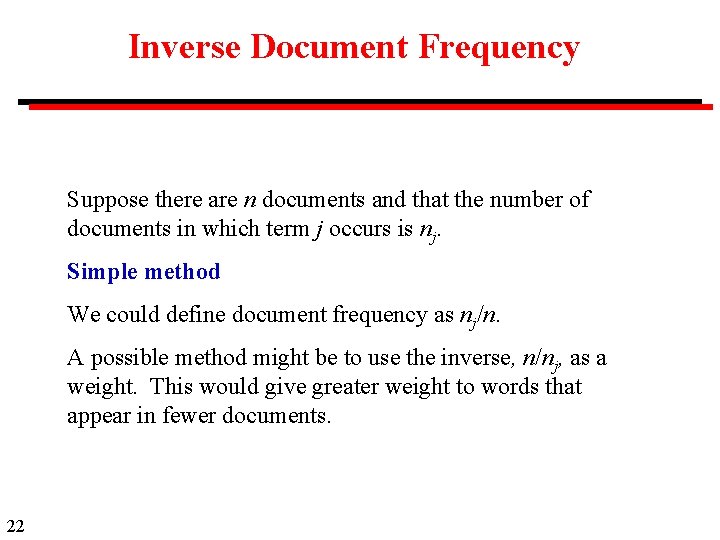

Inverse Document Frequency

Suppose there are n documents and that the number of documents in which term j occurs is $n_j$.

Simple method

We could define document frequency as $n_j / n$. A possible method might be to use the inverse, $n / n_j$, as a weight. This would give greater weight to words that appear in fewer documents. 22

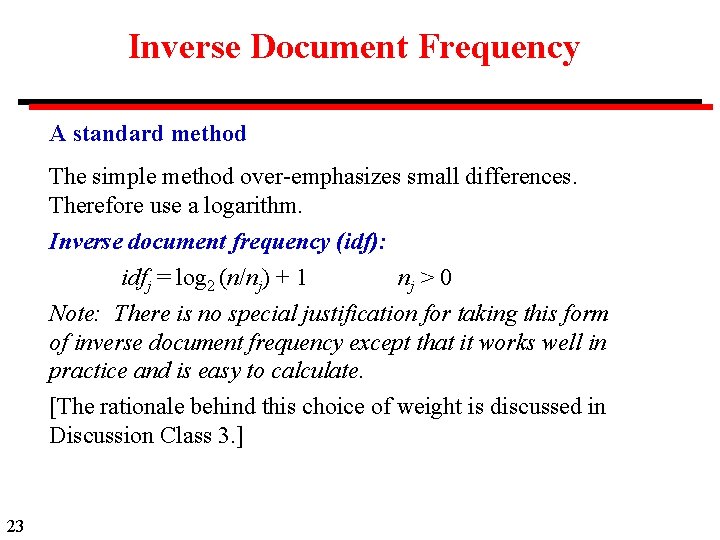

Inverse Document Frequency

A standard method

The simple method over-emphasizes small differences. Therefore use a logarithm.

Inverse document frequency (idf):

$$idf_j = \log_2\left(\frac{n}{n_j}\right) + 1, \qquad n_j > 0$$

Note: There is no special justification for taking this form of inverse document frequency except that it works well in practice and is easy to calculate. [The rationale behind this choice of weight is discussed in Discussion Class 3.] 23

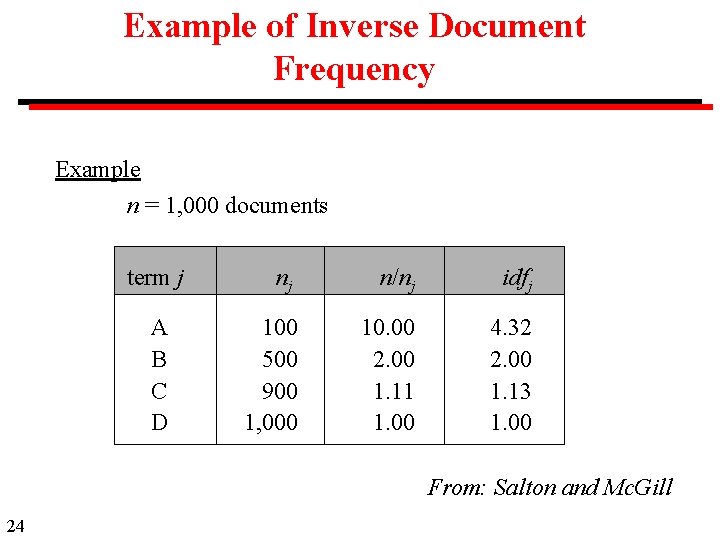

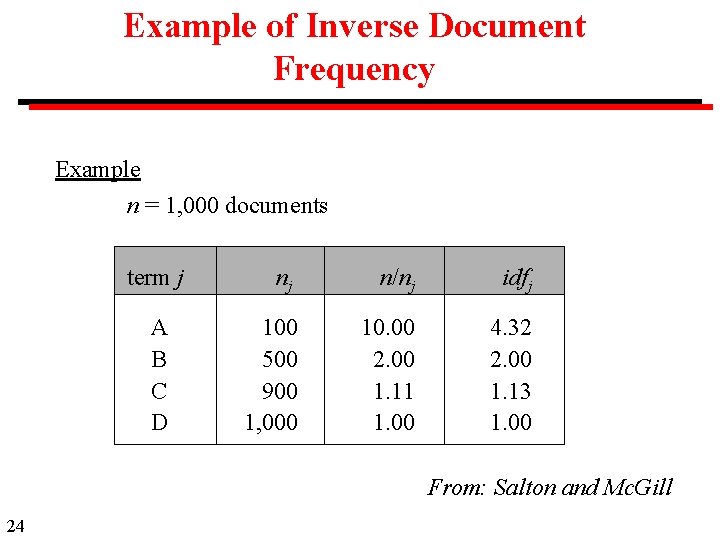

Example of Inverse Document Frequency

Example: n = 1,000 documents

| term j | $n_j$ | $n/n_j$ | $idf_j$ |
|---|---|---|---|
| A | 100 | 10.00 | 4.32 |
| B | 500 | 2.00 | 2.00 |
| C | 900 | 1.11 | 1.15 |
| D | 1,000 | 1.00 | 1.00 |

From: Salton and McGill 24
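The example values can be recomputed directly from the formula $idf_j = \log_2(n/n_j) + 1$. This is a small sketch with a hypothetical helper named `idf`; evaluated this way, term C comes out at about 1.15.

```python
import math

def idf(n, n_j):
    """Inverse document frequency: log2(n / n_j) + 1, defined for n_j > 0."""
    if n_j <= 0:
        raise ValueError("idf is only defined when the term occurs in at least one document")
    return math.log2(n / n_j) + 1

n = 1000  # number of documents in the example
for term, n_j in [("A", 100), ("B", 500), ("C", 900), ("D", 1000)]:
    print(term, n_j, round(n / n_j, 2), round(idf(n, n_j), 2))
```

A term that occurs in every document still gets a weight of 1 rather than 0, because of the added constant.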

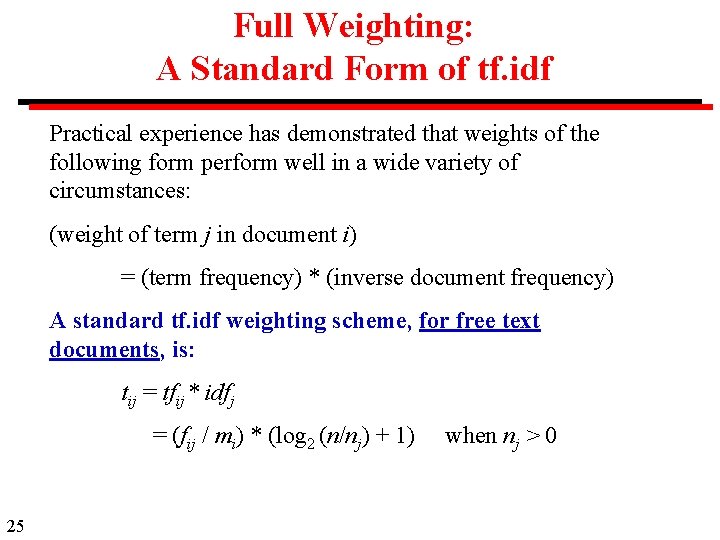

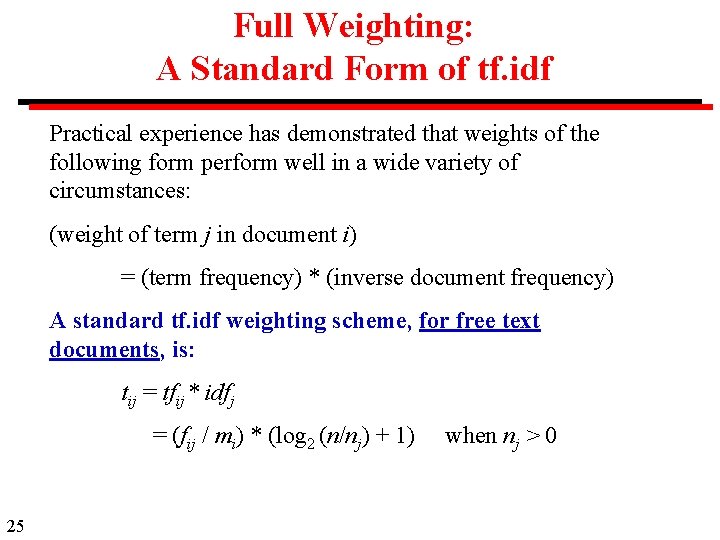

Full Weighting: A Standard Form of tf.idf

Practical experience has demonstrated that weights of the following form perform well in a wide variety of circumstances:

(weight of term j in document i) = (term frequency) * (inverse document frequency)

A standard tf.idf weighting scheme, for free text documents, is:

$$t_{ij} = tf_{ij} \cdot idf_j = \frac{f_{ij}}{m_i} \left( \log_2\left(\frac{n}{n_j}\right) + 1 \right) \quad \text{when } n_j > 0$$

25
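Putting the two factors together, the sketch below computes $t_{ij} = (f_{ij}/m_i)(\log_2(n/n_j) + 1)$ for a small collection. The function name `tfidf_weights` and the input layout (document id mapped to a non-empty token list) are assumptions made for illustration, and the three sample texts are my reconstruction of the earlier example documents.

```python
import math
from collections import Counter

def tfidf_weights(docs):
    """docs maps a document id to a non-empty list of tokens.
    Returns a dict of {document id: {term: tf.idf weight}}."""
    n = len(docs)
    counts = {name: Counter(tokens) for name, tokens in docs.items()}
    # n_j: number of documents in which term j occurs.
    doc_freq = Counter(term for c in counts.values() for term in c)
    weights = {}
    for name, c in counts.items():
        m_i = max(c.values())  # maximum frequency of any term in document i
        weights[name] = {
            term: (f_ij / m_i) * (math.log2(n / doc_freq[term]) + 1)
            for term, f_ij in c.items()
        }
    return weights

# Sample texts (reconstructed from the earlier example; an assumption).
docs = {
    "d1": "ant ant bee".split(),
    "d2": "dog bee dog hog dog ant dog".split(),
    "d3": "cat gnu dog eel fox".split(),
}
weights = tfidf_weights(docs)
for name, w in weights.items():
    print(name, {term: round(x, 2) for term, x in sorted(w.items())})
```

Terms that do not occur in a document are simply absent from its dictionary, which corresponds to a weight of zero.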

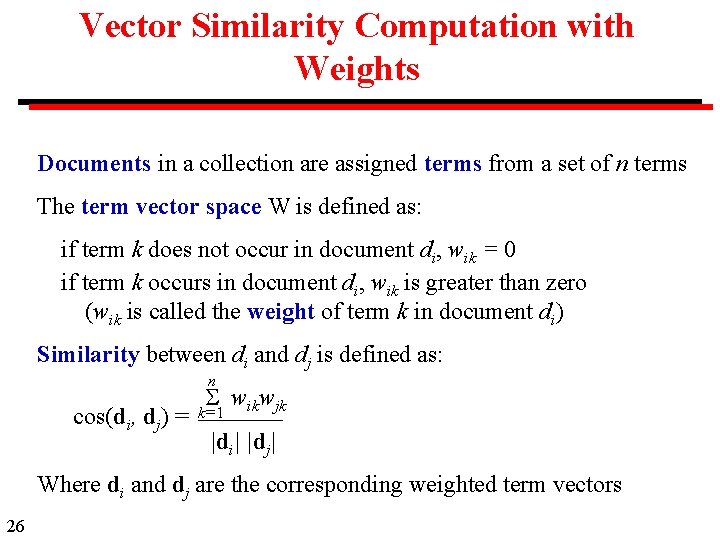

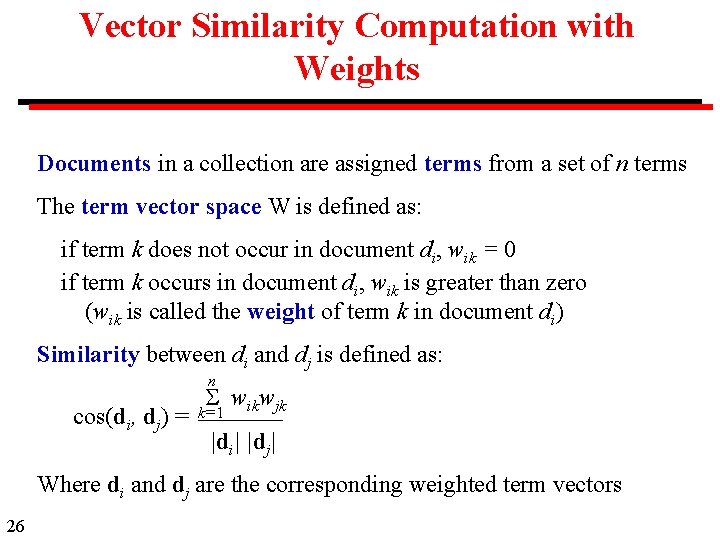

Vector Similarity Computation with Weights

Documents in a collection are assigned terms from a set of n terms.

The term vector space W is defined as:

• if term k does not occur in document $d_i$, $w_{ik} = 0$
• if term k occurs in document $d_i$, $w_{ik}$ is greater than zero ($w_{ik}$ is called the weight of term k in document $d_i$)

Similarity between $d_i$ and $d_j$ is defined as:

$$\cos(d_i, d_j) = \frac{\sum_{k=1}^{n} w_{ik} w_{jk}}{|d_i| \, |d_j|}$$

where $d_i$ and $d_j$ are the corresponding weighted term vectors. 26

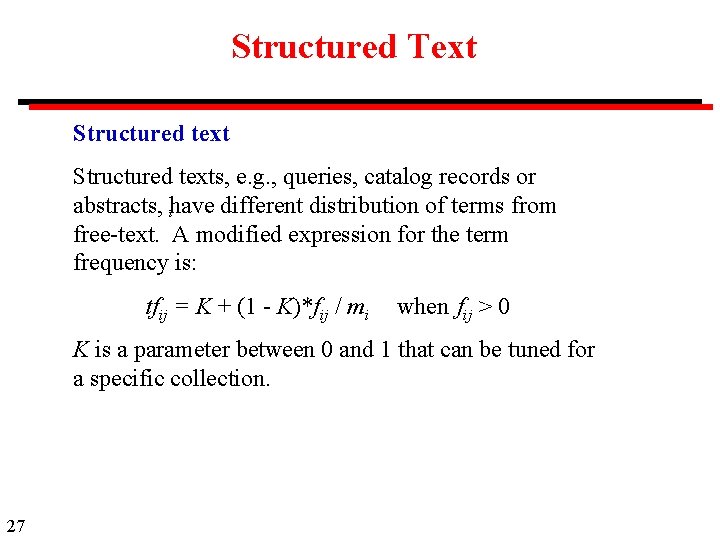

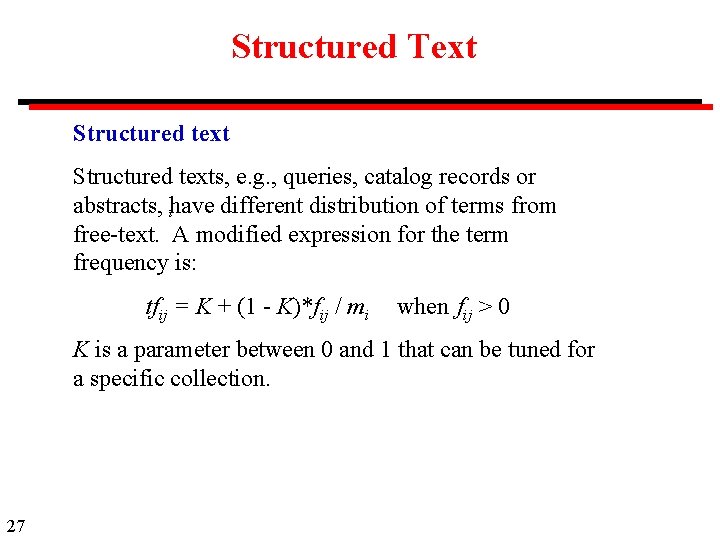

Structured Text

Structured texts, e.g., queries, catalog records or abstracts, have a different distribution of terms from free text. A modified expression for the term frequency is:

$$tf_{ij} = K + (1 - K) \frac{f_{ij}}{m_i} \quad \text{when } f_{ij} > 0$$

K is a parameter between 0 and 1 that can be tuned for a specific collection. 27
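A short sketch of the modified term frequency follows. The function name `structured_tf` and the default K = 0.5 are illustrative choices. Returning 0 for a term that does not occur is an assumption: it matches the convention that absent terms have zero weight, whereas the slide defines the formula only for $f_{ij} > 0$.

```python
def structured_tf(f_ij, m_i, K=0.5):
    """tf_ij = K + (1 - K) * f_ij / m_i for f_ij > 0, with 0 <= K <= 1."""
    if not 0 <= K <= 1:
        raise ValueError("K must lie between 0 and 1")
    if f_ij <= 0:
        return 0.0  # assumption: a term that does not occur gets zero weight
    return K + (1 - K) * f_ij / m_i

print(structured_tf(1, 4, K=0.5))  # 0.625
print(structured_tf(4, 4, K=0.5))  # 1.0
print(structured_tf(1, 4, K=0.0))  # 0.25
```

With K = 0 the expression reduces to the free-text form $f_{ij} / m_i$; larger values of K compress the range so that every term present receives a weight of at least K.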

Structured Text

Query

To weight terms in the query, Salton and Buckley recommend K equal to 0.5.

However, in practice it is rare for a term to be repeated in a query. Therefore the standard form of tf can be used, i.e., with K = 0 and m = 1. 28

Discussion of Similarity

This choice of similarity measure is widely used and works well on a wide range of documents, but has no theoretical basis.

1. There are several possible measures other than the angle between vectors.
2. There is a choice of possible definitions of tf and idf.
3. With fielded searching, there are various ways to adjust the weight given to each field.
4. The definitions on Slide 25 can be considered the standard. 29