CS 3700 Networks and Distributed Systems Distributed Consensus

CS 3700 Networks and Distributed Systems: Distributed Consensus and Fault Tolerance (or, why can’t we all just get along?)

Black Box Online Services
Storing and retrieving data from online services is commonplace
We tend to treat these services as black boxes
• Data goes in, we assume outputs are correct
• We have no idea how the service is implemented

![Black Box Online Services: debit_transaction(-$75) returns OK; get_recent_transactions() returns […, “-$75”, …]](http://slidetodoc.com/presentation_image_h/2fd9d1eff31746a53e5ffad78fbae250/image-3.jpg)

Example: debit_transaction(-$75) returns OK; get_recent_transactions() then returns […, “-$75”, …]

![Black Box Online Services: post_update(“I LOLed”) returns OK; get_newsfeed() returns […, {“txt”: “I LOLed”, “likes”: 87}]](http://slidetodoc.com/presentation_image_h/2fd9d1eff31746a53e5ffad78fbae250/image-4.jpg)

Example: post_update(“I LOLed”) returns OK; get_newsfeed() then returns […, {“txt”: “I LOLed”, “likes”: 87}]

Peeling Back the Curtain
How are large services implemented?
• Different types of services may have different requirements
• Leads to different design decisions

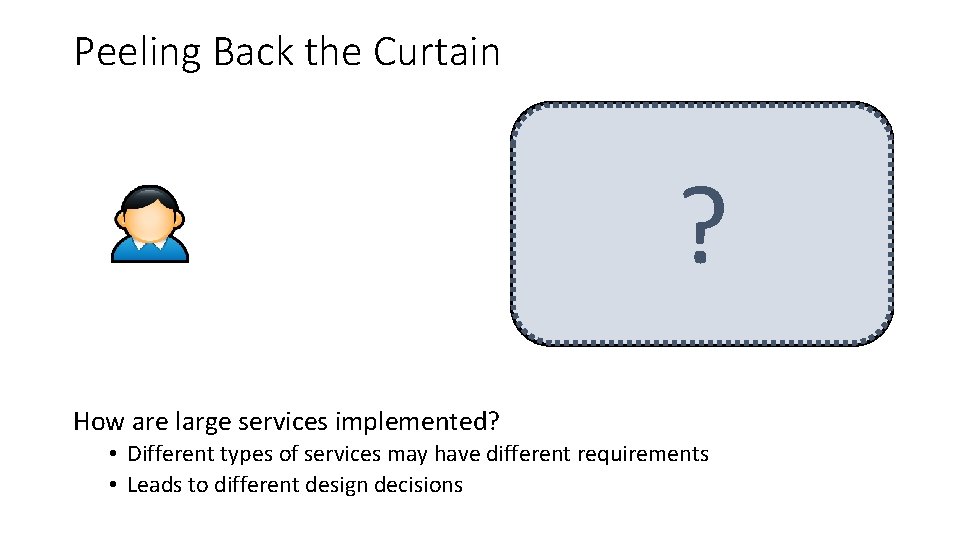

Centralization
(Figure: Bob's debit_transaction(-$75) and get_account_balance() requests both go to a single server, where his balance changes from $300 to $225.)
Advantages of centralization
• Easy to set up and deploy
• Consistency is guaranteed (assuming a correct software implementation)
Shortcomings
• No load balancing
• Single point of failure

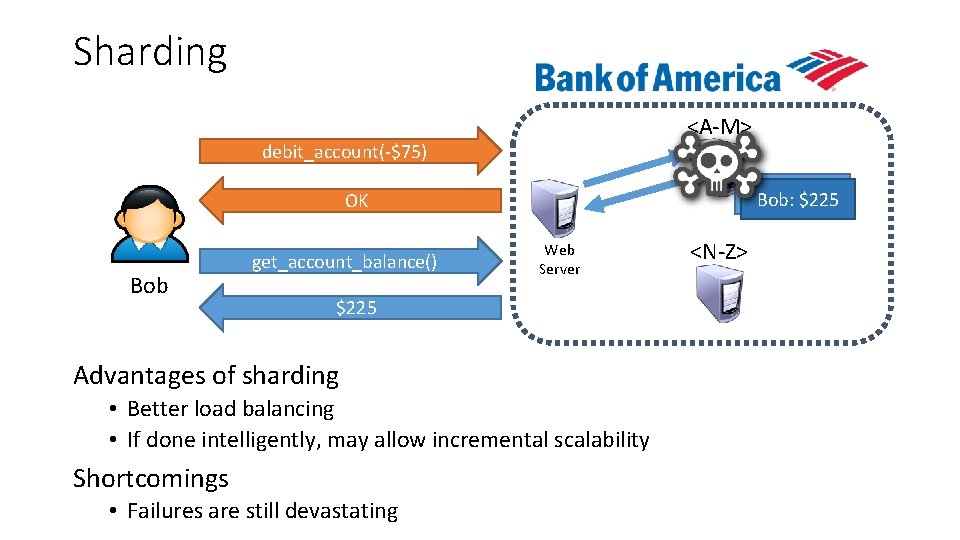

Sharding
(Figure: accounts are split across two servers by name, <A-M> and <N-Z>; a web server routes Bob's debit_account(-$75) to the <A-M> shard, where his balance changes from $300 to $225, and get_account_balance() returns $225.)
Advantages of sharding
• Better load balancing
• If done intelligently, may allow incremental scalability
Shortcomings
• Failures are still devastating
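A minimal sketch of the routing step in the figure, assuming (as the <A-M> and <N-Z> labels suggest) that accounts are sharded by the first letter of the owner's name. The shard map, `shard_for`, and the in-memory dictionaries are hypothetical stand-ins for real servers.

```python
# Hypothetical in-memory "servers", one per shard (the slide's <A-M> and <N-Z>).
SHARDS: dict[str, dict[str, int]] = {
    "A-M": {"Bob": 300},
    "N-Z": {},
}


def shard_for(name: str) -> str:
    """Route an account to a shard by the first letter of the owner's name."""
    first = name[0].upper()
    # Anything outside A-M (including non-letters) goes to the N-Z shard.
    return "A-M" if "A" <= first <= "M" else "N-Z"


def debit_account(name: str, amount: int) -> str:
    shard = SHARDS[shard_for(name)]  # the web server forwards to exactly one shard
    shard[name] = shard.get(name, 0) + amount
    return "OK"


def get_account_balance(name: str) -> int:
    return SHARDS[shard_for(name)].get(name, 0)


print(debit_account("Bob", -75))   # OK
print(get_account_balance("Bob"))  # 225
```

Because each account lives on exactly one shard, load is spread across servers, but if the <A-M> server fails, Bob's account is simply unreachable: the "failures are still devastating" shortcoming.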

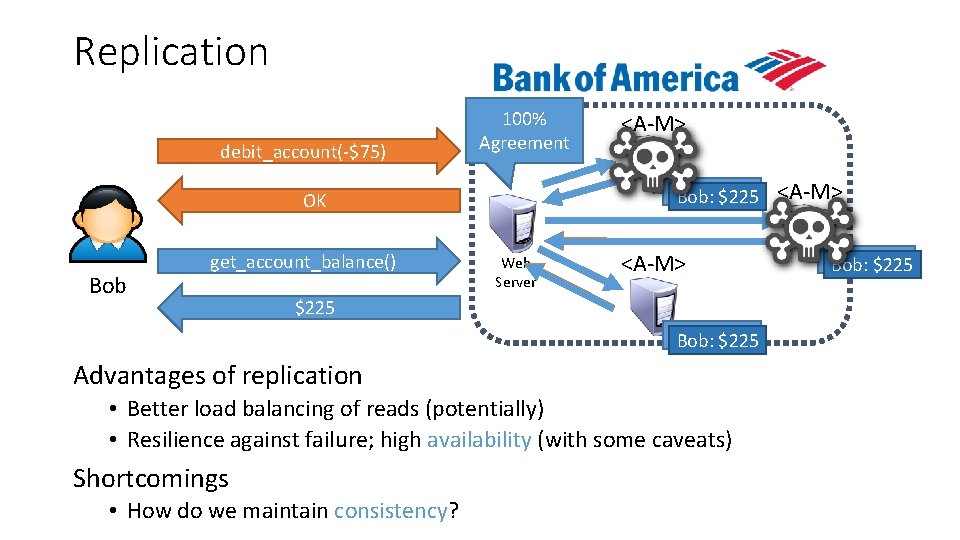

Replication
(Figure: the web server sends Bob's debit_account(-$75) to three <A-M> replicas, which must reach 100% agreement; each changes Bob: $300 to Bob: $225, and get_account_balance() returns $225.)
Advantages of replication
• Better load balancing of reads (potentially)
• Resilience against failure; high availability (with some caveats)
Shortcomings
• How do we maintain consistency?

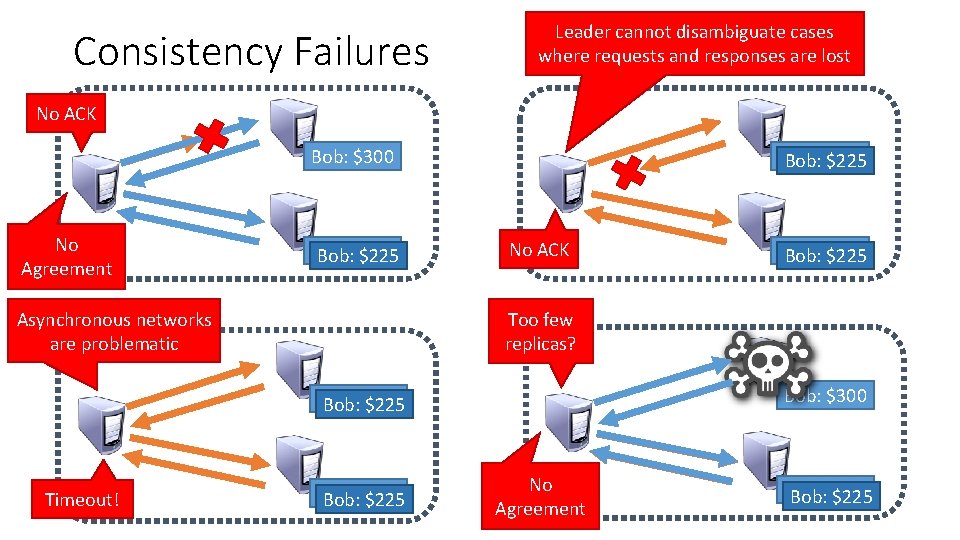

Consistency Failures
(Figure: three scenarios, a missing ACK, too few replicas, and a timeout, each ending with replicas split between Bob: $300 and Bob: $225, i.e. no agreement.)
• The leader cannot disambiguate cases where requests and responses are lost
• Asynchronous networks are problematic

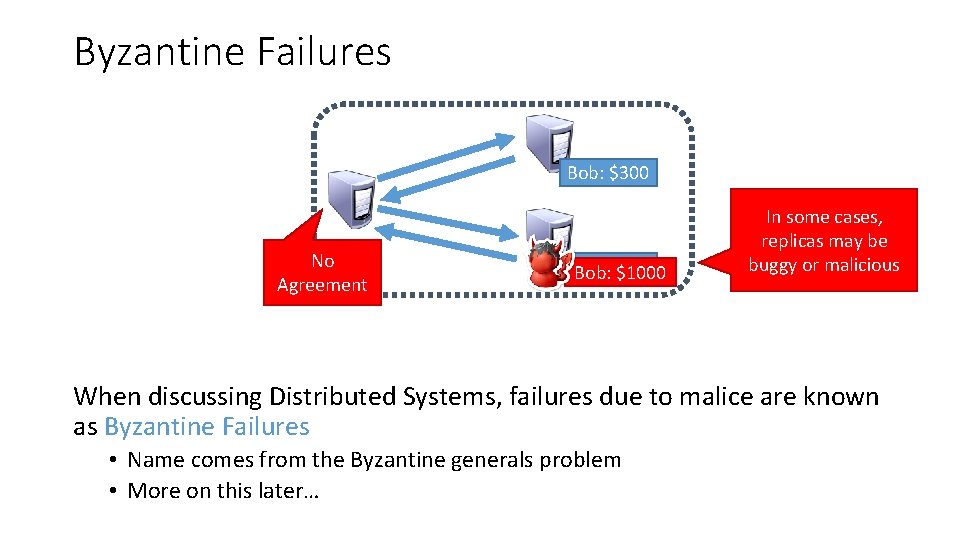

Byzantine Failures
(Figure: one replica reports Bob: $1000 while the others report Bob: $300, so there is no agreement.)
In some cases, replicas may be buggy or malicious
When discussing distributed systems, such arbitrary failures, including those caused by malice, are known as Byzantine failures
• The name comes from the Byzantine Generals Problem
• More on this later…

Problem and Definitions
Build a distributed system that meets the following goals:
• The system should be able to reach consensus
  • Consensus [n]: general agreement
• The system should be consistent
  • Data should be correct; no integrity violations
• The system should be highly available
  • Data should be accessible even in the face of arbitrary failures
Challenges:
• Many, many different failure modes
• Theory tells us that these goals are impossible to achieve (more on this later)

• Distributed Commits (2PC and 3PC)
• Theory (FLP and CAP)
• Quorums (Paxos)

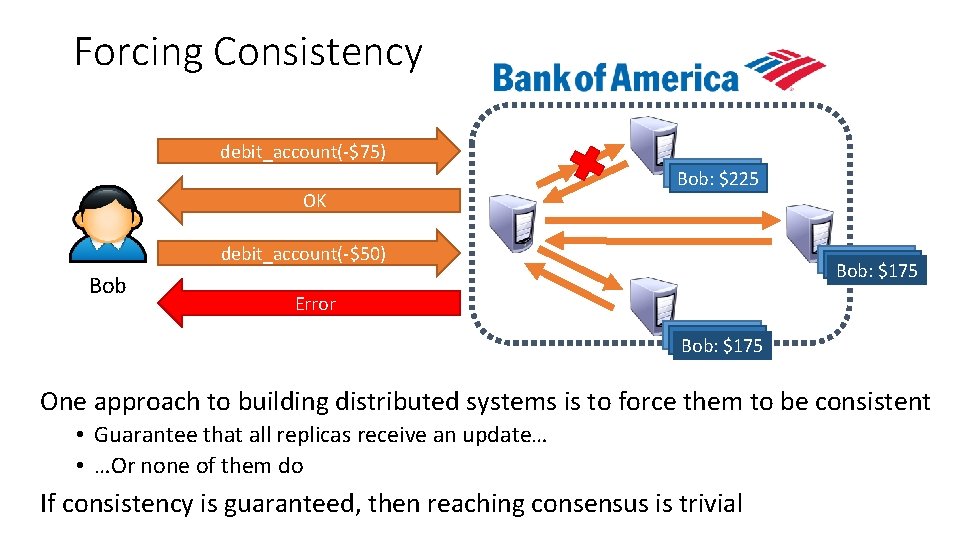

Forcing Consistency
(Figure: debit_account(-$75) is applied on every replica, Bob: $300 → $225, and returns OK; a later debit_account(-$50), $225 → $175, returns Error.)
One approach to building distributed systems is to force them to be consistent
• Guarantee that all replicas receive an update…
• …Or none of them do
If consistency is guaranteed, then reaching consensus is trivial

Distributed Commit Problem
Application that performs operations on multiple replicas or databases
• We want to guarantee that all replicas get updated, or none do
Distributed commit problem:
1. An operation is committed when all participants can perform the action
2. Once a commit decision is reached, all participants must perform the action
These two steps give rise to the Two Phase Commit protocol

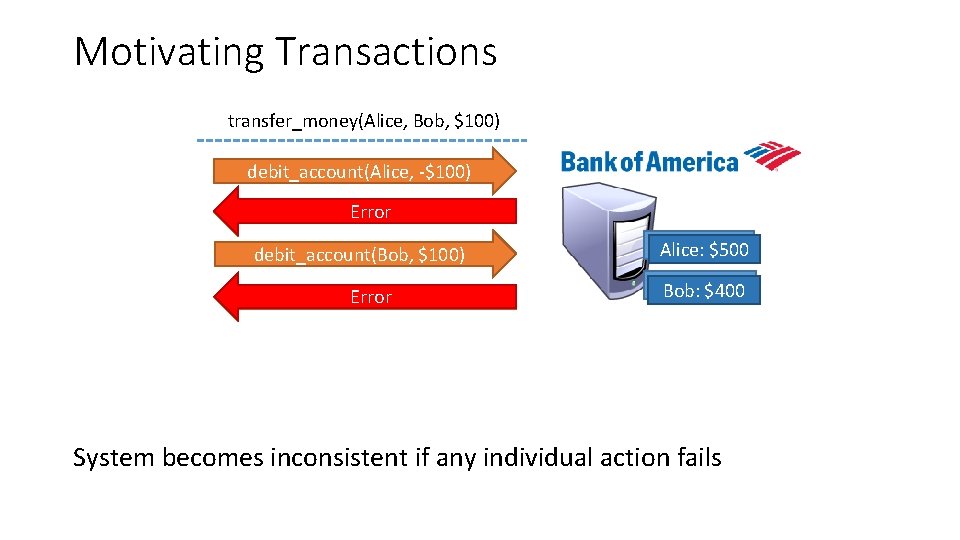

Motivating Transactions
transfer_money(Alice, Bob, $100)
  debit_account(Alice, -$100) → OK or Error (Alice: $600 → $500)
  debit_account(Bob, $100) → OK or Error (Bob: $300 → $400)
The system becomes inconsistent if any individual action fails

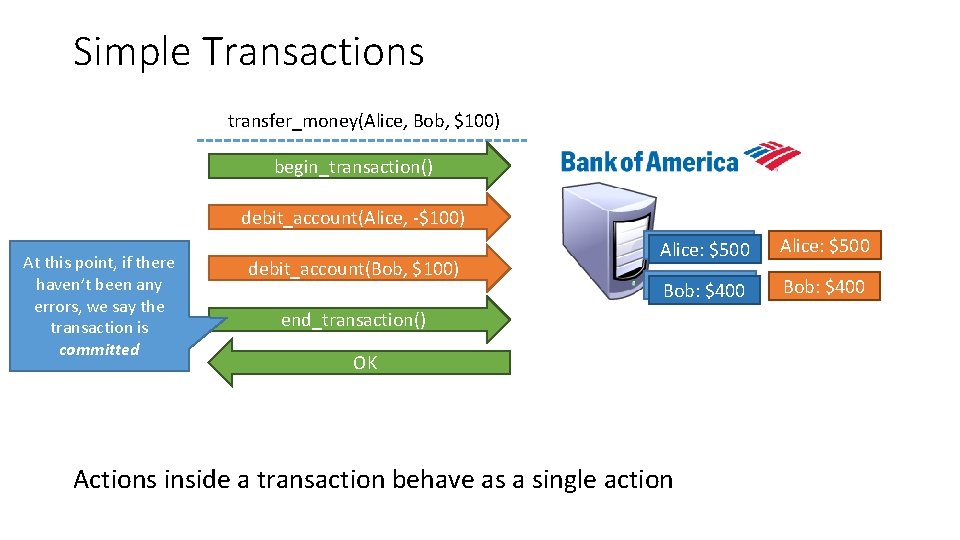

Simple Transactions
transfer_money(Alice, Bob, $100)
  begin_transaction()
  debit_account(Alice, -$100)    Alice: $600 → $500
  debit_account(Bob, $100)       Bob: $300 → $400
  end_transaction()              → OK
At end_transaction(), if there haven't been any errors, we say the transaction is committed
Actions inside a transaction behave as a single action

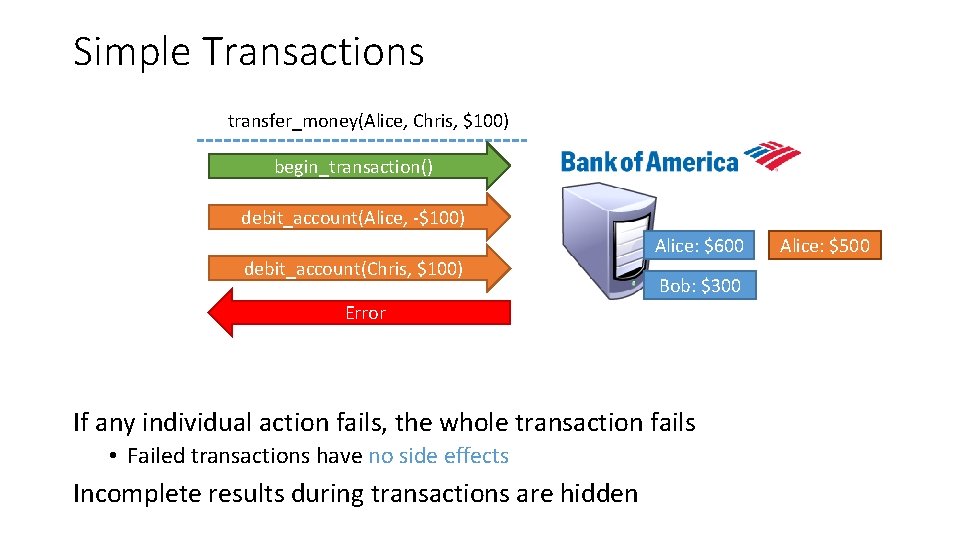

Simple Transactions
transfer_money(Alice, Chris, $100)
  begin_transaction()
  debit_account(Alice, -$100)    Alice: $600 → $500 (tentative)
  debit_account(Chris, $100)     Error
  Final state: Alice: $600, Bob: $300
If any individual action fails, the whole transaction fails
• Failed transactions have no side effects
Incomplete results during transactions are hidden
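A minimal single-node sketch of the all-or-nothing behavior above, assuming a hypothetical `Database` class that snapshots balances at `begin_transaction()` and restores the snapshot if any action fails. It illustrates atomicity only; it does nothing to hide partial results from concurrent readers (isolation).

```python
class TransactionError(Exception):
    """Raised when an individual action inside a transaction fails."""


class Database:
    """Hypothetical single-node store whose transactions are all-or-nothing."""

    def __init__(self, balances: dict[str, int]):
        self.balances = dict(balances)
        self._snapshot = None  # undo copy while a transaction is open

    def begin_transaction(self) -> None:
        # Remember the pre-transaction state so a failure can be undone.
        self._snapshot = dict(self.balances)

    def debit_account(self, name: str, amount: int) -> None:
        if name not in self.balances:
            raise TransactionError(f"no such account: {name}")
        self.balances[name] += amount

    def end_transaction(self) -> None:
        self._snapshot = None  # commit: keep the new state, drop the undo copy

    def abort_transaction(self) -> None:
        self.balances = self._snapshot  # roll back every change in the transaction
        self._snapshot = None


def transfer_money(db: Database, src: str, dst: str, amount: int) -> str:
    db.begin_transaction()
    try:
        db.debit_account(src, -amount)
        db.debit_account(dst, amount)  # a credit, written as a positive debit
    except TransactionError:
        db.abort_transaction()
        return "Error"
    db.end_transaction()
    return "OK"


db = Database({"Alice": 600, "Bob": 300})
print(transfer_money(db, "Alice", "Bob", 100), db.balances)    # OK {'Alice': 500, 'Bob': 400}
print(transfer_money(db, "Alice", "Chris", 100), db.balances)  # Error {'Alice': 500, 'Bob': 400}
```

The second transfer fails on Chris's missing account, so Alice's tentative debit is undone and the failed transaction leaves no side effects.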

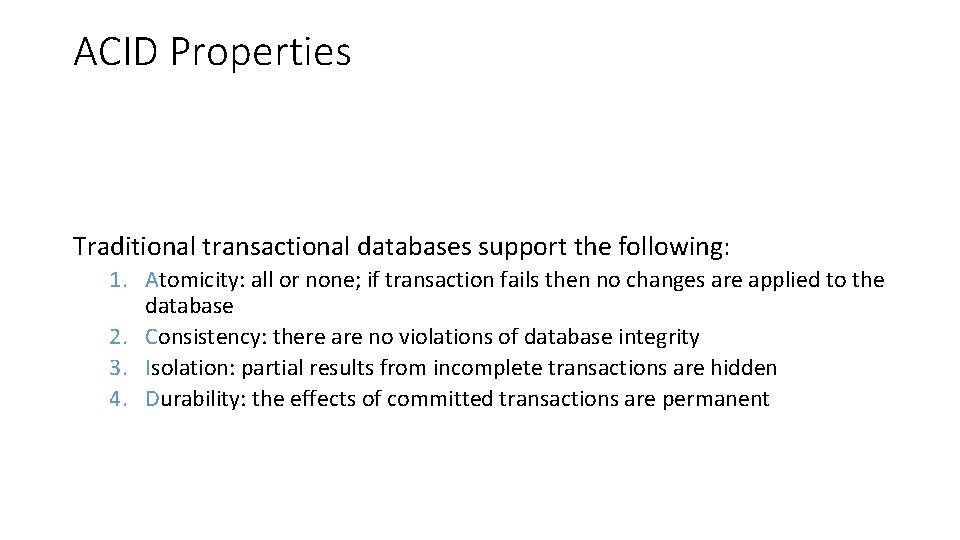

ACID Properties
Traditional transactional databases support the following:
1. Atomicity: all or none; if a transaction fails then no changes are applied to the database
2. Consistency: there are no violations of database integrity
3. Isolation: partial results from incomplete transactions are hidden
4. Durability: the effects of committed transactions are permanent

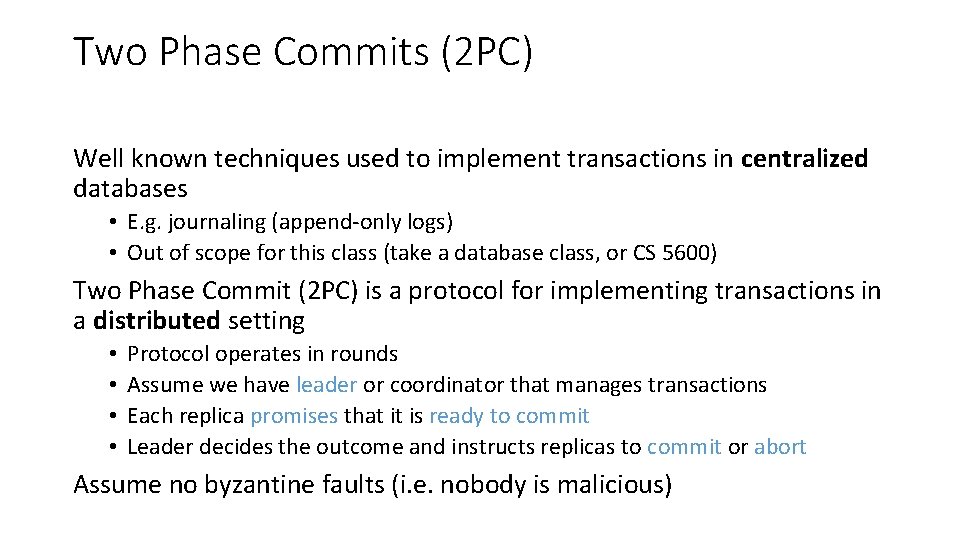

Two Phase Commits (2PC)
Well-known techniques are used to implement transactions in centralized databases
• E.g. journaling (append-only logs)
• Out of scope for this class (take a database class, or CS 5600)
Two Phase Commit (2PC) is a protocol for implementing transactions in a distributed setting
• The protocol operates in rounds
• Assume we have a leader or coordinator that manages transactions
• Each replica promises that it is ready to commit
• The leader decides the outcome and instructs replicas to commit or abort
• Assume no Byzantine faults (i.e. nobody is malicious)

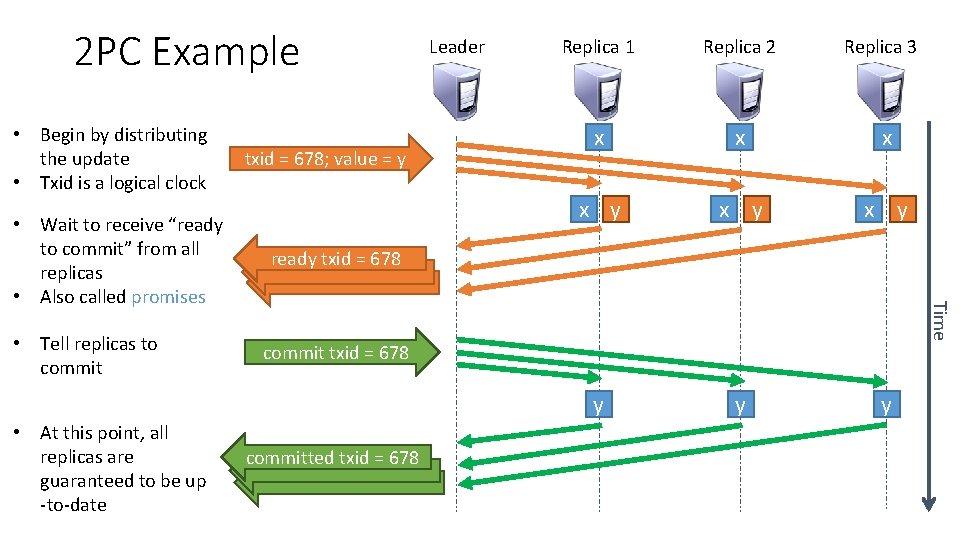

2PC Example
(Figure: timeline with a leader and Replicas 1-3; each replica's value changes from x to y.)
1. Begin by distributing the update: leader → replicas: txid = 678; value = y
   • The txid is a logical clock
2. Wait to receive "ready to commit" from all replicas: replicas → leader: ready txid = 678
   • These are also called promises
3. Tell replicas to commit: leader → replicas: commit txid = 678
4. Replicas reply: committed txid = 678
   • At this point, all replicas are guaranteed to be up-to-date
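The message flow above can be sketched with in-process objects standing in for the leader and replicas. This is a minimal sketch, not a networked implementation: `Replica`, `on_write`, `on_commit`, `on_abort`, `two_phase_commit`, and the `fail_prepare` flag are hypothetical names, and a `None` vote stands in for any missing "ready" (dropped message, timeout, or error).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Replica:
    name: str
    value: str = "x"
    fail_prepare: bool = False  # hypothetical fault-injection flag
    staged: dict = field(default_factory=dict)  # txid -> value awaiting a decision

    def on_write(self, txid: int, value: str) -> Optional[tuple]:
        """Phase 1: stage the update and promise to commit it."""
        if self.fail_prepare:
            return None  # stands in for a dropped message, timeout, or error
        self.staged[txid] = value
        return ("ready", txid)

    def on_commit(self, txid: int) -> tuple:
        """Phase 2: make the staged update visible."""
        self.value = self.staged.pop(txid)
        return ("committed", txid)

    def on_abort(self, txid: int) -> tuple:
        self.staged.pop(txid, None)  # discard the update if it was staged
        return ("aborted", txid)


def two_phase_commit(replicas: list, txid: int, value: str) -> str:
    # Phase 1: distribute the update and collect promises ("ready").
    votes = [r.on_write(txid, value) for r in replicas]
    if all(v == ("ready", txid) for v in votes):
        # Phase 2: everyone promised, so the leader decides to commit.
        # (In-process calls cannot fail here; a real leader retries until
        # every replica has answered "committed".)
        for r in replicas:
            r.on_commit(txid)
        return "commit"
    # Some replica did not promise: the leader aborts everywhere.
    for r in replicas:
        r.on_abort(txid)
    return "abort"


replicas = [Replica("r1"), Replica("r2"), Replica("r3")]
print(two_phase_commit(replicas, 678, "y"), [r.value for r in replicas])
replicas[1].fail_prepare = True
print(two_phase_commit(replicas, 679, "z"), [r.value for r in replicas])
```

The first call prints `commit ['y', 'y', 'y']`; after `r2` stops answering, the second prints `abort ['y', 'y', 'y']`, matching the Replica Failure (1) case that follows.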

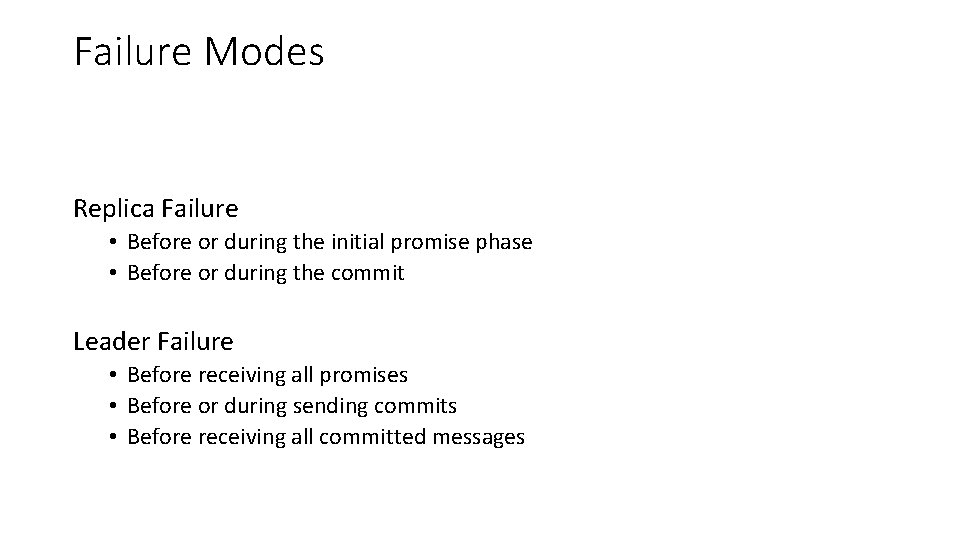

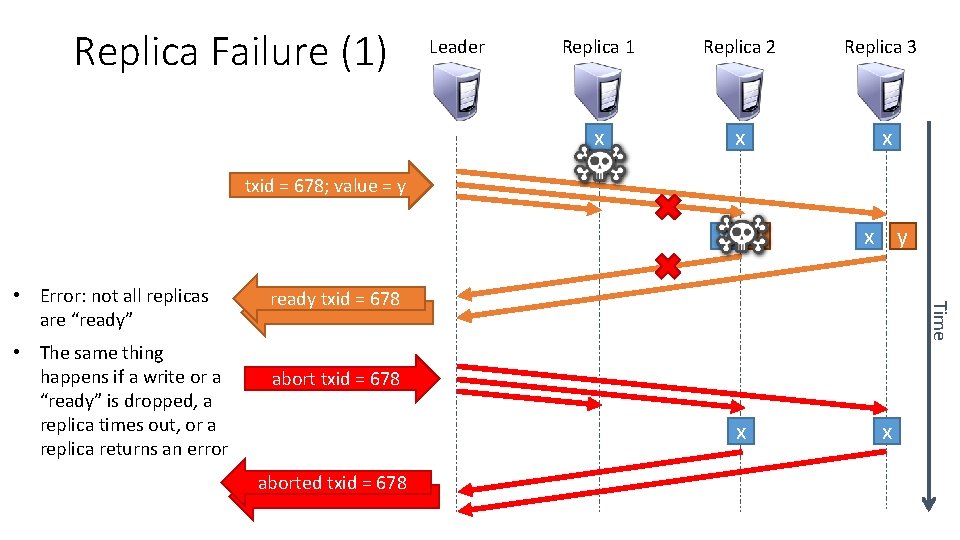

Failure Modes
Replica Failure
• Before or during the initial promise phase
• Before or during the commit
Leader Failure
• Before receiving all promises
• Before or during sending commits
• Before receiving all committed messages

Replica Failure (1)
(Figure: a leader and Replicas 1-3, all starting with value x.)
1. Leader → replicas: txid = 678; value = y
2. Not every replica replies ready txid = 678
   • Error: not all replicas are "ready"
   • The same thing happens if a write or a "ready" is dropped, a replica times out, or a replica returns an error
3. Leader → replicas: abort txid = 678
4. Replicas → leader: aborted txid = 678, and the update is discarded

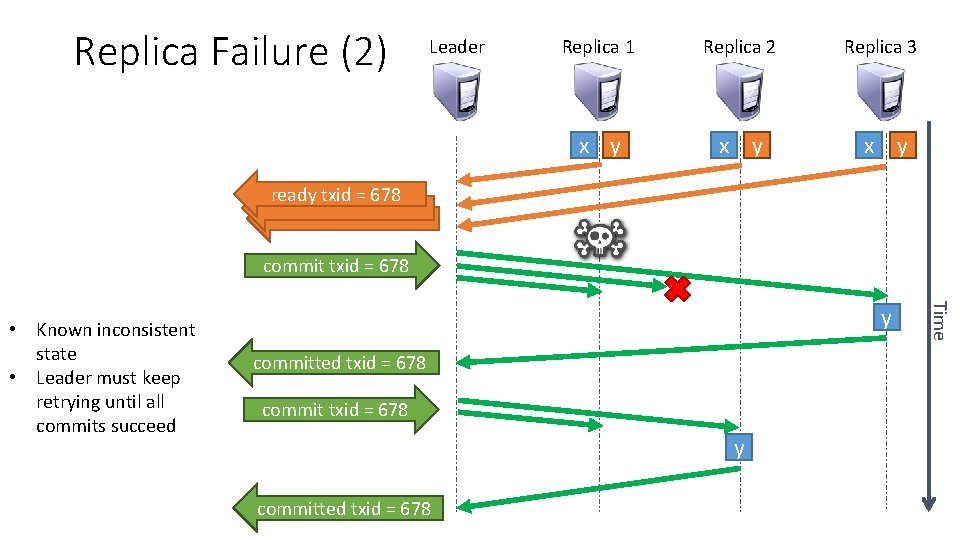

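Replica Failure (2)
(Figure: a leader and Replicas 1-3.)
1. All replicas reply ready txid = 678
2. Leader → replicas: commit txid = 678
3. Some replicas reply committed txid = 678 and switch from x to y, but one replica fails before committing
   • Known inconsistent state
   • The leader must keep retrying until all commits succeed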

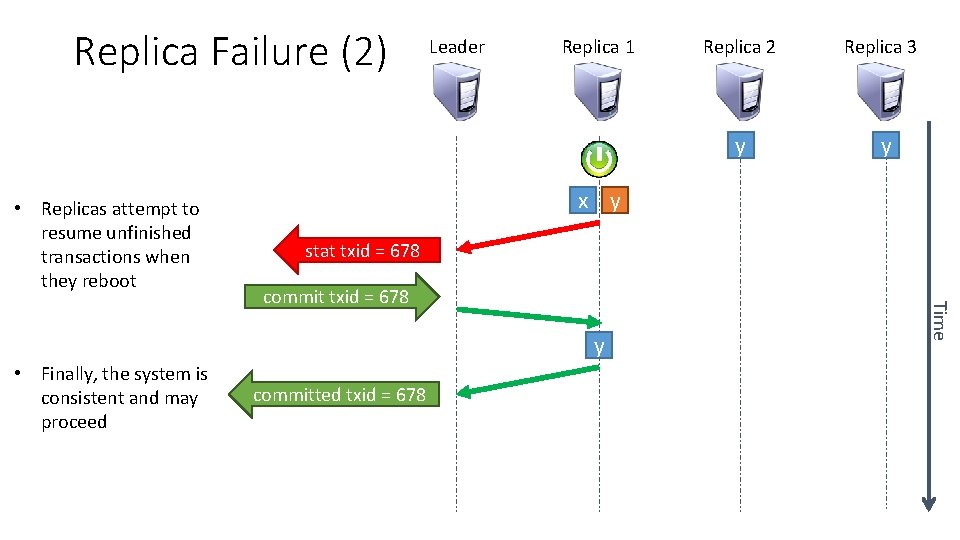

Replica Failure (2)
• Replicas attempt to resume unfinished transactions when they reboot
1. The rebooted replica (Replica 2) → leader: stat txid = 678
2. Leader → Replica 2: commit txid = 678
3. Replica 2 → leader: committed txid = 678, and its value becomes y
• Finally, the system is consistent and may proceed

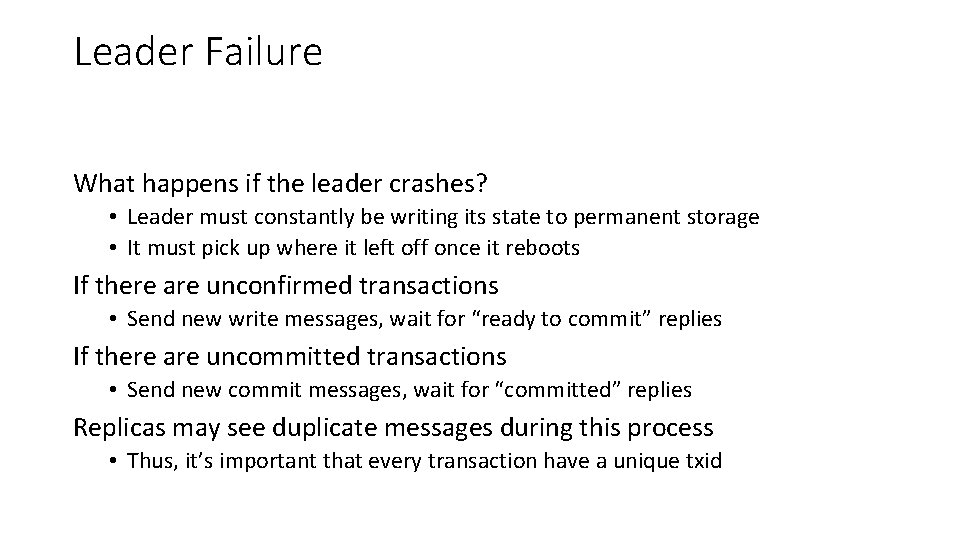

Leader Failure
What happens if the leader crashes?
• The leader must constantly write its state to permanent storage
• It must pick up where it left off once it reboots
If there are unconfirmed transactions
• Send new write messages, wait for "ready to commit" replies
If there are uncommitted transactions
• Send new commit messages, wait for "committed" replies
Replicas may see duplicate messages during this process
• Thus, it’s important that every transaction have a unique txid

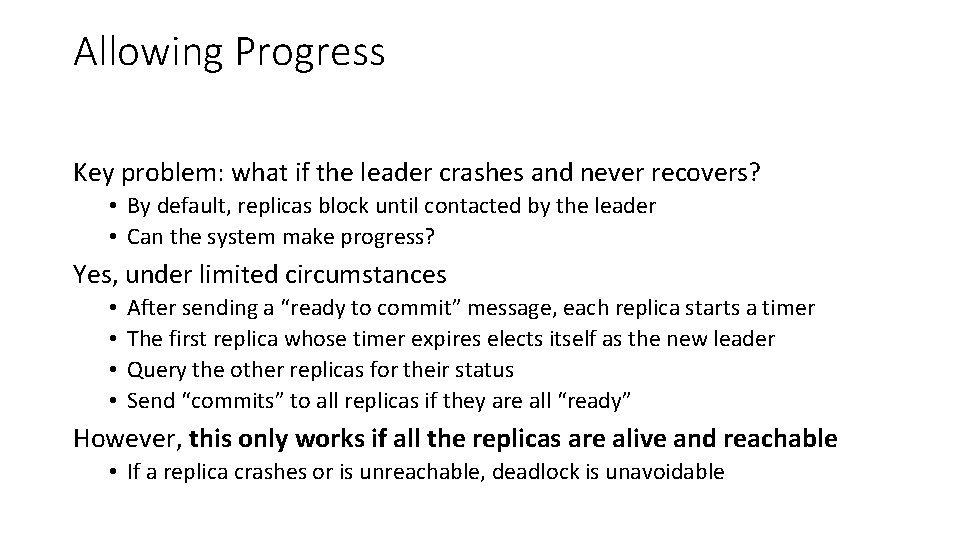

Allowing Progress
Key problem: what if the leader crashes and never recovers?
• By default, replicas block until contacted by the leader
• Can the system make progress?
Yes, under limited circumstances
• After sending a "ready to commit" message, each replica starts a timer
• The first replica whose timer expires elects itself as the new leader
• It queries the other replicas for their status
• It sends "commits" to all replicas if they are all "ready"
However, this only works if all the replicas are alive and reachable
• If a replica crashes or is unreachable, deadlock is unavoidable

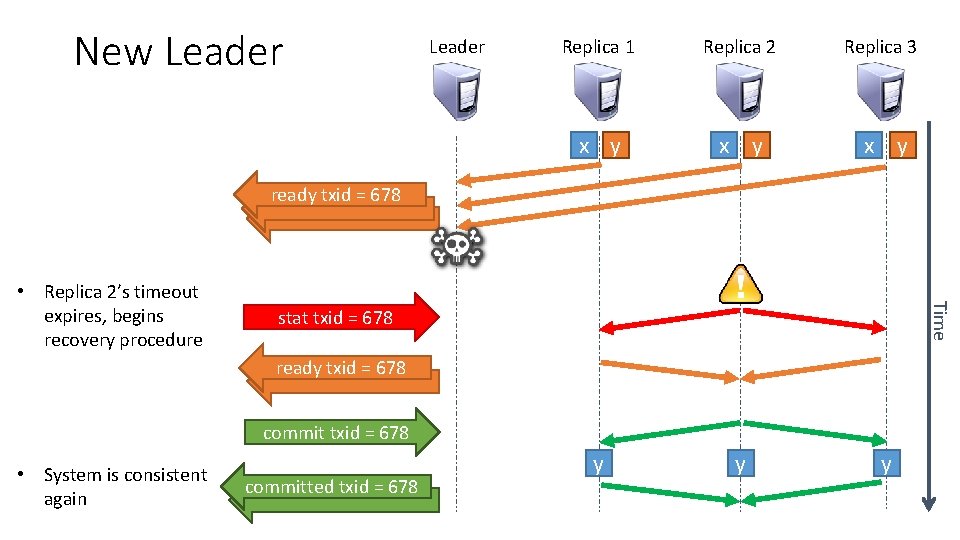

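New Leader
(Figure: Replicas 1-3 have all replied ready txid = 678 when the leader crashes.)
1. Replica 2's timeout expires, and it begins the recovery procedure: stat txid = 678
2. The other replicas reply ready txid = 678
3. Replica 2 → replicas: commit txid = 678; they reply committed txid = 678 and switch from x to y
• The system is consistent again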

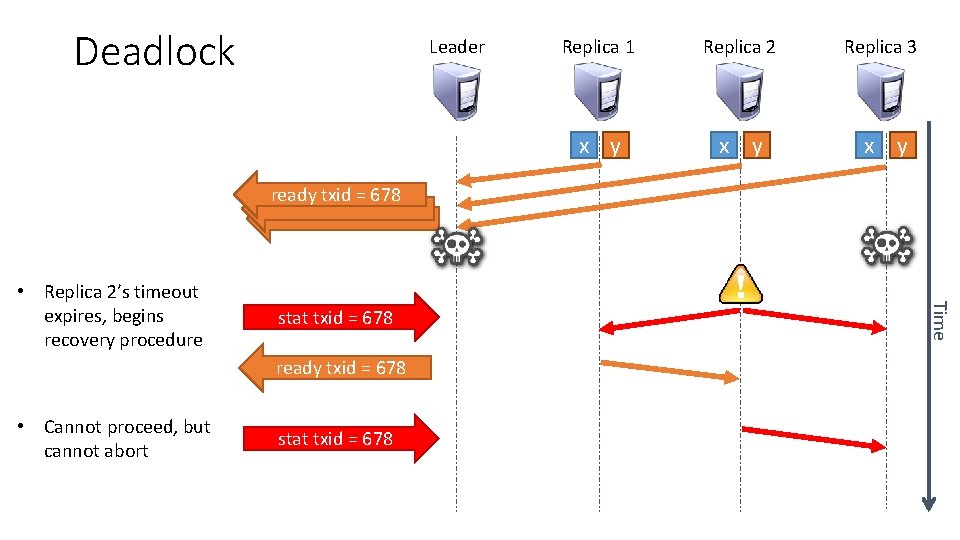

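Deadlock
(Figure: Replicas 1-3 have replied ready txid = 678 when the leader crashes.)
1. Replica 2's timeout expires, and it begins the recovery procedure: stat txid = 678
2. One replica replies ready txid = 678, but the other (which may already have committed) never replies
• Replica 2 cannot proceed, but it cannot abort either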
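The recovery decision from the last three slides can be sketched as a function run by the replica whose timer expired. `Status` and `recover` are hypothetical names. The slides only cover "all replicas ready" (commit) and "a replica is unreachable" (block); the COMMITTED, ABORTED, and UNKNOWN branches are the usual extensions, and the whole sketch assumes, as the slides do, that the old leader never comes back.

```python
from enum import Enum


class Status(Enum):
    UNKNOWN = "unknown"          # reachable, but never promised ("ready")
    READY = "ready"              # promised to commit; waiting for a decision
    COMMITTED = "committed"
    ABORTED = "aborted"
    UNREACHABLE = "unreachable"  # crashed or cut off; its state is unknown


def recover(statuses: list) -> str:
    """Decision of the replica that elected itself leader after a timeout.

    `statuses` holds one entry per replica, including the new leader itself.
    Assumes the old leader never comes back.
    """
    if Status.COMMITTED in statuses:
        return "commit"  # the old leader must already have decided commit
    if Status.ABORTED in statuses or Status.UNKNOWN in statuses:
        return "abort"   # someone never promised, so nobody can have committed
    if Status.UNREACHABLE in statuses:
        return "block"   # the silent replica may have committed or aborted
    return "commit"      # every replica is READY: the New Leader case


print(recover([Status.READY, Status.READY, Status.READY]))        # commit
print(recover([Status.READY, Status.READY, Status.UNREACHABLE]))  # block
```

The second call is the Deadlock slide: everything the new leader can see is READY, yet committing or aborting could contradict what the unreachable replica already did.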

Garbage Collection
2PC is somewhat of a misnomer: there is a third phase
• Garbage collection
Replicas must retain records of past transactions, just in case the leader fails
• For example, suppose the leader crashes, reboots, and attempts to commit a transaction that has already been committed
• Replicas must remember that this past transaction was already committed, since committing a second time may lead to inconsistencies
In practice, the leader periodically tells replicas to garbage collect
• All transactions <= some txid may be deleted
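A minimal sketch of why replicas keep those records and how collection can work, assuming txids increase monotonically so a single watermark can replace every deleted record. `ReplicaLog`, `commit`, and `garbage_collect` are hypothetical names.

```python
class ReplicaLog:
    """Hypothetical replica state: one balance plus a record of applied txids."""

    def __init__(self, balance: int):
        self.balance = balance
        self.committed: set[int] = set()  # txids already applied
        self.gc_watermark = 0             # every txid <= this has been collected

    def commit(self, txid: int, delta: int) -> str:
        if txid <= self.gc_watermark:
            # The leader promised never to resend collected transactions.
            raise ValueError(f"txid {txid} was already garbage collected")
        if txid not in self.committed:  # duplicates are acknowledged, not reapplied
            self.balance += delta
            self.committed.add(txid)
        return "committed"

    def garbage_collect(self, upto_txid: int) -> None:
        """Leader says: all transactions <= upto_txid may be deleted."""
        self.committed = {t for t in self.committed if t > upto_txid}
        self.gc_watermark = max(self.gc_watermark, upto_txid)


r = ReplicaLog(balance=300)
r.commit(678, -75)
r.commit(678, -75)  # the rebooted leader resends the same commit
print(r.balance)    # 225, not 150
r.garbage_collect(678)
print(r.committed)  # set()
```

Without the `committed` record, the resent commit would debit Bob twice; after collection, only the watermark integer remains.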

2PC Summary
Message complexity: O(2n)
The good: guarantees consistency
The bad:
• Write performance suffers if there are failures during the commit phase
• Does not scale gracefully (possible, but difficult to do)
• A pure 2PC system blocks all writes if the leader fails
• Smarter 2PC systems still block all writes if the leader + 1 replica fail
2PC sacrifices availability in favor of consistency

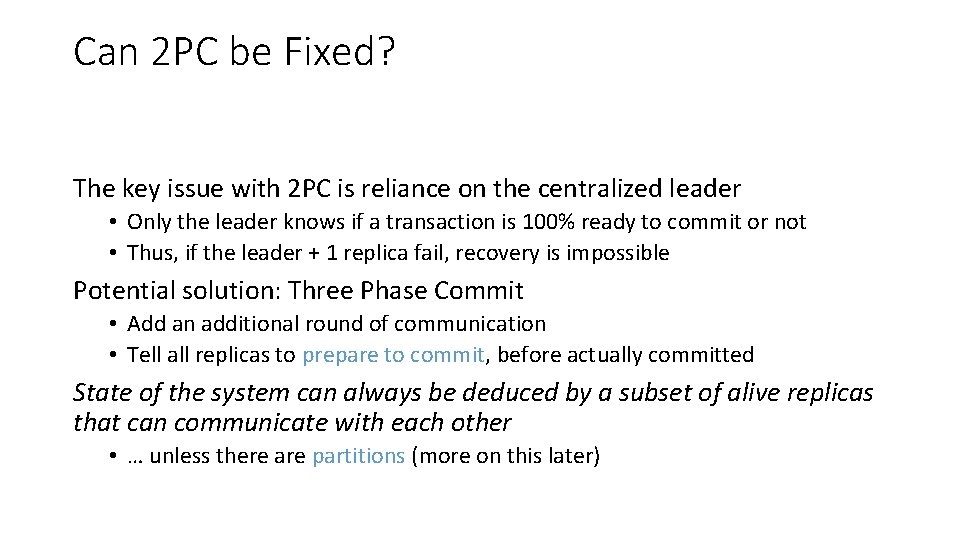

Can 2PC be Fixed?
The key issue with 2PC is reliance on the centralized leader
• Only the leader knows if a transaction is 100% ready to commit or not
• Thus, if the leader + 1 replica fail, recovery is impossible
Potential solution: Three Phase Commit
• Add an additional round of communication
• Tell all replicas to prepare to commit, before actually committing
The state of the system can always be deduced by a subset of alive replicas that can communicate with each other
• … unless there are partitions (more on this later)

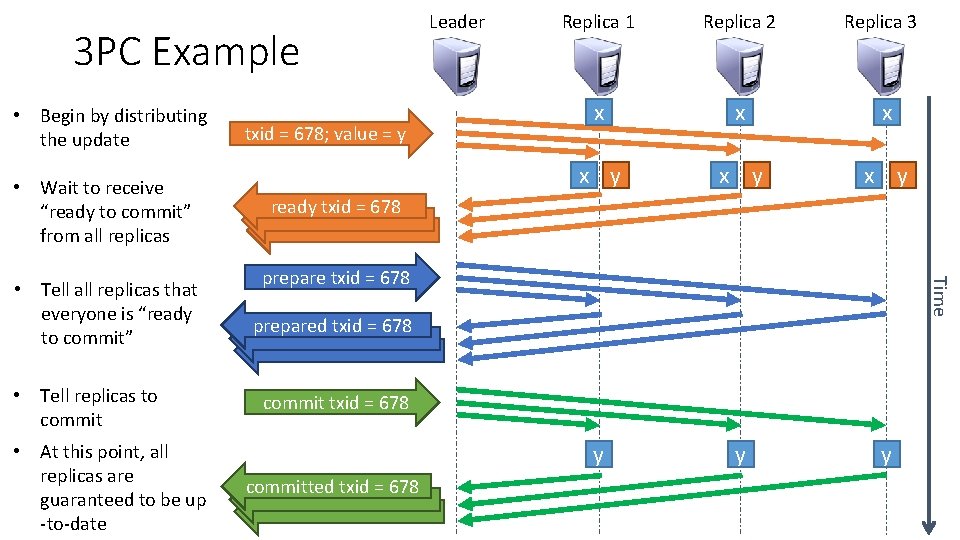

3PC Example
(Figure: timeline with a leader and Replicas 1-3; each replica's value changes from x to y.)
1. Begin by distributing the update: txid = 678; value = y
2. Wait to receive "ready to commit" from all replicas: ready txid = 678
3. Tell all replicas that everyone is "ready to commit": prepare txid = 678; replicas reply prepared txid = 678
4. Tell replicas to commit; replicas reply committed txid = 678
   • At this point, all replicas are guaranteed to be up-to-date

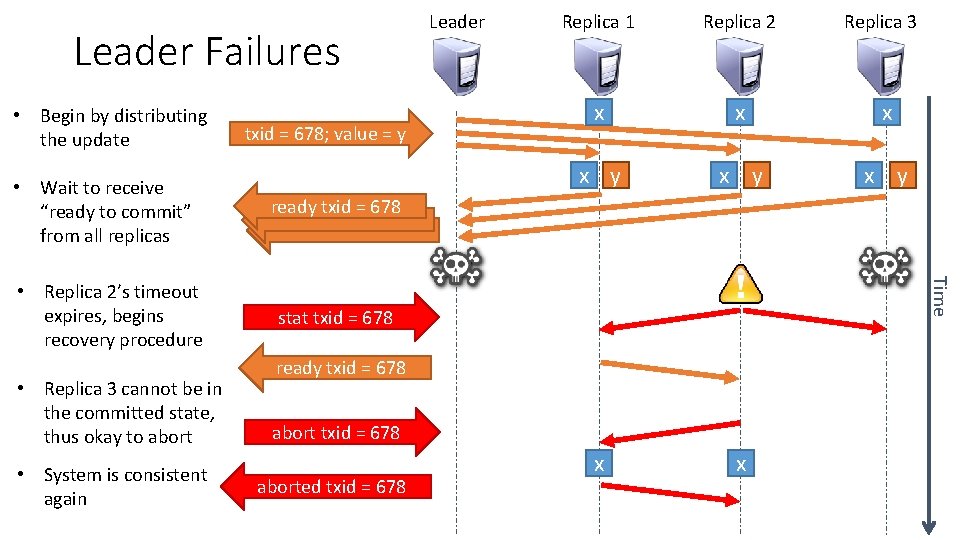

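Leader Failures
(Figure: a leader and Replicas 1-3; the leader fails after the replicas reply ready, before Replica 2 receives any prepare.)
1. Begin by distributing the update: txid = 678; value = y
2. Wait to receive "ready to commit" from all replicas: ready txid = 678
3. Replica 2's timeout expires, and it begins the recovery procedure: stat txid = 678
4. The replicas it can reach reply ready txid = 678
   • Replica 2 was never prepared, so the leader cannot have sent any commit; Replica 3 cannot be in the committed state, thus it is okay to abort
5. The replicas abort (aborted txid = 678) and keep x
• The system is consistent again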

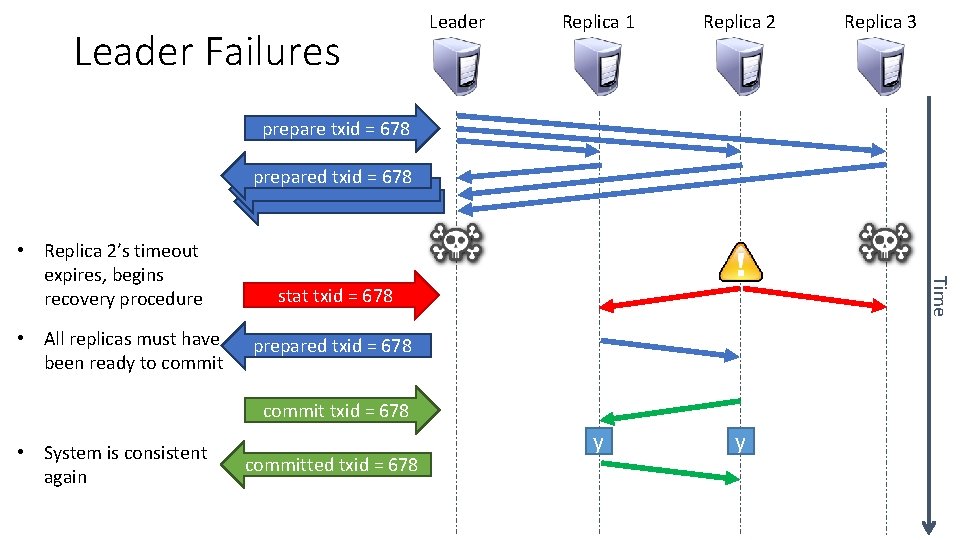

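Leader Failures
(Figure: a leader and Replicas 1-3; the leader fails after sending prepare txid = 678.)
1. Leader → replicas: prepare txid = 678; replicas reply prepared txid = 678
2. Replica 2's timeout expires, and it begins the recovery procedure: stat txid = 678
3. A replica replies prepared txid = 678
   • All replicas must have been ready to commit
4. Replica 2 → replicas: commit txid = 678; replicas reply committed txid = 678 and switch to y
• The system is consistent again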
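The two Leader Failures slides amount to a simple recovery rule, sketched here as a hypothetical `three_pc_recover` function. It assumes crash faults only and no network partition, exactly the assumption the next slide breaks.

```python
def three_pc_recover(statuses: list[str]) -> str:
    """Decision of a replica that timed out waiting for the 3PC leader.

    `statuses` are the states reported by every replica it can reach,
    including itself: "ready", "prepared", "committed", or "aborted".
    Assumes crash faults only and NO network partition.
    """
    if "committed" in statuses:
        return "commit"
    if "aborted" in statuses:
        return "abort"
    if "prepared" in statuses:
        # The leader only sends "prepare" after every replica said "ready",
        # so it is safe to finish the commit (prepare the rest, then commit).
        return "commit"
    # Nobody reachable saw "prepare", so the leader never sent a commit.
    return "abort"


print(three_pc_recover(["ready", "ready"]))        # abort: first Leader Failures slide
print(three_pc_recover(["prepared", "prepared"]))  # commit: second Leader Failures slide
```

Under a partition, the two sides can apply this rule to different information and reach different decisions, which is the failure shown on the Partitioning slide.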

Oh Great, I Fixed Everything!
Wrong
3PC is not robust against network partitions
What is a network partition?
• A split in the network, such that full n-to-n connectivity is broken
• i.e. not all servers can contact each other
Partitions split the network into two or more disjoint subnetworks
How can a network partition occur?
• A switch or a router may fail, or it may receive an incorrect routing rule
• A cable connecting two racks of servers may develop a fault
Network partitions are very real; they happen all the time

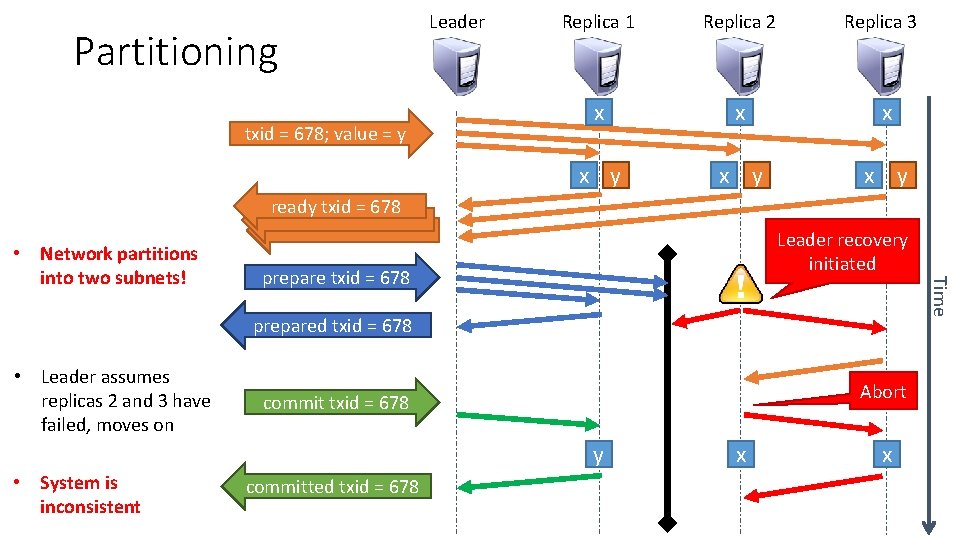

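Partitioning
(Figure: a leader and Replicas 1-3.)
1. Leader → replicas: txid = 678; value = y; all replicas reply ready txid = 678
2. Leader → replicas: prepare txid = 678
   • The network partitions into two subnets!
3. Replica 1 replies prepared txid = 678; the leader assumes Replicas 2 and 3 have failed and moves on: commit txid = 678, committed txid = 678, and Replica 1 ends with y
4. On the other side of the partition, Replicas 2 and 3 initiate leader recovery and abort, keeping x
• The system is inconsistent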

3PC Summary
Adds an additional phase vs. 2PC
• Message complexity: O(3n)
• Really four phases with garbage collection
The good: allows the system to make progress under more failure conditions
The bad:
• The extra round of communication makes 3PC even slower than 2PC
• Does not work if the network partitions
  • 2PC will simply deadlock if there is a partition, rather than become inconsistent
In practice, nobody uses 3PC
• Additional complexity and performance penalty just isn't worth it
• Loss of consistency during partitions is a deal breaker

• Distributed Commits (2PC and 3PC)
• Theory (FLP and CAP)
• Quorums (Paxos)

A Moment of Reflection
• Goals, revisited:
  • The system should be able to reach consensus
    • Consensus [n]: general agreement
  • The system should be consistent
    • Data should be correct; no integrity violations
  • The system should be highly available
    • Data should be accessible even in the face of arbitrary failures
• Achieving these goals may be harder than we thought :(
  • Huge number of failure modes
  • Network partitions are difficult to cope with
  • We haven't even considered Byzantine failures

What Can Theory Tell Us?
• Let's assume the network is synchronous and reliable
  • The algorithm can be divided into discrete rounds
  • If a message from host r is not received in a round, then r must be faulty
    • Remember, the network is reliable in this case
    • Since we're assuming synchrony, packets cannot be delayed arbitrarily
  • During each round, r may send m <= n messages
    • n is the total number of replicas
    • You might crash before sending all n messages
• If we are willing to tolerate f total failures (f < n), how many rounds of communication do we need to guarantee consensus?

Consensus in a Synchronous System
• Initialization:
  • All replicas choose a value 0 or 1 (can generalize to more values if you want)
• Properties:
  • Agreement: all non-faulty processes ultimately choose the same value
    • Either 0 or 1 in this case
  • Validity: if a replica decides on a value, then at least one replica must have started with that value
    • This prevents the trivial solution of all replicas always choosing 0, which is technically perfect consensus but is practically useless
  • Termination: the system must converge in finite time

Algorithm Sketch
• Each replica maintains a map M of all known values
  • Initially, the map only contains the replica's own value
    • e.g. M = {'replica 1': 0}
• Each round, broadcast M to all other replicas
  • On receipt, construct the union of received M and local M
• The algorithm terminates when all non-faulty replicas have the values from all other non-faulty replicas
• Example with three non-faulty replicas (1, 3, and 5)
  • M = {'replica 1': 0, 'replica 3': 1, 'replica 5': 0}
  • Final value is min(M.values())

Bounding Convergence Time
• How many rounds will it take if we are willing to tolerate f failures?
  • f + 1 rounds
• Key insight: all replicas must be sure that all replicas that did not crash have the same information (so they can make the same decision)
• Proof sketch, assuming f = 2
  • The worst-case scenario is that replicas crash during rounds 1 and 2
  • During round 1, replica x crashes
    • All other replicas don't know if x is alive or dead
  • During round 2, replica y crashes
    • It is now clear that x is not alive, but unknown whether y is alive or dead
  • During round 3, no more replicas may crash
    • All replicas are guaranteed to receive updated info from all other replicas
    • The final decision can be made
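The algorithm sketch and the f + 1 bound can be checked together with a small simulation. This is a sketch under stated assumptions: `sync_consensus` and its `crash_plan` parameter are hypothetical, a crashing replica delivers its map M to a random subset of peers in the round it crashes, and everything else follows the slides (synchronous rounds, union of maps, decide `min(M.values())`).

```python
import random


def sync_consensus(initial: dict[int, int], f: int,
                   crash_plan: dict[int, int], seed: int = 0) -> dict[int, int]:
    """Flooding consensus on a synchronous, reliable network (crash faults only).

    initial:    replica id -> starting bit
    f:          number of crashes tolerated; the algorithm runs f + 1 rounds
    crash_plan: replica id -> round in which it crashes; in that round it
                sends its map M to only a random subset of the other replicas
    Returns the decision of every replica that never crashed.
    """
    assert len(crash_plan) <= f, "more crashes than the algorithm tolerates"
    rng = random.Random(seed)
    known = {r: {r: v} for r, v in initial.items()}  # each replica's map M
    alive = set(initial)
    for rnd in range(1, f + 2):                      # rounds 1 .. f + 1
        inbox: dict[int, list] = {r: [] for r in alive}
        for sender in sorted(alive):
            receivers = sorted(r for r in alive if r != sender)
            if crash_plan.get(sender) == rnd:        # crashes mid-broadcast
                receivers = rng.sample(receivers, rng.randint(0, len(receivers)))
            for r in receivers:
                inbox[r].append(dict(known[sender]))  # M as of the start of the round
        alive -= {r for r, c in crash_plan.items() if c == rnd}
        for r in alive:
            for m in inbox[r]:
                known[r].update(m)                   # union of received M and local M
    return {r: min(known[r].values()) for r in alive}


initial = {1: 0, 2: 1, 3: 1, 4: 1, 5: 1}
for seed in range(3):
    print(sync_consensus(initial, f=2, crash_plan={1: 1, 2: 2}, seed=seed))
```

Whatever subset the crashing replicas reach, round 3 is crash-free, so replicas 3, 4, and 5 always finish with identical maps and identical decisions. With fewer rounds, an unlucky crash pattern can leave them disagreeing.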

A More Realistic Model
• The previous result is interesting, but totally unrealistic
  • We assume that the network is synchronous and reliable
  • Of course, neither of these things is true in reality
• What if the network is asynchronous and reliable?
  • Replicas may take an arbitrarily long time to respond to messages
• Let's also assume that all faults are crash faults
  • i.e. if a replica has a problem it crashes and never wakes up
  • No Byzantine faults

The FLP Result
There is no deterministic asynchronous algorithm that is guaranteed to reach consensus on a 1-bit value if even one replica may crash. The result holds even if no crash actually occurs!
• This is known as the FLP result
  • Michael J. Fischer, Nancy A. Lynch, and Michael S. Paterson, 1985
• Extremely powerful result because:
  • If you can't agree on 1 bit, generalizing to larger values isn't going to help you
  • If you can't guarantee convergence with crash faults, there is no way you can guarantee convergence with Byzantine faults
  • If you can't guarantee convergence on a reliable network, there is no way you can on an unreliable network

FLP Proof Sketch
• In an asynchronous system, a replica x cannot tell whether a nonresponsive replica y has crashed or is just slow
• What can x do?
  • If x waits, it will block indefinitely since it might never receive the message from y
  • If x decides, it may find out later that y made a different decision
• The proof constructs a scenario where each attempt to decide is overruled by a delayed, asynchronous message
  • Thus, the system oscillates between 0 and 1 and never converges

Impact of FLP
• FLP proves that any fault-tolerant distributed algorithm attempting to reach consensus has runs that never terminate
  • These runs are extremely unlikely ("probability zero")
  • Yet they imply that we can't find a totally correct solution
  • And so "consensus is impossible" ("not always possible")
• So what can we do?
  • Use randomization, probabilistic guarantees (gossip protocols)
  • Avoid requiring every replica to agree; use quorum systems (Paxos or Raft)
  • In other words, trade off consistency in favor of availability

Consistency vs. Availability
• FLP states that perfect consistency is impossible
• Practically, we can get close to perfect consistency, but at significant cost
  • e.g. using 3PC
  • Availability begins to suffer dramatically under failure conditions
• Is there a way to formalize the tradeoff between consistency and availability?

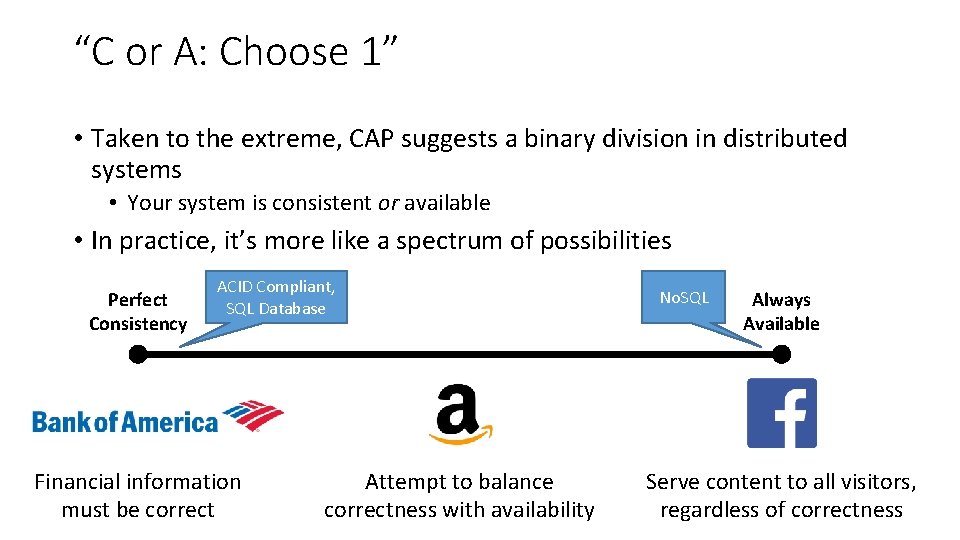

Eric Brewer’s CAP Theorem
• CAP theorem for distributed data replication
  • Consistency: updates to data are applied to all or none
  • Availability: must be able to access all data
  • Network Partition Tolerance: failures can partition the network into disjoint subnetworks
• The Brewer Theorem
  • No system can simultaneously achieve C and A and PT
  • Typical interpretation: "C, A, and P: choose 2"
  • In practice, all networks may partition, thus you must choose PT
  • So a better interpretation might be "C or A: choose 1"
• Presented by Brewer as a conjecture in 2000; formally proved by Seth Gilbert and Nancy Lynch in 2002, under specific definitions of C, A, and P
  • Widely used as a rule of thumb

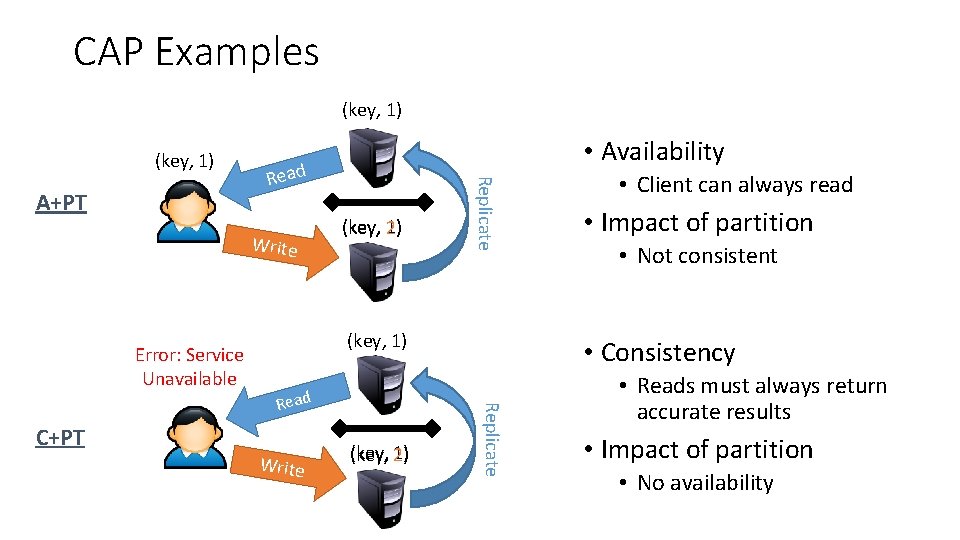

CAP Examples
(Figure: two replicas hold (key, 1); a client writes (key, 2), and a partition blocks replication between them.)
A+PT
• Availability: the client can always read
• Impact of partition: a read may still return (key, 1), so the system is not consistent
C+PT
• Consistency: reads must always return accurate results
• Impact of partition: requests fail with "Error: Service Unavailable", so there is no availability
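The two behaviors can be sketched with a toy two-replica store. Everything here is hypothetical: `TwoReplicaStore`, the `partitioned` flag, and the simplification that clients always write to replica a and read from replica b. The only difference between the modes is whether the store refuses requests while partitioned.

```python
class TwoReplicaStore:
    """Hypothetical store with replicas a and b; clients write to a, read from b."""

    def __init__(self, mode: str):
        assert mode in ("A+PT", "C+PT")
        self.mode = mode
        self.a: dict = {}
        self.b: dict = {}
        self.partitioned = False  # True when a and b cannot talk to each other

    def _refuse_if_unsafe(self) -> None:
        # C+PT: during a partition, refuse rather than risk an inconsistent answer.
        if self.partitioned and self.mode == "C+PT":
            raise RuntimeError("Error: Service Unavailable")

    def write(self, key, value) -> None:
        self._refuse_if_unsafe()
        self.a[key] = value
        if not self.partitioned:
            self.b[key] = value  # replication only succeeds without a partition

    def read(self, key):
        self._refuse_if_unsafe()
        return self.b.get(key)  # A+PT: may be stale during a partition


ap = TwoReplicaStore("A+PT")
ap.write("key", 1)
ap.partitioned = True
ap.write("key", 2)
print(ap.read("key"))  # 1: available, but stale

cp = TwoReplicaStore("C+PT")
cp.write("key", 1)
cp.partitioned = True
try:
    cp.read("key")
except RuntimeError as err:
    print(err)  # Error: Service Unavailable
```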

“C or A: Choose 1”
• Taken to the extreme, CAP suggests a binary division in distributed systems
  • Your system is consistent or available
• In practice, it's more like a spectrum of possibilities:
  • Perfect consistency: ACID-compliant SQL databases; financial information must be correct
  • In between: attempt to balance correctness with availability
  • Always available: NoSQL; serve content to all visitors, regardless of correctness

• Distributed Commits (2PC and 3PC)
• Theory (FLP and CAP)
• Quorums (Paxos)

Strong Consistency, Revisited
• 2PC and 3PC achieve strong consistency, but they have significant shortcomings
  • 2PC cannot make progress in the face of leader + 1 replica failures
  • 3PC loses consistency guarantees in the face of network partitions
• Where do we go from here?
  • Observation: 2PC and 3PC attempt to reach 100% agreement
  • What if 51% of the replicas agree?

Quorum Systems

Advantages of Quorums
• Availability: quorum systems are more resilient in the face of failures
  • Quorum systems can be designed to tolerate both benign and Byzantine failures
• Efficiency: can significantly reduce communication complexity
  • Do not require all servers in order to perform an operation
  • Require a subset of them for each operation

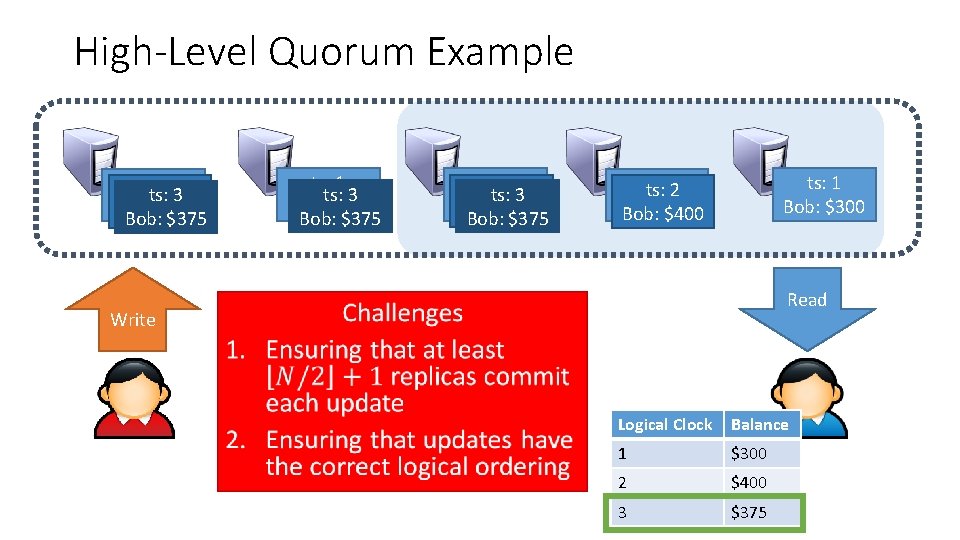

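High-Level Quorum Example
(Figure: replicas store Bob's balance tagged with a logical-clock timestamp; different replicas hold different histories, e.g. ts 1: $300 only, or ts 1: $300, ts 2: $400, ts 3: $375, and each read or write contacts only a subset of the replicas.)

| Logical Clock | Balance |
|---|---|
| 1 | $300 |
| 2 | $400 |
| 3 | $375 |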
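A minimal sketch of the read/write rule behind the figure, assuming 5 replicas with write quorums of W = 3 and read quorums of R = 3 (the slide does not give the sizes). Because R + W > N, every read quorum overlaps every write quorum, so the highest logical clock a reader sees is the latest write. `Version`, `write`, and `read` are hypothetical names.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass
class Version:
    ts: int     # logical clock of the write
    value: int  # Bob's balance


N, W, R = 5, 3, 3
assert R + W > N, "every read quorum must overlap every write quorum"

replicas = [Version(1, 300) for _ in range(N)]  # all start at ts 1: $300


def write(ts: int, value: int, targets: list[int]) -> None:
    assert len(targets) >= W, "not a write quorum"
    for i in targets:
        if ts > replicas[i].ts:  # never overwrite a newer version
            replicas[i] = Version(ts, value)


def read(targets: list[int]) -> Version:
    assert len(targets) >= R, "not a read quorum"
    # The quorums overlap, so at least one target holds the latest write;
    # the highest logical clock identifies it.
    return max((replicas[i] for i in targets), key=lambda v: v.ts)


write(2, 400, [2, 3, 4])
write(3, 375, [0, 1, 2])
print(read([2, 3, 4]).value)  # 375
print(read([3, 4, 0]).value)  # 375
print(all(read(list(q)).value == 375 for q in combinations(range(N), R)))  # True
```

Replicas 3 and 4 still hold the stale $400, yet no read quorum can miss the $375 write.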

Paxos

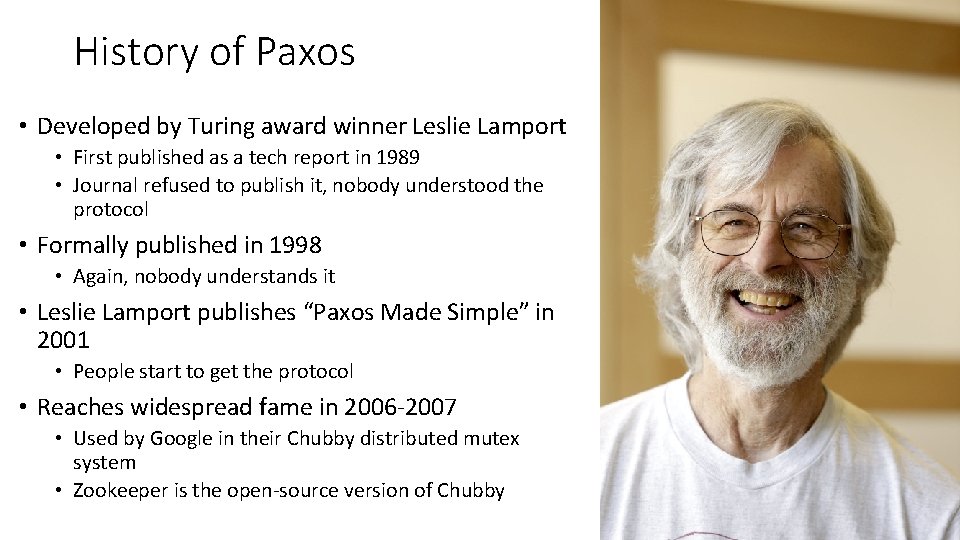

History of Paxos
• Developed by Turing Award winner Leslie Lamport
  • First published as a tech report in 1989
  • Journal refused to publish it; nobody understood the protocol
• Formally published in 1998
  • Again, nobody understood it
• Leslie Lamport publishes "Paxos Made Simple" in 2001
  • People start to get the protocol
• Reaches widespread fame in 2006-2007
  • Used by Google in their Chubby distributed mutex system
  • ZooKeeper is the open-source version of Chubby

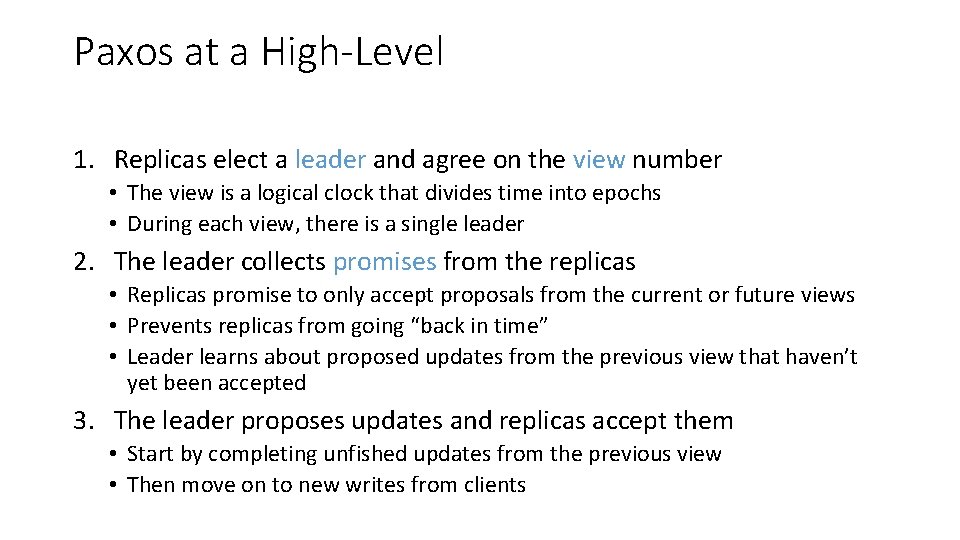

Paxos at a High-Level
1. Replicas elect a leader and agree on the view number
   • The view is a logical clock that divides time into epochs
   • During each view, there is a single leader
2. The leader collects promises from the replicas
   • Replicas promise to only accept proposals from the current or future views
     • Prevents replicas from going "back in time"
   • The leader learns about proposed updates from the previous view that haven't yet been accepted
3. The leader proposes updates and replicas accept them
   • Start by completing unfinished updates from the previous view
   • Then move on to new writes from clients

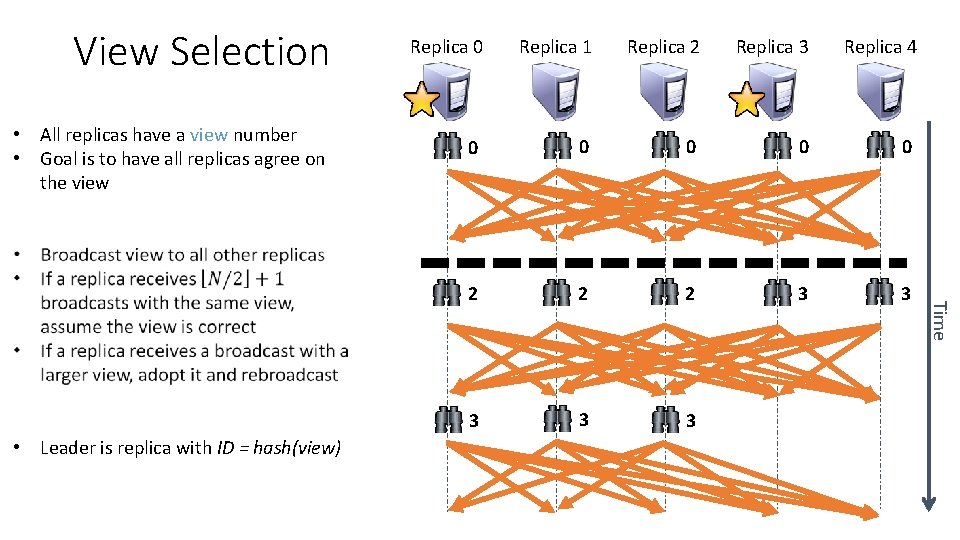

View Selection
• All replicas have a view number
• Goal is to have all replicas agree on the view
(Figure: timeline for Replicas 0-4 whose view numbers advance from 0 to 2 to 3.)
• Leader is the replica with ID = hash(view)

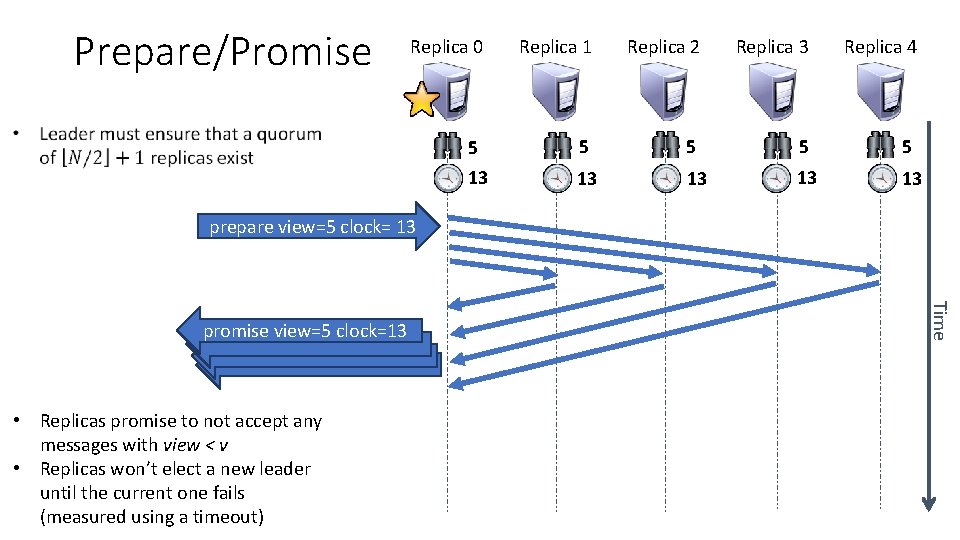

Prepare/Promise
(Figure: Replicas 0-4 in view 5 at clock 13; the leader sends prepare view=5 clock=13, and the replicas reply promise view=5 clock=13.)
• Replicas promise to not accept any messages with view < v
• Replicas won't elect a new leader until the current one fails (measured using a timeout)

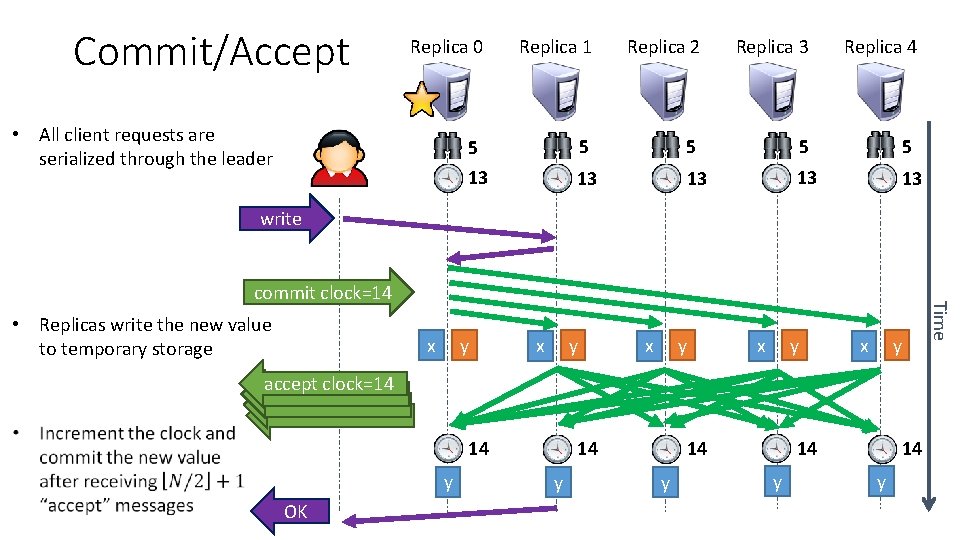

Commit/Accept
(Figure: Replicas 0-4 in view 5 at clock 13; a client's write of y reaches the leader, which sends commit clock=14; replicas reply accept clock=14 and move from x to y at clock 14, and the client receives OK.)
• All client requests are serialized through the leader
• Replicas write the new value to temporary storage
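The prepare/promise and commit/accept steps can be sketched with classic single-decree Paxos (as described in "Paxos Made Simple"), which is simpler than the view-based, multi-update protocol on these slides. Here a ballot plays the role of the view number, method calls replace messages, and `Acceptor`, `propose`, and the `reachable` parameter (for simulating which replicas a leader can contact) are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Acceptor:
    promised: int = -1                          # highest ballot (view) promised
    accepted: Optional[tuple[int, str]] = None  # (ballot, value) last accepted

    def prepare(self, ballot: int):
        """Promise to ignore any ballot lower than `ballot`."""
        if ballot > self.promised:
            self.promised = ballot
            return True, self.accepted  # report any earlier accepted value
        return False, None

    def accept(self, ballot: int, value: str) -> bool:
        if ballot >= self.promised:  # no promise to a newer ballot was made
            self.promised = ballot
            self.accepted = (ballot, value)
            return True
        return False


def propose(acceptors, ballot, value, reachable=None):
    """One proposer (leader) round; returns the chosen value, or None."""
    reachable = acceptors if reachable is None else reachable
    quorum = len(acceptors) // 2 + 1
    # Phase 1: prepare / promise.
    replies = [a.prepare(ballot) for a in reachable]
    promises = [acc for ok, acc in replies if ok]
    if len(promises) < quorum:
        return None
    # Finish the previous ballot's work: adopt the highest-ballot accepted value.
    earlier = [acc for acc in promises if acc is not None]
    if earlier:
        value = max(earlier, key=lambda acc: acc[0])[1]
    # Phase 2: accept.
    acks = sum(a.accept(ballot, value) for a in reachable)
    return value if acks >= quorum else None


acceptors = [Acceptor() for _ in range(5)]
print(propose(acceptors, 1, "x", reachable=acceptors[:3]))  # x
print(propose(acceptors, 2, "y", reachable=acceptors[2:]))  # x (not y!)
print(propose(acceptors, 1, "z"))                           # None: stale ballot
```

The second leader can only reach replicas 2 to 4, but replica 2 reports the value accepted in ballot 1, so the new leader completes that update instead of its own; the stale ballot in the third call is rejected because every acceptor has promised a higher one.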

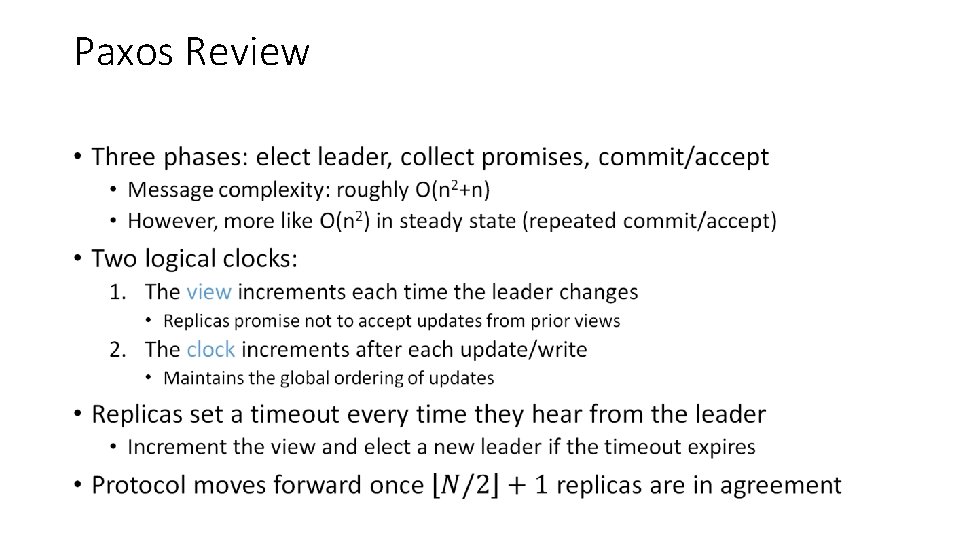

Paxos Review

Failure Modes 1. What happens if a commit fails? 2. What happens during a partition? 3. What happens after the leader fails?

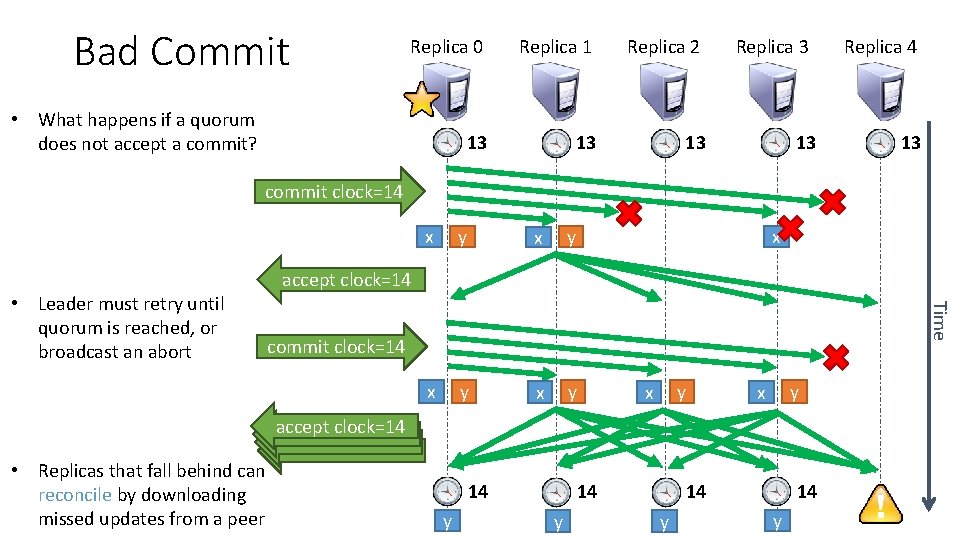

Bad Commit
(Figure: Replicas 0-4 at clock 13.)
• What happens if a quorum does not accept a commit? (The leader sends commit clock=14 for value y, but too few replicas reply accept clock=14.)
• The leader must retry until a quorum is reached, or broadcast an abort
  • On retry, commit clock=14 reaches enough replicas, which accept and move to y at clock 14
• Replicas that fall behind can reconcile by downloading missed updates from a peer

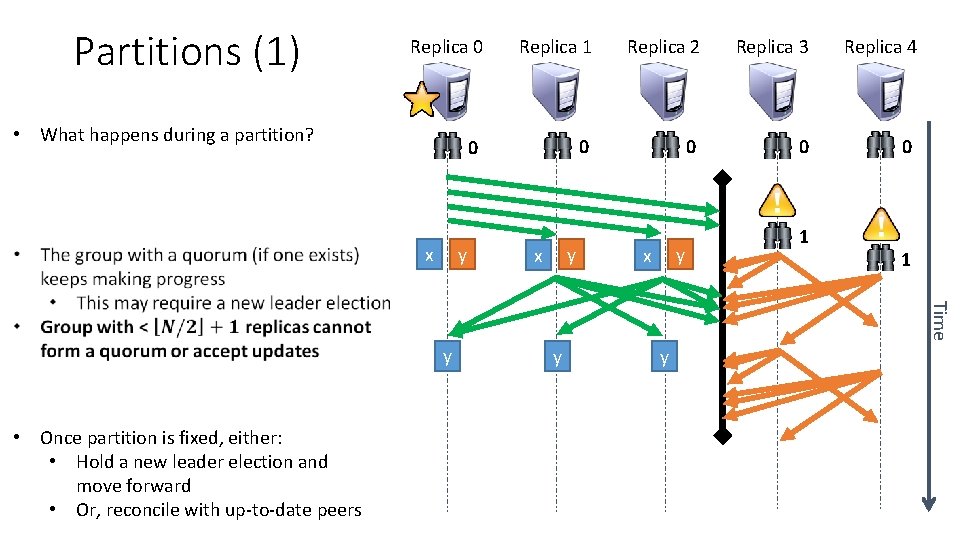

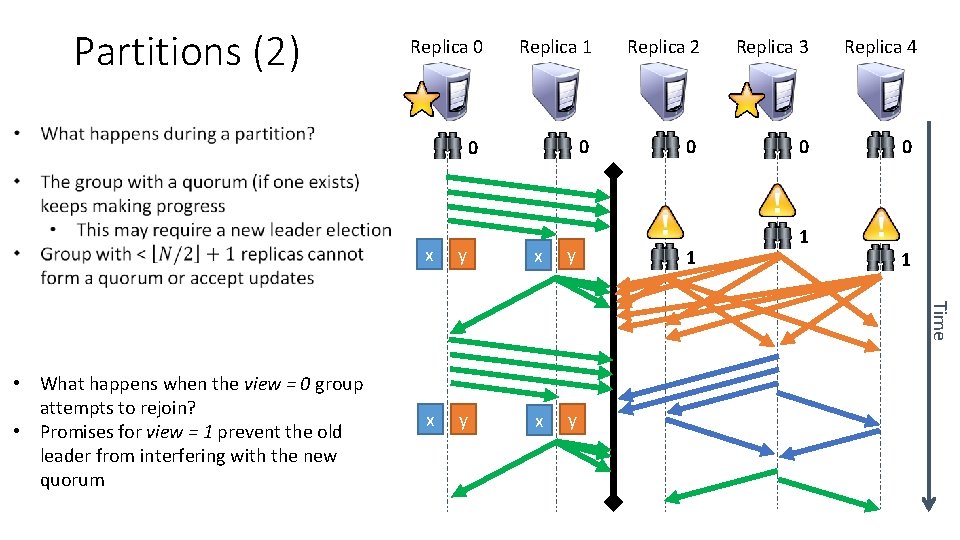

Partitions (1)
(Figure: Replicas 0-4 split by a partition; part of the system moves from view 0 to view 1, and values change from x to y.)
• What happens during a partition?
• Once the partition is fixed, either:
  • Hold a new leader election and move forward
  • Or, reconcile with up-to-date peers

Partitions (2)
(Figure: Replicas 0-4 after the partition; one group is still in view 0 while the other has moved to view 1.)
• What happens when the view = 0 group attempts to rejoin?
  • Promises for view = 1 prevent the old leader from interfering with the new quorum

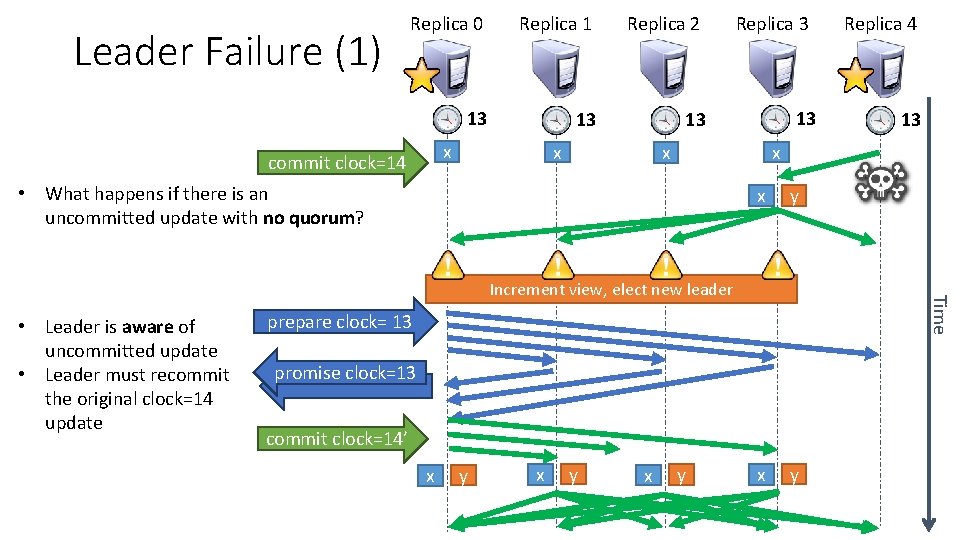

Leader Failure (1)
• What happens if there is an uncommitted update with no quorum?
(Figure: Replicas 0-4 at clock 13; the leader sends commit clock=14 for value y, but it reaches too few replicas to form a quorum before the leader fails. The view is incremented and a new leader is elected; it sends prepare clock=13, and the replicas reply promise clock=13.)
  • The new leader is aware of the uncommitted update
  • The leader must recommit the original clock=14 update (commit clock=14’)

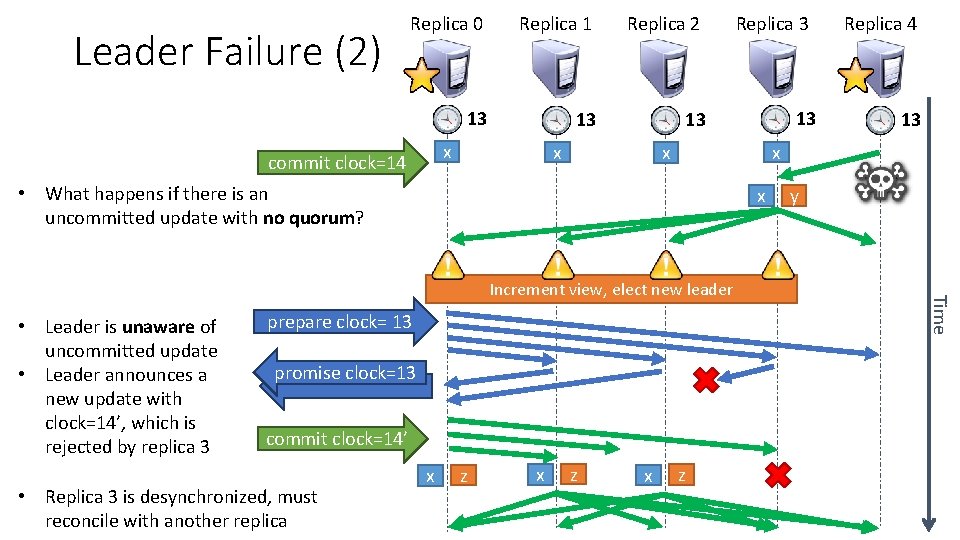

Leader Failure (2)
• What happens if there is an uncommitted update with no quorum?
(Figure: commit clock=14 for value y reaches only Replica 3 before the leader fails; the view is incremented, and a new leader collects promises (prepare clock=13, promise clock=13) from replicas that have not seen the update.)
  • The leader is unaware of the uncommitted update
  • The leader announces a new update z with clock=14’, which is rejected by Replica 3
  • Replica 3 is desynchronized and must reconcile with another replica

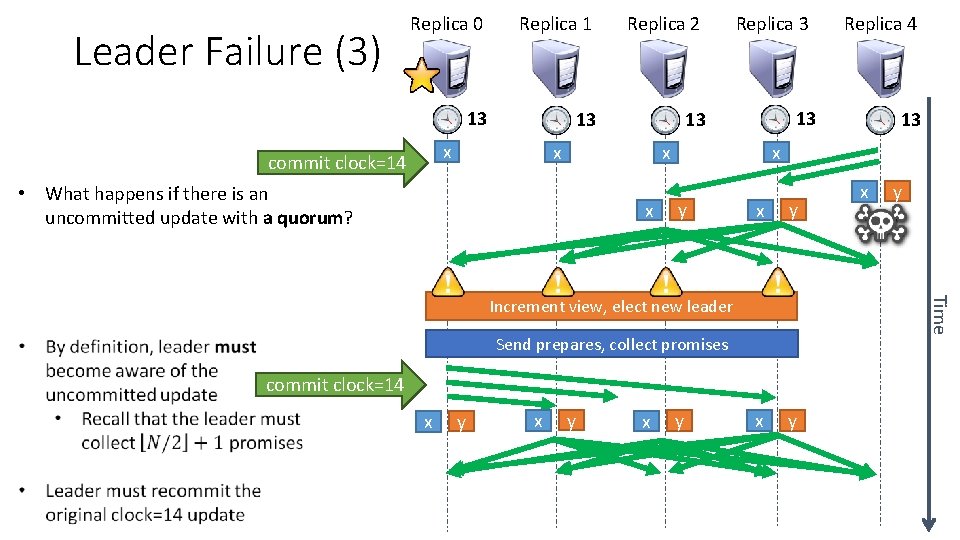

Leader Failure (3)
• What happens if there is an uncommitted update with a quorum?
(Figure: commit clock=14 for value y reaches a quorum of replicas before the leader fails; the view is incremented, a new leader is elected, sends prepares, and collects promises, then re-sends commit clock=14 for y.)

The Devil in the Details
• Clearly, Paxos is complicated
• Things we haven't covered:
  • Reconciliation: how to bring a replica up-to-date
  • Managing the queue of updates from clients
    • Updates may be sent to any replica
    • Replicas are responsible for responding to clients who contact them
    • Replicas may need to re-forward updates if the leader changes
  • Garbage collection
    • Replicas need to remember the exact history of updates, in case the leader changes
    • Periodically, the lists need to be garbage collected

Odds and Ends
• Byzantine Generals
• Gossip

Byzantine Generals Problem
• Name coined by Leslie Lamport
• Several Byzantine generals are laying siege to an enemy city
  • They have to agree on a common strategy: attack or retreat
  • They can only communicate by messenger
  • Some generals may be traitors (their identity is unknown)
Do you see the connection with the consensus problem?

Byzantine Distributed Systems
• Goals
  1. All loyal lieutenants obey the same order
  2. If the commanding general is loyal, then every loyal lieutenant obeys the order he sends
• Can the problem be solved?
  • Yes, iff there are at least 3m+1 generals in total in the presence of m traitors (with unsigned, oral messages)
  • E.g. if there are only 3 generals, even 1 traitor makes the problem unsolvable
• Bazillion variations on the basic problem
  • What if messages are cryptographically signed (i.e. they are unforgeable)?
  • What if communication is not g x g (i.e. some pairs of generals cannot communicate)?
• Most algorithms have Byzantine versions (e.g. Byzantine Paxos)
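The 3m+1 bound comes from Lamport, Shostak, and Pease's Oral Messages algorithm OM(m), sketched here with function calls in place of messengers. General 0 is the commander, "retreat" is the default order when there is no majority, and the traitor strategy in `send` (alternating orders by recipient) is a hypothetical choice; real traitors may behave differently. `majority`, `send`, `om`, and `loyal_decisions` are illustrative names.

```python
from collections import Counter

ATTACK, RETREAT = "attack", "retreat"


def majority(votes: list[str], default: str = RETREAT) -> str:
    """Strict majority of the votes, or `default` if there is none."""
    value, count = Counter(votes).most_common(1)[0]
    return value if count > len(votes) / 2 else default


def send(sender, receivers, value, traitors):
    """What each receiver gets from `sender`. Hypothetical traitor strategy:
    alternate orders so different receivers hear different things."""
    if sender in traitors:
        return {r: (RETREAT if k % 2 == 0 else ATTACK) for k, r in enumerate(receivers)}
    return {r: value for r in receivers}


def om(m: int, commander, lieutenants: list, value: str, traitors: set) -> dict:
    """Oral Messages algorithm OM(m): returns lieutenant -> value it adopts."""
    received = send(commander, lieutenants, value, traitors)
    if m == 0:
        return received
    # Each lieutenant relays what it received to the others, via OM(m - 1).
    relayed = {
        i: om(m - 1, i, [j for j in lieutenants if j != i], received[i], traitors)
        for i in lieutenants
    }
    return {
        j: majority([received[j]] + [relayed[i][j] for i in lieutenants if i != j])
        for j in lieutenants
    }


def loyal_decisions(n, m, traitors, order=ATTACK):
    result = om(m, 0, list(range(1, n)), order, traitors)  # general 0 commands
    return {j: v for j, v in result.items() if j not in traitors}


print(loyal_decisions(4, 1, traitors={3}))  # {1: 'attack', 2: 'attack'}
print(loyal_decisions(4, 1, traitors={0}))  # {1: 'retreat', 2: 'retreat', 3: 'retreat'}
print(loyal_decisions(3, 1, traitors={2}))  # {1: 'retreat'}
```

With 4 generals and 1 traitor, the loyal lieutenants agree whether the traitor is a lieutenant or the commander. With only 3 generals, this strategy already makes loyal lieutenant 1 disobey a loyal commander's "attack", violating goal 2.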

Alternatives to Quorums
• Quorums favor consistency over availability
  • If no quorum exists, then the system stops accepting writes
  • Significant overhead maintaining consistent replicated state
• What if eventual consistency is okay?
  • Favor availability over consistency
  • Results may be stale or incorrect sometimes (hopefully only in rare cases)
• Gossip protocols
  • Replicas periodically, randomly exchange state with each other
  • No strong consistency guarantees, but…
  • Surprisingly fast and reliable convergence to up-to-date state
  • Requires vector clocks or better in order to causally order events
  • Extreme cases of divergence may be irreconcilable
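A minimal simulation of the "surprisingly fast" convergence, assuming a single writer so that an integer version number is enough to order updates (with concurrent writers, this is where vector clocks would be needed). In each round, every replica exchanges state with one random peer (push-pull); `gossip_rounds` is a hypothetical helper.

```python
import random


def gossip_rounds(n: int, seed: int = 0) -> int:
    """Rounds of push-pull gossip until all n replicas hold the newest version."""
    rng = random.Random(seed)
    version = [0] * n
    version[0] = 1  # replica 0 accepts a new write (version 1)
    rounds = 0
    while min(version) < 1:
        rounds += 1
        for i in range(n):
            j = rng.randrange(n - 1)  # pick a random peer other than i
            if j >= i:
                j += 1
            newest = max(version[i], version[j])
            version[i] = version[j] = newest  # both keep the newer version
    return rounds


for n in (10, 100, 1000, 10000):
    print(n, gossip_rounds(n))
```

The round count grows roughly logarithmically with n, which is why gossip spreads updates quickly even though no replica coordinates the process.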

Sources
1. Some slides courtesy of Cristina Nita-Rotaru (http://cnitarot.github.io/courses/ds_Fall_2016/)
2. The Part-Time Parliament, Leslie Lamport. http://research.microsoft.com/en-us/um/people/lamport/pubs/lamportpaxos.pdf
3. Paxos Made Simple, Leslie Lamport. http://research.microsoft.com/en-us/um/people/lamport/pubs/paxos-simple.pdf
4. Paxos for System Builders, Jonathan Kirsch and Yair Amir. http://www.cs.jhu.edu/~jak/docs/paxos_for_system_builders.pdf
5. The Chubby Lock Service for Loosely-Coupled Distributed Systems, Mike Burrows. http://research.google.com/archive/chubby-osdi06.pdf
6. Paxos Made Live: An Engineering Perspective, Tushar Deepak Chandra, Robert Griesemer, Joshua Redstone. http://research.google.com/archive/paxos_made_live.pdf
7. Apache Zookeeper. https://zookeeper.apache.org/
