CS 347 Parallel and Distributed Data Management Notes

CS 347: Parallel and Distributed Data Management, Notes X: S4 (Hector Garcia-Molina, CS 347 Lecture 9 B)

Material based on:

S4

- Platform for processing unbounded data streams
  - general purpose
  - distributed
  - scalable
  - partially fault tolerant (whatever this means!)

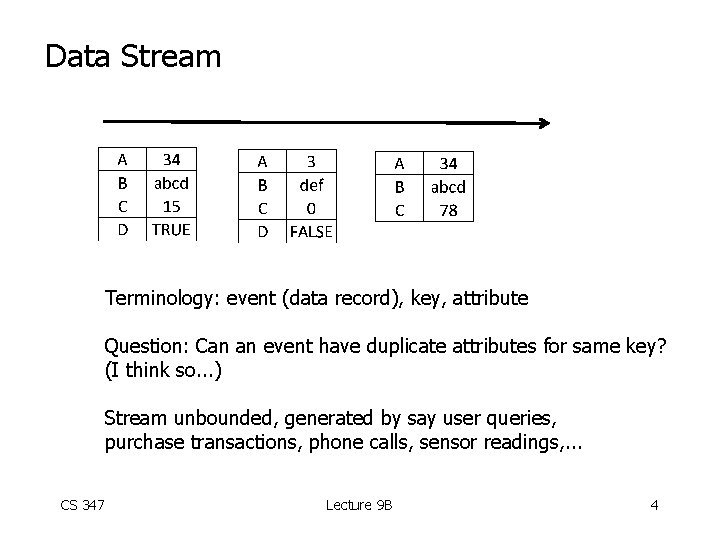

Data Stream

- Terminology: event (data record), key, attribute
- Question: Can an event have duplicate attributes for the same key? (I think so...)
- A stream is unbounded, generated by, say, user queries, purchase transactions, phone calls, sensor readings, ...

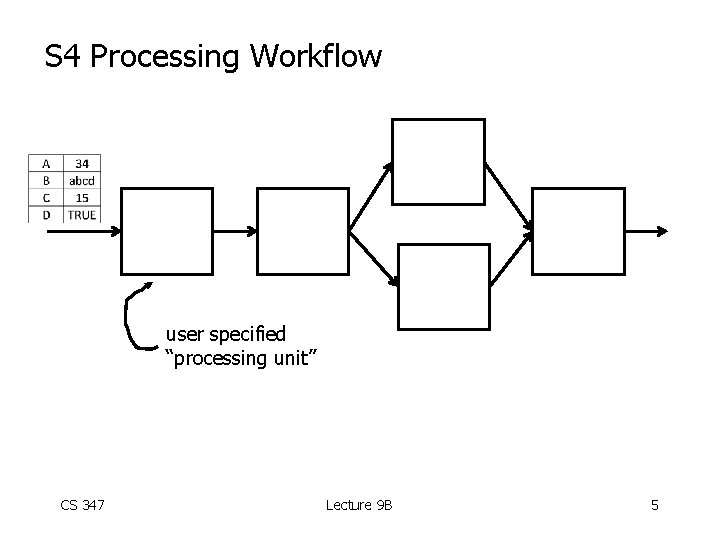

S4 Processing Workflow (figure label: user-specified “processing unit”)

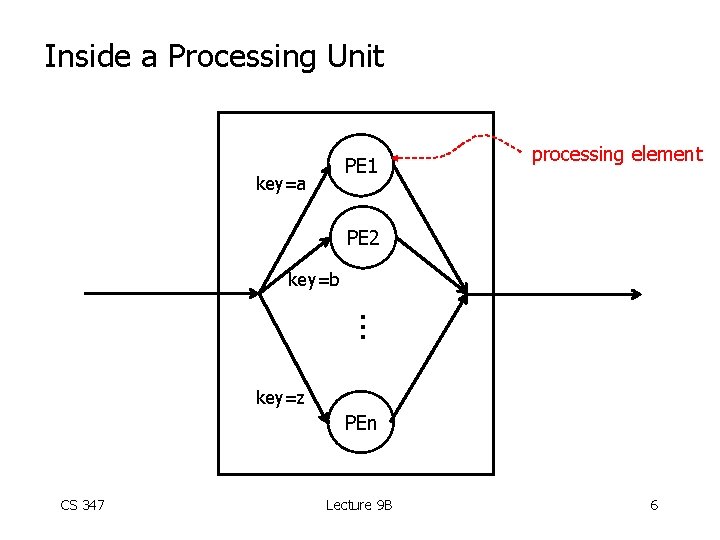

Inside a Processing Unit (figure: processing elements PE1 for key=a, PE2 for key=b, ..., PEn for key=z)

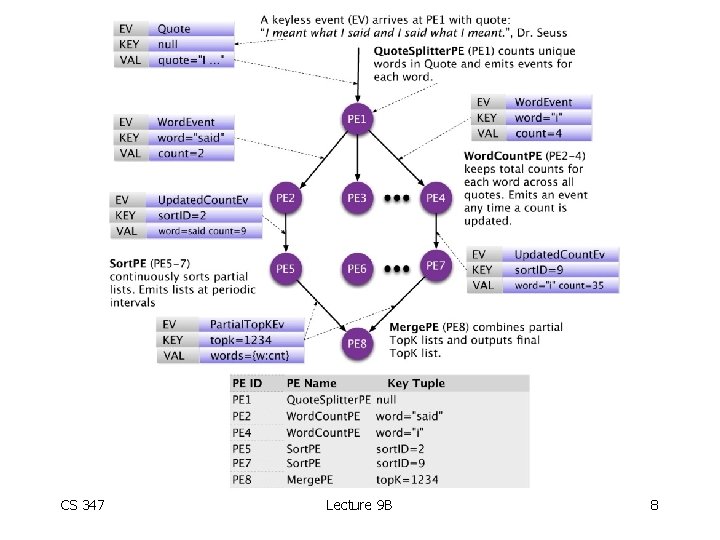

Example:

- Stream of English quotes
- Produce a sorted list of the top K most frequent words
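The example can be sketched in a few lines. This is only a minimal illustration, assuming one counting PE per distinct word and a single downstream PE that keeps the latest counts; the class names `WordCountPE` and `TopKPE` and the `run` driver are hypothetical and are not the S4 API.

```python
import heapq

class WordCountPE:
    """Hypothetical PE: one instance per distinct word (the key)."""
    def __init__(self, word):
        self.word = word
        self.count = 0

    def process(self, event):
        self.count += 1
        return (self.word, self.count)  # update event sent downstream

class TopKPE:
    """Hypothetical PE that remembers the latest count per word."""
    def __init__(self, k):
        self.k = k
        self.counts = {}

    def process(self, update):
        word, count = update
        self.counts[word] = count

    def top(self):
        # Highest count first; ties broken alphabetically for determinism.
        return heapq.nsmallest(
            self.k, self.counts.items(), key=lambda wc: (-wc[1], wc[0])
        )

def run(quotes, k):
    pes = {}           # word -> WordCountPE, created on first sight of the word
    topk = TopKPE(k)
    for quote in quotes:
        for word in quote.lower().split():
            if word not in pes:
                pes[word] = WordCountPE(word)
            topk.process(pes[word].process(word))
    return topk.top()
```

Here everything runs in one process; in a distributed setting the counting PEs would be spread over nodes and only the update events would travel to the top-K PE.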


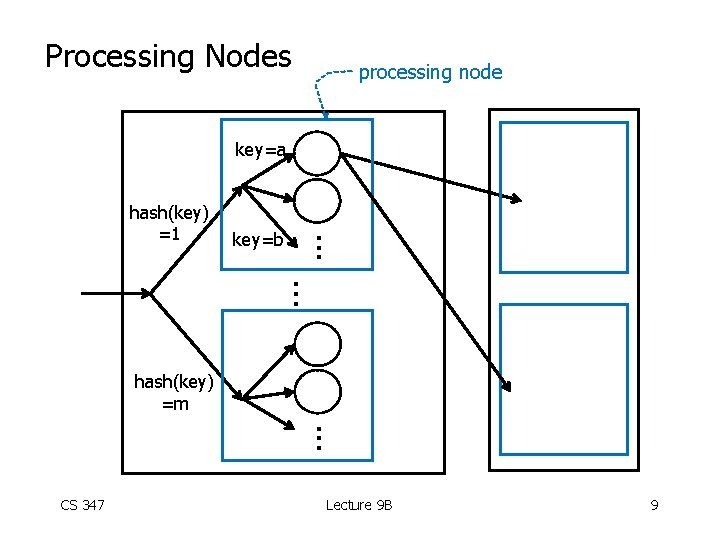

Processing Nodes (figure: events are routed by hash(key) = 1, ..., m to processing nodes; each processing node holds the PEs for its keys, e.g., key=a, key=b, ...)

Dynamic Creation of PEs

- As a processing node sees new key attributes, it dynamically creates new PEs to handle them
- Think of PEs as threads
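A minimal sketch of hash routing plus on-demand PE creation, under stated assumptions: `ProcessingNode`, `CountingPE`, `route` and `dispatch` are hypothetical names, PEs are plain objects rather than threads, and CRC32 stands in for whatever hash function the platform really uses.

```python
import zlib

class CountingPE:
    """Hypothetical PE that just counts the events it sees for its key."""
    def __init__(self, key):
        self.key = key
        self.events_seen = 0

    def process(self, event):
        self.events_seen += 1

class ProcessingNode:
    def __init__(self, node_id):
        self.node_id = node_id
        self.pes = {}  # key -> PE, filled in lazily

    def handle(self, key, event):
        pe = self.pes.get(key)
        if pe is None:
            # First event for this key on this node: create its PE now.
            pe = self.pes[key] = CountingPE(key)
        pe.process(event)

def route(key, num_nodes):
    # CRC32 is stable across runs, unlike Python's built-in hash() for strings.
    return zlib.crc32(key.encode("utf-8")) % num_nodes

def dispatch(events, nodes):
    for key, event in events:
        nodes[route(key, len(nodes))].handle(key, event)
```

Because routing depends only on the key, all events with the same key reach the same node and therefore the same PE.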

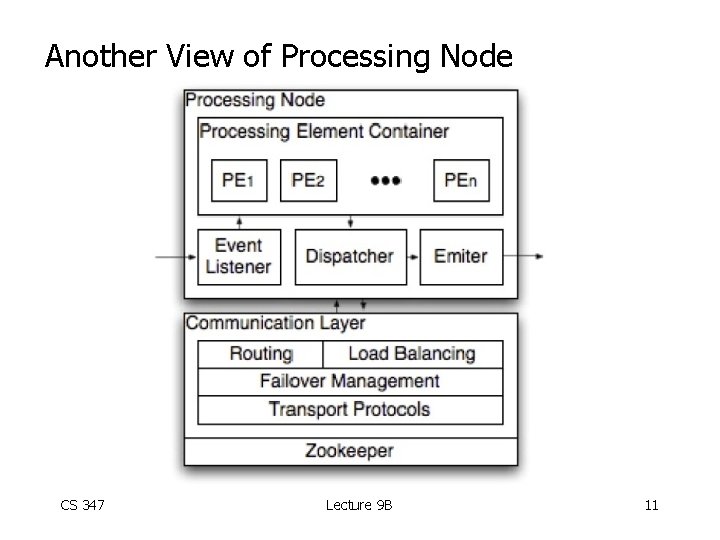

Another View of Processing Node

Failures

- Communication layer detects node failures and provides failover to standby nodes
- What happens to events in transit during a failure? (My guess: events are lost!)

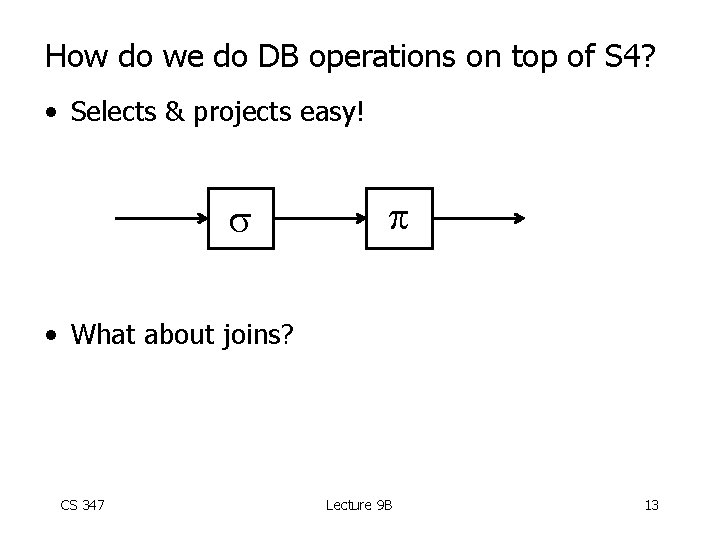

How do we do DB operations on top of S4?

- Selects & projects: easy!
- What about joins?

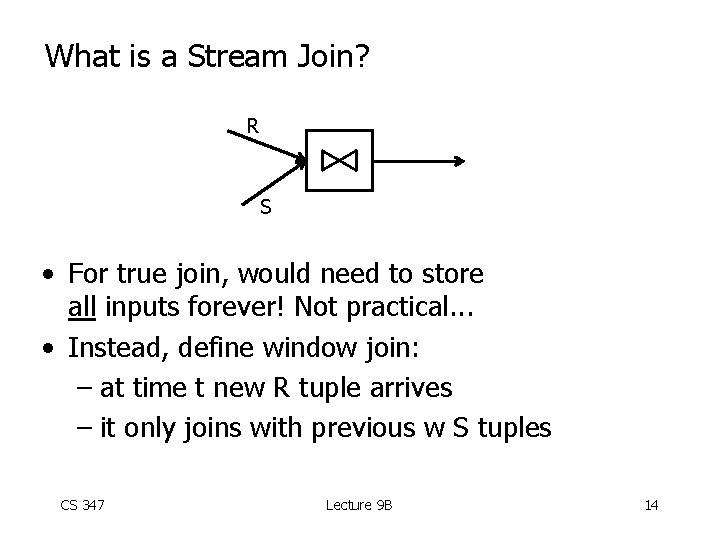

What is a Stream Join? (streams R and S)

- For a true join, we would need to store all inputs forever! Not practical...
- Instead, define a window join:
  - at time t a new R tuple arrives
  - it only joins with the previous w S tuples
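As a reference point, the window-join definition can be written down directly. This is a sketch under assumptions the slide does not state: the window is count-based, the rule is applied symmetrically (a new S tuple also joins with the previous w R tuples), and tuples match on a caller-supplied `key` function. The name `window_join` is made up for illustration.

```python
from collections import deque

def window_join(events, w, key=lambda t: t[0]):
    """events: iterable of (rel, tuple) in arrival order, rel is "R" or "S"."""
    # deque(maxlen=w) silently drops the oldest tuple once w are stored.
    recent = {"R": deque(maxlen=w), "S": deque(maxlen=w)}
    out = []
    for rel, tup in events:
        other = "S" if rel == "R" else "R"
        for old in recent[other]:
            if key(old) == key(tup):
                # Always emit pairs as (R tuple, S tuple).
                out.append((tup, old) if rel == "R" else (old, tup))
        recent[rel].append(tup)
    return out
```

With w = 2, an R tuple arriving after three S tuples only sees the last two of them, regardless of their join values.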

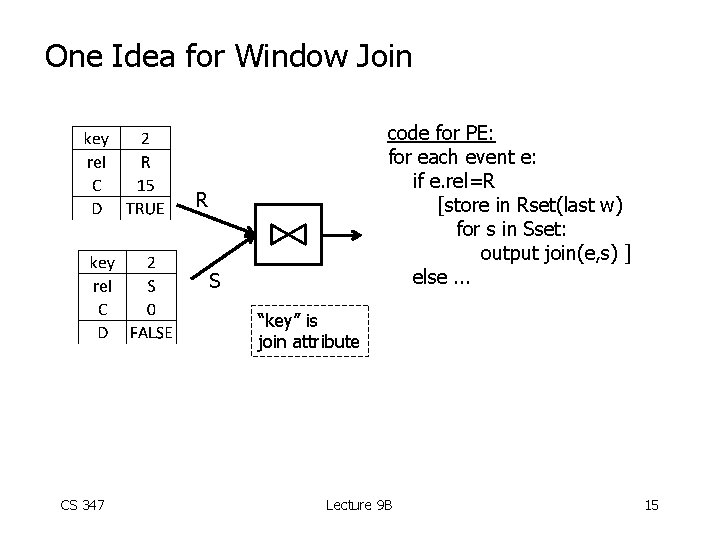

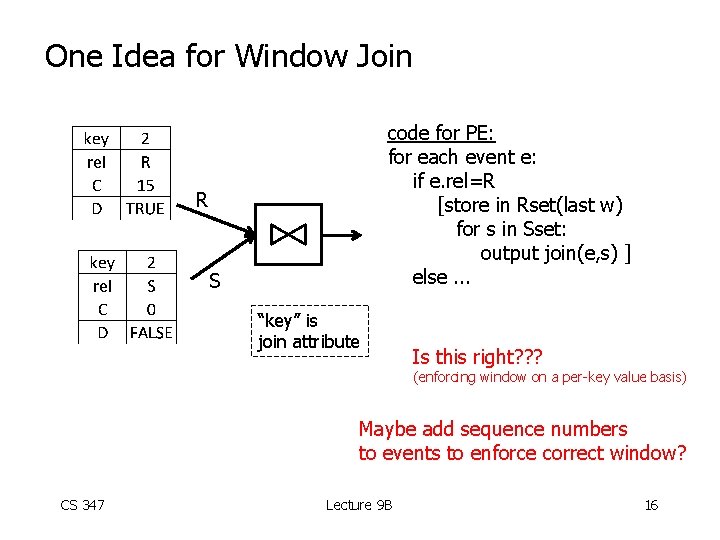


One Idea for Window Join (“key” is the join attribute)

Code for PE:

    for each event e:
      if e.rel = R:
        store e in Rset (last w)
        for s in Sset:
          output join(e, s)
      else ...

Is this right??? (It enforces the window on a per-key-value basis.) Maybe add sequence numbers to events to enforce the correct window?
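The concern about per-key windows can be made concrete with a small sketch. It assumes a count-based window and a symmetric `else` branch for S events (the slide elides that branch); `JoinPE` and `keyed_window_join` are hypothetical names.

```python
from collections import defaultdict, deque

class JoinPE:
    """One PE per join-key value; it only ever sees events with that key."""
    def __init__(self, w):
        self.rset = deque(maxlen=w)  # last w R events for this key
        self.sset = deque(maxlen=w)  # last w S events for this key

    def process(self, rel, payload):
        if rel == "R":
            self.rset.append(payload)
            return [(payload, s) for s in self.sset]
        # Assumed symmetric handling of S events.
        self.sset.append(payload)
        return [(r, payload) for r in self.rset]

def keyed_window_join(events, w):
    """events: iterable of (rel, key, payload) in arrival order."""
    pes = defaultdict(lambda: JoinPE(w))
    out = []
    for rel, key, payload in events:
        out.extend(pes[key].process(rel, payload))
    return out
```

For the stream S(1, s1), S(2, s2), S(2, s3), R(1, r1) with w = 2, this code outputs the pair (r1, s1), although s1 is the third most recent S event overall and would fall outside a window counted over the whole S stream. That is the per-key effect the slide questions.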

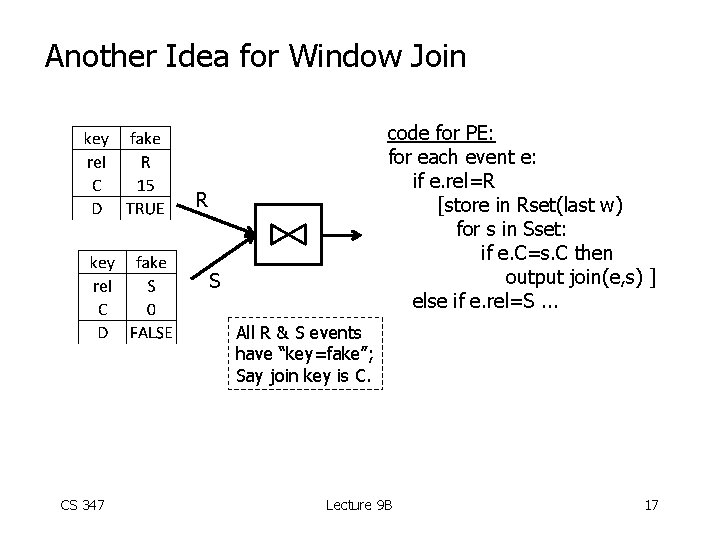


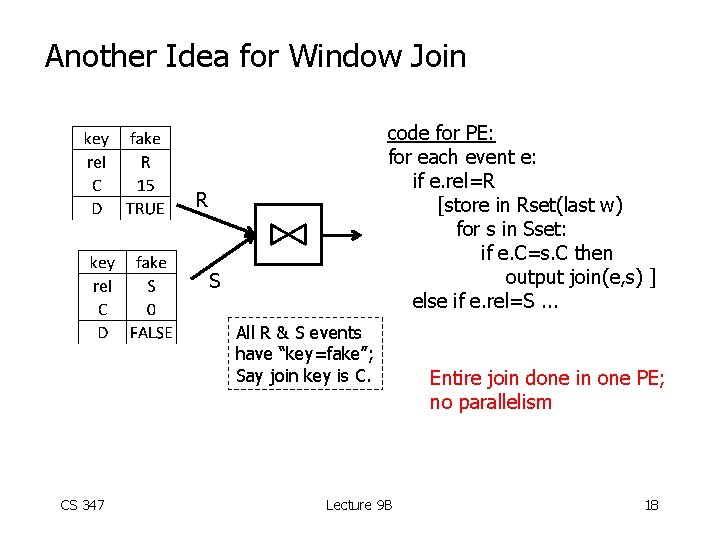

Another Idea for Window Join (all R & S events have “key=fake”; say the join key is C)

Code for PE:

    for each event e:
      if e.rel = R:
        store e in Rset (last w)
        for s in Sset:
          if e.C = s.C then output join(e, s)
      else if e.rel = S ...

Entire join done in one PE; no parallelism.

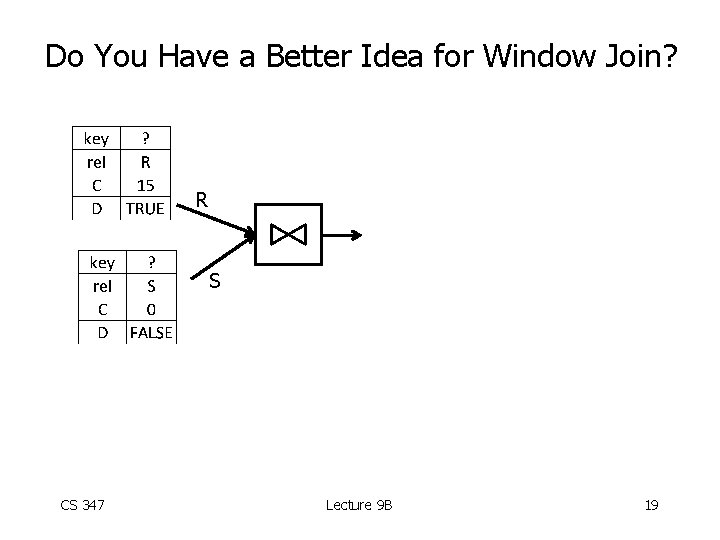

Do You Have a Better Idea for Window Join? (streams R and S)

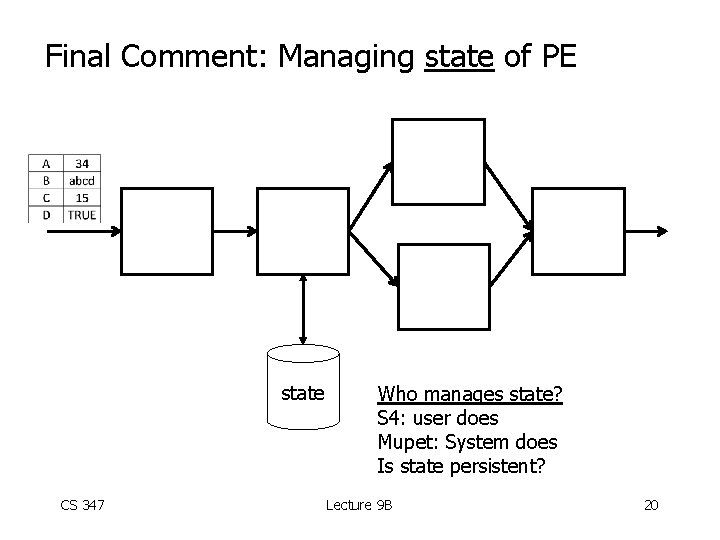

Final Comment: Managing the State of a PE

- Who manages state? S4: the user does. Muppet: the system does.
- Is state persistent?

CS 347: Parallel and Distributed Data Management, Notes X: Hyracks (Hector Garcia-Molina)

Hyracks

- Generalization of map-reduce
- Infrastructure for “big data” processing
- Material here based on a paper that appeared in ICDE 2011

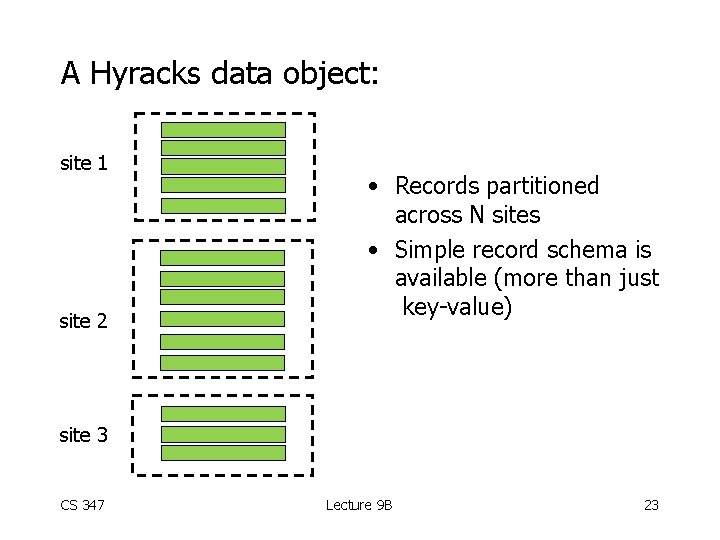

A Hyracks data object (figure: site 1, site 2, site 3):

- Records partitioned across N sites
- Simple record schema is available (more than just key-value)

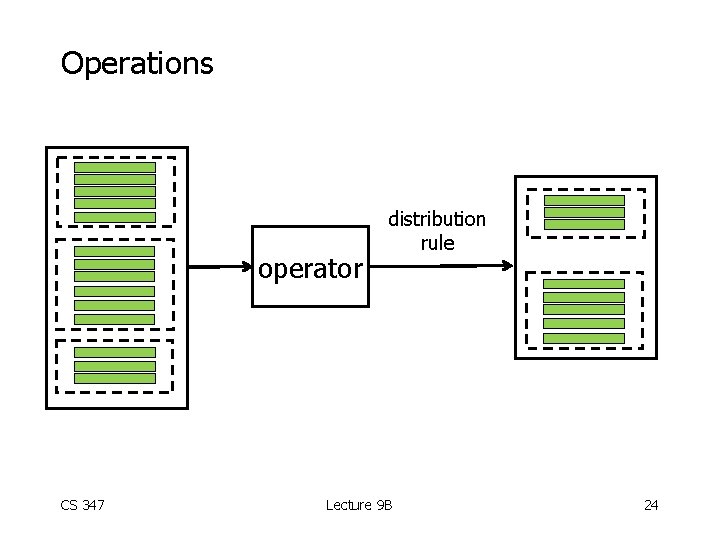

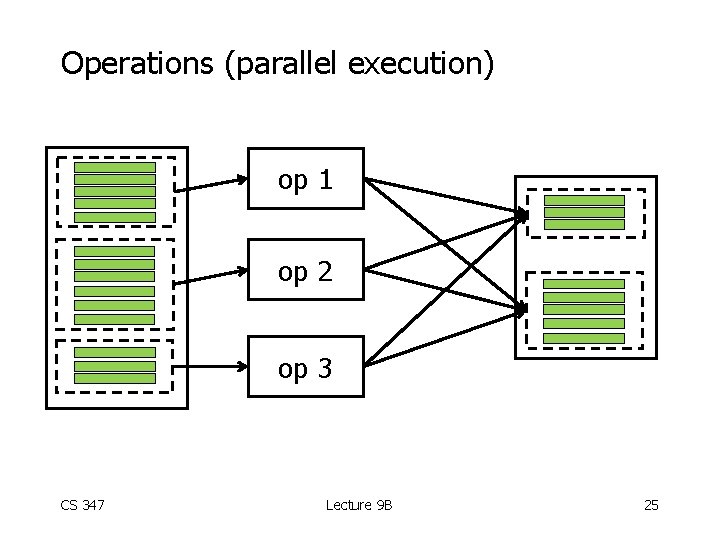

Operations (figure labels: operator, distribution rule)

Operations (parallel execution; figure labels: op 1, op 2, op 3)

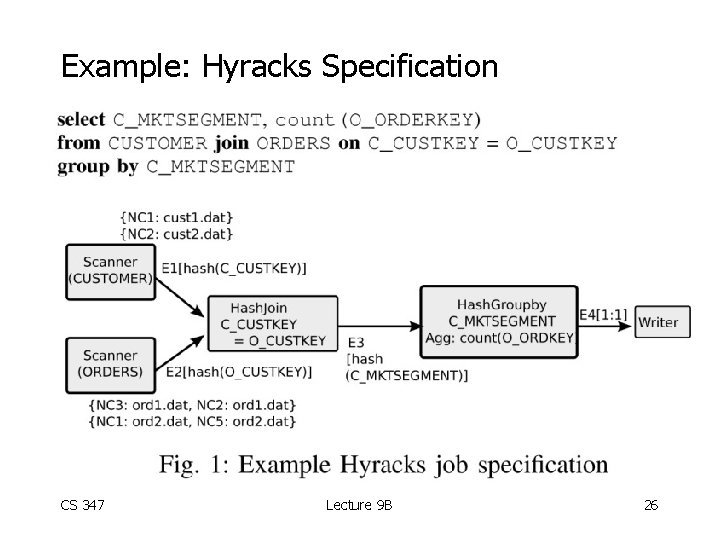

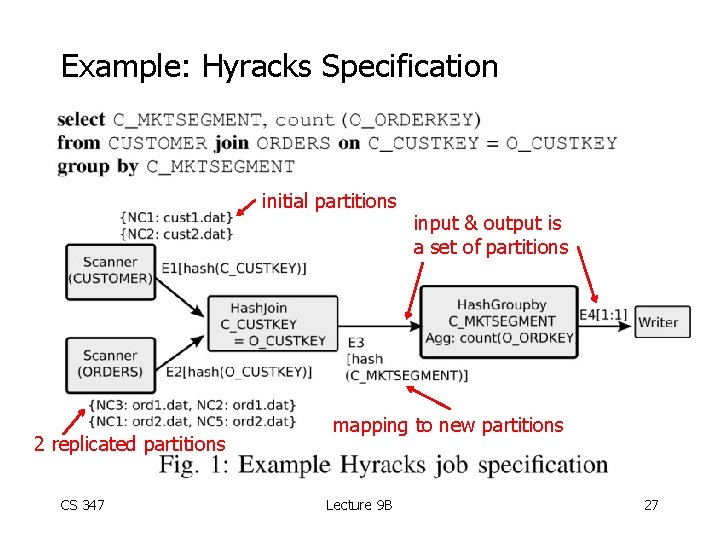


Example: Hyracks Specification (figure annotations: initial partitions; 2 replicated partitions; input & output is a set of partitions; mapping to new partitions)

Notes

- Job specification can be done manually or automatically

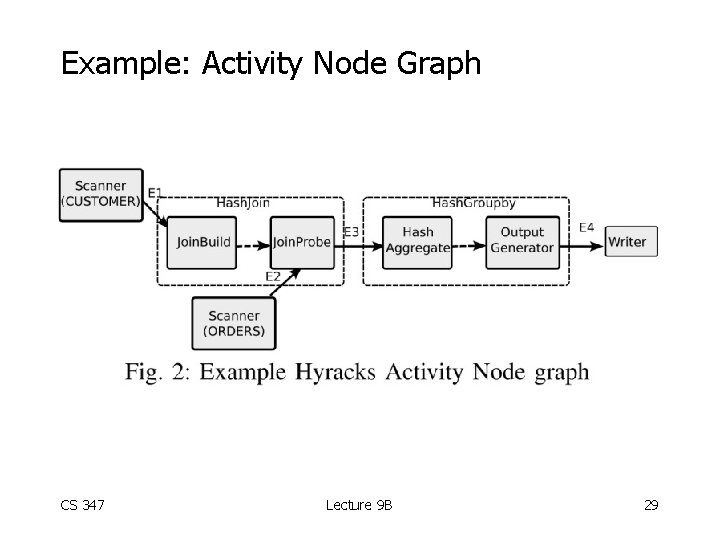

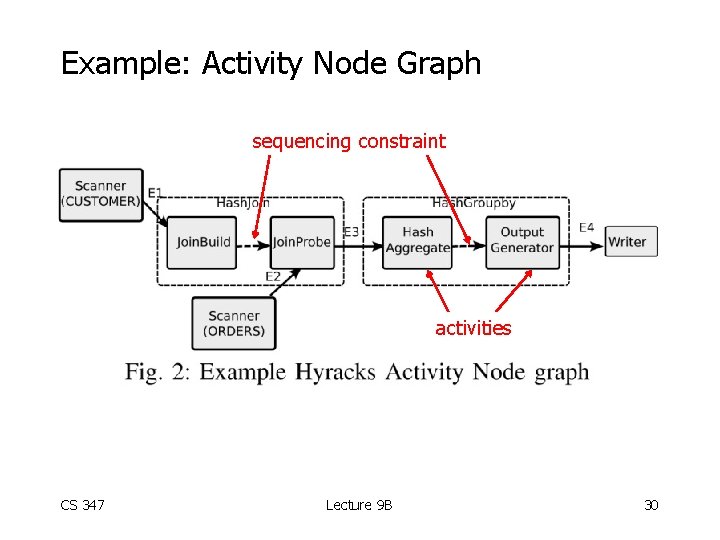


Example: Activity Node Graph (figure annotations: activities; sequencing constraint)

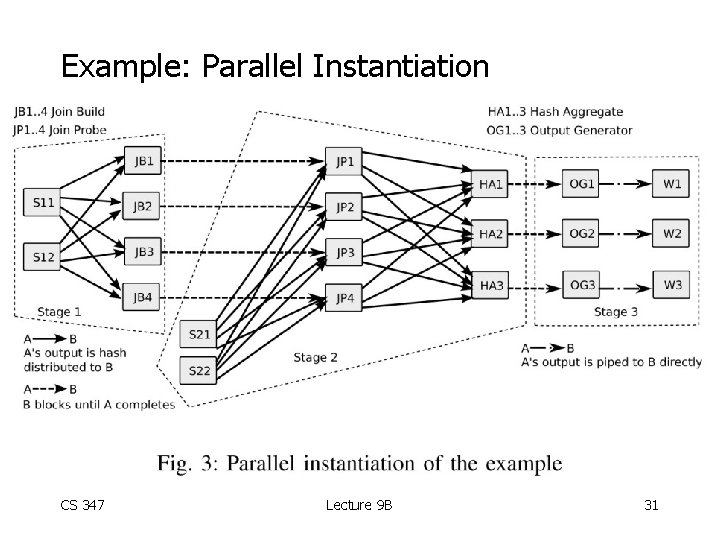

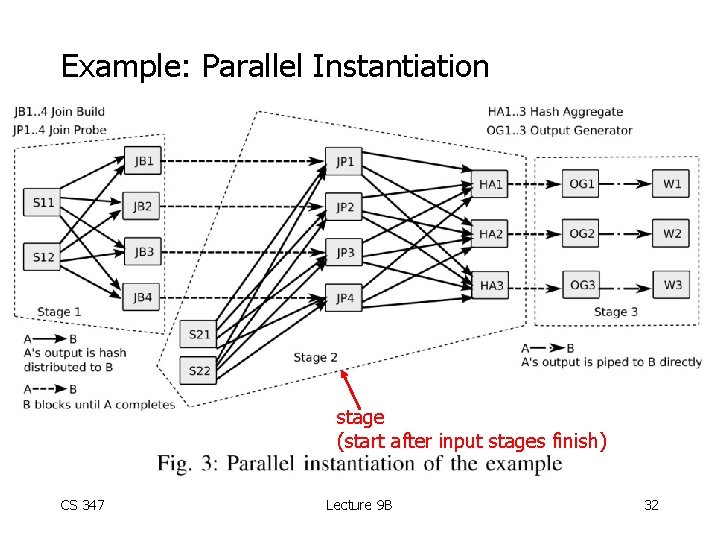


Example: Parallel Instantiation (figure annotation: a stage starts after its input stages finish)

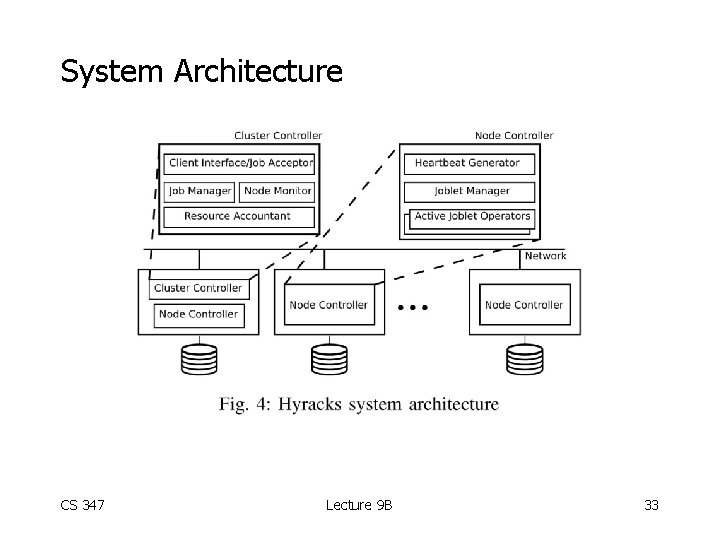

System Architecture

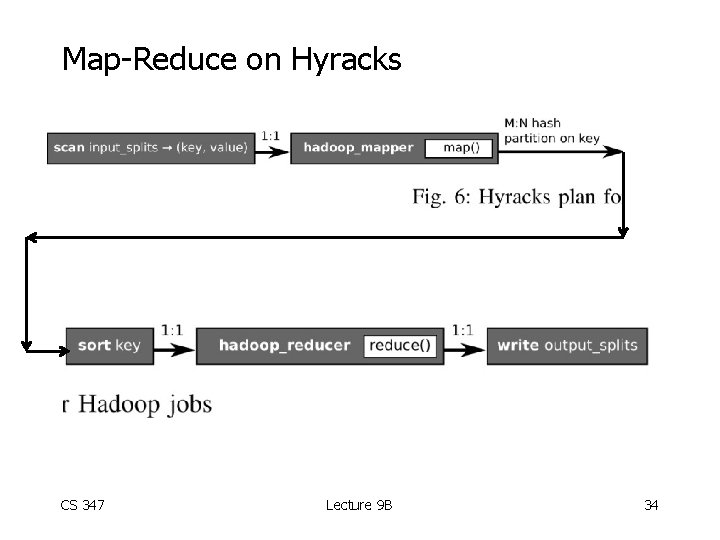

Map-Reduce on Hyracks

Library of Operators:

- File readers/writers
- Mappers
- Sorters
- Joiners (various types)
- Aggregators
- Can add more

Library of Connectors:

- N:M connectors: hash partitioner, hash-partitioning merger (input sorted), range partitioner (with partition vector), replicator
- 1:1 connector
- Can add more!
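To make two of these connectors concrete, here is a minimal sketch in plain Python, not the Hyracks connector API. The function names, the use of CRC32 as the hash, and the representation of a sender's output as a list of records are all assumptions for illustration.

```python
import heapq
import zlib

def hash_partition(records, num_receivers, key=lambda r: r[0]):
    """Hash partitioner: send each record to the receiver chosen by hash(key)."""
    parts = [[] for _ in range(num_receivers)]
    for rec in records:
        h = zlib.crc32(str(key(rec)).encode("utf-8"))
        parts[h % num_receivers].append(rec)
    return parts

def hash_partitioning_merge(sorted_senders, num_receivers, key=lambda r: r[0]):
    """Hash-partitioning merger: each sender's output is already sorted by key,
    so every receiver can merge its incoming runs instead of re-sorting."""
    per_sender = [hash_partition(s, num_receivers, key) for s in sorted_senders]
    return [
        list(heapq.merge(*(parts[i] for parts in per_sender), key=key))
        for i in range(num_receivers)
    ]
```

Partitioning keeps the relative order of each sender's records, which is why the per-receiver runs stay sorted and a merge is enough.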

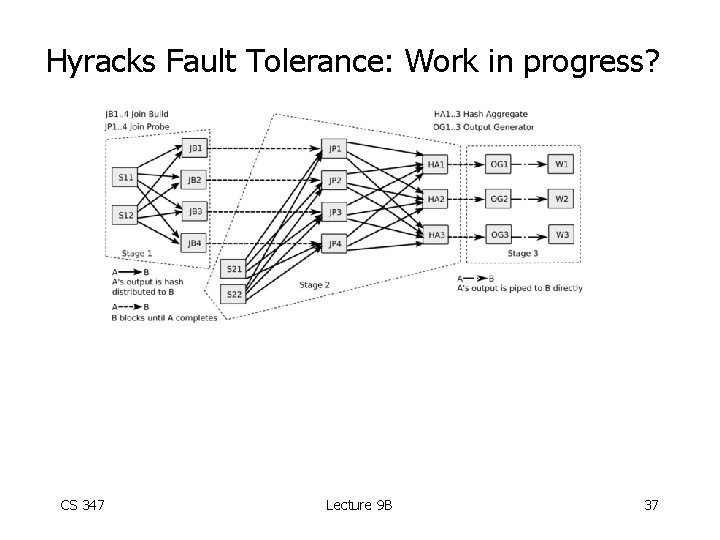


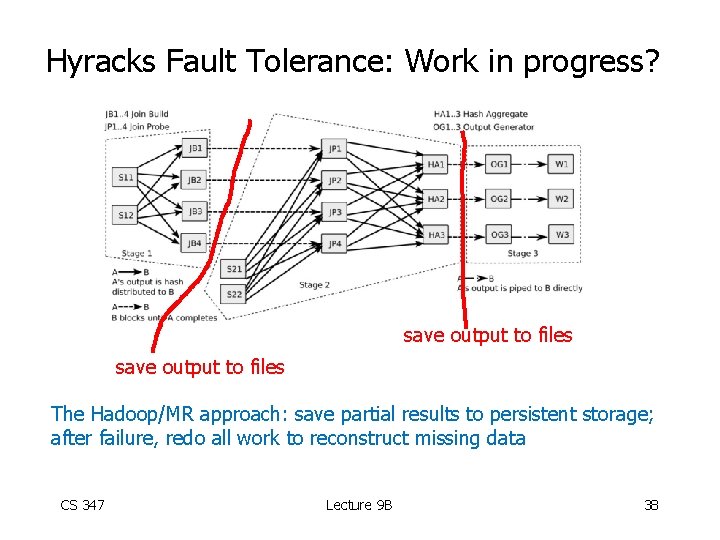

Hyracks Fault Tolerance: Work in progress?

The Hadoop/MR approach: save output to files, i.e., save partial results to persistent storage; after a failure, redo all work to reconstruct missing data.

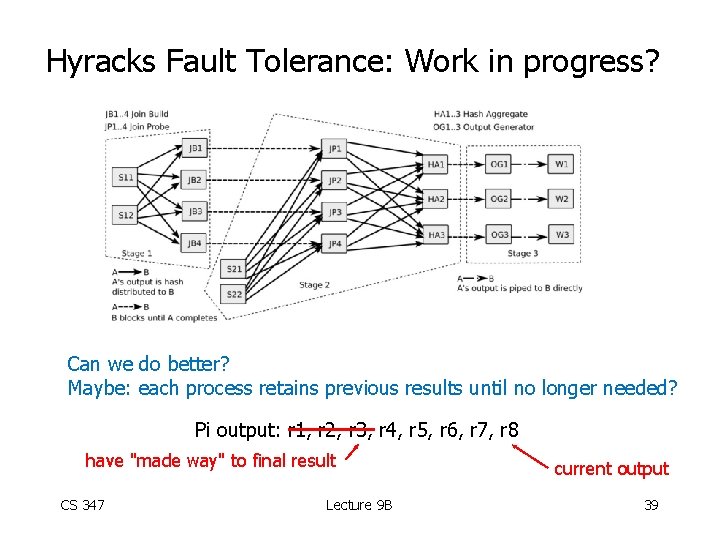

Hyracks Fault Tolerance: Can we do better?

Maybe each process retains previous results until they are no longer needed? (Figure: process Pi outputs r1, r2, ..., r8; some results have "made way" to the final result, the rest are the current output.)

CS 347: Parallel and Distributed Data Management, Notes X: Pregel (Hector Garcia-Molina)

Material based on:

- A paper in SIGMOD 2010
- Note there is an open-source version of Pregel called GIRAPH

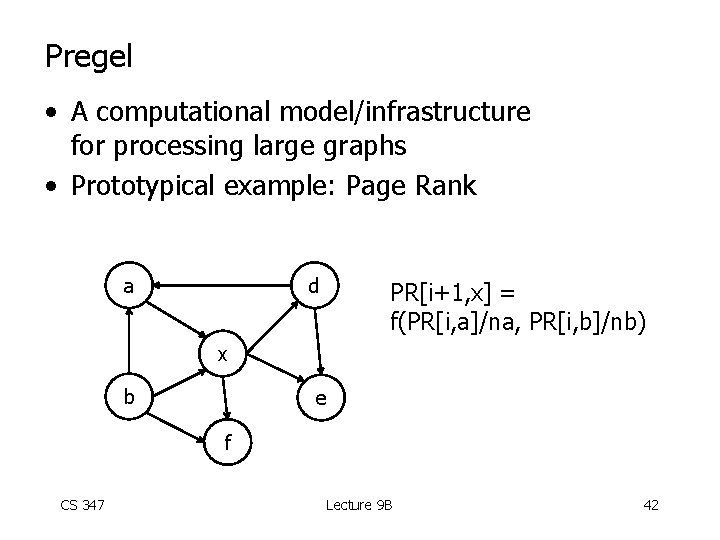

Pregel

- A computational model/infrastructure for processing large graphs
- Prototypical example: Page Rank, $PR[i+1, x] = f(PR[i, a]/n_a,\ PR[i, b]/n_b)$ (figure: graph with nodes a, b, d, e, f, x)

![Pregel: Page Rank example graph](http://slidetodoc.com/presentation_image/b6dfc5859499d622ecb834795ee9b88a/image-43.jpg)

Pregel: $PR[i+1, x] = f(PR[i, a]/n_a,\ PR[i, b]/n_b)$

- Synchronous computation in iterations
- In one iteration, each node:
  - gets messages from neighbors
  - computes
  - sends data to neighbors

Pregel vs Map-Reduce/S4/Hyracks/...

- In Map-Reduce, S4, Hyracks, ... the workflow is separate from the data
- In Pregel, the data (graph) drives the data flow

Pregel Motivation

- Many applications require graph processing
- Map-Reduce and other workflow systems are not a good fit for graph processing
- Need to run graph algorithms on many processors

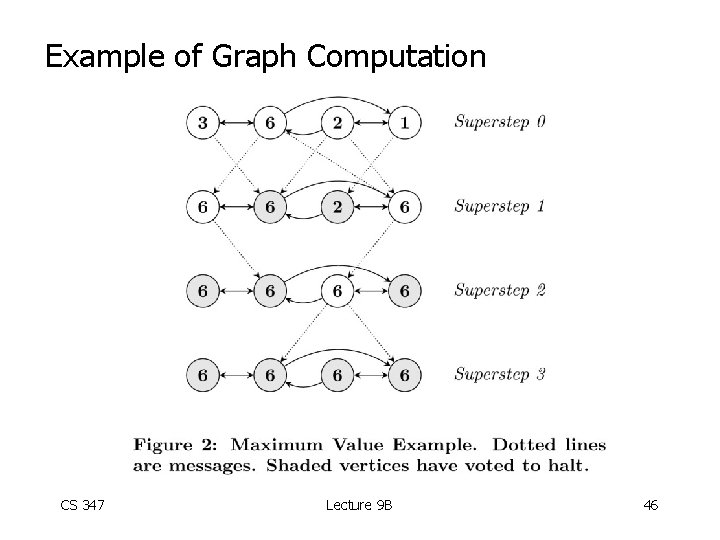

Example of Graph Computation

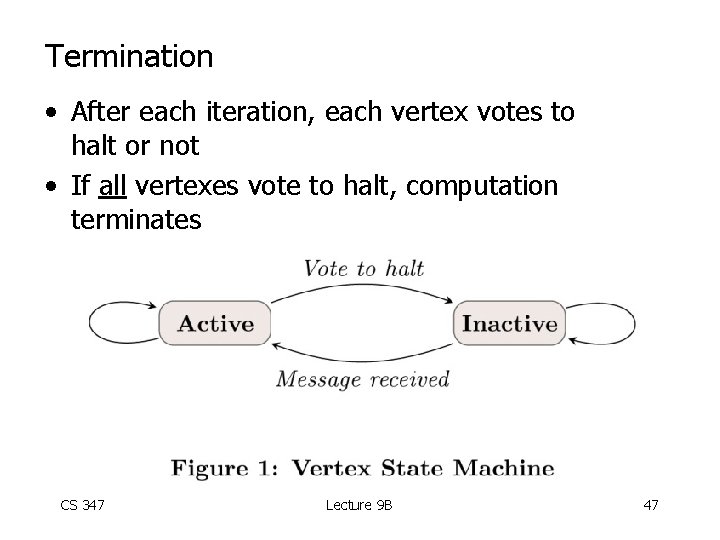

Termination

- After each iteration, each vertex votes to halt or not
- If all vertexes vote to halt, computation terminates

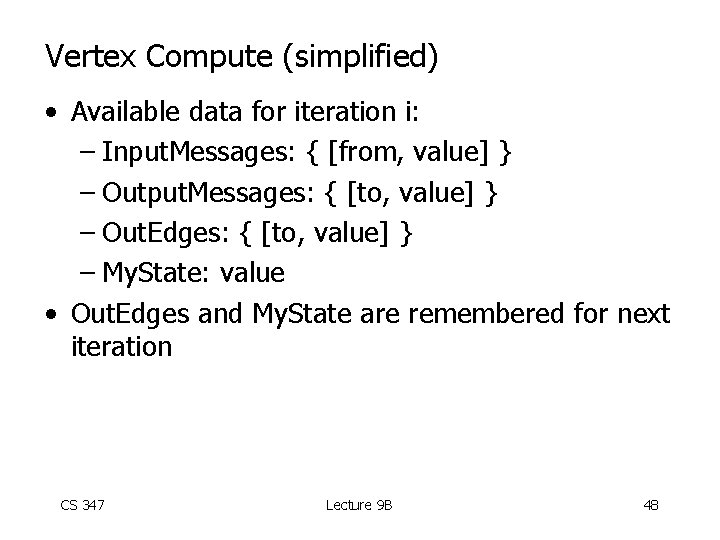

Vertex Compute (simplified)

- Available data for iteration i:
  - InputMessages: { [from, value] }
  - OutputMessages: { [to, value] }
  - OutEdges: { [to, value] }
  - MyState: value
- OutEdges and MyState are remembered for the next iteration

![Max Computation](http://slidetodoc.com/presentation_image/b6dfc5859499d622ecb834795ee9b88a/image-49.jpg)

Max Computation

    change := false
    for [f, w] in InputMessages do
      if w > MyState.value then
        [ MyState.value := w
          change := true ]
    if (superstep = 1) OR change then
      for [t, w] in OutEdges do
        add [t, MyState.value] to OutputMessages
    else vote to halt
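The max computation can be run end to end with a tiny single-process driver. This is a sketch, not Pregel's API: `max_compute` and `run_pregel` are hypothetical names, out-edges are reduced to a list of target vertex ids (edge values are ignored), and every vertex is invoked in every superstep rather than being woken up only by incoming messages.

```python
def max_compute(state, in_msgs, out_edges, superstep):
    """Return (new_state, out_msgs, vote_to_halt) for one vertex."""
    change = False
    for _sender, w in in_msgs:
        if w > state:
            state, change = w, True
    if superstep == 1 or change:
        return state, [(t, state) for t in out_edges], False
    return state, [], True  # nothing new to report: vote to halt

def run_pregel(values, edges, compute, max_supersteps=100):
    state = dict(values)
    inbox = {v: [] for v in state}
    for superstep in range(1, max_supersteps + 1):
        outbox = {v: [] for v in state}
        all_halted = True
        for v in state:
            state[v], msgs, halt = compute(
                state[v], inbox[v], edges.get(v, []), superstep
            )
            all_halted = all_halted and halt
            for target, value in msgs:
                outbox[target].append((v, value))
        if all_halted:
            break
        inbox = outbox  # messages are delivered in the next superstep
    return state
```

On a connected graph, the largest initial value spreads one hop per superstep until no vertex changes and all vote to halt.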

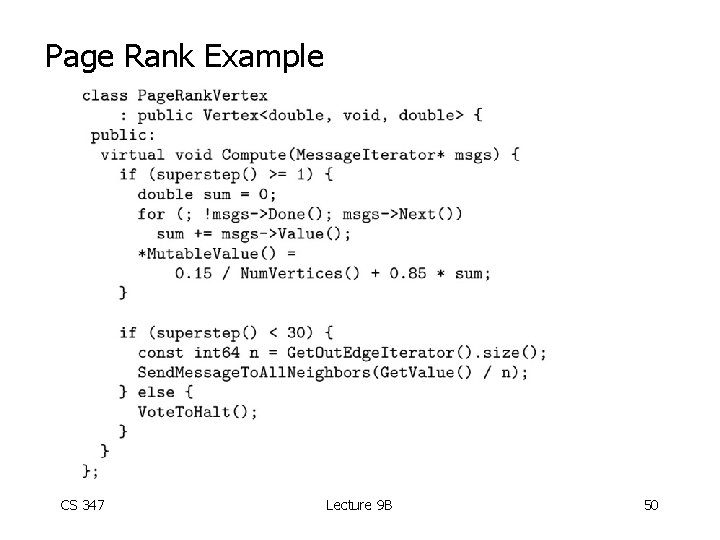

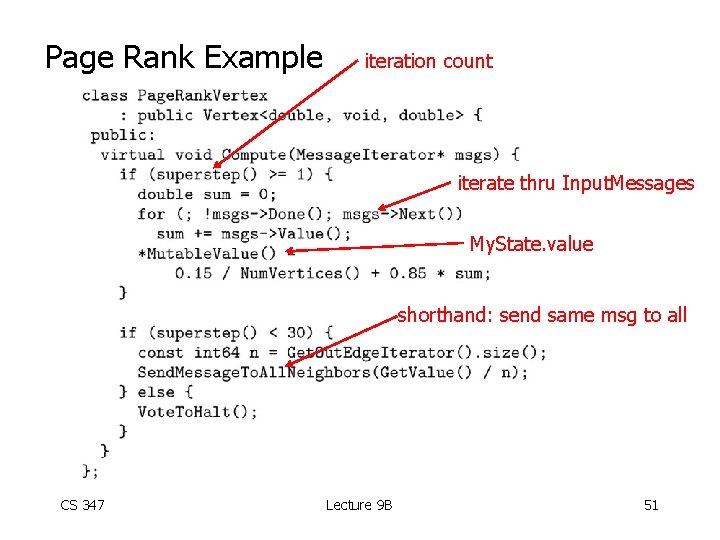


Page Rank Example (figure annotations: iteration count; iterate through InputMessages; MyState.value; shorthand: send same msg to all)
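Since the code on this slide is only available as an image, here is a hedged stand-in written as a synchronous loop. It assumes the common damped-sum choice for the function f (damping 0.85), a fixed iteration count, and that every vertex has at least one out-edge; the function name `pagerank` and its parameters are illustrative.

```python
def pagerank(edges, num_iterations=30, damping=0.85):
    """edges: {vertex: [out-neighbors]}; every vertex needs >= 1 out-edge."""
    vertices = list(edges)
    n = len(vertices)
    rank = {v: 1.0 / n for v in vertices}
    inbox = {v: [] for v in vertices}
    for superstep in range(num_iterations + 1):
        outbox = {v: [] for v in vertices}
        for v in vertices:
            if superstep >= 1:
                # Combine the shares received from in-neighbors.
                rank[v] = (1 - damping) / n + damping * sum(inbox[v])
            if superstep < num_iterations:
                # Shorthand from the slide: send the same message to all neighbors.
                share = rank[v] / len(edges[v])
                for t in edges[v]:
                    outbox[t].append(share)
        inbox = outbox
    return rank
```

Each vertex sends its rank divided by its number of out-edges, which corresponds to the PR[i, a]/n_a terms in the formula above.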

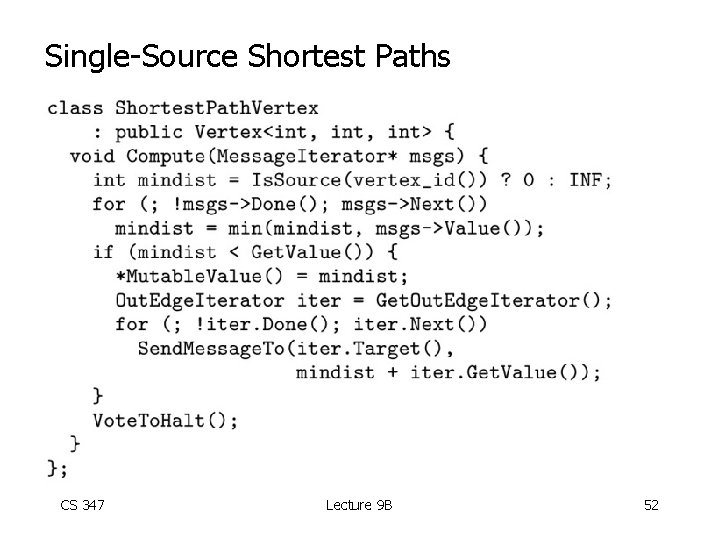

Single-Source Shortest Paths

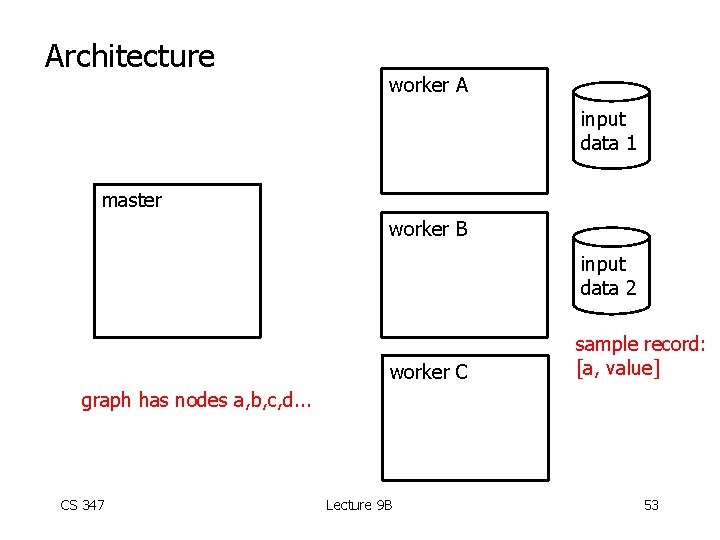

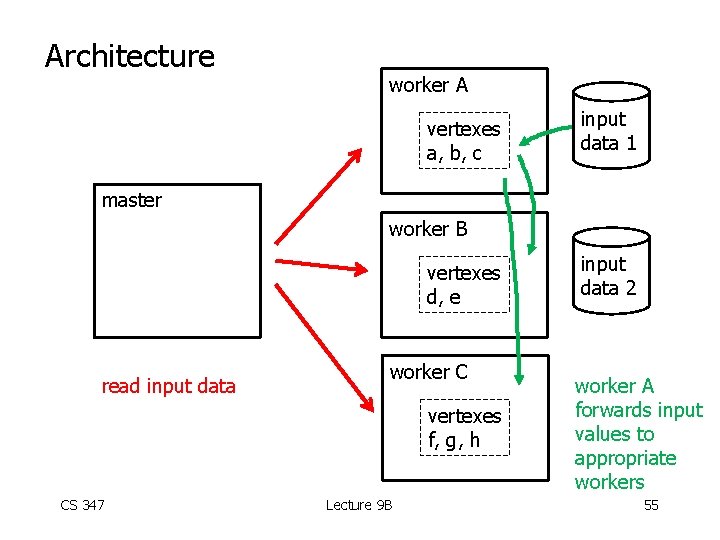

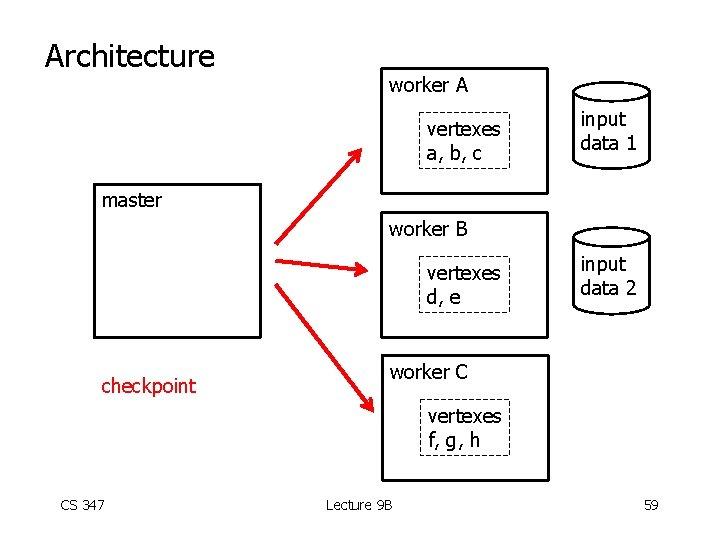

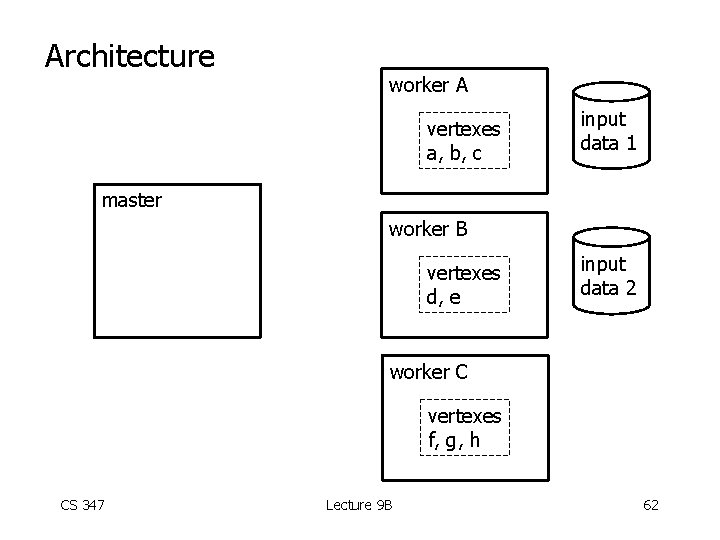

Architecture (figure: a master; workers A, B, C; input data 1 and input data 2). Sample input record: [a, value]. The graph has nodes a, b, c, d, ...

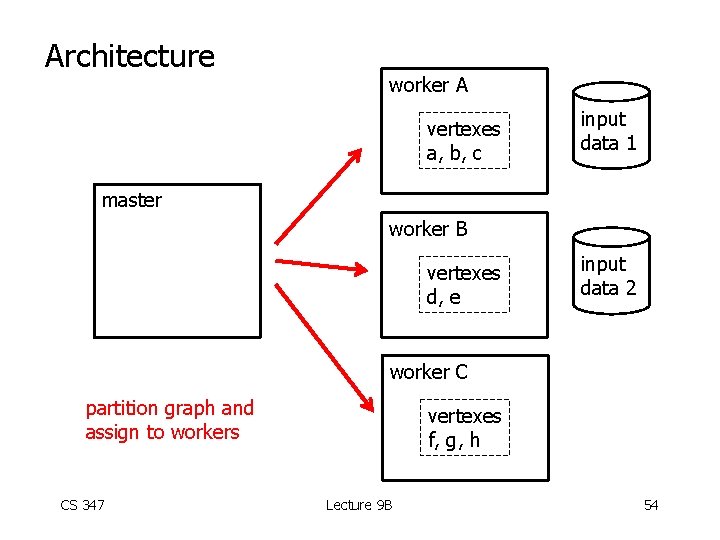

Architecture: partition the graph and assign it to workers (worker A: vertexes a, b, c; worker B: vertexes d, e; worker C: vertexes f, g, h).

Architecture: read input data; worker A forwards input values to the appropriate workers.

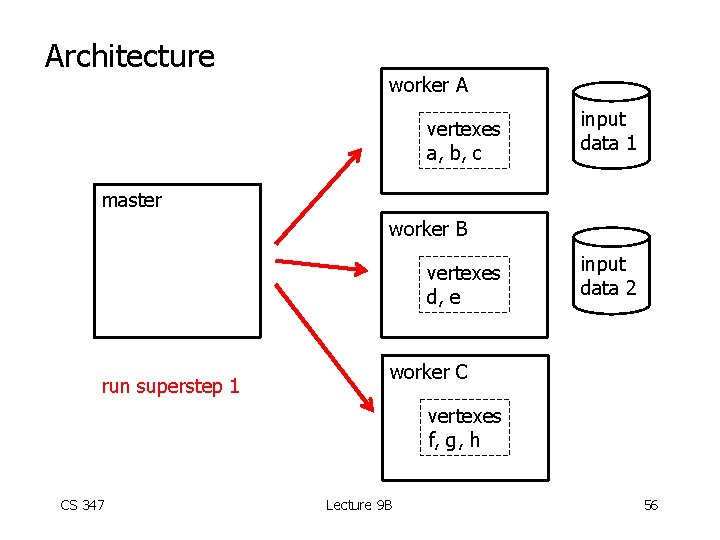

Architecture: run superstep 1.

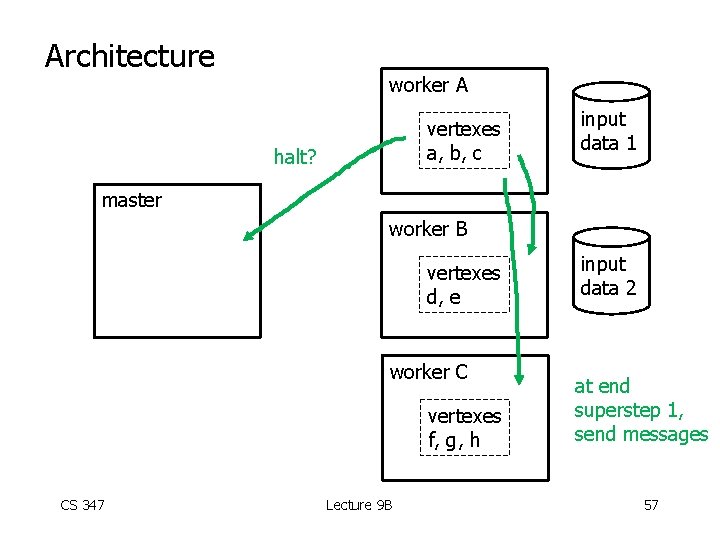

Architecture: at the end of superstep 1, send messages; check whether to halt.

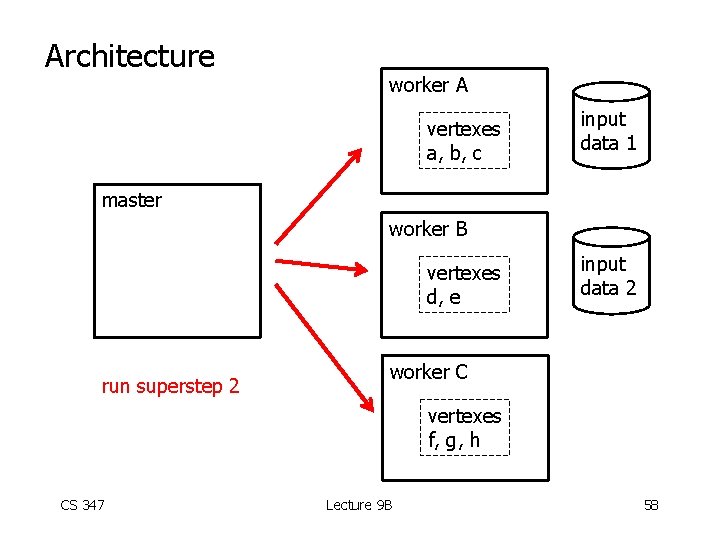

Architecture: run superstep 2.

Architecture: checkpoint.

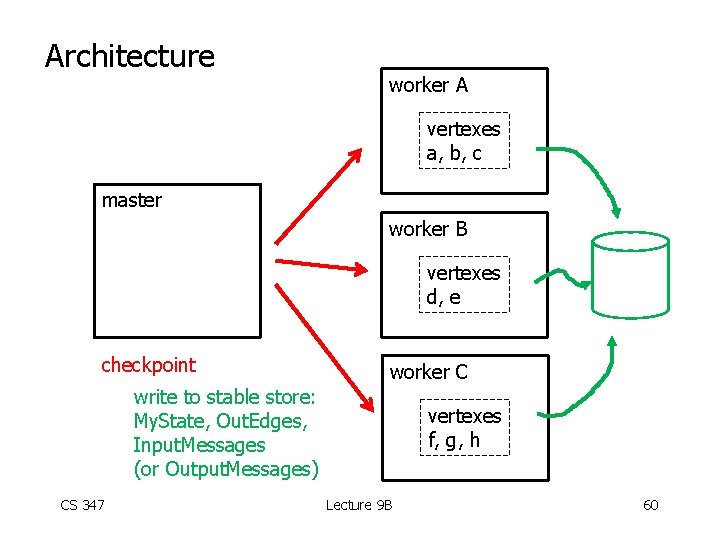

Architecture: checkpoint. Workers write to stable store: MyState, OutEdges, InputMessages (or OutputMessages).

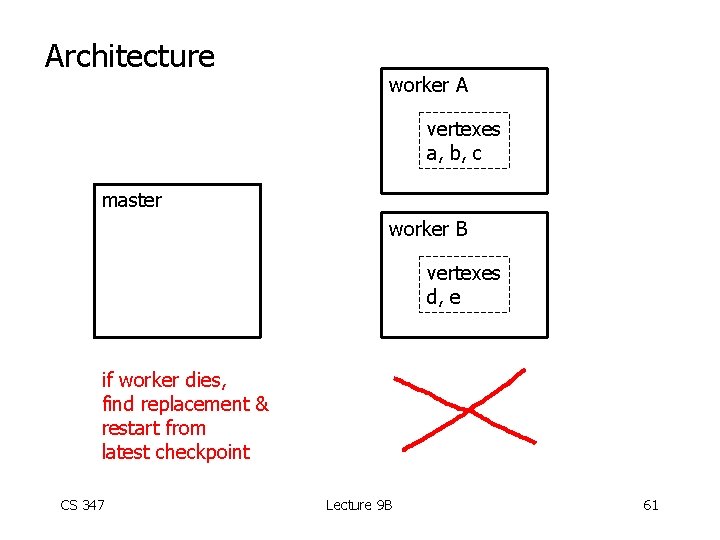

Architecture: if a worker dies, find a replacement & restart from the latest checkpoint.


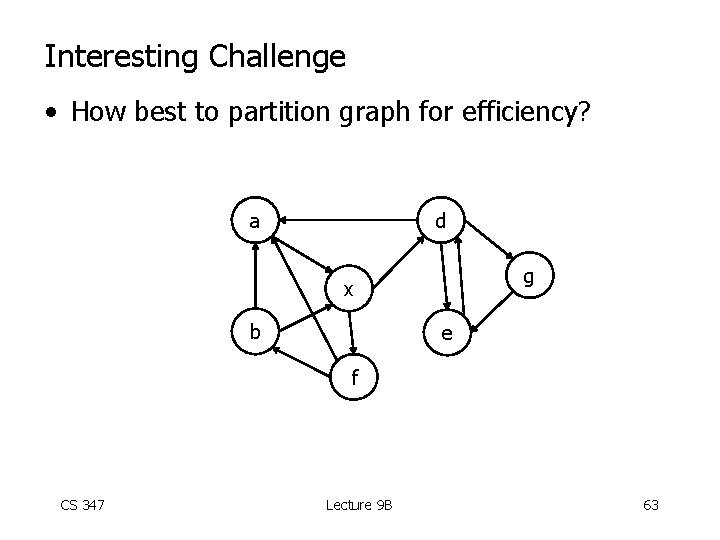

Interesting Challenge

- How best to partition the graph for efficiency? (figure: graph with nodes a, b, d, e, f, g, x)

CS 347: Parallel and Distributed Data Management, Notes X: BigTable, HBase, Cassandra (Hector Garcia-Molina)

Sources

- HBase: The Definitive Guide, Lars George, O’Reilly Publishers, 2011.
- Cassandra: The Definitive Guide, Eben Hewitt, O’Reilly Publishers, 2011.
- Bigtable: A Distributed Storage System for Structured Data, F. Chang et al., ACM Transactions on Computer Systems, Vol. 26, No. 2, June 2008.

Lots of Buzz Words!

- “Apache Cassandra is an open-source, distributed, decentralized, elastically scalable, highly available, fault-tolerant, tunably consistent, column-oriented database that bases its distribution design on Amazon’s Dynamo and its data model on Google’s Bigtable.”
- Clearly, it is buzz-word compliant!!

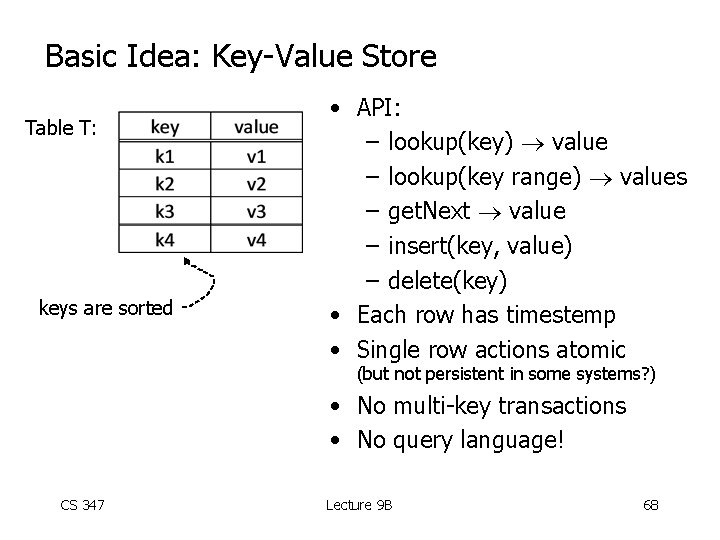


Basic Idea: Key-Value Store

Table T: keys are sorted

- API:
  - lookup(key) → value
  - lookup(key range) → values
  - getNext → value
  - insert(key, value)
  - delete(key)
- Each row has a timestamp
- Single-row actions are atomic (but not persistent in some systems?)
- No multi-key transactions
- No query language!
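A toy in-memory version of this API shows what the sorted keys buy. The class `SortedKV` and its method names are hypothetical and do not belong to BigTable, HBase, or Cassandra; `getNext` is left out, since it amounts to iterating over a range result.

```python
import bisect
import time

class SortedKV:
    def __init__(self):
        self._keys = []   # kept sorted so range lookups are cheap
        self._rows = {}   # key -> (timestamp, value)

    def insert(self, key, value):
        if key not in self._rows:
            bisect.insort(self._keys, key)
        self._rows[key] = (time.time(), value)  # each row carries a timestamp

    def lookup(self, key):
        row = self._rows.get(key)
        return None if row is None else row[1]

    def lookup_range(self, lo, hi):
        """Return (key, value) pairs with lo <= key <= hi, in key order."""
        i = bisect.bisect_left(self._keys, lo)
        j = bisect.bisect_right(self._keys, hi)
        return [(k, self._rows[k][1]) for k in self._keys[i:j]]

    def delete(self, key):
        if key in self._rows:
            del self._rows[key]
            self._keys.pop(bisect.bisect_left(self._keys, key))
```

Each call touches a single row, matching the single-row atomicity noted above; nothing here spans multiple keys.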

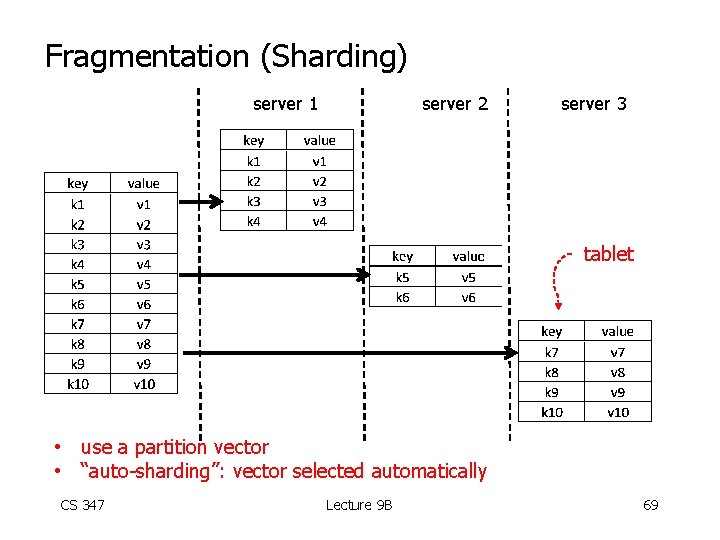

Fragmentation (Sharding) (figure: tablets spread over server 1, server 2, server 3)

- use a partition vector
- “auto-sharding”: vector selected automatically
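A partition vector is just a sorted list of split keys. The sketch below assumes string keys, one tablet per server, and a naive "auto-sharding" rule that takes evenly spaced keys from a sample; both function names are made up for illustration.

```python
import bisect

def server_for(key, partition_vector, servers):
    """partition_vector holds len(servers) - 1 sorted split keys;
    keys below the first split go to servers[0], and so on."""
    return servers[bisect.bisect_right(partition_vector, key)]

def choose_partition_vector(sample_keys, num_servers):
    """Naive auto-sharding: pick evenly spaced keys from a sorted sample."""
    s = sorted(sample_keys)
    return [s[len(s) * i // num_servers] for i in range(1, num_servers)]
```

For example, with the vector ["g", "p"] and three servers, "apple" maps to the first server, "melon" to the second, and "zebra" to the third.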

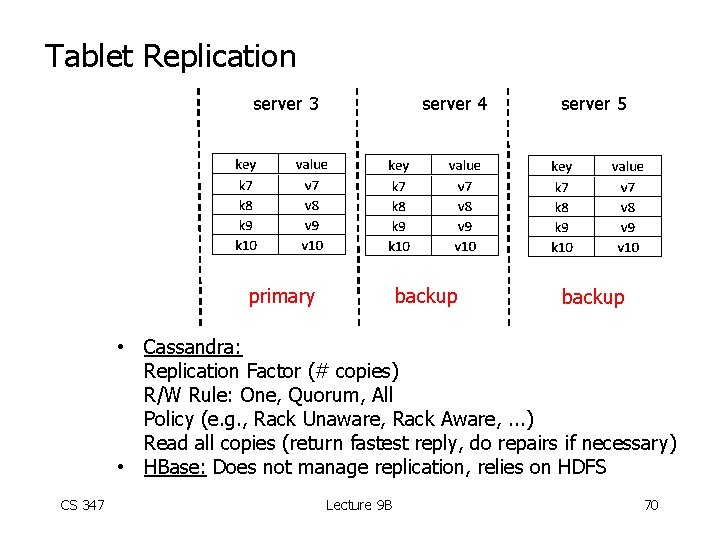

Tablet Replication (figure: server 3 holds the primary; server 4 and server 5 hold backups)

- Cassandra:
  - Replication Factor (# copies)
  - R/W Rule: One, Quorum, All
  - Policy (e.g., Rack Unaware, Rack Aware, ...)
  - Read all copies (return fastest reply, do repairs if necessary)
- HBase: does not manage replication, relies on HDFS
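The R/W rule boils down to simple arithmetic on the replication factor. The sketch below is not Cassandra's API: the function names are hypothetical, a quorum is taken to be a strict majority of the replicas, and the overlap test (read replicas + write replicas > replication factor) is the standard quorum-intersection argument rather than something stated on the slide.

```python
def required_acks(level, replication_factor):
    """Number of replicas that must answer for the given R/W rule."""
    if level == "ONE":
        return 1
    if level == "QUORUM":
        return replication_factor // 2 + 1  # strict majority
    if level == "ALL":
        return replication_factor
    raise ValueError(f"unknown level: {level}")

def read_overlaps_write(read_level, write_level, replication_factor):
    """True if every read set must share at least one replica with every write set."""
    r = required_acks(read_level, replication_factor)
    w = required_acks(write_level, replication_factor)
    return r + w > replication_factor
```

With three copies, Quorum reads plus Quorum writes always overlap in at least one replica, while One plus One does not, which is the "tunable" part of the consistency.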

Need a “directory”

- Maps (table name, key) to the server that stores the key and to its backup servers
- Can be implemented as a special table

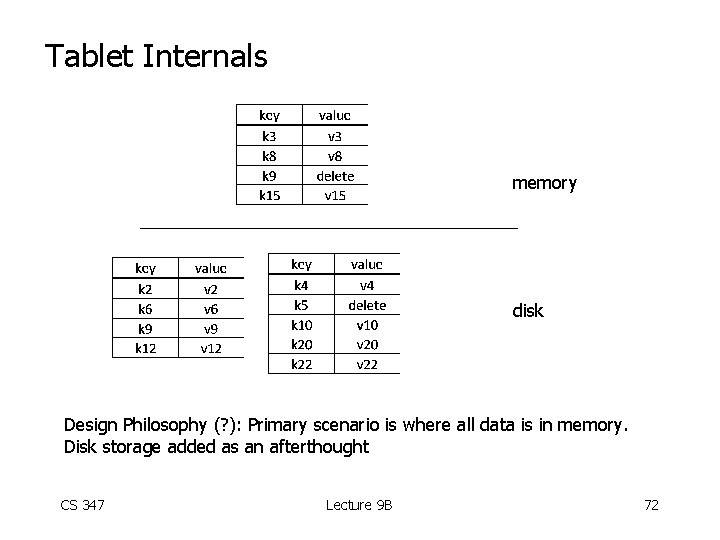

Tablet Internals (figure: memory, disk)

Design Philosophy (?): The primary scenario is where all data is in memory. Disk storage was added as an afterthought.

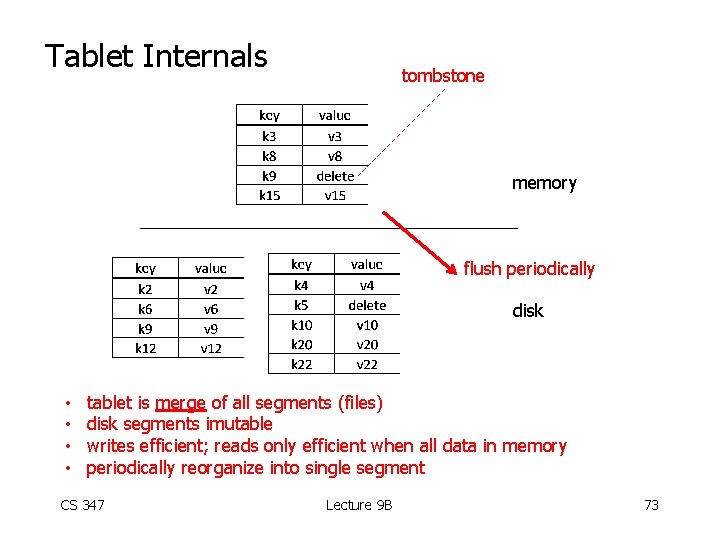

Tablet Internals (figure: memory with a tombstone entry; flush periodically to disk)

- tablet is the merge of all segments (files)
- disk segments are immutable
- writes are efficient; reads are only efficient when all data is in memory
- periodically reorganize into a single segment
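These bullets describe a log-structured layout, which can be mimicked in a few lines. This is a toy model under assumptions: the class `Tablet` is hypothetical, "disk" segments are ordinary dicts that are never modified after creation, and a flush is triggered by a size threshold instead of a timer.

```python
TOMBSTONE = object()  # marker stored in place of a deleted value

class Tablet:
    def __init__(self, flush_threshold=2):
        self.memtable = {}   # in-memory writes
        self.segments = []   # "disk" segments, oldest first, never modified
        self.flush_threshold = flush_threshold

    def put(self, key, value):
        self.memtable[key] = value
        if len(self.memtable) >= self.flush_threshold:
            self.flush()

    def delete(self, key):
        self.put(key, TOMBSTONE)  # a delete is just another write

    def flush(self):
        if self.memtable:
            self.segments.append(dict(sorted(self.memtable.items())))
            self.memtable = {}

    def get(self, key):
        # Newest data wins: memory first, then segments from newest to oldest.
        for layer in [self.memtable] + self.segments[::-1]:
            if key in layer:
                value = layer[key]
                return None if value is TOMBSTONE else value
        return None

    def compact(self):
        """Reorganize all segments into one, dropping deleted rows."""
        merged = {}
        for seg in self.segments:
            merged.update(seg)
        live = {k: v for k, v in sorted(merged.items()) if v is not TOMBSTONE}
        self.segments = [live]
```

A write only touches the memtable, while a read may have to check every segment, which is why reads are cheap only when the data is still in memory or after compaction.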

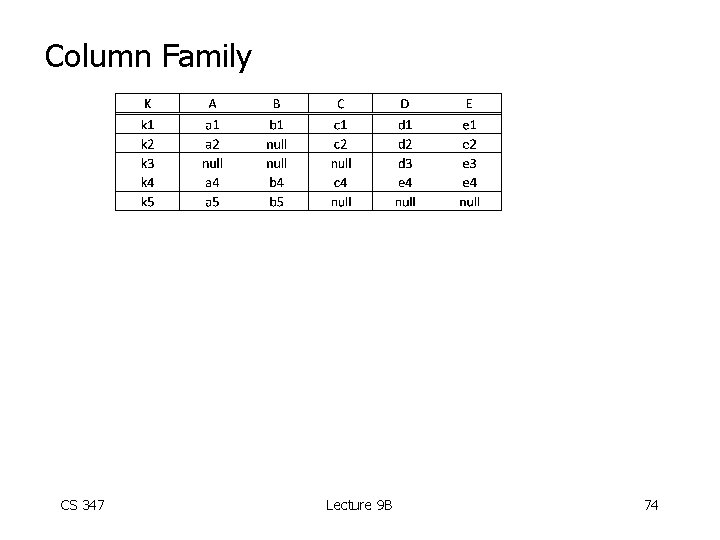

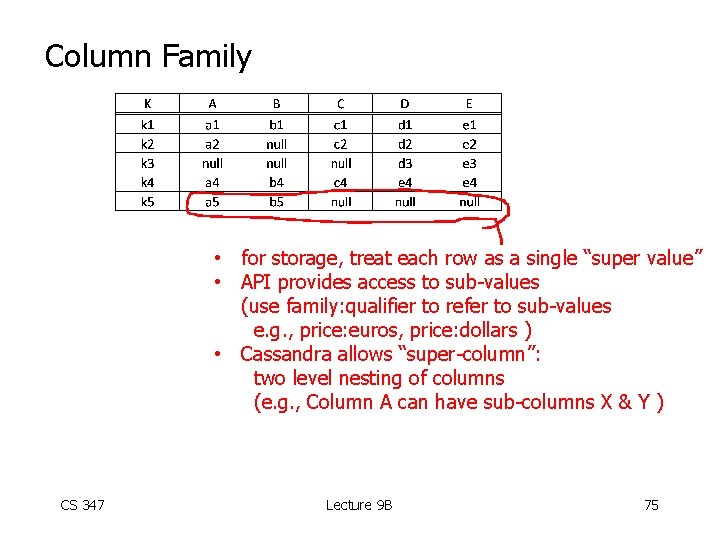


Column Family

- for storage, treat each row as a single “super value”
- API provides access to sub-values (use family:qualifier to refer to sub-values, e.g., price:euros, price:dollars)
- Cassandra allows “super-column”: two-level nesting of columns (e.g., Column A can have sub-columns X & Y)

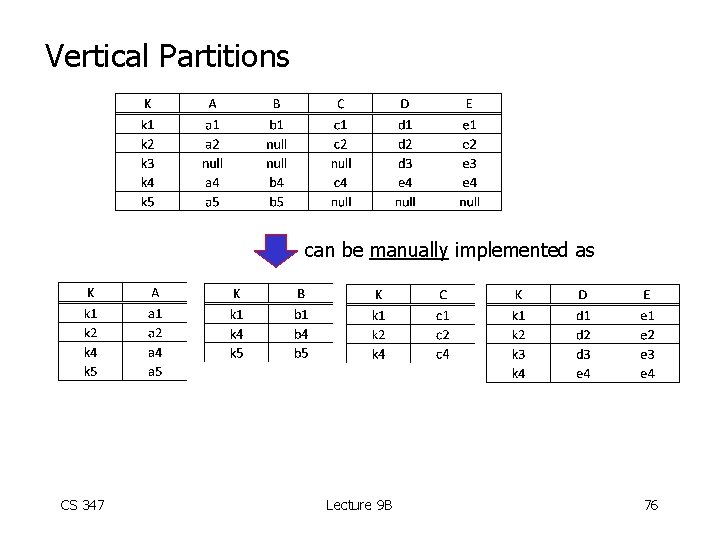

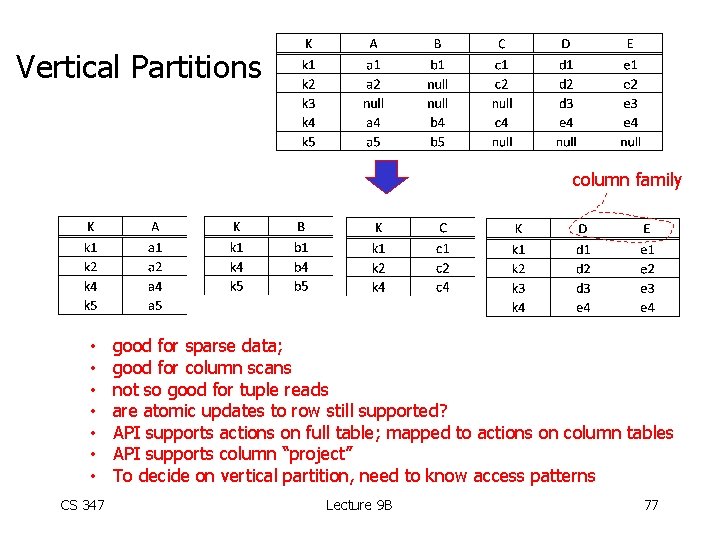

Vertical Partitions: can be manually implemented as (shown in figure)

Vertical Partitions (figure label: column family)

- good for sparse data
- good for column scans
- not so good for tuple reads
- are atomic updates to a row still supported?
- API supports actions on the full table; mapped to actions on column tables
- API supports column “project”
- To decide on a vertical partition, need to know access patterns

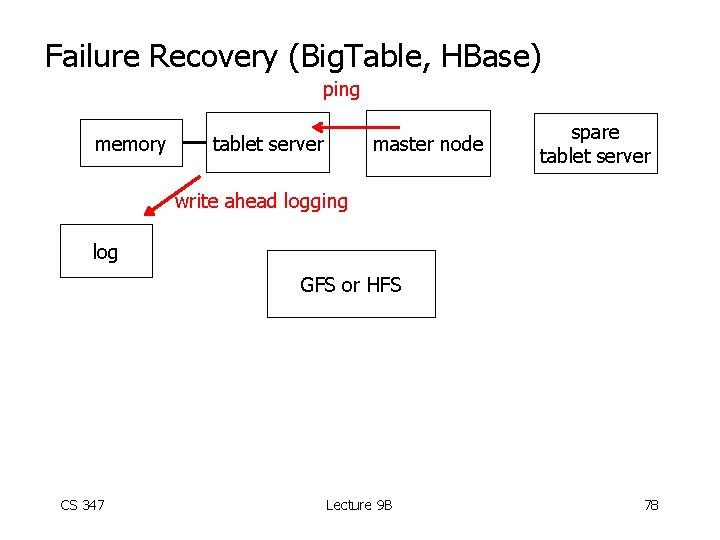

Failure Recovery (BigTable, HBase) (figure: the master node pings the tablet server; a spare tablet server stands by; the tablet server keeps data in memory and does write-ahead logging to a log stored in GFS or HDFS)

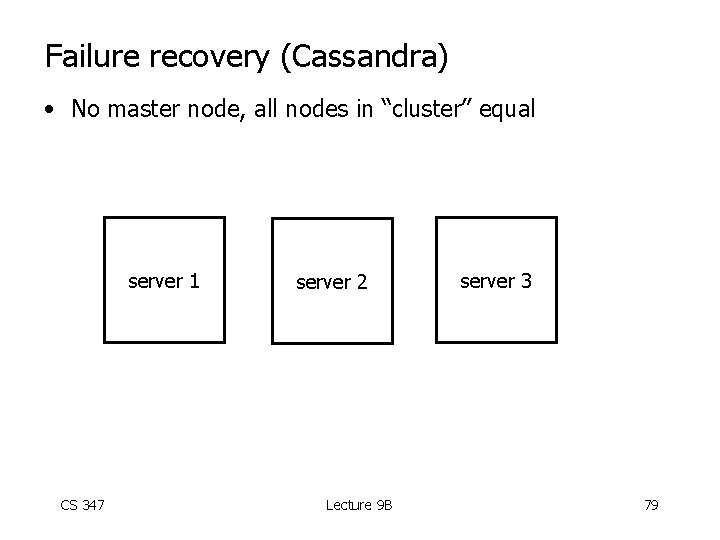


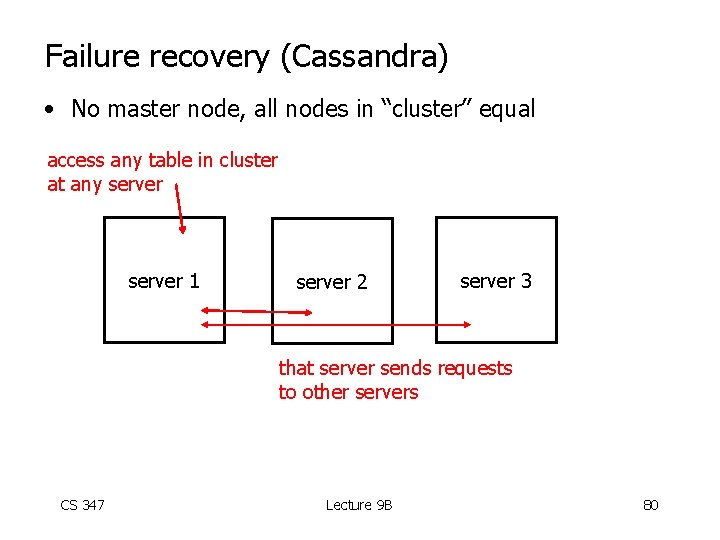

Failure recovery (Cassandra) (figure: server 1, server 2, server 3)

- No master node; all nodes in the “cluster” are equal
- Access any table in the cluster at any server; that server sends requests to other servers

CS 347: Parallel and Distributed Data Management, Notes X: MemCacheD (Hector Garcia-Molina)

MemCacheD

- General-purpose distributed memory caching system
- Open source

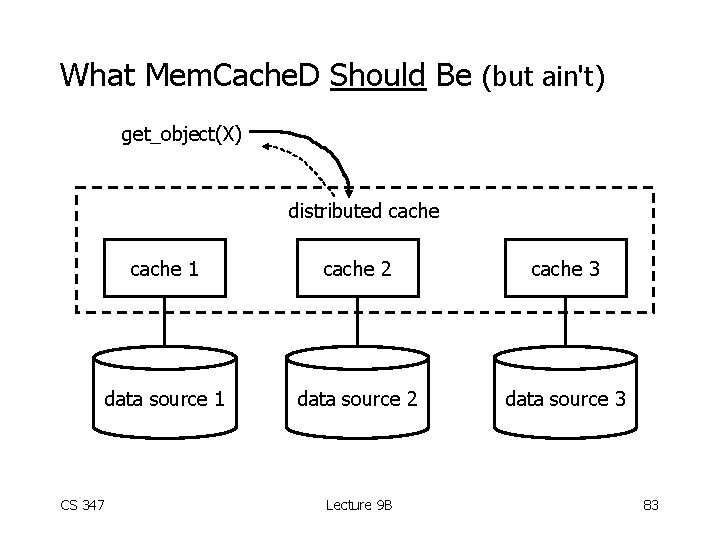

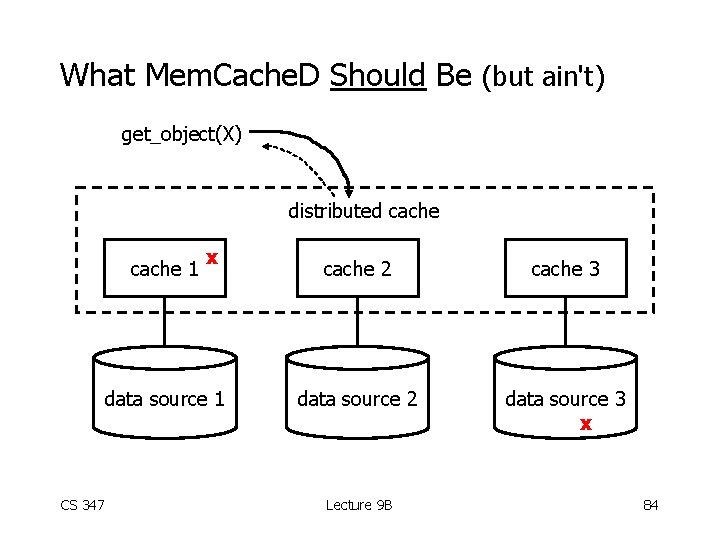


What MemCacheD Should Be (but ain't) (figure: a client calls get_object(X) on a distributed cache made of cache 1, cache 2, cache 3, which sits in front of data source 1, data source 2, data source 3; x lives in data source 3 and a copy is held in cache 1)

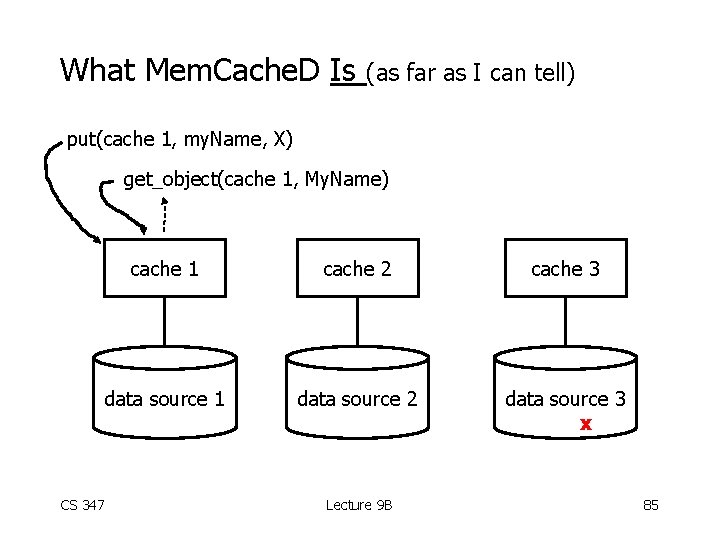

What MemCacheD Is (as far as I can tell): put(cache 1, MyName, X); get_object(cache 1, MyName) (figure: cache 1, cache 2, cache 3; data source 1, data source 2, data source 3, with x in data source 3)

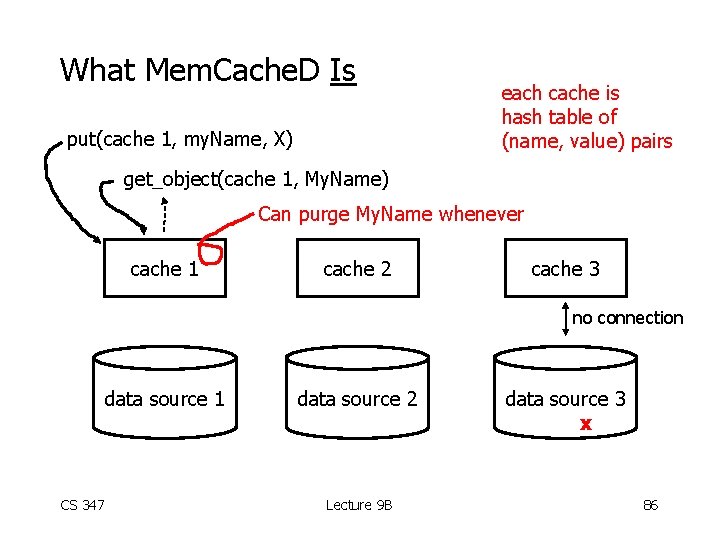

What MemCacheD Is

- put(cache 1, MyName, X); get_object(cache 1, MyName)
- each cache is a hash table of (name, value) pairs
- a cache can purge MyName whenever it wants
- no connection between the caches and the data sources (data source 1, data source 2, data source 3, with x in data source 3)
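Because the caches know nothing about the data sources, the client has to do the cache-miss handling itself. The sketch below illustrates that pattern and is not the memcached client API: `Cache`, `pick_cache`, and `get_object` are hypothetical, and choosing the cache by hashing the name is an assumption (on the slide the client names the cache explicitly).

```python
import zlib

class Cache:
    """Stand-in for one cache server: a hash table of (name, value) pairs."""
    def __init__(self):
        self.table = {}

    def put(self, name, value):
        self.table[name] = value

    def get(self, name):
        return self.table.get(name)

    def purge(self, name):
        # The cache may drop an entry at any time.
        self.table.pop(name, None)

def pick_cache(caches, name):
    return caches[zlib.crc32(name.encode("utf-8")) % len(caches)]

def get_object(caches, name, load_from_source):
    cache = pick_cache(caches, name)
    value = cache.get(name)
    if value is None:
        # Miss: the client, not the cache, goes to the data source.
        value = load_from_source(name)
        cache.put(name, value)
    return value
```

If the cache purges an entry, the next get_object simply reloads it from the source, so correctness never depends on an entry still being cached.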

CS 347: Parallel and Distributed Data Management, Notes X: ZooKeeper (Hector Garcia-Molina)

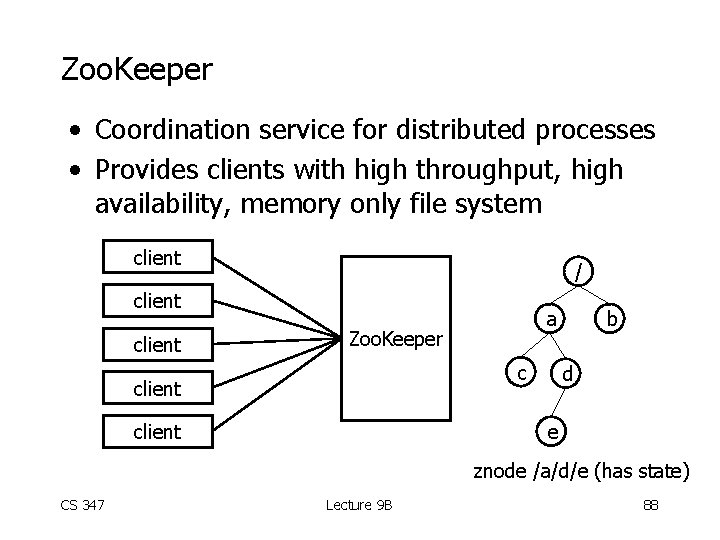

ZooKeeper

- Coordination service for distributed processes
- Provides clients with a high-throughput, high-availability, memory-only file system

(Figure: clients connect to ZooKeeper, which holds a tree rooted at / with nodes a, b, c, d, e; for example, znode /a/d/e, which has state.)

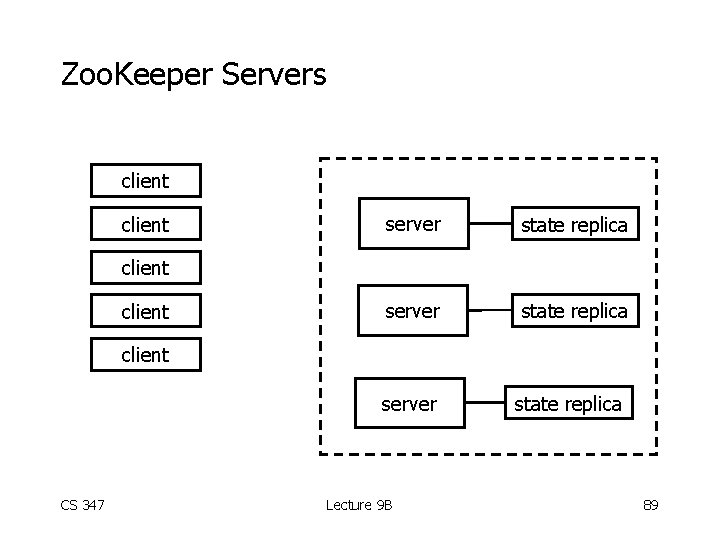

ZooKeeper Servers (figure: each client connects to one server; each server holds a state replica)

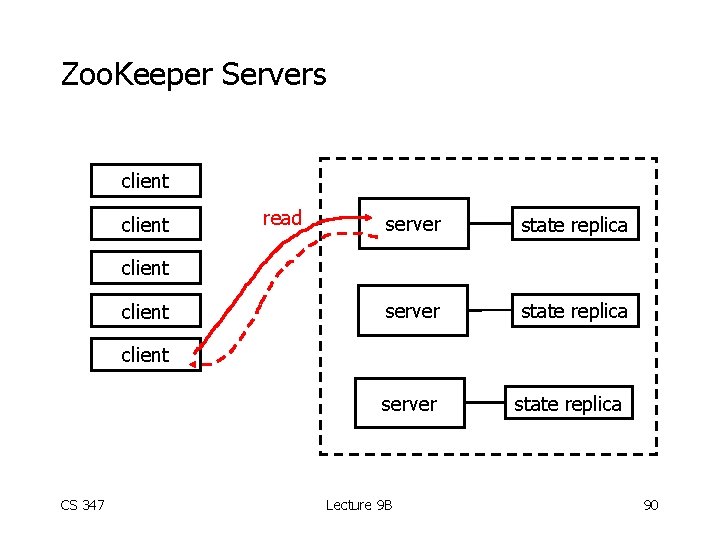

ZooKeeper Servers: a client read goes to the client's own server and its state replica.

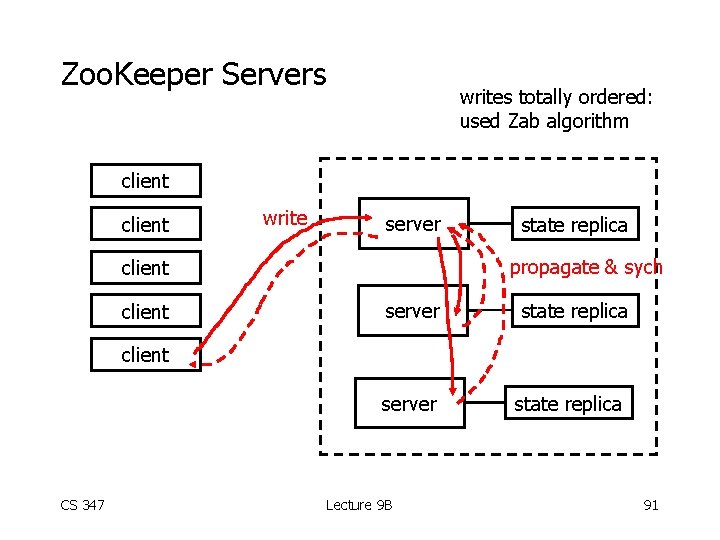

ZooKeeper Servers: writes are totally ordered, using the Zab algorithm. A client write goes to the client's server, which propagates & syncs it to the other servers' state replicas.

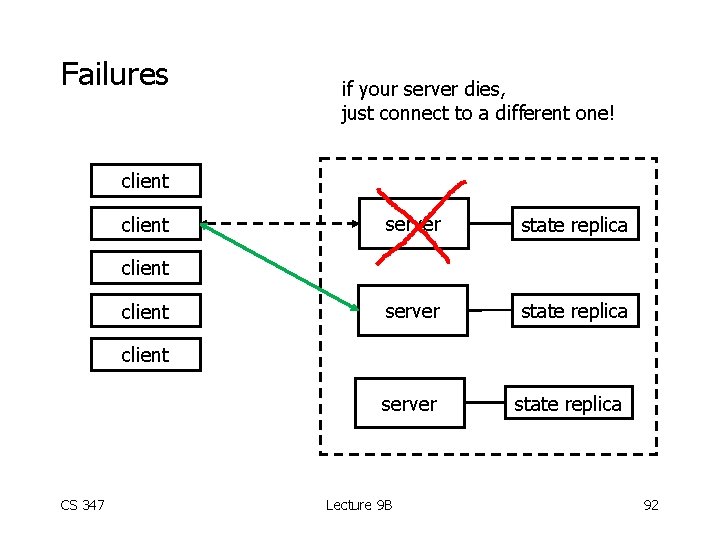

Failures: if your server dies, just connect to a different one!

ZooKeeper Notes

- Differences with a file system:
  - all nodes can store data
  - storage size limited
- API: insert node, read children, delete node, ...
- Can set triggers on nodes
- Clients and servers must know all servers
- ZooKeeper works as long as a majority of servers are available
- Writes totally ordered; reads ordered w.r.t. writes

CS 347: Parallel and Distributed Data Management, Notes X: Kestrel (Hector Garcia-Molina)

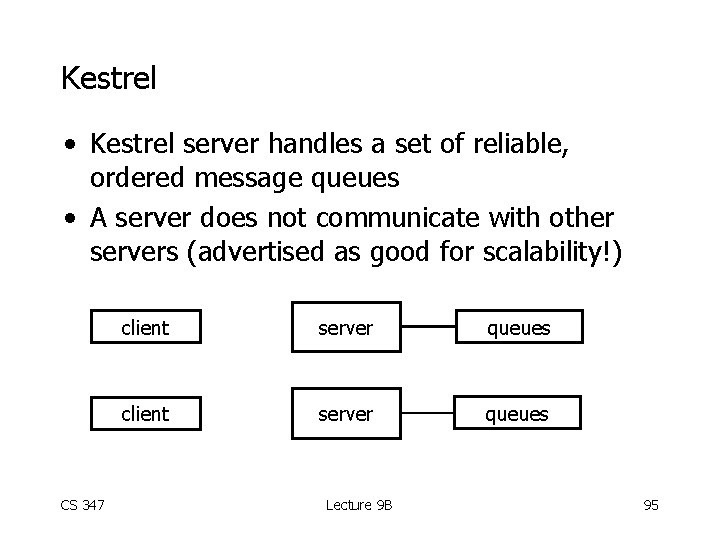

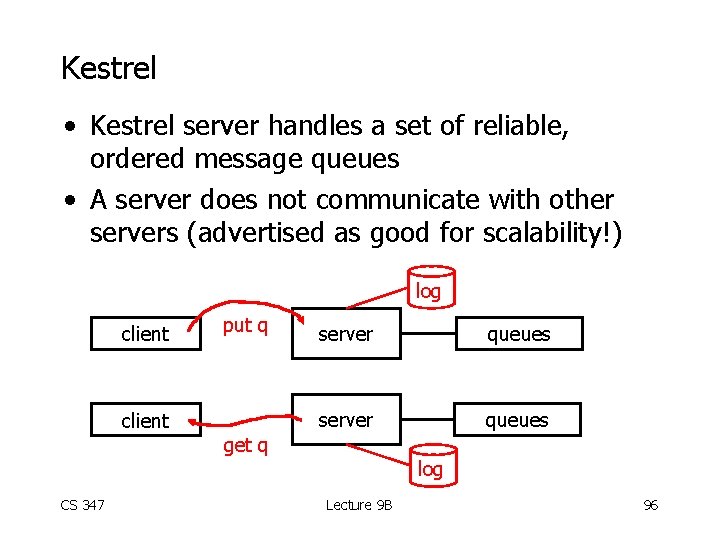


Kestrel

- A Kestrel server handles a set of reliable, ordered message queues
- A server does not communicate with other servers (advertised as good for scalability!)

(Figure: clients put to and get from queue q on a server; the queues are backed by a log.)
