CS345A Data Mining: MapReduce

- Slides: 13

This presentation has been altered.

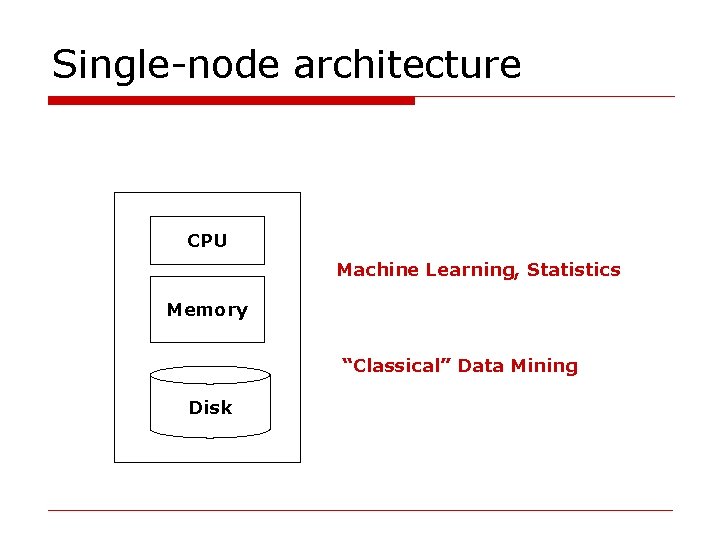

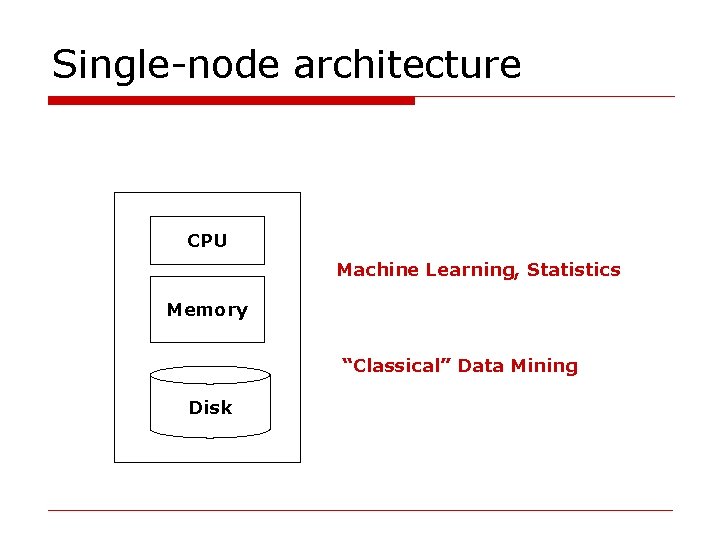

Single-node architecture (diagram: CPU, Memory, Disk; labels: Machine Learning, Statistics; “Classical” Data Mining)

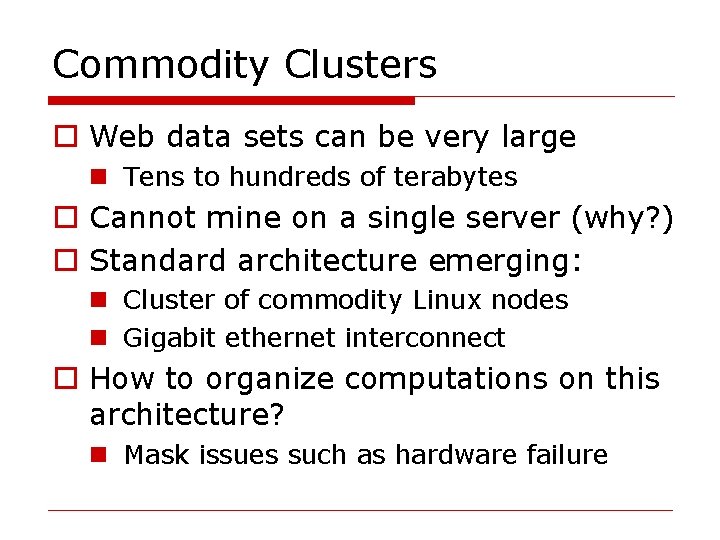

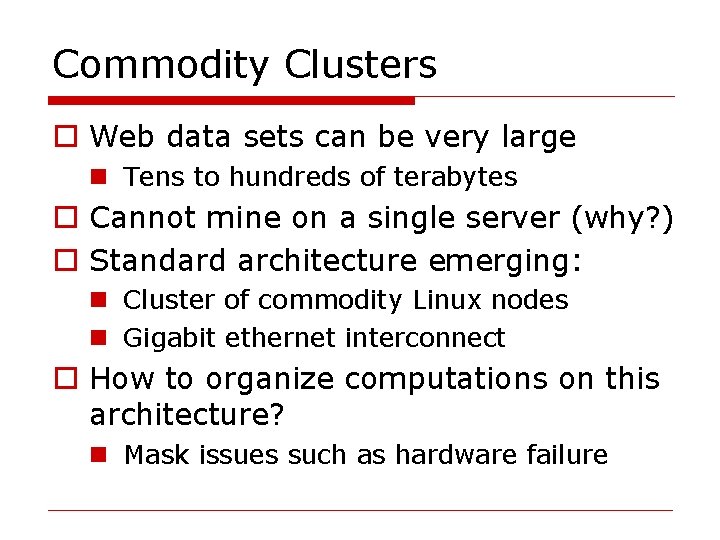

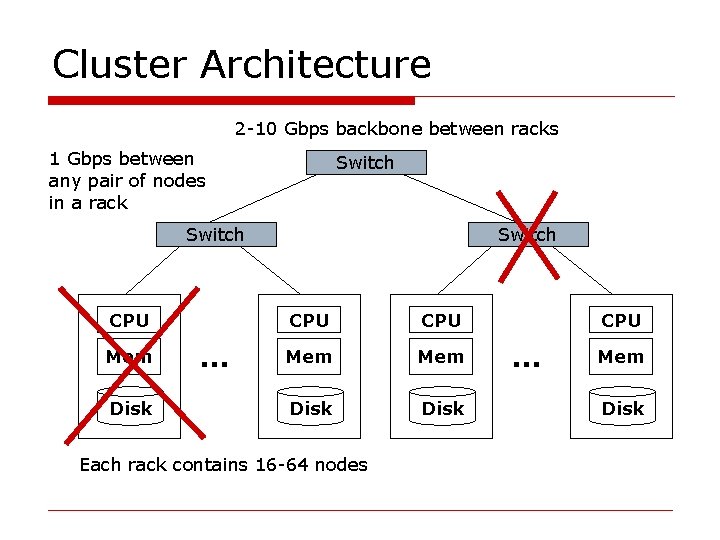

Commodity Clusters

- Web data sets can be very large
  - Tens to hundreds of terabytes
- Cannot mine on a single server (why?)
- Standard architecture emerging:
  - Cluster of commodity Linux nodes
  - Gigabit Ethernet interconnect
- How to organize computations on this architecture?
  - Mask issues such as hardware failure
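One way to approach the "why?" is a back-of-the-envelope scan time. The sketch below assumes a sustained sequential read rate of 100 MB/s per node, which is an illustrative figure and not from the slides, and an idealized cluster in which every node reads its share in parallel. The 1000-node cluster is likewise hypothetical.

```python
# Back-of-the-envelope scan time. The disk throughput is an assumed figure.
TB = 10**12  # bytes in a terabyte (decimal)
MB = 10**6   # bytes in a megabyte (decimal)

def scan_hours(dataset_bytes, nodes=1, bytes_per_sec_per_node=100 * MB):
    """Hours to read the data set once, assuming perfectly parallel reads."""
    seconds = dataset_bytes / (nodes * bytes_per_sec_per_node)
    return seconds / 3600

single = scan_hours(100 * TB)               # one server
cluster = scan_hours(100 * TB, nodes=1000)  # hypothetical 1000-node cluster
print(f"single server: {single:.0f} h, 1000 nodes: {cluster:.2f} h")
```

Under these assumptions a single pass over 100 TB takes more than eleven days on one server, before any computation is done on the data.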

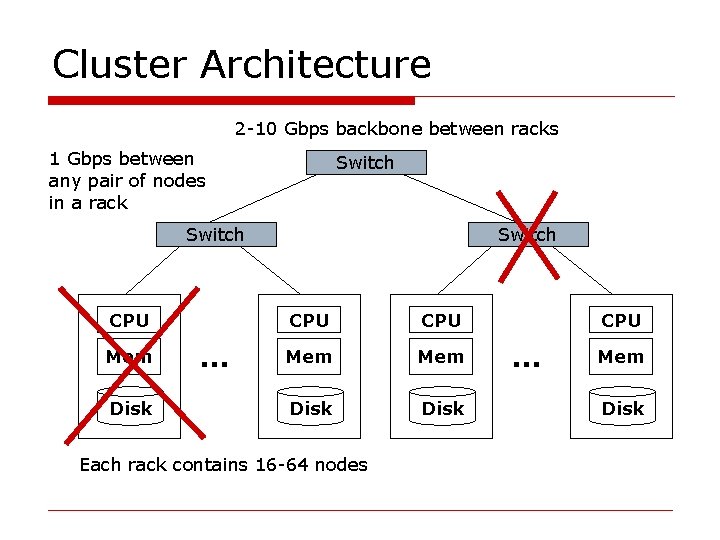

Cluster Architecture

- 2-10 Gbps backbone between racks
- 1 Gbps between any pair of nodes in a rack
- Each rack contains 16-64 nodes
- (Diagram: each node has a CPU, memory, and disk; nodes in a rack connect to a switch.)

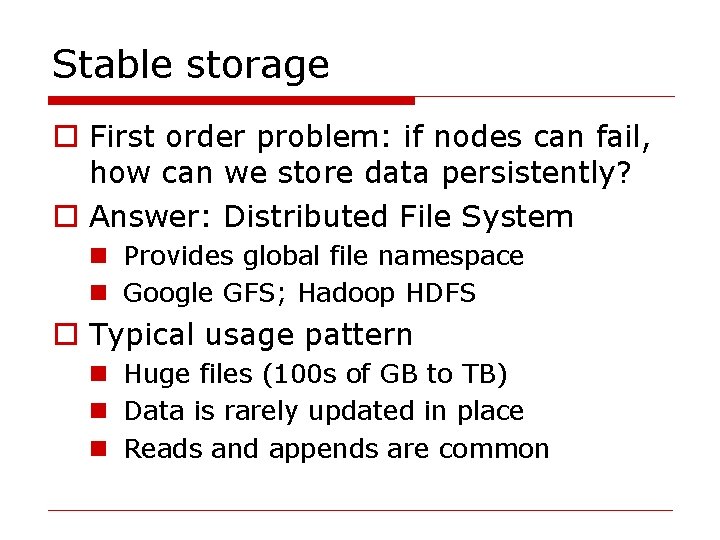

Stable storage

- First-order problem: if nodes can fail, how can we store data persistently?
- Answer: Distributed File System
  - Provides global file namespace
  - Google GFS; Hadoop HDFS
- Typical usage pattern
  - Huge files (100s of GB to TB)
  - Data is rarely updated in place
  - Reads and appends are common

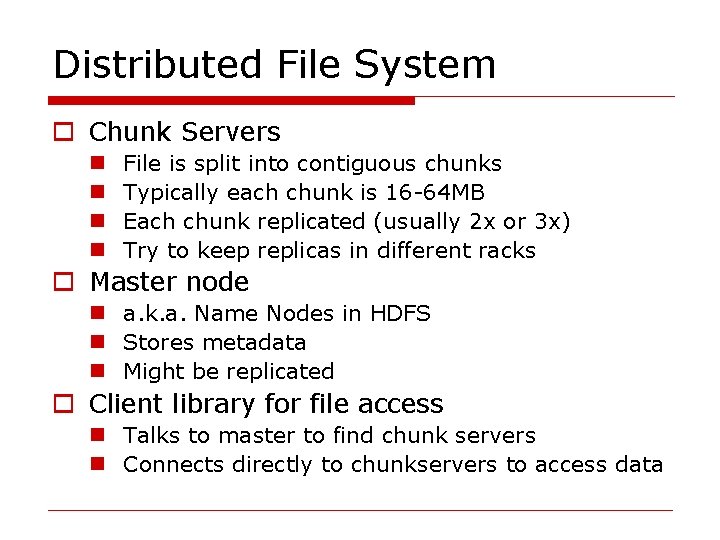

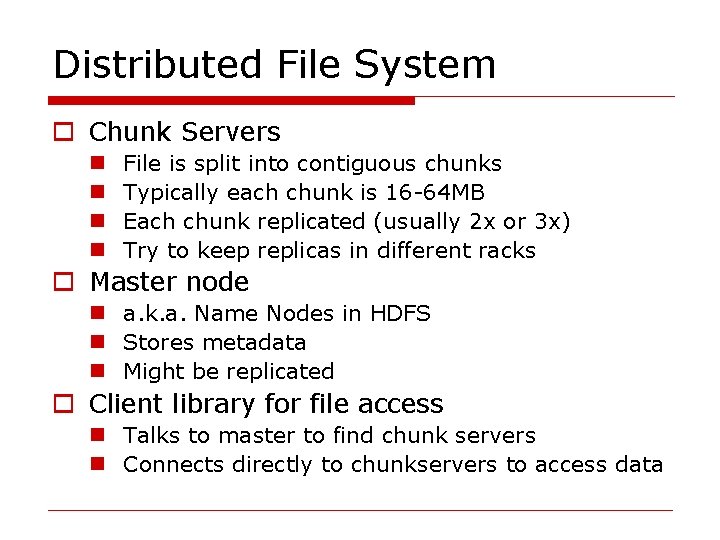

Distributed File System

- Chunk servers
  - File is split into contiguous chunks
  - Typically each chunk is 16-64 MB
  - Each chunk replicated (usually 2x or 3x)
  - Try to keep replicas in different racks
- Master node
  - a.k.a. NameNode in HDFS
  - Stores metadata
  - Might be replicated
- Client library for file access
  - Talks to master to find chunk servers
  - Connects directly to chunk servers to access data
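The chunking and replica placement described on this slide can be sketched as follows. This is a minimal illustration, not how GFS or HDFS actually place replicas: the round-robin policy, the function names, and the three-rack cluster are all assumptions made for the example.

```python
CHUNK_SIZE = 64 * 2**20  # 64 MB, the upper end of the range on the slide

def split_into_chunks(file_size, chunk_size=CHUNK_SIZE):
    """Return (offset, length) pairs for contiguous chunks covering the file."""
    return [(off, min(chunk_size, file_size - off))
            for off in range(0, file_size, chunk_size)]

def place_replicas(num_chunks, servers_by_rack, replication=3):
    """Assign each chunk to `replication` chunk servers, each in a different rack.

    Round-robin placement is an assumption for illustration; a real system
    would also weigh free space and load.
    """
    racks = sorted(servers_by_rack)
    if replication > len(racks):
        raise ValueError("need at least one rack per replica")
    placement = {}
    for chunk in range(num_chunks):
        chosen = []
        for i in range(replication):
            rack = racks[(chunk + i) % len(racks)]   # a different rack per replica
            servers = servers_by_rack[rack]
            chosen.append(servers[chunk % len(servers)])
        placement[chunk] = chosen
    return placement

# Hypothetical cluster: three racks with two chunk servers each.
cluster = {"rack0": ["s00", "s01"], "rack1": ["s10", "s11"], "rack2": ["s20", "s21"]}
chunks = split_into_chunks(200 * 2**20)          # a 200 MB file
metadata = place_replicas(len(chunks), cluster)  # the kind of mapping a master would store
```

A client would ask the master for an entry of `metadata` and then read the chunk directly from one of the listed chunk servers.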

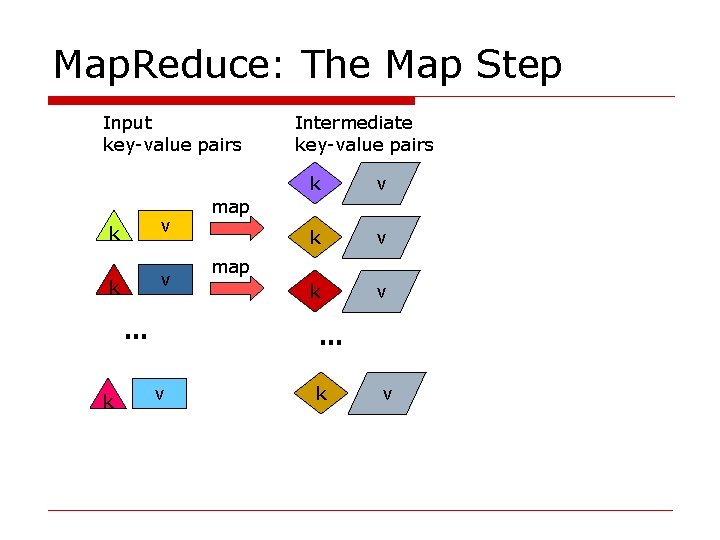

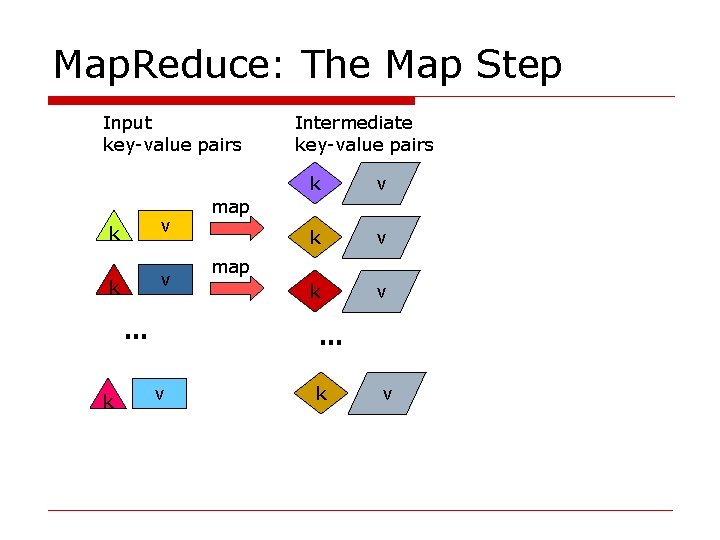

MapReduce: The Map Step (diagram: input key-value pairs → map → intermediate key-value pairs)

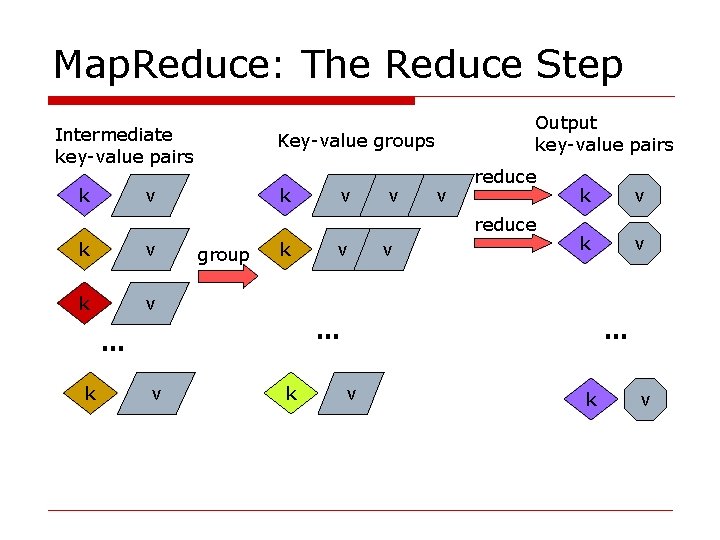

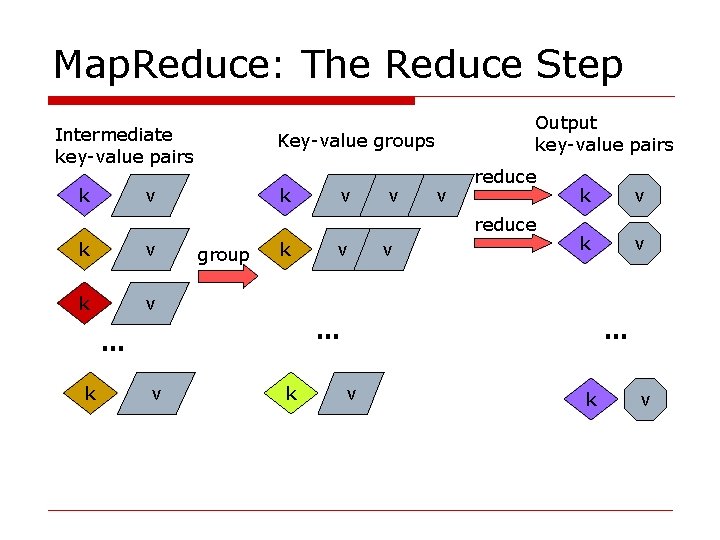

MapReduce: The Reduce Step (diagram: intermediate key-value pairs → group → key-value groups → reduce → output key-value pairs)

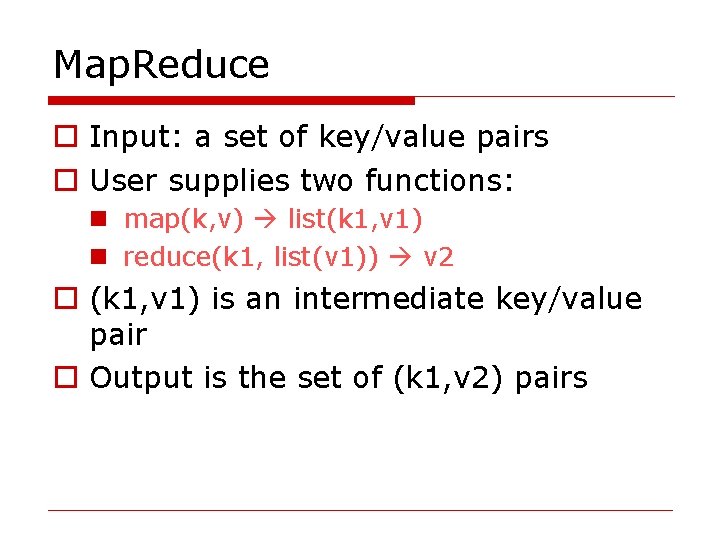

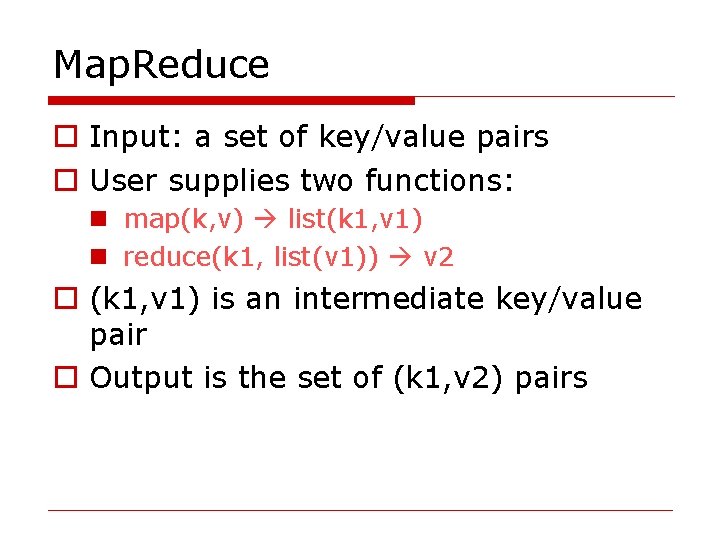

MapReduce

- Input: a set of key/value pairs
- User supplies two functions:
  - map(k, v) → list(k1, v1)
  - reduce(k1, list(v1)) → v2
- (k1, v1) is an intermediate key/value pair
- Output is the set of (k1, v2) pairs
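To make the two signatures concrete, here is a minimal single-process sketch using word count as the illustrative problem. Word count is chosen for the example and is not taken from these slides; the names `map_fn`, `reduce_fn`, and `map_reduce` are likewise hypothetical. A real framework would run the map and reduce calls on many machines.

```python
from collections import defaultdict

def map_fn(doc_name, text):
    """map(k, v) -> list(k1, v1): emit (word, 1) for every word."""
    return [(word, 1) for word in text.lower().split()]

def reduce_fn(word, counts):
    """reduce(k1, list(v1)) -> v2: total occurrences of one word."""
    return sum(counts)

def map_reduce(inputs, map_fn, reduce_fn):
    """Run map, group by intermediate key, then reduce, all in one process."""
    groups = defaultdict(list)
    for k, v in inputs:
        for k1, v1 in map_fn(k, v):
            groups[k1].append(v1)   # the "group" step: collect values per key
    return {k1: reduce_fn(k1, values) for k1, values in groups.items()}

docs = [("d1", "the quick brown fox"), ("d2", "the lazy dog and the fox")]
word_counts = map_reduce(docs, map_fn, reduce_fn)
print(word_counts["the"], word_counts["fox"])  # 3 2
```

Here k is a document name, v is its text, k1 is a word, v1 is the constant 1, and v2 is the word's total count.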

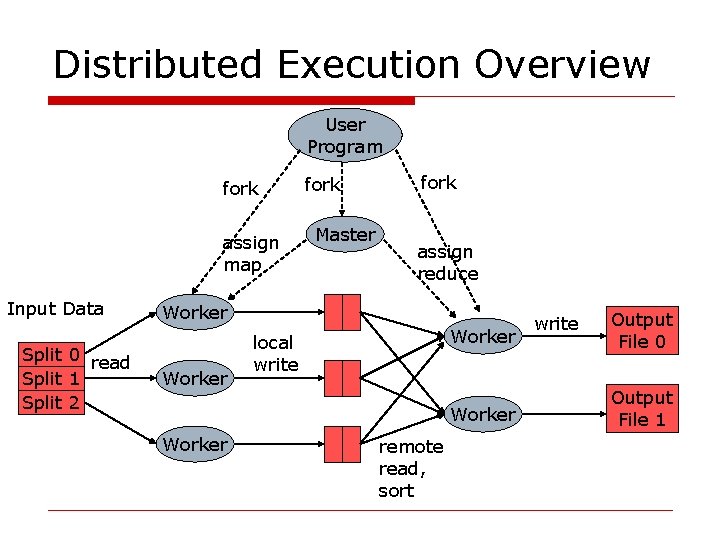

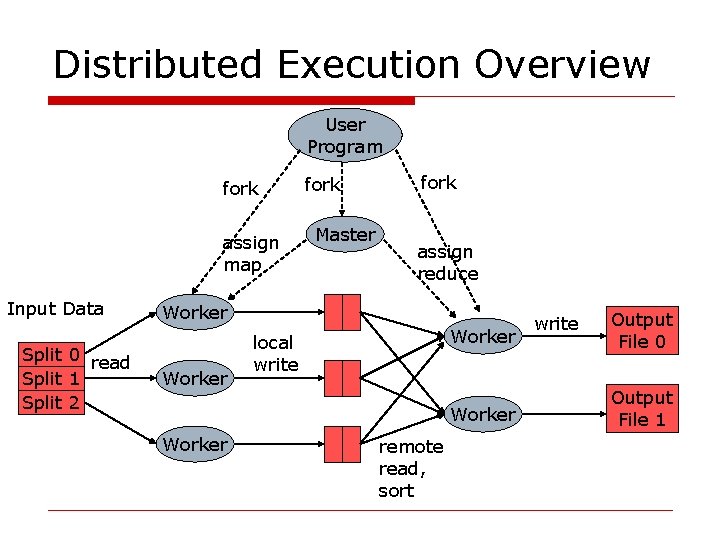

Distributed Execution Overview (diagram: the user program forks a master and workers; the master assigns map and reduce tasks; map workers read input splits (Split 0, Split 1, Split 2) and write locally; reduce workers do a remote read and sort, then write Output File 0 and Output File 1)

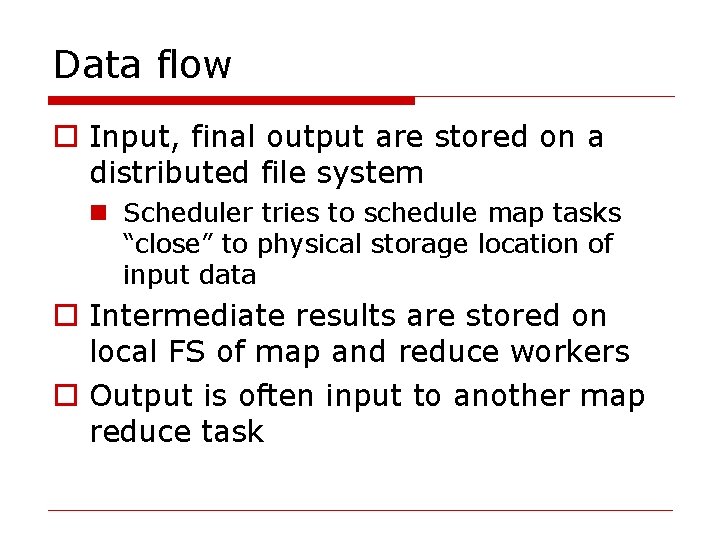

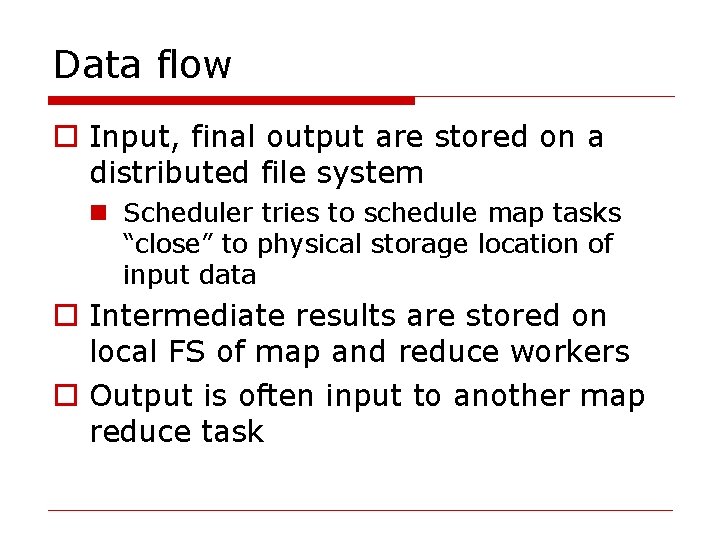

Data flow

- Input, final output are stored on a distributed file system
  - Scheduler tries to schedule map tasks “close” to physical storage location of input data
- Intermediate results are stored on local FS of map and reduce workers
- Output is often input to another MapReduce task
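One plausible reading of scheduling map tasks "close" to the data is a three-level preference: same node, then same rack, then anywhere. The slide does not spell out the policy, so the ordering below and the function name `pick_worker` are assumptions for illustration.

```python
def pick_worker(replica_locations, idle_workers, rack_of):
    """Choose an idle worker for a map task, preferring data locality.

    replica_locations: nodes holding a replica of the task's input split
    idle_workers: nodes currently free to run a task
    rack_of: dict mapping node name -> rack name
    """
    # 1. Best case: an idle worker that already stores the input split.
    for worker in idle_workers:
        if worker in replica_locations:
            return worker
    # 2. Next best: an idle worker in the same rack as some replica.
    replica_racks = {rack_of[node] for node in replica_locations}
    for worker in idle_workers:
        if rack_of[worker] in replica_racks:
            return worker
    # 3. Otherwise any idle worker; the split is then read across racks.
    return idle_workers[0] if idle_workers else None

# Hypothetical nodes and racks.
rack_of = {"a1": "rackA", "a2": "rackA", "b1": "rackB", "c1": "rackC"}
replicas = ["a1", "b1"]
print(pick_worker(replicas, ["c1", "a2"], rack_of))  # a2
```

In the usage line no idle worker holds the split, so the sketch falls back to `a2`, which shares a rack with the replica on `a1`.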

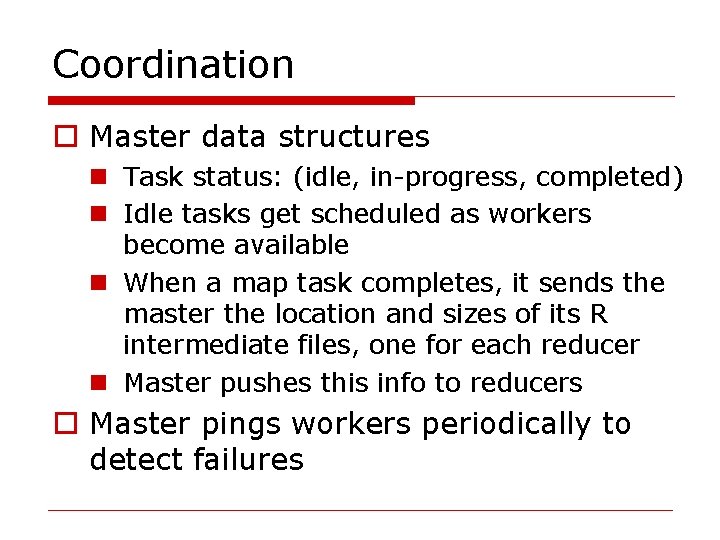

Coordination

- Master data structures
  - Task status: (idle, in-progress, completed)
  - Idle tasks get scheduled as workers become available
  - When a map task completes, it sends the master the location and sizes of its R intermediate files, one for each reducer
  - Master pushes this info to reducers
- Master pings workers periodically to detect failures
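The master's bookkeeping can be sketched as a small state table. The class and field names below, the first-idle scheduling order, and the file paths are hypothetical; the slide only specifies the three task states and that a finished map task reports R intermediate files.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Task:
    kind: str                     # "map" or "reduce"
    state: str = "idle"           # "idle", "in-progress", or "completed"
    worker: Optional[str] = None  # worker the task is assigned to, if any

@dataclass
class Master:
    tasks: dict = field(default_factory=dict)         # task id -> Task
    intermediate: dict = field(default_factory=dict)  # map task id -> R (location, size) pairs

    def assign(self, worker):
        """Give the first idle task to a worker that became available."""
        for task_id, task in self.tasks.items():
            if task.state == "idle":
                task.state, task.worker = "in-progress", worker
                return task_id
        return None

    def map_completed(self, task_id, files):
        """Record the R intermediate files reported by a finished map task."""
        self.tasks[task_id].state = "completed"
        self.intermediate[task_id] = files
        # Entry r of `files` is the info the master would push to reducer r.
        return {r: f for r, f in enumerate(files)}

master = Master(tasks={"m0": Task("map"), "r0": Task("reduce"), "r1": Task("reduce")})
first = master.assign("w1")  # w1 picks up map task m0
pushed = master.map_completed(first, [("w1:/tmp/m0-r0", 120), ("w1:/tmp/m0-r1", 95)])
```

With R = 2 reducers, `pushed` maps reducer 0 and reducer 1 to the location and size of the file each one should fetch.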

Failures

- Map worker failure
  - Map tasks completed or in-progress at worker are reset to idle
  - Reduce workers are notified when task is rescheduled on another worker
- Reduce worker failure
  - Only in-progress tasks are reset to idle
- Master failure
  - MapReduce task is aborted and client is notified
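The worker-failure rules above can be sketched as a single reset pass over the master's task table. The dictionary layout and the function name `handle_worker_failure` are hypothetical. The asymmetry between map and reduce tasks follows from the data-flow slide: intermediate results sit on the worker's local file system, while final output is in the distributed file system.

```python
def handle_worker_failure(tasks, failed_worker):
    """Reset tasks on a failed worker; return ids of map tasks to re-run.

    tasks: dict of task id -> {"kind", "state", "worker"} (hypothetical layout)
    """
    rescheduled_maps = []
    for task_id, t in tasks.items():
        if t["worker"] != failed_worker:
            continue
        if t["kind"] == "map" and t["state"] in ("in-progress", "completed"):
            # Map output is on the failed worker's local disk, so even
            # completed map tasks must run again.
            t["state"], t["worker"] = "idle", None
            rescheduled_maps.append(task_id)
        elif t["kind"] == "reduce" and t["state"] == "in-progress":
            # Completed reduce output is already in the distributed file
            # system, so only in-progress reduce tasks are reset.
            t["state"], t["worker"] = "idle", None
    return rescheduled_maps  # reduce workers would be notified about these

tasks = {
    "m0": {"kind": "map", "state": "completed", "worker": "w1"},
    "m1": {"kind": "map", "state": "in-progress", "worker": "w2"},
    "r0": {"kind": "reduce", "state": "completed", "worker": "w1"},
    "r1": {"kind": "reduce", "state": "in-progress", "worker": "w1"},
}
to_rerun = handle_worker_failure(tasks, "w1")
print(to_rerun)  # ['m0']
```

After the call, `m0` and `r1` are idle again, `r0` stays completed, and `m1` on the healthy worker `w2` is untouched.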