CS 294-1 Deeply Embedded Networks: TinyOS

CS 294-1 Deeply Embedded Networks: TinyOS
Sept 4, 2003
David Culler, Fall 2003
University of California, Berkeley
9/4/03 cs294-1 f03

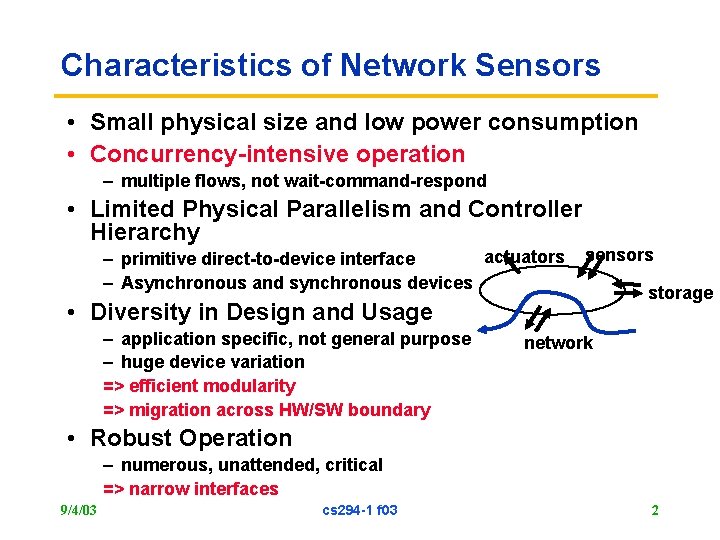

Characteristics of Network Sensors
• Small physical size and low power consumption
• Concurrency-intensive operation
  – multiple flows, not wait-command-respond
• Limited physical parallelism and controller hierarchy
  – primitive direct-to-device interface
  – asynchronous and synchronous devices
• Diversity in design and usage
  – application specific, not general purpose
  – huge device variation => efficient modularity => migration across HW/SW boundary
• Robust operation
  – numerous, unattended, critical => narrow interfaces
[Figure labels: actuators, sensors, storage, network]

Classical RTOS approaches
• Responsiveness
  – Provide some form of user-specified interrupt handler
    » User threads in kernel, user-level interrupts
• Controlled scheduling
  – Static set of tasks with specified deadlines & constraints
    » Generate overall schedule
    » Doesn't deal with unpredictable events (comm)
  – Threads + synchronization operations
    » Sophisticated scheduler to coerce into meeting constraints
      • Priorities, earliest deadline first, rate monotonic
      • Priority inversion, load shedding, live lock, deadlock
    » Sophisticated mutex and signal operations
• Communication among parallel entities
  – Shared (global) variables: ultimate unstructured programming
  – Mail boxes (msg passing)
    » dynamic allocation, storage reuse, parsing
• External communication considered harmful
  – Fold in as RPC
• Requires multiple (sparse) stacks
  – Preemption or yield

Alternative Starting Points
• Event-driven models
  – Easy to schedule handfuls of small, roughly uniform things
    » State transitions (but what storage and comm model?)
  – Usually results in brittle monolithic dispatch structures
• Structured event-driven models
  – Logical chunks of computation and state that service events via execution of internal threads
• Threaded Abstract Machine
  – Developed as compilation target of inherently parallel languages
    » vast dynamic parallelism
    » Hide long-latency operations
  – Simple two-level scheduling hierarchy
  – Dynamic tree of code-block activations with internal inlets and threads
• Active Messages
  – Both parties in communication know format of the message
  – Fine-grain dispatch and consume without parsing
• Concurrent data structures
  – Non-blocking, lock-free (Herlihy)

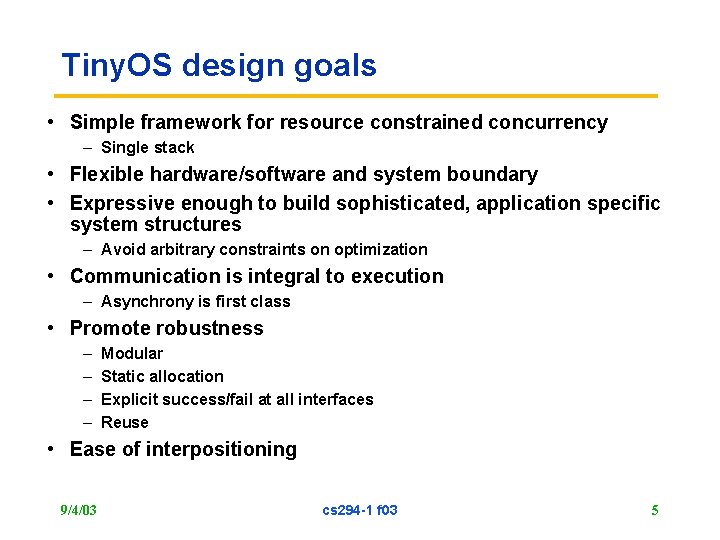

TinyOS design goals
• Simple framework for resource-constrained concurrency
  – Single stack
• Flexible hardware/software and system boundary
• Expressive enough to build sophisticated, application-specific system structures
  – Avoid arbitrary constraints on optimization
• Communication is integral to execution
  – Asynchrony is first class
• Promote robustness
  – Modular
  – Static allocation
  – Explicit success/fail at all interfaces
  – Reuse
• Ease of interpositioning

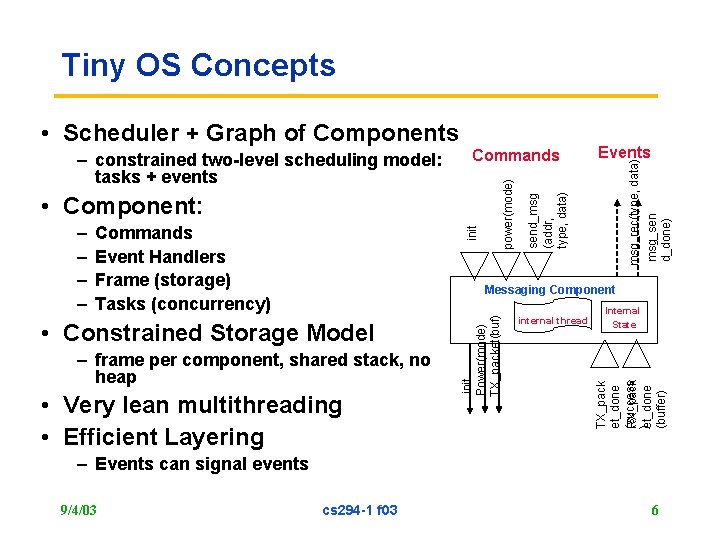

TinyOS Concepts
• Scheduler + Graph of Components
  – constrained two-level scheduling model: tasks + events
• Component:
  – Commands
  – Event Handlers
  – Frame (storage)
  – Tasks (concurrency)
• Constrained Storage Model
  – frame per component, shared stack, no heap
• Very lean multithreading
• Efficient Layering
  – Events can signal events
[Figure: Messaging Component with an internal thread and internal state. Upper interface: commands init, power(mode), send_msg(addr, type, data); events msg_rec(type, data), msg_send_done. Lower interface: commands init, Power(mode), TX_packet(buf); events TX_packet_done(success), RX_packet_done(buffer).]

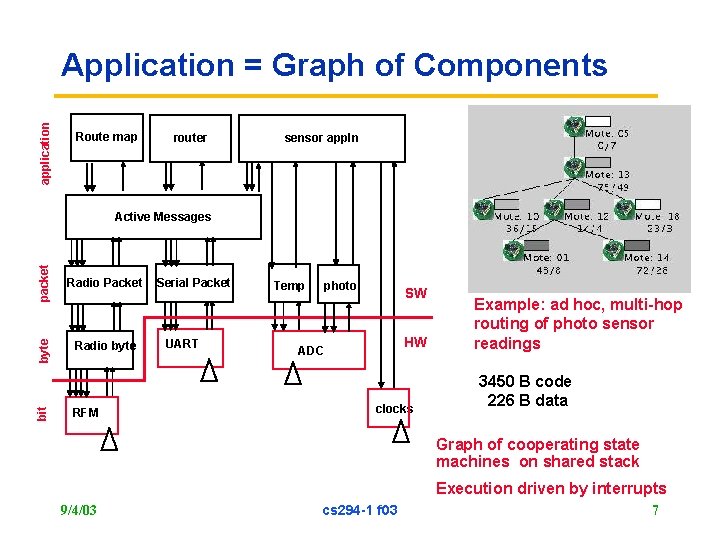

Application = Graph of Components
• Example: ad hoc, multi-hop routing of photo sensor readings
• 3450 B code, 226 B data
• Graph of cooperating state machines on shared stack
• Execution driven by interrupts
[Figure: component graph with layers labeled application, packet, byte, bit and a SW/HW boundary. Components shown: Route map, router, sensor appln, Active Messages, Radio Packet, Serial Packet, Radio byte, UART, RFM, Temp, photo, ADC, clocks.]

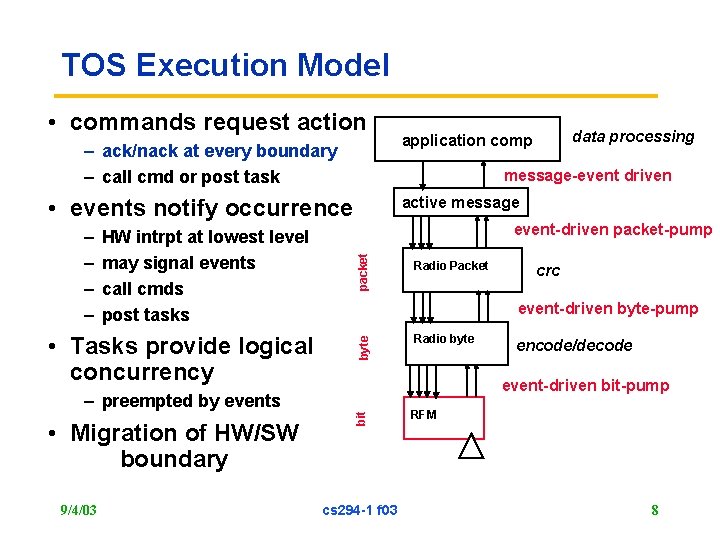

TOS Execution Model
• commands request action
  – ack/nack at every boundary
  – call cmd or post task
• events notify occurrence
  – HW interrupt at lowest level
  – may signal events
  – call cmds
  – post tasks
• Tasks provide logical concurrency
  – preempted by events
• Migration of HW/SW boundary
[Figure: application comp (data processing) on top of a message-event driven active message layer, an event-driven packet-pump (Radio Packet, crc), an event-driven byte-pump (Radio byte, encode/decode), and an event-driven bit-pump (RFM).]

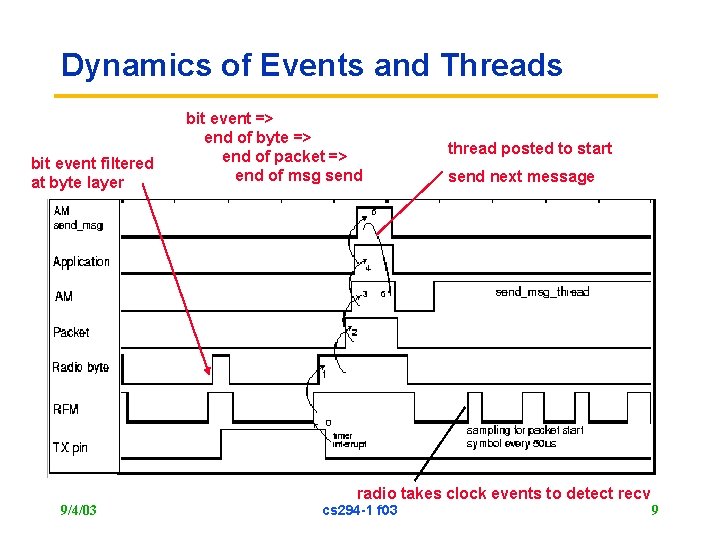

Dynamics of Events and Threads
[Figure annotations]
• bit event filtered at byte layer
• bit event => end of byte => end of packet => end of msg send
• thread posted to start send of next message
• radio takes clock events to detect recv

Programming TinyOS - nesC
• TinyOS 1.x is written in an extension of C, called nesC
• Applications are too!
  – just additional components composed with the OS components
• Provides syntax for TinyOS concurrency and storage model
  – commands, events, tasks
  – local frame variables
• Rich compositional support
  – separation of definition and linkage
  – robustness through narrow interfaces and reuse
  – interpositioning
• Whole system analysis and optimization

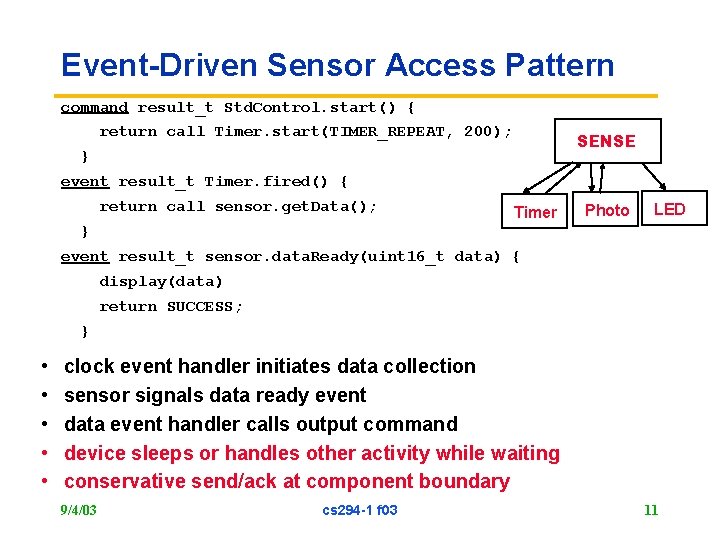

Event-Driven Sensor Access Pattern

    command result_t StdControl.start() {
      return call Timer.start(TIMER_REPEAT, 200);
    }

    event result_t Timer.fired() {
      return call sensor.getData();
    }

    event result_t sensor.dataReady(uint16_t data) {
      display(data);
      return SUCCESS;
    }

• clock event handler initiates data collection
• sensor signals data ready event
• data event handler calls output command
• device sleeps or handles other activity while waiting
• conservative send/ack at component boundary
[Figure: SENSE application wired to Timer, Photo, and LED components]

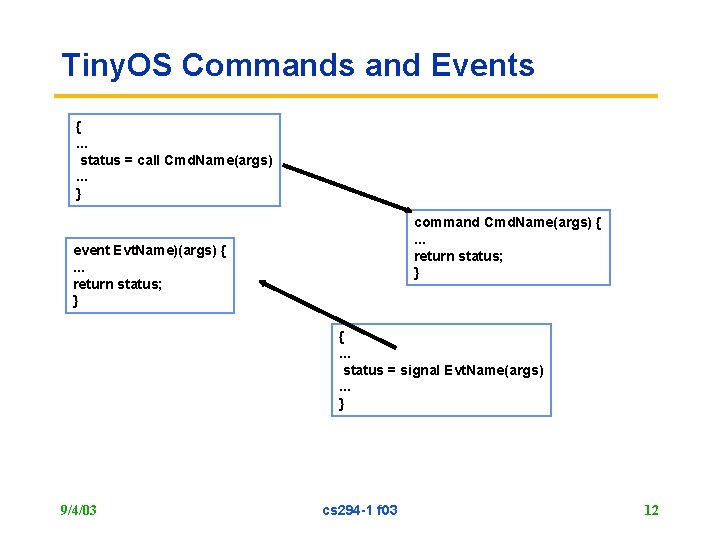

TinyOS Commands and Events

    /* caller of a command */
    { ... status = call CmdName(args) ... }

    /* implementation of the command */
    command CmdName(args) { ... return status; }

    /* implementation of the event handler */
    event EvtName(args) { ... return status; }

    /* signaller of the event */
    { ... status = signal EvtName(args) ... }

Split-phase abstraction of HW
• Command synchronously initiates action
• Device operates concurrently
• Signals event(s) in response
  – ADC
  – Clock
  – Send (UART, Radio, …)
  – Recv, depending on model
  – Coprocessor
• Higher level (SW) processes don't wait or poll
  – Allows automated power management
• Higher level components behave the same way
  – Tasks provide internal concurrency where there is no explicit hardware concurrency
• Components (even subtrees) replaced by HW and vice versa
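The split-phase pattern above can be sketched in plain C (not nesC). This is a minimal illustration under stated assumptions: the simulated ADC, the names `adc_get_data`, `adc_conversion_complete`, and `record_reading`, and the one-conversion-in-flight rule are all hypothetical and not taken from TinyOS.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

typedef void (*data_ready_fn)(uint16_t value);   /* event handler type */

/* Hypothetical simulated ADC: at most one conversion in flight. */
static struct {
    bool busy;
    data_ready_fn on_ready;
} adc;

/* Command (phase 1): start a conversion and return immediately. */
bool adc_get_data(data_ready_fn handler) {
    assert(handler != NULL);
    if (adc.busy) return false;      /* explicit failure, caller never waits */
    adc.busy = true;
    adc.on_ready = handler;
    return true;
}

/* Stands in for the hardware interrupt that ends a conversion (phase 2). */
void adc_conversion_complete(uint16_t sample) {
    if (!adc.busy) return;           /* spurious completion: ignore */
    adc.busy = false;                /* handler may start a new conversion */
    adc.on_ready(sample);            /* signal the event upward */
}

/* Example event handler: records the sample it was given. */
static uint16_t last_reading;
static int readings_seen;
void record_reading(uint16_t value) { last_reading = value; readings_seen++; }
```

The caller issues `adc_get_data(record_reading)` and carries on; the reading arrives later through the handler, so nothing above the device ever polls or blocks.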

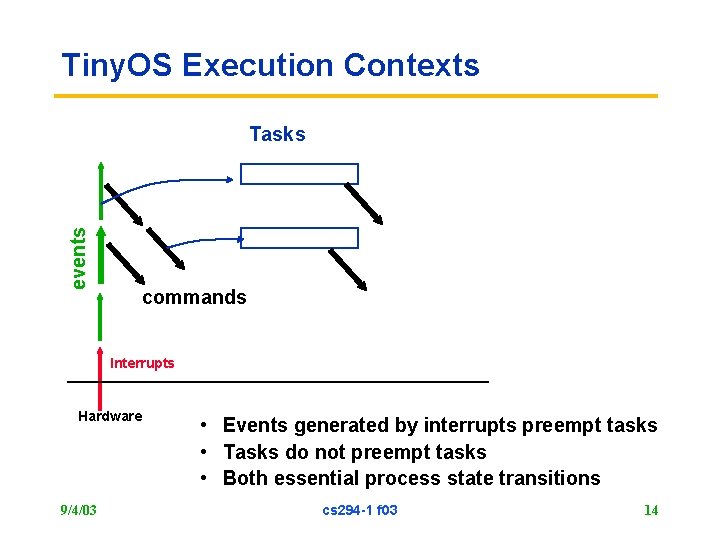

TinyOS Execution Contexts
• Events generated by interrupts preempt tasks
• Tasks do not preempt tasks
• Both essentially process state transitions
[Figure: execution contexts: Hardware, Interrupts, events, commands, Tasks]

Storage Model
• Local storage associated with each component (or instance of)
  – Internally managed
• Only objects passed by reference are message buffers

Data sharing
• Passed as arguments to command or event handler
  – Don't make intra-node communication heavy-weight
• If queuing is appropriate, implement it
  – Send queue
  – Receive queue
  – Intermediate queue
• Bounded depth, overflow is explicit
  – Most components implement 1-deep queues at the interface
• Don't force marshalling/unmarshalling through generic queue mechanism
• If you want shared state, create an explicit component with interfaces to it

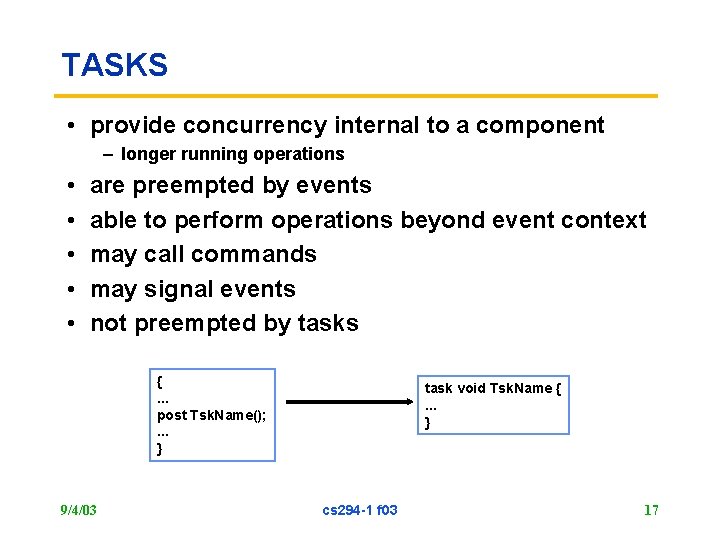

TASKS
• provide concurrency internal to a component
  – longer running operations
• are preempted by events
• able to perform operations beyond event context
• may call commands
• may signal events
• not preempted by tasks

    { ... post TskName(); ... }

    task void TskName() { ... }

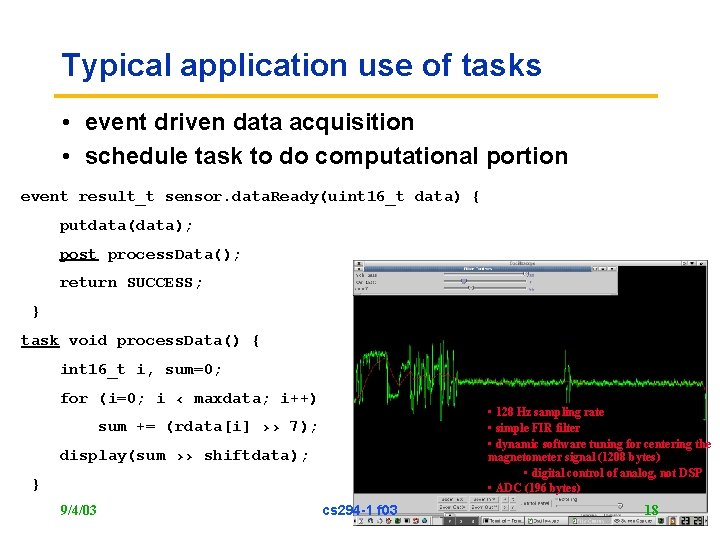

Typical application use of tasks
• event driven data acquisition
• schedule task to do computational portion

    event result_t sensor.dataReady(uint16_t data) {
      putdata(data);
      post processData();
      return SUCCESS;
    }

    task void processData() {
      int16_t i, sum = 0;
      for (i = 0; i < maxdata; i++)
        sum += (rdata[i] >> 7);
      display(sum >> shiftdata);
    }

• 128 Hz sampling rate
• simple FIR filter
• dynamic software tuning for centering the magnetometer signal (1208 bytes)
• digital control of analog, not DSP
• ADC (196 bytes)

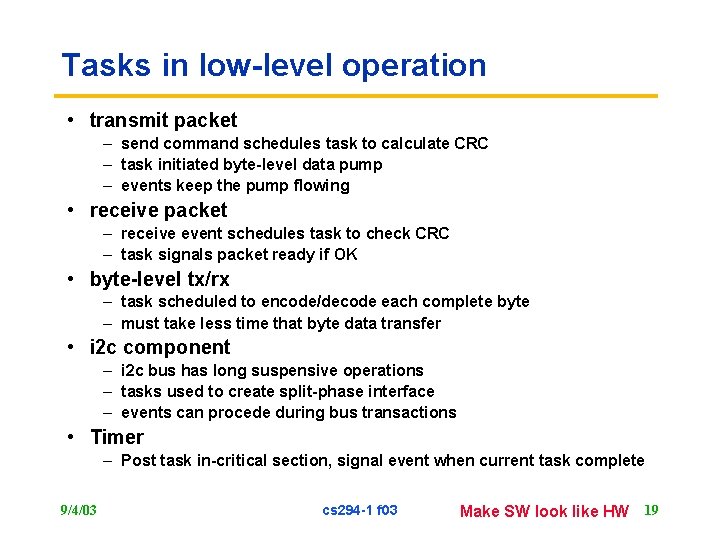

Tasks in low-level operation
• transmit packet
  – send command schedules task to calculate CRC
  – task initiates byte-level data pump
  – events keep the pump flowing
• receive packet
  – receive event schedules task to check CRC
  – task signals packet ready if OK
• byte-level tx/rx
  – task scheduled to encode/decode each complete byte
  – must take less time than byte data transfer
• i2c component
  – i2c bus has long suspensive operations
  – tasks used to create split-phase interface
  – events can proceed during bus transactions
• Timer
  – Post task in critical section, signal event when current task completes
• Make SW look like HW

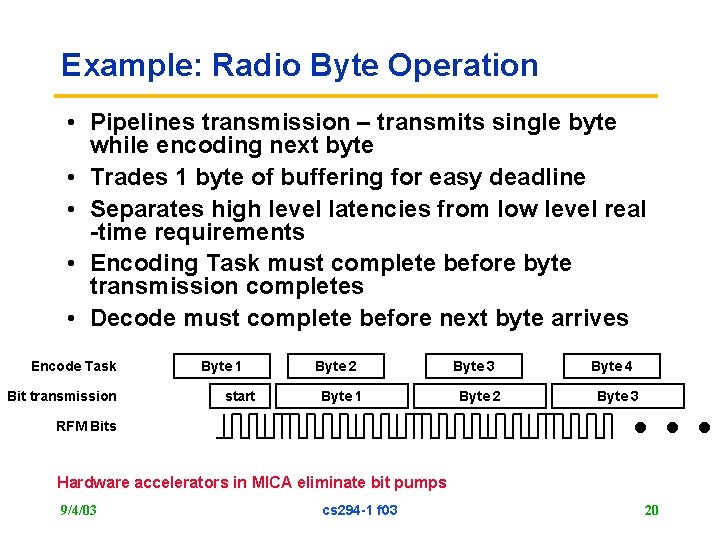

Example: Radio Byte Operation
• Pipelines transmission
  – transmits single byte while encoding next byte
• Trades 1 byte of buffering for easy deadline
• Separates high level latencies from low level real-time requirements
• Encoding task must complete before byte transmission completes
• Decode must complete before next byte arrives
• Hardware accelerators in MICA eliminate bit pumps
[Figure: timeline in which the encode task works on the next byte (Byte 2, Byte 3, Byte 4, …) while the RFM transmits the bits of the previous byte (Byte 1, Byte 2, Byte 3, …).]

Task Scheduling
• Currently simple FIFO scheduler
• Bounded number of pending tasks
• When idle, shuts down node (except clock)
• Uses non-blocking task queue data structure
• Simple event-driven structure + control over complete application/system graph
  – instead of complex task priorities and IPC
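A bounded FIFO task queue of the kind described above might look like the following plain-C sketch. It is an illustration only: `MAX_TASKS`, `post_task`, and `run_next_task` are assumed names, and the sketch runs in a single context, so it does not show the interrupt-safe, non-blocking queue the slide refers to.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define MAX_TASKS 8                       /* assumed bound on pending tasks */

typedef void (*task_fn)(void);

static task_fn queue[MAX_TASKS];          /* circular buffer of pending tasks */
static size_t head, count;

/* post: append a task; fails explicitly when the queue is full. */
bool post_task(task_fn t) {
    assert(t != NULL);
    if (count == MAX_TASKS) return false;
    queue[(head + count) % MAX_TASKS] = t;
    count++;
    return true;
}

/* Run the oldest pending task to completion; false means nothing to run. */
bool run_next_task(void) {
    if (count == 0) return false;         /* a real node would sleep here */
    task_fn t = queue[head];
    head = (head + 1) % MAX_TASKS;
    count--;
    t();                                  /* the task may post further tasks */
    return true;
}

/* Two example tasks that log the order in which they ran. */
static int trace[4], trace_len;
void task_b(void) { trace[trace_len++] = 2; }
void task_a(void) { trace[trace_len++] = 1; post_task(task_b); }
```

A main loop would call `run_next_task()` until it returns false and then put the processor to sleep until the next interrupt.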

Structured Events vs Multi-tasking
• Storage
• Control paradigm
  – Always block/yield: rely on thread switching
  – Never block: rely on event signaling
• Communication & coordination among potentially parallel activities
  – Threads: global variables/mailboxes, mutex, signaling
    » Preemptive: handle many potential races
    » Non-preemptive
    » All interactions protected by costly system synch ops
  – Events: signaling
• Scheduling
  – Complex threads require sophisticated scheduling
  – Collections of simple events ??

Communication
• Essentially just like a call
• Receive is inherently asynchronous
• Don't introduce potentially unbounded storage allocation
• Avoid copies and gather/scatter (mbuf problem)

Tiny Active Messages
• Sending
  – Declare buffer storage in a frame
  – Request transmission
  – Name a handler
  – Handle completion signal
• Receiving
  – Declare a handler
  – Firing a handler
    » automatic
    » behaves like any other event
• Buffer management
  – strict ownership exchange
  – tx: done event => reuse
  – rx: must return a buffer

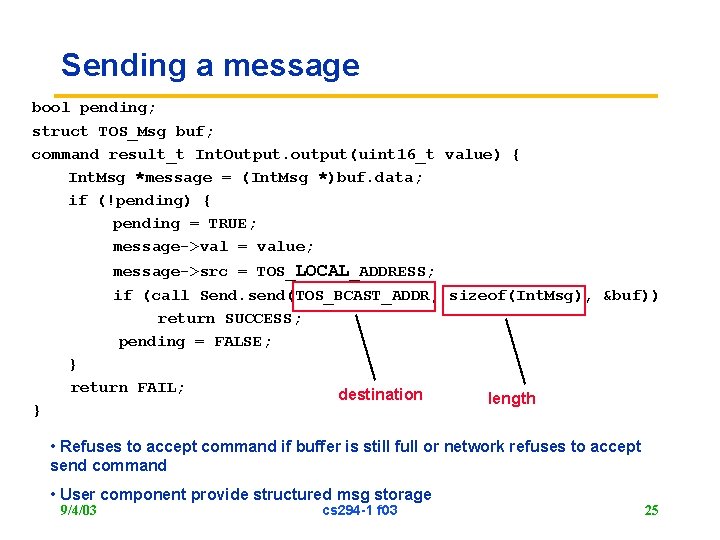

Sending a message

    bool pending;
    struct TOS_Msg buf;

    command result_t IntOutput.output(uint16_t value) {
      IntMsg *message = (IntMsg *)buf.data;
      if (!pending) {
        pending = TRUE;
        message->val = value;
        message->src = TOS_LOCAL_ADDRESS;
        /* arguments: destination, length, buffer */
        if (call Send.send(TOS_BCAST_ADDR, sizeof(IntMsg), &buf))
          return SUCCESS;
        pending = FALSE;
      }
      return FAIL;
    }

• Refuses to accept command if buffer is still full or network refuses to accept send command
• User component provides structured msg storage

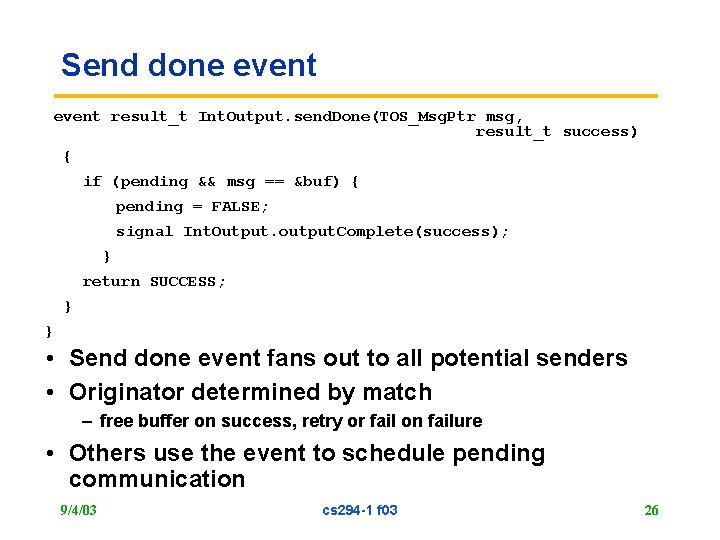

Send done event

    event result_t Send.sendDone(TOS_MsgPtr msg, result_t success) {
      if (pending && msg == &buf) {
        pending = FALSE;
        signal IntOutput.outputComplete(success);
      }
      return SUCCESS;
    }

• Send done event fans out to all potential senders
• Originator determined by match
  – free buffer on success, retry or fail on failure
• Others use the event to schedule pending communication
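The pending-flag handshake between the send command and the send-done event can be sketched in plain C. Everything here is a stand-in: `msg_t`, `radio_send`, `radio_finish`, `output`, and `send_done` are hypothetical names for a simulated lower layer, not TinyOS APIs.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

typedef struct { uint8_t data[29]; } msg_t;   /* hypothetical buffer layout */

static msg_t buf;            /* statically allocated, owned by this component */
static bool pending;         /* true while the lower layer holds buf */
static msg_t *in_flight;     /* what the simulated radio is holding */

/* Simulated lower layer: accepts one buffer at a time. */
static bool radio_send(msg_t *m) {
    if (in_flight != NULL) return false;
    in_flight = m;
    return true;
}

/* Command: refuse while the buffer is lent out or the radio says no. */
bool output(uint16_t value) {
    if (pending) return false;
    memcpy(buf.data, &value, sizeof value);
    if (!radio_send(&buf)) return false;
    pending = true;          /* ownership passes to the radio */
    return true;
}

/* Event: delivered to every sender; only the owner of m reacts. */
bool send_done(msg_t *m) {
    assert(m != NULL);
    if (pending && m == &buf) {
        pending = false;     /* ownership returns, buffer is reusable */
        return true;
    }
    return false;            /* completion of someone else's message */
}

/* Stand-in for the transmit-complete interrupt path. */
msg_t *radio_finish(void) {
    msg_t *m = in_flight;
    in_flight = NULL;
    return m;
}
```

Matching on the buffer address is what lets the send-done event fan out to all senders without any of them freeing a buffer it does not own.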

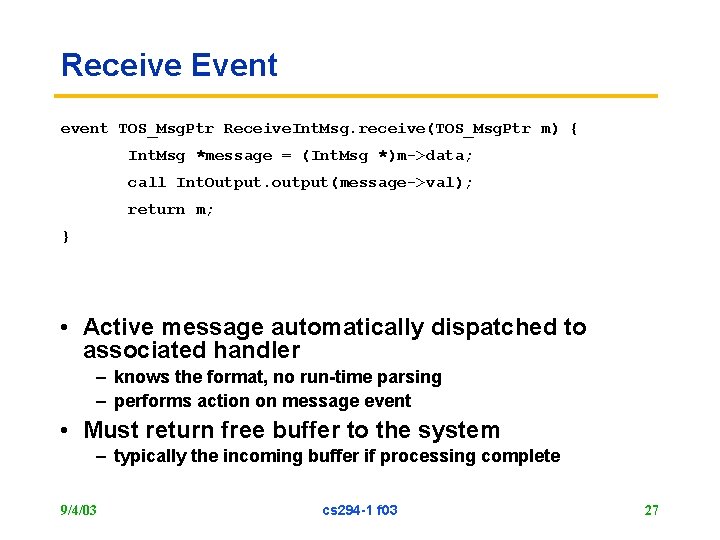

Receive Event

    event TOS_MsgPtr ReceiveIntMsg.receive(TOS_MsgPtr m) {
      IntMsg *message = (IntMsg *)m->data;
      call IntOutput.output(message->val);
      return m;
    }

• Active message automatically dispatched to associated handler
  – knows the format, no run-time parsing
  – performs action on message event
• Must return free buffer to the system
  – typically the incoming buffer if processing complete
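The dispatch-and-return-a-buffer rule can be illustrated with a small handler table in plain C. The table size, the `msg_t` layout, and the names `register_handler`, `dispatch`, `on_int_msg`, and `on_keep_msg` are assumptions made for this sketch.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define NUM_HANDLERS 4                    /* assumed size of the handler table */

typedef struct { uint8_t type; uint8_t data[4]; } msg_t;  /* illustrative */
typedef msg_t *(*receive_fn)(msg_t *m);   /* must hand a free buffer back */

static receive_fn handlers[NUM_HANDLERS];

void register_handler(uint8_t type, receive_fn fn) {
    assert(type < NUM_HANDLERS && fn != NULL);
    handlers[type] = fn;
}

/* Dispatch on the type field alone; the handler knows the payload format. */
msg_t *dispatch(msg_t *m) {
    assert(m != NULL);
    if (m->type >= NUM_HANDLERS || handlers[m->type] == NULL)
        return m;                         /* no handler: buffer comes straight back */
    return handlers[m->type](m);
}

/* Handler that finishes at once and returns the incoming buffer. */
static uint8_t last_val;
msg_t *on_int_msg(msg_t *m) { last_val = m->data[0]; return m; }

/* Handler that keeps the message and gives up a spare buffer instead. */
static msg_t spare, *kept;
msg_t *on_keep_msg(msg_t *m) { kept = m; return &spare; }
```

Either way the lower layer always gets exactly one free buffer back, so receive never needs dynamic allocation.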

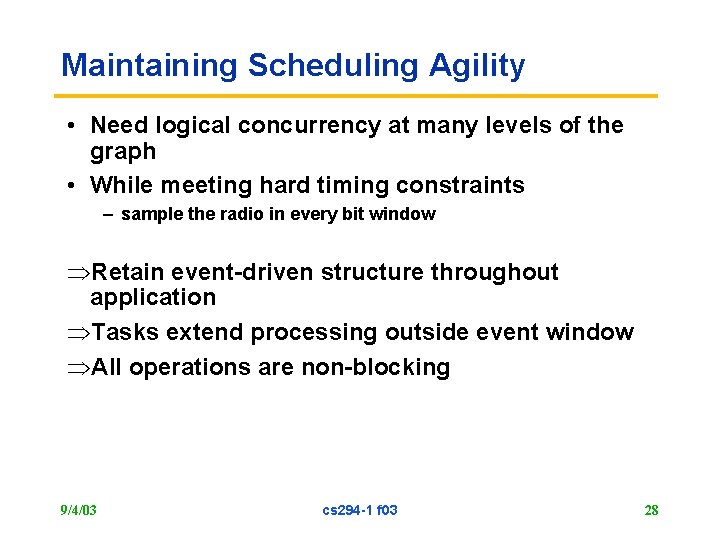

Maintaining Scheduling Agility
• Need logical concurrency at many levels of the graph
• While meeting hard timing constraints
  – sample the radio in every bit window
=> Retain event-driven structure throughout application
=> Tasks extend processing outside event window
=> All operations are non-blocking

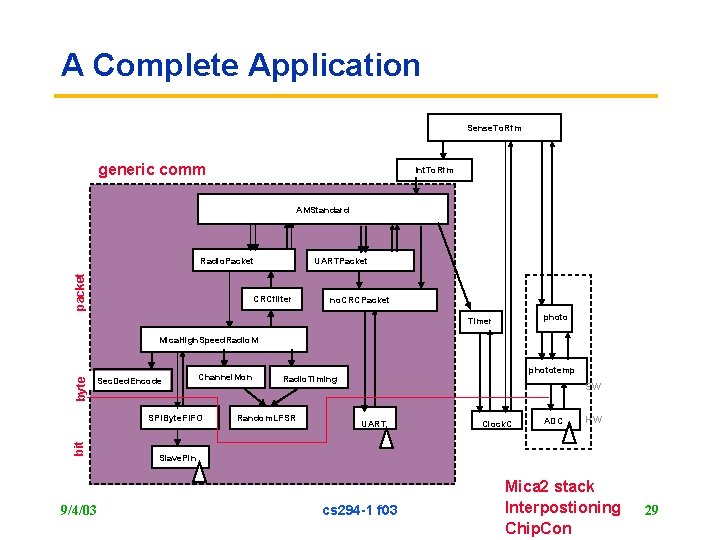

A Complete Application
[Figure: component graph of the SenseToRfm application, with layers labeled application, generic comm, packet, byte, bit and a SW/HW boundary. Components shown: SenseToRfm, IntToRfm, AMStandard, RadioPacket, UARTPacket, CRCfilter, noCRCPacket, photo, Timer, MicaHighSpeedRadioM, SecDedEncode, ChannelMon, SPIByteFIFO, phototemp, RadioTiming, RandomLFSR, UART, ClockC, ADC, SlavePin. Callouts: Mica2 stack, Interpositioning, ChipCon.]

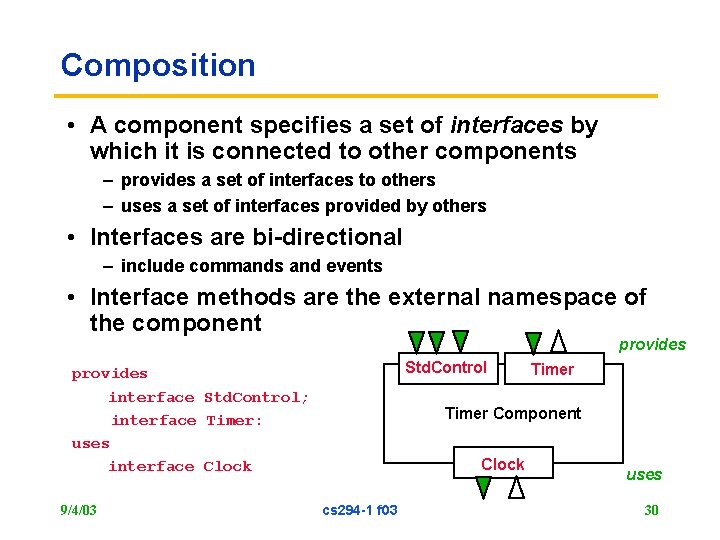

Composition
• A component specifies a set of interfaces by which it is connected to other components
  – provides a set of interfaces to others
  – uses a set of interfaces provided by others
• Interfaces are bi-directional
  – include commands and events
• Interface methods are the external namespace of the component

    provides interface StdControl;
    provides interface Timer;
    uses interface Clock;

[Figure: Timer component, which provides StdControl and Timer and uses Clock]

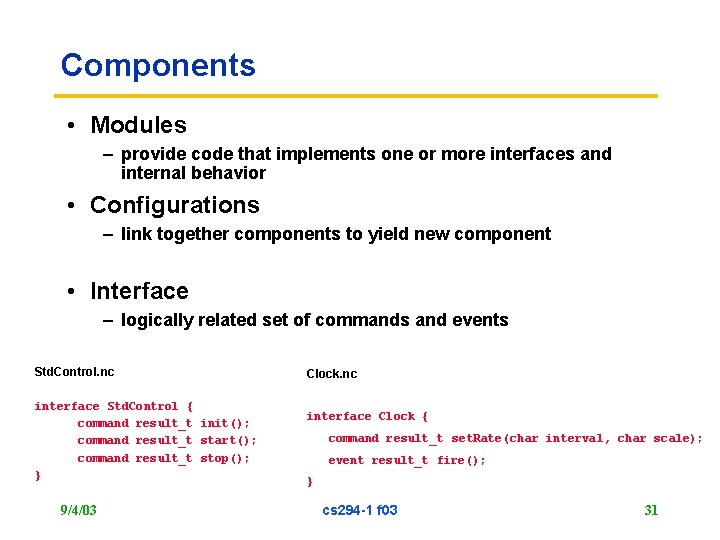

Components
• Modules
  – provide code that implements one or more interfaces and internal behavior
• Configurations
  – link together components to yield new component
• Interface
  – logically related set of commands and events

StdControl.nc

    interface StdControl {
      command result_t init();
      command result_t start();
      command result_t stop();
    }

Clock.nc

    interface Clock {
      command result_t setRate(char interval, char scale);
      event result_t fire();
    }

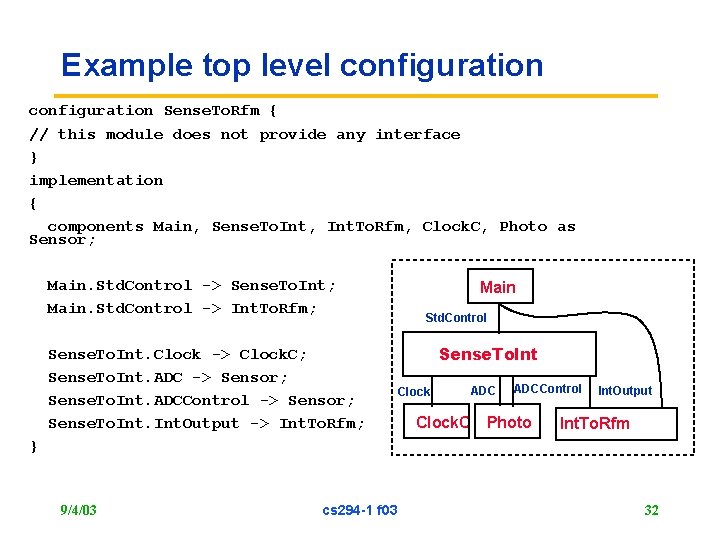

Example top level configuration

    configuration SenseToRfm {
      // this module does not provide any interface
    }
    implementation {
      components Main, SenseToInt, IntToRfm, ClockC, Photo as Sensor;

      Main.StdControl -> SenseToInt;
      Main.StdControl -> IntToRfm;
      SenseToInt.Clock -> ClockC;
      SenseToInt.ADC -> Sensor;
      SenseToInt.ADCControl -> Sensor;
      SenseToInt.Output -> IntToRfm;
    }

[Figure: Main wired through StdControl to SenseToInt and IntToRfm; SenseToInt wired to ClockC (Clock), to Photo (ADCControl), and to IntToRfm (IntOutput)]

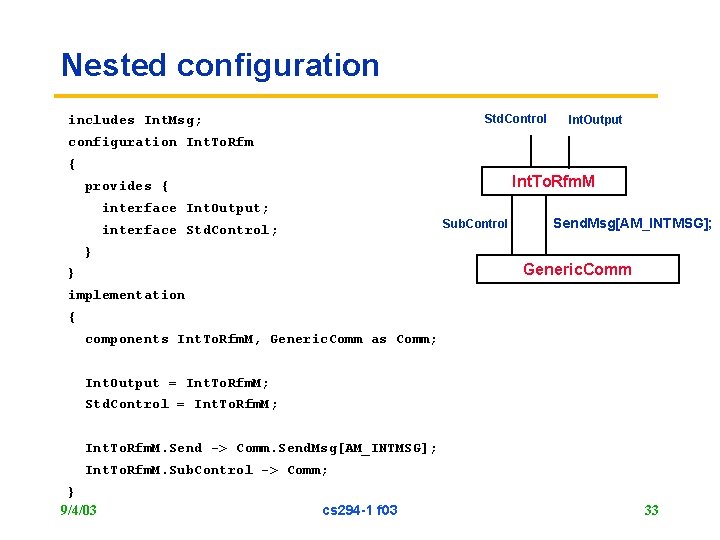

Nested configuration

    includes IntMsg;

    configuration IntToRfm {
      provides {
        interface IntOutput;
        interface StdControl;
      }
    }
    implementation {
      components IntToRfmM, GenericComm as Comm;

      IntOutput = IntToRfmM;
      StdControl = IntToRfmM;
      IntToRfmM.Send -> Comm.SendMsg[AM_INTMSG];
      IntToRfmM.SubControl -> Comm;
    }

[Figure: IntToRfm exports StdControl and IntOutput from IntToRfmM, which connects to GenericComm through SubControl and SendMsg[AM_INTMSG]]

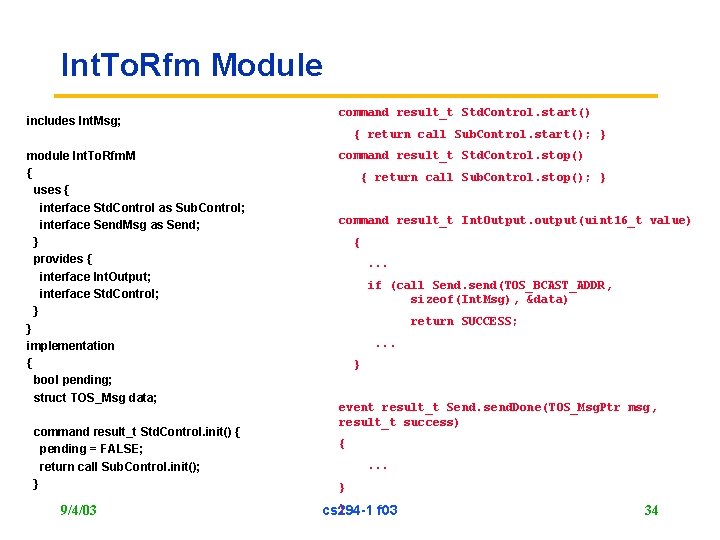

IntToRfm Module

    includes IntMsg;

    module IntToRfmM {
      uses {
        interface StdControl as SubControl;
        interface SendMsg as Send;
      }
      provides {
        interface IntOutput;
        interface StdControl;
      }
    }
    implementation {
      bool pending;
      struct TOS_Msg data;

      command result_t StdControl.init() {
        pending = FALSE;
        return call SubControl.init();
      }

      command result_t StdControl.start() {
        return call SubControl.start();
      }

      command result_t StdControl.stop() {
        return call SubControl.stop();
      }

      command result_t IntOutput.output(uint16_t value) {
        ...
        if (call Send.send(TOS_BCAST_ADDR, sizeof(IntMsg), &data))
          return SUCCESS;
        ...
      }

      event result_t Send.sendDone(TOS_MsgPtr msg, result_t success) {
        ...
      }
    }

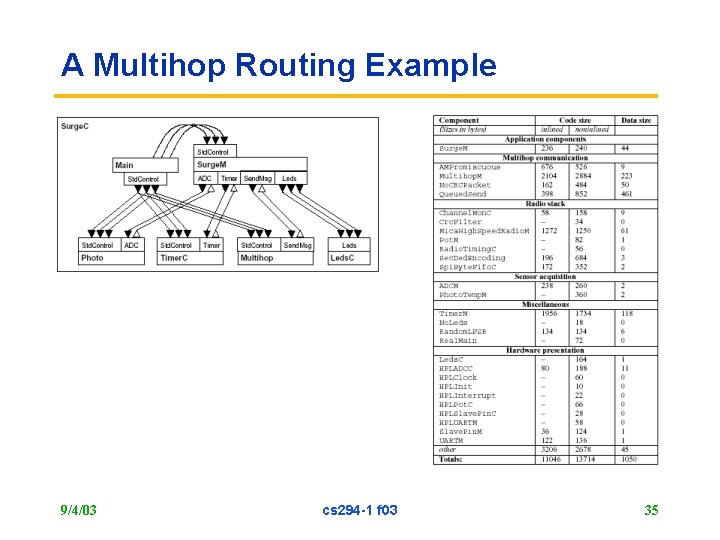

A Multihop Routing Example

Given the framework, what's the system?
• Core Subsystems
  – Simple Display (LEDs)
  – Identity
  – Timer
  – Bus interfaces (i2c, SPI, UART, 1-wire)
  – Data Acquisition
  – Link-level Communication
  – Power management
  – Non-volatile storage
• Higher Level Subsystems
  – Network-level communication
    » Broadcast, Multihop Routing
  – Time Synchronization, Ranging, Localization
  – Network Programming
  – Neighborhood Management
  – Catalog, Config, Query Processing, Virtual Machine

Timer
• Clock abstraction over HW mechanism
  – 3-4 registers of various sizes
  – Operations to set scale, phase, limit
  – One-shot, periodic
  – Signals critical event
• One physical clock must operate in minimum energy state with rest of the device powered off
• Timer provides collection of logical clocks
  – Clean units
  – One-shot or periodic
  – Manage underlying clock resources
    » Mapping to physical clock
    » Minimum spacing and accuracy
  – Signals non-critical event
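Mapping several logical clocks onto one physical clock can be sketched as below in plain C. The fixed table of `NUM_TIMERS` entries, the tick-based intervals, and the names `timer_start`, `clock_fire`, and `timer_fired_count` are assumptions for illustration; a real Timer component would also reprogram the hardware clock rate, which this sketch leaves out.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define NUM_TIMERS 4                      /* assumed number of logical clocks */

typedef struct {
    bool     active;
    bool     repeat;                      /* periodic vs. one-shot */
    uint32_t interval;                    /* in physical ticks */
    uint32_t remaining;                   /* ticks until the next expiry */
    uint32_t fired;                       /* stands in for signalling an event */
} logical_timer;

static logical_timer timers[NUM_TIMERS];

/* Start (or restart) a logical clock; a zero interval is rejected. */
bool timer_start(int id, bool repeat, uint32_t interval) {
    assert(id >= 0 && id < NUM_TIMERS);
    if (interval == 0) return false;
    timers[id] = (logical_timer){ true, repeat, interval, interval, 0 };
    return true;
}

/* Called once per physical clock event. */
void clock_fire(void) {
    for (int i = 0; i < NUM_TIMERS; i++) {
        logical_timer *t = &timers[i];
        if (!t->active) continue;
        if (--t->remaining > 0) continue; /* not yet due */
        t->fired++;
        if (t->repeat) t->remaining = t->interval;
        else           t->active = false;
    }
}

uint32_t timer_fired_count(int id) {
    assert(id >= 0 && id < NUM_TIMERS);
    return timers[id].fired;
}
```

Each client sees its own one-shot or periodic clock, while only a single hardware tick source has to stay powered.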

Data Acquisition
• Many different kinds of sensor connections
  – Analog sensor into ADC
  – Digital sensors (i2c)
  – External ADCs
• Get command to logical sensor
• Data-ready signal
• Power management
• Quality of sampling interval (jitter) depends on timer and sensor substack
• Sample rate limits?

Communication
• Parameterized Active Message abstraction
• Multiple media
  – Radio
    » RFM, Mica-RFM, ChipCon
  – UART
  – I2C
• Media routing based on destination address
• Side attributes
  – Link-level ack
  – Timestamp
  – Signal strength
• Security can be interposed (TinySec)
• Inherently bursty and unpredictable
  – Unless higher level protocols make it predictable

Composable Power Management
• Scheduler
  – On idle drops into preset sleep state till interrupt
    » Proc: Active: 5 mA, Idle: 2 mA, Sleep: 5 uA
    » Radio, Sensor, Co-Processors
• All components implement the StdControl interface
  – Power mgmt of non-processor resources
  – Start: bring subsystem to operational level, inform pwr_mgmt
  – Stop: bring subsystem to sleep, inform pwr_mgmt
• Power-management component
  – Establishes the sleep state to use should the scheduler idle
    » Knows what is shut down, potential sleep duration
• Timer
  – Pulls system out of deep sleep
  – What happens after depends on what event handler does
• Applications compose policy
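One way to picture the power-management component is as a small bookkeeping module that start/stop calls report to and that the scheduler queries when idle. The sketch below is plain C under assumed names (`dev_start`, `dev_stop`, `choose_sleep_state`) with a hypothetical two-state policy; it is not the TinyOS implementation.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical sleep states, shallowest to deepest. */
typedef enum { PWR_IDLE, PWR_SLEEP_CLOCK_ONLY } sleep_state;

enum { DEV_RADIO, DEV_SENSOR, DEV_COUNT };   /* illustrative device set */

static bool running[DEV_COUNT];

/* Start/Stop: each subsystem reports its state to power management. */
void dev_start(int dev) {
    assert(dev >= 0 && dev < DEV_COUNT);
    running[dev] = true;
}

void dev_stop(int dev) {
    assert(dev >= 0 && dev < DEV_COUNT);
    running[dev] = false;
}

/* Asked by the scheduler when its task queue is empty. */
sleep_state choose_sleep_state(void) {
    for (int d = 0; d < DEV_COUNT; d++)
        if (running[d]) return PWR_IDLE;     /* a device still needs service */
    return PWR_SLEEP_CLOCK_ONLY;             /* only the clock keeps running */
}
```

Because the decision depends only on what components have reported, the application sets policy simply by choosing when to start and stop subsystems.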

Example Pwr Mgmt Policies
• Great Duck Island
  – Every 5 mins: sample, send, low_power_route
  – Low-power listen
    » Transmit includes wake preamble (x)
    » Receive powers off radio (~x), if sufficiently long
  – Application just adjusts x
• Generic Sensor Kit: epochs
  – Periodic: active phase / sleep phase
  – Active
    » Sample, process query, route, aggregate
  – Sleep
    » Power down for set time

Dynamics
• All sleep till clock expires
• HW event interrupts the processor
  – Critical handling, signal clock event
• Timer handles clock event
  – If merely expired as part of longer sleep, system returns to deep sleep
  – If events are associated with the clock event, they are signalled
  – Post tasks
  – Call Start, …

Typical Operational Mode
• Major external events
  – Trigger collection of small processing steps (tasks and events)
  – May have interval of hard real-time sampling
    » Radio
    » Sensor
  – Interleaved with moderate amount of processing in small chunks at various levels
• Periods of sleep
  – Interspersed with timer mgmt

Where do classical RTOS issues arise?
• Low latency events
  – Two-level scheduling, short critical events
  – Lowest layer of components completes critical section before signalling logical event
  – Closely aligned low-latency events?
    » Radio/UART/timer happen to hit at same time
• Periodic, pseudo-periodic
  – Timer component design
    » Static scheduling
    » Rate monotonic?
  – Scheduler?
  – Networking is bursty. Network scheduling?
• Deadline?
  – Data queuing
• Load shedding?
  – At load duty cycle?
• Provability?

Supporting HW evolution
• Distribution broken into
  – apps: top-level applications
  – lib: shared application components
  – system: hardware independent system components
  – platform: hardware dependent system components
    » includes HPLs and hardware.h
• Component design so HW and SW look the same
  – example: temp component
    » may abstract particular channel of ADC on the microcontroller
    » may be a SW I2C protocol to a sensor board with digital sensor or ADC
• HW/SW boundary can move up and down with minimal changes

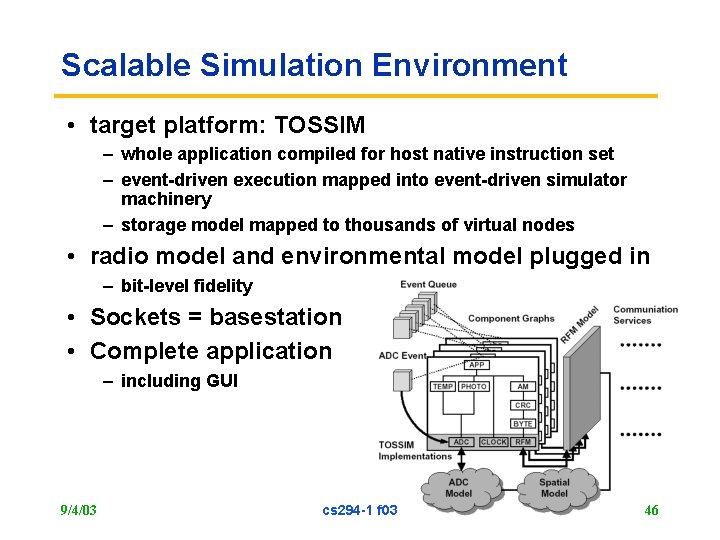

Scalable Simulation Environment
• target platform: TOSSIM
  – whole application compiled for host native instruction set
  – event-driven execution mapped into event-driven simulator machinery
  – storage model mapped to thousands of virtual nodes
• radio model and environmental model plugged in
  – bit-level fidelity
• Sockets = basestation
• Complete application
  – including GUI

Issues
• Timer architecture far more fundamental than anticipated
  – Simple applications built around clock
  – One component set period, all took multiples of it
  – Poor composition
• Application-oblivious timer too imprecise
• More complex applications have 1 high precision timer
  – maybe 2
  – sometimes high rate
  – Besides radio
  – Must be serviced by hardware periodic counter
• Other general uses should have a clean way to obtain intervals in multiples of primary tick
  – Offset in phase to avoid collision
  – Coordinate multiple low-jitter subsystems
    » UART and Radio

Multiple levels of tasks
• Easy to implement multiple, static priorities upon single stack
• Lowest level: events handled immediately
• "System" tasks to realize concurrency within components
  – Must still be quick to keep flow throughout system smooth
  – Grains of sand, not bricks
• "Background" tasks
  – Run as long as they want, preempted by system tasks
  – Build next to it, but not on top of it
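Static priorities over a single stack can be approximated with one FIFO per level, always draining the higher level first. The plain-C sketch below uses assumed names (`post_at`, `run_next`) and an assumed depth; note that it only orders the choice of the next task and does not model a system task preempting a background task that is already running.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define LEVELS 2          /* 0 = "system" tasks, 1 = "background" tasks */
#define DEPTH  4          /* assumed bound on pending tasks per level */

typedef void (*task_fn)(void);

static task_fn q[LEVELS][DEPTH];          /* one circular FIFO per level */
static size_t head[LEVELS], count[LEVELS];

bool post_at(int level, task_fn t) {
    assert(level >= 0 && level < LEVELS && t != NULL);
    if (count[level] == DEPTH) return false;   /* explicit overflow */
    q[level][(head[level] + count[level]) % DEPTH] = t;
    count[level]++;
    return true;
}

/* Pick from the highest-priority non-empty level and run that task to
   completion; one shared stack is enough because tasks never interleave. */
bool run_next(void) {
    for (int l = 0; l < LEVELS; l++) {
        if (count[l] == 0) continue;
        task_fn t = q[l][head[l]];
        head[l] = (head[l] + 1) % DEPTH;
        count[l]--;
        t();
        return true;
    }
    return false;                              /* nothing pending */
}

/* Example tasks that log which level ran. */
static char order[8];
static size_t n;
void sys_task(void) { order[n++] = 'S'; }
void bg_task(void)  { order[n++] = 'B'; }
```

Posting a mix of both kinds and then looping on `run_next()` runs every pending system task before any background task, regardless of posting order.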

Co-processors for fidelity
• Several designs have subsystems with dedicated TinyOS processors
  – Ranging
  – MotoControl

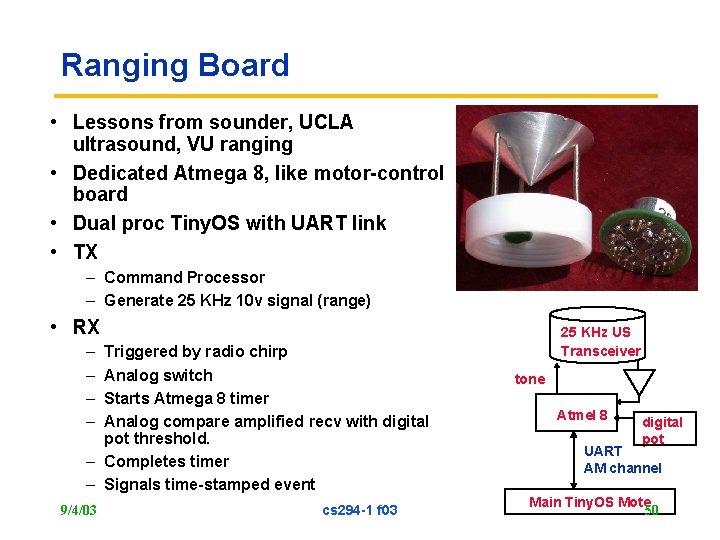

Ranging Board
• Lessons from sounder, UCLA ultrasound, VU ranging
• Dedicated ATmega8, like motor-control board
• Dual proc TinyOS with UART link
• TX
  – Command processor
  – Generate 25 kHz 10 V signal (range)
• RX
  – Triggered by radio chirp
  – Analog switch
  – Starts ATmega8 timer
  – Analog compare of amplified recv with digital pot threshold
  – Completes timer
  – Signals time-stamped event
[Figure: 25 kHz US transceiver, tone, ATmega8, digital pot, UART AM channel, main TinyOS mote]

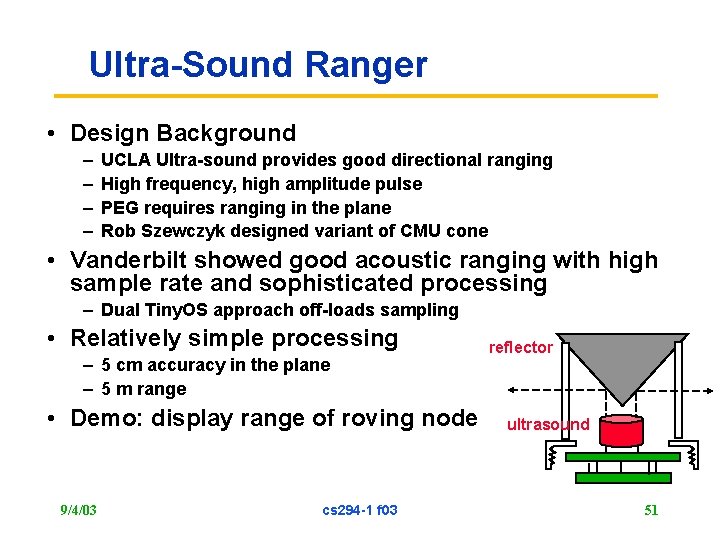

Ultra-Sound Ranger
• Design background
  – UCLA ultrasound provides good directional ranging
  – High frequency, high amplitude pulse
  – PEG requires ranging in the plane
  – Rob Szewczyk designed variant of CMU cone
• Vanderbilt showed good acoustic ranging with high sample rate and sophisticated processing
  – Dual TinyOS approach off-loads sampling
• Relatively simple processing
  – 5 cm accuracy in the plane
  – 5 m range
• Demo: display range of roving node
[Figure labels: reflector, ultrasound]

Managing Critical Section
• General philosophy
  – Lowest level (hardware abstraction) components perform minimal processing to package interrupt
  – Re-enable before signalling event
  – Implies that events may stack
    » Non-interference from separation of components at the leaves
    » No multiple interrupts within component
    » Event handling less than interval for particular type
  – Where insufficient, build a queue
• Interrupt disable may be used to realize atomicity not provided in the hardware

Avoiding Races
• AC code: reachable from a hardware interrupt
• SC code: reachable only from a task
• AC code requires great care to avoid potential races
• SC code can be much sloppier (and cheaper) if there is no sharing with AC code
  – EX: managing a pending flag
• Most complex components are higher level and will never be connected to truly asynchronous event sources
• Compiler can help
  – detect race conditions, knowing the context of each component's use

Other points of discussion
• No dynamic allocation of msg buffers may be too draconian
• Encapsulation in stack composition
  – Component behavior dependent on composition
  – Layered headers
• System construction as synthesis from specification

- Slides: 54