CS184b: Computer Architecture (Abstractions and Optimizations)

Day 12: May 3, 2003
Shared Memory
Caltech CS 184 Spring 2003 -- DeHon

Today

- Shared Memory
  - Model
  - Bus-based Snooping
  - Cache Coherence
- Synchronization
  - Primitives
  - Algorithms
  - Performance

Shared Memory Model

- Same model as multithreaded uniprocessor
  - Single, shared, global address space
  - Multiple threads (PCs)
  - Run in same address space
  - Communicate through memory
    - Memory appears identical between threads
    - Hidden from users (looks like memory op)

Synchronization

- For correctness, have to worry about synchronization
  - Otherwise non-deterministic behavior
  - Threads run asynchronously
  - Without additional synchronization discipline
    - Cannot say anything about relative timing

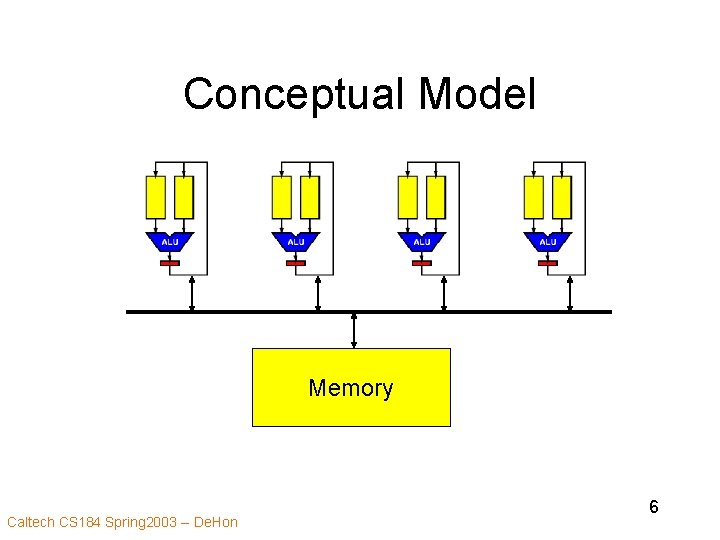

Models

- Conceptual model:
  - Processor per thread
  - Single shared memory
- Programming Model:
  - Sequential language
  - Thread Package
  - Synchronization primitives
- Architecture Model: Multithreaded uniprocessor

Conceptual Model

(Figure label: Memory)

Architecture Model Implications

- Coherent view of memory
  - Any processor reading at time X will see same value
  - All writes eventually affect memory
    - Until overwritten
  - Writes to memory seen in same order by all processors
- Sequentially Consistent Memory View

Sequential Consistency

- Memory must reflect some valid sequential interleaving of the threads

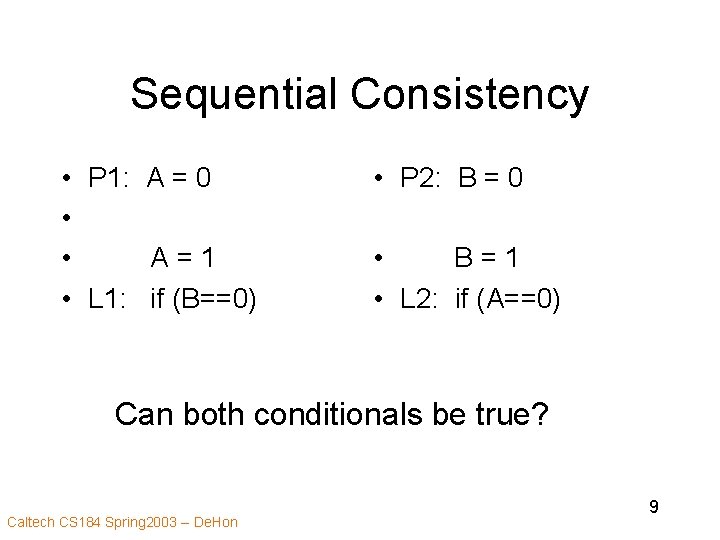

Sequential Consistency

- P1:
  - A = 0
  - A = 1
  - L1: if (B==0)
- P2:
  - B = 0
  - B = 1
  - L2: if (A==0)

Can both conditionals be true?

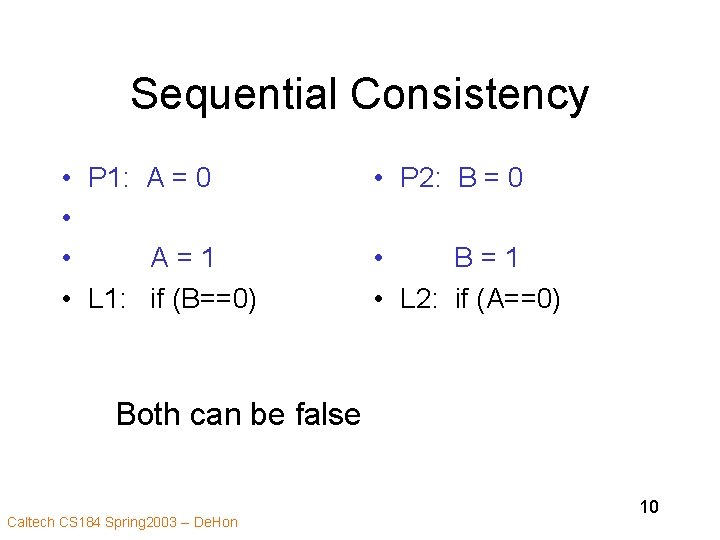

Sequential Consistency

- P1:
  - A = 0
  - A = 1
  - L1: if (B==0)
- P2:
  - B = 0
  - B = 1
  - L2: if (A==0)

Both can be false

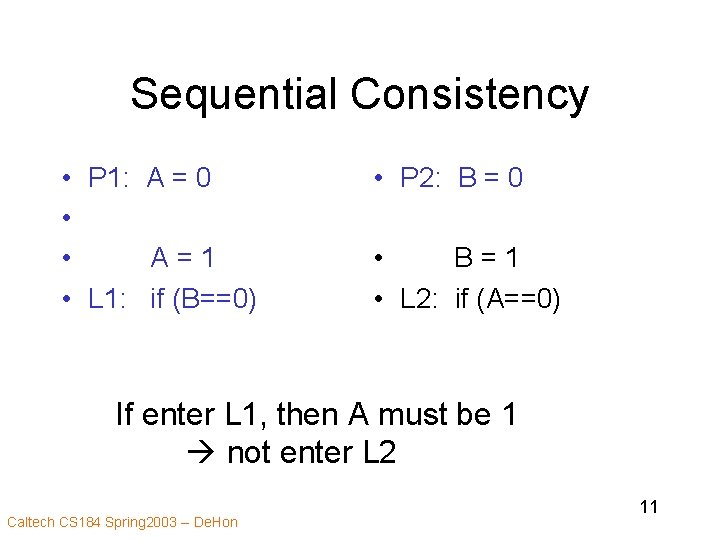

Sequential Consistency

- P1:
  - A = 0
  - A = 1
  - L1: if (B==0)
- P2:
  - B = 0
  - B = 1
  - L2: if (A==0)

If P1 enters L1, then A must already be 1, so P2 does not enter L2.

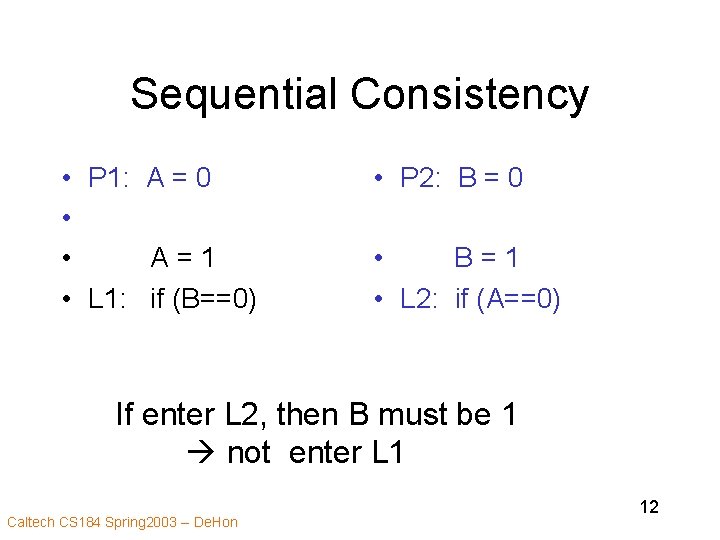

Sequential Consistency

- P1:
  - A = 0
  - A = 1
  - L1: if (B==0)
- P2:
  - B = 0
  - B = 1
  - L2: if (A==0)

If P2 enters L2, then B must already be 1, so P1 does not enter L1.
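The four slides above argue case by case that both conditionals cannot be entered under sequential consistency. A minimal Python sketch can check that claim by brute force: it enumerates every interleaving that preserves each thread's program order and runs it against one shared memory. The names `interleavings` and `run`, and the assumption that memory starts with A = B = 0, are illustrative choices and not part of the slides.

```python
from itertools import combinations

# Program order for each thread; "L1"/"L2" are the conditional tests.
P1 = [("write", "A", 0), ("write", "A", 1), ("test", "B", "L1")]
P2 = [("write", "B", 0), ("write", "B", 1), ("test", "A", "L2")]

def interleavings(a, b):
    """Yield every merge of a and b that preserves each thread's own order."""
    n = len(a) + len(b)
    for slots in combinations(range(n), len(a)):
        ia, ib = iter(a), iter(b)
        yield [next(ia) if i in slots else next(ib) for i in range(n)]

def run(schedule):
    """Execute one interleaving against a single shared memory."""
    mem = {"A": 0, "B": 0}          # assumed initial contents
    entered = set()
    for op, var, arg in schedule:
        if op == "write":
            mem[var] = arg
        elif mem[var] == 0:         # test: the conditional is entered if var == 0
            entered.add(arg)
    return frozenset(entered)

# Set of distinct outcomes over all sequentially consistent executions.
outcomes = {run(s) for s in interleavings(P1, P2)}
```

In this model `outcomes` contains "neither entered", "only L1", and "only L2", but never both, which matches the slides' argument.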

Coherence Alone

- Coherent view of memory
  - Any processor reading at time X will see same value
  - All writes eventually affect memory
    - Until overwritten
  - Writes to memory seen in same order by all processors
- Coherence alone does not guarantee sequential consistency

Sequential Consistency

- P1:
  - A = 0
  - A = 1
  - L1: if (B==0)
- P2:
  - B = 0
  - B = 1
  - L2: if (A==0)

If changes to the variables (the assignments of A and B) are not forced to become visible, both threads could end up inside their conditionals.

Consistency

- Deals with when written value must be seen by readers
- Coherence
  - w/ respect to same memory location
- Consistency
  - w/ respect to other memory locations
- …there are less strict consistency models…

Implementation

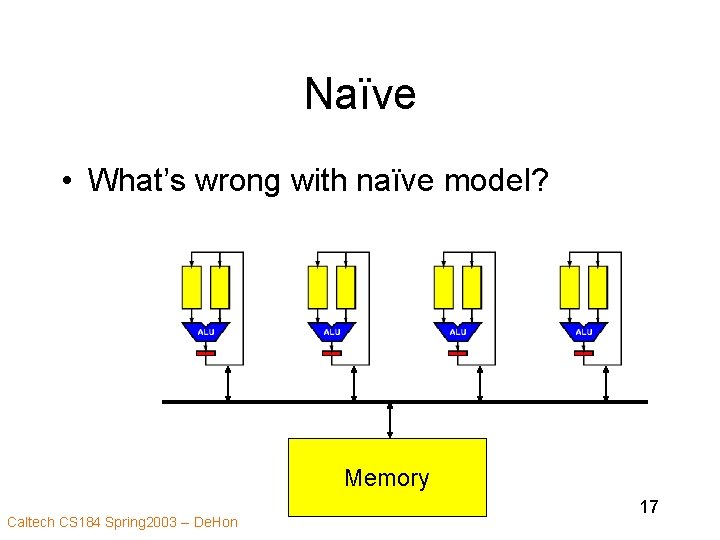

Naïve

- What’s wrong with the naïve model?

(Figure label: Memory)

What’s Wrong?

- Memory bandwidth
  - 1 instruction reference per instruction
  - 0.3 memory references per instruction
  - 333 ps cycle
  - N*5 Gwords/s ?
- Interconnect
- Memory access latency
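The bandwidth bullets above can be turned into a quick back-of-the-envelope calculation. The sketch below assumes one instruction issued per cycle (the `ipc` parameter, which the slide does not state) and a hypothetical helper name `words_per_second`. With those assumptions a single processor demands roughly 3.9 Gwords/s, the same order of magnitude as the slide's N*5 Gwords/s figure; how the slide arrived at 5 is not stated.

```python
def words_per_second(n_procs, cycle_s=333e-12, inst_refs=1.0,
                     data_refs=0.3, ipc=1.0):
    """Aggregate memory references per second demanded by n_procs processors.

    cycle_s, inst_refs and data_refs come from the slide; ipc (instructions
    per cycle) is an assumption added for this sketch.
    """
    refs_per_instruction = inst_refs + data_refs
    instructions_per_second = ipc / cycle_s
    return n_procs * refs_per_instruction * instructions_per_second

# Demand grows linearly with processor count, which is what swamps
# a single shared memory.
demand_by_procs = {n: words_per_second(n) for n in (1, 2, 4, 8)}
```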

Optimizing

- How do we improve?

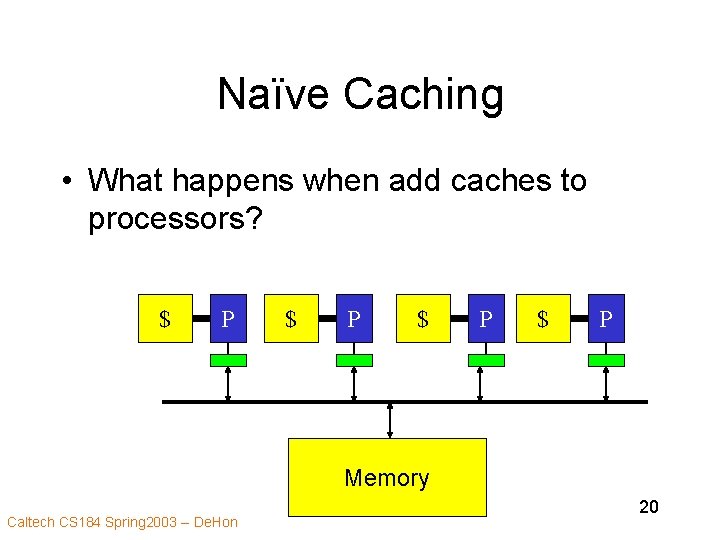

Naïve Caching

- What happens when we add caches to processors?

(Figure labels: P, $, Memory)

Naïve Caching

- Cached answers may be stale
- Shadow the correct value

How have both?

- Keep caching
  - Reduces main memory bandwidth
  - Reduces access latency
- Satisfy Model

Cache Coherence

- Make sure everyone sees same values
- Avoid having stale values in caches
- At end of write, all cached values should be the same

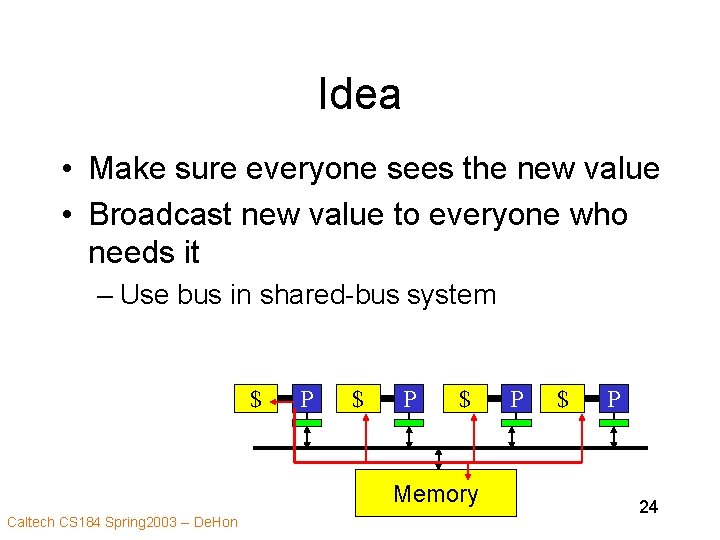

Idea

- Make sure everyone sees the new value
- Broadcast new value to everyone who needs it
  - Use bus in shared-bus system

(Figure labels: P, $, Memory)

Effects

- Memory traffic is now just:
  - Cache misses
  - All writes

Additional Structure?

- Only necessary to write/broadcast a value if someone else has it cached
- Can write locally if known to be sole owner
  - Reduces main memory traffic
  - Reduces write latency

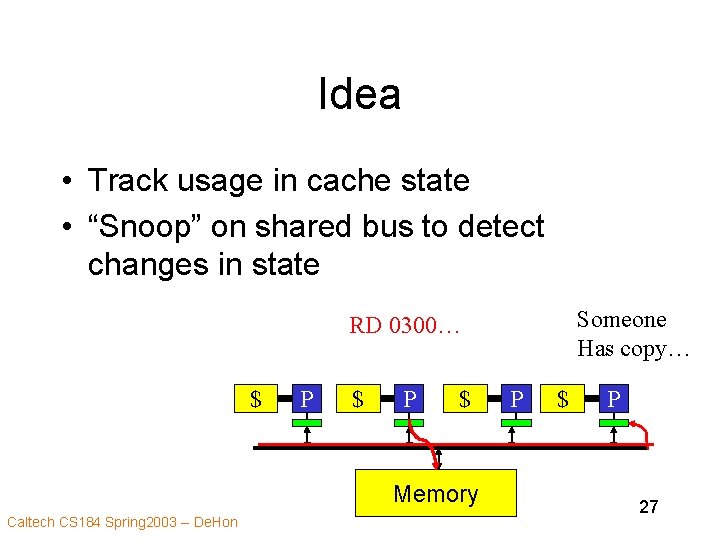

Idea

- Track usage in cache state
- “Snoop” on shared bus to detect changes in state

(Figure labels: P, $, Memory; bus annotations: “RD 0300…”, “Someone has copy…”)

Cache State

- Data in cache can be in one of several states
  - Not cached (not present)
  - Exclusive (not shared)
    - Safe to write to
  - Shared
    - Must share writes with others
- Update state with each memory op

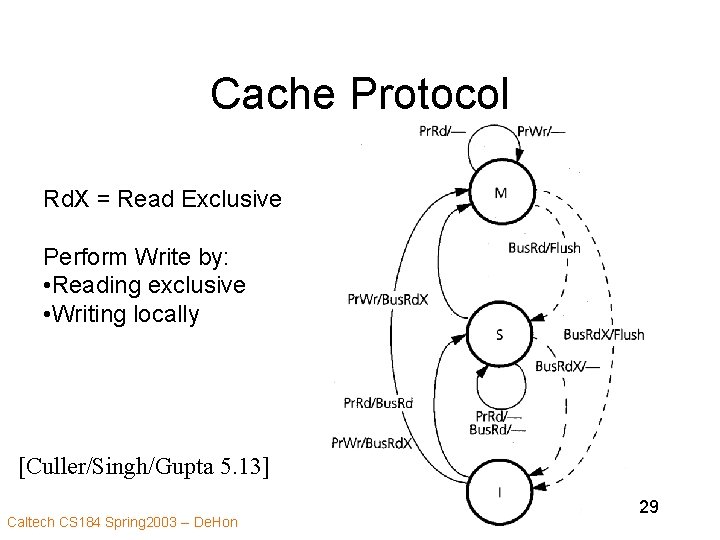

Cache Protocol

RdX = Read Exclusive

Perform Write by:

- Reading exclusive
- Writing locally

[Culler/Singh/Gupta 5.13]
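The "read exclusive, then write locally" rule can be illustrated with a small software model of an invalidation-based snooping bus. This is a sketch under simplifying assumptions, not the protocol from the cited figure: it uses only the three states named on the Cache State slide, treats an exclusive line as possibly modified (so it is flushed to memory when another cache asks for it), and models one word per line. The class names `Bus` and `Cache` and the `transactions` counter are hypothetical.

```python
INVALID, SHARED, EXCLUSIVE = "not cached", "shared", "exclusive"

class Bus:
    def __init__(self):
        self.memory = {}          # addr -> value in main memory
        self.caches = []
        self.transactions = 0     # count of bus operations, for illustration

    def broadcast(self, kind, addr, source):
        """Put one transaction on the bus; every other cache snoops it."""
        self.transactions += 1
        for cache in self.caches:
            if cache is not source:
                cache.snoop(kind, addr)

class Cache:
    def __init__(self, bus):
        self.bus = bus
        self.lines = {}           # addr -> (state, value)
        bus.caches.append(self)

    def state(self, addr):
        return self.lines.get(addr, (INVALID, None))[0]

    def snoop(self, kind, addr):
        state, value = self.lines.get(addr, (INVALID, None))
        if state == INVALID:
            return
        if state == EXCLUSIVE:
            self.bus.memory[addr] = value        # flush our possibly modified copy
        if kind == "RdX":
            del self.lines[addr]                 # another writer: invalidate
        else:
            self.lines[addr] = (SHARED, value)   # another reader: demote to shared

    def read(self, addr):
        if self.state(addr) == INVALID:
            self.bus.broadcast("Rd", addr, self)
            self.lines[addr] = (SHARED, self.bus.memory.get(addr, 0))
        return self.lines[addr][1]

    def write(self, addr, value):
        if self.state(addr) != EXCLUSIVE:
            self.bus.broadcast("RdX", addr, self)  # read exclusive on the bus
        self.lines[addr] = (EXCLUSIVE, value)      # then write locally
```

In this model a second write by the sole owner generates no bus transaction, while a write to a shared line invalidates the other copies first.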

![Snoopy Cache Organization [Culler/Singh/Gupta 6.4]](http://slidetodoc.com/presentation_image_h2/4d3066dfe07ee00fbe7a00d4dffd05e1/image-30.jpg)

Snoopy Cache Organization [Culler/Singh/Gupta 6.4]

Cache States

- Extra bits in cache
  - Like valid, dirty

![Misses (#s are cache line size) [Culler/Singh/Gupta 5.23]](http://slidetodoc.com/presentation_image_h2/4d3066dfe07ee00fbe7a00d4dffd05e1/image-32.jpg)

Misses (#s are cache line size) [Culler/Singh/Gupta 5.23]

![Misses [Culler/Singh/Gupta 5.27]](http://slidetodoc.com/presentation_image_h2/4d3066dfe07ee00fbe7a00d4dffd05e1/image-33.jpg)

Misses [Culler/Singh/Gupta 5.27]

Synchronization

Problem

- If correctness requires an ordering between threads,
  - have to enforce it
- Was not a problem we had in the single-thread case
  - does occur in the multiple-threads-on-single-processor case

Desired Guarantees

- Precedence
  - barrier synchronization
    - Everything before barrier completes before anything after begins
  - producer-consumer
    - Consumer reads value produced by producer
- Atomic Operation Set
- Mutual exclusion

Read/Write Locks?

- Try to implement a lock with reads/writes:

      if (~A.lock)
        A.lock=true
        do stuff
        A.lock=false

Problem with R/W locks?

- Consider a context switch between the test (~A.lock = true?) and the assignment (A.lock=true)

      if (~A.lock)
        A.lock=true
        do stuff
        A.lock=false
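The failure can be reproduced deterministically in a few lines of Python. The sketch below is a simulation, not real preemption: each "thread" is a generator that pauses between the test and the assignment, and a hand-written schedule decides who runs next. The names `try_lock` and `run`, and the thread labels, are hypothetical; the release step is omitted to keep the race visible.

```python
def try_lock(name, lock, holders):
    """Read/write-only lock attempt, split where a context switch can land."""
    free = not lock["held"]        # test: if (~A.lock)
    yield                          # a context switch may happen here
    if free:
        lock["held"] = True        # assignment: A.lock = true
        holders.append(name)       # "do stuff" would run here

def run(schedule):
    """Step the named threads in the given order; return who entered."""
    lock, holders = {"held": False}, []
    threads = {n: try_lock(n, lock, holders) for n in set(schedule)}
    for n in schedule:
        next(threads[n], None)     # advance thread n by one step
    return holders

# No switch between test and set: only one thread gets in.
serial = run(["T1", "T1", "T2", "T2"])
# Switch lands between test and set: both threads believe they hold the lock.
racy = run(["T1", "T2", "T1", "T2"])
```

With the second schedule both threads read the lock as free before either writes it, so mutual exclusion is lost.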

Primitive Need

- Need an indivisible primitive to enable atomic operations

Original Examples

- Test-and-set
  - combine test of A.lock and set into single atomic operation
  - once have lock
    - can guarantee mutual exclusion at higher level
- Read-Modify-Write
  - atomic read…write sequence
- Exchange

Examples (cont.)

- Exchange
  - Exchange true with A.lock
  - if value retrieved was false
    - this process got the lock
  - if value retrieved was true
    - already locked
    - (didn’t change value)
    - keep trying
  - key is, only a single exchanger gets the false value
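A spin lock built on exchange follows directly from the bullets above. Python has no hardware exchange instruction, so in this sketch an internal `threading.Lock` stands in for the atomicity the hardware would provide; that substitution, the class names `ExchangeCell` and `SpinLock`, and the `time.sleep(0)` yield inside the spin loop are all assumptions made for illustration.

```python
import threading
import time

class ExchangeCell:
    """A memory word with an atomic exchange.

    The internal mutex only models hardware atomicity; it is not part of
    the algorithm being illustrated.
    """
    def __init__(self, value=False):
        self._value = value
        self._atomic = threading.Lock()

    def exchange(self, new):
        with self._atomic:
            old, self._value = self._value, new
            return old

    def store(self, new):
        with self._atomic:
            self._value = new

class SpinLock:
    def __init__(self):
        self.cell = ExchangeCell(False)

    def acquire(self):
        # Exchange true with the lock; getting true back means it was
        # already locked (and we did not change it), so keep trying.
        while self.cell.exchange(True):
            time.sleep(0)          # yield to other threads (simulation convenience)

    def release(self):
        self.cell.store(False)

def count_with_lock(n_threads=4, n_iters=500):
    """Increment a shared counter under the spin lock from several threads."""
    lock, total = SpinLock(), [0]

    def worker():
        for _ in range(n_iters):
            lock.acquire()
            total[0] += 1          # critical section
            lock.release()

    threads = [threading.Thread(target=worker) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total[0]
```

Only the one exchanger that receives false proceeds, so the counter ends at exactly `n_threads * n_iters`.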

Implementing...

- What is required to implement?
  - Uniprocessor
  - Bus-based

Implement: Uniprocessor

- Prevent Interrupt/context switch
- Primitives use single address
  - so page fault at beginning
  - then ok, to computation (defer faults…)
- SMT?

Implement: Snoop Bus

- Need to reserve for Write
  - write-through
    - hold the bus between read and write
    - Guarantee no operation can intervene
  - write-back
    - need exclusive read
    - and way to defer other writes until written

Performance Concerns?

- Locking resources reduce parallelism
- Bus (network) traffic
- Processor utilization
- Latency of operation

Basic Synch. Components

- Acquisition
- Waiting
- Release

Possible Problems

- Spin wait generates considerable memory traffic
- Release traffic
- Bottleneck on resources
- Invalidation
  - can’t cache locally…
- Fairness

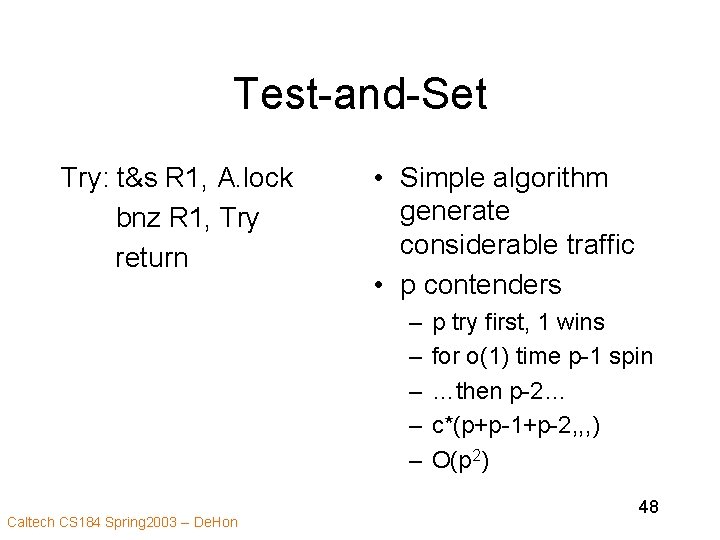

Test-and-Set

    Try: t&s R1, A.lock
         bnz R1, Try
         return

- Simple algorithm generates considerable traffic
- p contenders
  - p try first, 1 wins
  - for O(1) time p-1 spin
  - …then p-2…
  - c*(p + (p-1) + (p-2) + …)
  - O(p^2)
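The O(p^2) bound comes from summing the number of contenders in each round. A minimal sketch of that count, assuming each remaining contender contributes one unit of traffic per lock hand-off (the slide's constant c absorbs how many times each one actually spins), with the hypothetical helper name `ts_lock_attempts`:

```python
def ts_lock_attempts(p):
    """Units of traffic for p contenders to each acquire the lock once.

    Round 1 has p contenders, round 2 has p-1, and so on down to 1,
    so the total is p + (p-1) + ... + 1 = p*(p+1)/2.
    """
    return sum(range(1, p + 1))

# Doubling the number of contenders roughly quadruples the traffic.
growth = [(p, ts_lock_attempts(p)) for p in (2, 4, 8, 16)]
```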

Backoff

- Instead of immediately retrying
  - wait some time before retry
  - reduces contention
  - may increase latency
    - (what if I’m the only contender and the lock is about to be released?)

![Primitive Bus Performance [Culler/Singh/Gupta 5.29]](http://slidetodoc.com/presentation_image_h2/4d3066dfe07ee00fbe7a00d4dffd05e1/image-50.jpg)

Primitive Bus Performance [Culler/Singh/Gupta 5.29]

Bad Effects

- Performance decreases with number of users
  - From growing traffic already noted

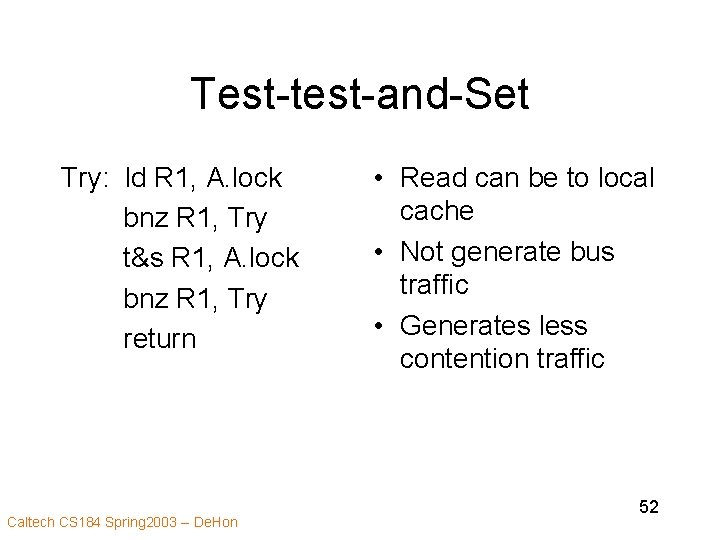

Test-test-and-Set

    Try: ld  R1, A.lock
         bnz R1, Try
         t&s R1, A.lock
         bnz R1, Try
         return

- Read can be to local cache
- Does not generate bus traffic
- Generates less contention traffic

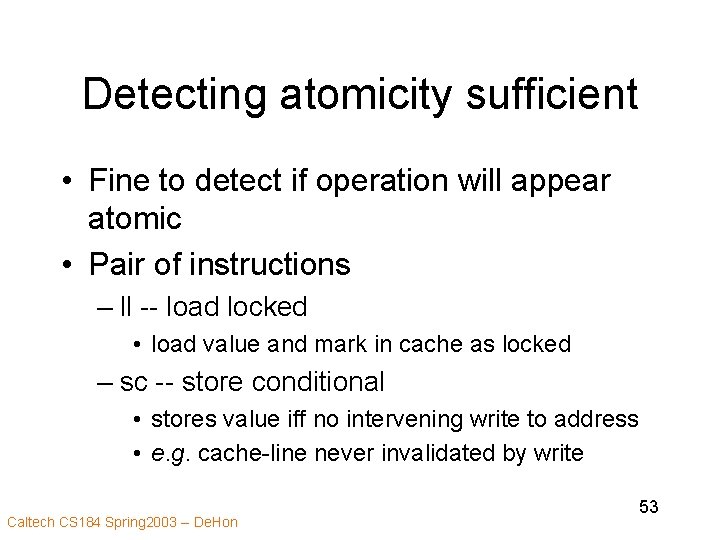

Detecting atomicity sufficient

- Fine to detect if operation will appear atomic
- Pair of instructions
  - ll -- load locked
    - load value and mark in cache as locked
  - sc -- store conditional
    - stores value iff no intervening write to address
    - e.g. cache line never invalidated by write

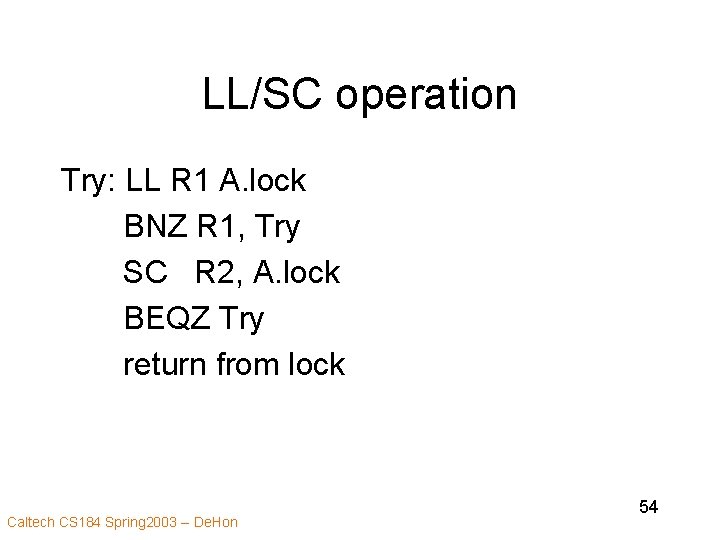

LL/SC operation

    Try: LL   R1, A.lock
         BNZ  R1, Try      ; already locked: retry
         SC   R2, A.lock   ; R2 assumed to hold the value to store (true)
         BEQZ R2, Try      ; assumes SC leaves a success flag in R2; retry on failure
         return from lock

LL/SC

- Pair doesn’t really lock value
- Just detects if result would appear that way
- Ok to have arbitrary interleaving between LL and SC
- Ok to have capacity eviction between LL and SC
  - will just fail and retry
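The "detect rather than lock" behavior can be modeled in software. The sketch below is a single-threaded model, so each method is atomic by construction; it tracks which processors still hold a valid link and breaks every link on any write. The class name `LLSCMemory`, the per-processor link set, and the helper `try_acquire` are assumptions for illustration, not a description of any particular hardware.

```python
class LLSCMemory:
    """Single-word memory with per-processor link flags (a software model)."""
    def __init__(self, value=0):
        self.value = value
        self.linked = set()        # processors whose link is still valid

    def load_linked(self, proc):
        self.linked.add(proc)      # mark the address as linked for proc
        return self.value

    def store(self, value):
        self.value = value
        self.linked.clear()        # any write breaks every outstanding link

    def store_conditional(self, proc, value):
        if proc not in self.linked:
            return False           # intervening write or eviction: fail
        self.store(value)
        return True

    def evict(self, proc):
        """Model a capacity eviction of proc's cached copy."""
        self.linked.discard(proc)

def try_acquire(mem, proc):
    """One pass of the LL/SC lock loop; True if proc got the lock."""
    if mem.load_linked(proc) != 0:
        return False               # already locked: caller retries
    return mem.store_conditional(proc, 1)
```

If two processors both load-link a free lock, only the first store-conditional succeeds; the other fails and retries, and an eviction between LL and SC has the same harmless effect.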

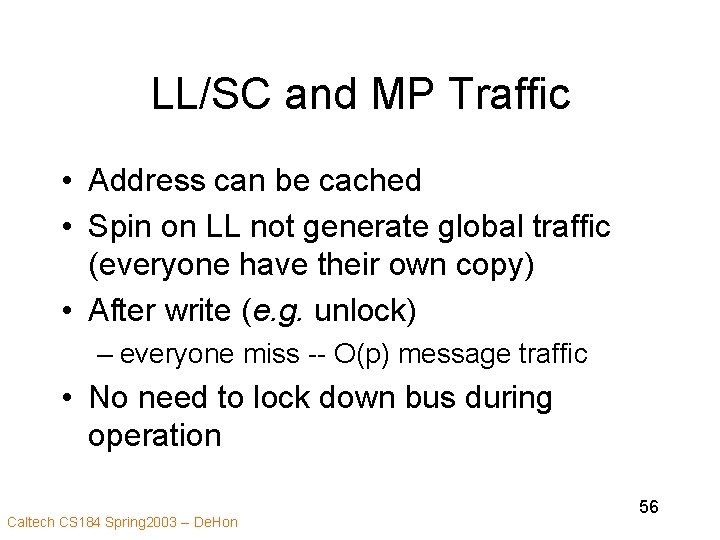

LL/SC and MP Traffic

- Address can be cached
- Spin on LL does not generate global traffic (everyone has their own copy)
- After write (e.g. unlock)
  - everyone misses -- O(p) message traffic
- No need to lock down bus during operation

![Performance Bus [talk about array+ticket later] [Culler/Singh/Gupta 5.30]](http://slidetodoc.com/presentation_image_h2/4d3066dfe07ee00fbe7a00d4dffd05e1/image-57.jpg)

Performance Bus [talk about array+ticket later] [Culler/Singh/Gupta 5.30]

Big Ideas

- Simple Model
  - Preserve model
  - While optimizing implementation
- Exploit Locality
  - Reduce bandwidth and latency

Big Ideas

- Simple primitives
  - Must have primitives to support atomic operations
  - don’t have to implement atomically
    - just detect non-atomicity
- Make fast case common
  - optimize for locality
  - minimize contention
