Cryptowars. Sharon Goldberg, CS 558 Network Security, Boston University, December 10, 2015

In 1994: http://www.nytimes.com/1994/06/12/magazine/battle-of-the-clipper-chip.html?pagewanted=all

From: The Battle of the Clipper Chip

• Little more than two months after taking office, the Clinton Administration announced the existence of the Clipper chip and directed the National Institute of Standards and Technology to consider it as a Government standard.

• It seems improbable that this black Chiclet is the focal point of a battle that may determine the degree to which our civil liberties survive in the next century. But that is the shared belief in this room.

• The Clipper chip has prompted what might be considered the first holy war of the information highway. Two weeks ago, the war got bloodier, as a researcher circulated a report that the chip might have a serious technical flaw. But at its heart, the issue is political, not technical. The Cypherpunks consider the Clipper the lever that Big Brother is using to pry into the conversations, messages and transactions of the computer age. These high-tech Paul Reveres are trying to mobilize America against the evil portent of a "cyberspace police state," as one of their Internet jeremiads put it. Joining them in the battle is a formidable force, including almost all of the communications and computer industries, many members of Congress and political columnists of all stripes.

20 years later…

http://www.wired.co.uk/news/archive/2015-11/03/surveillance-bill-ban-strong-encryption-apple-imessage

The Washington Post says:

How to resolve this? A police “back door” for all smartphones is undesirable — a back door can and will be exploited by bad guys, too. However, with all their wizardry, perhaps Apple and Google could invent a kind of secure golden key they would retain and use only when a court has approved a search warrant. Ultimately, Congress could act and force the issue, but we’d rather see it resolved in law enforcement collaboration with the manufacturers and in a way that protects all three of the forces at work: technology, privacy and rule of law.

https://www.washingtonpost.com/opinions/compromise-needed-on-smartphone-encryption/2014/10/03/96680bf8-4a77-11e4-891d-713f052086a0_story.html

BUT! • Is there actually a difference between a backdoor and a “secure golden key”?

https://keybase.io/blog/2014-10-08/the-horror-of-a-secure-golden-key

Or, as Chris Coyne says more eloquently…

• This theoretical “secure golden key” would protect privacy while allowing privileged access in cases of legal or state-security emergency. Kidnappers and terrorists are exposed, and the rest of us are safe. Sounds nice. But this proposal is nonsense, and, given the sensitivity of the issue, highly dangerous. Here’s why.

• A “golden key” is just another, more pleasant, word for a backdoor—something that allows people access to your data without going through you directly. This backdoor would, by design, allow Apple and Google to view your password-protected files if they received a subpoena or some other government directive. You'd pick your own password for when you needed your data, but the companies would also get one, of their choosing. With it, they could open any of your docs: your photos, your messages, your diary, whatever.

• The Post assumes that a “secure key” means hackers, foreign governments, and curious employees could never break into this system. They also assume it would be immune to bugs. They envision a magic tool that only the righteous may wield. Does this sound familiar?

• Practically speaking, the Washington Post has proposed the impossible. If Apple, Google and Uncle Sam hold keys to your documents, you will be at great risk.
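The "you pick a password, the company also gets one" design described above is essentially key escrow. The following is a minimal sketch of that idea, not any vendor's actual scheme: a random file key encrypts the document, and that file key is wrapped once for the user and once for a hypothetical escrow holder. The cipher here is a toy built from SHA-256 and HMAC so the example runs with the standard library only; it is an assumption made for illustration and should not be used to protect real data. All function and field names are invented for this sketch.

```python
import hashlib
import hmac
import secrets


def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream from SHA-256(key || nonce || counter). Illustration only."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return out[:length]


def seal(key: bytes, plaintext: bytes) -> bytes:
    """Encrypt and authenticate under `key` (toy construction, not production crypto)."""
    nonce = secrets.token_bytes(16)
    ct = bytes(a ^ b for a, b in zip(plaintext, keystream(key, nonce, len(plaintext))))
    tag = hmac.new(key, nonce + ct, hashlib.sha256).digest()
    return nonce + ct + tag


def open_sealed(key: bytes, blob: bytes) -> bytes:
    """Verify the tag, then decrypt. Raises ValueError if the key is wrong."""
    nonce, ct, tag = blob[:16], blob[16:-32], blob[-32:]
    expected = hmac.new(key, nonce + ct, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("wrong key or tampered data")
    return bytes(a ^ b for a, b in zip(ct, keystream(key, nonce, len(ct))))


def encrypt_with_escrow(document: bytes, user_key: bytes, escrow_key: bytes) -> dict:
    """Encrypt once, but wrap the file key for two different key holders."""
    file_key = secrets.token_bytes(32)
    return {
        "body": seal(file_key, document),
        "wrapped_for_user": seal(user_key, file_key),
        "wrapped_for_escrow": seal(escrow_key, file_key),  # the "golden key" slot
    }


def decrypt(record: dict, key: bytes, slot: str) -> bytes:
    """Unwrap the file key from the named slot, then decrypt the body."""
    file_key = open_sealed(key, record[slot])
    return open_sealed(file_key, record["body"])


user_key = secrets.token_bytes(32)
escrow_key = secrets.token_bytes(32)  # hypothetical key held by the company
record = encrypt_with_escrow(b"my diary", user_key, escrow_key)

owner_view = decrypt(record, user_key, "wrapped_for_user")
# Anyone who obtains escrow_key, by warrant, theft, or insider access,
# recovers exactly the same plaintext as the owner.
escrow_view = decrypt(record, escrow_key, "wrapped_for_escrow")
```

The code cannot tell a court-approved use of `escrow_key` from a stolen one. Whatever separates the two has to live outside the cryptography, which is the point Coyne is making.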

Or Bruce Schneier

• Ah, but that's the thing: You can't build a backdoor that only the good guys can walk through. Encryption protects against cybercriminals, industrial competitors, the Chinese secret police and the FBI. You're either vulnerable to eavesdropping by any of them, or you're secure from eavesdropping from all of them.

• Backdoor access built for the good guys is routinely used by the bad guys. In 2005, some unknown group surreptitiously used the lawful-intercept capabilities built into the Greek cell phone system. The same thing happened in Italy in 2006.

• In 2010, Chinese hackers subverted an intercept system Google had put into Gmail to comply with US government surveillance requests. Back doors in our cell phone system are currently being exploited by the FBI and unknown others.

• This doesn't stop the FBI and Justice Department from pumping up the fear. Attorney General Eric Holder threatened us with kidnappers and sexual predators.

• The former head of the FBI's criminal investigative division went even further, conjuring up kidnappers who are also sexual predators. And, of course, terrorists.

https://www.schneier.com/blog/archives/2014/10/iphone_encrypti_1.html

Backdoors in the wild

• Stingrays

• Gemalto key theft

• Google back door

• The Athens Affair and “lawful intercepts”

• http://arstechnica.com/tech-policy/2013/09/meet-the-machines-that-steal-your-phones-data/

• http://spectrum.ieee.org/telecom/security/the-athens-affair

The key to understanding the hack at the heart of the Athens affair is knowing how the Ericsson AXE allows lawful intercepts—what are popularly called “wiretaps.” Though the details differ from country to country, in Greece, as in most places, the process starts when a law enforcement official goes to a court and obtains a warrant, which is then presented to the phone company whose customer is to be tapped.

It took guile and some serious programming chops to manipulate the lawful call-intercept functions in Vodafone's mobile switching centers. The intruders' task was particularly complicated because they needed to install and operate the wiretapping software on the exchanges without being detected by Vodafone or Ericsson system administrators. From time to time the intruders needed access to the rogue software to update the lists of monitored numbers and shadow phones. These activities had to be kept off all logs, while the software itself had to be invisible to the system administrators conducting routine maintenance activities. The intruders achieved all these objectives.

Gemalto: https://theintercept.com/2015/02/19/great-sim-heist/

http://www.theverge.com/2015/2/24/8101585/the-nsas-sim-heist-could-have-given-it-the-power-to-plant-spyware-on

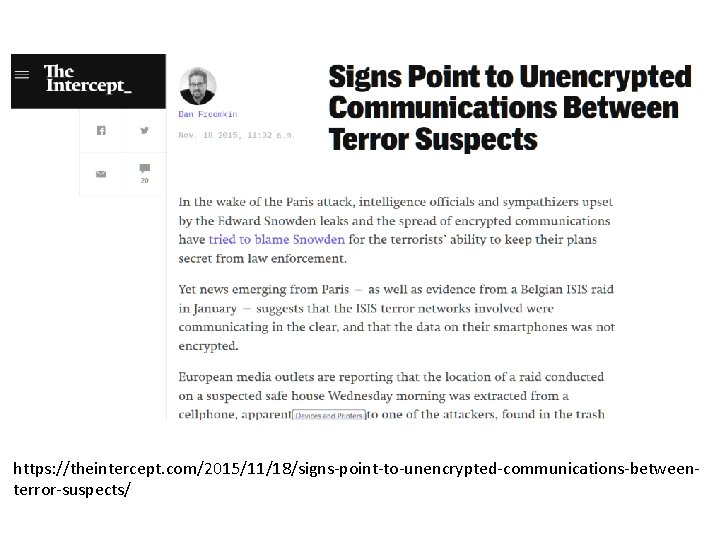

https://theintercept.com/2015/11/18/signs-point-to-unencrypted-communications-between-terror-suspects/

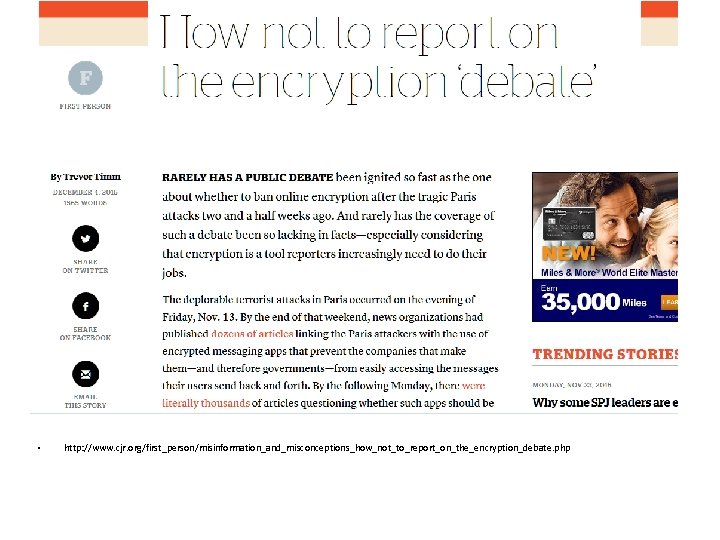

• http://www.cjr.org/first_person/misinformation_and_misconceptions_how_not_to_report_on_the_encryption_debate.php

Then there’s the question of why journalists always frame the encryption debate as a perilous balance between privacy and security. It’s the government’s favorite dichotomy to trot out right before it proposes to violate your privacy a little more. But more importantly, it’s not accurate. While end-to-end encryption certainly gives us an extra layer of privacy protection at a time when our rights are constantly being eroded, this is actually a security vs. security debate. Encryption’s main purpose is to protect us from hackers of all sorts—the kind responsible for the disastrous data breaches at Target, JP Morgan Chase, or the US government itself. The government is complaining that companies cannot unlock certain communications because only the sender and the receiver hold the key—the company itself does not. When tech companies do not have a way to access all their customers’ data at once, neither do hackers.

“Weakening security with the aim of advancing security simply does not make sense.”

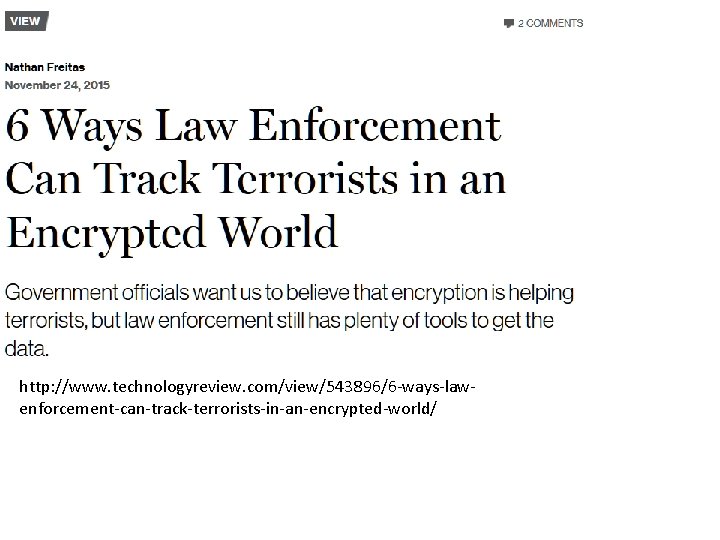

http://www.technologyreview.com/view/543896/6-ways-law-enforcement-can-track-terrorists-in-an-encrypted-world/

1) If someone is carrying a mobile phone, their every movement, phone call, and use of Internet access is being tracked and logged by the mobile service provider. Accessing that data often does not require a warrant, just a phone number and a contact at the phone company.

2) Messaging apps like WhatsApp and Telegram require users to register their accounts with a working telephone number. Use of the app is tied to this number, and to all the phone numbers of the people they are communicating with. See number one for what you can do with a list of phone numbers.

3) The kind of encryption implemented in mainstream apps today is not automatic. Even in well-regarded implementations by WhatsApp and Apple, knowing when and how encryption is active and verified is unclear. It is likely possible to disable access to or reduce the strength of encryption on a per-user basis, without the user knowing.

4) Even an end-to-end encrypted chat can be monitored if the app supports group chat or syncing conversations between multiple devices. If you can compel the app service provider to add a new device to an account or participant into a group without notifying existing users, then you are in.

5) Full storage encryption of smartphones is not on by default for Android, and only in effect on iOS when the device is powered off. Most of these apps are not password-protected on the device itself. Get access to a phone with the screen unlocked, or crack the screen lock app itself, and you are in. Compel the owner of a fingerprint-locked device to unlock it with their thumbprint, and you are in. Trick the user into installing (or force their app store to do so) a keystroke-logging keyboard or a hidden surveillance app and you are in.

6) Most cloud data is only encrypted to protect it from outside attackers, and not from the service provider themselves. Some services say, “We encrypt data at rest in the cloud,” but they mean they do so with an encryption key that they hold, not one the user holds. Rather than backdoor the messages in real time, just get access to a cloud backup of all the messages, contacts, calendars, photos, location data, and more that users often unwittingly store there.
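Point 4 above can be made concrete with a small simulation. This is a sketch under simplifying assumptions, not a model of any real messenger. The `Directory` class is a hypothetical provider-run service that tells a sender's client which devices belong to an account. Each device is represented by a single symmetric key, standing in for the public/private key pair a real system would use (where the directory would hold only public keys). The XOR cipher is a toy chosen so the example needs only the standard library.

```python
import hashlib
import hmac
import secrets


def xor_stream(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Toy stream cipher (HMAC-SHA256 in counter mode). Same call encrypts and decrypts."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hmac.new(key, nonce + counter.to_bytes(4, "big"), hashlib.sha256).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


class Directory:
    """Hypothetical provider-run directory mapping each account to its device keys."""

    def __init__(self) -> None:
        self.devices: dict[str, dict[str, bytes]] = {}

    def add_device(self, account: str, device_id: str, key: bytes) -> None:
        self.devices.setdefault(account, {})[device_id] = key

    def devices_for(self, account: str) -> dict[str, bytes]:
        return dict(self.devices.get(account, {}))


def send(directory: Directory, account: str, message: bytes) -> dict:
    """Sender's client: encrypt one copy per device the directory lists."""
    envelopes = {}
    for device_id, key in directory.devices_for(account).items():
        nonce = secrets.token_bytes(16)
        envelopes[device_id] = (nonce, xor_stream(key, nonce, message))
    return envelopes


def receive(key: bytes, envelope: tuple) -> bytes:
    """Receiving device: decrypt its own copy."""
    nonce, ciphertext = envelope
    return xor_stream(key, nonce, ciphertext)


directory = Directory()
phone_key = secrets.token_bytes(32)
directory.add_device("bob", "bob-phone", phone_key)

# The provider, under compulsion, silently registers an extra device for Bob.
ghost_key = secrets.token_bytes(32)
directory.add_device("bob", "ghost", ghost_key)

envelopes = send(directory, "bob", b"meet at noon")
bob_copy = receive(phone_key, envelopes["bob-phone"])
ghost_copy = receive(ghost_key, envelopes["ghost"])  # same plaintext, no cipher broken
```

Nothing in `send` is weakened. The sender's client encrypts for whatever device list it is given, so the secrecy of the chat depends on users being able to see and verify that list.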

Dianne Feinstein says:

• I have concern about a Playstation which my grandchildren might use and a predator getting on the other end, talking to them, and it’s all encrypted.

• Marcy Wheeler says: “Someone needs to explain to DiFi that her grandkids are probably at greater risk from predators hacking Sony to get personal information about them to then use that to abduct or whatever them. Sony’s the perfect example of how security hawks like Feinstein need to choose: either grandkids face risks because Sony doesn’t encrypt its systems, or they do because it does. The former risk is likely the much greater risk.”

• Comey had previously argued that tech companies could somehow come up with a “solution” that allowed for government access but didn’t weaken security. Tech experts called this a “magic pony” and mocked him for his naivete.

• Now, Comey said at a Senate Judiciary Committee hearing Wednesday morning, extensive conversations with tech companies have persuaded him that “it’s not a technical issue.”

• “It is a business model question,” he said. “The question we have to ask is: Should they change their business model?”

• Comey’s clear implication was that companies that think it’s a good business model to offer end-to-end encryption — or, like Apple, allow users to fully encrypt their iPhones — should roll those
