Crowdsourcing research data, UMBC ebiquity, 2010-03-01

Overview
• How did we get into this
• Crowdsourcing defined
• Amazon Mechanical Turk
• CrowdFlower
• Two examples
  – Annotating tweets for named entities
  – Evaluating word clouds
• Conclusions

Motivation
• Needed to train a named entity recognizer for Twitter statuses
  – Need human judgments on thousands of tweets to identify named entities of type PER, ORG, or LOC
  – Example: anand [PER] drove to boston [LOC] to see the red sox [ORG] play
• NAACL 2010 Workshop: Creating Speech and Language Data With Amazon's Mechanical Turk
  – Shared task papers: what can you do with $100?
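To make the annotation target concrete, here is a minimal sketch of how the example tweet's labeled entities might be turned into per-token training labels. The slides do not say which tagging scheme was used; the BIO scheme, the span format, and the function name `spans_to_bio` are all assumptions for illustration.

```python
from typing import List, Tuple

# Hypothetical span format: (start_token, end_token_exclusive, entity_type)
Span = Tuple[int, int, str]


def spans_to_bio(tokens: List[str], spans: List[Span]) -> List[str]:
    """Convert entity spans into one BIO tag per token (assumed scheme)."""
    tags = ["O"] * len(tokens)
    for start, end, etype in spans:
        tags[start] = f"B-{etype}"       # first token of the entity
        for i in range(start + 1, end):
            tags[i] = f"I-{etype}"       # continuation tokens
    return tags


tokens = "anand drove to boston to see the red sox play".split()
spans = [(0, 1, "PER"), (3, 4, "LOC"), (7, 9, "ORG")]
for tok, tag in zip(tokens, spans_to_bio(tokens, spans)):
    print(f"{tok}\t{tag}")
```

Multi-token names such as "red sox" are why a scheme like BIO is handy: it keeps "red" and "sox" inside one ORG entity.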

Crowdsourcing
• Crowdsourcing = Crowd + Outsourcing
• Tasks normally performed by employees are outsourced via an open call to a large community
• Some examples
  – Netflix Prize
  – InnoCentive: solve R&D challenges
  – DARPA Network Challenge

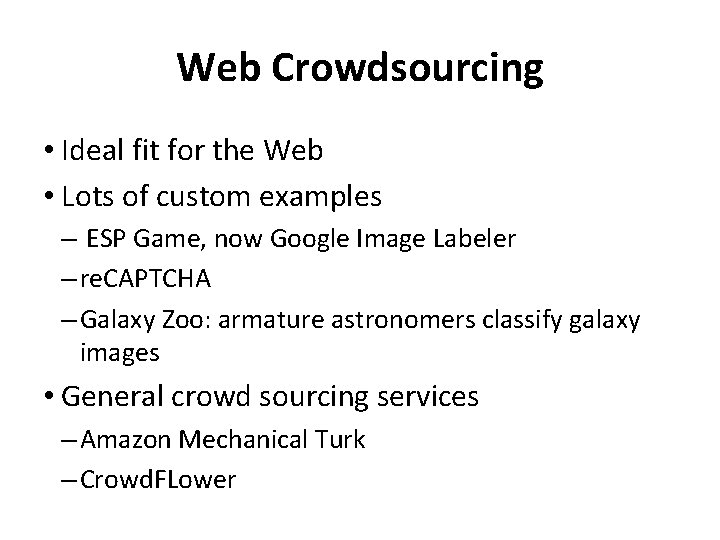

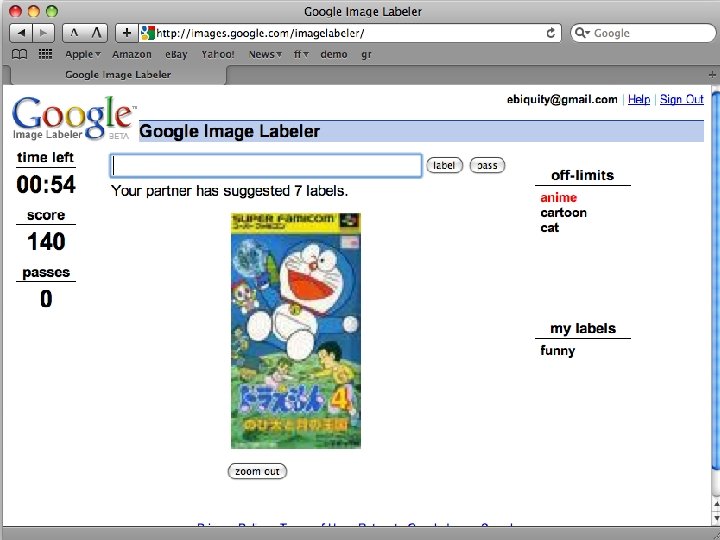

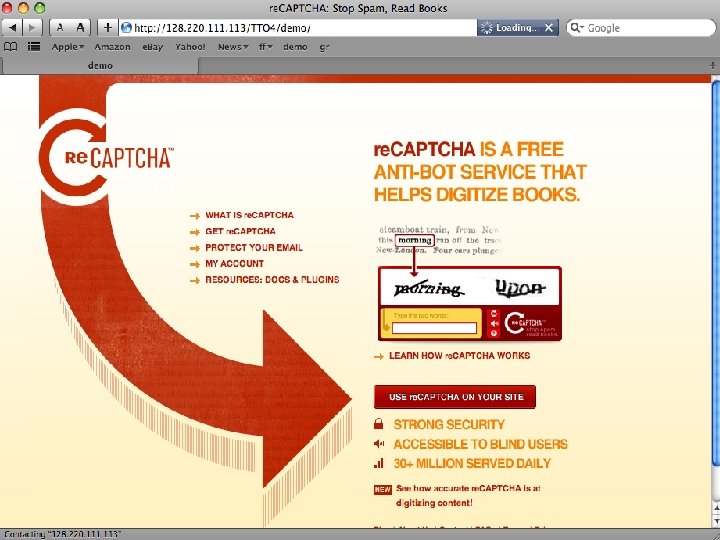

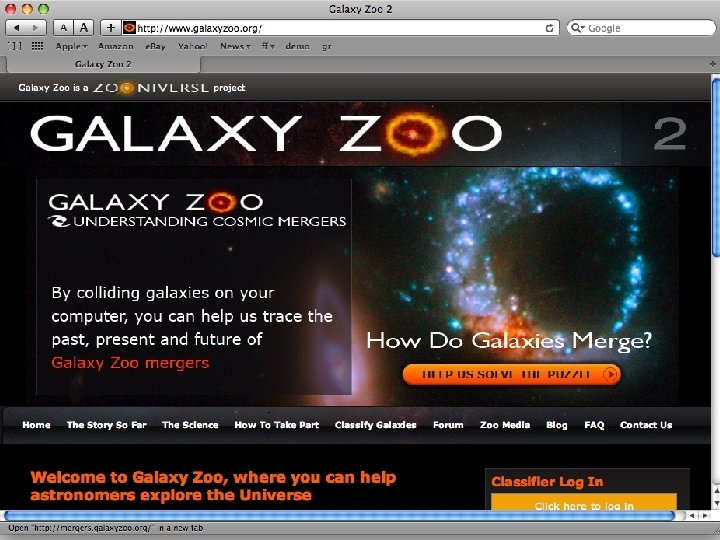

Web Crowdsourcing
• Ideal fit for the Web
• Lots of custom examples
  – ESP Game, now Google Image Labeler
  – reCAPTCHA
  – Galaxy Zoo: amateur astronomers classify galaxy images
• General crowdsourcing services
  – Amazon Mechanical Turk
  – CrowdFlower

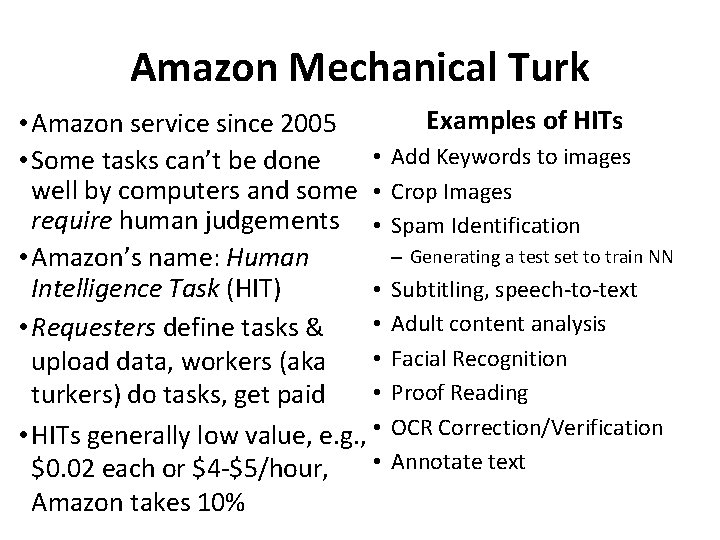

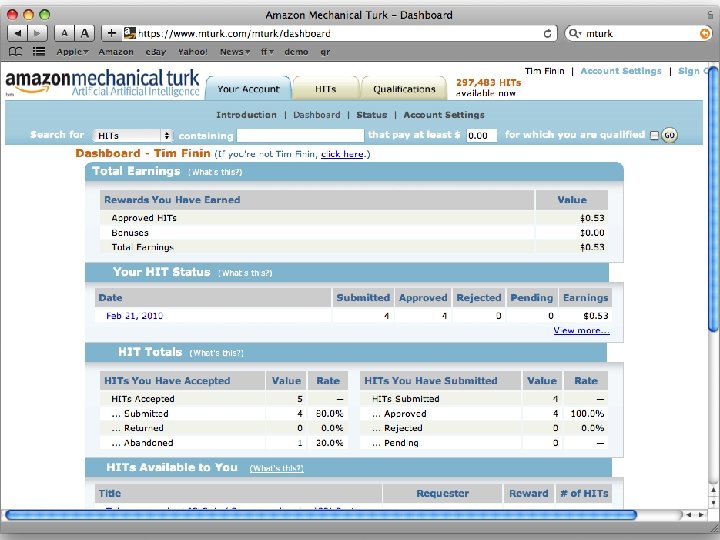

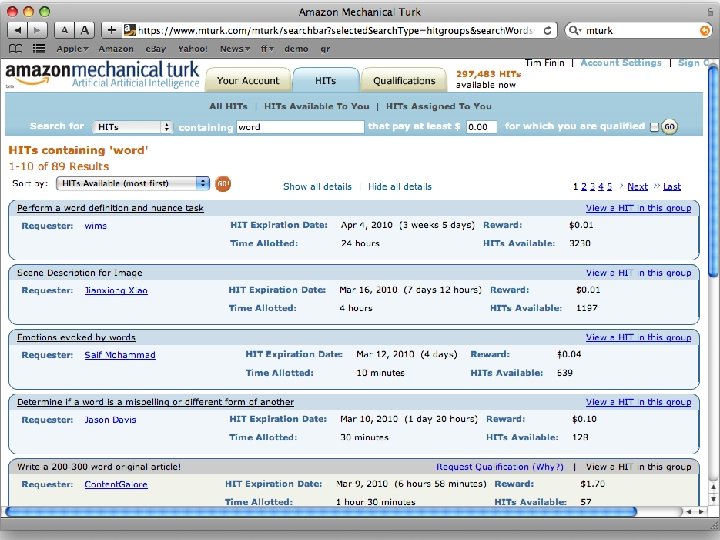

Amazon Mechanical Turk
• Amazon service since 2005
• Some tasks can't be done well by computers, and some require human judgments
• Amazon's name: Human Intelligence Task (HIT)
• Requesters define tasks and upload data; workers (aka turkers) do the tasks and get paid
• HITs are generally low value, e.g., $0.02 each or $4-$5/hour; Amazon takes 10%

Examples of HITs
• Add keywords to images
• Crop images
• Spam identification: generating a test set to train an NN
• Subtitling, speech-to-text
• Adult content analysis
• Facial recognition
• Proofreading
• OCR correction/verification
• Annotate text
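The pricing figures above make it easy to budget a job before posting it. Below is a rough sketch, assuming one tweet per HIT, several workers (assignments) per HIT, and Amazon's 10% charged on top of the worker reward; the function name and parameters are hypothetical, not part of any AMT API.

```python
from decimal import Decimal, ROUND_HALF_UP


def hit_batch_cost(num_items: int, assignments_per_item: int,
                   reward: Decimal, fee_rate: Decimal = Decimal("0.10")) -> Decimal:
    """Estimate what a requester pays for a batch of HITs.

    Assumes every item is one HIT, each HIT is done by
    `assignments_per_item` workers, and the fee is added on top of the reward.
    """
    assignments = num_items * assignments_per_item
    total = assignments * reward * (1 + fee_rate)
    # Round to whole cents for display
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# 1,000 tweets, 3 workers each, $0.02 per assignment
print(hit_batch_cost(1000, 3, Decimal("0.02")))  # 66.00
```

`Decimal` is used instead of floats so cent amounts do not pick up rounding noise.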

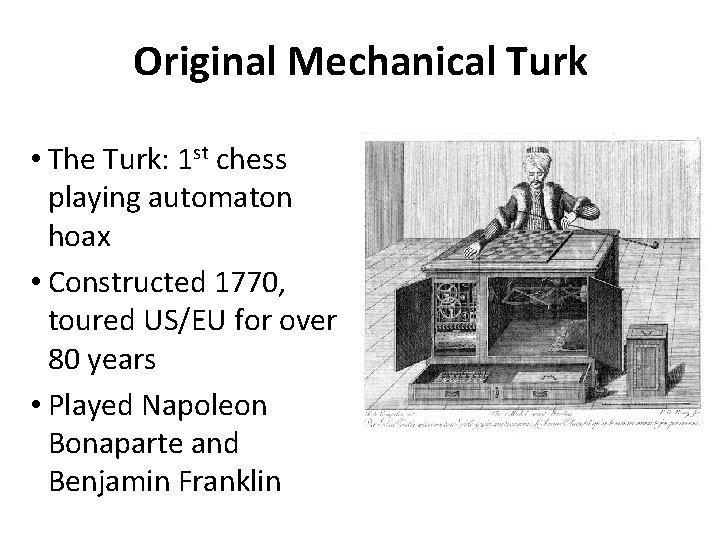

Original Mechanical Turk
• The Turk: first chess-playing automaton hoax
• Constructed in 1770, toured the US/EU for over 80 years
• Played Napoleon Bonaparte and Benjamin Franklin

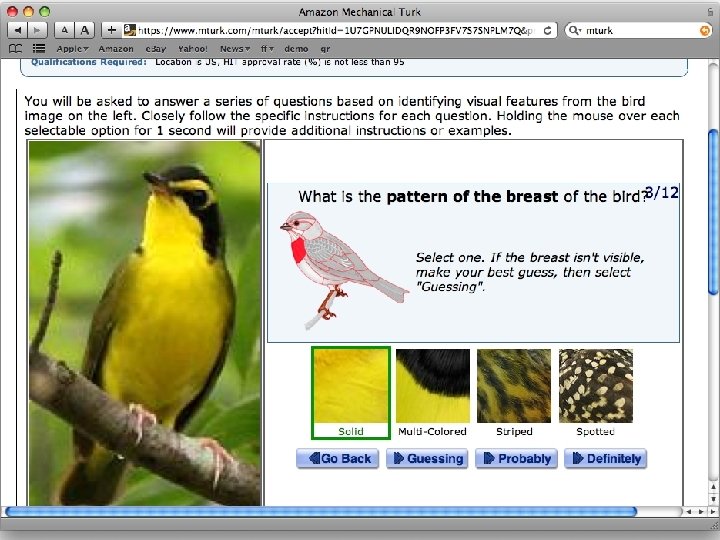

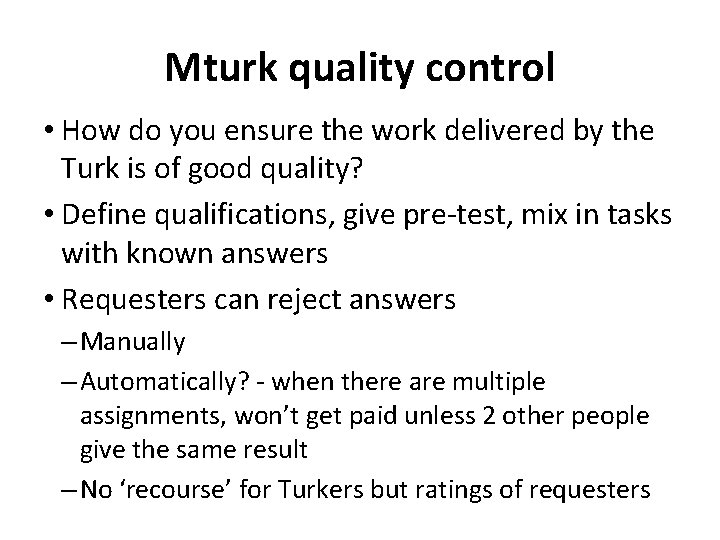

MTurk quality control
• How do you ensure that the work turkers deliver is of good quality?
• Define qualifications, give a pre-test, mix in tasks with known answers
• Requesters can reject answers
  – Manually
  – Automatically, e.g., when there are multiple assignments, a worker doesn't get paid unless 2 other people give the same result
  – Turkers have no recourse other than rating requesters
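The automatic rule above ("paid only if 2 other people give the same result") can be sketched as a simple agreement check over the answers to one HIT. This is a hypothetical requester-side script, not an AMT feature; the worker IDs and the `auto_review` name are made up for illustration.

```python
from collections import Counter
from typing import Dict


def auto_review(answers: Dict[str, str], min_agreeing_others: int = 2) -> Dict[str, bool]:
    """Approve a worker's answer only if at least `min_agreeing_others`
    other workers gave the same answer for this HIT."""
    counts = Counter(answers.values())
    # counts[answer] includes the worker themself, hence the -1
    return {worker: counts[answer] - 1 >= min_agreeing_others
            for worker, answer in answers.items()}


answers = {"w1": "LOC", "w2": "LOC", "w3": "LOC", "w4": "ORG"}
print(auto_review(answers))
# {'w1': True, 'w2': True, 'w3': True, 'w4': False}
```

With exactly three assignments per HIT, this rule demands unanimity, which is strict for genuinely ambiguous tweets; that is one reason to pair it with gold questions.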

AMT demo: annotating NEs

CrowdFlower
• Commercial effort by Dolores Labs
• Sits on top of AMT
• Real-time results
• Choose among multiple worker channels, such as AMT and Samasource
• Quality control measures

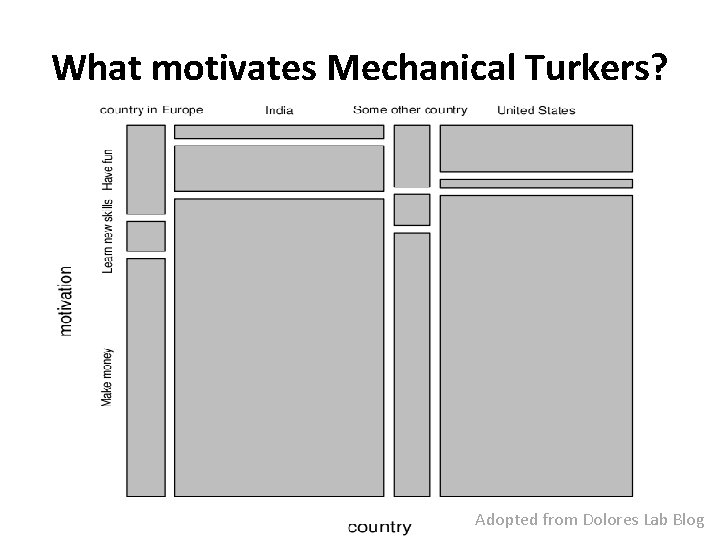

What motivates Mechanical Turkers? (Adapted from the Dolores Labs blog)

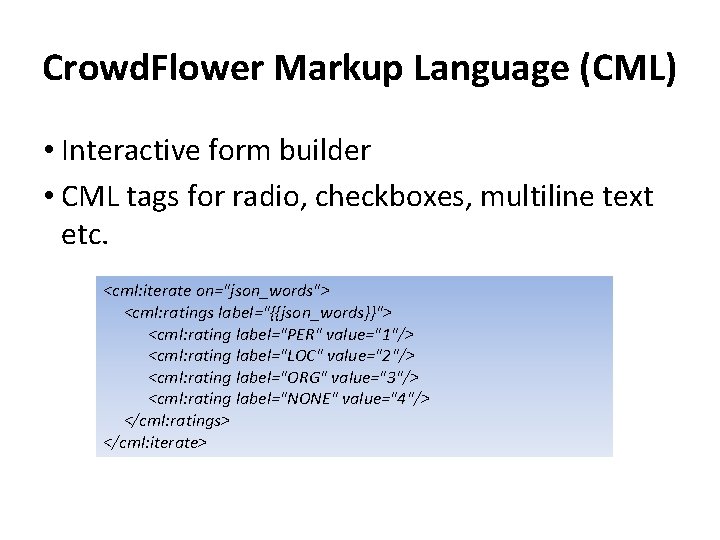

CrowdFlower Markup Language (CML)
• Interactive form builder
• CML tags for radio buttons, checkboxes, multiline text, etc.

    <cml:iterate on="json_words">
      <cml:ratings label="{{json_words}}">
        <cml:rating label="PER" value="1"/>
        <cml:rating label="LOC" value="2"/>
        <cml:rating label="ORG" value="3"/>
        <cml:rating label="NONE" value="4"/>
      </cml:ratings>
    </cml:iterate>
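The CML form iterates over a data field called `json_words`, but the slides do not show how that field is filled in. A plausible sketch, assuming the job data is uploaded as a CSV with one row per tweet and a `json_words` column holding the tweet's tokens as a JSON array; whether `cml:iterate` expects exactly this format is an assumption, and `tweets_to_rows` is a hypothetical helper.

```python
import csv
import io
import json
from typing import List


def tweets_to_rows(tweets: List[str]) -> str:
    """Build CSV text with one row per tweet and a `json_words` column
    containing the tweet's whitespace-separated tokens as a JSON array."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["tweet", "json_words"])
    for tweet in tweets:
        # Naive whitespace tokenization; real tweets may need a better tokenizer
        writer.writerow([tweet, json.dumps(tweet.split())])
    return buf.getvalue()


print(tweets_to_rows(["anand drove to boston"]))
```

The `csv` module takes care of quoting the embedded commas and double quotes in the JSON array, so the file round-trips cleanly.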

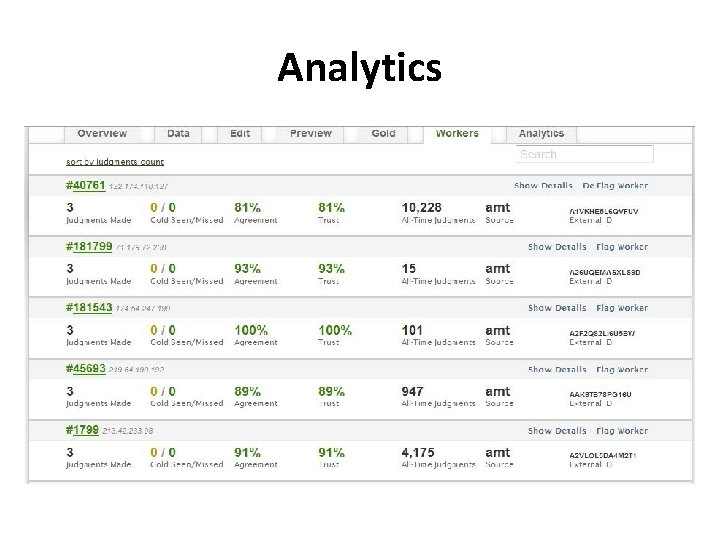

Analytics

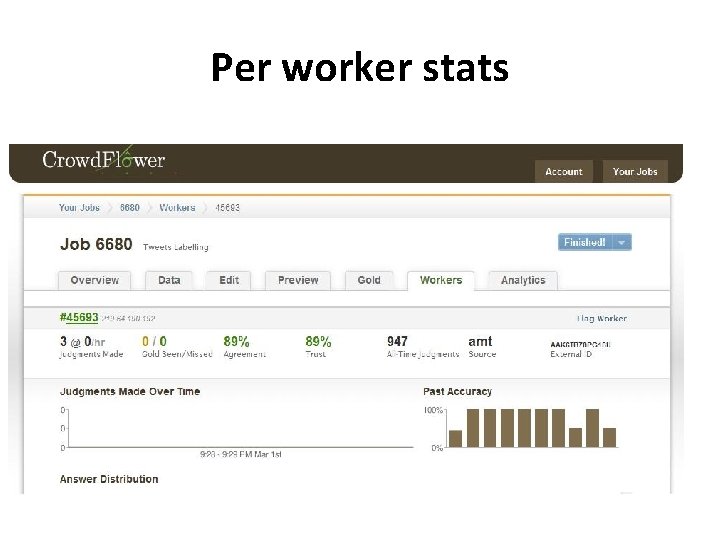

Per-worker stats

Gold Standards
• Ensure quality and prevent scammers from submitting bad results
• Interface to monitor gold stats
• If a worker makes a mistake on a known result, the worker is notified and shown the mistake
• Error rates without a gold standard are more than twice as high as with one
• Helps in 2 ways
  – Improves worker accuracy
  – Allows CrowdFlower to determine who is giving accurate answers
Adapted from http://crowdflower.com/docs
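The second benefit, finding out who gives accurate answers, boils down to scoring each worker only on the units whose answers are known. A minimal sketch, assuming judgments arrive as (worker, unit, answer) triples; the data layout, the 0.7 cutoff, and the function names are illustrative assumptions, not CrowdFlower's actual method.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical layout: (worker_id, unit_id, answer)
Judgment = Tuple[str, str, str]


def gold_accuracy(judgments: List[Judgment], gold: Dict[str, str]) -> Dict[str, float]:
    """Per-worker accuracy computed only on units with a known (gold) answer."""
    correct: Dict[str, int] = defaultdict(int)
    seen: Dict[str, int] = defaultdict(int)
    for worker, unit, answer in judgments:
        if unit in gold:  # non-gold units don't count toward accuracy
            seen[worker] += 1
            correct[worker] += answer == gold[unit]
    return {w: correct[w] / seen[w] for w in seen}


def trusted_workers(acc: Dict[str, float], threshold: float = 0.7) -> List[str]:
    """Workers whose gold accuracy meets the (assumed) threshold."""
    return sorted(w for w, a in acc.items() if a >= threshold)


gold = {"u1": "PER", "u2": "LOC"}
judgments = [("w1", "u1", "PER"), ("w1", "u2", "LOC"), ("w1", "u3", "ORG"),
             ("w2", "u1", "ORG"), ("w2", "u2", "LOC")]
acc = gold_accuracy(judgments, gold)
print(acc)                   # {'w1': 1.0, 'w2': 0.5}
print(trusted_workers(acc))  # ['w1']
```

Workers whose accuracy falls below the cutoff could then be excluded or have their non-gold answers down-weighted.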

Conclusion
• Ask us after spring break how it went
• You might find AMT useful for collecting annotations or judgments for your research
• $25-$50 can go a long way

AMT demo: Better word cloud?
