Crowdsourcing Blog Track Top News Judgments at TREC

Richard McCreadie, Craig Macdonald, Iadh Ounis
{richardm, craigm, ounis}@dcs.gla.ac.uk

Outline
• Relevance Assessment and TREC (4 slides)
• Crowdsourcing Interface (4 slides)
• Research Questions and Results (6 slides)
• Conclusions and Best Practices (1 slide)

Relevance Assessment and TREC (Slides 4-7/20)

Relevance Assessment
• Relevance assessments are vital when evaluating information retrieval (IR) systems at TREC
  • Is this document relevant to the information need expressed in the user query?
• Created by human assessors
  • Specialist paid assessors, e.g. TREC assessors
    • Typically, only one assessor per judgment (for cost reasons)
  • Researchers themselves

Limitations
• Creating relevance assessments is costly
  • $$$
  • Time
  • Equipment (lab, computers, electricity, etc.)
• May not scale well
  • How many people are available to make assessments?
  • Can the work be done in parallel?

Task
• Could we do relevance assessment using crowdsourcing at TREC?
• TREC 2010 Blog Track
  • Top news stories identification subtask
• System task: “What are the newsworthy stories on day d for a category c?”
• Crowdsourcing task: “Was the story ‘Sony Announces NGP’ an important story on the 1st February for the Science/Technology category?”

Crowdsourcing Interface (Slides 9-12/20)

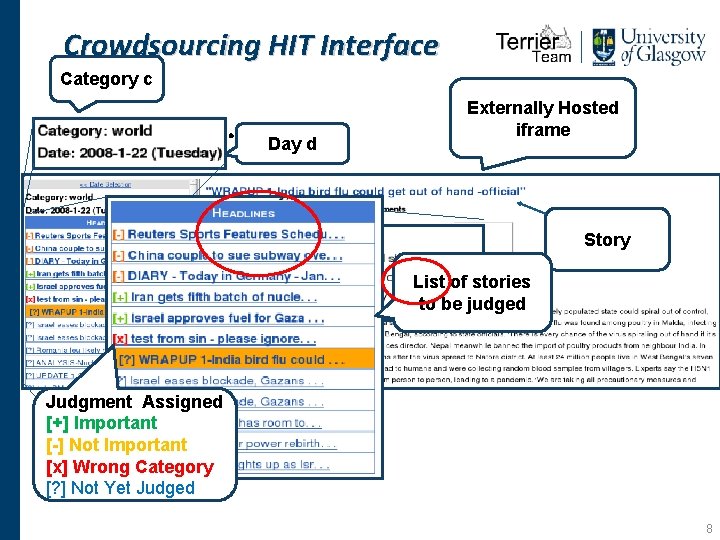

Crowdsourcing HIT Interface
• Labelled parts of the interface:
  • Instructions
  • Category c and day d
  • Externally hosted iframe containing the list of stories to be judged
  • Judgment assigned to each story
  • Comment box
  • Submit button
• Judgment labels: [+] Important, [-] Not Important, [x] Wrong Category, [?] Not Yet Judged

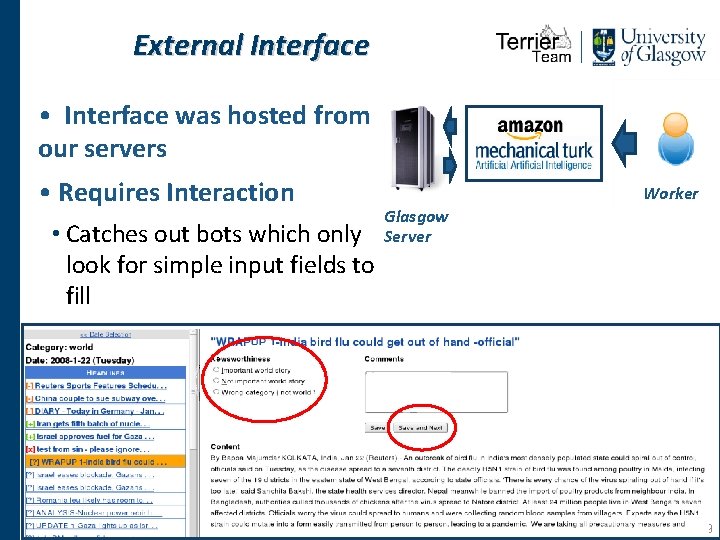

External Interface
• Interface was hosted from our servers
• Requires interaction
  • Catches out bots which only look for simple input fields to fill
• Diagram labels: Worker, Glasgow Server

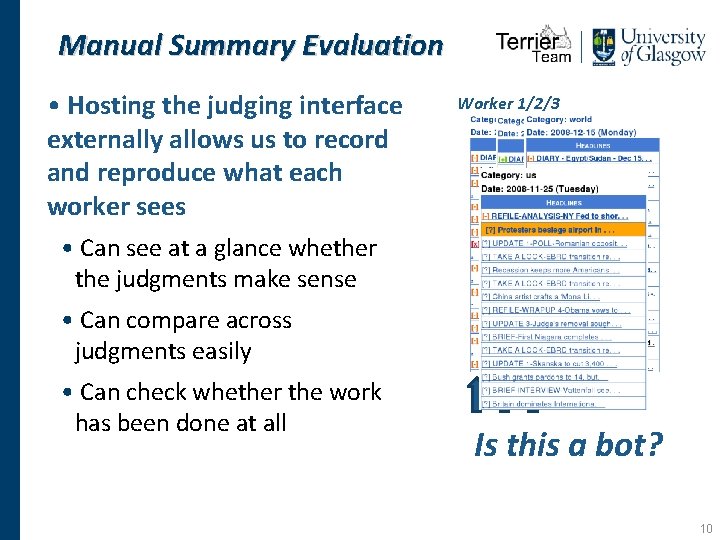

Manual Summary Evaluation
• Hosting the judging interface externally allows us to record and reproduce what each worker sees
  • Can see at a glance whether the judgments make sense
  • Can compare across judgments easily
  • Can check whether the work has been done at all
• Figure labels: Worker 1/2/3; “Is this a bot?”

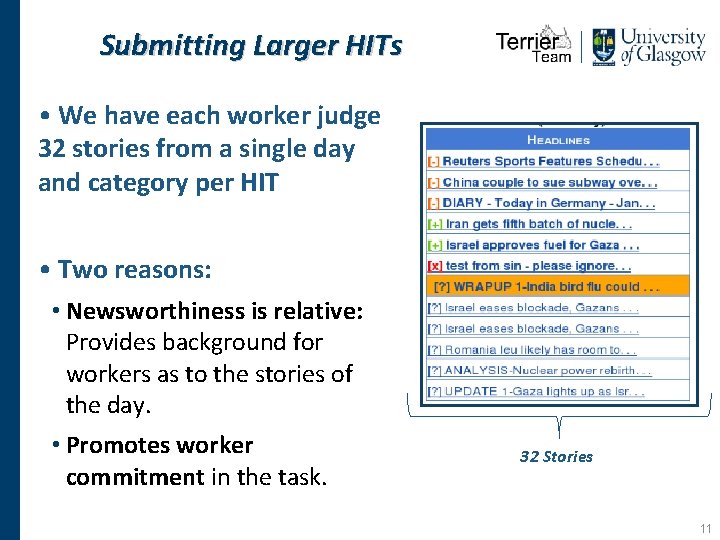

Submitting Larger HITs
• We have each worker judge 32 stories from a single day and category per HIT
• Two reasons:
  • Newsworthiness is relative: seeing the other stories of the day gives workers the background they need.
  • Promotes worker commitment to the task.
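The grouping rule on this slide (32 stories per HIT, all from one day and one category) can be sketched as follows. This is a minimal illustration only: the function name `build_hits`, the tuple layout, and the day and category labels are assumptions, not part of the authors' system.

```python
from collections import defaultdict

STORIES_PER_HIT = 32  # from the slide: 32 stories per HIT


def build_hits(stories, size=STORIES_PER_HIT):
    """Group (day, category, story_id) tuples into HITs of at most `size`
    stories, never mixing days or categories inside one HIT.
    (Hypothetical helper; the slides do not describe the real pipeline.)"""
    groups = defaultdict(list)
    for day, category, story_id in stories:
        groups[(day, category)].append(story_id)

    hits = []
    for (day, category), ids in sorted(groups.items()):
        # Slice each day/category group into consecutive chunks.
        for start in range(0, len(ids), size):
            hits.append({
                "day": day,
                "category": category,
                "stories": ids[start:start + size],
            })
    return hits
```

With 40 stories in one day/category and 32 in another, this yields three HITs of 32, 8 and 32 stories, so a worker always sees stories that compete for importance on the same day.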

Experimental Results (Slides 14-20/20)

Research Questions
1. Was crowdsourcing Blog Track judgments fast and cheap? (Was crowdsourcing a good idea?)
2. Are there high levels of agreement between assessors?
3. Is having redundant judgments even necessary? (Can we do better?)
4. If we use worker agreement to infer multiple grades of importance, how would this affect the final ranking of systems at TREC?
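For question 4, one simple way to turn worker agreement into graded importance is to count how many of the workers marked a story as important. The slides do not say how the grades were actually derived, so the mapping below is an assumption made purely for illustration.

```python
IMPORTANT = "+"  # the [+] Important label from the HIT interface


def importance_grade(labels):
    """Return a grade equal to the number of workers who chose Important.
    With three workers per story this gives grades 0 to 3.
    (Assumed mapping; not confirmed by the slides.)"""
    return sum(1 for label in labels if label == IMPORTANT)
```

Under this assumption a story judged `["+", "+", "-"]` receives grade 2, while a story nobody marked as important receives grade 0.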

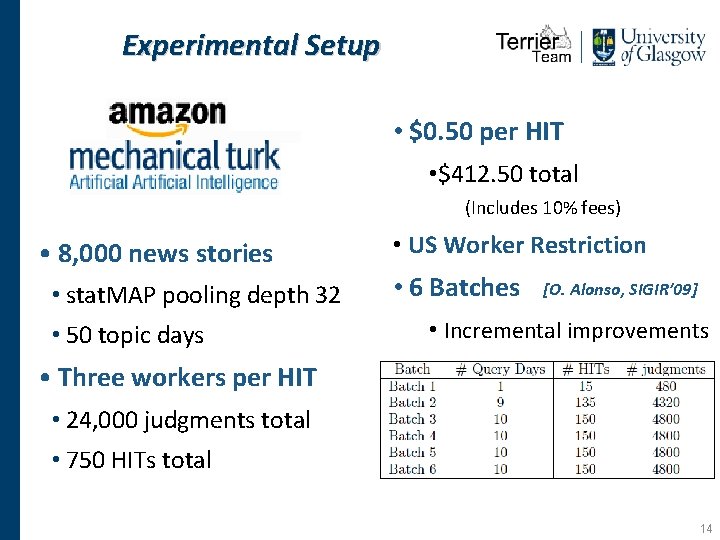

Experimental Setup
• $0.50 per HIT
  • $412.50 total (includes 10% fees)
• 8,000 news stories
  • statMAP pooling depth 32
  • 50 topic days
• US worker restriction
• 6 batches [O. Alonso, SIGIR’09]
  • Incremental improvements
• Three workers per HIT
  • 24,000 judgments total
  • 750 HITs total
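The totals on this slide follow from the per-HIT figures, and the arithmetic can be checked with a short sketch. All inputs come from the slide; the function and key names are illustrative, and amounts are kept in cents to avoid floating-point rounding.

```python
# Figures taken from the Experimental Setup slide.
STORIES = 8_000
STORIES_PER_HIT = 32
WORKERS_PER_HIT = 3
REWARD_CENTS = 50   # $0.50 per HIT
FEE_PERCENT = 10    # 10% fees


def experiment_cost():
    """Return HIT count, judgment count and costs in cents."""
    hits = STORIES // STORIES_PER_HIT * WORKERS_PER_HIT
    judgments = STORIES * WORKERS_PER_HIT
    reward_cents = hits * REWARD_CENTS
    total_cents = reward_cents + reward_cents * FEE_PERCENT // 100
    return {
        "hits": hits,
        "judgments": judgments,
        "reward_cents": reward_cents,
        "total_cents": total_cents,
    }
```

This reproduces 750 HITs, 24,000 judgments and $412.50 in total. Rewards alone come to $375.00, or about $0.0156 per judgment, which matches the per-judgment figure quoted later in the deck; that figure therefore appears to exclude fees, and including them gives roughly $0.0172 per judgment.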

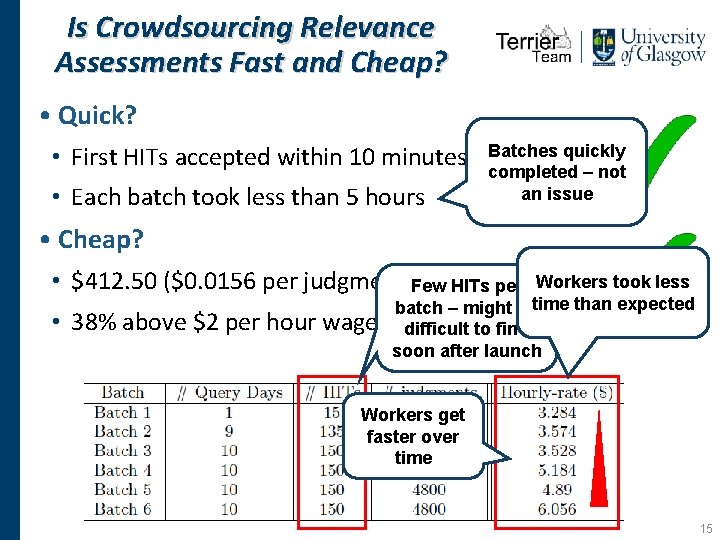

Is Crowdsourcing Relevance Assessments Fast and Cheap?
• Quick?
  • First HITs accepted within 10 minutes of launch
  • Each batch took less than 5 hours
• Cheap?
  • $412.50 ($0.0156 per judgment)
  • 38% above $2 per hour wage
• Figure annotations:
  • Batches completed quickly: not an issue
  • Few HITs per batch: might be difficult to find soon after launch
  • Workers took less time than expected on average
  • Workers get faster over time
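The hourly-wage bullet depends on how long workers spent on each HIT. A minimal sketch of that calculation is given below, assuming the time per HIT is known in seconds and reading the bullet as "share of HITs that paid more than $2 per hour"; the slide is ambiguous on this point, and both function names are hypothetical.

```python
def hourly_wage(reward_usd, seconds):
    """Effective hourly wage for one HIT completed in `seconds`."""
    return reward_usd * 3600 / seconds


def share_above(durations, reward_usd, threshold_usd):
    """Fraction of HITs whose effective hourly wage exceeds the threshold.
    `durations` holds per-HIT completion times in seconds (assumed input)."""
    wages = [hourly_wage(reward_usd, s) for s in durations]
    return sum(1 for w in wages if w > threshold_usd) / len(wages)
```

At $0.50 per HIT, a worker needs to finish in under 15 minutes to earn more than $2 per hour, so workers getting faster over time raises their effective wage.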

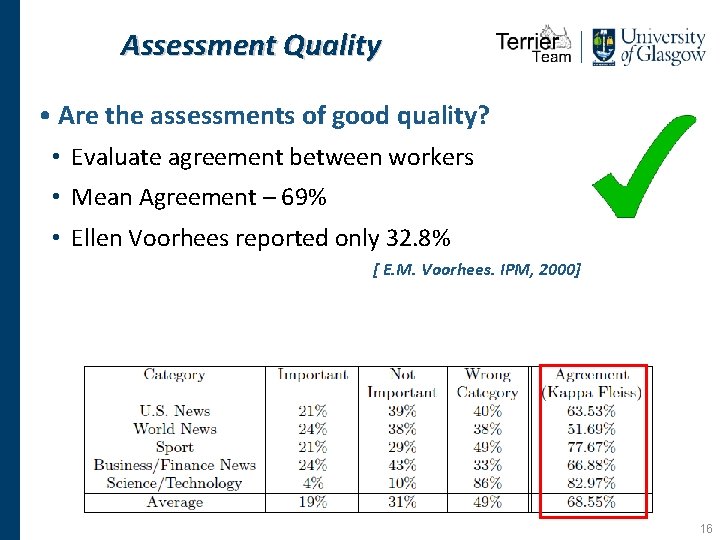

Assessment Quality
• Are the assessments of good quality?
  • Evaluate agreement between workers
  • Mean agreement: 69%
  • Ellen Voorhees reported only 32.8% [E. M. Voorhees. IPM, 2000]
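The slide does not state which agreement measure produced the 69% figure. One common choice is mean pairwise percent agreement over the three workers who judged each story, sketched here as an assumption rather than as the authors' method.

```python
from itertools import combinations


def pairwise_agreement(labels):
    """Fraction of worker pairs that gave the same label to one story."""
    pairs = list(combinations(labels, 2))
    return sum(1 for a, b in pairs if a == b) / len(pairs)


def mean_agreement(judgments):
    """Average pairwise agreement over all stories.
    `judgments` maps a story id to the list of labels it received."""
    scores = [pairwise_agreement(labels) for labels in judgments.values()]
    return sum(scores) / len(scores)
```

For three workers there are three pairs per story, so a unanimous story scores 1, a two-against-one split scores 1/3, and three different labels score 0.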

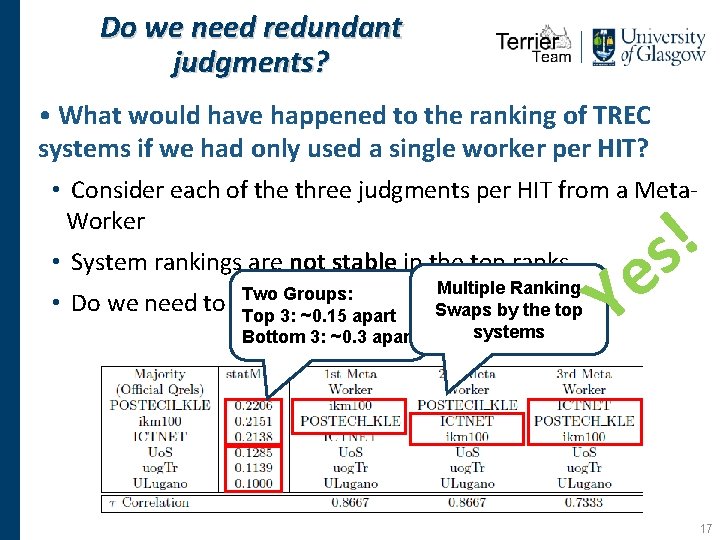

Do we need redundant judgments?
• What would have happened to the ranking of TREC systems if we had only used a single worker per HIT?
  • Consider each of the three judgments per HIT as coming from a MetaWorker
• System rankings are not stable in the top ranks
• Do we need to average over three workers? Yes!
• Figure annotations:
  • Multiple ranking swaps by the top systems
  • Two groups: top 3 systems ~0.15 apart, bottom 3 systems ~0.3 apart
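The comparison on this slide can be sketched in two steps: combine the three judgments per story, then count how many system pairs change order between two rankings. The slide speaks of averaging over three workers without giving details, so the majority vote below is a swapped-in simplification, and both helper names are hypothetical.

```python
from collections import Counter
from itertools import combinations


def majority_label(labels):
    """Return the label chosen by a strict majority of workers,
    or None when no label has more than half of the votes."""
    label, count = Counter(labels).most_common(1)[0]
    return label if count > len(labels) / 2 else None


def count_swaps(ranking_a, ranking_b):
    """Number of system pairs ordered differently in the two rankings.
    Both rankings must list the same systems, best first."""
    pos_b = {system: i for i, system in enumerate(ranking_b)}
    return sum(
        1 for x, y in combinations(ranking_a, 2) if pos_b[x] > pos_b[y]
    )
```

Ranking the systems once per MetaWorker and once with the combined labels, then calling `count_swaps` on each pair of rankings, shows how much the ordering depends on which single worker was used.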

Conclusions and Best Practices
• Crowdsourcing top stories relevance assessments can be done successfully at TREC
  • ...But we need at least three assessors for each story
• Best Practices
  • Don’t be afraid to use larger HITs
  • If you have an existing interface, integrate it with MTurk
  • Gold judgments are not the only validation method
  • Re-cost your HITs as necessary
Questions?
