Cross-modal Hashing Through Ranking Subspace Learning, Kai Li

Cross-modal Hashing Through Ranking Subspace Learning
Kai Li, Guojun Qi, Jun Ye, Kien A. Hua
Department of Computer Science, University of Central Florida
ICME 2016
Presented by Kai Li

Motivation and Background
- The amount of multimedia data has exploded in the information age.
- A topic or event can be described by data from multiple sources.
- Exploring semantic correlations among multimodal data is therefore meaningful.

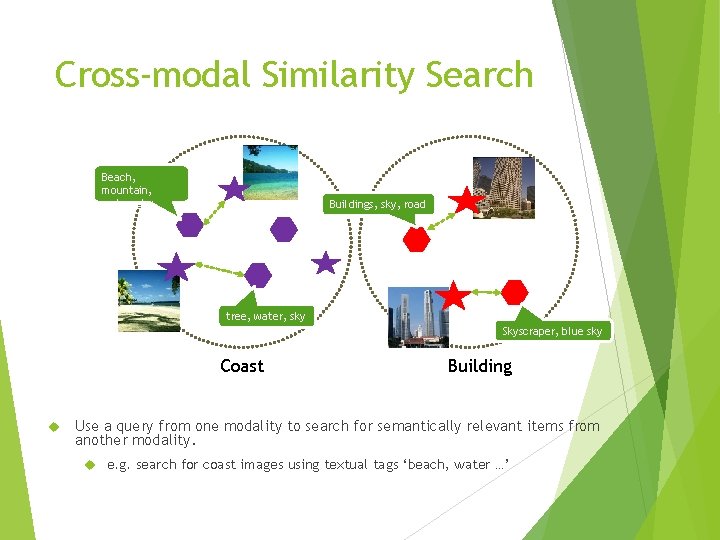

Cross-modal Similarity Search
(Figure: example images labeled Coast and Building, with tags such as 'Beach, mountain, water, sky, trees', 'tree, water, sky …', 'Buildings, sky, road …', and 'Skyscraper, blue sky …'.)
Use a query from one modality to search for semantically relevant items in another modality, e.g., search for coast images using the textual tags 'beach, water …'.

Cross-modal Search Challenges
- 3.8 trillion images by 2010!
- Huge databases of high-dimensional data, with high computational costs …

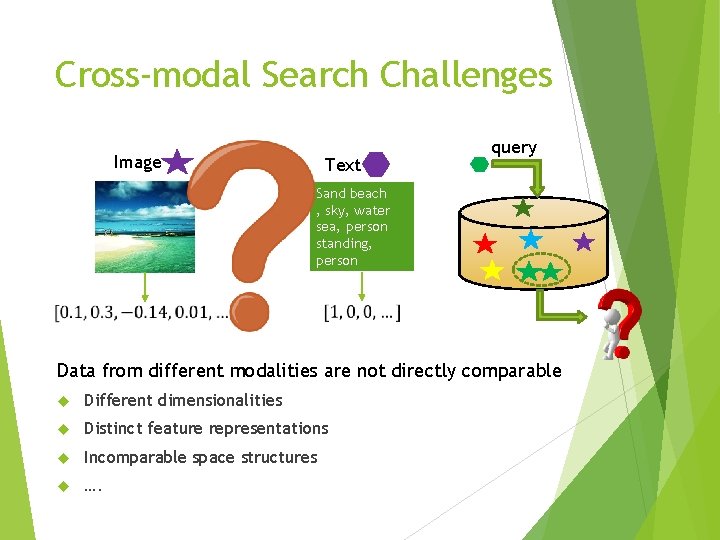

Cross-modal Search Challenges
(Figure: an image and a text query, with tags such as 'sand beach, sky, water, sea, person standing, person walking'.)
Data from different modalities are not directly comparable:
- different dimensionalities
- distinct feature representations
- incomparable space structures
- …

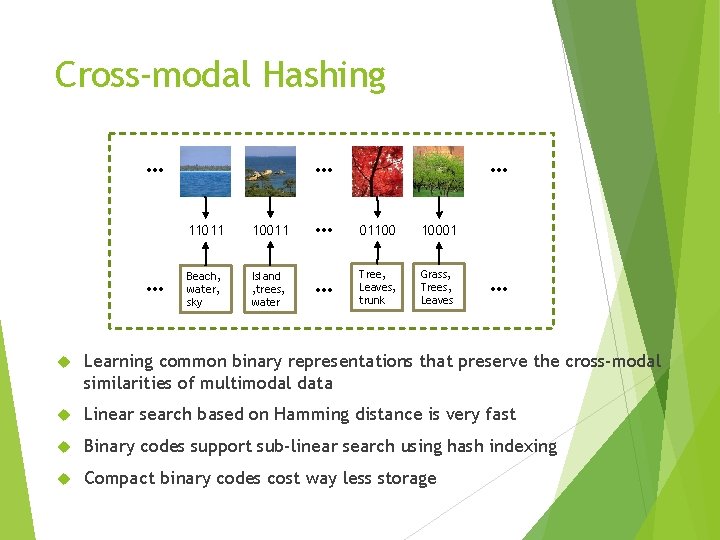

Cross-modal Hashing
(Figure: images and tag sets such as 'Beach, water, sky', 'Island, trees, water', 'Tree, Leaves, trunk', and 'Grass, Trees, Leaves' mapped to common binary codes such as 11011, 10011, 01100, and 10001.)
- Learn common binary representations that preserve the cross-modal similarities of multimodal data
- Linear search based on Hamming distance is very fast
- Binary codes support sub-linear search using hash indexing
- Compact binary codes require far less storage
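The speed claim comes from packing each code into machine words: the Hamming distance between two codes is one XOR followed by a bit count. A minimal Python sketch, with the codes written as plain integers; the helper names `hamming` and `rank_by_hamming` are illustrative, not from the slides.

```python
def hamming(a, b):
    """Number of bit positions in which two integer-packed binary codes differ."""
    return bin(a ^ b).count("1")  # XOR marks the differing bits; count them


def rank_by_hamming(query, database):
    """Linear scan: database indices sorted by Hamming distance to the query.

    sorted() is stable, so items at equal distance keep their database order.
    """
    return sorted(range(len(database)), key=lambda i: hamming(query, database[i]))


# The example codes from the slide, written as 5-bit integers.
db = [0b11011, 0b10011, 0b01100, 0b10001]
print(rank_by_hamming(0b11011, db))  # → [0, 1, 3, 2]
```

Production systems store codes in fixed-width words and use a hardware popcount instruction; the sub-linear hash-table lookup mentioned on the slide is not shown here.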

Existing Cross-modal Hashing
A significant amount of research has emerged recently:
- CMSSH (Bronstein et al., 2010): Cross-modal Similarity Sensitive Hashing
- CVH (Kumar et al., 2011): Cross-view Hashing
- CRH (Zhen et al., 2012): Co-regularized Hashing
- IMH (Song et al., 2013): Inter-media Hashing
- LSSH (Zhou et al., 2014): Latent Semantic Sparse Hashing
- SCM (Zhang et al., 2014): Semantic Correlation Maximization
- CMFH (Ding et al., 2014): Collective Matrix Factorization Hashing
- and more …

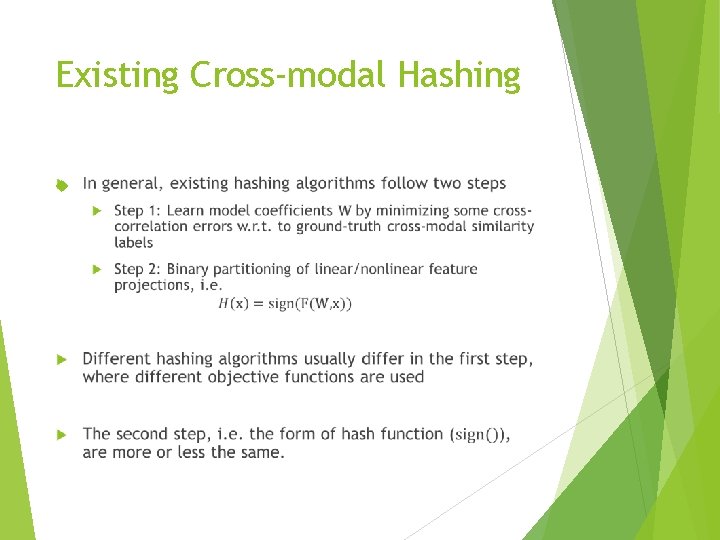

Existing Cross-modal Hashing

Motivation and Contribution

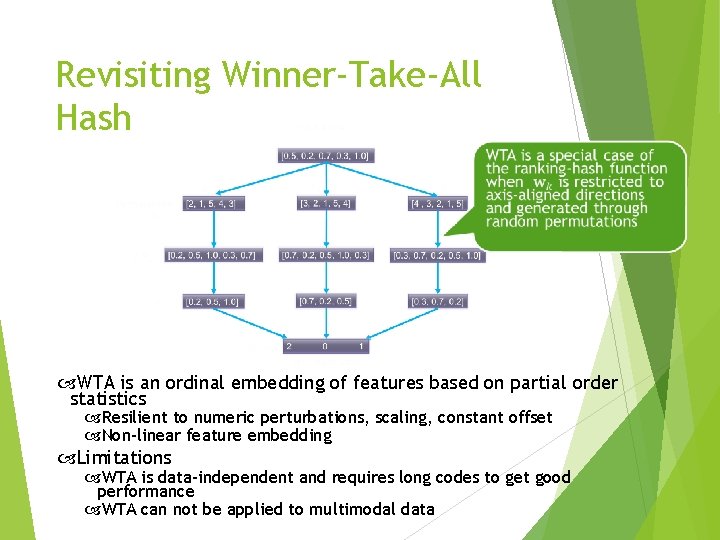

Revisiting Winner-Take-All Hashing
WTA is an ordinal embedding of features based on partial order statistics:
- resilient to numeric perturbations, scaling, and constant offsets
- a non-linear feature embedding
Limitations
- WTA is data-independent and requires long codes to achieve good performance
- WTA cannot be applied directly to multimodal data
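To make "partial order statistics" concrete, here is a minimal NumPy sketch of the standard WTA construction; the function name, seed, and sizes are illustrative. Each hash value records only which of K permuted entries is largest, and the permutations are drawn at random rather than learned, which is the data independence listed as a limitation.

```python
import numpy as np


def wta_hash(x, perms, K):
    """Winner-Take-All hash of a feature vector x.

    For each random permutation p, look at the first K permuted entries of x
    and record which of them is largest; the code is the list of winners.
    """
    return np.array([int(np.argmax(x[p[:K]])) for p in perms])


rng = np.random.default_rng(0)
d, L, K = 8, 4, 3  # feature dimension, number of hash functions, window size
perms = [rng.permutation(d) for _ in range(L)]  # random, not learned from data

x = rng.normal(size=d)
code = wta_hash(x, perms, K)  # L integers, each in [0, K)

# Only the ordering of entries matters, so a positive scaling plus a
# constant offset leaves the code unchanged.
print(np.array_equal(code, wta_hash(3.0 * x + 5.0, perms, K)))
```

Each hash value needs only about log2(K) bits, but because the windows are random, many of them are needed before the code captures the data well.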

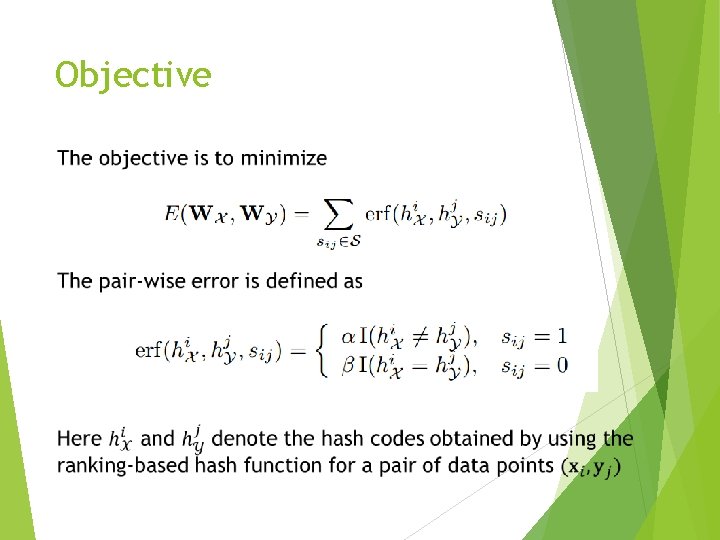

Objective

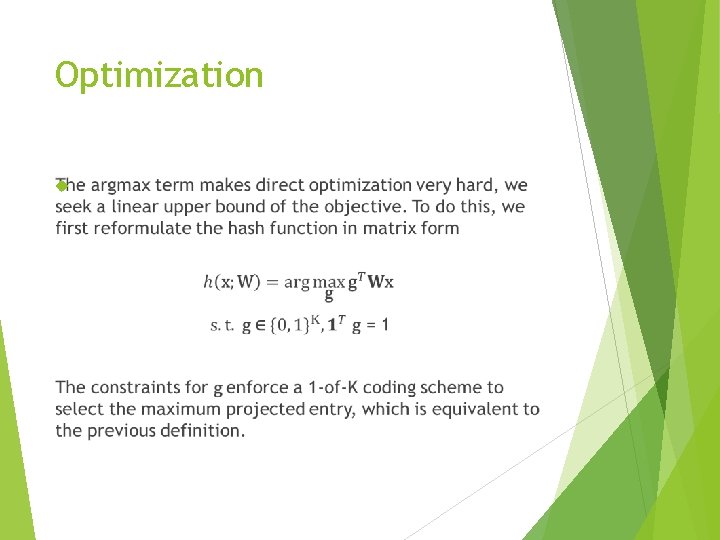

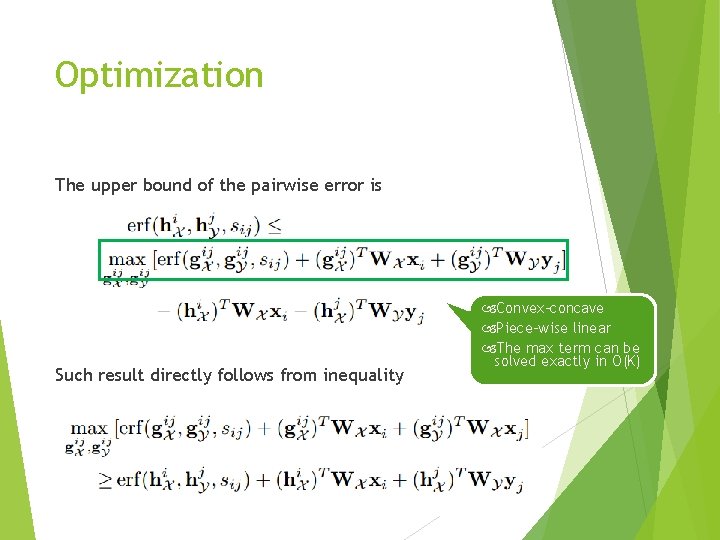

Optimization

Optimization
The pairwise error is upper-bounded by [equation on slide]; this result follows directly from the inequality [equation on slide]. The bound is:
- convex-concave
- piecewise linear
The max term can be solved exactly in O(K).
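As a hedged illustration only (not the paper's exact formulation), the sketch below assumes one ranking-based hash function per modality, h(x) = argmax_k (W_x x)_k over a K-dimensional subspace, a 0-1 pairwise loss, and a structured-prediction bound of the kind used in [Norouzi et al., 2011]: with a = W_x x and b = W_y y, loss(h(x), h(y)) <= max_{i,j}[loss(i, j) + a_i + b_j] - max_i a_i - max_j b_j. All function names are hypothetical. It shows how the max term can be found in O(K) instead of scanning all K² pairs.

```python
import numpy as np


def top2(v):
    """Indices of the largest and second-largest entries of v (len(v) >= 2), in O(K)."""
    i1 = int(np.argmax(v))
    masked = np.asarray(v, dtype=float).copy()
    masked[i1] = -np.inf
    return i1, int(np.argmax(masked))


def pair_loss(i, j, similar):
    """Assumed 0-1 loss: similar pairs should share a code, dissimilar pairs should not."""
    return float(i != j) if similar else float(i == j)


def loss_augmented_argmax(a, b, similar):
    """argmax over (i, j) of pair_loss(i, j) + a[i] + b[j], in O(K)."""
    k = int(np.argmax(a + b))  # best pair with i == j
    ia1, ia2 = top2(a)
    ib1, ib2 = top2(b)
    if ia1 != ib1:  # best pair with i != j: the two maxima, if they differ
        i, j = ia1, ib1
    elif a[ia1] + b[ib2] >= a[ia2] + b[ib1]:  # maxima collide: use a runner-up
        i, j = ia1, ib2
    else:
        i, j = ia2, ib1
    same = pair_loss(k, k, similar) + a[k] + b[k]
    diff = pair_loss(i, j, similar) + a[i] + b[j]
    return (k, k) if same >= diff else (i, j)


def pairwise_bound(Wx, Wy, x, y, similar):
    """Upper bound on pair_loss(h(x), h(y)), where h takes the argmax of the K projections."""
    a, b = Wx @ x, Wy @ y
    i, j = loss_augmented_argmax(a, b, similar)
    return pair_loss(i, j, similar) + a[i] + b[j] - a.max() - b.max()
```

The first term is a maximum of functions linear in (W_x, W_y), and the other two are subtracted maxima of linear functions, so the bound is convex-concave and piecewise linear. Its subgradient with respect to W_x is nonzero only on rows i and h(x), which is what makes a perceptron-like update possible. With a plain 0-1 loss the bound becomes tight, and its subgradient can vanish, whenever the current codes are already wrong, so the loss actually used in the paper may differ.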

![Hash function learning convergence study](http://slidetodoc.com/presentation_image_h/11e3c10b329bd436e93e28eed38b80e4/image-14.jpg)

Hash function learning convergence study [Norouzi et al., 2011] [Bishop, 2006]

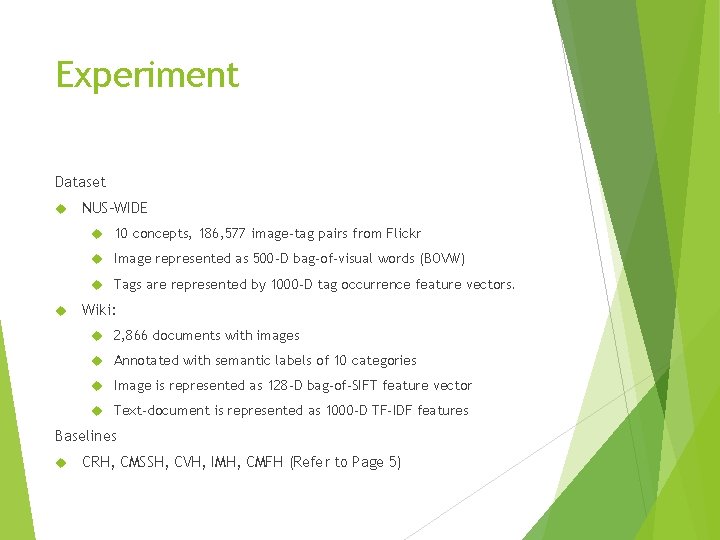

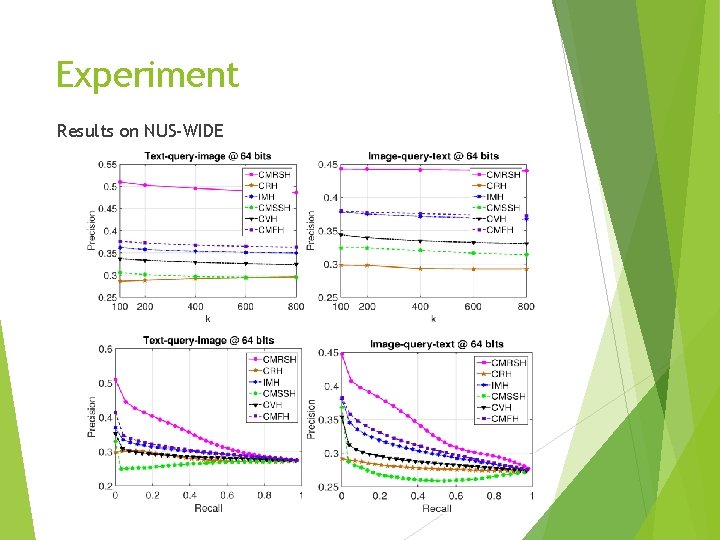

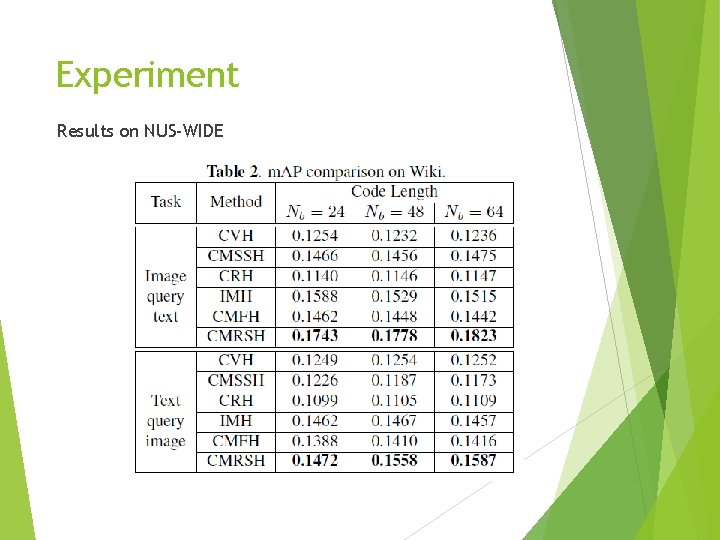

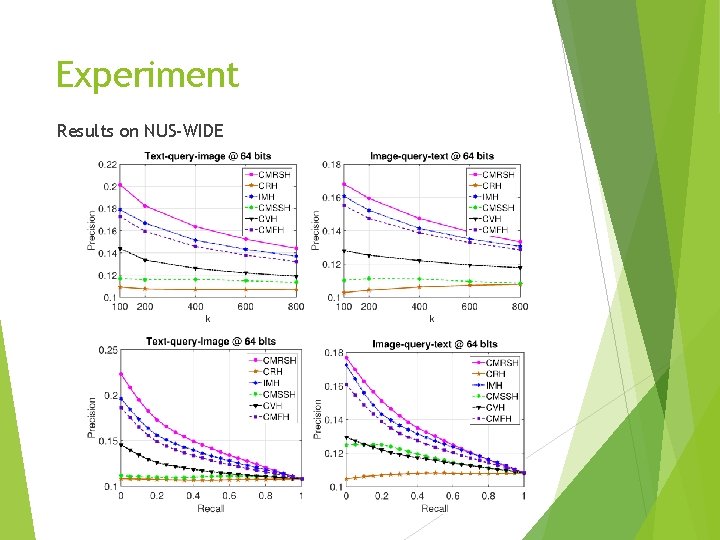

Experiment
Datasets
- NUS-WIDE: 186,577 image-tag pairs from Flickr covering 10 concepts. Images are represented as 500-D bag-of-visual-words (BOVW) vectors; tags are represented as 1000-D tag-occurrence feature vectors.
- Wiki: 2,866 documents with images, annotated with semantic labels from 10 categories. Images are represented as 128-D bag-of-SIFT feature vectors; text documents are represented as 1000-D TF-IDF features.
Baselines
CRH, CMSSH, CVH, IMH, CMFH (see Existing Cross-modal Hashing)

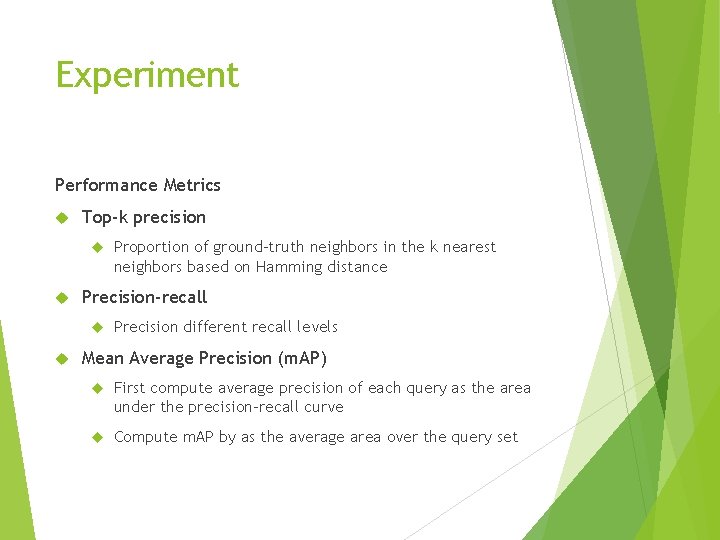

Experiment
Performance Metrics
- Top-k precision: the proportion of ground-truth neighbors among the k nearest neighbors by Hamming distance
- Precision-recall: precision at different recall levels
- Mean Average Precision (mAP): first compute the average precision (AP) of each query as the area under its precision-recall curve, then compute mAP as the mean AP over the query set
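The metrics can be sketched as follows, assuming codes stored as integer arrays, database items ranked by Hamming distance (the number of mismatched code positions), and AP computed as the mean of precision at the rank of each relevant item, a common discrete estimate of the area under the precision-recall curve. Function names and the toy data are hypothetical.

```python
import numpy as np


def precision_at_k(relevant_sorted, k):
    """Fraction of ground-truth neighbors among the top-k retrieved items."""
    return float(np.mean(relevant_sorted[:k]))


def average_precision(relevant_sorted):
    """Mean of precision@r over the ranks r at which relevant items appear."""
    rel = np.asarray(relevant_sorted, dtype=float)
    if rel.sum() == 0:
        return 0.0
    precision = np.cumsum(rel) / np.arange(1, len(rel) + 1)
    return float(np.sum(precision * rel) / rel.sum())


def mean_average_precision(query_codes, db_codes, relevance):
    """mAP over a query set; relevance[q, n] is True if item n is a neighbor of query q."""
    aps = []
    for q, code in enumerate(query_codes):
        dist = np.count_nonzero(db_codes != code, axis=1)  # Hamming distances
        order = np.argsort(dist, kind="stable")  # ties keep database order
        aps.append(average_precision(relevance[q, order]))
    return float(np.mean(aps))


query_codes = np.array([[1, 1, 0, 1]])
db_codes = np.array([[1, 1, 0, 1], [0, 0, 1, 0], [1, 0, 0, 1], [0, 1, 1, 1]])
relevance = np.array([[True, False, False, True]])
print(mean_average_precision(query_codes, db_codes, relevance))  # ≈ 0.833 (= 5/6)
```

Here the ranking is items 0, 2, 3, 1, so the relevant items land at ranks 1 and 3, giving AP = (1/1 + 2/3) / 2 = 5/6.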

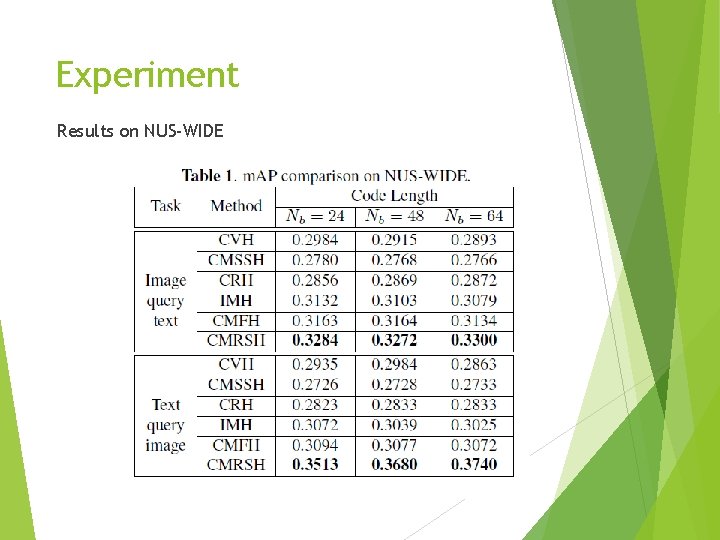

Experiment Results on NUS-WIDE

Conclusion and Future Work
Key Contributions
- The first cross-modal hashing scheme to exploit ranking-based hash functions
- An effective perceptron-like learning algorithm that solves the problem efficiently
- Superior cross-modal retrieval performance on real-world datasets
Future Work
- Extend to kernel subspace ranking
- Incorporate feature-learning stages and develop a deep ranking framework
