CPU Scheduling

- Basic Concepts
- Scheduling Criteria
- Scheduling Algorithms
- Thread Scheduling
- Multiple-Processor Scheduling
- Operating Systems Examples
- Algorithm Evaluation

Objectives

- To introduce CPU scheduling, which is the basis for multiprogrammed operating systems
- To describe various CPU-scheduling algorithms
- To discuss evaluation criteria for selecting a CPU-scheduling algorithm for a particular system
- To examine the scheduling algorithms of several operating systems

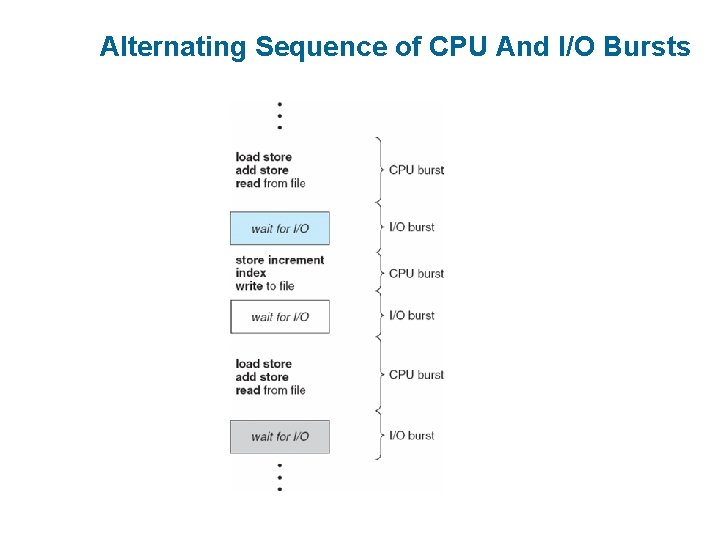

Basic Concepts

- Maximum CPU utilization is obtained with multiprogramming
- CPU–I/O burst cycle: process execution consists of a cycle of CPU execution and I/O wait
- CPU burst distribution

Alternating Sequence of CPU And I/O Bursts

Histogram of CPU-burst Times

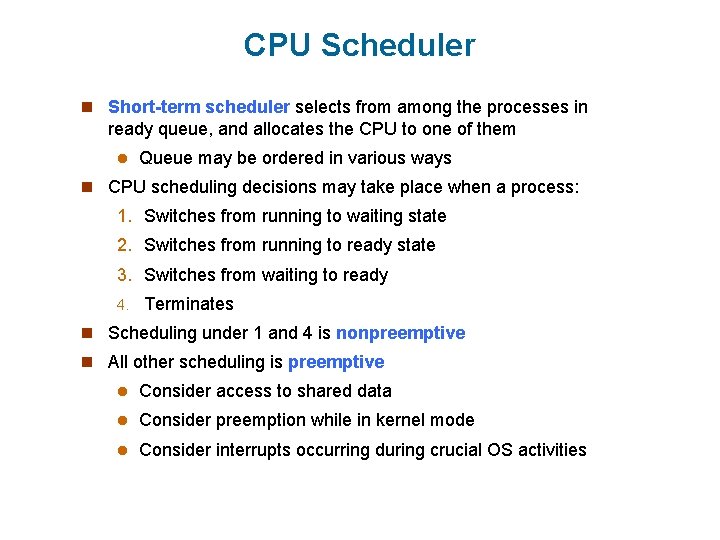

CPU Scheduler

- The short-term scheduler selects from among the processes in the ready queue and allocates the CPU to one of them
  - The queue may be ordered in various ways
- CPU scheduling decisions may take place when a process:
  1. Switches from running to waiting state
  2. Switches from running to ready state
  3. Switches from waiting to ready state
  4. Terminates
- Scheduling under 1 and 4 is nonpreemptive
- All other scheduling is preemptive
  - Consider access to shared data
  - Consider preemption while in kernel mode
  - Consider interrupts occurring during crucial OS activities

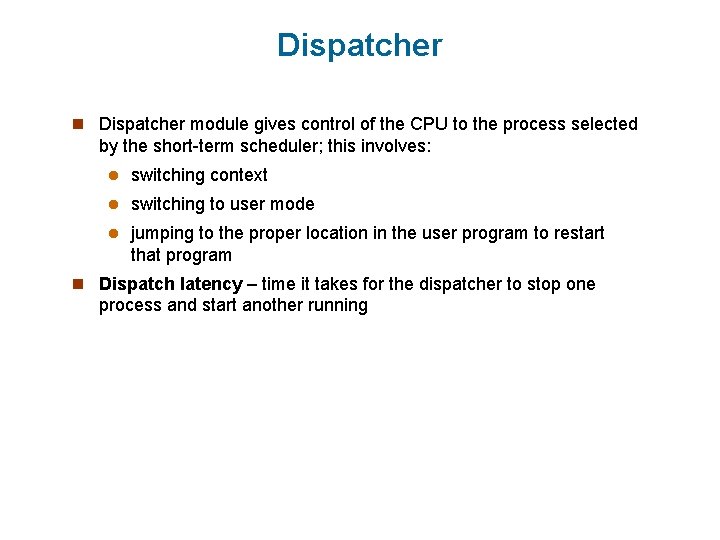

Dispatcher

- The dispatcher module gives control of the CPU to the process selected by the short-term scheduler; this involves:
  - switching context
  - switching to user mode
  - jumping to the proper location in the user program to restart that program
- Dispatch latency: the time it takes for the dispatcher to stop one process and start another running

Scheduling Criteria

- CPU utilization: keep the CPU as busy as possible
- Throughput: number of processes that complete their execution per time unit
- Turnaround time: amount of time to execute a particular process, from submission to completion
- Waiting time: amount of time a process has been waiting in the ready queue
- Response time: amount of time from when a request was submitted until the first response is produced, not until the output is complete (for time-sharing environments)

Scheduling Algorithm Optimization Criteria

- Max CPU utilization
- Max throughput
- Min turnaround time
- Min waiting time
- Min response time

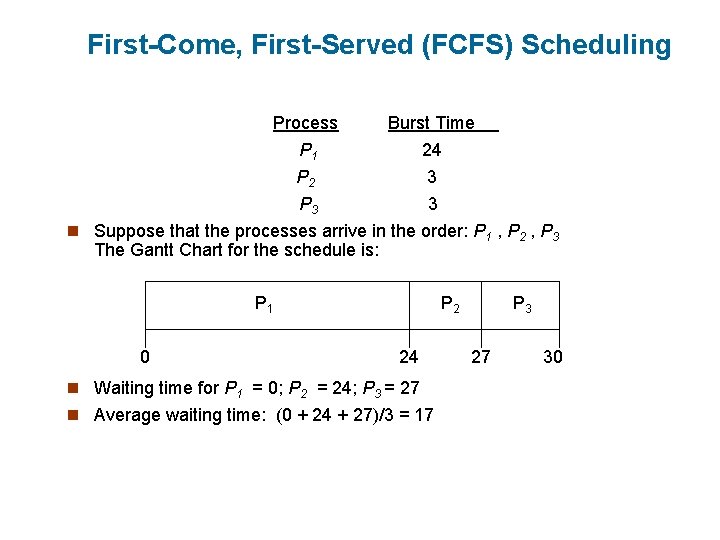

First-Come, First-Served (FCFS) Scheduling

| Process | Burst Time |
|---------|------------|
| P1 | 24 |
| P2 | 3 |
| P3 | 3 |

- Suppose that the processes arrive in the order P1, P2, P3. The Gantt chart for the schedule is:

| P1 | P2 | P3 |
|----|----|----|
| 0–24 | 24–27 | 27–30 |

- Waiting time for P1 = 0; P2 = 24; P3 = 27
- Average waiting time: (0 + 24 + 27)/3 = 17

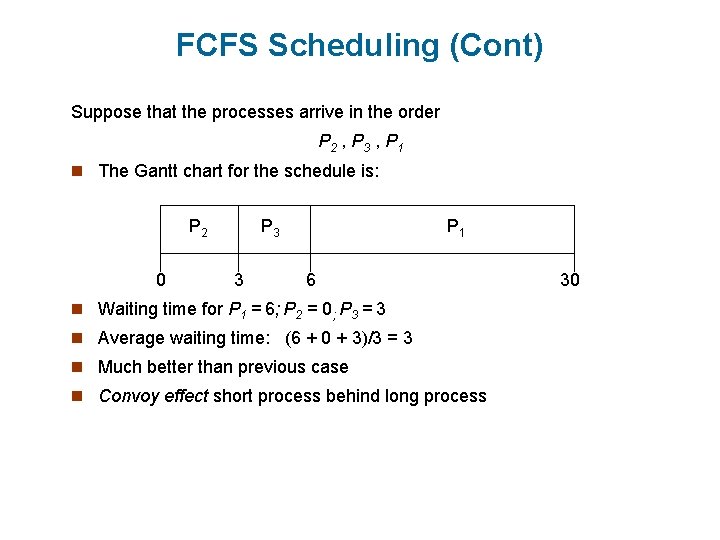

FCFS Scheduling (Cont.)

- Suppose that the processes arrive in the order P2, P3, P1. The Gantt chart for the schedule is:

| P2 | P3 | P1 |
|----|----|----|
| 0–3 | 3–6 | 6–30 |

- Waiting time for P1 = 6; P2 = 0; P3 = 3
- Average waiting time: (6 + 0 + 3)/3 = 3
- Much better than the previous case
- Convoy effect: short processes get stuck behind a long process
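The two FCFS averages above can be checked with a few lines of C. This is a minimal sketch that assumes every process arrives at time 0 and that `burst[]` is given in arrival order; the function name `fcfs_avg_wait` is made up for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Average waiting time under FCFS, assuming all processes arrive at time 0.
 * burst[] holds the CPU burst lengths in arrival order. */
double fcfs_avg_wait(const int *burst, size_t n)
{
    assert(n > 0);
    long elapsed = 0;     /* time at which the next process starts */
    long total_wait = 0;  /* sum of the individual waiting times */

    for (size_t i = 0; i < n; i++) {
        total_wait += elapsed;  /* process i waits for every earlier burst */
        elapsed += burst[i];
    }
    return (double)total_wait / (double)n;
}
```

Calling it with bursts {24, 3, 3} gives 17, and with {3, 3, 24} gives 3, matching the two Gantt charts.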

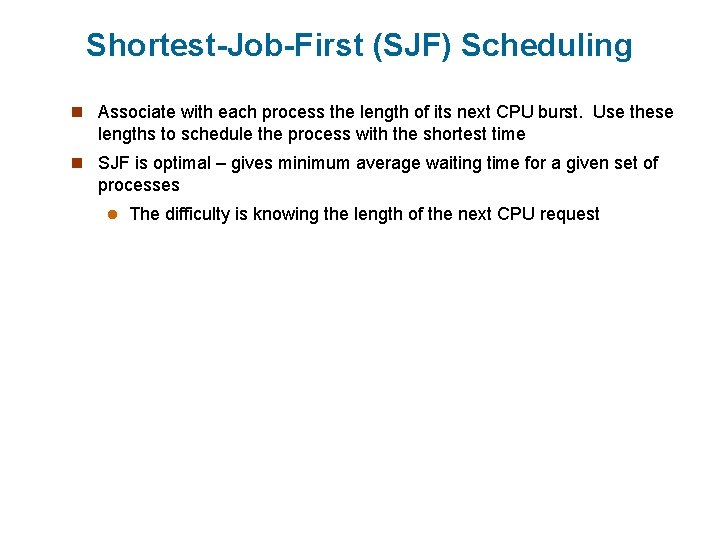

Shortest-Job-First (SJF) Scheduling

- Associate with each process the length of its next CPU burst. Use these lengths to schedule the process with the shortest time
- SJF is optimal: it gives the minimum average waiting time for a given set of processes
  - The difficulty is knowing the length of the next CPU request

Two schemes:

- Nonpreemptive: once the CPU is given to a process, it cannot be preempted until it completes its CPU burst.
- Preemptive: if a new process arrives with a CPU burst length less than the remaining time of the currently executing process, preempt. This scheme is known as Shortest-Remaining-Time-First (SRTF).

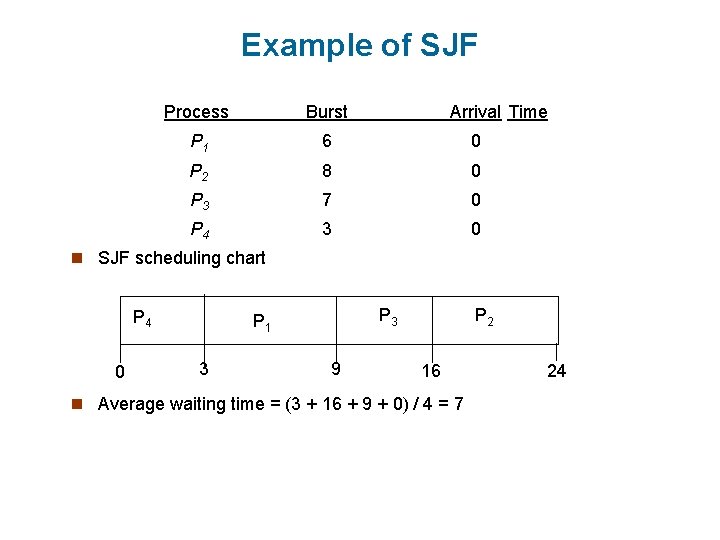

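Example of SJF

| Process | Burst Time | Arrival Time |
|---------|------------|--------------|
| P1 | 6 | 0 |
| P2 | 8 | 0 |
| P3 | 7 | 0 |
| P4 | 3 | 0 |

- SJF scheduling chart:

| P4 | P1 | P3 | P2 |
|----|----|----|----|
| 0–3 | 3–9 | 9–16 | 16–24 |

- Average waiting time = (3 + 16 + 9 + 0) / 4 = 7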

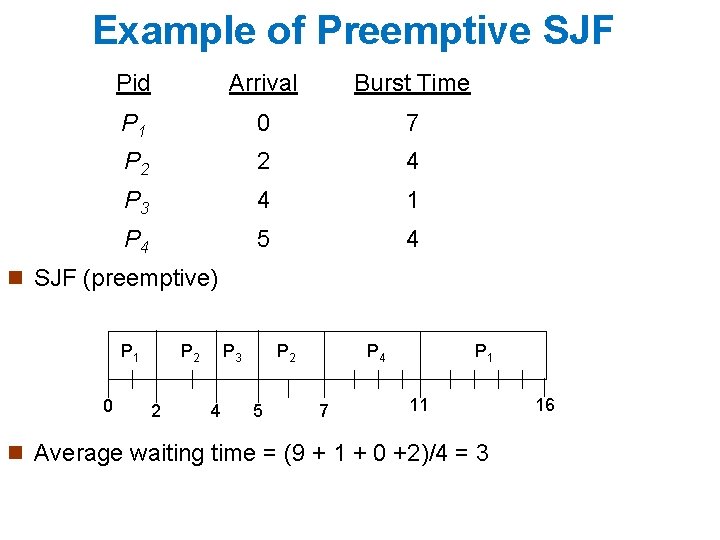

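Example of Preemptive SJF

| Process | Arrival Time | Burst Time |
|---------|--------------|------------|
| P1 | 0 | 7 |
| P2 | 2 | 4 |
| P3 | 4 | 1 |
| P4 | 5 | 4 |

- SJF (preemptive) chart:

| P1 | P2 | P3 | P2 | P4 | P1 |
|----|----|----|----|----|----|
| 0–2 | 2–4 | 4–5 | 5–7 | 7–11 | 11–16 |

- Average waiting time = (9 + 1 + 0 + 2)/4 = 3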
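The preemptive schedule above can be reproduced with a small unit-step simulation. The sketch below is illustrative only: the name `srtf_avg_wait`, the `MAX_PROCS` limit, and the tie-breaking rule (lowest index wins) are assumptions, not part of the slides. When every process arrives at time 0 it produces the same schedule as nonpreemptive SJF.

```c
#include <assert.h>
#include <limits.h>
#include <stddef.h>

#define MAX_PROCS 64  /* arbitrary limit chosen for this sketch */

/* Average waiting time under preemptive SJF (SRTF), simulated one time
 * unit at a time. Ties go to the lowest-numbered process (assumption). */
double srtf_avg_wait(const int *arrival, const int *burst, size_t n)
{
    assert(n > 0 && n <= MAX_PROCS);
    int remaining[MAX_PROCS];
    size_t done = 0;
    long total_wait = 0;

    for (size_t i = 0; i < n; i++) {
        assert(burst[i] > 0);
        remaining[i] = burst[i];
    }

    for (int t = 0; done < n; t++) {
        /* Pick the arrived, unfinished process with the least remaining time. */
        size_t pick = n;
        int best = INT_MAX;
        for (size_t i = 0; i < n; i++) {
            if (arrival[i] <= t && remaining[i] > 0 && remaining[i] < best) {
                best = remaining[i];
                pick = i;
            }
        }
        if (pick == n)
            continue;  /* nothing has arrived yet: CPU idles for this unit */

        if (--remaining[pick] == 0) {
            done++;
            /* waiting time = completion time - arrival time - burst time */
            total_wait += (t + 1) - arrival[pick] - burst[pick];
        }
    }
    return (double)total_wait / (double)n;
}
```

With arrivals {0, 2, 4, 5} and bursts {7, 4, 1, 4} it returns 3; with all arrivals 0 and bursts {6, 8, 7, 3} it returns 7.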

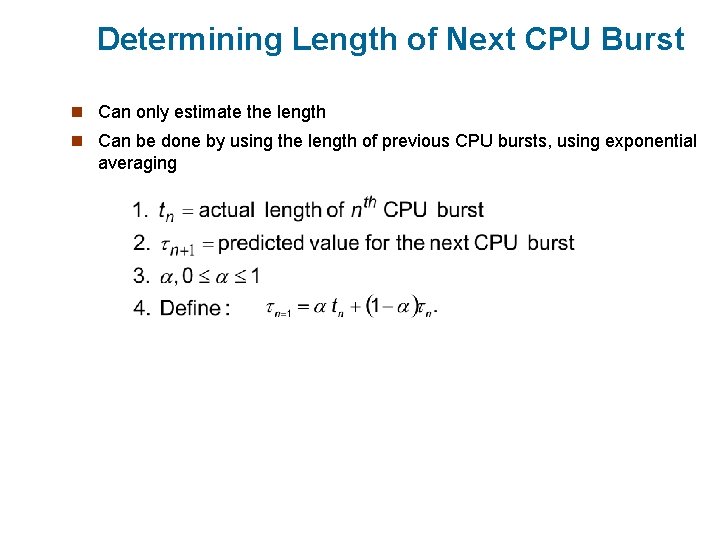

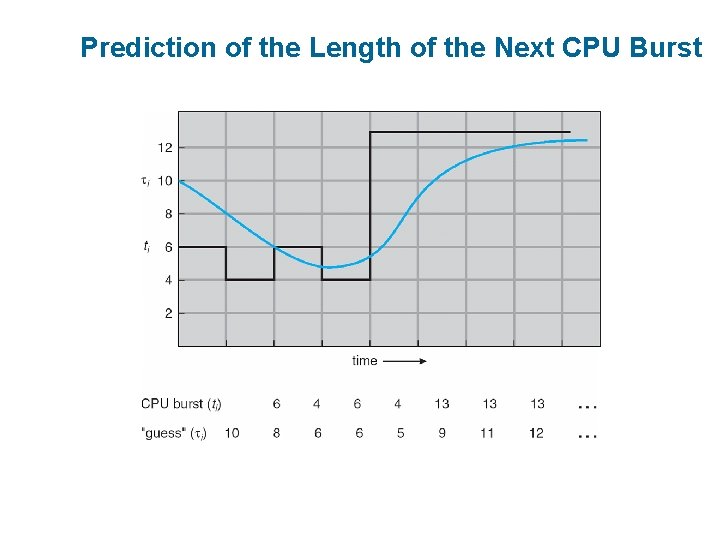

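Determining Length of Next CPU Burst

- Can only estimate the length
- Can be done by using the length of previous CPU bursts, using exponential averaging
  - $t_n$ = actual length of the $n$th CPU burst
  - $\tau_{n+1}$ = predicted value for the next CPU burst
  - $\alpha$, $0 \le \alpha \le 1$
  - Define: $\tau_{n+1} = \alpha t_n + (1-\alpha)\tau_n$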

Prediction of the Length of the Next CPU Burst

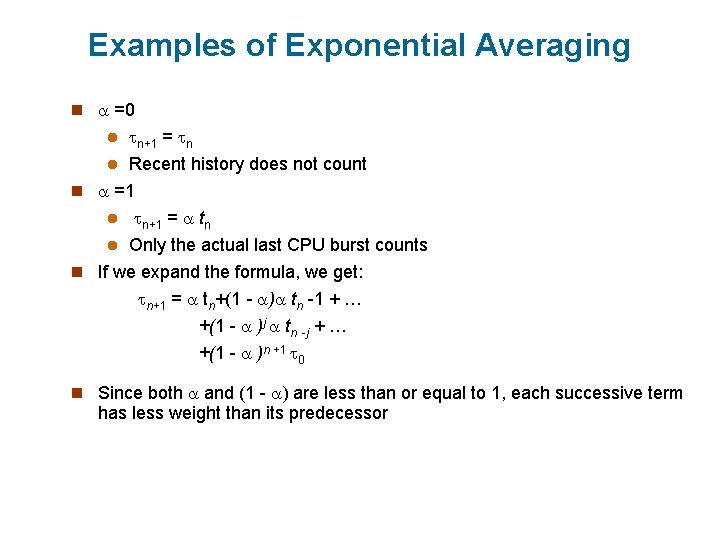

Examples of Exponential Averaging

- $\alpha = 0$
  - $\tau_{n+1} = \tau_n$
  - Recent history does not count
- $\alpha = 1$
  - $\tau_{n+1} = t_n$
  - Only the actual last CPU burst counts
- If we expand the formula, we get:

  $$\tau_{n+1} = \alpha t_n + (1-\alpha)\,\alpha t_{n-1} + \dots + (1-\alpha)^{j}\,\alpha t_{n-j} + \dots + (1-\alpha)^{n+1}\,\tau_0$$

- Since both $\alpha$ and $(1-\alpha)$ are less than or equal to 1, each successive term has less weight than its predecessor
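The recurrence behind exponential averaging, τ(n+1) = α·t(n) + (1 − α)·τ(n), is a one-line loop. The sketch below is a hedged illustration; the function name `predict_next_burst` and the sample values in the note are assumptions chosen for this example.

```c
#include <assert.h>
#include <stddef.h>

/* Predict the next CPU burst by exponential averaging.
 * alpha : weight given to the most recent burst, 0 <= alpha <= 1
 * tau0  : initial guess
 * t[]   : observed burst lengths, oldest first */
double predict_next_burst(double alpha, double tau0, const double *t, size_t n)
{
    assert(alpha >= 0.0 && alpha <= 1.0);
    double tau = tau0;
    for (size_t i = 0; i < n; i++)
        tau = alpha * t[i] + (1.0 - alpha) * tau;  /* tau_{i+1} */
    return tau;
}
```

With α = 0.5, an initial guess of 10, and observed bursts 6, 4, 6, 4, the successive predictions are 8, 6, 6, 5. With α = 0 the result is always the initial guess, and with α = 1 it is always the last observed burst.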

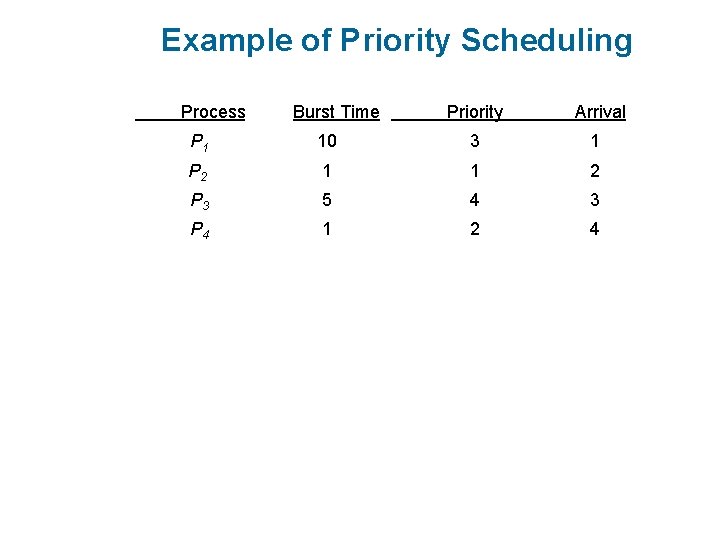

Priority Scheduling

- A priority number (integer) is associated with each process
- The CPU is allocated to the process with the highest priority (smallest integer = highest priority)
  - Preemptive
  - Nonpreemptive
- SJF is priority scheduling where the priority is the predicted next CPU burst time
- Problem: starvation. Low-priority processes may never execute
- Solution: aging. As time progresses, increase the priority of the process

Example of Priority Scheduling

| Process | Burst Time | Priority | Arrival |
|---------|------------|----------|---------|
| P1 | 10 | 3 | 1 |
| P2 | 1 | 1 | 2 |
| P3 | 5 | 4 | 3 |
| P4 | 1 | 2 | 4 |

Round Robin (RR)

- Each process gets a small unit of CPU time (time quantum), usually 10-100 milliseconds. After this time has elapsed, the process is preempted and added to the end of the ready queue.
- If there are n processes in the ready queue and the time quantum is q, then each process gets 1/n of the CPU time in chunks of at most q time units at once. No process waits more than (n-1)q time units.
- Performance
  - q large: behaves like FIFO
  - q small: q must still be large with respect to the context-switch time, otherwise the overhead is too high

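Example of RR with Time Quantum = 4

| Process | Burst Time |
|---------|------------|
| P1 | 24 |
| P2 | 3 |
| P3 | 3 |

- The Gantt chart is:

| P1 | P2 | P3 | P1 | P1 | P1 | P1 | P1 |
|----|----|----|----|----|----|----|----|
| 0–4 | 4–7 | 7–10 | 10–14 | 14–18 | 18–22 | 22–26 | 26–30 |

- Typically, higher average turnaround than SJF, but better response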
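A round-robin schedule like the one above can be simulated as follows. This is a sketch under stated assumptions: all processes arrive at time 0 (so cycling through the array in index order is equivalent to a FIFO ready queue), context-switch time is ignored, and the names `rr_avg_wait` and `MAX_PROCS` are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_PROCS 64  /* arbitrary limit chosen for this sketch */

/* Average waiting time under round robin, assuming all processes arrive
 * at time 0 and context switches cost nothing. */
double rr_avg_wait(const int *burst, size_t n, int quantum)
{
    assert(n > 0 && n <= MAX_PROCS && quantum > 0);
    int remaining[MAX_PROCS];
    size_t left = n;
    long now = 0, total_wait = 0;

    for (size_t i = 0; i < n; i++) {
        assert(burst[i] > 0);
        remaining[i] = burst[i];
    }

    while (left > 0) {
        for (size_t i = 0; i < n; i++) {
            if (remaining[i] == 0)
                continue;  /* already finished */
            int slice = remaining[i] < quantum ? remaining[i] : quantum;
            now += slice;
            remaining[i] -= slice;
            if (remaining[i] == 0) {
                left--;
                total_wait += now - burst[i];  /* completion - burst (arrival = 0) */
            }
        }
    }
    return (double)total_wait / (double)n;
}
```

For bursts {24, 3, 3} and q = 4 the waiting times are 6, 4, and 7, an average of 17/3 ≈ 5.67. With a quantum larger than every burst the result degenerates to the FCFS value of 17, which illustrates the "q large" case.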

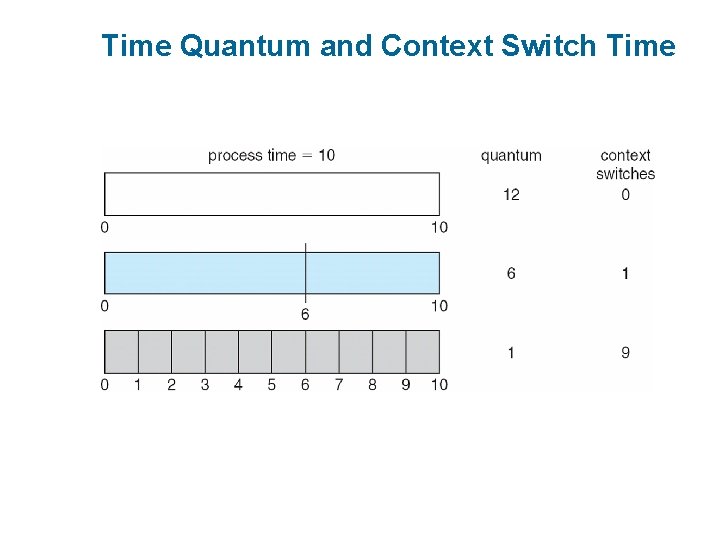

Time Quantum and Context Switch Time

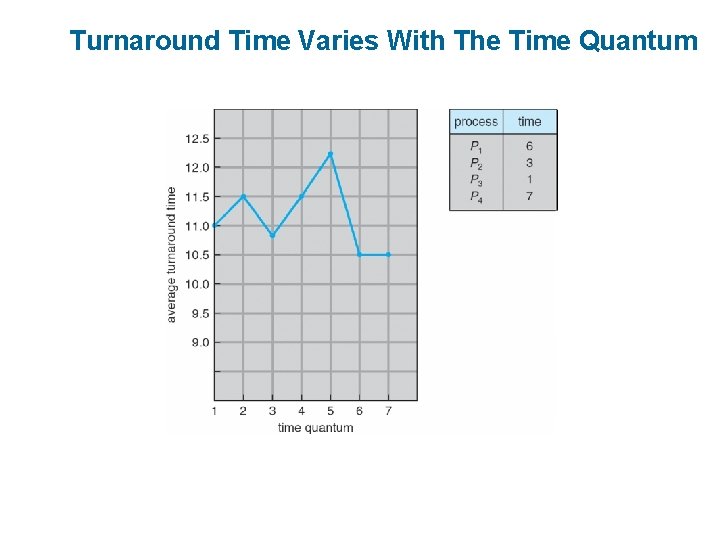

Turnaround Time Varies With The Time Quantum

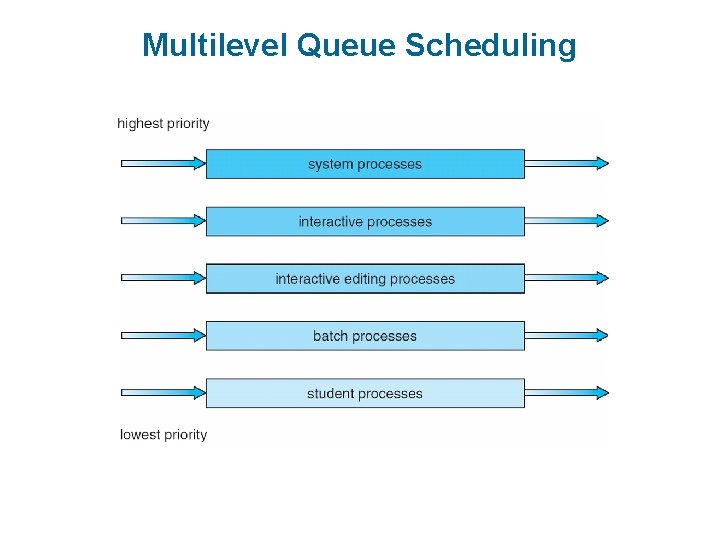

Multilevel Queue

- Ready queue is partitioned into separate queues:
  - foreground (interactive)
  - background (batch)
- Each queue has its own scheduling algorithm
  - foreground: RR
  - background: FCFS
- Scheduling must be done between the queues
  - Fixed priority scheduling (i.e., serve all from foreground, then from background). Possibility of starvation.
  - Time slice: each queue gets a certain amount of CPU time which it can schedule amongst its processes; e.g., 80% to foreground in RR and 20% to background in FCFS

Multilevel Queue Scheduling

Multilevel Feedback Queue System (MFQS)

- A process can move between the various queues; aging can be implemented this way
- A multilevel-feedback-queue scheduler is defined by the following parameters:
  - number of queues
  - scheduling algorithm for each queue
  - method used to determine when to upgrade a process
  - method used to determine when to demote a process
  - method used to determine which queue a process will enter when that process needs service

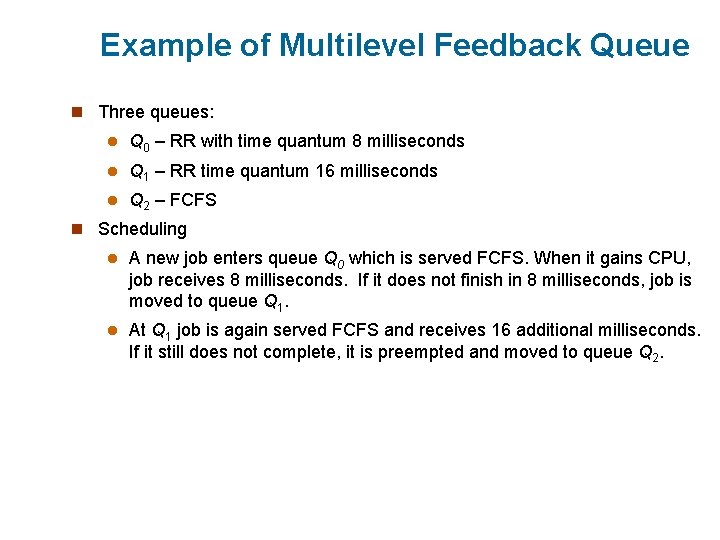

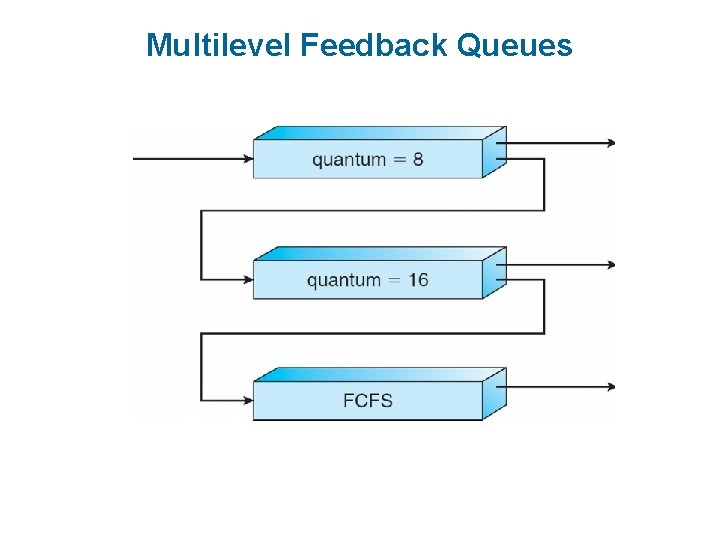

Example of Multilevel Feedback Queue

- Three queues:
  - Q0: RR with time quantum 8 milliseconds
  - Q1: RR with time quantum 16 milliseconds
  - Q2: FCFS
- Scheduling
  - A new job enters queue Q0, which is served FCFS. When it gains the CPU, the job receives 8 milliseconds. If it does not finish in 8 milliseconds, the job is moved to queue Q1.
  - At Q1 the job is again served FCFS and receives 16 additional milliseconds. If it still does not complete, it is preempted and moved to queue Q2.
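The demotion rule in this three-queue example can be traced for a single job with a short function. The sketch below assumes the job has one uninterrupted CPU burst and never blocks for I/O; the function name `mlfq_final_queue` is made up for illustration.

```c
#include <assert.h>

/* Index (0, 1, or 2) of the queue in which a job with a single CPU burst
 * of burst_ms milliseconds finishes, using the quanta from the example. */
int mlfq_final_queue(int burst_ms)
{
    assert(burst_ms > 0);
    const int quantum[2] = {8, 16};  /* Q0 and Q1 time quanta in ms */
    int remaining = burst_ms;

    for (int q = 0; q < 2; q++) {
        if (remaining <= quantum[q])
            return q;              /* finishes within this queue's quantum */
        remaining -= quantum[q];   /* quantum used up: demote to next queue */
    }
    return 2;  /* Q2 is FCFS, so the job runs there to completion */
}
```

A job needing 8 ms or less finishes in Q0, one needing 9 to 24 ms finishes in Q1, and anything longer ends up in Q2.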

Multilevel Feedback Queues

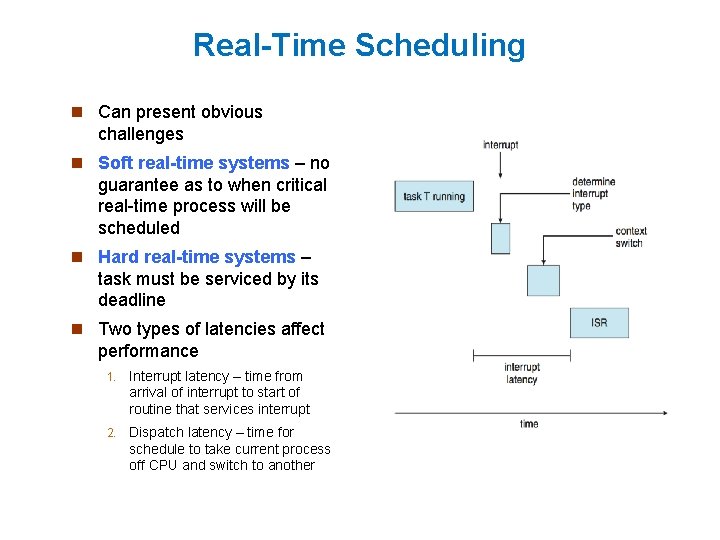

Real-Time Scheduling

- Can present obvious challenges
- Soft real-time systems: no guarantee as to when a critical real-time process will be scheduled
- Hard real-time systems: a task must be serviced by its deadline
- Two types of latencies affect performance:
  1. Interrupt latency: time from arrival of an interrupt to the start of the routine that services it
  2. Dispatch latency: time for the scheduler to take the current process off the CPU and switch to another

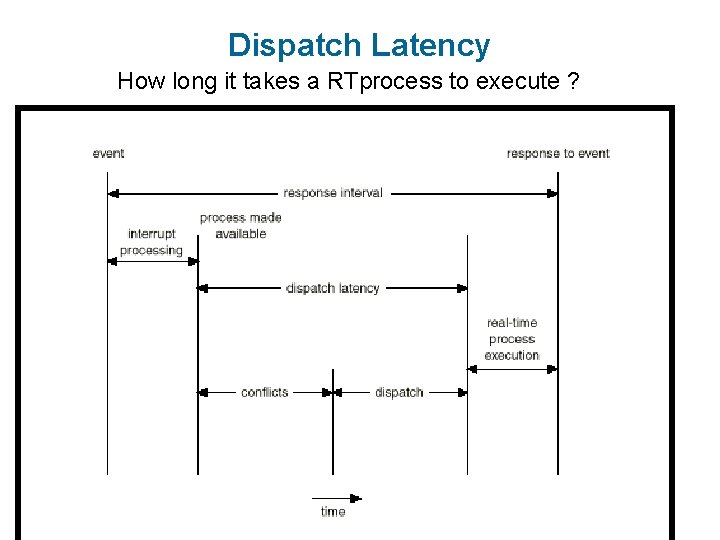

Dispatch Latency

- How long it takes a real-time (RT) process to execute on the CPU once it arrives
- RT processes have the highest priority
- Dispatch latency must be small for an RT process to execute fast

Dispatch Latency (Cont.)

- Most operating systems force a wait, before a context switch can happen, for:
  - a system call to complete (may be complex and long)
  - an I/O block transfer to complete (may be too slow)

Dispatch Latency (Cont.)

- As a result, dispatch latency is long
- Solution: place preemption points and force a context switch in the middle of the other process's system call or I/O
- Caveat: preemption points must be placed at "safe" locations, where kernel data structures are not being modified

Dispatch Latency (Cont.)

What to do if an RT process is waiting for resources held by one or more low-priority processes?

- This is priority inversion; the priority-inheritance protocol addresses it:
  - All of the low-priority processes inherit the RT process's high priority
  - They complete their respective tasks
  - They release all their resources for the RT process
  - All low-priority processes then revert to their original low priorities

Dispatch Latency (Cont.)

- Dispatch latency phases:
  - Conflicts
  - Dispatch

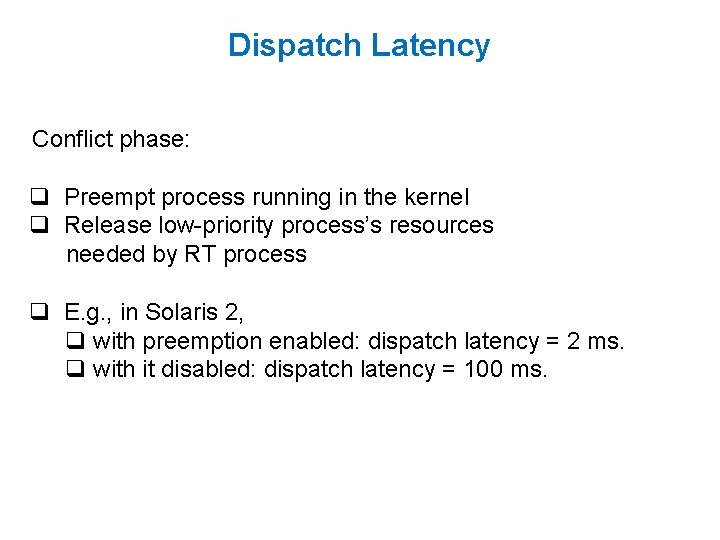

Dispatch Latency (Cont.)

Conflict phase:

- Preempt any process running in the kernel
- Release the low-priority processes' resources needed by the RT process
- E.g., in Solaris 2:
  - with preemption enabled: dispatch latency = 2 ms
  - with preemption disabled: dispatch latency = 100 ms

Dispatch Latency (Cont.)

How long does it take an RT process to execute?

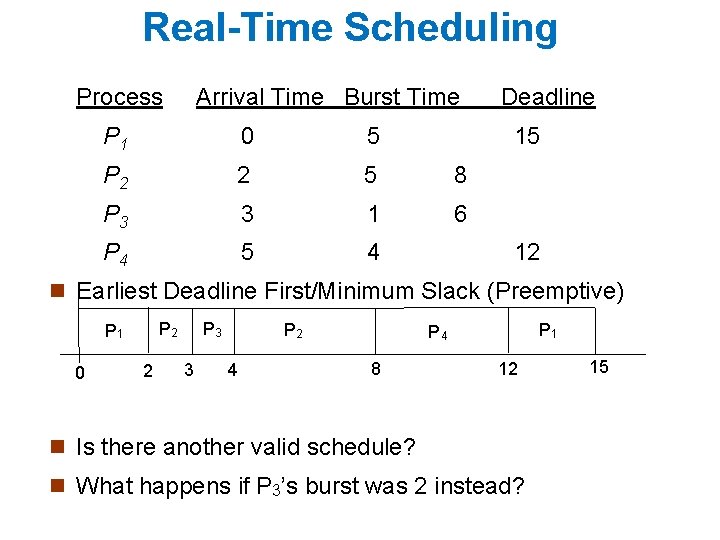

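Real-Time Scheduling

| Process | Arrival Time | Burst Time | Deadline |
|---------|--------------|------------|----------|
| P1 | 0 | 5 | 15 |
| P2 | 2 | 5 | 8 |
| P3 | 3 | 1 | 6 |
| P4 | 5 | 4 | 12 |

- Earliest Deadline First / Minimum Slack (preemptive):

| P1 | P2 | P3 | P2 | P4 | P1 |
|----|----|----|----|----|----|
| 0–2 | 2–3 | 3–4 | 4–8 | 8–12 | 12–15 |

- Is there another valid schedule?
- What happens if P3's burst was 2 instead?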

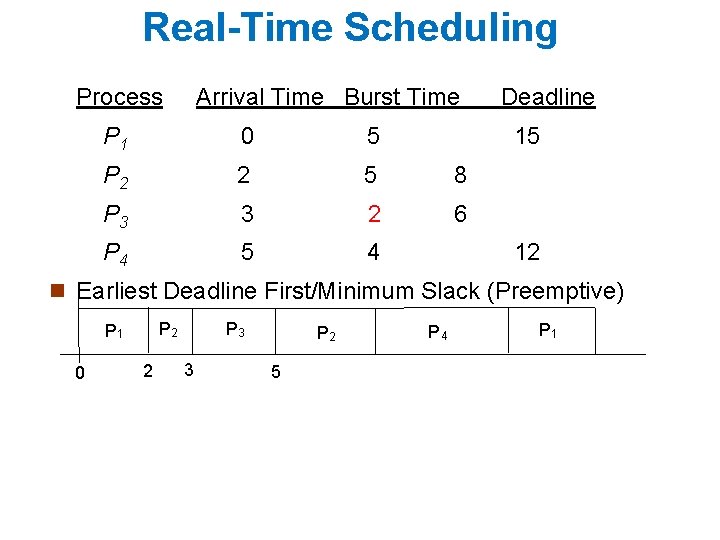

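Real-Time Scheduling (Cont.)

| Process | Arrival Time | Burst Time | Deadline |
|---------|--------------|------------|----------|
| P1 | 0 | 5 | 15 |
| P2 | 2 | 5 | 8 |
| P3 | 3 | 2 | 6 |
| P4 | 5 | 4 | 12 |

- Earliest Deadline First / Minimum Slack (preemptive):

| P1 | P2 | P3 | P2 | P4 | P1 |
|----|----|----|----|----|----|
| 0–2 | 2–3 | 3–5 | 5– | | |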
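The two task sets above can be explored with a unit-step simulation of preemptive Earliest Deadline First. This is a rough sketch, not the slides' method: it assumes deadlines are absolute times, that a task keeps running after missing its deadline, and that ties go to the lowest-numbered task. The names `edf_simulate` and `MAX_TASKS` are hypothetical.

```c
#include <assert.h>
#include <limits.h>
#include <stddef.h>

#define MAX_TASKS 64  /* arbitrary limit chosen for this sketch */

/* Simulate preemptive EDF one time unit at a time.
 * Writes each task's completion time into finish[] and returns the
 * number of tasks that complete after their (absolute) deadline. */
int edf_simulate(const int *arrival, const int *burst, const int *deadline,
                 size_t n, int *finish)
{
    assert(n > 0 && n <= MAX_TASKS);
    int remaining[MAX_TASKS];
    size_t done = 0;
    int missed = 0;

    for (size_t i = 0; i < n; i++) {
        assert(burst[i] > 0);
        remaining[i] = burst[i];
    }

    for (int t = 0; done < n; t++) {
        /* Pick the arrived, unfinished task with the earliest deadline. */
        size_t pick = n;
        int best = INT_MAX;
        for (size_t i = 0; i < n; i++) {
            if (arrival[i] <= t && remaining[i] > 0 && deadline[i] < best) {
                best = deadline[i];
                pick = i;
            }
        }
        if (pick == n)
            continue;  /* no task ready: CPU idles for this unit */

        if (--remaining[pick] == 0) {
            done++;
            finish[pick] = t + 1;
            if (finish[pick] > deadline[pick])
                missed++;
        }
    }
    return missed;
}
```

With P3's burst equal to 1, every task finishes exactly at or before its deadline (completion times 15, 8, 4, 12 for P1 to P4). With P3's burst equal to 2, the total demand is 16 time units against a latest deadline of 15, and under these assumptions P2, P4, and P1 all finish one unit late.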

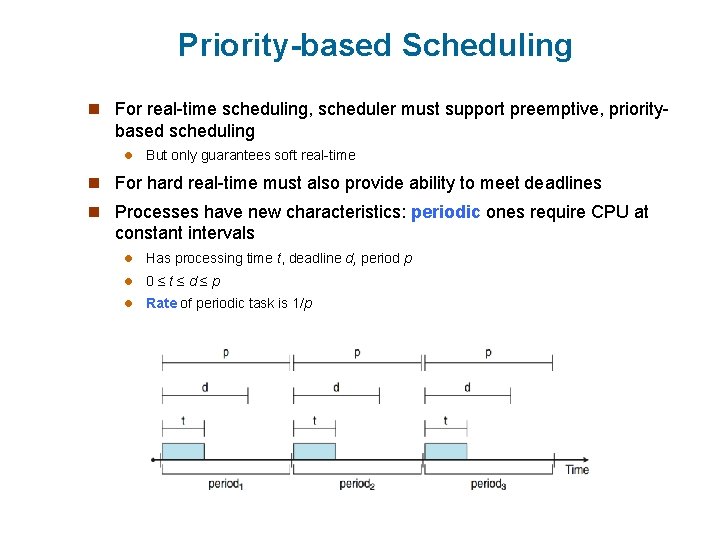

Priority-based Scheduling

- For real-time scheduling, the scheduler must support preemptive, priority-based scheduling
  - But this only guarantees soft real-time
- For hard real-time, it must also provide the ability to meet deadlines
- Processes have new characteristics: periodic ones require the CPU at constant intervals
  - Each has processing time t, deadline d, period p
  - 0 ≤ t ≤ d ≤ p
  - Rate of a periodic task is 1/p

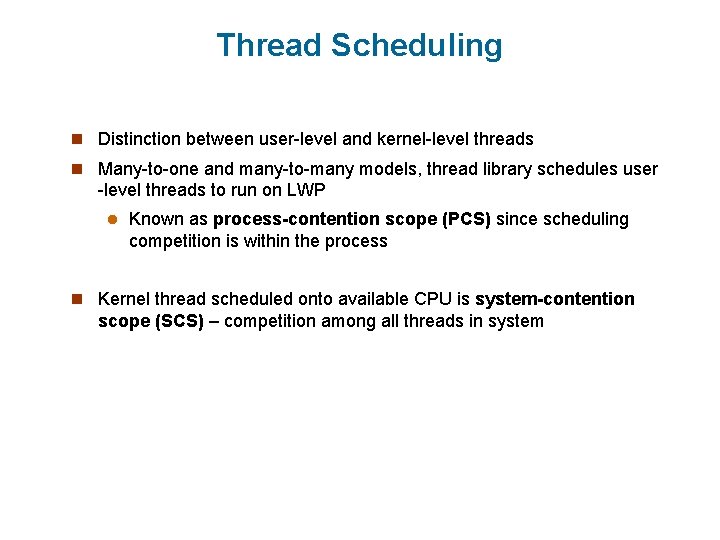

Thread Scheduling

- Distinction between user-level and kernel-level threads
- In the many-to-one and many-to-many models, the thread library schedules user-level threads to run on an LWP
  - Known as process-contention scope (PCS), since scheduling competition is within the process
- A kernel thread scheduled onto an available CPU uses system-contention scope (SCS): competition among all threads in the system

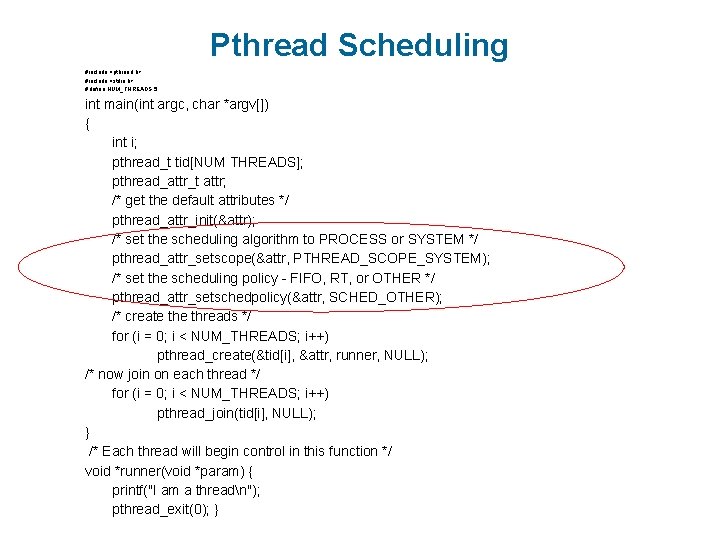

Pthread Scheduling

- The API allows specifying either PCS or SCS during thread creation
  - `PTHREAD_SCOPE_PROCESS` schedules threads using PCS scheduling
  - `PTHREAD_SCOPE_SYSTEM` schedules threads using SCS scheduling

Pthread Scheduling (Cont.)

```c
#include <pthread.h>
#include <stdio.h>

#define NUM_THREADS 5

/* Each thread will begin control in this function */
void *runner(void *param)
{
    (void)param;  /* unused */
    printf("I am a thread\n");
    pthread_exit(0);
}

int main(int argc, char *argv[])
{
    int i;
    pthread_t tid[NUM_THREADS];
    pthread_attr_t attr;

    /* get the default attributes */
    pthread_attr_init(&attr);

    /* set the contention scope to PROCESS or SYSTEM */
    pthread_attr_setscope(&attr, PTHREAD_SCOPE_SYSTEM);

    /* set the scheduling policy - FIFO, RR, or OTHER */
    pthread_attr_setschedpolicy(&attr, SCHED_OTHER);

    /* create the threads */
    for (i = 0; i < NUM_THREADS; i++)
        pthread_create(&tid[i], &attr, runner, NULL);

    /* now join on each thread */
    for (i = 0; i < NUM_THREADS; i++)
        pthread_join(tid[i], NULL);

    return 0;
}
```

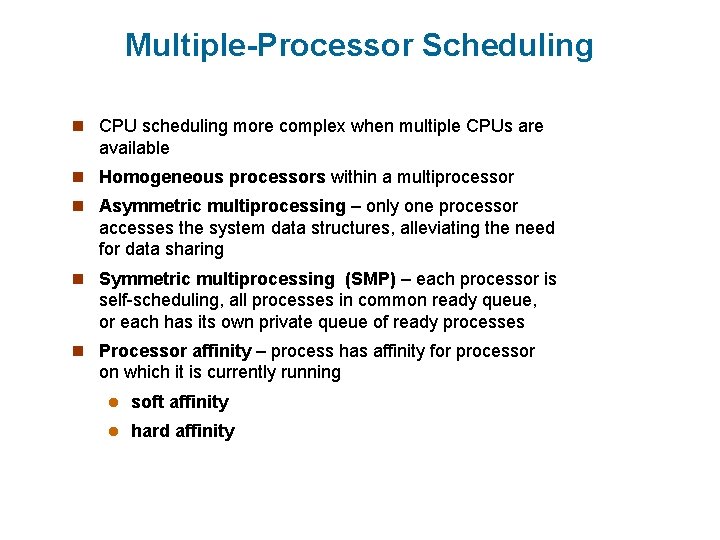

Multiple-Processor Scheduling

- CPU scheduling is more complex when multiple CPUs are available
- Homogeneous processors within a multiprocessor
- Asymmetric multiprocessing: only one processor accesses the system data structures, alleviating the need for data sharing
- Symmetric multiprocessing (SMP): each processor is self-scheduling; all processes are in a common ready queue, or each processor has its own private queue of ready processes
- Processor affinity: a process has affinity for the processor on which it is currently running
  - soft affinity
  - hard affinity

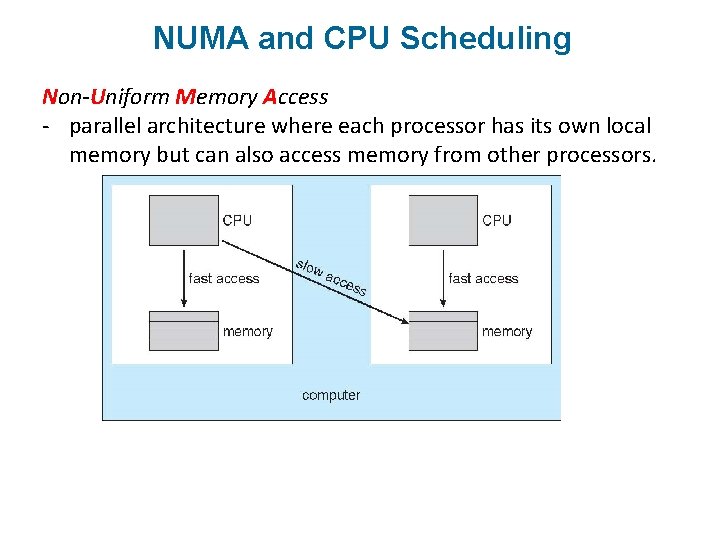

NUMA and CPU Scheduling

- Non-Uniform Memory Access (NUMA): a parallel architecture where each processor has its own local memory but can also access memory attached to other processors

Multiple-Processor Scheduling: Load Balancing

- With SMP, all CPUs need to be kept loaded for efficiency
- Load balancing attempts to keep the workload evenly distributed
- Push migration: a periodic task checks the load on each processor and, if it finds an imbalance, pushes tasks from overloaded CPUs to other CPUs
- Pull migration: an idle processor pulls a waiting task from a busy processor

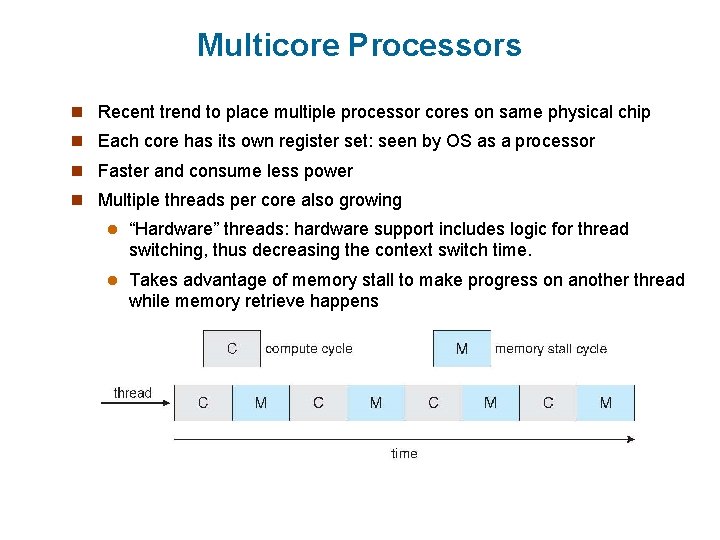

Multicore Processors

- Recent trend to place multiple processor cores on the same physical chip
- Each core has its own register set and is seen by the OS as a processor
- Faster and consumes less power
- Multiple threads per core also growing
  - "Hardware" threads: hardware support includes logic for thread switching, thus decreasing the context-switch time
  - Takes advantage of a memory stall to make progress on another thread while the memory retrieval happens

Multithreaded Multicore System

Two different levels of scheduling:

- Mapping software threads onto hardware threads: traditional scheduling algorithms like those discussed last time
- Which hardware thread a core will run next: round robin (UltraSPARC T1) or dynamic priority-based (Intel Itanium, a dual-core processor with two hardware-managed threads per core)

Operating System Examples

- UNIX scheduling
- Linux scheduling
- Windows XP scheduling
- Solaris scheduling

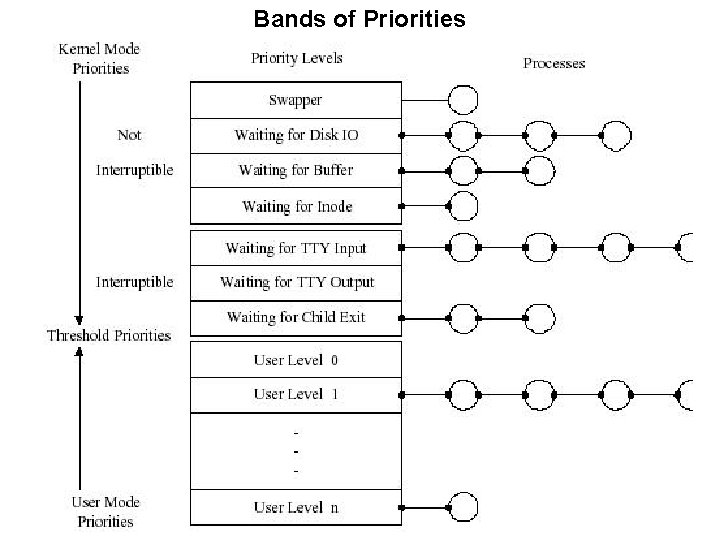

UNIX schedulers

Bands of Priorities

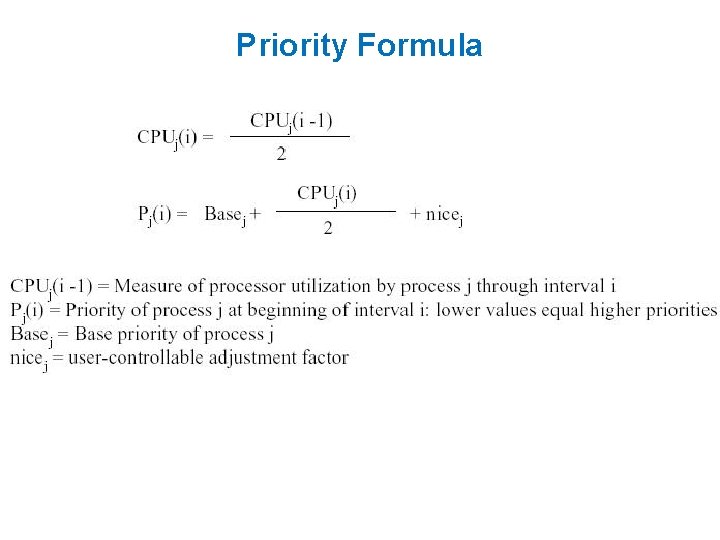

Priority Formula

Linux pre-2.6 O(n) Scheduler

- O(n): scheduling a task takes O(n) time, where n = number of tasks
- One runqueue for all processors in a symmetric multiprocessor system
  - A task can be scheduled on any CPU
  - Good for load balancing
  - Bad for memory caches: e.g., when a task previously on CPU 1 is run on CPU 2, its cached data must move from cache 1 to cache 2
- Single runqueue: CPUs had to contend for a shared lock
- No preemption allowed: a higher-priority process may have to wait

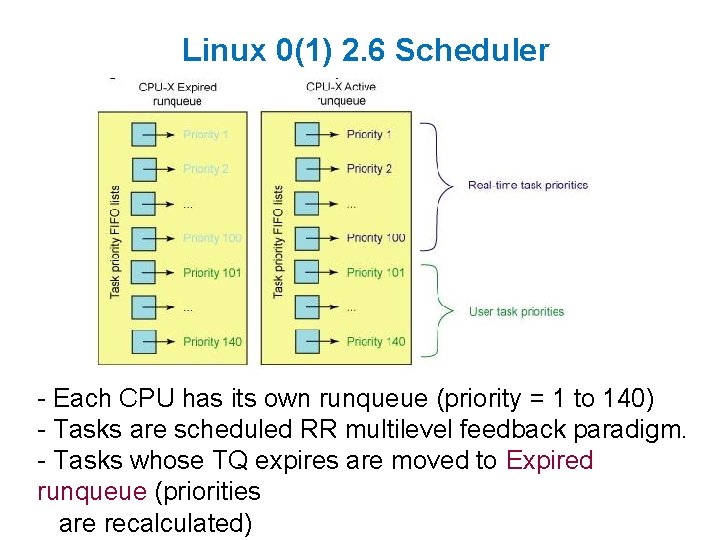

Linux O(1) 2.6 Scheduler

- Each CPU has its own runqueue (priority = 1 to 140)
- Tasks are scheduled RR within a multilevel feedback paradigm
- Tasks whose time quantum (TQ) expires are moved to the expired runqueue (priorities are recalculated)

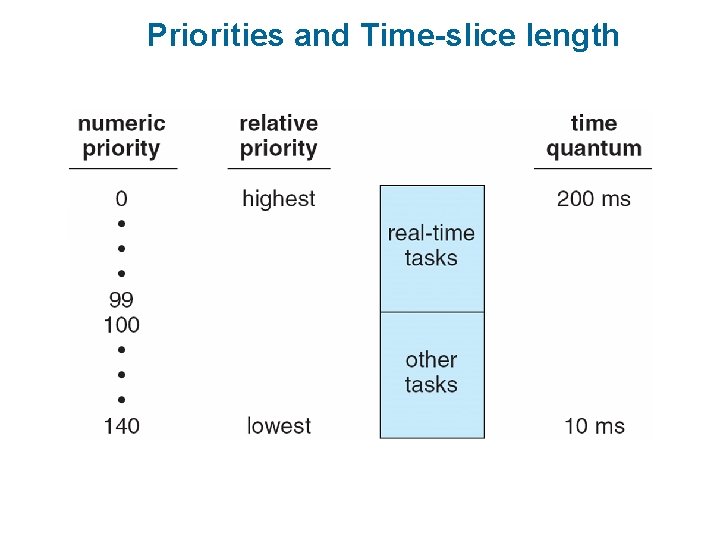

Linux Scheduling

- Constant order O(1) scheduling time
- Two priority ranges: time-sharing and real-time
- Real-time range from 0 to 99 and nice value from 100 to 140

Linux O(1) 2.6 Scheduler (Cont.)

Why O(1)?

- A bitmap of priorities is read (each priority level points to its processes)
- Since the size of the bitmap is 140, selecting a process does not depend on the number of processes in the runqueue

Active runqueue pointer

- When the active runqueue is empty, the pointer is set to the expired runqueue, i.e., the expired runqueue becomes the active runqueue and vice versa
- Each CPU locks only its own runqueues, so all CPUs can schedule without contention from other CPUs
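The constant-time lookup comes from scanning a fixed-size bitmap rather than a task list. The sketch below is an illustration only, not the kernel's code: the struct and function names are invented, priorities are assumed to be numbered 0 to 139 with lower numbers meaning higher priority, and a real kernel would typically use a hardware find-first-set instruction instead of the shift loop.

```c
#include <assert.h>
#include <stdint.h>

#define NUM_PRIOS 140
#define WORD_BITS 32
#define NUM_WORDS ((NUM_PRIOS + WORD_BITS - 1) / WORD_BITS)  /* 5 words */

/* One bit per priority level; a set bit means "some task is runnable here". */
struct prio_bitmap {
    uint32_t word[NUM_WORDS];
};

void bitmap_set(struct prio_bitmap *b, int prio)
{
    assert(prio >= 0 && prio < NUM_PRIOS);
    b->word[prio / WORD_BITS] |= (uint32_t)1 << (prio % WORD_BITS);
}

void bitmap_clear(struct prio_bitmap *b, int prio)
{
    assert(prio >= 0 && prio < NUM_PRIOS);
    b->word[prio / WORD_BITS] &= ~((uint32_t)1 << (prio % WORD_BITS));
}

/* Lowest-numbered (highest) priority with a runnable task, or -1 if none.
 * The work is bounded by the bitmap size, not by the number of tasks. */
int bitmap_first_set(const struct prio_bitmap *b)
{
    for (int w = 0; w < NUM_WORDS; w++) {
        uint32_t x = b->word[w];
        if (x == 0)
            continue;
        int bit = 0;
        while ((x & 1u) == 0) {  /* at most 31 shifts */
            x >>= 1;
            bit++;
        }
        return w * WORD_BITS + bit;
    }
    return -1;
}
```

The scheduler would then take the first task from the queue at the returned priority level, so the whole selection costs at most a fixed number of steps however many tasks are runnable.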

Linux O(1) 2.6 Scheduler (Cont.)

Dynamic priority assignment

- CPU-bound processes are penalized (priority number increased by 5 levels)
- I/O-bound processes are rewarded (priority number decreased by 5 levels)
  - An I/O-bound process uses the CPU only to set up I/O and is then suspended, giving other processes a chance to execute (altruistic)
- Heuristic for deciding whether a task is I/O bound or CPU bound:
  - Interactivity heuristic based on the time a task executes compared with the time it is suspended
  - An I/O-bound task has a large sleep time, which increases its interactivity metric, so it is rewarded
- Priority adjustments are applied only to user processes

Priorities and Time-slice length

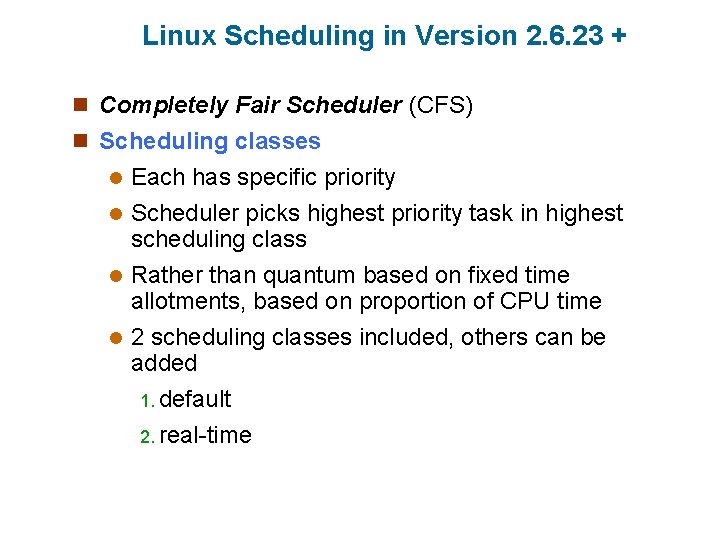

Linux Scheduling in Version 2.6.23+

- Completely Fair Scheduler (CFS)
- Scheduling classes
  - Each has a specific priority
  - Scheduler picks the highest-priority task in the highest scheduling class
  - Rather than a quantum based on fixed time allotments, based on proportion of CPU time
  - 2 scheduling classes included, others can be added
    1. default
    2. real-time

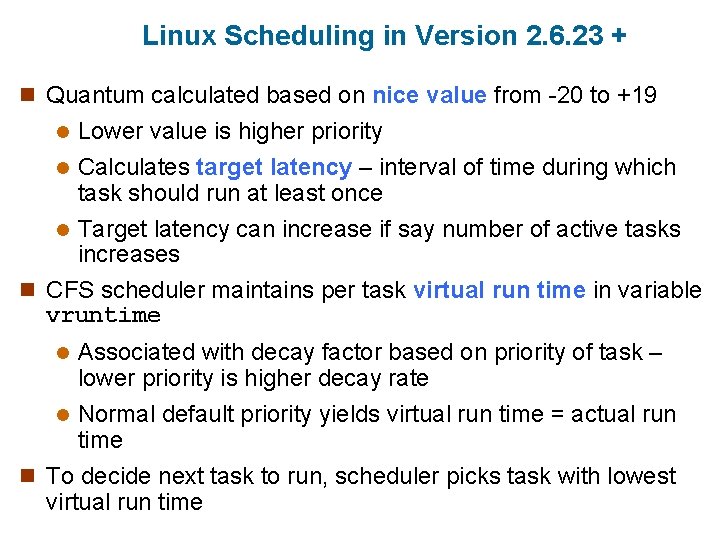

Linux Scheduling in Version 2.6.23+ (Cont.)

- Quantum calculated based on nice value, from -20 to +19
  - Lower value is higher priority
  - Calculates target latency: interval of time during which each task should run at least once
  - Target latency can increase if, say, the number of active tasks increases
- CFS scheduler maintains per-task virtual run time in the variable vruntime
  - Associated with a decay factor based on the priority of the task: lower priority means a higher decay rate
  - Normal default priority yields virtual run time = actual run time
- To decide the next task to run, the scheduler picks the task with the lowest virtual run time
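The vruntime bookkeeping described above can be sketched roughly as follows. Everything here is an assumption made for illustration: the struct and function names are invented, the weight is approximated as changing by a factor of about 1.25 per nice step (the kernel uses a precomputed weight table), and the next task is found by a linear scan, whereas the kernel keeps tasks in a red-black tree ordered by vruntime.

```c
#include <assert.h>
#include <stddef.h>

#define NICE0_WEIGHT 1024.0  /* weight of a nice-0 task in this sketch */

struct task {
    int nice;         /* -20 (highest priority) .. +19 (lowest) */
    double vruntime;  /* virtual run time */
};

/* Approximate load weight: each nice step scales the weight by ~1.25x.
 * This is an approximation chosen for illustration. */
double nice_to_weight(int nice)
{
    assert(nice >= -20 && nice <= 19);
    double w = NICE0_WEIGHT;
    for (int i = 0; i < nice; i++)
        w /= 1.25;  /* positive nice: lighter weight */
    for (int i = 0; i > nice; i--)
        w *= 1.25;  /* negative nice: heavier weight */
    return w;
}

/* Charge actual run time to a task; low-priority tasks accrue vruntime faster. */
void account_runtime(struct task *t, double ran)
{
    t->vruntime += ran * NICE0_WEIGHT / nice_to_weight(t->nice);
}

/* Index of the task with the lowest virtual run time. */
size_t pick_next(const struct task *tasks, size_t n)
{
    assert(n > 0);
    size_t best = 0;
    for (size_t i = 1; i < n; i++)
        if (tasks[i].vruntime < tasks[best].vruntime)
            best = i;
    return best;
}
```

For a nice-0 task the virtual run time equals the actual run time, as the slide states. A task with a positive nice value accumulates vruntime faster for the same actual run time, so it is picked less often.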

CFS Performance

Linux Scheduling (Cont.)

- Real-time scheduling according to POSIX.1b
  - Real-time tasks have static priorities
- Real-time plus normal tasks map into a global priority scheme
- Nice value of -20 maps to global priority 100
- Nice value of +19 maps to priority 139
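The two mappings above (-20 to 100 and +19 to 139) are consistent with a simple linear offset. The helper below is a minimal sketch of that arithmetic; the names `nice_to_global_prio` and `MAX_RT_PRIO` are chosen for this example.

```c
#include <assert.h>

#define MAX_RT_PRIO 100  /* real-time priorities occupy 0..99 */

/* Map a nice value (-20..+19) onto the global priority range 100..139. */
int nice_to_global_prio(int nice)
{
    assert(nice >= -20 && nice <= 19);
    return MAX_RT_PRIO + 20 + nice;
}
```

Under this mapping the default nice value of 0 lands at global priority 120.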

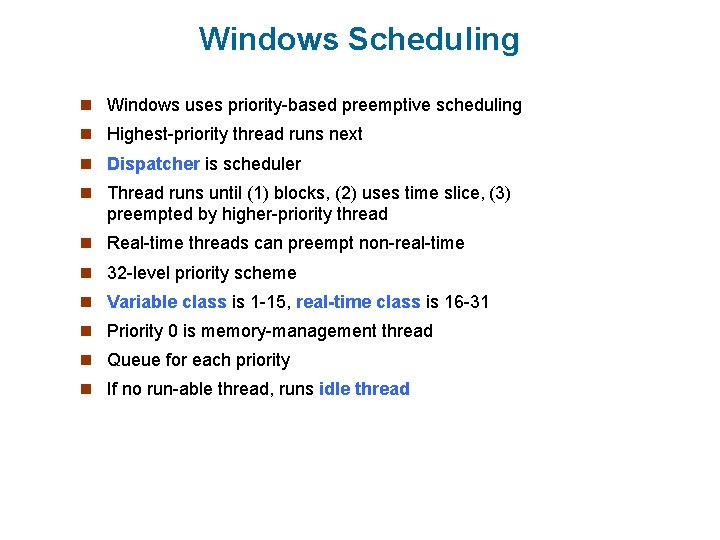

Windows Scheduling

- Windows uses priority-based preemptive scheduling
- Highest-priority thread runs next
- Dispatcher is the scheduler
- Thread runs until it (1) blocks, (2) uses its time slice, or (3) is preempted by a higher-priority thread
- Real-time threads can preempt non-real-time threads
- 32-level priority scheme
- Variable class is 1-15, real-time class is 16-31
- Priority 0 is the memory-management thread
- Queue for each priority
- If there is no runnable thread, runs the idle thread

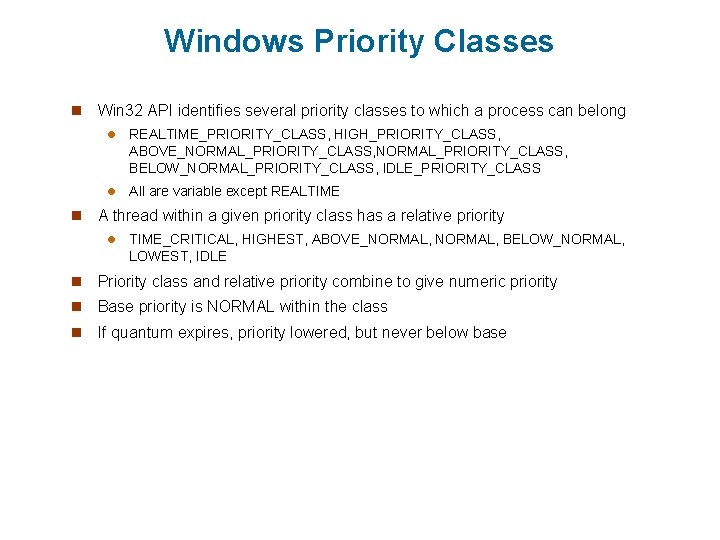

Windows Priority Classes

- Win32 API identifies several priority classes to which a process can belong
  - REALTIME_PRIORITY_CLASS, HIGH_PRIORITY_CLASS, ABOVE_NORMAL_PRIORITY_CLASS, NORMAL_PRIORITY_CLASS, BELOW_NORMAL_PRIORITY_CLASS, IDLE_PRIORITY_CLASS
  - All are variable except REALTIME
- A thread within a given priority class has a relative priority
  - TIME_CRITICAL, HIGHEST, ABOVE_NORMAL, NORMAL, BELOW_NORMAL, LOWEST, IDLE
- Priority class and relative priority combine to give the numeric priority
- Base priority is NORMAL within the class
- If the quantum expires, priority is lowered, but never below base

Windows Priority Classes (Cont.)

- If a wait occurs, priority is boosted depending on what was waited for
- Foreground window given 3x priority boost
- Windows 7 added user-mode scheduling (UMS)
  - Applications create and manage threads independent of the kernel
  - For a large number of threads, much more efficient
  - UMS schedulers come from programming language libraries like the C++ Concurrent Runtime (ConcRT) framework

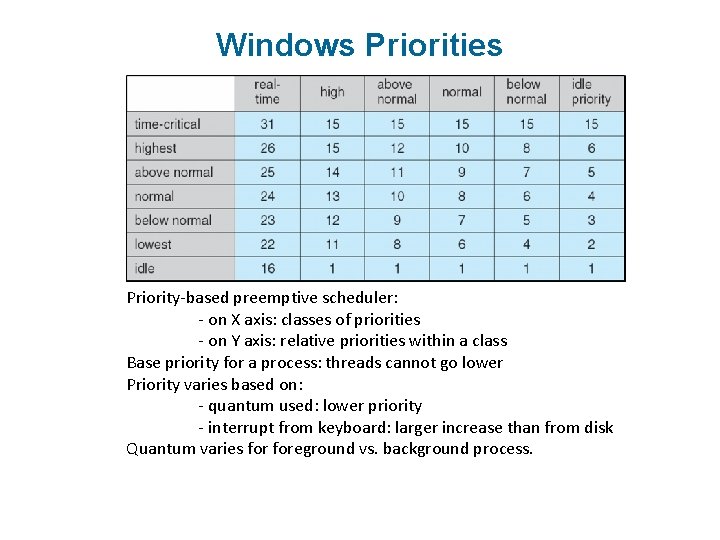

Windows Priorities

- Priority-based preemptive scheduler:
  - on the X axis: classes of priorities
  - on the Y axis: relative priorities within a class
- Base priority for a process: threads cannot go lower
- Priority varies based on:
  - quantum used: lower priority
  - interrupt from keyboard: larger increase than from disk
- Quantum varies for foreground vs. background processes

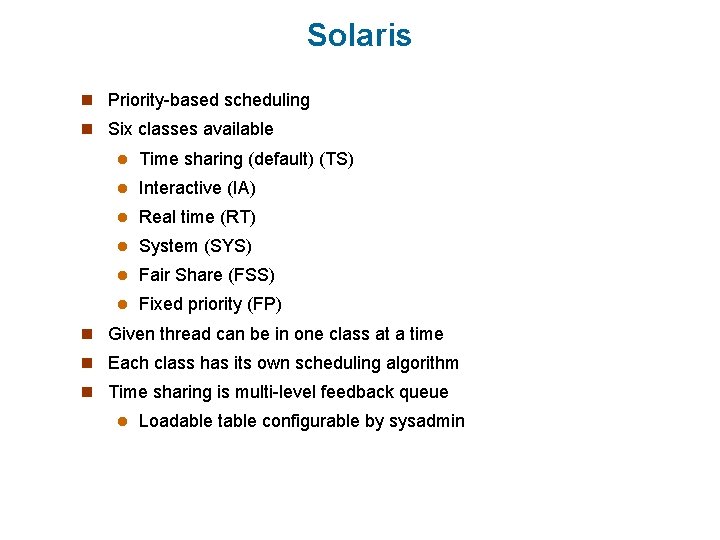

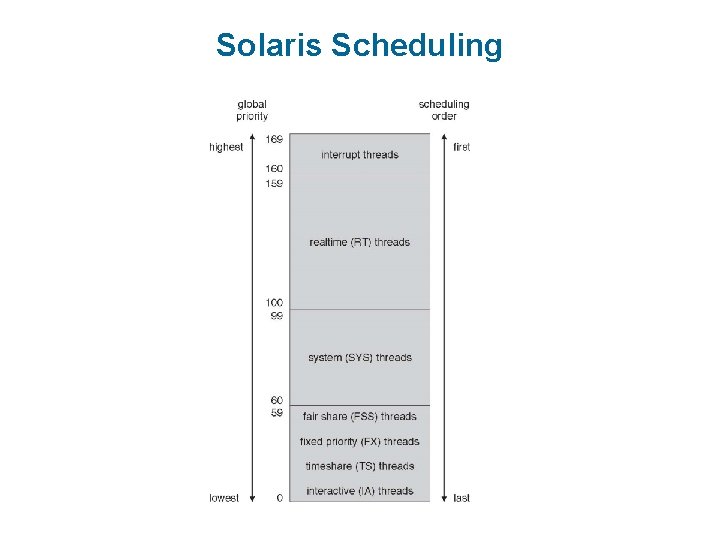

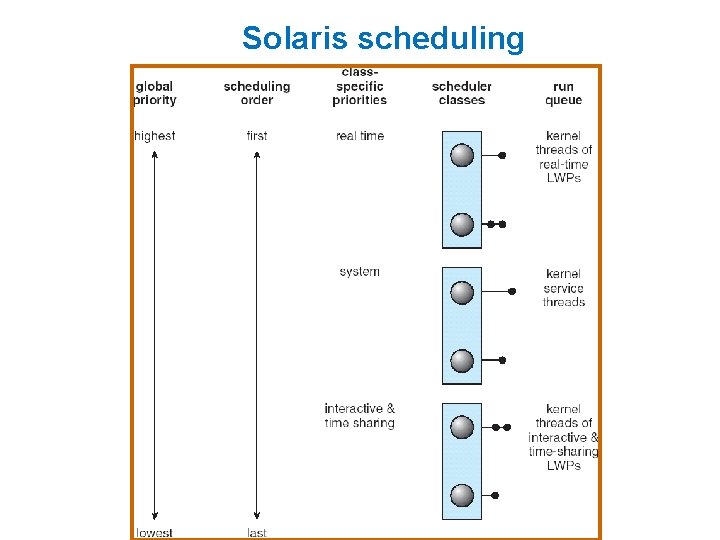

Solaris

- Priority-based scheduling
- Six classes available
  - Time sharing (default) (TS)
  - Interactive (IA)
  - Real time (RT)
  - System (SYS)
  - Fair Share (FSS)
  - Fixed priority (FP)
- A given thread can be in one class at a time
- Each class has its own scheduling algorithm
- Time sharing is a multi-level feedback queue
  - Loadable table configurable by the sysadmin

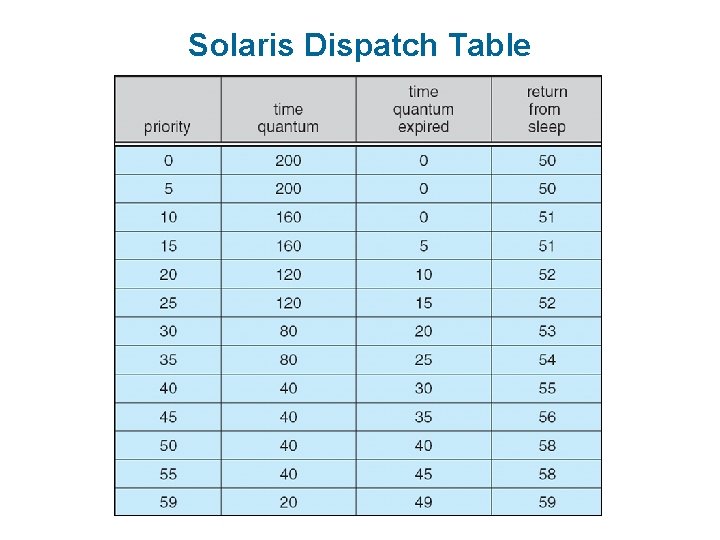

Solaris Dispatch Table

Solaris Scheduling

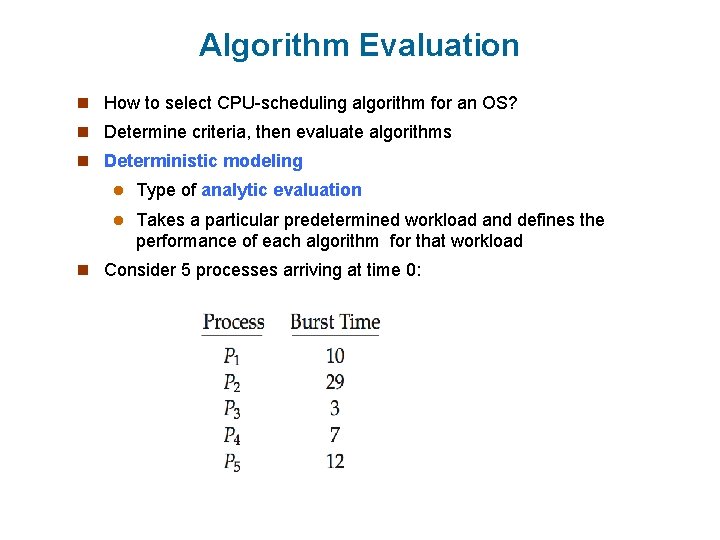

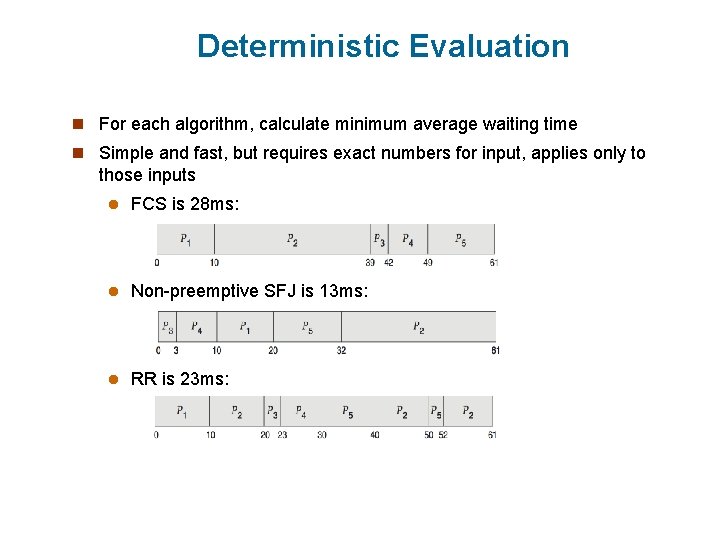

Algorithm Evaluation

- How to select a CPU-scheduling algorithm for an OS?
- Determine criteria, then evaluate algorithms
- Deterministic modeling
  - Type of analytic evaluation
  - Takes a particular predetermined workload and defines the performance of each algorithm for that workload
- Consider 5 processes arriving at time 0:

Deterministic Evaluation

- For each algorithm, calculate the average waiting time and pick the minimum
- Simple and fast, but requires exact numbers for input, and applies only to those inputs
  - FCFS is 28 ms
  - Nonpreemptive SJF is 13 ms
  - RR is 23 ms

Queueing Models

- Describes the arrival of processes, and CPU and I/O bursts, probabilistically
  - Commonly exponential, and described by the mean
  - Computes average throughput, utilization, waiting time, etc.
- Computer system described as a network of servers, each with a queue of waiting processes
  - Knowing arrival rates and service rates
  - Computes utilization, average queue length, average wait time, etc.

Little's Formula

- $n$ = average queue length
- $W$ = average waiting time in queue
- $\lambda$ = average arrival rate into queue
- Little's law: in steady state, processes leaving the queue must equal processes arriving, thus $n = \lambda \times W$
  - Valid for any scheduling algorithm and arrival distribution
- For example, if on average 7 processes arrive per second, and there are normally 14 processes in the queue, then the average wait time per process is 14 / 7 = 2 seconds

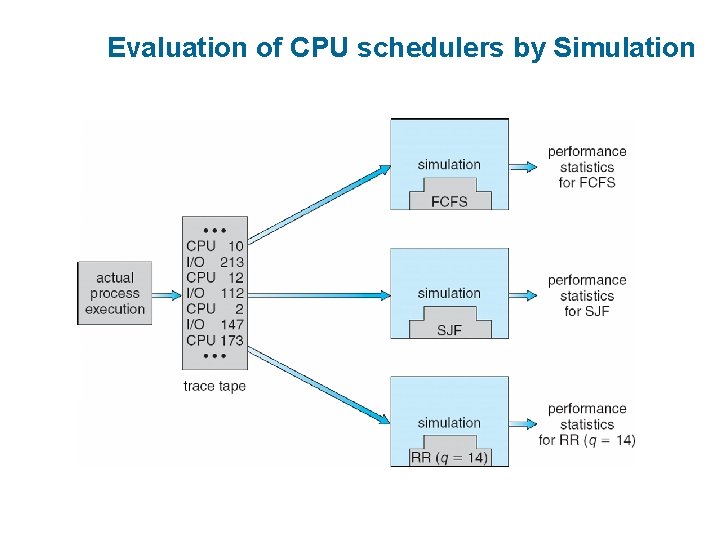

Simulations

- Queueing models are limited
- Simulations are more accurate
  - Programmed model of the computer system
  - Clock is a variable
  - Gather statistics indicating algorithm performance
  - Data to drive the simulation gathered via:
    - Random number generator according to probabilities
    - Distributions defined mathematically or empirically
    - Trace tapes, which record sequences of real events in real systems

Evaluation of CPU schedulers by Simulation

Implementation

- Even simulations have limited accuracy
- Just implement the new scheduler and test it in real systems
  - High cost, high risk
  - Environments vary
- Most flexible schedulers can be modified per-site or per-system
- Or APIs to modify priorities
- But again, environments vary
