CPSC 7373 Artificial Intelligence Lecture 5 Probabilistic Inference

CPSC 7373: Artificial Intelligence
Lecture 5: Probabilistic Inference
Jiang Bian, Fall 2014
University of Arkansas for Medical Sciences
University of Arkansas at Little Rock

From the last lecture

• Probability theory
• Bayes net: a concise representation of a joint probability distribution
• Independence
• Probabilistic inference: how to answer questions based on a Bayes net

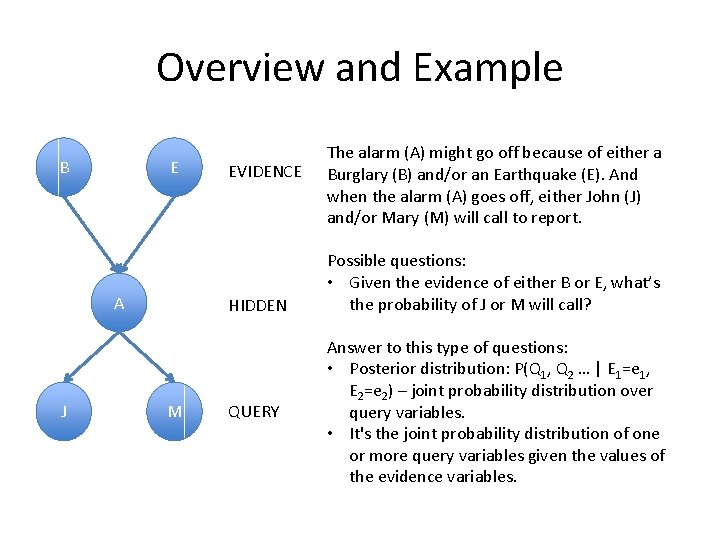

Overview and Example

The alarm (A) might go off because of a burglary (B), an earthquake (E), or both. When the alarm goes off, John (J), Mary (M), or both will call to report it. In any given query, each node plays one of three roles: evidence, hidden, or query.

Possible question:
• Given evidence about B or E, what is the probability that J or M will call?

Answer to this type of question:
• Posterior distribution: P(Q1, Q2, … | E1=e1, E2=e2, …), the joint probability distribution of one or more query variables given the values of the evidence variables.

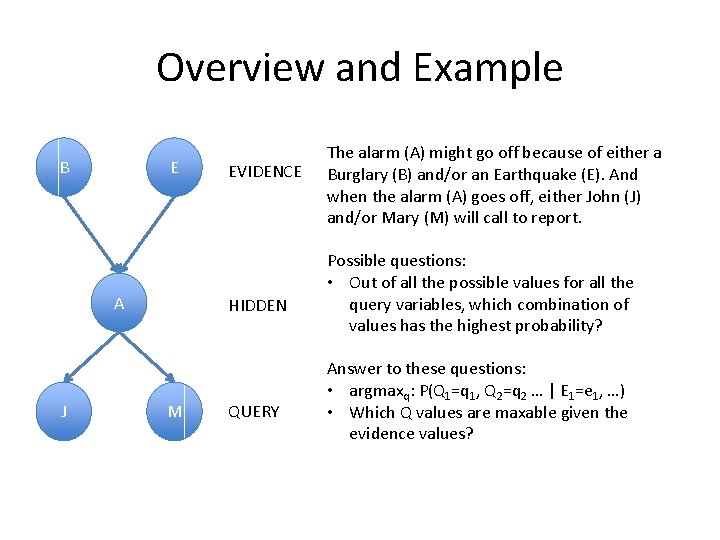

Overview and Example

Another possible question:
• Out of all the possible values for all the query variables, which combination of values has the highest probability?

Answer to this type of question:
• argmax_q P(Q1=q1, Q2=q2, … | E1=e1, …)
• That is, which assignment of the query variables is most probable given the evidence values?

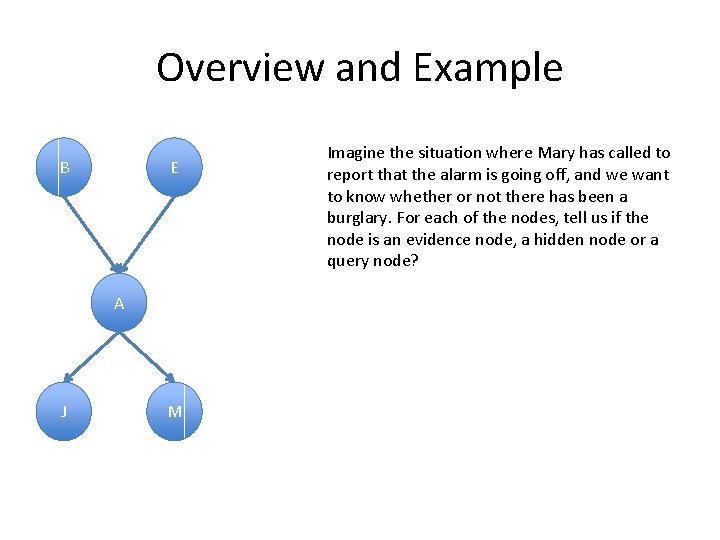

Overview and Example

Imagine that Mary has called to report that the alarm is going off, and we want to know whether or not there has been a burglary. For each of the nodes (B, E, A, J, M), is it an evidence node, a hidden node, or a query node?

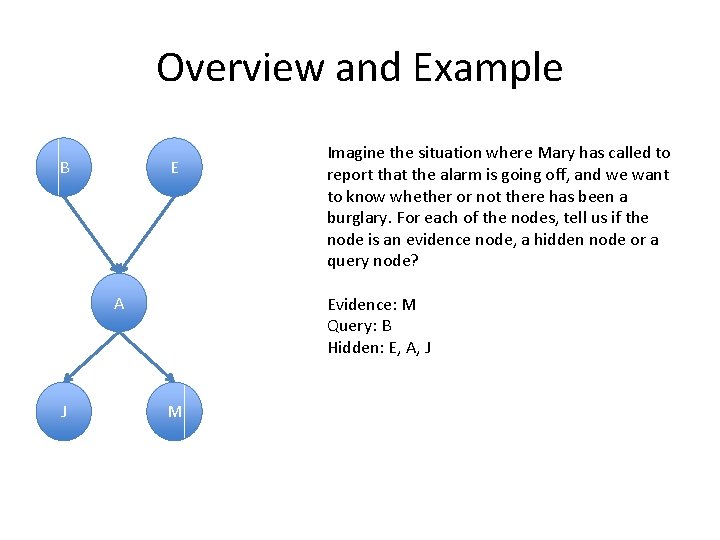

Overview and Example

With Mary's call as the evidence and the burglary as the query:
• Evidence: M
• Query: B
• Hidden: E, A, J

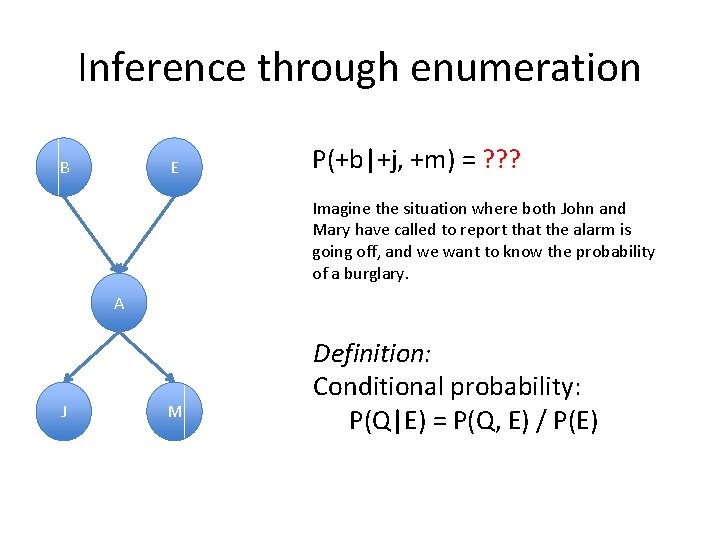

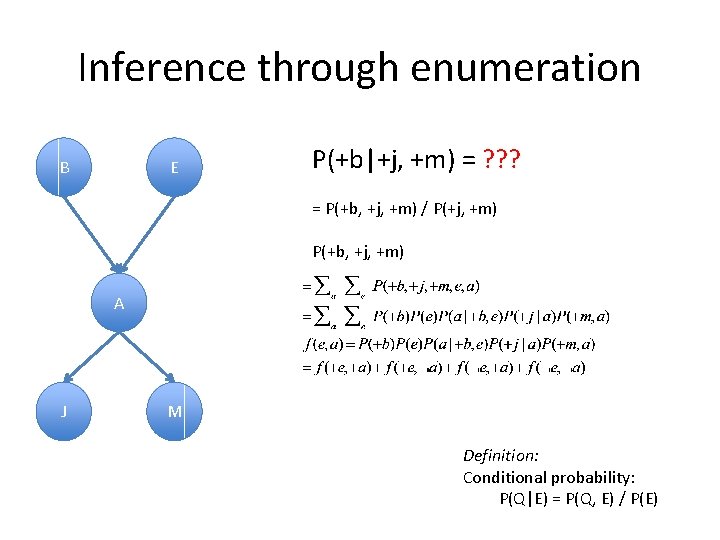

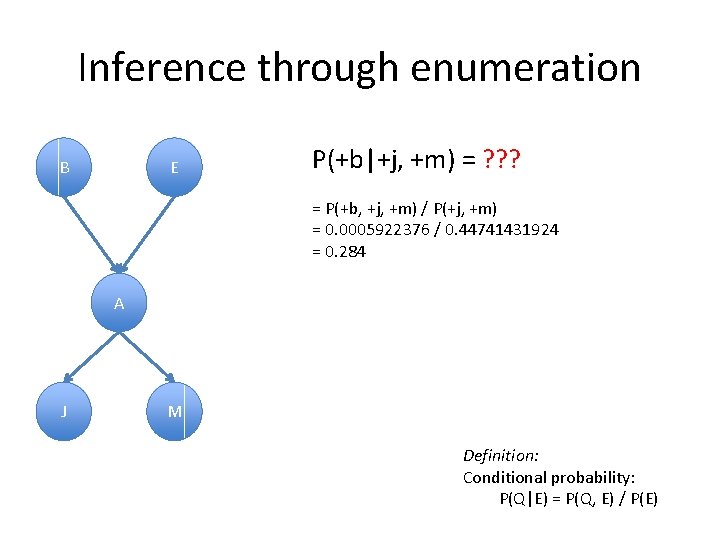

Inference through enumeration

Imagine that both John and Mary have called to report that the alarm is going off, and we want to know the probability of a burglary:

P(+b | +j, +m) = ?

Definition of conditional probability: P(Q | E) = P(Q, E) / P(E)

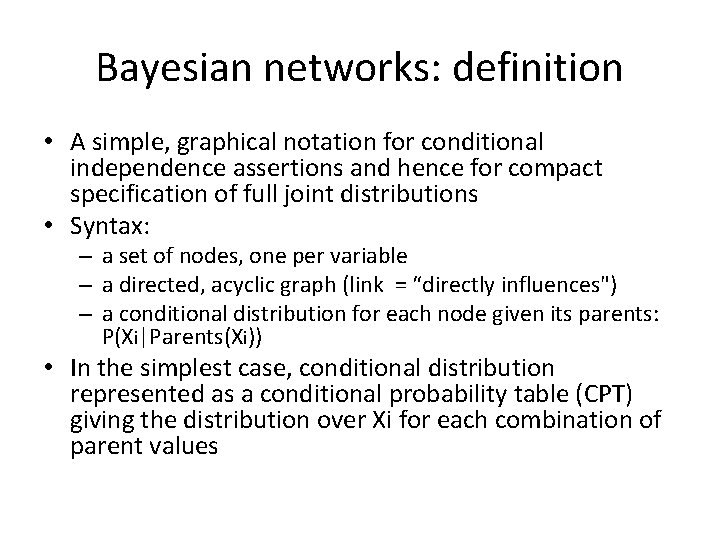

Bayesian networks: definition

• A simple, graphical notation for conditional independence assertions, and hence for compact specification of full joint distributions
• Syntax:
  – a set of nodes, one per variable
  – a directed, acyclic graph (link = "directly influences")
  – a conditional distribution for each node given its parents: P(Xi | Parents(Xi))
• In the simplest case, the conditional distribution is represented as a conditional probability table (CPT) giving the distribution over Xi for each combination of parent values

Inference through enumeration

P(+b | +j, +m) = P(+b, +j, +m) / P(+j, +m)

This follows from the definition of conditional probability, P(Q | E) = P(Q, E) / P(E). First term to compute: P(+b, +j, +m).

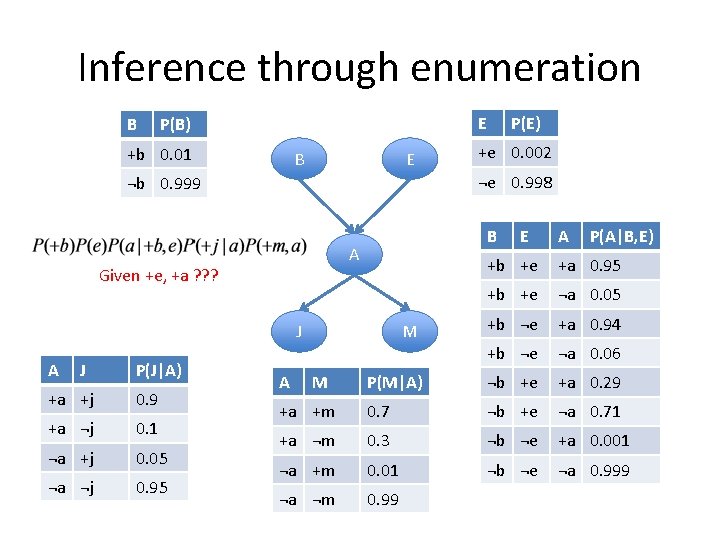

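Inference through enumeration

Conditional probability tables for the alarm network (B → A ← E, A → J, A → M):

P(B):

| B | P(B) |
|---|---|
| +b | 0.001 |
| ¬b | 0.999 |

P(E):

| E | P(E) |
|---|---|
| +e | 0.002 |
| ¬e | 0.998 |

P(A | B, E):

| B | E | +a | ¬a |
|---|---|---|---|
| +b | +e | 0.95 | 0.05 |
| +b | ¬e | 0.94 | 0.06 |
| ¬b | +e | 0.29 | 0.71 |
| ¬b | ¬e | 0.001 | 0.999 |

P(J | A):

| A | +j | ¬j |
|---|---|---|
| +a | 0.9 | 0.1 |
| ¬a | 0.05 | 0.95 |

P(M | A):

| A | +m | ¬m |
|---|---|---|
| +a | 0.7 | 0.3 |
| ¬a | 0.01 | 0.99 |

Given +e, +a: ?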

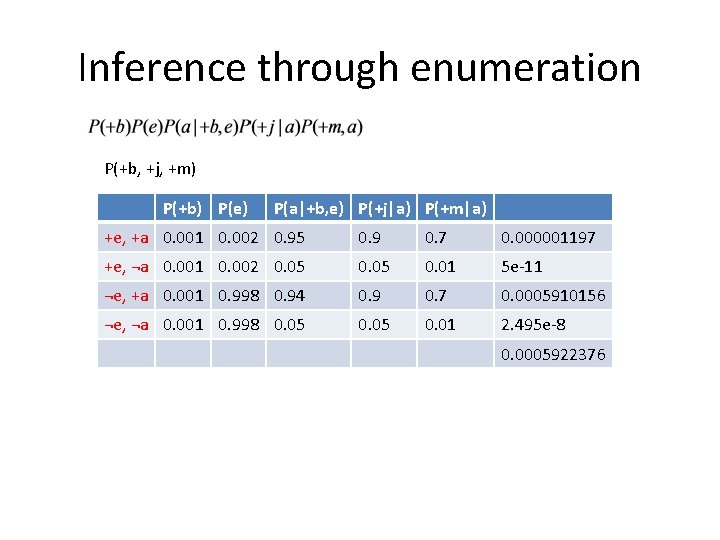

Inference through enumeration P(+b, +j, +m) P(+b) P(e) P(a|+b, e) P(+j|a) P(+m|a) +e, +a 0. 001 0. 002 0. 95 0. 9 0. 7 0. 000001197 +e, ¬a 0. 001 0. 002 0. 05 0. 01 5 e-11 ¬e, +a 0. 001 0. 998 0. 94 0. 9 0. 7 0. 0005910156 ¬e, ¬a 0. 001 0. 998 0. 05 0. 01 2. 495 e-8 0. 0005922376

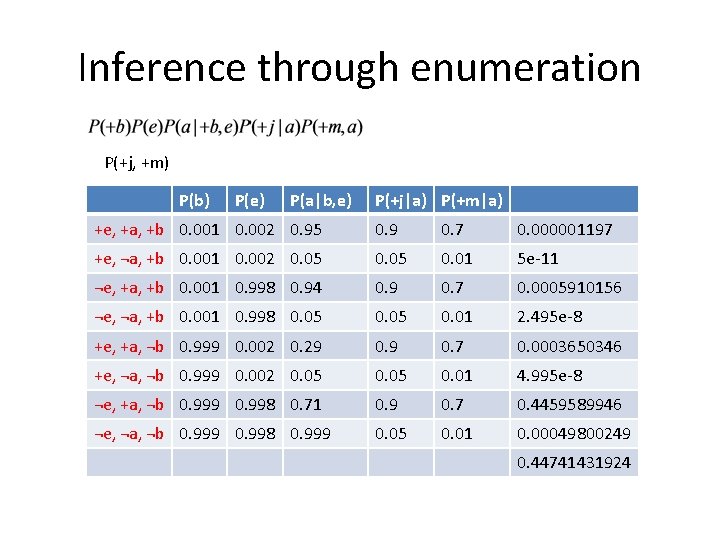

Inference through enumeration P(+j, +m) P(b) P(e) P(a|b, e) P(+j|a) P(+m|a) +e, +a, +b 0. 001 0. 002 0. 95 0. 9 0. 7 0. 000001197 +e, ¬a, +b 0. 001 0. 002 0. 05 0. 01 5 e-11 ¬e, +a, +b 0. 001 0. 998 0. 94 0. 9 0. 7 0. 0005910156 ¬e, ¬a, +b 0. 001 0. 998 0. 05 0. 01 2. 495 e-8 +e, +a, ¬b 0. 999 0. 002 0. 29 0. 7 0. 0003650346 +e, ¬a, ¬b 0. 999 0. 002 0. 05 0. 01 4. 995 e-8 ¬e, +a, ¬b 0. 999 0. 998 0. 71 0. 9 0. 7 0. 4459589946 ¬e, ¬a, ¬b 0. 999 0. 998 0. 999 0. 05 0. 01 0. 00049800249 0. 44741431924

Inference through enumeration B E P(+b|+j, +m) = ? ? ? = P(+b, +j, +m) / P(+j, +m) = 0. 0005922376 / 0. 44741431924 = 0. 284 A J M Definition: Conditional probability: P(Q|E) = P(Q, E) / P(E)
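The enumeration above can be sketched in a few lines of Python. This is a minimal illustration, not the lecture's own code: the helper names (`bern`, `joint`, `enumerate_posterior_b`) are hypothetical, and the CPT values are the ones used in the enumeration, with P(+b) = 0.001.

```python
from itertools import product

# CPTs of the alarm network; each value is P(variable = True | parents).
P_B = 0.001
P_E = 0.002
P_A = {(True, True): 0.95, (True, False): 0.94,
       (False, True): 0.29, (False, False): 0.001}  # keyed by (b, e)
P_J = {True: 0.9, False: 0.05}                      # keyed by a
P_M = {True: 0.7, False: 0.01}                      # keyed by a


def bern(p_true, value):
    """Probability that a Boolean variable takes `value`."""
    return p_true if value else 1.0 - p_true


def joint(b, e, a, j, m):
    """Full joint P(b, e, a, j, m) as the product of the five CPT entries."""
    return (bern(P_B, b) * bern(P_E, e) * bern(P_A[(b, e)], a)
            * bern(P_J[a], j) * bern(P_M[a], m))


def enumerate_posterior_b(j=True, m=True):
    """P(+b | j, m): sum the joint over the hidden variables, then normalize."""
    numerator = sum(joint(True, e, a, j, m)
                    for e, a in product([True, False], repeat=2))
    evidence = sum(joint(b, e, a, j, m)
                   for b, e, a in product([True, False], repeat=3))
    return numerator / evidence


posterior = enumerate_posterior_b()
print(round(posterior, 3))  # 0.284
```

The numerator loops over the 4 assignments of (E, A) and the denominator over the 8 assignments of (B, E, A), matching the rows of the two tables.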

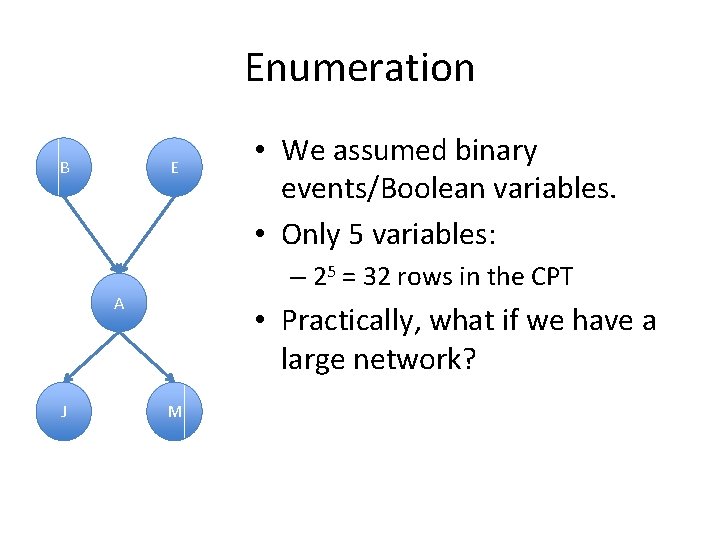

Enumeration

• We assumed binary events (Boolean variables).
• Only 5 variables: 2^5 = 32 rows in the full joint table.
• Practically, what if we have a large network?

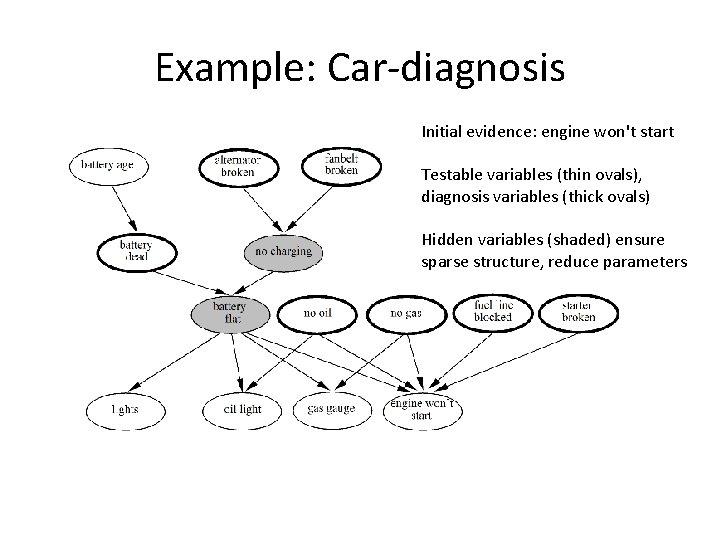

Example: Car diagnosis

• Initial evidence: the engine won't start.
• Testable variables are drawn as thin ovals, diagnosis variables as thick ovals.
• Hidden variables (shaded) ensure sparse structure and reduce the number of parameters.

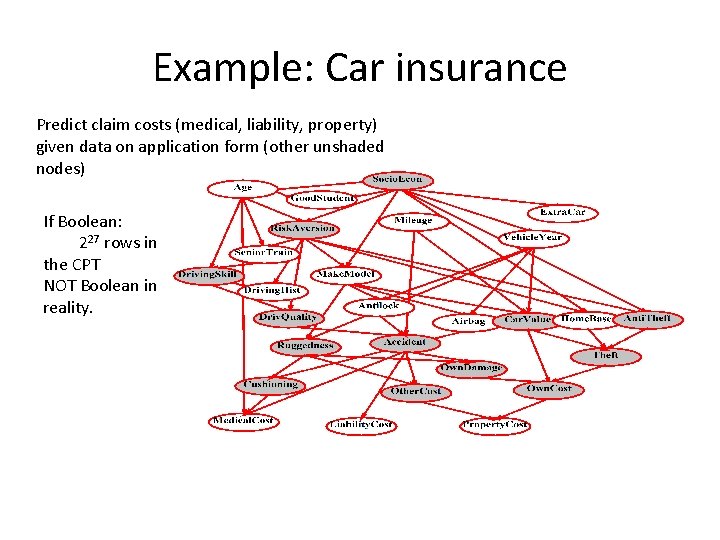

Example: Car insurance

• Predict claim costs (medical, liability, property) given data on the application form (the other unshaded nodes).
• If all variables were Boolean: 2^27 rows in the full joint table.
• In reality, the variables are not Boolean.

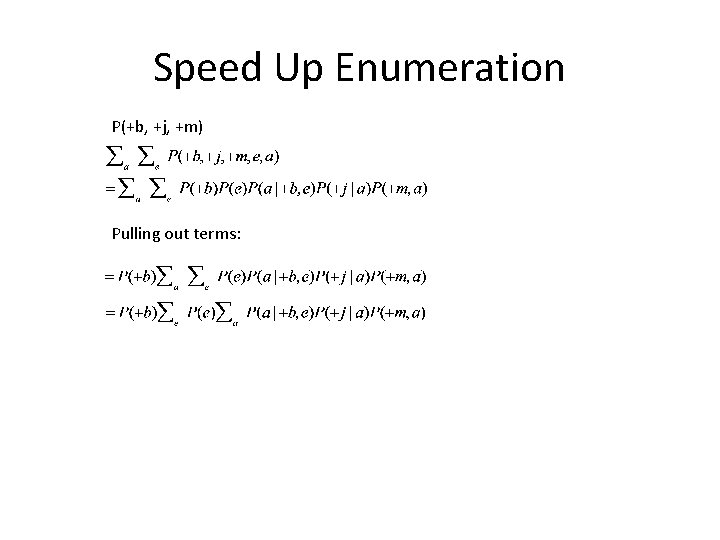

Speed Up Enumeration

Pulling out terms in the sum for P(+b, +j, +m):
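A sketch of what pulling out terms looks like for this query, assuming the usual factorization of the alarm network (B and E are parents of A; A is the parent of J and M). Factors that do not depend on a summation variable move outside that sum:

$$
\begin{aligned}
P(+b, +j, +m) &= \sum_{e} \sum_{a} P(+b)\, P(e)\, P(a \mid +b, e)\, P(+j \mid a)\, P(+m \mid a) \\
&= P(+b) \sum_{e} P(e) \sum_{a} P(a \mid +b, e)\, P(+j \mid a)\, P(+m \mid a)
\end{aligned}
$$

P(+b) is now multiplied once instead of once per row, and P(e) once per value of e.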

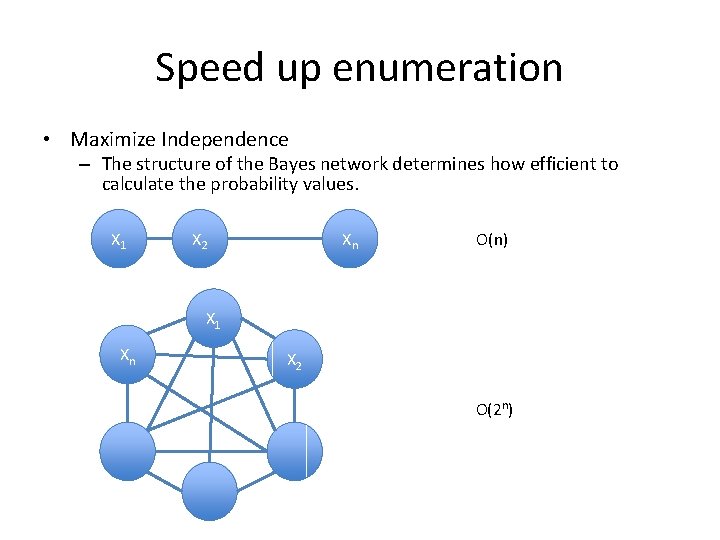

Speed up enumeration

• Maximize independence: the structure of the Bayes network determines how efficiently the probability values can be computed.
• Chain X1 → X2 → … → Xn: O(n)
• Network in which the nodes X1, X2, …, Xn are all connected to one another: O(2^n)

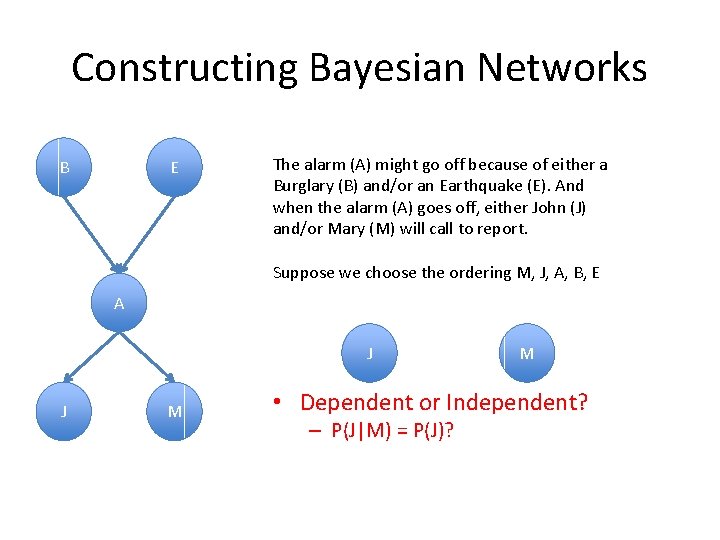

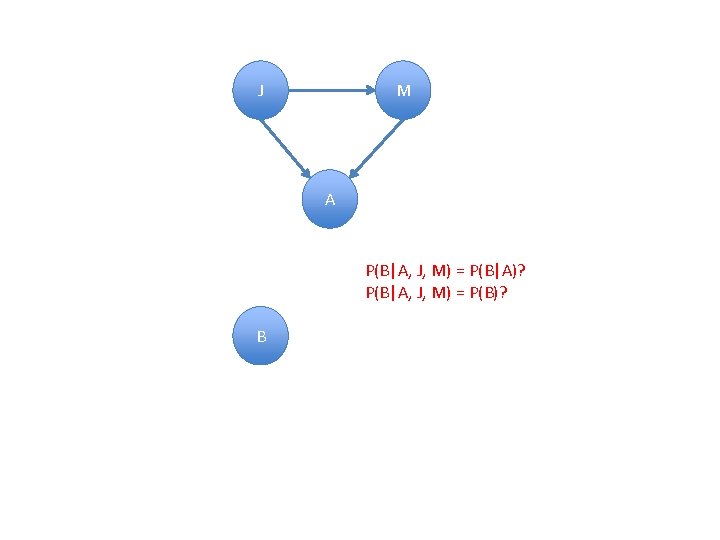

Constructing Bayesian Networks

The alarm (A) might go off because of a burglary (B), an earthquake (E), or both. When the alarm goes off, John (J), Mary (M), or both will call to report it.

Suppose we choose the ordering M, J, A, B, E.

• Adding J after M: dependent or independent?
  – P(J | M) = P(J)?

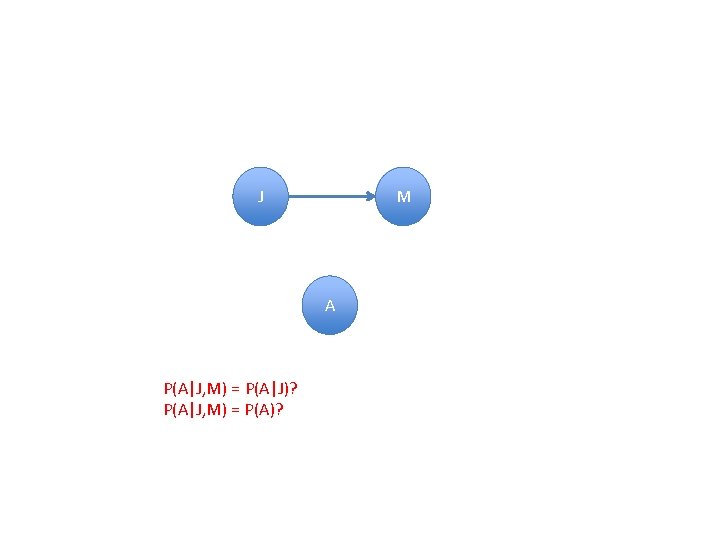

Adding A (nodes so far: M, J):
• P(A | J, M) = P(A | J)?
• P(A | J, M) = P(A)?

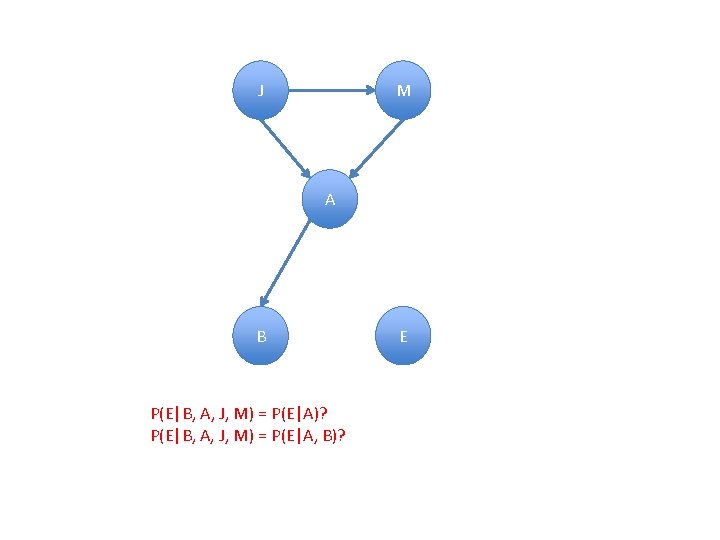

Adding B (nodes so far: M, J, A):
• P(B | A, J, M) = P(B | A)?
• P(B | A, J, M) = P(B)?

Adding E (nodes so far: M, J, A, B):
• P(E | B, A, J, M) = P(E | A)?
• P(E | B, A, J, M) = P(E | A, B)?

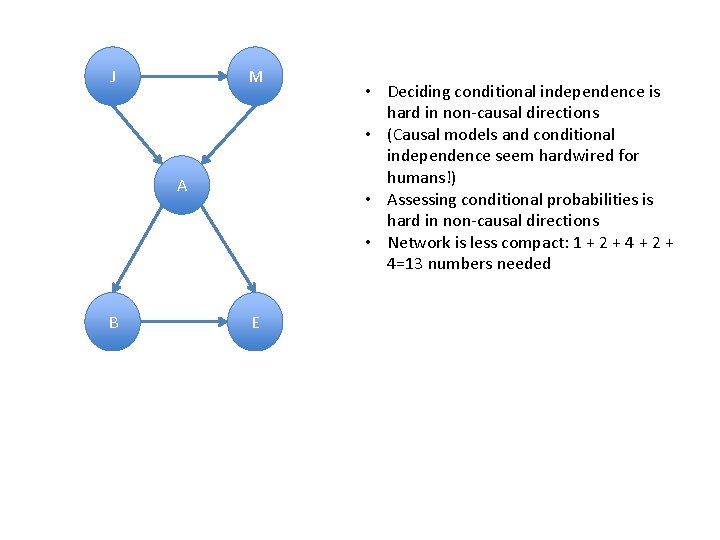

Resulting network for the ordering M, J, A, B, E:

• Deciding conditional independence is hard in non-causal directions.
• (Causal models and conditional independence seem hardwired for humans!)
• Assessing conditional probabilities is hard in non-causal directions.
• The network is less compact: 1 + 2 + 4 + 2 + 4 = 13 numbers needed.
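The count of 13 can be checked with a short sketch. The parent sets for the non-causal ordering are an assumption here (the slides pose them as questions); they are the ones that reproduce the total of 13. The function name `cpt_numbers` is hypothetical.

```python
def cpt_numbers(parents):
    """Independent numbers needed when every node is Boolean.

    A node with k parents needs one number, P(node = true | parents),
    for each of the 2**k combinations of parent values.
    """
    return sum(2 ** len(ps) for ps in parents.values())


# Causal ordering B, E, A, J, M (the original alarm network).
causal = {"B": [], "E": [], "A": ["B", "E"], "J": ["A"], "M": ["A"]}

# Ordering M, J, A, B, E; parent sets assumed, chosen to match the slide's total.
noncausal = {"M": [], "J": ["M"], "A": ["J", "M"], "B": ["A"], "E": ["A", "B"]}

print(cpt_numbers(causal))     # 10
print(cpt_numbers(noncausal))  # 13
```

The same joint distribution is represented in both cases; the causal ordering simply needs fewer numbers.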

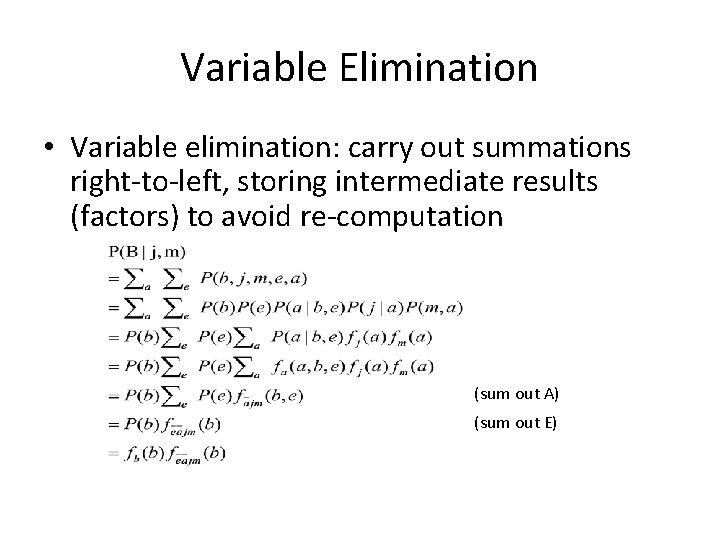

Variable Elimination

• Variable elimination: carry out summations right-to-left, storing intermediate results (factors) to avoid recomputation.
• In the example shown, A is summed out first, then E.

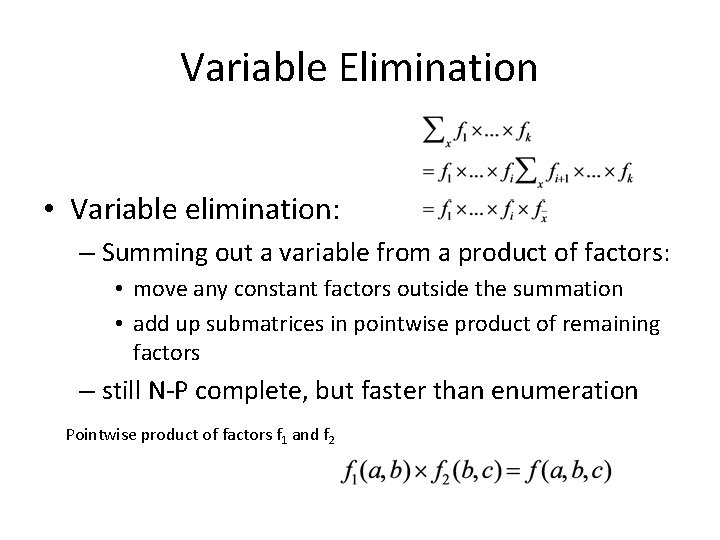

Variable Elimination

• Summing out a variable from a product of factors:
  – move any constant factors outside the summation
  – add up submatrices in the pointwise product of the remaining factors
• Exact inference is still NP-hard in general, but variable elimination is faster than enumeration.
• Figure: pointwise product of factors f1 and f2.
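The two factor operations named above can be sketched as follows. This is an illustrative sketch only: the function names and the dict-based factor representation are assumptions, and the numbers in f1 and f2 are made up for the example rather than taken from the slides.

```python
from itertools import product


def pointwise_product(f1, vars1, f2, vars2):
    """Multiply two factors over Boolean variables.

    A factor is a dict mapping a tuple of truth values (ordered like its
    variable list) to a number.
    """
    out_vars = list(vars1) + [v for v in vars2 if v not in vars1]
    out = {}
    for values in product([True, False], repeat=len(out_vars)):
        row = dict(zip(out_vars, values))
        out[values] = (f1[tuple(row[v] for v in vars1)]
                       * f2[tuple(row[v] for v in vars2)])
    return out, out_vars


def sum_out(var, factor, variables):
    """Sum a variable out of a factor, returning the smaller factor."""
    i = variables.index(var)
    out = {}
    for values, p in factor.items():
        key = values[:i] + values[i + 1:]
        out[key] = out.get(key, 0.0) + p
    return out, variables[:i] + variables[i + 1:]


# Illustrative numbers (not from the slides): f1(A, B) and f2(B, C).
f1 = {(True, True): 0.3, (True, False): 0.7,
      (False, True): 0.9, (False, False): 0.1}
f2 = {(True, True): 0.2, (True, False): 0.8,
      (False, True): 0.6, (False, False): 0.4}

f3, f3_vars = pointwise_product(f1, ["A", "B"], f2, ["B", "C"])  # over A, B, C
f4, f4_vars = sum_out("B", f3, f3_vars)                          # over A, C
```

Here `f3` has 2^3 = 8 entries and `f4` has 4, which is the "add up submatrices" step: each entry of `f4` is the sum of the two entries of `f3` that differ only in B.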

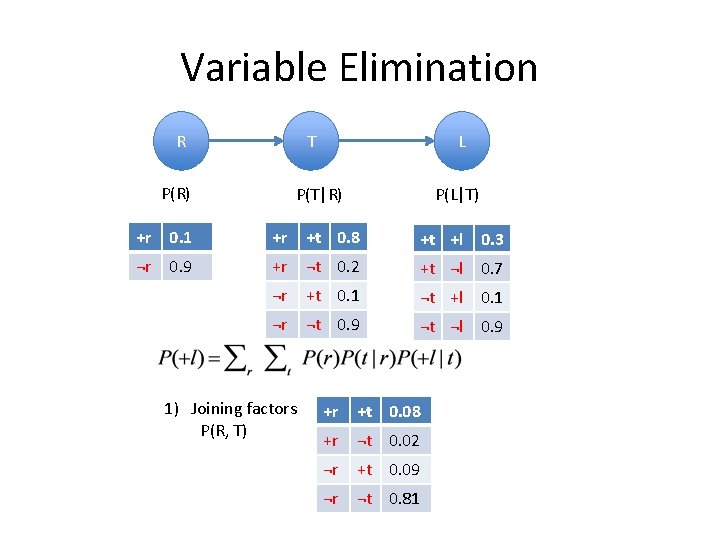

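Variable Elimination

Chain network R → T → L with the following CPTs.

P(R):

| R | P(R) |
|---|---|
| +r | 0.1 |
| ¬r | 0.9 |

P(T | R):

| R | +t | ¬t |
|---|---|---|
| +r | 0.8 | 0.2 |
| ¬r | 0.1 | 0.9 |

P(L | T):

| T | +l | ¬l |
|---|---|---|
| +t | 0.3 | 0.7 |
| ¬t | 0.1 | 0.9 |

1) Joining factors: P(R, T) = P(R) P(T | R)

| R | T | P(R, T) |
|---|---|---|
| +r | +t | 0.08 |
| +r | ¬t | 0.02 |
| ¬r | +t | 0.09 |
| ¬r | ¬t | 0.81 |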

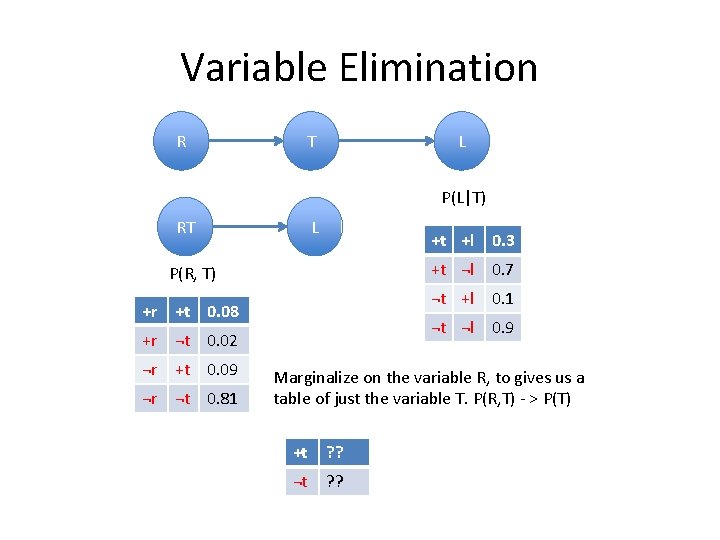

Variable Elimination

2) Marginalize out the variable R from P(R, T) to get a table over T alone: P(R, T) → P(T)

| T | P(T) |
|---|---|
| +t | ? |
| ¬t | ? |

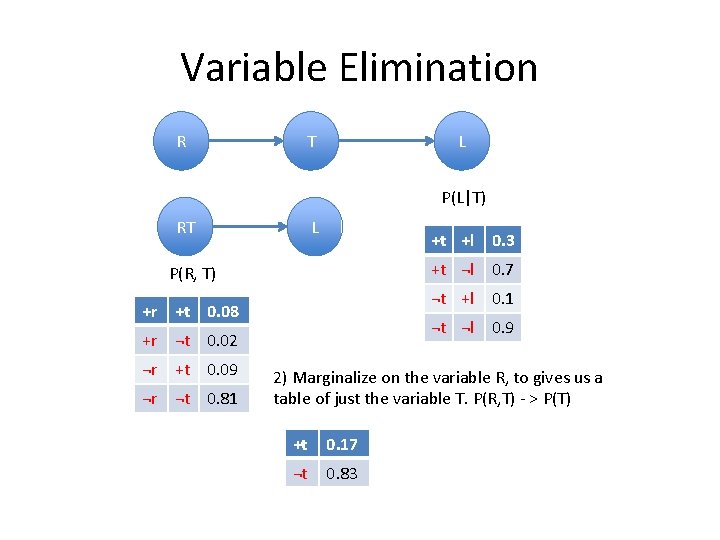

Variable Elimination

2) Marginalize out the variable R from P(R, T) to get a table over T alone: P(R, T) → P(T)

| T | P(T) |
|---|---|
| +t | 0.08 + 0.09 = 0.17 |
| ¬t | 0.02 + 0.81 = 0.83 |

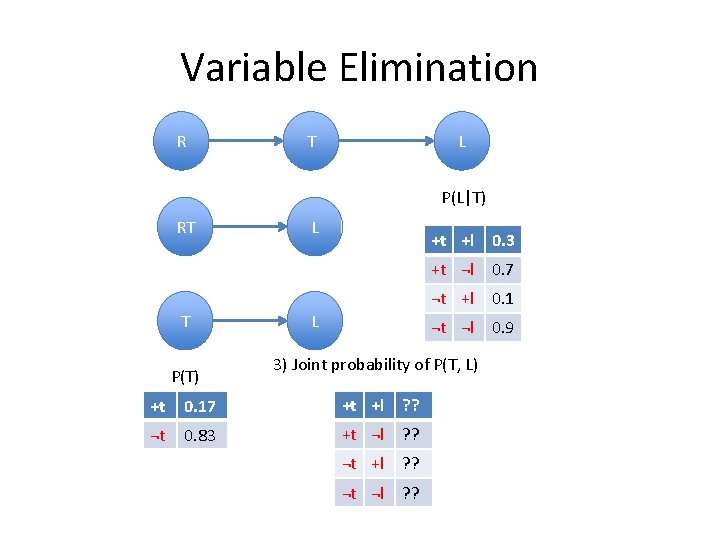

Variable Elimination

3) Join P(T) with P(L | T) to get the joint probability P(T, L), using P(+t) = 0.17 and P(¬t) = 0.83:

| T | L | P(T, L) |
|---|---|---|
| +t | +l | ? |
| +t | ¬l | ? |
| ¬t | +l | ? |
| ¬t | ¬l | ? |

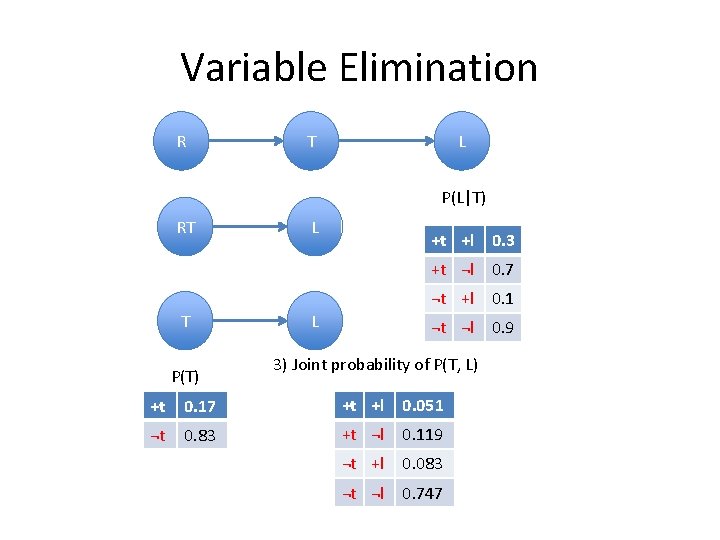

Variable Elimination

3) Join P(T) with P(L | T) to get the joint probability P(T, L), using P(+t) = 0.17 and P(¬t) = 0.83:

| T | L | P(T, L) |
|---|---|---|
| +t | +l | 0.17 × 0.3 = 0.051 |
| +t | ¬l | 0.17 × 0.7 = 0.119 |
| ¬t | +l | 0.83 × 0.1 = 0.083 |
| ¬t | ¬l | 0.83 × 0.9 = 0.747 |

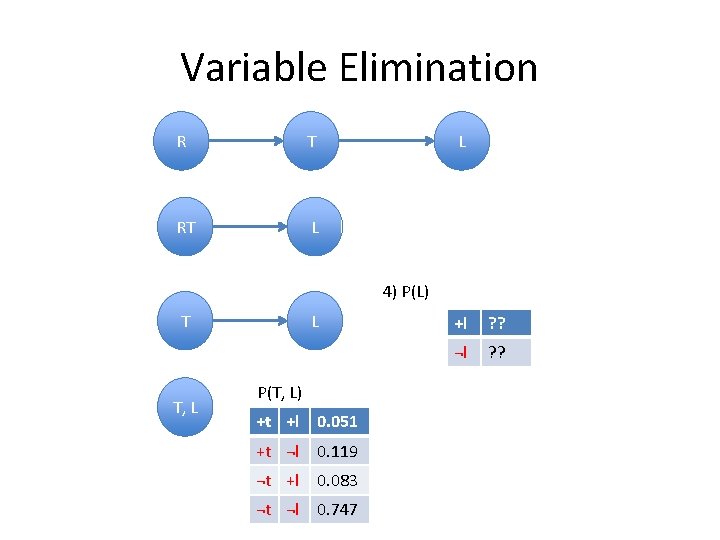

Variable Elimination

4) Marginalize out T from P(T, L) to get P(L):

| L | P(L) |
|---|---|
| +l | ? |
| ¬l | ? |

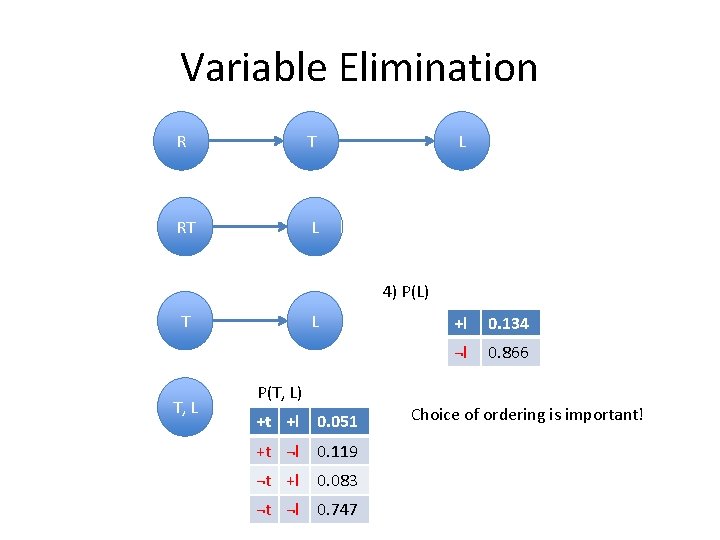

Variable Elimination

4) Marginalize out T from P(T, L) to get P(L):

| L | P(L) |
|---|---|
| +l | 0.051 + 0.083 = 0.134 |
| ¬l | 0.119 + 0.747 = 0.866 |

Choice of ordering is important!
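The four steps for the chain R → T → L can be replayed in code. This is a minimal sketch using plain dictionaries as factors; the variable names are hypothetical, and "-" stands in for "¬" in the keys.

```python
# CPTs for the chain R -> T -> L, as given in the slides.
P_R = {"+r": 0.1, "-r": 0.9}
P_T_given_R = {("+r", "+t"): 0.8, ("+r", "-t"): 0.2,
               ("-r", "+t"): 0.1, ("-r", "-t"): 0.9}
P_L_given_T = {("+t", "+l"): 0.3, ("+t", "-l"): 0.7,
               ("-t", "+l"): 0.1, ("-t", "-l"): 0.9}

# 1) Join P(R) and P(T | R) into the factor P(R, T).
P_RT = {(r, t): P_R[r] * p for (r, t), p in P_T_given_R.items()}

# 2) Sum out R to get P(T).
P_T = {}
for (r, t), p in P_RT.items():
    P_T[t] = P_T.get(t, 0.0) + p

# 3) Join P(T) and P(L | T) into the factor P(T, L).
P_TL = {(t, l): P_T[t] * p for (t, l), p in P_L_given_T.items()}

# 4) Sum out T to get P(L).
P_L = {}
for (t, l), p in P_TL.items():
    P_L[l] = P_L.get(l, 0.0) + p

print({k: round(v, 3) for k, v in P_L.items()})  # {'+l': 0.134, '-l': 0.866}
```

No intermediate factor here has more than four entries, whereas plain enumeration would build the full eight-row joint over R, T, and L.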

Approximate Inference: Sampling

• Example: the joint probability of heads and tails for a 1-cent coin and a 5-cent coin.
• Advantages:
  – Computationally easier.
  – Works even without CPTs.
• Figure: table of sampled outcomes (H/T) for the 1-cent and 5-cent coins.

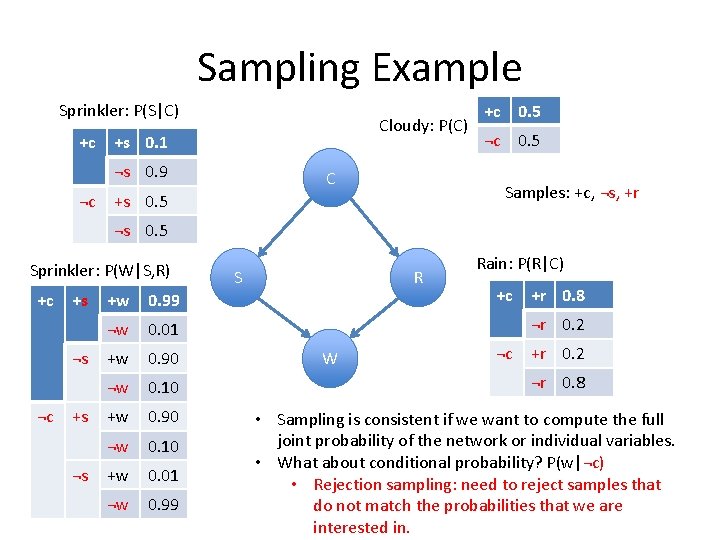

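Sampling Example

Network: Cloudy (C) → Sprinkler (S), Cloudy (C) → Rain (R), Sprinkler and Rain → Wet grass (W).

Cloudy, P(C):

| C | P(C) |
|---|---|
| +c | 0.5 |
| ¬c | 0.5 |

Sprinkler, P(S | C):

| C | +s | ¬s |
|---|---|---|
| +c | 0.1 | 0.9 |
| ¬c | 0.5 | 0.5 |

Rain, P(R | C):

| C | +r | ¬r |
|---|---|---|
| +c | 0.8 | 0.2 |
| ¬c | 0.2 | 0.8 |

Wet grass, P(W | S, R). The slide lists the value pairs 0.99/0.01, 0.90/0.10, and 0.01/0.99; the row assignment below assumes that the 0.90/0.10 pair applies to both mixed rows:

| S | R | +w | ¬w |
|---|---|---|---|
| +s | +r | 0.99 | 0.01 |
| +s | ¬r | 0.90 | 0.10 |
| ¬s | +r | 0.90 | 0.10 |
| ¬s | ¬r | 0.01 | 0.99 |

Sample so far: +c, ¬s, +r

• Sampling is consistent for estimating the full joint probability of the network or the probabilities of individual variables.
• What about a conditional probability such as P(w | ¬c)?
• Rejection sampling: reject the samples that do not match the evidence we are conditioning on (here, every sample with +c).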
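A minimal sketch of rejection sampling for P(+w | ¬c) on this network. The function names are hypothetical, and the wet-grass table is an assumption: it uses 0.99, 0.90, 0.90, 0.01 for P(+w | S, R), which is one reading of the slide's values.

```python
import random

# CPTs as P(variable = True | parents). P_W row assignment is an assumption.
P_C = 0.5
P_S = {True: 0.1, False: 0.5}                        # P(+s | c)
P_R = {True: 0.8, False: 0.2}                        # P(+r | c)
P_W = {(True, True): 0.99, (True, False): 0.90,
       (False, True): 0.90, (False, False): 0.01}    # P(+w | s, r)


def prior_sample(rng):
    """Sample (c, s, r, w) in topological order, parents before children."""
    c = rng.random() < P_C
    s = rng.random() < P_S[c]
    r = rng.random() < P_R[c]
    w = rng.random() < P_W[(s, r)]
    return c, s, r, w


def rejection_sample_w_given_not_c(n, seed=0):
    """Estimate P(+w | not c) by discarding every sample with +c."""
    rng = random.Random(seed)
    kept = wet = 0
    for _ in range(n):
        c, s, r, w = prior_sample(rng)
        if c:
            continue  # rejected: inconsistent with the evidence (not c)
        kept += 1
        wet += w
    return wet / kept if kept else float("nan")


estimate = rejection_sample_w_given_not_c(200_000)
```

Under the assumed table the exact value is 0.5·0.2·0.99 + 0.5·0.8·0.90 + 0.5·0.2·0.90 + 0.5·0.8·0.01 = 0.553, and about half of the samples are rejected because P(+c) = 0.5.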

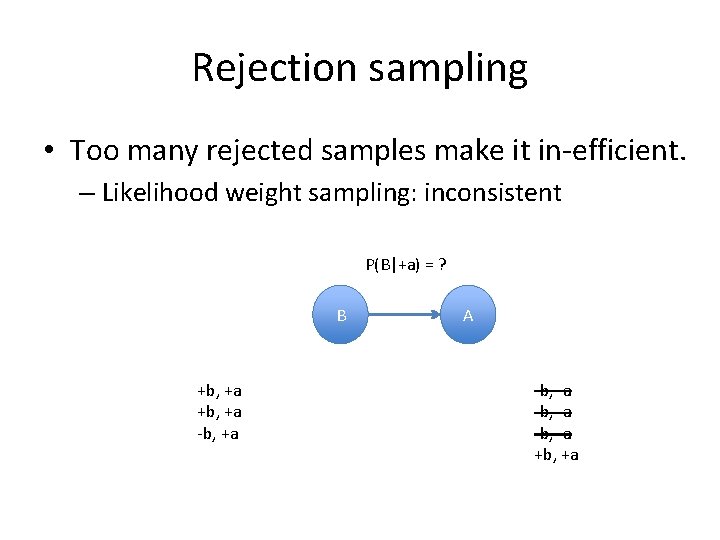

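Rejection sampling

• Too many rejected samples make it inefficient.
• Likelihood weighting: fix the evidence variables and sample only the others. Without weights, the resulting samples are inconsistent.
• Example query: P(B | +a) = ?
• Samples shown: (+b, +a), (¬b, +a), (¬b, ¬a), (+b, +a)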

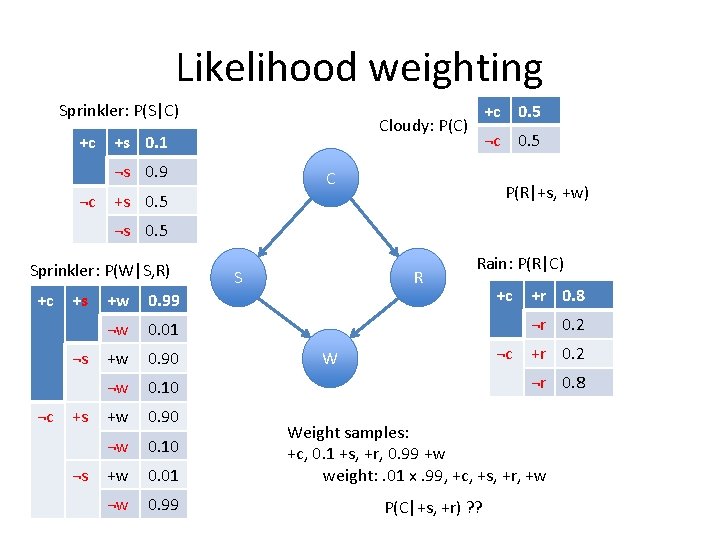

Likelihood weighting

Same sprinkler network and CPTs as in the sampling example: Cloudy P(C), Sprinkler P(S | C), Rain P(R | C), Wet grass P(W | S, R).

Query: P(R | +s, +w)

Weighted sample:
• Sample C: +c
• S is evidence, so fix +s and record the weight P(+s | +c) = 0.1
• Sample R: +r
• W is evidence, so fix +w and record the weight P(+w | +s, +r) = 0.99
• Result: sample (+c, +s, +r, +w) with weight 0.1 × 0.99 = 0.099

What about P(C | +s, +r)?
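The weighting procedure for the query P(R | +s, +w) might look like the sketch below. Function names are hypothetical, and the wet-grass entries P(+w | +s, +r) = 0.99 and P(+w | +s, ¬r) = 0.90 are assumed readings of the slide's table.

```python
import random

# CPTs as P(variable = True | parents).
P_C = 0.5
P_S = {True: 0.1, False: 0.5}              # P(+s | c)
P_R = {True: 0.8, False: 0.2}              # P(+r | c)
P_W_GIVEN_S = {True: 0.99, False: 0.90}    # P(+w | +s, r), keyed by r (assumed)


def weighted_sample(rng):
    """Sample the non-evidence variables C and R; weight by the evidence."""
    c = rng.random() < P_C
    weight = P_S[c]              # evidence +s is fixed, not sampled
    r = rng.random() < P_R[c]
    weight *= P_W_GIVEN_S[r]     # evidence +w is fixed, not sampled
    return r, weight


def likelihood_weighting(n, seed=0):
    """Estimate P(+r | +s, +w) as a ratio of summed weights."""
    rng = random.Random(seed)
    total = rain = 0.0
    for _ in range(n):
        r, weight = weighted_sample(rng)
        total += weight
        if r:
            rain += weight
    return rain / total


estimate = likelihood_weighting(100_000)
```

No sample is thrown away. With these numbers the exact answer is 0.0891 / 0.2781 ≈ 0.320, and a sample that draws +c and +r carries the weight 0.1 × 0.99 = 0.099 from the slide.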

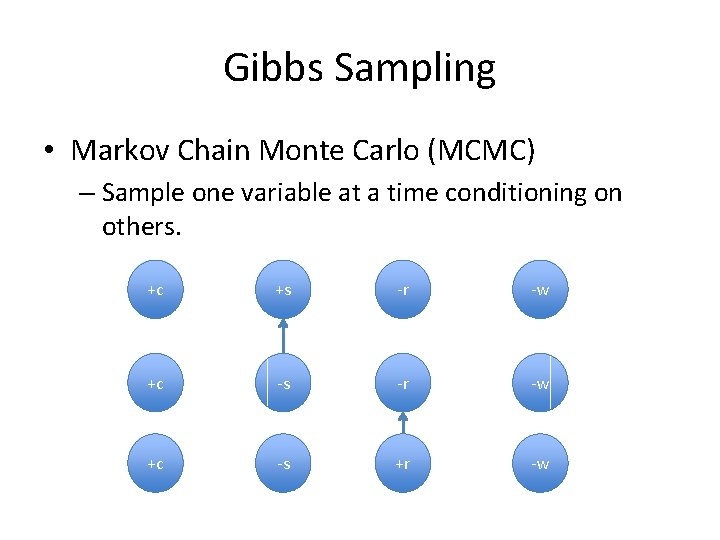

Gibbs Sampling

• Markov Chain Monte Carlo (MCMC): sample one variable at a time, conditioning on the current values of all the others.
• Example states: (+c, +s, ¬r, ¬w) and (+c, ¬s, +r, ¬w)
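A minimal Gibbs sampling sketch on the sprinkler network, estimating P(+r | +s, +w) as an example query (the query, the function names, the burn-in length, and the wet-grass row assignment are all assumptions made for illustration). Each step resamples one non-evidence variable from its distribution given the current values of all the others, computed here by normalizing the joint.

```python
import random

# CPTs as P(variable = True | parents). P_W row assignment is an assumption.
P_C = 0.5
P_S = {True: 0.1, False: 0.5}
P_R = {True: 0.8, False: 0.2}
P_W = {(True, True): 0.99, (True, False): 0.90,
       (False, True): 0.90, (False, False): 0.01}


def bern(p_true, value):
    """Probability that a Boolean variable takes `value`."""
    return p_true if value else 1.0 - p_true


def joint(c, s, r, w):
    """Full joint of the sprinkler network as a product of CPT entries."""
    return (bern(P_C, c) * bern(P_S[c], s)
            * bern(P_R[c], r) * bern(P_W[(s, r)], w))


def gibbs_rain_given_s_w(n, burn_in=1000, seed=0):
    """Estimate P(+r | +s, +w); evidence s and w stay fixed at True."""
    rng = random.Random(seed)
    state = {"c": True, "s": True, "r": False, "w": True}
    rain = 0
    for step in range(n + burn_in):
        for var in ("c", "r"):  # one non-evidence variable at a time
            p_true = joint(**{**state, var: True})
            p_false = joint(**{**state, var: False})
            state[var] = rng.random() < p_true / (p_true + p_false)
        if step >= burn_in:
            rain += state["r"]
    return rain / n


estimate = gibbs_rain_given_s_w(20_000)
```

Unlike the earlier samplers, consecutive states are correlated because each one differs from the previous state in at most one variable per update; the estimate still approaches the exact value of about 0.320 under the assumed tables.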

Monty Hall Problem

• Suppose you're on a game show, and you're given the choice of three doors: behind one door is a car; behind the others, goats. You pick a door, say No. 2 (but the door is not opened), and the host, who knows what's behind the doors, opens another door, say No. 1, which has a goat. He then says to you, "Do you want to pick door No. 3?" Is it to your advantage to switch your choice?
• Notation: C is the door hiding the car, H is the door the host opens, S is the door you selected.
• P(C=3 | H=1, S=2) = ?
• P(C=2 | H=1, S=2) = ?

Monty Hall Problem

With your pick S=2 and the host opening door H=1:
• P(C=3 | H=1, S=2) = 2/3 (switching wins)
• P(C=2 | H=1, S=2) = 1/3 (staying wins)

Why?

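Monty Hall Problem

• Prior: P(C=1 | S=2) = P(C=2 | S=2) = P(C=3 | S=2) = 1/3
• Host's choice, assuming he picks at random when both unchosen doors hide goats: P(H=1 | C=1, S=2) = 0, P(H=1 | C=2, S=2) = 1/2, P(H=1 | C=3, S=2) = 1
• Bayes' rule:

$$
P(C=3 \mid H=1, S=2) = \frac{P(H=1 \mid C=3, S=2)\, P(C=3 \mid S=2)}{\sum_{i=1}^{3} P(H=1 \mid C=i, S=2)\, P(C=i \mid S=2)} = \frac{1 \cdot \tfrac{1}{3}}{0 \cdot \tfrac{1}{3} + \tfrac{1}{2} \cdot \tfrac{1}{3} + 1 \cdot \tfrac{1}{3}} = \frac{2}{3}
$$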
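The Bayes' rule computation can be checked exactly with rational arithmetic. This sketch assumes the standard host model (he never opens your door or the car's door, and chooses uniformly when two goat doors are available); the function names are hypothetical.

```python
from fractions import Fraction

DOORS = (1, 2, 3)


def host_prob(h, c, s):
    """P(H=h | C=c, S=s) under the assumed host behavior."""
    if h == s or h == c:
        return Fraction(0)  # host never opens the chosen door or the car door
    options = [d for d in DOORS if d != s and d != c]
    return Fraction(1, len(options))


def posterior_car(c, h, s):
    """P(C=c | H=h, S=s) by Bayes' rule with a uniform prior over doors."""
    prior = Fraction(1, 3)
    numerator = host_prob(h, c, s) * prior
    evidence = sum(host_prob(h, i, s) * prior for i in DOORS)
    return numerator / evidence


print(posterior_car(3, 1, 2))  # 2/3
print(posterior_car(2, 1, 2))  # 1/3
```

The asymmetry comes entirely from `host_prob`: when the car is behind door 3 the host is forced to open door 1, but when it is behind door 2 he opens door 1 only half the time.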
