- Slides: 37

CPS 110: Implementing threads/locks on a uni-processor Landon Cox

Recall, thread interactions

1. Threads can access shared data
   - Use locks, monitors, and semaphores
   - What we’ve done so far
2. Threads also share hardware
   - CPU (uni-processor)
   - Memory

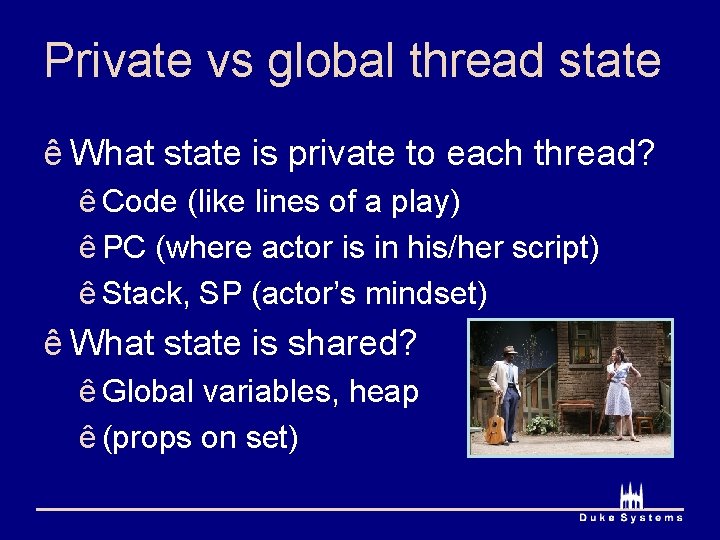

Private vs global thread state

- What state is private to each thread?
  - Code (like lines of a play)
  - PC (where actor is in his/her script)
  - Stack, SP (actor’s mindset)
- What state is shared?
  - Global variables, heap (props on set)

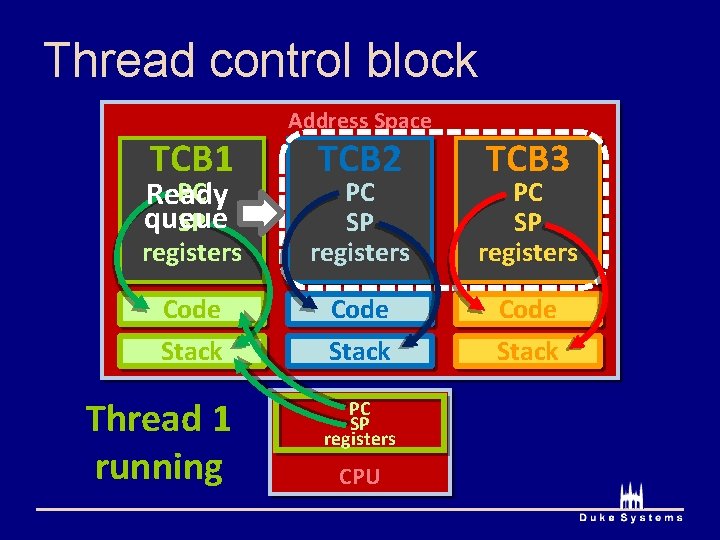

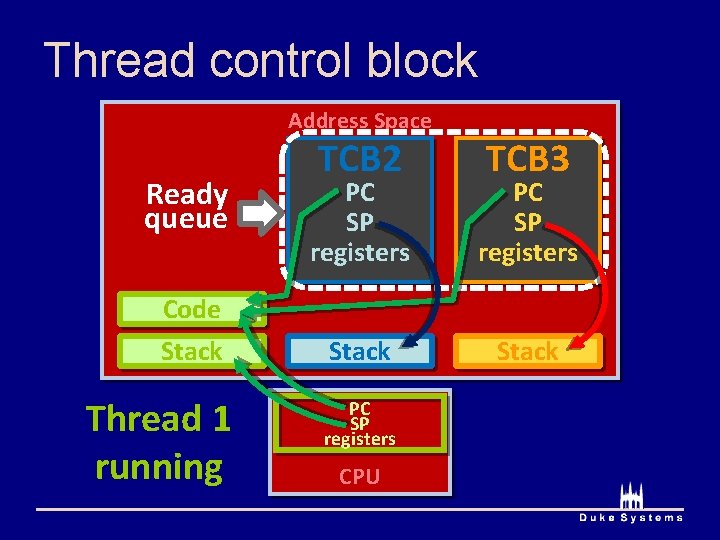

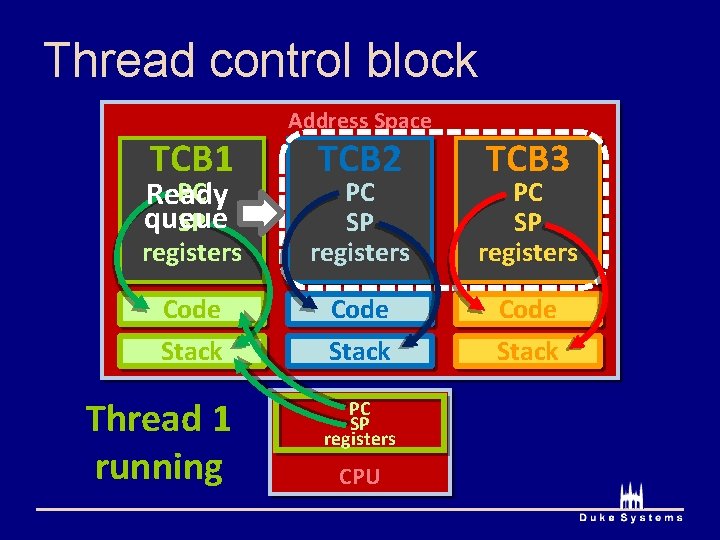

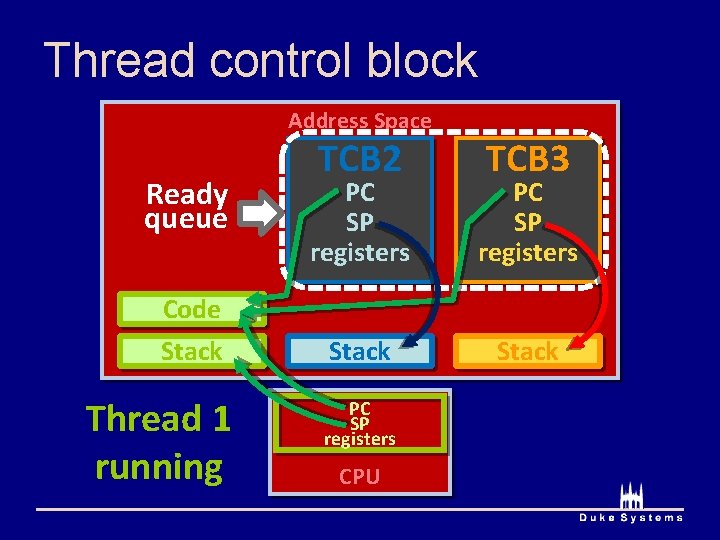

Thread control block (TCB)

- Running thread’s private data is on CPU
- Non-running thread’s private state is in TCB
- Thread control block (TCB)
  - Container for non-running thread’s private data
  - (PC, code, SP, stack, registers)

Thread control block (figure: an address space holding code, stack, and TCB 1, TCB 2, TCB 3; a ready queue of TCBs, each with saved PC, SP, and registers; Thread 1 running, with its PC, SP, and registers on the CPU)

Thread control block (figure: an address space holding code and stacks; TCB 2 and TCB 3 on the ready queue, each with saved PC, SP, and registers; Thread 1 running, with its PC, SP, and registers on the CPU)

Thread states

- Running
  - Currently using the CPU
- Ready
  - Ready to run, but waiting for the CPU
- Blocked
  - Stuck in lock (), wait () or down ()

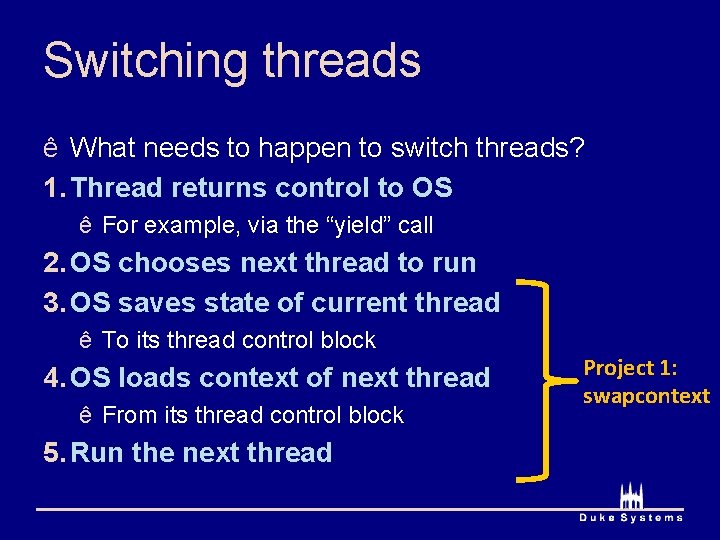

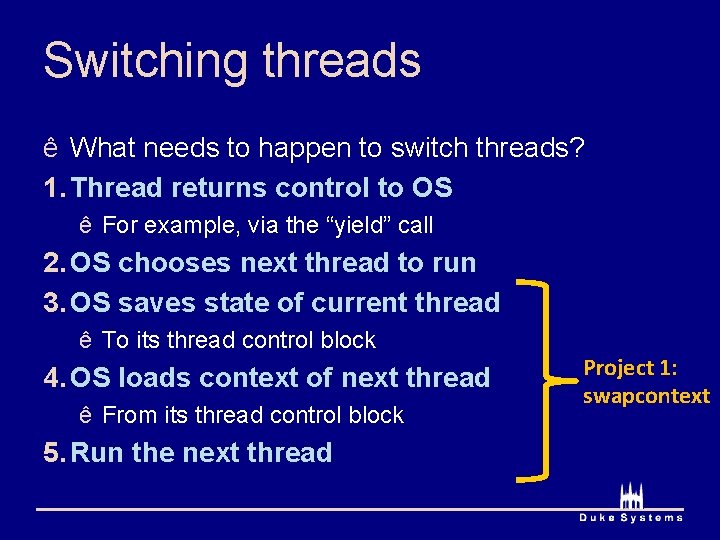

Switching threads

What needs to happen to switch threads?

1. Thread returns control to OS
   - For example, via the “yield” call
2. OS chooses next thread to run
3. OS saves state of current thread
   - To its thread control block
4. OS loads context of next thread
   - From its thread control block
5. Run the next thread

Project 1: swapcontext

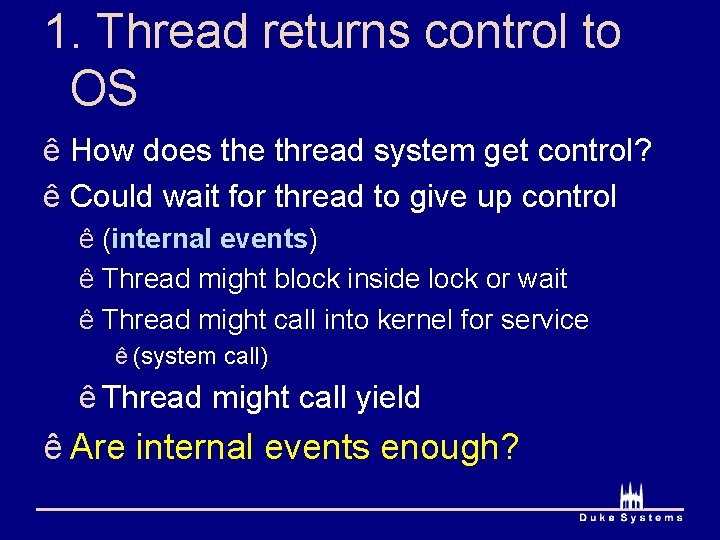

1. Thread returns control to OS
   - How does the thread system get control?
   - Could wait for thread to give up control (internal events)
     - Thread might block inside lock or wait
     - Thread might call into kernel for service (system call)
     - Thread might call yield
   - Are internal events enough?

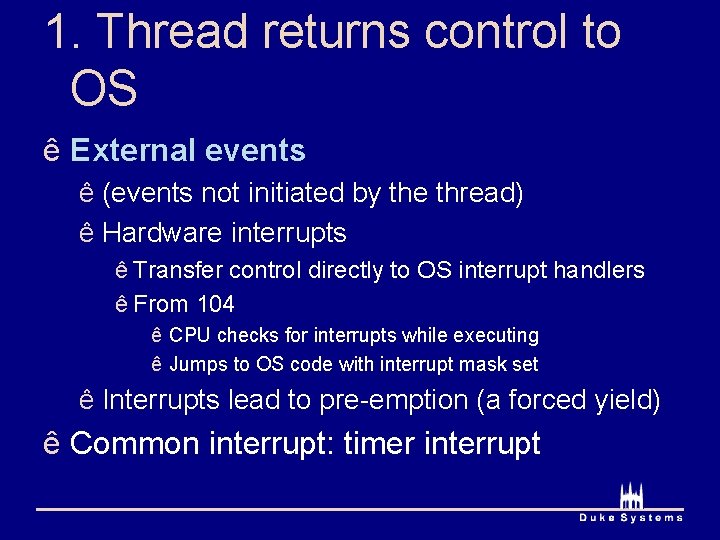

1. Thread returns control to OS
   - External events (events not initiated by the thread)
   - Hardware interrupts
     - Transfer control directly to OS interrupt handlers
   - From 104
     - CPU checks for interrupts while executing
     - Jumps to OS code with interrupt mask set
   - Interrupts lead to pre-emption (a forced yield)
   - Common interrupt: timer interrupt

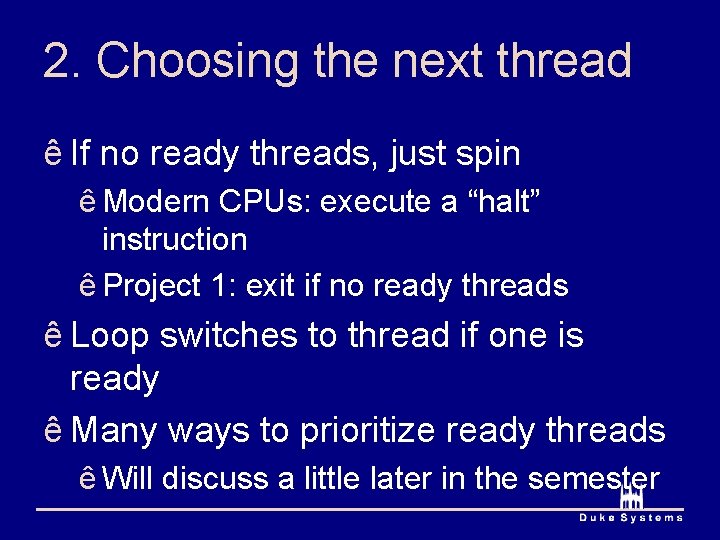

2. Choosing the next thread
   - If no ready threads, just spin
     - Modern CPUs: execute a “halt” instruction
     - Project 1: exit if no ready threads
   - Loop switches to thread if one is ready
   - Many ways to prioritize ready threads
     - Will discuss a little later in the semester

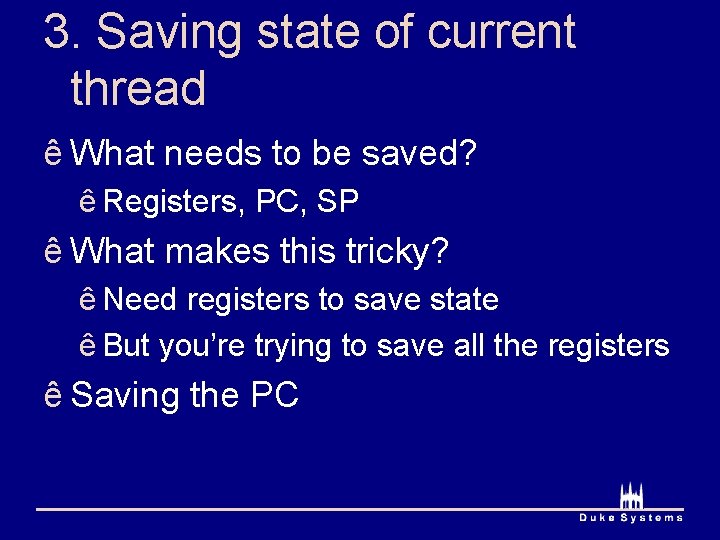

3. Saving state of current thread
   - What needs to be saved?
     - Registers, PC, SP
   - What makes this tricky?
     - Need registers to save state
     - But you’re trying to save all the registers
     - Saving the PC

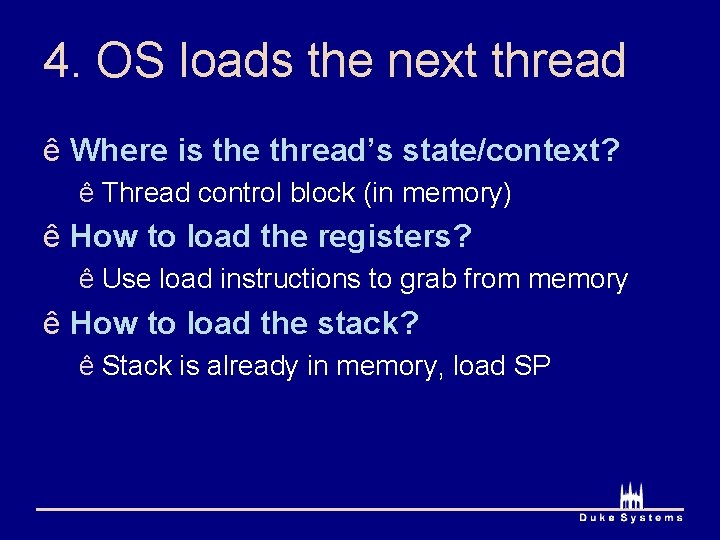

4. OS loads the next thread
   - Where is the thread’s state/context?
     - Thread control block (in memory)
   - How to load the registers?
     - Use load instructions to grab from memory
   - How to load the stack?
     - Stack is already in memory, load SP
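Steps 3 and 4 can be sketched as copying state between the CPU and a TCB. This is a toy Python model rather than real context-switch code: the `CPU` and `TCB` classes, the four-entry register file, and the `switch` helper are all assumptions made for illustration. In Project 1 this work is delegated to swapcontext.

```python
from dataclasses import dataclass, field


@dataclass
class TCB:
    """Saved private state of a thread that is not running (hypothetical layout)."""
    pc: int = 0
    sp: int = 0
    registers: list = field(default_factory=lambda: [0] * 4)


@dataclass
class CPU:
    """The single processor; it holds the running thread's private state."""
    pc: int = 0
    sp: int = 0
    registers: list = field(default_factory=lambda: [0] * 4)


def save_context(cpu: CPU, tcb: TCB) -> None:
    """Step 3: copy the running thread's PC, SP and registers into its TCB."""
    tcb.pc, tcb.sp = cpu.pc, cpu.sp
    tcb.registers = list(cpu.registers)


def load_context(cpu: CPU, tcb: TCB) -> None:
    """Step 4: copy the next thread's saved PC, SP and registers onto the CPU.

    The stack itself is not copied: it is already in memory, so loading SP
    is enough to make the CPU use it.
    """
    cpu.pc, cpu.sp = tcb.pc, tcb.sp
    cpu.registers = list(tcb.registers)


def switch(cpu: CPU, current: TCB, nxt: TCB) -> None:
    """Save the current thread, then load the next one."""
    save_context(cpu, current)
    load_context(cpu, nxt)
```

The model hides the tricky part named on the previous slide: real save code needs registers to do the saving while it is trying to save all of them.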

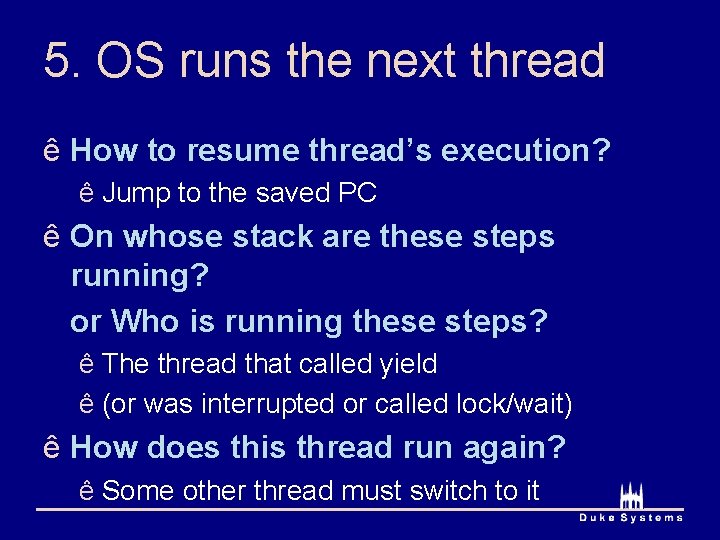

5. OS runs the next thread
   - How to resume thread’s execution?
     - Jump to the saved PC
   - On whose stack are these steps running? (Or: who is running these steps?)
     - The thread that called yield (or was interrupted or called lock/wait)
   - How does this thread run again?
     - Some other thread must switch to it

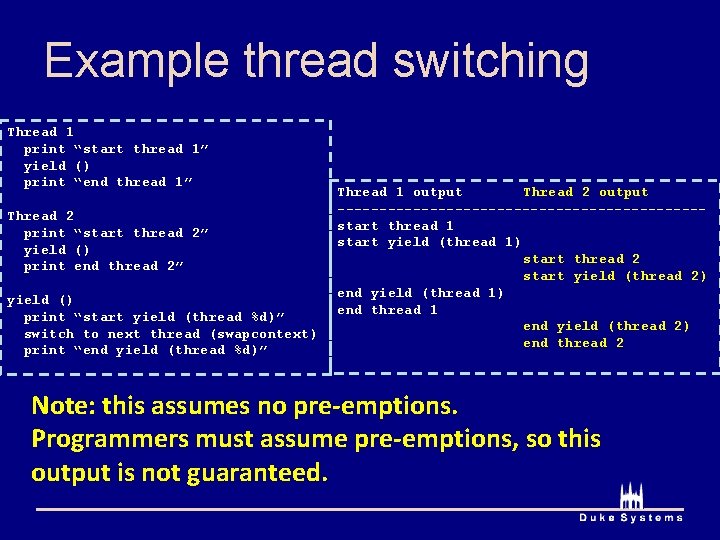

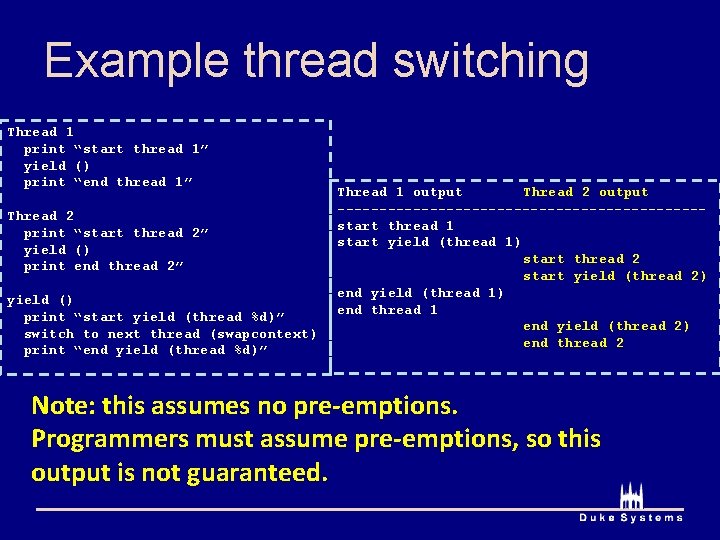

Example thread switching

Thread 1

    print “start thread 1”
    yield ()
    print “end thread 1”

Thread 2

    print “start thread 2”
    yield ()
    print “end thread 2”

yield ()

    print “start yield (thread %d)”
    switch to next thread (swapcontext)
    print “end yield (thread %d)”

| Thread 1 output | Thread 2 output |
| --- | --- |
| start thread 1 | |
| start yield (thread 1) | |
| | start thread 2 |
| | start yield (thread 2) |
| end yield (thread 1) | |
| end thread 1 | |
| | end yield (thread 2) |
| | end thread 2 |

Note: this assumes no pre-emptions. Programmers must assume pre-emptions, so this output is not guaranteed.
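The interleaving above can be reproduced with a small simulation. This is a sketch under stated assumptions, not the Project 1 code: Python generators stand in for threads, a bare `yield` stands in for swapcontext, and the `ready`, `log`, `thread_yield`, `thread_body`, and `run` names are made up for illustration. Like the slide, it assumes no pre-emptions.

```python
from collections import deque

ready = deque()  # ready queue of runnable threads (generators)
log = []         # output lines, in the order they are produced


def thread_yield(tid):
    """yield(): give up the CPU. The bare `yield` stands in for swapcontext."""
    log.append(f"start yield (thread {tid})")
    yield  # control returns to the dispatcher, which runs the next thread
    log.append(f"end yield (thread {tid})")


def thread_body(tid):
    """The code each thread runs in the example."""
    log.append(f"start thread {tid}")
    yield from thread_yield(tid)
    log.append(f"end thread {tid}")


def run():
    """Dispatcher: run ready threads in FIFO order until all have finished."""
    while ready:
        thread = ready.popleft()
        try:
            next(thread)          # resume the thread where it last stopped
            ready.append(thread)  # it yielded, so it goes back on the ready queue
        except StopIteration:
            pass                  # the thread finished; drop it


ready.extend([thread_body(1), thread_body(2)])
run()
print("\n".join(log))
```

Thread 1 only prints "end yield" after thread 2 has called yield, which matches the earlier point that a thread runs again only when some other thread switches to it.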

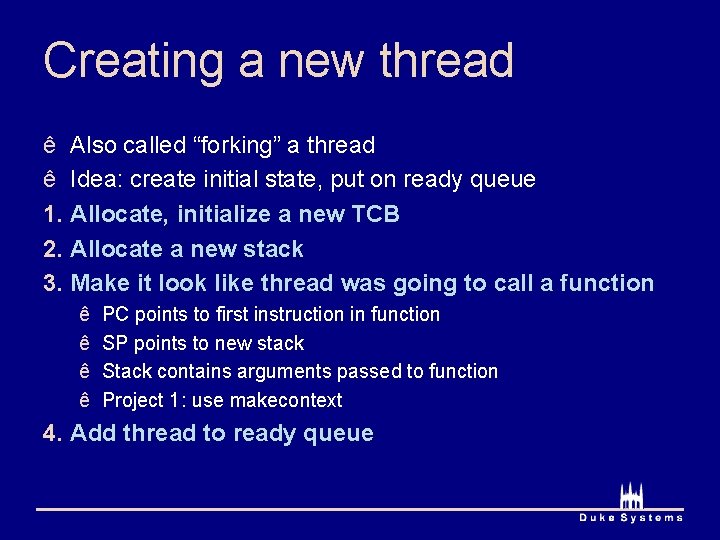

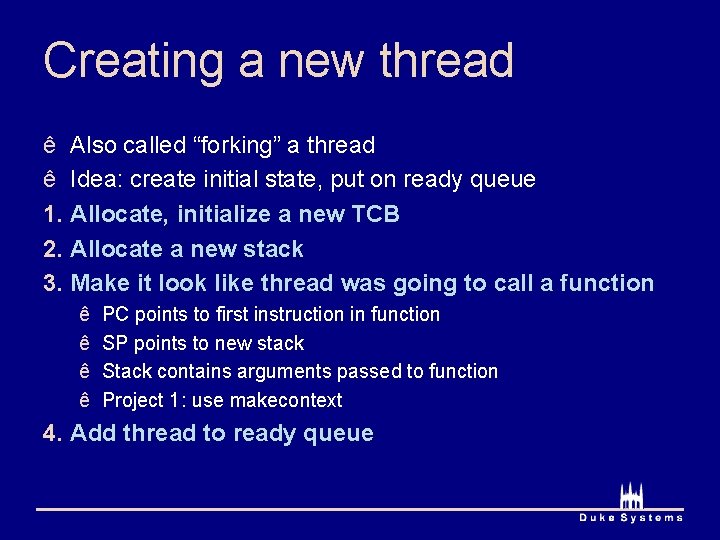

Creating a new thread

- Also called “forking” a thread
- Idea: create initial state, put on ready queue

1. Allocate, initialize a new TCB
2. Allocate a new stack
3. Make it look like thread was going to call a function
   - PC points to first instruction in function
   - SP points to new stack
   - Stack contains arguments passed to function
   - Project 1: use makecontext
4. Add thread to ready queue
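The four steps can be sketched in the same toy style. This is an illustration only, with assumed names (`TCB`, `ready_queue`, `thread_create`, `worker`): a Python generator object plays the role that a makecontext-initialized context plays in Project 1, because creating it records the function and its arguments without running any of the function's code.

```python
import itertools
from collections import deque
from dataclasses import dataclass
from typing import Generator


@dataclass
class TCB:
    """Hypothetical thread control block for this sketch."""
    thread_id: int
    context: Generator  # stands in for the saved PC, SP and stack


ready_queue = deque()
_ids = itertools.count(1)


def thread_create(func, *args):
    """Create a thread that will begin by calling func(*args)."""
    # Steps 2 and 3: calling a generator function runs none of its body.
    # It only builds a frame that holds the arguments and is positioned at
    # the first instruction, much like a freshly prepared stack and PC.
    context = func(*args)
    # Step 1: allocate and initialize the TCB.
    tcb = TCB(thread_id=next(_ids), context=context)
    # Step 4: the new thread is runnable but has not run yet.
    ready_queue.append(tcb)
    return tcb


def worker(out, name):
    """Example thread function; the `yield` marks where it would give up the CPU."""
    out.append(f"hello from {name}")
    yield
```

For example, after `t = thread_create(worker, results, "child")` the list `results` is still empty; the function body only runs once a dispatcher resumes `t.context`.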

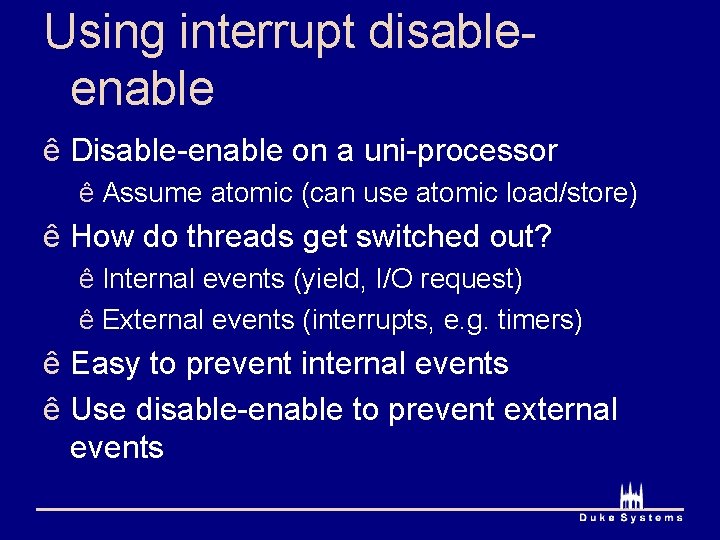

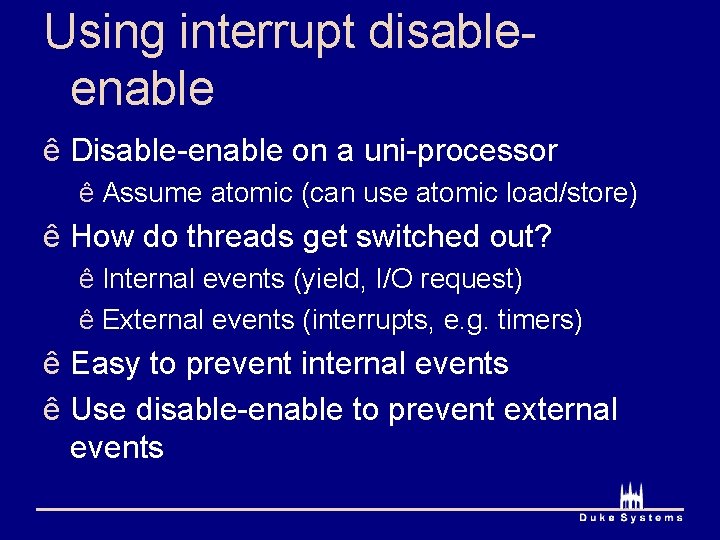

Using interrupt disable-enable

- Disable-enable on a uni-processor
  - Assume atomic (can use atomic load/store)
- How do threads get switched out?
  - Internal events (yield, I/O request)
  - External events (interrupts, e.g. timers)
- Easy to prevent internal events
- Use disable-enable to prevent external events

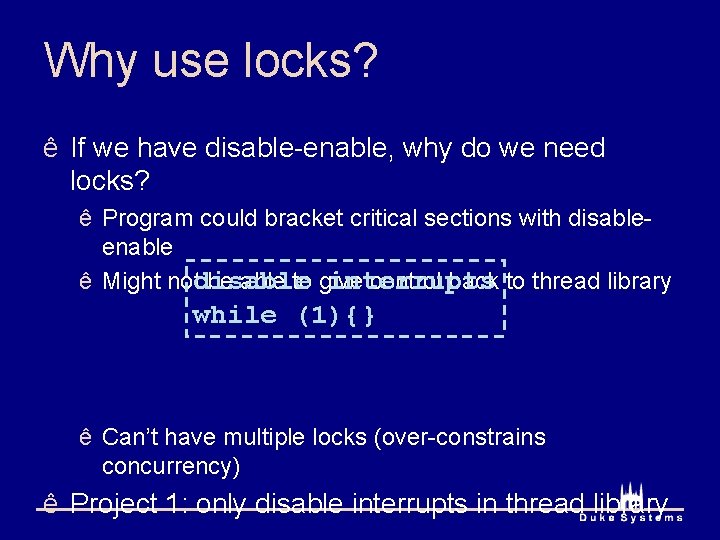

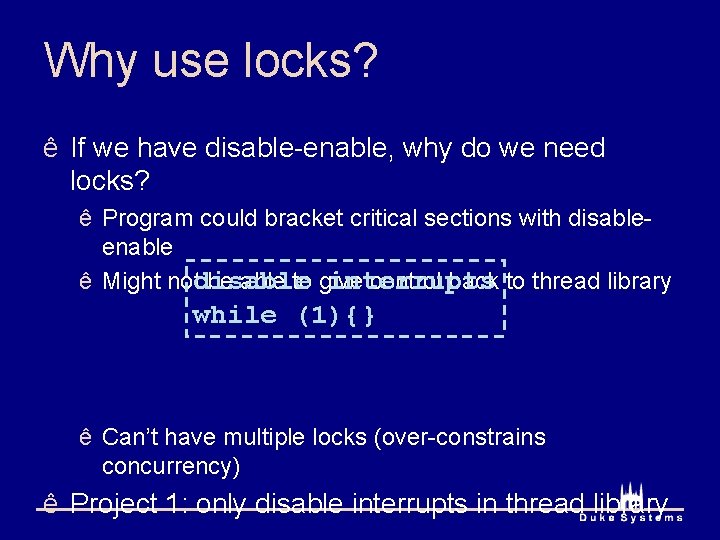

Why use locks?

- If we have disable-enable, why do we need locks?
  - Program could bracket critical sections with disable-enable
  - Might not be able to give control back to thread library, e.g. disable interrupts followed by while (1){}
  - Can’t have multiple locks (over-constrains concurrency)
- Project 1: only disable interrupts in thread library

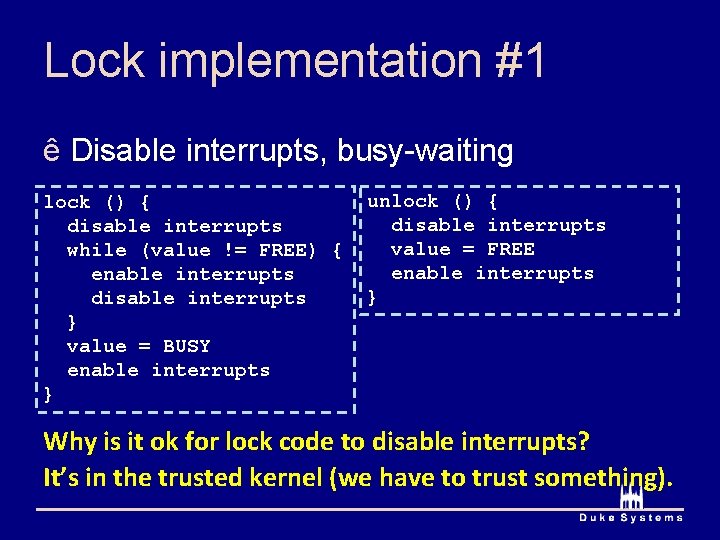

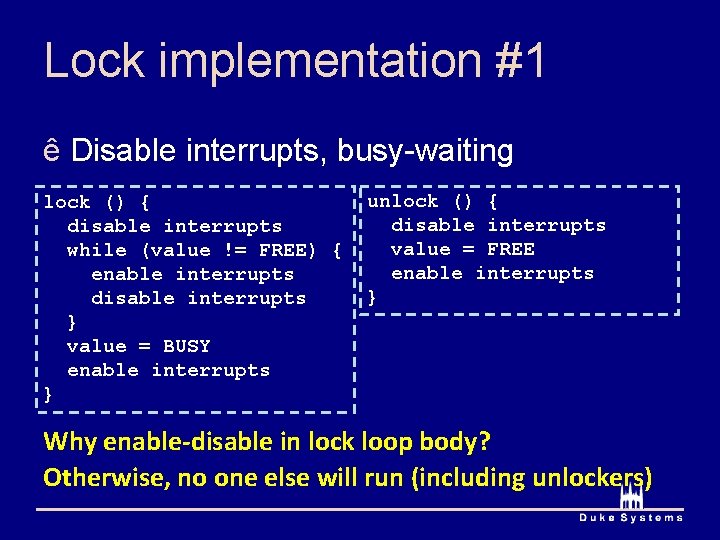

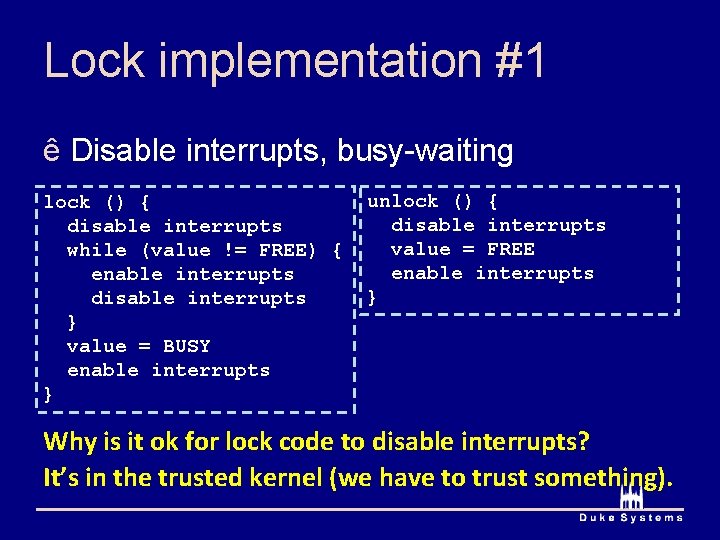

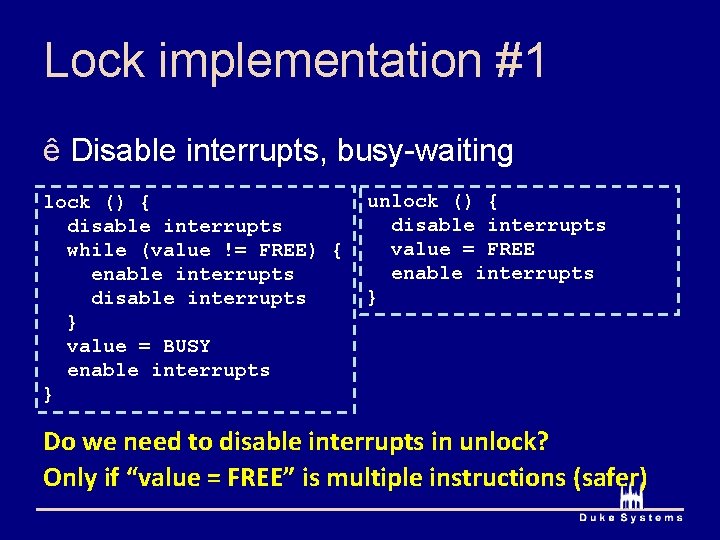

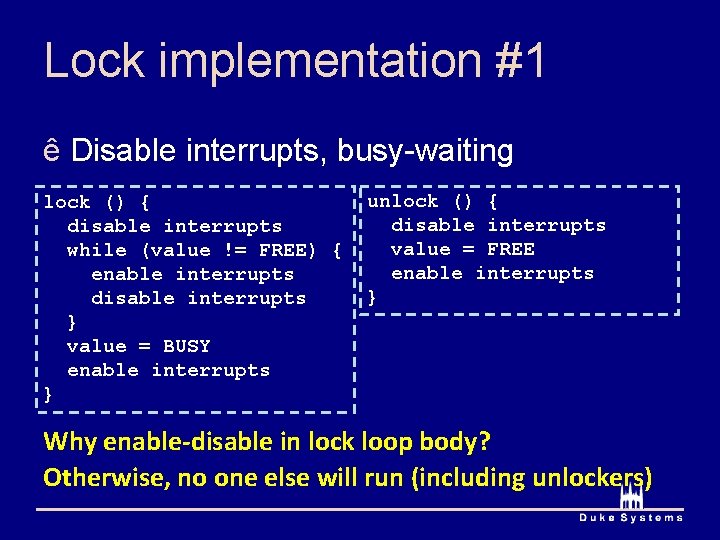

Lock implementation #1 ê Disable interrupts, busy-waiting lock () { disable interrupts while (value != FREE) { enable interrupts disable interrupts } value = BUSY enable interrupts } unlock () { disable interrupts value = FREE enable interrupts } Why is it ok for lock code to disable interrupts? It’s in the trusted kernel (we have to trust something).

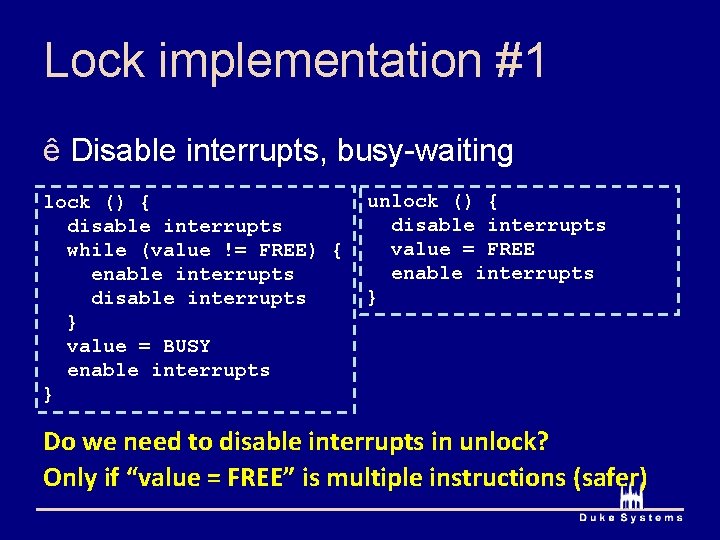

Lock implementation #1 ê Disable interrupts, busy-waiting lock () { disable interrupts while (value != FREE) { enable interrupts disable interrupts } value = BUSY enable interrupts } unlock () { disable interrupts value = FREE enable interrupts } Do we need to disable interrupts in unlock? Only if “value = FREE” is multiple instructions (safer)

Lock implementation #1 ê Disable interrupts, busy-waiting lock () { disable interrupts while (value != FREE) { enable interrupts disable interrupts } value = BUSY enable interrupts } unlock () { disable interrupts value = FREE enable interrupts } Why enable-disable in lock loop body? Otherwise, no one else will run (including unlockers)

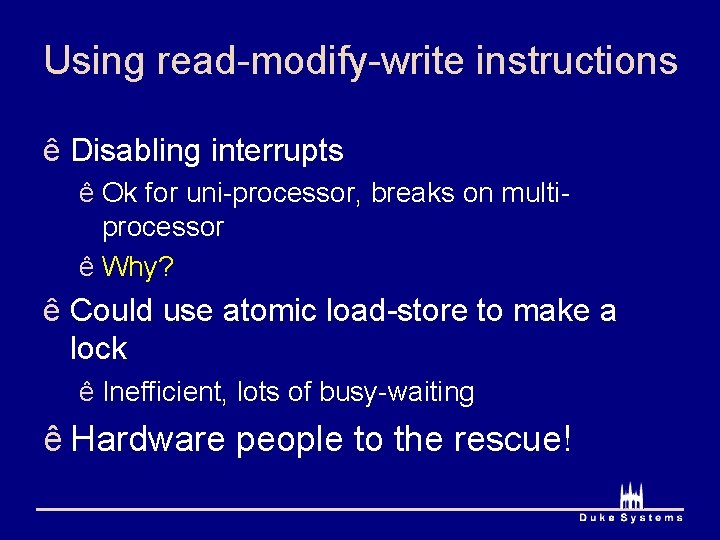

Using read-modify-write instructions

- Disabling interrupts
  - Ok for uni-processor, breaks on multiprocessor
  - Why?
- Could use atomic load-store to make a lock
  - Inefficient, lots of busy-waiting
- Hardware people to the rescue!

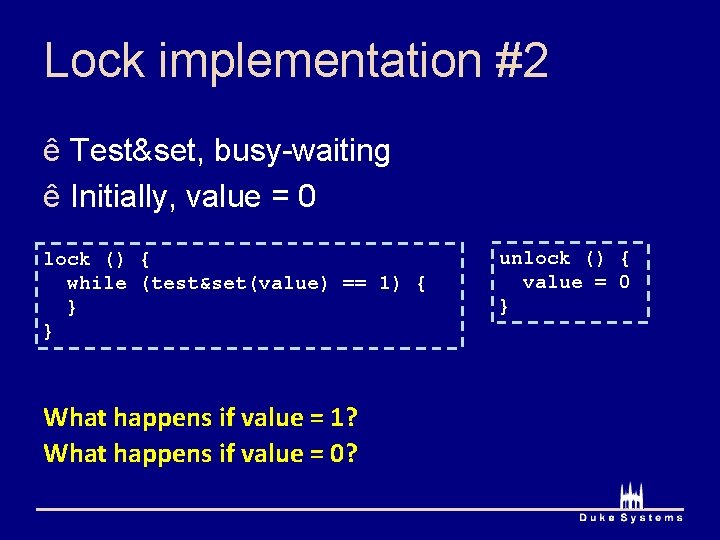

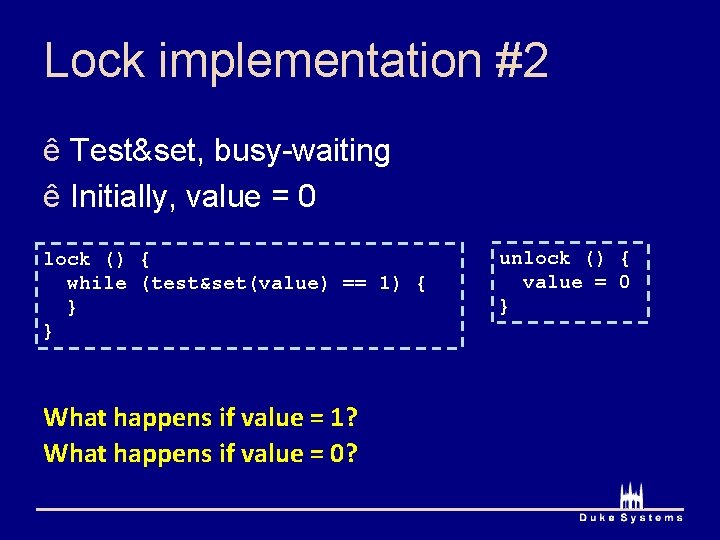

Lock implementation #2: test&set, busy-waiting

Initially, value = 0

    lock () {
        while (test&set(value) == 1) {
        }
    }

    unlock () {
        value = 0
    }

- What happens if value = 1?
- What happens if value = 0?

Locks and busy-waiting

- All implementations have used busy-waiting
  - Wastes CPU cycles
- To reduce busy-waiting, integrate
  - Lock implementation
  - Thread dispatcher data structures

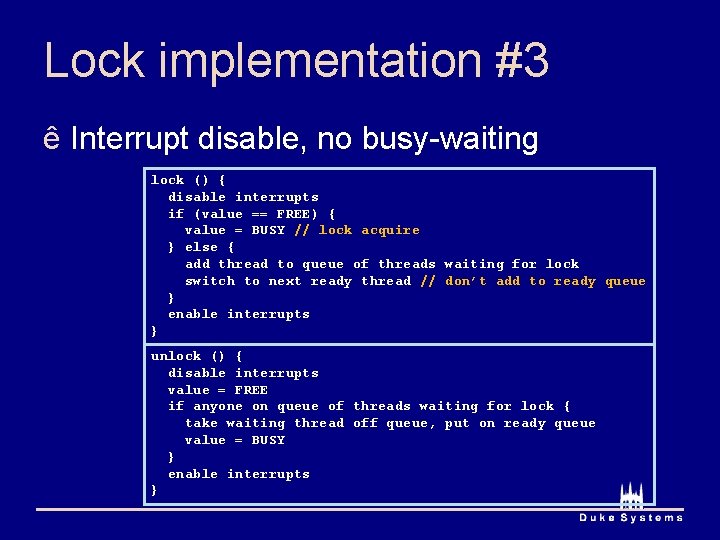

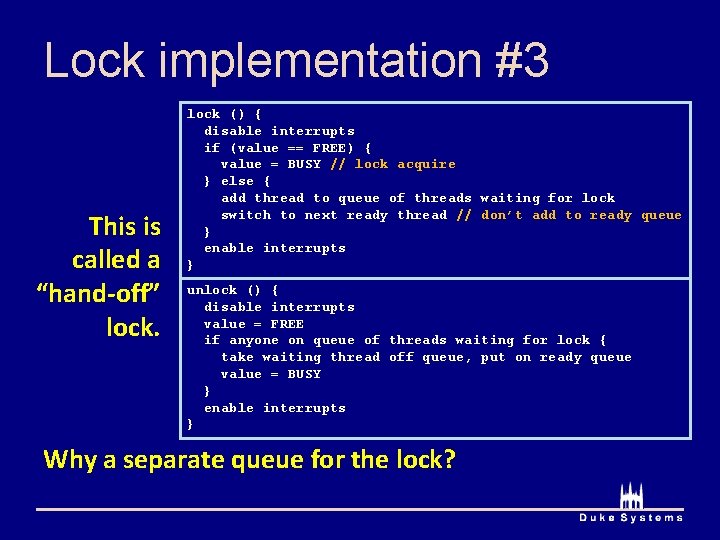

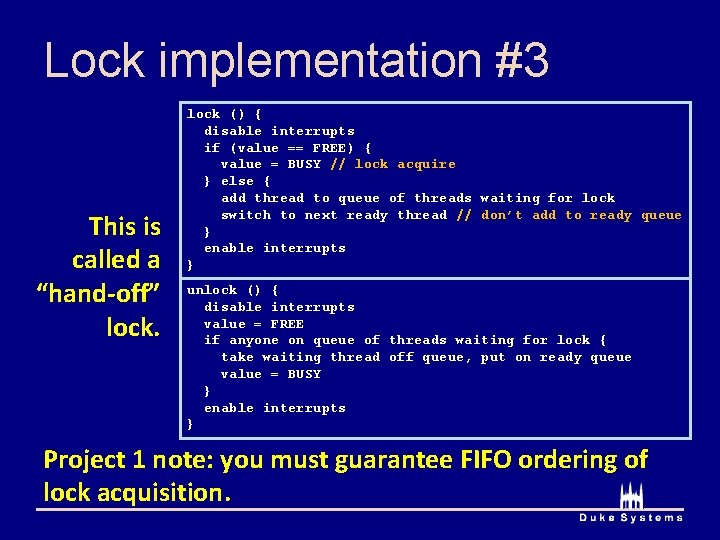

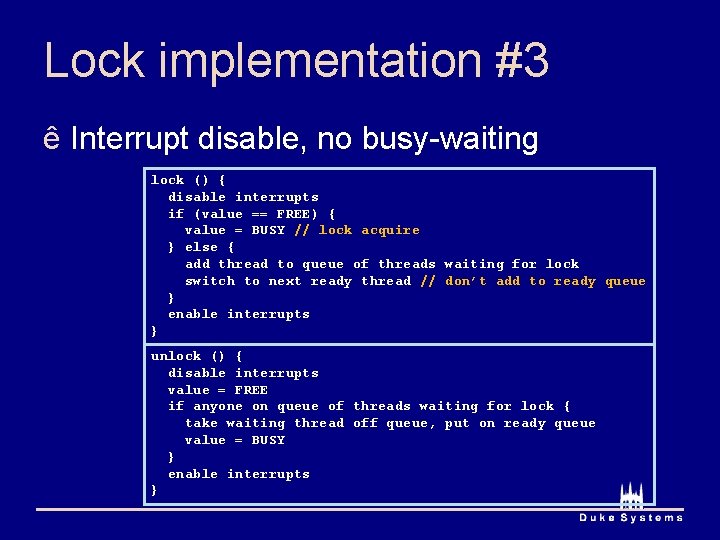

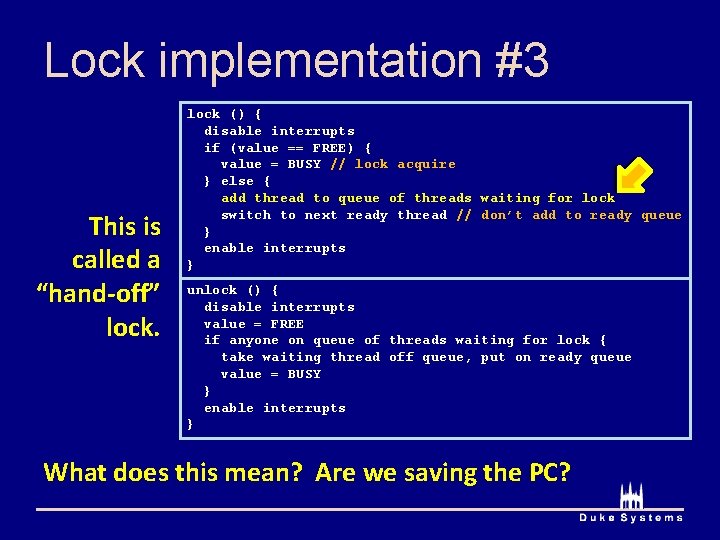

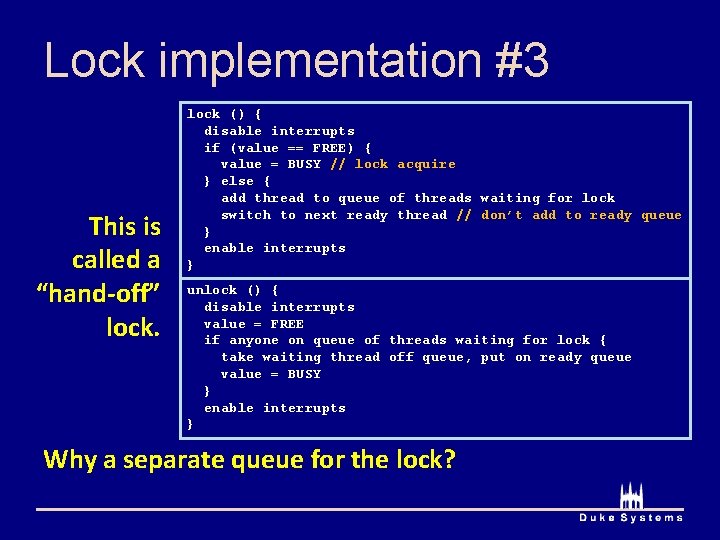

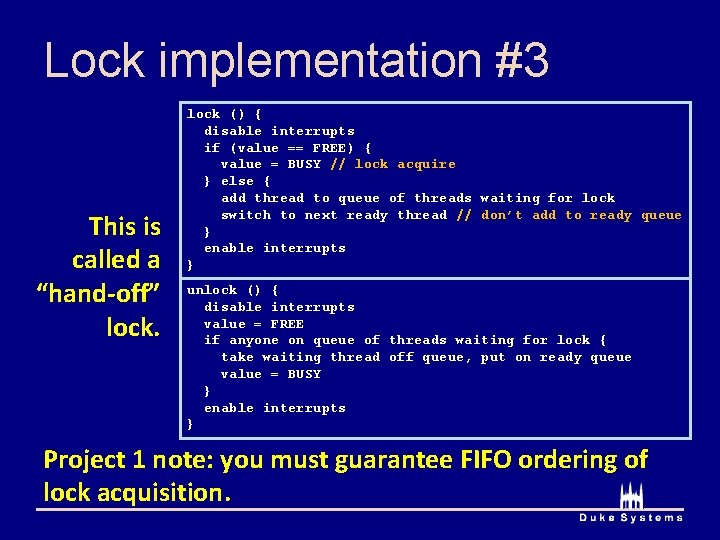

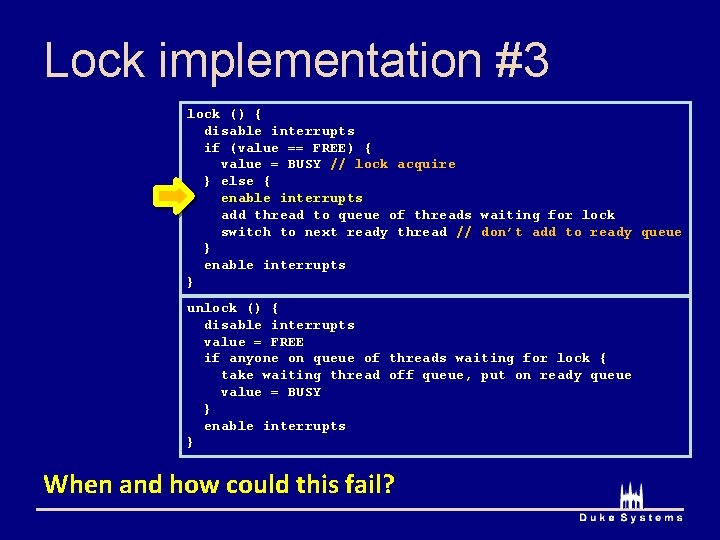

Lock implementation #3 ê Interrupt disable, no busy-waiting lock () { disable interrupts if (value == FREE) { value = BUSY // lock acquire } else { add thread to queue of threads waiting for lock switch to next ready thread // don’t add to ready queue } enable interrupts } unlock () { disable interrupts value = FREE if anyone on queue of threads waiting for lock { take waiting thread off queue, put on ready queue value = BUSY } enable interrupts }

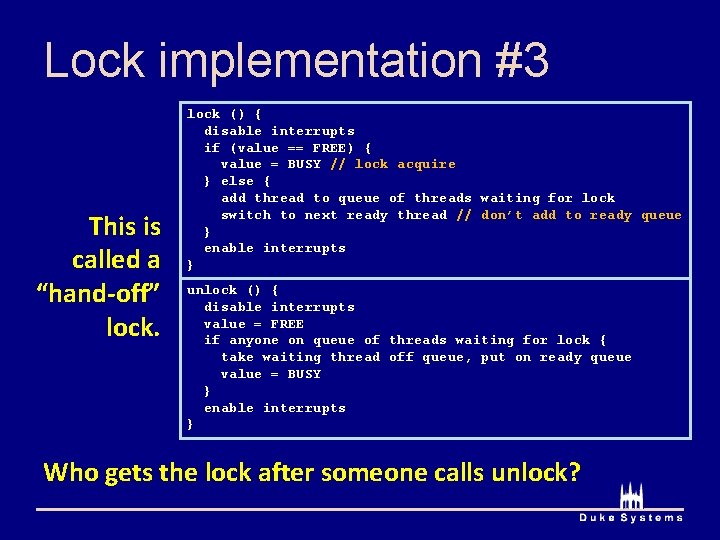

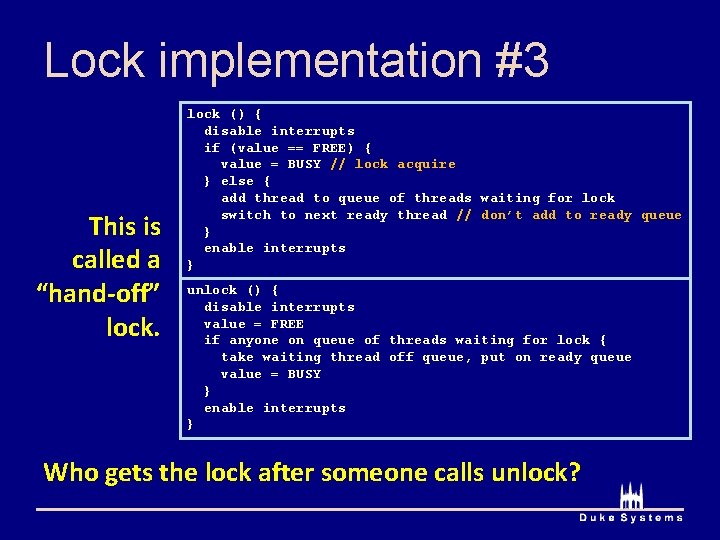

Lock implementation #3 This is called a “hand-off” lock () { disable interrupts if (value == FREE) { value = BUSY // lock acquire } else { add thread to queue of threads waiting for lock switch to next ready thread // don’t add to ready queue } enable interrupts } unlock () { disable interrupts value = FREE if anyone on queue of threads waiting for lock { take waiting thread off queue, put on ready queue value = BUSY } enable interrupts } Who gets the lock after someone calls unlock?

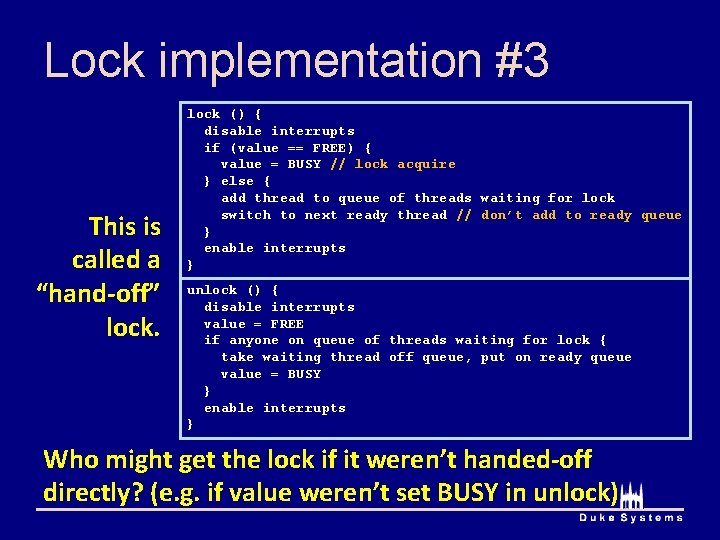

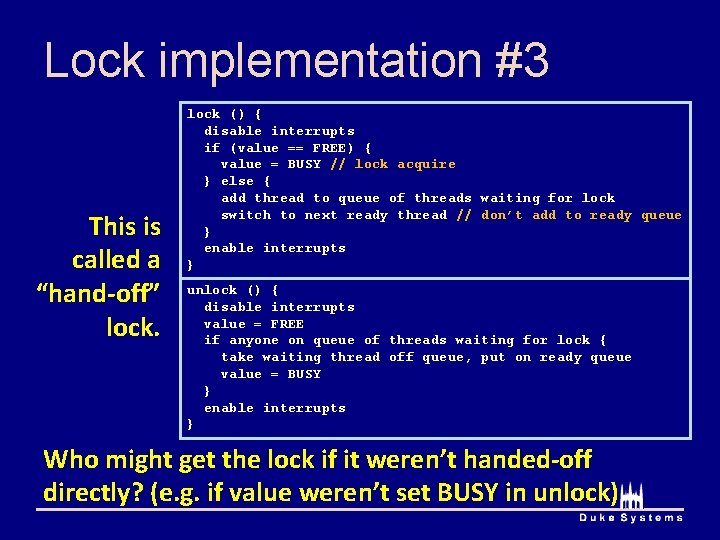

Lock implementation #3 This is called a “hand-off” lock () { disable interrupts if (value == FREE) { value = BUSY // lock acquire } else { add thread to queue of threads waiting for lock switch to next ready thread // don’t add to ready queue } enable interrupts } unlock () { disable interrupts value = FREE if anyone on queue of threads waiting for lock { take waiting thread off queue, put on ready queue value = BUSY } enable interrupts } Who might get the lock if it weren’t handed-off directly? (e. g. if value weren’t set BUSY in unlock)

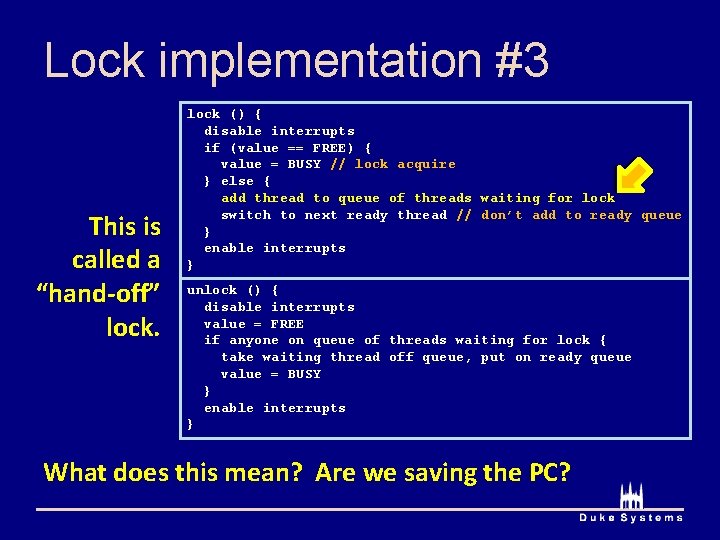

Lock implementation #3 This is called a “hand-off” lock () { disable interrupts if (value == FREE) { value = BUSY // lock acquire } else { add thread to queue of threads waiting for lock switch to next ready thread // don’t add to ready queue } enable interrupts } unlock () { disable interrupts value = FREE if anyone on queue of threads waiting for lock { take waiting thread off queue, put on ready queue value = BUSY } enable interrupts } What does this mean? Are we saving the PC?

Lock implementation #3 This is called a “hand-off” lock () { disable interrupts if (value == FREE) { value = BUSY // lock acquire } else { add thread to queue of threads waiting for lock switch to next ready thread // don’t add to ready queue } enable interrupts } unlock () { disable interrupts value = FREE if anyone on queue of threads waiting for lock { take waiting thread off queue, put on ready queue value = BUSY } enable interrupts } Why a separate queue for the lock?

Lock implementation #3 This is called a “hand-off” lock () { disable interrupts if (value == FREE) { value = BUSY // lock acquire } else { add thread to queue of threads waiting for lock switch to next ready thread // don’t add to ready queue } enable interrupts } unlock () { disable interrupts value = FREE if anyone on queue of threads waiting for lock { take waiting thread off queue, put on ready queue value = BUSY } enable interrupts } Project 1 note: you must guarantee FIFO ordering of lock acquisition.
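The hand-off behaviour and the FIFO requirement can be checked with a small cooperative simulation. It is a sketch under assumptions, not the Project 1 design: generators stand in for threads, each thread yields a request tuple such as `("lock", lock)` to a made-up dispatcher `run`, and because nothing can pre-empt a thread in this model the disable/enable interrupt steps are omitted.

```python
from collections import deque

FREE, BUSY = "FREE", "BUSY"
ready = deque()  # ready queue of runnable threads (generators)
order = []       # order in which threads entered the critical section


class Lock:
    def __init__(self):
        self.value = FREE
        self.waiters = deque()  # threads blocked on this lock, in FIFO order


def do_lock(lock, thread):
    """Return True if the caller got the lock, False if it must block."""
    if lock.value == FREE:
        lock.value = BUSY
        return True
    lock.waiters.append(thread)  # blocked: NOT added to the ready queue
    return False


def do_unlock(lock):
    lock.value = FREE
    if lock.waiters:
        ready.append(lock.waiters.popleft())
        lock.value = BUSY  # hand-off: the woken thread already owns the lock


def run():
    """Run each thread until it blocks, yields, or finishes."""
    while ready:
        thread = ready.popleft()
        while True:
            try:
                op, lock = next(thread)
            except StopIteration:
                break  # thread finished
            if op == "lock" and not do_lock(lock, thread):
                break  # blocked: switch to the next ready thread
            if op == "unlock":
                do_unlock(lock)
            if op == "yield":
                ready.append(thread)
                break


def worker(name, lock):
    yield ("lock", lock)
    order.append(name)     # critical section
    yield ("yield", None)  # let the other threads run and queue up
    yield ("unlock", lock)


shared = Lock()
ready.extend(worker(n, shared) for n in "ABC")
run()
print(order)
```

B and C block while A holds the lock, and each unlock marks the lock BUSY on behalf of the thread at the head of the wait queue, so no later arrival can take the lock before that thread runs.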

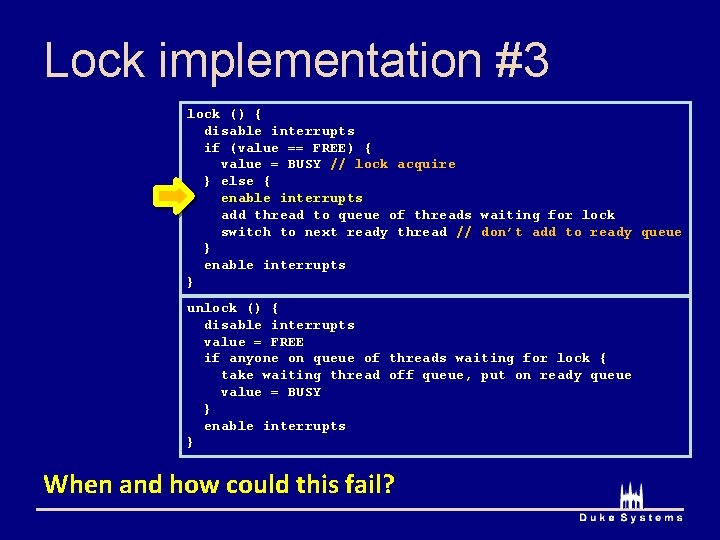

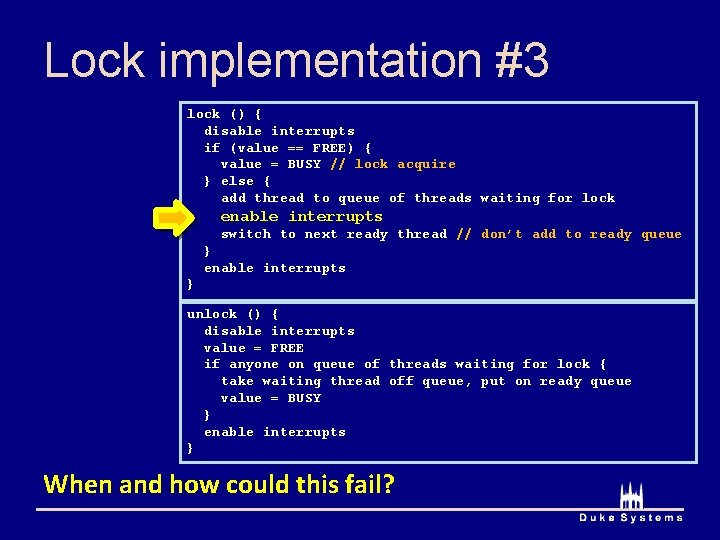

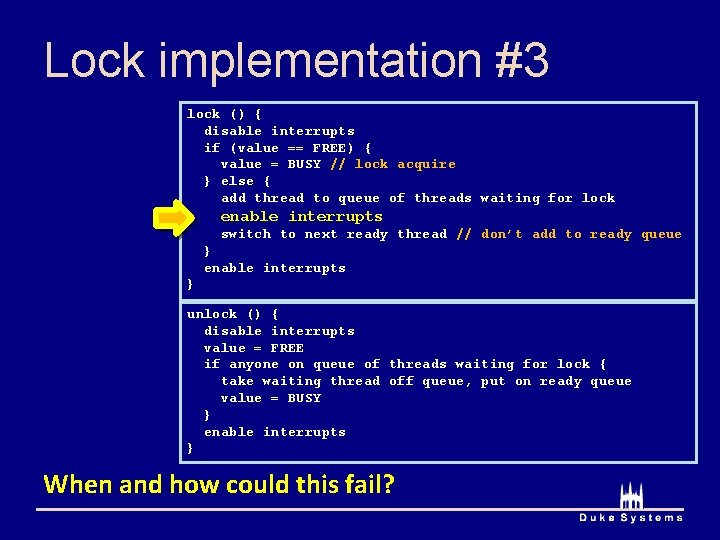

Lock implementation #3 (enable interrupts moved before the thread is added to the wait queue)

    lock () {
        disable interrupts
        if (value == FREE) {
            value = BUSY // lock acquire
        } else {
            enable interrupts
            add thread to queue of threads waiting for lock
            switch to next ready thread // don’t add to ready queue
        }
        enable interrupts
    }

    unlock () {
        disable interrupts
        value = FREE
        if anyone on queue of threads waiting for lock {
            take waiting thread off queue, put on ready queue
            value = BUSY
        }
        enable interrupts
    }

When and how could this fail?

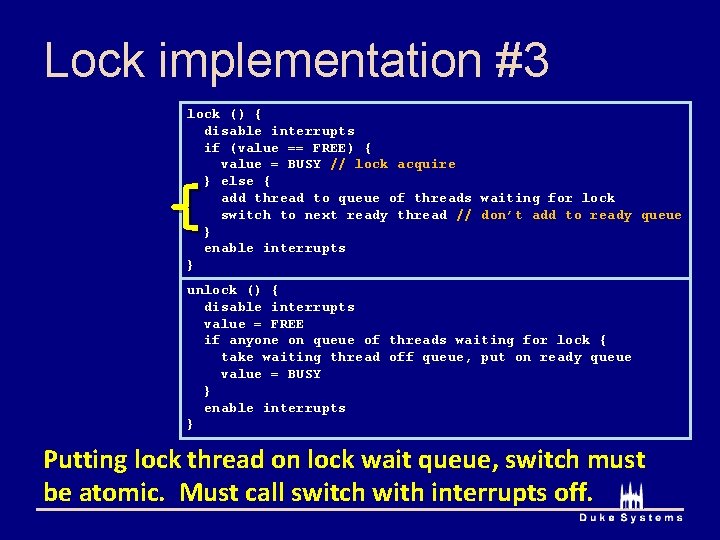

Lock implementation #3 (enable interrupts moved between adding the thread to the wait queue and switch)

    lock () {
        disable interrupts
        if (value == FREE) {
            value = BUSY // lock acquire
        } else {
            add thread to queue of threads waiting for lock
            enable interrupts
            switch to next ready thread // don’t add to ready queue
        }
        enable interrupts
    }

    unlock () {
        disable interrupts
        value = FREE
        if anyone on queue of threads waiting for lock {
            take waiting thread off queue, put on ready queue
            value = BUSY
        }
        enable interrupts
    }

When and how could this fail?

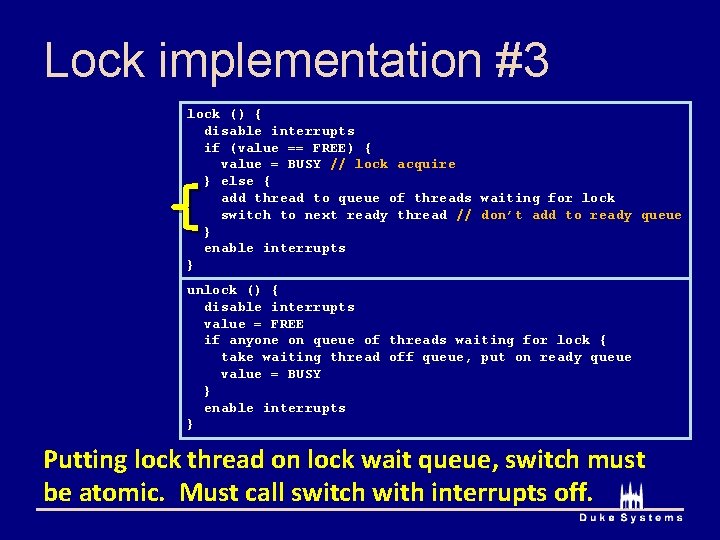

Lock implementation #3

    lock () {
        disable interrupts
        if (value == FREE) {
            value = BUSY // lock acquire
        } else {
            add thread to queue of threads waiting for lock
            switch to next ready thread // don’t add to ready queue
        }
        enable interrupts
    }

    unlock () {
        disable interrupts
        value = FREE
        if anyone on queue of threads waiting for lock {
            take waiting thread off queue, put on ready queue
            value = BUSY
        }
        enable interrupts
    }

Putting the thread on the lock’s wait queue and calling switch must be atomic. Must call switch with interrupts off.

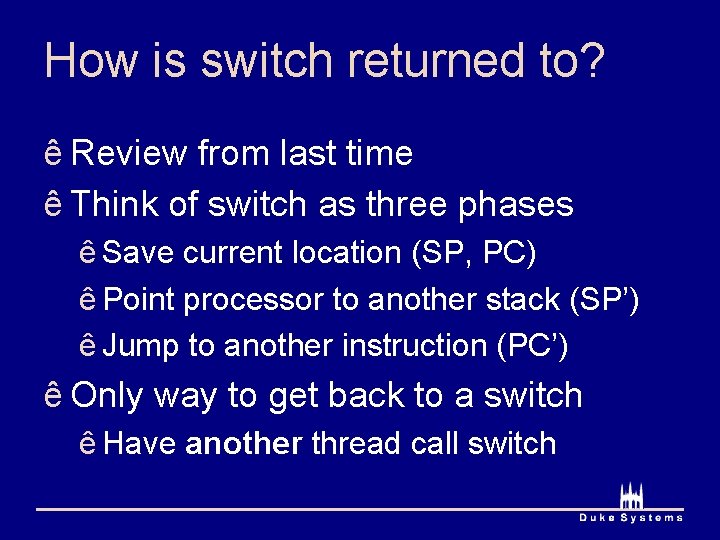

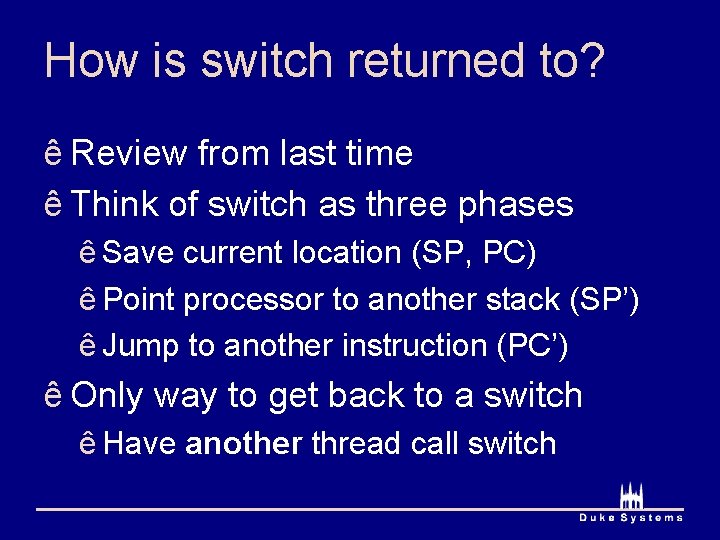

How is switch returned to?

- Review from last time
- Think of switch as three phases
  - Save current location (SP, PC)
  - Point processor to another stack (SP’)
  - Jump to another instruction (PC’)
- Only way to get back to a switch
  - Have another thread call switch

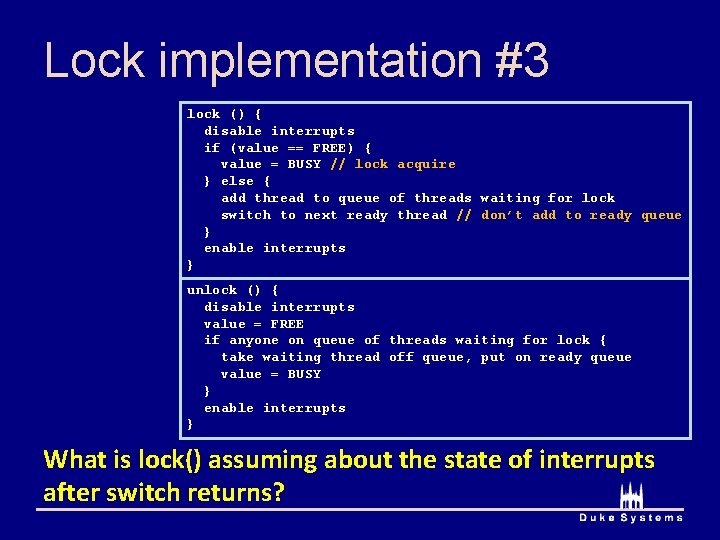

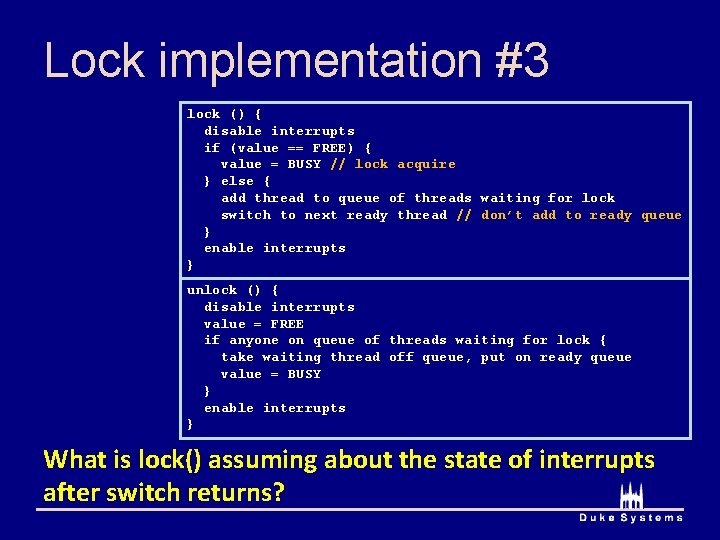

Lock implementation #3

- What is lock() assuming about the state of interrupts after switch returns?

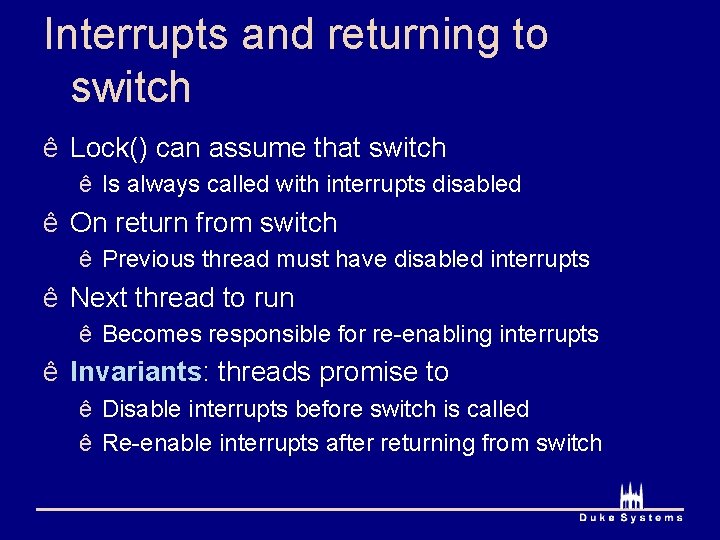

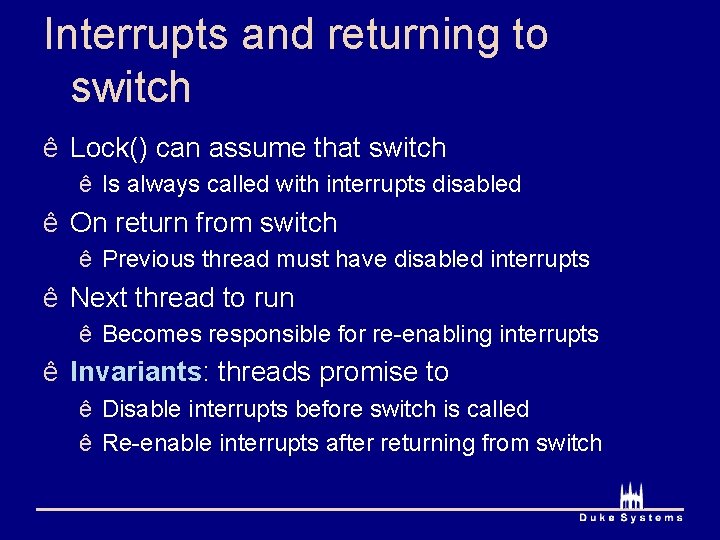

Interrupts and returning to switch

- Lock() can assume that switch
  - Is always called with interrupts disabled
- On return from switch
  - Previous thread must have disabled interrupts
- Next thread to run
  - Becomes responsible for re-enabling interrupts
- Invariants: threads promise to
  - Disable interrupts before switch is called
  - Re-enable interrupts after returning from switch

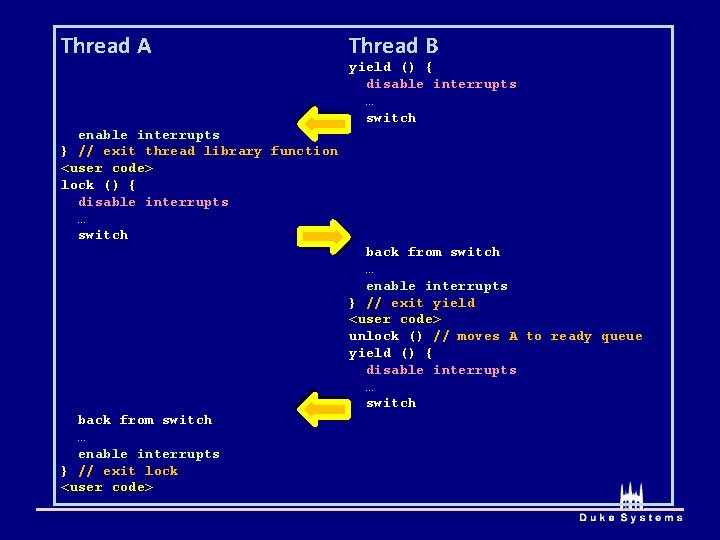

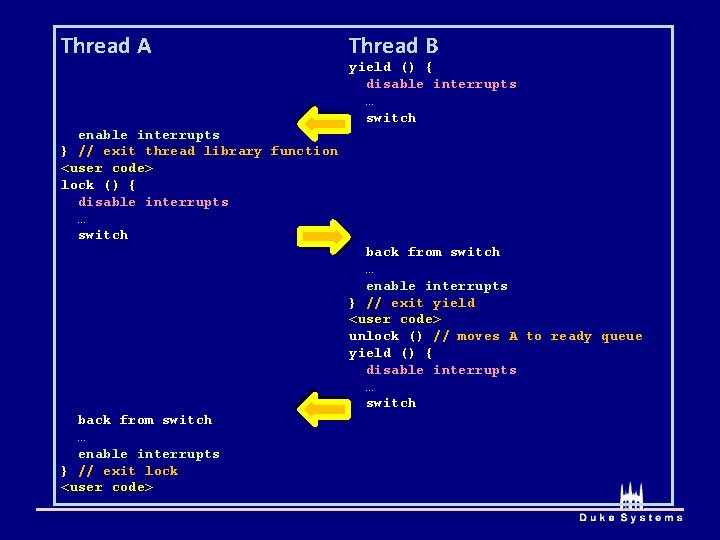

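| Thread A | Thread B |
| --- | --- |
| | yield () { |
| | disable interrupts |
| | … |
| | switch |
| enable interrupts | |
| } // exit thread library function | |
| `<user code>` | |
| lock () { | |
| disable interrupts | |
| … | |
| switch | |
| | back from switch |
| | … |
| | enable interrupts |
| | } // exit yield |
| | `<user code>` |
| | unlock () // moves A to ready queue |
| | yield () { |
| | disable interrupts |
| | … |
| | switch |
| back from switch | |
| … | |
| enable interrupts | |
| } // exit lock | |
| `<user code>` | |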