Coupled Monte Carlo Neutronics and Fluid Flow Simulation

- Slides: 20

Coupled Monte Carlo Neutronics and Fluid Flow Simulation of Small Modular Reactors (ExaSMR). Tom Evans (PI), ECP Application Development. HPC Users Forum, April 17, 2017; Santa Fe, NM. ORNL is managed by UT-Battelle for the US Department of Energy.

What is the Exascale Computing Project (ECP)?

- Created in support of President Obama’s National Strategic Computing Initiative
- A collaborative effort of two US Department of Energy (DOE) organizations:
  - Office of Science (DOE-SC)
  - National Nuclear Security Administration (NNSA)
- A 10-year project to accelerate the development of a capable exascale ecosystem
  - Led by DOE laboratories
  - Executed in collaboration with academia and industry

A capable exascale computing system will have a well-balanced ecosystem (software, hardware, applications).

What is the Exascale Computing Project (ECP)?

ECP was established to accelerate development of a capable exascale computing system that integrates hardware and software capability to deliver approximately 50× more performance than today’s 20 PF machines for mission-critical and science-essential applications.

- ECP will not build the first exascale supercomputer
- Systems will be acquired by the DOE labs as they have always been
- ECP will drive pre-exascale application development, hardware, and software R&D to ensure that the US has a capable exascale ecosystem in the early 2023 time frame

Project Team

- ORNL: Tom Evans (PI), Jeffrey Vetter, Steven Hamilton, Seth Johnson, Seyong Lee
- ANL: Andrew Siegel (Co-PI), Elia Merzari, Paul Romano, Amanda Lund, Aleks Obabko, Ron Rahaman
- MIT: Kord Smith (Co-PI), Ben Forget
- INL: Leslie Kerby, Mark DeHart

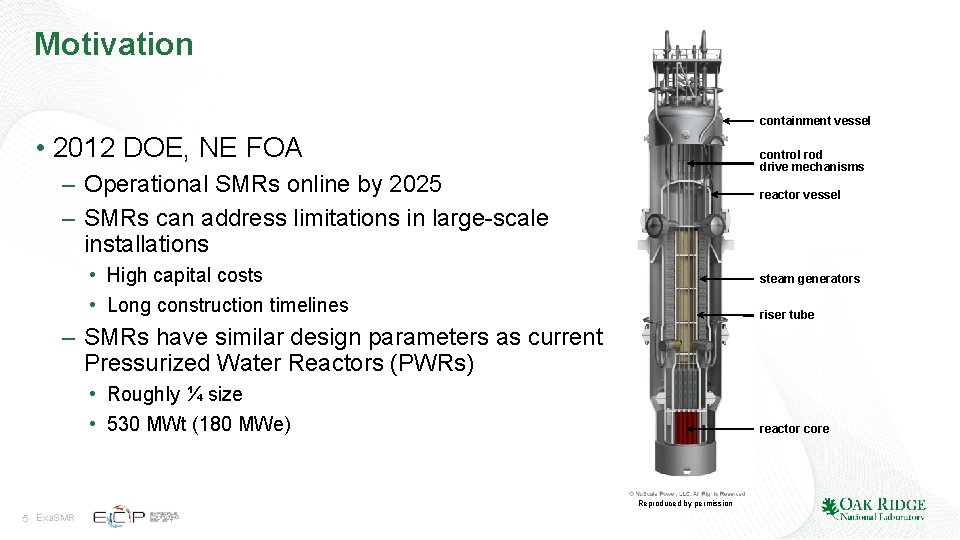

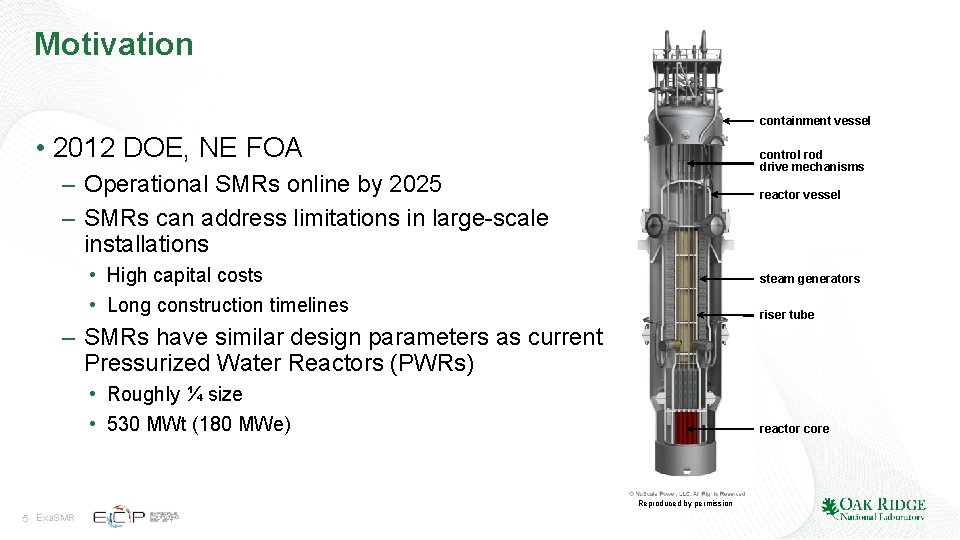

Motivation

- 2012 DOE, NE FOA
  - Operational SMRs online by 2025
  - SMRs can address limitations in large-scale installations
    - High capital costs
    - Long construction timelines
  - SMRs have similar design parameters as current Pressurized Water Reactors (PWRs)
    - Roughly ¼ size
    - 530 MWt (180 MWe)

[Figure labels: containment vessel; control rod drive mechanisms; reactor vessel; steam generators; riser tube; reactor core. Reproduced by permission.]

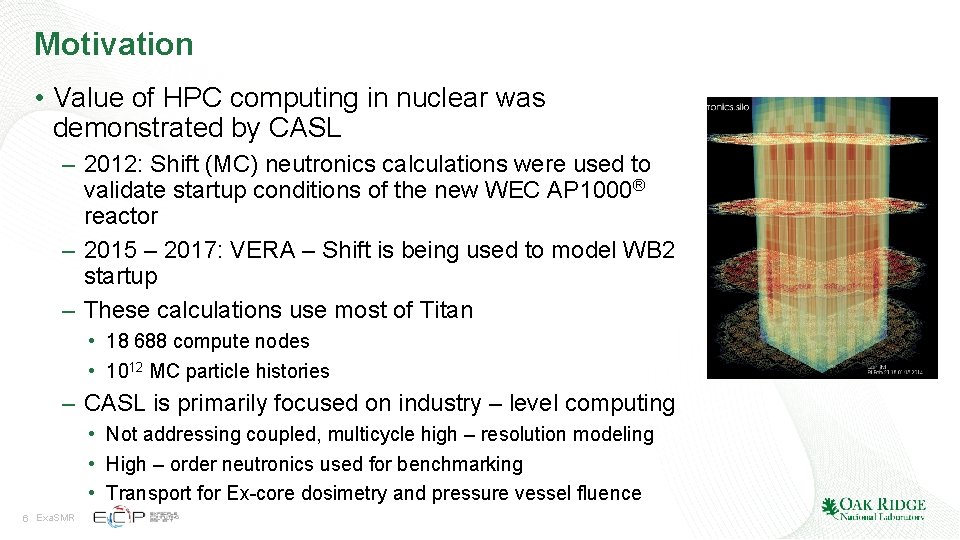

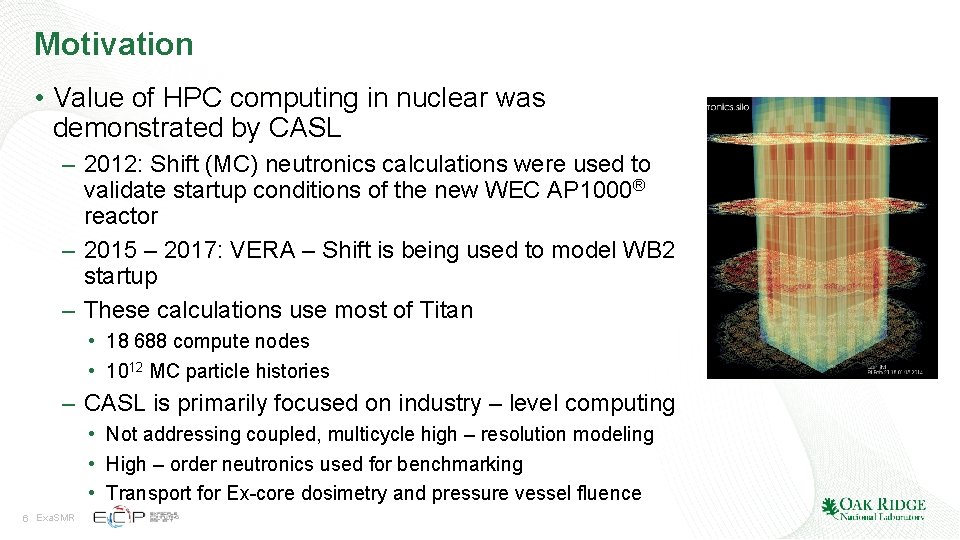

Motivation

- Value of HPC in nuclear was demonstrated by CASL
  - 2012: Shift (MC) neutronics calculations were used to validate startup conditions of the new WEC AP1000® reactor
  - 2015-2017: VERA-Shift is being used to model WB2 startup
  - These calculations use most of Titan
    - 18,688 compute nodes
    - 10^12 MC particle histories
  - CASL is primarily focused on industry-level computing
    - Not addressing coupled, multicycle high-resolution modeling
    - High-order neutronics used for benchmarking
    - Transport for ex-core dosimetry and pressure vessel fluence

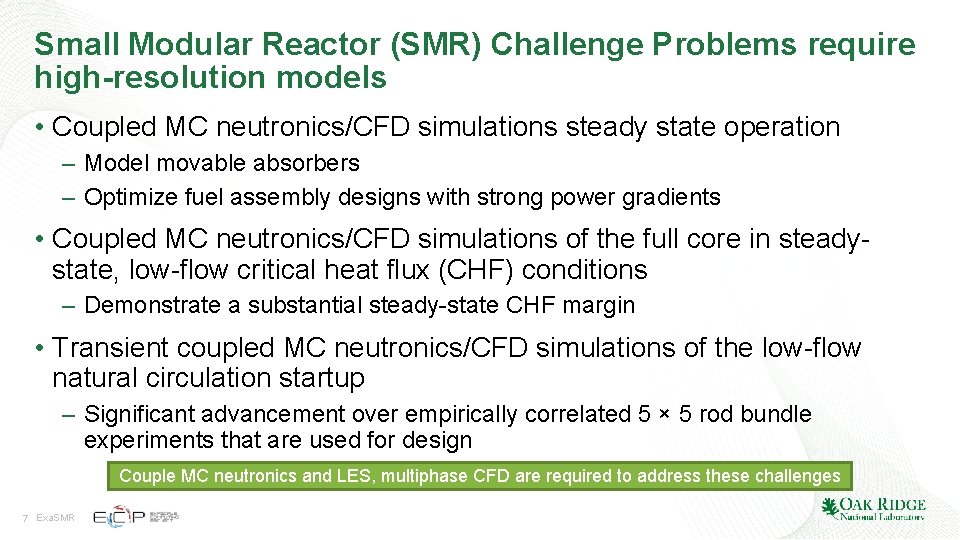

Small Modular Reactor (SMR) Challenge Problems require high-resolution models

- Coupled MC neutronics/CFD simulations of steady-state operation
  - Model movable absorbers
  - Optimize fuel assembly designs with strong power gradients
- Coupled MC neutronics/CFD simulations of the full core in steady-state, low-flow critical heat flux (CHF) conditions
  - Demonstrate a substantial steady-state CHF margin
- Transient coupled MC neutronics/CFD simulations of the low-flow natural circulation startup
  - Significant advancement over empirically correlated 5 × 5 rod bundle experiments that are used for design

Coupled MC neutronics and LES, multiphase CFD are required to address these challenges.

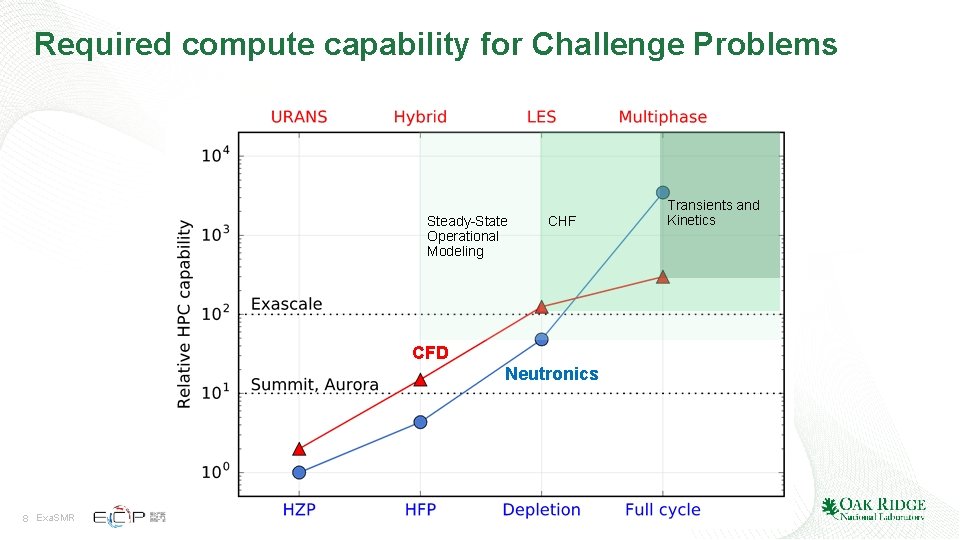

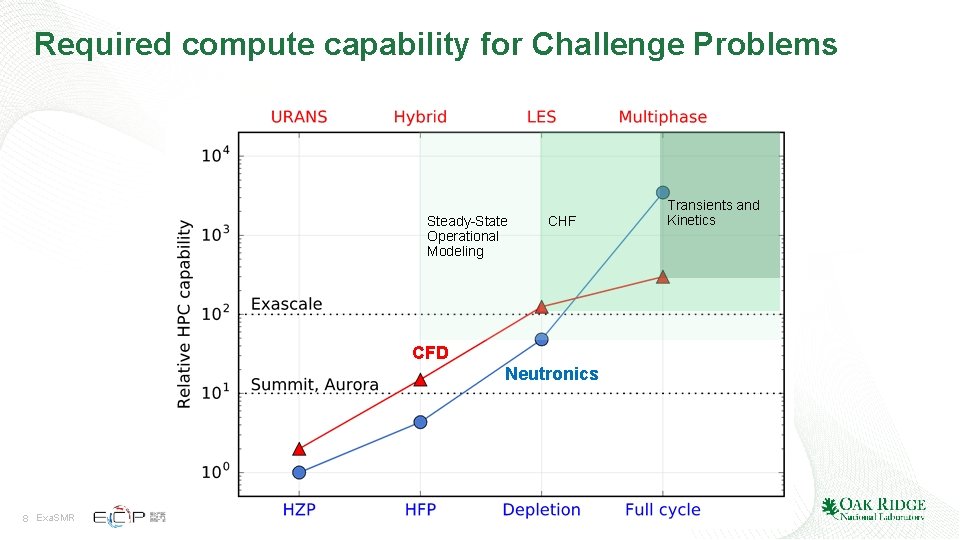

Required compute capability for Challenge Problems

[Figure labels: Steady-State Operational Modeling; CHF; Transients and Kinetics; CFD; Neutronics]

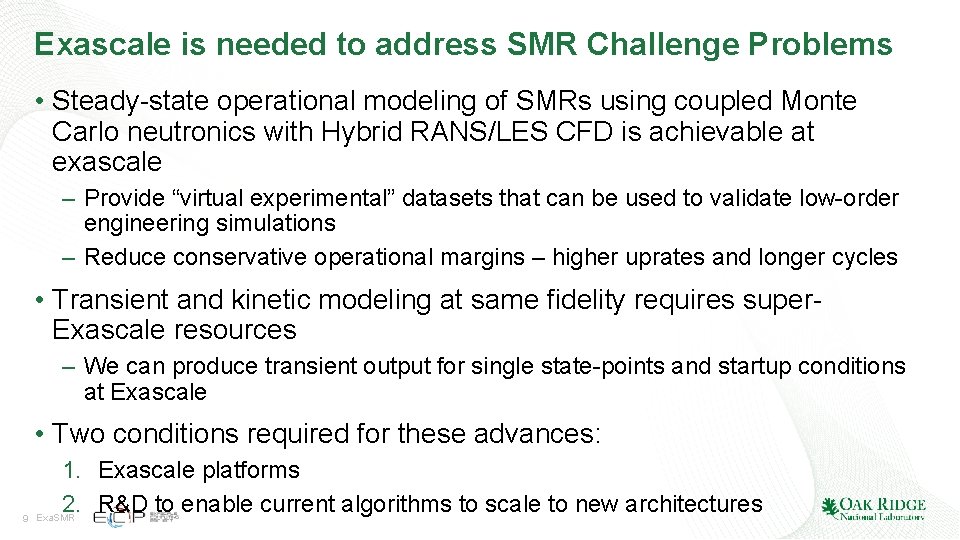

Exascale is needed to address SMR Challenge Problems

- Steady-state operational modeling of SMRs using coupled Monte Carlo neutronics with hybrid RANS/LES CFD is achievable at exascale
  - Provide “virtual experimental” datasets that can be used to validate low-order engineering simulations
  - Reduce conservative operational margins: higher uprates and longer cycles
- Transient and kinetic modeling at the same fidelity requires super-exascale resources
  - We can produce transient output for single state-points and startup conditions at exascale
- Two conditions required for these advances:
  1. Exascale platforms
  2. R&D to enable current algorithms to scale to new architectures

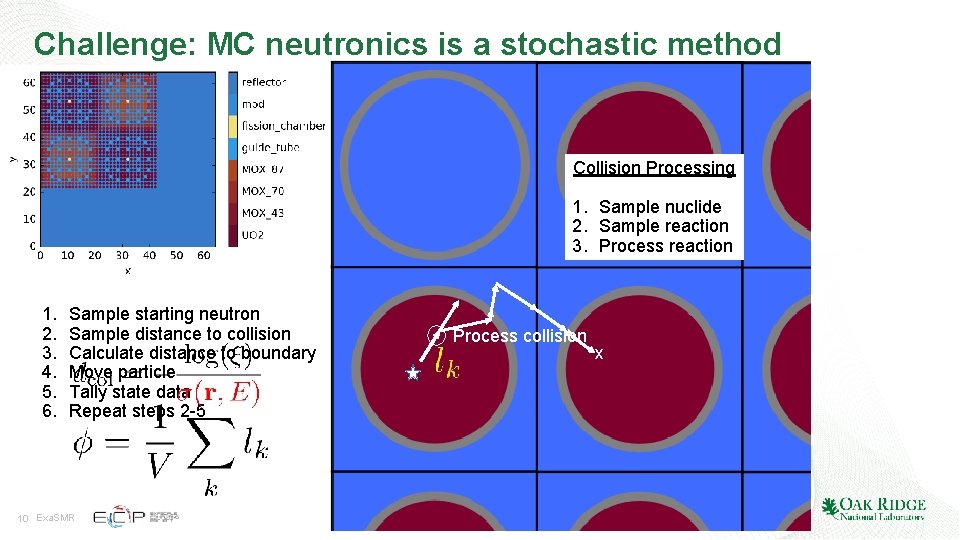

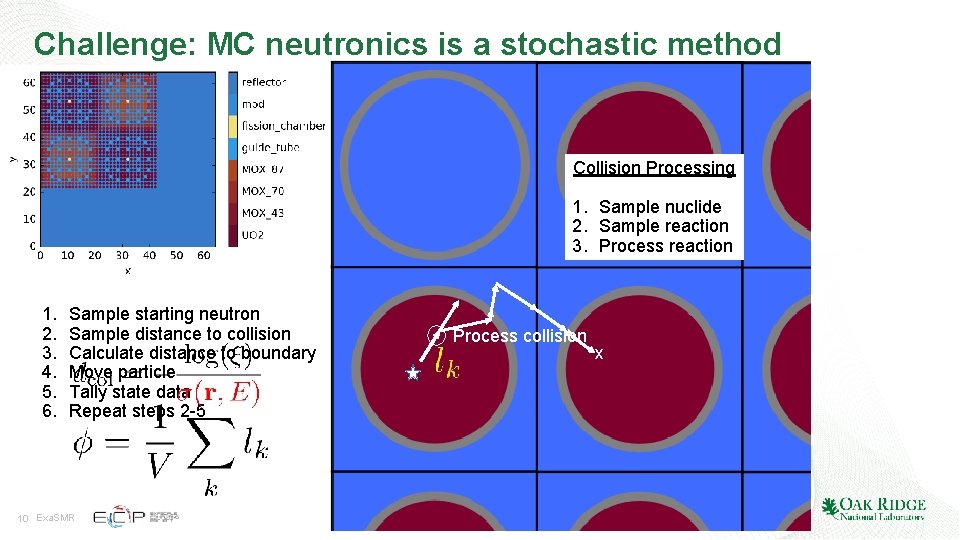

Challenge: MC neutronics is a stochastic method

1. Sample starting neutron
2. Sample distance to collision
3. Calculate distance to boundary
4. Move particle
5. Tally state data
6. Repeat steps 2-5

Collision Processing

1. Sample nuclide
2. Sample reaction
3. Process reaction

[Figure label: Process collision]
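
The random-walk steps listed on this slide can be illustrated with a minimal sketch. It assumes a one-group, single-nuclide 1D slab, so the "sample nuclide" step is trivial and omitted. The names `run_history` and `simulate` and the parameters `sigma_t`, `absorb_prob`, and `slab_width` are hypothetical, chosen only for illustration. They do not come from Shift or OpenMC.

```python
import math
import random

def run_history(rng, sigma_t, absorb_prob, slab_width):
    """Follow one neutron through a 1D slab; return (fate, collision_count)."""
    # 1. Sample starting neutron: born at the left face, moving right.
    x, mu = 0.0, 1.0
    collisions = 0
    while True:
        # 2. Sample distance to collision (exponential with mean 1/sigma_t).
        d_coll = -math.log(1.0 - rng.random()) / sigma_t
        # 3. Calculate distance to the slab boundary along the flight direction.
        if mu > 0.0:
            d_bound = (slab_width - x) / mu
        elif mu < 0.0:
            d_bound = -x / mu
        else:
            d_bound = math.inf  # moving parallel to the slab faces
        # 4. Move particle: it leaks if the boundary comes first.
        if d_bound <= d_coll:
            return ("leaked", collisions)
        x += mu * d_coll
        # 5. Tally state data (here only a collision count).
        collisions += 1
        # Process collision: sample the reaction, then apply it.
        if rng.random() < absorb_prob:
            return ("absorbed", collisions)
        mu = 2.0 * rng.random() - 1.0  # isotropic scatter: new direction cosine
        # 6. Repeat steps 2-5 on the next loop iteration.

def simulate(num_histories, seed=1, sigma_t=1.0, absorb_prob=0.3, slab_width=2.0):
    """Run independent histories and count how each one ended."""
    rng = random.Random(seed)
    tally = {"leaked": 0, "absorbed": 0}
    for _ in range(num_histories):
        fate, _ = run_history(rng, sigma_t, absorb_prob, slab_width)
        tally[fate] += 1
    return tally
```

Each history runs for a different, data-dependent number of loop iterations, which is the property that makes independent random walks awkward to execute in lockstep.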

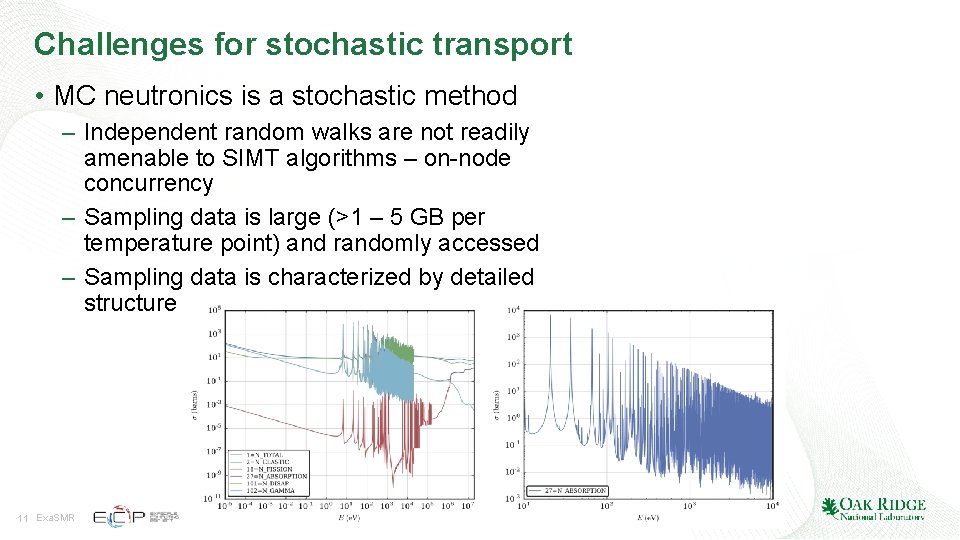

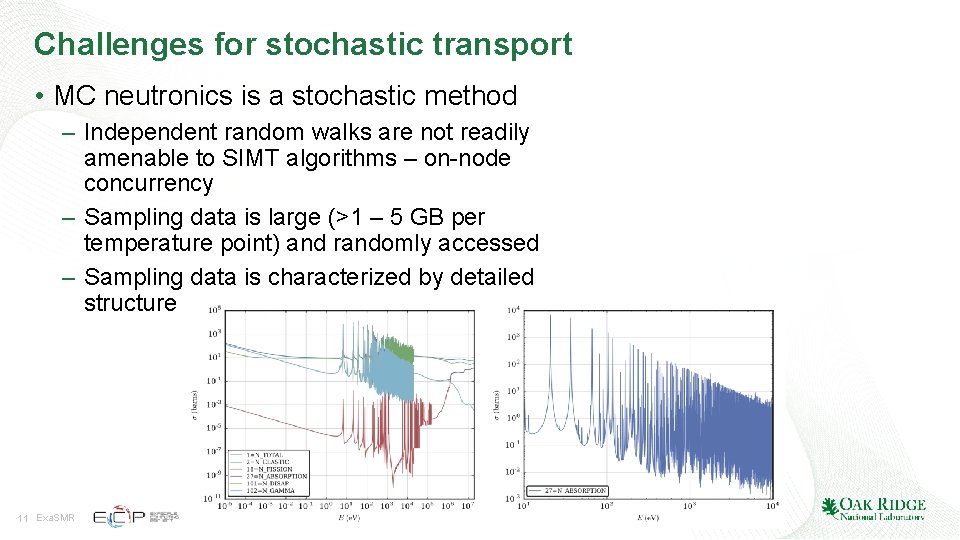

Challenges for stochastic transport

- MC neutronics is a stochastic method
  - Independent random walks are not readily amenable to SIMT algorithms (on-node concurrency)
  - Sampling data is large (>1-5 GB per temperature point) and randomly accessed
  - Sampling data is characterized by detailed structure

Algorithmic approaches

[Figure panels: Standard history-based algorithm; Event-based algorithm]

Current Progress

- Implemented intra-node kernels on NVIDIA GPUs and Intel Phis for Monte Carlo neutronics
  - Phi: OpenMC
  - GPU: Shift (modified history-based and event-based)
  - Documented in ECP Report, Monte Carlo Device Implementation, ECP Milestone Report, WBS 1.2.1.08, ECP-SE-08-2000.
- In progress
  - Optimization of baseline MC kernels
  - Implementation of Phi and GPU kernels in Nek5000

GPU MC Development at ORNL – Thread Divergence

- On GPU, groups of 32 threads (warps) execute together in lockstep
  - Any code branch taken by a single thread must be taken by all threads in the warp
  - Inactive threads are automatically masked and computed results discarded
- High-level thread divergence is always detrimental to performance
- Low-level thread divergence may not be problematic
  - Cost of executing multiple paths is typically small compared to memory latency

GOAL: Address thread divergence with algorithms that mirror standard CPU algorithms as closely as possible
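
The lockstep rule on this slide can be made concrete with a deliberately simplified cost model. It assumes that a warp executes every distinct branch taken by any of its threads one after another, with the non-participating threads masked. The functions `warp_cost` and `warp_efficiency` and the per-branch cycle counts are hypothetical illustrations, not measurements or vendor-documented figures.

```python
WARP_SIZE = 32

def warp_cost(branch_of_thread, branch_cost):
    """Cycles spent by one warp: each distinct branch taken is executed once, serially."""
    return sum(branch_cost[b] for b in set(branch_of_thread))

def warp_efficiency(branch_of_thread, branch_cost):
    """Fraction of thread-cycles doing useful (unmasked) work."""
    useful = sum(branch_cost[b] for b in branch_of_thread)
    total = len(branch_of_thread) * warp_cost(branch_of_thread, branch_cost)
    return useful / total

# Hypothetical branch costs in cycles.
costs = {"scatter": 10, "absorb": 10}

uniform = ["scatter"] * WARP_SIZE                                  # no divergence
mixed = ["scatter"] * (WARP_SIZE // 2) + ["absorb"] * (WARP_SIZE // 2)

uniform_eff = warp_efficiency(uniform, costs)
mixed_eff = warp_efficiency(mixed, costs)
```

Under this model a warp split evenly between two equal-cost branches does useful work in only half of its thread-cycles. The model ignores memory latency, which the slide identifies as the usually larger cost for low-level divergence.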

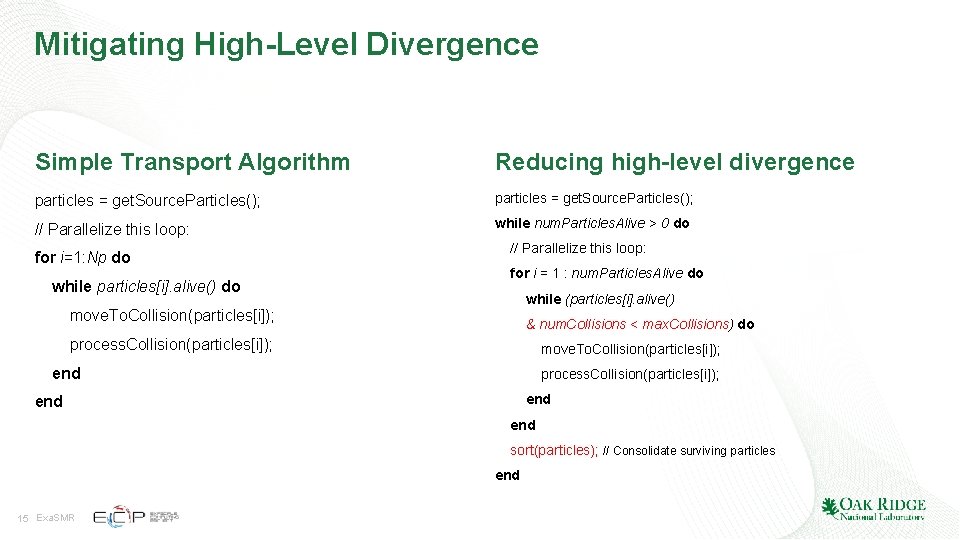

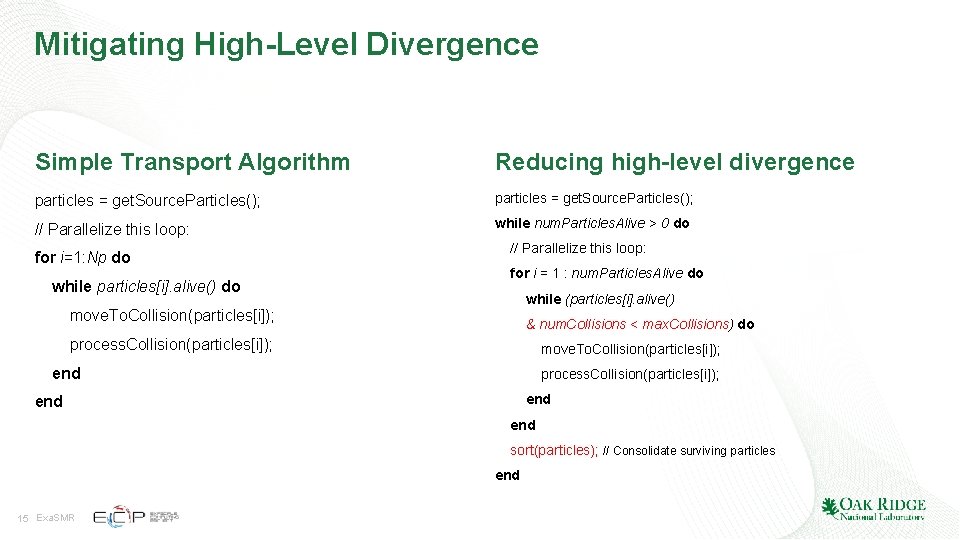

Mitigating High-Level Divergence

Simple Transport Algorithm

    particles = getSourceParticles();
    // Parallelize this loop:
    for i = 1 : Np do
        while particles[i].alive() do
            moveToCollision(particles[i]);
            processCollision(particles[i]);
        end
    end

Reducing high-level divergence

    while numParticlesAlive > 0 do
        // Parallelize this loop:
        for i = 1 : numParticlesAlive do
            while (particles[i].alive() & numCollisions < maxCollisions) do
                moveToCollision(particles[i]);
                processCollision(particles[i]);
            end
        end
        sort(particles); // Consolidate surviving particles
    end
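
The truncated-history loop on this slide can be sketched serially in Python. This sketch makes several assumptions: physics is replaced by a single survival probability per collision, the inner `for` loop stands in for the parallel kernel, and consolidation is done by filtering rather than by a true sort. The names `move_and_collide`, `transport_truncated`, and `survive_prob` are hypothetical.

```python
import random

def move_and_collide(p, rng, survive_prob):
    """Stand-in for moveToCollision + processCollision on one particle."""
    p["collisions"] += 1
    if rng.random() >= survive_prob:
        p["alive"] = False

def transport_truncated(num_particles, survive_prob, max_collisions, seed=0):
    """Advance all particles in passes of at most max_collisions collisions each."""
    rng = random.Random(seed)
    particles = [{"alive": True, "collisions": 0} for _ in range(num_particles)]
    finished = []
    passes = 0
    while particles:  # while numParticlesAlive > 0
        # On a GPU this loop over particles would be the parallel kernel.
        for p in particles:
            steps = 0
            # Truncate each history so no thread runs far longer than its neighbors.
            while p["alive"] and steps < max_collisions:
                move_and_collide(p, rng, survive_prob)
                steps += 1
        # Consolidate surviving particles so the next pass has no idle slots.
        finished.extend(p for p in particles if not p["alive"])
        particles = [p for p in particles if p["alive"]]
        passes += 1
    return finished, passes
```

The design trade-off is between `max_collisions` and the number of passes: a small cap bounds how long dead particles occupy a slot, at the price of more consolidation steps.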

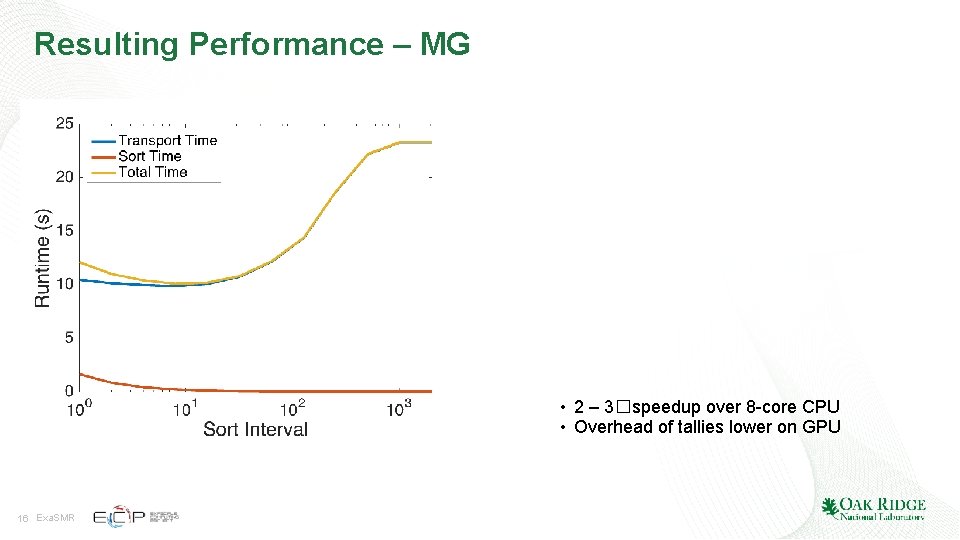

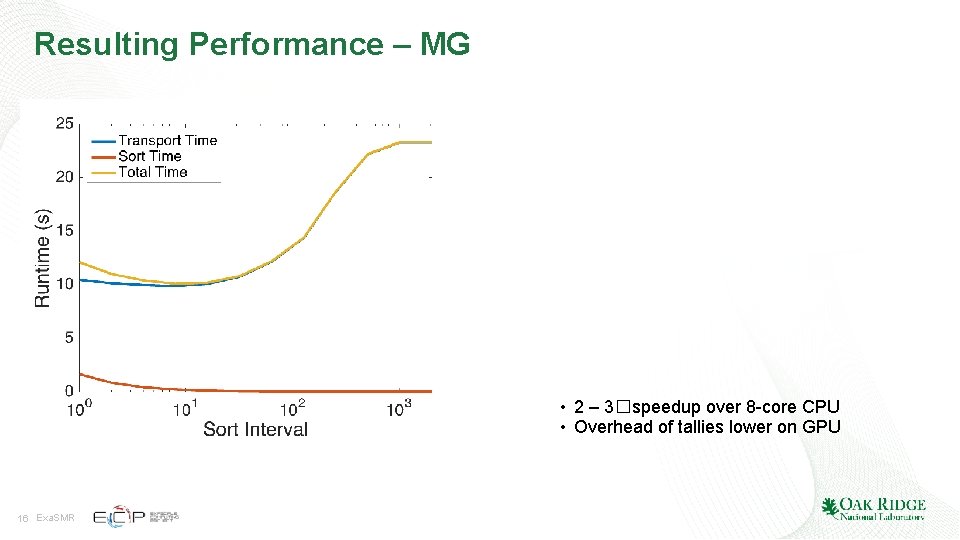

Resulting Performance – MG

- 2-3× speedup over 8-core CPU
- Overhead of tallies lower on GPU

GPU Performance

- Modified history-based outperforms event-based algorithms by 2-3×
- Reasons:
  - Low-level thread divergence not severe
  - Sort cost
  - Memory access and fetching
- Sorting over more events creates additional indirection in the particle vector, which degrades performance by multiplicative factors
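
To show where the sort cost and the extra indirection come from, here is a minimal serial sketch of an event-based loop. It assumes only two event types and a single survival probability in place of real physics. The function `event_based` and its structure-of-arrays layout are hypothetical and are not taken from Shift.

```python
import random

def event_based(num_particles, survive_prob, seed=0):
    """Process particles grouped by pending event instead of one history at a time."""
    rng = random.Random(seed)
    alive = [True] * num_particles
    collisions = [0] * num_particles
    event = ["move"] * num_particles  # next event queued for each particle
    sorts = 0
    while any(alive):
        # Sort step: build one index vector per event type.
        move_ids = [i for i in range(num_particles) if alive[i] and event[i] == "move"]
        coll_ids = [i for i in range(num_particles) if alive[i] and event[i] == "collide"]
        sorts += 1
        # "Move" kernel: particle data is reached indirectly through move_ids.
        for i in move_ids:
            event[i] = "collide"
        # "Collide" kernel: again indirect access, now through coll_ids.
        for i in coll_ids:
            collisions[i] += 1
            if rng.random() < survive_prob:
                event[i] = "move"
            else:
                alive[i] = False
    return collisions, sorts
```

Every pass pays for rebuilding the index vectors, and every kernel reads particle state through them rather than contiguously. Those are the two costs the slide lists against the event-based approach.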

Continuous-energy physics presents further challenges

- History-based results do not appear to extend to the continuous-energy transport needed for many analyses
  - Initial implementation: 2.5-3× speedup over single-core CPU
  - History truncation and resorting has minimal impact
  - Multiple additional levels of thread divergence
- It appears that low-level thread divergence is much higher in CE MC
  - Many more interaction channels compared to MG
  - We are currently investigating methods that oversubscribe threads to address low-level thread divergence at the transport level

Acknowledgements

This research was supported by the Exascale Computing Project (17-SC-20-SC), a collaborative effort of two U.S. Department of Energy organizations (Office of Science and the National Nuclear Security Administration) responsible for the planning and preparation of a capable exascale ecosystem, including software, applications, hardware, advanced system engineering and early testbed platforms, in support of the nation’s exascale computing imperative.

Questions?