Cosmological Model Selection David Parkinson with Andrew Liddle

- Slides: 18

Cosmological Model Selection David Parkinson (with Andrew Liddle & Pia Mukherjee)

Outline
• The Evidence: the Bayesian model selection statistic
• Methods
• Nested Sampling
• Results

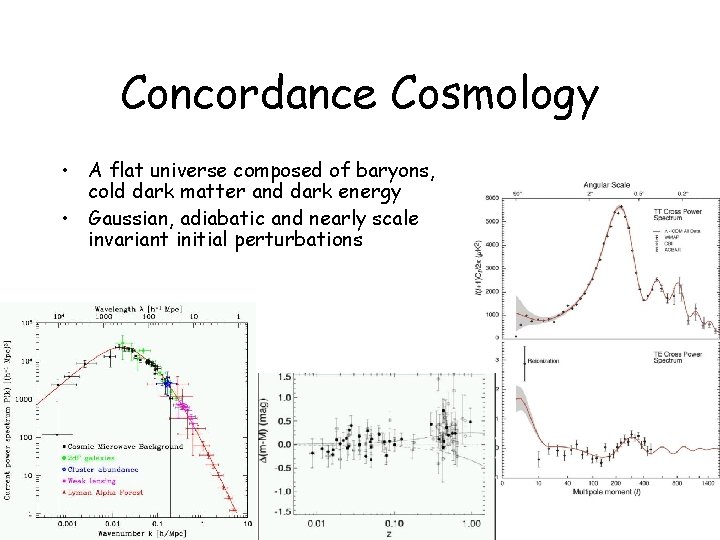

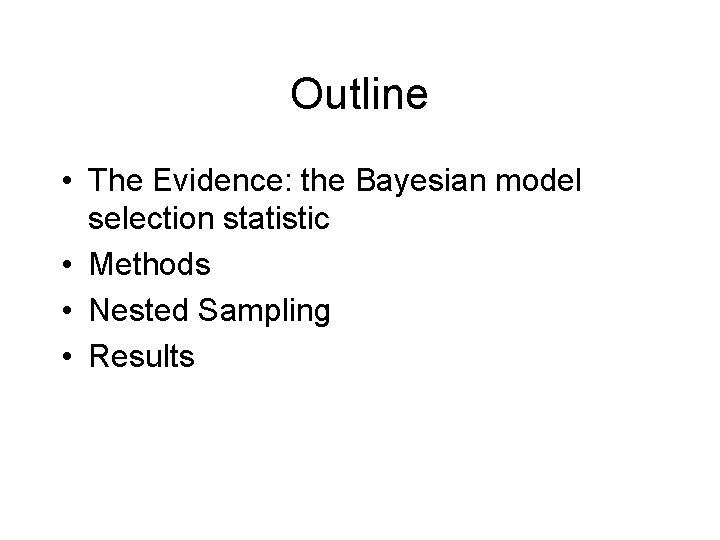

Concordance Cosmology
• A flat universe composed of baryons, cold dark matter and dark energy
• Gaussian, adiabatic and nearly scale-invariant initial perturbations

Model Extensions
• Do we really need only 5 numbers to describe the universe (Ω_b, Ω_CDM, H_0, A_s, τ)?
• Extra dynamic properties: curvature (Ω_k), massive neutrinos (M_ν), dynamic dark energy (w(z)), etc.
• More complex initial conditions: tilt (n_s) and running (n_run) of the adiabatic power spectrum, entropy perturbations, etc.
• How do we decide if these extensions are justified?

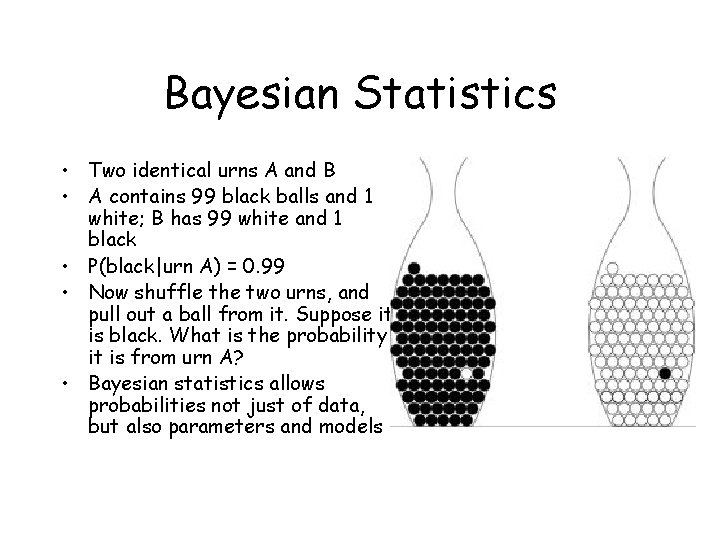

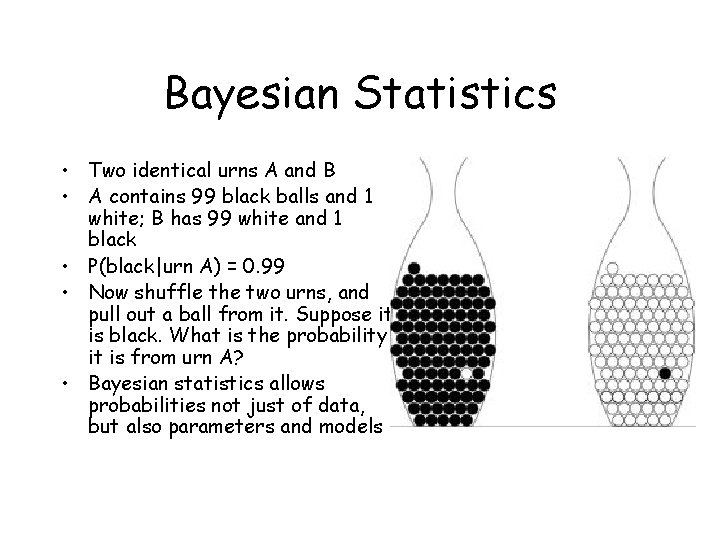

Bayesian Statistics
• Two identical urns A and B
• A contains 99 black balls and 1 white; B has 99 white and 1 black
• P(black|urn A) = 0.99
• Now shuffle the two urns, pick one, and pull out a ball from it. Suppose it is black. What is the probability it is from urn A?
• Bayesian statistics allows probabilities not just of data, but also of parameters and models
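The urn question can be answered with a few lines of arithmetic. The sketch below assumes each urn is equally likely to be picked (prior 0.5), which the slide implies by shuffling but does not state; the function name and arguments are illustrative.

```python
def posterior_urn_a(p_black_given_a=0.99, p_black_given_b=0.01, prior_a=0.5):
    """Posterior probability that a black ball came from urn A (Bayes' theorem)."""
    prior_b = 1.0 - prior_a
    # Total probability of drawing a black ball, summed over both urns.
    p_black = p_black_given_a * prior_a + p_black_given_b * prior_b
    return p_black_given_a * prior_a / p_black


# With equal priors the posterior is numerically the same as P(black | urn A).
print(posterior_urn_a())
# A prior that disfavours urn A pulls the posterior down.
print(posterior_urn_a(prior_a=0.01))
```

With equal priors the posterior matches the likelihood, and changing `prior_a` shows how strongly the answer depends on the prior.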

Bayes’ Theorem
• Bayes’ theorem gives the posterior probability of the parameters (θ) of a model (H) given data (D)
• Marginalizing over θ gives the evidence, P(D|H)
• Evidence = average likelihood of the data over the prior parameter space of the model
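For reference, the two relations described on this slide can be written in their standard textbook form. The symbol E for the evidence is a notational assumption made here; θ, H and D are the parameters, model and data.

```latex
\begin{align}
P(\theta \mid D, H) &= \frac{P(D \mid \theta, H)\, P(\theta \mid H)}{P(D \mid H)}, \\
E \equiv P(D \mid H) &= \int P(D \mid \theta, H)\, P(\theta \mid H)\, \mathrm{d}\theta .
\end{align}
```

The second line is the likelihood averaged over the prior, which is why the evidence penalizes prior volume that the data do not support.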

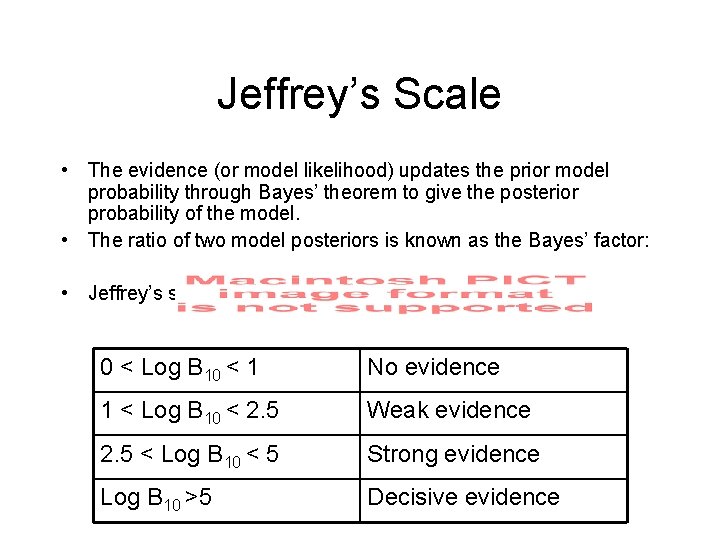

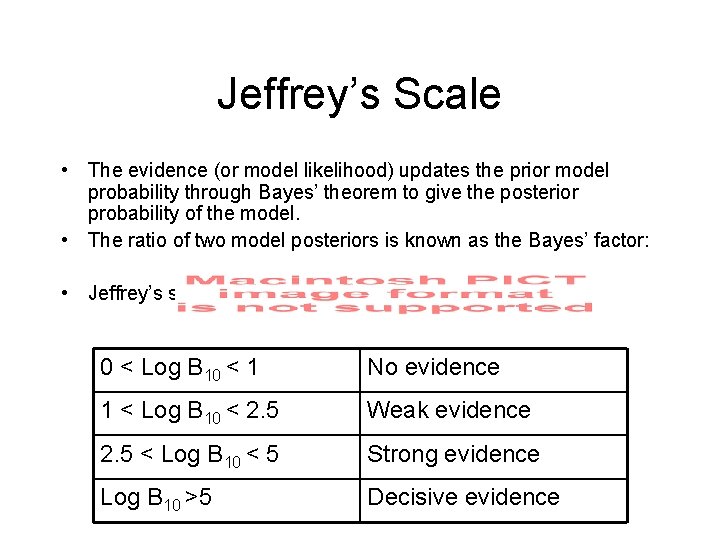

Jeffreys’ Scale
• The evidence (or model likelihood) updates the prior model probability through Bayes’ theorem to give the posterior probability of the model.
• The ratio of the evidences of two models is known as the Bayes factor; for equal prior model probabilities it equals the ratio of the two model posteriors.
• Jeffreys’ scale:
– 0 < ln B_10 < 1: No evidence
– 1 < ln B_10 < 2.5: Weak evidence
– 2.5 < ln B_10 < 5: Strong evidence
– ln B_10 > 5: Decisive evidence
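The scale can be applied mechanically once a Bayes factor is in hand. The helper below is a minimal sketch: it assumes the thresholds 1, 2.5 and 5 apply to the natural logarithm of the Bayes factor and that a negative value is read as the same strength of evidence for the other model. The function names are illustrative.

```python
import math


def jeffreys_category(ln_b10):
    """Label the strength of evidence for |ln B_10| using the thresholds on the slide."""
    strength = abs(ln_b10)
    if strength < 1.0:
        return "no evidence"
    if strength < 2.5:
        return "weak evidence"
    if strength < 5.0:
        return "strong evidence"
    return "decisive evidence"


def posterior_odds(ln_b10, prior_odds=1.0):
    """Posterior odds of model 1 over model 0: Bayes factor times prior odds."""
    return math.exp(ln_b10) * prior_odds


for ln_b in (0.3, 2.0, 3.0, 6.0):
    print(ln_b, jeffreys_category(ln_b), round(posterior_odds(ln_b), 1))
```

Keeping the prior odds as a separate argument makes explicit that the Bayes factor alone gives the posterior odds only when the two models start out equally probable.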

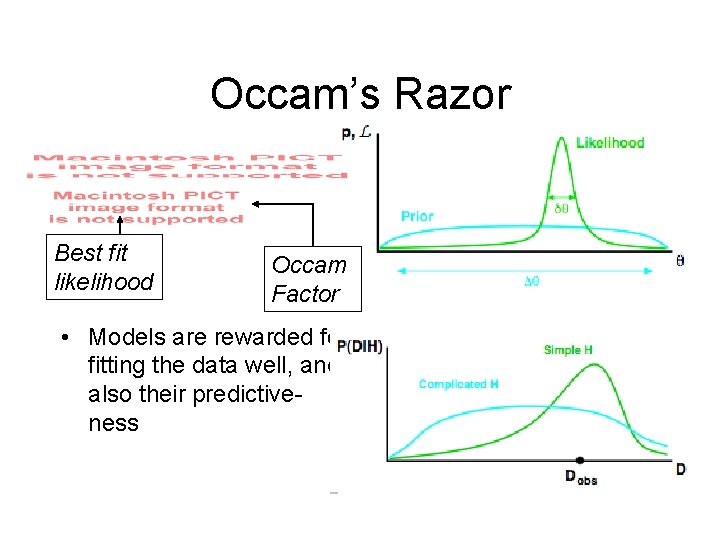

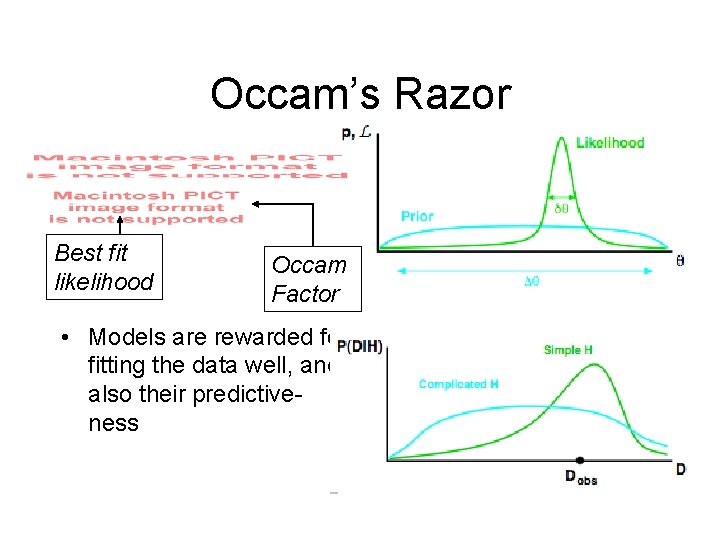

Occam’s Razor
• Evidence ≈ best-fit likelihood × Occam factor
• Models are rewarded for fitting the data well, and also for their predictiveness
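A one-parameter illustration makes the Occam factor concrete. The expression below is a sketch under stated assumptions rather than the slide's own equation: a uniform prior of width Δθ, and a Gaussian likelihood of width σ_θ peaked at the best fit and lying well inside the prior range.

```latex
\begin{equation}
E = \int \mathcal{L}(\theta)\, \pi(\theta)\, \mathrm{d}\theta
  \approx \underbrace{\mathcal{L}(\hat{\theta})}_{\text{best-fit likelihood}}
  \times
  \underbrace{\frac{\sqrt{2\pi}\, \sigma_\theta}{\Delta\theta}}_{\text{Occam factor}}
\end{equation}
```

The Occam factor is the fraction of the prior range that survives the data, so a model with a wide, unpredictive prior pays a larger penalty for the same best-fit likelihood.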

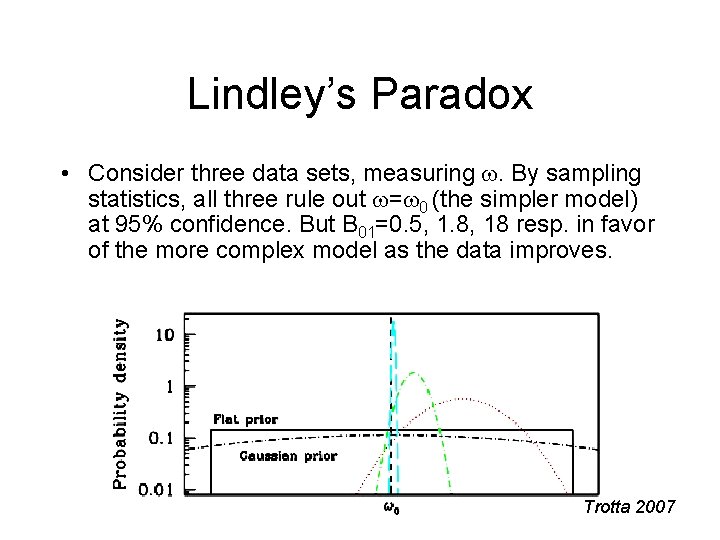

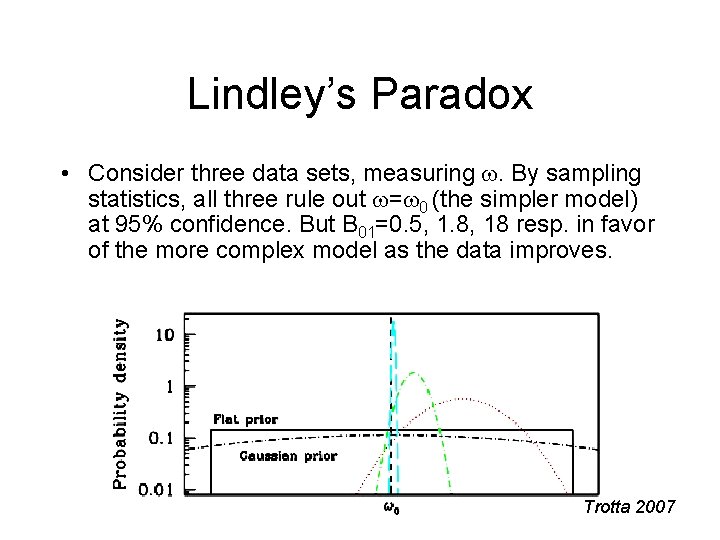

Lindley’s Paradox
• Consider three data sets, each measuring the same parameter.
• By sampling statistics, all three rule out a value of zero (the simpler model) at 95% confidence.
• But B_01 = 0.5, 1.8, 18 respectively, in favor of the more complex model as the data improves. (Trotta 2007)

Methods
• The Laplace Approximation
– Assumes that the posterior P(θ|D, M) is a multi-dimensional Gaussian
• The Savage-Dickey Density Ratio
– Needs separable priors and nested models
– (and the reference value to be in the high-likelihood region of the more complex model for accuracy)
• Thermodynamic Integration
– Needs a series of MCMC runs at different temperatures
– Accurate but computationally very intensive
• VEGAS
– Likelihood surface needs to be “not too far” from Gaussian
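As an illustration of the Savage-Dickey density ratio, the toy case below has one extra parameter θ that is fixed to 0 in the simpler model. Everything specific here is an assumption made for the sketch: a Gaussian measurement `xbar` with error `sigma`, a Gaussian prior on θ of width `prior_width` centred on 0, and the Bayes factor defined as simpler over complex, which may not match the convention used elsewhere in the talk. In this setup both the ratio and the direct evidence ratio are analytic, so they can be compared.

```python
import math


def normal_pdf(x, mean, var):
    """Density of a Gaussian with the given mean and variance."""
    return math.exp(-0.5 * (x - mean) ** 2 / var) / math.sqrt(2.0 * math.pi * var)


def bayes_factor_sddr(xbar, sigma, prior_width):
    """Savage-Dickey: posterior density at theta = 0 divided by prior density at 0."""
    s2, p2 = sigma ** 2, prior_width ** 2
    post_var = s2 * p2 / (s2 + p2)       # conjugate Gaussian update
    post_mean = xbar * p2 / (s2 + p2)
    return normal_pdf(0.0, post_mean, post_var) / normal_pdf(0.0, 0.0, p2)


def bayes_factor_direct(xbar, sigma, prior_width):
    """Ratio of evidences: simpler model (theta = 0) over the extended model."""
    evidence_simple = normal_pdf(xbar, 0.0, sigma ** 2)
    evidence_extended = normal_pdf(xbar, 0.0, sigma ** 2 + prior_width ** 2)
    return evidence_simple / evidence_extended


# A measurement two sigma away from zero, with a prior ten times wider than the error.
print(bayes_factor_sddr(0.2, 0.1, 1.0), bayes_factor_direct(0.2, 0.1, 1.0))
```

The two numbers agree, which is the point of the method: for nested models the evidence ratio can be read off the posterior of the extended model alone, without a separate evidence integral.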

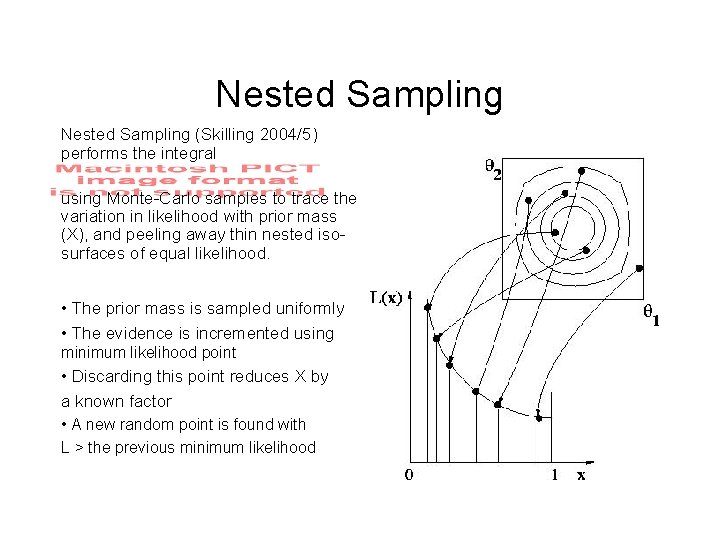

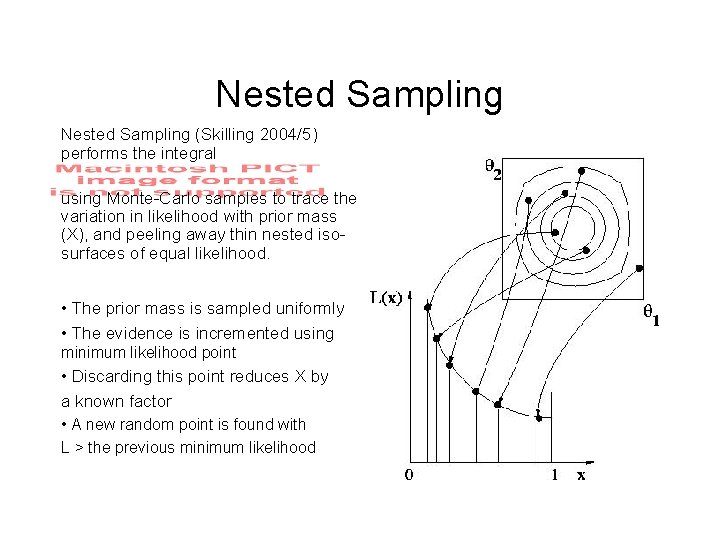

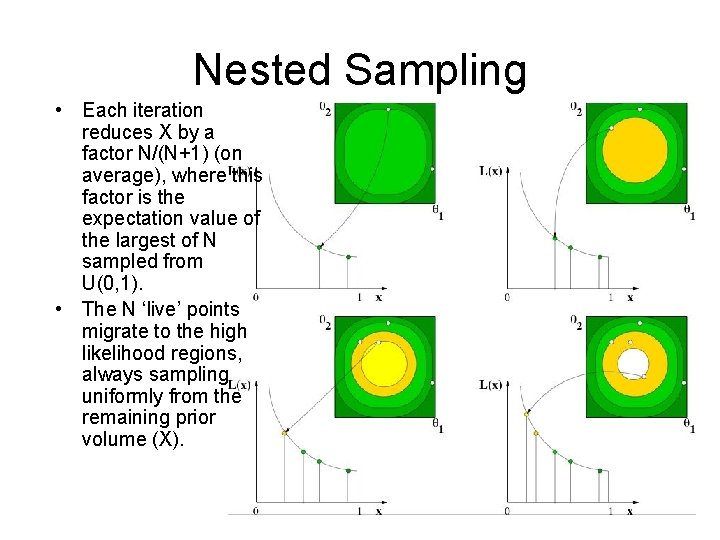

Nested Sampling
• Nested sampling (Skilling 2004/5) performs the evidence integral using Monte Carlo samples to trace the variation in likelihood with prior mass (X), peeling away thin nested isosurfaces of equal likelihood.
• The prior mass is sampled uniformly
• The evidence is incremented using the minimum-likelihood point
• Discarding this point reduces X by a known factor
• A new random point is found with L > the previous minimum likelihood

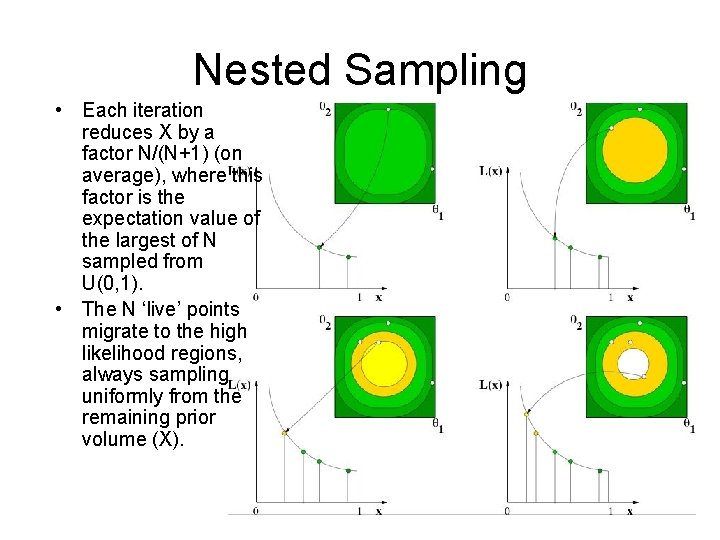

Nested Sampling
• Each iteration reduces X by a factor N/(N+1) (on average), where this factor is the expectation value of the largest of N samples drawn from U(0, 1).
• The N ‘live’ points migrate to the high-likelihood regions, always sampling uniformly from the remaining prior volume (X).
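The loop described on these slides can be sketched in a few dozen lines for a toy problem. This is not the CosmoNest implementation. It assumes a one-dimensional uniform prior on [0, 1], a normalised Gaussian likelihood (so the true ln E is close to 0), replacement points drawn by brute-force rejection from the prior, and a simple stopping rule based on the largest evidence the live points could still add. All names and default values are illustrative.

```python
import math
import random


def log_likelihood(theta, mu=0.5, sigma=0.05):
    """Normalised Gaussian log-likelihood (toy choice, not from the talk)."""
    return -0.5 * ((theta - mu) / sigma) ** 2 - math.log(sigma * math.sqrt(2.0 * math.pi))


def log_add(a, b):
    """Return log(exp(a) + exp(b)) without overflow."""
    if a == -math.inf:
        return b
    if b == -math.inf:
        return a
    hi, lo = max(a, b), min(a, b)
    return hi + math.log1p(math.exp(lo - hi))


def nested_sampling(n_live=200, tol=1e-2, seed=1):
    rng = random.Random(seed)
    live = [rng.random() for _ in range(n_live)]      # uniform draws from the prior
    live_logl = [log_likelihood(t) for t in live]
    log_z, log_x = -math.inf, 0.0                     # evidence so far, remaining prior mass
    terms = []                                        # (theta, log of L times prior-mass width)
    while True:
        # Stop once the live points can add at most a fraction tol to the evidence.
        if log_z > -math.inf and max(live_logl) + log_x < math.log(tol) + log_z:
            break
        worst = min(range(n_live), key=live_logl.__getitem__)
        logl_min = live_logl[worst]
        log_w = log_x - math.log(n_live + 1)          # X_old - X_new = X_old / (N + 1)
        log_z = log_add(log_z, logl_min + log_w)
        terms.append((live[worst], logl_min + log_w))
        log_x += math.log(n_live / (n_live + 1))      # shrink X by the mean factor N/(N+1)
        # Replace the discarded point by a prior draw with L above the old minimum.
        while True:
            theta = rng.random()
            logl = log_likelihood(theta)
            if logl > logl_min:
                break
        live[worst], live_logl[worst] = theta, logl
    # Add the evidence held by the remaining live points, each owning X / N.
    for theta, logl in zip(live, live_logl):
        term = logl + log_x - math.log(n_live)
        log_z = log_add(log_z, term)
        terms.append((theta, term))
    samples = [(theta, math.exp(t - log_z)) for theta, t in terms]  # posterior weights
    return log_z, samples


log_z, samples = nested_sampling()
posterior_mean = sum(theta * weight for theta, weight in samples)
print("ln E =", log_z, " posterior mean =", posterior_mean)
```

Rejection sampling from the full prior becomes very inefficient as X shrinks, which is why practical codes draw replacement points from a region fitted around the current live points; the brute-force version is used here only because it is unambiguous.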

Movie

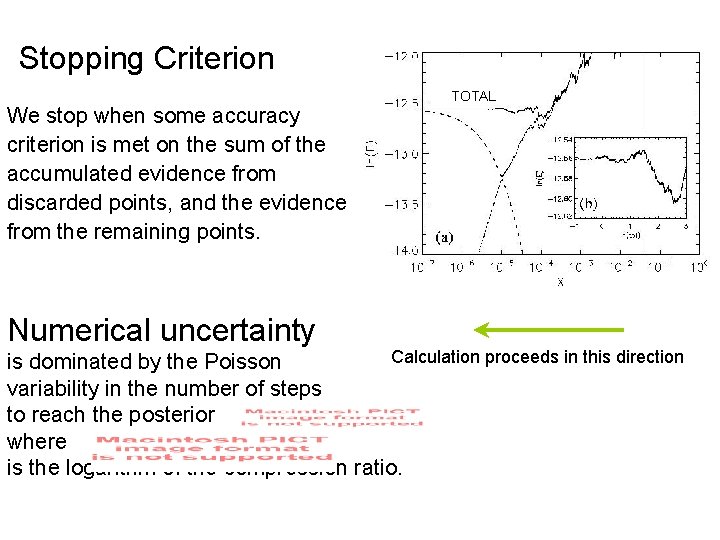

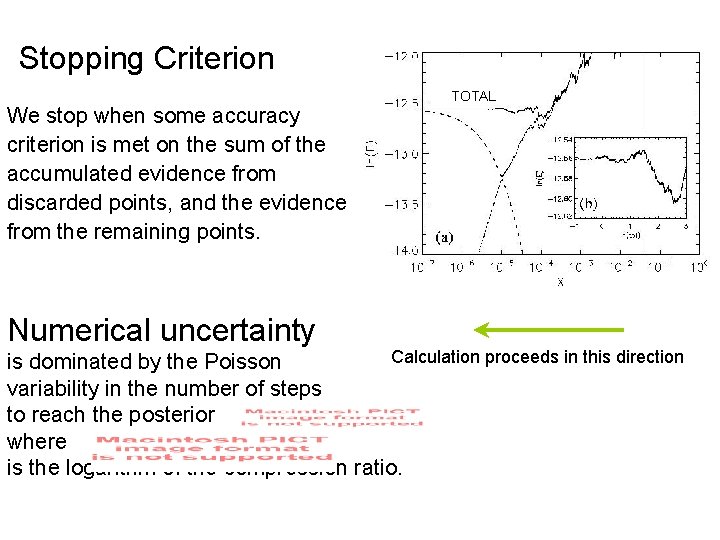

Stopping Criterion
• We stop when some accuracy criterion is met on the sum of the accumulated evidence from discarded points and the evidence from the remaining points.
• Numerical uncertainty is dominated by the Poisson variability NH ± √(NH) in the number of steps to reach the posterior, where H is the logarithm of the compression ratio.
• Figure labels: “TOTAL”; “Calculation proceeds in this direction”.
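The step-count argument translates into an error bar on the log-evidence. The lines below are a sketch of that reasoning following Skilling's estimate, with N the number of live points, H the logarithm of the prior-to-posterior compression ratio, and each step assumed to shrink ln X by about 1/N.

```latex
\begin{align}
n_{\rm steps} &\simeq N H \pm \sqrt{N H}, \\
\ln X &\simeq -\frac{n_{\rm steps}}{N} = -H \pm \sqrt{H/N}, \\
\delta(\ln E) &\simeq \sqrt{H/N} .
\end{align}
```

As a purely hypothetical illustration, H = 4 with N = 100 live points would give an uncertainty of about 0.2 in ln E, and quadrupling N would halve it.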

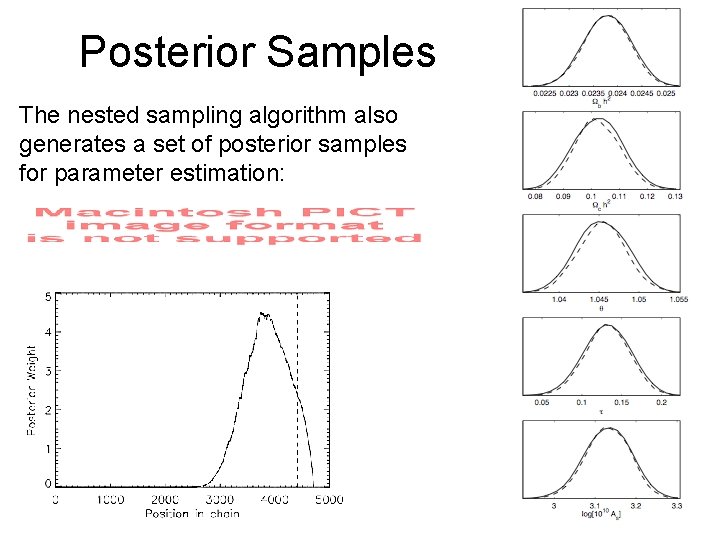

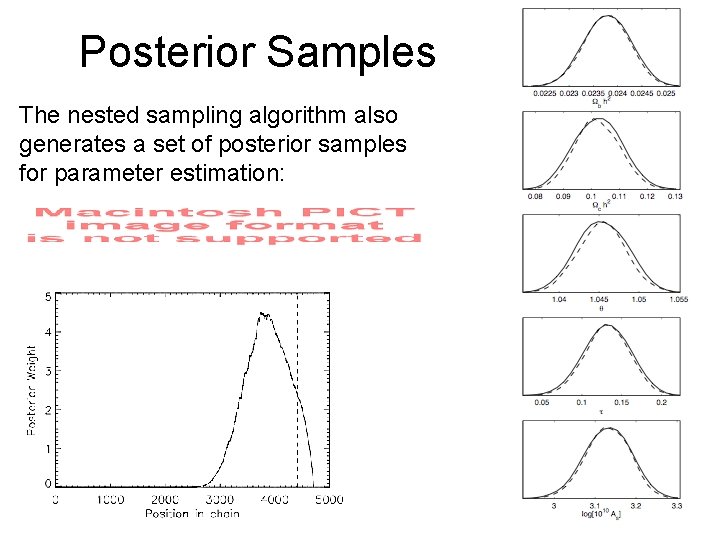

Posterior Samples
• The nested sampling algorithm also generates a set of posterior samples for parameter estimation:
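One common way to write the weighting is given below. It is a sketch and not necessarily the exact rule used in the talk: each discarded point θ_i with likelihood L_i is assigned a prior-mass width w_i, taken here as the simple difference X_{i-1} − X_i (a trapezoidal width is a frequent alternative), and E is the total evidence.

```latex
\begin{align}
p_i &= \frac{L_i\, w_i}{E}, \qquad w_i = X_{i-1} - X_i, \qquad \sum_i p_i = 1, \\
\langle f(\theta) \rangle &\simeq \sum_i p_i\, f(\theta_i) .
\end{align}
```

Because the weights come from the same terms that are summed to give the evidence, parameter estimates are obtained from the run at no extra likelihood cost.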

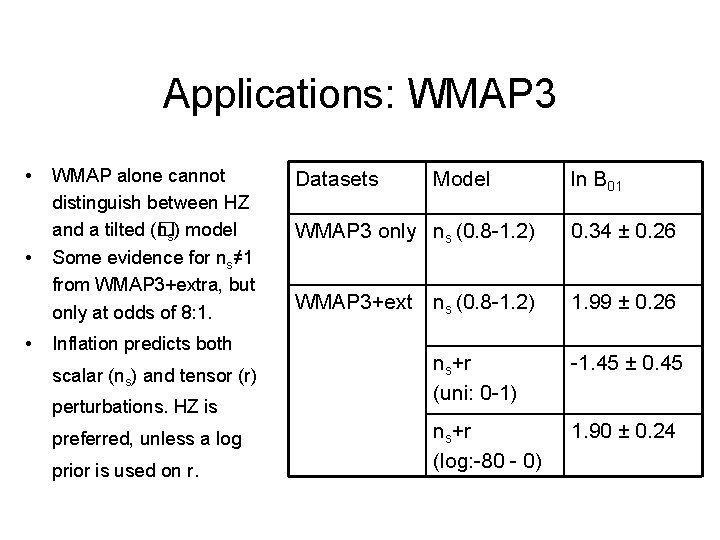

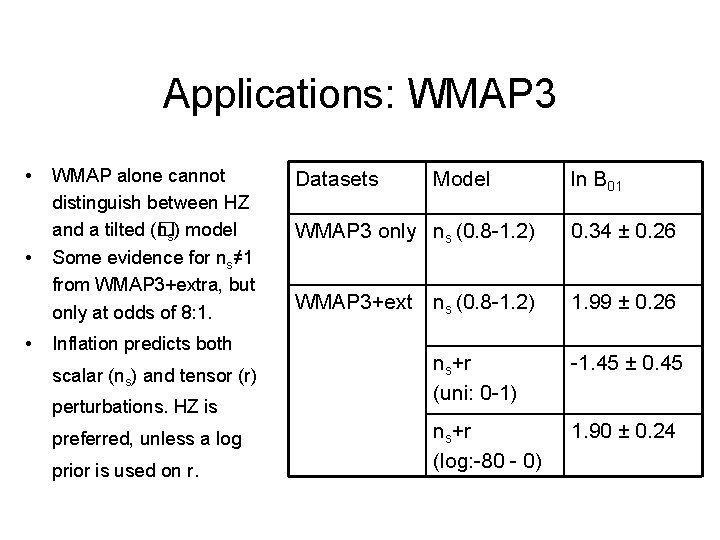

Applications: WMAP 3
• WMAP alone cannot distinguish between HZ and a tilted (n_s) model.
• Some evidence for n_s ≠ 1 from WMAP 3+extra, but only at odds of 8:1.
• Inflation predicts both scalar (n_s) and tensor (r) perturbations. HZ is preferred, unless a log prior is used on r.

| Datasets | Model | ln B_01 |
| --- | --- | --- |
| WMAP 3 only | n_s (0.8 to 1.2) | 0.34 ± 0.26 |
| WMAP 3+ext | n_s (0.8 to 1.2) | 1.99 ± 0.26 |
| | n_s + r (uni: 0 to 1) | -1.45 ± 0.45 |
| | n_s + r (log: -80 to 0) | 1.90 ± 0.24 |

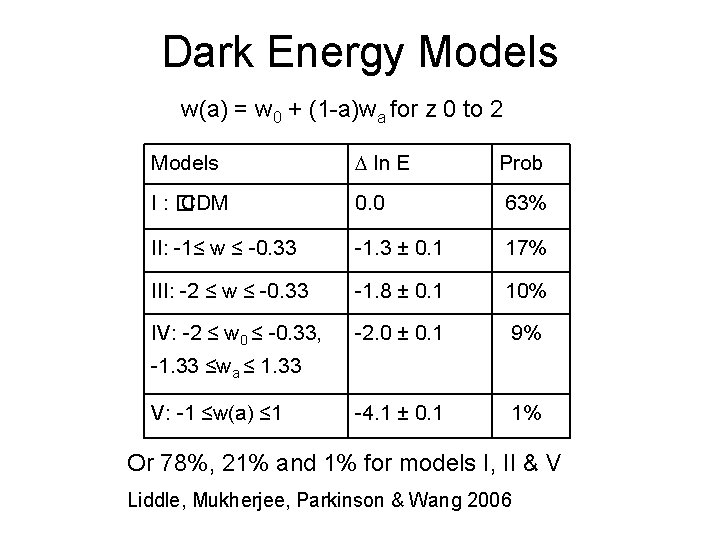

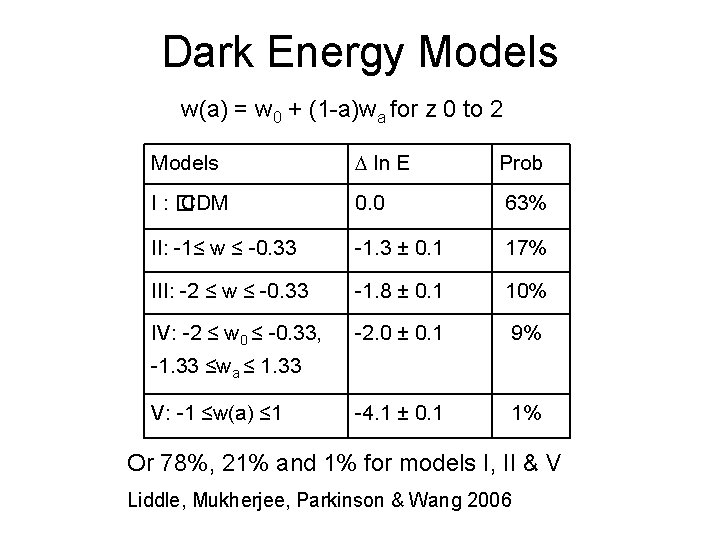

Dark Energy Models
• w(a) = w_0 + (1 - a) w_a, for z = 0 to 2

| Model | ln E | Prob |
| --- | --- | --- |
| I: ΛCDM | 0.0 | 63% |
| II: -1 ≤ w ≤ -0.33 | -1.3 ± 0.1 | 17% |
| III: -2 ≤ w ≤ -0.33 | -1.8 ± 0.1 | 10% |
| IV: -2 ≤ w_0 ≤ -0.33, -1.33 ≤ w_a ≤ 1.33 | -2.0 ± 0.1 | 9% |
| V: -1 ≤ w(a) ≤ 1 | -4.1 ± 0.1 | 1% |

• Or 78%, 21% and 1% for models I, II & V
• Liddle, Mukherjee, Parkinson & Wang 2006
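The probabilities quoted for the dark energy models follow from the log-evidences once prior model probabilities are chosen. The sketch below assumes equal prior probability for every model in the comparison, which the slide does not state explicitly but which reproduces the quoted percentages. The function name is illustrative.

```python
import math


def model_probabilities(ln_evidences):
    """Posterior model probabilities from log-evidences, assuming equal model priors."""
    top = max(ln_evidences)
    weights = [math.exp(ln_e - top) for ln_e in ln_evidences]  # subtract max for stability
    total = sum(weights)
    return [w / total for w in weights]


ln_e = {"I": 0.0, "II": -1.3, "III": -1.8, "IV": -2.0, "V": -4.1}

all_five = model_probabilities(list(ln_e.values()))
print([round(100 * p) for p in all_five])        # five-model comparison

subset = model_probabilities([ln_e[m] for m in ("I", "II", "V")])
print([round(100 * p) for p in subset])          # models I, II and V only
```

Restricting the comparison to fewer models renormalises the probabilities, which is why model I rises from 63% to 78% when only I, II and V are considered.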

Conclusions
• Model selection (via Bayesian evidences) and parameter estimation are two levels of inference.
• The nested sampling scheme computes evidences accurately and efficiently; it also gives parameter posteriors (www.cosmonest.org)
• Applications
– simple models still favoured
– model selection based forecasting
– Bayesian model averaging
– many others… Foreground contamination, cosmic topology, cosmic strings…